Chapter 2. OpenStack networking concepts

OpenStack Networking has system services to manage core services such as routing, DHCP, and metadata. Together, these services are included in the concept of the Controller node, which is a conceptual role assigned to a physical server.

A physical server is typically assigned the role of Network node and dedicated to the task of managing Layer 3 routing for network traffic to and from instances. In OpenStack Networking, you can have multiple physical hosts performing this role, allowing for redundant service in the event of hardware failure. For more information, see the chapter on Layer 3 High Availability.

Red Hat OpenStack Platform 11 added support for composable roles, allowing you to separate network services into a custom role. However, for simplicity, this guide assumes that a deployment uses the default controller role.

2.1. Installing OpenStack Networking (neutron)

The OpenStack Networking component is installed as part of a Red Hat OpenStack Platform director deployment. For more information about director deployment, see Director Installation and Usage.

2.2. OpenStack Networking diagram

This diagram depicts a sample OpenStack Networking deployment, with a dedicated OpenStack Networking node performing layer 3 routing and DHCP, and running the advanced service load balancing as a Service (LBaaS). Two Compute nodes run the Open vSwitch (openvswitch-agent) and have two physical network cards each, one for project traffic, and another for management connectivity. The OpenStack Networking node has a third network card specifically for provider traffic:

2.3. Security groups

Security groups and rules filter the type and direction of network traffic that neutron ports send and receive. This provides an additional layer of security to complement any firewall rules present on the compute instance. The security group is a container object with one or more security rules. A single security group can manage traffic to multiple compute instances.

Ports created for floating IP addresses, OpenStack Networking LBaaS VIPs, and instances are associated with a security group. If you do not specify a security group, then the port is associated with the default security group. By default, this group drops all inbound traffic and allows all outbound traffic. However, traffic flows between instances that are members of the default security group, because the group has a remote group ID that points to itself.

To change the filtering behavior of the default security group, you can add security rules to the group, or create entirely new security groups.

2.4. Open vSwitch

Open vSwitch (OVS) is a software-defined networking (SDN) virtual switch similar to the Linux software bridge. OVS provides switching services to virtualized networks with support for industry standard , OpenFlow, and sFlow. OVS can also integrate with physical switches using layer 2 features, such as STP, LACP, and 802.1Q VLAN tagging. Open vSwitch version 1.11.0-1.el6 or later also supports tunneling with VXLAN and GRE.

For more information about network interface bonds, see the Network Interface Bonding chapter of the Advanced Overcloud Customization guide.

To mitigate the risk of network loops in OVS, only a single interface or a single bond can be a member of a given bridge. If you require multiple bonds or interfaces, you can configure multiple bridges.

Using single root I/O virtualization (SR-IOV) on bonded interfaces is not supported.

2.5. Changing the OpenFlow interface for Open vSwitch

In Red Hat OpenStack Platform 13, the Networking service (neutron) uses Python 2.7 which does not work well with the python-ryu library that Open vSwitch depends on for managing OpenFlow rules.

If you experience timeouts when the neutron Open vSwitch (OVS) agent connects to OVS, then you must change the value for the OpenFlow interface and OVS database options.

Prerequesites

- You are using Open vSwitch in RHOSP 13.

Procedure

On the undercloud host, logged in as the stack user, create a custom YAML environment file.

Example

$ vi /home/stack/templates/my-ovs-environment.yamlTipThe Orchestration service (heat) uses a set of plans called templates to install and configure your environment. You can customize aspects of the overcloud with a custom environment file, which is a special type of template that provides customization for your heat templates.

In the YAML environment file under

parameter_defaults, add the following Puppet variables:parameter_defaults: ExtraConfig: neutron::agents::ml2::ovs::of_interface: ovs-ofctl neutron::agents::ml2::ovs::ovsdb_interface: vsctl ...ImportantEnsure that you add a whitespace character between the single colon (:) and the value.

Run the

openstack overcloud deploycommand and include the core heat templates, environment files, and this new custom environment file.ImportantThe order of the environment files is important as the parameters and resources defined in subsequent environment files take precedence.

Example

$ openstack overcloud deploy --templates \ -e [your-environment-files] \ -e /usr/share/openstack-tripleo-heat-templates/environments/services/my-ovs-environment.yaml

Additional resources

- Puppet: Customizing Hieradata for Individual Nodes in the Advanced Overcloud Customization guide

- Environment files in the Advanced Overcloud Customization guide

- Including Environment Files in Overcloud Creation in the Advanced Overcloud Customization guide

2.6. Modular layer 2 (ML2) networking

ML2 is the OpenStack Networking core plug-in introduced in the OpenStack Havana release. Superseding the previous model of monolithic plug-ins, the ML2 modular design enables the concurrent operation of mixed network technologies. The monolithic Open vSwitch and Linux Bridge plug-ins have been deprecated and removed; their functionality is now implemented by ML2 mechanism drivers.

ML2 is the default OpenStack Networking plug-in, with OVN configured as the default mechanism driver.

2.6.1. The reasoning behind ML2

Previously, OpenStack Networking deployments could use only the plug-in selected at implementation time. For example, a deployment running the Open vSwitch (OVS) plug-in was required to use the OVS plug-in exclusively. The monolithic plug-in did not support the simultaneously use of another plug-in such as linuxbridge. This limitation made it difficult to meet the needs of environments with heterogeneous requirements.

2.6.2. ML2 network types

Multiple network segment types can be operated concurrently. In addition, these network segments can interconnect using ML2 support for multi-segmented networks. Ports are automatically bound to the segment with connectivity; it is not necessary to bind ports to a specific segment. Depending on the mechanism driver, ML2 supports the following network segment types:

- flat

- GRE

- local

- VLAN

- VXLAN

- Geneve

Enable Type drivers in the ML2 section of the ml2_conf.ini file. For example:

[ml2]

type_drivers = local,flat,vlan,gre,vxlan,geneve2.6.3. ML2 mechanism drivers

Plug-ins are implemented as mechanisms with a common code base. This approach enables code reuse and eliminates much of the complexity around code maintenance and testing.

The default mechanism driver is OVN. You enable mechanism drivers using the Orchestration service (heat) parameter, NeutronMechanismDrivers. Here is an example from a heat custom environment file:

parameter_defaults:

...

NeutronMechanismDrivers: ansible,ovn,baremetal

...

The order in which you specify the mechanism drivers matters. In the earlier example, if you want to bind a port using the baremetal mechanism driver, then you must specify baremetal before ansible. Otherwise, the ansible driver will bind the port, because it precedes baremetal in the list of values for NeutronMechanismDrivers.

2.7. ML2 type and mechanism driver compatibility

| Mechanism Driver | Type Driver | ||||

|---|---|---|---|---|---|

| flat | gre | vlan | vxlan | geneve | |

| ovn | yes | no | yes | no | yes |

| openvswitch | yes | yes | yes | yes | no |

2.8. Limits of the ML2/OVN mechanism driver

The following table describes features that Red Hat does not yet support with ML2/OVN. Red Hat plans to support each of these features in a future Red Hat OpenStack Platform release.

In addition, this release of the Red Hat OpenStack Platform (RHOSP) does not provide a supported migration from the ML2/OVS mechanism driver to the ML2/OVN mechanism driver. This RHPOSP release does not support the OpenStack community migration strategy. Migration support is planned for a future RHOSP release.

| Feature | Notes | Track this Feature |

|---|---|---|

| Fragmentation / Jumbo Frames | OVN does not yet support sending ICMP "fragmentation needed" packets. Larger ICMP/UDP packets that require fragmentation do not work with ML2/OVN as they would with the ML2/OVS driver implementation. TCP traffic is handled by maximum segment sized (MSS) clamping. | https://bugzilla.redhat.com/show_bug.cgi?id=1547074 (ovn-network) https://bugzilla.redhat.com/show_bug.cgi?id=1702331 (Core ovn) |

| Port Forwarding | OVN does not support port forwarding. | https://bugzilla.redhat.com/show_bug.cgi?id=1654608 https://blueprints.launchpad.net/neutron/+spec/port-forwarding |

| Security Groups Logging API | ML2/OVN does not provide a log file that logs security group events such as an instance trying to execute restricted operations or access restricted ports in remote servers. | |

| Multicast | When using ML2/OVN as the integration bridge, multicast traffic is treated as broadcast traffic. The integration bridge operates in FLOW mode, so IGMP snooping is not available. To support this, core OVN must support IGMP snooping. | |

| SR-IOV | Presently, SR-IOV only works with the neutron DHCP agent deployed. | |

| Provisioning Baremetal Machines with OVN DHCP |

The built-in DHCP server on OVN presently can not provision baremetal nodes. It cannot serve DHCP for the provisioning networks. Chainbooting iPXE requires tagging ( | |

| OVS_DPDK | OVS_DPDK is presently not supported with OVN. |

2.9. Limit for non-secure ports with ML2/OVN

Ports might become unreachable if you disable the port security plug-in extension in Red Hat Open Stack Platform (RHOSP) deployments with the default ML2/OVN mechanism driver and a large number of ports.

In some large ML2/OVN RHSOP deployments, a flow chain limit inside ML2/OVN can drop ARP requests that are targeted to ports where the security plug-in is disabled.

There is no documented maximum limit for the actual number of logical switch ports that ML2/OVN can support, but the limit approximates 4,000 ports.

Attributes that contribute to the approximated limit are the number of resubmits in the OpenFlow pipeline that ML2/OVN generates, and changes to the overall logical topology.

2.10. Configuring the L2 population driver

The L2 Population driver enables broadcast, multicast, and unicast traffic to scale out on large overlay networks. By default, Open vSwitch GRE and VXLAN replicate broadcasts to every agent, including those that do not host the destination network. This design requires the acceptance of significant network and processing overhead. The alternative design introduced by the L2 Population driver implements a partial mesh for ARP resolution and MAC learning traffic; it also creates tunnels for a particular network only between the nodes that host the network. This traffic is sent only to the necessary agent by encapsulating it as a targeted unicast.

To enable the L2 Population driver, complete the following steps:

1. Enable the L2 population driver by adding it to the list of mechanism drivers. You also must enable at least one tunneling driver enabled; either GRE, VXLAN, or both. Add the appropriate configuration options to the ml2_conf.ini file:

[ml2]

type_drivers = local,flat,vlan,gre,vxlan

mechanism_drivers = openvswitch,linuxbridge,l2populationNeutron’s Linux Bridge ML2 driver and agent were deprecated in Red Hat OpenStack Platform 11. The Open vSwitch (OVS) plugin OpenStack Platform director default, and is recommended by Red Hat for general usage.

2. Enable L2 population in the openvswitch_agent.ini file. Enable it on each node that contains the L2 agent:

[agent]

l2_population = True

To install ARP reply flows, configure the arp_responder flag:

[agent]

l2_population = True

arp_responder = True2.11. OpenStack Networking services

By default, Red Hat OpenStack Platform includes components that integrate with the ML2 and Open vSwitch plugin to provide networking functionality in your deployment:

2.11.1. L3 agent

The L3 agent is part of the openstack-neutron package. Use network namespaces to provide each project with its own isolated layer 3 routers, which direct traffic and provide gateway services for the layer 2 networks. The L3 agent assists with managing these routers. The nodes that host the L3 agent must not have a manually-configured IP address on a network interface that is connected to an external network. Instead there must be a range of IP addresses from the external network that are available for use by OpenStack Networking. Neutron assigns these IP addresses to the routers that provide the link between the internal and external networks. The IP range that you select must be large enough to provide a unique IP address for each router in the deployment as well as each floating IP.

2.11.2. DHCP agent

The OpenStack Networking DHCP agent manages the network namespaces that are spawned for each project subnet to act as DHCP server. Each namespace runs a dnsmasq process that can allocate IP addresses to virtual machines on the network. If the agent is enabled and running when a subnet is created then by default that subnet has DHCP enabled.

2.11.3. Open vSwitch agent

The Open vSwitch (OVS) neutron plug-in uses its own agent, which runs on each node and manages the OVS bridges. The ML2 plugin integrates with a dedicated agent to manage L2 networks. By default, Red Hat OpenStack Platform uses ovs-agent, which builds overlay networks using OVS bridges.

2.12. Project and provider networks

The following diagram presents an overview of the project and provider network types, and illustrates how they interact within the overall OpenStack Networking topology:

2.12.1. Project networks

Users create project networks for connectivity within projects. Project networks are fully isolated by default and are not shared with other projects. OpenStack Networking supports a range of project network types:

- Flat - All instances reside on the same network, which can also be shared with the hosts. No VLAN tagging or other network segregation occurs.

- VLAN - OpenStack Networking allows users to create multiple provider or project networks using VLAN IDs (802.1Q tagged) that correspond to VLANs present in the physical network. This allows instances to communicate with each other across the environment. They can also communicate with dedicated servers, firewalls, load balancers and other network infrastructure on the same layer 2 VLAN.

- VXLAN and GRE tunnels - VXLAN and GRE use network overlays to support private communication between instances. An OpenStack Networking router is required to enable traffic to traverse outside of the GRE or VXLAN project network. A router is also required to connect directly-connected project networks with external networks, including the Internet; the router provides the ability to connect to instances directly from an external network using floating IP addresses. VXLAN and GRE type drivers are compatible with the ML2/OVS mechanism driver.

- GENEVE tunnels - GENEVE recognizes and accommodates changing capabilities and needs of different devices in network virtualization. It provides a framework for tunneling rather than being prescriptive about the entire system. Geneve defines the content of the metadata flexibly that is added during encapsulation and tries to adapt to various virtualization scenarios. It uses UDP as its transport protocol and is dynamic in size using extensible option headers. Geneve supports unicast, multicast, and broadcast. The GENEVE type driver is compatible with the ML2/OVN mechanism driver.

2.12.2. Provider networks

The OpenStack administrator creates provider networks. Provider networks map directly to an existing physical network in the data center. Useful network types in this category include flat (untagged) and VLAN (802.1Q tagged). You can also share provider networks among projects as part of the network creation process.

2.13. Layer 2 and layer 3 networking

When designing your virtual network, anticipate where the majority of traffic is going to be sent. Network traffic moves faster within the same logical network, rather than between multiple logical networks. This is because traffic between logical networks (using different subnets) must pass through a router, resulting in additional latency.

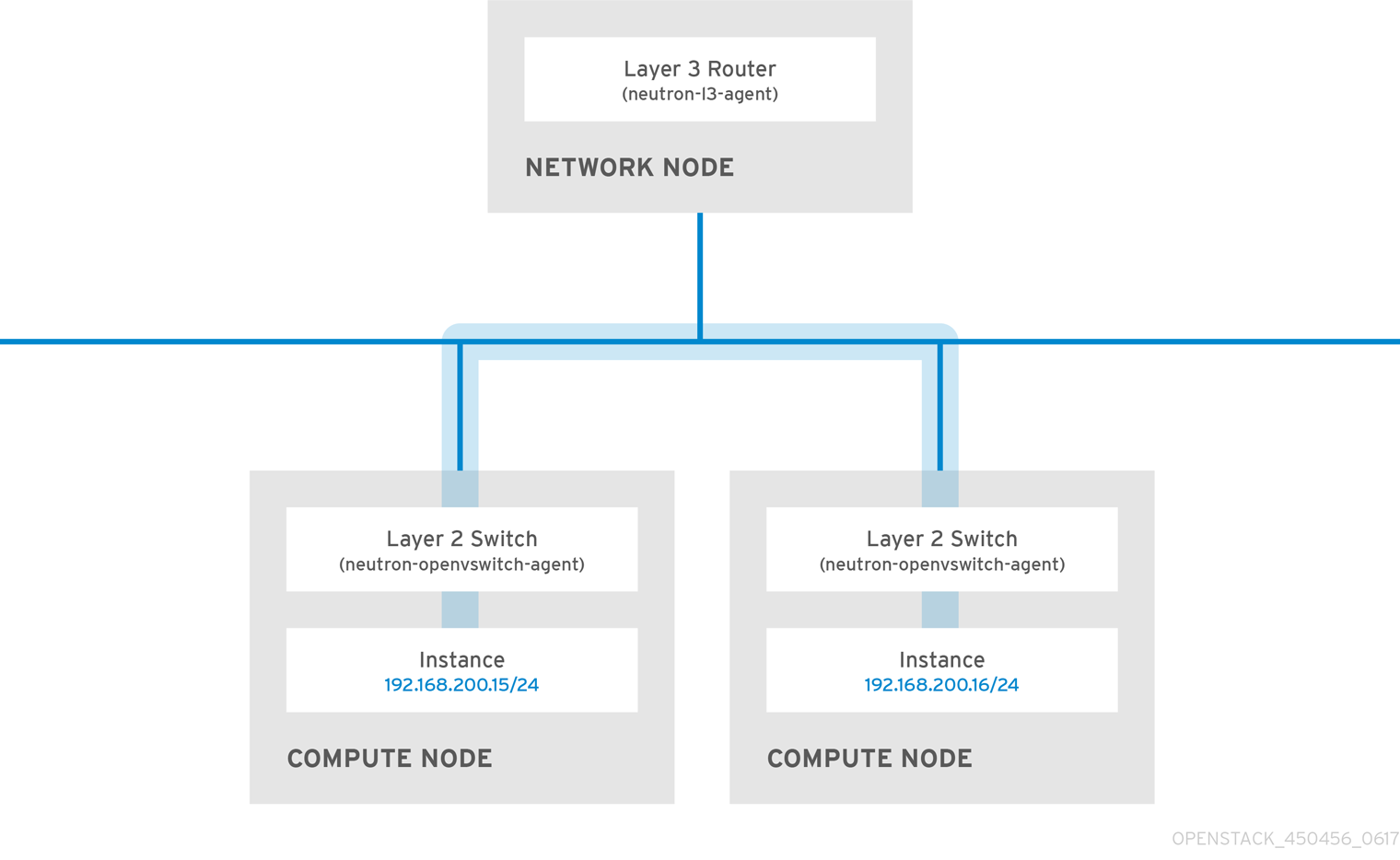

Consider the diagram below which has network traffic flowing between instances on separate VLANs:

Even a high performance hardware router adds latency to this configuration.

2.13.1. Use switching where possible

Because switching occurs at a lower level of the network (layer 2) it can function faster than the routing that occurs at layer 3. Design as few hops as possible between systems that communicate frequently.

For example, the following diagram depicts a switched network that spans two physical nodes, allowing the two instances to communicate directly without using a router for navigation first. Note that the instances now share the same subnet, to indicate that they are on the same logical network:

To allow instances on separate nodes to communicate as if they are on the same logical network, use an encapsulation tunnel such as VXLAN or GRE. Red Hat recommends adjusting the MTU size from end-to-end to accommodate the additional bits required for the tunnel header, otherwise network performance can be negatively impacted as a result of fragmentation. For more information, see Configure MTU Settings.

You can further improve the performance of VXLAN tunneling by using supported hardware that features VXLAN offload capabilities.