Chapter 1. Introduction

This guide provides information about how to construct a spine-leaf network topology for your Red Hat OpenStack Platform environment. It includes a full end-to-end scenario and example files to help you replicate a more extensive network topology within your own environment.

1.1. Spine-leaf networking

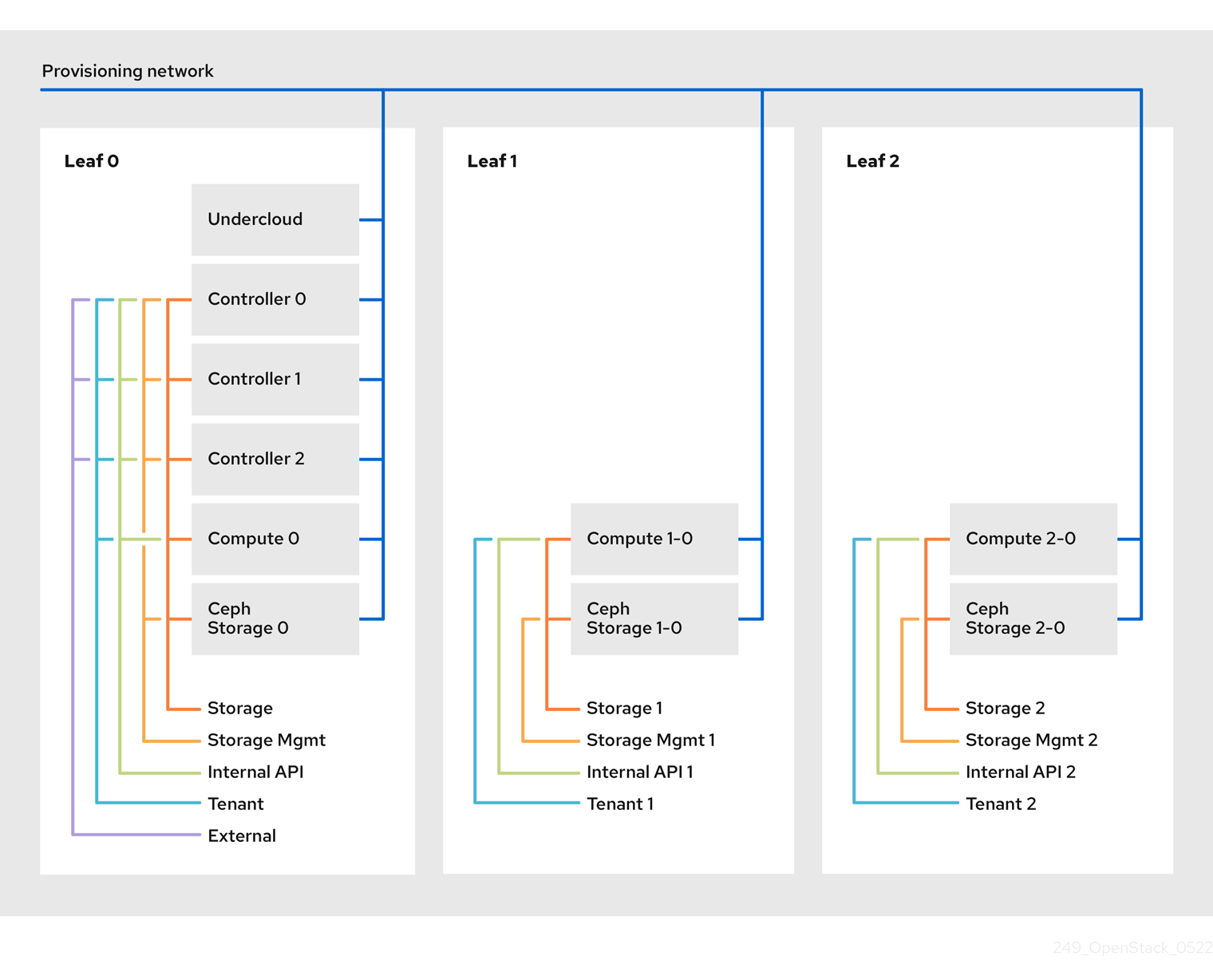

Red Hat OpenStack Platform’s composable network architecture allows you to adapt your networking to the popular routed spine-leaf data center topology. In a practical application of routed spine-leaf, a leaf is represented as a composable Compute or Storage role, usually in a data center rack, as shown in Figure 1.1, “Routed spine-leaf example”. The Leaf 0 rack has an undercloud node, controllers, and compute nodes. The composable networks are presented to the nodes, which have been assigned to composable roles. In this diagram:

- The `StorageLeaf` networks are presented to the Ceph storage and Compute nodes.

- The `NetworkLeaf` represents an example of any network you might want to compose.

Figure 1.1. Routed spine-leaf example

1.2. Network topology

The routed spine-leaf bare metal environment has one or more layer 3 capable switches, which route traffic between the isolated VLANs in the separate layer 2 broadcast domains.

The intention of this design is to isolate the traffic according to function. For example, if the controller nodes host an API on the Internal API network, when a compute node accesses the API it should use its own version of the Internal API network. For this routing to work, you need routes that force traffic destined for the Internal API network to use the required interface. This can be configured using supernet routes. For example, if you use 172.18.0.0/24 as the Internal API network for the controller nodes, you can use 172.18.1.0/24 for the second Internal API network, and 172.18.2.0/24 for the third, and so on. As a result, you can have a route pointing to the larger 172.18.0.0/16 supernet that uses the gateway IP on the local Internal API network for each role in each layer 2 domain.
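
The supernet idea above can be sketched with Python's standard `ipaddress` module. This is a minimal illustration, not a deployment tool: the leaf names and the choice of the first address in each subnet as the gateway are assumptions made for the example, while the subnets and the supernet come from the text.

```python
import ipaddress

# Supernet and per-leaf Internal API subnets taken from the paragraph above.
SUPERNET = ipaddress.ip_network("172.18.0.0/16")
LEAF_SUBNETS = {
    "leaf0": ipaddress.ip_network("172.18.0.0/24"),
    "leaf1": ipaddress.ip_network("172.18.1.0/24"),
    "leaf2": ipaddress.ip_network("172.18.2.0/24"),
}


def supernet_route(local_subnet, supernet=SUPERNET):
    """Return (destination, next_hop) for a node attached to local_subnet.

    Assumption: the gateway is the first address after the network address.
    Your environment may use a different gateway IP.
    """
    if not local_subnet.subnet_of(supernet):
        raise ValueError(f"{local_subnet} is not inside {supernet}")
    gateway = local_subnet.network_address + 1
    return str(supernet), str(gateway)


# Every leaf routes the same supernet through its own local gateway.
routes = {leaf: supernet_route(net) for leaf, net in LEAF_SUBNETS.items()}
for leaf, (destination, next_hop) in routes.items():
    print(f"{leaf}: {destination} via {next_hop}")
```

Under these assumptions, a node in the second layer 2 domain reaches any Internal API address in 172.18.0.0/16 through 172.18.1.1, so the traffic leaves on its local Internal API interface.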

This scenario uses the following networks:

| Network | Roles attached | Interface | Bridge | Subnet |
|---|---|---|---|---|
| Provisioning / Control Plane | All | nic1 | br-ctlplane (undercloud) | 192.168.10.0/24 |
| Storage | Controller | nic2 | | 172.16.0.0/24 |
| Storage Mgmt | Controller | nic3 | | 172.17.0.0/24 |
| Internal API | Controller | nic4 | | 172.18.0.0/24 |
| Tenant | Controller | nic5 | | 172.19.0.0/24 |
| External | Controller | nic6 | br-ex | 10.1.1.0/24 |

| Network | Roles attached | Interface | Bridge | Subnet |
|---|---|---|---|---|
| Provisioning / Control Plane | All | nic1 | br-ctlplane (undercloud) | 192.168.11.0/24 |
| Storage1 | Compute1, Ceph1 | nic2 | | 172.16.1.0/24 |
| Storage Mgmt1 | Ceph1 | nic3 | | 172.17.1.0/24 |
| Internal API1 | Compute1 | nic4 | | 172.18.1.0/24 |
| Tenant1 | Compute1 | nic5 | | 172.19.1.0/24 |

| Network | Roles attached | Interface | Bridge | Subnet |
|---|---|---|---|---|
| Provisioning / Control Plane | All | nic1 | br-ctlplane (undercloud) | 192.168.12.0/24 |
| Storage2 | Compute2, Ceph2 | nic2 | | 172.16.2.0/24 |
| Storage Mgmt2 | Ceph2 | nic3 | | 172.17.2.0/24 |
| Internal API2 | Compute2 | nic4 | | 172.18.2.0/24 |
| Tenant2 | Compute2 | nic5 | | 172.19.2.0/24 |

| Network | Subnet |
|---|---|
| Storage | 172.16.0.0/16 |
| Storage Mgmt | 172.17.0.0/16 |
| Internal API | 172.18.0.0/16 |
| Tenant | 172.19.0.0/16 |
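
The per-leaf subnets in the tables above follow a regular pattern: each is a /24 carved from the matching /16 supernet. The following sketch reproduces that pattern with the `ipaddress` module. It assumes that the three per-leaf tables correspond to leaf 0, leaf 1, and leaf 2 in order, and the helper name `leaf_subnet` is hypothetical.

```python
import ipaddress
from itertools import islice

# Supernets from the last table above.
SUPERNETS = {
    "Storage": "172.16.0.0/16",
    "Storage Mgmt": "172.17.0.0/16",
    "Internal API": "172.18.0.0/16",
    "Tenant": "172.19.0.0/16",
}


def leaf_subnet(supernet, leaf_index, prefix=24):
    """Return the leaf_index-th subnet of the given prefix length in supernet."""
    candidates = ipaddress.ip_network(supernet).subnets(new_prefix=prefix)
    subnet = next(islice(candidates, leaf_index, None), None)
    if subnet is None:
        raise ValueError(f"{supernet} has no /{prefix} subnet number {leaf_index}")
    return subnet


# Assumption: three leaves, numbered 0 to 2 as in this scenario.
plan = {
    name: [str(leaf_subnet(cidr, leaf)) for leaf in range(3)]
    for name, cidr in SUPERNETS.items()
}
for name, subnets in plan.items():
    print(f"{name}: {', '.join(subnets)}")
```

Keeping every leaf subnet inside its supernet is what allows a single route per network function to cover all current and future leaves.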

1.3. Spine-leaf requirements

To deploy the overcloud on a network with a layer-3 routed architecture, you must meet the following requirements:

- Layer-3 routing: The network infrastructure must have routing configured to enable traffic between the different layer-2 segments. This can be statically or dynamically configured.

- DHCP-Relay: Each layer-2 segment not local to the undercloud must provide `dhcp-relay`. You must forward DHCP requests to the undercloud on the provisioning network segment where the undercloud is connected.

The undercloud uses two DHCP servers: one for bare metal node introspection and another for deploying overcloud nodes. Make sure to read DHCP relay configuration to understand the requirements when configuring `dhcp-relay`.
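
As a rough illustration of the DHCP relay requirement, the following sketch works out which provisioning segments are not local to the undercloud and therefore need a relay. The provisioning subnets come from the scenario tables. The leaf names and the undercloud address `192.168.10.1` are assumptions for the example only.

```python
import ipaddress

# Provisioning / Control Plane subnets from the scenario tables.
# Assumption: they belong to leaf 0, leaf 1, and leaf 2 respectively.
PROVISIONING_SEGMENTS = {
    "leaf0": "192.168.10.0/24",
    "leaf1": "192.168.11.0/24",
    "leaf2": "192.168.12.0/24",
}

# Hypothetical undercloud address on the leaf 0 provisioning segment.
UNDERCLOUD_IP = "192.168.10.1"


def segments_needing_relay(segments, undercloud_ip):
    """Return the names of segments that do not contain the undercloud IP."""
    address = ipaddress.ip_address(undercloud_ip)
    return sorted(
        name
        for name, cidr in segments.items()
        if address not in ipaddress.ip_network(cidr)
    )


relay_segments = segments_needing_relay(PROVISIONING_SEGMENTS, UNDERCLOUD_IP)
print("Segments that must forward DHCP to the undercloud:", relay_segments)
```

With the assumed address, only the segment that contains the undercloud is served directly; the other two must relay DHCP requests to it.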

1.4. Spine-leaf limitations

- Some roles, such as the Controller role, use virtual IP addresses and clustering. The mechanism behind this functionality requires layer-2 network connectivity between these nodes. These nodes must all be placed within the same leaf.

- Similar restrictions apply to Networker nodes. The network service implements highly-available default paths in the network using Virtual Router Redundancy Protocol (VRRP). Since VRRP uses a virtual router IP address, you must connect master and backup nodes to the same L2 network segment.

- When using tenant or provider networks with VLAN segmentation, you must share the particular VLANs between all Networker and Compute nodes.

It is possible to configure the network service with multiple sets of Networker nodes. Each set shares routes for its networks, and VRRP provides highly-available default paths within each set of Networker nodes. In such a configuration, all Networker nodes that share networks must be on the same L2 network segment.
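
The placement limitation for roles that need layer-2 connectivity can be checked before deployment with a small script. The sketch below is illustrative only: the inventory format, the node names, and the `leaf_violations` helper are hypothetical and are not part of Red Hat OpenStack Platform. It covers only the simple case in which all nodes of a role must share one leaf, not the case of multiple Networker sets.

```python
from collections import defaultdict

# Roles whose nodes must all sit in one leaf (from the limitations above).
SAME_LEAF_ROLES = {"Controller"}

# Hypothetical inventory: one entry per node with its role and leaf.
NODES = [
    {"name": "controller-0", "role": "Controller", "leaf": "leaf0"},
    {"name": "controller-1", "role": "Controller", "leaf": "leaf0"},
    {"name": "controller-2", "role": "Controller", "leaf": "leaf1"},
    {"name": "compute-0", "role": "Compute1", "leaf": "leaf1"},
    {"name": "compute-1", "role": "Compute2", "leaf": "leaf2"},
]


def leaf_violations(nodes, same_leaf_roles=SAME_LEAF_ROLES):
    """Return {role: [leaves]} for restricted roles spread over several leaves."""
    leaves_by_role = defaultdict(set)
    for node in nodes:
        leaves_by_role[node["role"]].add(node["leaf"])
    return {
        role: sorted(leaves)
        for role, leaves in leaves_by_role.items()
        if role in same_leaf_roles and len(leaves) > 1
    }


violations = leaf_violations(NODES)
for role, leaves in violations.items():
    print(f"{role} nodes span multiple leaves: {', '.join(leaves)}")
```

In this sample inventory, `controller-2` is deliberately placed in a different leaf so that the check reports a violation for the Controller role.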