Experience Red Hat OpenShift Virtualization: Advanced operations and automation

Red Hat® OpenShift® Virtualization allows organizations to run and deploy new and existing virtual machines on a modernized platform. Learn how to manage your environment and perform critical operational tasks often required of virtual machine administrators from day-2 onward.

This learning path is for operations teams or system administrators.

Developers may want to check out Foundations of OpenShift on developers.redhat.com.

Advanced networking and security

With the security audit underway, our virtual machine (VM)-based application architecture is becoming more distributed, spanning multiple namespaces, projects, and even different cloud environments. Our VMs and new containerized services need to communicate seamlessly. We need clear visibility and precise control over network traffic, especially as managing both virtual machines and containers makes it more complex to keep operations efficient and secure. In this section, we will show how to use the Network Observability Operator to visualize our network traffic, how to shape and secure our network by implementing network policies, and how to automatically segregate our projects and their virtual workloads using user-defined networks (UDNs).

Prerequisites:

- A computer with a web browser and internet access

- A Chromium-based browser is recommended, as some copy/paste functions don’t work in Firefox for the time being

- (Recommended for non-US users) Familiarity with special characters in other countries’ keyboard layouts, since the remote access console uses the US keyboard by default

In this resource, you will:

- Leverage the Network Observability Operator to visualize network traffic

- Shape and secure the network by implementing network policies

- Automatically segregate projects and their virtual workloads using UDN

Configure network policies to manage cluster egress

In some secure environments, network traffic is not allowed to leave the cluster without first passing through a proxy or some other secure gateway. However, many network configurations allow cluster egress by default. In this section of the lab, we will configure a network policy that secures our cluster by blocking egress to outside websites.

Confirm network egress on virtual machines

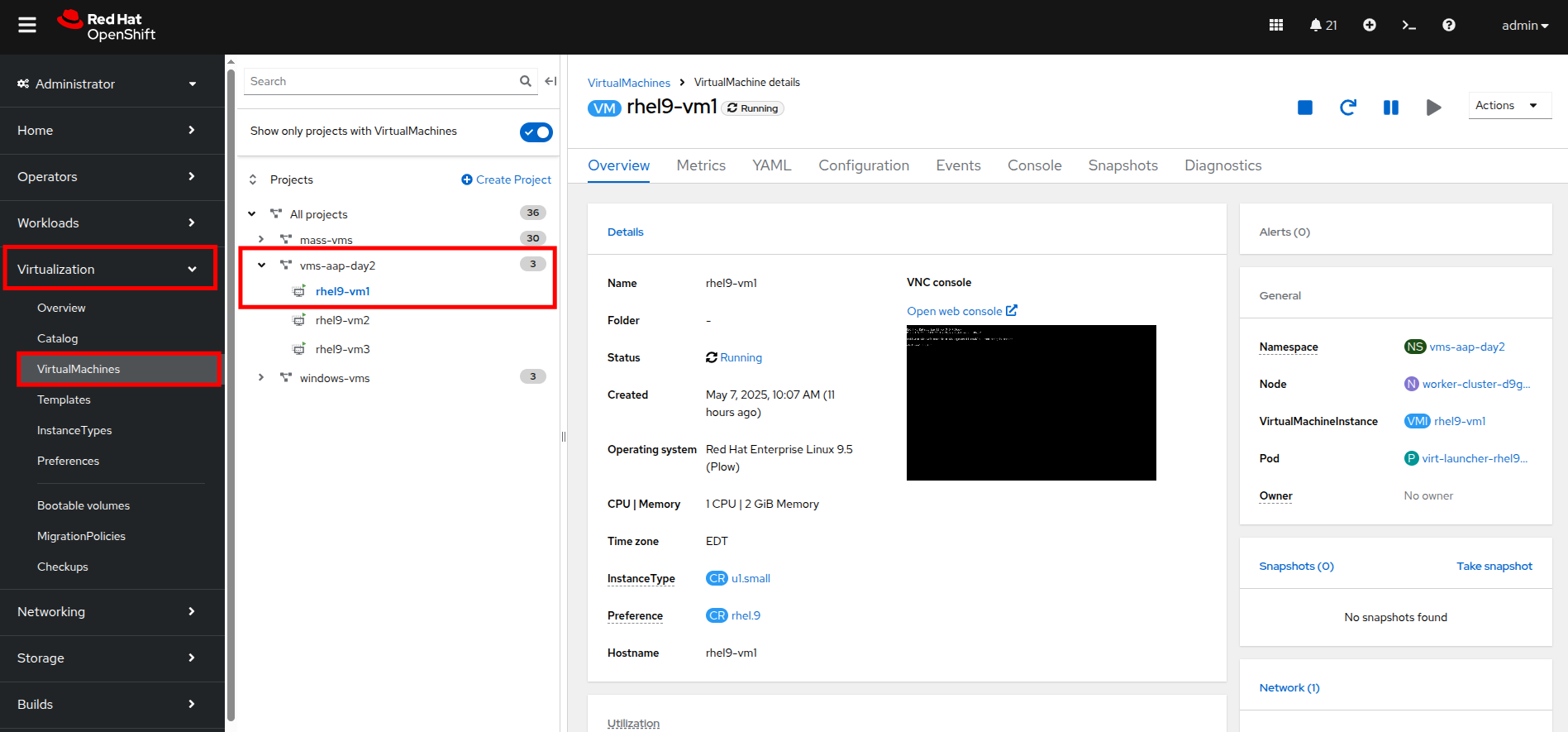

- On the left side navigation menu, click on Virtualization then click VirtualMachines, and select the rhel9-vm1 virtual machine under the vms-aap-day2 project in the center column.

Figure 1. View VM

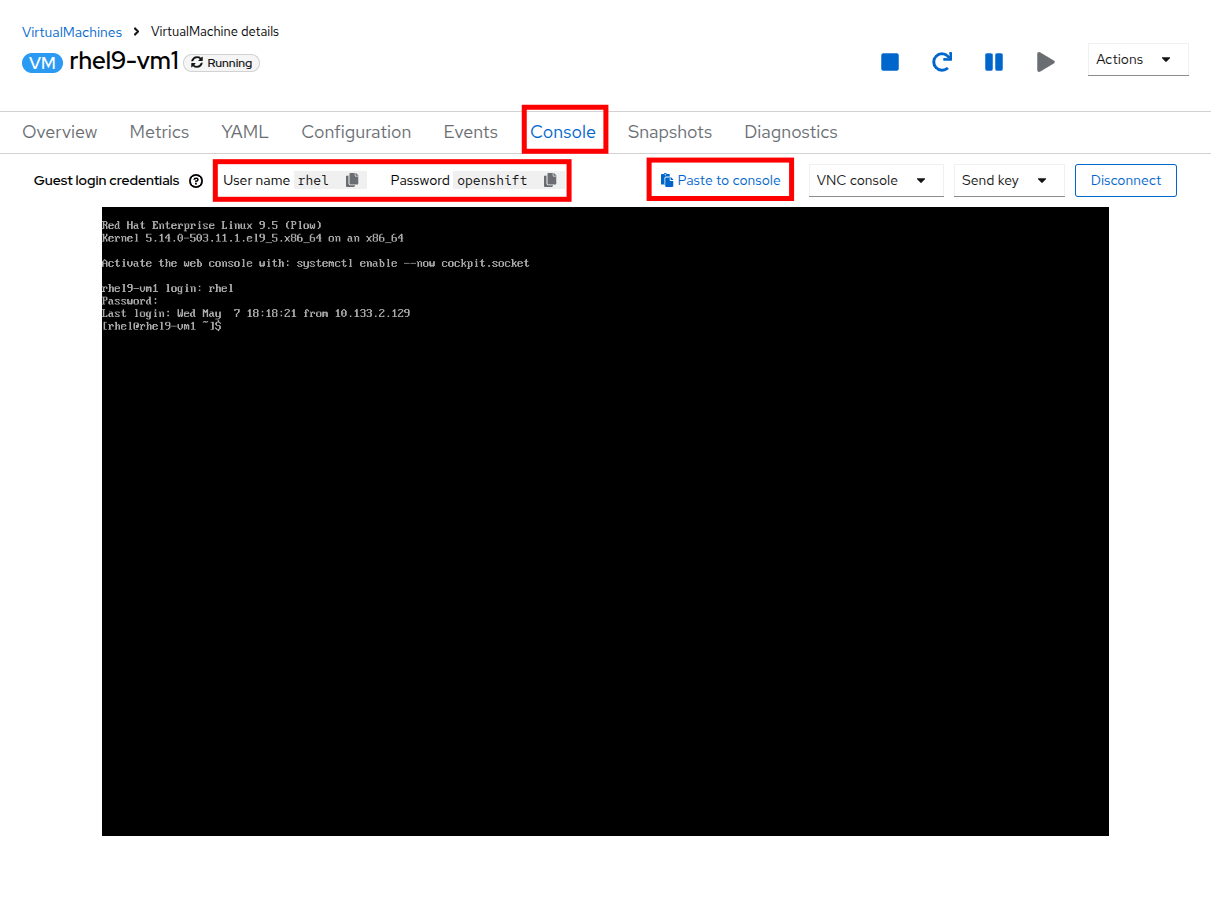

- Click on the Console tab and use the provided credentials, as well as the built-in copy/paste functionality, to authenticate to the VM.

Figure 2. Log in to VM

| Note: You may see a popup that asks you to enable the copy/paste functionality. If prompted, click Allow. |

Once you are logged in, execute the following command to start an outbound ping to Google:

ping www.google.com

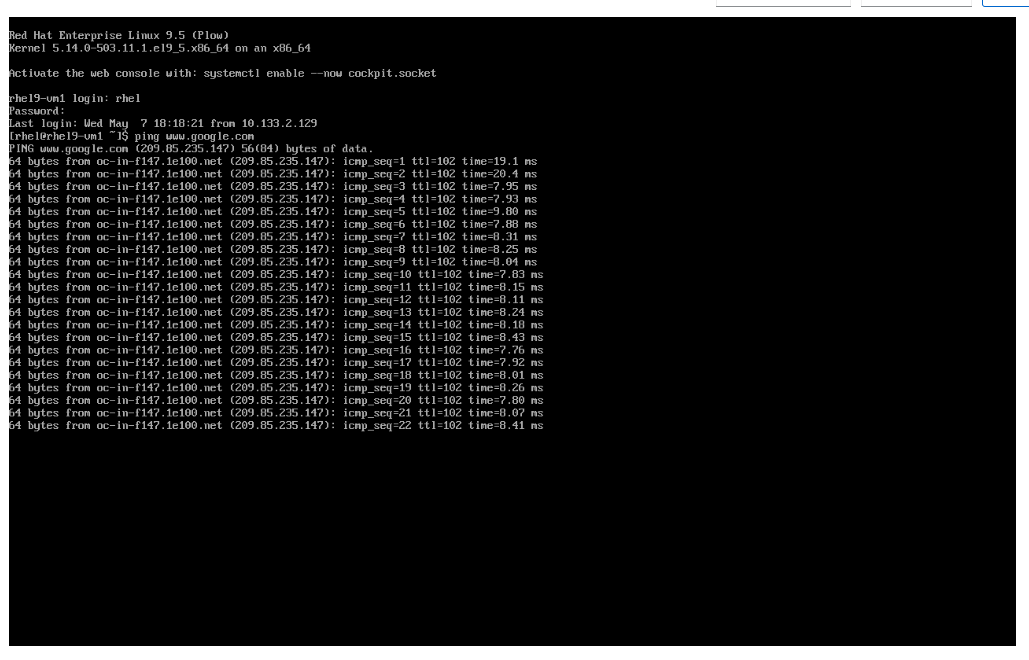

Figure 3. Ping Google

- Press Control+C to stop the ping.

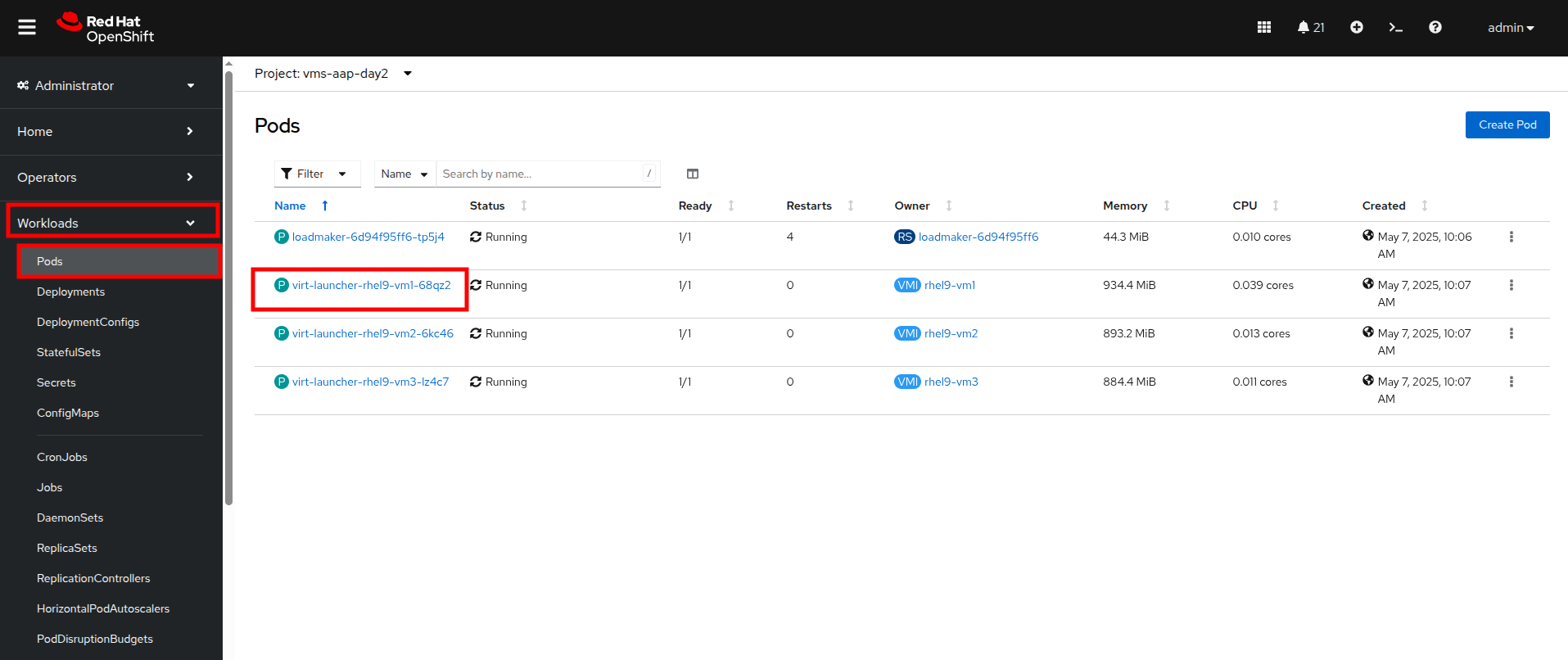

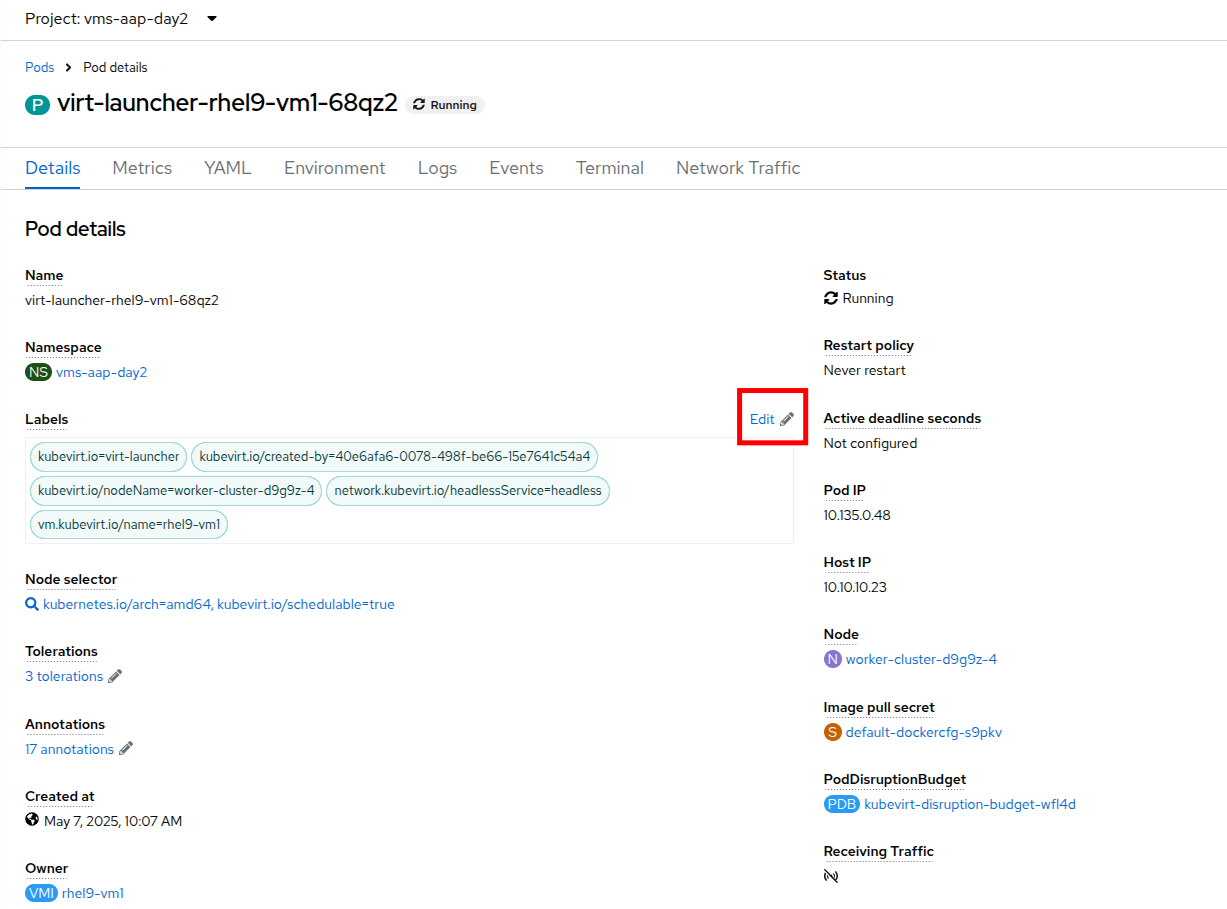

- From the left side navigation menu, click on Workloads and then Pods, and then click on the virt-launcher pod that represents the rhel9-vm1 VM to view the pod details.

Figure 4. Select pod

| Note: Pod names are randomly generated, so yours will most likely not match the screenshot above. |

- On the Pod details page, click the Edit option in the Labels section.

Figure 5. Edit pod details

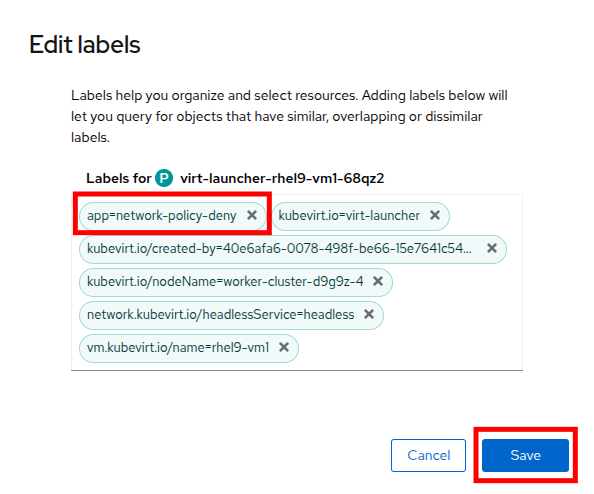

- An Edit labels window will appear. Click into the center box, add the label app=network-policy-deny, press the Enter key to commit it, and then click the Save button.

Figure 6. Edit pod labels

- Repeat the same process for the rhel9-vm2 virtual machine.

Create the network policy

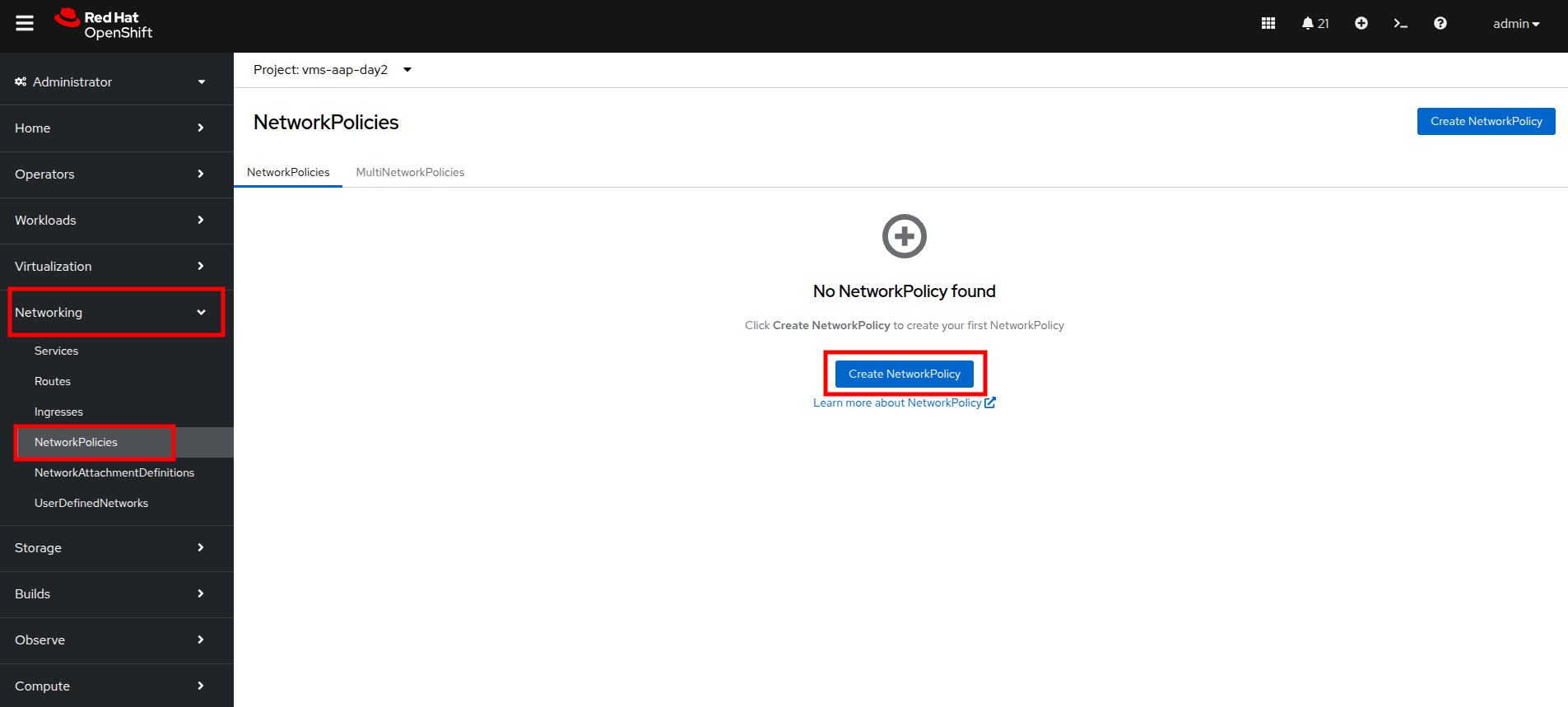

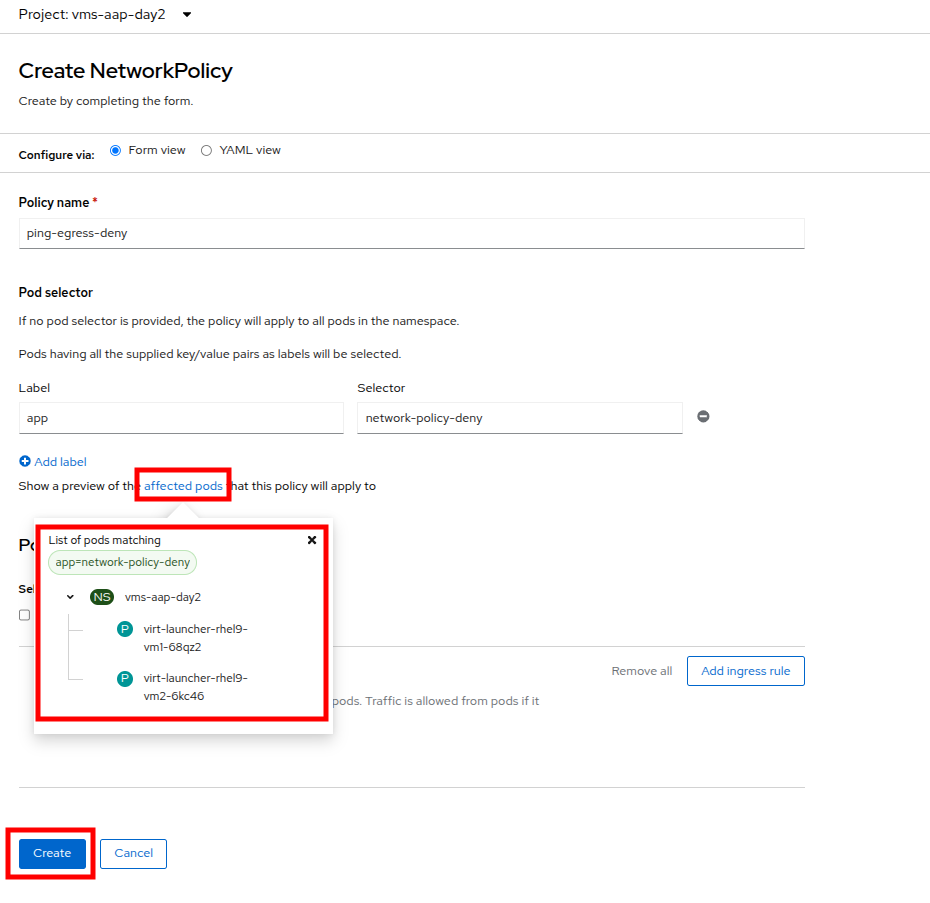

- From the left side navigation menu, click on Networking and then click on NetworkPolicies, then click on the Create NetworkPolicy button in the center of the screen.

Figure 7. Network policy

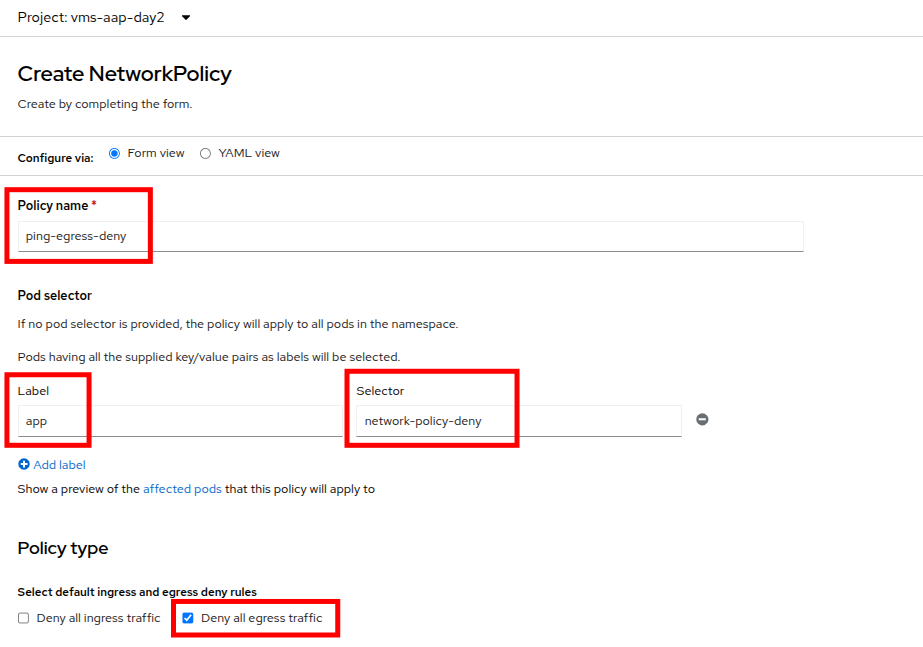

- In NetworkPolicies, fill out the following fields:

- Policy name: ping-egress-deny

- Key: app

- Value: network-policy-deny

- Deny all egress traffic checkbox: checked

Figure 8. Configure network policy

- With the values filled out, you can click the affected pods link under the Pod selector section to show which pods are affected by this policy. Once you are satisfied with your settings, click the Create button.

Figure 9. Affected pods

- With the policy created, go test it out.
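For readers who prefer to see the policy as a manifest, the form fields above can be sketched in Python as follows. This is a minimal illustration, not the exact object the console generates: the helper name `build_egress_deny_policy` is hypothetical, the namespace is assumed to be the vms-aap-day2 project that holds the VMs, and the "Deny all egress traffic" checkbox is assumed to correspond to declaring the `Egress` policy type with no egress rules.

```python
import json


def build_egress_deny_policy(name, namespace, label_key, label_value):
    """Return a NetworkPolicy manifest that denies all egress for matching pods.

    Hypothetical helper for illustration only.
    """
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Select only the pods carrying the label added to the virt-launcher pods.
            "podSelector": {"matchLabels": {label_key: label_value}},
            # Declaring the Egress policy type without any egress rules
            # leaves no allowed outbound traffic for the selected pods.
            "policyTypes": ["Egress"],
        },
    }


policy = build_egress_deny_policy(
    name="ping-egress-deny",
    namespace="vms-aap-day2",  # assumption: the project that holds rhel9-vm1 and rhel9-vm2
    label_key="app",
    label_value="network-policy-deny",
)

# JSON is a subset of YAML, so the printed document can be saved to a file
# and reviewed or applied like any other manifest.
print(json.dumps(policy, indent=2))
```

Because the policy selects pods by label, only the two virt-launcher pods labeled earlier are affected; other workloads in the project keep their default egress behavior.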

Confirm the effects of the network policy on the VM

- Return to the console of the rhel9-vm1 virtual machine to test our policy.

- Using the left side navigation menu, click on Virtualization, then VirtualMachines, and select rhel9-vm1 from the center column.

- Click the Console tab of the VM. You should still be logged in from before.

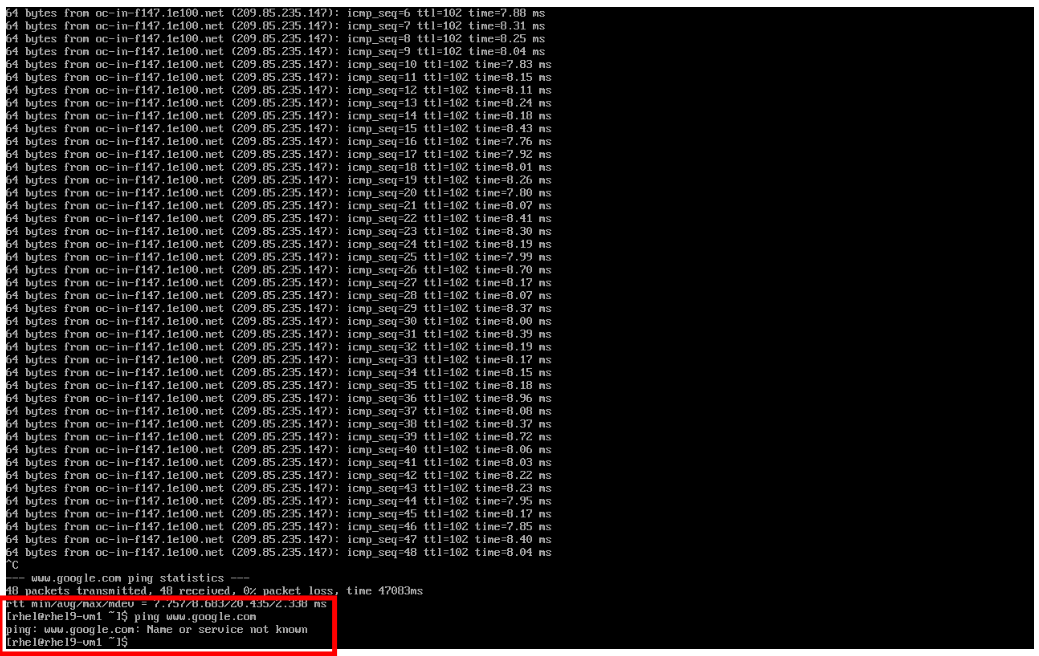

Copy and paste the following command to test out the new network policy:

ping www.google.com

Figure 10. Egress blocked

- Egress from the VM is completely blocked, including DNS lookups, so the hostname cannot even be resolved.

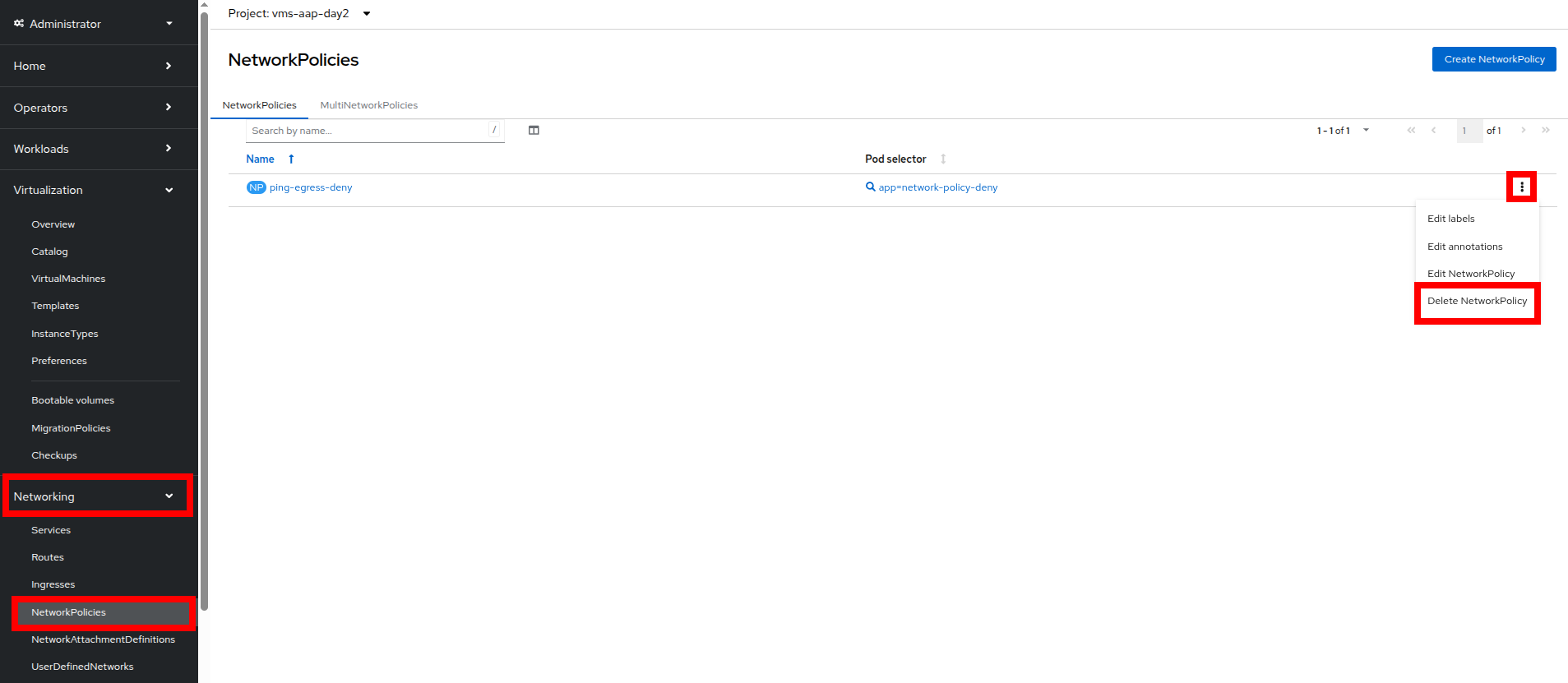

- Once you have completed this exercise, return to Networking and NetworkPolicies. Delete the ping-egress-deny policy using the three-dot menu on the right, and confirm in the pop-up box.

Figure 11. Delete policy

Configure UDN to manage VM traffic between projects

While it may seem like a basic security configuration to ensure that virtual machines are unable to reach unwanted external websites, network policy also provides us with a diverse set of tools that allow us to shape traffic between our VMs and the projects in which they reside.

| Note: For this section we are going to make use of user-defined networks (UDNs) and configure our three namespaces for dev, test, and production so that they have limited network traffic between the resources defined in each one. Currently, for a namespace to make use of primary UDN functionality, it must have a specialized label applied at creation that allows it to override the default cluster networking configuration. |

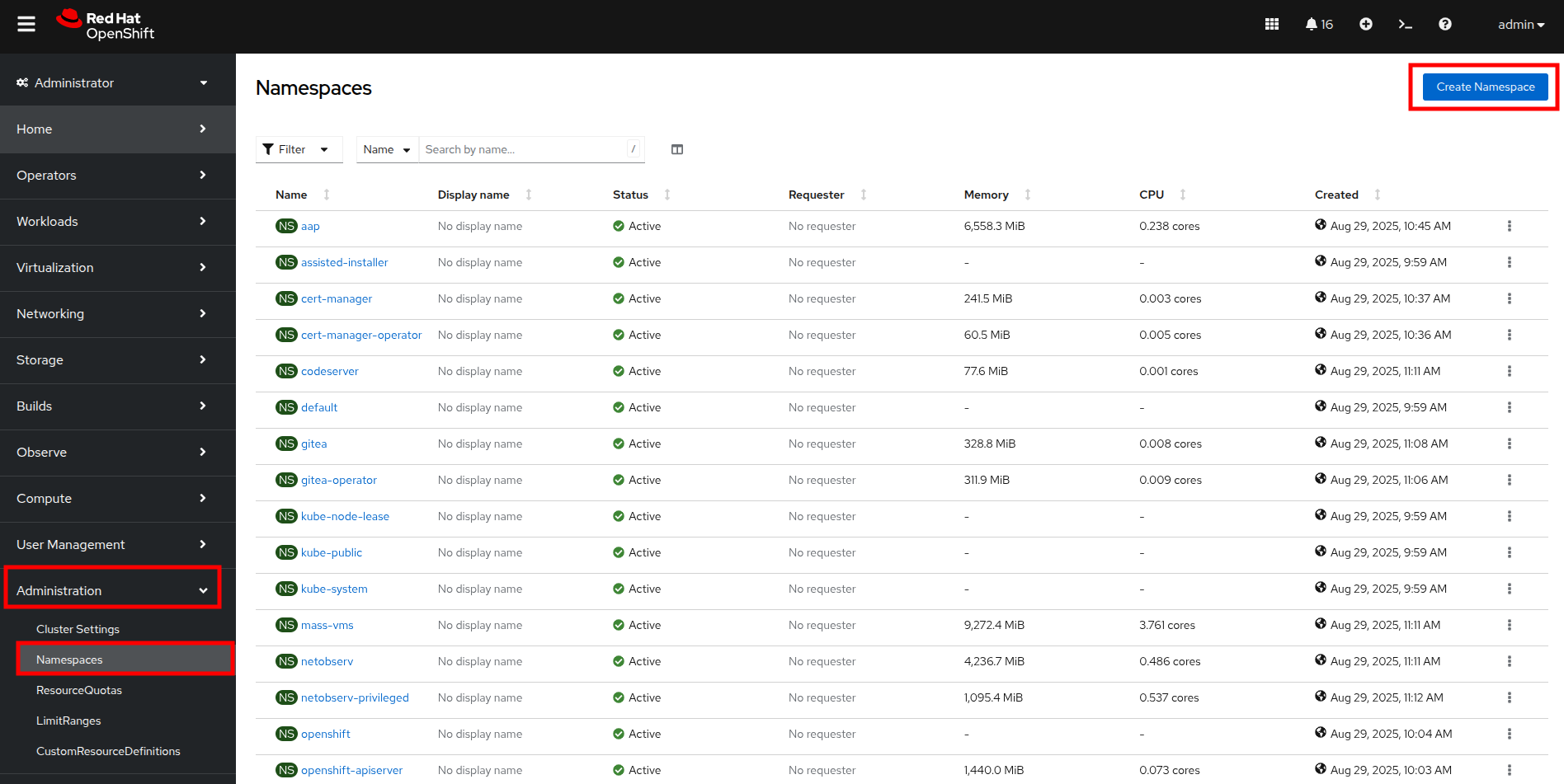

Create UDN enabled namespaces

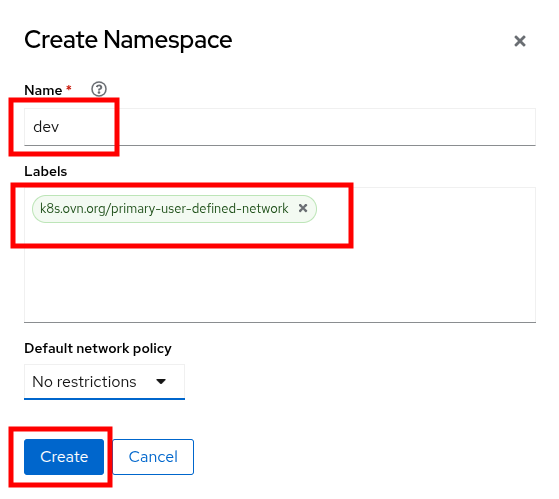

- From your OpenShift console, click on Administration, followed by Namespaces and the Create Namespace button in the corner.

Figure 12. Create namespace

- The Create Namespace prompt will open, giving you the option to enter a name and add any custom labels to the namespace. Type in the name dev, add the following label: k8s.ovn.org/primary-user-defined-network, and click the Create button.

Figure 13. Create Namespace dialog

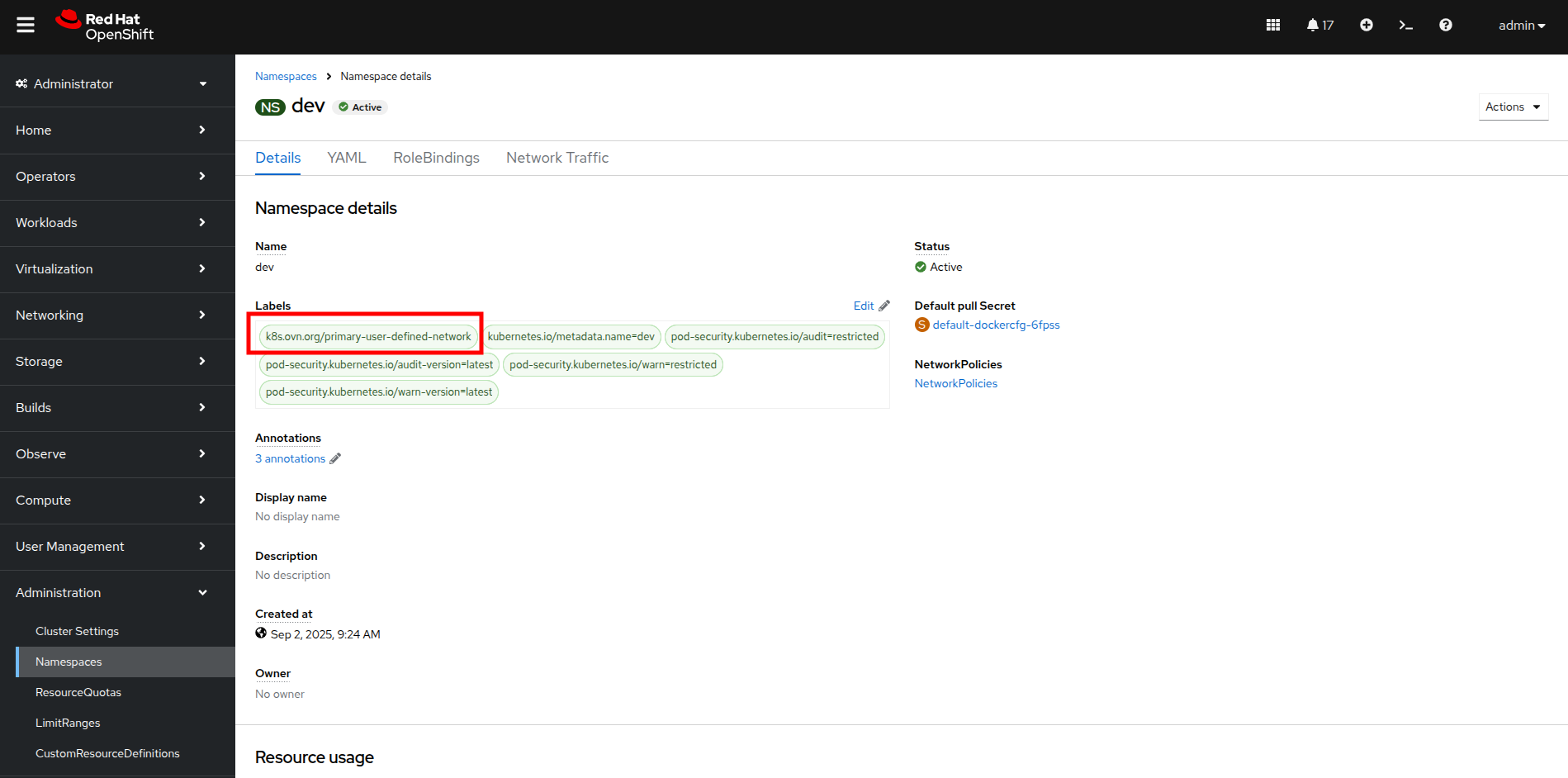

- When the namespace is created, you will be taken to the Namespace details page where you should see the label you applied listed.

Figure 14. Namespace details

- Repeat these steps to create the test and prod namespaces as well.
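The three namespaces created above can also be described as manifests. The following Python sketch builds them; the helper name `build_udn_namespace` is hypothetical, and the label is assumed to carry an empty value, since the steps above only enter the label key.

```python
import json

# Label key taken from the steps above; the empty value is an assumption.
UDN_LABEL = "k8s.ovn.org/primary-user-defined-network"


def build_udn_namespace(name):
    """Return a Namespace manifest carrying the primary UDN label (illustrative helper)."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": name, "labels": {UDN_LABEL: ""}},
    }


namespaces = [build_udn_namespace(name) for name in ("dev", "test", "prod")]

for namespace in namespaces:
    # One JSON document per namespace; JSON is also valid YAML.
    print(json.dumps(namespace))
```

Since the label has to be present when the namespace is created, generating the manifests up front is one way to avoid creating a namespace without it by mistake.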

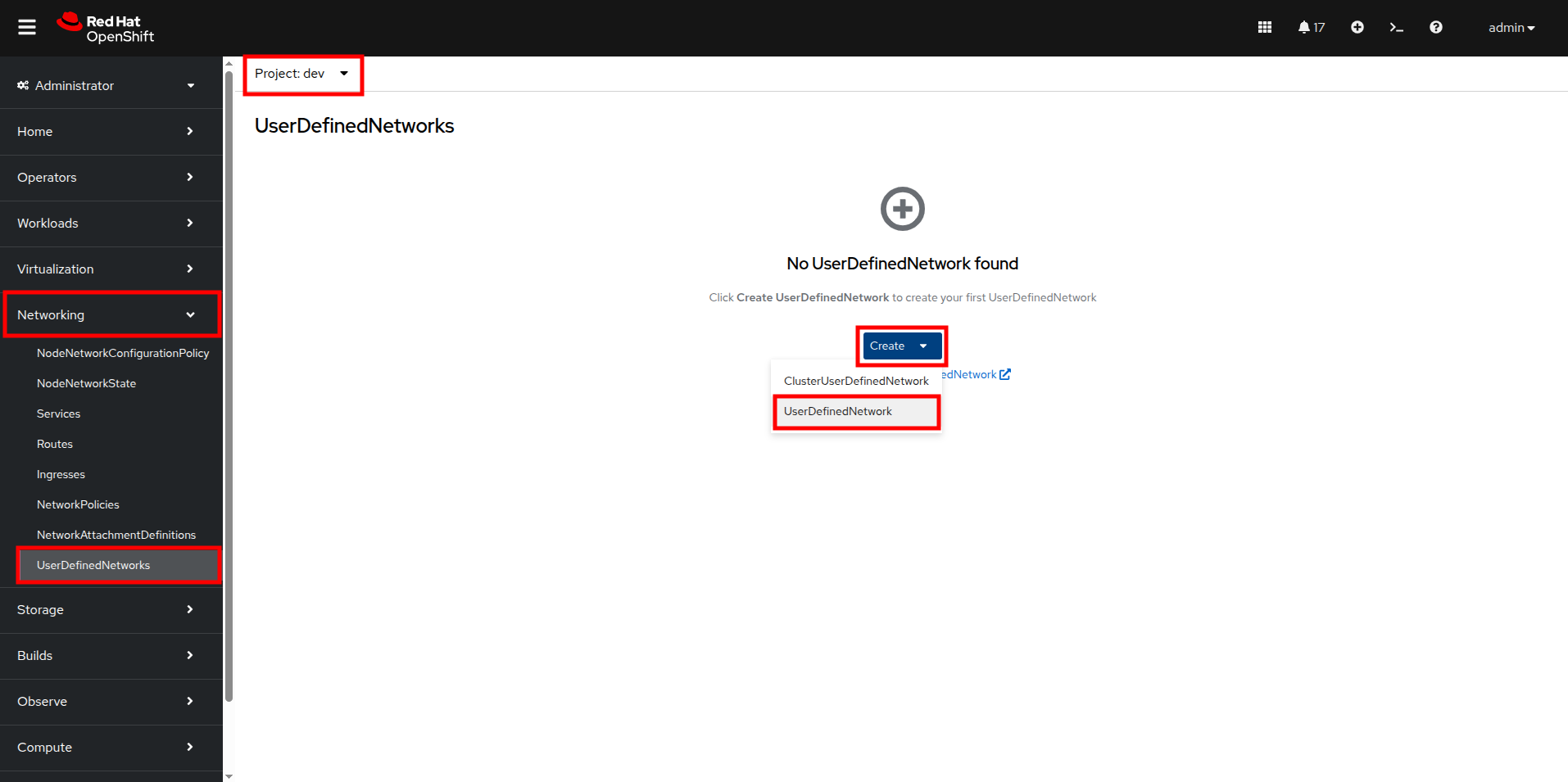

Create UDNs for each namespace

- Click on Networking followed by UserDefinedNetworks. Confirm that you are in your dev project and click the Create button in the center of the screen. Then select UserDefinedNetwork from the dropdown menu.

Figure 15. Create UDN

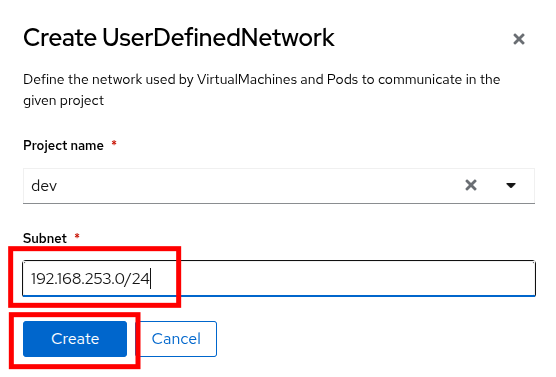

- In the dialog box that appears, your project name will already be defined, so you just need to enter the subnet you want to use. Type 192.168.253.0/24 into the box for the dev project, and click the Create button.

Figure 16. Create UDN dialog

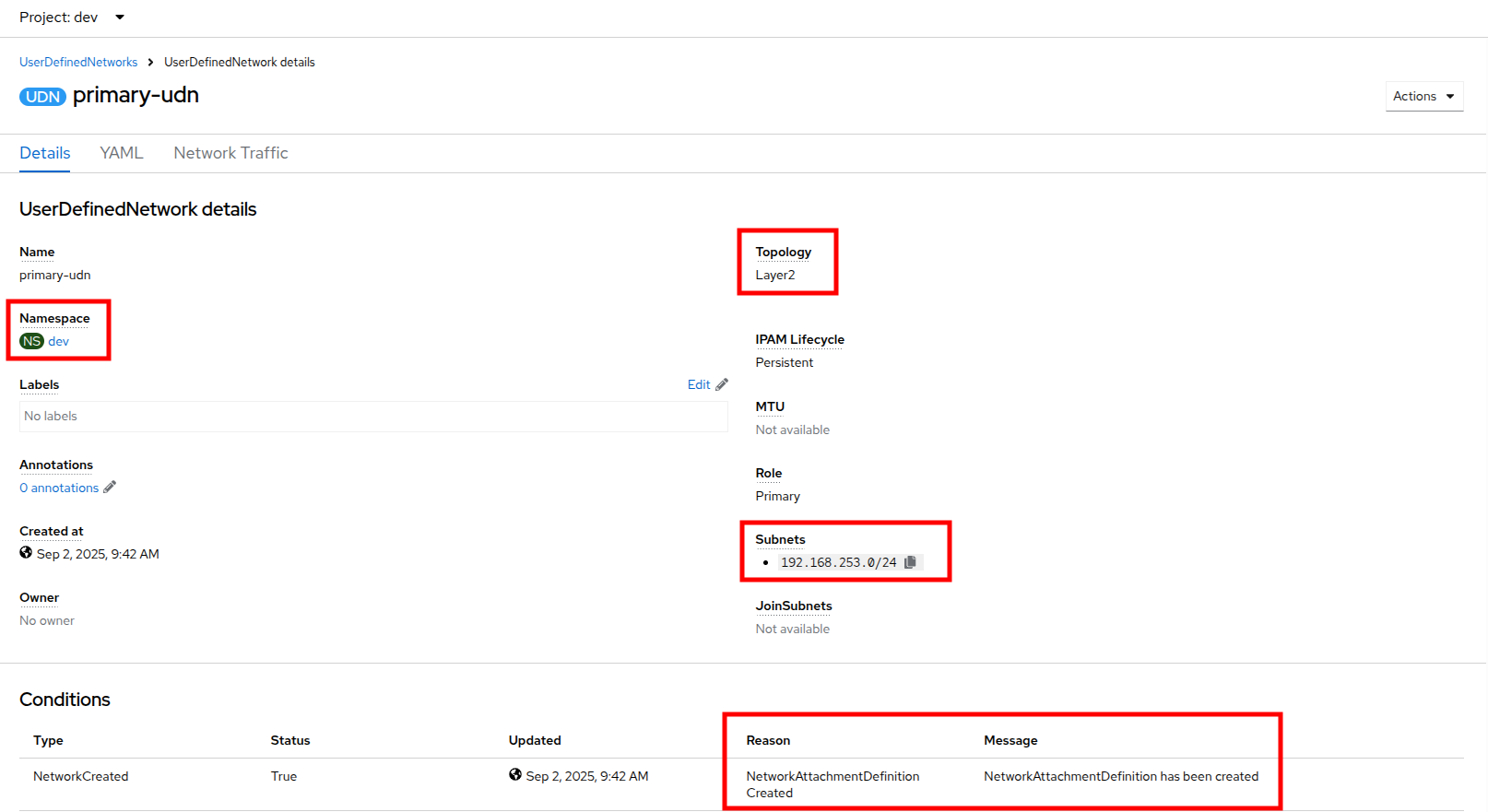

- You will be taken to the UserDefinedNetwork details page which shows information about the UDN you just created, including details such as its namespace, topology, and subnet. It also shows you that it has automatically created a Network Attachment Definition for you to connect your virtual machines.

Figure 17. UDN details

- Repeat these steps to create a UDN in the test namespace with subnet 192.168.254.0/24 and in the prod namespace with the subnet 192.168.255.0/24.
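As a rough sketch, the three UDNs could be expressed as manifests like the ones built below. Several details are assumptions rather than something this lab states: the helper `build_udn` and the `<namespace>-udn` naming are hypothetical, the topology is assumed to be Layer2 with the Primary role, and the console may set additional fields (for example IPAM settings) that are not shown here. Compare against the YAML tab of the UDN you created before relying on it.

```python
import ipaddress
import json

# Subnets used in this lab, keyed by namespace.
UDN_SUBNETS = {
    "dev": "192.168.253.0/24",
    "test": "192.168.254.0/24",
    "prod": "192.168.255.0/24",
}


def build_udn(namespace, subnet):
    """Return a UserDefinedNetwork manifest for one namespace (illustrative helper)."""
    # Raises ValueError if the subnet is malformed or has host bits set.
    ipaddress.ip_network(subnet)
    return {
        "apiVersion": "k8s.ovn.org/v1",
        "kind": "UserDefinedNetwork",
        # Hypothetical naming scheme; any valid name would do.
        "metadata": {"name": f"{namespace}-udn", "namespace": namespace},
        "spec": {
            # Assumed topology and role for a primary network.
            "topology": "Layer2",
            "layer2": {"role": "Primary", "subnets": [subnet]},
        },
    }


udns = [build_udn(namespace, subnet) for namespace, subnet in UDN_SUBNETS.items()]

for udn in udns:
    print(json.dumps(udn, indent=2))
```

Validating the CIDR with the standard `ipaddress` module catches typos such as 192.168.253.1/24 before anything reaches the cluster.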

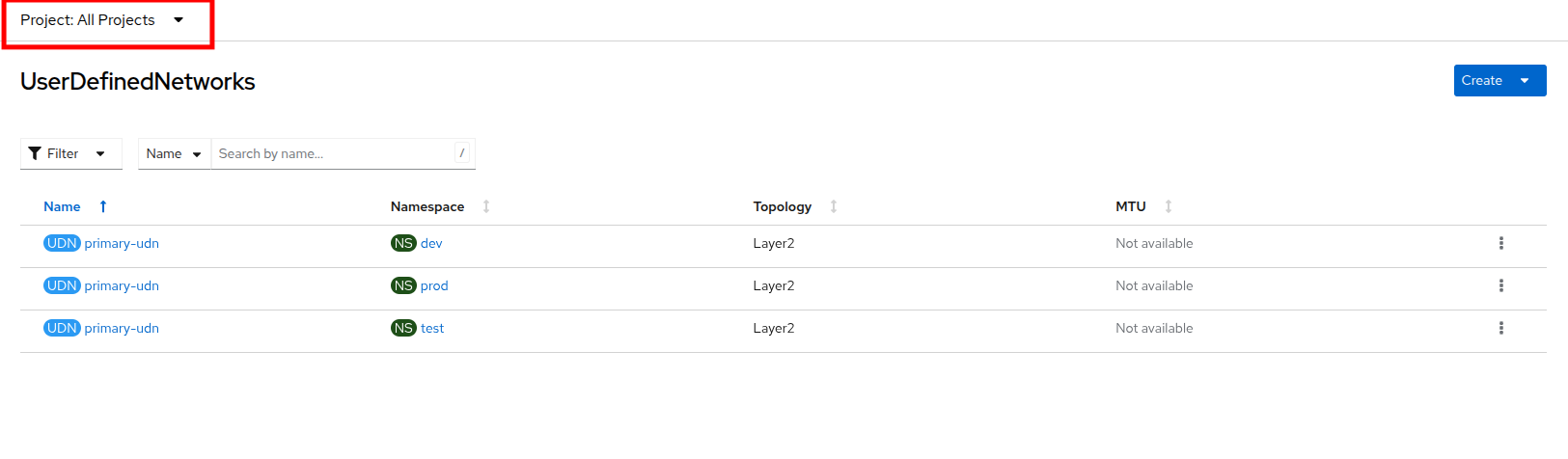

- Once all three are created, you should be able to click the Project dropdown at the top of the page and select All Projects to see them all listed.

Figure 18. UDN list

Create VMs and attach them to the UDNs

Now that we have defined our namespaces and created our UDNs, we need to put them into practice by creating a few virtual machines to test out connectivity.

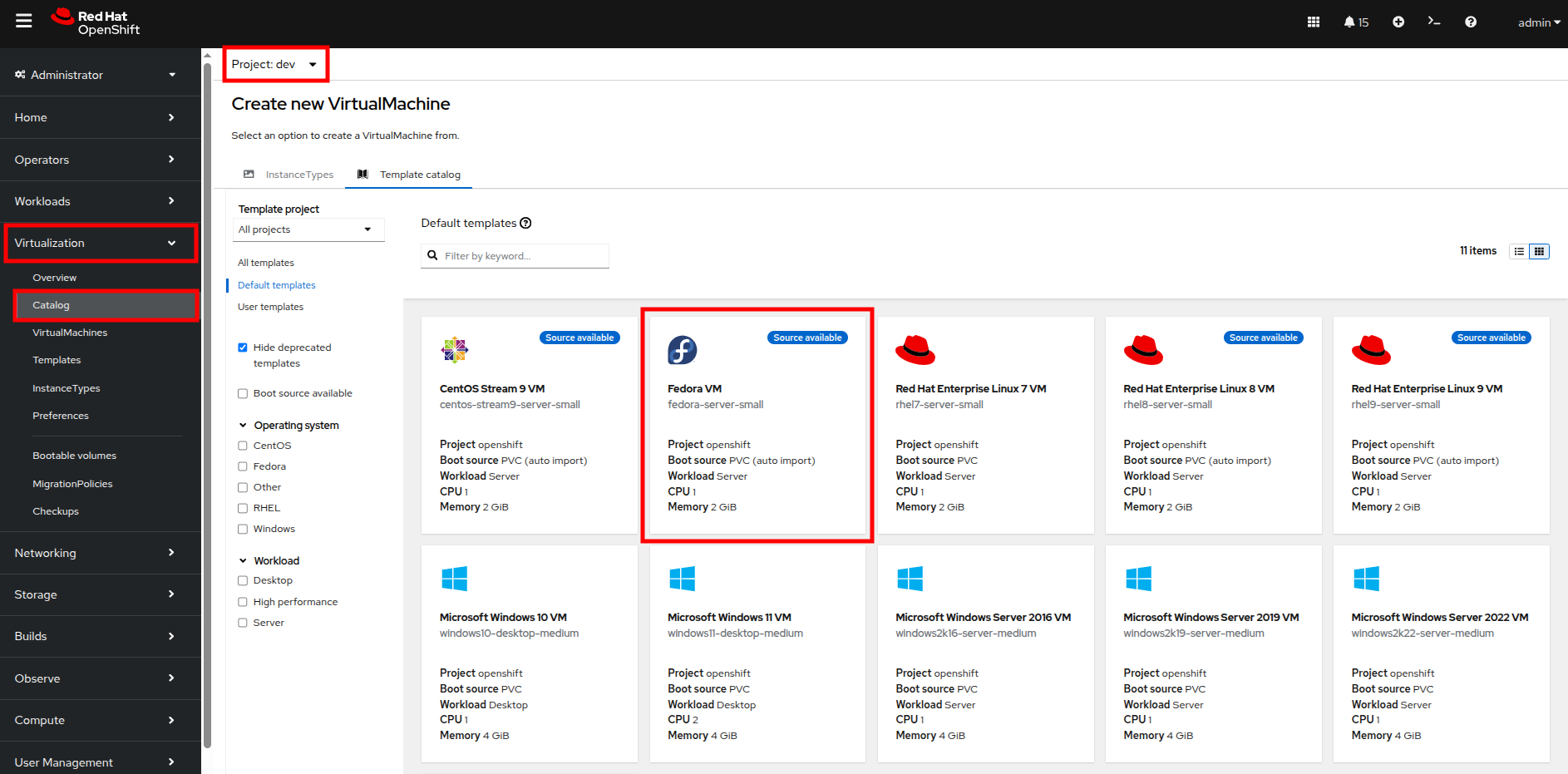

- Click on Virtualization and Catalog. Ensure that you are in the dev project, and select the tile for the Fedora VM template.

Figure 19. VM Catalog

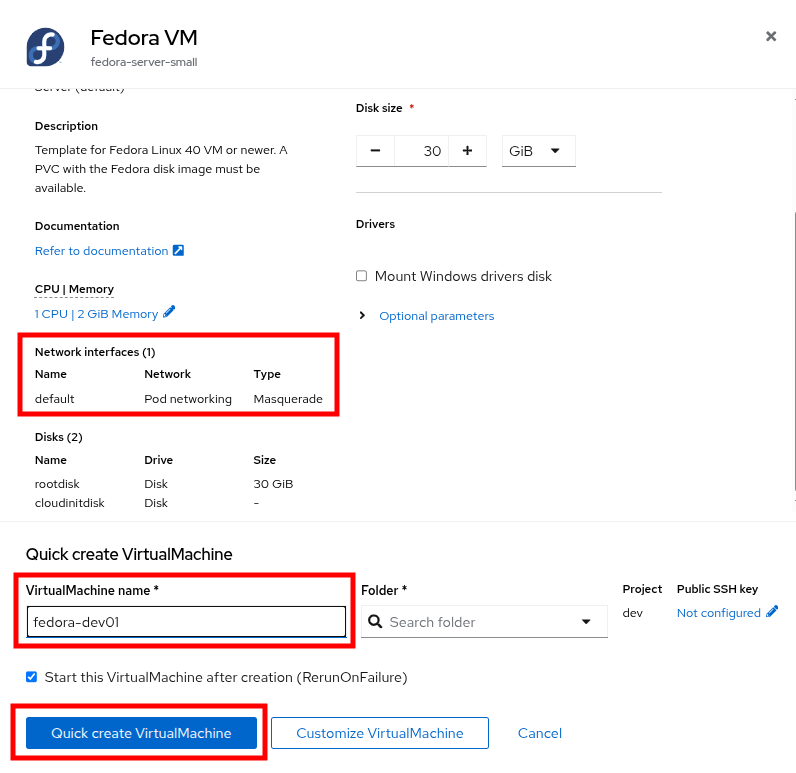

- After clicking, you will be presented with the Fedora VM dialog. Notice that the VM template shows that it is connected to the OpenShift pod network by default. However, because of the label we placed on the namespace, the VM will default to using the UDN. Name your first VM fedora-dev01, and click on the Quick create VirtualMachine button.

Figure 20. Create VM dialog

- With this VM started, please repeat the steps to create an additional VM fedora-dev02 in the dev namespace, followed by fedora-test01 in the test namespace, and fedora-prod01 in the prod namespace.

- With the list of VMs created we can now test how traffic flows and doesn’t flow between the VMs both within and between projects.

Test VM connectivity

To validate connectivity within namespaces we can just attempt a few simple pings between our virtual guests.

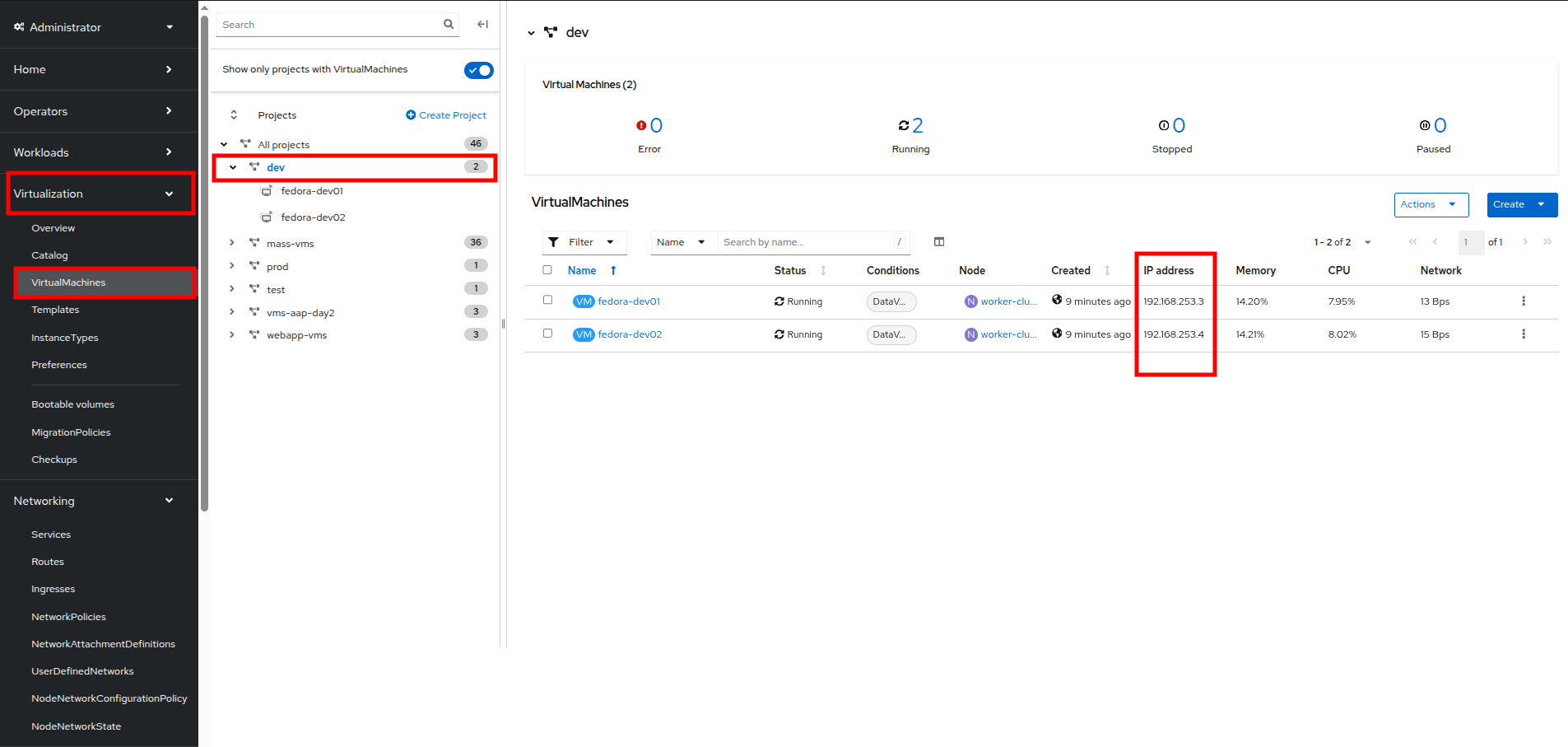

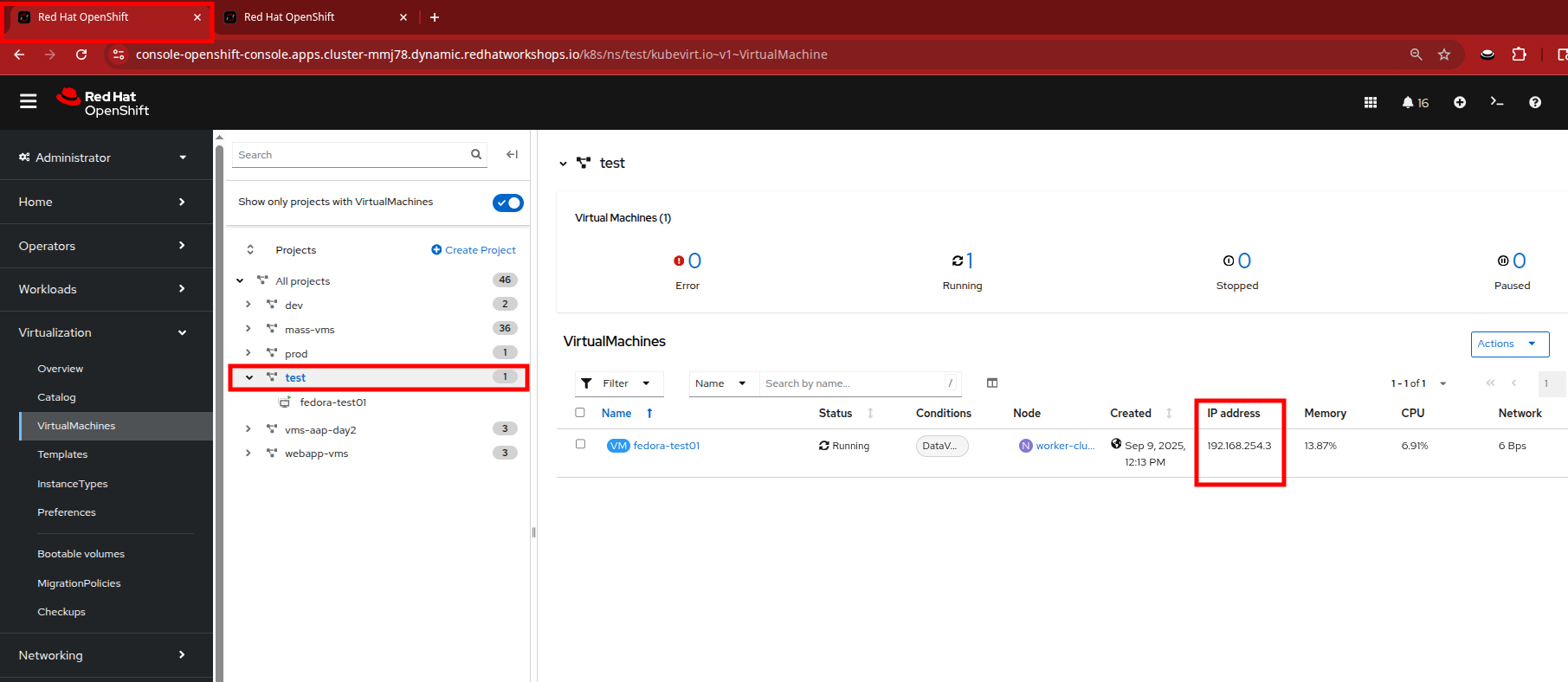

- Click on Virtualization and VirtualMachines in the left side menu, and click on your dev namespace to see the two VMs that you provisioned there.

- Notice that they both have IP addresses from your UDN subnet. Make note of these two IP addresses.

Figure 21. Dev VMs

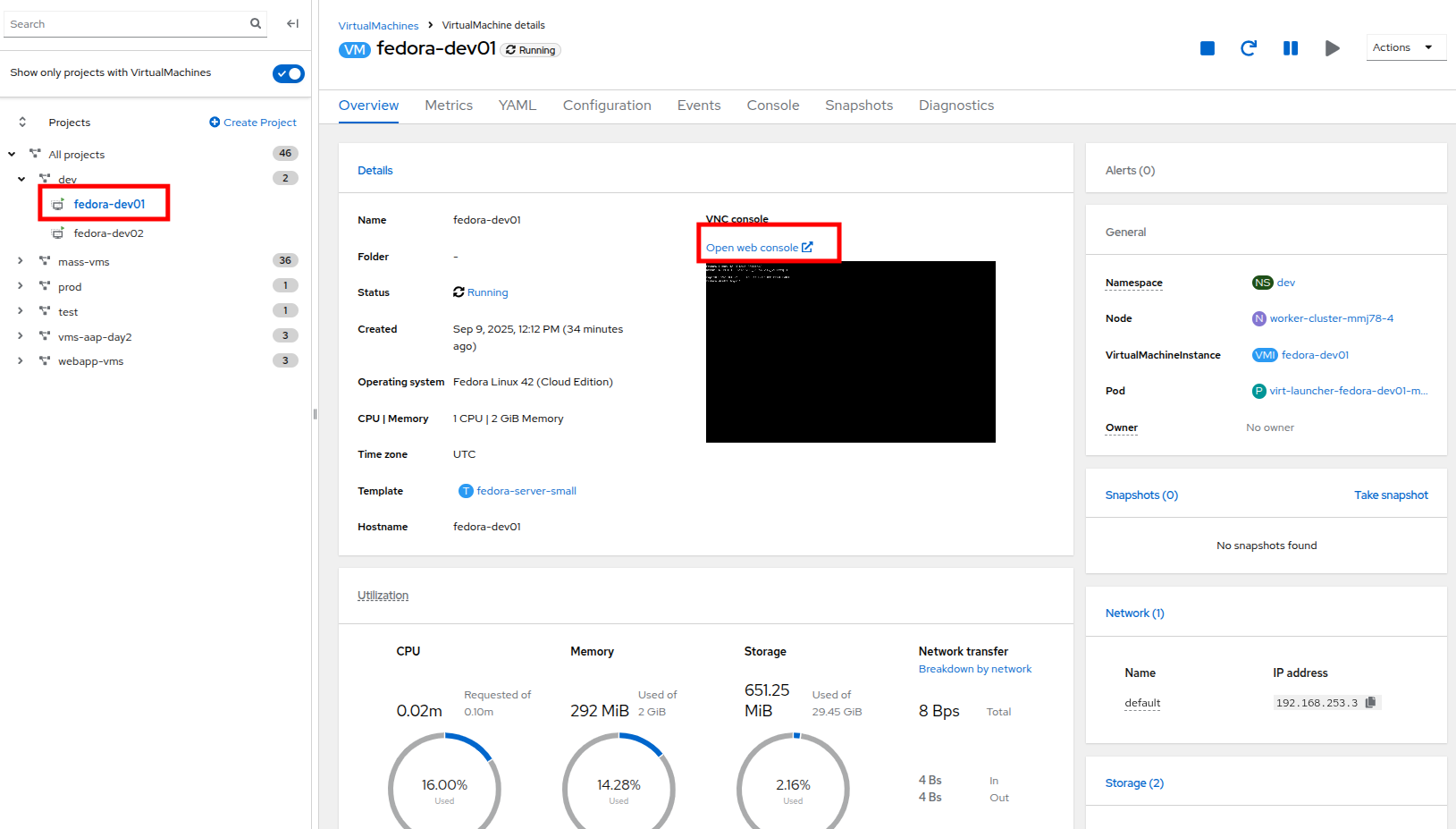

- Click on fedora-dev01, and click the button to launch its web console.

Figure 22. Fedora-Dev01 details

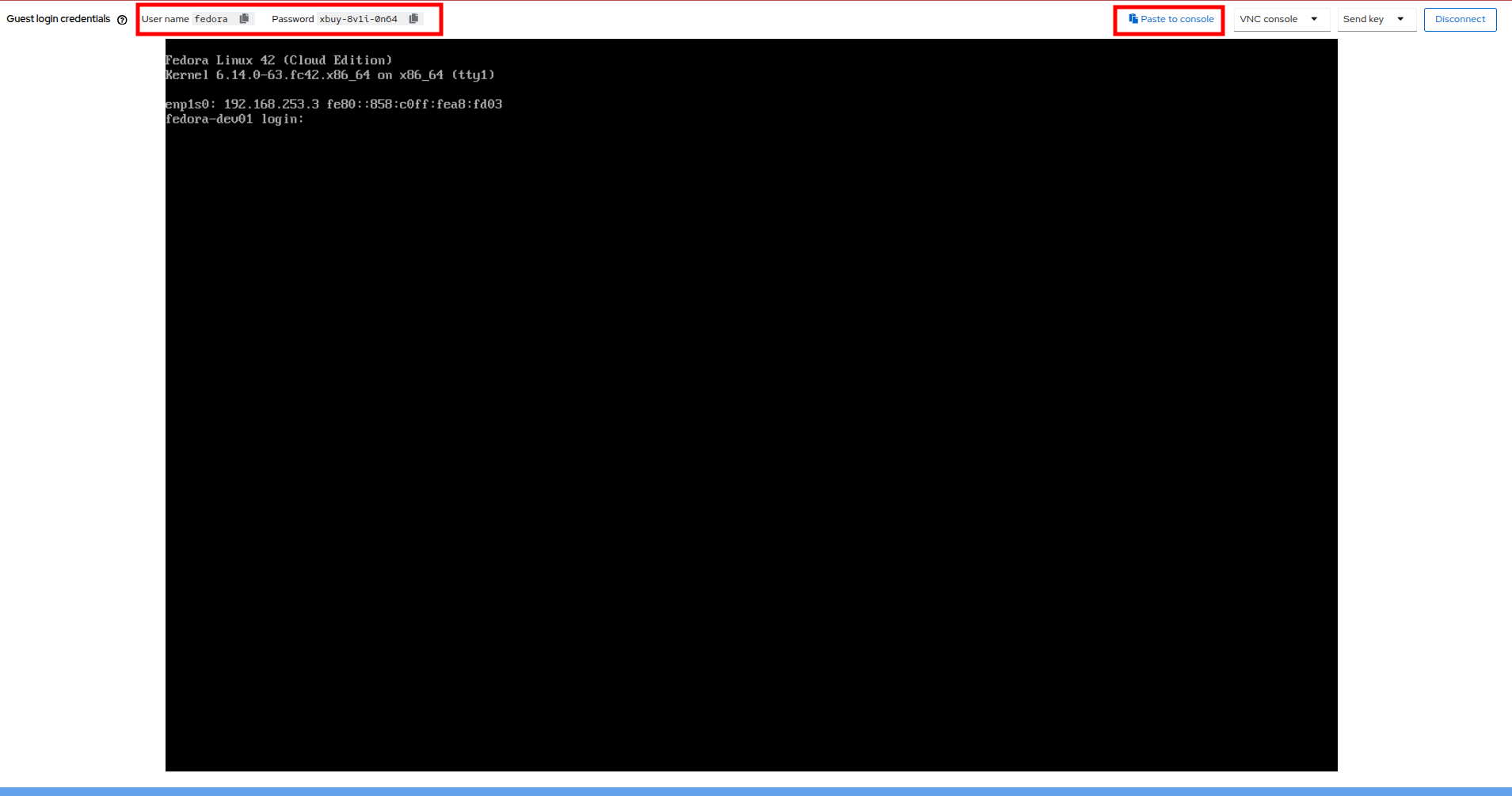

- Use the built-in Copy/Paste functionality to log into the system with the credentials provided.

Figure 23. Fedora-Dev01 console

| Note: You may receive a prompt to allow this feature. If so, click the Allow button. |

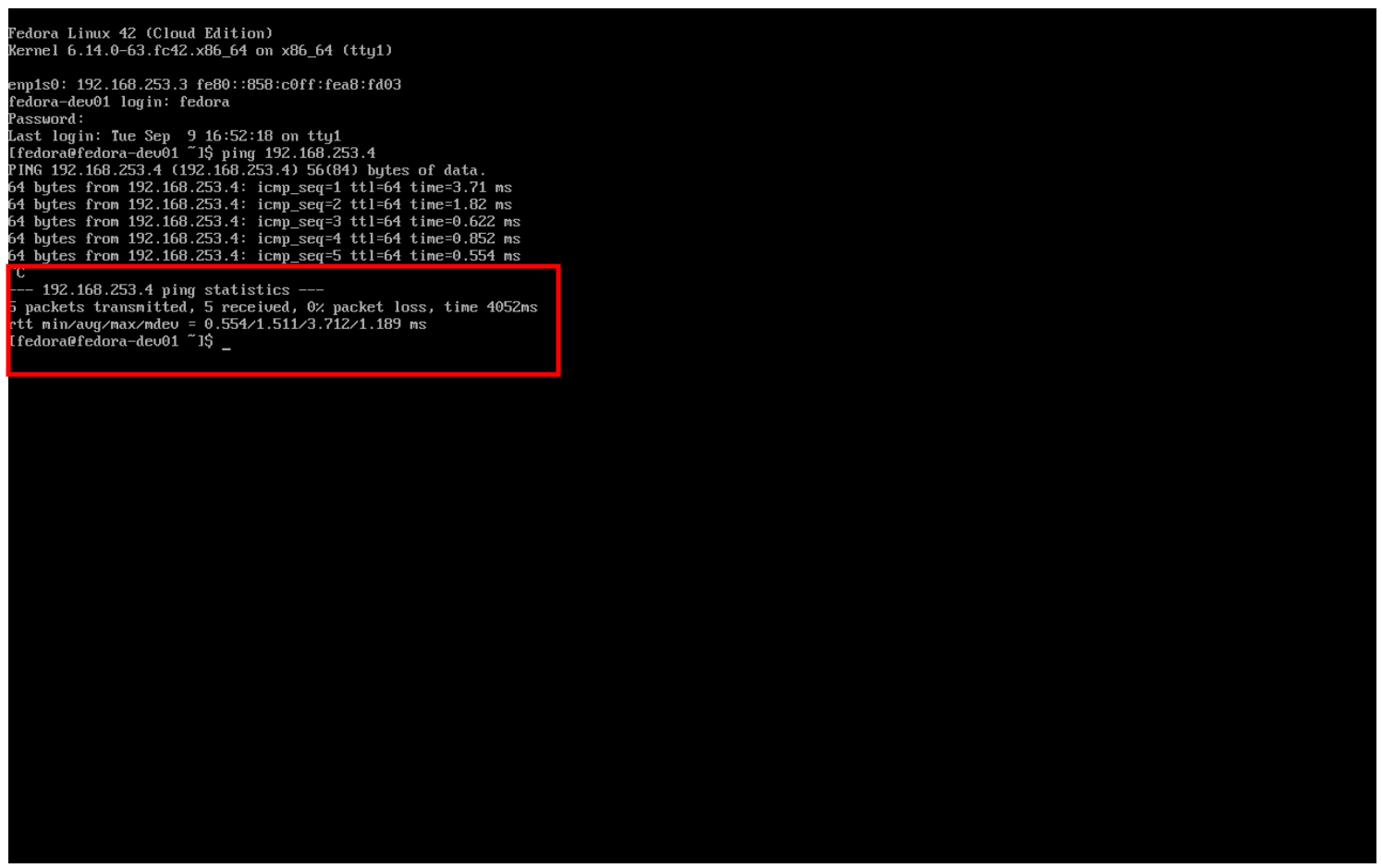

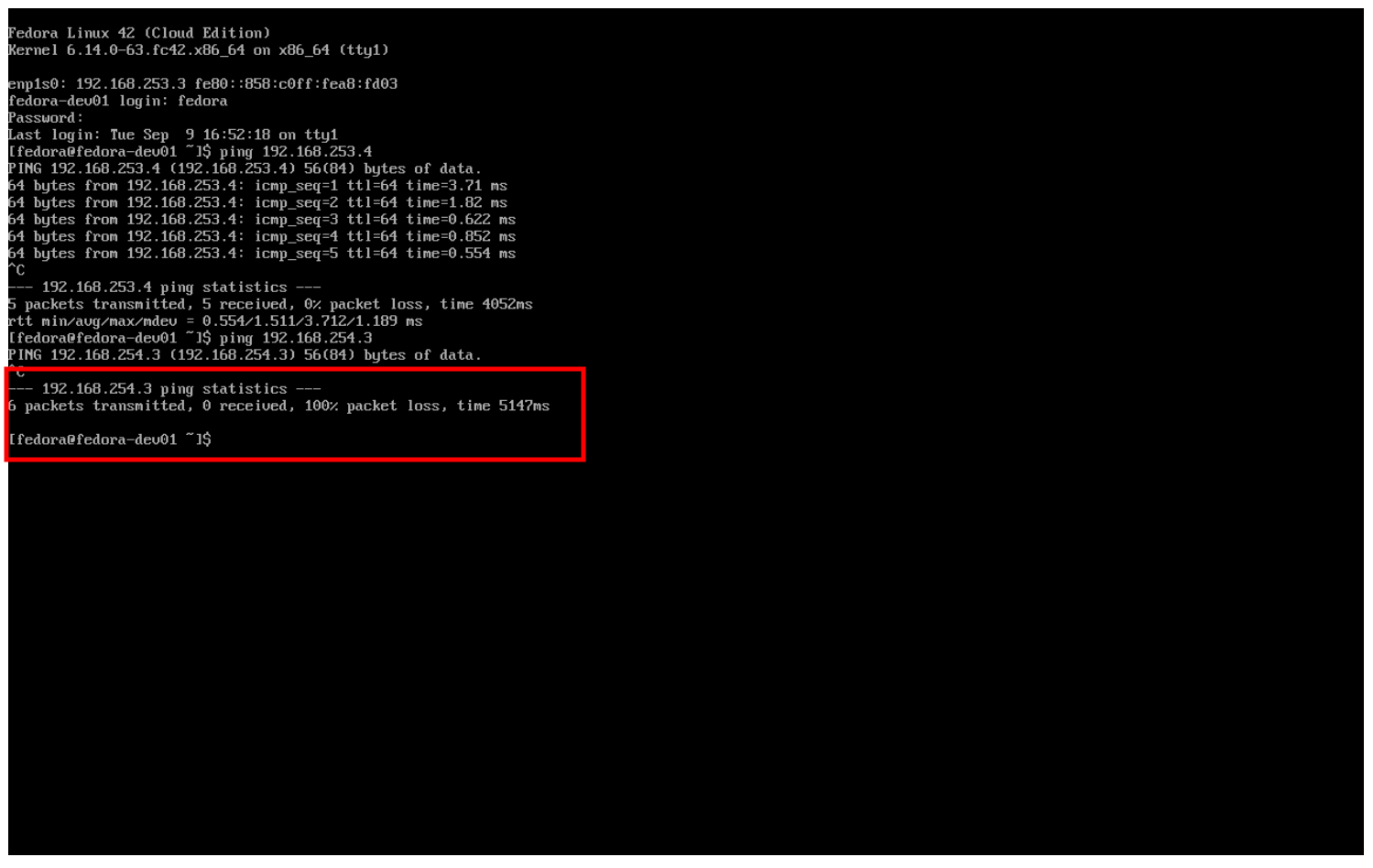

- When you are logged in, attempt to ping the other VM in our dev namespace, fedora-dev02. The ping should succeed.

Figure 24. Fedora-Dev02 ping success

- Now that we have tested the ping within our namespace, let’s try between namespaces and networks to see what happens.

- Return to the tab where you have the fedora-dev01 details pulled up and click on the test project in the center column.

Figure 25. Fedora-Test01

- Make note of the IP address that the fedora-test01 VM has.

- Now return to the tab where you have the console for fedora-dev01 open, and attempt to ping this IP address.

Figure 26. Fedora-Test01 ping fail

You can see that the ping attempt across networks and namespaces fails, showing how UDN helps segregate networks and workloads by default.

Note: With advanced networking configurations it is possible to enable routing between these subnets, but that is currently out of scope for this lab.
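To keep track of which pings are expected to succeed in this lab, the subnet plan can be turned into a small bookkeeping helper. This is only a simplified model for predicting test results, not a description of how the cluster network enforces isolation: it assumes each namespace has exactly one primary UDN with the subnet assigned earlier, and the IP addresses in the example are hypothetical, so substitute the ones shown for your VMs.

```python
import ipaddress

# Subnets assigned to each namespace's UDN in this lab.
UDN_SUBNETS = {
    "dev": "192.168.253.0/24",
    "test": "192.168.254.0/24",
    "prod": "192.168.255.0/24",
}


def namespace_for(ip):
    """Return the namespace whose UDN subnet contains ip, or None if no subnet matches."""
    address = ipaddress.ip_address(ip)
    for namespace, cidr in UDN_SUBNETS.items():
        if address in ipaddress.ip_network(cidr):
            return namespace
    return None


def expect_ping_success(src_ip, dst_ip):
    """Predict a ping result: success is expected only when both IPs sit on the same UDN."""
    src_namespace = namespace_for(src_ip)
    dst_namespace = namespace_for(dst_ip)
    return src_namespace is not None and src_namespace == dst_namespace


# Hypothetical addresses; replace them with the ones noted from the console.
fedora_dev01 = "192.168.253.3"
fedora_dev02 = "192.168.253.4"
fedora_test01 = "192.168.254.3"

print("dev01 -> dev02 expected to succeed:", expect_ping_success(fedora_dev01, fedora_dev02))
print("dev01 -> test01 expected to succeed:", expect_ping_success(fedora_dev01, fedora_test01))
```

A helper like this is handy when the number of VMs grows and you want a quick table of expected results to compare against what you observe in the consoles.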

Examine network traffic with the Network Observability Operator

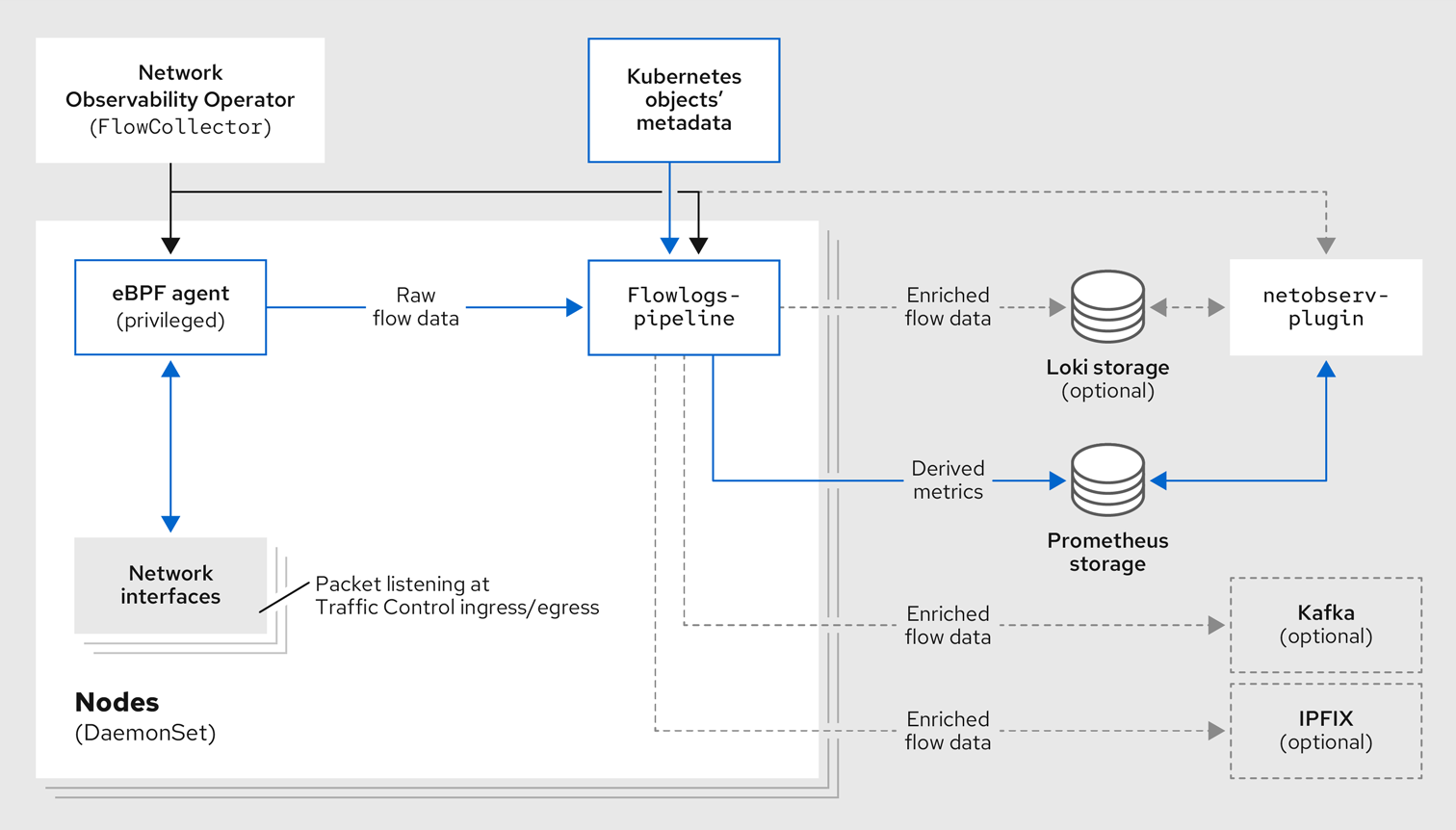

Another powerful tool that can be used to manage your networking environment is the Network Observability Operator. The Network Observability Operator analyzes traffic entering and leaving the cluster, and traveling between namespaces to help you visualize what your virtual and container-based workloads are communicating with.

Figure 27 shows how the operator is configured and how it collects the data to create the graphs.

Figure 27. Network Observability Operator architecture

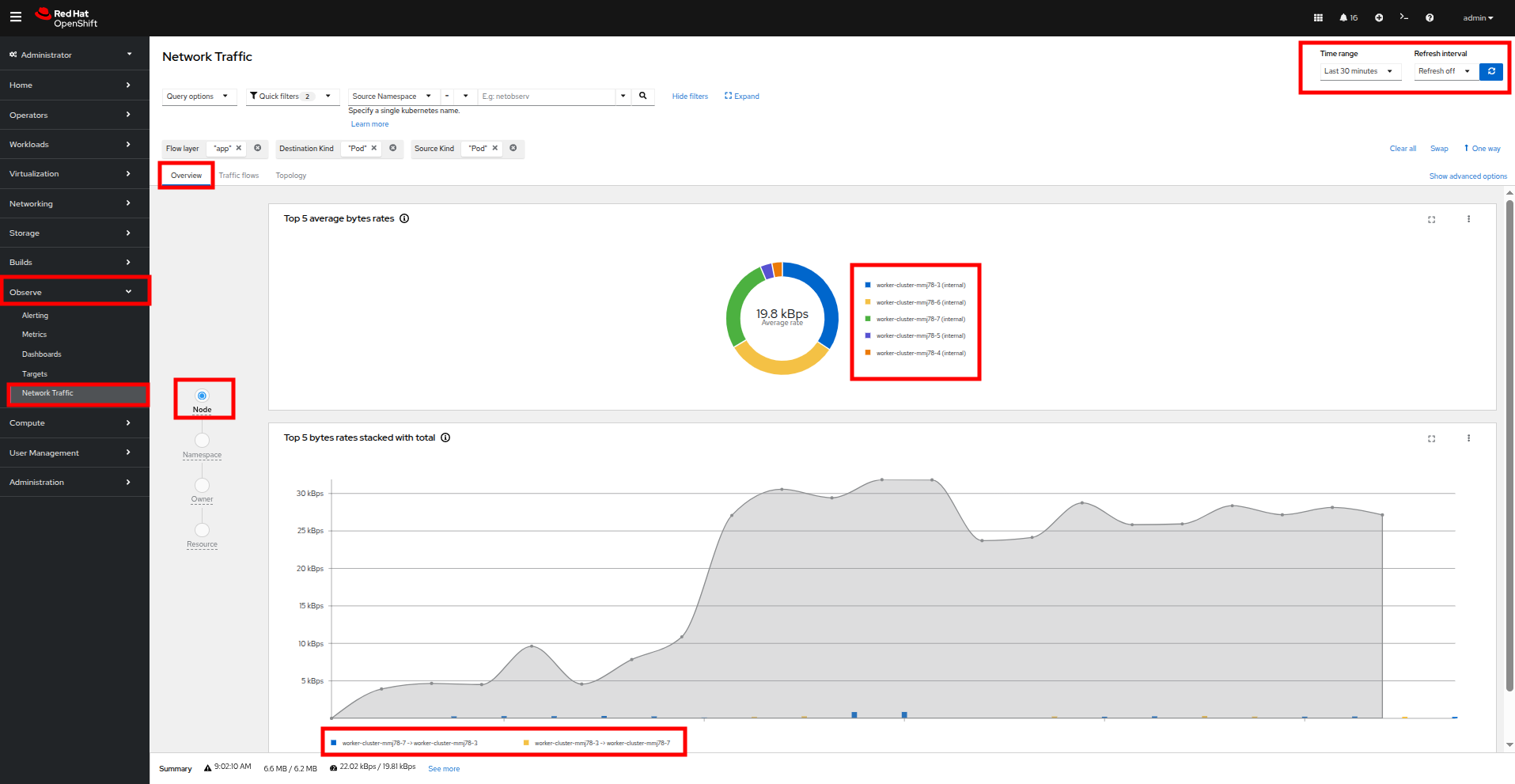

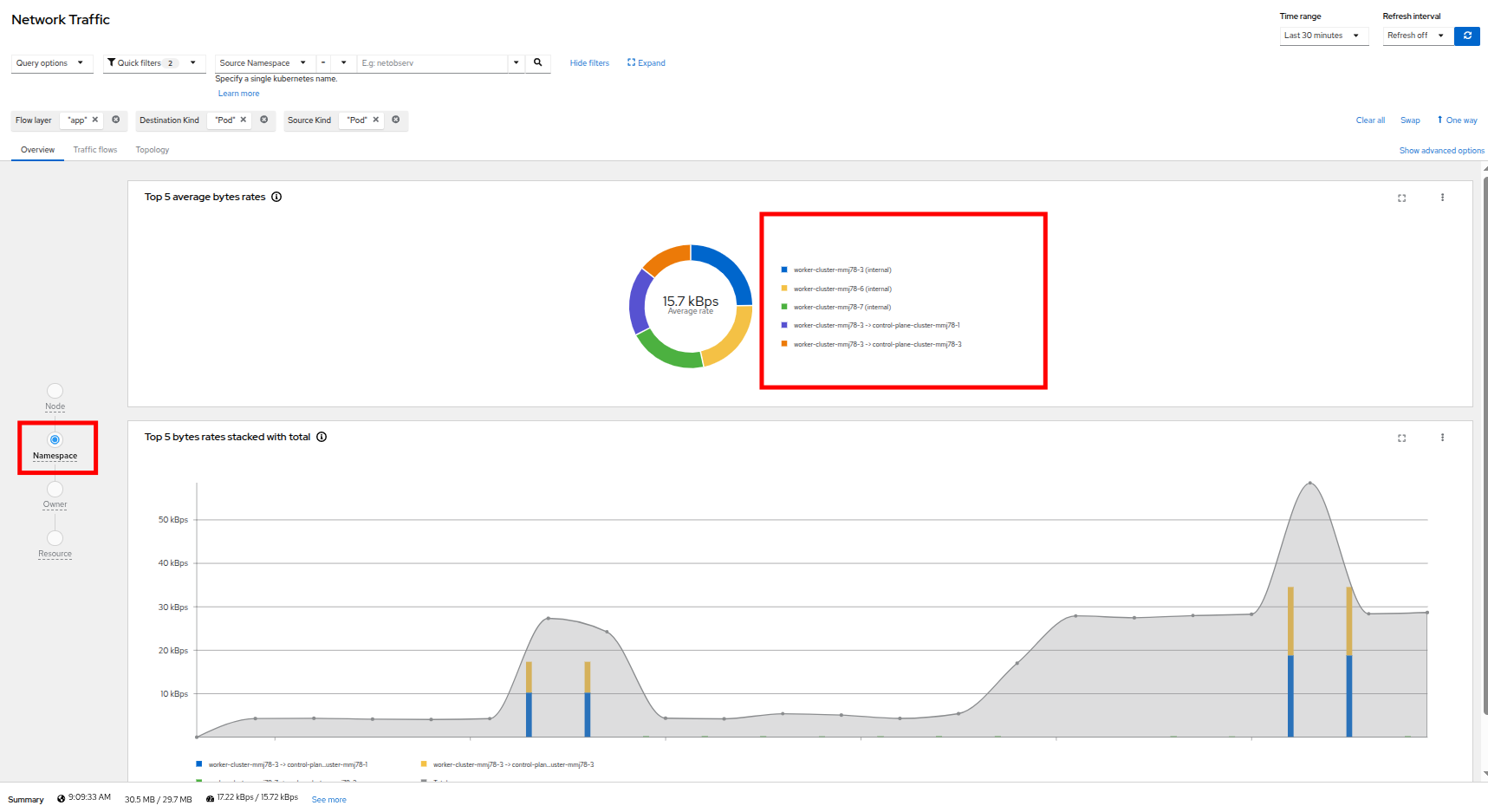

- To get started with the Network Observability Operator, click Observe in the left side menu, and then click Network Traffic. The initial view is called the Overview and shows the node view by default, where we see the top five average byte rates produced by current workloads. It also shows a graph of our workloads. The time range of the collected data can be adjusted, and the views can refresh automatically if desired.

Figure 28. Network traffic node

- As you explore the screen, notice that a majority of our traffic is local, between the worker nodes, and most of it is generated by the loadmaker from earlier sending requests against our webapp application.

- The graph at the bottom shows the workloads stacked to show total throughput points. You can see peaks from individual worker nodes represented as bars near the bottom.

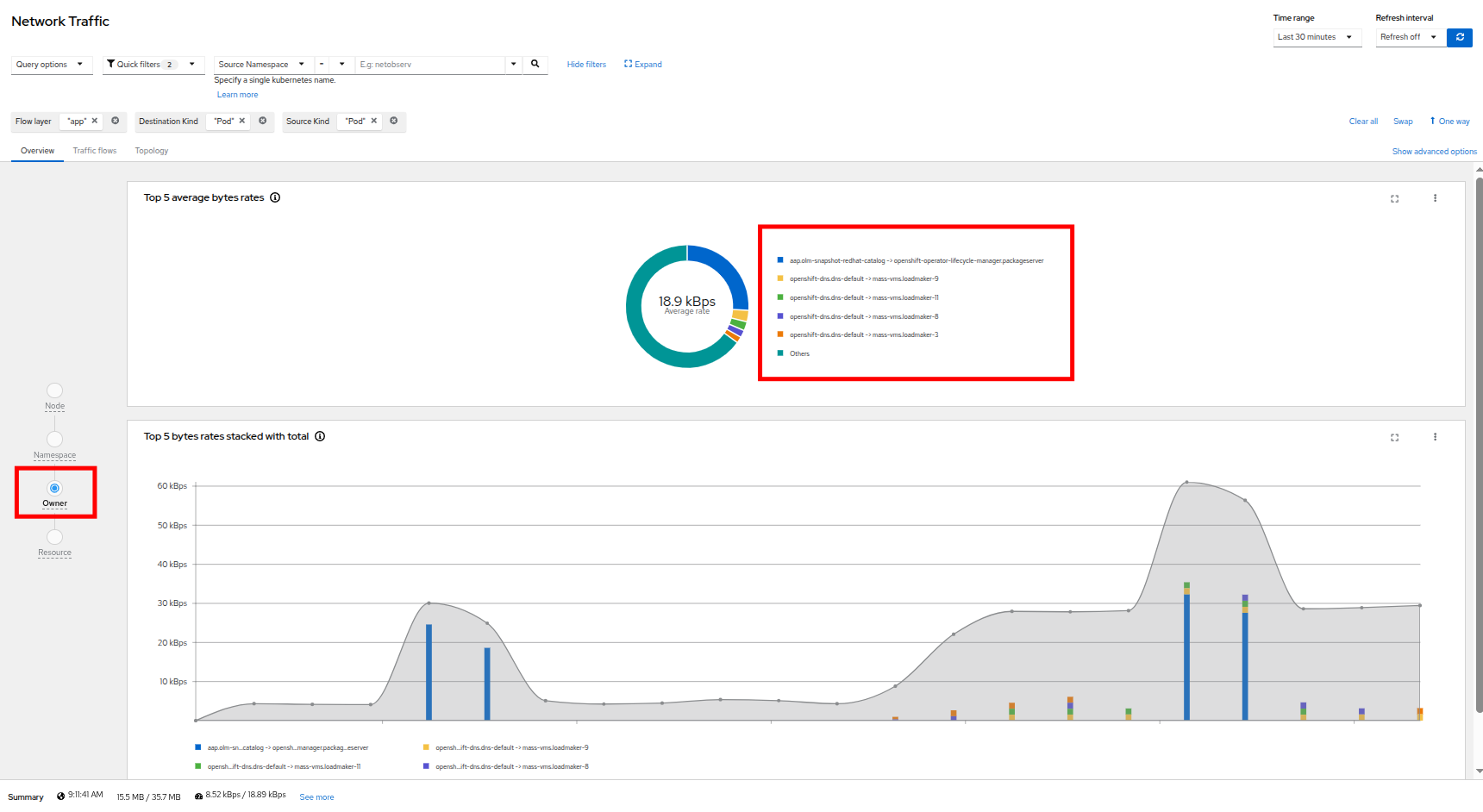

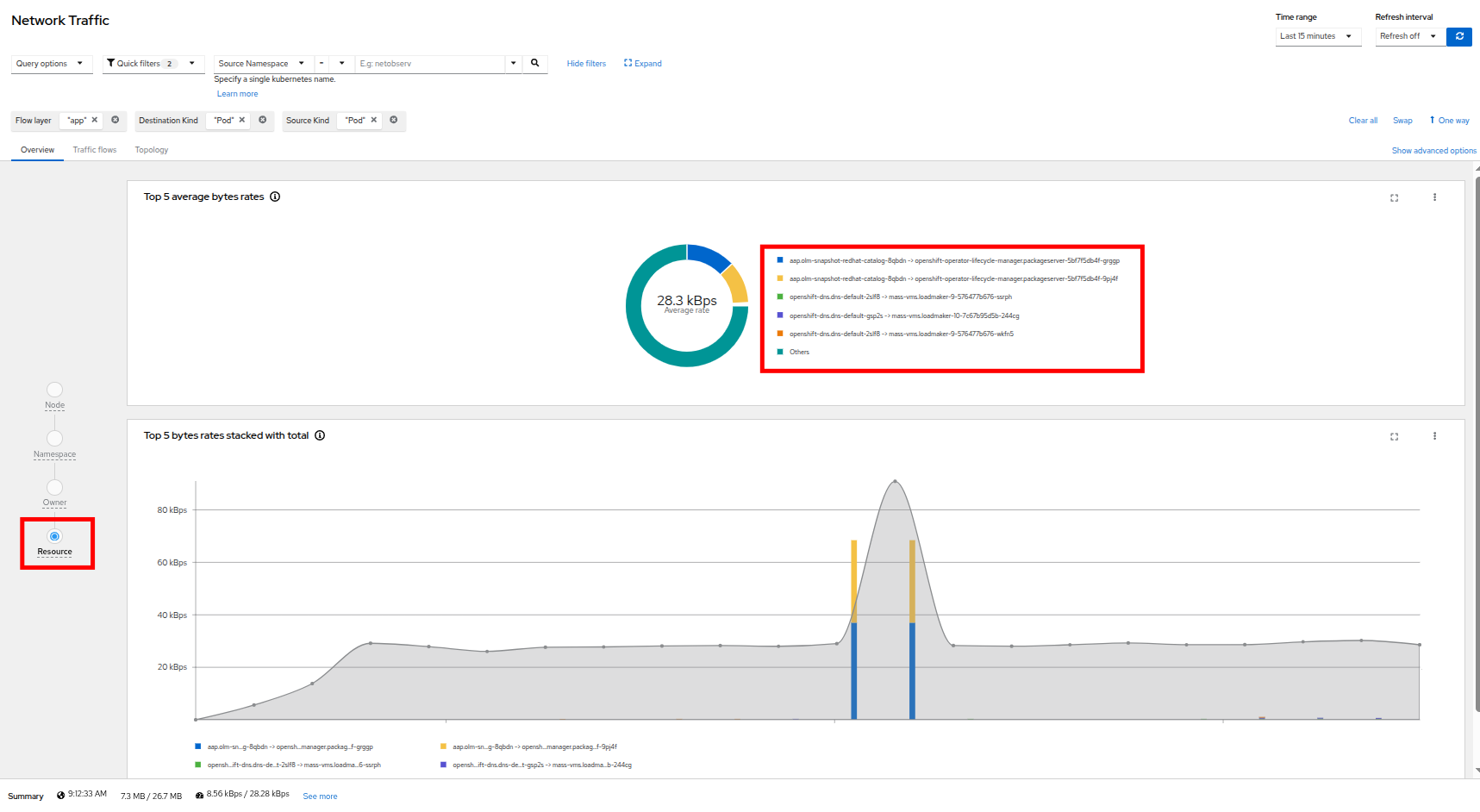

- There are also options to view traffic by namespace, workload owner, and managed resource. Click through and explore each of these at your leisure.

Figure 29. Network Traffic Namespace

Figure 30. Network traffic owner

Figure 31. Network traffic resource

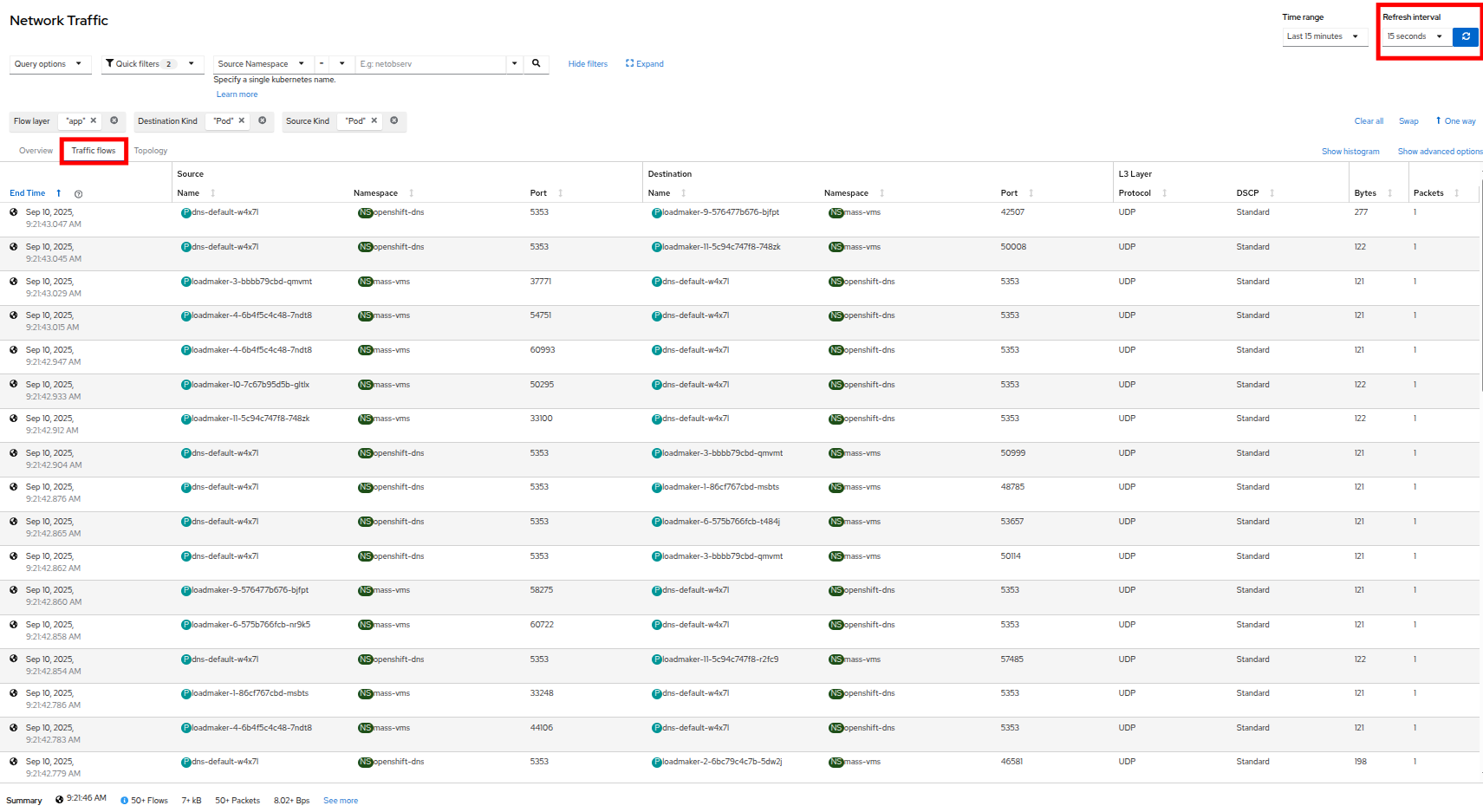

- Next, we can drill down into the actual traffic flows across our environment by clicking on the Traffic flows tab next to Overview. Here we can see records of each of the pods and namespaces that are initiating a network request, what port they are sourced from, and what destination pod, namespace, and port are receiving it. Set the refresh interval to 15 seconds so that we can see these requests update in real time.

Figure 32. Traffic flows
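To make the relationship between individual flow records and the Overview's "top five average byte rates" more concrete, here is a small Python sketch of that kind of aggregation. It is an illustration of the idea only: the record fields (`src_node`, `dst_node`, `bytes`), the sample values, and the function `top_byte_rates` are all hypothetical and do not reflect the operator's actual data model or query logic.

```python
from collections import defaultdict

# Hypothetical flow records collected over one time window.
flows = [
    {"src_node": "worker-1", "dst_node": "worker-2", "bytes": 120_000},
    {"src_node": "worker-2", "dst_node": "worker-1", "bytes": 60_000},
    {"src_node": "worker-1", "dst_node": "worker-3", "bytes": 30_000},
    {"src_node": "worker-3", "dst_node": "worker-1", "bytes": 6_000},
]


def top_byte_rates(records, group_by, window_seconds, n=5):
    """Return the n groups with the highest average byte rate (bytes per second)."""
    totals = defaultdict(int)
    for record in records:
        # Sum the bytes of every flow that shares the same grouping key.
        totals[record[group_by]] += record["bytes"]
    # Average rate over the window = total bytes / window length.
    rates = {key: total / window_seconds for key, total in totals.items()}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)[:n]


for node, rate in top_byte_rates(flows, group_by="src_node", window_seconds=60):
    print(f"{node}: {rate:.1f} B/s")
```

Changing `group_by` to another field mirrors switching the Overview between its node, namespace, owner, and resource scopes.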

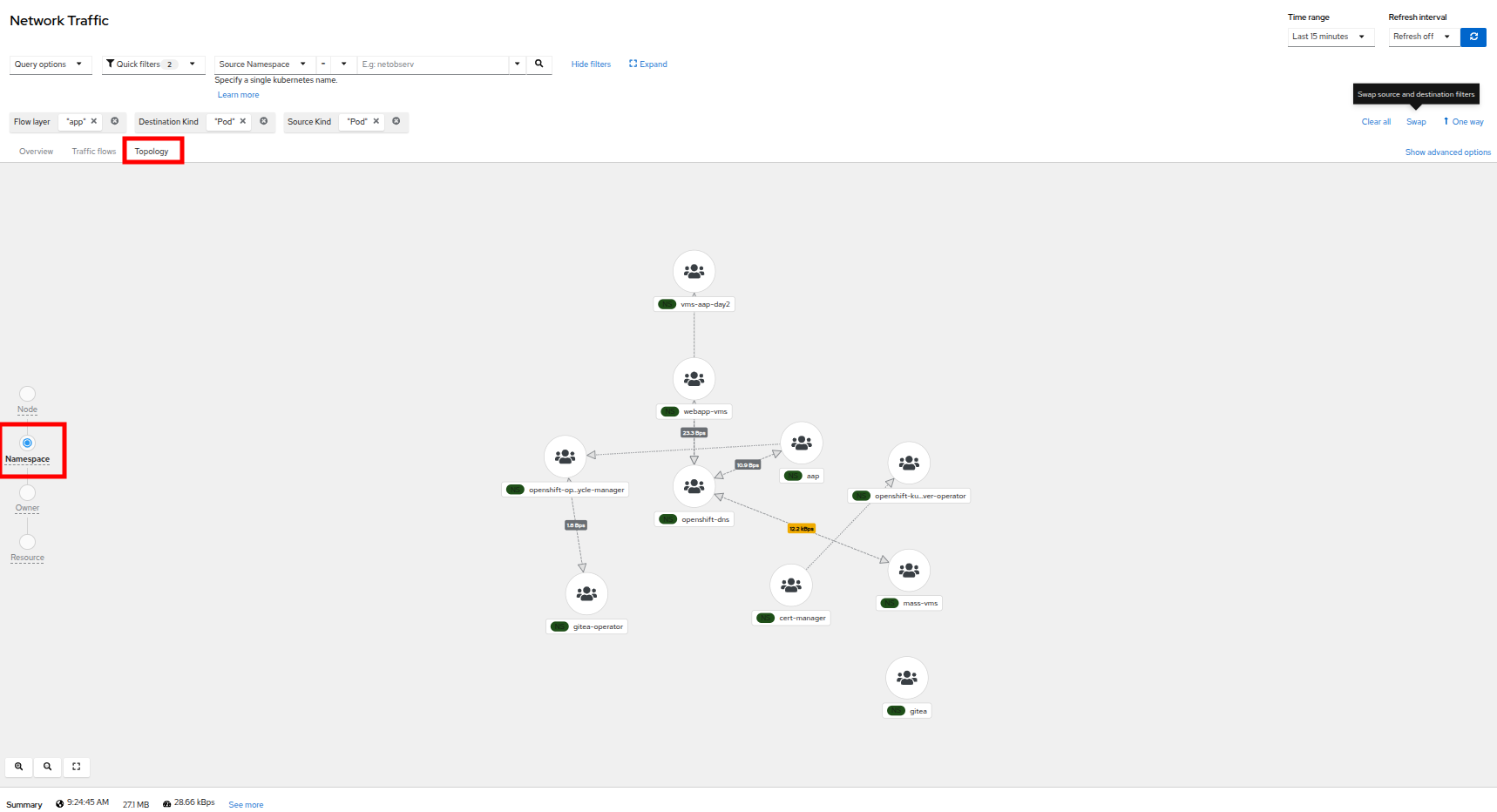

- Another view, which can sometimes look chaotic, is the topology map, which helps visualize incoming and outgoing traffic for specific pods. Like the Overview, it can be sorted by node, namespace, owner, and resource. Click on the Topology tab to open this view, and sort by Namespace for a clean view of our cluster applications and their network traffic.

Figure 33. Topology namespace

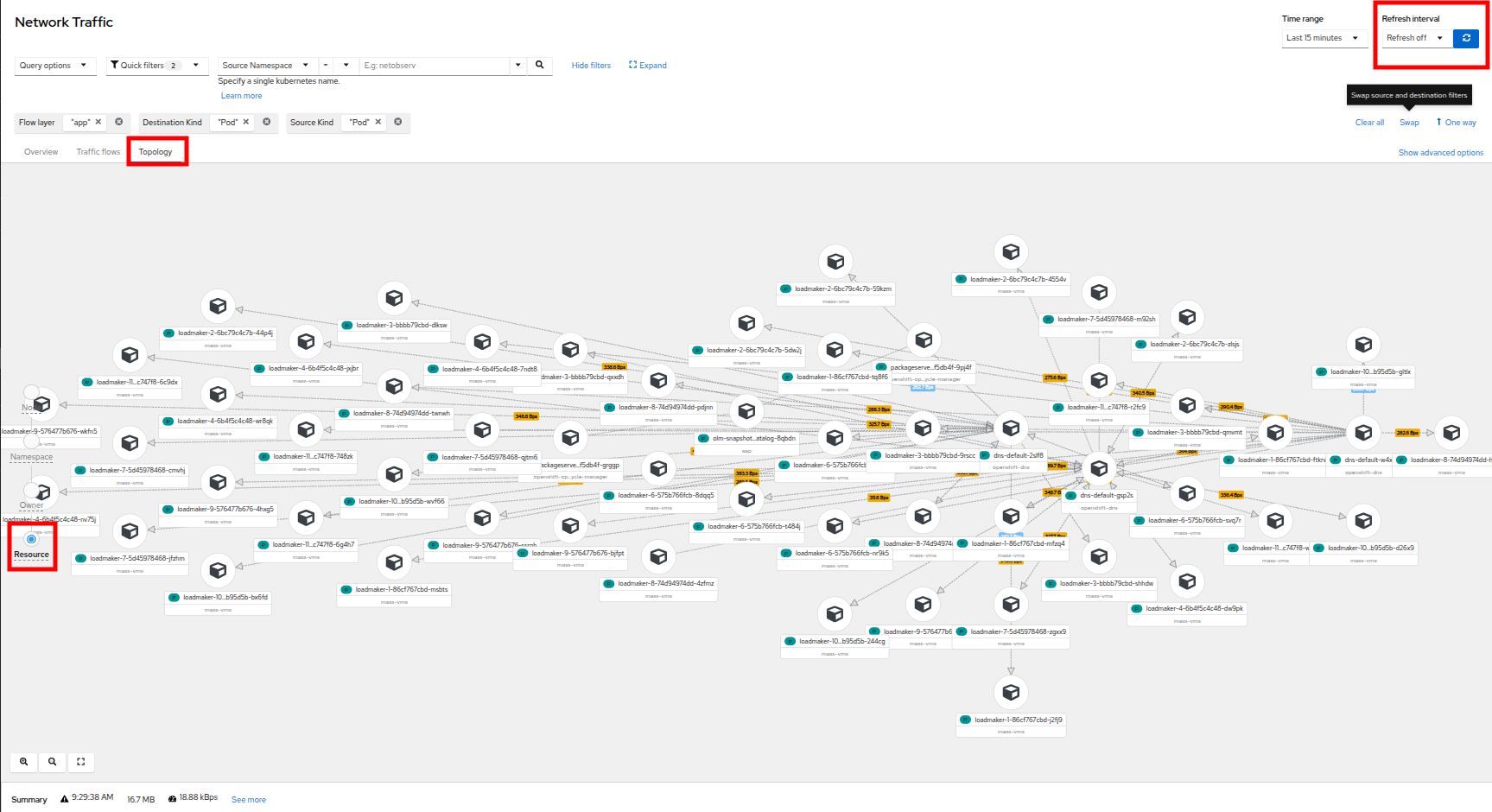

- For fun, click on Resource to see a more chaotic view. Note that the icons on the graph can be clicked and dragged to make the view easier to read.

Figure 34. Topology resource

| Note: It can be helpful here to turn off the refresh interval to have a chance to examine the graphs. |

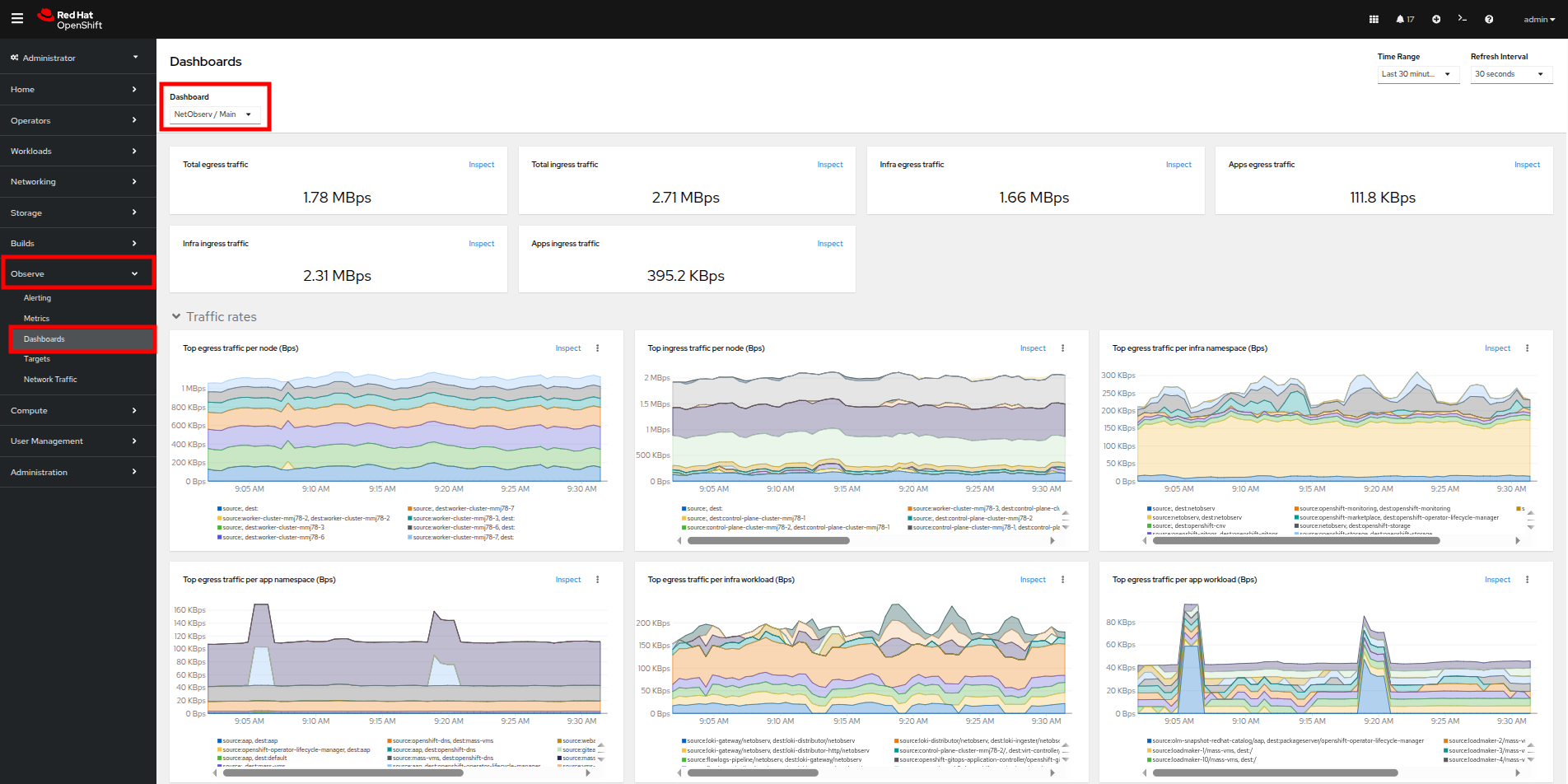

- In addition to the Network Traffic plugin for the OpenShift console, default dashboards are also created for network observability, similar to the ones we used in module 1 for VMs.

- To take a look at these, click on Observe in the left menu, and click on Dashboards. From the Dashboard dropdown, select NetObserv/Main.

Figure 35. NetObserv dashboard

- The dashboard provides a number of default graphs that would be useful to network administrators when trying to visualize the network traffic in their cluster.

The Network Observability Operator is a very powerful tool for OpenShift that equips both network and virtual machine administrators to ensure the security of their environment, and confirm that no unexpected traffic is entering or leaving their cluster.

Summary

In this section, we learned how to create and apply a simple network policy to block egress traffic from a virtual machine to a public website. As an advanced example, we learned how to isolate traffic between virtual guests and projects on the same cluster by leveraging UDN’s native network segregation. We also took a brief look at the benefits provided by the Network Observability Operator, and how it can make visualizing the network traffic flow in your cluster much easier.

In the next resource, we’ll explore the automation of virtual machines.