Operator

OpenShift Container Platform의 Operator 작업

초록

1장. Operator 개요

Operator는 OpenShift Container Platform의 가장 중요한 구성 요소 중 하나입니다. Operator는 컨트롤 플레인에서 서비스를 패키징, 배포 및 관리하는 기본 방법입니다. 또한 사용자가 실행하는 애플리케이션에 이점을 제공할 수 있습니다.

Operator는 kubectl 및 oc 명령과 같은 CLI 툴 및 Kubernetes API와 통합됩니다. 애플리케이션 모니터링, 상태 점검 수행, OTA(Over-the-air) 업데이트 관리 및 애플리케이션이 지정된 상태로 유지되도록 하는 수단을 제공합니다.

두 가지 모두 유사한 Operator 개념과 목표를 따르지만 OpenShift Container Platform의 Operator는 목적에 따라 서로 다른 두 시스템에서 관리합니다.

- CVO(Cluster Version Operator)에서 관리하는 Platform Operator는 클러스터 기능을 수행하기 위해 기본적으로 설치됩니다.

- OLM(Operator Lifecycle Manager)에서 관리하는 선택적 추가 기능 Operator는 사용자가 애플리케이션에서 실행할 수 있도록 액세스할 수 있습니다.

Operator를 사용하면 클러스터에서 실행 중인 서비스를 모니터링할 수 있는 애플리케이션을 생성합니다. Operator는 애플리케이션을 위해 특별히 설계되었습니다. Operator는 설치 및 구성과 같은 일반적인 Day 1 작업과 자동 확장 및 축소 및 백업과 같은 Day 2 작업을 구현하고 자동화합니다. 이러한 모든 활동은 클러스터 내에서 실행되는 소프트웨어입니다.

1.1. 개발자의 경우

개발자는 다음 Operator 작업을 수행할 수 있습니다.

1.2. 관리자의 경우

클러스터 관리자는 다음 Operator 작업을 수행할 수 있습니다.

operator-sdk CLI에서 생성된 파일 및 디렉터리에 대한 자세한 내용은 Appendices를 참조하십시오.

Red Hat Operator에 대한 모든 정보를 알아보려면 Red Hat Operator를 참조하십시오.

1.3. 다음 단계

Operator에 대한 자세한 내용은 Operator를 참조하십시오. https://access.redhat.com/documentation/en-us/openshift_container_platform/4.6/html-single/operators/#olm-what-operators-are

2장. Operator 이해

2.1. Operator란 무엇인가?

개념적으로 Operator는 사람의 운영 지식을 소비자와 더 쉽게 공유할 수 있는 소프트웨어로 인코딩합니다.

Operator는 다른 소프트웨어를 실행하는 데 따르는 운영의 복잡성을 완화해주는 소프트웨어입니다. OpenShift Container Platform과 같은 Kubernetes 환경을 조사하고 현재 상태를 사용하여 실시간으로 의사 결정을 내리는 소프트웨어 벤더의 엔지니어링 팀의 확장 기능처럼 작동합니다. 고급 Operator는 업그레이드를 원활하게 처리하고 오류에 자동으로 대응하며 시간을 절약하기 위해 소프트웨어 백업 프로세스를 생략하는 등의 바로 가기를 실행하지 않습니다.

Operator는 Kubernetes 애플리케이션을 패키징, 배포, 관리하는 메서드입니다.

Kubernetes 애플리케이션은 Kubernetes API 및 kubectl 또는 oc 툴링을 사용하여 Kubernetes API에 배포하고 관리하는 앱입니다. Kubernetes를 최대한 활용하기 위해서는 Kubernetes에서 실행되는 앱을 제공하고 관리하기 위해 확장할 응집력 있는 일련의 API가 필요합니다. Operator는 Kubernetes에서 이러한 유형의 앱을 관리하는 런타임으로 생각할 수 있습니다.

2.1.1. Operator를 사용하는 이유는 무엇입니까?

Operator는 다음과 같은 기능을 제공합니다.

- 반복된 설치 및 업그레이드.

- 모든 시스템 구성 요소에 대한 지속적인 상태 점검.

- OpenShift 구성 요소 및 ISV 콘텐츠에 대한 OTA(Over-The-Air) 업데이트

- 필드 엔지니어의 지식을 캡슐화하여 한두 명이 아닌 모든 사용자에게 전파.

- Kubernetes에 배포하는 이유는 무엇입니까?

- Kubernetes(및 확장으로)에는 온프레미스 및 클라우드 공급자 전체에서 작동하는 복잡한 분산 시스템을 빌드하는 데 필요한 모든 기본 기능(비밀 처리, 로드 밸런싱, 서비스 검색, 자동 스케일링)이 포함되어 있습니다.

- Kubernetes API 및

kubectl툴링으로 앱을 관리하는 이유는 무엇입니까? -

이러한 API는 기능이 다양하고 모든 플랫폼에 대한 클라이언트가 있으며 클러스터의 액세스 제어/감사에 연결됩니다. Operator는 Kubernetes 확장 메커니즘인 CRD(사용자 정의 리소스 정의)를 사용하므로 사용자 정의 오브젝트(예:

MongoDB)가 기본 제공되는 네이티브 Kubernetes 오브젝트처럼 보이고 작동합니다. - Operator는 서비스 브로커와 어떻게 다릅니까?

- 서비스 브로커는 앱의 프로그래밍 방식 검색 및 배포를 위한 단계입니다. 그러나 오래 실행되는 프로세스가 아니므로 업그레이드, 장애 조치 또는 스케일링과 같은 2일 차 작업을 실행할 수 없습니다. 튜닝할 수 있는 항목에 대한 사용자 정의 및 매개변수화는 설치 시 제공되지만 Operator는 클러스터의 현재 상태를 지속적으로 관찰합니다. 클러스터 외부 서비스는 서비스 브로커에 적합하지만 해당 서비스를 위한 Operator도 있습니다.

2.1.2. Operator 프레임워크

Operator 프레임워크는 위에서 설명한 고객 경험을 제공하는 툴 및 기능 제품군입니다. 코드를 작성하는 데 그치지 않고 Operator를 테스트, 제공, 업데이트하는 것이 중요합니다. Operator 프레임워크 구성 요소는 이러한 문제를 해결하는 오픈 소스 툴로 구성됩니다.

- Operator SDK

- Operator SDK는 Operator 작성자가 Kubernetes API 복잡성에 대한 지식이 없어도 전문 지식을 기반으로 자체 Operator를 부트스트랩, 빌드, 테스트, 패키지할 수 있도록 지원합니다.

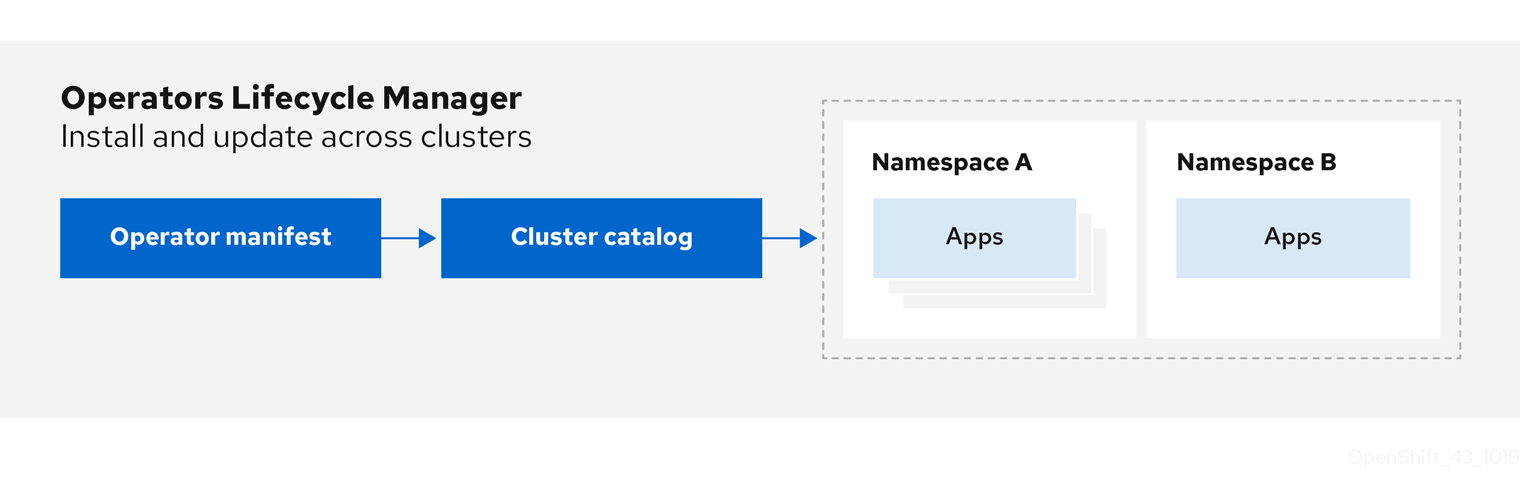

- Operator Lifecycle Manager

- OLM(Operator Lifecycle Manager)은 클러스터에서 Operator의 설치, 업그레이드, RBAC(역할 기반 액세스 제어)를 제어합니다. OpenShift Container Platform 4.6에 기본적으로 배포됩니다.

- Operator 레지스트리

- Operator 레지스트리는 CSV(클러스터 서비스 버전) 및 CRD(사용자 정의 리소스 정의)를 클러스터에 생성하기 위해 저장하고 패키지 및 채널에 대한 Operator 메타데이터를 저장합니다. 이 Operator 카탈로그 데이터를 OLM에 제공하기 위해 Kubernetes 또는 OpenShift 클러스터에서 실행됩니다.

- OperatorHub

- OperatorHub는 클러스터 관리자가 클러스터에 설치할 Operator를 검색하고 선택할 수 있는 웹 콘솔입니다. OpenShift Container Platform에 기본적으로 배포됩니다.

이러한 툴은 구성 가능하도록 설계되어 있어 유용한 툴을 모두 사용할 수 있습니다.

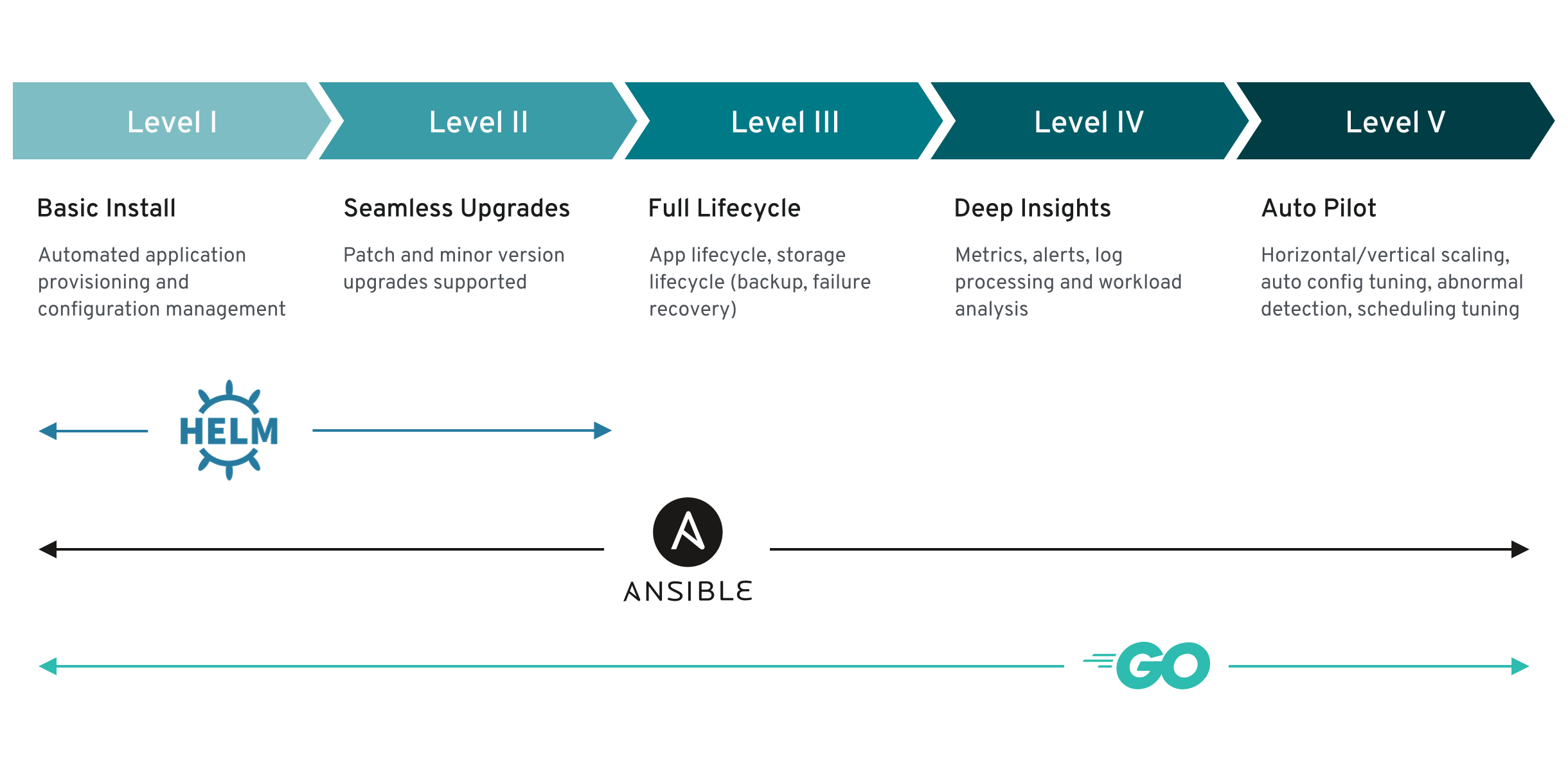

2.1.3. Operator 완성 모델

Operator 내에 캡슐화된 관리 논리의 세분화 수준은 다를 수 있습니다. 이 논리는 일반적으로 Operator에서 표시하는 서비스 유형에 따라 크게 달라집니다.

그러나 대부분의 Operator에 포함될 수 있는 특정 기능 세트의 경우 캡슐화된 Operator 작업의 완성 정도를 일반화할 수 있습니다. 이를 위해 다음 Operator 완성 모델에서는 Operator의 일반적인 2일 차 작업에 대해 5단계 완성도를 정의합니다.

그림 2.1. Operator 완성 모델

또한 위 모델은 Operator SDK의 Helm, Go, Ansible 기능을 통해 이러한 기능을 가장 잘 개발할 수 있는 방법을 보여줍니다.

2.2. Operator 프레임워크 일반 용어집

이 주제에서는 두 패키징 형식에 대해 OLM(Operator Lifecycle Manager) 및 Operator SDK를 포함하여 Operator 프레임워크와 관련된 일반 용어집을 제공합니다. 패키지 매니페스트 형식 및 번들 형식.

2.2.1. 일반 Operator 프레임워크 용어

2.2.1.1. 번들

번들 형식에서 번들은 Operator CSV, 매니페스트, 메타데이터로 이루어진 컬렉션입니다. 이러한 구성 요소가 모여 클러스터에 설치할 수 있는 고유한 버전의 Operator를 형성합니다.

2.2.1.2. 번들 이미지

번들 형식에서 번들 이미지는 Operator 매니페스트에서 빌드하고 하나의 번들을 포함하는 컨테이너 이미지입니다. 번들 이미지는 Quay.io 또는 DockerHub와 같은 OCI(Open Container Initiative) 사양 컨테이너 레지스트리에서 저장 및 배포합니다.

2.2.1.3. 카탈로그 소스

카탈로그 소스는 애플리케이션을 정의하는 CSV, CRD, 패키지의 리포지토리입니다.

2.2.1.4. 카탈로그 이미지

패키지 매니페스트 형식에서 카탈로그 이미지는 OLM을 사용하여 클러스터에 설치할 수 있는 Operator 메타데이터 및 업데이트 메타데이터 세트를 설명하는 컨테이너화된 데이터 저장소입니다.

2.2.1.5. 채널

채널은 Operator의 업데이트 스트림을 정의하고 구독자에게 업데이트를 배포하는 데 사용됩니다. 헤드는 해당 채널의 최신 버전을 가리킵니다. 예를 들어 stable 채널에는 Operator의 모든 안정적인 버전이 가장 오래된 것부터 최신 순으로 정렬되어 있습니다.

Operator에는 여러 개의 채널이 있을 수 있으며 특정 채널에 대한 서브스크립션 바인딩에서는 해당 채널의 업데이트만 찾습니다.

2.2.1.6. 채널 헤드

채널 헤드는 알려진 특정 채널의 최신 업데이트를 나타냅니다.

2.2.1.7. 클러스터 서비스 버전

CSV(클러스터 서비스 버전)는 Operator 메타데이터에서 생성하는 YAML 매니페스트로, OLM이 클러스터에서 Operator를 실행하는 것을 지원합니다. 로고, 설명, 버전과 같은 정보로 사용자 인터페이스를 채우는 데 사용되는 Operator 컨테이너 이미지와 함께 제공되는 메타데이터입니다.

또한 필요한 RBAC 규칙 및 관리하거나 사용하는 CR(사용자 정의 리소스)과 같이 Operator를 실행하는 데 필요한 기술 정보의 소스이기도 합니다.

2.2.1.8. 종속성

Operator는 클러스터에 있는 다른 Operator에 종속되어 있을 수 있습니다. 예를 들어 Vault Operator는 데이터 지속성 계층과 관련하여 etcd Operator에 종속됩니다.

OLM은 설치 단계 동안 지정된 모든 버전의 Operator 및 CRD가 클러스터에 설치되도록 하여 종속 항목을 해결합니다. 이러한 종속성은 필수 CRD API를 충족하고 패키지 또는 번들과 관련이 없는 카탈로그에서 Operator를 찾아 설치함으로써 해결할 수 있습니다.

2.2.1.9. 인덱스 이미지

번들 형식에서 인덱스 이미지는 모든 버전의 CSV 및 CRD를 포함하여 Operator 번들에 대한 정보를 포함하는 데이터베이스 이미지(데이터베이스 스냅샷)를 나타냅니다. 이 인덱스는 클러스터에서 Operator 기록을 호스팅하고 opm CLI 툴을 사용하여 Operator를 추가하거나 제거하는 방식으로 유지 관리할 수 있습니다.

2.2.1.10. 설치 계획

설치 계획은 CSV를 자동으로 설치하거나 업그레이드하기 위해 생성하는 계산된 리소스 목록입니다.

2.2.1.11. Operator group

Operator group은 동일한 네임스페이스에 배포된 모든 Operator를 OperatorGroup 오브젝트로 구성하여 네임스페이스 목록 또는 클러스터 수준에서 CR을 조사합니다.

2.2.1.12. 패키지

번들 형식에서 패키지는 각 버전과 함께 Operator의 모든 릴리스 내역을 포함하는 디렉터리입니다. 릴리스된 Operator 버전은 CRD와 함께 CSV 매니페스트에 설명되어 있습니다.

2.2.1.13. 레지스트리

레지스트리는 각각 모든 채널의 최신 버전 및 이전 버전이 모두 포함된 Operator의 번들 이미지를 저장하는 데이터베이스입니다.

2.2.1.14. Subscription

서브스크립션은 패키지의 채널을 추적하여 CSV를 최신 상태로 유지합니다.

2.2.1.15. 업데이트 그래프

업데이트 그래프는 패키지된 다른 소프트웨어의 업데이트 그래프와 유사하게 CSV 버전을 함께 연결합니다. Operator를 순서대로 설치하거나 특정 버전을 건너뛸 수 있습니다. 업데이트 그래프는 최신 버전이 추가됨에 따라 앞부분에서만 증가할 것으로 예상됩니다.

2.3. Operator 프레임워크 패키지 형식

이 가이드에서는 OpenShift Container Platform의 OLM(Operator Lifecycle Manager)에서 지원하는 Operator의 패키지 형식에 대해 간단히 설명합니다.

2.3.1. 번들 형식

Operator의 번들 형식은 Operator 프레임워크에서 도입한 새로운 패키지 형식입니다. 번들 형식 사양에서는 확장성을 개선하고 자체 카탈로그를 호스팅하는 업스트림 사용자를 더 효과적으로 지원하기 위해 Operator 메타데이터의 배포를 단순화합니다.

Operator 번들은 단일 버전의 Operator를 나타냅니다. 디스크상의 번들 매니페스트는 컨테이너화되어 번들 이미지(Kubernetes 매니페스트와 Operator 메타데이터를 저장하는 실행 불가능한 컨테이너 이미지)로 제공됩니다. 그런 다음 podman 및 docker와 같은 기존 컨테이너 툴과 Quay와 같은 컨테이너 레지스트리를 사용하여 번들 이미지의 저장 및 배포를 관리합니다.

Operator 메타데이터에는 다음이 포함될 수 있습니다.

- Operator 확인 정보(예: 이름 및 버전)

- UI를 구동하는 추가 정보(예: 아이콘 및 일부 예제 CR(사용자 정의 리소스))

- 필요한 API 및 제공된 API.

- 관련 이미지.

Operator 레지스트리 데이터베이스에 매니페스트를 로드할 때 다음 요구 사항이 검증됩니다.

- 번들의 주석에 하나 이상의 채널이 정의되어 있어야 합니다.

- 모든 번들에 정확히 하나의 CSV(클러스터 서비스 버전)가 있습니다.

- CSV에 CRD(사용자 정의 리소스 정의)가 포함된 경우 해당 CRD가 번들에 있어야 합니다.

2.3.1.1. 매니페스트

번들 매니페스트는 Operator의 배포 및 RBAC 모델을 정의하는 Kubernetes 매니페스트 세트를 나타냅니다.

번들에는 디렉터리당 하나의 CSV와 일반적으로 /manifests 디렉터리에서 CSV의 고유 API를 정의하는 CRD가 포함됩니다.

Bundle 형식 레이아웃의 예

etcd

├── manifests

│ ├── etcdcluster.crd.yaml

│ └── etcdoperator.clusterserviceversion.yaml

│ └── secret.yaml

│ └── configmap.yaml

└── metadata

└── annotations.yaml

└── dependencies.yaml추가 지원 오브젝트

다음과 같은 오브젝트 유형도 번들의 /manifests 디렉터리에 선택적으로 포함될 수 있습니다.

지원되는 선택적 오브젝트 유형

-

ClusterRole -

ClusterRoleBinding -

ConfigMap -

PodDisruptionBudget -

PriorityClass -

PrometheusRule -

Role -

RoleBinding -

Secret -

Service -

ServiceAccount -

ServiceMonitor -

VerticalPodAutoscaler

이러한 선택적 오브젝트가 번들에 포함된 경우 OLM(Operator Lifecycle Manager)은 번들에서 해당 오브젝트를 생성하고 CSV와 함께 해당 라이프사이클을 관리할 수 있습니다.

선택적 오브젝트의 라이프사이클

- CSV가 삭제되면 OLM은 선택적 오브젝트를 삭제합니다.

CSV가 업그레이드되면 다음을 수행합니다.

- 선택적 오브젝트의 이름이 동일하면 OLM에서 해당 오브젝트를 대신 업데이트합니다.

- 버전 간 선택적 오브젝트의 이름이 변경된 경우 OLM은 해당 오브젝트를 삭제하고 다시 생성합니다.

2.3.1.2. 주석

번들의 /metadata 디렉터리에는 annotations.yaml 파일도 포함되어 있습니다. 이 파일에서는 번들을 번들 인덱스에 추가하는 방법에 대한 형식 및 패키지 정보를 설명하는 데 도움이 되는 고급 집계 데이터를 정의합니다.

예제 annotations.yaml

annotations:

operators.operatorframework.io.bundle.mediatype.v1: "registry+v1"

operators.operatorframework.io.bundle.manifests.v1: "manifests/"

operators.operatorframework.io.bundle.metadata.v1: "metadata/"

operators.operatorframework.io.bundle.package.v1: "test-operator"

operators.operatorframework.io.bundle.channels.v1: "beta,stable"

operators.operatorframework.io.bundle.channel.default.v1: "stable" - 1

- Operator 번들의 미디어 유형 또는 형식입니다.

registry+v1형식은 CSV 및 관련 Kubernetes 오브젝트가 포함됨을 나타냅니다. - 2

- Operator 매니페스트가 포함된 디렉터리의 이미지 경로입니다. 이 라벨은 나중에 사용할 수 있도록 예약되어 있으며 현재 기본값은

manifests/입니다. 값manifests.v1은 번들에 Operator 매니페스트가 포함되어 있음을 나타냅니다. - 3

- 번들에 대한 메타데이터 파일이 포함된 디렉터리의 이미지의 경로입니다. 이 라벨은 나중에 사용할 수 있도록 예약되어 있으며 현재 기본값은

metadata/입니다. 값metadata.v1은 이 번들에 Operator 메타데이터가 있음을 나타냅니다. - 4

- 번들의 패키지 이름입니다.

- 5

- Operator 레지스트리에 추가될 때 번들이 서브스크립션되는 채널 목록입니다.

- 6

- 레지스트리에서 설치할 때 Operator를 서브스크립션해야 하는 기본 채널입니다.

불일치하는 경우 이러한 주석을 사용하는 클러스터상의 Operator 레지스트리만 이 파일에 액세스할 수 있기 때문에 annotations.yaml 파일을 신뢰할 수 있습니다.

2.3.1.3. 종속 항목 파일

Operator의 종속 항목은 번들의 metadata/ 폴더에 있는 dependencies.yaml 파일에 나열되어 있습니다. 이 파일은 선택 사항이며 현재는 명시적인 Operator 버전 종속 항목을 지정하는 데만 사용됩니다.

종속성 목록에는 종속성의 유형을 지정하기 위해 각 항목에 대한 type 필드가 포함되어 있습니다. 지원되는 Operator 종속 항목에는 두 가지가 있습니다.

-

olm.package: 이 유형은 특정 Operator 버전에 대한 종속성을 나타냅니다. 종속 정보에는 패키지 이름과 패키지 버전이 semver 형식으로 포함되어야 합니다. 예를 들어0.5.2와 같은 정확한 버전이나>0.5.1과 같은 버전 범위를 지정할 수 있습니다. -

olm.gvk:gvk유형을 사용하면 작성자가 CSV의 기존 CRD 및 API 기반 사용과 유사하게 GVK(그룹/버전/종류) 정보로 종속성을 지정할 수 있습니다. 이 경로를 통해 Operator 작성자는 모든 종속 항목, API 또는 명시적 버전을 동일한 위치에 통합할 수 있습니다.

다음 예제에서는 Prometheus Operator 및 etcd CRD에 대한 종속 항목을 지정합니다.

dependencies.yaml 파일의 예

dependencies:

- type: olm.package

value:

packageName: prometheus

version: ">0.27.0"

- type: olm.gvk

value:

group: etcd.database.coreos.com

kind: EtcdCluster

version: v1beta22.3.1.4. opm 정보

opm CLI 툴은 Operator 번들 형식과 함께 사용할 수 있도록 Operator 프레임워크에서 제공합니다. 이 툴을 사용하면 소프트웨어 리포지토리와 유사한 인덱스라는 번들 목록에서 Operator 카탈로그를 생성하고 유지 관리할 수 있습니다. 결과적으로 인덱스 이미지라는 컨테이너 이미지를 컨테이너 레지스트리에 저장한 다음 클러스터에 설치할 수 있습니다.

인덱스에는 컨테이너 이미지 실행 시 제공된 포함 API를 통해 쿼리할 수 있는 Operator 매니페스트 콘텐츠에 대한 포인터 데이터베이스가 포함되어 있습니다. OpenShift Container Platform에서 OLM(Operator Lifecycle Manager)은 CatalogSource 오브젝트에서 인덱스 이미지를 카탈로그로 참조하여 사용할 수 있으며 주기적으로 이미지를 폴링하여 클러스터에 설치된 Operator를 자주 업데이트할 수 있습니다.

-

opmCLI 설치 단계는 CLI 툴을 참조하십시오.

2.3.2. 패키지 매니페스트 형식

Operator를 위한 패키지 매니페스트 형식은 Operator 프레임워크에서 도입한 레거시 패키지 형식입니다. 이 형식은 OpenShift Container Platform 4.5에서 더 이상 사용되지 않지만 계속 지원되며 Red Hat에서 제공하는 Operator는 현재 이 메서드를 사용하여 제공됩니다.

이 형식에서는 Operator 버전이 단일 CSV(클러스터 서비스 버전)와 일반적으로 CSV의 고유 API를 정의하는 CRD(사용자 정의 리소스 정의)로 표시되지만 추가 오브젝트가 포함될 수 있습니다.

모든 Operator 버전은 단일 디렉터리에 중첩됩니다.

패키지 매니페스트 형식 레이아웃 예제

etcd

├── 0.6.1

│ ├── etcdcluster.crd.yaml

│ └── etcdoperator.clusterserviceversion.yaml

├── 0.9.0

│ ├── etcdbackup.crd.yaml

│ ├── etcdcluster.crd.yaml

│ ├── etcdoperator.v0.9.0.clusterserviceversion.yaml

│ └── etcdrestore.crd.yaml

├── 0.9.2

│ ├── etcdbackup.crd.yaml

│ ├── etcdcluster.crd.yaml

│ ├── etcdoperator.v0.9.2.clusterserviceversion.yaml

│ └── etcdrestore.crd.yaml

└── etcd.package.yaml

또한 패키지 이름 및 채널 세부 정보를 정의하는 패키지 매니페스트인 <name>.package.yaml 파일도 포함합니다.

패키지 매니페스트 예제

packageName: etcd

channels:

- name: alpha

currentCSV: etcdoperator.v0.9.2

- name: beta

currentCSV: etcdoperator.v0.9.0

- name: stable

currentCSV: etcdoperator.v0.9.2

defaultChannel: alphaOperator 레지스트리 데이터베이스에 패키지 매니페스트를 로드할 때 다음 요구 사항이 검증됩니다.

- 모든 패키지에는 채널이 한 개 이상 있습니다.

- 패키지의 채널이 가리키는 모든 CSV가 존재합니다.

- Operator의 모든 버전에는 정확히 하나의 CSV가 있습니다.

- CSV에서 CRD를 보유하는 경우 해당 CRD는 Operator 버전의 디렉터리에 있어야 합니다.

- CSV가 교체되는 경우 기존 CSV와 새 CSV 둘 다 패키지에 있어야 합니다.

2.4. OLM(Operator Lifecycle Manager)

2.4.1. Operator Lifecycle Manager 개념 및 리소스

이 가이드에서는 OpenShift Container Platform에서 OLM(Operator Lifecycle Manager)을 구동하는 개념에 대한 개요를 제공합니다.

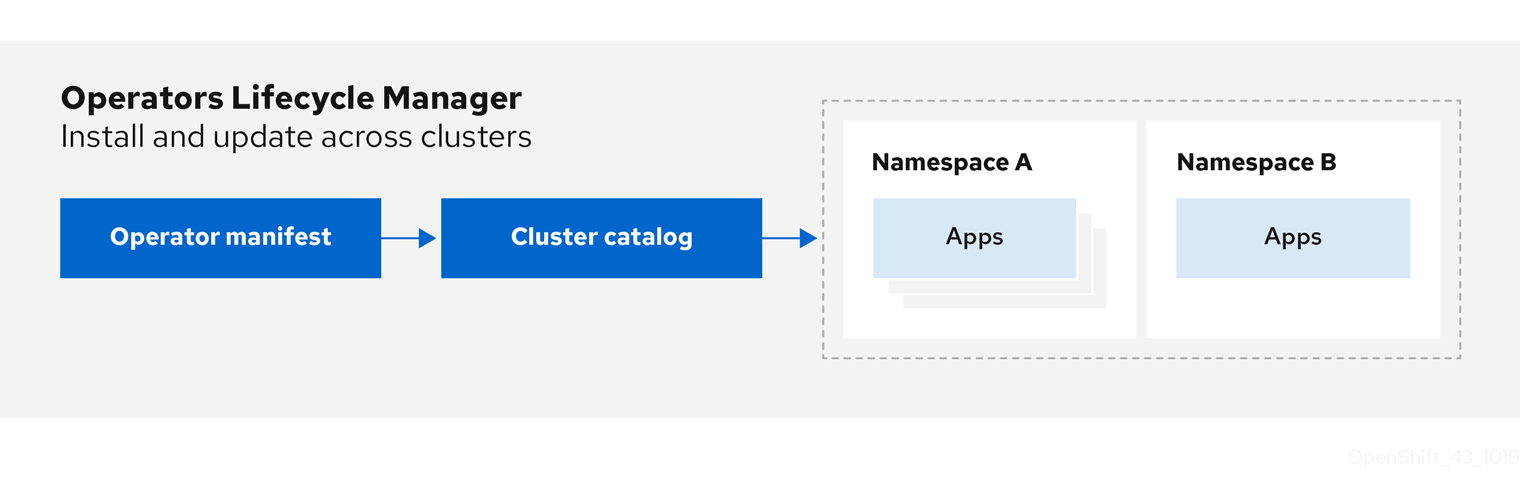

2.4.1.1. Operator Lifecycle Manager란?

OLM(Operator Lifecycle Manager)은 OpenShift Container Platform 클러스터에서 실행되는 Kubernetes 네이티브 애플리케이션(Operator) 및 관련 서비스의 라이프사이클을 설치, 업데이트, 관리하는 데 도움이 됩니다. Operator 프레임워크의 일부로, 효과적이고 자동화되었으며 확장 가능한 방식으로 Operator를 관리하도록 설계된 오픈 소스 툴킷입니다.

그림 2.2. Operator Lifecycle Manager 워크플로

OLM은 OpenShift Container Platform 4.6에서 기본적으로 실행되므로 클러스터 관리자가 클러스터에서 실행되는 Operator를 설치, 업그레이드 및 부여할 수 있습니다. OpenShift Container Platform 웹 콘솔은 클러스터 관리자가 Operator를 설치할 수 있는 관리 화면을 제공하고, 클러스터에 제공되는 Operator 카탈로그를 사용할 수 있는 액세스 권한을 특정 프로젝트에 부여합니다.

개발자의 경우 분야별 전문가가 아니어도 셀프서비스 경험을 통해 데이터베이스, 모니터링, 빅 데이터 서비스의 인스턴스를 프로비저닝하고 구성할 수 있습니다. Operator에서 해당 지식을 제공하기 때문입니다.

2.4.1.2. OLM 리소스

다음 CRD(사용자 정의 리소스 정의)는 OLM(Operator Lifecycle Manager)에서 정의하고 관리합니다.

| 리소스 | 짧은 이름 | 설명 |

|---|---|---|

|

|

| 애플리케이션 메타데이터입니다. 예를 들면 이름, 버전, 아이콘, 필수 리소스입니다. |

|

|

| 애플리케이션을 정의하는 CSV, CRD, 패키지의 리포지토리입니다. |

|

|

| 패키지의 채널을 추적하여 CSV를 최신 상태로 유지합니다. |

|

|

| CSV를 자동으로 설치하거나 업그레이드하기 위해 생성하는 계산된 리소스 목록입니다. |

|

|

|

동일한 네임스페이스에 배포된 모든 Operator를 |

2.4.1.2.1. 클러스터 서비스 버전

CSV(클러스터 서비스 버전)는 OpenShift Container Platform 클러스터에서 실행 중인 특정 버전의 Operator를 나타냅니다. 클러스터에서 Operator를 실행할 때 OLM(Operator Lifecycle Manager)을 지원하는 Operator 메타데이터에서 생성한 YAML 매니페스트입니다.

이러한 Operator 관련 메타데이터는 OLM이 클러스터에서 Operator가 계속 안전하게 실행되도록 유지하고 새 버전의 Operator가 게시되면 업데이트 적용 방법에 대한 정보를 제공하는 데 필요합니다. 이는 기존 운영 체제의 패키징 소프트웨어와 유사합니다. OLM 패키징 단계를 rpm, deb 또는 apk 번들을 생성하는 단계로 고려해 보십시오.

CSV에는 Operator 컨테이너 이미지와 함께 제공되는 메타데이터가 포함되며 이러한 데이터는 이름, 버전, 설명, 라벨, 리포지토리 링크, 로고와 같은 정보로 사용자 인터페이스를 채우는 데 사용됩니다.

CSV는 Operator를 실행하는 데 필요한 기술 정보의 소스이기도 합니다(예: RBAC 규칙, 클러스터 요구 사항, 설치 전략을 관리하고 사용하는 CR(사용자 정의 리소스)). 이 정보는 OLM에 필요한 리소스를 생성하고 Operator를 배포로 설정하는 방법을 지정합니다.

2.4.1.2.2. 카탈로그 소스

카탈로그 소스는 일반적으로 컨테이너 레지스트리에 저장된 인덱스 이미지를 참조하여 메타데이터 저장소를 나타냅니다. OLM(Operator Lifecycle Manager)은 카탈로그 소스를 쿼리하여 Operator 및 해당 종속성을 검색하고 설치합니다. OpenShift Container Platform 웹 콘솔의 OperatorHub에는 카탈로그 소스에서 제공하는 Operator도 표시됩니다.

클러스터 관리자는 웹 콘솔의 관리 → 클러스터 설정 → 글로벌 구성 → OperatorHub 페이지를 사용하여 클러스터에서 활성화된 카탈로그 소스에서 제공하는 전체 Operator 목록을 볼 수 있습니다.

CatalogSource 오브젝트의 spec은 Pod를 구성하는 방법 또는 Operator Registry gRPC API를 제공하는 서비스와 통신하는 방법을 나타냅니다.

예 2.1. CatalogSource 오브젝트의 예

apiVersion: operators.coreos.com/v1alpha1

kind: CatalogSource

metadata:

generation: 1

name: example-catalog

namespace: openshift-marketplace

spec:

displayName: Example Catalog

image: quay.io/example-org/example-catalog:v1

priority: -400

publisher: Example Org

sourceType: grpc

updateStrategy:

registryPoll:

interval: 30m0s

status:

connectionState:

address: example-catalog.openshift-marketplace.svc:50051

lastConnect: 2021-08-26T18:14:31Z

lastObservedState: READY

latestImageRegistryPoll: 2021-08-26T18:46:25Z

registryService:

createdAt: 2021-08-26T16:16:37Z

port: 50051

protocol: grpc

serviceName: example-catalog

serviceNamespace: openshift-marketplace- 1

CatalogSource오브젝트의 이름입니다. 이 값은 요청된 네임스페이스에 생성된 관련 Pod의 이름으로도 사용됩니다.- 2

- 사용 가능한 카탈로그를 만드는 네임스페이스입니다. 카탈로그를 모든 네임스페이스에서 클러스터 전체로 사용하려면 이 값을

openshift-marketplace로 설정합니다. 기본 Red Hat 제공 카탈로그 소스에서도openshift-marketplace네임스페이스를 사용합니다. 그러지 않으면 해당 네임스페이스에서만 Operator를 사용할 수 있도록 값을 특정 네임스페이스로 설정합니다. - 3

- 웹 콘솔 및 CLI에 있는 카탈로그의 표시 이름입니다.

- 4

- 카탈로그의 인덱스 이미지입니다.

- 5

- 카탈로그 소스의 가중치입니다. OLM은 종속성 확인 중에 가중치를 사용하여 우선순위를 지정합니다. 가중치가 높을수록 가중치가 낮은 카탈로그보다 카탈로그가 선호됨을 나타냅니다.

- 6

- 소스 유형에는 다음이 포함됩니다.

-

이미지참조가있는gRPC: OLM은 이미지를 가져와서 호환되는 API를 제공해야 하는 Pod를 실행합니다. -

address필드 -

configmap: OLM은 구성 맵 데이터를 구문 분석하고 이를 통해 gRPC API를 제공할 수 있는 Pod를 실행합니다.

-

- 7

- 지정된 간격으로 새 버전을 자동으로 확인하여 최신 상태를 유지합니다.

- 8

- 카탈로그 연결의 마지막으로 관찰된 상태입니다. 예를 들면 다음과 같습니다.

-

준비됨 : 연결이 성공적으로 설정되었습니다. -

연결 중 :연결이 설정하려고 시도하고 있습니다. -

TRANSIENT_FAILURE: 연결을 설정하려고 시도하는 동안 시간 제한과 같은 임시 문제가 발생했습니다. 상태는 결국CONNECTING으로 다시 전환되고 다시 연결 시도합니다.

자세한 내용은 gRPC 문서의 연결 상태를 참조하십시오.

-

- 9

- 카탈로그 이미지를 저장하는 컨테이너 레지스트리가 폴링되어 이미지가 최신 상태인지 확인할 수 있는 마지막 시간입니다.

- 10

- 카탈로그의 Operator 레지스트리 서비스의 상태 정보입니다.

서브스크립션의 CatalogSource 오브젝트 name을 참조하면 요청된 Operator를 찾기 위해 검색할 위치를 OLM에 지시합니다.

예 2.2. 카탈로그 소스를 참조하는 Subscription 오브젝트의 예

apiVersion: operators.coreos.com/v1alpha1

kind: Subscription

metadata:

name: example-operator

namespace: example-namespace

spec:

channel: stable

name: example-operator

source: example-catalog

sourceNamespace: openshift-marketplace2.4.1.2.3. 서브스크립션

Subscription 오브젝트에서 정의하는 서브스크립션은 Operator를 설치하려는 의도를 나타냅니다. Operator와 카탈로그 소스를 연결하는 사용자 정의 리소스입니다.

서브스크립션은 Operator 패키지에서 구독할 채널과 업데이트를 자동 또는 수동으로 수행할지를 나타냅니다. 자동으로 설정된 경우 OLM(Operator Lifecycle Manager)은 서브스크립션을 통해 클러스터에서 항상 최신 버전의 Operator가 실행되도록 Operator를 관리하고 업그레이드합니다.

Subscription 개체 예

apiVersion: operators.coreos.com/v1alpha1

kind: Subscription

metadata:

name: example-operator

namespace: example-namespace

spec:

channel: stable

name: example-operator

source: example-catalog

sourceNamespace: openshift-marketplace

이 Subscription 오브젝트는 Operator의 이름 및 네임스페이스, Operator 데이터를 확인할 수 있는 카탈로그를 정의합니다. alpha, beta 또는 stable과 같은 채널은 카탈로그 소스에서 설치해야 하는 Operator 스트림을 결정하는 데 도움이 됩니다.

서브스크립션에서 채널 이름은 Operator마다 다를 수 있지만 이름 지정 스키마는 지정된 Operator 내의 공통 규칙을 따라야 합니다. 예를 들어 채널 이름은 Operator(1.2, 1.3) 또는 릴리스 빈도(stable, fast)에서 제공하는 애플리케이션의 마이너 릴리스 업데이트 스트림을 따를 수 있습니다.

OpenShift Container Platform 웹 콘솔에서 쉽게 확인할 수 있을 뿐만 아니라 관련 서브스크립션의 상태를 검사하여 사용 가능한 최신 버전의 Operator가 있는 경우 이를 확인할 수 있습니다. currentCSV 필드와 연결된 값은 OLM에 알려진 최신 버전이고 installedCSV는 클러스터에 설치된 버전입니다.

2.4.1.2.4. 설치 계획

InstallPlan 오브젝트에서 정의하는 설치 계획은 OLM(Operator Lifecycle Manager)에서 특정 버전의 Operator로 설치 또는 업그레이드하기 위해 생성하는 리소스 세트를 설명합니다. 버전은 CSV(클러스터 서비스 버전)에서 정의합니다.

Operator, 클러스터 관리자 또는 Operator 설치 권한이 부여된 사용자를 설치하려면 먼저 Subscription 오브젝트를 생성해야 합니다. 서브스크립션은 카탈로그 소스에서 사용 가능한 Operator 버전의 스트림을 구독하려는 의도를 나타냅니다. 그런 다음 서브스크립션을 통해 Operator의 리소스를 쉽게 설치할 수 있도록 InstallPlan 오브젝트가 생성됩니다.

그런 다음 다음 승인 전략 중 하나에 따라 설치 계획을 승인해야 합니다.

-

서브스크립션의

spec.installPlanApproval필드가Automatic로 설정된 경우 설치 계획이 자동으로 승인됩니다. -

서브스크립션의

spec.installPlanApproval필드가Manual로 설정된 경우 클러스터 관리자 또는 적절한 권한이 있는 사용자가 설치 계획을 수동으로 승인해야 합니다.

설치 계획이 승인되면 OLM에서 지정된 리소스를 생성하고 서브스크립션에서 지정한 네임스페이스에 Operator를 설치합니다.

예 2.3. InstallPlan 오브젝트의 예

apiVersion: operators.coreos.com/v1alpha1

kind: InstallPlan

metadata:

name: install-abcde

namespace: operators

spec:

approval: Automatic

approved: true

clusterServiceVersionNames:

- my-operator.v1.0.1

generation: 1

status:

...

catalogSources: []

conditions:

- lastTransitionTime: '2021-01-01T20:17:27Z'

lastUpdateTime: '2021-01-01T20:17:27Z'

status: 'True'

type: Installed

phase: Complete

plan:

- resolving: my-operator.v1.0.1

resource:

group: operators.coreos.com

kind: ClusterServiceVersion

manifest: >-

...

name: my-operator.v1.0.1

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1alpha1

status: Created

- resolving: my-operator.v1.0.1

resource:

group: apiextensions.k8s.io

kind: CustomResourceDefinition

manifest: >-

...

name: webservers.web.servers.org

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1beta1

status: Created

- resolving: my-operator.v1.0.1

resource:

group: ''

kind: ServiceAccount

manifest: >-

...

name: my-operator

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1

status: Created

- resolving: my-operator.v1.0.1

resource:

group: rbac.authorization.k8s.io

kind: Role

manifest: >-

...

name: my-operator.v1.0.1-my-operator-6d7cbc6f57

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1

status: Created

- resolving: my-operator.v1.0.1

resource:

group: rbac.authorization.k8s.io

kind: RoleBinding

manifest: >-

...

name: my-operator.v1.0.1-my-operator-6d7cbc6f57

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1

status: Created

...2.4.1.2.5. Operator groups

OperatorGroup 리소스에서 정의하는 Operator group에서는 OLM에서 설치한 Operator에 다중 테넌트 구성을 제공합니다. Operator group은 멤버 Operator에 필요한 RBAC 액세스 권한을 생성할 대상 네임스페이스를 선택합니다.

대상 네임스페이스 세트는 쉼표로 구분된 문자열 형식으로 제공되며 CSV(클러스터 서비스 버전)의 olm.targetNamespaces 주석에 저장되어 있습니다. 이 주석은 멤버 Operator의 CSV 인스턴스에 적용되며 해당 배포에 프로젝션됩니다.

2.4.2. Operator Lifecycle Manager 아키텍처

이 가이드에서는 OpenShift Container Platform의 OLM(Operator Lifecycle Manager) 구성 요소 아키텍처를 간략하게 설명합니다.

2.4.2.1. 구성 요소의 역할

OLM(Operator Lifecycle Manager)은 OLM Operator와 Catalog Operator의 두 Operator로 구성됩니다.

각 Operator는 OLM 프레임워크의 기반이 되는 CRD(사용자 정의 리소스 정의)를 관리합니다.

| 리소스 | 짧은 이름 | 소유자 | Description |

|---|---|---|---|

|

|

| OLM | 애플리케이션 메타데이터: 이름, 버전, 아이콘, 필수 리소스, 설치 등입니다. |

|

|

| 카탈로그 | CSV를 자동으로 설치하거나 업그레이드하기 위해 생성하는 계산된 리소스 목록입니다. |

|

|

| 카탈로그 | 애플리케이션을 정의하는 CSV, CRD, 패키지의 리포지토리입니다. |

|

|

| 카탈로그 | 패키지의 채널을 추적하여 CSV를 최신 상태로 유지하는 데 사용됩니다. |

|

|

| OLM |

동일한 네임스페이스에 배포된 모든 Operator를 |

또한 각 Operator는 다음 리소스를 생성합니다.

| 리소스 | 소유자 |

|---|---|

|

| OLM |

|

| |

|

| |

|

| |

|

CRD( | 카탈로그 |

|

|

2.4.2.2. OLM Operator

CSV에 지정된 필수 리소스가 클러스터에 제공되면 OLM Operator는 CSV 리소스에서 정의하는 애플리케이션을 배포합니다.

OLM Operator는 필수 리소스 생성과는 관련이 없습니다. CLI 또는 Catalog Operator를 사용하여 이러한 리소스를 수동으로 생성하도록 선택할 수 있습니다. 이와 같은 분리를 통해 사용자는 애플리케이션에 활용하기 위해 선택하는 OLM 프레임워크의 양을 점차 늘리며 구매할 수 있습니다.

OLM Operator에서는 다음 워크플로를 사용합니다.

- 네임스페이스에서 CSV(클러스터 서비스 버전)를 조사하고 해당 요구 사항이 충족되는지 확인합니다.

요구사항이 충족되면 CSV에 대한 설치 전략을 실행합니다.

참고설치 전략을 실행하기 위해서는 CSV가 Operator group의 활성 멤버여야 합니다.

2.4.2.3. Catalog Operator

Catalog Operator는 CSV(클러스터 서비스 버전) 및 CSV에서 지정하는 필수 리소스를 확인하고 설치합니다. 또한 채널에서 패키지 업데이트에 대한 카탈로그 소스를 조사하고 원하는 경우 사용 가능한 최신 버전으로 자동으로 업그레이드합니다.

채널에서 패키지를 추적하려면 원하는 패키지를 구성하는 Subscription 오브젝트, 채널, 업데이트를 가져오는 데 사용할 CatalogSource 오브젝트를 생성하면 됩니다. 업데이트가 확인되면 사용자를 대신하여 네임스페이스에 적절한 InstallPlan 오브젝트를 기록합니다.

Catalog Operator에서는 다음 워크플로를 사용합니다.

- 클러스터의 각 카탈로그 소스에 연결합니다.

사용자가 생성한 설치 계획 중 확인되지 않은 계획이 있는지 조사하고 있는 경우 다음을 수행합니다.

- 요청한 이름과 일치하는 CSV를 찾아 확인된 리소스로 추가합니다.

- 각 관리 또는 필수 CRD의 경우 CRD를 확인된 리소스로 추가합니다.

- 각 필수 CRD에 대해 이를 관리하는 CSV를 확인합니다.

- 확인된 설치 계획을 조사하고 사용자의 승인에 따라 또는 자동으로 해당 계획에 대해 검색된 리소스를 모두 생성합니다.

- 카탈로그 소스 및 서브스크립션을 조사하고 이에 따라 설치 계획을 생성합니다.

2.4.2.4. 카탈로그 레지스트리

Catalog 레지스트리는 클러스터에서 생성할 CSV 및 CRD를 저장하고 패키지 및 채널에 대한 메타데이터를 저장합니다.

패키지 매니페스트는 패키지 ID를 CSV 세트와 연결하는 카탈로그 레지스트리의 항목입니다. 패키지 내에서 채널은 특정 CSV를 가리킵니다. CSV는 교체하는 CSV를 명시적으로 참조하므로 패키지 매니페스트는 Catalog Operator에 각 중간 버전을 거쳐 CSV를 최신 버전으로 업데이트하는 데 필요한 모든 정보를 제공합니다.

2.4.3. Operator Lifecycle Manager 워크플로

이 가이드에서는 OpenShift Container Platform의 OLM(Operator Lifecycle Manager)의 워크플로를 간략하게 설명합니다.

2.4.3.1. OLM의 Operator 설치 및 업그레이드 워크플로

OLM(Operator Lifecycle Manager) 에코시스템에서 다음 리소스를 사용하여 Operator 설치 및 업그레이드를 확인합니다.

-

ClusterServiceVersion(CSV) -

CatalogSource -

서브스크립션

CSV에 정의된 Operator 메타데이터는 카탈로그 소스라는 컬렉션에 저장할 수 있습니다. OLM은 Operator Registry API를 사용하는 카탈로그 소스를 통해 사용 가능한 Operator와 설치된 Operator의 업그레이드를 쿼리합니다.

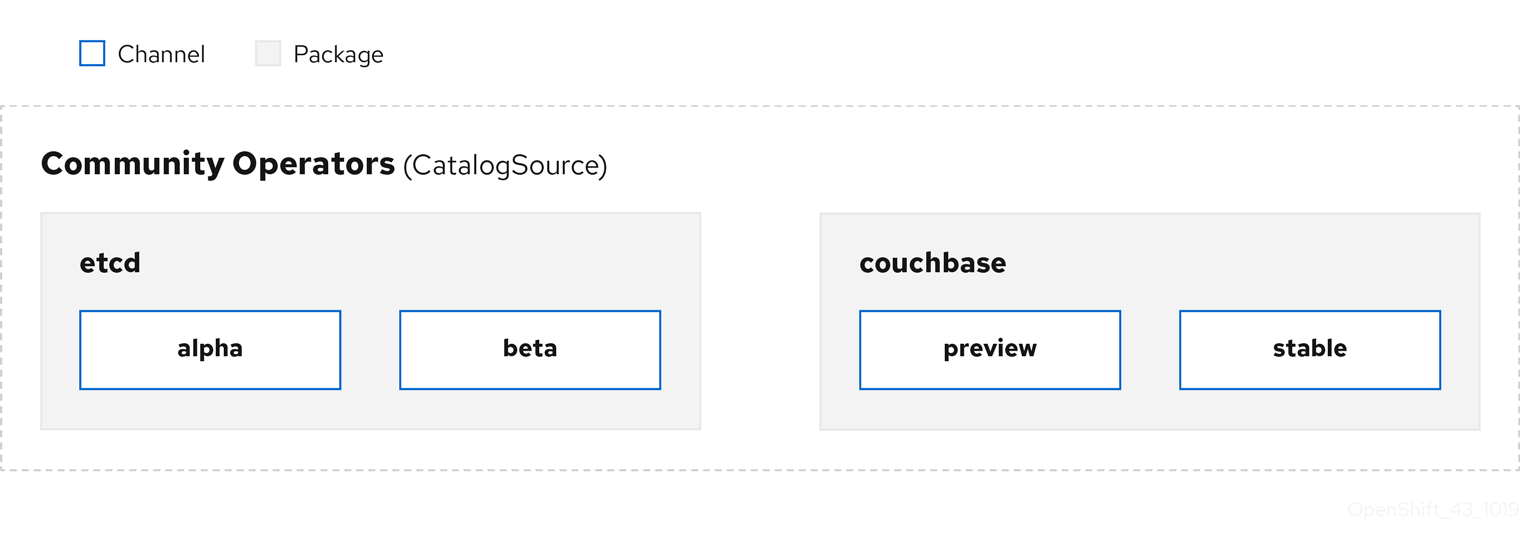

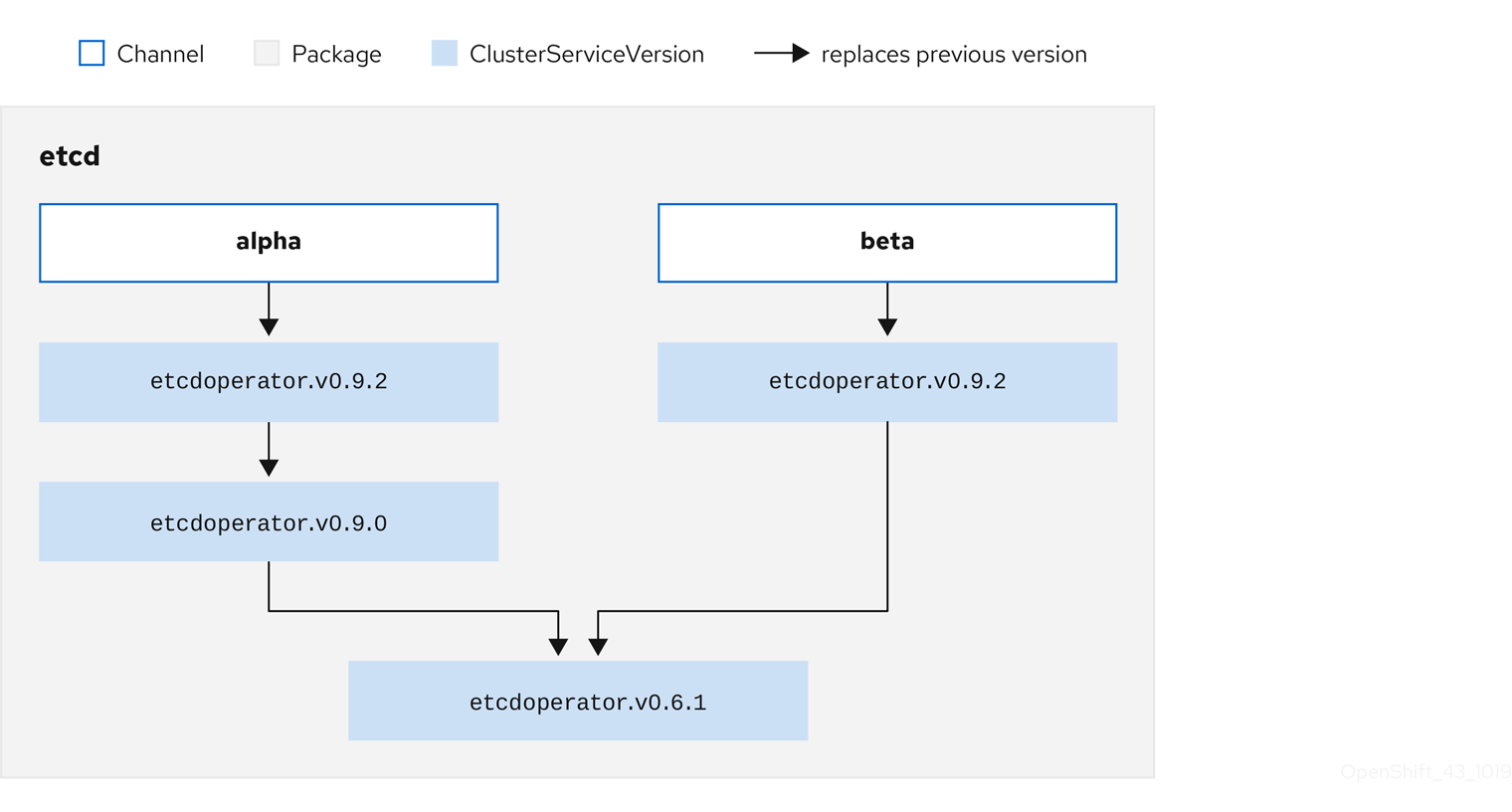

그림 2.3. 카탈로그 소스 개요

Operator는 카탈로그 소스 내에서 패키지와 채널이라는 업데이트 스트림으로 구성되는데, 채널은 웹 브라우저와 같이 연속 릴리스 주기에서 OpenShift Container Platform 또는 기타 소프트웨어에 친숙한 업데이트 패턴이어야 합니다.

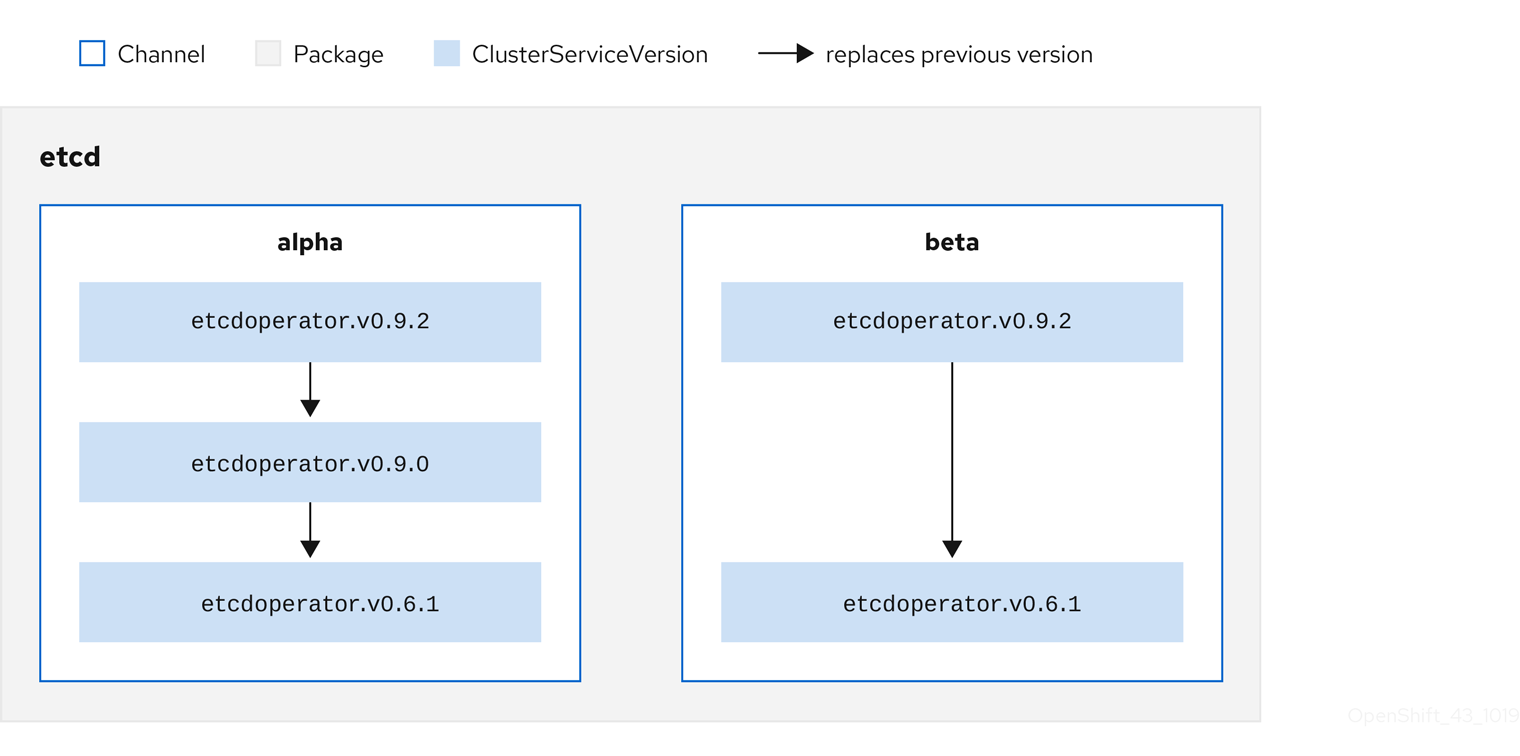

그림 2.4. 카탈로그 소스의 패키지 및 채널

사용자는 서브스크립션의 특정 카탈로그 소스에서 특정 패키지 및 채널(예: etcd 패키지 및 해당 alpha 채널)을 나타냅니다. 네임스페이스에 아직 설치되지 않은 패키지에 서브스크립션이 생성되면 해당 패키지의 최신 Operator가 설치됩니다.

OLM에서는 의도적으로 버전을 비교하지 않으므로 지정된 카탈로그 → 채널 → 패키지 경로에서 사용 가능한 "최신" Operator의 버전 번호가 가장 높은 버전 번호일 필요는 없습니다. Git 리포지토리와 유사하게 채널의 헤드 참조로 간주해야 합니다.

각 CSV에는 교체 대상 Operator를 나타내는 replaces 매개변수가 있습니다. 이 매개변수를 통해 OLM에서 쿼리할 수 있는 CSV 그래프가 빌드되고 업데이트를 채널 간에 공유할 수 있습니다. 채널은 업데이트 그래프의 진입점으로 간주할 수 있습니다.

그림 2.5. 사용 가능한 채널 업데이트의 OLM 그래프

패키지에 포함된 채널의 예

packageName: example

channels:

- name: alpha

currentCSV: example.v0.1.2

- name: beta

currentCSV: example.v0.1.3

defaultChannel: alpha

OLM에서 카탈로그 소스, 패키지, 채널, CSV와 관련된 업데이트를 쿼리하려면 카탈로그에서 입력 CSV를 replaces하는 단일 CSV를 모호하지 않게 결정적으로 반환할 수 있어야 합니다.

2.4.3.1.1. 업그레이드 경로의 예

업그레이드 시나리오 예제에서는 CSV 버전 0.1.1에 해당하는 Operator가 설치되어 있는 것으로 간주합니다. OLM은 카탈로그 소스를 쿼리하고 구독 채널에서 이전 버전이지만 설치되지 않은 CSV 버전 0.1.2를 교체하는(결국 설치된 이전 CSV 버전 0.1.1을 교체함) 새 CSV 버전 0.1.3이 포함된 업그레이드를 탐지합니다.

OLM은 CSV에 지정된 replaces 필드를 통해 채널 헤드에서 이전 버전으로 돌아가 업그레이드 경로 0.1.3 → 0.1.2 → 0.1.1을 결정합니다. 화살표 방향은 전자가 후자를 대체함을 나타냅니다. OLM은 채널 헤드에 도달할 때까지 Operator 버전을 한 번에 하나씩 업그레이드합니다.

지정된 이 시나리오의 경우 OLM은 Operator 버전 0.1.2를 설치하여 기존 Operator 버전 0.1.1을 교체합니다. 그런 다음 Operator 버전 0.1.3을 설치하여 이전에 설치한 Operator 버전 0.1.2를 대체합니다. 이 시점에 설치한 Operator 버전 0.1.3이 채널 헤드와 일치하며 업그레이드가 완료됩니다.

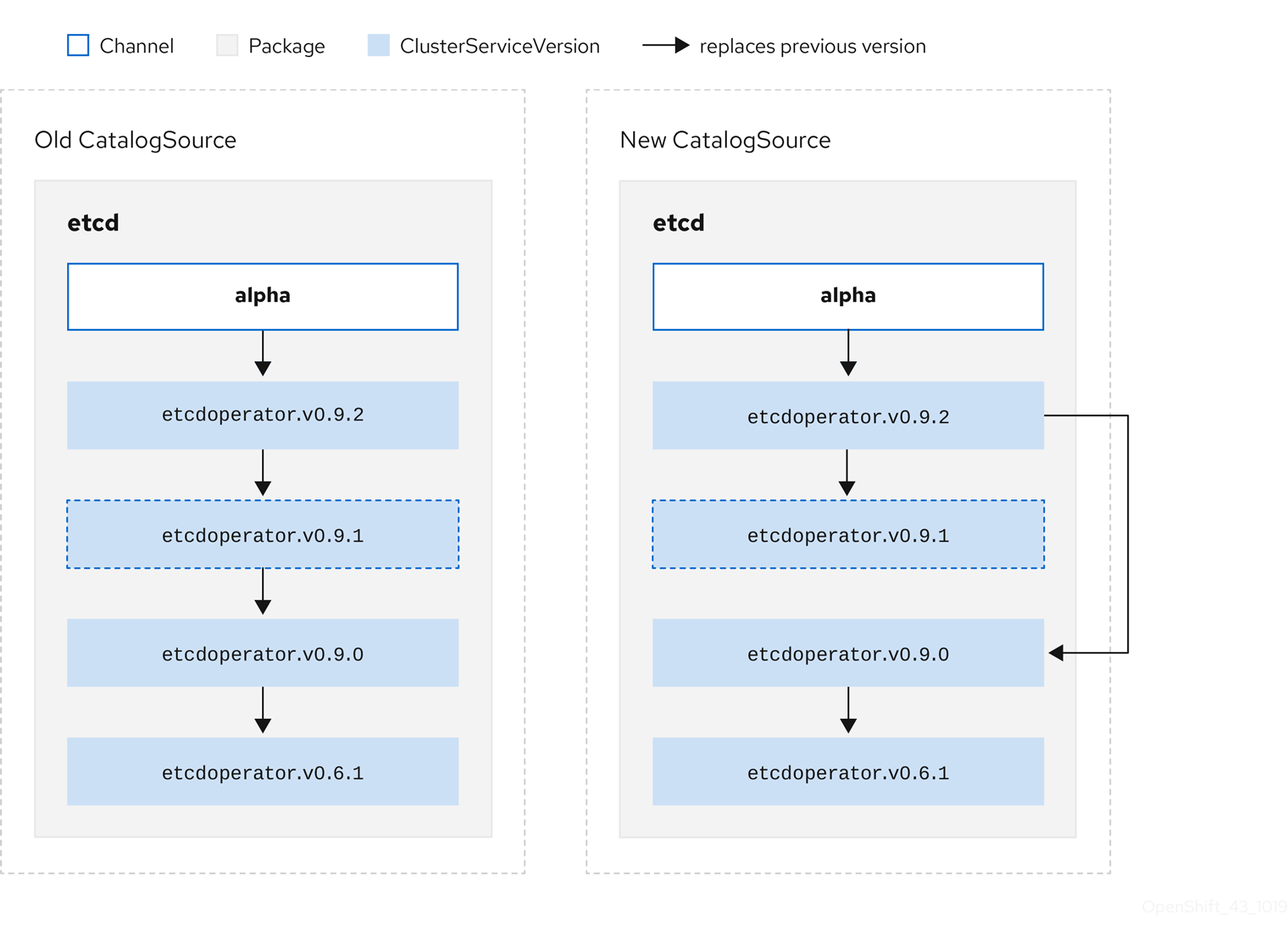

2.4.3.1.2. 업그레이드 건너뛰기

OLM의 기본 업그레이드 경로는 다음과 같습니다.

- 카탈로그 소스는 Operator에 대한 하나 이상의 업데이트로 업데이트됩니다.

- OLM은 카탈로그 소스에 포함된 최신 버전에 도달할 때까지 Operator의 모든 버전을 트래버스합니다.

그러나 경우에 따라 이 작업을 수행하는 것이 안전하지 않을 수 있습니다. 게시된 버전의 Operator가 아직 설치되지 않은 경우 클러스터에 설치해서는 안 되는 경우가 있습니다. 예를 들면 버전에 심각한 취약성이 있기 때문입니다.

이러한 경우 OLM에서는 두 가지 클러스터 상태를 고려하여 다음을 둘 다 지원하는 업데이트 그래프를 제공해야 합니다.

- "잘못"된 중간 Operator가 클러스터에 표시되고 설치되었습니다.

- "잘못된" 중간 Operator가 클러스터에 아직 설치되지 않았습니다.

새 카탈로그를 제공하고 건너뛰기 릴리스를 추가하면 클러스터 상태 및 잘못된 업데이트가 있는지와 관계없이 OLM에서 항상 고유한 단일 업데이트를 가져올 수 있습니다.

릴리스를 건너뛰는 CSV의 예

apiVersion: operators.coreos.com/v1alpha1

kind: ClusterServiceVersion

metadata:

name: etcdoperator.v0.9.2

namespace: placeholder

annotations:

spec:

displayName: etcd

description: Etcd Operator

replaces: etcdoperator.v0.9.0

skips:

- etcdoperator.v0.9.1기존 CatalogSource 및 새 CatalogSource의 다음 예제를 고려하십시오.

그림 2.6. 업데이트 건너뛰기

이 그래프에는 다음이 유지됩니다.

- 기존 CatalogSource에 있는 모든 Operator에는 새 CatalogSource에 단일 대체 항목이 있습니다.

- 새 CatalogSource에 있는 모든 Operator에는 새 CatalogSource에 단일 대체 항목이 있습니다.

- 잘못된 업데이트가 아직 설치되지 않은 경우 설치되지 않습니다.

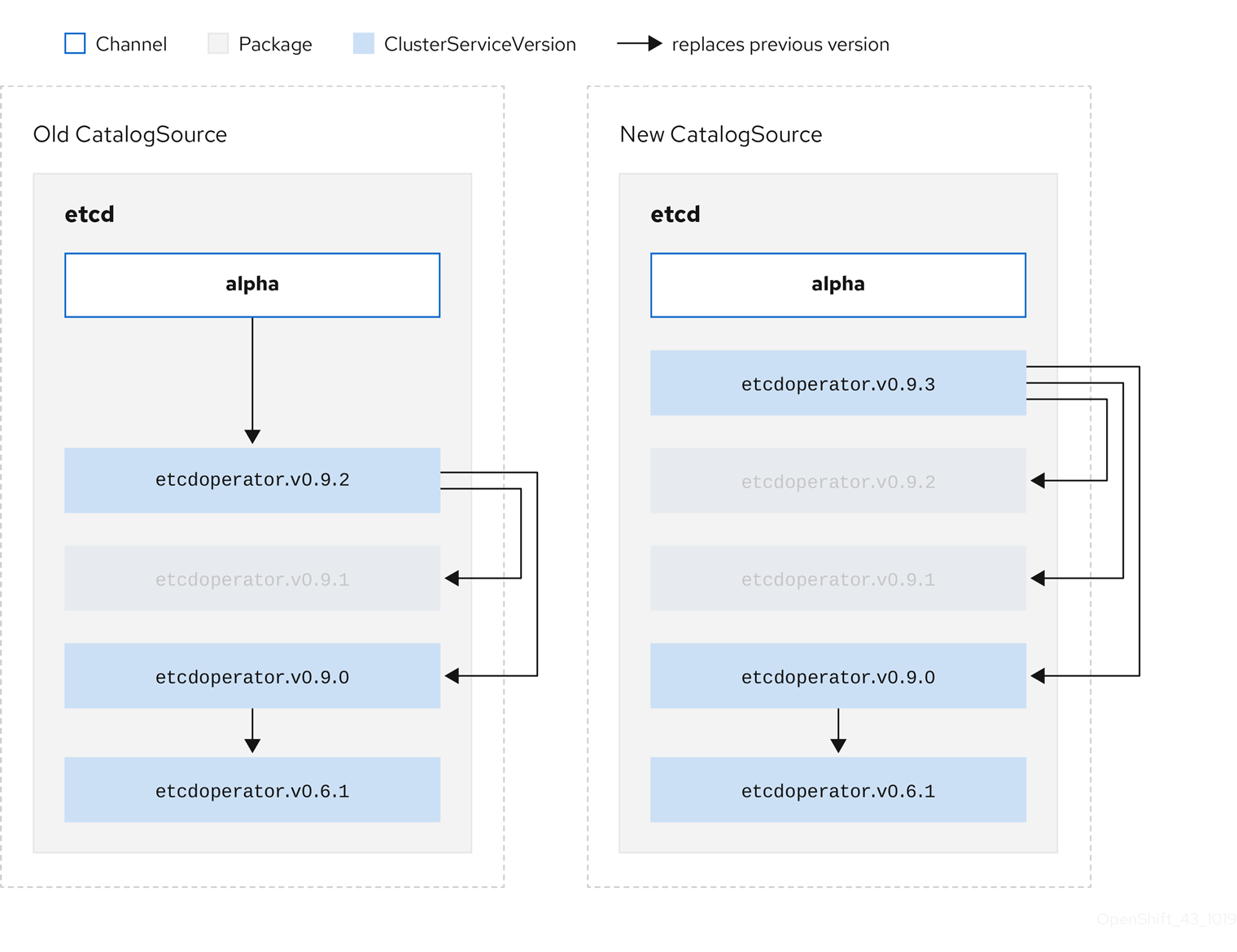

2.4.3.1.3. 여러 Operator 교체

설명된 새 CatalogSource를 생성하려면 하나의 Operator를 replace하지만 여러 Operator를 건너뛸 수 있는 CSV를 게시해야 합니다. 이 작업은 skipRange 주석을 사용하여 수행할 수 있습니다.

olm.skipRange: <semver_range>

여기서 <semver_range>에는 semver 라이브러리에서 지원하는 버전 범위 형식이 있습니다.

카탈로그에서 업데이트를 검색할 때 채널 헤드에 skipRange 주석이 있고 현재 설치된 Operator에 범위에 해당하는 버전 필드가 있는 경우 OLM이 채널의 최신 항목으로 업데이트됩니다.

우선순위 순서는 다음과 같습니다.

-

기타 건너뛰기 기준이 충족되는 경우 서브스크립션의

sourceName에 지정된 소스의 채널 헤드 -

sourceName에 지정된 소스의 현재 Operator를 대체하는 다음 Operator - 기타 건너뛰기 조건이 충족되는 경우 서브스크립션에 표시되는 다른 소스의 채널 헤드.

- 서브스크립션에 표시되는 모든 소스의 현재 Operator를 대체하는 다음 Operator.

skipRange가 있는 CSV의 예

apiVersion: operators.coreos.com/v1alpha1

kind: ClusterServiceVersion

metadata:

name: elasticsearch-operator.v4.1.2

namespace: <namespace>

annotations:

olm.skipRange: '>=4.1.0 <4.1.2'2.4.3.1.4. z-stream 지원

마이너 버전이 동일한 경우 z-stream 또는 패치 릴리스로 이전 z-stream 릴리스를 모두 교체해야 합니다. OLM은 메이저, 마이너 또는 패치 버전을 구분하지 않으므로 카탈로그에 올바른 그래프만 빌드해야 합니다.

즉 OLM은 이전 CatalogSource에서와 같이 그래프를 가져올 수 있어야 하고 이전과 유사하게 새 CatalogSource에서와 같이 그래프를 생성할 수 있어야 합니다.

그림 2.7. 여러 Operator 교체

이 그래프에는 다음이 유지됩니다.

- 기존 CatalogSource에 있는 모든 Operator에는 새 CatalogSource에 단일 대체 항목이 있습니다.

- 새 CatalogSource에 있는 모든 Operator에는 새 CatalogSource에 단일 대체 항목이 있습니다.

- 이전 CatalogSource의 모든 z-stream 릴리스가 새 CatalogSource의 최신 z-stream 릴리스로 업데이트됩니다.

- 사용할 수 없는 릴리스는 "가상" 그래프 노드로 간주할 수 있습니다. 해당 콘텐츠가 존재할 필요는 없으며 그래프가 이와 같은 경우 레지스트리에서 응답하기만 하면 됩니다.

2.4.4. Operator Lifecycle Manager 종속성 확인

이 가이드에서는 OpenShift Container Platform의 OLM(Operator Lifecycle Manager)을 사용한 종속성 해결 및 CRD(사용자 정의 리소스 정의) 업그레이드 라이프사이클에 대해 간단히 설명합니다.

2.4.4.1. 종속성 확인 정보

OLM은 실행 중인 Operator의 종속성 확인 및 업그레이드 라이프사이클을 관리합니다. OLM에서 발생하는 문제는 다양한 방식에서 yum 및 rpm과 같은 기타 운영 체제 패키지 관리자와 유사합니다.

그러나 OLM에는 유사한 시스템에는 일반적으로 해당하지 않는 한 가지 제약 조건이 있습니다. 즉 Operator가 항상 실행되고 있으므로 OLM에서 서로 함께 작동하지 않는 Operator 세트를 제공하지 않도록 합니다.

즉 OLM에서 다음을 수행하지 않아야 합니다.

- 제공할 수 없는 API가 필요한 Operator 세트를 설치합니다.

- Operator에 종속된 다른 Operator를 중단하는 방식으로 Operator를 업데이트합니다.

2.4.4.2. 종속 항목 파일

Operator의 종속 항목은 번들의 metadata/ 폴더에 있는 dependencies.yaml 파일에 나열되어 있습니다. 이 파일은 선택 사항이며 현재는 명시적인 Operator 버전 종속 항목을 지정하는 데만 사용됩니다.

종속성 목록에는 종속성의 유형을 지정하기 위해 각 항목에 대한 type 필드가 포함되어 있습니다. 지원되는 Operator 종속 항목에는 두 가지가 있습니다.

-

olm.package: 이 유형은 특정 Operator 버전에 대한 종속성을 나타냅니다. 종속 정보에는 패키지 이름과 패키지 버전이 semver 형식으로 포함되어야 합니다. 예를 들어0.5.2와 같은 정확한 버전이나>0.5.1과 같은 버전 범위를 지정할 수 있습니다. -

olm.gvk:gvk유형을 사용하면 작성자가 CSV의 기존 CRD 및 API 기반 사용과 유사하게 GVK(그룹/버전/종류) 정보로 종속성을 지정할 수 있습니다. 이 경로를 통해 Operator 작성자는 모든 종속 항목, API 또는 명시적 버전을 동일한 위치에 통합할 수 있습니다.

다음 예제에서는 Prometheus Operator 및 etcd CRD에 대한 종속 항목을 지정합니다.

dependencies.yaml 파일의 예

dependencies:

- type: olm.package

value:

packageName: prometheus

version: ">0.27.0"

- type: olm.gvk

value:

group: etcd.database.coreos.com

kind: EtcdCluster

version: v1beta22.4.4.3. 종속 기본 설정

Operator의 종속성을 동등하게 충족하는 옵션이 여러 개가 있을 수 있습니다. OLM(Operator Lifecycle Manager)의 종속성 확인자는 요청된 Operator의 요구 사항에 가장 적합한 옵션을 결정합니다. Operator 작성자 또는 사용자에게는 명확한 종속성 확인을 위해 이러한 선택이 어떻게 이루어지는지 이해하는 것이 중요할 수 있습니다.

2.4.4.3.1. 카탈로그 우선순위

OpenShift Container Platform 클러스터에서 OLM은 카탈로그 소스를 읽고 설치에 사용할 수 있는 Operator를 확인합니다.

CatalogSource 오브젝트의 예

apiVersion: "operators.coreos.com/v1alpha1"

kind: "CatalogSource"

metadata:

name: "my-operators"

namespace: "operators"

spec:

sourceType: grpc

image: example.com/my/operator-index:v1

displayName: "My Operators"

priority: 100

CatalogSource 오브젝트에는 priority 필드가 있으며 확인자는 이 필드를 통해 종속성에 대한 옵션의 우선순위를 부여하는 방법을 확인합니다.

카탈로그 기본 설정을 관리하는 규칙에는 다음 두 가지가 있습니다.

- 우선순위가 높은 카탈로그의 옵션이 우선순위가 낮은 카탈로그의 옵션보다 우선합니다.

- 종속 항목과 동일한 카탈로그의 옵션이 다른 카탈로그보다 우선합니다.

2.4.4.3.2. 채널 순서

카탈로그의 Operator 패키지는 사용자가 OpenShift Container Platform 클러스터에서 구독할 수 있는 업데이트 채널 컬렉션입니다. 채널은 마이너 릴리스(1.2, 1.3) 또는 릴리스 빈도(stable, fast)에 대해 특정 업데이트 스트림을 제공하는 데 사용할 수 있습니다.

동일한 패키지에 있지만 채널이 다른 Operator로 종속성을 충족할 수 있습니다. 예를 들어 버전 1.2의 Operator는 stable 및 fast 채널 모두에 존재할 수 있습니다.

각 패키지에는 기본이 채널이 있으며 항상 기본이 아닌 채널에 우선합니다. 기본 채널의 옵션으로 종속성을 충족할 수 없는 경우 남아 있는 채널의 옵션을 채널 이름의 사전 순으로 고려합니다.

2.4.4.3.3. 채널 내 순서

대부분의 경우 단일 채널 내에는 종속성을 충족하는 옵션이 여러 개 있습니다. 예를 들어 하나의 패키지 및 채널에 있는 Operator에서는 동일한 API 세트를 제공합니다.

이는 사용자가 서브스크립션을 생성할 때 업데이트를 수신하는 채널을 나타냅니다. 이를 통해 검색 범위가 이 하나의 채널로 즉시 줄어듭니다. 하지만 채널 내에서 다수의 Operator가 종속성을 충족할 수 있습니다.

채널 내에서는 업데이트 그래프에서 더 높이 있는 최신 Operator가 우선합니다. 채널 헤드에서 종속성을 충족하면 먼저 시도됩니다.

2.4.4.3.4. 기타 제약 조건

패키지 종속 항목에서 제공하는 제약 조건 외에도 OLM에는 필요한 사용자 상태를 나타내고 확인 불변성을 적용하는 추가 제약 조건이 포함됩니다.

2.4.4.3.4.1. 서브스크립션 제약 조건

서브스크립션 제약 조건은 서브스크립션을 충족할 수 있는 Operator 세트를 필터링합니다. 서브스크립션은 종속성 확인자에 대한 사용자 제공 제약 조건입니다. Operator가 클러스터에 없는 경우 새 Operator를 설치하거나 기존 Operator를 계속 업데이트할지를 선언합니다.

2.4.4.3.4.2. 패키지 제약 조건

하나의 네임스페이스 내에 동일한 패키지의 두 Operator가 제공되지 않을 수 있습니다.

2.4.4.4. CRD 업그레이드

OLM은 CRD(사용자 정의 리소스 정의)가 단수형 CSV(클러스터 서비스 버전)에 속하는 경우 CRD를 즉시 업그레이드합니다. CRD가 여러 CSV에 속하는 경우에는 다음과 같은 하위 호환 조건을 모두 충족할 때 CRD가 업그레이드됩니다.

- 현재 CRD의 기존 서비스 버전은 모두 새 CRD에 있습니다.

- CRD 제공 버전과 연결된 기존의 모든 인스턴스 또는 사용자 정의 리소스는 새 CRD의 검증 스키마에 대해 검증할 때 유효합니다.

2.4.4.5. 종속성 모범 사례

종속 항목을 지정할 때는 모범 사례를 고려해야 합니다.

- API 또는 특정 버전의 Operator 범위에 따라

-

Operator는 언제든지 API를 추가하거나 제거할 수 있습니다. 항상 Operator에서 요구하는 API에

olm.gvk종속성을 지정합니다. 이에 대한 예외는 대신olm.package제약 조건을 지정하는 경우입니다. - 최소 버전 설정

API 변경에 대한 Kubernetes 설명서에서는 Kubernetes 스타일 Operator에 허용되는 변경 사항을 설명합니다. 이러한 버전 관리 규칙을 사용하면 API가 이전 버전과 호환되는 경우 Operator에서 API 버전 충돌 없이 API를 업데이트할 수 있습니다.

Operator 종속 항목의 경우 이는 API 버전의 종속성을 확인하는 것으로는 종속 Operator가 의도한 대로 작동하는지 확인하는 데 충분하지 않을 수 있을 의미합니다.

예를 들면 다음과 같습니다.

-

TestOperator v1.0.0에서는 v1alpha1 API 버전의

MyObject리소스를 제공합니다. -

TestOperator v1.0.1에서는 새 필드

spec.newfield를MyObject에 추가하지만 여전히 v1alpha1입니다.

Operator에

spec.newfield를MyObject리소스에 쓰는 기능이 필요할 수 있습니다.olm.gvk제약 조건만으로는 OLM에서 TestOperator v1.0.0이 아닌 TestOperator v1.0.1이 필요한 것으로 판단하는 데 충분하지 않습니다.가능한 경우 API를 제공하는 특정 Operator를 미리 알고 있는 경우 추가

olm.package제약 조건을 지정하여 최솟값을 설정합니다.-

TestOperator v1.0.0에서는 v1alpha1 API 버전의

- 최대 버전 생략 또는 광범위한 범위 허용

Operator는 API 서비스 및 CRD와 같은 클러스터 범위의 리소스를 제공하기 때문에 짧은 종속성 기간을 지정하는 Operator는 해당 종속성의 다른 소비자에 대한 업데이트를 불필요하게 제한할 수 있습니다.

가능한 경우 최대 버전을 설정하지 마십시오. 또는 다른 Operator와 충돌하지 않도록 매우 광범위한 의미 범위를 설정하십시오. 예를 들면

>1.0.0 <2.0.0과 같습니다.기존 패키지 관리자와 달리 Operator 작성자는 OLM의 채널을 통해 업데이트가 안전함을 명시적으로 인코딩합니다. 기존 서브스크립션에 대한 업데이트가 제공되면 Operator 작성자가 이전 버전에서 업데이트할 수 있음을 나타내는 것으로 간주합니다. 종속성에 최대 버전을 설정하면 특정 상한에서 불필요하게 잘라 작성자의 업데이트 스트림을 덮어씁니다.

참고클러스터 관리자는 Operator 작성자가 설정한 종속 항목을 덮어쓸 수 없습니다.

그러나 피해야 하는 알려진 비호환성이 있는 경우 최대 버전을 설정할 수 있으며 설정해야 합니다. 버전 범위 구문을 사용하여 특정 버전을 생략할 수 있습니다(예:

> 1.0.0 !1.2.1).

2.4.4.6. 종속성 경고

종속성을 지정할 때 고려해야 할 경고 사항이 있습니다.

- 혼합 제약 조건(AND) 없음

현재 제약 조건 간 AND 관계를 지정할 수 있는 방법은 없습니다. 즉 하나의 Operator가 지정된 API를 제공하면서 버전이

>1.1.0인 다른 Operator에 종속되도록 지정할 수 없습니다.즉 다음과 같은 종속성을 지정할 때를 나타냅니다.

dependencies: - type: olm.package value: packageName: etcd version: ">3.1.0" - type: olm.gvk value: group: etcd.database.coreos.com kind: EtcdCluster version: v1beta2OLM은 EtcdCluster를 제공하는 Operator와 버전이

>3.1.0인 Operator를 사용하여 이러한 조건을 충족할 수 있습니다. 이러한 상황이 발생하는지 또는 두 제약 조건을 모두 충족하는 Operator가 선택되었는지는 잠재적 옵션을 방문하는 순서에 따라 다릅니다. 종속성 기본 설정 및 순서 지정 옵션은 잘 정의되어 있으며 추론할 수 있지만 주의를 기울이기 위해 Operator는 둘 중 하나의 메커니즘을 유지해야 합니다.- 네임스페이스 간 호환성

- OLM은 네임스페이스 범위에서 종속성 확인을 수행합니다. 한 네임스페이스의 Operator를 업데이트하면 다른 네임스페이스의 Operator에 문제가 되고 반대의 경우도 마찬가지인 경우 업데이트 교착 상태에 빠질 수 있습니다.

2.4.4.7. 종속성 확인 시나리오 예제

다음 예제에서 공급자는 CRD 또는 API 서비스를 "보유"한 Operator입니다.

예제: 종속 API 사용 중단

A 및 B는 다음과 같은 API입니다(CRD).

- A 공급자는 B에 의존합니다.

- B 공급자에는 서브스크립션이 있습니다.

- C를 제공하도록 B 공급자를 업데이트하지만 B를 더 이상 사용하지 않습니다.

결과는 다음과 같습니다.

- B에는 더 이상 공급자가 없습니다.

- A가 더 이상 작동하지 않습니다.

이는 OLM에서 업그레이드 전략으로 방지하는 사례입니다.

예제: 버전 교착 상태

A 및 B는 다음과 같은 API입니다.

- A 공급자에는 B가 필요합니다.

- B 공급자에는 A가 필요합니다.

- A 공급자를 업데이트(A2 제공, B2 요청)하고 A를 더 이상 사용하지 않습니다.

- B 공급자를 업데이트(A2 제공, B2 요청)하고 B를 더 이상 사용하지 않습니다.

OLM에서 B를 동시에 업데이트하지 않고 A를 업데이트하거나 반대 방향으로 시도하는 경우 새 호환 가능 세트가 있는 경우에도 새 버전의 Operator로 진행할 수 없습니다.

이는 OLM에서 업그레이드 전략으로 방지하는 또 다른 사례입니다.

2.4.5. Operator groups

이 가이드에서는 OpenShift Container Platform의 OLM(Operator Lifecycle Manager)에서 Operator groups을 사용하는 방법을 간략하게 설명합니다.

2.4.5.1. Operator groups 정의

OperatorGroup 리소스에서 정의하는 Operator group에서는 OLM에서 설치한 Operator에 다중 테넌트 구성을 제공합니다. Operator group은 멤버 Operator에 필요한 RBAC 액세스 권한을 생성할 대상 네임스페이스를 선택합니다.

대상 네임스페이스 세트는 쉼표로 구분된 문자열 형식으로 제공되며 CSV(클러스터 서비스 버전)의 olm.targetNamespaces 주석에 저장되어 있습니다. 이 주석은 멤버 Operator의 CSV 인스턴스에 적용되며 해당 배포에 프로젝션됩니다.

2.4.5.2. Operator group 멤버십

다음 조건이 충족되면 Operator가 Operator group의 멤버로 간주됩니다.

- Operator의 CSV는 Operator group과 동일한 네임스페이스에 있습니다.

- Operator의 CSV 설치 모드에서는 Operator group이 대상으로 하는 네임스페이스 세트를 지원합니다.

CSV의 설치 모드는 InstallModeType 필드 및 부울 Supported 필드로 구성됩니다. CSV 사양에는 다음 네 가지 InstallModeTypes로 구성된 설치 모드 세트가 포함될 수 있습니다.

| InstallModeType | 설명 |

|---|---|

|

| Operator가 자체 네임스페이스를 선택하는 Operator group의 멤버일 수 있습니다. |

|

| Operator가 하나의 네임스페이스를 선택하는 Operator group의 멤버일 수 있습니다. |

|

| Operator가 네임스페이스를 두 개 이상 선택하는 Operator group의 멤버일 수 있습니다. |

|

|

Operator가 네임스페이스를 모두 선택하는 Operator group의 멤버일 수 있습니다(대상 네임스페이스 세트는 빈 문자열( |

CSV 사양에서 InstallModeType 항목이 생략되면 암시적으로 지원하는 기존 항목에서 지원을 유추할 수 있는 경우를 제외하고 해당 유형을 지원하지 않는 것으로 간주합니다.

2.4.5.3. 대상 네임스페이스 선택

spec.targetNamespaces 매개변수를 사용하여 Operator group의 대상 네임스페이스의 이름을 명시적으로 지정할 수 있습니다.

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: my-group

namespace: my-namespace

spec:

targetNamespaces:

- my-namespace

또는 라벨 선택기를 spec.selector 매개변수와 함께 사용하여 네임스페이스를 지정할 수도 있습니다.

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: my-group

namespace: my-namespace

spec:

selector:

cool.io/prod: "true"

spec.targetNamespaces를 통해 여러 네임스페이스를 나열하거나 spec.selector를 통해 라벨 선택기를 사용하는 것은 바람직하지 않습니다. Operator group의 대상 네임스페이스 두 개 이상에 대한 지원이 향후 릴리스에서 제거될 수 있습니다.

spec.targetNamespaces 및 spec.selector를 둘 다 정의하면 spec.selector가 무시됩니다. 또는 모든 네임스페이스를 선택하는 global Operator group을 지정하려면 spec.selector 및 spec.targetNamespaces를 둘 다 생략하면 됩니다.

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: my-group

namespace: my-namespace

선택된 네임스페이스의 확인된 세트는 Opeator group의 status.namespaces 매개변수에 표시됩니다. 글로벌 Operator group의 status.namespace에는 사용 중인 Operator에 모든 네임스페이스를 조사해야 함을 알리는 빈 문자열("")이 포함됩니다.

2.4.5.4. Operator group CSV 주석

Operator group의 멤버 CSV에는 다음과 같은 주석이 있습니다.

| 주석 | 설명 |

|---|---|

|

| Operator group의 이름을 포함합니다. |

|

| Operator group의 네임스페이스를 포함합니다. |

|

| Operator group의 대상 네임스페이스 선택 사항을 나열하는 쉼표로 구분된 문자열을 포함합니다. |

olm.targetNamespaces를 제외한 모든 주석은 CSV 복사본에 포함됩니다. CSV 복제본에서 olm.targetNamespaces 주석을 생략하면 테넌트 간에 대상 네임스페이스를 복제할 수 없습니다.

2.4.5.5. 제공된 API 주석

GVK(그룹/버전/종류)는 Kubernetes API의 고유 ID입니다. Operator group에서 제공하는 GVK에 대한 정보는 olm.providedAPIs 주석에 표시됩니다. 주석 값은 <kind>.<version>.<group>으로 구성된 문자열로, 쉼표로 구분됩니다. Operator group의 모든 활성 멤버 CSV에서 제공하는 CRD 및 API 서비스의 GVK가 포함됩니다.

PackageManifest 리소스를 제공하는 하나의 활성 멤버 CSV에서 OperatorGroup 오브젝트의 다음 예제를 검토합니다.

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

annotations:

olm.providedAPIs: PackageManifest.v1alpha1.packages.apps.redhat.com

name: olm-operators

namespace: local

...

spec:

selector: {}

serviceAccount:

metadata:

creationTimestamp: null

targetNamespaces:

- local

status:

lastUpdated: 2019-02-19T16:18:28Z

namespaces:

- local2.4.5.6. 역할 기반 액세스 제어

Operator v이 생성되면 세 개의 클러스터 역할이 생성됩니다. 각 역할에는 다음과 같이 라벨과 일치하도록 클러스터 역할 선택기가 설정된 단일 집계 규칙이 포함됩니다.

| 클러스터 역할 | 일치해야 하는 라벨 |

|---|---|

|

|

|

|

|

|

|

|

|

다음 RBAC 리소스는 CSV가 AllNamespaces 설치 모드로 모든 네임스페이스를 조사하고 이유가 InterOperatorGroupOwnerConflict인 실패 상태가 아닌 한 CSV가 Operator group의 활성 멤버가 될 때 생성됩니다.

- CRD의 각 API 리소스에 대한 클러스터 역할

- API 서비스의 각 API 리소스에 대한 클러스터 역할

- 추가 역할 및 역할 바인딩

| 클러스터 역할 | 설정 |

|---|---|

|

|

집계 라벨:

|

|

|

집계 라벨:

|

|

|

집계 라벨:

|

|

|

집계 라벨:

|

| 클러스터 역할 | 설정 |

|---|---|

|

|

집계 라벨:

|

|

|

집계 라벨:

|

|

|

집계 라벨:

|

추가 역할 및 역할 바인딩

-

CSV에서

*를 포함하는 정확히 하나의 대상 네임스페이스를 정의하는 경우 CSV의permissions필드에 정의된 각 권한에 대해 클러스터 역할 및 해당 클러스터 역할 바인딩이 생성됩니다. 생성된 모든 리소스에는olm.owner: <csv_name>및olm.owner.namespace: <csv_namespace>라벨이 지정됩니다. -

CSV에서

*를 포함하는 정확히 하나의 대상 네임스페이스를 정의하지 않는 경우에는olm.owner: <csv_name>및olm.owner.namespace: <csv_namespace>라벨이 있는 Operator 네임스페이스의 모든 역할 및 역할 바인딩이 대상 네임스페이스에 복사됩니다.

2.4.5.7. CSV 복사본

OLM은 해당 Operator group의 각 대상 네임스페이스에서 Operator group의 모든 활성 멤버에 대한 CSV 복사본을 생성합니다. CSV 복사본의 용도는 대상 네임스페이스의 사용자에게 특정 Operator가 그곳에서 생성된 리소스를 조사하도록 구성됨을 알리는 것입니다.

CSV 복사본은 상태 이유가 Copied이고 해당 소스 CSV의 상태와 일치하도록 업데이트됩니다. olm.targetNamespaces 주석은 해당 주석이 클러스터에서 생성되기 전에 CSV 복사본에서 제거됩니다. 대상 네임스페이스 선택 단계를 생략하면 테넌트 간 대상 네임스페이스가 중복되지 않습니다.

CSV 복사본은 복사본의 소스 CSV가 더 이상 존재하지 않거나 소스 CSV가 속한 Operator group이 더 이상 CSV 복사본의 네임스페이스를 대상으로 하지 않는 경우 삭제됩니다.

2.4.5.8. 정적 Operator groups

spec.staticProvidedAPIs 필드가 true로 설정된 경우 Operator group은 static입니다. 결과적으로 OLM은 Operator group의 olm.providedAPIs 주석을 수정하지 않으므로 사전에 설정할 수 있습니다. 이는 사용자가 Operator group을 사용하여 일련의 네임스페이스에서 리소스 경합을 방지하려고 하지만 해당 리소스에 대한 API를 제공하는 활성 멤버 CSV가 없는 경우 유용합니다.

다음은 something.cool.io/cluster-monitoring: "true" 주석을 사용하여 모든 네임스페이스에서 Prometheus 리소스를 보호하는 Operator group의 예입니다.

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: cluster-monitoring

namespace: cluster-monitoring

annotations:

olm.providedAPIs: Alertmanager.v1.monitoring.coreos.com,Prometheus.v1.monitoring.coreos.com,PrometheusRule.v1.monitoring.coreos.com,ServiceMonitor.v1.monitoring.coreos.com

spec:

staticProvidedAPIs: true

selector:

matchLabels:

something.cool.io/cluster-monitoring: "true"2.4.5.9. Operator group 교집합

대상 네임스페이스 세트의 교집합이 빈 세트가 아니고 olm.providedAPIs 주석으로 정의되어 제공된 API 세트의 교집합이 빈 세트가 아닌 경우 두 Operator groups을 교차 제공 API가 있다고 합니다.

잠재적인 문제는 교차 제공 API가 있는 Operator groups이 교차 네임스페이스 세트에서 동일한 리소스에 대해 경쟁할 수 있다는 점입니다.

교집합 규칙을 확인할 때는 Operator group 네임스페이스가 항상 선택한 대상 네임스페이스의 일부로 포함됩니다.

교집합 규칙

활성 멤버 CSV가 동기화될 때마다 OLM은 CSV의 Operator group 및 기타 모든 그룹 간에 교차 제공되는 API 세트에 대한 클러스터를 쿼리합니다. 그런 다음 OLM은 해당 세트가 빈 세트인지 확인합니다.

true및 CSV 제공 API가 Operator group의 서브 세트인 경우:- 계속 전환합니다.

true및 CSV 제공 API가 Operator group의 서브 세트가 아닌 경우:Operator group이 정적이면 다음을 수행합니다.

- CSV에 속하는 배포를 정리합니다.

-

상태 이유

CannotModifyStaticOperatorGroupProvidedAPIs와 함께 CSV를 실패 상태로 전환합니다.

Operator group이 정적이 아니면 다음을 수행합니다.

-

Operator group의

olm.providedAPIs주석을 주석 자체와 CSV 제공 API를 결합한 내용으로 교체합니다.

-

Operator group의

false및 CSV 제공 API가 Operator group의 서브 세트가 아닌 경우:- CSV에 속하는 배포를 정리합니다.

-

상태 이유

InterOperatorGroupOwnerConflict와 함께 CSV를 실패 상태로 전환합니다.

false및 CSV 제공 API가 Operator group의 서브 세트인 경우:Operator group이 정적이면 다음을 수행합니다.

- CSV에 속하는 배포를 정리합니다.

-

상태 이유

CannotModifyStaticOperatorGroupProvidedAPIs와 함께 CSV를 실패 상태로 전환합니다.

Operator group이 정적이 아니면 다음을 수행합니다.

-

Operator group의

olm.providedAPIs주석을 주석 자체와 CSV 제공 API 간의 차이로 교체합니다.

-

Operator group의

Operator group으로 인한 실패 상태는 터미널이 아닙니다.

Operator group이 동기화될 때마다 다음 작업이 수행됩니다.

- 활성 멤버 CSV에서 제공되는 API 세트는 클러스터에서 계산됩니다. CSV 복사본은 무시됩니다.

-

클러스터 세트는

olm.providedAPIs와 비교되며olm.providedAPIs에 추가 API가 포함된 경우 해당 API가 정리됩니다. - 모든 네임스페이스에서 동일한 API를 제공하는 모든 CSV가 다시 큐에 추가됩니다. 그러면 충돌하는 CSV의 크기 조정 또는 삭제를 통해 충돌이 해결되었을 수 있음을 교차 group의 충돌하는 CSV에 알립니다.

2.4.5.10. 멀티 테넌트 Operator 관리에 대한 제한 사항

OpenShift Container Platform은 클러스터에 다양한 Operator 변형을 동시에 설치할 수 있도록 제한된 지원을 제공합니다. Operator는 컨트롤 플레인 확장입니다. 모든 테넌트 또는 네임스페이스는 클러스터의 동일한 컨트롤 플레인을 공유합니다. 따라서 멀티 테넌트 환경의 테넌트도 Operator를 공유해야 합니다.

OLM(Operator Lifecycle Manager)은 다른 네임스페이스에 Operator를 여러 번 설치합니다. 이에 대한 한 가지 제한 사항은 Operator의 API 버전이 동일해야 한다는 것입니다.

Operator의 다른 주요 버전에는 종종 호환되지 않는 CRD(사용자 정의 리소스 정의)가 있습니다. 이렇게 하면 OLM을 신속하게 확인하기 어렵습니다.

2.4.5.11. Operator groups 문제 해결

멤버십

-

단일 네임스페이스에 두 개 이상의 Operator groups이 있는 경우 해당 네임스페이스에서 생성된 모든 CSV는

TooManyOperatorGroups이유와 함께 실패 상태로 전환됩니다. 이러한 이유로 실패한 상태의 CSV는 네임스페이스의 Operator groups 수가 1에 도달하면 보류 중으로 전환됩니다. -

CSV의 설치 모드가 해당 네임스페이스에서 Operator group의 대상 네임스페이스 선택을 지원하지 않는 경우 CSV는

UnsupportedOperatorGroup이유와 함께 실패 상태로 전환됩니다. 이러한 이유로 실패 상태에 있는 CSV는 Operator group의 대상 네임스페이스 선택이 지원되는 구성으로 변경된 후 보류 중으로 전환되거나 대상 네임스페이스 선택을 지원하도록 CSV의 설치 모드가 수정됩니다.

2.4.6. Operator Lifecycle Manager 지표

2.4.6.1. 표시되는 지표

OLM(Operator Lifecycle Manager)에서는 Prometheus 기반 OpenShift Container Platform 클러스터 모니터링 스택에서 사용할 특정 OLM 관련 리소스를 표시합니다.

| 이름 | 설명 |

|---|---|

|

| 카탈로그 소스 수입니다. |

|

|

CSV(클러스터 서비스 버전)를 조정할 때 CSV 버전이 |

|

| 성공적으로 등록된 CSV 수입니다. |

|

|

CSV를 재조정할 때 CSV 버전이 |

|

| CSV 업그레이드의 단조 수입니다. |

|

| 설치 계획 수입니다. |

|

| 서브스크립션 수입니다. |

|

|

서브스크립션 동기화의 단조 수입니다. |

2.4.7. Operator Lifecycle Manager의 Webhook 관리

Operator 작성자는 Webhook를 통해 리소스를 오브젝트 저장소에 저장하고 Operator 컨트롤러에서 이를 처리하기 전에 리소스를 가로채기, 수정, 수락 또는 거부할 수 있습니다. Operator와 함께 webhook 가 제공 될 때 OLM (Operator Lifecycle Manager)은 이러한 Webhook의 라이프 사이클을 관리할 수 있습니다.

Operator 개발자가 Operator의 Webhook 를 정의하는 방법과 OLM에서 실행할 때의 고려 사항에 대한 자세한 내용은 CSV(클러스터 서비스 버전) 생성.

2.5. OperatorHub 이해

2.5.1. OperatorHub 정보

OperatorHub는 클러스터 관리자가 Operator를 검색하고 설치하는 데 사용하는 OpenShift Container Platform의 웹 콘솔 인터페이스입니다. 한 번의 클릭으로 Operator를 클러스터 외부 소스에서 가져와서 클러스터에 설치 및 구독하고 엔지니어링 팀에서 OLM(Operator Lifecycle Manager)을 사용하여 배포 환경에서 제품을 셀프서비스로 관리할 수 있습니다.

클러스터 관리자는 다음 카테고리로 그룹화된 카탈로그에서 선택할 수 있습니다.

| 카테고리 | 설명 |

|---|---|

| Red Hat Operator | Red Hat에서 Red Hat 제품을 패키지 및 제공합니다. Red Hat에서 지원합니다. |

| 인증된 Operator | 선도적인 ISV(독립 소프트웨어 벤더)의 제품입니다. Red Hat은 패키지 및 제공을 위해 ISV와 협력합니다. ISV에서 지원합니다. |

| Red Hat Marketplace | Red Hat Marketplace에서 구매할 수 있는 인증 소프트웨어입니다. |

| 커뮤니티 Operator | operator-framework/community-operators GitHub 리포지토리의 관련 담당자가 유지 관리하는 선택적 표시 소프트웨어입니다. 공식적으로 지원되지 않습니다. |

| 사용자 정의 Operator | 클러스터에 직접 추가하는 Operator입니다. 사용자 정의 Operator를 추가하지 않은 경우 OperatorHub의 웹 콘솔에 사용자 정의 카테고리가 표시되지 않습니다. |

OperatorHub의 Operator는 OLM에서 실행되도록 패키지됩니다. 여기에는 Operator를 설치하고 안전하게 실행하는 데 필요한 모든 CRD, RBAC 규칙, 배포, 컨테이너 이미지가 포함된 CSV(클러스터 서비스 버전)라는 YAML 파일이 포함됩니다. 또한 해당 기능 및 지원되는 Kubernetes 버전에 대한 설명과 같이 사용자가 볼 수 있는 정보가 포함됩니다.

Operator SDK는 OLM 및 OperatorHub에서 사용하도록 Operator를 패키지하는 개발자를 지원하는 데 사용할 수 있습니다. 고객이 액세스할 수 있도록 설정할 상용 애플리케이션이 있는 경우 Red Hat Partner Connect 포털(connect.redhat.com)에 제공된 인증 워크플로를 사용하여 포함합니다.

2.5.2. OperatorHub 아키텍처

OperatorHub UI 구성 요소는 기본적으로 openshift-marketplace 네임스페이스의 OpenShift Container Platform에서 Marketplace Operator에 의해 구동됩니다.

2.5.2.1. OperatorHub 사용자 정의 리소스

Marketplace Operator는 OperatorHub와 함께 제공되는 기본 CatalogSource 오브젝트를 관리하는 cluster라는 OperatorHub CR(사용자 정의 리소스)을 관리합니다. 이 리소스를 수정하여 기본 카탈로그를 활성화하거나 비활성화할 수 있어 제한된 네트워크 환경에서 OpenShift Container Platform을 구성할 때 유용합니다.

OperatorHub 사용자 정의 리소스의 예

apiVersion: config.openshift.io/v1

kind: OperatorHub

metadata:

name: cluster

spec:

disableAllDefaultSources: true

sources: [

{

name: "community-operators",

disabled: false

}

]2.6. Red Hat 제공 Operator 카탈로그

2.6.1. Operator 카탈로그 정보

Operator 카탈로그는 OLM(Operator Lifecycle Manager)에서 쿼리하여 Operator 및 해당 종속성을 검색하고 설치할 수 있는 메타데이터 리포지토리입니다. OLM은 항상 최신 버전의 카탈로그에 있는 Operator를 설치합니다. OpenShift Container Platform 4.6부터는 인덱스 이미지를 사용하여 Red Hat에서 제공하는 카탈로그가 배포됩니다.

Operator 번들 형식을 기반으로 하는 인덱스 이미지는 컨테이너화된 카탈로그 스냅샷입니다. 일련의 Operator 매니페스트 콘텐츠에 대한 포인터의 데이터베이스를 포함하는 변경 불가능한 아티팩트입니다. 카탈로그는 인덱스 이미지를 참조하여 클러스터에서 OLM에 대한 콘텐츠를 소싱할 수 있습니다.

OpenShift Container Platform 4.6부터는 Red Hat에서 제공하는 인덱스 이미지가 더 이상 사용되지 않는 패키지 매니페스트 형식을 기반으로 이전 버전의 OpenShift Container Platform 4에 배포되는 앱 레지스트리 카탈로그 이미지를 대체합니다. 앱 레지스트리 카탈로그 이미지는 Red Hat for OpenShift Container Platform 4.6 이상에서 배포되지 않지만 패키지 매니페스트 형식을 기반으로 하는 사용자 정의 카탈로그 이미지는 계속 지원됩니다.

카탈로그가 업데이트되면 최신 버전의 Operator가 변경되고 이전 버전은 제거되거나 변경될 수 있습니다. 또한 OLM이 네트워크가 제한된 환경의 OpenShift Container Platform 클러스터에서 실행되면 최신 콘텐츠를 가져오기 위해 인터넷에서 카탈로그에 직접 액세스할 수 없습니다.

클러스터 관리자는 Red Hat에서 제공하는 카탈로그를 기반으로 또는 처음부터 자체 사용자 정의 인덱스 이미지를 생성할 수 있습니다. 이 이미지는 클러스터에서 카탈로그 콘텐츠를 소싱하는 데 사용할 수 있습니다. 자체 인덱스 이미지를 생성하고 업데이트하면 클러스터에서 사용 가능한 Operator 세트를 사용자 정의할 수 있을 뿐만 아니라 앞서 언급한 제한된 네트워크 환경 문제도 방지할 수 있습니다.

사용자 정의 카탈로그 이미지를 생성할 때 이전 버전의 OpenShift Container Platform 4에서는 여러 릴리스에서 더 이상 사용되지 않는 oc adm catalog build 명령을 사용해야 했습니다. OpenShift Container Platform 4.6부터는 Red Hat 제공 인덱스 이미지를 사용할 수 있으므로 카탈로그 빌더는 향후 릴리스에서 oc adm catalog build 명령이 제거되기 전에 인덱스 이미지를 관리하는 데 opm index 명령을 사용하도록 전환해야 합니다.

2.6.2. Red Hat 제공 Operator 카탈로그 정보

다음은 Red Hat에서 제공하는 Operator 카탈로그입니다.

| 카탈로그 | 인덱스 이미지 | 설명 |

|---|---|---|

|

|

| Red Hat에서 Red Hat 제품을 패키지 및 제공합니다. Red Hat에서 지원합니다. |

|

|

| 선도적인 ISV(독립 소프트웨어 벤더)의 제품입니다. Red Hat은 패키지 및 제공을 위해 ISV와 협력합니다. ISV에서 지원합니다. |

|

|

| Red Hat Marketplace에서 구매할 수 있는 인증 소프트웨어입니다. |

|

|

| operator-framework/community-operators GitHub 리포지토리의 관련 담당자가 유지 관리하는 소프트웨어입니다. 공식적으로 지원되지 않습니다. |

2.7. CRD

2.7.1. 사용자 정의 리소스 정의를 사용하여 Kubernetes API 확장

이 가이드에서는 클러스터 관리자가 CRD(사용자 정의 리소스 정의)를 생성하고 관리하여 OpenShift Container Platform 클러스터를 확장할 수 있는 방법을 설명합니다.

2.7.1.1. 사용자 정의 리소스 정의

Kubernetes API에서 리소스는 특정 종류의 API 오브젝트 컬렉션을 저장하는 끝점입니다. 예를 들어 기본 제공 Pod 리소스에는 Pod 오브젝트의 컬렉션이 포함됩니다.

CRD(사용자 정의 리소스 정의) 오브젝트는 클러스터에서 종류라는 새로운 고유한 오브젝트 유형을 정의하고 Kubernetes API 서버에서 전체 라이프사이클을 처리하도록 합니다.

CR(사용자 정의 리소스) 오브젝트는 클러스터 관리자가 클러스터에 추가한 CRD에서 생성하므로 모든 클러스터 사용자가 새 리소스 유형을 프로젝트에 추가할 수 있습니다.

클러스터 관리자가 새 CRD를 클러스터에 추가하면 Kubernetes API 서버는 전체 클러스터 또는 단일 프로젝트(네임스페이스)에서 액세스할 수 있는 새 RESTful 리소스 경로를 생성하여 반응하고 지정된 CR을 제공하기 시작합니다.

클러스터 관리자가 다른 사용자에게 CRD에 대한 액세스 권한을 부여하려면 클러스터 역할 집계를 사용하여 admin, edit 또는 view 기본 클러스터 역할이 있는 사용자에게 액세스 권한을 부여할 수 있습니다. 클러스터 역할 집계를 사용하면 이러한 클러스터 역할에 사용자 정의 정책 규칙을 삽입할 수 있습니다. 이 동작은 새 리소스를 기본 제공 리소스인 것처럼 클러스터의 RBAC 정책에 통합합니다.

특히 운영자는 CRD를 필수 RBAC 정책 및 기타 소프트웨어별 논리와 함께 패키지로 제공하는 방식으로 CRD를 사용합니다. 또한 클러스터 관리자는 Operator의 라이프사이클 외부에서 클러스터에 CRD를 수동으로 추가하여 모든 사용자에게 제공할 수 있습니다.

클러스터 관리자만 CRD를 생성할 수 있지만 기존 CRD에 대한 읽기 및 쓰기 권한이 있는 개발자의 경우 기존 CRD에서 CR을 생성할 수 있습니다.

2.7.1.2. 사용자 정의 리소스 정의 생성

사용자 정의 리소스(CR) 오브젝트를 생성하려면 클러스터 관리자가 먼저 CRD(사용자 정의 리소스 정의)를 생성해야 합니다.

사전 요구 사항

-

cluster-admin사용자 권한을 사용하여 OpenShift Container Platform 클러스터에 액세스할 수 있습니다.

프로세스

CRD를 생성하려면 다음을 수행합니다.

다음 필드 유형을 포함하는 YAML 파일을 생성합니다.

CRD에 대한 YAML 파일의 예

apiVersion: apiextensions.k8s.io/v11 kind: CustomResourceDefinition metadata: name: crontabs.stable.example.com2 spec: group: stable.example.com3 versions: name: v14 scope: Namespaced5 names: plural: crontabs6 singular: crontab7 kind: CronTab8 shortNames: - ct9 - 1

apiextensions.k8s.io/v1API를 사용합니다.- 2

- 정의의 이름을 지정합니다.

group및plural필드의 값을 사용하는<plural-name>.<group>형식이어야 합니다. - 3

- API의 그룹 이름을 지정합니다. API 그룹은 논리적으로 관련된 오브젝트의 컬렉션입니다. 예를 들어

Job또는ScheduledJob과 같은 배치 오브젝트는 모두 배치 API 그룹(예:batch.api.example.com)에 있을 수 있습니다. 조직의 FQDN(정규화된 도메인 이름)을 사용하는 것이 좋습니다. - 4

- URL에 사용할 버전 이름을 지정합니다. 각 API 그룹은 여러 버전(예:

v1alpha,v1beta,v1)에 있을 수 있습니다. - 5

- 특정 프로젝트(

Namespaced) 또는 클러스터의 모든 프로젝트(Cluster)에서 사용자 정의 오브젝트를 사용할 수 있는지 지정합니다. - 6

- URL에서 사용할 복수형 이름을 지정합니다.

plural필드는 API URL의 리소스와 동일합니다. - 7

- CLI 및 디스플레이에서 별칭으로 사용할 단수형 이름을 지정합니다.

- 8

- 생성할 수 있는 오브젝트의 종류를 지정합니다. 유형은 CamelCase에 있을 수 있습니다.

- 9

- CLI의 리소스와 일치하는 짧은 문자열을 지정합니다.

참고기본적으로 CRD는 클러스터 범위이며 모든 프로젝트에서 사용할 수 있습니다.

CRD 오브젝트를 생성합니다.

$ oc create -f <file_name>.yaml다음에 새 RESTful API 끝점이 생성됩니다.

/apis/<spec:group>/<spec:version>/<scope>/*/<names-plural>/...예를 들어 예제 파일을 사용하면 다음 끝점이 생성됩니다.

/apis/stable.example.com/v1/namespaces/*/crontabs/...이 끝점 URL을 사용하여 CR을 생성하고 관리할 수 있습니다. 오브젝트 종류는 생성한 CRD 오브젝트의

spec.kind필드를 기반으로 합니다.

2.7.1.3. 사용자 정의 리소스 정의에 대한 클러스터 역할 생성

클러스터 관리자는 기존 클러스터 범위의 CRD(사용자 정의 리소스 정의)에 권한을 부여할 수 있습니다. admin, edit, view 기본 클러스터 역할을 사용하는 경우 해당 규칙에 클러스터 역할 집계를 활용할 수 있습니다.

이러한 각 역할에 대한 권한을 명시적으로 할당해야 합니다. 권한이 더 많은 역할은 권한이 더 적은 역할의 규칙을 상속하지 않습니다. 역할에 규칙을 할당하는 경우 권한이 더 많은 역할에 해당 동사를 할당해야 합니다. 예를 들어 보기 역할에 get crontabs 권한을 부여하는 경우 edit 및 admin 역할에도 부여해야 합니다. admin 또는 edit 역할은 일반적으로 프로젝트 템플릿을 통해 프로젝트를 생성한 사용자에게 할당됩니다.

사전 요구 사항

- CRD를 생성합니다.

프로세스

CRD의 클러스터 역할 정의 파일을 생성합니다. 클러스터 역할 정의는 각 클러스터 역할에 적용되는 규칙이 포함된 YAML 파일입니다. OpenShift Container Platform 컨트롤러는 사용자가 지정하는 규칙을 기본 클러스터 역할에 추가합니다.

클러스터 역할 정의에 대한 YAML 파일의 예

kind: ClusterRole apiVersion: rbac.authorization.k8s.io/v11 metadata: name: aggregate-cron-tabs-admin-edit2 labels: rbac.authorization.k8s.io/aggregate-to-admin: "true"3 rbac.authorization.k8s.io/aggregate-to-edit: "true"4 rules: - apiGroups: ["stable.example.com"]5 resources: ["crontabs"]6 verbs: ["get", "list", "watch", "create", "update", "patch", "delete", "deletecollection"]7 --- kind: ClusterRole apiVersion: rbac.authorization.k8s.io/v1 metadata: name: aggregate-cron-tabs-view8 labels: # Add these permissions to the "view" default role. rbac.authorization.k8s.io/aggregate-to-view: "true"9 rbac.authorization.k8s.io/aggregate-to-cluster-reader: "true"10 rules: - apiGroups: ["stable.example.com"]11 resources: ["crontabs"]12 verbs: ["get", "list", "watch"]13 - 1

rbac.authorization.k8s.io/v1API를 사용합니다.- 2 8

- 정의의 이름을 지정합니다.

- 3

- 관리 기본 역할에 권한을 부여하려면 이 라벨을 지정합니다.

- 4

- 편집 기본 역할에 권한을 부여하려면 이 라벨을 지정합니다.

- 5 11

- CRD의 그룹 이름을 지정합니다.

- 6 12

- 이러한 규칙이 적용되는 CRD의 복수형 이름을 지정합니다.

- 7 13

- 역할에 부여된 권한을 나타내는 동사를 지정합니다. 예를 들어

admin및edit역할에는 읽기 및 쓰기 권한을 적용하고view역할에는 읽기 권한만 적용합니다. - 9

view기본 역할에 권한을 부여하려면 이 라벨을 지정합니다.- 10

cluster-reader기본 역할에 권한을 부여하려면 이 라벨을 지정합니다.

클러스터 역할을 생성합니다.

$ oc create -f <file_name>.yaml

2.7.1.4. 파일에서 사용자 정의 리소스 생성

CRD(사용자 정의 리소스 정의)가 클러스터에 추가되면 CR 사양을 사용하여 파일에서 CLI를 통해 CR(사용자 정의 리소스)을 생성할 수 있습니다.

사전 요구 사항

- 클러스터 관리자가 클러스터에 CRD를 추가했습니다.

프로세스

CR에 대한 YAML 파일을 생성합니다. 다음 예제 정의에서

cronSpec및image사용자 정의 필드는Kind CR에 설정됩니다. CronTab.Kind는 CRD 오브젝트의spec.kind필드에서 제공합니다.CR에 대한 YAML 파일의 예

apiVersion: "stable.example.com/v1"1 kind: CronTab2 metadata: name: my-new-cron-object3 finalizers:4 - finalizer.stable.example.com spec:5 cronSpec: "* * * * /5" image: my-awesome-cron-image파일을 생성한 후 오브젝트를 생성합니다.

$ oc create -f <file_name>.yaml

2.7.1.5. 사용자 정의 리소스 검사

CLI를 사용하여 클러스터에 존재하는 CR(사용자 정의 리소스) 오브젝트를 검사할 수 있습니다.

사전 요구 사항

- CR 오브젝트는 액세스할 수 있는 네임스페이스에 있습니다.

프로세스

특정 종류의 CR에 대한 정보를 얻으려면 다음을 실행합니다.

$ oc get <kind>예를 들면 다음과 같습니다.

$ oc get crontab출력 예

NAME KIND my-new-cron-object CronTab.v1.stable.example.com리소스 이름은 대소문자를 구분하지 않으며 CRD에 정의된 단수형 또는 복수형 양식이나 짧은 이름을 사용할 수 있습니다. 예를 들면 다음과 같습니다.

$ oc get crontabs$ oc get crontab$ oc get ctCR의 원시 YAML 데이터를 볼 수도 있습니다.

$ oc get <kind> -o yaml예를 들면 다음과 같습니다.

$ oc get ct -o yaml출력 예

apiVersion: v1 items: - apiVersion: stable.example.com/v1 kind: CronTab metadata: clusterName: "" creationTimestamp: 2017-05-31T12:56:35Z deletionGracePeriodSeconds: null deletionTimestamp: null name: my-new-cron-object namespace: default resourceVersion: "285" selfLink: /apis/stable.example.com/v1/namespaces/default/crontabs/my-new-cron-object uid: 9423255b-4600-11e7-af6a-28d2447dc82b spec: cronSpec: '* * * * /5'1 image: my-awesome-cron-image2

2.7.2. 사용자 정의 리소스 정의에서 리소스 관리

이 가이드에서는 개발자가 CRD(사용자 정의 리소스 정의)에서 제공하는 CR(사용자 정의 리소스)을 관리하는 방법을 설명합니다.

2.7.2.1. 사용자 정의 리소스 정의

Kubernetes API에서 리소스는 특정 종류의 API 오브젝트 컬렉션을 저장하는 끝점입니다. 예를 들어 기본 제공 Pod 리소스에는 Pod 오브젝트의 컬렉션이 포함됩니다.

CRD(사용자 정의 리소스 정의) 오브젝트는 클러스터에서 종류라는 새로운 고유한 오브젝트 유형을 정의하고 Kubernetes API 서버에서 전체 라이프사이클을 처리하도록 합니다.

CR(사용자 정의 리소스) 오브젝트는 클러스터 관리자가 클러스터에 추가한 CRD에서 생성하므로 모든 클러스터 사용자가 새 리소스 유형을 프로젝트에 추가할 수 있습니다.

특히 운영자는 CRD를 필수 RBAC 정책 및 기타 소프트웨어별 논리와 함께 패키지로 제공하는 방식으로 CRD를 사용합니다. 또한 클러스터 관리자는 Operator의 라이프사이클 외부에서 클러스터에 CRD를 수동으로 추가하여 모든 사용자에게 제공할 수 있습니다.

클러스터 관리자만 CRD를 생성할 수 있지만 기존 CRD에 대한 읽기 및 쓰기 권한이 있는 개발자의 경우 기존 CRD에서 CR을 생성할 수 있습니다.

2.7.2.2. 파일에서 사용자 정의 리소스 생성

CRD(사용자 정의 리소스 정의)가 클러스터에 추가되면 CR 사양을 사용하여 파일에서 CLI를 통해 CR(사용자 정의 리소스)을 생성할 수 있습니다.

사전 요구 사항

- 클러스터 관리자가 클러스터에 CRD를 추가했습니다.

프로세스

CR에 대한 YAML 파일을 생성합니다. 다음 예제 정의에서

cronSpec및image사용자 정의 필드는Kind CR에 설정됩니다. CronTab.Kind는 CRD 오브젝트의spec.kind필드에서 제공합니다.CR에 대한 YAML 파일의 예

apiVersion: "stable.example.com/v1"1 kind: CronTab2 metadata: name: my-new-cron-object3 finalizers:4 - finalizer.stable.example.com spec:5 cronSpec: "* * * * /5" image: my-awesome-cron-image파일을 생성한 후 오브젝트를 생성합니다.

$ oc create -f <file_name>.yaml

2.7.2.3. 사용자 정의 리소스 검사

CLI를 사용하여 클러스터에 존재하는 CR(사용자 정의 리소스) 오브젝트를 검사할 수 있습니다.

사전 요구 사항

- CR 오브젝트는 액세스할 수 있는 네임스페이스에 있습니다.

프로세스

특정 종류의 CR에 대한 정보를 얻으려면 다음을 실행합니다.

$ oc get <kind>예를 들면 다음과 같습니다.

$ oc get crontab출력 예

NAME KIND my-new-cron-object CronTab.v1.stable.example.com리소스 이름은 대소문자를 구분하지 않으며 CRD에 정의된 단수형 또는 복수형 양식이나 짧은 이름을 사용할 수 있습니다. 예를 들면 다음과 같습니다.

$ oc get crontabs$ oc get crontab$ oc get ctCR의 원시 YAML 데이터를 볼 수도 있습니다.

$ oc get <kind> -o yaml예를 들면 다음과 같습니다.

$ oc get ct -o yaml출력 예

apiVersion: v1 items: - apiVersion: stable.example.com/v1 kind: CronTab metadata: clusterName: "" creationTimestamp: 2017-05-31T12:56:35Z deletionGracePeriodSeconds: null deletionTimestamp: null name: my-new-cron-object namespace: default resourceVersion: "285" selfLink: /apis/stable.example.com/v1/namespaces/default/crontabs/my-new-cron-object uid: 9423255b-4600-11e7-af6a-28d2447dc82b spec: cronSpec: '* * * * /5'1 image: my-awesome-cron-image2

3장. 사용자 작업

3.1. 설치된 Operator에서 애플리케이션 생성

이 가이드에서는 개발자에게 OpenShift Container Platform 웹 콘솔을 사용하여 설치한 Operator에서 애플리케이션을 생성하는 예제를 안내합니다.

3.1.1. Operator를 사용하여 etcd 클러스터 생성

이 절차에서는 OLM(Operator Lifecycle Manager)에서 관리하는 etcd Operator를 사용하여 새 etcd 클러스터를 생성하는 과정을 안내합니다.

사전 요구 사항

- OpenShift Container Platform 4.6 클러스터에 액세스할 수 있습니다.

- 관리자가 클러스터 수준에 etcd Operator를 이미 설치했습니다.

프로세스

-

이 절차를 위해 OpenShift Container Platform 웹 콘솔에 새 프로젝트를 생성합니다. 이 예제에서는

my-etcd라는 프로젝트를 사용합니다. Operator → 설치된 Operator 페이지로 이동합니다. 이 페이지에는 클러스터 관리자가 클러스터에 설치하여 사용할 수 있는 Operator가 CSV(클러스터 서비스 버전) 목록으로 표시됩니다. CSV는 Operator에서 제공하는 소프트웨어를 시작하고 관리하는 데 사용됩니다.

작은 정보다음을 사용하여 CLI에서 이 목록을 가져올 수 있습니다.

$ oc get csv자세한 내용과 사용 가능한 작업을 확인하려면 설치된 Operator 페이지에서 etcd Operator를 클릭합니다.

이 Operator에서는 제공된 API 아래에 표시된 것과 같이 etcd 클러스터(

EtcdCluster리소스)용 하나를 포함하여 새로운 리소스 유형 세 가지를 사용할 수 있습니다. 이러한 오브젝트는 내장된 네이티브 Kubernetes 오브젝트(예:Deployment또는ReplicaSet)와 비슷하게 작동하지만 etcd 관리와 관련된 논리가 포함됩니다.새 etcd 클러스터를 생성합니다.

- etcd 클러스터 API 상자에서 인스턴스 생성을 클릭합니다.

-

다음 화면을 사용하면 클러스터 크기와 같은

EtcdCluster오브젝트의 최소 시작 템플릿을 수정할 수 있습니다. 지금은 생성을 클릭하여 종료하십시오. 그러면 Operator에서 새 etcd 클러스터의 Pod, 서비스 및 기타 구성 요소를 가동합니다.

예제 etcd 클러스터를 클릭한 다음 리소스 탭을 클릭하여 Operator에서 자동으로 생성 및 구성한 리소스 수가 프로젝트에 포함되는지 확인합니다.

프로젝트의 다른 Pod에서 데이터베이스에 액세스할 수 있도록 Kubernetes 서비스가 생성되었는지 확인합니다.

지정된 프로젝트에서

edit역할을 가진 모든 사용자는 클라우드 서비스와 마찬가지로 셀프 서비스 방식으로 프로젝트에 이미 생성된 Operator에서 관리하는 애플리케이션 인스턴스(이 예제의 etcd 클러스터)를 생성, 관리, 삭제할 수 있습니다. 이 기능을 사용하여 추가 사용자를 활성화하려면 프로젝트 관리자가 다음 명령을 사용하여 역할을 추가하면 됩니다.$ oc policy add-role-to-user edit <user> -n <target_project>

이제 Pod가 비정상적인 상태가 되거나 클러스터의 다른 노드로 마이그레이션되면 오류에 반응하고 데이터를 재조정할 etcd 클러스터가 생성되었습니다. 가장 중요한 점은 적절한 액세스 권한이 있는 클러스터 관리자 또는 개발자가 애플리케이션과 함께 데이터베이스를 쉽게 사용할 수 있다는 점입니다.

3.2. 네임스페이스에 Operator 설치

클러스터 관리자가 계정에 Operator 설치 권한을 위임한 경우 셀프서비스 방식으로 Operator를 설치하고 네임스페이스에 등록할 수 있습니다.

3.2.1. 사전 요구 사항

- 클러스터 관리자는 셀프서비스 Operator를 네임스페이스에 설치할 수 있도록 OpenShift Container Platform 사용자 계정에 특정 권한을 추가해야 합니다. 자세한 내용은 비 클러스터 관리자가 Operator를 설치하도록 허용을 참조하십시오.

3.2.2. OperatorHub를 통한 Operator 설치

OperatorHub는 Operator를 검색하는 사용자 인터페이스입니다. 이는 클러스터에 Operator를 설치하고 관리하는 OLM(Operator Lifecycle Manager)과 함께 작동합니다.

적절한 권한이 있는 클러스터 관리자는 OpenShift Container Platform 웹 콘솔 또는 CLI를 사용하여 OperatorHub에서 Operator를 설치할 수 있습니다.

설치하는 동안 Operator의 다음 초기 설정을 결정해야합니다.

- 설치 모드

- Operator를 설치할 특정 네임스페이스를 선택합니다.

- 업데이트 채널

- 여러 채널을 통해 Operator를 사용할 수있는 경우 구독할 채널을 선택할 수 있습니다. 예를 들어, stable 채널에서 배치하려면 (사용 가능한 경우) 목록에서 해당 채널을 선택합니다.

- 승인 전략

자동 또는 수동 업데이트를 선택할 수 있습니다.

설치된 Operator에 대해 자동 업데이트를 선택하는 경우 선택한 채널에 해당 Operator의 새 버전이 제공되면 OLM(Operator Lifecycle Manager)에서 Operator의 실행 중인 인스턴스를 개입 없이 자동으로 업그레이드합니다.

수동 업데이트를 선택하면 최신 버전의 Operator가 사용 가능할 때 OLM이 업데이트 요청을 작성합니다. 클러스터 관리자는 Operator를 새 버전으로 업데이트하려면 OLM 업데이트 요청을 수동으로 승인해야 합니다.

3.2.3. 웹 콘솔을 사용하여 OperatorHub에서 설치

OpenShift Container Platform 웹 콘솔을 사용하여 OperatorHub에서 Operator를 설치하고 구독할 수 있습니다.

사전 요구 사항

- Operator 설치 권한이 있는 계정을 사용하여 OpenShift Container Platform 클러스터에 액세스할 수 있습니다.

프로세스

- 웹 콘솔에서 Operators → OperatorHub 페이지로 이동합니다.

원하는 Operator를 찾으려면 키워드를 Filter by keyword 상자에 입력하거나 스크롤합니다. 예를 들어 Kubernetes Operator의 고급 클러스터 관리 기능을 찾으려면

advanced를 입력합니다.인프라 기능에서 옵션을 필터링할 수 있습니다. 예를 들어, 연결이 끊긴 환경 (제한된 네트워크 환경이라고도 함)에서 작업하는 Operator를 표시하려면 Disconnected를 선택합니다.

Operator를 선택하여 추가 정보를 표시합니다.

참고커뮤니티 Operator를 선택하면 Red Hat이 커뮤니티 Operator를 인증하지 않는다고 경고합니다. 계속하기 전에 경고를 확인해야합니다.

- Operator에 대한 정보를 확인하고 Install을 클릭합니다.

Operator 설치 페이지에서 다음을 수행합니다.

- Operator를 설치할 특정 단일 네임스페이스를 선택합니다. Operator는 이 단일 네임 스페이스에서만 모니터링 및 사용할 수 있게 됩니다.

- Update Channe을 선택합니다 (하나 이상이 사용 가능한 경우).

- 앞에서 설명한 대로 자동 또는 수동 승인 전략을 선택합니다.

이 OpenShift Container Platform 클러스터에서 선택한 네임스페이스에서 Operator를 사용할 수 있도록 하려면 설치를 클릭합니다.

수동 승인 전략을 선택한 경우 설치 계획을 검토하고 승인할 때까지 서브스크립션의 업그레이드 상태가 업그레이드 중으로 유지됩니다.

Install Plan 페이지에서 승인 한 후 subscription 업그레이드 상태가 Up to date로 이동합니다.

- 자동 승인 전략을 선택한 경우 업그레이드 상태가 개입 없이 최신 상태로 확인되어야 합니다.

서브스크립션의 업그레이드 상태가 최신이면 Operator → 설치된 Operator를 선택하여 설치된 Operator의 CSV(클러스터 서비스 버전)가 최종적으로 표시되는지 확인합니다. 상태는 최종적으로 관련 네임스페이스에서 InstallSucceeded로 확인되어야 합니다.

참고모든 네임 스페이스… 설치 모드에서는

openshift-operators네임스페이스에서 상태가 InstallSucceeded 로 확인 되지만 다른 네임스페이스에서 확인하면 상태가 복사됩니다.그러지 않은 경우 다음을 수행합니다.

-

openshift-operators프로젝트(또는 A 특정 네임 스페이스… 워크로드 → Pod 페이지에서 추가 문제 해결을 위해 문제를 보고하는 설치 모드가 선택되었습니다.

-

3.2.4. CLI를 사용하여 OperatorHub에서 설치

OpenShift Container Platform 웹 콘솔을 사용하는 대신 CLI를 사용하여 OperatorHub에서 Operator를 설치할 수 있습니다. oc 명령을 사용하여 Subscription 개체를 만들거나 업데이트합니다.

사전 요구 사항

- Operator 설치 권한이 있는 계정을 사용하여 OpenShift Container Platform 클러스터에 액세스할 수 있습니다.

-

로컬 시스템에

oc명령을 설치합니다.

프로세스

OperatorHub에서 클러스터에 사용 가능한 Operator의 목록을 표시합니다.

$ oc get packagemanifests -n openshift-marketplace출력 예

NAME CATALOG AGE 3scale-operator Red Hat Operators 91m advanced-cluster-management Red Hat Operators 91m amq7-cert-manager Red Hat Operators 91m ... couchbase-enterprise-certified Certified Operators 91m crunchy-postgres-operator Certified Operators 91m mongodb-enterprise Certified Operators 91m ... etcd Community Operators 91m jaeger Community Operators 91m kubefed Community Operators 91m ...필요한 Operator의 카탈로그를 기록해 둡니다.

필요한 Operator를 검사하여 지원되는 설치 모드 및 사용 가능한 채널을 확인합니다.

$ oc describe packagemanifests <operator_name> -n openshift-marketplaceOperatorGroup오브젝트로 정의되는 Operator group에서 Operator group과 동일한 네임스페이스에 있는 모든 Operator에 대해 필요한 RBAC 액세스 권한을 생성할 대상 네임스페이스를 선택합니다.Operator를 서브스크립션하는 네임스페이스에는 Operator의 설치 모드, 즉

AllNamespaces또는SingleNamespace모드와 일치하는 Operator group이 있어야 합니다. 설치하려는 Operator에서AllNamespaces를 사용하는 경우openshift-operators네임스페이스에 적절한 Operator group이 이미 있습니다.그러나 Operator에서

SingleNamespace모드를 사용하고 적절한 Operator group이 없는 경우 이를 생성해야 합니다.참고이 프로세스의 웹 콘솔 버전에서는

SingleNamespace모드를 선택할 때 자동으로OperatorGroup및Subscription개체 생성을 자동으로 처리합니다.OperatorGroup개체 YAML 파일을 만듭니다 (예:operatorgroup.yaml).OperatorGroup오브젝트의 예apiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: name: <operatorgroup_name> namespace: <namespace> spec: targetNamespaces: - <namespace>OperatorGroup개체를 생성합니다.$ oc apply -f operatorgroup.yaml

Subscription개체 YAML 파일을 생성하여 OpenShift Pipelines Operator에 네임스페이스를 등록합니다(예:sub.yaml).Subscription개체 예apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: <subscription_name> namespace: openshift-operators1 spec: channel: <channel_name>2 name: <operator_name>3 source: redhat-operators4 sourceNamespace: openshift-marketplace5 config: env:6 - name: ARGS value: "-v=10" envFrom:7 - secretRef: name: license-secret volumes:8 - name: <volume_name> configMap: name: <configmap_name> volumeMounts:9 - mountPath: <directory_name> name: <volume_name> tolerations:10 - operator: "Exists" resources:11 requests: memory: "64Mi" cpu: "250m" limits: memory: "128Mi" cpu: "500m" nodeSelector:12 foo: bar- 1

AllNamespaces설치 모드를 사용하려면openshift-operators네임스페이스를 지정합니다. 그 외에는SingleNamespace설치 모드를 사용하도록 관련 단일 네임스페이스를 지정합니다.- 2

- 등록할 채널의 이름입니다.

- 3

- 등록할 Operator의 이름입니다.

- 4

- Operator를 제공하는 카탈로그 소스의 이름입니다.

- 5

- 카탈로그 소스의 네임스페이스입니다. 기본 OperatorHub 카탈로그 소스에는

openshift-marketplace를 사용합니다. - 6

env매개변수는 OLM에서 생성한 Pod의 모든 컨테이너에 존재해야 하는 환경 변수 목록을 정의합니다.- 7

envFrom매개 변수는 컨테이너에 환경 변수를 채울 소스 목록을 정의합니다.- 8

volumes매개변수는 OLM에서 생성한 Pod에 있어야 하는 볼륨 목록을 정의합니다.- 9

volumeMounts매개변수는 OLM에서 생성한 Pod의 모든 컨테이너에 존재해야 하는 VolumeMounts 목록을 정의합니다.volumeMount가 존재하지 않는볼륨을참조하는 경우 OLM에서 Operator를 배포하지 못합니다.- 10

tolerations매개변수는 OLM에서 생성한 Pod의 허용 오차 목록을 정의합니다.- 11

resources매개변수는 OLM에서 생성한 Pod의 모든 컨테이너에 대한 리소스 제약 조건을 정의합니다.- 12

nodeSelector매개변수는 OLM에서 생성한 Pod에 대한NodeSelector를 정의합니다.

Subscription오브젝트를 생성합니다.$ oc apply -f sub.yaml이 시점에서 OLM은 이제 선택한 Operator를 인식합니다. Operator의 CSV(클러스터 서비스 버전)가 대상 네임스페이스에 표시되고 Operator에서 제공하는 API를 생성에 사용할 수 있어야 합니다.

3.2.5. 특정 버전의 Operator 설치

Subscription 오브젝트에 CSV(클러스터 서비스 버전)를 설정하면 특정 버전의 Operator를 설치할 수 있습니다.

사전 요구 사항

- Operator 설치 권한이 있는 계정을 사용하여 OpenShift Container Platform 클러스터에 액세스할 수 있습니다.

-

OpenShift CLI(

oc)가 설치됨

프로세스

startingCSV필드를 설정하여 특정 버전의 Operator에 네임스페이스를 서브스크립션하는Subscription오브젝트 YAML 파일을 생성합니다. 카탈로그에 이후 버전이 있는 경우 Operator가 자동으로 업그레이드되지 않도록installPlanApproval필드를Manual로 설정합니다.예를 들어 다음

sub.yaml파일을 사용하여 특별히 3.4.0 버전에 Red Hat Quay Operator를 설치할 수 있습니다.특정 시작 Operator 버전이 있는 서브스크립션

apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: quay-operator namespace: quay spec: channel: quay-v3.4 installPlanApproval: Manual1 name: quay-operator source: redhat-operators sourceNamespace: openshift-marketplace startingCSV: quay-operator.v3.4.02 Subscription오브젝트를 생성합니다.$ oc apply -f sub.yaml- 보류 중인 설치 계획을 수동으로 승인하여 Operator 설치를 완료합니다.

4장. 관리자 작업

4.1. 클러스터에 Operator 추가

클러스터 관리자는 OperatorHub가 있는 네임스페이스에 Operator를 등록하여 OpenShift Container Platform 클러스터에 Operator를 설치할 수 있습니다.

4.1.1. OperatorHub를 통한 Operator 설치

OperatorHub는 Operator를 검색하는 사용자 인터페이스입니다. 이는 클러스터에 Operator를 설치하고 관리하는 OLM(Operator Lifecycle Manager)과 함께 작동합니다.

적절한 권한이 있는 클러스터 관리자는 OpenShift Container Platform 웹 콘솔 또는 CLI를 사용하여 OperatorHub에서 Operator를 설치할 수 있습니다.

설치하는 동안 Operator의 다음 초기 설정을 결정해야합니다.

- 설치 모드

- Operator를 설치할 특정 네임스페이스를 선택합니다.

- 업데이트 채널

- 여러 채널을 통해 Operator를 사용할 수있는 경우 구독할 채널을 선택할 수 있습니다. 예를 들어, stable 채널에서 배치하려면 (사용 가능한 경우) 목록에서 해당 채널을 선택합니다.

- 승인 전략

자동 또는 수동 업데이트를 선택할 수 있습니다.

설치된 Operator에 대해 자동 업데이트를 선택하는 경우 선택한 채널에 해당 Operator의 새 버전이 제공되면 OLM(Operator Lifecycle Manager)에서 Operator의 실행 중인 인스턴스를 개입 없이 자동으로 업그레이드합니다.

수동 업데이트를 선택하면 최신 버전의 Operator가 사용 가능할 때 OLM이 업데이트 요청을 작성합니다. 클러스터 관리자는 Operator를 새 버전으로 업데이트하려면 OLM 업데이트 요청을 수동으로 승인해야 합니다.

4.1.2. 웹 콘솔을 사용하여 OperatorHub에서 설치

OpenShift Container Platform 웹 콘솔을 사용하여 OperatorHub에서 Operator를 설치하고 구독할 수 있습니다.

사전 요구 사항

-

cluster-admin권한이 있는 계정을 사용하여 OpenShift Container Platform 클러스터에 액세스할 수 있습니다. - Operator 설치 권한이 있는 계정을 사용하여 OpenShift Container Platform 클러스터에 액세스할 수 있습니다.

프로세스

- 웹 콘솔에서 Operators → OperatorHub 페이지로 이동합니다.

원하는 Operator를 찾으려면 키워드를 Filter by keyword 상자에 입력하거나 스크롤합니다. 예를 들어 Kubernetes Operator의 고급 클러스터 관리 기능을 찾으려면

advanced를 입력합니다.인프라 기능에서 옵션을 필터링할 수 있습니다. 예를 들어, 연결이 끊긴 환경 (제한된 네트워크 환경이라고도 함)에서 작업하는 Operator를 표시하려면 Disconnected를 선택합니다.

Operator를 선택하여 추가 정보를 표시합니다.

참고커뮤니티 Operator를 선택하면 Red Hat이 커뮤니티 Operator를 인증하지 않는다고 경고합니다. 계속하기 전에 경고를 확인해야합니다.

- Operator에 대한 정보를 확인하고 Install을 클릭합니다.

Operator 설치 페이지에서 다음을 수행합니다.

다음 명령 중 하나를 선택합니다.

-

All namespaces on the cluster (default)에서는 기본

openshift-operators네임스페이스에 Operator가 설치되므로 Operator가 클러스터의 모든 네임스페이스를 모니터링하고 사용할 수 있습니다. 이 옵션을 항상 사용할 수있는 것은 아닙니다. - A specific namespace on the cluster를 사용하면 Operator를 설치할 특정 단일 네임 스페이스를 선택할 수 있습니다. Operator는 이 단일 네임 스페이스에서만 모니터링 및 사용할 수 있게 됩니다.

-

All namespaces on the cluster (default)에서는 기본

- Operator를 설치할 특정 단일 네임스페이스를 선택합니다. Operator는 이 단일 네임 스페이스에서만 모니터링 및 사용할 수 있게 됩니다.

- Update Channe을 선택합니다 (하나 이상이 사용 가능한 경우).

- 앞에서 설명한 대로 자동 또는 수동 승인 전략을 선택합니다.

이 OpenShift Container Platform 클러스터에서 선택한 네임스페이스에서 Operator를 사용할 수 있도록 하려면 설치를 클릭합니다.

수동 승인 전략을 선택한 경우 설치 계획을 검토하고 승인할 때까지 서브스크립션의 업그레이드 상태가 업그레이드 중으로 유지됩니다.

Install Plan 페이지에서 승인 한 후 subscription 업그레이드 상태가 Up to date로 이동합니다.

- 자동 승인 전략을 선택한 경우 업그레이드 상태가 개입 없이 최신 상태로 확인되어야 합니다.

서브스크립션의 업그레이드 상태가 최신이면 Operator → 설치된 Operator를 선택하여 설치된 Operator의 CSV(클러스터 서비스 버전)가 최종적으로 표시되는지 확인합니다. 상태는 최종적으로 관련 네임스페이스에서 InstallSucceeded로 확인되어야 합니다.

참고모든 네임 스페이스… 설치 모드에서는

openshift-operators네임스페이스에서 상태가 InstallSucceeded 로 확인 되지만 다른 네임스페이스에서 확인하면 상태가 복사됩니다.그러지 않은 경우 다음을 수행합니다.

-

openshift-operators프로젝트(또는 A 특정 네임 스페이스… 워크로드 → Pod 페이지에서 추가 문제 해결을 위해 문제를 보고하는 설치 모드가 선택되었습니다.

-

4.1.3. CLI를 사용하여 OperatorHub에서 설치

OpenShift Container Platform 웹 콘솔을 사용하는 대신 CLI를 사용하여 OperatorHub에서 Operator를 설치할 수 있습니다. oc 명령을 사용하여 Subscription 개체를 만들거나 업데이트합니다.

사전 요구 사항

- Operator 설치 권한이 있는 계정을 사용하여 OpenShift Container Platform 클러스터에 액세스할 수 있습니다.

-

로컬 시스템에

oc명령을 설치합니다.

프로세스

OperatorHub에서 클러스터에 사용 가능한 Operator의 목록을 표시합니다.

$ oc get packagemanifests -n openshift-marketplace출력 예

NAME CATALOG AGE 3scale-operator Red Hat Operators 91m advanced-cluster-management Red Hat Operators 91m amq7-cert-manager Red Hat Operators 91m ... couchbase-enterprise-certified Certified Operators 91m crunchy-postgres-operator Certified Operators 91m mongodb-enterprise Certified Operators 91m ... etcd Community Operators 91m jaeger Community Operators 91m kubefed Community Operators 91m ...필요한 Operator의 카탈로그를 기록해 둡니다.

필요한 Operator를 검사하여 지원되는 설치 모드 및 사용 가능한 채널을 확인합니다.

$ oc describe packagemanifests <operator_name> -n openshift-marketplaceOperatorGroup오브젝트로 정의되는 Operator group에서 Operator group과 동일한 네임스페이스에 있는 모든 Operator에 대해 필요한 RBAC 액세스 권한을 생성할 대상 네임스페이스를 선택합니다.Operator를 서브스크립션하는 네임스페이스에는 Operator의 설치 모드, 즉

AllNamespaces또는SingleNamespace모드와 일치하는 Operator group이 있어야 합니다. 설치하려는 Operator에서AllNamespaces를 사용하는 경우openshift-operators네임스페이스에 적절한 Operator group이 이미 있습니다.그러나 Operator에서

SingleNamespace모드를 사용하고 적절한 Operator group이 없는 경우 이를 생성해야 합니다.참고이 프로세스의 웹 콘솔 버전에서는

SingleNamespace모드를 선택할 때 자동으로OperatorGroup및Subscription개체 생성을 자동으로 처리합니다.OperatorGroup개체 YAML 파일을 만듭니다 (예:operatorgroup.yaml).OperatorGroup오브젝트의 예apiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: name: <operatorgroup_name> namespace: <namespace> spec: targetNamespaces: - <namespace>OperatorGroup개체를 생성합니다.$ oc apply -f operatorgroup.yaml

Subscription개체 YAML 파일을 생성하여 OpenShift Pipelines Operator에 네임스페이스를 등록합니다(예:sub.yaml).Subscription개체 예apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: <subscription_name> namespace: openshift-operators1 spec: channel: <channel_name>2 name: <operator_name>3 source: redhat-operators4 sourceNamespace: openshift-marketplace5 config: env:6 - name: ARGS value: "-v=10" envFrom:7 - secretRef: name: license-secret volumes:8 - name: <volume_name> configMap: name: <configmap_name> volumeMounts:9 - mountPath: <directory_name> name: <volume_name> tolerations:10 - operator: "Exists" resources:11 requests: memory: "64Mi" cpu: "250m" limits: memory: "128Mi" cpu: "500m" nodeSelector:12 foo: bar- 1

AllNamespaces설치 모드를 사용하려면openshift-operators네임스페이스를 지정합니다. 그 외에는SingleNamespace설치 모드를 사용하도록 관련 단일 네임스페이스를 지정합니다.- 2

- 등록할 채널의 이름입니다.

- 3

- 등록할 Operator의 이름입니다.

- 4

- Operator를 제공하는 카탈로그 소스의 이름입니다.

- 5

- 카탈로그 소스의 네임스페이스입니다. 기본 OperatorHub 카탈로그 소스에는

openshift-marketplace를 사용합니다. - 6

env매개변수는 OLM에서 생성한 Pod의 모든 컨테이너에 존재해야 하는 환경 변수 목록을 정의합니다.- 7

envFrom매개 변수는 컨테이너에 환경 변수를 채울 소스 목록을 정의합니다.- 8

volumes매개변수는 OLM에서 생성한 Pod에 있어야 하는 볼륨 목록을 정의합니다.- 9

volumeMounts매개변수는 OLM에서 생성한 Pod의 모든 컨테이너에 존재해야 하는 VolumeMounts 목록을 정의합니다.volumeMount가 존재하지 않는볼륨을참조하는 경우 OLM에서 Operator를 배포하지 못합니다.- 10

tolerations매개변수는 OLM에서 생성한 Pod의 허용 오차 목록을 정의합니다.- 11

resources매개변수는 OLM에서 생성한 Pod의 모든 컨테이너에 대한 리소스 제약 조건을 정의합니다.- 12

nodeSelector매개변수는 OLM에서 생성한 Pod에 대한NodeSelector를 정의합니다.

Subscription오브젝트를 생성합니다.$ oc apply -f sub.yaml이 시점에서 OLM은 이제 선택한 Operator를 인식합니다. Operator의 CSV(클러스터 서비스 버전)가 대상 네임스페이스에 표시되고 Operator에서 제공하는 API를 생성에 사용할 수 있어야 합니다.

4.1.4. 특정 버전의 Operator 설치

Subscription 오브젝트에 CSV(클러스터 서비스 버전)를 설정하면 특정 버전의 Operator를 설치할 수 있습니다.

사전 요구 사항

- Operator 설치 권한이 있는 계정을 사용하여 OpenShift Container Platform 클러스터에 액세스할 수 있습니다.

-

OpenShift CLI(

oc)가 설치됨

프로세스

startingCSV필드를 설정하여 특정 버전의 Operator에 네임스페이스를 서브스크립션하는Subscription오브젝트 YAML 파일을 생성합니다. 카탈로그에 이후 버전이 있는 경우 Operator가 자동으로 업그레이드되지 않도록installPlanApproval필드를Manual로 설정합니다.예를 들어 다음

sub.yaml파일을 사용하여 특별히 3.4.0 버전에 Red Hat Quay Operator를 설치할 수 있습니다.특정 시작 Operator 버전이 있는 서브스크립션

apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: quay-operator namespace: quay spec: channel: quay-v3.4 installPlanApproval: Manual1 name: quay-operator source: redhat-operators sourceNamespace: openshift-marketplace startingCSV: quay-operator.v3.4.02 Subscription오브젝트를 생성합니다.$ oc apply -f sub.yaml- 보류 중인 설치 계획을 수동으로 승인하여 Operator 설치를 완료합니다.

4.2. 설치된 Operator 업그레이드

클러스터 관리자는 OpenShift Container Platform 클러스터에서 OLM(Operator Lifecycle Manager)을 사용하여 이전에 설치한 Operator를 업그레이드할 수 있습니다.

4.2.1. Operator의 업데이트 채널 변경

설치된 Operator의 서브스크립션은 Operator의 업데이트를 추적하고 수신하는 데 사용되는 업데이트 채널을 지정합니다. Operator를 업그레이드하여 추적을 시작하고 최신 채널에서 업데이트를 수신하려면 서브스크립션의 업데이트 채널을 변경하면 됩니다.

서브스크립션의 업데이트 채널 이름은 Operator마다 다를 수 있지만 이름 지정 스키마는 지정된 Operator 내의 공통 규칙을 따라야 합니다. 예를 들어 채널 이름은 Operator(1.2, 1.3) 또는 릴리스 빈도(stable, fast)에서 제공하는 애플리케이션의 마이너 릴리스 업데이트 스트림을 따를 수 있습니다.

설치된 Operator는 현재 채널보다 오래된 채널로 변경할 수 없습니다.

서브스크립션의 승인 전략이 자동으로 설정되어 있으면 선택한 채널에서 새 Operator 버전을 사용할 수 있게 되는 즉시 업그레이드 프로세스가 시작됩니다. 승인 전략이 수동으로 설정된 경우 보류 중인 업그레이드를 수동으로 승인해야 합니다.

사전 요구 사항

- OLM(Operator Lifecycle Manager)을 사용하여 이전에 설치한 Operator입니다.

절차

- OpenShift Container Platform 웹 콘솔의 관리자 관점에서 Operator → 설치된 Operator로 이동합니다.

- 업데이트 채널을 변경할 Operator 이름을 클릭합니다.

- 서브스크립션 탭을 클릭합니다.

- 채널에서 업데이트 채널의 이름을 클릭합니다.

- 변경할 최신 업데이트 채널을 클릭한 다음 저장을 클릭합니다.

자동 승인 전략이 있는 서브스크립션의 경우 업그레이드가 자동으로 시작됩니다. Operator → 설치된 Operator 페이지로 이동하여 업그레이드 진행 상황을 모니터링합니다. 완료되면 상태가 성공 및 최신으로 변경됩니다.

수동 승인 전략이 있는 서브스크립션의 경우 서브스크립션 탭에서 업그레이드를 수동으로 승인할 수 있습니다.

4.2.2. 보류 중인 Operator 업그레이드 수동 승인

설치된 Operator의 서브스크립션에 있는 승인 전략이 수동으로 설정된 경우 새 업데이트가 현재 업데이트 채널에 릴리스될 때 업데이트를 수동으로 승인해야 설치가 시작됩니다.

사전 요구 사항

- OLM(Operator Lifecycle Manager)을 사용하여 이전에 설치한 Operator입니다.

절차

- OpenShift Container Platform 웹 콘솔의 관리자 관점에서 Operator → 설치된 Operator로 이동합니다.

- 보류 중인 업그레이드가 있는 Operator에 업그레이드 사용 가능 상태가 표시됩니다. 업그레이드할 Operator 이름을 클릭합니다.

- 서브스크립션 탭을 클릭합니다. 승인이 필요한 업그레이드는 업그레이드 상태 옆에 표시됩니다. 예를 들어 1 승인 필요가 표시될 수 있습니다.

- 1 승인 필요를 클릭한 다음 설치 계획 프리뷰를 클릭합니다.

- 업그레이드할 수 있는 것으로 나열된 리소스를 검토합니다. 문제가 없는 경우 승인을 클릭합니다.

- Operator → 설치된 Operator 페이지로 이동하여 업그레이드 진행 상황을 모니터링합니다. 완료되면 상태가 성공 및 최신으로 변경됩니다.

4.3. 클러스터에서 Operator 삭제

다음에서는 OpenShift Container Platform 클러스터에서 OLM(Operator Lifecycle Manager)을 사용하여 이전에 설치한 Operator를 삭제하는 방법을 설명합니다.

4.3.1. 웹 콘솔을 사용하여 클러스터에서 Operator 삭제

클러스터 관리자는 웹 콘솔을 사용하여 선택한 네임스페이스에서 설치된 Operator를 삭제할 수 있습니다.

사전 요구 사항

-

cluster-admin권한이 있는 계정을 사용하여 OpenShift Container Platform 클러스터 웹 콘솔에 액세스할 수 있습니다.

프로세스

- Operator → 설치된 Operator 페이지에서 스크롤하거나 이름별 필터링에 키워드를 입력하여 원하는 Operator를 찾습니다. 그런 다음 해당 Operator를 클릭합니다.

Operator 세부 정보 페이지 오른쪽에 있는 작업 목록에서 Operator 제거를 선택합니다.

Operator를 설치 제거하시겠습니까? 대화 상자가 표시되고 다음 메시지가 표시됩니다.

Operator를 제거해도 사용자 정의 리소스 정의 또는 관리형 리소스는 제거되지 않습니다. Operator에서 클러스터에 애플리케이션을 배포하거나 클러스터 외부 리소스를 구성한 경우 해당 리소스는 계속 실행되며 수동으로 정리해야 합니다.

이 작업은 Operator 및 Operator 배포 및 Pod(있는 경우)를 제거합니다. CRD 및 CR을 포함하여 Operator에서 관리하는 Operand 및 리소스는 제거되지 않습니다. 웹 콘솔에서는 일부 Operator의 대시보드 및 탐색 항목을 활성화합니다. Operator를 설치 제거한 후 해당 항목을 제거하려면 Operator CRD를 수동으로 삭제해야 할 수 있습니다.

- 설치 제거를 선택합니다. 이 Operator는 실행을 중지하고 더 이상 업데이트를 수신하지 않습니다.

4.3.2. CLI를 사용하여 클러스터에서 Operator 삭제

클러스터 관리자는 CLI를 사용하여 선택한 네임스페이스에서 설치된 Operator를 삭제할 수 있습니다.

사전 요구 사항

-

cluster-admin권한이 있는 계정을 사용하여 OpenShift Container Platform 클러스터에 액세스할 수 있습니다. -

oc명령이 워크스테이션에 설치되어 있습니다.

프로세스

currentCSV필드에서 구독한 Operator(예:jaeger)의 현재 버전을 확인합니다.$ oc get subscription jaeger -n openshift-operators -o yaml | grep currentCSV출력 예

currentCSV: jaeger-operator.v1.8.2서브스크립션을 삭제합니다(예:

jaeger).$ oc delete subscription jaeger -n openshift-operators출력 예

subscription.operators.coreos.com "jaeger" deleted이전 단계의