
CLI tools

Learning how to use the command-line tools for OpenShift Container Platform

Abstract

Chapter 1. OpenShift Container Platform CLI tools overview

A user performs a range of operations while working on OpenShift Container Platform such as the following:

- Managing clusters

- Building, deploying, and managing applications

- Managing deployment processes

- Developing Operators

- Creating and maintaining Operator catalogs

OpenShift Container Platform offers a set of command-line interface (CLI) tools that simplify these tasks by enabling users to perform various administration and development operations from the terminal. These tools expose simple commands to manage the applications, as well as interact with each component of the system.

1.1. List of CLI tools

The following set of CLI tools are available in OpenShift Container Platform:

- OpenShift CLI (oc): This is the most commonly used CLI tool by OpenShift Container Platform users. It helps both cluster administrators and developers to perform end-to-end operations across OpenShift Container Platform using the terminal. Unlike the web console, it allows the user to work directly with the project source code using command scripts.

- Developer CLI (odo): The odo CLI tool helps developers focus on their main goal of creating and maintaining applications on OpenShift Container Platform by abstracting away complex Kubernetes and OpenShift Container Platform concepts. It helps the developers to write, build, and debug applications on a cluster from the terminal without the need to administer the cluster.

- Knative CLI (kn): The Knative (kn) CLI tool provides simple and intuitive terminal commands that can be used to interact with OpenShift Serverless components, such as Knative Serving and Eventing.

- Pipelines CLI (tkn): OpenShift Pipelines is a continuous integration and continuous delivery (CI/CD) solution in OpenShift Container Platform, which internally uses Tekton. The tkn CLI tool provides simple and intuitive commands to interact with OpenShift Pipelines using the terminal.

- opm CLI: The opm CLI tool helps the Operator developers and cluster administrators to create and maintain the catalogs of Operators from the terminal.

- Operator SDK: The Operator SDK, a component of the Operator Framework, provides a CLI tool that Operator developers can use to build, test, and deploy an Operator from the terminal. It simplifies the process of building Kubernetes-native applications, which can require deep, application-specific operational knowledge.

Chapter 2. OpenShift CLI (oc)

2.1. Getting started with the OpenShift CLI

2.1.1. About the OpenShift CLI

With the OpenShift command-line interface (CLI), the oc command, you can create applications and manage OpenShift Container Platform projects from a terminal. The OpenShift CLI is ideal in the following situations:

- Working directly with project source code

- Scripting OpenShift Container Platform operations

- Managing projects when bandwidth is restricted and the web console is unavailable

2.1.2. Installing the OpenShift CLI

You can install the OpenShift CLI (oc) either by downloading the binary or by using an RPM.

2.1.2.1. Installing the OpenShift CLI by downloading the binary

You can install the OpenShift CLI (oc) to interact with OpenShift Container Platform from a command-line interface. You can install oc on Linux, Windows, or macOS.

If you installed an earlier version of oc, you cannot use it to complete all of the commands in OpenShift Container Platform 4.8. Download and install the new version of oc.

Installing the OpenShift CLI on Linux

You can install the OpenShift CLI (oc) binary on Linux by using the following procedure.

Procedure

- Navigate to the OpenShift Container Platform downloads page on the Red Hat Customer Portal.

- Select the appropriate version in the Version drop-down menu.

- Click Download Now next to the OpenShift v4.8 Linux Client entry and save the file.

- Unpack the archive:

$ tar xvzf <file>

- Place the oc binary in a directory that is on your PATH.

To check your PATH, execute the following command:

$ echo $PATH
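The PATH check can also be scripted. The following is a minimal sketch, assuming a POSIX-compatible shell; the function name dir_on_path and the /usr/local/bin location are illustrative choices and are not part of the product:

```shell
#!/bin/sh
# Return success if the given directory is one of the entries in PATH.
# Wrapping PATH and the argument in colons avoids partial matches
# (for example, /opt matching /opt/tools).
dir_on_path() {
  case ":${PATH}:" in
    *":$1:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Hypothetical install location; use the directory where you placed oc.
install_dir="/usr/local/bin"

if dir_on_path "$install_dir"; then
  echo "$install_dir is on PATH"
else
  echo "$install_dir is not on PATH"
fi
```

If the directory is missing, it can be appended for the current session with export PATH="$PATH:<directory>".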

After you install the OpenShift CLI, it is available using the oc command:

$ oc <command>

Installing the OpenShift CLI on Windows

You can install the OpenShift CLI (oc) binary on Windows by using the following procedure.

Procedure

- Navigate to the OpenShift Container Platform downloads page on the Red Hat Customer Portal.

- Select the appropriate version in the Version drop-down menu.

- Click Download Now next to the OpenShift v4.8 Windows Client entry and save the file.

- Unzip the archive with a ZIP program.

- Move the oc binary to a directory that is on your PATH.

To check your PATH, open the command prompt and execute the following command:

C:\> path

After you install the OpenShift CLI, it is available using the oc command:

C:\> oc <command>

Installing the OpenShift CLI on macOS

You can install the OpenShift CLI (oc) binary on macOS by using the following procedure.

Procedure

- Navigate to the OpenShift Container Platform downloads page on the Red Hat Customer Portal.

- Select the appropriate version in the Version drop-down menu.

- Click Download Now next to the OpenShift v4.8 MacOSX Client entry and save the file.

- Unpack and unzip the archive.

- Move the oc binary to a directory on your PATH.

To check your PATH, open a terminal and execute the following command:

$ echo $PATH

After you install the OpenShift CLI, it is available using the oc command:

$ oc <command>

2.1.2.2. Installing the OpenShift CLI by using the web console

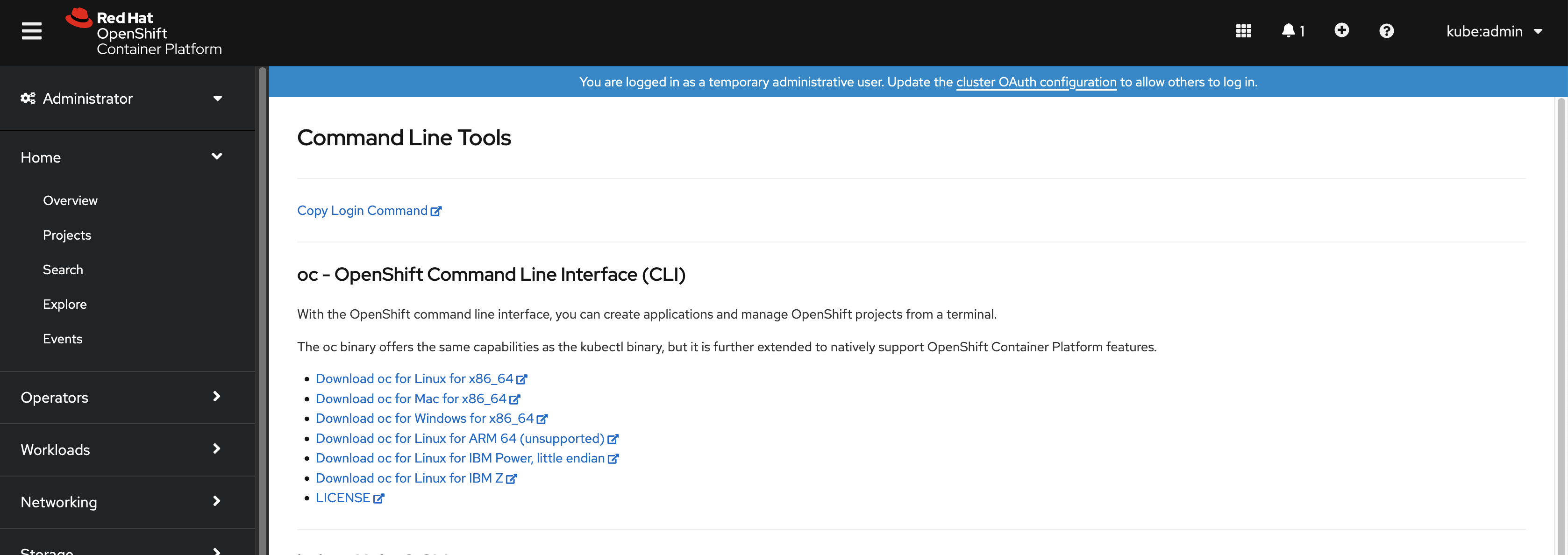

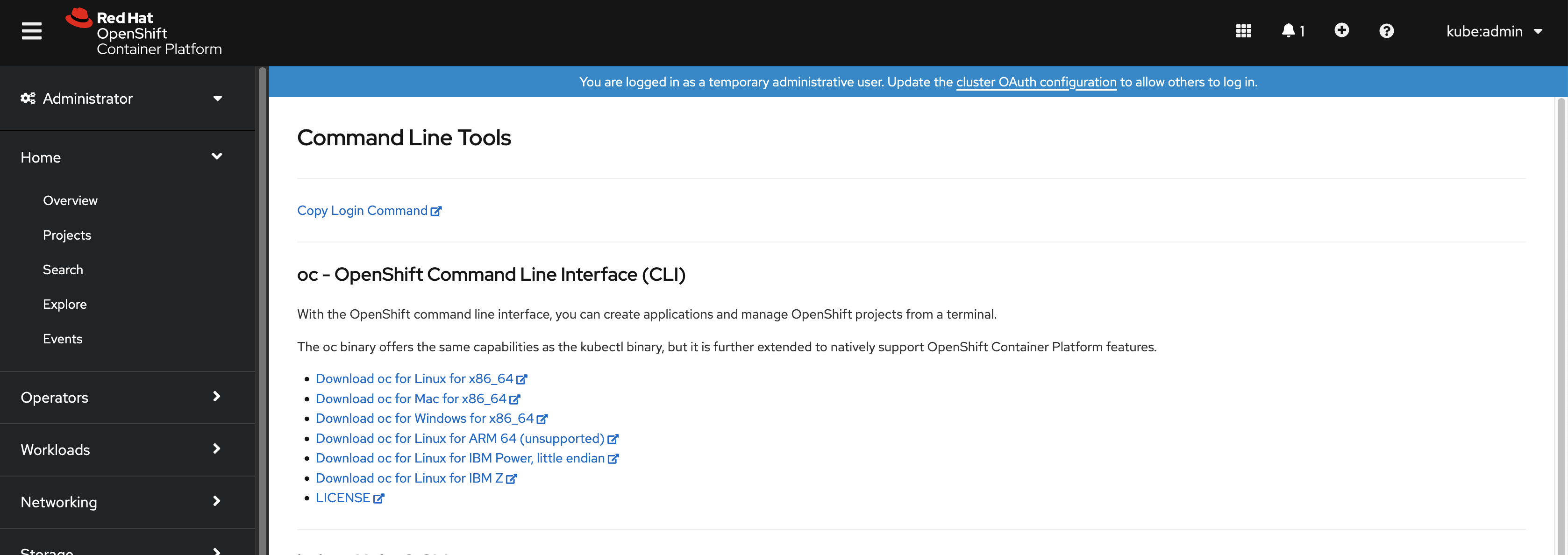

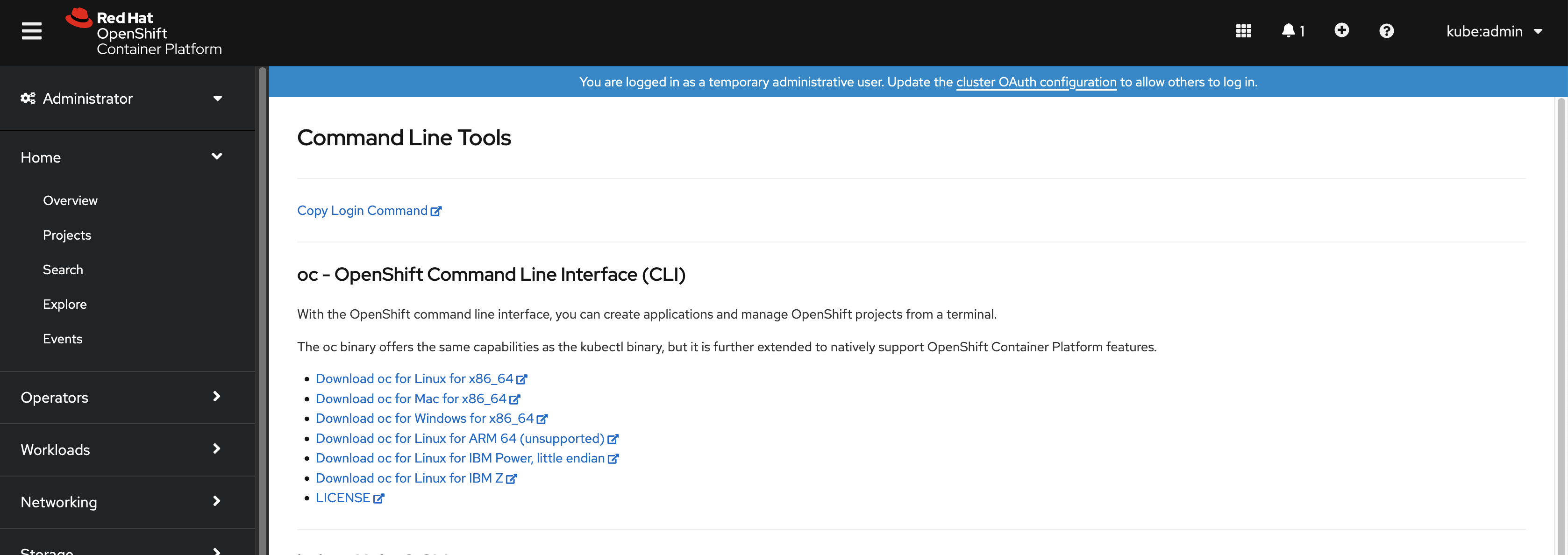

You can install the OpenShift CLI (oc) to interact with OpenShift Container Platform from a web console. You can install oc on Linux, Windows, or macOS.

If you installed an earlier version of oc, you cannot use it to complete all of the commands in OpenShift Container Platform 4.8. Download and install the new version of oc.

2.1.2.2.1. Installing the OpenShift CLI on Linux using the web console

You can install the OpenShift CLI (oc) binary on Linux by using the following procedure.

Procedure

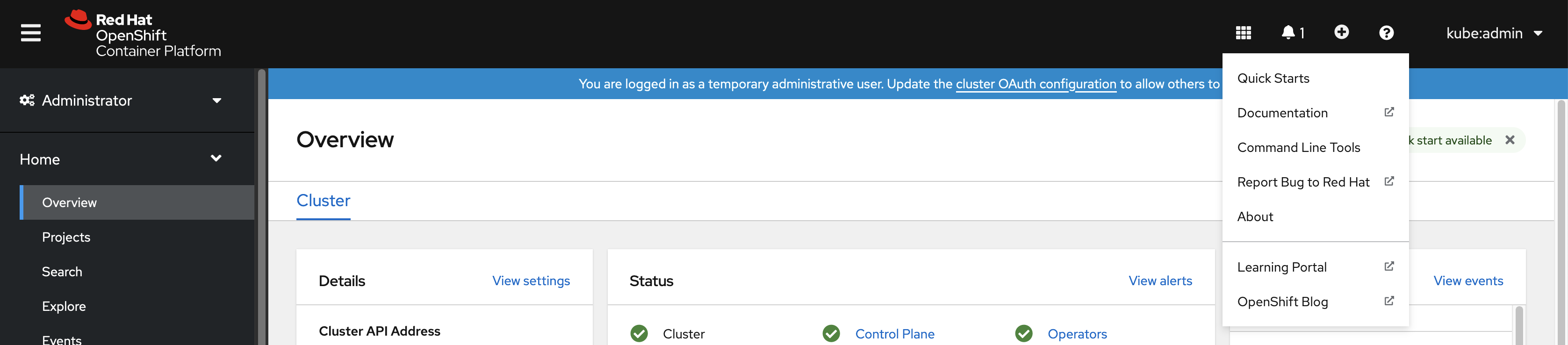

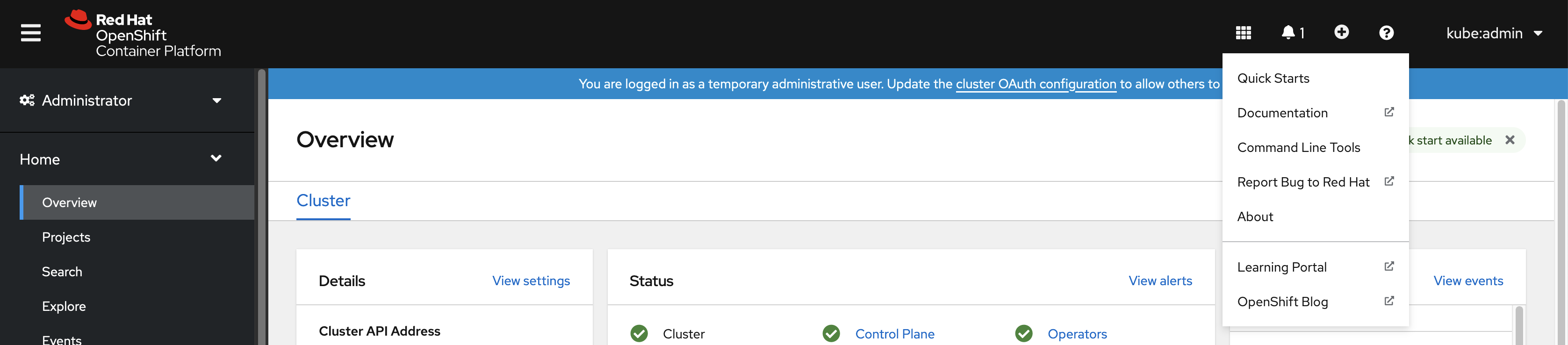

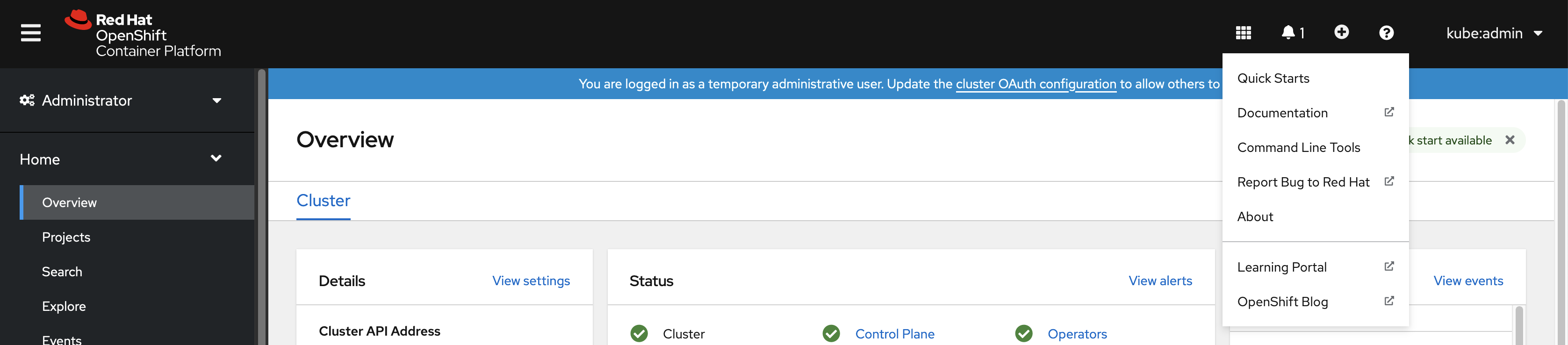

- From the web console, click ?.

- Click Command Line Tools.

- Select the appropriate oc binary for your Linux platform, and then click Download oc for Linux.

- Save the file.

- Unpack the archive:

$ tar xvzf <file>

- Move the oc binary to a directory that is on your PATH.

To check your PATH, execute the following command:

$ echo $PATH

After you install the OpenShift CLI, it is available using the oc command:

$ oc <command>

2.1.2.2.2. Installing the OpenShift CLI on Windows using the web console

You can install the OpenShift CLI (oc) binary on Windows by using the following procedure.

Procedure

- From the web console, click ?.

- Click Command Line Tools.

- Select the oc binary for Windows platform, and then click Download oc for Windows for x86_64.

- Save the file.

- Unzip the archive with a ZIP program.

- Move the oc binary to a directory that is on your PATH.

To check your PATH, open the command prompt and execute the following command:

C:\> path

After you install the OpenShift CLI, it is available using the oc command:

C:\> oc <command>

2.1.2.2.3. Installing the OpenShift CLI on macOS using the web console

You can install the OpenShift CLI (oc) binary on macOS by using the following procedure.

Procedure

- From the web console, click ?.

- Click Command Line Tools.

- Select the oc binary for macOS platform, and then click Download oc for Mac for x86_64.

- Save the file.

- Unpack and unzip the archive.

- Move the oc binary to a directory on your PATH.

To check your PATH, open a terminal and execute the following command:

$ echo $PATH

After you install the OpenShift CLI, it is available using the oc command:

$ oc <command>

2.1.2.3. Installing the OpenShift CLI by using an RPM

For Red Hat Enterprise Linux (RHEL), you can install the OpenShift CLI (oc) as an RPM if you have an active OpenShift Container Platform subscription on your Red Hat account.

Prerequisites

- Must have root or sudo privileges.

Procedure

- Register with Red Hat Subscription Manager:

# subscription-manager register

- Pull the latest subscription data:

# subscription-manager refresh

- List the available subscriptions:

# subscription-manager list --available --matches '*OpenShift*'

- In the output for the previous command, find the pool ID for an OpenShift Container Platform subscription and attach the subscription to the registered system:

# subscription-manager attach --pool=<pool_id>

- Enable the repositories required by OpenShift Container Platform 4.8.

For Red Hat Enterprise Linux 8:

# subscription-manager repos --enable="rhocp-4.8-for-rhel-8-x86_64-rpms"

For Red Hat Enterprise Linux 7:

# subscription-manager repos --enable="rhel-7-server-ose-4.8-rpms"

- Install the openshift-clients package:

# yum install openshift-clients

After you install the CLI, it is available using the oc command:

$ oc <command>

2.1.2.4. Installing the OpenShift CLI by using Homebrew

For macOS, you can install the OpenShift CLI (oc) by using the Homebrew package manager.

Prerequisites

- You must have Homebrew (brew) installed.

Procedure

Run the following command to install the openshift-cli package:

$ brew install openshift-cli

2.1.3. Logging in to the OpenShift CLI

You can log in to the OpenShift CLI (oc) to access and manage your cluster.

Prerequisites

- You must have access to an OpenShift Container Platform cluster.

- You must have installed the OpenShift CLI (oc).

To access a cluster that is accessible only over an HTTP proxy server, you can set the HTTP_PROXY, HTTPS_PROXY and NO_PROXY variables. These environment variables are respected by the oc CLI so that all communication with the cluster goes through the HTTP proxy.

Authentication headers are sent only when using HTTPS transport.
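As a minimal sketch, the proxy variables can be exported in the shell session before you log in. The proxy host, port, and exclusion list below are hypothetical placeholders; substitute the values for your environment:

```shell
# Hypothetical proxy endpoint; replace with the address of your proxy server.
export HTTP_PROXY="http://proxy.example.com:3128"
export HTTPS_PROXY="http://proxy.example.com:3128"
# Hosts that should bypass the proxy (illustrative values).
export NO_PROXY="localhost,127.0.0.1"
```

Any oc command started from the same session afterwards inherits these variables.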

Procedure

- Enter the oc login command and pass in a user name:

$ oc login -u user1

- When prompted, enter the required information:

Example output

Server [https://localhost:8443]: https://openshift.example.com:6443

The server uses a certificate signed by an unknown authority.

You can bypass the certificate check, but any data you send to the server could be intercepted by others.

Use insecure connections? (y/n): y

Authentication required for https://openshift.example.com:6443 (openshift)

Username: user1

Password:

Login successful.

You don't have any projects. You can try to create a new project, by running

oc new-project <projectname>

Welcome! See 'oc help' to get started.

If you are logged in to the web console, you can generate an oc login command that includes your token and server information. You can use the command to log in to the OpenShift Container Platform CLI without the interactive prompts. To generate the command, select Copy login command from the username drop-down menu at the top right of the web console.

You can now create a project or issue other commands for managing your cluster.

2.1.4. Using the OpenShift CLI

Review the following sections to learn how to complete common tasks using the CLI.

2.1.4.1. Creating a project

Use the oc new-project command to create a new project.

$ oc new-project my-project

Example output

Now using project "my-project" on server "https://openshift.example.com:6443".

2.1.4.2. Creating a new app

Use the oc new-app command to create a new application.

$ oc new-app https://github.com/sclorg/cakephp-ex

Example output

--> Found image 40de956 (9 days old) in imagestream "openshift/php" under tag "7.2" for "php"

...

Run 'oc status' to view your app.

2.1.4.3. Viewing pods

Use the oc get pods command to view the pods for the current project.

When you run oc inside a pod and do not specify a namespace, the namespace of the pod is used by default.

$ oc get pods -o wide

Example output

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

cakephp-ex-1-build 0/1 Completed 0 5m45s 10.131.0.10 ip-10-0-141-74.ec2.internal <none>

cakephp-ex-1-deploy 0/1 Completed 0 3m44s 10.129.2.9 ip-10-0-147-65.ec2.internal <none>

cakephp-ex-1-ktz97 1/1 Running 0 3m33s 10.128.2.11 ip-10-0-168-105.ec2.internal <none>

2.1.4.4. Viewing pod logs

Use the oc logs command to view logs for a particular pod.

$ oc logs cakephp-ex-1-deploy

Example output

--> Scaling cakephp-ex-1 to 1

--> Success

2.1.4.5. Viewing the current project

Use the oc project command to view the current project.

$ oc project

Example output

Using project "my-project" on server "https://openshift.example.com:6443".

2.1.4.6. Viewing the status for the current project

Use the oc status command to view information about the current project, such as services, deployments, and build configs.

$ oc status

Example output

In project my-project on server https://openshift.example.com:6443

svc/cakephp-ex - 172.30.236.80 ports 8080, 8443

dc/cakephp-ex deploys istag/cakephp-ex:latest <-

bc/cakephp-ex source builds https://github.com/sclorg/cakephp-ex on openshift/php:7.2

deployment #1 deployed 2 minutes ago - 1 pod

3 infos identified, use 'oc status --suggest' to see details.

2.1.4.7. Listing supported API resources

Use the oc api-resources command to view the list of supported API resources on the server.

$ oc api-resources

Example output

NAME SHORTNAMES APIGROUP NAMESPACED KIND

bindings true Binding

componentstatuses cs false ComponentStatus

configmaps cm true ConfigMap

...

2.1.5. Getting help

You can get help with CLI commands and OpenShift Container Platform resources in the following ways.

- Use oc help to get a list and description of all available CLI commands:

Example: Get general help for the CLI

$ oc help

Example output

OpenShift Client

This client helps you develop, build, deploy, and run your applications on any OpenShift or Kubernetes compatible platform. It also includes the administrative commands for managing a cluster under the 'adm' subcommand.

Usage:

oc [flags]

Basic Commands:

login Log in to a server

new-project Request a new project

new-app Create a new application

...

- Use the --help flag to get help about a specific CLI command:

Example: Get help for the oc create command

$ oc create --help

Example output

Create a resource by filename or stdin

JSON and YAML formats are accepted.

Usage:

oc create -f FILENAME [flags]

...

- Use the oc explain command to view the description and fields for a particular resource:

Example: View documentation for the Pod resource

$ oc explain pods

Example output

KIND: Pod

VERSION: v1

DESCRIPTION:

Pod is a collection of containers that can run on a host. This resource is created by clients and scheduled onto hosts.

FIELDS:

apiVersion <string>

APIVersion defines the versioned schema of this representation of an object. Servers should convert recognized schemas to the latest internal value, and may reject unrecognized values. More info: https://git.k8s.io/community/contributors/devel/api-conventions.md#resources

...

2.1.6. Logging out of the OpenShift CLI

You can log out of the OpenShift CLI to end your current session.

- Use the oc logout command.

$ oc logout

Example output

Logged "user1" out on "https://openshift.example.com"

This deletes the saved authentication token from the server and removes it from your configuration file.

2.2. Configuring the OpenShift CLI

2.2.1. Enabling tab completion

You can enable tab completion for the Bash or Zsh shells.

2.2.1.1. Enabling tab completion for Bash

After you install the OpenShift CLI (oc), you can enable tab completion to automatically complete oc commands or suggest options when you press Tab. The following procedure enables tab completion for the Bash shell.

Prerequisites

- You must have the OpenShift CLI (oc) installed.

- You must have the package bash-completion installed.

Procedure

- Save the Bash completion code to a file:

$ oc completion bash > oc_bash_completion

- Copy the file to /etc/bash_completion.d/:

$ sudo cp oc_bash_completion /etc/bash_completion.d/

You can also save the file to a local directory and source it from your .bashrc file instead.

Tab completion is enabled when you open a new terminal.

2.2.1.2. Enabling tab completion for Zsh

After you install the OpenShift CLI (oc), you can enable tab completion to automatically complete oc commands or suggest options when you press Tab. The following procedure enables tab completion for the Zsh shell.

Prerequisites

- You must have the OpenShift CLI (oc) installed.

Procedure

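- To add tab completion for oc to your .zshrc file, run the following command. The EOF delimiter is quoted so that $commands[oc] is written to the file literally instead of being expanded when the command runs:

$ cat >>~/.zshrc<<'EOF'
if [ $commands[oc] ]; then
  source <(oc completion zsh)
  compdef _oc oc
fi
EOF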

Tab completion is enabled when you open a new terminal.

2.3. Managing CLI profiles

A CLI configuration file allows you to configure different profiles, or contexts, for use with the CLI tools. A context consists of user authentication and OpenShift Container Platform server information associated with a nickname.

2.3.1. About switches between CLI profiles

Contexts allow you to easily switch between multiple users across multiple OpenShift Container Platform servers, or clusters, when using CLI operations. Nicknames make managing CLI configurations easier by providing short-hand references to contexts, user credentials, and cluster details. After logging in with the CLI for the first time, OpenShift Container Platform creates a ~/.kube/config file if one does not already exist. As more authentication and connection details are provided to the CLI, either automatically during an oc login operation or by manually configuring CLI profiles, the updated information is stored in the configuration file:

CLI config file

apiVersion: v1
clusters:
- cluster:
    insecure-skip-tls-verify: true
    server: https://openshift1.example.com:8443
  name: openshift1.example.com:8443
- cluster:
    insecure-skip-tls-verify: true
    server: https://openshift2.example.com:8443
  name: openshift2.example.com:8443
contexts:
- context:
    cluster: openshift1.example.com:8443
    namespace: alice-project
    user: alice/openshift1.example.com:8443
  name: alice-project/openshift1.example.com:8443/alice
- context:
    cluster: openshift1.example.com:8443
    namespace: joe-project
    user: alice/openshift1.example.com:8443
  name: joe-project/openshift1.example.com:8443/alice
current-context: joe-project/openshift1.example.com:8443/alice
kind: Config
preferences: {}
users:
- name: alice/openshift1.example.com:8443
  user:
    token: xZHd2piv5_9vQrg-SKXRJ2Dsl9SceNJdhNTljEKTb8k

- The clusters section defines connection details for OpenShift Container Platform clusters, including the address for their master server. In this example, one cluster is nicknamed openshift1.example.com:8443 and another is nicknamed openshift2.example.com:8443.

- The contexts section defines two contexts: one nicknamed alice-project/openshift1.example.com:8443/alice, using the alice-project project, openshift1.example.com:8443 cluster, and alice user, and another nicknamed joe-project/openshift1.example.com:8443/alice, using the joe-project project, openshift1.example.com:8443 cluster, and alice user.

- The current-context parameter shows that the joe-project/openshift1.example.com:8443/alice context is currently in use, allowing the alice user to work in the joe-project project on the openshift1.example.com:8443 cluster.

- The users section defines user credentials. In this example, the user nickname alice/openshift1.example.com:8443 uses an access token.

The CLI can support multiple configuration files which are loaded at runtime and merged together along with any override options specified from the command line. After you are logged in, you can use the oc status or oc project command to verify your current working environment:

Verify the current working environment

$ oc status

Example output

oc status

In project Joe's Project (joe-project)

service database (172.30.43.12:5434 -> 3306)

database deploys docker.io/openshift/mysql-55-centos7:latest

#1 deployed 25 minutes ago - 1 pod

service frontend (172.30.159.137:5432 -> 8080)

frontend deploys origin-ruby-sample:latest <-

builds https://github.com/openshift/ruby-hello-world with joe-project/ruby-20-centos7:latest

#1 deployed 22 minutes ago - 2 pods

To see more information about a service or deployment, use 'oc describe service <name>' or 'oc describe dc <name>'.

You can use 'oc get all' to see lists of each of the types described in this example.

List the current project

$ oc project

Example output

Using project "joe-project" from context named "joe-project/openshift1.example.com:8443/alice" on server "https://openshift1.example.com:8443".

You can run the oc login command again and supply the required information during the interactive process, to log in using any other combination of user credentials and cluster details. A context is constructed based on the supplied information if one does not already exist. If you are already logged in and want to switch to another project the current user already has access to, use the oc project command and enter the name of the project:

$ oc project alice-project

Example output

Now using project "alice-project" on server "https://openshift1.example.com:8443".

At any time, you can use the oc config view command to view your current CLI configuration, as seen in the output. Additional CLI configuration commands are also available for more advanced usage.

If you have access to administrator credentials but are no longer logged in as the default system user system:admin, you can log back in as this user at any time as long as the credentials are still present in your CLI config file. The following command logs in and switches to the default project:

$ oc login -u system:admin -n default

2.3.2. Manual configuration of CLI profiles

This section covers more advanced usage of CLI configurations. In most situations, you can use the oc login and oc project commands to log in and switch between contexts and projects.

If you want to manually configure your CLI config files, you can use the oc config command instead of directly modifying the files. The oc config command includes a number of helpful sub-commands for this purpose:

| Subcommand | Usage |
|---|---|
| set-cluster | Sets a cluster entry in the CLI config file. If the referenced cluster nickname already exists, the specified information is merged in. |
| set-context | Sets a context entry in the CLI config file. If the referenced context nickname already exists, the specified information is merged in. |
| use-context | Sets the current context using the specified context nickname. |
| set | Sets an individual value in the CLI config file. |
| unset | Unsets individual values in the CLI config file. |
| view | Displays the merged CLI configuration currently in use, or the contents of a specific CLI config file when one is specified. |

Example usage

- Log in as a user that uses an access token. This token is used by the alice user:

$ oc login https://openshift1.example.com --token=ns7yVhuRNpDM9cgzfhhxQ7bM5s7N2ZVrkZepSRf4LC0

- View the cluster entry automatically created:

$ oc config view

Example output

apiVersion: v1
clusters:
- cluster:
    insecure-skip-tls-verify: true
    server: https://openshift1.example.com
  name: openshift1-example-com
contexts:
- context:
    cluster: openshift1-example-com
    namespace: default
    user: alice/openshift1-example-com
  name: default/openshift1-example-com/alice
current-context: default/openshift1-example-com/alice
kind: Config
preferences: {}
users:
- name: alice/openshift1-example-com
  user:
    token: ns7yVhuRNpDM9cgzfhhxQ7bM5s7N2ZVrkZepSRf4LC0

- Update the current context to have users log in to the desired namespace:

$ oc config set-context `oc config current-context` --namespace=<project_name>

- Examine the current context, to confirm that the changes are implemented:

$ oc whoami -c

All subsequent CLI operations use the new context, unless otherwise specified by overriding CLI options or until the context is switched.

2.3.3. Load and merge rules

When you issue CLI operations, the CLI configuration is loaded and merged according to the following rules:

- CLI config files are retrieved from your workstation, using the following hierarchy and merge rules:

  - If the --config option is set, then only that file is loaded. The flag is set once and no merging takes place.

  - If the $KUBECONFIG environment variable is set, then it is used. The variable can be a list of paths, and if so the paths are merged together. When a value is modified, it is modified in the file that defines the stanza. When a value is created, it is created in the first file that exists. If no files in the chain exist, then it creates the last file in the list.

  - Otherwise, the ~/.kube/config file is used and no merging takes place.

- The context to use is determined based on the first match in the following flow:

  - The value of the --context option.

  - The current-context value from the CLI config file.

  - An empty value is allowed at this stage.

- The user and cluster to use are determined. At this point, you may or may not have a context; they are built based on the first match in the following flow, which is run once for the user and once for the cluster:

  - The value of the --user option for user name and the --cluster option for cluster name.

  - If the --context option is present, then use the context's value.

  - An empty value is allowed at this stage.

- The actual cluster information to use is determined. At this point, you may or may not have cluster information. Each piece of the cluster information is built based on the first match in the following flow:

  - The values of any of the following command line options:

    - --server

    - --api-version

    - --certificate-authority

    - --insecure-skip-tls-verify

  - If cluster information and a value for the attribute is present, then use it.

  - If you do not have a server location, then there is an error.

- The actual user information to use is determined. Users are built using the same rules as clusters, except that you can only have one authentication technique per user; conflicting techniques cause the operation to fail. Command line options take precedence over config file values. Valid command line options are:

  - --auth-path

  - --client-certificate

  - --client-key

  - --token

- For any information that is still missing, default values are used and prompts are given for additional information.
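The file-selection step of these rules can be sketched as a small shell function. This illustrates the precedence only and is not how oc is implemented; the function name resolve_config_files is hypothetical, its argument stands in for the value passed to --config, and the colon-separated form of KUBECONFIG is assumed (Linux and macOS):

```shell
#!/bin/sh
# Print the config file(s) that would be consulted, one per line.
# $1 stands in for the value of --config (empty string if not given).
resolve_config_files() {
  if [ -n "$1" ]; then
    # --config is set: only that file is loaded, nothing is merged.
    printf '%s\n' "$1"
  elif [ -n "${KUBECONFIG:-}" ]; then
    # KUBECONFIG may list several paths; all of them are merged.
    printf '%s\n' "$KUBECONFIG" | tr ':' '\n'
  else
    # Fallback: the default per-user file.
    printf '%s\n' "$HOME/.kube/config"
  fi
}

# Example with two illustrative paths: both files are listed.
( KUBECONFIG="/path/one:/path/two"; resolve_config_files "" )
```

Passing a non-empty argument, such as resolve_config_files /tmp/custom, prints only that path regardless of KUBECONFIG, which mirrors the rule that --config disables merging.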

2.4. Extending the OpenShift CLI with plugins

You can write and install plugins to build on the default oc commands, allowing you to perform new and more complex tasks with the OpenShift Container Platform CLI.

2.4.1. Writing CLI plugins

You can write a plugin for the OpenShift Container Platform CLI in any programming language or script that allows you to write command-line commands. Note that you cannot use a plugin to overwrite an existing oc command.

Procedure

This procedure creates a simple Bash plugin that prints a message to the terminal when the oc foo command is issued.

- Create a file called oc-foo.

When naming your plugin file, keep the following in mind:

  - The file must begin with oc- or kubectl- to be recognized as a plugin.

  - The file name determines the command that invokes the plugin. For example, a plugin with the file name oc-foo-bar can be invoked by a command of oc foo bar. You can also use underscores if you want the command to contain dashes. For example, a plugin with the file name oc-foo_bar can be invoked by a command of oc foo-bar.

- Add the following contents to the file.

#!/bin/bash

# optional argument handling
if [[ "$1" == "version" ]]
then
    echo "1.0.0"
    exit 0
fi

# optional argument handling
if [[ "$1" == "config" ]]
then
    echo $KUBECONFIG
    exit 0
fi

echo "I am a plugin named kubectl-foo"

After you install this plugin for the OpenShift Container Platform CLI, it can be invoked using the oc foo command.
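The file-naming rule can be sketched as a helper that maps a plugin file name to the command that invokes it. The function name plugin_command is hypothetical and exists only for this illustration; it applies the dash and underscore rule described in this section:

```shell
#!/bin/sh
# Translate a plugin file name into the oc command that invokes it.
plugin_command() {
  case "$1" in
    oc-*)      rest="${1#oc-}" ;;
    kubectl-*) rest="${1#kubectl-}" ;;
    *) echo "not a plugin file name: $1" >&2; return 1 ;;
  esac
  # Dashes in the file name separate command words;
  # underscores in the file name become dashes in the command.
  rest="$(printf '%s\n' "$rest" | sed -e 's/-/ /g' -e 's/_/-/g')"
  echo "oc $rest"
}

plugin_command oc-foo-bar   # prints: oc foo bar
plugin_command oc-foo_bar   # prints: oc foo-bar
```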

2.4.2. Installing and using CLI plugins

After you write a custom plugin for the OpenShift Container Platform CLI, you must install it to use the functionality that it provides.

Prerequisites

- You must have the oc CLI tool installed.

- You must have a CLI plugin file that begins with oc- or kubectl-.

Procedure

- If necessary, update the plugin file to be executable.

$ chmod +x <plugin_file>

- Place the file anywhere in your PATH, such as /usr/local/bin/.

$ sudo mv <plugin_file> /usr/local/bin/.

- Run oc plugin list to make sure that the plugin is listed.

$ oc plugin list

Example output

The following compatible plugins are available:

/usr/local/bin/<plugin_file>

If your plugin is not listed here, verify that the file begins with oc- or kubectl-, is executable, and is on your PATH.

- Invoke the new command or option introduced by the plugin.

For example, if you built and installed the kubectl-ns plugin from the Sample plugin repository, you can use the following command to view the current namespace.

$ oc ns

Note that the command to invoke the plugin depends on the plugin file name. For example, a plugin with the file name of oc-foo-bar is invoked by the oc foo bar command.
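As a minimal sketch of the same steps without root privileges, a plugin can be placed in a per-user directory that is added to PATH. The plugin name oc-hello and the temporary directory are hypothetical choices for this illustration; the script is run directly at the end so that the example does not depend on oc being installed:

```shell
#!/bin/sh
# Create a scratch directory to hold the plugin (illustrative location).
plugin_dir="$(mktemp -d)"

# Write a trivial plugin; the quoted delimiter keeps the body literal.
cat > "$plugin_dir/oc-hello" <<'EOF'
#!/bin/sh
echo "hello from a plugin"
EOF

# Make the plugin executable and put its directory on PATH.
chmod +x "$plugin_dir/oc-hello"
PATH="$plugin_dir:$PATH"
export PATH

# Run the plugin file directly to confirm it is executable and on PATH.
oc-hello   # prints: hello from a plugin
```

With oc installed, the same file would be picked up by oc plugin list and, following the naming rule, invoked as oc hello.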

2.5. OpenShift CLI developer command reference

This reference provides descriptions and example commands for OpenShift CLI (oc) developer commands. For administrator commands, see the OpenShift CLI administrator command reference.

Run oc help to list all commands or run oc <command> --help to get additional details for a specific command.

2.5.1. OpenShift CLI (oc) developer commands

2.5.1.1. oc annotate

Update the annotations on a resource

Example usage

# Update pod 'foo' with the annotation 'description' and the value 'my frontend'.

# If the same annotation is set multiple times, only the last value will be applied

oc annotate pods foo description='my frontend'

# Update a pod identified by type and name in "pod.json"

oc annotate -f pod.json description='my frontend'

# Update pod 'foo' with the annotation 'description' and the value 'my frontend running nginx', overwriting any existing value.

oc annotate --overwrite pods foo description='my frontend running nginx'

# Update all pods in the namespace

oc annotate pods --all description='my frontend running nginx'

# Update pod 'foo' only if the resource is unchanged from version 1.

oc annotate pods foo description='my frontend running nginx' --resource-version=1

# Update pod 'foo' by removing an annotation named 'description' if it exists.

# Does not require the --overwrite flag.

oc annotate pods foo description-

2.5.1.2. oc api-resources

Print the supported API resources on the server

Example usage

# Print the supported API Resources

oc api-resources

# Print the supported API Resources with more information

oc api-resources -o wide

# Print the supported API Resources sorted by a column

oc api-resources --sort-by=name

# Print the supported namespaced resources

oc api-resources --namespaced=true

# Print the supported non-namespaced resources

oc api-resources --namespaced=false

# Print the supported API Resources with specific APIGroup

oc api-resources --api-group=extensions

2.5.1.3. oc api-versions

Print the supported API versions on the server, in the form of "group/version"

Example usage

# Print the supported API versions

oc api-versions

2.5.1.4. oc apply

Apply a configuration to a resource by filename or stdin

Example usage

# Apply the configuration in pod.json to a pod.

oc apply -f ./pod.json

# Apply resources from a directory containing kustomization.yaml - e.g. dir/kustomization.yaml.

oc apply -k dir/

# Apply the JSON passed into stdin to a pod.

cat pod.json | oc apply -f -

# Note: --prune is still in Alpha

# Apply the configuration in manifest.yaml that matches label app=nginx and delete all the other resources that are not in the file and match label app=nginx.

oc apply --prune -f manifest.yaml -l app=nginx

# Apply the configuration in manifest.yaml and delete all the other configmaps that are not in the file.

oc apply --prune -f manifest.yaml --all --prune-whitelist=core/v1/ConfigMap

2.5.1.5. oc apply edit-last-applied

Edit latest last-applied-configuration annotations of a resource/object

Example usage

# Edit the last-applied-configuration annotations by type/name in YAML.

oc apply edit-last-applied deployment/nginx

# Edit the last-applied-configuration annotations by file in JSON.

oc apply edit-last-applied -f deploy.yaml -o json

2.5.1.6. oc apply set-last-applied

Set the last-applied-configuration annotation on a live object to match the contents of a file.

Example usage

# Set the last-applied-configuration of a resource to match the contents of a file.

oc apply set-last-applied -f deploy.yaml

# Execute set-last-applied against each configuration file in a directory.

oc apply set-last-applied -f path/

# Set the last-applied-configuration of a resource to match the contents of a file, will create the annotation if it does not already exist.

oc apply set-last-applied -f deploy.yaml --create-annotation=true

2.5.1.7. oc apply view-last-applied

View latest last-applied-configuration annotations of a resource/object

Example usage

# View the last-applied-configuration annotations by type/name in YAML.

oc apply view-last-applied deployment/nginx

# View the last-applied-configuration annotations by file in JSON

oc apply view-last-applied -f deploy.yaml -o json

2.5.1.8. oc attach

Attach to a running container

Example usage

# Get output from running pod mypod, use the kubectl.kubernetes.io/default-container annotation

# for selecting the container to be attached or the first container in the pod will be chosen

oc attach mypod

# Get output from ruby-container from pod mypod

oc attach mypod -c ruby-container

# Switch to raw terminal mode, sends stdin to 'bash' in ruby-container from pod mypod

# and sends stdout/stderr from 'bash' back to the client

oc attach mypod -c ruby-container -i -t

# Get output from the first pod of a ReplicaSet named nginx

oc attach rs/nginx

2.5.1.9. oc auth can-i

Check whether an action is allowed

Example usage

# Check to see if I can create pods in any namespace

oc auth can-i create pods --all-namespaces

# Check to see if I can list deployments in my current namespace

oc auth can-i list deployments.apps

# Check to see if I can do everything in my current namespace ("*" means all)

oc auth can-i '*' '*'

# Check to see if I can get the job named "bar" in namespace "foo"

oc auth can-i get jobs.batch/bar -n foo

# Check to see if I can read pod logs

oc auth can-i get pods --subresource=log

# Check to see if I can access the URL /logs/

oc auth can-i get /logs/

# List all allowed actions in namespace "foo"

oc auth can-i --list --namespace=foo

2.5.1.10. oc auth reconcile

Reconciles rules for RBAC Role, RoleBinding, ClusterRole, and ClusterRoleBinding objects

Example usage

# Reconcile rbac resources from a file

oc auth reconcile -f my-rbac-rules.yaml

2.5.1.11. oc autoscale

Autoscale a deployment config, deployment, replica set, stateful set, or replication controller

Example usage

# Auto scale a deployment "foo", with the number of pods between 2 and 10, no target CPU utilization specified so a default autoscaling policy will be used:

oc autoscale deployment foo --min=2 --max=10

# Auto scale a replication controller "foo", with the number of pods between 1 and 5, target CPU utilization at 80%:

oc autoscale rc foo --max=5 --cpu-percent=80

2.5.1.12. oc cancel-build

Cancel running, pending, or new builds

Example usage

# Cancel the build with the given name

oc cancel-build ruby-build-2

# Cancel the named build and print the build logs

oc cancel-build ruby-build-2 --dump-logs

# Cancel the named build and create a new one with the same parameters

oc cancel-build ruby-build-2 --restart

# Cancel multiple builds

oc cancel-build ruby-build-1 ruby-build-2 ruby-build-3

# Cancel all builds created from the 'ruby-build' build config that are in the 'new' state

oc cancel-build bc/ruby-build --state=new

2.5.1.13. oc cluster-info

Display cluster info

Example usage

# Print the address of the control plane and cluster services

oc cluster-info

2.5.1.14. oc cluster-info dump

Dump lots of relevant info for debugging and diagnosis

Example usage

# Dump current cluster state to stdout

oc cluster-info dump

# Dump current cluster state to /path/to/cluster-state

oc cluster-info dump --output-directory=/path/to/cluster-state

# Dump all namespaces to stdout

oc cluster-info dump --all-namespaces

# Dump a set of namespaces to /path/to/cluster-state

oc cluster-info dump --namespaces default,kube-system --output-directory=/path/to/cluster-state

2.5.1.15. oc completion

Output shell completion code for the specified shell (bash or zsh)

Example usage

# Installing bash completion on macOS using homebrew

## If running Bash 3.2 included with macOS

brew install bash-completion

## or, if running Bash 4.1+

brew install bash-completion@2

## If oc is installed via homebrew, this should start working immediately.

## If you've installed via other means, you may need to add the completion to your completion directory

oc completion bash > $(brew --prefix)/etc/bash_completion.d/oc

# Installing bash completion on Linux

## If bash-completion is not installed on Linux, please install the 'bash-completion' package

## via your distribution's package manager.

## Load the oc completion code for bash into the current shell

source <(oc completion bash)

## Write bash completion code to a file and source it from .bash_profile

oc completion bash > ~/.kube/completion.bash.inc

printf "

# Kubectl shell completion

source '$HOME/.kube/completion.bash.inc'

" >> $HOME/.bash_profile

source $HOME/.bash_profile

# Load the oc completion code for zsh into the current shell

source <(oc completion zsh)

# Set the oc completion code for zsh to autoload on startup

oc completion zsh > "${fpath[1]}/_oc"

2.5.1.16. oc config current-context

Displays the current-context

Example usage

# Display the current-context

oc config current-context

2.5.1.17. oc config delete-cluster

Delete the specified cluster from the kubeconfig

Example usage

# Delete the minikube cluster

oc config delete-cluster minikube

2.5.1.18. oc config delete-context

Delete the specified context from the kubeconfig

Example usage

# Delete the context for the minikube cluster

oc config delete-context minikube

2.5.1.19. oc config delete-user

Delete the specified user from the kubeconfig

Example usage

# Delete the minikube user

oc config delete-user minikube

2.5.1.20. oc config get-clusters

Display clusters defined in the kubeconfig

Example usage

# List the clusters oc knows about

oc config get-clusters

2.5.1.21. oc config get-contexts

Describe one or many contexts

Example usage

# List all the contexts in your kubeconfig file

oc config get-contexts

# Describe one context in your kubeconfig file.

oc config get-contexts my-context

2.5.1.22. oc config get-users

Display users defined in the kubeconfig

Example usage

# List the users oc knows about

oc config get-users

2.5.1.23. oc config rename-context

Renames a context from the kubeconfig file.

Example usage

# Rename the context 'old-name' to 'new-name' in your kubeconfig file

oc config rename-context old-name new-name

2.5.1.24. oc config set

Sets an individual value in a kubeconfig file

Example usage

# Set server field on the my-cluster cluster to https://1.2.3.4

oc config set clusters.my-cluster.server https://1.2.3.4

# Set certificate-authority-data field on the my-cluster cluster.

oc config set clusters.my-cluster.certificate-authority-data $(echo "cert_data_here" | base64 -i -)
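
The command substitution in the example above only base64-encodes the certificate text before it is stored. A minimal sketch of that encoding step on its own, using the placeholder string from the example rather than a real certificate, and assuming a `base64` utility that accepts `--decode` (GNU coreutils and recent macOS versions do):

```shell
# Placeholder value from the example above; a real CA certificate would be PEM text.
cert_data="cert_data_here"

# Encode without a trailing newline and strip any line wrapping from the output.
encoded="$(printf '%s' "$cert_data" | base64 | tr -d '\n')"
echo "encoded: $encoded"

# Decoding should give back the original text.
decoded="$(printf '%s' "$encoded" | base64 --decode)"
echo "decoded: $decoded"
```

Note that `echo` in the original example appends a newline, which becomes part of the encoded value; `printf '%s'` is used here to leave it out.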

# Set cluster field in the my-context context to my-cluster.

oc config set contexts.my-context.cluster my-cluster

# Set client-key-data field in the cluster-admin user using --set-raw-bytes option.

oc config set users.cluster-admin.client-key-data cert_data_here --set-raw-bytes=true

2.5.1.25. oc config set-cluster

Sets a cluster entry in kubeconfig

Example usage

# Set only the server field on the e2e cluster entry without touching other values.

oc config set-cluster e2e --server=https://1.2.3.4

# Embed certificate authority data for the e2e cluster entry

oc config set-cluster e2e --embed-certs --certificate-authority=~/.kube/e2e/kubernetes.ca.crt

# Disable cert checking for the e2e cluster entry

oc config set-cluster e2e --insecure-skip-tls-verify=true

# Set custom TLS server name to use for validation for the e2e cluster entry

oc config set-cluster e2e --tls-server-name=my-cluster-name

2.5.1.26. oc config set-context

Sets a context entry in kubeconfig

Example usage

# Set the user field on the gce context entry without touching other values

oc config set-context gce --user=cluster-admin

2.5.1.27. oc config set-credentials

Sets a user entry in kubeconfig

Example usage

# Set only the "client-key" field on the "cluster-admin"

# entry, without touching other values:

oc config set-credentials cluster-admin --client-key=~/.kube/admin.key

# Set basic auth for the "cluster-admin" entry

oc config set-credentials cluster-admin --username=admin --password=uXFGweU9l35qcif

# Embed client certificate data in the "cluster-admin" entry

oc config set-credentials cluster-admin --client-certificate=~/.kube/admin.crt --embed-certs=true

# Enable the Google Cloud Platform auth provider for the "cluster-admin" entry

oc config set-credentials cluster-admin --auth-provider=gcp

# Enable the OpenID Connect auth provider for the "cluster-admin" entry with additional args

oc config set-credentials cluster-admin --auth-provider=oidc --auth-provider-arg=client-id=foo --auth-provider-arg=client-secret=bar

# Remove the "client-secret" config value for the OpenID Connect auth provider for the "cluster-admin" entry

oc config set-credentials cluster-admin --auth-provider=oidc --auth-provider-arg=client-secret-

# Enable new exec auth plugin for the "cluster-admin" entry

oc config set-credentials cluster-admin --exec-command=/path/to/the/executable --exec-api-version=client.authentication.k8s.io/v1beta1

# Define new exec auth plugin args for the "cluster-admin" entry

oc config set-credentials cluster-admin --exec-arg=arg1 --exec-arg=arg2

# Create or update exec auth plugin environment variables for the "cluster-admin" entry

oc config set-credentials cluster-admin --exec-env=key1=val1 --exec-env=key2=val2

# Remove exec auth plugin environment variables for the "cluster-admin" entry

oc config set-credentials cluster-admin --exec-env=var-to-remove-

2.5.1.28. oc config unset

Unsets an individual value in a kubeconfig file

Example usage

# Unset the current-context.

oc config unset current-context

# Unset namespace in foo context.

oc config unset contexts.foo.namespace

2.5.1.29. oc config use-context

Sets the current-context in a kubeconfig file

Example usage

# Use the context for the minikube cluster

oc config use-context minikube

2.5.1.30. oc config view

Display merged kubeconfig settings or a specified kubeconfig file

Example usage

# Show merged kubeconfig settings.

oc config view

# Show merged kubeconfig settings and raw certificate data.

oc config view --raw

# Get the password for the e2e user

oc config view -o jsonpath='{.users[?(@.name == "e2e")].user.password}'

2.5.1.31. oc cp

Copy files and directories to and from containers.

Example usage

# !!!Important Note!!!

# Requires that the 'tar' binary is present in your container

# image. If 'tar' is not present, 'oc cp' will fail.

#

# For advanced use cases, such as symlinks, wildcard expansion or

# file mode preservation consider using 'oc exec'.

# Copy /tmp/foo local file to /tmp/bar in a remote pod in namespace <some-namespace>

tar cf - /tmp/foo | oc exec -i -n <some-namespace> <some-pod> -- tar xf - -C /tmp/bar

# Copy /tmp/foo from a remote pod to /tmp/bar locally

oc exec -n <some-namespace> <some-pod> -- tar cf - /tmp/foo | tar xf - -C /tmp/bar
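
The two `tar` pipelines above stream an archive through `oc exec` instead of using `oc cp`. As an illustration of the same pattern without a cluster, this sketch pipes an archive between two local scratch directories; the directory and file names are invented for the example. Passing `-C` and a relative name keeps the directory components of the source path out of the archive.

```shell
# Local stand-ins for "my machine" and "the container" (names are illustrative).
src_dir="$(mktemp -d)"
dst_dir="$(mktemp -d)"
echo "hello" > "$src_dir/foo"

# Producer writes the archive to stdout; consumer unpacks it from stdin.
# With a pod, one side of this pipe would be wrapped in 'oc exec ... --'.
tar cf - -C "$src_dir" foo | tar xf - -C "$dst_dir"

cat "$dst_dir/foo"
```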

# Copy /tmp/foo_dir local directory to /tmp/bar_dir in a remote pod in the default namespace

oc cp /tmp/foo_dir <some-pod>:/tmp/bar_dir

# Copy /tmp/foo local file to /tmp/bar in a remote pod in a specific container

oc cp /tmp/foo <some-pod>:/tmp/bar -c <specific-container>

# Copy /tmp/foo local file to /tmp/bar in a remote pod in namespace <some-namespace>

oc cp /tmp/foo <some-namespace>/<some-pod>:/tmp/bar

# Copy /tmp/foo from a remote pod to /tmp/bar locally

oc cp <some-namespace>/<some-pod>:/tmp/foo /tmp/bar

2.5.1.32. oc create

Create a resource from a file or from stdin.

Example usage

# Create a pod using the data in pod.json.

oc create -f ./pod.json
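
Several examples in this reference read a file named `pod.json`. A minimal sketch of such a file, written from the shell, might look like this; the pod name, container name, and image are placeholders chosen for illustration.

```shell
# Write a minimal Pod manifest to a scratch directory (all names are illustrative).
work_dir="$(mktemp -d)"
cat > "$work_dir/pod.json" <<'EOF'
{
  "apiVersion": "v1",
  "kind": "Pod",
  "metadata": { "name": "demo-pod" },
  "spec": {
    "containers": [
      { "name": "web", "image": "nginx" }
    ]
  }
}
EOF

cat "$work_dir/pod.json"
# When logged in to a cluster, the file could then be used with:
#   oc create -f "$work_dir/pod.json"
```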

# Create a pod based on the JSON passed into stdin.

cat pod.json | oc create -f -

# Edit the data in docker-registry.yaml in JSON then create the resource using the edited data.

oc create -f docker-registry.yaml --edit -o json

2.5.1.33. oc create build

Create a new build

Example usage

# Create a new build

oc create build myapp

2.5.1.34. oc create clusterresourcequota

Create a cluster resource quota

Example usage

# Create a cluster resource quota limited to 10 pods

oc create clusterresourcequota limit-bob --project-annotation-selector=openshift.io/requester=user-bob --hard=pods=10

2.5.1.35. oc create clusterrole

Create a ClusterRole.

Example usage

# Create a ClusterRole named "pod-reader" that allows user to perform "get", "watch" and "list" on pods

oc create clusterrole pod-reader --verb=get,list,watch --resource=pods

# Create a ClusterRole named "pod-reader" with ResourceName specified

oc create clusterrole pod-reader --verb=get --resource=pods --resource-name=readablepod --resource-name=anotherpod

# Create a ClusterRole named "foo" with API Group specified

oc create clusterrole foo --verb=get,list,watch --resource=rs.extensions

# Create a ClusterRole named "foo" with SubResource specified

oc create clusterrole foo --verb=get,list,watch --resource=pods,pods/status

# Create a ClusterRole named "foo" with NonResourceURL specified

oc create clusterrole "foo" --verb=get --non-resource-url=/logs/*

# Create a ClusterRole named "monitoring" with AggregationRule specified

oc create clusterrole monitoring --aggregation-rule="rbac.example.com/aggregate-to-monitoring=true"

2.5.1.36. oc create clusterrolebinding

Create a ClusterRoleBinding for a particular ClusterRole

Example usage

# Create a ClusterRoleBinding for user1, user2, and group1 using the cluster-admin ClusterRole

oc create clusterrolebinding cluster-admin --clusterrole=cluster-admin --user=user1 --user=user2 --group=group1

2.5.1.37. oc create configmap

Create a configmap from a local file, directory or literal value

Example usage

# Create a new configmap named my-config based on folder bar

oc create configmap my-config --from-file=path/to/bar

# Create a new configmap named my-config with specified keys instead of file basenames on disk

oc create configmap my-config --from-file=key1=/path/to/bar/file1.txt --from-file=key2=/path/to/bar/file2.txt

# Create a new configmap named my-config with key1=config1 and key2=config2

oc create configmap my-config --from-literal=key1=config1 --from-literal=key2=config2

# Create a new configmap named my-config from the key=value pairs in the file

oc create configmap my-config --from-file=path/to/bar

# Create a new configmap named my-config from an env file

oc create configmap my-config --from-env-file=path/to/bar.env

2.5.1.38. oc create cronjob

Create a cronjob with the specified name.

Example usage

# Create a cronjob

oc create cronjob my-job --image=busybox --schedule="*/1 * * * *"

# Create a cronjob with command

oc create cronjob my-job --image=busybox --schedule="*/1 * * * *" -- date

2.5.1.39. oc create deployment

Create a deployment with the specified name.

Example usage

# Create a deployment named my-dep that runs the busybox image.

oc create deployment my-dep --image=busybox

# Create a deployment with command

oc create deployment my-dep --image=busybox -- date

# Create a deployment named my-dep that runs the nginx image with 3 replicas.

oc create deployment my-dep --image=nginx --replicas=3

# Create a deployment named my-dep that runs the busybox image and expose port 5701.

oc create deployment my-dep --image=busybox --port=5701

2.5.1.40. oc create deploymentconfig

Create a deployment config with default options that uses a given image

Example usage

# Create an nginx deployment config named my-nginx

oc create deploymentconfig my-nginx --image=nginx

2.5.1.41. oc create identity

Manually create an identity (only needed if automatic creation is disabled)

Example usage

# Create an identity with identity provider "acme_ldap" and the identity provider username "adamjones"

oc create identity acme_ldap:adamjones

2.5.1.42. oc create imagestream

Create a new empty image stream

Example usage

# Create a new image stream

oc create imagestream mysql

2.5.1.43. oc create imagestreamtag

Create a new image stream tag

Example usage

# Create a new image stream tag based on an image in a remote registry

oc create imagestreamtag mysql:latest --from-image=myregistry.local/mysql/mysql:5.0

2.5.1.44. oc create ingress

Create an ingress with the specified name.

Example usage

# Create a single ingress called 'simple' that directs requests to foo.com/bar to svc

# svc1:8080 with a tls secret "my-cert"

oc create ingress simple --rule="foo.com/bar=svc1:8080,tls=my-cert"

# Create a catch all ingress of "/path" pointing to service svc:port and Ingress Class as "otheringress"

oc create ingress catch-all --class=otheringress --rule="/path=svc:port"

# Create an ingress with two annotations: ingress.annotation1 and ingress.annotation2

oc create ingress annotated --class=default --rule="foo.com/bar=svc:port" \

--annotation ingress.annotation1=foo \

--annotation ingress.annotation2=bla

# Create an ingress with the same host and multiple paths

oc create ingress multipath --class=default \

--rule="foo.com/=svc:port" \

--rule="foo.com/admin/=svcadmin:portadmin"

# Create an ingress with multiple hosts and the pathType as Prefix

oc create ingress ingress1 --class=default \

--rule="foo.com/path*=svc:8080" \

--rule="bar.com/admin*=svc2:http"

# Create an ingress with TLS enabled using the default ingress certificate and different path types

oc create ingress ingtls --class=default \

--rule="foo.com/=svc:https,tls" \

--rule="foo.com/path/subpath*=othersvc:8080"

# Create an ingress with TLS enabled using a specific secret and pathType as Prefix

oc create ingress ingsecret --class=default \

--rule="foo.com/*=svc:8080,tls=secret1"

# Create an ingress with a default backend

oc create ingress ingdefault --class=default \

--default-backend=defaultsvc:http \

--rule="foo.com/*=svc:8080,tls=secret1"

2.5.1.45. oc create job

Create a job with the specified name.

Example usage

# Create a job

oc create job my-job --image=busybox

# Create a job with command

oc create job my-job --image=busybox -- date

# Create a job from a CronJob named "a-cronjob"

oc create job test-job --from=cronjob/a-cronjob

2.5.1.46. oc create namespace

Create a namespace with the specified name

Example usage

# Create a new namespace named my-namespace

oc create namespace my-namespace

2.5.1.47. oc create poddisruptionbudget

Create a pod disruption budget with the specified name.

Example usage

# Create a pod disruption budget named my-pdb that will select all pods with the app=rails label

# and require at least one of them being available at any point in time.

oc create poddisruptionbudget my-pdb --selector=app=rails --min-available=1

# Create a pod disruption budget named my-pdb that will select all pods with the app=nginx label

# and require at least half of the pods selected to be available at any point in time.

oc create pdb my-pdb --selector=app=nginx --min-available=50%

2.5.1.48. oc create priorityclass

Create a priorityclass with the specified name.

Example usage

# Create a priorityclass named high-priority

oc create priorityclass high-priority --value=1000 --description="high priority"

# Create a priorityclass named default-priority that is considered the global default priority

oc create priorityclass default-priority --value=1000 --global-default=true --description="default priority"

# Create a priorityclass named high-priority that cannot preempt pods with lower priority

oc create priorityclass high-priority --value=1000 --description="high priority" --preemption-policy="Never"

2.5.1.49. oc create quota

Create a quota with the specified name.

Example usage

# Create a new resourcequota named my-quota

oc create quota my-quota --hard=cpu=1,memory=1G,pods=2,services=3,replicationcontrollers=2,resourcequotas=1,secrets=5,persistentvolumeclaims=10

# Create a new resourcequota named best-effort

oc create quota best-effort --hard=pods=100 --scopes=BestEffort

2.5.1.50. oc create role

Create a role with a single rule.

Example usage

# Create a Role named "pod-reader" that allows user to perform "get", "watch" and "list" on pods

oc create role pod-reader --verb=get --verb=list --verb=watch --resource=pods
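
For comparison, the `pod-reader` role from the example above can also be written as a manifest. The sketch below writes what should be an equivalent Role to a scratch file (the file name is an assumption made for illustration); such a file could then be passed to `oc apply -f` or `oc auth reconcile -f`.

```shell
# Write a Role manifest granting get, list, and watch on pods (file name is illustrative).
rbac_file="$(mktemp -d)/pod-reader-role.yaml"
cat > "$rbac_file" <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: pod-reader
rules:
  - apiGroups: [""]        # "" is the core API group, where pods live
    resources: ["pods"]
    verbs: ["get", "list", "watch"]
EOF

cat "$rbac_file"
```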

# Create a Role named "pod-reader" with ResourceName specified

oc create role pod-reader --verb=get --resource=pods --resource-name=readablepod --resource-name=anotherpod

# Create a Role named "foo" with API Group specified

oc create role foo --verb=get,list,watch --resource=rs.extensions

# Create a Role named "foo" with SubResource specified

oc create role foo --verb=get,list,watch --resource=pods,pods/status

2.5.1.51. oc create rolebinding

Create a RoleBinding for a particular Role or ClusterRole

Example usage

# Create a RoleBinding for user1, user2, and group1 using the admin ClusterRole

oc create rolebinding admin --clusterrole=admin --user=user1 --user=user2 --group=group1

2.5.1.52. oc create route edge

Create a route that uses edge TLS termination

Example usage

# Create an edge route named "my-route" that exposes the frontend service

oc create route edge my-route --service=frontend

# Create an edge route that exposes the frontend service and specify a path

# If the route name is omitted, the service name will be used

oc create route edge --service=frontend --path /assets

2.5.1.53. oc create route passthrough

Create a route that uses passthrough TLS termination

Example usage

# Create a passthrough route named "my-route" that exposes the frontend service

oc create route passthrough my-route --service=frontend

# Create a passthrough route that exposes the frontend service and specify

# a host name. If the route name is omitted, the service name will be used

oc create route passthrough --service=frontend --hostname=www.example.com

2.5.1.54. oc create route reencrypt

Create a route that uses reencrypt TLS termination

Example usage

# Create a route named "my-route" that exposes the frontend service

oc create route reencrypt my-route --service=frontend --dest-ca-cert cert.cert

# Create a reencrypt route that exposes the frontend service, letting the

# route name default to the service name and the destination CA certificate

# default to the service CA

oc create route reencrypt --service=frontend

2.5.1.55. oc create secret docker-registry

Create a secret for use with a Docker registry

Example usage

# If you don't already have a .dockercfg file, you can create a dockercfg secret directly by using:

oc create secret docker-registry my-secret --docker-server=DOCKER_REGISTRY_SERVER --docker-username=DOCKER_USER --docker-password=DOCKER_PASSWORD --docker-email=DOCKER_EMAIL

# Create a new secret named my-secret from ~/.docker/config.json

oc create secret docker-registry my-secret --from-file=.dockerconfigjson=path/to/.docker/config.json

2.5.1.56. oc create secret generic

Create a secret from a local file, directory or literal value

Example usage

# Create a new secret named my-secret with keys for each file in folder bar

oc create secret generic my-secret --from-file=path/to/bar

# Create a new secret named my-secret with specified keys instead of names on disk

oc create secret generic my-secret --from-file=ssh-privatekey=path/to/id_rsa --from-file=ssh-publickey=path/to/id_rsa.pub

# Create a new secret named my-secret with key1=supersecret and key2=topsecret

oc create secret generic my-secret --from-literal=key1=supersecret --from-literal=key2=topsecret

# Create a new secret named my-secret using a combination of a file and a literal

oc create secret generic my-secret --from-file=ssh-privatekey=path/to/id_rsa --from-literal=passphrase=topsecret

# Create a new secret named my-secret from an env file

oc create secret generic my-secret --from-env-file=path/to/bar.env

2.5.1.57. oc create secret tls

Create a TLS secret

Example usage

# Create a new TLS secret named tls-secret with the given key pair:

oc create secret tls tls-secret --cert=path/to/tls.cert --key=path/to/tls.key

2.5.1.58. oc create service clusterip

Create a ClusterIP service.

Example usage

# Create a new ClusterIP service named my-cs

oc create service clusterip my-cs --tcp=5678:8080

# Create a new ClusterIP service named my-cs (in headless mode)

oc create service clusterip my-cs --clusterip="None"

2.5.1.59. oc create service externalname

Create an ExternalName service.

Example usage

# Create a new ExternalName service named my-ns

oc create service externalname my-ns --external-name bar.com

2.5.1.60. oc create service loadbalancer

Create a LoadBalancer service.

Example usage

# Create a new LoadBalancer service named my-lbs

oc create service loadbalancer my-lbs --tcp=5678:8080

2.5.1.61. oc create service nodeport

Create a NodePort service.

Example usage

# Create a new NodePort service named my-ns

oc create service nodeport my-ns --tcp=5678:8080

2.5.1.62. oc create serviceaccount

Create a service account with the specified name

Example usage

# Create a new service account named my-service-account

oc create serviceaccount my-service-account

2.5.1.63. oc create user

Manually create a user (only needed if automatic creation is disabled)

Example usage

# Create a user with the username "ajones" and the display name "Adam Jones"

oc create user ajones --full-name="Adam Jones"

2.5.1.64. oc create useridentitymapping

Manually map an identity to a user

Example usage

# Map the identity "acme_ldap:adamjones" to the user "ajones"

oc create useridentitymapping acme_ldap:adamjones ajones

2.5.1.65. oc debug

Launch a new instance of a pod for debugging

Example usage

# Start a shell session into a pod using the OpenShift tools image

oc debug

# Debug a currently running deployment by creating a new pod

oc debug deploy/test

# Debug a node as an administrator

oc debug node/master-1

# Launch a shell in a pod using the provided image stream tag

oc debug istag/mysql:latest -n openshift

# Test running a job as a non-root user

oc debug job/test --as-user=1000000

# Debug a specific failing container by running the env command in the 'second' container

oc debug daemonset/test -c second -- /bin/env

# See the pod that would be created to debug

oc debug mypod-9xbc -o yaml

# Debug a resource but launch the debug pod in another namespace

# Note: Not all resources can be debugged using --to-namespace without modification. For example,

# volumes and service accounts are namespace-dependent. Add '-o yaml' to output the debug pod definition

# to disk. If necessary, edit the definition then run 'oc debug -f -' or run without --to-namespace

oc debug mypod-9xbc --to-namespace testns

2.5.1.66. oc delete

Delete resources by filenames, stdin, resources and names, or by resources and label selector

Example usage

# Delete a pod using the type and name specified in pod.json.

oc delete -f ./pod.json

# Delete resources from a directory containing kustomization.yaml - e.g. dir/kustomization.yaml.

oc delete -k dir

# Delete a pod based on the type and name in the JSON passed into stdin.

cat pod.json | oc delete -f -

# Delete pods and services with same names "baz" and "foo"

oc delete pod,service baz foo

# Delete pods and services with label name=myLabel.

oc delete pods,services -l name=myLabel

# Delete a pod with minimal delay

oc delete pod foo --now

# Force delete a pod on a dead node

oc delete pod foo --force

# Delete all pods

oc delete pods --all

2.5.1.67. oc describe

Show details of a specific resource or group of resources

Example usage

# Describe a node

oc describe nodes kubernetes-node-emt8.c.myproject.internal

# Describe a pod

oc describe pods/nginx

# Describe a pod identified by type and name in "pod.json"

oc describe -f pod.json

# Describe all pods

oc describe pods

# Describe pods by label name=myLabel

oc describe po -l name=myLabel

# Describe all pods managed by the 'frontend' replication controller (rc-created pods

# get the name of the rc as a prefix in the pod name).

oc describe pods frontend

2.5.1.68. oc diff

Diff live version against would-be applied version

Example usage

# Diff resources included in pod.json.

oc diff -f pod.json

# Diff file read from stdin

cat service.yaml | oc diff -f -

2.5.1.69. oc edit

Edit a resource on the server

Example usage

# Edit the service named 'docker-registry':

oc edit svc/docker-registry

# Use an alternative editor

KUBE_EDITOR="nano" oc edit svc/docker-registry

# Edit the job 'myjob' in JSON using the v1 API format:

oc edit job.v1.batch/myjob -o json

# Edit the deployment 'mydeployment' in YAML and save the modified config in its annotation:

oc edit deployment/mydeployment -o yaml --save-config

2.5.1.70. oc ex dockergc

Perform garbage collection to free space in docker storage

Example usage

# Perform garbage collection with the default settings

oc ex dockergc

2.5.1.71. oc exec

Execute a command in a container

Example usage

# Get output from running 'date' command from pod mypod, using the first container by default

oc exec mypod -- date

# Get output from running 'date' command in ruby-container from pod mypod

oc exec mypod -c ruby-container -- date

# Switch to raw terminal mode, sends stdin to 'bash' in ruby-container from pod mypod

# and sends stdout/stderr from 'bash' back to the client

oc exec mypod -c ruby-container -i -t -- bash -il

# List contents of /usr from the first container of pod mypod and sort by modification time.

# If the command you want to execute in the pod has any flags in common (e.g. -i),

# you must use two dashes (--) to separate your command's flags/arguments.

# Also note, do not surround your command and its flags/arguments with quotes

# unless that is how you would execute it normally (i.e., do ls -t /usr, not "ls -t /usr").

oc exec mypod -i -t -- ls -t /usr

# Get output from running 'date' command from the first pod of the deployment mydeployment, using the first container by default

oc exec deploy/mydeployment -- date

# Get output from running 'date' command from the first pod of the service myservice, using the first container by default

oc exec svc/myservice -- date

2.5.1.72. oc explain

Documentation of resources

Example usage

# Get the documentation of the resource and its fields

oc explain pods

# Get the documentation of a specific field of a resource

oc explain pods.spec.containers

2.5.1.73. oc expose

Expose a replicated application as a service or route

Example usage

# Create a route based on service nginx. The new route will reuse nginx's labels

oc expose service nginx

# Create a route and specify your own label and route name

oc expose service nginx -l name=myroute --name=fromdowntown

# Create a route and specify a host name

oc expose service nginx --hostname=www.example.com

# Create a route with a wildcard

oc expose service nginx --hostname=x.example.com --wildcard-policy=Subdomain

# This would be equivalent to *.example.com. NOTE: only hosts are matched by the wildcard; subdomains would not be included

# Expose a deployment configuration as a service and use the specified port

oc expose dc ruby-hello-world --port=8080

# Expose a service as a route in the specified path

oc expose service nginx --path=/nginx

# Expose a service using different generators

oc expose service nginx --name=exposed-svc --port=12201 --protocol="TCP" --generator="service/v2"

oc expose service nginx --name=my-route --port=12201 --generator="route/v1"

# Exposing a service using the "route/v1" generator (default) will create a new exposed route with the "--name" provided

# (or the name of the service otherwise). You may not specify a "--protocol" or "--target-port" option when using this generator

2.5.1.74. oc extract

Extract secrets or config maps to disk

Example usage

# Extract the secret "test" to the current directory

oc extract secret/test

# Extract the config map "nginx" to the /tmp directory

oc extract configmap/nginx --to=/tmp

# Extract the config map "nginx" to STDOUT

oc extract configmap/nginx --to=-

# Extract only the key "nginx.conf" from config map "nginx" to the /tmp directory

oc extract configmap/nginx --to=/tmp --keys=nginx.conf

2.5.1.75. oc get

Display one or many resources

Example usage

# List all pods in ps output format.

oc get pods

# List all pods in ps output format with more information (such as node name).

oc get pods -o wide

# List a single replication controller with specified NAME in ps output format.

oc get replicationcontroller web

# List deployments in JSON output format, in the "v1" version of the "apps" API group:

oc get deployments.v1.apps -o json

# List a single pod in JSON output format.

oc get -o json pod web-pod-13je7

# List a pod identified by type and name specified in "pod.yaml" in JSON output format.

oc get -f pod.yaml -o json

# List resources from a directory with kustomization.yaml - e.g. dir/kustomization.yaml.

oc get -k dir/

# Return only the phase value of the specified pod.

oc get -o template pod/web-pod-13je7 --template={{.status.phase}}

# List resource information in custom columns.

oc get pod test-pod -o custom-columns=CONTAINER:.spec.containers[0].name,IMAGE:.spec.containers[0].image

# List all replication controllers and services together in ps output format.

oc get rc,services

# List one or more resources by their type and names.

oc get rc/web service/frontend pods/web-pod-13je7

2.5.1.76. oc idle

Idle scalable resources

Example usage

# Idle the scalable controllers associated with the services listed in to-idle.txt

oc idle --resource-names-file to-idle.txt

2.5.1.77. oc image append

Add layers to images and push them to a registry

Example usage

# Remove the entrypoint on the mysql:latest image

oc image append --from mysql:latest --to myregistry.com/myimage:latest --image '{"Entrypoint":null}'

# Add a new layer to the image

oc image append --from mysql:latest --to myregistry.com/myimage:latest layer.tar.gz

# Add a new layer to the image and store the result on disk

# This results in $(pwd)/v2/mysql/blobs,manifests

oc image append --from mysql:latest --to file://mysql:local layer.tar.gz

# Add a new layer to the image and store the result on disk in a designated directory

# This will result in $(pwd)/mysql-local/v2/mysql/blobs,manifests

oc image append --from mysql:latest --to file://mysql:local --dir mysql-local layer.tar.gz

# Add a new layer to an image that is stored on disk (~/mysql-local/v2/image exists)

oc image append --from-dir ~/mysql-local --to myregistry.com/myimage:latest layer.tar.gz

# Add a new layer to an image that was mirrored to the current directory on disk ($(pwd)/v2/image exists)

oc image append --from-dir v2 --to myregistry.com/myimage:latest layer.tar.gz

# Add a new layer to a multi-architecture image for an os/arch that is different from the system's os/arch

# Note: Wildcard filter is not supported with append. Pass a single os/arch to append

oc image append --from docker.io/library/busybox:latest --filter-by-os=linux/s390x --to myregistry.com/myimage:latest layer.tar.gz

2.5.1.78. oc image extract

Copy files from an image to the file system

Example usage

# Extract the busybox image into the current directory

oc image extract docker.io/library/busybox:latest

# Extract the busybox image into a designated directory (must exist)

oc image extract docker.io/library/busybox:latest --path /:/tmp/busybox

# Extract the busybox image into the current directory for linux/s390x platform

# Note: Wildcard filter is not supported with extract. Pass a single os/arch to extract

oc image extract docker.io/library/busybox:latest --filter-by-os=linux/s390x

# Extract a single file from the image into the current directory

oc image extract docker.io/library/centos:7 --path /bin/bash:.

# Extract all .repo files from the image's /etc/yum.repos.d/ folder into the current directory

oc image extract docker.io/library/centos:7 --path /etc/yum.repos.d/*.repo:.

# Extract all .repo files from the image's /etc/yum.repos.d/ folder into a designated directory (must exist)

# This results in /tmp/yum.repos.d/*.repo on local system

oc image extract docker.io/library/centos:7 --path /etc/yum.repos.d/*.repo:/tmp/yum.repos.d

# Extract an image stored on disk into the current directory ($(pwd)/v2/busybox/blobs,manifests exists)

# --confirm is required because the current directory is not empty

oc image extract file://busybox:local --confirm

# Extract an image stored on disk in a directory other than $(pwd)/v2 into the current directory

# --confirm is required because the current directory is not empty ($(pwd)/busybox-mirror-dir/v2/busybox exists)

oc image extract file://busybox:local --dir busybox-mirror-dir --confirm

# Extract an image stored on disk in a directory other than $(pwd)/v2 into a designated directory (must exist)

oc image extract file://busybox:local --dir busybox-mirror-dir --path /:/tmp/busybox

# Extract the last layer in the image

oc image extract docker.io/library/centos:7[-1]

# Extract the first three layers of the image

oc image extract docker.io/library/centos:7[:3]
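The layer selectors in this section (`[-1]`, `[:3]`, `[-3:]`) use square brackets, which most shells also treat as filename pattern characters. In practice a matching local path rarely exists and the argument passes through unchanged, but some shells (zsh with its default settings, for example) report an error for an unmatched pattern, so quoting the image reference is the safer habit. The sketch below demonstrates the effect in a scratch directory without calling `oc`; `show_arg` and the file name `centos:71` are hypothetical and exist only for this illustration.

```shell
#!/bin/sh
# Work in a scratch directory so the demonstration touches nothing else.
demo_dir=$(mktemp -d)
cd "$demo_dir" || exit 1

# Hypothetical local file whose name happens to match the pattern centos:7[-1].
touch 'centos:71'

# show_arg is a hypothetical helper that prints the argument it receives.
show_arg() {
  printf '%s\n' "$1"
}

# Unquoted: the shell replaces the pattern with the matching file name.
unquoted=$(show_arg centos:7[-1])

# Quoted: the selector reaches the command unchanged.
quoted=$(show_arg 'centos:7[-1]')

echo "unquoted: $unquoted"
echo "quoted:   $quoted"

# Remove the scratch directory.
cd / && rm -rf "$demo_dir"
```

The unquoted call receives `centos:71`, while the quoted call receives `centos:7[-1]`. Applied to the examples here, that suggests writing, for instance, `oc image extract 'docker.io/library/centos:7[-1]'`.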

# Extract the last three layers of the image

oc image extract docker.io/library/centos:7[-3:]

2.5.1.79. oc image info

Display information about an image

Example usage

# Show information about an image

oc image info quay.io/openshift/cli:latest

# Show information about images matching a wildcard

oc image info quay.io/openshift/cli:4.*

# Show information about a file mirrored to disk under DIR

oc image info --dir=DIR file://library/busybox:latest

# Select which image from a multi-OS image to show

oc image info library/busybox:latest --filter-by-os=linux/arm64

2.5.1.80. oc image mirror

Mirror images from one repository to another

Example usage

# Copy image to another tag

oc image mirror myregistry.com/myimage:latest myregistry.com/myimage:stable

# Copy image to another registry

oc image mirror myregistry.com/myimage:latest docker.io/myrepository/myimage:stable

# Copy all tags starting with mysql to the destination repository

oc image mirror myregistry.com/myimage:mysql* docker.io/myrepository/myimage

# Copy image to disk, creating a directory structure that can be served as a registry

oc image mirror myregistry.com/myimage:latest file://myrepository/myimage:latest

# Copy image to S3 (pull from <bucket>.s3.amazonaws.com/image:latest)

oc image mirror myregistry.com/myimage:latest s3://s3.amazonaws.com/<region>/<bucket>/image:latest

# Copy image to S3 without setting a tag (pull via @<digest>)

oc image mirror myregistry.com/myimage:latest s3://s3.amazonaws.com/<region>/<bucket>/image

# Copy image to multiple locations

oc image mirror myregistry.com/myimage:latest docker.io/myrepository/myimage:stable \
    docker.io/myrepository/myimage:dev

# Copy multiple images

oc image mirror myregistry.com/myimage:latest=myregistry.com/other:test \
    myregistry.com/myimage:new=myregistry.com/other:target
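When many images need to be copied in one invocation, the `SRC=DST` mappings shown above can be generated rather than typed by hand. The following is a minimal sketch under stated assumptions: `mirror_cmd` is a hypothetical helper, the repository names are borrowed from the examples in this section, and each tag is mapped to the same tag in the destination (unlike the example above, which renames tags). It only prints the resulting command as a dry run and does not execute `oc`.

```shell
#!/bin/sh
# Assumed repository names, taken from the examples in this section.
src_repo="myregistry.com/myimage"
dst_repo="myregistry.com/other"

# mirror_cmd is a hypothetical helper: it prints (does not run) an
# oc image mirror command with one SRC=DST mapping per tag given.
mirror_cmd() {
  mappings=""
  for tag in "$@"; do
    mappings="${mappings} ${src_repo}:${tag}=${dst_repo}:${tag}"
  done
  echo "oc image mirror${mappings}"
}

# Dry run for two tags.
mirror_cmd latest new
```

Printing the command first makes it easy to review the mappings before running them against a real registry.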

# Copy manifest list of a multi-architecture image, even if only a single image is found

oc image mirror myregistry.com/myimage:latest=myregistry.com/other:test \
    --keep-manifest-list=true

# Copy specific os/arch manifest of a multi-architecture image

# Run 'oc image info myregistry.com/myimage:latest' to see available os/arch for multi-arch images

# Note that with multi-arch images, this results in a new manifest list digest that includes only

# the filtered manifests

oc image mirror myregistry.com/myimage:latest=myregistry.com/other:test \
    --filter-by-os=os/arch

# Copy all os/arch manifests of a multi-architecture image

# Run 'oc image info myregistry.com/myimage:latest' to see list of os/arch manifests that will be mirrored

oc image mirror myregistry.com/myimage:latest=myregistry.com/other:test \
    --keep-manifest-list=true

# Note the above command is equivalent to

oc image mirror myregistry.com/myimage:latest=myregistry.com/other:test \
    --filter-by-os=.*

2.5.1.81. oc import-image

Import images from a container image registry

Example usage

# Import tag latest into a new image stream

oc import-image mystream --from=registry.io/repo/image:latest --confirm

# Update imported data for tag latest in an already existing image stream

oc import-image mystream

# Update imported data for tag stable in an already existing image stream

oc import-image mystream:stable

# Update imported data for all tags in an existing image stream

oc import-image mystream --all

# Import all tags into a new image stream

oc import-image mystream --from=registry.io/repo/image --all --confirm

# Import all tags into a new image stream using a custom timeout

oc --request-timeout=5m import-image mystream --from=registry.io/repo/image --all --confirm

2.5.1.82. oc kustomize

Build a kustomization target from a directory or URL.

Example usage

# Build the current working directory

oc kustomize

# Build some shared configuration directory

oc kustomize /home/config/production

# Build from github