Storage

Configuring and managing storage in OpenShift Container Platform 4.2

Abstract

Chapter 1. Understanding persistent storage

1.1. Persistent storage overview

Managing storage is a distinct problem from managing compute resources. OpenShift Container Platform uses the Kubernetes persistent volume (PV) framework to allow cluster administrators to provision persistent storage for a cluster. Developers can use persistent volume claims (PVCs) to request PV resources without having specific knowledge of the underlying storage infrastructure.

PVCs are specific to a project, and are created and used by developers as a means to use a PV. PV resources on their own are not scoped to any single project; they can be shared across the entire OpenShift Container Platform cluster and claimed from any project. After a PV is bound to a PVC, that PV can not then be bound to additional PVCs. This has the effect of scoping a bound PV to a single namespace, that of the binding project.

PVs are defined by a PersistentVolume API object, which represents a piece of existing storage in the cluster that was either statically provisioned by the cluster administrator or dynamically provisioned using a StorageClass object. It is a resource in the cluster just like a node is a cluster resource.

PVs are volume plug-ins like Volumes but have a lifecycle that is independent of any individual Pod that uses the PV. PV objects capture the details of the implementation of the storage, be that NFS, iSCSI, or a cloud-provider-specific storage system.

High availability of storage in the infrastructure is left to the underlying storage provider.

PVCs are defined by a PersistentVolumeClaim API object, which represents a request for storage by a developer. It is similar to a Pod in that Pods consume node resources and PVCs consume PV resources. For example, Pods can request specific levels of resources, such as CPU and memory, while PVCs can request specific storage capacity and access modes. For example, they can be mounted once read-write or many times read-only.

1.2. Lifecycle of a volume and claim

PVs are resources in the cluster. PVCs are requests for those resources and also act as claim checks to the resource. The interaction between PVs and PVCs have the following lifecycle.

1.2.1. Provision storage

In response to requests from a developer defined in a PVC, a cluster administrator configures one or more dynamic provisioners that provision storage and a matching PV.

Alternatively, a cluster administrator can create a number of PVs in advance that carry the details of the real storage that is available for use. PVs exist in the API and are available for use.

1.2.2. Bind claims

When you create a PVC, you request a specific amount of storage, specify the required access mode, and create a storage class to describe and classify the storage. The control loop in the master watches for new PVCs and binds the new PVC to an appropriate PV. If an appropriate PV does not exist, a provisioner for the storage class creates one.

The size of all PVs might exceed your PVC size. This is especially true with manually provisioned PVs. To minimize the excess, OpenShift Container Platform binds to the smallest PV that matches all other criteria.

Claims remain unbound indefinitely if a matching volume does not exist or can not be created with any available provisioner servicing a storage class. Claims are bound as matching volumes become available. For example, a cluster with many manually provisioned 50Gi volumes would not match a PVC requesting 100Gi. The PVC can be bound when a 100Gi PV is added to the cluster.

1.2.3. Use Pods and claimed PVs

Pods use claims as volumes. The cluster inspects the claim to find the bound volume and mounts that volume for a Pod. For those volumes that support multiple access modes, you must specify which mode applies when you use the claim as a volume in a Pod.

Once you have a claim and that claim is bound, the bound PV belongs to you for as long as you need it. You can schedule Pods and access claimed PVs by including persistentVolumeClaim in the Pod’s volumes block.

1.2.4. Storage Object in Use Protection

The Storage Object in Use Protection feature ensures that PVCs in active use by a Pod and PVs that are bound to PVCs are not removed from the system, as this can result in data loss.

Storage Object in Use Protection is enabled by default.

A PVC is in active use by a Pod when a Pod object exists that uses the PVC.

If a user deletes a PVC that is in active use by a Pod, the PVC is not removed immediately. PVC removal is postponed until the PVC is no longer actively used by any Pods. Also, if a cluster admin deletes a PV that is bound to a PVC, the PV is not removed immediately. PV removal is postponed until the PV is no longer bound to a PVC.

1.2.5. Release volumes

When you are finished with a volume, you can delete the PVC object from the API, which allows reclamation of the resource. The volume is considered released when the claim is deleted, but it is not yet available for another claim. The previous claimant’s data remains on the volume and must be handled according to policy.

1.2.6. Reclaim volumes

The reclaim policy of a PersistentVolume tells the cluster what to do with the volume after it is released. Volumes reclaim policy can either be Retain, Recycle, or Delete.

-

Retainreclaim policy allows manual reclamation of the resource for those volume plug-ins that support it. -

Recyclereclaim policy recycles the volume back into the pool of unbound persistent volumes once it is released from its claim.

The Recycle reclaim policy is deprecated in OpenShift Container Platform 4. Dynamic provisioning is recommended for equivalent and better functionality.

-

Deletereclaim policy deletes both thePersistentVolumeobject from OpenShift Container Platform and the associated storage asset in external infrastructure, such as AWS EBS or VMware vSphere.

Dynamically provisioned volumes are always deleted.

1.3. Persistent volumes

Each PV contains a spec and status, which is the specification and status of the volume, for example:

PV object definition example

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv0001

spec:

capacity:

storage: 5Gi

accessModes:

- ReadWriteOnce

persistentVolumeReclaimPolicy: Retain

...

status:

...1.3.1. Types of PVs

OpenShift Container Platform supports the following PersistentVolume plug-ins:

- AWS Elastic Block Store (EBS)

- Azure Disk

- Azure File

- Cinder

- Fibre Channel

- GCE Persistent Disk

- HostPath

- iSCSI

- Local volume

- NFS

- Red Hat OpenShift Container Storage

- VMware vSphere

1.3.2. Capacity

Generally, a PV has a specific storage capacity. This is set by using the PV’s capacity attribute.

Currently, storage capacity is the only resource that can be set or requested. Future attributes may include IOPS, throughput, and so on.

1.3.3. Access modes

A PersistentVolume can be mounted on a host in any way supported by the resource provider. Providers have different capabilities and each PV’s access modes are set to the specific modes supported by that particular volume. For example, NFS can support multiple read-write clients, but a specific NFS PV might be exported on the server as read-only. Each PV gets its own set of access modes describing that specific PV’s capabilities.

Claims are matched to volumes with similar access modes. The only two matching criteria are access modes and size. A claim’s access modes represent a request. Therefore, you might be granted more, but never less. For example, if a claim requests RWO, but the only volume available is an NFS PV (RWO+ROX+RWX), the claim would then match NFS because it supports RWO.

Direct matches are always attempted first. The volume’s modes must match or contain more modes than you requested. The size must be greater than or equal to what is expected. If two types of volumes, such as NFS and iSCSI, have the same set of access modes, either of them can match a claim with those modes. There is no ordering between types of volumes and no way to choose one type over another.

All volumes with the same modes are grouped, and then sorted by size, smallest to largest. The binder gets the group with matching modes and iterates over each, in size order, until one size matches.

The following table lists the access modes:

| Access Mode | CLI abbreviation | Description |

|---|---|---|

| ReadWriteOnce |

| The volume can be mounted as read-write by a single node. |

| ReadOnlyMany |

| The volume can be mounted as read-only by many nodes. |

| ReadWriteMany |

| The volume can be mounted as read-write by many nodes. |

A volume’s AccessModes are descriptors of the volume’s capabilities. They are not enforced constraints. The storage provider is responsible for runtime errors resulting from invalid use of the resource.

For example, NFS offers ReadWriteOnce access mode. You must mark the claims as read-only if you want to use the volume’s ROX capability. Errors in the provider show up at runtime as mount errors.

iSCSI and Fibre Channel volumes do not currently have any fencing mechanisms. You must ensure the volumes are only used by one node at a time. In certain situations, such as draining a node, the volumes can be used simultaneously by two nodes. Before draining the node, first ensure the Pods that use these volumes are deleted.

| Volume Plug-in | ReadWriteOnce | ReadOnlyMany | ReadWriteMany |

|---|---|---|---|

| AWS EBS |

✅ |

- |

- |

| Azure File |

✅ |

✅ |

✅ |

| Azure Disk |

✅ |

- |

- |

| Cinder |

✅ |

- |

- |

| Fibre Channel |

✅ |

✅ |

- |

| GCE Persistent Disk |

✅ |

- |

- |

| HostPath |

✅ |

- |

- |

| iSCSI |

✅ |

✅ |

- |

| Local volume |

✅ |

- |

- |

| NFS |

✅ |

✅ |

✅ |

| Red Hat OpenShift Container Storage See Available dynamic provisioning plug-ins for more information. |

|

- |

|

| VMware vSphere |

✅ |

- |

- |

Use a recreate deployment strategy for Pods that rely on AWS EBS.

1.3.4. Phase

Volumes can be found in one of the following phases:

| Phase | Description |

|---|---|

| Available | A free resource not yet bound to a claim. |

| Bound | The volume is bound to a claim. |

| Released | The claim was deleted, but the resource is not yet reclaimed by the cluster. |

| Failed | The volume has failed its automatic reclamation. |

You can view the name of the PVC bound to the PV by running:

$ oc get pv <pv-claim>1.3.4.1. Mount options

You can specify mount options while mounting a PV by using the annotation volume.beta.kubernetes.io/mount-options.

For example:

Mount options example

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv0001

annotations:

volume.beta.kubernetes.io/mount-options: rw,nfsvers=4,noexec

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

nfs:

path: /tmp

server: 172.17.0.2

persistentVolumeReclaimPolicy: Retain

claimRef:

name: claim1

namespace: default- 1

- Specified mount options are used while mounting the PV to the disk.

The following PV types support mount options:

- AWS Elastic Block Store (EBS)

- Azure Disk

- Azure File

- Cinder

- GCE Persistent Disk

- iSCSI

- Local volume

- NFS

- Red Hat OpenShift Container Storage (Ceph RBD only)

- VMware vSphere

Fibre Channel and HostPath PVs do not support mount options.

1.4. Persistent volume claims

Each persistent volume claim (PVC) contains a spec and status, which is the specification and status of the claim, for example:

PVC object definition example

kind: PersistentVolumeClaim

apiVersion: v1

metadata:

name: myclaim

spec:

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 8Gi

storageClassName: gold

status:

...1.4.1. Storage classes

Claims can optionally request a specific storage class by specifying the storage class’s name in the storageClassName attribute. Only PVs of the requested class, ones with the same storageClassName as the PVC, can be bound to the PVC. The cluster administrator can configure dynamic provisioners to service one or more storage classes. The cluster administrator can create a PV on demand that matches the specifications in the PVC.

The ClusterStorageOperator may install a default StorageClass depending on the platform in use. This StorageClass is owned and controlled by the operator. It cannot be deleted or modified beyond defining annotations and labels. If different behavior is desired, you must define a custom StorageClass.

The cluster administrator can also set a default storage class for all PVCs. When a default storage class is configured, the PVC must explicitly ask for StorageClass or storageClassName annotations set to "" to be bound to a PV without a storage class.

If more than one StorageClass is marked as default, a PVC can only be created if the storageClassName is explicitly specified. Therefore, only one StorageClass should be set as the default.

1.4.2. Access modes

Claims use the same conventions as volumes when requesting storage with specific access modes.

1.4.3. Resources

Claims, such as Pods, can request specific quantities of a resource. In this case, the request is for storage. The same resource model applies to volumes and claims.

1.4.4. Claims as volumes

Pods access storage by using the claim as a volume. Claims must exist in the same namespace as the Pod by using the claim. The cluster finds the claim in the Pod’s namespace and uses it to get the PersistentVolume backing the claim. The volume is mounted to the host and into the Pod, for example:

Mount volume to the host and into the Pod example

kind: Pod

apiVersion: v1

metadata:

name: mypod

spec:

containers:

- name: myfrontend

image: dockerfile/nginx

volumeMounts:

- mountPath: "/var/www/html"

name: mypd

volumes:

- name: mypd

persistentVolumeClaim:

claimName: myclaim 1.5. Block volume support

OpenShift Container Platform can statically provision raw block volumes. These volumes do not have a file system, and can provide performance benefits for applications that either write to the disk directly or implement their own storage service.

Raw block volumes are provisioned by specifying volumeMode: Block in the PV and PVC specification.

Pods using raw block volumes must be configured to allow privileged containers.

The following table displays which volume plug-ins support block volumes.

| Volume Plug-in | Manually provisioned | Dynamically provisioned | Fully supported |

|---|---|---|---|

| AWS EBS | ✅ | ✅ | ✅ |

| Azure Disk | ✅ | ✅ | ✅ |

| Azure File | |||

| Cinder | |||

| Fibre Channel | ✅ | ||

| GCP | ✅ | ✅ | ✅ |

| HostPath | |||

| iSCSI | ✅ | ||

| Local volume | ✅ | ✅ | |

| NFS | |||

| Red Hat OpenShift Container Storage | ✅ | ✅ | ✅ |

| VMware vSphere | ✅ | ✅ | ✅ |

Any of the block volumes that can be provisioned manually, but are not provided as fully supported, are included as a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process. For more information about the support scope of Red Hat Technology Preview features, see https://access.redhat.com/support/offerings/techpreview/.

1.5.1. Block volume examples

PV example

apiVersion: v1

kind: PersistentVolume

metadata:

name: block-pv

spec:

capacity:

storage: 10Gi

accessModes:

- ReadWriteOnce

volumeMode: Block

persistentVolumeReclaimPolicy: Retain

fc:

targetWWNs: ["50060e801049cfd1"]

lun: 0

readOnly: false- 1

volumeModemust be set toBlockto indicate that this PV is a raw block volume.

PVC example

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: block-pvc

spec:

accessModes:

- ReadWriteOnce

volumeMode: Block

resources:

requests:

storage: 10Gi- 1

volumeModemust be set toBlockto indicate that a raw block PVC is requested.

Pod specification example

apiVersion: v1

kind: Pod

metadata:

name: pod-with-block-volume

spec:

containers:

- name: fc-container

image: fedora:26

command: ["/bin/sh", "-c"]

args: [ "tail -f /dev/null" ]

volumeDevices:

- name: data

devicePath: /dev/xvda

volumes:

- name: data

persistentVolumeClaim:

claimName: block-pvc - 1

volumeDevices, instead ofvolumeMounts, is used for block devices. OnlyPersistentVolumeClaimsources can be used with raw block volumes.- 2

devicePath, instead ofmountPath, represents the path to the physical device where the raw block is mapped to the system.- 3

- The volume source must be of type

persistentVolumeClaimand must match the name of the PVC as expected.

| Value | Default |

|---|---|

| Filesystem | Yes |

| Block | No |

| PV VolumeMode | PVC VolumeMode | Binding Result |

|---|---|---|

| Filesystem | Filesystem | Bind |

| Unspecified | Unspecified | Bind |

| Filesystem | Unspecified | Bind |

| Unspecified | Filesystem | Bind |

| Block | Block | Bind |

| Unspecified | Block | No Bind |

| Block | Unspecified | No Bind |

| Filesystem | Block | No Bind |

| Block | Filesystem | No Bind |

Unspecified values result in the default value of Filesystem.

Chapter 2. Configuring persistent storage

2.1. Persistent storage using AWS Elastic File System

OpenShift Container Platform allows use of Amazon Web Services (AWS) Elastic File System volumes (EFS). You can provision your OpenShift Container Platform cluster with persistent storage using AWS EC2. Some familiarity with Kubernetes and AWS is assumed.

Elastic File System is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see https://access.redhat.com/support/offerings/techpreview/.

The Kubernetes persistent volume framework allows administrators to provision a cluster with persistent storage and gives users a way to request those resources without having any knowledge of the underlying infrastructure. AWS Elastic Block Store volumes can be provisioned dynamically. PersistentVolumes are not bound to a single project or namespace; they can be shared across the OpenShift Container Platform cluster. PersistentVolumeClaims are specific to a project or namespace and can be requested by users.

Prerequisites

- Configure the AWS security groups to allow inbound NFS traffic from the EFS volume’s security group.

- Configure the AWS EFS volume to allow incoming SSH traffic from any host.

Additional references

2.1.1. Store the EFS variables in a ConfigMap

It is recommended to use a ConfigMap to contain all the environment variables that are required for the EFS provisioner.

Procedure

Define an OpenShift Container Platform ConfigMap that contains the environment variables by creating a

configmap.yamlfile that contains following contents:apiVersion: v1 kind: ConfigMap metadata: name: efs-provisioner data: file.system.id: <file-system-id>1 aws.region: <aws-region>2 provisioner.name: openshift.org/aws-efs3 dns.name: ""4 - 1

- Defines the Amazon Web Services (AWS) EFS file system ID.

- 2

- The AWS region of the EFS file system, such as

us-east-1. - 3

- The name of the provisioner for the associated StorageClass.

- 4

- An optional argument that specifies the new DNS name where the EFS volume is located. If no DNS name is provided, the provisioner will search for the EFS volume at

<file-system-id>.efs.<aws-region>.amazonaws.com.

After the file has been configured, create it in your cluster by running the following command:

$ oc create -f configmap.yaml -n <namespace>

2.1.2. Configuring authorization for EFS volumes

The EFS provisioner must be authorized to communicate to the AWS endpoints, along with observing and updating OpenShift Container Platform storage resources. The following instructions create the necessary permissions for the EFS provisioner.

Procedure

Create an

efs-provisionerservice account:$ oc create serviceaccount efs-provisionerCreate a file,

clusterrole.yamlthat defines the necessary permissions:kind: ClusterRole apiVersion: rbac.authorization.k8s.io/v1 metadata: name: efs-provisioner-runner rules: - apiGroups: [""] resources: ["persistentvolumes"] verbs: ["get", "list", "watch", "create", "delete"] - apiGroups: [""] resources: ["persistentvolumeclaims"] verbs: ["get", "list", "watch", "update"] - apiGroups: ["storage.k8s.io"] resources: ["storageclasses"] verbs: ["get", "list", "watch"] - apiGroups: [""] resources: ["events"] verbs: ["create", "update", "patch"] - apiGroups: ["security.openshift.io"] resources: ["securitycontextconstraints"] verbs: ["use"] resourceNames: ["hostmount-anyuid"]Create a file,

clusterrolebinding.yaml, that defines a cluster role binding that assigns the defined role to the service account:kind: ClusterRoleBinding apiVersion: rbac.authorization.k8s.io/v1 metadata: name: run-efs-provisioner subjects: - kind: ServiceAccount name: efs-provisioner namespace: default1 roleRef: kind: ClusterRole name: efs-provisioner-runner apiGroup: rbac.authorization.k8s.io- 1

- The namespace where the EFS provisioner pod will run. If the EFS provisioner is running in a namespace other than

default, this value must be updated.

Create a file,

role.yaml, that defines a role with the necessary permissions:kind: Role apiVersion: rbac.authorization.k8s.io/v1 metadata: name: leader-locking-efs-provisioner rules: - apiGroups: [""] resources: ["endpoints"] verbs: ["get", "list", "watch", "create", "update", "patch"]Create a file,

rolebinding.yaml, that defines a role binding that assigns this role to the service account:kind: RoleBinding apiVersion: rbac.authorization.k8s.io/v1 metadata: name: leader-locking-efs-provisioner subjects: - kind: ServiceAccount name: efs-provisioner namespace: default1 roleRef: kind: Role name: leader-locking-efs-provisioner apiGroup: rbac.authorization.k8s.io- 1

- The namespace where the EFS provisioner pod will run. If the EFS provisioner is running in a namespace other than

default, this value must be updated.

Create the resources inside the OpenShift Container Platform cluster:

$ oc create -f clusterrole.yaml,clusterrolebinding.yaml,role.yaml,rolebinding.yaml

2.1.3. Create the EFS StorageClass

Before PersistentVolumeClaims can be created, a StorageClass must exist in the OpenShift Container Platform cluster. The following instructions create the StorageClass for the EFS provisioner.

Procedure

Define an OpenShift Container Platform ConfigMap that contains the environment variables by creating a

storageclass.yamlwith the following contents:apiVersion: storage.k8s.io/v1 kind: StorageClass metadata: name: aws-efs provisioner: openshift.org/aws-efs parameters: gidMin: "2048"1 gidMax: "2147483647"2 gidAllocate: "true"3 - 1

- An optional argument that defines the minimum group ID (GID) for volume assignments. The default value is

2048. - 2

- An optional argument that defines the maximum GID for volume assignments. The default value is

2147483647. - 3

- An optional argument that determines if GIDs are assigned to volumes. If

false, dynamically provisioned volumes are not allocated GIDs, allowing all users to read and write to the created volumes. The default value istrue.

After the file has been configured, create it in your cluster by running the following command:

$ oc create -f storageclass.yaml

2.1.4. Create the EFS provisioner

The EFS provisioner is an OpenShift Container Platform Pod that mounts the EFS volume as an NFS share.

Prerequisites

- Create A ConfigMap that defines the EFS environment variables.

- Create a service account that contains the necessary cluster and role permissions.

- Create a StorageClass for provisioning volumes.

- Configure the Amazon Web Services (AWS) security groups to allow incoming NFS traffic on all OpenShift Container Platform nodes.

- Configure the AWS EFS volume security groups to allow incoming SSH traffic from all sources.

Procedure

Define the EFS provisioner by creating a

provisioner.yamlwith the following contents:kind: Pod apiVersion: v1 metadata: name: efs-provisioner spec: serviceAccount: efs-provisioner containers: - name: efs-provisioner image: quay.io/external_storage/efs-provisioner:latest env: - name: PROVISIONER_NAME valueFrom: configMapKeyRef: name: efs-provisioner key: provisioner.name - name: FILE_SYSTEM_ID valueFrom: configMapKeyRef: name: efs-provisioner key: file.system.id - name: AWS_REGION valueFrom: configMapKeyRef: name: efs-provisioner key: aws.region - name: DNS_NAME valueFrom: configMapKeyRef: name: efs-provisioner key: dns.name optional: true volumeMounts: - name: pv-volume mountPath: /persistentvolumes volumes: - name: pv-volume nfs: server: <file-system-id>.efs.<region>.amazonaws.com1 path: /2 - 1

- Contains the DNS name of the EFS volume. This field must be updated for the Pod to discover the EFS volume.

- 2

- The mount path of the EFS volume. Each persistent volume is created as a separate subdirectory on the EFS volume. If this EFS volume is used for other projects outside of OpenShift Container Platform, then it is recommended to create a unique subdirectory OpenShift Container Platform manually on EFS for the cluster to prevent projects from accessing another project’s data. Specifying a directory that does not exist results in an error.

After the file has been configured, create it in your cluster by running the following command:

$ oc create -f provisioner.yaml

2.1.5. Create the EFS PersistentVolumeClaim

EFS PersistentVolumeClaims are created to allow Pods to mount the underlying EFS storage.

Prerequisites

- Create the EFS provisioner pod.

Procedure (UI)

- In the OpenShift Container Platform console, click Storage → Persistent Volume Claims.

- In the persistent volume claims overview, click Create Persistent Volume Claim.

Define the required options on the resulting page.

- Select the storage class that you created from the list.

- Enter a unique name for the storage claim.

- Select the access mode to determine the read and write access for the created storage claim.

Define the size of the storage claim.

NoteAlthough you must enter a size, every Pod that access the EFS volume has unlimited storage. Define a value, such as

1Mi, that will remind you that the storage size is unlimited.

- Click Create to create the persistent volume claim and generate a persistent volume.

Procedure (CLI)

Alternately, you can define EFS PersistentVolumeClaims by creating a file,

pvc.yaml, with the following contents:kind: PersistentVolumeClaim apiVersion: v1 metadata: name: efs-claim1 namespace: test-efs annotations: volume.beta.kubernetes.io/storage-provisioner: openshift.org/aws-efs finalizers: - kubernetes.io/pvc-protection spec: accessModes: - ReadWriteOnce2 resources: requests: storage: 5Gi3 storageClassName: aws-efs4 volumeMode: FilesystemAfter the file has been configured, create it in your cluster by running the following command:

$ oc create -f pvc.yaml

2.2. Persistent Storage Using AWS Elastic Block Store

OpenShift Container Platform supports AWS Elastic Block Store volumes (EBS). You can provision your OpenShift Container Platform cluster with persistent storage using AWS EC2. Some familiarity with Kubernetes and AWS is assumed.

The Kubernetes persistent volume framework allows administrators to provision a cluster with persistent storage and gives users a way to request those resources without having any knowledge of the underlying infrastructure. AWS Elastic Block Store volumes can be provisioned dynamically. Persistent volumes are not bound to a single project or namespace; they can be shared across the OpenShift Container Platform cluster. Persistent volume claims are specific to a project or namespace and can be requested by users.

High-availability of storage in the infrastructure is left to the underlying storage provider.

Additional References

2.2.1. Creating the EBS Storage Class

StorageClasses are used to differentiate and delineate storage levels and usages. By defining a storage class, users can obtain dynamically provisioned persistent volumes.

Procedure

- In the OpenShift Container Platform console, click Storage → Storage Classes.

- In the storage class overview, click Create Storage Class.

Define the desired options on the page that appears.

- Enter a name to reference the storage class.

- Enter an optional description.

- Select the reclaim policy.

-

Select

kubernetes.io/aws-ebsfrom the drop down list. - Enter additional parameters for the storage class as desired.

- Click Create to create the storage class.

2.2.2. Creating the Persistent Volume Claim

Prerequisites

Storage must exist in the underlying infrastructure before it can be mounted as a volume in OpenShift Container Platform.

Procedure

- In the OpenShift Container Platform console, click Storage → Persistent Volume Claims.

- In the persistent volume claims overview, click Create Persistent Volume Claim.

Define the desired options on the page that appears.

- Select the storage class created previously from the drop-down menu.

- Enter a unique name for the storage claim.

- Select the access mode. This determines the read and write access for the created storage claim.

- Define the size of the storage claim.

- Click Create to create the persistent volume claim and generate a persistent volume.

2.2.3. Volume format

Before OpenShift Container Platform mounts the volume and passes it to a container, it checks that it contains a file system as specified by the fsType parameter in the persistent volume definition. If the device is not formatted with the file system, all data from the device is erased and the device is automatically formatted with the given file system.

This allows using unformatted AWS volumes as persistent volumes, because OpenShift Container Platform formats them before the first use.

2.2.4. Maximum Number of EBS Volumes on a Node

By default, OpenShift Container Platform supports a maximum of 39 EBS volumes attached to one node. This limit is consistent with the AWS volume limits.

OpenShift Container Platform can be configured to have a higher limit by setting the environment variable KUBE_MAX_PD_VOLS. However, AWS requires a particular naming scheme (AWS Device Naming) for attached devices, which only supports a maximum of 52 volumes. This limits the number of volumes that can be attached to a node via OpenShift Container Platform to 52.

2.3. Persistent storage using Azure

OpenShift Container Platform supports Microsoft Azure Disk volumes. You can provision your OpenShift Container Platform cluster with persistent storage using Azure. Some familiarity with Kubernetes and Azure is assumed. The Kubernetes persistent volume framework allows administrators to provision a cluster with persistent storage and gives users a way to request those resources without having any knowledge of the underlying infrastructure. Azure Disk volumes can be provisioned dynamically. Persistent volumes are not bound to a single project or namespace; they can be shared across the OpenShift Container Platform cluster. Persistent volume claims are specific to a project or namespace and can be requested by users.

High availability of storage in the infrastructure is left to the underlying storage provider.

Additional references

2.3.1. Creating the Azure storage class

StorageClasses are used to differentiate and delineate storage levels and usages. By defining a storage class, users can obtain dynamically provisioned persistent volumes.

Additional References

Procedure

- In the OpenShift Container Platform console, click Storage → Storage Classes.

- In the storage class overview, click Create Storage Class.

Define the desired options on the page that appears.

- Enter a name to reference the storage class.

- Enter an optional description.

- Select the reclaim policy.

Select

kubernetes.io/azure-diskfrom the drop down list.-

Enter the storage account type. This corresponds to your Azure storage account SKU tier. Valid options are

Premium_LRS,Standard_LRS,StandardSSD_LRS, andUltraSSD_LRS. -

Enter the kind of account. Valid options are

shared,dedicated,andmanaged.

-

Enter the storage account type. This corresponds to your Azure storage account SKU tier. Valid options are

- Enter additional parameters for the storage class as desired.

- Click Create to create the storage class.

2.3.2. Creating the Persistent Volume Claim

Prerequisites

Storage must exist in the underlying infrastructure before it can be mounted as a volume in OpenShift Container Platform.

Procedure

- In the OpenShift Container Platform console, click Storage → Persistent Volume Claims.

- In the persistent volume claims overview, click Create Persistent Volume Claim.

Define the desired options on the page that appears.

- Select the storage class created previously from the drop-down menu.

- Enter a unique name for the storage claim.

- Select the access mode. This determines the read and write access for the created storage claim.

- Define the size of the storage claim.

- Click Create to create the persistent volume claim and generate a persistent volume.

2.3.3. Volume format

Before OpenShift Container Platform mounts the volume and passes it to a container, it checks that it contains a file system as specified by the fsType parameter in the persistent volume definition. If the device is not formatted with the file system, all data from the device is erased and the device is automatically formatted with the given file system.

This allows using unformatted Azure volumes as persistent volumes, because OpenShift Container Platform formats them before the first use.

2.4. Persistent storage using Azure File

OpenShift Container Platform supports Microsoft Azure File volumes. You can provision your OpenShift Container Platform cluster with persistent storage using Azure. Some familiarity with Kubernetes and Azure is assumed.

The Kubernetes persistent volume framework allows administrators to provision a cluster with persistent storage and gives users a way to request those resources without having any knowledge of the underlying infrastructure. Azure File volumes can be provisioned dynamically.

PersistentVolumes are not bound to a single project or namespace; they can be shared across the OpenShift Container Platform cluster. PersistentVolumeClaims are specific to a project or namespace and can be requested by users for use in applications.

High availability of storage in the infrastructure is left to the underlying storage provider.

Additional references

2.4.1. Create the Azure File share PersistentVolumeClaim

To create the PersistentVolumeClaim, you must first define a Secret that contains the Azure account and key. This Secret is used in the PersistentVolume definition, and will be referenced by the PersistentVolumeClaim for use in applications.

Prerequisites

- An Azure File share exists.

- The credentials to access this share, specifically the storage account and key, are available.

Procedure

Create a Secret that contains the Azure File credentials:

$ oc create secret generic <secret-name> --from-literal=azurestorageaccountname=<storage-account> \1 --from-literal=azurestorageaccountkey=<storage-account-key>2 Create a PersistentVolume that references the Secret you created:

apiVersion: "v1" kind: "PersistentVolume" metadata: name: "pv0001"1 spec: capacity: storage: "5Gi"2 accessModes: - "ReadWriteOnce" storageClassName: azure-file-sc azureFile: secretName: <secret-name>3 shareName: share-14 readOnly: falseCreate a PersistentVolumeClaim that maps to the PersistentVolume you created:

apiVersion: "v1" kind: "PersistentVolumeClaim" metadata: name: "claim1"1 spec: accessModes: - "ReadWriteOnce" resources: requests: storage: "5Gi"2 storageClassName: azure-file-sc3 volumeName: "pv0001"4 - 1

- The name of the PersistentVolumeClaim.

- 2

- The size of this PersistentVolumeClaim.

- 3

- The name of the StorageClass that is used to provision the PersistentVolume. Specify the StorageClass used in the PersistentVolume definition.

- 4

- The name of the existing PersistentVolume that references the Azure File share.

2.4.2. Mount the Azure File share in a Pod

After the PersistentVolumeClaim has been created, it can be used inside by an application. The following example demonstrates mounting this share inside of a Pod.

Prerequisites

- A PersistentVolumeClaim exists that is mapped to the underlying Azure File share.

Procedure

Create a Pod that mounts the existing PersistentVolumeClaim:

apiVersion: v1 kind: Pod metadata: name: pod-name1 spec: containers: ... volumeMounts: - mountPath: "/data"2 name: azure-file-share volumes: - name: azure-file-share persistentVolumeClaim: claimName: claim13

2.5. Persistent storage using Cinder

OpenShift Container Platform supports OpenStack Cinder. Some familiarity with Kubernetes and OpenStack is assumed.

Cinder volumes can be provisioned dynamically. Persistent volumes are not bound to a single project or namespace; they can be shared across the OpenShift Container Platform cluster. Persistent volume claims are specific to a project or namespace and can be requested by users.

Additional resources

- For more information about how OpenStack Block Storage provides persistent block storage management for virtual hard drives, see OpenStack Cinder.

2.5.1. Manual provisioning with Cinder

Storage must exist in the underlying infrastructure before it can be mounted as a volume in OpenShift Container Platform.

Prerequisites

- OpenShift Container Platform configured for Red Hat OpenStack Platform (RHOSP)

- Cinder volume ID

2.5.1.1. Creating the persistent volume

You must define your persistent volume (PV) in an object definition before creating it in OpenShift Container Platform:

Procedure

Save your object definition to a file.

cinder-persistentvolume.yaml

apiVersion: "v1" kind: "PersistentVolume" metadata: name: "pv0001"1 spec: capacity: storage: "5Gi"2 accessModes: - "ReadWriteOnce" cinder:3 fsType: "ext3"4 volumeID: "f37a03aa-6212-4c62-a805-9ce139fab180"5 - 1

- The name of the volume that is used by persistent volume claims or pods.

- 2

- The amount of storage allocated to this volume.

- 3

- Indicates

cinderfor Red Hat OpenStack Platform (RHOSP) Cinder volumes. - 4

- The file system that is created when the volume is mounted for the first time.

- 5

- The Cinder volume to use.

ImportantDo not change the

fstypeparameter value after the volume is formatted and provisioned. Changing this value can result in data loss and Pod failure.Create the object definition file you saved in the previous step.

$ oc create -f cinder-persistentvolume.yaml

2.5.1.2. Persistent volume formatting

You can use unformatted Cinder volumes as PVs because OpenShift Container Platform formats them before the first use.

Before OpenShift Container Platform mounts the volume and passes it to a container, the system checks that it contains a file system as specified by the fsType parameter in the PV definition. If the device is not formatted with the file system, all data from the device is erased and the device is automatically formatted with the given file system.

2.5.1.3. Cinder volume security

If you use Cinder PVs in your application, configure security for their deployment configurations.

Prerequisite

-

An SCC must be created that uses the appropriate

fsGroupstrategy.

Procedure

Create a service account and add it to the SCC:

$ oc create serviceaccount <service_account> $ oc adm policy add-scc-to-user <new_scc> -z <service_account> -n <project>In your application’s deployment configuration, provide the service account name and

securityContext:apiVersion: v1 kind: ReplicationController metadata: name: frontend-1 spec: replicas: 11 selector:2 name: frontend template:3 metadata: labels:4 name: frontend5 spec: containers: - image: openshift/hello-openshift name: helloworld ports: - containerPort: 8080 protocol: TCP restartPolicy: Always serviceAccountName: <service_account>6 securityContext: fsGroup: 77777 - 1

- The number of copies of the Pod to run.

- 2

- The label selector of the Pod to run.

- 3

- A template for the Pod that the controller creates.

- 4

- The labels on the Pod. They must include labels from the label selector.

- 5

- The maximum name length after expanding any parameters is 63 characters.

- 6

- Specifies the service account you created.

- 7

- Specifies an

fsGroupfor the Pods.

2.6. Persistent storage using the Container Storage Interface (CSI)

The Container Storage Interface (CSI) allows OpenShift Container Platform to consume storage from storage backends that implement the CSI interface as persistent storage.

OpenShift Container Platform does not ship with any CSI drivers. It is recommended to use the CSI drivers provided by community or storage vendors.

Installation instructions differ by driver, and are found in each driver’s documentation. Follow the instructions provided by the CSI driver.

OpenShift Container Platform 4.2 supports version 1.1.0 of the CSI specification.

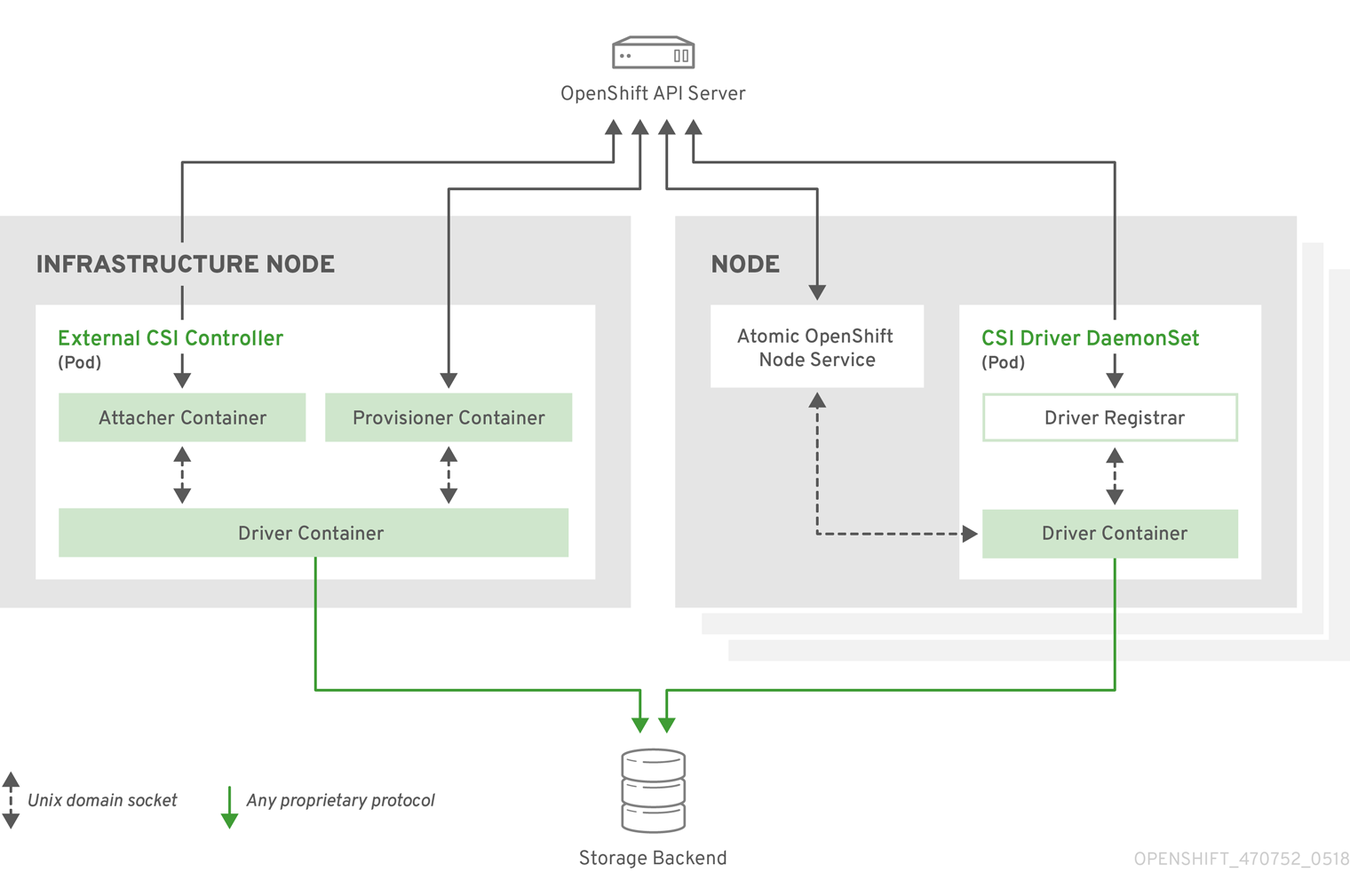

2.6.1. CSI Architecture

CSI drivers are typically shipped as container images. These containers are not aware of OpenShift Container Platform where they run. To use CSI-compatible storage backend in OpenShift Container Platform, the cluster administrator must deploy several components that serve as a bridge between OpenShift Container Platform and the storage driver.

The following diagram provides a high-level overview about the components running in pods in the OpenShift Container Platform cluster.

It is possible to run multiple CSI drivers for different storage backends. Each driver needs its own external controllers' deployment and DaemonSet with the driver and CSI registrar.

2.6.1.1. External CSI controllers

External CSI Controllers is a deployment that deploys one or more pods with three containers:

-

An external CSI attacher container translates

attachanddetachcalls from OpenShift Container Platform to respectiveControllerPublishandControllerUnpublishcalls to the CSI driver. -

An external CSI provisioner container that translates

provisionanddeletecalls from OpenShift Container Platform to respectiveCreateVolumeandDeleteVolumecalls to the CSI driver. - A CSI driver container

The CSI attacher and CSI provisioner containers communicate with the CSI driver container using UNIX Domain Sockets, ensuring that no CSI communication leaves the pod. The CSI driver is not accessible from outside of the pod.

attach, detach, provision, and delete operations typically require the CSI driver to use credentials to the storage backend. Run the CSI controller pods on infrastructure nodes so the credentials are never leaked to user processes, even in the event of a catastrophic security breach on a compute node.

The external attacher must also run for CSI drivers that do not support third-party attach or detach operations. The external attacher will not issue any ControllerPublish or ControllerUnpublish operations to the CSI driver. However, it still must run to implement the necessary OpenShift Container Platform attachment API.

2.6.1.2. CSI Driver DaemonSet

The CSI driver DaemonSet runs a pod on every node that allows OpenShift Container Platform to mount storage provided by the CSI driver to the node and use it in user workloads (pods) as persistent volumes (PVs). The pod with the CSI driver installed contains the following containers:

-

A CSI driver registrar, which registers the CSI driver into the

openshift-nodeservice running on the node. Theopenshift-nodeprocess running on the node then directly connects with the CSI driver using the UNIX Domain Socket available on the node. - A CSI driver.

The CSI driver deployed on the node should have as few credentials to the storage backend as possible. OpenShift Container Platform will only use the node plug-in set of CSI calls such as NodePublish/NodeUnpublish and NodeStage/NodeUnstage, if these calls are implemented.

2.6.2. Dynamic Provisioning

Dynamic provisioning of persistent storage depends on the capabilities of the CSI driver and underlying storage backend. The provider of the CSI driver should document how to create a StorageClass in OpenShift Container Platform and the parameters available for configuration.

The created StorageClass can be configured to enable dynamic provisioning.

Procedure

Create a default storage class that ensures all PVCs that do not require any special storage class are provisioned by the installed CSI driver.

# oc create -f - << EOF apiVersion: storage.k8s.io/v1 kind: StorageClass metadata: name: <storage-class>1 annotations: storageclass.kubernetes.io/is-default-class: "true" provisioner: <provisioner-name>2 parameters: EOF

2.6.3. Example using the CSI driver

The following example installs a default MySQL template without any changes to the template.

Prerequisites

- The CSI driver has been deployed.

- A StorageClass has been created for dynamic provisioning.

Procedure

Create the MySQL template:

# oc new-app mysql-persistent --> Deploying template "openshift/mysql-persistent" to project default ... # oc get pvc NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE mysql Bound kubernetes-dynamic-pv-3271ffcb4e1811e8 1Gi RWO cinder 3s

2.7. Persistent storage using Fibre Channel

OpenShift Container Platform supports Fibre Channel, allowing you to provision your OpenShift Container Platform cluster with persistent storage using Fibre channel volumes. Some familiarity with Kubernetes and Fibre Channel is assumed.

The Kubernetes persistent volume framework allows administrators to provision a cluster with persistent storage and gives users a way to request those resources without having any knowledge of the underlying infrastructure. PersistentVolumes are not bound to a single project or namespace; they can be shared across the OpenShift Container Platform cluster. PersistentVolumeClaims are specific to a project or namespace and can be requested by users.

High availability of storage in the infrastructure is left to the underlying storage provider.

Additional references

2.7.1. Provisioning

To provision Fibre Channel volumes using the PersistentVolume API the following must be available:

-

The

targetWWNs(array of Fibre Channel target’s World Wide Names). - A valid LUN number.

- The filesystem type.

A PersistentVolume and a LUN have a one-to-one mapping between them.

Prerequisites

- Fibre Channel LUNs must exist in the underlying infrastructure.

PersistentVolume Object Definition

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv0001

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

fc:

targetWWNs: ['500a0981891b8dc5', '500a0981991b8dc5']

lun: 2

fsType: ext4- 1

- Fibre Channel WWNs are identified as

/dev/disk/by-path/pci-<IDENTIFIER>-fc-0x<WWN>-lun-<LUN#>, but you do not need to provide any part of the path leading up to theWWN, including the0x, and anything after, including the-(hyphen).

Changing the value of the fstype parameter after the volume has been formatted and provisioned can result in data loss and pod failure.

2.7.1.1. Enforcing disk quotas

Use LUN partitions to enforce disk quotas and size constraints. Each LUN is mapped to a single PersistentVolume, and unique names must be used for PersistentVolumes.

Enforcing quotas in this way allows the end user to request persistent storage by a specific amount, such as 10Gi, and be matched with a corresponding volume of equal or greater capacity.

2.7.1.2. Fibre Channel volume security

Users request storage with a PersistentVolumeClaim. This claim only lives in the user’s namespace, and can only be referenced by a pod within that same namespace. Any attempt to access a PersistentVolume across a namespace causes the pod to fail.

Each Fibre Channel LUN must be accessible by all nodes in the cluster.

2.8. Persistent storage using FlexVolume

OpenShift Container Platform supports FlexVolume, an out-of-tree plug-in that uses an executable model to interface with drivers.

To use storage from a back-end that does not have a built-in plug-in, you can extend OpenShift Container Platform through FlexVolume drivers and provide persistent storage to applications.

Pods interact with FlexVolume drivers through the flexvolume in-tree plugin.

Additional References

2.8.1. About FlexVolume drivers

A FlexVolume driver is an executable file that resides in a well-defined directory on all nodes in the cluster. OpenShift Container Platform calls the FlexVolume driver whenever it needs to mount or unmount a volume represented by a PersistentVolume with flexVolume as the source.

Attach and detach operations are not supported in OpenShift Container Platform for FlexVolume.

2.8.2. FlexVolume driver example

The first command-line argument of the FlexVolume driver is always an operation name. Other parameters are specific to each operation. Most of the operations take a JavaScript Object Notation (JSON) string as a parameter. This parameter is a complete JSON string, and not the name of a file with the JSON data.

The FlexVolume driver contains:

-

All

flexVolume.options. -

Some options from

flexVolumeprefixed bykubernetes.io/, such asfsTypeandreadwrite. -

The content of the referenced secret, if specified, prefixed by

kubernetes.io/secret/.

FlexVolume driver JSON input example

{

"fooServer": "192.168.0.1:1234",

"fooVolumeName": "bar",

"kubernetes.io/fsType": "ext4",

"kubernetes.io/readwrite": "ro",

"kubernetes.io/secret/<key name>": "<key value>",

"kubernetes.io/secret/<another key name>": "<another key value>",

}OpenShift Container Platform expects JSON data on standard output of the driver. When not specified, the output describes the result of the operation.

FlexVolume driver default output example

{

"status": "<Success/Failure/Not supported>",

"message": "<Reason for success/failure>"

}

Exit code of the driver should be 0 for success and 1 for error.

Operations should be idempotent, which means that the mounting of an already mounted volume should result in a successful operation.

2.8.3. Installing FlexVolume drivers

FlexVolume drivers that are used to extend OpenShift Container Platform are executed only on the node. To implement FlexVolumes, a list of operations to call and the installation path are all that is required.

Prerequisites

FlexVolume drivers must implement these operations:

initInitializes the driver. It is called during initialization of all nodes.

- Arguments: none

- Executed on: node

- Expected output: default JSON

mountMounts a volume to directory. This can include anything that is necessary to mount the volume, including finding the device and then mounting the device.

-

Arguments:

<mount-dir><json> - Executed on: node

- Expected output: default JSON

-

Arguments:

unmountUnmounts a volume from a directory. This can include anything that is necessary to clean up the volume after unmounting.

-

Arguments:

<mount-dir> - Executed on: node

- Expected output: default JSON

-

Arguments:

mountdevice- Mounts a volume’s device to a directory where individual Pods can then bind mount.

This call-out does not pass "secrets" specified in the FlexVolume spec. If your driver requires secrets, do not implement this call-out.

-

Arguments:

<mount-dir><json> - Executed on: node

Expected output: default JSON

unmountdevice- Unmounts a volume’s device from a directory.

-

Arguments:

<mount-dir> - Executed on: node

Expected output: default JSON

-

All other operations should return JSON with

{"status": "Not supported"}and exit code1.

-

All other operations should return JSON with

Procedure

To install the FlexVolume driver:

- Ensure that the executable file exists on all nodes in the cluster.

- Place the executable file at the volume plug-in path: /etc/kubernetes/kubelet-plugins/volume/exec/<vendor>~<driver>/<driver>.

For example, to install the FlexVolume driver for the storage foo, place the executable file at: /etc/kubernetes/kubelet-plugins/volume/exec/openshift.com~foo/foo.

2.8.4. Consuming storage using FlexVolume drivers

Each PersistentVolume object in OpenShift Container Platform represents one storage asset in the storage back-end, such as a volume.

Procedure

-

Use the

PersistentVolumeobject to reference the installed storage.

Persistent volume object definition using FlexVolume drivers example

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv0001

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

flexVolume:

driver: openshift.com/foo

fsType: "ext4"

secretRef: foo-secret

readOnly: true

options:

fooServer: 192.168.0.1:1234

fooVolumeName: bar- 1

- The name of the volume. This is how it is identified through persistent volume claims or from Pods. This name can be different from the name of the volume on back-end storage.

- 2

- The amount of storage allocated to this volume.

- 3

- The name of the driver. This field is mandatory.

- 4

- The file system that is present on the volume. This field is optional.

- 5

- The reference to a secret. Keys and values from this secret are provided to the FlexVolume driver on invocation. This field is optional.

- 6

- The read-only flag. This field is optional.

- 7

- The additional options for the FlexVolume driver. In addition to the flags specified by the user in the

optionsfield, the following flags are also passed to the executable:"fsType":"<FS type>", "readwrite":"<rw>", "secret/key1":"<secret1>" ... "secret/keyN":"<secretN>"

Secrets are passed only to mount or unmount call-outs.

2.9. Persistent storage using GCE Persistent Disk

OpenShift Container Platform supports GCE Persistent Disk volumes (gcePD). You can provision your OpenShift Container Platform cluster with persistent storage using GCE. Some familiarity with Kubernetes and GCE is assumed.

The Kubernetes persistent volume framework allows administrators to provision a cluster with persistent storage and gives users a way to request those resources without having any knowledge of the underlying infrastructure.

GCE Persistent Disk volumes can be provisioned dynamically.

Persistent volumes are not bound to a single project or namespace; they can be shared across the OpenShift Container Platform cluster. Persistent volume claims are specific to a project or namespace and can be requested by users.

High availability of storage in the infrastructure is left to the underlying storage provider.

Additional references

2.9.1. Creating the GCE Storage Class

StorageClasses are used to differentiate and delineate storage levels and usages. By defining a storage class, users can obtain dynamically provisioned persistent volumes.

Procedure

- In the OpenShift Container Platform console, click Storage → Storage Classes.

- In the storage class overview, click Create Storage Class.

Define the desired options on the page that appears.

- Enter a name to reference the storage class.

- Enter an optional description.

- Select the reclaim policy.

-

Select

kubernetes.io/gce-pdfrom the drop down list. - Enter additional parameters for the storage class as desired.

- Click Create to create the storage class.

2.9.2. Creating the Persistent Volume Claim

Prerequisites

Storage must exist in the underlying infrastructure before it can be mounted as a volume in OpenShift Container Platform.

Procedure

- In the OpenShift Container Platform console, click Storage → Persistent Volume Claims.

- In the persistent volume claims overview, click Create Persistent Volume Claim.

Define the desired options on the page that appears.

- Select the storage class created previously from the drop-down menu.

- Enter a unique name for the storage claim.

- Select the access mode. This determines the read and write access for the created storage claim.

- Define the size of the storage claim.

- Click Create to create the persistent volume claim and generate a persistent volume.

2.9.3. Volume format

Before OpenShift Container Platform mounts the volume and passes it to a container, it checks that it contains a file system as specified by the fsType parameter in the persistent volume definition. If the device is not formatted with the file system, all data from the device is erased and the device is automatically formatted with the given file system.

This allows using unformatted GCE volumes as persistent volumes, because OpenShift Container Platform formats them before the first use.

2.10. Persistent storage using hostPath

A hostPath volume in an OpenShift Container Platform cluster mounts a file or directory from the host node’s filesystem into your Pod. Most Pods will not need a hostPath volume, but it does offer a quick option for testing should an application require it.

The cluster administrator must configure Pods to run as privileged. This grants access to Pods in the same node.

2.10.1. Overview

OpenShift Container Platform supports hostPath mounting for development and testing on a single-node cluster.

In a production cluster, you would not use hostPath. Instead, a cluster administrator would provision a network resource, such as a GCE Persistent Disk volume, an NFS share, or an Amazon EBS volume. Network resources support the use of StorageClasses to set up dynamic provisioning.

A hostPath volume must be provisioned statically.

2.10.2. Statically provisioning hostPath volumes

A Pod that uses a hostPath volume must be referenced by manual (static) provisioning.

Procedure

Define the persistent volume (PV). Create a file,

pv.yaml, with the PersistentVolume object definition:apiVersion: v1 kind: PersistentVolume metadata: name: task-pv-volume1 labels: type: local spec: storageClassName: manual2 capacity: storage: 5Gi accessModes: - ReadWriteOnce3 persistentVolumeReclaimPolicy: Retain hostPath: path: "/mnt/data"4 - 1

- The name of the volume. This name is how it is identified by PersistentVolumeClaims or Pods.

- 2

- Used to bind PersistentVolumeClaim requests to this PersistentVolume.

- 3

- The volume can be mounted as

read-writeby a single node. - 4

- The configuration file specifies that the volume is at

/mnt/dataon the cluster’s node.

Create the PV from the file:

$ oc create -f pv.yamlDefine the persistent volume claim (PVC). Create a file,

pvc.yaml, with the PersistentVolumeClaim object definition:apiVersion: v1 kind: PersistentVolumeClaim metadata: name: task-pvc-volume spec: accessModes: - ReadWriteOnce resources: requests: storage: 1Gi storageClassName: manualCreate the PVC from the file:

$ oc create -f pvc.yaml

2.10.3. Mounting the hostPath share in a privileged Pod

After the PersistentVolumeClaim has been created, it can be used inside by an application. The following example demonstrates mounting this share inside of a Pod.

Prerequisites

- A PersistentVolumeClaim exists that is mapped to the underlying hostPath share.

Procedure

Create a privileged Pod that mounts the existing PersistentVolumeClaim:

apiVersion: v1 kind: Pod metadata: name: pod-name1 spec: containers: ... securityContext: privileged: true2 volumeMounts: - mountPath: /data3 name: hostpath-privileged ... securityContext: {} volumes: - name: hostpath-privileged persistentVolumeClaim: claimName: task-pvc-volume4

2.11. Persistent Storage Using iSCSI

You can provision your OpenShift Container Platform cluster with persistent storage using iSCSI. Some familiarity with Kubernetes and iSCSI is assumed.

The Kubernetes persistent volume framework allows administrators to provision a cluster with persistent storage and gives users a way to request those resources without having any knowledge of the underlying infrastructure.

Persistent storage using iSCSI is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see https://access.redhat.com/support/offerings/techpreview/.

High-availability of storage in the infrastructure is left to the underlying storage provider.

When you use iSCSI on Amazon Web Services, you must update the default security policy to include TCP traffic between nodes on the iSCSI ports. By default, they are ports 860 and 3260.

OpenShift assumes that all nodes in the cluster have already configured iSCSI initator, i.e. have installed iscsi-initiator-utils package and configured their initiator name in /etc/iscsi/initiatorname.iscsi. See Storage Administration Guide linked above.

2.11.1. Provisioning

Verify that the storage exists in the underlying infrastructure before mounting it as a volume in OpenShift Container Platform. All that is required for the iSCSI is the iSCSI target portal, a valid iSCSI Qualified Name (IQN), a valid LUN number, the filesystem type, and the PersistentVolume API.

Example 2.1. Persistent Volume Object Definition

apiVersion: v1

kind: PersistentVolume

metadata:

name: iscsi-pv

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

iscsi:

targetPortal: 10.16.154.81:3260

iqn: iqn.2014-12.example.server:storage.target00

lun: 0

fsType: 'ext4'2.11.2. Enforcing Disk Quotas

Use LUN partitions to enforce disk quotas and size constraints. Each LUN is one persistent volume. Kubernetes enforces unique names for persistent volumes.

Enforcing quotas in this way allows the end user to request persistent storage by a specific amount (e.g, 10Gi) and be matched with a corresponding volume of equal or greater capacity.

2.11.3. iSCSI Volume Security

Users request storage with a PersistentVolumeClaim. This claim only lives in the user’s namespace and can only be referenced by a pod within that same namespace. Any attempt to access a persistent volume claim across a namespace causes the pod to fail.

Each iSCSI LUN must be accessible by all nodes in the cluster.

2.11.3.1. Challenge Handshake Authentication Protocol (CHAP) configuration

Optionally, OpenShift can use CHAP to authenticate itself to iSCSI targets:

apiVersion: v1

kind: PersistentVolume

metadata:

name: iscsi-pv

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

iscsi:

targetPortal: 10.0.0.1:3260

iqn: iqn.2016-04.test.com:storage.target00

lun: 0

fsType: ext4

chapAuthDiscovery: true

chapAuthSession: true

secretRef:

name: chap-secret 2.11.4. iSCSI Multipathing

For iSCSI-based storage, you can configure multiple paths by using the same IQN for more than one target portal IP address. Multipathing ensures access to the persistent volume when one or more of the components in a path fail.

To specify multi-paths in the pod specification use the portals field. For example:

apiVersion: v1

kind: PersistentVolume

metadata:

name: iscsi-pv

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

iscsi:

targetPortal: 10.0.0.1:3260

portals: ['10.0.2.16:3260', '10.0.2.17:3260', '10.0.2.18:3260']

iqn: iqn.2016-04.test.com:storage.target00

lun: 0

fsType: ext4

readOnly: false- 1

- Add additional target portals using the

portalsfield.

2.11.5. iSCSI Custom Initiator IQN

Configure the custom initiator iSCSI Qualified Name (IQN) if the iSCSI targets are restricted to certain IQNs, but the nodes that the iSCSI PVs are attached to are not guaranteed to have these IQNs.

To specify a custom initiator IQN, use initiatorName field.

apiVersion: v1

kind: PersistentVolume

metadata:

name: iscsi-pv

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

iscsi:

targetPortal: 10.0.0.1:3260

portals: ['10.0.2.16:3260', '10.0.2.17:3260', '10.0.2.18:3260']

iqn: iqn.2016-04.test.com:storage.target00

lun: 0

initiatorName: iqn.2016-04.test.com:custom.iqn

fsType: ext4

readOnly: false- 1

- Specify the name of the initiator.

2.12. Persistent storage using local volumes

OpenShift Container Platform can be provisioned with persistent storage by using local volumes. Local persistent volumes allow you to access local storage devices, such as a disk or partition, by using the standard PVC interface.

Local volumes can be used without manually scheduling Pods to nodes, because the system is aware of the volume node’s constraints. However, local volumes are still subject to the availability of the underlying node and are not suitable for all applications.

Local volumes can only be used as a statically created Persistent Volume.

2.12.1. Installing the Local Storage Operator

The Local Storage Operator is not installed in OpenShift Container Platform by default. Use the following procedure to install and configure this Operator to enable local volumes in your cluster.

Prerequisites

- Access to the OpenShift Container Platform web console or command-line interface (CLI).

Procedure

Create the

local-storageproject:$ oc new-project local-storageOptional: Allow local storage creation on master and infrastructure nodes.

You might want to use the Local Storage Operator to create volumes on master and infrastructure nodes, and not just worker nodes, to support components such as logging and monitoring.

To allow local storage creation on master and infrastructure nodes, add a toleration to the DaemonSet by entering the following commands:

$ oc patch ds local-storage-local-diskmaker -n local-storage -p '{"spec": {"template": {"spec": {"tolerations":[{"operator": "Exists"}]}}}}'$ oc patch ds local-storage-local-provisioner -n local-storage -p '{"spec": {"template": {"spec": {"tolerations":[{"operator": "Exists"}]}}}}'

From the UI

To install the Local Storage Operator from the web console, follow these steps:

- Log in to the OpenShift Container Platform web console.

- Navigate to Operators → OperatorHub.

- Type Local Storage into the filter box to locate the Local Storage Operator.

- Click Install.

- On the Create Operator Subscription page, select A specific namespace on the cluster. Select local-storage from the drop-down menu.

- Adjust the values for Update Channel and Approval Strategy to the values that you want.

- Click Subscribe.

Once finished, the Local Storage Operator will be listed in the Installed Operators section of the web console.

From the CLI

Install the Local Storage Operator from the CLI.

Create an object YAML file to define a Namespace, OperatorGroup, and Subscription for the Local Storage Operator, such as

local-storage.yaml:Example local-storage

apiVersion: v1 kind: Namespace metadata: name: local-storage --- apiVersion: operators.coreos.com/v1alpha2 kind: OperatorGroup metadata: name: local-operator-group namespace: local-storage spec: targetNamespaces: - local-storage --- apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: local-storage-operator namespace: local-storage spec: channel: "{product-version}"1 installPlanApproval: Automatic name: local-storage-operator source: redhat-operators sourceNamespace: openshift-marketplace- 1

- This field can be edited to match your release selection of OpenShift Container Platform.

Create the Local Storage Operator object by entering the following command:

$ oc apply -f local-storage.yamlAt this point, the Operator Lifecycle Manager (OLM) is now aware of the Local Storage Operator. A ClusterServiceVersion (CSV) for the Operator should appear in the target namespace, and APIs provided by the Operator should be available for creation.

Verify local storage installation by checking that all Pods and the Local Storage Operator have been created:

Check that all the required Pods have been created:

$ oc -n local-storage get pods NAME READY STATUS RESTARTS AGE local-storage-operator-746bf599c9-vlt5t 1/1 Running 0 19mCheck the ClusterServiceVersion (CSV) YAML manifest to see that the Local Storage Operator is available in the

local-storageproject:$ oc get csvs -n local-storage NAME DISPLAY VERSION REPLACES PHASE local-storage-operator.4.2.26-202003230335 Local Storage 4.2.26-202003230335 Succeeded

After all checks have passed, the Local Storage Operator is installed successfully.

2.12.2. Provision the local volumes

Local volumes cannot be created by dynamic provisioning. Instead, PersistentVolumes must be created by the Local Storage Operator. This provisioner will look for any devices, both file system and block volumes, at the paths specified in defined resource.

Prerequisites

- The Local Storage Operator is installed.

- Local disks are attached to the OpenShift Container Platform nodes.

Procedure

Create the local volume resource. This must define the nodes and paths to the local volumes.

NoteDo not use different StorageClass names for the same device. Doing so will create multiple persistent volumes (PVs).

Example: Filesystem

apiVersion: "local.storage.openshift.io/v1" kind: "LocalVolume" metadata: name: "local-disks" namespace: "local-storage"1 spec: nodeSelector:2 nodeSelectorTerms: - matchExpressions: - key: kubernetes.io/hostname operator: In values: - ip-10-0-140-183 - ip-10-0-158-139 - ip-10-0-164-33 storageClassDevices: - storageClassName: "local-sc" volumeMode: Filesystem3 fsType: xfs4 devicePaths:5 - /path/to/device6 - 1

- The namespace where the Local Storage Operator is installed.

- 2

- Optional: A node selector containing a list of nodes where the local storage volumes are attached. This example uses the node host names, obtained from

oc get node. If a value is not defined, then the Local Storage Operator will attempt to find matching disks on all available nodes. - 3

- The volume mode, either

FilesystemorBlock, defining the type of the local volumes. - 4

- The file system that is created when the local volume is mounted for the first time.

- 5

- The path containing a list of local storage devices to choose from.

- 6

- Replace this value with your actual local disks filepath to the LocalVolume resource, such as

/dev/xvdg. PVs are created for these local disks when the provisioner is deployed successfully.

Example: Block

apiVersion: "local.storage.openshift.io/v1" kind: "LocalVolume" metadata: name: "local-disks" namespace: "local-storage"1 spec: nodeSelector:2 nodeSelectorTerms: - matchExpressions: - key: kubernetes.io/hostname operator: In values: - ip-10-0-136-143 - ip-10-0-140-255 - ip-10-0-144-180 storageClassDevices: - storageClassName: "localblock-sc" volumeMode: Block3 devicePaths:4 - /path/to/device5 - 1

- The namespace where the Local Storage Operator is installed.

- 2

- Optional: A node selector containing a list of nodes where the local storage volumes are attached. This example uses the node host names, obtained from

oc get node. If a value is not defined, then the Local Storage Operator will attempt to find matching disks on all available nodes. - 3

- The volume mode, either

FilesystemorBlock, defining the type of the local volumes. - 4

- The path containing a list of local storage devices to choose from.

- 5

- Replace this value with your actual local disks filepath to the LocalVolume resource, such as

/dev/xvdg. PVs are created for these local disks when the provisioner is deployed successfully.

Create the local volume resource in your OpenShift Container Platform cluster, specifying the file you just created:

$ oc create -f <local-volume>.yamlVerify the provisioner was created, and the corresponding DaemonSets were created:

$ oc get all -n local-storage NAME READY STATUS RESTARTS AGE pod/local-disks-local-provisioner-h97hj 1/1 Running 0 46m pod/local-disks-local-provisioner-j4mnn 1/1 Running 0 46m pod/local-disks-local-provisioner-kbdnx 1/1 Running 0 46m pod/local-disks-local-diskmaker-ldldw 1/1 Running 0 46m pod/local-disks-local-diskmaker-lvrv4 1/1 Running 0 46m pod/local-disks-local-diskmaker-phxdq 1/1 Running 0 46m pod/local-storage-operator-54564d9988-vxvhx 1/1 Running 0 47m NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE service/local-storage-operator ClusterIP 172.30.49.90 <none> 60000/TCP 47m NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE daemonset.apps/local-disks-local-provisioner 3 3 3 3 3 <none> 46m daemonset.apps/local-disks-local-diskmaker 3 3 3 3 3 <none> 46m NAME READY UP-TO-DATE AVAILABLE AGE deployment.apps/local-storage-operator 1/1 1 1 47m NAME DESIRED CURRENT READY AGE replicaset.apps/local-storage-operator-54564d9988 1 1 1 47mNote the desired and current number of DaemonSet processes. If the desired count is

0, it indicates the label selectors were invalid.Verify that the PersistentVolumes were created:

$ oc get pv NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE local-pv-1cec77cf 100Gi RWO Delete Available local-sc 88m local-pv-2ef7cd2a 100Gi RWO Delete Available local-sc 82m local-pv-3fa1c73 100Gi RWO Delete Available local-sc 48m

2.12.3. Create the local volume PersistentVolumeClaim

Local volumes must be statically created as a PersistentVolumeClaim (PVC) to be accessed by the Pod.

Prerequisite

- PersistentVolumes have been created using the local volume provisioner.

Procedure

Create the PVC using the corresponding StorageClass:

kind: PersistentVolumeClaim apiVersion: v1 metadata: name: local-pvc-name1 spec: accessModes: - ReadWriteOnce volumeMode: Filesystem2 resources: requests: storage: 100Gi3 storageClassName: local-sc4 Create the PVC in the OpenShift Container Platform cluster, specifying the file you just created:

$ oc create -f <local-pvc>.yaml

2.12.4. Attach the local claim

After a local volume has been mapped to a PersistentVolumeClaim (PVC) it can be specified inside of a resource.

Prerequisites

- A PVC exists in the same namespace.

Procedure

Include the defined claim in the resource’s Spec. The following example declares the PVC inside a Pod:

apiVersion: v1 kind: Pod spec: ... containers: volumeMounts: - name: localpvc1 mountPath: "/data"2 volumes: - name: localpvc persistentVolumeClaim: claimName: localpvc3 Create the resource in the OpenShift Container Platform cluster, specifying the file you just created:

$ oc create -f <local-pod>.yaml

2.12.5. Deleting the Local Storage Operator’s resources

2.12.5.1. Removing a local volume