Chapter 15. Geo-replication

Geo-replication allows multiple, geographically distributed Red Hat Quay deployments to work as a single registry from the perspective of a client or user. It significantly improves push and pull performance in a globally-distributed Red Hat Quay setup. Image data is asynchronously replicated in the background with transparent failover and redirect for clients.

With Red Hat Quay 3.7, geo-replication is supported on both standalone and Operator deployments.

15.1. Geo-replication features

- When geo-replication is configured, container image pushes will be written to the preferred storage engine for that Red Hat Quay instance. This is typically the nearest storage backend within the region.

- After the initial push, image data will be replicated in the background to other storage engines.

- The list of replication locations is configurable, and the locations can use different storage backends.

- An image pull will always use the closest available storage engine, to maximize pull performance.

- If replication has not been completed yet, the pull will use the source storage backend instead.
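The pull behavior described in the list above can be modeled with a short sketch. This is an illustrative model only, not Red Hat Quay's implementation: the function name `choose_pull_location` and its parameters are hypothetical, and it assumes the caller already knows which locations hold a copy of the image data.

```python
from typing import Iterable, Sequence


def choose_pull_location(
    preferred: Sequence[str],
    replicated_to: Iterable[str],
    source: str,
) -> str:
    """Return the storage location a pull would be served from.

    preferred: location IDs ordered from closest to farthest (assumed input).
    replicated_to: locations that already hold a replicated copy.
    source: the location the image was originally pushed to.
    """
    # The source location always holds the data, replicated or not.
    available = set(replicated_to) | {source}
    for location in preferred:
        if location in available:
            return location
    # No preferred location holds the data yet, so fall back to the source.
    return source
```

For example, a European instance that prefers `eustorage` is served from `usstorage` until background replication of a US push completes.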

15.2. Geo-replication requirements and constraints

- In geo-replicated setups, Red Hat Quay requires that all regions are able to read from and write to every other region’s object storage. Object storage must be geographically accessible by all other regions.

- In case of an object storage system failure of one geo-replicating site, that site’s Red Hat Quay deployment must be shut down so that clients are redirected to the remaining site with intact storage systems by a global load balancer. Otherwise, clients will experience pull and push failures.

- Red Hat Quay has no internal awareness of the health or availability of the connected object storage system. If the object storage system of one site becomes unavailable, there will be no automatic redirect to the remaining storage system, or systems, of the remaining site, or sites.

- Geo-replication is asynchronous. The permanent loss of a site incurs the loss of the data that has been saved in that site’s object storage system but has not yet been replicated to the remaining sites at the time of failure.

- A single database, and therefore all metadata and Red Hat Quay configuration, is shared across all regions.

  Geo-replication does not replicate the database. In the event of an outage, Red Hat Quay with geo-replication enabled will not fail over to another database.

- A single Redis cache is shared across the entire Red Hat Quay setup and needs to be accessible by all Red Hat Quay pods.

- The exact same configuration should be used across all regions, with the exception of the storage backend, which can be configured explicitly using the QUAY_DISTRIBUTED_STORAGE_PREFERENCE environment variable.

- Geo-replication requires object storage in each region. It does not work with local storage.

- Each region must be able to access every storage engine in each region, which requires a network path.

- Alternatively, the storage proxy option can be used.

- The entire storage backend, for example, all blobs, is replicated. Repository mirroring, by contrast, can be limited to a repository, or an image.

- All Red Hat Quay instances must share the same entrypoint, typically through a load balancer.

- All Red Hat Quay instances must have the same set of superusers, as they are defined inside the common configuration file.

- Geo-replication requires your Clair configuration to be set to unmanaged. An unmanaged Clair database allows the Red Hat Quay Operator to work in a geo-replicated environment, where multiple instances of the Red Hat Quay Operator must communicate with the same database. For more information, see Advanced Clair configuration.

- Geo-replication requires SSL/TLS certificates and keys. For more information, see Using SSL/TLS to protect connections to Red Hat Quay.

If the above requirements cannot be met, you should instead use two or more distinct Red Hat Quay deployments and take advantage of repository mirroring functions.

15.3. Geo-replication using standalone Red Hat Quay

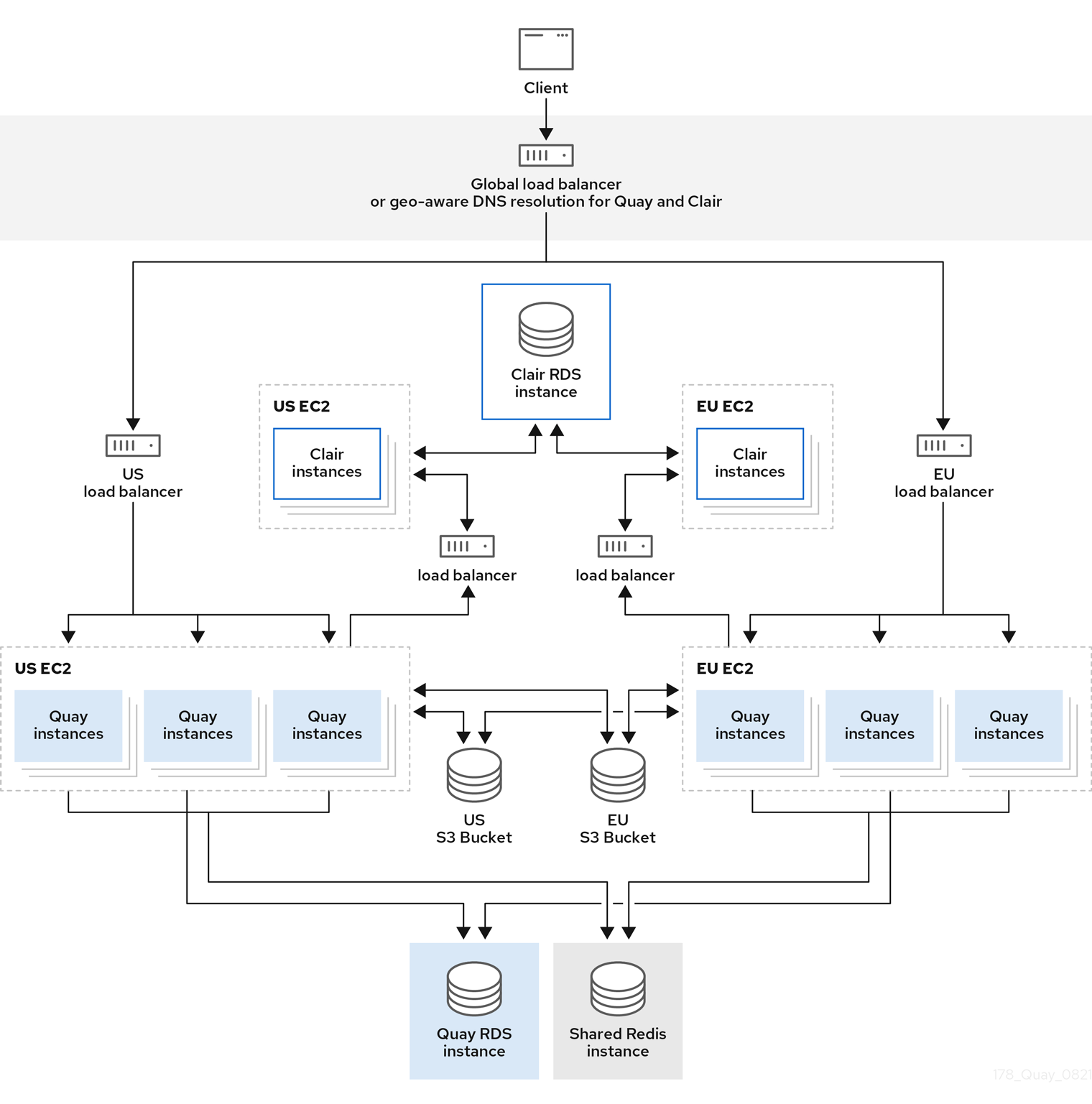

In the image above, Red Hat Quay is running standalone in two separate regions, with a common database and a common Redis instance. Localized image storage is provided in each region and image pulls are served from the closest available storage engine. Container image pushes are written to the preferred storage engine for the Red Hat Quay instance, and will then be replicated, in the background, to the other storage engines.

If Clair fails in one cluster, for example, the US cluster, US users would not see vulnerability reports in Red Hat Quay for the second cluster (EU). This is because all Clair instances have the same state. When Clair fails, it is usually because of a problem within the cluster.

Geo-replication architecture

15.3.1. Enable storage replication - standalone Quay

Use the following procedure to enable storage replication on Red Hat Quay.

Procedure

- In your Red Hat Quay config editor, locate the Registry Storage section.

- Click Enable Storage Replication.

- Add each of the storage engines to which data will be replicated. All storage engines to be used must be listed.

If complete replication of all images to all storage engines is required, click Replicate to storage engine by default under each storage engine configuration. This ensures that all images are replicated to that storage engine.

Note: To enable per-namespace replication, contact Red Hat Quay support.

- When finished, click Save Configuration Changes. The configuration changes will take effect after Red Hat Quay restarts.

After adding storage and enabling Replicate to storage engine by default for geo-replication, you must sync existing image data across all storage. To do this, you must oc exec (alternatively, docker exec or kubectl exec) into the container and enter the following commands:

    # scl enable python27 bash
    # python -m util.backfillreplication

Note: This is a one-time operation to sync content after adding new storage.

15.3.2. Run Red Hat Quay with storage preferences

- Copy the config.yaml to all machines running Red Hat Quay.

- For each machine in each region, add a QUAY_DISTRIBUTED_STORAGE_PREFERENCE environment variable with the preferred storage engine for the region in which the machine is running.

  For example, for a machine running in Europe with the config directory on the host available from $QUAY/config:

      $ sudo podman run -d --rm -p 80:8080 -p 443:8443 \
          --name=quay \
          -v $QUAY/config:/conf/stack:Z \
          -e QUAY_DISTRIBUTED_STORAGE_PREFERENCE=europestorage \
          registry.redhat.io/quay/quay-rhel8:v3.8.15

  Note: The value of the environment variable specified must match the name of a Location ID as defined in the config panel.

- Restart all Red Hat Quay containers.
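Because the environment variable must name an existing Location ID, a quick check before restarting the containers can catch a mismatch early. The following is a minimal sketch, assuming the config.yaml has already been loaded into a Python dictionary and that Location IDs are the keys of `DISTRIBUTED_STORAGE_CONFIG`. The helper name `check_storage_preference` is hypothetical and is not part of Red Hat Quay.

```python
def check_storage_preference(config: dict, env: dict) -> str:
    """Return the preferred location if it is a configured Location ID.

    config: the parsed config.yaml as a dictionary (assumed already loaded).
    env: the environment mapping for the container, for example os.environ.
    """
    locations = config.get("DISTRIBUTED_STORAGE_CONFIG", {})
    preference = env.get("QUAY_DISTRIBUTED_STORAGE_PREFERENCE")
    if preference is None:
        raise ValueError("QUAY_DISTRIBUTED_STORAGE_PREFERENCE is not set")
    if preference not in locations:
        known = ", ".join(sorted(locations)) or "none"
        raise ValueError(
            f"{preference!r} is not a configured Location ID (known: {known})"
        )
    return preference
```

Passing `os.environ` as `env` checks the current process environment. A plain dictionary works equally well for validating a planned deployment.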

15.4. Geo-replication using the Red Hat Quay Operator

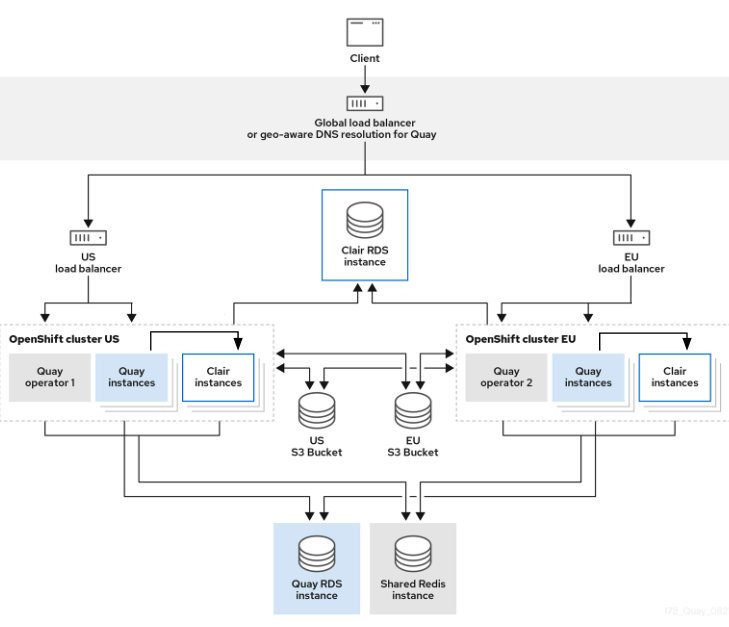

In the example shown above, the Red Hat Quay Operator is deployed in two separate regions, with a common database and a common Redis instance. Localized image storage is provided in each region and image pulls are served from the closest available storage engine. Container image pushes are written to the preferred storage engine for the Quay instance, and will then be replicated, in the background, to the other storage engines.

Because the Operator now manages the Clair security scanner and its database separately, geo-replication setups can be leveraged so that they do not manage the Clair database. Instead, an external shared database would be used. Red Hat Quay and Clair support several providers and vendors of PostgreSQL, which can be found in the Red Hat Quay 3.x test matrix. Additionally, the Operator also supports custom Clair configurations that can be injected into the deployment, which allows users to configure Clair with the connection credentials for the external database.

15.4.1. Setting up geo-replication on OpenShift

Procedure

Deploy a PostgreSQL instance for Quay:

- Log in to the database.

- Create a database for Quay:

      CREATE DATABASE quay;

- Enable the pg_trgm extension inside the database:

      \c quay;
      CREATE EXTENSION IF NOT EXISTS pg_trgm;

Deploy a Redis instance:

Note:

- Deploying a Redis instance might be unnecessary if your cloud provider has its own service.

- Deploying a Redis instance is required if you are leveraging Builders.

- Deploy a VM for Redis.

- Make sure that it is accessible from the clusters where Quay is running.

- Port 6379/TCP must be open.

- Run Redis inside the instance:

      sudo dnf install -y podman
      podman run -d --name redis -p 6379:6379 redis

Create two object storage backends, one for each cluster

Ideally one object storage bucket will be close to the 1st cluster (primary) while the other will run closer to the 2nd cluster (secondary).

- Deploy the clusters with the same config bundle, using environment variable overrides to select the appropriate storage backend for an individual cluster

- Configure a load balancer, to provide a single entry point to the clusters
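The requirement that port 6379/TCP is reachable from every cluster can be verified with a small TCP probe run from a machine or pod in each cluster. This is a hedged sketch: the function name `port_is_open` is hypothetical, and the check only confirms that a TCP connection can be established. It does not speak the Redis protocol or verify authentication.

```python
import socket


def port_is_open(host: str, port: int = 6379, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False
```

The same probe can be pointed at the PostgreSQL host and port, since the database must also be reachable from all clusters.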

15.4.1.1. Configuration

The config.yaml file is shared between clusters, and will contain the details for the common PostgreSQL, Redis and storage backends:

config.yaml

    SERVER_HOSTNAME: <georep.quayteam.org or any other name>
    DB_CONNECTION_ARGS:
      autorollback: true
      threadlocals: true
    DB_URI: postgresql://postgres:password@10.19.0.1:5432/quay
    BUILDLOGS_REDIS:
      host: 10.19.0.2
      port: 6379
    USER_EVENTS_REDIS:
      host: 10.19.0.2
      port: 6379
    DISTRIBUTED_STORAGE_CONFIG:
      usstorage:
        - GoogleCloudStorage
        - access_key: GOOGQGPGVMASAAMQABCDEFG
          bucket_name: georep-test-bucket-0
          secret_key: AYWfEaxX/u84XRA2vUX5C987654321
          storage_path: /quaygcp
      eustorage:
        - GoogleCloudStorage
        - access_key: GOOGQGPGVMASAAMQWERTYUIOP
          bucket_name: georep-test-bucket-1
          secret_key: AYWfEaxX/u84XRA2vUX5Cuj12345678
          storage_path: /quaygcp
    DISTRIBUTED_STORAGE_DEFAULT_LOCATIONS:
      - usstorage
      - eustorage
    DISTRIBUTED_STORAGE_PREFERENCE:
      - usstorage
      - eustorage
    FEATURE_STORAGE_REPLICATION: true

- A proper SERVER_HOSTNAME must be used for the route and must match the hostname of the global load balancer.

- To retrieve the configuration file for a Clair instance deployed using the OpenShift Operator, see Retrieving the Clair config.

Create the configBundleSecret:

$ oc create secret generic --from-file config.yaml=./config.yaml georep-config-bundle

In each of the clusters, set the configBundleSecret and use the QUAY_DISTRIBUTED_STORAGE_PREFERENCE environment variable override to configure the appropriate storage for that cluster.

The config.yaml file must be identical in both deployments. If you make a change in one cluster, you must make the same change in the other.
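One simple way to confirm that the config.yaml copies have not drifted apart is to compare their checksums. The sketch below assumes that each cluster's config.yaml has been exported to a local file. The function names `config_digest` and `configs_match` are hypothetical helpers, not part of any Red Hat Quay tooling.

```python
import hashlib
from pathlib import Path


def config_digest(path) -> str:
    """Return the SHA-256 hex digest of the file at path."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def configs_match(*paths) -> bool:
    """Return True if every file has byte-identical content."""
    digests = {config_digest(p) for p in paths}
    # A single distinct digest means all copies are the same.
    return len(digests) <= 1
```

The comparison is byte-for-byte, so differences in whitespace or key order are reported as a mismatch even when the YAML is semantically equivalent.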

US cluster

    apiVersion: quay.redhat.com/v1
    kind: QuayRegistry
    metadata:
      name: example-registry
      namespace: quay-enterprise
    spec:
      configBundleSecret: georep-config-bundle
      components:
        - kind: objectstorage
          managed: false
        - kind: route
          managed: true
        - kind: tls
          managed: false
        - kind: postgres
          managed: false
        - kind: clairpostgres
          managed: false
        - kind: redis
          managed: false
        - kind: quay
          managed: true
          overrides:
            env:
            - name: QUAY_DISTRIBUTED_STORAGE_PREFERENCE
              value: usstorage
        - kind: mirror
          managed: true
          overrides:
            env:
            - name: QUAY_DISTRIBUTED_STORAGE_PREFERENCE
              value: usstorage

Because TLS is unmanaged and the route is managed, you must supply the certificates either with the config tool or directly in the config bundle. For more information, see Configuring TLS and routes.

European cluster

    apiVersion: quay.redhat.com/v1
    kind: QuayRegistry
    metadata:
      name: example-registry
      namespace: quay-enterprise
    spec:
      configBundleSecret: georep-config-bundle
      components:
        - kind: objectstorage
          managed: false
        - kind: route
          managed: true
        - kind: tls
          managed: false
        - kind: postgres
          managed: false
        - kind: clairpostgres
          managed: false
        - kind: redis
          managed: false
        - kind: quay
          managed: true
          overrides:
            env:
            - name: QUAY_DISTRIBUTED_STORAGE_PREFERENCE
              value: eustorage
        - kind: mirror
          managed: true
          overrides:
            env:
            - name: QUAY_DISTRIBUTED_STORAGE_PREFERENCE
              value: eustorage

Because TLS is unmanaged and the route is managed, you must supply the certificates either with the config tool or directly in the config bundle. For more information, see Configuring TLS and routes.

15.4.2. Mixed storage for geo-replication

Red Hat Quay geo-replication supports the use of different and multiple replication targets, for example, using AWS S3 storage on public cloud and using Ceph storage on-premises. This complicates the key requirement of granting access to all storage backends from all Red Hat Quay pods and cluster nodes. As a result, it is recommended that you use the following:

- A VPN to prevent visibility of the internal storage, or

- A token pair that only allows access to the specified bucket used by Red Hat Quay

This will result in the public cloud instance of Red Hat Quay having access to on-premises storage, but the network will be encrypted, protected, and will use ACLs, thereby meeting security requirements.

If you cannot implement these security measures, it may be preferable to deploy two distinct Red Hat Quay registries and to use repository mirroring as an alternative to geo-replication.