Post-installation configuration

Day 2 operations for OpenShift Container Platform

Chapter 1. Post-installation configuration overview

After installing OpenShift Container Platform, a cluster administrator can configure and customize the following components:

- Machine

- Cluster

- Node

- Network

- Storage

- Users

- Alerts and notifications

1.1. Configuration tasks to perform after installation

Cluster administrators can perform the following post-installation configuration tasks:

Configure operating system features: Machine Config Operator (MCO) manages MachineConfig objects. By using MCO, you can perform the following tasks on an OpenShift Container Platform cluster:

- Configure nodes by using MachineConfig objects

- Configure MCO-related custom resources

Configure cluster features: As a cluster administrator, you can modify the configuration resources of the major features of an OpenShift Container Platform cluster. These features include:

- Image registry

- Networking configuration

- Image build behavior

- Identity provider

- The etcd configuration

- Machine set creation to handle the workloads

- Cloud provider credential management

Configure cluster components to be private: By default, the installation program provisions OpenShift Container Platform by using a publicly accessible DNS and endpoints. If you want your cluster to be accessible only from within an internal network, configure the following components to be private:

- DNS

- Ingress Controller

- API server

Perform node operations: By default, OpenShift Container Platform uses Red Hat Enterprise Linux CoreOS (RHCOS) compute machines. As a cluster administrator, you can perform the following operations with the machines in your OpenShift Container Platform cluster:

- Add and remove compute machines

- Add and remove taints and tolerations to the nodes

- Configure the maximum number of pods per node

- Enable Device Manager

Configure network: After installing OpenShift Container Platform, you can configure the following:

- Ingress cluster traffic

- Node port service range

- Network policy

- Enabling the cluster-wide proxy

Configure storage: By default, containers operate using ephemeral storage or transient local storage. Ephemeral storage has a limited lifetime. To store data for the long term, you must configure persistent storage. You can configure storage by using one of the following methods:

- Dynamic provisioning: You can dynamically provision storage on demand by defining and creating storage classes that control different levels of storage, including storage access.

- Static provisioning: You can use Kubernetes persistent volumes to make existing storage available to a cluster. Static provisioning can support various device configurations and mount options.

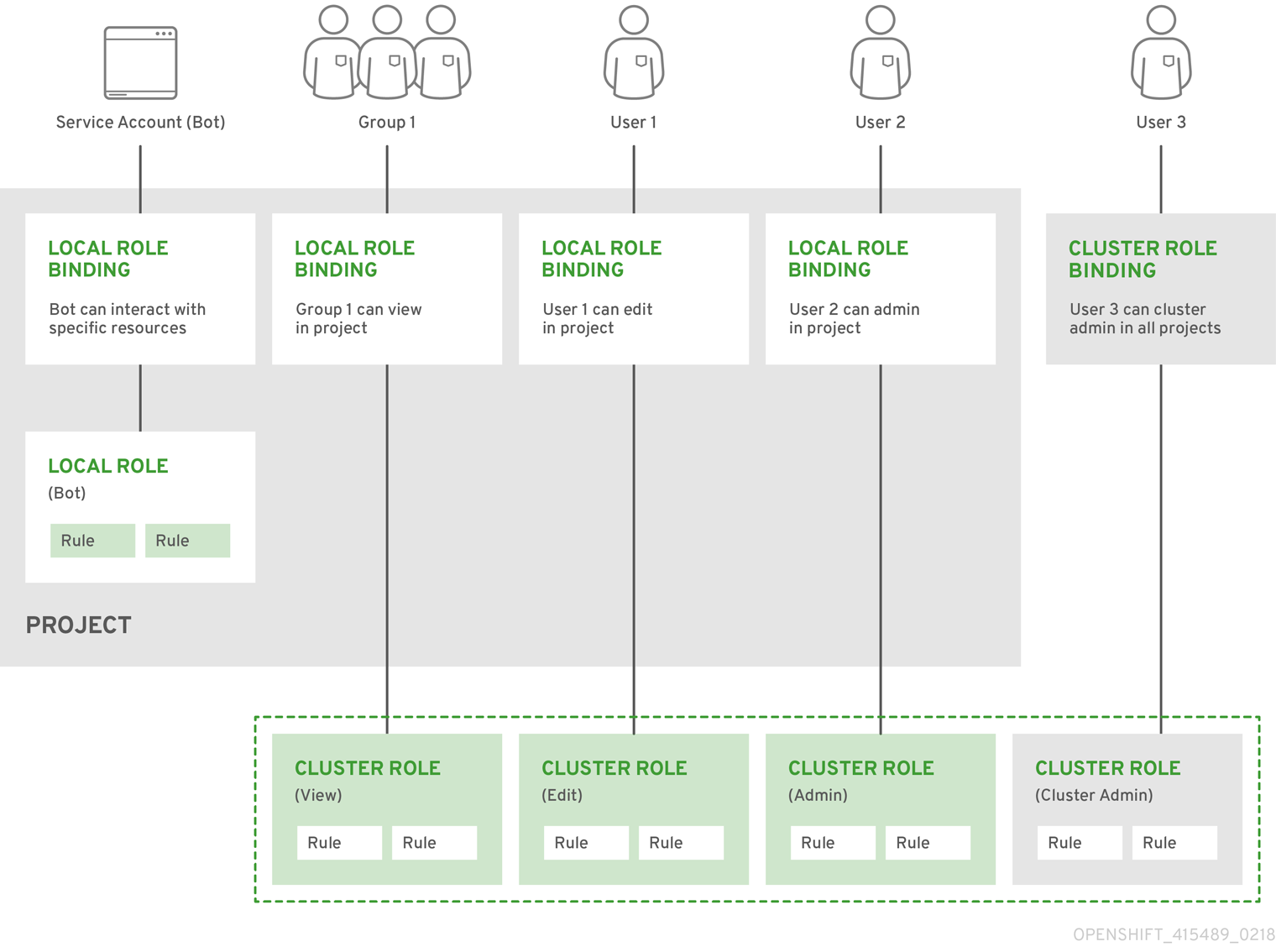

- Configure users: OAuth access tokens allow users to authenticate themselves to the API. As a cluster administrator, you can configure OAuth to perform the following tasks:

- Specify an identity provider

- Use role-based access control to define and supply permissions to users

- Install an Operator from OperatorHub

- Manage alerts and notifications: By default, firing alerts are displayed on the Alerting UI of the web console. You can also configure OpenShift Container Platform to send alert notifications to external systems.

Chapter 2. Configuring a private cluster

After you install an OpenShift Container Platform version 4.8 cluster, you can set some of its core components to be private.

2.1. About private clusters

By default, OpenShift Container Platform is provisioned using publicly accessible DNS and endpoints. You can set the DNS, Ingress Controller, and API server to private after you deploy your cluster.

If the cluster has any public subnets, load balancer services created by administrators might be publicly accessible. To ensure cluster security, verify that these services are explicitly annotated as private.

DNS

If you install OpenShift Container Platform on installer-provisioned infrastructure, the installation program creates records in a pre-existing public zone and, where possible, creates a private zone for the cluster’s own DNS resolution. In both the public zone and the private zone, the installation program or cluster creates DNS entries for *.apps, for the Ingress object, and api, for the API server.

The *.apps records in the public and private zone are identical, so when you delete the public zone, the private zone seamlessly provides all DNS resolution for the cluster.

Ingress Controller

Because the default Ingress object is created as public, the load balancer is internet-facing and in the public subnets. You can replace the default Ingress Controller with an internal one.

API server

By default, the installation program creates appropriate network load balancers for the API server to use for both internal and external traffic.

On Amazon Web Services (AWS), separate public and private load balancers are created. The load balancers are identical except that an additional port is available on the internal one for use within the cluster. Although the installation program automatically creates or destroys the load balancer based on API server requirements, the cluster does not manage or maintain them. As long as you preserve the cluster’s access to the API server, you can manually modify or move the load balancers. For the public load balancer, port 6443 is open and the health check is configured for HTTPS against the /readyz path.

On Google Cloud Platform, a single load balancer is created to manage both internal and external API traffic, so you do not need to modify the load balancer.

On Microsoft Azure, both public and private load balancers are created. However, because of limitations in the current implementation, you retain both load balancers in a private cluster.

2.2. Setting DNS to private

After you deploy a cluster, you can modify its DNS to use only a private zone.

Procedure

Review the DNS custom resource for your cluster:

    $ oc get dnses.config.openshift.io/cluster -o yaml

Example output

    apiVersion: config.openshift.io/v1
    kind: DNS
    metadata:
      creationTimestamp: "2019-10-25T18:27:09Z"
      generation: 2
      name: cluster
      resourceVersion: "37966"
      selfLink: /apis/config.openshift.io/v1/dnses/cluster
      uid: 0e714746-f755-11f9-9cb1-02ff55d8f976
    spec:
      baseDomain: <base_domain>
      privateZone:
        tags:
          Name: <infrastructure_id>-int
          kubernetes.io/cluster/<infrastructure_id>: owned
      publicZone:
        id: Z2XXXXXXXXXXA4
    status: {}

Note that the spec section contains both a private and a public zone.

Patch the DNS custom resource to remove the public zone:

    $ oc patch dnses.config.openshift.io/cluster --type=merge --patch='{"spec": {"publicZone": null}}'
    dns.config.openshift.io/cluster patched

Because the Ingress Controller consults the DNS definition when it creates Ingress objects, when you create or modify Ingress objects, only private records are created.

Important: DNS records for the existing Ingress objects are not modified when you remove the public zone.

Optional: Review the DNS custom resource for your cluster and confirm that the public zone was removed:

    $ oc get dnses.config.openshift.io/cluster -o yaml

Example output

    apiVersion: config.openshift.io/v1
    kind: DNS
    metadata:
      creationTimestamp: "2019-10-25T18:27:09Z"
      generation: 2
      name: cluster
      resourceVersion: "37966"
      selfLink: /apis/config.openshift.io/v1/dnses/cluster
      uid: 0e714746-f755-11f9-9cb1-02ff55d8f976
    spec:
      baseDomain: <base_domain>
      privateZone:
        tags:
          Name: <infrastructure_id>-int
          kubernetes.io/cluster/<infrastructure_id>-wfpg4: owned
    status: {}
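The patch command in this procedure uses --type=merge, which follows JSON merge patch semantics (RFC 7386): a key whose patch value is null is removed from the target. The following Python sketch illustrates that behavior on a local dictionary. It is not how oc applies the patch; the merge_patch function and the sample dns dictionary are hypothetical names used only for illustration.

```python
import json


def merge_patch(target, patch):
    """Apply an RFC 7386 style JSON merge patch and return a new value."""
    if not isinstance(patch, dict):
        # A non-object patch replaces the target outright.
        return patch
    result = dict(target) if isinstance(target, dict) else {}
    for key, value in patch.items():
        if value is None:
            # null in the patch means "delete this key".
            result.pop(key, None)
        else:
            result[key] = merge_patch(result.get(key), value)
    return result


# Hypothetical local copy of the spec shown in the example output.
dns = {
    "spec": {
        "baseDomain": "<base_domain>",
        "privateZone": {"tags": {"Name": "<infrastructure_id>-int"}},
        "publicZone": {"id": "Z2XXXXXXXXXXA4"},
    }
}

patch = json.loads('{"spec": {"publicZone": null}}')
patched = merge_patch(dns, patch)
print(json.dumps(patched["spec"], indent=2))
```

Only publicZone is dropped; baseDomain and privateZone are carried over unchanged, which matches the second example output in this procedure.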

2.3. Setting the Ingress Controller to private

After you deploy a cluster, you can modify its Ingress Controller to use only a private zone.

Procedure

Modify the default Ingress Controller to use only an internal endpoint:

    $ oc replace --force --wait --filename - <<EOF
    apiVersion: operator.openshift.io/v1
    kind: IngressController
    metadata:
      namespace: openshift-ingress-operator
      name: default
    spec:
      endpointPublishingStrategy:
        type: LoadBalancerService
        loadBalancer:
          scope: Internal
    EOF

Example output

    ingresscontroller.operator.openshift.io "default" deleted
    ingresscontroller.operator.openshift.io/default replaced

The public DNS entry is removed, and the private zone entry is updated.

2.4. Restricting the API server to private

After you deploy a cluster to Amazon Web Services (AWS) or Microsoft Azure, you can reconfigure the API server to use only the private zone.

Prerequisites

- Install the OpenShift CLI (oc).

- Have access to the web console as a user with admin privileges.

Procedure

In the web portal or console for AWS or Azure, take the following actions:

Locate and delete the appropriate load balancer component:

- For AWS, delete the external load balancer. The API DNS entry in the private zone already points to the internal load balancer, which uses an identical configuration, so you do not need to modify the internal load balancer.

- For Azure, delete the api-internal rule for the load balancer.

Delete the api.$clustername.$yourdomain DNS entry in the public zone.

Remove the external load balancers:

Important: You can run the following steps only for an installer-provisioned infrastructure (IPI) cluster. For a user-provisioned infrastructure (UPI) cluster, you must manually remove or disable the external load balancers.

From your terminal, list the cluster machines:

    $ oc get machine -n openshift-machine-api

Example output

    NAME                            STATE     TYPE        REGION      ZONE         AGE
    lk4pj-master-0                  running   m4.xlarge   us-east-1   us-east-1a   17m
    lk4pj-master-1                  running   m4.xlarge   us-east-1   us-east-1b   17m
    lk4pj-master-2                  running   m4.xlarge   us-east-1   us-east-1a   17m
    lk4pj-worker-us-east-1a-5fzfj   running   m4.xlarge   us-east-1   us-east-1a   15m
    lk4pj-worker-us-east-1a-vbghs   running   m4.xlarge   us-east-1   us-east-1a   15m
    lk4pj-worker-us-east-1b-zgpzg   running   m4.xlarge   us-east-1   us-east-1b   15m

You modify the control plane machines, which contain master in the name, in the following step.

Remove the external load balancer from each control plane machine.

Edit a control plane Machine object to remove the reference to the external load balancer:

    $ oc edit machines -n openshift-machine-api <master_name>

For <master_name>, specify the name of the control plane, or master, Machine object to modify.

Remove the lines that describe the external load balancer, which are marked in the following example, and save and exit the object specification:

    ...
    spec:
      providerSpec:
        value:
        ...
          loadBalancers:
          - name: lk4pj-ext   # remove this line
            type: network     # remove this line
          - name: lk4pj-int
            type: network

Repeat this process for each of the machines that contains master in the name.
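The manual edit above amounts to dropping one entry from the loadBalancers list while leaving the internal entry in place. The following Python sketch shows that transformation on a plain dictionary. It is an illustration only and does not talk to the cluster; the drop_external_load_balancers function is a hypothetical helper, and the "-ext" suffix is an assumption taken from the names in the example, so check the actual names in your own Machine objects.

```python
def drop_external_load_balancers(provider_spec, suffix="-ext"):
    """Return a copy of provider_spec without load balancers whose name ends in suffix."""
    kept = [
        lb
        for lb in provider_spec.get("loadBalancers", [])
        if not lb["name"].endswith(suffix)
    ]
    # Build a new dict so the caller's original spec is left untouched.
    return {**provider_spec, "loadBalancers": kept}


# Values copied from the example object specification above.
spec = {
    "loadBalancers": [
        {"name": "lk4pj-ext", "type": "network"},
        {"name": "lk4pj-int", "type": "network"},
    ]
}

updated = drop_external_load_balancers(spec)
print(updated["loadBalancers"])
```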

Chapter 3. Post-installation machine configuration tasks

There are times when you need to make changes to the operating systems running on OpenShift Container Platform nodes. This can include changing settings for network time service, adding kernel arguments, or configuring journaling in a specific way.

Aside from a few specialized features, most changes to operating systems on OpenShift Container Platform nodes can be done by creating what are referred to as MachineConfig objects that are managed by the Machine Config Operator.

Tasks in this section describe how to use features of the Machine Config Operator to configure operating system features on OpenShift Container Platform nodes.

3.1. Understanding the Machine Config Operator

3.1.1. Machine Config Operator

Purpose

The Machine Config Operator manages and applies configuration and updates of the base operating system and container runtime, including everything between the kernel and kubelet.

There are four components:

- machine-config-server: Provides Ignition configuration to new machines joining the cluster.

- machine-config-controller: Coordinates the upgrade of machines to the desired configurations defined by a MachineConfig object. Options are provided to control the upgrade for sets of machines individually.

- machine-config-daemon: Applies new machine configuration during update. Validates and verifies the state of the machine to the requested machine configuration.

- machine-config: Provides a complete source of machine configuration at installation, first start up, and updates for a machine.

3.1.2. Machine config overview

The Machine Config Operator (MCO) manages updates to systemd, CRI-O and Kubelet, the kernel, Network Manager and other system features. It also offers a MachineConfig CRD that can write configuration files onto the host (see machine-config-operator). Understanding what MCO does and how it interacts with other components is critical to making advanced, system-level changes to an OpenShift Container Platform cluster. Here are some things you should know about MCO, machine configs, and how they are used:

- A machine config can make a specific change to a file or service on the operating system of each system representing a pool of OpenShift Container Platform nodes.

- MCO applies changes to operating systems in pools of machines. All OpenShift Container Platform clusters start with worker and control plane node (also known as the master node) pools. By adding more role labels, you can configure custom pools of nodes. For example, you can set up a custom pool of worker nodes that includes particular hardware features needed by an application. However, examples in this section focus on changes to the default pool types.

Important: A node can have multiple labels applied that indicate its type, such as master or worker. However, it can be a member of only a single machine config pool.

- Some machine configuration must be in place before OpenShift Container Platform is installed to disk. In most cases, this can be accomplished by creating a machine config that is injected directly into the OpenShift Container Platform installer process, instead of running as a post-installation machine config. In other cases, you might need to do a bare metal installation where you pass kernel arguments at OpenShift Container Platform installer startup, to do such things as setting per-node individual IP addresses or advanced disk partitioning.

- MCO manages items that are set in machine configs. Manual changes you make to your systems are not overwritten by MCO, unless MCO is explicitly told to manage a conflicting file. In other words, MCO makes only the specific updates you request; it does not claim control over the whole node.

- Manual changes to nodes are strongly discouraged. If you need to decommission a node and start a new one, those direct changes would be lost.

- MCO is only supported for writing to files in the /etc and /var directories, although some other directories are writeable because they are symbolically linked to one of those areas. The /opt and /usr/local directories are examples.

- Ignition is the configuration format used in MachineConfigs. See the Ignition Configuration Specification v3.2.0 for details.

- Although Ignition config settings can be delivered directly at OpenShift Container Platform installation time, and are formatted in the same way that MCO delivers Ignition configs, MCO has no way of seeing what those original Ignition configs are. Therefore, you should wrap Ignition config settings into a machine config before deploying them.

- When a file managed by MCO changes outside of MCO, the Machine Config Daemon (MCD) sets the node as degraded. It will not overwrite the offending file, however, and should continue to operate in a degraded state.

- A key reason for using a machine config is that it will be applied when you spin up new nodes for a pool in your OpenShift Container Platform cluster. The machine-api-operator provisions a new machine and MCO configures it.

MCO uses Ignition as the configuration format. OpenShift Container Platform 4.6 moved from Ignition config specification version 2 to version 3.

3.1.2.1. What can you change with machine configs?

The kinds of components that MCO can change include:

config: Create Ignition config objects (see the Ignition configuration specification) to do things like modify files, systemd services, and other features on OpenShift Container Platform machines, including:

- Configuration files: Create or overwrite files in the /var or /etc directory.

- systemd units: Create and set the status of a systemd service or add to an existing systemd service by dropping in additional settings.

- users and groups: Change SSH keys in the passwd section post-installation.

Changing SSH keys via machine configs is only supported for the core user.

- kernelArguments: Add arguments to the kernel command line when OpenShift Container Platform nodes boot.

- kernelType: Optionally identify a non-standard kernel to use instead of the standard kernel. Use realtime to use the RT kernel (for RAN). This is only supported on select platforms.

- fips: Enable FIPS mode. FIPS should be set at installation time, not as a post-installation procedure.

The use of FIPS Validated / Modules in Process cryptographic libraries is only supported on OpenShift Container Platform deployments on the x86_64 architecture.

- extensions: Extend RHCOS features by adding selected pre-packaged software. For this feature, available extensions include usbguard and kernel modules.

- Custom resources (for ContainerRuntime and Kubelet): Outside of machine configs, MCO manages two special custom resources for modifying CRI-O container runtime settings (ContainerRuntime CR) and the Kubelet service (Kubelet CR).

The MCO is not the only Operator that can change operating system components on OpenShift Container Platform nodes. Other Operators can modify operating system-level features as well. One example is the Node Tuning Operator, which allows you to do node-level tuning through Tuned daemon profiles.

Tasks for the MCO configuration that can be done post-installation are included in the following procedures. See descriptions of RHCOS bare metal installation for system configuration tasks that must be done during or before OpenShift Container Platform installation.

3.1.2.2. Project

See the openshift-machine-config-operator GitHub site for details.

3.1.3. Checking machine config pool status

To see the status of the Machine Config Operator (MCO), its sub-components, and the resources it manages, use the following oc commands:

Procedure

To see the number of MCO-managed nodes available on your cluster for each machine config pool (MCP), run the following command:

    $ oc get machineconfigpool

Example output

    NAME     CONFIG                    UPDATED   UPDATING   DEGRADED   MACHINECOUNT   READYMACHINECOUNT   UPDATEDMACHINECOUNT   DEGRADEDMACHINECOUNT   AGE
    master   rendered-master-06c9c4…   True      False      False      3              3                   3                     0                      4h42m
    worker   rendered-worker-f4b64…    False     True       False      3              2                   2                     0                      4h42m

where:

- UPDATED

- The True status indicates that the MCO has applied the current machine config to the nodes in that MCP. The current machine config is specified in the STATUS field in the oc get mcp output. The False status indicates a node in the MCP is updating.

- UPDATING

- The True status indicates that the MCO is applying the desired machine config, as specified in the MachineConfigPool custom resource, to at least one of the nodes in that MCP. The desired machine config is the new, edited machine config. Nodes that are updating might not be available for scheduling. The False status indicates that all nodes in the MCP are updated.

- DEGRADED

- A True status indicates the MCO is blocked from applying the current or desired machine config to at least one of the nodes in that MCP, or the configuration is failing. Nodes that are degraded might not be available for scheduling. A False status indicates that all nodes in the MCP are ready.

- MACHINECOUNT

- Indicates the total number of machines in that MCP.

- READYMACHINECOUNT

- Indicates the total number of machines in that MCP that are ready for scheduling.

- UPDATEDMACHINECOUNT

- Indicates the total number of machines in that MCP that have the current machine config.

- DEGRADEDMACHINECOUNT

- Indicates the total number of machines in that MCP that are marked as degraded or unreconcilable.

In the previous output, there are three control plane (master) nodes and three worker nodes. The control plane MCP and the associated nodes are updated to the current machine config. The nodes in the worker MCP are being updated to the desired machine config. Two of the nodes in the worker MCP are updated and one is still updating, as indicated by the UPDATEDMACHINECOUNT being 2. There are no issues, as indicated by the DEGRADEDMACHINECOUNT being 0 and DEGRADED being False.

While the nodes in the MCP are updating, the machine config listed under CONFIG is the current machine config, which the MCP is being updated from. When the update is complete, the listed machine config is the desired machine config, which the MCP was updated to.

Note: If a node is being cordoned, that node is not included in the READYMACHINECOUNT, but is included in the MACHINECOUNT. Also, the MCP status is set to UPDATING. Because the node has the current machine config, it is counted in the UPDATEDMACHINECOUNT total:

Example output

    NAME     CONFIG                    UPDATED   UPDATING   DEGRADED   MACHINECOUNT   READYMACHINECOUNT   UPDATEDMACHINECOUNT   DEGRADEDMACHINECOUNT   AGE
    master   rendered-master-06c9c4…   True      False      False      3              3                   3                     0                      4h42m
    worker   rendered-worker-c1b41a…   False     True       False      3              2                   3                     0                      4h42m

To check the status of the nodes in an MCP by examining the MachineConfigPool custom resource, run the following command:

    $ oc describe mcp worker

Example output

    ...
      Degraded Machine Count:     0
      Machine Count:              3
      Observed Generation:        2
      Ready Machine Count:        3
      Unavailable Machine Count:  0
      Updated Machine Count:      3
    Events:                       <none>

Note: If a node is being cordoned, the node is not included in the Ready Machine Count. It is included in the Unavailable Machine Count:

Example output

    ...
      Degraded Machine Count:     0
      Machine Count:              3
      Observed Generation:        2
      Ready Machine Count:        2
      Unavailable Machine Count:  1
      Updated Machine Count:      3

To see each existing MachineConfig object, run the following command:

    $ oc get machineconfigs

Example output

    NAME                          GENERATEDBYCONTROLLER          IGNITIONVERSION   AGE
    00-master                     2c9371fbb673b97a6fe8b1c52...   3.2.0             5h18m
    00-worker                     2c9371fbb673b97a6fe8b1c52...   3.2.0             5h18m
    01-master-container-runtime   2c9371fbb673b97a6fe8b1c52...   3.2.0             5h18m
    01-master-kubelet             2c9371fbb673b97a6fe8b1c52…     3.2.0             5h18m
    ...
    rendered-master-dde...        2c9371fbb673b97a6fe8b1c52...   3.2.0             5h18m
    rendered-worker-fde...        2c9371fbb673b97a6fe8b1c52...   3.2.0             5h18m

Note that the MachineConfig objects listed as rendered are not meant to be changed or deleted.

To view the contents of a particular machine config (in this case, 01-master-kubelet), run the following command:

    $ oc describe machineconfigs 01-master-kubelet

The output from the command shows that this MachineConfig object contains both configuration files (cloud.conf and kubelet.conf) and a systemd service (Kubernetes Kubelet):

Example output

    Name:         01-master-kubelet
    ...
    Spec:
      Config:
        Ignition:
          Version:  3.2.0
        Storage:
          Files:
            Contents:
              Source:   data:,
            Mode:       420
            Overwrite:  true
            Path:       /etc/kubernetes/cloud.conf
            Contents:
              Source:   data:,kind%3A%20KubeletConfiguration%0AapiVersion%3A%20kubelet.config.k8s.io%2Fv1beta1%0Aauthentication%3A%0A%20%20x509%3A%0A%20%20%20%20clientCAFile%3A%20%2Fetc%2Fkubernetes%2Fkubelet-ca.crt%0A%20%20anonymous...
            Mode:       420
            Overwrite:  true
            Path:       /etc/kubernetes/kubelet.conf
        Systemd:
          Units:
            Contents:  [Unit]
    Description=Kubernetes Kubelet
    Wants=rpc-statd.service network-online.target crio.service
    After=network-online.target crio.service
    ExecStart=/usr/bin/hyperkube \
        kubelet \
          --config=/etc/kubernetes/kubelet.conf \ ...
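The Source values in the output above are percent-encoded data: URLs, which makes the embedded file hard to read. The following Python sketch decodes such a value with the standard library. The decode_data_source function is a hypothetical helper, the sample string is only the visible, untruncated prefix of the kubelet.conf source shown above, and the sketch handles only the plain "data:," form, not base64-encoded sources.

```python
from urllib.parse import unquote


def decode_data_source(source):
    """Decode a percent-encoded 'data:,' source string into plain text."""
    prefix = "data:,"
    if not source.startswith(prefix):
        raise ValueError("expected a source starting with 'data:,'")
    return unquote(source[len(prefix):])


# Visible prefix of the kubelet.conf source from the example output;
# the real value is longer and is truncated in the documentation.
sample = (
    "data:,kind%3A%20KubeletConfiguration%0A"
    "apiVersion%3A%20kubelet.config.k8s.io%2Fv1beta1%0A"
    "authentication%3A%0A%20%20x509%3A%0A"
    "%20%20%20%20clientCAFile%3A%20%2Fetc%2Fkubernetes%2Fkubelet-ca.crt"
)

decoded = decode_data_source(sample)
print(decoded)
```

An empty source such as the one for cloud.conf ("data:,") decodes to an empty string, which corresponds to an empty file.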

If something goes wrong with a machine config that you apply, you can always back out that change. For example, if you had run oc create -f ./myconfig.yaml to apply a machine config, you could remove that machine config by running the following command:

    $ oc delete -f ./myconfig.yaml

If that was the only problem, the nodes in the affected pool should return to a non-degraded state. This actually causes the rendered configuration to roll back to its previously rendered state.

If you add your own machine configs to your cluster, you can use the commands shown in the previous example to check their status and the related status of the pool to which they are applied.
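When you check pool status repeatedly, it can help to reduce the oc get machineconfigpool table to the pools that still need attention. The following Python sketch parses output of that shape from a string. It is a rough illustration under stated assumptions: parse_mcp_table and pools_still_updating are hypothetical helpers, the sample text is adapted from the example output in this section, and splitting on whitespace only works when no column is empty.

```python
def parse_mcp_table(text):
    """Parse whitespace-separated machine config pool output into a list of dicts."""
    lines = [line.split() for line in text.strip().splitlines()]
    header, rows = lines[0], lines[1:]
    return [dict(zip(header, row)) for row in rows]


def pools_still_updating(pools):
    """Return names of pools that report UPDATING or have machines not yet updated."""
    return [
        pool["NAME"]
        for pool in pools
        if pool["UPDATING"] == "True"
        or int(pool["UPDATEDMACHINECOUNT"]) < int(pool["MACHINECOUNT"])
    ]


# Sample adapted from the example output; config names are shortened.
sample = """
NAME CONFIG UPDATED UPDATING DEGRADED MACHINECOUNT READYMACHINECOUNT UPDATEDMACHINECOUNT DEGRADEDMACHINECOUNT AGE
master rendered-master-06c9c4 True False False 3 3 3 0 4h42m
worker rendered-worker-f4b64 False True False 3 2 2 0 4h42m
"""

pools = parse_mcp_table(sample)
print(pools_still_updating(pools))
```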

3.2. Using MachineConfig objects to configure nodes

You can use the tasks in this section to create MachineConfig objects that modify files, systemd unit files, and other operating system features running on OpenShift Container Platform nodes. For more ideas on working with machine configs, see content related to updating SSH authorized keys, verifying image signatures, enabling SCTP, and configuring iSCSI initiatornames for OpenShift Container Platform.

OpenShift Container Platform supports Ignition specification version 3.2. All new machine configs you create going forward should be based on Ignition specification version 3.2. If you are upgrading your OpenShift Container Platform cluster, any existing Ignition specification version 2.x machine configs will be translated automatically to specification version 3.2.

Use the following "Configuring chrony time service" procedure as a model for how to go about adding other configuration files to OpenShift Container Platform nodes.

3.2.1. Configuring chrony time service

You can set the time server and related settings used by the chrony time service (chronyd) by modifying the contents of the chrony.conf file and passing those contents to your nodes as a machine config.

Procedure

Create a Butane config including the contents of the chrony.conf file. For example, to configure chrony on worker nodes, create a 99-worker-chrony.bu file.

Note: See "Creating machine configs with Butane" for information about Butane.

    variant: openshift
    version: 4.8.0
    metadata:
      name: 99-worker-chrony
      labels:
        machineconfiguration.openshift.io/role: worker
    storage:
      files:
      - path: /etc/chrony.conf
        mode: 0644
        overwrite: true
        contents:
          inline: |
            pool 0.rhel.pool.ntp.org iburst
            driftfile /var/lib/chrony/drift
            makestep 1.0 3
            rtcsync
            logdir /var/log/chrony

- name and role label: On control plane nodes, substitute master for worker in both of these locations.

- mode: Specify an octal value mode for the mode field in the machine config file. After creating the file and applying the changes, the mode is converted to a decimal value. You can check the YAML file with the command oc get mc <mc-name> -o yaml.

- pool: Specify any valid, reachable time source, such as the one provided by your DHCP server. Alternately, you can specify any of the following NTP servers: 1.rhel.pool.ntp.org, 2.rhel.pool.ntp.org, or 3.rhel.pool.ntp.org.

Use Butane to generate a MachineConfig object file, 99-worker-chrony.yaml, containing the configuration to be delivered to the nodes:

    $ butane 99-worker-chrony.bu -o 99-worker-chrony.yaml

Apply the configurations in one of two ways:

- If the cluster is not running yet, after you generate manifest files, add the MachineConfig object file to the <installation_directory>/openshift directory, and then continue to create the cluster.

- If the cluster is already running, apply the file:

      $ oc apply -f ./99-worker-chrony.yaml
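The mode field in this procedure is written in octal but is shown in decimal after the machine config is applied, which is why file modes appear as 420 in oc describe machineconfigs output. The following Python sketch shows the conversion in both directions. The function names are hypothetical and the sketch only demonstrates the arithmetic; it does not read anything from a cluster.

```python
def octal_mode_to_decimal(mode):
    """Convert an octal file mode string such as '0644' to its decimal value."""
    return int(mode, 8)


def decimal_mode_to_octal(value):
    """Convert a decimal mode value back to a four-digit octal string."""
    return format(value, "04o")


decimal_mode = octal_mode_to_decimal("0644")
octal_mode = decimal_mode_to_octal(420)
print(decimal_mode, octal_mode)
```

Octal 0644 is 6 x 64 + 4 x 8 + 4, which equals 420 in decimal.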

3.2.2. Adding kernel arguments to nodes

In some special cases, you might want to add kernel arguments to a set of nodes in your cluster. This should only be done with caution and clear understanding of the implications of the arguments you set.

Improper use of kernel arguments can result in your systems becoming unbootable.

Examples of kernel arguments you could set include:

- enforcing=0: Configures Security Enhanced Linux (SELinux) to run in permissive mode. In permissive mode, the system acts as if SELinux is enforcing the loaded security policy, including labeling objects and emitting access denial entries in the logs, but it does not actually deny any operations. While not supported for production systems, permissive mode can be helpful for debugging.

- nosmt: Disables symmetric multithreading (SMT) in the kernel. Multithreading allows multiple logical threads for each CPU. You could consider nosmt in multi-tenant environments to reduce risks from potential cross-thread attacks. By disabling SMT, you essentially choose security over performance.

See Kernel.org kernel parameters for a list and descriptions of kernel arguments.

In the following procedure, you create a MachineConfig object that identifies:

- A set of machines to which you want to add the kernel argument. In this case, machines with a worker role.

- Kernel arguments that are appended to the end of the existing kernel arguments.

- A label that indicates where in the list of machine configs the change is applied.
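The numeric prefix in a machine config name determines where it appears in the list of machine configs, because the names sort as plain strings. The following Python sketch sorts the names from the example listings in this procedure to show where a name beginning with 05- lands. It only illustrates the string ordering visible in the oc get MachineConfig output and makes no claim about how the Machine Config Operator processes the list internally.

```python
# Names taken from the example listing in this procedure
# (rendered-* entries are omitted for brevity).
existing = [
    "00-master",
    "00-worker",
    "01-master-container-runtime",
    "01-master-kubelet",
    "01-worker-container-runtime",
    "01-worker-kubelet",
    "99-master-generated-registries",
    "99-master-ssh",
    "99-worker-generated-registries",
    "99-worker-ssh",
]

new_name = "05-worker-kernelarg-selinuxpermissive"

# Plain string sorting places the 05- name between the 01- and 99- entries.
ordered = sorted(existing + [new_name])
position = ordered.index(new_name)
print(ordered[position - 1], "<", new_name, "<", ordered[position + 1])
```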

Prerequisites

- Have administrative privilege to a working OpenShift Container Platform cluster.

Procedure

List existing

MachineConfigobjects for your OpenShift Container Platform cluster to determine how to label your machine config:$ oc get MachineConfigExample output

NAME GENERATEDBYCONTROLLER IGNITIONVERSION AGE 00-master 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33m 00-worker 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33m 01-master-container-runtime 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33m 01-master-kubelet 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33m 01-worker-container-runtime 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33m 01-worker-kubelet 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33m 99-master-generated-registries 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33m 99-master-ssh 3.2.0 40m 99-worker-generated-registries 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33m 99-worker-ssh 3.2.0 40m rendered-master-23e785de7587df95a4b517e0647e5ab7 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33m rendered-worker-5d596d9293ca3ea80c896a1191735bb1 52dd3ba6a9a527fc3ab42afac8d12b693534c8c9 3.2.0 33mCreate a

MachineConfigobject file that identifies the kernel argument (for example,05-worker-kernelarg-selinuxpermissive.yaml)apiVersion: machineconfiguration.openshift.io/v1 kind: MachineConfig metadata: labels: machineconfiguration.openshift.io/role: worker1 name: 05-worker-kernelarg-selinuxpermissive2 spec: config: ignition: version: 3.2.0 kernelArguments: - enforcing=03 Create the new machine config:

    $ oc create -f 05-worker-kernelarg-selinuxpermissive.yaml

Check the machine configs to see that the new one was added:

    $ oc get MachineConfig

Example output

    NAME                                               GENERATEDBYCONTROLLER                      IGNITIONVERSION   AGE
    00-master                                          52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    00-worker                                          52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    01-master-container-runtime                        52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    01-master-kubelet                                  52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    01-worker-container-runtime                        52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    01-worker-kubelet                                  52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    05-worker-kernelarg-selinuxpermissive                                                         3.2.0             105s
    99-master-generated-registries                     52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    99-master-ssh                                                                                 3.2.0             40m
    99-worker-generated-registries                     52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    99-worker-ssh                                                                                 3.2.0             40m
    rendered-master-23e785de7587df95a4b517e0647e5ab7   52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    rendered-worker-5d596d9293ca3ea80c896a1191735bb1   52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m

Check the nodes:

    $ oc get nodes

Example output

    NAME                           STATUS                     ROLES    AGE   VERSION
    ip-10-0-136-161.ec2.internal   Ready                      worker   28m   v1.21.0
    ip-10-0-136-243.ec2.internal   Ready                      master   34m   v1.21.0
    ip-10-0-141-105.ec2.internal   Ready,SchedulingDisabled   worker   28m   v1.21.0
    ip-10-0-142-249.ec2.internal   Ready                      master   34m   v1.21.0
    ip-10-0-153-11.ec2.internal    Ready                      worker   28m   v1.21.0
    ip-10-0-153-150.ec2.internal   Ready                      master   34m   v1.21.0

You can see that scheduling on each worker node is disabled as the change is being applied.

Check that the kernel argument worked by going to one of the worker nodes and listing the kernel command line arguments (in /proc/cmdline on the host):

    $ oc debug node/ip-10-0-141-105.ec2.internal

Example output

    Starting pod/ip-10-0-141-105ec2internal-debug ...
    To use host binaries, run `chroot /host`

    sh-4.2# cat /host/proc/cmdline
    BOOT_IMAGE=/ostree/rhcos-... console=tty0 console=ttyS0,115200n8 rootflags=defaults,prjquota rw root=UUID=fd0... ostree=/ostree/boot.0/rhcos/16... coreos.oem.id=qemu coreos.oem.id=ec2 ignition.platform.id=ec2 enforcing=0

    sh-4.2# exit

You should see the enforcing=0 argument added to the other kernel arguments.
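The manual check in the last step can also be scripted. The following Python sketch is illustrative only: the function name `missing_kernel_args` is an assumption and not part of any OpenShift tooling, and it expects the text of /proc/cmdline that you captured from the debug pod.

```python
from typing import List


def missing_kernel_args(cmdline: str, expected: List[str]) -> List[str]:
    """Return the expected kernel arguments that are absent from a /proc/cmdline string."""
    # Kernel command-line arguments are whitespace-separated tokens.
    present = set(cmdline.split())
    return [arg for arg in expected if arg not in present]


# Sample text shortened from the example output above.
sample = "BOOT_IMAGE=/ostree/rhcos-... console=tty0 ignition.platform.id=ec2 enforcing=0"

# An empty list means every expected argument was found.
print(missing_kernel_args(sample, ["enforcing=0"]))
```

Comparing whole tokens, rather than searching for a substring, avoids a false match on an argument such as enforcing=01.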

3.2.3. Enabling multipathing with kernel arguments on RHCOS

Red Hat Enterprise Linux CoreOS (RHCOS) supports multipathing on the primary disk, allowing stronger resilience to hardware failure to achieve higher host availability. Post-installation support is available by activating multipathing via the machine config.

Enabling multipathing during installation is supported and recommended for nodes provisioned in OpenShift Container Platform 4.8 or higher. In setups where any I/O to non-optimized paths results in I/O system errors, you must enable multipathing at installation time. For more information about enabling multipathing during installation time, see "Enabling multipathing with kernel arguments on RHCOS" in the Installing on bare metal documentation.

On IBM Z and LinuxONE, you can enable multipathing only if you configured your cluster for it during installation. For more information, see "Installing RHCOS and starting the OpenShift Container Platform bootstrap process" in Installing a cluster with z/VM on IBM Z and LinuxONE.

Prerequisites

- You have a running OpenShift Container Platform cluster that uses version 4.7 or later.

- You are logged in to the cluster as a user with administrative privileges.

Procedure

To enable multipathing post-installation on control plane nodes:

Create a machine config file, such as 99-master-kargs-mpath.yaml, that instructs the cluster to add the master label and that identifies the multipath kernel argument, for example:

    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfig
    metadata:
      labels:
        machineconfiguration.openshift.io/role: "master"
      name: 99-master-kargs-mpath
    spec:
      kernelArguments:
        - 'rd.multipath=default'
        - 'root=/dev/disk/by-label/dm-mpath-root'

To enable multipathing post-installation on worker nodes:

Create a machine config file, such as 99-worker-kargs-mpath.yaml, that instructs the cluster to add the worker label and that identifies the multipath kernel argument, for example:

    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfig
    metadata:
      labels:
        machineconfiguration.openshift.io/role: "worker"
      name: 99-worker-kargs-mpath
    spec:
      kernelArguments:
        - 'rd.multipath=default'
        - 'root=/dev/disk/by-label/dm-mpath-root'

Create the new machine config by using either the master or worker YAML file you previously created:

    $ oc create -f ./99-worker-kargs-mpath.yaml

Check the machine configs to see that the new one was added:

    $ oc get MachineConfig

Example output

    NAME                                               GENERATEDBYCONTROLLER                      IGNITIONVERSION   AGE
    00-master                                          52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    00-worker                                          52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    01-master-container-runtime                        52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    01-master-kubelet                                  52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    01-worker-container-runtime                        52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    01-worker-kubelet                                  52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    99-master-generated-registries                     52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    99-master-ssh                                                                                 3.2.0             40m
    99-worker-generated-registries                     52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    99-worker-kargs-mpath                              52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             105s
    99-worker-ssh                                                                                 3.2.0             40m
    rendered-master-23e785de7587df95a4b517e0647e5ab7   52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m
    rendered-worker-5d596d9293ca3ea80c896a1191735bb1   52dd3ba6a9a527fc3ab42afac8d12b693534c8c9   3.2.0             33m

Check the nodes:

    $ oc get nodes

Example output

    NAME                           STATUS                     ROLES    AGE   VERSION
    ip-10-0-136-161.ec2.internal   Ready                      worker   28m   v1.20.0
    ip-10-0-136-243.ec2.internal   Ready                      master   34m   v1.20.0
    ip-10-0-141-105.ec2.internal   Ready,SchedulingDisabled   worker   28m   v1.20.0
    ip-10-0-142-249.ec2.internal   Ready                      master   34m   v1.20.0
    ip-10-0-153-11.ec2.internal    Ready                      worker   28m   v1.20.0
    ip-10-0-153-150.ec2.internal   Ready                      master   34m   v1.20.0

You can see that scheduling on each worker node is disabled as the change is being applied.

Check that the kernel argument worked by going to one of the worker nodes and listing the kernel command line arguments (in /proc/cmdline on the host):

    $ oc debug node/ip-10-0-141-105.ec2.internal

Example output

    Starting pod/ip-10-0-141-105ec2internal-debug ...
    To use host binaries, run `chroot /host`

    sh-4.2# cat /host/proc/cmdline
    ...
    rd.multipath=default root=/dev/disk/by-label/dm-mpath-root
    ...

    sh-4.2# exit

You should see the added kernel arguments.

3.2.4. Adding a real-time kernel to nodes

Some OpenShift Container Platform workloads require a high degree of determinism. While Linux is not a real-time operating system, the Linux real-time kernel includes a preemptive scheduler that provides the operating system with real-time characteristics.

If your OpenShift Container Platform workloads require these real-time characteristics, you can switch your machines to the Linux real-time kernel. For OpenShift Container Platform 4.8, you can make this switch by using a MachineConfig object. Although making the change is as simple as changing a machine config kernelType setting to realtime, there are a few other considerations before making the change:

- Currently, real-time kernel is supported only on worker nodes, and only for radio access network (RAN) use.

- The following procedure is fully supported with bare metal installations that use systems that are certified for Red Hat Enterprise Linux for Real Time 8.

- Real-time support in OpenShift Container Platform is limited to specific subscriptions.

- The following procedure is also supported for use with Google Cloud Platform.

Prerequisites

- Have a running OpenShift Container Platform cluster (version 4.4 or later).

- Log in to the cluster as a user with administrative privileges.

Procedure

Create a machine config for the real-time kernel: Create a YAML file (for example, 99-worker-realtime.yaml) that contains a MachineConfig object for the realtime kernel type. This example tells the cluster to use a real-time kernel for all worker nodes:

    $ cat << EOF > 99-worker-realtime.yaml
    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfig
    metadata:
      labels:
        machineconfiguration.openshift.io/role: "worker"
      name: 99-worker-realtime
    spec:
      kernelType: realtime
    EOF

Add the machine config to the cluster:

    $ oc create -f 99-worker-realtime.yaml

Check the real-time kernel: Once each impacted node reboots, log in to the cluster and run the following commands to make sure that the real-time kernel has replaced the regular kernel for the set of nodes you configured:

    $ oc get nodes

Example output

    NAME                                         STATUS   ROLES    AGE    VERSION
    ip-10-0-143-147.us-east-2.compute.internal   Ready    worker   103m   v1.21.0
    ip-10-0-146-92.us-east-2.compute.internal    Ready    worker   101m   v1.21.0
    ip-10-0-169-2.us-east-2.compute.internal     Ready    worker   102m   v1.21.0

    $ oc debug node/ip-10-0-143-147.us-east-2.compute.internal

Example output

    Starting pod/ip-10-0-143-147us-east-2computeinternal-debug ...
    To use host binaries, run `chroot /host`

    sh-4.4# uname -a
    Linux <worker_node> 4.18.0-147.3.1.rt24.96.el8_1.x86_64 #1 SMP PREEMPT RT Wed Nov 27 18:29:55 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux

The kernel name contains rt, and the text "PREEMPT RT" indicates that this is a real-time kernel.

To go back to the regular kernel, delete the MachineConfig object:

    $ oc delete -f 99-worker-realtime.yaml
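If you check many nodes, the two markers described above (an rt component in the kernel release and the "PREEMPT RT" text) can be tested programmatically. This is a minimal sketch rather than an official check: the function name `is_realtime_kernel` is hypothetical, and accepting the `PREEMPT_RT` spelling as well is an assumption about how other kernel builds might report it.

```python
import re


def is_realtime_kernel(uname_output: str) -> bool:
    """Heuristically decide whether `uname -a` output describes a real-time kernel."""
    # The release string of the example kernel carries an ".rt<N>" component.
    has_rt_release = re.search(r"\.rt\d+", uname_output) is not None
    # The build banner contains "PREEMPT RT"; "PREEMPT_RT" is accepted as an assumed variant.
    has_preempt_rt = "PREEMPT RT" in uname_output or "PREEMPT_RT" in uname_output
    return has_rt_release and has_preempt_rt


example = (
    "Linux <worker_node> 4.18.0-147.3.1.rt24.96.el8_1.x86_64 #1 SMP PREEMPT RT "
    "Wed Nov 27 18:29:55 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux"
)
print(is_realtime_kernel(example))
```

Requiring both markers keeps the check conservative: a host name that happens to contain ".rt1" is not enough on its own.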

3.2.5. Configuring journald settings

If you need to configure settings for the journald service on OpenShift Container Platform nodes, you can do that by modifying the appropriate configuration file and passing the file to the appropriate pool of nodes as a machine config.

This procedure describes how to modify journald rate limiting settings in the /etc/systemd/journald.conf file and apply them to worker nodes. See the journald.conf man page for information on how to use that file.

Prerequisites

- Have a running OpenShift Container Platform cluster.

- Log in to the cluster as a user with administrative privileges.

Procedure

Create a Butane config file, 40-worker-custom-journald.bu, that includes an /etc/systemd/journald.conf file with the required settings.

Note: See "Creating machine configs with Butane" for information about Butane.

    variant: openshift
    version: 4.8.0
    metadata:
      name: 40-worker-custom-journald
      labels:
        machineconfiguration.openshift.io/role: worker
    storage:
      files:
      - path: /etc/systemd/journald.conf
        mode: 0644
        overwrite: true
        contents:
          inline: |
            # Disable rate limiting
            RateLimitInterval=1s
            RateLimitBurst=10000
            Storage=volatile
            Compress=no
            MaxRetentionSec=30s

Use Butane to generate a MachineConfig object file, 40-worker-custom-journald.yaml, containing the configuration to be delivered to the worker nodes:

    $ butane 40-worker-custom-journald.bu -o 40-worker-custom-journald.yaml

Apply the machine config to the pool:

    $ oc apply -f 40-worker-custom-journald.yaml

Check that the new machine config is applied and that the nodes are not in a degraded state. It might take a few minutes. The worker pool will show the updates in progress, as each node successfully has the new machine config applied:

    $ oc get machineconfigpool
    NAME     CONFIG               UPDATED   UPDATING   DEGRADED   MACHINECOUNT   READYMACHINECOUNT   UPDATEDMACHINECOUNT   DEGRADEDMACHINECOUNT   AGE
    master   rendered-master-35   True      False      False      3              3                   3                     0                      34m
    worker   rendered-worker-d8   False     True       False      3              1                   1                     0                      34m

To check that the change was applied, you can log in to a worker node:

    $ oc get node | grep worker
    ip-10-0-0-1.us-east-2.compute.internal   Ready   worker   39m   v0.0.0-master+$Format:%h$

    $ oc debug node/ip-10-0-0-1.us-east-2.compute.internal
    Starting pod/ip-10-0-141-142us-east-2computeinternal-debug ...
    ...
    sh-4.2# chroot /host
    sh-4.4# cat /etc/systemd/journald.conf
    # Disable rate limiting
    RateLimitInterval=1s
    RateLimitBurst=10000
    Storage=volatile
    Compress=no
    MaxRetentionSec=30s
    sh-4.4# exit
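To compare the file on a node with the settings you intended, you can parse the captured text instead of reading it by eye. The following Python sketch assumes you have copied the contents of /etc/systemd/journald.conf from the debug session into a string; the function name `parse_journald_conf` is illustrative and not part of systemd or OpenShift.

```python
from typing import Dict


def parse_journald_conf(text: str) -> Dict[str, str]:
    """Parse KEY=VALUE lines from journald.conf text, skipping comments and section headers."""
    settings: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        # Ignore blank lines, comments, and section headers such as [Journal].
        if not line or line[0] in "#;[":
            continue
        key, sep, value = line.partition("=")
        if sep:
            settings[key.strip()] = value.strip()
    return settings


captured = """# Disable rate limiting
RateLimitInterval=1s
RateLimitBurst=10000
Storage=volatile
Compress=no
MaxRetentionSec=30s
"""

expected = {"RateLimitInterval": "1s", "RateLimitBurst": "10000"}
actual = parse_journald_conf(captured)
# Collect any setting whose value on the node differs from the intended value.
mismatches = {k: actual.get(k) for k, v in expected.items() if actual.get(k) != v}
print(mismatches)
```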

3.2.6. Adding extensions to RHCOS

RHCOS is a minimal container-oriented RHEL operating system, designed to provide a common set of capabilities to OpenShift Container Platform clusters across all platforms. While adding software packages to RHCOS systems is generally discouraged, the MCO provides an extensions feature you can use to add a minimal set of features to RHCOS nodes.

Currently, the following extension is available:

- usbguard: Adding the usbguard extension protects RHCOS systems from attacks from intrusive USB devices. See USBGuard for details.

The following procedure describes how to use a machine config to add one or more extensions to your RHCOS nodes.

Prerequisites

- Have a running OpenShift Container Platform cluster (version 4.6 or later).

- Log in to the cluster as a user with administrative privileges.

Procedure

Create a machine config for extensions: Create a YAML file (for example, 80-extensions.yaml) that contains a MachineConfig extensions object. This example tells the cluster to add the usbguard extension.

    $ cat << EOF > 80-extensions.yaml
    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfig
    metadata:
      labels:
        machineconfiguration.openshift.io/role: worker
      name: 80-worker-extensions
    spec:
      config:
        ignition:
          version: 3.2.0
      extensions:
        - usbguard
    EOF

Add the machine config to the cluster:

    $ oc create -f 80-extensions.yaml

This sets all worker nodes to have rpm packages for usbguard installed.

Check that the extensions were applied:

    $ oc get machineconfig 80-worker-extensions

Example output

    NAME                   GENERATEDBYCONTROLLER   IGNITIONVERSION   AGE
    80-worker-extensions                           3.2.0             57s

Check that the new machine config is now applied and that the nodes are not in a degraded state. It may take a few minutes. The worker pool will show the updates in progress, as each machine successfully has the new machine config applied:

    $ oc get machineconfigpool

Example output

    NAME     CONFIG               UPDATED   UPDATING   DEGRADED   MACHINECOUNT   READYMACHINECOUNT   UPDATEDMACHINECOUNT   DEGRADEDMACHINECOUNT   AGE
    master   rendered-master-35   True      False      False      3              3                   3                     0                      34m
    worker   rendered-worker-d8   False     True       False      3              1                   1                     0                      34m

Check the extensions. To check that the extension was applied, run:

    $ oc get node | grep worker

Example output

    NAME                                       STATUS   ROLES    AGE    VERSION
    ip-10-0-169-2.us-east-2.compute.internal   Ready    worker   102m   v1.18.3

    $ oc debug node/ip-10-0-169-2.us-east-2.compute.internal

Example output

    ...
    To use host binaries, run `chroot /host`
    sh-4.4# chroot /host
    sh-4.4# rpm -q usbguard
    usbguard-0.7.4-4.el8.x86_64.rpm

3.2.7. Loading custom firmware blobs in the machine config manifest

Because the default location for firmware blobs in /usr/lib is read-only, you can locate a custom firmware blob by updating the search path. This enables you to load local firmware blobs in the machine config manifest when the blobs are not managed by RHCOS.

Procedure

Create a Butane config file, 98-worker-firmware-blob.bu, that updates the search path so that it is root-owned and writable to local storage. The following example places the custom blob file from your local workstation onto nodes under /var/lib/firmware.

Note: See "Creating machine configs with Butane" for information about Butane.

Butane config file for custom firmware blob

    variant: openshift
    version: 4.9.0
    metadata:
      labels:
        machineconfiguration.openshift.io/role: worker
      name: 98-worker-firmware-blob
    storage:
      files:
      - path: /var/lib/firmware/<package_name>      # (1)
        contents:
          local: <package_name>                     # (2)
        mode: 0644                                  # (3)
    openshift:
      kernel_arguments:
        - 'firmware_class.path=/var/lib/firmware'   # (4)

- (1) Sets the path on the node where the firmware package is copied to.

- (2) Specifies a file with contents that are read from a local file directory on the system running Butane. The path of the local file is relative to a files-dir directory, which must be specified by using the --files-dir option with Butane in the following step.

- (3) Sets the permissions for the file on the RHCOS node. It is recommended to set 0644 permissions.

- (4) The firmware_class.path parameter customizes the kernel search path of where to look for the custom firmware blob that was copied from your local workstation onto the root file system of the node. This example uses /var/lib/firmware as the customized path.

Run Butane to generate a MachineConfig object file, named 98-worker-firmware-blob.yaml, that uses a copy of the firmware blob on your local workstation. The firmware blob contains the configuration to be delivered to the nodes. The following example uses the --files-dir option to specify the directory on your workstation where the local file or files are located:

    $ butane 98-worker-firmware-blob.bu -o 98-worker-firmware-blob.yaml --files-dir <directory_including_package_name>

Apply the configurations to the nodes in one of two ways:

- If the cluster is not running yet, after you generate manifest files, add the MachineConfig object file to the <installation_directory>/openshift directory, and then continue to create the cluster.

- If the cluster is already running, apply the file:

      $ oc apply -f 98-worker-firmware-blob.yaml

  A MachineConfig object YAML file is created for you to finish configuring your machines.

Save the Butane config in case you need to update the MachineConfig object in the future.

3.3. Configuring MCO-related custom resources

Besides managing MachineConfig objects, the MCO manages two custom resources (CRs): KubeletConfig and ContainerRuntimeConfig. Those CRs let you change node-level settings impacting how the Kubelet and CRI-O container runtime services behave.

3.3.1. Creating a KubeletConfig CRD to edit kubelet parameters

The kubelet configuration is currently serialized as an Ignition configuration, so it can be directly edited. However, there is also a new kubelet-config-controller added to the Machine Config Controller (MCC). This lets you use a KubeletConfig custom resource (CR) to edit the kubelet parameters.

As the fields in the kubeletConfig object are passed directly to the kubelet from upstream Kubernetes, the kubelet validates those values directly. Invalid values in the kubeletConfig object might cause cluster nodes to become unavailable. For valid values, see the Kubernetes documentation.

Consider the following guidance:

- Create one KubeletConfig CR for each machine config pool with all the config changes you want for that pool. If you are applying the same content to all of the pools, you need only one KubeletConfig CR for all of the pools.

- Edit an existing KubeletConfig CR to modify existing settings or add new settings, instead of creating a CR for each change. It is recommended that you create a CR only to modify a different machine config pool, or for changes that are intended to be temporary, so that you can revert the changes.

- As needed, create multiple KubeletConfig CRs with a limit of 10 per cluster. For the first KubeletConfig CR, the Machine Config Operator (MCO) creates a machine config appended with kubelet. With each subsequent CR, the controller creates another kubelet machine config with a numeric suffix. For example, if you have a kubelet machine config with a -2 suffix, the next kubelet machine config is appended with -3.

If you want to delete the machine configs, delete them in reverse order to avoid exceeding the limit. For example, you delete the kubelet-3 machine config before deleting the kubelet-2 machine config.

If you have a machine config with a kubelet-9 suffix, and you create another KubeletConfig CR, a new machine config is not created, even if there are fewer than 10 kubelet machine configs.
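The suffix rule in the guidance above can be sketched in a few lines of Python. This models only the behavior as described in this section and is not the MCO's actual implementation; the function name and the `99-<pool>-generated-kubelet` base name (taken from the example output in this section) are assumptions for illustration.

```python
import re
from typing import Iterable, Optional

# From the text: once a "-9" suffix exists, no further machine config is created.
MAX_SUFFIX = 9


def next_kubelet_config_name(existing: Iterable[str], pool: str = "worker") -> Optional[str]:
    """Return the name the next kubelet machine config would get, or None if none is created."""
    base = f"99-{pool}-generated-kubelet"
    pattern = re.compile(rf"^{re.escape(base)}(?:-(\d+))?$")
    suffixes = []
    for name in existing:
        match = pattern.match(name)
        if match:
            # The first machine config has no numeric suffix; treat it as 0.
            suffixes.append(int(match.group(1)) if match.group(1) else 0)
    if not suffixes:
        return base
    highest = max(suffixes)
    if highest >= MAX_SUFFIX:
        # A "-9" suffix blocks creation even if fewer than 10 configs exist.
        return None
    return f"{base}-{highest + 1}"


print(next_kubelet_config_name(["99-worker-generated-kubelet", "99-worker-generated-kubelet-2"]))
```

Because the next name depends on the highest suffix rather than on the number of machine configs, deleting a lower-numbered config does not free up a slot. That is why the guidance recommends deleting in reverse order.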

Example KubeletConfig CR

    $ oc get kubeletconfig

    NAME           AGE
    set-max-pods   15m

Example showing a KubeletConfig machine config

    $ oc get mc | grep kubelet

    ...
    99-worker-generated-kubelet-1   b5c5119de007945b6fe6fb215db3b8e2ceb12511   3.2.0   26m
    ...

The following procedure is an example to show how to configure the maximum number of pods per node on the worker nodes.

Prerequisites

Obtain the label associated with the static MachineConfigPool CR for the type of node you want to configure. Perform one of the following steps:

View the machine config pool:

    $ oc describe machineconfigpool <name>

For example:

    $ oc describe machineconfigpool worker

Example output

    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfigPool
    metadata:
      creationTimestamp: 2019-02-08T14:52:39Z
      generation: 1
      labels:
        custom-kubelet: set-max-pods   # (1)

- (1) If a label has been added it appears under labels.

If the label is not present, add a key/value pair:

    $ oc label machineconfigpool worker custom-kubelet=set-max-pods

Procedure

View the available machine configuration objects that you can select:

    $ oc get machineconfig

By default, the two kubelet-related configs are 01-master-kubelet and 01-worker-kubelet.

Check the current value for the maximum pods per node:

    $ oc describe node <node_name>

For example:

    $ oc describe node ci-ln-5grqprb-f76d1-ncnqq-worker-a-mdv94

Look for value: pods: <value> in the Allocatable stanza:

Example output

    Allocatable:
      attachable-volumes-aws-ebs:  25
      cpu:                         3500m
      hugepages-1Gi:               0
      hugepages-2Mi:               0
      memory:                      15341844Ki
      pods:                        250

Set the maximum pods per node on the worker nodes by creating a custom resource file that contains the kubelet configuration:

    apiVersion: machineconfiguration.openshift.io/v1
    kind: KubeletConfig
    metadata:
      name: set-max-pods
    spec:
      machineConfigPoolSelector:
        matchLabels:
          custom-kubelet: set-max-pods
      kubeletConfig:
        maxPods: 500

Note: The rate at which the kubelet talks to the API server depends on queries per second (QPS) and burst values. The default values, 50 for kubeAPIQPS and 100 for kubeAPIBurst, are sufficient if there are limited pods running on each node. It is recommended to update the kubelet QPS and burst rates if there are enough CPU and memory resources on the node.

    apiVersion: machineconfiguration.openshift.io/v1
    kind: KubeletConfig
    metadata:
      name: set-max-pods
    spec:
      machineConfigPoolSelector:
        matchLabels:
          custom-kubelet: set-max-pods
      kubeletConfig:
        maxPods: <pod_count>
        kubeAPIBurst: <burst_rate>
        kubeAPIQPS: <QPS>

Update the machine config pool for workers with the label. The label must match the matchLabels value in the KubeletConfig object:

    $ oc label machineconfigpool worker custom-kubelet=set-max-pods

Create the KubeletConfig object:

    $ oc create -f change-maxPods-cr.yaml

Verify that the KubeletConfig object is created:

    $ oc get kubeletconfig

Example output

    NAME           AGE
    set-max-pods   15m

Depending on the number of worker nodes in the cluster, wait for the worker nodes to be rebooted one by one. For a cluster with 3 worker nodes, this could take about 10 to 15 minutes.

Verify that the changes are applied to the node:

Check on a worker node that the maxPods value changed:

    $ oc describe node <node_name>

Locate the Allocatable stanza:

    ...
    Allocatable:
      attachable-volumes-gce-pd:  127
      cpu:                        3500m
      ephemeral-storage:          123201474766
      hugepages-1Gi:              0
      hugepages-2Mi:              0
      memory:                     14225400Ki
      pods:                       500   # (1)
    ...

- (1) In this example, the pods parameter should report the value you set in the KubeletConfig object.

Verify the change in the KubeletConfig object:

    $ oc get kubeletconfigs set-max-pods -o yaml

This should show status: "True" and type: Success:

    spec:
      kubeletConfig:
        maxPods: 500
      machineConfigPoolSelector:
        matchLabels:
          custom-kubelet: set-max-pods
    status:
      conditions:
      - lastTransitionTime: "2021-06-30T17:04:07Z"
        message: Success
        status: "True"
        type: Success

3.3.2. Creating a ContainerRuntimeConfig CR to edit CRI-O parameters

You can change some of the settings associated with the OpenShift Container Platform CRI-O runtime for the nodes associated with a specific machine config pool (MCP). Using a ContainerRuntimeConfig custom resource (CR), you set the configuration values and add a label to match the MCP. The MCO then rebuilds the crio.conf and storage.conf configuration files on the associated nodes with the updated values.

To revert the changes implemented by using a ContainerRuntimeConfig CR, you must delete the CR. Removing the label from the machine config pool does not revert the changes.

You can modify the following settings by using a ContainerRuntimeConfig CR:

- PIDs limit: The pidsLimit parameter sets the CRI-O pids_limit parameter, which is the maximum number of processes allowed in a container. The default is 1024 (pids_limit = 1024).

- Log level: The logLevel parameter sets the CRI-O log_level parameter, which is the level of verbosity for log messages. The default is info (log_level = info). Other options include fatal, panic, error, warn, debug, and trace.

- Overlay size: The overlaySize parameter sets the CRI-O Overlay storage driver size parameter, which is the maximum size of a container image.

- Maximum log size: The logSizeMax parameter sets the CRI-O log_size_max parameter, which is the maximum size allowed for the container log file. The default is unlimited (log_size_max = -1). If set to a positive number, it must be at least 8192 to not be smaller than the ConMon read buffer. ConMon is a program that monitors communications between a container manager (such as Podman or CRI-O) and the OCI runtime (such as runc or crun) for a single container.
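Before you create the CR, you might want to check your values against the constraints listed above. The following Python sketch does that for a plain dictionary that mirrors the containerRuntimeConfig stanza. It is a convenience check under stated assumptions: the function name is hypothetical, the log level set contains only the options named above (which may not be exhaustive), and the cluster performs its own validation independently.

```python
from typing import Any, Dict, List

# Log levels named in this section; treated here as the full set, which is an assumption.
VALID_LOG_LEVELS = {"fatal", "panic", "error", "warn", "info", "debug", "trace"}
# A positive logSizeMax must not be smaller than the ConMon read buffer.
MIN_LOG_SIZE_MAX = 8192


def check_container_runtime_config(cfg: Dict[str, Any]) -> List[str]:
    """Return a list of human-readable problems found in a containerRuntimeConfig mapping."""
    problems: List[str] = []

    level = cfg.get("logLevel")
    if level is not None and level not in VALID_LOG_LEVELS:
        problems.append(f"logLevel {level!r} is not one of {sorted(VALID_LOG_LEVELS)}")

    size = cfg.get("logSizeMax")
    if size is not None:
        size_int = int(size)  # the CR examples quote this value as a string, such as "-1"
        if 0 < size_int < MIN_LOG_SIZE_MAX:
            problems.append(f"logSizeMax {size_int} is positive but below {MIN_LOG_SIZE_MAX}")

    return problems


print(check_container_runtime_config(
    {"pidsLimit": 2048, "logLevel": "debug", "overlaySize": "8G", "logSizeMax": "-1"}
))
```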

You should have one ContainerRuntimeConfig CR for each machine config pool with all the config changes you want for that pool. If you are applying the same content to all the pools, you only need one ContainerRuntimeConfig CR for all the pools.

You should edit an existing ContainerRuntimeConfig CR to modify existing settings or add new settings instead of creating a new CR for each change. It is recommended to create a new ContainerRuntimeConfig CR only to modify a different machine config pool, or for changes that are intended to be temporary so that you can revert the changes.

You can create multiple ContainerRuntimeConfig CRs, as needed, with a limit of 10 per cluster. For the first ContainerRuntimeConfig CR, the MCO creates a machine config appended with containerruntime. With each subsequent CR, the controller creates a new containerruntime machine config with a numeric suffix. For example, if you have a containerruntime machine config with a -2 suffix, the next containerruntime machine config is appended with -3.

If you want to delete the machine configs, you should delete them in reverse order to avoid exceeding the limit. For example, you should delete the containerruntime-3 machine config before deleting the containerruntime-2 machine config.

If you have a machine config with a containerruntime-9 suffix, and you create another ContainerRuntimeConfig CR, a new machine config is not created, even if there are fewer than 10 containerruntime machine configs.

Example showing multiple ContainerRuntimeConfig CRs

    $ oc get ctrcfg

Example output

    NAME          AGE
    ctr-pid       24m
    ctr-overlay   15m
    ctr-level     5m45s

Example showing multiple containerruntime machine configs

    $ oc get mc | grep container

Example output

    ...
    01-master-container-runtime              b5c5119de007945b6fe6fb215db3b8e2ceb12511   3.2.0   57m
    ...
    01-worker-container-runtime              b5c5119de007945b6fe6fb215db3b8e2ceb12511   3.2.0   57m
    ...
    99-worker-generated-containerruntime     b5c5119de007945b6fe6fb215db3b8e2ceb12511   3.2.0   26m
    99-worker-generated-containerruntime-1   b5c5119de007945b6fe6fb215db3b8e2ceb12511   3.2.0   17m
    99-worker-generated-containerruntime-2   b5c5119de007945b6fe6fb215db3b8e2ceb12511   3.2.0   7m26s
    ...

The following example raises the pids_limit to 2048, sets the log_level to debug, sets the overlay size to 8 GB, and sets the log_size_max to unlimited:

Example ContainerRuntimeConfig CR

    apiVersion: machineconfiguration.openshift.io/v1
    kind: ContainerRuntimeConfig
    metadata:
      name: overlay-size
    spec:
      machineConfigPoolSelector:
        matchLabels:
          pools.operator.machineconfiguration.openshift.io/worker: ''   # (1)
      containerRuntimeConfig:
        pidsLimit: 2048      # (2)
        logLevel: debug      # (3)
        overlaySize: 8G      # (4)
        logSizeMax: "-1"     # (5)

- (1) Specifies the machine config pool label.

- (2) Optional: Specifies the maximum number of processes allowed in a container.

- (3) Optional: Specifies the level of verbosity for log messages.

- (4) Optional: Specifies the maximum size of a container image.

- (5) Optional: Specifies the maximum size allowed for the container log file. If set to a positive number, it must be at least 8192.

Procedure

To change CRI-O settings using the ContainerRuntimeConfig CR:

Create a YAML file for the ContainerRuntimeConfig CR:

    apiVersion: machineconfiguration.openshift.io/v1
    kind: ContainerRuntimeConfig
    metadata:
      name: overlay-size
    spec:
      machineConfigPoolSelector:
        matchLabels:
          pools.operator.machineconfiguration.openshift.io/worker: ''
      containerRuntimeConfig:
        pidsLimit: 2048
        logLevel: debug
        overlaySize: 8G
        logSizeMax: "-1"

Create the ContainerRuntimeConfig CR:

    $ oc create -f <file_name>.yaml

Verify that the CR is created:

    $ oc get ContainerRuntimeConfig

Example output

    NAME           AGE
    overlay-size   3m19s

Check that a new containerruntime machine config is created:

    $ oc get machineconfigs | grep containerrun

Example output

    99-worker-generated-containerruntime   2c9371fbb673b97a6fe8b1c52691999ed3a1bfc2   3.2.0   31s

Monitor the machine config pool until all are shown as ready:

    $ oc get mcp worker

Example output

    NAME     CONFIG                UPDATED   UPDATING   DEGRADED   MACHINECOUNT   READYMACHINECOUNT   UPDATEDMACHINECOUNT   DEGRADEDMACHINECOUNT   AGE
    worker   rendered-worker-169   False     True       False      3              1                   1                     0                      9h

Verify that the settings were applied in CRI-O:

Open an oc debug session to a node in the machine config pool and run chroot /host.

    $ oc debug node/<node_name>

    sh-4.4# chroot /host

Verify the changes in the crio.conf file:

    sh-4.4# crio config | egrep 'log_level|pids_limit|log_size_max'

Example output

    pids_limit = 2048
    log_size_max = -1
    log_level = "debug"

Verify the changes in the storage.conf file:

    sh-4.4# head -n 7 /etc/containers/storage.conf

Example output

    [storage]
      driver = "overlay"
      runroot = "/var/run/containers/storage"
      graphroot = "/var/lib/containers/storage"
      [storage.options]
        additionalimagestores = []
        size = "8G"

3.3.3. Setting the default maximum container root partition size for Overlay with CRI-O

The root partition of each container shows all of the available disk space of the underlying host. Follow this guidance to set a maximum partition size for the root disk of all containers.

To configure the maximum Overlay size, as well as other CRI-O options like the log level and PID limit, you can create the following ContainerRuntimeConfig custom resource (CR):

    apiVersion: machineconfiguration.openshift.io/v1
    kind: ContainerRuntimeConfig
    metadata:
      name: overlay-size
    spec:
      machineConfigPoolSelector:
        matchLabels:
          custom-crio: overlay-size
      containerRuntimeConfig:
        pidsLimit: 2048
        logLevel: debug
        overlaySize: 8G

Procedure

Create the configuration object:

    $ oc apply -f overlaysize.yml

To apply the new CRI-O configuration to your worker nodes, edit the worker machine config pool:

    $ oc edit machineconfigpool worker

Add the custom-crio label based on the matchLabels name you set in the ContainerRuntimeConfig object:

    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfigPool
    metadata:
      creationTimestamp: "2020-07-09T15:46:34Z"
      generation: 3
      labels:
        custom-crio: overlay-size
        machineconfiguration.openshift.io/mco-built-in: ""

Save the changes, then view the machine configs:

    $ oc get machineconfigs

New 99-worker-generated-containerruntime and rendered-worker-xyz objects are created:

Example output

    99-worker-generated-containerruntime   4173030d89fbf4a7a0976d1665491a4d9a6e54f1   3.2.0   7m42s
    rendered-worker-xyz                    4173030d89fbf4a7a0976d1665491a4d9a6e54f1   3.2.0   7m36s

After those objects are created, monitor the machine config pool for the changes to be applied:

    $ oc get mcp worker

The worker nodes show UPDATING as True, as well as the number of machines, the number updated, and other details:

Example output

    NAME     CONFIG                UPDATED   UPDATING   DEGRADED   MACHINECOUNT   READYMACHINECOUNT   UPDATEDMACHINECOUNT   DEGRADEDMACHINECOUNT   AGE
    worker   rendered-worker-xyz   False     True       False      3              2                   2                     0                      20h

When complete, the worker nodes transition back to UPDATING as False, and the UPDATEDMACHINECOUNT number matches the MACHINECOUNT:

Example output

    NAME     CONFIG                UPDATED   UPDATING   DEGRADED   MACHINECOUNT   READYMACHINECOUNT   UPDATEDMACHINECOUNT   DEGRADEDMACHINECOUNT   AGE
    worker   rendered-worker-xyz   True      False      False      3              3                   3                     0                      20h

Looking at a worker machine, you see that the new 8 GB max size configuration is applied to all of the workers:

Example output

    head -n 7 /etc/containers/storage.conf
    [storage]
      driver = "overlay"
      runroot = "/var/run/containers/storage"
      graphroot = "/var/lib/containers/storage"
      [storage.options]
        additionalimagestores = []
        size = "8G"

Looking inside a container, you see that the root partition is now 8 GB:

Example output

    ~ $ df -h
    Filesystem                Size      Used Available Use% Mounted on
    overlay                   8.0G      8.0K      8.0G   0% /
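The completion condition used in this procedure (UPDATING returns to False and UPDATEDMACHINECOUNT matches MACHINECOUNT) can be evaluated from captured `oc get mcp` output. The following Python sketch is one way to do that. The function names are illustrative, and the parser assumes that every column holds a single whitespace-free value, as in the example outputs in this section.

```python
from typing import Dict, List


def parse_mcp_table(output: str) -> List[Dict[str, str]]:
    """Turn the text table printed by `oc get mcp` into one dict per pool."""
    lines = [line for line in output.splitlines() if line.strip()]
    if not lines:
        return []
    headers = lines[0].split()
    # Pair each header with the value in the same position on the row.
    return [dict(zip(headers, line.split())) for line in lines[1:]]


def pool_update_complete(row: Dict[str, str]) -> bool:
    """Apply the completion condition described in this section to a parsed row."""
    return (
        row["UPDATED"] == "True"
        and row["UPDATING"] == "False"
        and row["UPDATEDMACHINECOUNT"] == row["MACHINECOUNT"]
    )


in_progress = """NAME CONFIG UPDATED UPDATING DEGRADED MACHINECOUNT READYMACHINECOUNT UPDATEDMACHINECOUNT DEGRADEDMACHINECOUNT AGE
worker rendered-worker-xyz False True False 3 2 2 0 20h
"""

for pool in parse_mcp_table(in_progress):
    print(pool["NAME"], pool_update_complete(pool))
```

A polling loop around these two functions would be a natural extension, but the interval and timeout depend on the size of the pool and are left out here.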

Chapter 4. Post-installation cluster tasks

After installing OpenShift Container Platform, you can further expand and customize your cluster to your requirements.

4.1. Available cluster customizations

You complete most of the cluster configuration and customization after you deploy your OpenShift Container Platform cluster. A number of configuration resources are available.

If you install your cluster on IBM Z, not all features and functions are available.

You modify the configuration resources to configure the major features of the cluster, such as the image registry, networking configuration, image build behavior, and the identity provider.

For current documentation of the settings that you control by using these resources, use the oc explain command, for example oc explain builds --api-version=config.openshift.io/v1

4.1.1. Cluster configuration resources

All cluster configuration resources are globally scoped (not namespaced) and named cluster.

| Resource name | Description |
|---|---|
| | Provides API server configuration such as certificates and certificate authorities. |
| | Controls the identity provider and authentication configuration for the cluster. |
| | Controls default and enforced configuration for all builds on the cluster. |
| | Configures the behavior of the web console interface, including the logout behavior. |
| | Enables FeatureGates so that you can use Tech Preview features. |
| | Configures how specific image registries should be treated (allowed, disallowed, insecure, CA details). |
| | Configuration details related to routing such as the default domain for routes. |
| | Configures identity providers and other behavior related to internal OAuth server flows. |
| | Configures how projects are created including the project template. |
| | Defines proxies to be used by components needing external network access. Note: not all components currently consume this value. |
| | Configures scheduler behavior such as policies and default node selectors. |

4.1.2. Operator configuration resources

These configuration resources are cluster-scoped instances, named cluster, which control the behavior of a specific component as owned by a particular Operator.

| Resource name | Description |
|---|---|
| | Controls console appearance such as branding customizations. |
| | Configures internal image registry settings such as public routing, log levels, proxy settings, resource constraints, replica counts, and storage type. |
| | Configures the Samples Operator to control which example image streams and templates are installed on the cluster. |

4.1.3. Additional configuration resources

These configuration resources represent a single instance of a particular component. In some cases, you can request multiple instances by creating multiple instances of the resource. In other cases, the Operator can use only a specific resource instance name in a specific namespace. Reference the component-specific documentation for details on how and when you can create additional resource instances.

| Resource name | Instance name | Namespace | Description |
|---|---|---|---|
| | | | Controls the Alertmanager deployment parameters. |
| | | | Configures Ingress Operator behavior such as domain, number of replicas, certificates, and controller placement. |

4.1.4. Informational Resources

You use these resources to retrieve information about the cluster. Some configurations might require you to edit these resources directly.

| Resource name | Instance name | Description |
|---|---|---|
| | | In OpenShift Container Platform 4.8, you must not customize the |
| | | You cannot modify the DNS settings for your cluster. You can view the DNS Operator status. |
| | | Configuration details allowing the cluster to interact with its cloud provider. |
| | | You cannot modify your cluster networking after installation. To customize your network, follow the process to customize networking during installation. |

4.2. Updating the global cluster pull secret

You can update the global pull secret for your cluster by either replacing the current pull secret or appending a new pull secret.

This procedure is required when users store images in a registry other than the one used during installation.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

Procedure

Optional: To append a new pull secret to the existing pull secret, complete the following steps:

Enter the following command to download the pull secret:

$ oc get secret/pull-secret -n openshift-config --template='{{index .data ".dockerconfigjson" | base64decode}}' ><pull_secret_location>

For <pull_secret_location>, provide the path to the pull secret file.

Enter the following command to add the new pull secret:

$ oc registry login --registry="<registry>" \
    --auth-basic="<username>:<password>" \
    --to=<pull_secret_location>

Alternatively, you can perform a manual update to the pull secret file.

Enter the following command to update the global pull secret for your cluster:

$ oc set data secret/pull-secret -n openshift-config --from-file=.dockerconfigjson=<pull_secret_location>

For <pull_secret_location>, provide the path to the new pull secret file.

This update is rolled out to all nodes, which can take some time depending on the size of your cluster.

Note: As of OpenShift Container Platform 4.7.4, changes to the global pull secret no longer trigger a node drain or reboot.
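The manual update of the pull secret file mentioned in the procedure can be sketched as follows. This is a minimal illustration, assuming the downloaded file uses the usual `.dockerconfigjson` layout: a top-level `auths` map keyed by registry host, where each entry holds a base64-encoded `<username>:<password>` string under `auth`. The function name, registry host, and credentials are hypothetical placeholders, and reading and writing the file is omitted.

```python
import base64
import json


def add_registry_auth(pull_secret, registry, username, password):
    """Return a copy of the pull secret with credentials for `registry` added."""
    # The auth value is the base64 encoding of "<username>:<password>".
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    # Deep copy through a JSON round trip so the input is left untouched.
    updated = json.loads(json.dumps(pull_secret))
    updated.setdefault("auths", {})[registry] = {"auth": token}
    return updated


# Hypothetical existing content; the token is a placeholder, not a real credential.
existing = {"auths": {"quay.io": {"auth": "<existing_token>"}}}

merged = add_registry_auth(
    existing, "registry.example.com:5000", "myuser", "mypassword"
)
print(json.dumps(merged, indent=2))
```

Appending to the `auths` map keeps the existing entries, which corresponds to the append option described at the start of this section; writing a file that contains only the new entry would correspond to replacing the pull secret.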

4.3. Adjust worker nodes

If you incorrectly sized the worker nodes during deployment, adjust them by creating one or more new machine sets, scaling them up, and then scaling the original machine set down before removing it.

4.3.1. Understanding the difference between machine sets and the machine config pool

MachineSet objects describe OpenShift Container Platform nodes with respect to the cloud or machine provider.

The MachineConfigPool object allows MachineConfigController components to define and provide the status of machines in the context of upgrades.

The MachineConfigPool object allows users to configure how upgrades are rolled out to the OpenShift Container Platform nodes in the machine config pool.

The NodeSelector object can be replaced with a reference to the MachineSet object.

4.3.2. Scaling a machine set manually

To add or remove an instance of a machine in a machine set, you can manually scale the machine set.

This guidance is relevant to fully automated, installer-provisioned infrastructure installations. Customized, user-provisioned infrastructure installations do not have machine sets.

Prerequisites

- Install an OpenShift Container Platform cluster and the oc command line.
- Log in to oc as a user with cluster-admin permission.

Procedure

View the machine sets that are in the cluster:

$ oc get machinesets -n openshift-machine-api

The machine sets are listed in the form of <clusterid>-worker-<aws-region-az>.

View the machines that are in the cluster:

$ oc get machine -n openshift-machine-api

Set the annotation on the machine that you want to delete:

$ oc annotate machine/<machine_name> -n openshift-machine-api machine.openshift.io/cluster-api-delete-machine="true"

Cordon and drain the node that you want to delete:

$ oc adm cordon <node_name>
$ oc adm drain <node_name>

Scale the machine set:

$ oc scale --replicas=2 machineset <machineset> -n openshift-machine-api

Or:

$ oc edit machineset <machineset> -n openshift-machine-api

Tip: You can alternatively apply the following YAML to scale the machine set:

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  name: <machineset>
  namespace: openshift-machine-api
spec:
  replicas: 2

You can scale the machine set up or down. It takes several minutes for the new machines to be available.

Verification

Verify the deletion of the intended machine:

$ oc get machines

4.3.3. The machine set deletion policy

Random, Newest, and Oldest are the three supported deletion options. The default is Random, meaning that random machines are chosen and deleted when scaling machine sets down. The deletion policy can be set according to the use case by modifying the particular machine set:

spec:
  deletePolicy: <delete_policy>
  replicas: <desired_replica_count>

Specific machines can also be prioritized for deletion by adding the annotation machine.openshift.io/cluster-api-delete-machine=true to the machine of interest, regardless of the deletion policy.
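The selection rules described above can be modeled in a few lines of Python. This is a simplified sketch for reasoning about the policies, not the machine controller's actual implementation: the `Machine` class, its `created` field, and the `pick_for_deletion` function are hypothetical, and applying the policy among several annotated machines is an assumption of this model.

```python
import random
from dataclasses import dataclass, field

DELETE_ANNOTATION = "machine.openshift.io/cluster-api-delete-machine"


@dataclass
class Machine:
    name: str
    created: int  # creation time as a sortable number (hypothetical field)
    annotations: dict = field(default_factory=dict)


def pick_for_deletion(machines, policy="Random", rng=random):
    """Choose one machine to remove on scale-down, following the rules in the text."""
    # Annotated machines are prioritized regardless of the deletion policy.
    marked = [m for m in machines if m.annotations.get(DELETE_ANNOTATION) == "true"]
    candidates = marked or list(machines)
    if policy == "Newest":
        return max(candidates, key=lambda m: m.created)
    if policy == "Oldest":
        return min(candidates, key=lambda m: m.created)
    if policy == "Random":
        return rng.choice(candidates)
    raise ValueError(f"unsupported deletion policy: {policy}")


plain = [Machine("worker-a", 1), Machine("worker-b", 2)]
annotated = plain + [Machine("worker-c", 3, {DELETE_ANNOTATION: "true"})]

print(pick_for_deletion(plain, "Oldest").name)
print(pick_for_deletion(plain, "Newest").name)
print(pick_for_deletion(annotated, "Oldest").name)
```

In this model the annotated machine is selected even under the Oldest policy, which mirrors the statement that the annotation takes priority regardless of the deletion policy.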

By default, the OpenShift Container Platform router pods are deployed on workers. Because the router is required to access some cluster resources, including the web console, do not scale the worker machine set to 0 unless you first relocate the router pods.

Custom machine sets can be used for use cases requiring that services run on specific nodes and that those services are ignored by the controller when the worker machine sets are scaling down. This prevents service disruption.

4.3.4. Creating default cluster-wide node selectors

You can use default cluster-wide node selectors on pods together with labels on nodes to constrain all pods created in a cluster to specific nodes.

With cluster-wide node selectors, when you create a pod in that cluster, OpenShift Container Platform adds the default node selectors to the pod and schedules the pod on nodes with matching labels.

You configure cluster-wide node selectors by editing the Scheduler Operator custom resource (CR). You add labels to a node, a machine set, or a machine config. Adding the label to the machine set ensures that if the node or machine goes down, new nodes have the label. Labels added to a node or machine config do not persist if the node or machine goes down.

You can add additional key/value pairs to a pod. But you cannot add a different value for a default key.
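The interaction between the default selector, a pod's own selector, and node labels can be illustrated with a short Python model. This is a sketch of the behavior described above, not the scheduler's code: the function names and node data are hypothetical, and raising an error for a conflicting value is only this model's way of representing the statement that a different value for a default key is not allowed.

```python
def parse_selector(text):
    """Parse a 'key=value,key=value' selector string into a dict."""
    pairs = (item.split("=", 1) for item in text.split(",") if item)
    return {key.strip(): value.strip() for key, value in pairs}


def effective_selector(default, pod):
    """Combine the cluster default with the pod's selector; reject conflicts."""
    for key, value in pod.items():
        if key in default and default[key] != value:
            raise ValueError(f"pod sets {key}={value}, default requires {default[key]}")
    return {**default, **pod}


def matching_nodes(selector, nodes):
    """Return the names of nodes whose labels include every selector pair."""
    return [
        name for name, labels in nodes.items()
        if selector.items() <= labels.items()
    ]


default = parse_selector("type=user-node,region=east")
nodes = {
    "node-a": {"type": "user-node", "region": "east", "disk": "ssd"},
    "node-b": {"type": "user-node", "region": "west", "disk": "ssd"},
}

# A pod may add extra pairs on top of the default selector.
selector = effective_selector(default, {"disk": "ssd"})
print(matching_nodes(selector, nodes))
```

Here only `node-a` carries every label in the combined selector, so it is the only candidate for the pod.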

Procedure

To add a default cluster-wide node selector:

Edit the Scheduler Operator CR to add the default cluster-wide node selectors:

$ oc edit scheduler cluster

Example Scheduler Operator CR with a node selector

apiVersion: config.openshift.io/v1
kind: Scheduler
metadata:
  name: cluster
...
spec:
  defaultNodeSelector: type=user-node,region=east
  mastersSchedulable: false
  policy:
    name: ""

For defaultNodeSelector, add a node selector with the appropriate <key>:<value> pairs.

After making this change, wait for the pods in the openshift-kube-apiserver project to redeploy. This can take several minutes. The default cluster-wide node selector does not take effect until the pods redeploy.

Add labels to a node by using a machine set or editing the node directly:

Use a machine set to add labels to nodes managed by the machine set when a node is created:

Run the following command to add labels to a MachineSet object:

$ oc patch MachineSet <name> --type='json' -p='[{"op":"add","path":"/spec/template/spec/metadata/labels", "value":{"<key>":"<value>","<key>":"<value>"}}]' -n openshift-machine-api

Add a <key>/<value> pair for each label.

For example:

$ oc patch MachineSet ci-ln-l8nry52-f76d1-hl7m7-worker-c --type='json' -p='[{"op":"add","path":"/spec/template/spec/metadata/labels", "value":{"type":"user-node","region":"east"}}]' -n openshift-machine-api

Tip: You can alternatively apply the following YAML to add labels to a machine set:

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  name: <machineset>
  namespace: openshift-machine-api
spec:
  template:
    spec:
      metadata:
        labels:
          region: "east"
          type: "user-node"

Verify that the labels are added to the MachineSet object by using the oc edit command:

For example:

$ oc edit MachineSet abc612-msrtw-worker-us-east-1c -n openshift-machine-api

Example MachineSet object

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
  ...
spec:
  ...
  template:
    metadata:
    ...
    spec:
      metadata:
        labels:
          region: east
          type: user-node
  ...

Redeploy the nodes associated with that machine set by scaling down to 0 and scaling up the nodes:

For example:

$ oc scale --replicas=0 MachineSet ci-ln-l8nry52-f76d1-hl7m7-worker-c -n openshift-machine-api
$ oc scale --replicas=1 MachineSet ci-ln-l8nry52-f76d1-hl7m7-worker-c -n openshift-machine-api

When the nodes are ready and available, verify that the label is added to the nodes by using the oc get command:

$ oc get nodes -l <key>=<value>

For example:

$ oc get nodes -l type=user-node

Example output

NAME                                       STATUS   ROLES    AGE   VERSION
ci-ln-l8nry52-f76d1-hl7m7-worker-c-vmqzp   Ready    worker   61s   v1.18.3+002a51f

Add labels directly to a node:

Edit the Node object for the node:

$ oc label nodes <name> <key>=<value>

For example, to label a node:

$ oc label nodes ci-ln-l8nry52-f76d1-hl7m7-worker-b-tgq49 type=user-node region=east

Tip: You can alternatively apply the following YAML to add labels to a node:

kind: Node
apiVersion: v1
metadata:
  name: <node_name>
  labels:
    type: "user-node"
    region: "east"

Verify that the labels are added to the node using the oc get command:

$ oc get nodes -l <key>=<value>,<key>=<value>

For example:

$ oc get nodes -l type=user-node,region=east

Example output

NAME                                       STATUS   ROLES    AGE   VERSION
ci-ln-l8nry52-f76d1-hl7m7-worker-b-tgq49   Ready    worker   17m   v1.18.3+002a51f
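Writing the JSON patch for the oc patch step in this procedure by hand is error-prone, because the label map must be valid JSON with quoted keys and colon separators. The following Python sketch generates the patch document and a shell-quoted command line from a dictionary of labels. The function names and the machine set name `example-worker-a` are hypothetical; the patch path and namespace are the ones used in the procedure.

```python
import json
import shlex


def label_patch(labels):
    """Build the JSON patch document that sets labels in the machine set template."""
    return json.dumps(
        [{"op": "add", "path": "/spec/template/spec/metadata/labels", "value": labels}]
    )


def patch_command(machineset, labels):
    """Return the `oc patch` command line as a single shell-quoted string."""
    args = [
        "oc", "patch", "MachineSet", machineset,
        "--type=json",
        "-p", label_patch(labels),
        "-n", "openshift-machine-api",
    ]
    return " ".join(shlex.quote(arg) for arg in args)


# Hypothetical machine set name; the labels match the examples in this section.
print(patch_command("example-worker-a", {"type": "user-node", "region": "east"}))
```

The printed command can be pasted into a shell. Building the patch with `json.dumps` keeps the quoting consistent regardless of which characters the label values contain.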

4.4. Creating infrastructure machine sets for production environments