Serverless

OpenShift Serverless installation, usage, and release notes

Chapter 1. Release notes

For additional information about the OpenShift Serverless life cycle and supported platforms, refer to the Platform Life Cycle Policy.

Release notes contain information about new and deprecated features, breaking changes, and known issues. The following release notes apply to the most recent OpenShift Serverless releases on OpenShift Container Platform.

For an overview of OpenShift Serverless functionality, see About OpenShift Serverless.

OpenShift Serverless is based on the open source Knative project.

For details about the latest Knative component releases, see the Knative blog.

1.1. About API versions

API versions are an important measure of the development status of certain features and custom resources in OpenShift Serverless. Creating resources on your cluster that do not use the correct API version can cause issues in your deployment.

The OpenShift Serverless Operator automatically upgrades older resources that use deprecated versions of APIs to use the latest version. For example, if you have created resources on your cluster that use older versions of the ApiServerSource API, such as v1beta1, the OpenShift Serverless Operator automatically updates these resources to use the v1 version of the API when this is available and the v1beta1 version is deprecated.

After they have been deprecated, older versions of APIs might be removed in any upcoming release. Using deprecated versions of APIs does not cause resources to fail. However, if you try to use a version of an API that has been removed, it will cause resources to fail. Ensure that your manifests are updated to use the latest version to avoid issues.
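
As an illustration of the advice above, the following is a minimal sketch of a manifest that already targets the `v1` version of the ApiServerSource API. The resource names (`example-source`, `events-sa`, `event-display`) and the `default` namespace are placeholders chosen for this sketch, not values taken from these release notes.

```yaml
# Hypothetical ApiServerSource that uses the v1 API version instead of v1beta1.
# All names below are placeholders; replace them with your own resources.
apiVersion: sources.knative.dev/v1
kind: ApiServerSource
metadata:
  name: example-source
  namespace: default
spec:
  mode: Resource
  # Service account that is allowed to watch the listed resources.
  serviceAccountName: events-sa
  resources:
    - apiVersion: v1
      kind: Event
  # Destination for the events; assumed here to be a Knative service.
  sink:
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display
```

Keeping the `apiVersion` line current in source-controlled manifests avoids relying on the Operator's automatic upgrade, which only helps for versions that have not yet been removed.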

1.2. Generally Available and Technology Preview features

Features which are Generally Available (GA) are fully supported and are suitable for production use. Technology Preview (TP) features are experimental features and are not intended for production use. See the Technology Preview scope of support on the Red Hat Customer Portal for more information about TP features.

The following table provides information about which OpenShift Serverless features are GA and which are TP:

| Feature | 1.26 | 1.27 | 1.28 |
|---|---|---|---|
|  | GA | GA | GA |
| Quarkus functions | GA | GA | GA |
| Node.js functions | TP | TP | GA |
| TypeScript functions | TP | TP | GA |
| Python functions | - | - | TP |
| Service Mesh mTLS | GA | GA | GA |
|  | GA | GA | GA |
| HTTPS redirection | GA | GA | GA |
| Kafka broker | GA | GA | GA |
| Kafka sink | GA | GA | GA |
| Init containers support for Knative services | GA | GA | GA |
| PVC support for Knative services | GA | GA | GA |
| TLS for internal traffic | TP | TP | TP |
| Namespace-scoped brokers | - | TP | TP |
|  | - | - | TP |

1.3. Deprecated and removed features

Some features that were Generally Available (GA) or a Technology Preview (TP) in previous releases have been deprecated or removed. Deprecated functionality is still included in OpenShift Serverless and continues to be supported; however, it will be removed in a future release of this product and is not recommended for new deployments.

For the most recent list of major functionality deprecated and removed within OpenShift Serverless, refer to the following table:

| Feature | 1.20 | 1.21 | 1.22 to 1.26 | 1.27 | 1.28 |
|---|---|---|---|---|---|
|  | Deprecated | Deprecated | Removed | Removed | Removed |
|  | Deprecated | Removed | Removed | Removed | Removed |
| Serving and Eventing | - | - | - | Deprecated | Deprecated |
|  | - | - | - | - | Deprecated |

1.4. Release notes for Red Hat OpenShift Serverless 1.28

OpenShift Serverless 1.28 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.4.1. New features

- OpenShift Serverless now uses Knative Serving 1.7.

- OpenShift Serverless now uses Knative Eventing 1.7.

- OpenShift Serverless now uses Kourier 1.7.

- OpenShift Serverless now uses Knative (`kn`) CLI 1.7.

- OpenShift Serverless now uses Knative Kafka 1.7.

- The `kn func` CLI plug-in now uses `func` version 1.9.1.

- Node.js and TypeScript runtimes for OpenShift Serverless Functions are now Generally Available (GA).

- Python runtime for OpenShift Serverless Functions is now available as a Technology Preview.

- Multi-container support for Knative Serving is now available as a Technology Preview. This feature allows you to use a single Knative service to deploy a multi-container pod.

- In OpenShift Serverless 1.29 or later, the following components of Knative Eventing will be scaled down from two pods to one:

  - `imc-controller`
  - `imc-dispatcher`
  - `mt-broker-controller`
  - `mt-broker-filter`
  - `mt-broker-ingress`

- The `serverless.openshift.io/enable-secret-informer-filtering` annotation for the Serving CR is now deprecated. The annotation is valid only for Istio, and not for Kourier.

  With OpenShift Serverless 1.28, the OpenShift Serverless Operator allows injecting the environment variable `ENABLE_SECRET_INFORMER_FILTERING_BY_CERT_UID` for both `net-istio` and `net-kourier`.

  To prevent problems when upgrading from OpenShift Serverless 1.28 to some future version, users must annotate their secrets with `networking.internal.knative.dev/certificate-uid:some_cuid`.
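
To make the multi-container Technology Preview listed above more concrete, here is a minimal sketch of a Knative service whose pod template declares two containers. It assumes the upstream Knative Serving convention that only the container receiving traffic declares a port. The service name, container names, and image placeholders are hypothetical, and any feature flag needed to enable the preview is not shown.

```yaml
# Hypothetical multi-container Knative service (Technology Preview feature).
# Names and images are placeholders.
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: multi-container-example
  namespace: default
spec:
  template:
    spec:
      containers:
        # The container that serves requests is the only one declaring a port.
        - name: app
          image: <app-image>
          ports:
            - containerPort: 8080
        # A helper container running in the same pod.
        - name: sidecar
          image: <sidecar-image>
```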

1.4.2. Known issues

- Currently, runtimes for Python are not supported for OpenShift Serverless Functions on IBM Power, IBM zSystems, and IBM® LinuxONE.

  Node.js, TypeScript, and Quarkus functions are supported on these architectures.

- On the Windows platform, Python functions cannot be locally built, run, or deployed using the Source-to-Image builder due to the `app.sh` file permissions.

  To work around this problem, use the Windows Subsystem for Linux.

1.5. Release notes for Red Hat OpenShift Serverless 1.27

OpenShift Serverless 1.27 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

OpenShift Serverless 1.26 is the earliest release that is fully supported on OpenShift Container Platform 4.12. OpenShift Serverless 1.25 and older versions do not deploy on OpenShift Container Platform 4.12.

For this reason, before upgrading OpenShift Container Platform to version 4.12, first upgrade OpenShift Serverless to version 1.26 or 1.27.

1.5.1. New features

- OpenShift Serverless now uses Knative Serving 1.6.

- OpenShift Serverless now uses Knative Eventing 1.6.

- OpenShift Serverless now uses Kourier 1.6.

- OpenShift Serverless now uses Knative (`kn`) CLI 1.6.

- OpenShift Serverless now uses Knative Kafka 1.6.

- The `kn func` CLI plug-in now uses `func` 1.8.1.

- Namespace-scoped brokers are now available as a Technology Preview. Such brokers can be used, for instance, to implement role-based access control (RBAC) policies.

- `KafkaSink` now uses the `CloudEvent` binary content mode by default. The binary content mode is more efficient than the structured mode because it carries the event attributes in protocol headers instead of wrapping the whole `CloudEvent` in the body. For example, for the HTTP protocol, it uses HTTP headers.

- You can now use the gRPC framework over the HTTP/2 protocol for external traffic using the OpenShift Route on OpenShift Container Platform 4.10 and later. This improves the efficiency and speed of communication between the client and server.

- API version `v1alpha1` of the Knative Operator Serving and Eventing CRDs is deprecated in 1.27. It will be removed in future versions. Red Hat strongly recommends using the `v1beta1` version instead. This does not affect existing installations, because CRDs are updated automatically when upgrading the Serverless Operator.

- The delivery timeout feature is now enabled by default. It allows you to specify the timeout for each sent HTTP request. The feature remains a Technology Preview.
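
As a rough sketch of how the delivery timeout mentioned above might be set, the following trigger uses the `timeout` field of the upstream Knative Eventing delivery spec, expressed as an ISO 8601 duration. The trigger, broker, and subscriber names and the 30-second value are assumptions made for illustration only.

```yaml
# Hypothetical trigger with a per-request delivery timeout.
# Names and the timeout value are placeholders.
apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  name: example-trigger
  namespace: default
spec:
  broker: default
  delivery:
    # Give up on each HTTP request to the subscriber after 30 seconds.
    timeout: PT30S
  subscriber:
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display
```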

1.5.2. Fixed issues

- Previously, Knative services sometimes did not get into the `Ready` state, reporting waiting for the load balancer to be ready. This issue has been fixed.

1.5.3. Known issues

- Integrating OpenShift Serverless with Red Hat OpenShift Service Mesh causes the `net-kourier` pod to run out of memory on startup when too many secrets are present on the cluster.

- Namespace-scoped brokers might leave `ClusterRoleBindings` in the user namespace even after deletion of namespace-scoped brokers.

  If this happens, delete the `ClusterRoleBinding` named `rbac-proxy-reviews-prom-rb-knative-kafka-broker-data-plane-{{.Namespace}}` in the user namespace.

- If you use `net-istio` for Ingress and enable mTLS via SMCP using `security.dataPlane.mtls: true`, Service Mesh deploys `DestinationRules` for the `*.local` host, which does not allow `DomainMapping` for OpenShift Serverless.

  To work around this issue, enable mTLS by deploying `PeerAuthentication` instead of using `security.dataPlane.mtls: true`.
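
The `PeerAuthentication` workaround described above might look like the following sketch. It assumes the Istio `security.istio.io/v1beta1` API and strict mTLS. `<namespace>` is a placeholder for the namespace you want to protect, and the resource name `default` is a common convention rather than a requirement stated in these notes.

```yaml
# Hypothetical PeerAuthentication that enforces mTLS for one namespace,
# used instead of setting security.dataPlane.mtls: true in the SMCP.
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: default
  namespace: <namespace>
spec:
  mtls:
    # STRICT accepts only mutual TLS traffic for workloads in the namespace.
    mode: STRICT
```

Because this resource is scoped to a namespace, it does not cause Service Mesh to create the mesh-wide `DestinationRules` that conflict with `DomainMapping`.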

1.6. Release notes for Red Hat OpenShift Serverless 1.26

OpenShift Serverless 1.26 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.6.1. New features

- OpenShift Serverless Functions with Quarkus is now GA.

- OpenShift Serverless now uses Knative Serving 1.5.

- OpenShift Serverless now uses Knative Eventing 1.5.

- OpenShift Serverless now uses Kourier 1.5.

- OpenShift Serverless now uses Knative (`kn`) CLI 1.5.

- OpenShift Serverless now uses Knative Kafka 1.5.

- OpenShift Serverless now uses Knative Operator 1.3.

- The `kn func` CLI plugin now uses `func` 1.8.1.

- Persistent volume claims (PVCs) are now GA. PVCs provide permanent data storage for your Knative services.

- The new trigger filters feature is now available as a Developer Preview. It allows users to specify a set of filter expressions, where each expression evaluates to either true or false for each event.

  To enable new trigger filters, add the `new-trigger-filters: enabled` entry in the `features` section of the `KnativeEventing` type in the operator config map:

      apiVersion: operator.knative.dev/v1beta1
      kind: KnativeEventing
      ...
      ...
      spec:
        config:
          features:
            new-trigger-filters: enabled
      ...

- Knative Operator 1.3 adds the updated `v1beta1` version of the API for `operator.knative.dev`.

  To update from `v1alpha1` to `v1beta1` in your `KnativeServing` and `KnativeEventing` custom resource config maps, edit the `apiVersion` key:

  Example `KnativeServing` custom resource config map

      apiVersion: operator.knative.dev/v1beta1
      kind: KnativeServing
      ...

  Example `KnativeEventing` custom resource config map

      apiVersion: operator.knative.dev/v1beta1
      kind: KnativeEventing
      ...
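
Once the new trigger filters feature is enabled, a trigger can declare its filter expressions in the `filters` field. The sketch below assumes the upstream Knative `exact` filter dialect. The trigger name, broker name, event type, and subscriber are hypothetical values for illustration.

```yaml
# Hypothetical trigger that uses the new filters field (Developer Preview).
# All names and the event type are placeholders.
apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  name: filtered-trigger
  namespace: default
spec:
  broker: default
  filters:
    # Evaluates to true only for events whose type attribute matches exactly.
    - exact:
        type: com.example.order.created
  subscriber:
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display
```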

1.6.2. Fixed issues

- Previously, Federal Information Processing Standards (FIPS) mode was disabled for Kafka broker, Kafka source, and Kafka sink. This has been fixed, and FIPS mode is now available.

1.6.3. Known issues

- If you use `net-istio` for Ingress and enable mTLS via SMCP using `security.dataPlane.mtls: true`, Service Mesh deploys `DestinationRules` for the `*.local` host, which does not allow `DomainMapping` for OpenShift Serverless.

  To work around this issue, enable mTLS by deploying `PeerAuthentication` instead of using `security.dataPlane.mtls: true`.

1.7. Release notes for Red Hat OpenShift Serverless 1.25.0

OpenShift Serverless 1.25.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.7.1. New features

- OpenShift Serverless now uses Knative Serving 1.4.

- OpenShift Serverless now uses Knative Eventing 1.4.

- OpenShift Serverless now uses Kourier 1.4.

- OpenShift Serverless now uses Knative (`kn`) CLI 1.4.

- OpenShift Serverless now uses Knative Kafka 1.4.

- The `kn func` CLI plugin now uses `func` 1.7.0.

- Integrated development environment (IDE) plugins for creating and deploying functions are now available for Visual Studio Code and IntelliJ.

- Knative Kafka broker is now GA. Knative Kafka broker is a highly performant implementation of the Knative broker API, directly targeting Apache Kafka.

  It is recommended to use the Knative Kafka broker instead of the MT-Channel-Broker.

- Knative Kafka sink is now GA. A `KafkaSink` takes a `CloudEvent` and sends it to an Apache Kafka topic. Events can be specified in either structured or binary content modes.

- Enabling TLS for internal traffic is now available as a Technology Preview.

1.7.2. Fixed issues

- Previously, Knative Serving had an issue where the readiness probe failed if the container was restarted after a liveness probe failure. This issue has been fixed.

1.7.3. Known issues

- The Federal Information Processing Standards (FIPS) mode is disabled for Kafka broker, Kafka source, and Kafka sink.

- The `SinkBinding` object does not support custom revision names for services.

- The Knative Serving Controller pod adds a new informer to watch secrets in the cluster. The informer includes the secrets in the cache, which increases memory consumption of the controller pod.

  If the pod runs out of memory, you can work around the issue by increasing the memory limit for the deployment.

- If you use `net-istio` for Ingress and enable mTLS via SMCP using `security.dataPlane.mtls: true`, Service Mesh deploys `DestinationRules` for the `*.local` host, which does not allow `DomainMapping` for OpenShift Serverless.

  To work around this issue, enable mTLS by deploying `PeerAuthentication` instead of using `security.dataPlane.mtls: true`.

1.8. Release notes for Red Hat OpenShift Serverless 1.24.0

OpenShift Serverless 1.24.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.8.1. New features

- OpenShift Serverless now uses Knative Serving 1.3.

- OpenShift Serverless now uses Knative Eventing 1.3.

- OpenShift Serverless now uses Kourier 1.3.

- OpenShift Serverless now uses Knative `kn` CLI 1.3.

- OpenShift Serverless now uses Knative Kafka 1.3.

- The `kn func` CLI plugin now uses `func` 0.24.

- Init containers support for Knative services is now generally available (GA).

- OpenShift Serverless logic is now available as a Developer Preview. It enables defining declarative workflow models for managing serverless applications.

- You can now use the cost management service with OpenShift Serverless.

1.8.2. Fixed issues

- Integrating OpenShift Serverless with Red Hat OpenShift Service Mesh causes the `net-istio-controller` pod to run out of memory on startup when too many secrets are present on the cluster.

  It is now possible to enable secret filtering, which causes `net-istio-controller` to consider only secrets with a `networking.internal.knative.dev/certificate-uid` label, thus reducing the amount of memory needed.

- The OpenShift Serverless Functions Technology Preview now uses Cloud Native Buildpacks by default to build container images.

1.8.3. Known issues

- The Federal Information Processing Standards (FIPS) mode is disabled for Kafka broker, Kafka source, and Kafka sink.

- In OpenShift Serverless 1.23, support for KafkaBindings and the `kafka-binding` webhook was removed. However, an existing `kafkabindings.webhook.kafka.sources.knative.dev MutatingWebhookConfiguration` might remain, pointing to the `kafka-source-webhook` service, which no longer exists.

  For certain specifications of KafkaBindings on the cluster, `kafkabindings.webhook.kafka.sources.knative.dev MutatingWebhookConfiguration` might be configured to pass any create and update events to various resources, such as Deployments, Knative Services, or Jobs, through the webhook, which would then fail.

  To work around this issue, manually delete `kafkabindings.webhook.kafka.sources.knative.dev MutatingWebhookConfiguration` from the cluster after upgrading to OpenShift Serverless 1.23:

      $ oc delete mutatingwebhookconfiguration kafkabindings.webhook.kafka.sources.knative.dev

- If you use `net-istio` for Ingress and enable mTLS via SMCP using `security.dataPlane.mtls: true`, Service Mesh deploys `DestinationRules` for the `*.local` host, which does not allow `DomainMapping` for OpenShift Serverless.

  To work around this issue, enable mTLS by deploying `PeerAuthentication` instead of using `security.dataPlane.mtls: true`.

1.9. Release notes for Red Hat OpenShift Serverless 1.23.0

OpenShift Serverless 1.23.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.9.1. New features

- OpenShift Serverless now uses Knative Serving 1.2.

- OpenShift Serverless now uses Knative Eventing 1.2.

- OpenShift Serverless now uses Kourier 1.2.

- OpenShift Serverless now uses Knative (`kn`) CLI 1.2.

- OpenShift Serverless now uses Knative Kafka 1.2.

- The `kn func` CLI plugin now uses `func` 0.24.

- It is now possible to use the `kafka.eventing.knative.dev/external.topic` annotation with the Kafka broker. This annotation makes it possible to use an existing externally managed topic instead of the broker creating its own internal topic.

- The `kafka-ch-controller` and `kafka-webhook` Kafka components no longer exist. These components have been replaced by the `kafka-webhook-eventing` component.

- The OpenShift Serverless Functions Technology Preview now uses Source-to-Image (S2I) by default to build container images.
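
For the `kafka.eventing.knative.dev/external.topic` annotation mentioned above, a broker definition might look like the following sketch. The broker name and `<topic-name>` are placeholders, and the `kafka-broker-config` config map reference is an assumption based on the usual Knative Kafka broker setup rather than something stated in these release notes.

```yaml
# Hypothetical Kafka broker that reuses an externally managed topic.
# The broker name and topic name are placeholders.
apiVersion: eventing.knative.dev/v1
kind: Broker
metadata:
  name: example-broker
  namespace: default
  annotations:
    # Select the Kafka broker implementation.
    eventing.knative.dev/broker.class: Kafka
    # Use this existing topic instead of letting the broker create its own.
    kafka.eventing.knative.dev/external.topic: <topic-name>
spec:
  config:
    apiVersion: v1
    kind: ConfigMap
    name: kafka-broker-config
    namespace: knative-eventing
```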

1.9.2. Known issues

- The Federal Information Processing Standards (FIPS) mode is disabled for Kafka broker, Kafka source, and Kafka sink.

- If you delete a namespace that includes a Kafka broker, the namespace finalizer may fail to be removed if the broker’s `auth.secret.ref.name` secret is deleted before the broker.

- Running OpenShift Serverless with a large number of Knative services can cause Knative activator pods to run close to their default memory limits of 600MB. These pods might be restarted if memory consumption reaches this limit. Requests and limits for the activator deployment can be configured by modifying the `KnativeServing` custom resource:

      apiVersion: operator.knative.dev/v1beta1
      kind: KnativeServing
      metadata:
        name: knative-serving
        namespace: knative-serving
      spec:
        deployments:
        - name: activator
          resources:
          - container: activator
            requests:
              cpu: 300m
              memory: 60Mi
            limits:
              cpu: 1000m
              memory: 1000Mi

- If you are using Cloud Native Buildpacks as the local build strategy for a function, `kn func` is unable to automatically start podman or use an SSH tunnel to a remote daemon. The workaround for these issues is to have a Docker or podman daemon already running on the local development computer before deploying a function.

- On-cluster function builds currently fail for Quarkus and Golang runtimes. They work correctly for Node, Typescript, Python, and Springboot runtimes.

- If you use `net-istio` for Ingress and enable mTLS via SMCP using `security.dataPlane.mtls: true`, Service Mesh deploys `DestinationRules` for the `*.local` host, which does not allow `DomainMapping` for OpenShift Serverless.

  To work around this issue, enable mTLS by deploying `PeerAuthentication` instead of using `security.dataPlane.mtls: true`.

1.10. Release notes for Red Hat OpenShift Serverless 1.22.0

OpenShift Serverless 1.22.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.10.1. New features

- OpenShift Serverless now uses Knative Serving 1.1.

- OpenShift Serverless now uses Knative Eventing 1.1.

- OpenShift Serverless now uses Kourier 1.1.

- OpenShift Serverless now uses Knative (`kn`) CLI 1.1.

- OpenShift Serverless now uses Knative Kafka 1.1.

- The `kn func` CLI plugin now uses `func` 0.23.

- Init containers support for Knative services is now available as a Technology Preview.

- Persistent volume claim (PVC) support for Knative services is now available as a Technology Preview.

- The `knative-serving`, `knative-serving-ingress`, `knative-eventing` and `knative-kafka` system namespaces now have the `knative.openshift.io/part-of: "openshift-serverless"` label by default.

- The Knative Eventing - Kafka Broker/Trigger dashboard has been added, which allows visualizing Kafka broker and trigger metrics in the web console.

- The Knative Eventing - KafkaSink dashboard has been added, which allows visualizing KafkaSink metrics in the web console.

- The Knative Eventing - Broker/Trigger dashboard is now called Knative Eventing - Channel-based Broker/Trigger.

- The `knative.openshift.io/part-of: "openshift-serverless"` label has replaced the `knative.openshift.io/system-namespace` label.

- Naming style in Knative Serving YAML configuration files changed from camel case (`ExampleName`) to hyphen style (`example-name`). Beginning with this release, use the hyphen style notation when creating or editing Knative Serving YAML configuration files.

1.10.2. Known issues

- The Federal Information Processing Standards (FIPS) mode is disabled for Kafka broker, Kafka source, and Kafka sink.

1.11. Release notes for Red Hat OpenShift Serverless 1.21.0

OpenShift Serverless 1.21.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.11.1. New features

- OpenShift Serverless now uses Knative Serving 1.0.

- OpenShift Serverless now uses Knative Eventing 1.0.

- OpenShift Serverless now uses Kourier 1.0.

- OpenShift Serverless now uses Knative (`kn`) CLI 1.0.

- OpenShift Serverless now uses Knative Kafka 1.0.

- The `kn func` CLI plugin now uses `func` 0.21.

- The Kafka sink is now available as a Technology Preview.

- The Knative open source project has begun to deprecate camel-cased configuration keys in favor of using kebab-cased keys consistently. As a result, the `defaultExternalScheme` key, previously mentioned in the OpenShift Serverless 1.18.0 release notes, is now deprecated and replaced by the `default-external-scheme` key. Usage instructions for the key remain the same.
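
Because the usage instructions stay the same, the kebab-cased key would presumably take the place of the camel-cased one in the `KnativeServing` custom resource. The fragment below is a sketch under that assumption, mirroring the snippet from the 1.18.0 release notes. The `"https"` value is only an example.

```yaml
# Fragment of a KnativeServing custom resource (other fields elided).
# Uses the kebab-cased key that replaces defaultExternalScheme.
spec:
  config:
    network:
      default-external-scheme: "https"
```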

1.11.2. Fixed issues

- In OpenShift Serverless 1.20.0, there was an event delivery issue affecting the use of `kn event send` to send events to a service. This issue is now fixed.

- In OpenShift Serverless 1.20.0 (`func` 0.20), TypeScript functions created with the `http` template failed to deploy on the cluster. This issue is now fixed.

- In OpenShift Serverless 1.20.0 (`func` 0.20), deploying a function using the `gcr.io` registry failed with an error. This issue is now fixed.

- In OpenShift Serverless 1.20.0 (`func` 0.20), creating a Springboot function project directory with the `kn func create` command and then running the `kn func build` command failed with an error message. This issue is now fixed.

- In OpenShift Serverless 1.19.0 (`func` 0.19), some runtimes were unable to build a function by using podman. This issue is now fixed.

1.11.3. Known issues

- Currently, the domain mapping controller cannot process the URI of a broker, because the URI contains a path, which is not currently supported.

  This means that, if you want to use a `DomainMapping` custom resource (CR) to map a custom domain to a broker, you must configure the `DomainMapping` CR with the broker’s ingress service, and append the exact path of the broker to the custom domain:

  Example `DomainMapping` CR

      apiVersion: serving.knative.dev/v1alpha1
      kind: DomainMapping
      metadata:
        name: <domain-name>
        namespace: knative-eventing
      spec:
        ref:
          name: broker-ingress
          kind: Service
          apiVersion: v1

  The URI for the broker is then `<domain-name>/<broker-namespace>/<broker-name>`.

1.12. Release notes for Red Hat OpenShift Serverless 1.20.0

OpenShift Serverless 1.20.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.12.1. New features

- OpenShift Serverless now uses Knative Serving 0.26.

- OpenShift Serverless now uses Knative Eventing 0.26.

- OpenShift Serverless now uses Kourier 0.26.

- OpenShift Serverless now uses Knative (`kn`) CLI 0.26.

- OpenShift Serverless now uses Knative Kafka 0.26.

- The `kn func` CLI plugin now uses `func` 0.20.

- The Kafka broker is now available as a Technology Preview.

  Important: The Kafka broker, which is currently in Technology Preview, is not supported on FIPS.

- The `kn event` plugin is now available as a Technology Preview.

- The `--min-scale` and `--max-scale` flags for the `kn service create` command have been deprecated. Use the `--scale-min` and `--scale-max` flags instead.

1.12.2. Known issues

- OpenShift Serverless deploys Knative services with a default address that uses HTTPS. When sending an event to a resource inside the cluster, the sender does not have the cluster certificate authority (CA) configured. This causes event delivery to fail, unless the cluster uses globally accepted certificates.

  For example, an event delivery to a publicly accessible address works:

      $ kn event send --to-url https://ce-api.foo.example.com/

  On the other hand, this delivery fails if the service uses a public address with an HTTPS certificate issued by a custom CA:

      $ kn event send --to Service:serving.knative.dev/v1:event-display

  Sending an event to other addressable objects, such as brokers or channels, is not affected by this issue and works as expected.

- The Kafka broker currently does not work on a cluster with Federal Information Processing Standards (FIPS) mode enabled.

- If you create a Springboot function project directory with the `kn func create` command, subsequent running of the `kn func build` command fails with this error message:

      [analyzer] no stack metadata found at path ''
      [analyzer] ERROR: failed to : set API for buildpack 'paketo-buildpacks/ca-certificates@3.0.2': buildpack API version '0.7' is incompatible with the lifecycle

  As a workaround, you can change the `builder` property to `gcr.io/paketo-buildpacks/builder:base` in the function configuration file `func.yaml`.

- Deploying a function using the `gcr.io` registry fails with this error message:

      Error: failed to get credentials: failed to verify credentials: status code: 404

  As a workaround, use a different registry than `gcr.io`, such as `quay.io` or `docker.io`.

- TypeScript functions created with the `http` template fail to deploy on the cluster.

  As a workaround, in the `func.yaml` file, replace the following section:

      buildEnvs: []

  with this:

      buildEnvs:
      - name: BP_NODE_RUN_SCRIPTS
        value: build

- In `func` version 0.20, some runtimes might be unable to build a function by using podman. You might see an error message similar to the following:

      ERROR: failed to image: error during connect: Get "http://%2Fvar%2Frun%2Fdocker.sock/v1.40/info": EOF

  The following workaround exists for this issue:

  1. Update the podman service by adding `--time=0` to the service `ExecStart` definition:

     Example service configuration

         ExecStart=/usr/bin/podman $LOGGING system service --time=0

  2. Restart the podman service by running the following commands:

         $ systemctl --user daemon-reload
         $ systemctl restart --user podman.socket

  Alternatively, you can expose the podman API by using TCP:

      $ podman system service --time=0 tcp:127.0.0.1:5534 &
      export DOCKER_HOST=tcp://127.0.0.1:5534

1.13. Release notes for Red Hat OpenShift Serverless 1.19.0

OpenShift Serverless 1.19.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.13.1. New features

- OpenShift Serverless now uses Knative Serving 0.25.

- OpenShift Serverless now uses Knative Eventing 0.25.

- OpenShift Serverless now uses Kourier 0.25.

- OpenShift Serverless now uses Knative (`kn`) CLI 0.25.

- OpenShift Serverless now uses Knative Kafka 0.25.

- The `kn func` CLI plugin now uses `func` 0.19.

- The `KafkaBinding` API is deprecated in OpenShift Serverless 1.19.0 and will be removed in a future release.

- HTTPS redirection is now supported and can be configured either globally for a cluster or per each Knative service.

1.13.2. Fixed issues

- In previous releases, the Kafka channel dispatcher waited only for the local commit to succeed before responding, which might have caused lost events in the case of an Apache Kafka node failure. The Kafka channel dispatcher now waits for all in-sync replicas to commit before responding.

1.13.3. Known issues

- In `func` version 0.19, some runtimes might be unable to build a function by using podman. You might see an error message similar to the following:

      ERROR: failed to image: error during connect: Get "http://%2Fvar%2Frun%2Fdocker.sock/v1.40/info": EOF

  The following workaround exists for this issue:

  1. Update the podman service by adding `--time=0` to the service `ExecStart` definition:

     Example service configuration

         ExecStart=/usr/bin/podman $LOGGING system service --time=0

  2. Restart the podman service by running the following commands:

         $ systemctl --user daemon-reload
         $ systemctl restart --user podman.socket

  Alternatively, you can expose the podman API by using TCP:

      $ podman system service --time=0 tcp:127.0.0.1:5534 &
      export DOCKER_HOST=tcp://127.0.0.1:5534

1.14. Release notes for Red Hat OpenShift Serverless 1.18.0

OpenShift Serverless 1.18.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.14.1. New features

- OpenShift Serverless now uses Knative Serving 0.24.0.

- OpenShift Serverless now uses Knative Eventing 0.24.0.

- OpenShift Serverless now uses Kourier 0.24.0.

- OpenShift Serverless now uses Knative (`kn`) CLI 0.24.0.

- OpenShift Serverless now uses Knative Kafka 0.24.7.

- The `kn func` CLI plugin now uses `func` 0.18.0.

- In the upcoming OpenShift Serverless 1.19.0 release, the URL scheme of external routes will default to HTTPS for enhanced security.

  If you do not want this change to apply for your workloads, you can override the default setting before upgrading to 1.19.0, by adding the following YAML to your `KnativeServing` custom resource (CR):

      ...
      spec:
        config:
          network:
            defaultExternalScheme: "http"
      ...

  If you want the change to apply in 1.18.0 already, add the following YAML:

      ...
      spec:
        config:
          network:
            defaultExternalScheme: "https"
      ...

- In the upcoming OpenShift Serverless 1.19.0 release, the default service type by which the Kourier Gateway is exposed will be `ClusterIP` and not `LoadBalancer`.

  If you do not want this change to apply to your workloads, you can override the default setting before upgrading to 1.19.0, by adding the following YAML to your `KnativeServing` custom resource (CR):

      ...
      spec:
        ingress:
          kourier:
            service-type: LoadBalancer
      ...

- You can now use `emptyDir` volumes with OpenShift Serverless. See the OpenShift Serverless documentation about Knative Serving for details.

- Rust templates are now available when you create a function using `kn func`.
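
To illustrate the `emptyDir` support mentioned above, the following sketch shows a Knative service that mounts an `emptyDir` volume as scratch space. The service name, volume name, mount path, and image placeholder are hypothetical. Whether an additional feature flag must be enabled is not stated in these release notes, so check the Knative Serving documentation before relying on this.

```yaml
# Hypothetical Knative service that mounts an emptyDir volume.
# Names, the mount path, and the image are placeholders.
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: emptydir-example
  namespace: default
spec:
  template:
    spec:
      containers:
        - image: <image>
          volumeMounts:
            # Scratch space that lives only as long as the pod.
            - name: scratch
              mountPath: /data
      volumes:
        - name: scratch
          emptyDir: {}
```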

1.14.2. Fixed issues

- The prior 1.4 version of Camel-K was not compatible with OpenShift Serverless 1.17.0. The issue in Camel-K has been fixed, and Camel-K version 1.4.1 can be used with OpenShift Serverless 1.17.0.

- Previously, if you created a new subscription for a Kafka channel, or a new Kafka source, a delay was possible in the Kafka data plane becoming ready to dispatch messages after the newly created subscription or sink reported a ready status.

  As a result, messages that were sent during the time when the data plane was not reporting a ready status might not have been delivered to the subscriber or sink.

  In OpenShift Serverless 1.18.0, the issue is fixed and the initial messages are no longer lost. For more information about the issue, see Knowledgebase Article #6343981.

1.14.3. Known issues

- Older versions of the Knative `kn` CLI might use older versions of the Knative Serving and Knative Eventing APIs. For example, version 0.23.2 of the `kn` CLI uses the `v1alpha1` API version.

  On the other hand, newer releases of OpenShift Serverless might no longer support older API versions. For example, OpenShift Serverless 1.18.0 no longer supports version `v1alpha1` of the `kafkasources.sources.knative.dev` API.

  Consequently, using an older version of the Knative `kn` CLI with a newer OpenShift Serverless might produce an error because the `kn` CLI cannot find the outdated API. For example, version 0.23.2 of the `kn` CLI does not work with OpenShift Serverless 1.18.0.

  To avoid issues, use the latest `kn` CLI version available for your OpenShift Serverless release. For OpenShift Serverless 1.18.0, use Knative `kn` CLI 0.24.0.

1.15. Release Notes for Red Hat OpenShift Serverless 1.17.0

OpenShift Serverless 1.17.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.15.1. New features

- OpenShift Serverless now uses Knative Serving 0.23.0.

- OpenShift Serverless now uses Knative Eventing 0.23.0.

- OpenShift Serverless now uses Kourier 0.23.0.

- OpenShift Serverless now uses Knative kn CLI 0.23.0.

- OpenShift Serverless now uses Knative Kafka 0.23.0.

- The kn func CLI plug-in now uses func 0.17.0.

- In the upcoming OpenShift Serverless 1.19.0 release, the URL scheme of external routes will default to HTTPS for enhanced security.

If you do not want this change to apply for your workloads, you can override the default setting before upgrading to 1.19.0, by adding the following YAML to your KnativeServing custom resource (CR):

    ...
    spec:
      config:
        network:
          defaultExternalScheme: "http"
    ...

- mTLS functionality is now Generally Available (GA).

- TypeScript templates are now available when you create a function using kn func.

- Changes to API versions in Knative Eventing 0.23.0:

- The v1alpha1 version of the KafkaChannel API, which was deprecated in OpenShift Serverless version 1.14.0, has been removed. If the ChannelTemplateSpec parameters of your config maps contain references to this older version, you must update this part of the spec to use the correct API version.

-

The

1.15.2. Known issues

- If you try to use an older version of the Knative kn CLI with a newer OpenShift Serverless release, the API is not found and an error occurs.

For example, if you use the 1.16.0 release of the kn CLI, which uses version 0.22.0, with the 1.17.0 OpenShift Serverless release, which uses the 0.23.0 versions of the Knative Serving and Knative Eventing APIs, the CLI does not work because it continues to look for the outdated 0.22.0 API versions.

Ensure that you are using the latest kn CLI version for your OpenShift Serverless release to avoid issues.

- Kafka channel metrics are not monitored or shown in the corresponding web console dashboard in this release. This is due to a breaking change in the Kafka dispatcher reconciling process.

If you create a new subscription for a Kafka channel, or a new Kafka source, there might be a delay in the Kafka data plane becoming ready to dispatch messages after the newly created subscription or sink reports a ready status.

As a result, messages that are sent during the time when the data plane is not reporting a ready status might not be delivered to the subscriber or sink.

For more information about this issue and possible workarounds, see Knowledge Article #6343981.

The Camel-K 1.4 release is not compatible with OpenShift Serverless version 1.17.0. This is because Camel-K 1.4 uses APIs that were removed in Knative version 0.23.0. There is currently no workaround available for this issue. If you need to use Camel-K 1.4 with OpenShift Serverless, do not upgrade to OpenShift Serverless version 1.17.0.

Note: The issue has been fixed, and Camel-K version 1.4.1 is compatible with OpenShift Serverless 1.17.0.

1.16. Release Notes for Red Hat OpenShift Serverless 1.16.0

OpenShift Serverless 1.16.0 is now available. New features, changes, and known issues that pertain to OpenShift Serverless on OpenShift Container Platform are included in this topic.

1.16.1. New features

- OpenShift Serverless now uses Knative Serving 0.22.0.

- OpenShift Serverless now uses Knative Eventing 0.22.0.

- OpenShift Serverless now uses Kourier 0.22.0.

- OpenShift Serverless now uses Knative kn CLI 0.22.0.

- OpenShift Serverless now uses Knative Kafka 0.22.0.

- The kn func CLI plug-in now uses func 0.16.0.

- The kn func emit command has been added to the functions kn plug-in. You can use this command to send events to test locally deployed functions.

1.16.2. Known issues

- You must upgrade OpenShift Container Platform to version 4.6.30, 4.7.11, or higher before upgrading to OpenShift Serverless 1.16.0.

- The AMQ Streams Operator might prevent the installation or upgrade of the OpenShift Serverless Operator. If this happens, the following error is thrown by Operator Lifecycle Manager (OLM):

    WARNING: found multiple channel heads: [amqstreams.v1.7.2 amqstreams.v1.6.2], please check the `replaces`/`skipRange` fields of the operator bundles.

You can fix this issue by uninstalling the AMQ Streams Operator before installing or upgrading the OpenShift Serverless Operator. You can then reinstall the AMQ Streams Operator.

- If Service Mesh is enabled with mTLS, metrics for Knative Serving are disabled by default because Service Mesh prevents Prometheus from scraping metrics. For instructions on enabling Knative Serving metrics for use with Service Mesh and mTLS, see the "Integrating Service Mesh with OpenShift Serverless" section of the Serverless documentation.

If you deploy Service Mesh CRs with the Istio ingress enabled, you might see the following warning in the istio-ingressgateway pod:

    2021-05-02T12:56:17.700398Z warning envoy config [external/envoy/source/common/config/grpc_subscription_impl.cc:101] gRPC config for type.googleapis.com/envoy.api.v2.Listener rejected: Error adding/updating listener(s) 0.0.0.0_8081: duplicate listener 0.0.0.0_8081 found

Your Knative services might also not be accessible.

You can use the following workaround to fix this issue by recreating the knative-local-gateway service:

Delete the existing knative-local-gateway service in the istio-system namespace:

    $ oc delete services -n istio-system knative-local-gateway

Create and apply a knative-local-gateway service that contains the following YAML:

    apiVersion: v1
    kind: Service
    metadata:
      name: knative-local-gateway
      namespace: istio-system
      labels:
        experimental.istio.io/disable-gateway-port-translation: "true"
    spec:
      type: ClusterIP
      selector:
        istio: ingressgateway
      ports:
        - name: http2
          port: 80
          targetPort: 8081

If you have 1000 Knative services on a cluster, and then perform a reinstall or upgrade of Knative Serving, there is a delay when you create the first new service after the KnativeServing custom resource (CR) becomes Ready.

The 3scale-kourier-control service reconciles all previously existing Knative services before processing the creation of a new service, which causes the new service to spend approximately 800 seconds in an IngressNotConfigured or Unknown state before the state updates to Ready.

If you create a new subscription for a Kafka channel, or a new Kafka source, there might be a delay in the Kafka data plane becoming ready to dispatch messages after the newly created subscription or sink reports a ready status.

As a result, messages that are sent during the time when the data plane is not reporting a ready status might not be delivered to the subscriber or sink.

For more information about this issue and possible workarounds, see Knowledge Article #6343981.

Chapter 2. Discover

2.1. About OpenShift Serverless

OpenShift Serverless provides Kubernetes native building blocks that enable developers to create and deploy serverless, event-driven applications on OpenShift Container Platform. OpenShift Serverless is based on the open source Knative project, which provides portability and consistency for hybrid and multi-cloud environments by enabling an enterprise-grade serverless platform.

Because OpenShift Serverless releases on a different cadence from OpenShift Container Platform, the OpenShift Serverless documentation does not maintain separate documentation sets for minor versions of the product. The current documentation set applies to all currently supported versions of OpenShift Serverless unless version-specific limitations are called out in a particular topic or for a particular feature.

For additional information about the OpenShift Serverless life cycle and supported platforms, refer to the Platform Life Cycle Policy.

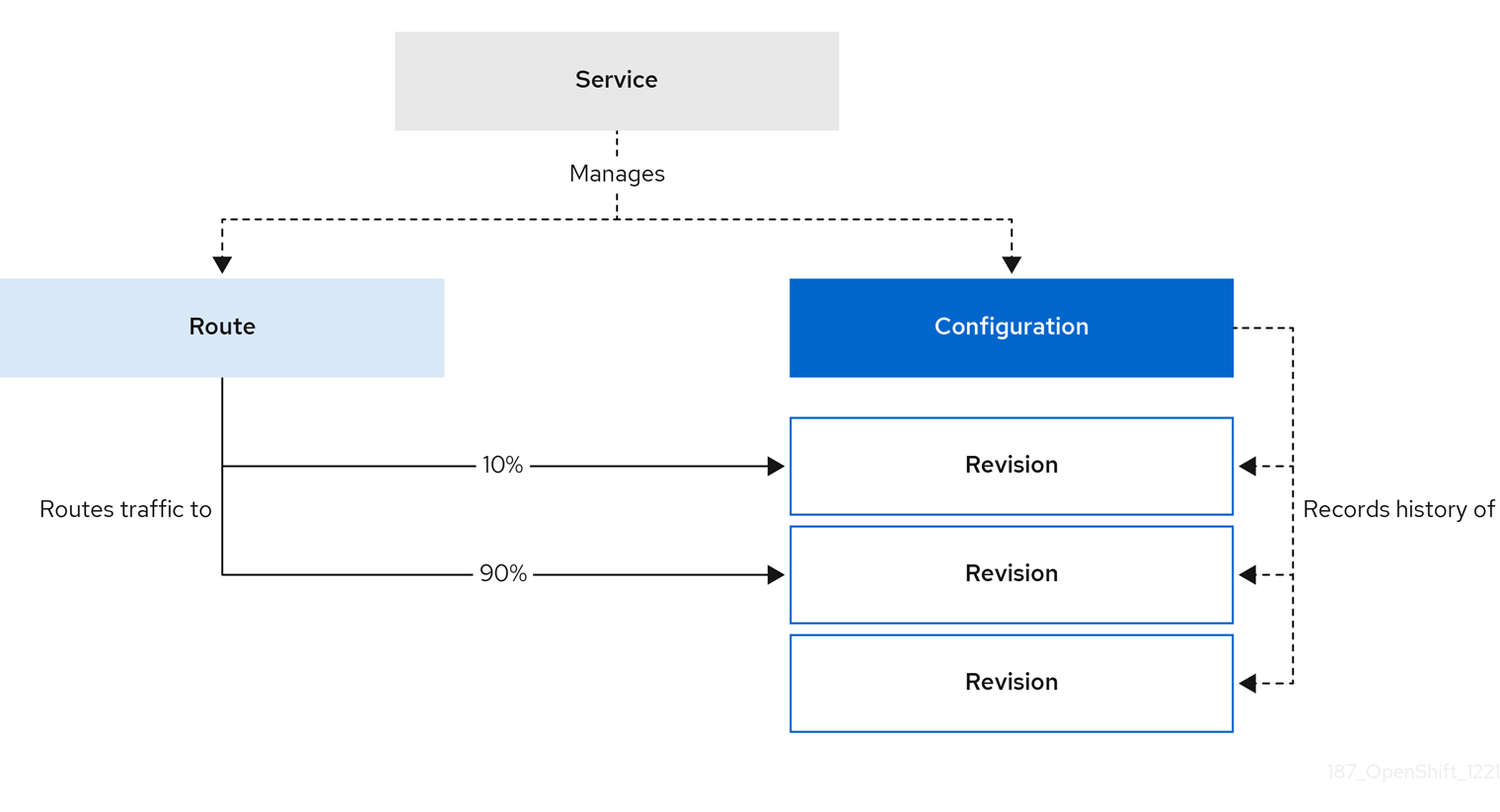

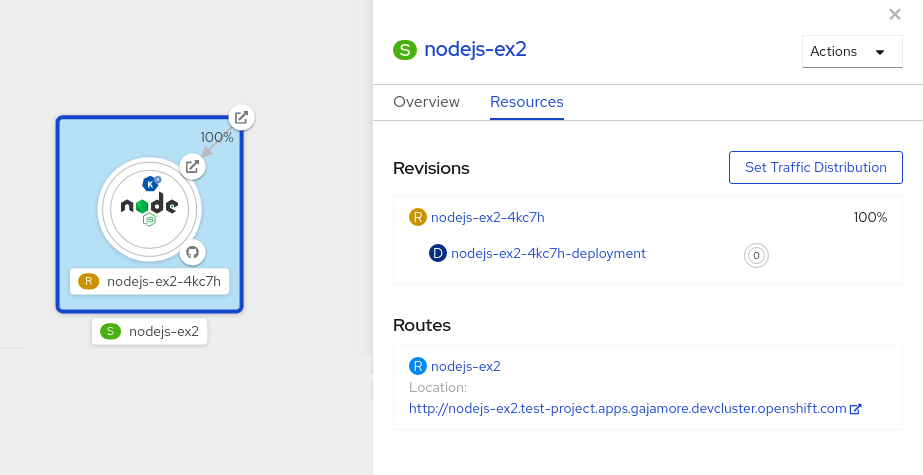

2.1.1. Knative Serving

Knative Serving supports developers who want to create, deploy, and manage cloud-native applications. It provides a set of objects as Kubernetes custom resource definitions (CRDs) that define and control the behavior of serverless workloads on an OpenShift Container Platform cluster.

Developers use these CRDs to create custom resource (CR) instances that can be used as building blocks to address complex use cases. For example:

- Rapidly deploying serverless containers.

- Automatically scaling pods.

2.1.1.1. Knative Serving resources

- Service

-

The

service.serving.knative.devCRD automatically manages the life cycle of your workload to ensure that the application is deployed and reachable through the network. It creates a route, a configuration, and a new revision for each change to a user created service, or custom resource. Most developer interactions in Knative are carried out by modifying services. - Revision

-

The

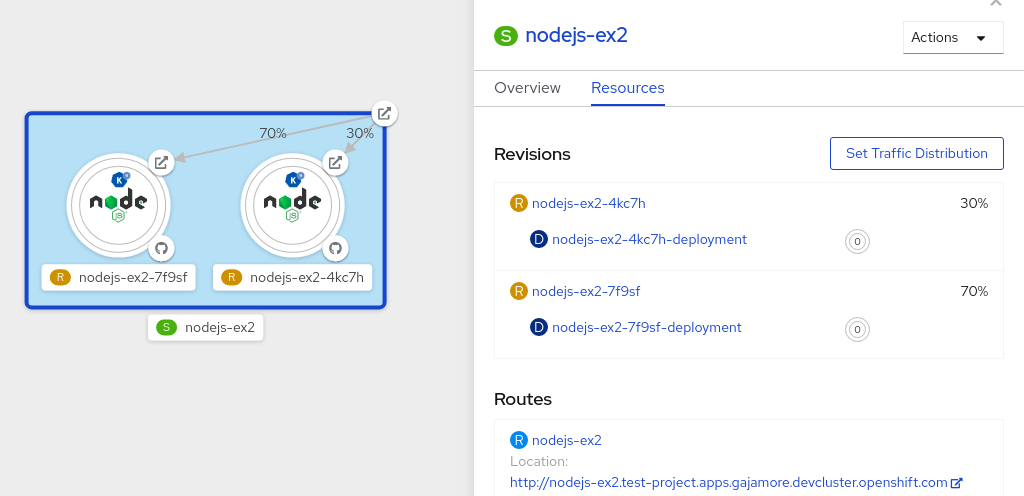

revision.serving.knative.devCRD is a point-in-time snapshot of the code and configuration for each modification made to the workload. Revisions are immutable objects and can be retained for as long as necessary. - Route

-

The

route.serving.knative.devCRD maps a network endpoint to one or more revisions. You can manage the traffic in several ways, including fractional traffic and named routes. - Configuration

-

The

configuration.serving.knative.devCRD maintains the desired state for your deployment. It provides a clean separation between code and configuration. Modifying a configuration creates a new revision.
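The relationship between these resources can be illustrated with a minimal Knative service manifest. The following is a sketch only: the service name, namespace, container image, and environment variable are hypothetical placeholders and do not come from this document. Applying a manifest like this would cause Knative Serving to create the corresponding route, configuration, and an initial revision.

```yaml
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: hello                 # hypothetical service name
  namespace: default
spec:
  template:                   # changes under template produce a new revision
    spec:
      containers:
        - image: registry.example.com/demo/hello:latest   # hypothetical image
          env:
            - name: TARGET
              value: "Serverless"
  traffic:                    # handled by the route
    - latestRevision: true    # send all traffic to the newest ready revision
      percent: 100
```

To split traffic between revisions, the traffic list can contain several entries whose percent values add up to 100.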

2.1.2. Knative Eventing

Knative Eventing on OpenShift Container Platform enables developers to use an event-driven architecture with serverless applications. An event-driven architecture is based on the concept of decoupled relationships between event producers and event consumers.

Event producers create events, and event sinks, or consumers, receive events. Knative Eventing uses standard HTTP POST requests to send and receive events between event producers and sinks. These events conform to the CloudEvents specifications, which enables creating, parsing, sending, and receiving events in any programming language.

Knative Eventing supports the following use cases:

- Publish an event without creating a consumer

- You can send events to a broker as an HTTP POST, and use binding to decouple the destination configuration from your application that produces events.

- Consume an event without creating a publisher

- You can use a trigger to consume events from a broker based on event attributes. The application receives events as an HTTP POST.

To enable delivery to multiple types of sinks, Knative Eventing defines the following generic interfaces that can be implemented by multiple Kubernetes resources:

- Addressable resources

- Able to receive and acknowledge an event delivered over HTTP to an address defined in the status.address.url field of the event. The Kubernetes Service resource also satisfies the addressable interface.

- Callable resources

- Able to receive an event delivered over HTTP and transform it, returning 0 or 1 new events in the HTTP response payload. These returned events may be further processed in the same way that events from an external event source are processed.

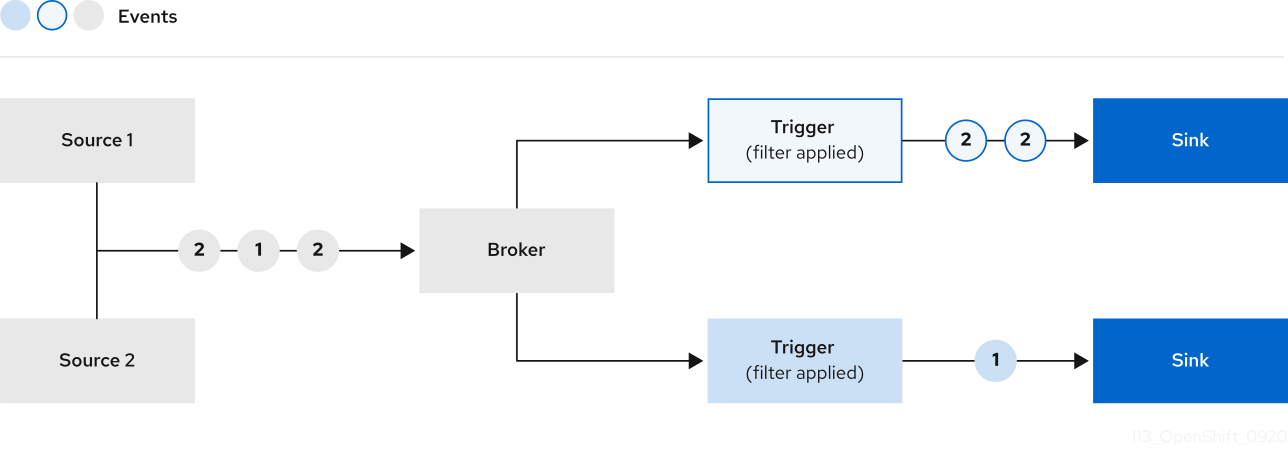

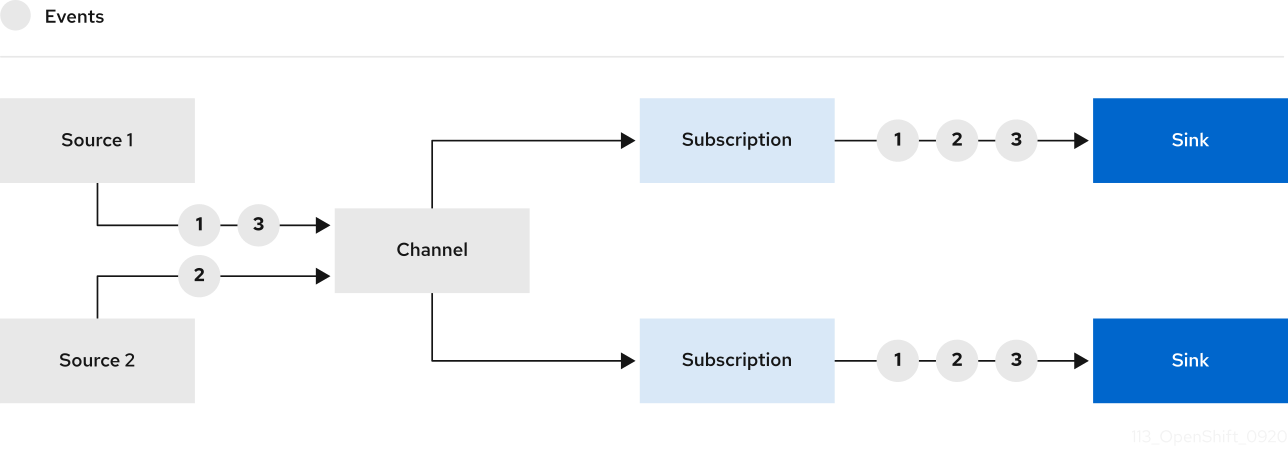

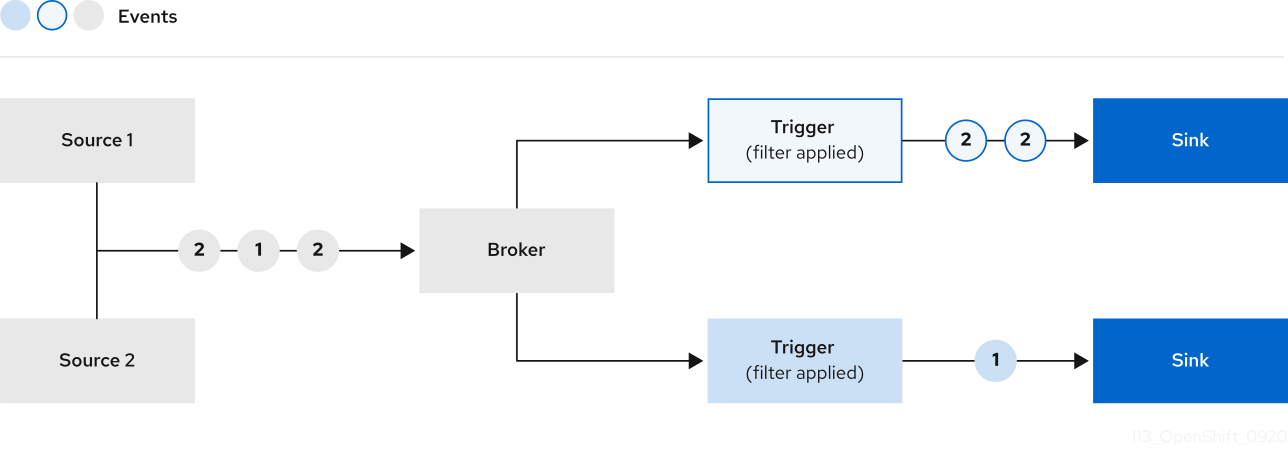

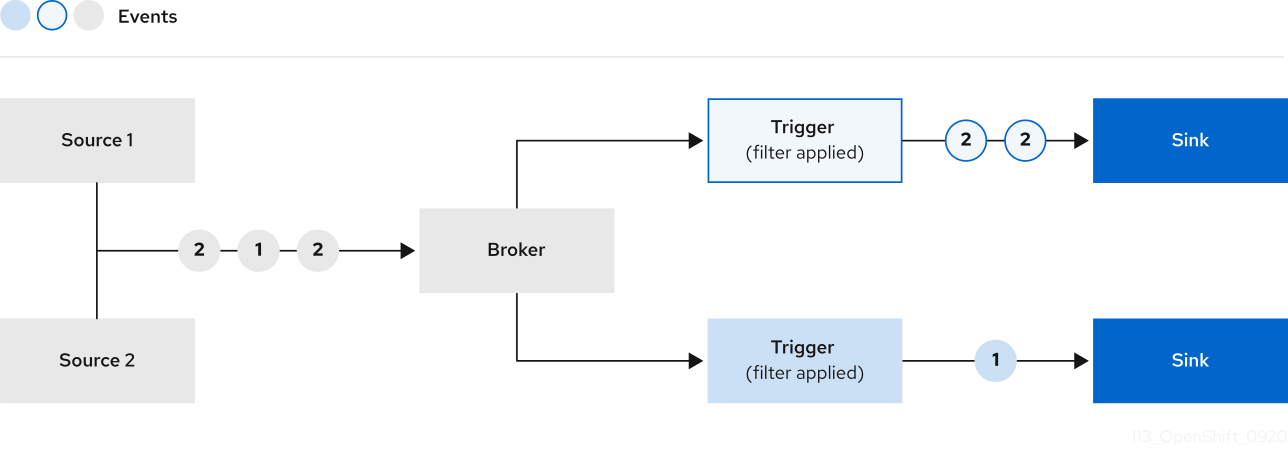

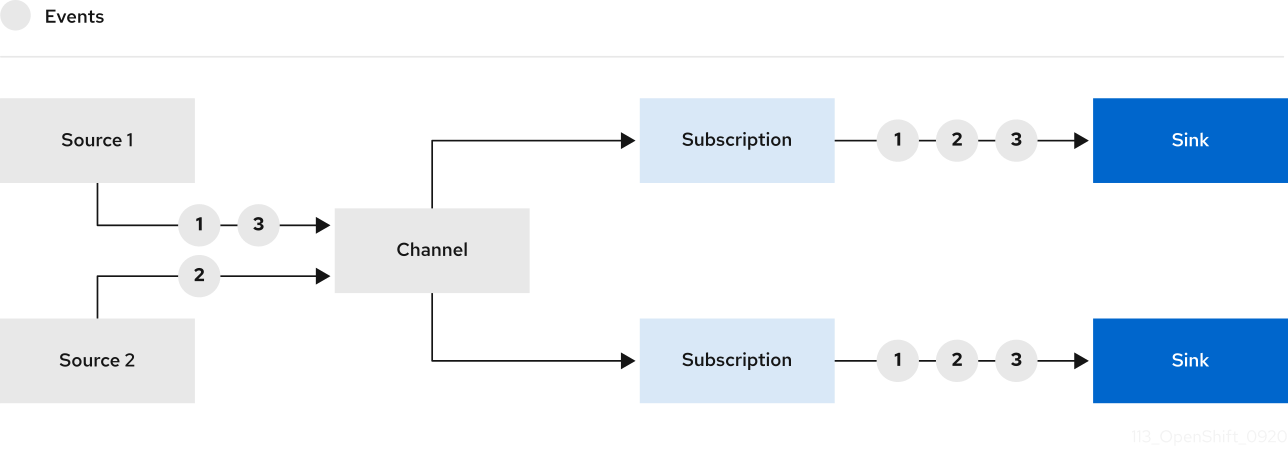

You can propagate an event from an event source to multiple event sinks by using:

- Channels and subscriptions, or

- Brokers and Triggers.

2.1.3. Supported configurations

The set of supported features, configurations, and integrations for OpenShift Serverless, current and past versions, are available at the Supported Configurations page.

2.1.4. Scalability and performance

OpenShift Serverless has been tested with a configuration of 3 main nodes and 3 worker nodes, each of which has 64 CPUs, 457 GB of memory, and 394 GB of storage.

The maximum number of Knative services that can be created using this configuration is 3,000. This corresponds to the OpenShift Container Platform Kubernetes services limit of 10,000: because 1 Knative service creates 3 Kubernetes services, 3,000 Knative services account for 9,000 Kubernetes services.

The average scale from zero response time was approximately 3.4 seconds, with a maximum response time of 8 seconds, and a 99.9th percentile of 4.5 seconds for a simple Quarkus application. These times might vary depending on the application and the runtime of the application.

2.2. About OpenShift Serverless Functions

OpenShift Serverless Functions enables developers to create and deploy stateless, event-driven functions as a Knative service on OpenShift Container Platform. The kn func CLI is provided as a plugin for the Knative kn CLI. You can use the kn func CLI to create, build, and deploy the container image as a Knative service on the cluster.

2.2.1. Included runtimes

OpenShift Serverless Functions provides templates that can be used to create basic functions for the following runtimes:

2.2.2. Next steps

2.3. Event sources

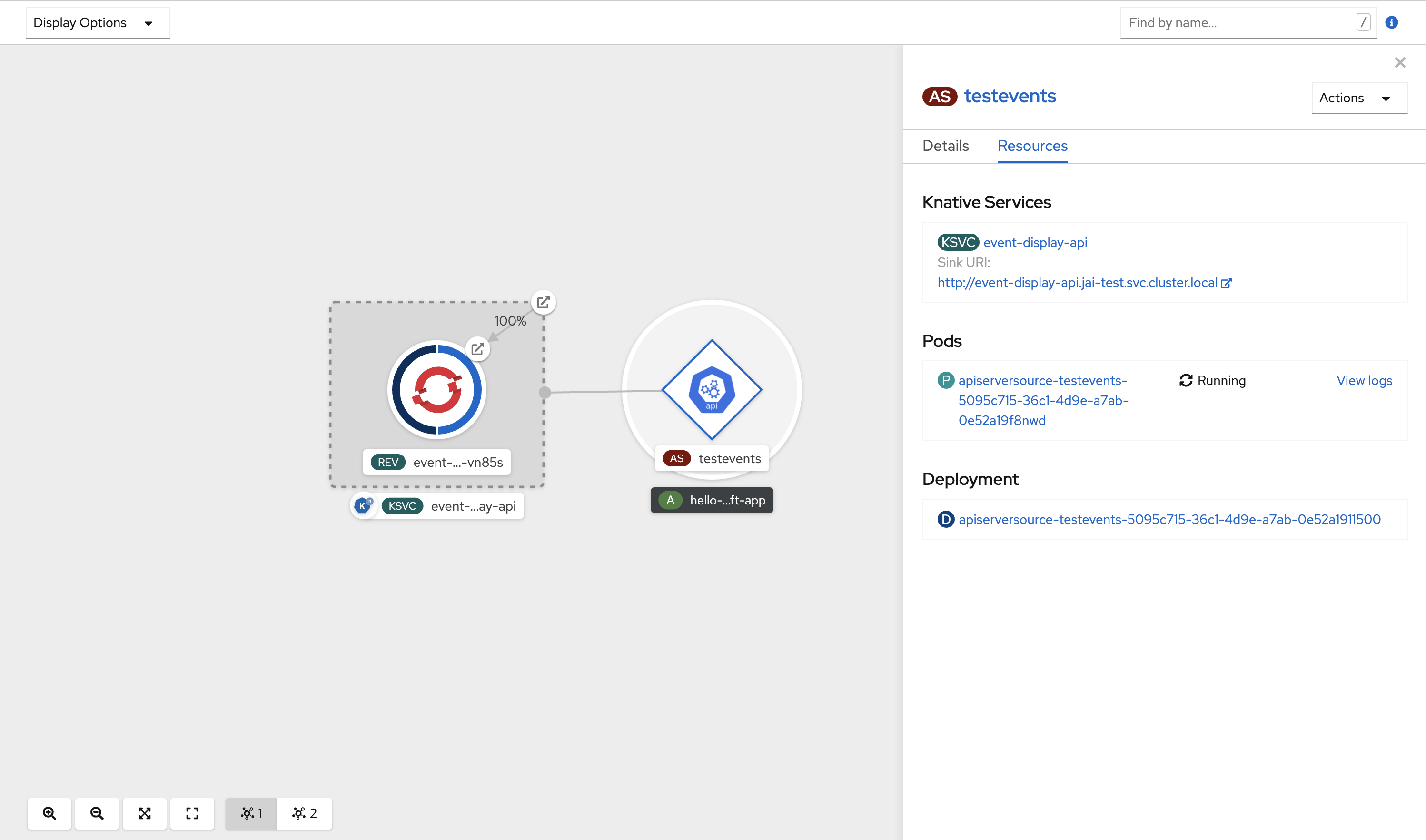

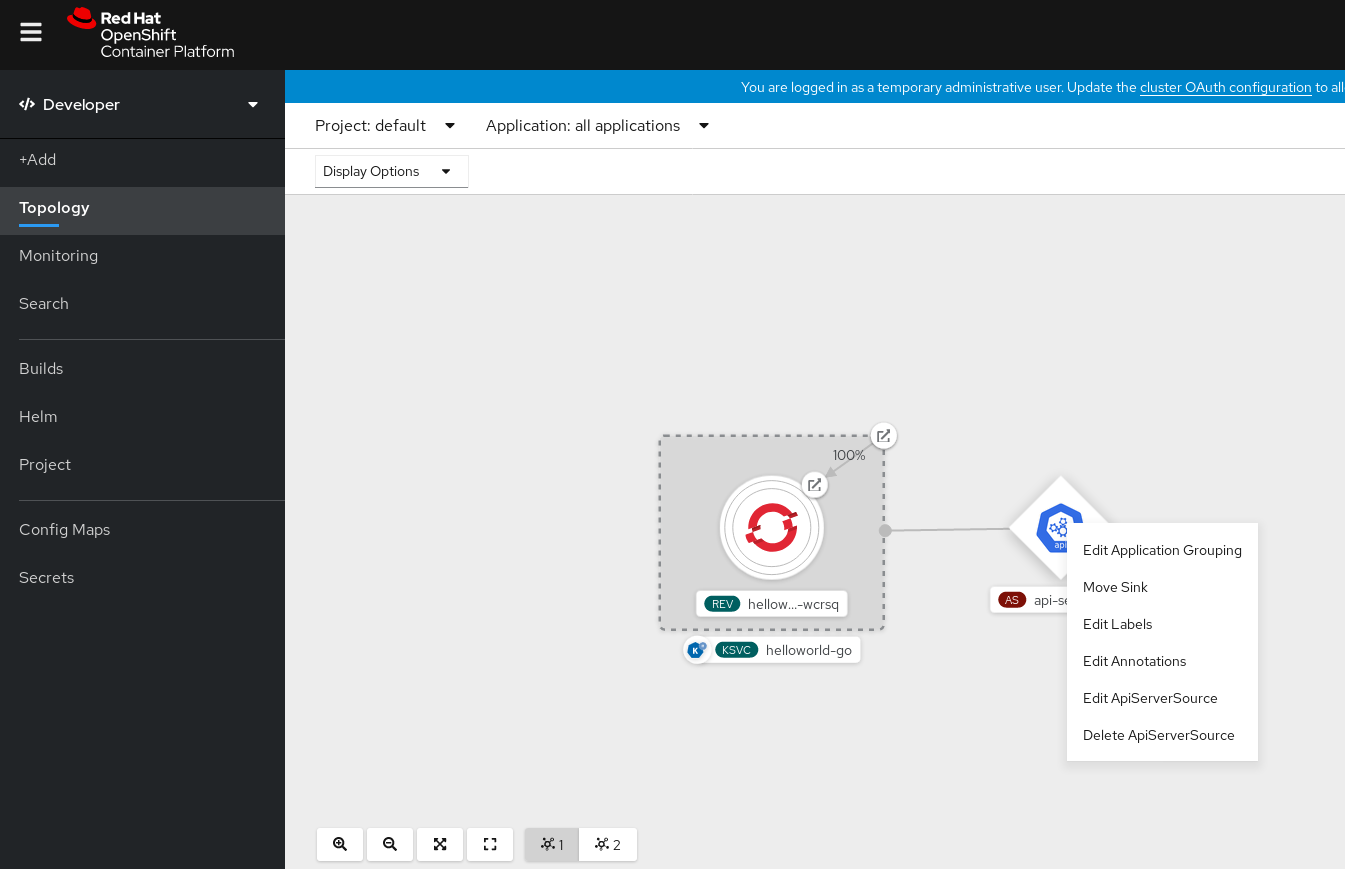

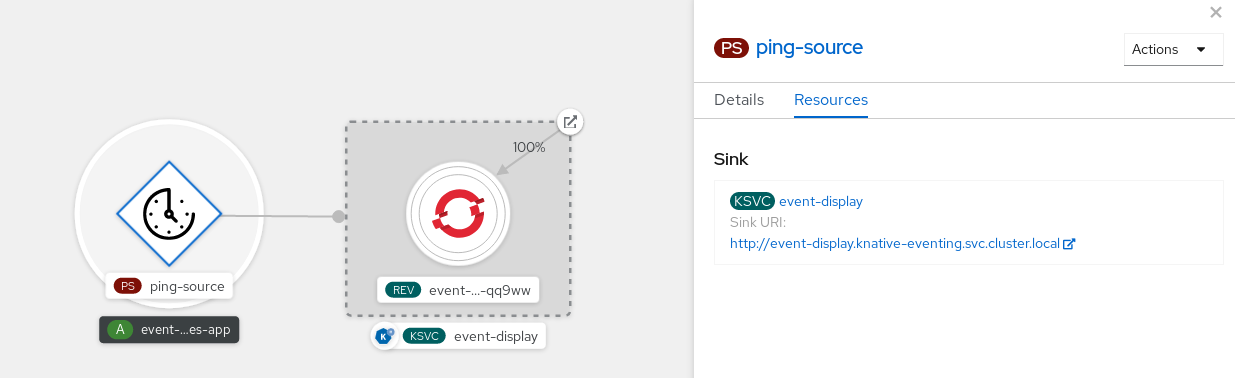

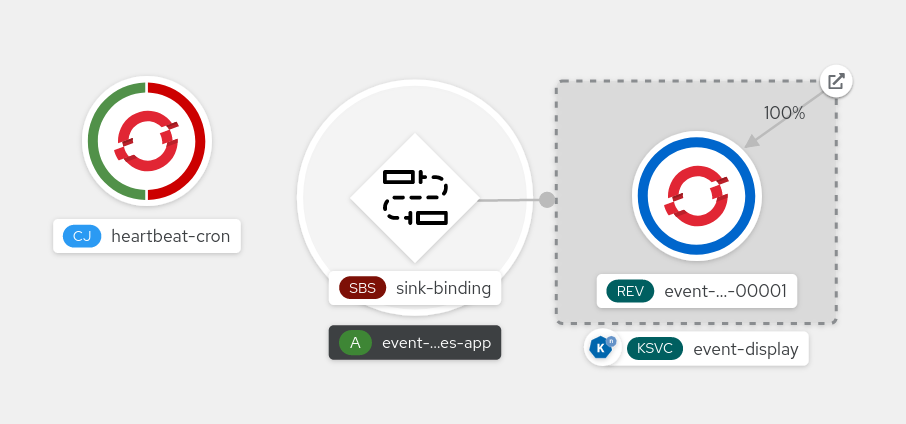

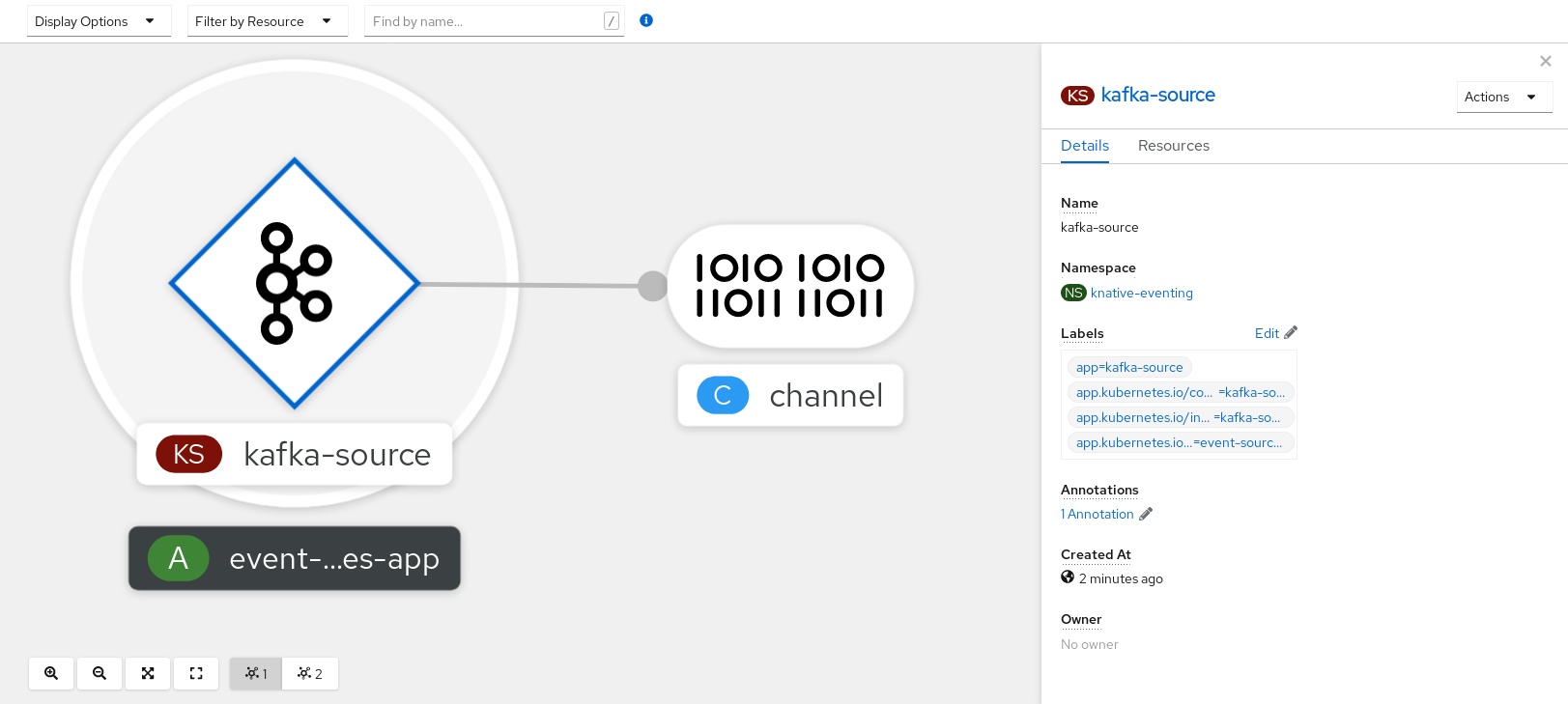

A Knative event source can be any Kubernetes object that generates or imports cloud events, and relays those events to another endpoint, known as a sink. Sourcing events is critical to developing a distributed system that reacts to events.

You can create and manage Knative event sources by using the Developer perspective in the OpenShift Container Platform web console, the Knative (kn) CLI, or by applying YAML files.

Currently, OpenShift Serverless supports the following event source types:

- API server source

- Brings Kubernetes API server events into Knative. The API server source sends a new event each time a Kubernetes resource is created, updated or deleted.

- Ping source

- Produces events with a fixed payload on a specified cron schedule.

- Kafka event source

- Connects a Kafka cluster to a sink as an event source.

You can also create a custom event source.
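As an illustration of an event source that relays events to a sink, the following sketch defines a ping source. The resource name, schedule, payload, and the event-display sink service are assumptions made for this example and are not taken from this document.

```yaml
apiVersion: sources.knative.dev/v1
kind: PingSource
metadata:
  name: example-ping          # hypothetical name
  namespace: default
spec:
  schedule: "*/2 * * * *"     # cron schedule: every two minutes
  contentType: "application/json"
  data: '{"message": "Hello world!"}'   # fixed payload sent with each event
  sink:
    ref:                      # the sink that receives the events
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display     # hypothetical Knative service
```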

2.4. Brokers

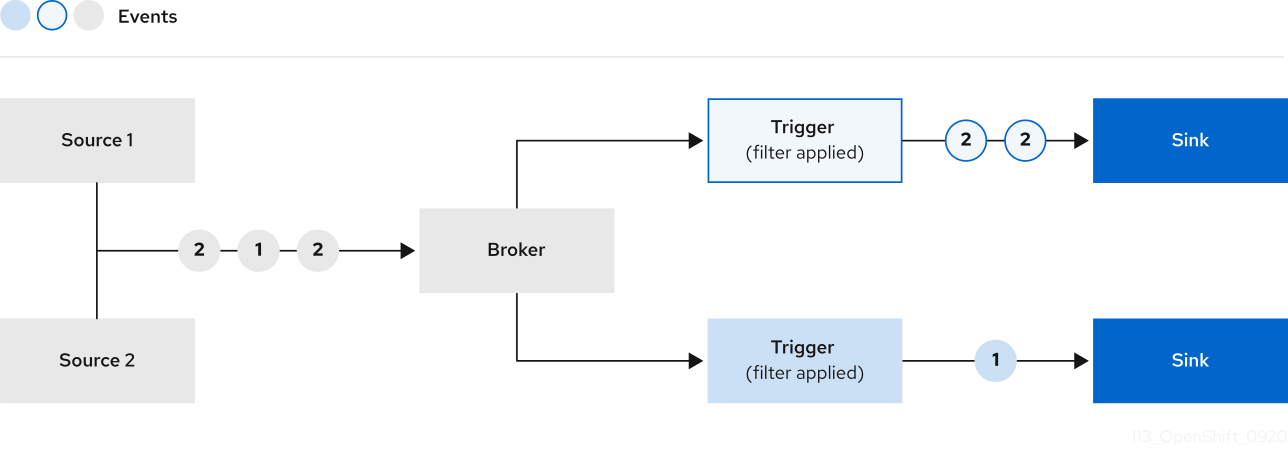

Brokers can be used in combination with triggers to deliver events from an event source to an event sink. Events are sent from an event source to a broker as an HTTP POST request. After events have entered the broker, they can be filtered by CloudEvent attributes using triggers, and sent as an HTTP POST request to an event sink.
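The broker and trigger flow described above can be sketched with two manifests. This is a minimal example under stated assumptions: the trigger name, the dev.example.order.created event type, and the event-display subscriber are hypothetical.

```yaml
apiVersion: eventing.knative.dev/v1
kind: Broker
metadata:
  name: default
  namespace: default
---
apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  name: example-trigger       # hypothetical name
  namespace: default
spec:
  broker: default             # the broker that this trigger reads from
  filter:
    attributes:               # exact match on CloudEvent attributes
      type: dev.example.order.created   # hypothetical event type
  subscriber:
    ref:                      # the event sink that receives matching events
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display     # hypothetical Knative service
```

Events whose type attribute does not match the filter are not delivered to this subscriber.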

2.4.1. Broker types

Cluster administrators can set the default broker implementation for a cluster. When you create a broker, the default broker implementation is used, unless you specify a different configuration in the Broker object.

2.4.1.1. Default broker implementation for development purposes

Knative provides a default, channel-based broker implementation. This channel-based broker can be used for development and testing purposes, but does not provide adequate event delivery guarantees for production environments. The default broker is backed by the InMemoryChannel channel implementation by default.

If you want to use Kafka to reduce network hops, use the Kafka broker implementation. Do not configure the channel-based broker to be backed by the KafkaChannel channel implementation.

2.4.1.2. Production-ready Kafka broker implementation

For production-ready Knative Eventing deployments, Red Hat recommends using the Knative Kafka broker implementation. The Kafka broker is an Apache Kafka native implementation of the Knative broker, which sends CloudEvents directly to the Kafka instance.

The Federal Information Processing Standards (FIPS) mode is disabled for Kafka broker.

The Kafka broker has a native integration with Kafka for storing and routing events. This allows better integration with Kafka for the broker and trigger model over other broker types, and reduces network hops. Other benefits of the Kafka broker implementation include:

- At-least-once delivery guarantees

- Ordered delivery of events, based on the CloudEvents partitioning extension

- Control plane high availability

- A horizontally scalable data plane

The Knative Kafka broker stores incoming CloudEvents as Kafka records, using the binary content mode. This means that all CloudEvent attributes and extensions are mapped as headers on the Kafka record, while the data spec of the CloudEvent corresponds to the value of the Kafka record.
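A broker that uses the Kafka implementation is typically selected through a broker class annotation. The following sketch assumes that Knative Kafka is installed and that a kafka-broker-config config map exists in the knative-eventing namespace; the broker name is hypothetical, and the exact config map name might differ in your installation.

```yaml
apiVersion: eventing.knative.dev/v1
kind: Broker
metadata:
  name: example-kafka-broker  # hypothetical name
  namespace: default
  annotations:
    # Selects the Kafka broker implementation instead of the cluster default
    eventing.knative.dev/broker.class: Kafka
spec:
  config:                     # assumed config map that holds the Kafka settings
    apiVersion: v1
    kind: ConfigMap
    name: kafka-broker-config
    namespace: knative-eventing
```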

2.4.2. Next steps

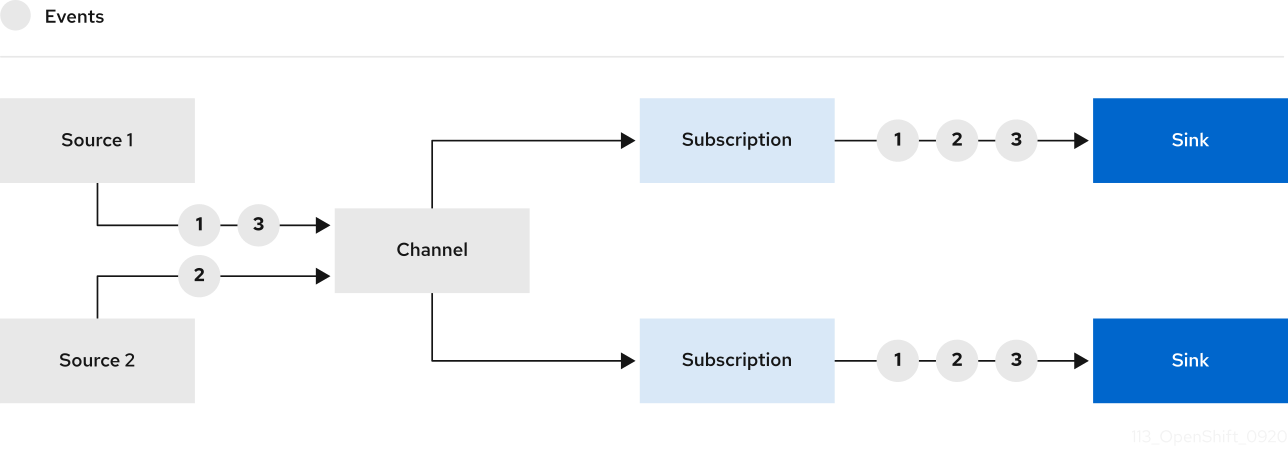

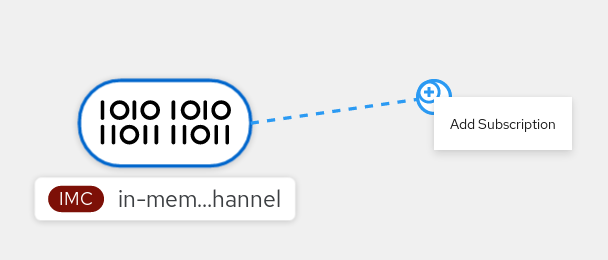

2.5. Channels and subscriptions

Channels are custom resources that define a single event-forwarding and persistence layer. After events have been sent to a channel from an event source or producer, these events can be sent to multiple Knative services or other sinks by using a subscription.

You can create channels by instantiating a supported Channel object, and configure re-delivery attempts by modifying the delivery spec in a Subscription object.

After you create a Channel object, a mutating admission webhook adds a set of spec.channelTemplate properties for the Channel object based on the default channel implementation. For example, for an InMemoryChannel default implementation, the Channel object looks as follows:

    apiVersion: messaging.knative.dev/v1
    kind: Channel
    metadata:
      name: example-channel
      namespace: default
    spec:
      channelTemplate:
        apiVersion: messaging.knative.dev/v1
        kind: InMemoryChannel

The channel controller then creates the backing channel instance based on the spec.channelTemplate configuration.

The spec.channelTemplate properties cannot be changed after creation, because they are set by the default channel mechanism rather than by the user.

When this mechanism is used with the preceding example, two objects are created: a generic backing channel and an InMemoryChannel channel. If you are using a different default channel implementation, the InMemoryChannel is replaced with one that is specific to your implementation. For example, with Knative Kafka, the KafkaChannel channel is created.

The backing channel acts as a proxy that copies its subscriptions to the user-created channel object, and sets the user-created channel object status to reflect the status of the backing channel.

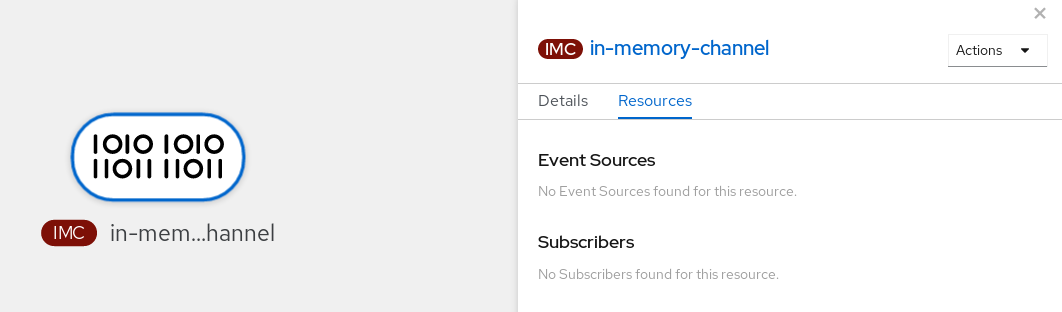

2.5.1. Channel implementation types

InMemoryChannel and KafkaChannel channel implementations can be used with OpenShift Serverless for development use.

The following are limitations of InMemoryChannel type channels:

- No event persistence is available. If a pod goes down, events on that pod are lost.

- InMemoryChannel channels do not implement event ordering, so two events that are received in the channel at the same time can be delivered to a subscriber in any order.

- If a subscriber rejects an event, there are no re-delivery attempts by default. You can configure re-delivery attempts by modifying the delivery spec in the Subscription object.
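Re-delivery through the delivery spec can be sketched as follows. This example assumes the example-channel channel shown earlier in this section and a hypothetical event-display subscriber; the retry values are illustrative only and are not recommendations.

```yaml
apiVersion: messaging.knative.dev/v1
kind: Subscription
metadata:
  name: example-subscription  # hypothetical name
  namespace: default
spec:
  channel:                    # the channel to subscribe to
    apiVersion: messaging.knative.dev/v1
    kind: Channel
    name: example-channel
  subscriber:
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display     # hypothetical Knative service
  delivery:
    retry: 3                  # number of re-delivery attempts
    backoffPolicy: exponential
    backoffDelay: PT1S        # ISO 8601 duration used as the base delay
```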

For more information about Kafka channels, see the Knative Kafka documentation.

2.5.2. Next steps

- Create a channel.

- If you are a cluster administrator, you can configure default settings for channels. See Configuring channel defaults.

Chapter 3. Install

3.1. Installing the OpenShift Serverless Operator

Installing the OpenShift Serverless Operator enables you to install and use Knative Serving, Knative Eventing, and Knative Kafka on an OpenShift Container Platform cluster. The OpenShift Serverless Operator manages Knative custom resource definitions (CRDs) for your cluster and enables you to configure them without directly modifying individual config maps for each component.

3.1.1. Before you begin

Read the following information about supported configurations and prerequisites before you install OpenShift Serverless.

- OpenShift Serverless is supported for installation in a restricted network environment.

- OpenShift Serverless currently cannot be used in a multi-tenant configuration on a single cluster.

3.1.1.1. Defining cluster size requirements

To install and use OpenShift Serverless, the OpenShift Container Platform cluster must be sized correctly.

The following requirements relate only to the pool of worker machines of the OpenShift Container Platform cluster. Control plane nodes are not used for general scheduling and are omitted from the requirements.

The minimum requirement to use OpenShift Serverless is a cluster with 10 CPUs and 40 GB of memory. By default, each pod requests approximately 400m of CPU, so the minimum requirements are based on this value.

The total size requirements to run OpenShift Serverless are dependent on the components that are installed and the applications that are deployed, and might vary depending on your deployment.

3.1.1.2. Scaling your cluster using machine sets

You can use the OpenShift Container Platform MachineSet API to manually scale your cluster up to the desired size. The minimum requirements usually mean that you must scale up one of the default machine sets by two additional machines. See Manually scaling a machine set.

3.1.2. Installing the OpenShift Serverless Operator from the web console

You can install the OpenShift Serverless Operator from the OperatorHub by using the OpenShift Container Platform web console. Installing this Operator enables you to install and use Knative components.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have logged in to the OpenShift Container Platform web console.

Procedure

- In the OpenShift Container Platform web console, navigate to the Operators → OperatorHub page.

- Scroll, or type the keyword Serverless into the Filter by keyword box to find the OpenShift Serverless Operator.

- Review the information about the Operator and click Install.

- On the Install Operator page:

  - The Installation Mode is All namespaces on the cluster (default). This mode installs the Operator in the default openshift-serverless namespace to watch and be made available to all namespaces in the cluster.

  - The Installed Namespace is openshift-serverless.

  - Select the stable channel as the Update Channel. The stable channel will enable installation of the latest stable release of the OpenShift Serverless Operator.

  - Select Automatic or Manual approval strategy.

- Click Install to make the Operator available to the selected namespaces on this OpenShift Container Platform cluster.

From the Catalog → Operator Management page, you can monitor the OpenShift Serverless Operator subscription’s installation and upgrade progress.

- If you selected a Manual approval strategy, the subscription’s upgrade status will remain Upgrading until you review and approve its install plan. After approving on the Install Plan page, the subscription upgrade status moves to Up to date.

- If you selected an Automatic approval strategy, the upgrade status should resolve to Up to date without intervention.

Verification

After the Subscription’s upgrade status is Up to date, select Catalog → Installed Operators to verify that the OpenShift Serverless Operator eventually shows up and its Status ultimately resolves to InstallSucceeded in the relevant namespace.

If it does not:

- Switch to the Catalog → Operator Management page and inspect the Operator Subscriptions and Install Plans tabs for any failure or errors under Status.

- Check the logs in any pods in the openshift-serverless project on the Workloads → Pods page that are reporting issues to troubleshoot further.

If you want to use Red Hat OpenShift distributed tracing with OpenShift Serverless, you must install and configure Red Hat OpenShift distributed tracing before you install Knative Serving or Knative Eventing.

3.1.3. Installing the OpenShift Serverless Operator from the CLI

You can install the OpenShift Serverless Operator from the OperatorHub by using the CLI. Installing this Operator enables you to install and use Knative components.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- Your cluster has the Marketplace capability enabled or the Red Hat Operator catalog source configured manually.

- You have logged in to the OpenShift Container Platform cluster.

Procedure

Create a YAML file containing Namespace, OperatorGroup, and Subscription objects to subscribe a namespace to the OpenShift Serverless Operator. For example, create the file serverless-subscription.yaml with the following content:

Example subscription

    ---
    apiVersion: v1
    kind: Namespace
    metadata:
      name: openshift-serverless
    ---
    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: serverless-operators
      namespace: openshift-serverless
    spec: {}
    ---
    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: serverless-operator
      namespace: openshift-serverless
    spec:
      channel: stable                          # (1)
      name: serverless-operator                # (2)
      source: redhat-operators                 # (3)
      sourceNamespace: openshift-marketplace   # (4)

- (1) The channel name of the Operator. The stable channel enables installation of the most recent stable version of the OpenShift Serverless Operator.

- (2) The name of the Operator to subscribe to. For the OpenShift Serverless Operator, this is always serverless-operator.

- (3) The name of the CatalogSource that provides the Operator. Use redhat-operators for the default OperatorHub catalog sources.

- (4) The namespace of the CatalogSource. Use openshift-marketplace for the default OperatorHub catalog sources.

Create the Subscription object:

    $ oc apply -f serverless-subscription.yaml
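The web console procedure lets you choose between an Automatic and a Manual approval strategy. When you install from the CLI, the equivalent choice can be expressed in the Subscription object. The following sketch reuses the subscription from the preceding example and assumes that you want to approve each install plan manually; it relies on the installPlanApproval field of the Operator Lifecycle Manager (OLM) Subscription API.

```yaml
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: serverless-operator
  namespace: openshift-serverless
spec:
  channel: stable
  name: serverless-operator
  source: redhat-operators
  sourceNamespace: openshift-marketplace
  installPlanApproval: Manual   # assumption: updates wait for manual approval
```

With this setting, the installation and later upgrades do not proceed until the corresponding install plan is approved.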

Verification

Check that the cluster service version (CSV) has reached the Succeeded phase:

Example command

    $ oc get csv

Example output

    NAME                          DISPLAY                        VERSION   REPLACES                      PHASE
    serverless-operator.v1.25.0   Red Hat OpenShift Serverless   1.25.0    serverless-operator.v1.24.0   Succeeded

If you want to use Red Hat OpenShift distributed tracing with OpenShift Serverless, you must install and configure Red Hat OpenShift distributed tracing before you install Knative Serving or Knative Eventing.

3.1.5. Next steps

- After the OpenShift Serverless Operator is installed, you can install Knative Serving or install Knative Eventing.

3.2. Installing Knative Serving

Installing Knative Serving allows you to create Knative services and functions on your cluster. It also allows you to use additional functionality such as autoscaling and networking options for your applications.

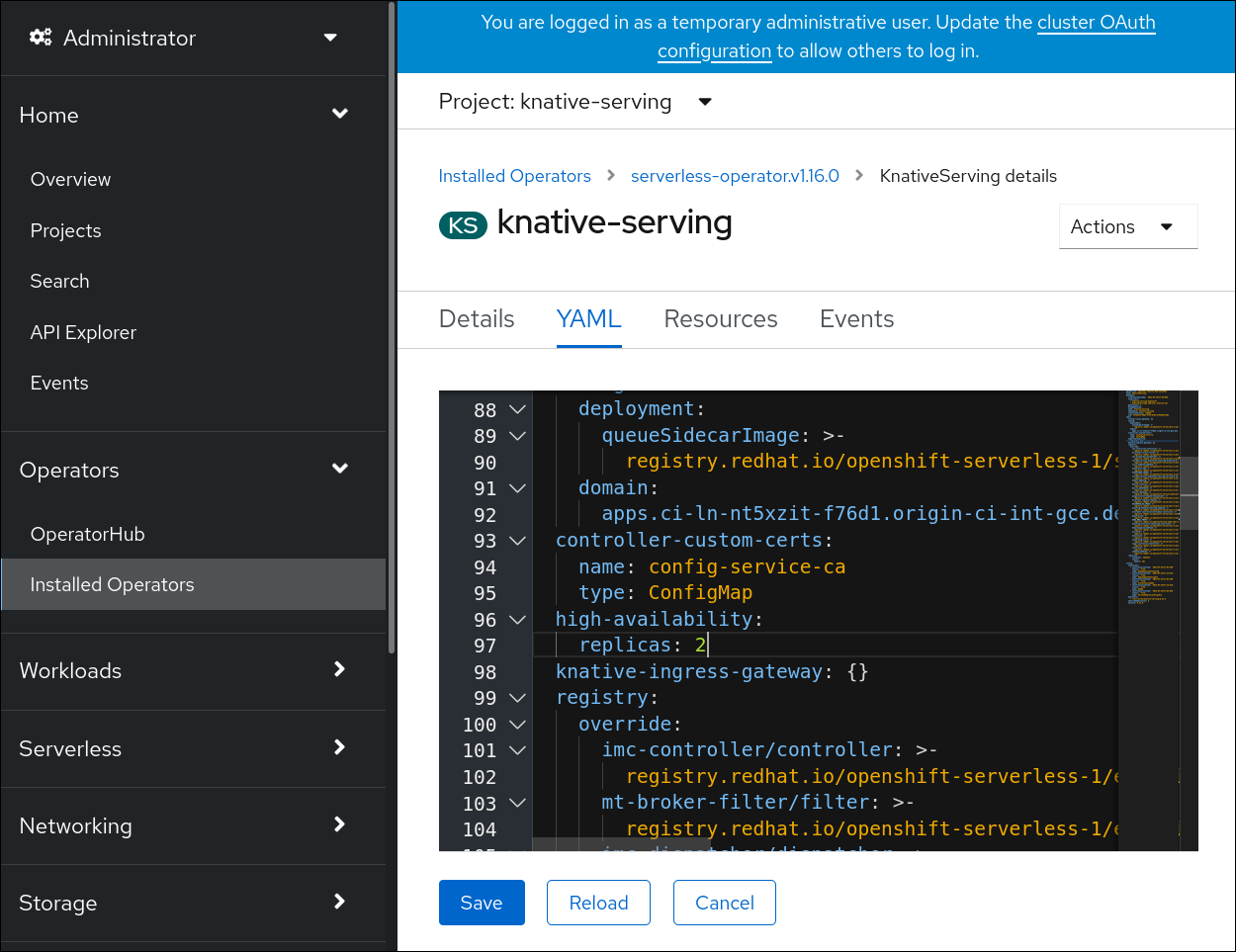

After you install the OpenShift Serverless Operator, you can install Knative Serving by using the default settings, or configure more advanced settings in the KnativeServing custom resource (CR). For more information about configuration options for the KnativeServing CR, see Global configuration.

If you want to use Red Hat OpenShift distributed tracing with OpenShift Serverless, you must install and configure Red Hat OpenShift distributed tracing before you install Knative Serving.

3.2.1. Installing Knative Serving by using the web console

After you install the OpenShift Serverless Operator, install Knative Serving by using the OpenShift Container Platform web console. You can install Knative Serving by using the default settings or configure more advanced settings in the KnativeServing custom resource (CR).

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have logged in to the OpenShift Container Platform web console.

- You have installed the OpenShift Serverless Operator.

Procedure

- In the Administrator perspective of the OpenShift Container Platform web console, navigate to Operators → Installed Operators.

- Check that the Project dropdown at the top of the page is set to Project: knative-serving.

- Click Knative Serving in the list of Provided APIs for the OpenShift Serverless Operator to go to the Knative Serving tab.

- Click Create Knative Serving.

- In the Create Knative Serving page, you can install Knative Serving using the default settings by clicking Create.

You can also modify settings for the Knative Serving installation by editing the KnativeServing object using either the form provided, or by editing the YAML.

  - Using the form is recommended for simpler configurations that do not require full control of KnativeServing object creation.

  - Editing the YAML is recommended for more complex configurations that require full control of KnativeServing object creation. You can access the YAML by clicking the edit YAML link in the top right of the Create Knative Serving page.

After you complete the form, or have finished modifying the YAML, click Create.

Note: For more information about configuration options for the KnativeServing custom resource definition, see the documentation on Advanced installation configuration options.

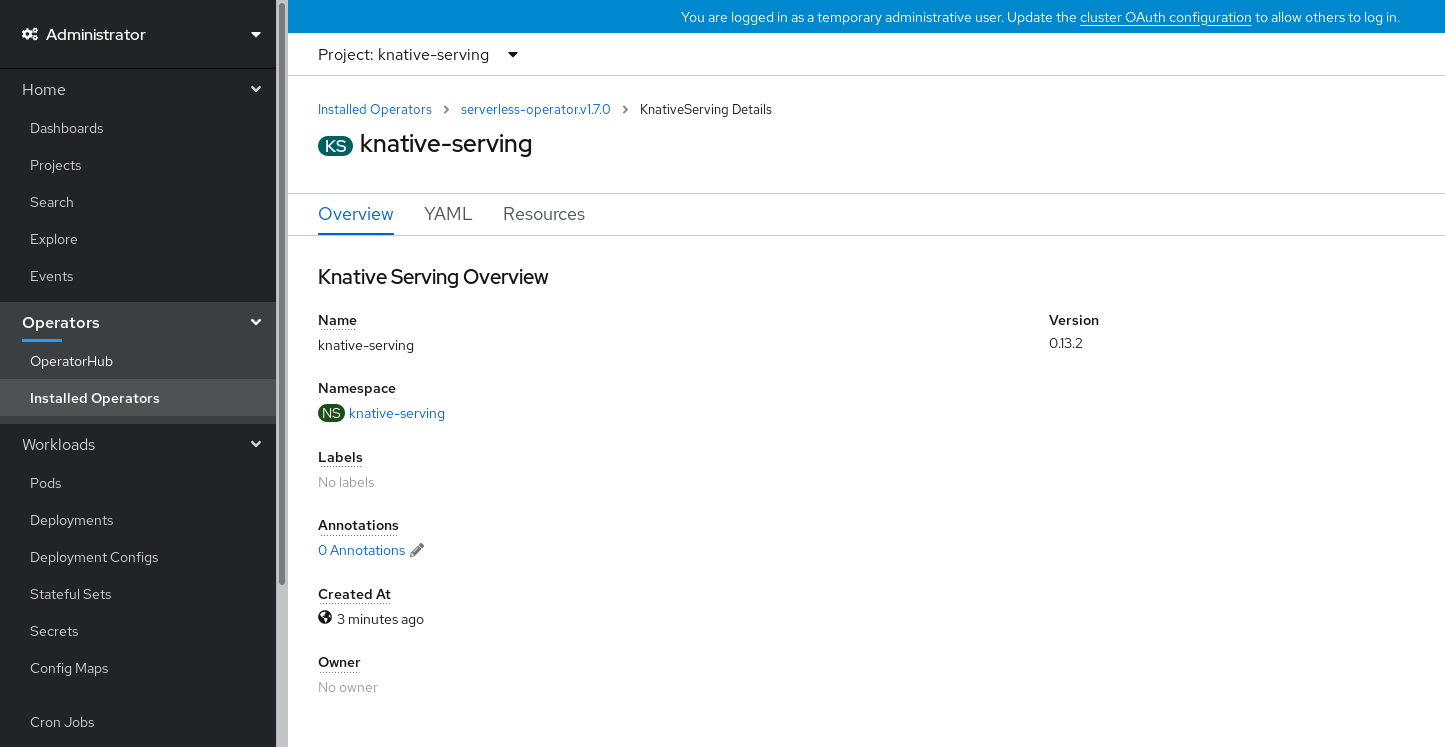

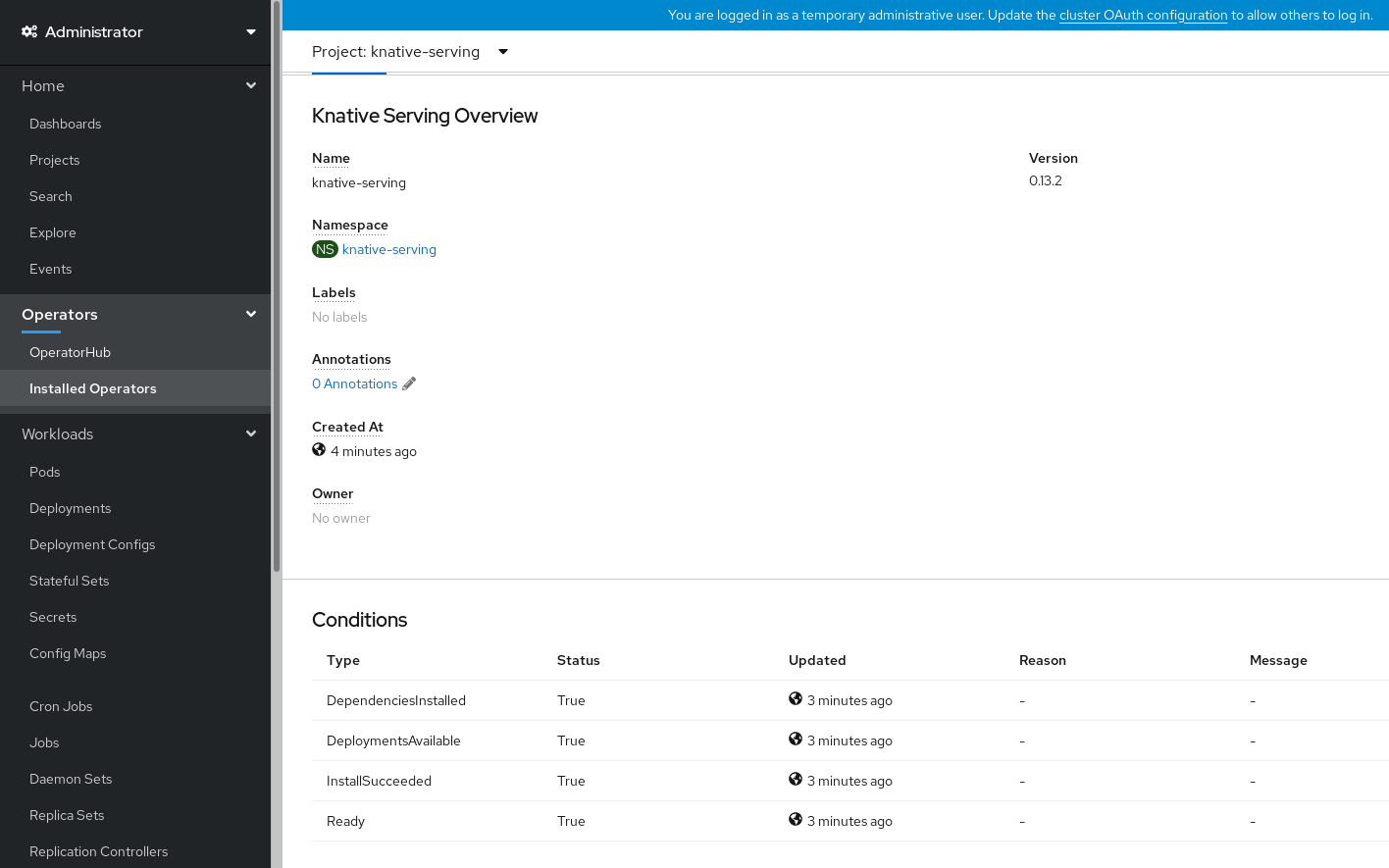

- After you have installed Knative Serving, the KnativeServing object is created, and you are automatically directed to the Knative Serving tab. You will see the knative-serving custom resource in the list of resources.

Verification

- Click the knative-serving custom resource in the Knative Serving tab. You will be automatically directed to the Knative Serving Overview page.

- Scroll down to look at the list of Conditions.

- You should see a list of conditions with a status of True, as shown in the example image.

Note: It may take a few seconds for the Knative Serving resources to be created. You can check their status in the Resources tab.

- If the conditions have a status of Unknown or False, wait a few moments and then check again after you have confirmed that the resources have been created.

3.2.2. Installing Knative Serving by using YAML

After you install the OpenShift Serverless Operator, you can install Knative Serving by using the default settings, or configure more advanced settings in the KnativeServing custom resource (CR). You can use the following procedure to install Knative Serving by using YAML files and the oc CLI.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have installed the OpenShift Serverless Operator.

- You have installed the OpenShift CLI (oc).

Procedure

Create a file named

serving.yamland copy the following example YAML into it:apiVersion: operator.knative.dev/v1beta1 kind: KnativeServing metadata: name: knative-serving namespace: knative-servingApply the

serving.yamlfile:$ oc apply -f serving.yaml

Verification

- To verify the installation is complete, enter the following command:

      $ oc get knativeserving.operator.knative.dev/knative-serving -n knative-serving --template='{{range .status.conditions}}{{printf "%s=%s\n" .type .status}}{{end}}'

  Example output

      DependenciesInstalled=True
      DeploymentsAvailable=True
      InstallSucceeded=True
      Ready=True

  Note

  It may take a few seconds for the Knative Serving resources to be created.

- If the conditions have a status of Unknown or False, wait a few moments and then check again after you have confirmed that the resources have been created.

- Check that the Knative Serving resources have been created:

      $ oc get pods -n knative-serving

  Example output

      NAME                                                        READY   STATUS      RESTARTS   AGE
      activator-67ddf8c9d7-p7rm5                                  2/2     Running     0          4m
      activator-67ddf8c9d7-q84fz                                  2/2     Running     0          4m
      autoscaler-5d87bc6dbf-6nqc6                                 2/2     Running     0          3m59s
      autoscaler-5d87bc6dbf-h64rl                                 2/2     Running     0          3m59s
      autoscaler-hpa-77f85f5cc4-lrts7                             2/2     Running     0          3m57s
      autoscaler-hpa-77f85f5cc4-zx7hl                             2/2     Running     0          3m56s
      controller-5cfc7cb8db-nlccl                                 2/2     Running     0          3m50s
      controller-5cfc7cb8db-rmv7r                                 2/2     Running     0          3m18s
      domain-mapping-86d84bb6b4-r746m                             2/2     Running     0          3m58s
      domain-mapping-86d84bb6b4-v7nh8                             2/2     Running     0          3m58s
      domainmapping-webhook-769d679d45-bkcnj                      2/2     Running     0          3m58s
      domainmapping-webhook-769d679d45-fff68                      2/2     Running     0          3m58s
      storage-version-migration-serving-serving-0.26.0--1-6qlkb   0/1     Completed   0          3m56s
      webhook-5fb774f8d8-6bqrt                                    2/2     Running     0          3m57s
      webhook-5fb774f8d8-b8lt5                                    2/2     Running     0          3m57s

- Check that the necessary networking components have been installed to the automatically created knative-serving-ingress namespace:

      $ oc get pods -n knative-serving-ingress

  Example output

      NAME                                      READY   STATUS    RESTARTS   AGE
      net-kourier-controller-7d4b6c5d95-62mkf   1/1     Running   0          76s
      net-kourier-controller-7d4b6c5d95-qmgm2   1/1     Running   0          76s
      3scale-kourier-gateway-6688b49568-987qz   1/1     Running   0          75s
      3scale-kourier-gateway-6688b49568-b5tnp   1/1     Running   0          75s
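The condition check in the verification step can also be scripted. The following is a minimal sketch, not part of OpenShift Serverless: all_conditions_true is a hypothetical helper name, and it assumes the input is the Type=Status lines printed by the --template command shown in the verification step.

```shell
# Hypothetical helper (not part of oc): reads "Type=Status" lines on stdin.
# Succeeds only if at least one condition is present and every status is True.
all_conditions_true() {
  awk -F= '
    NF >= 2 { seen = 1; if ($2 != "True") bad = 1 }
    END { if (seen && !bad) exit 0; exit 1 }
  '
}
```

To use it, pipe the output of the oc get knativeserving command from the verification step into all_conditions_true and check its exit status. An empty result is treated as a failure so that a missing resource is not mistaken for a ready one.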

3.2.3. Next steps

- If you want to use Knative event-driven architecture, you can install Knative Eventing.

3.3. Installing Knative Eventing

To use event-driven architecture on your cluster, install Knative Eventing. You can create Knative components such as event sources, brokers, and channels and then use them to send events to applications or external systems.

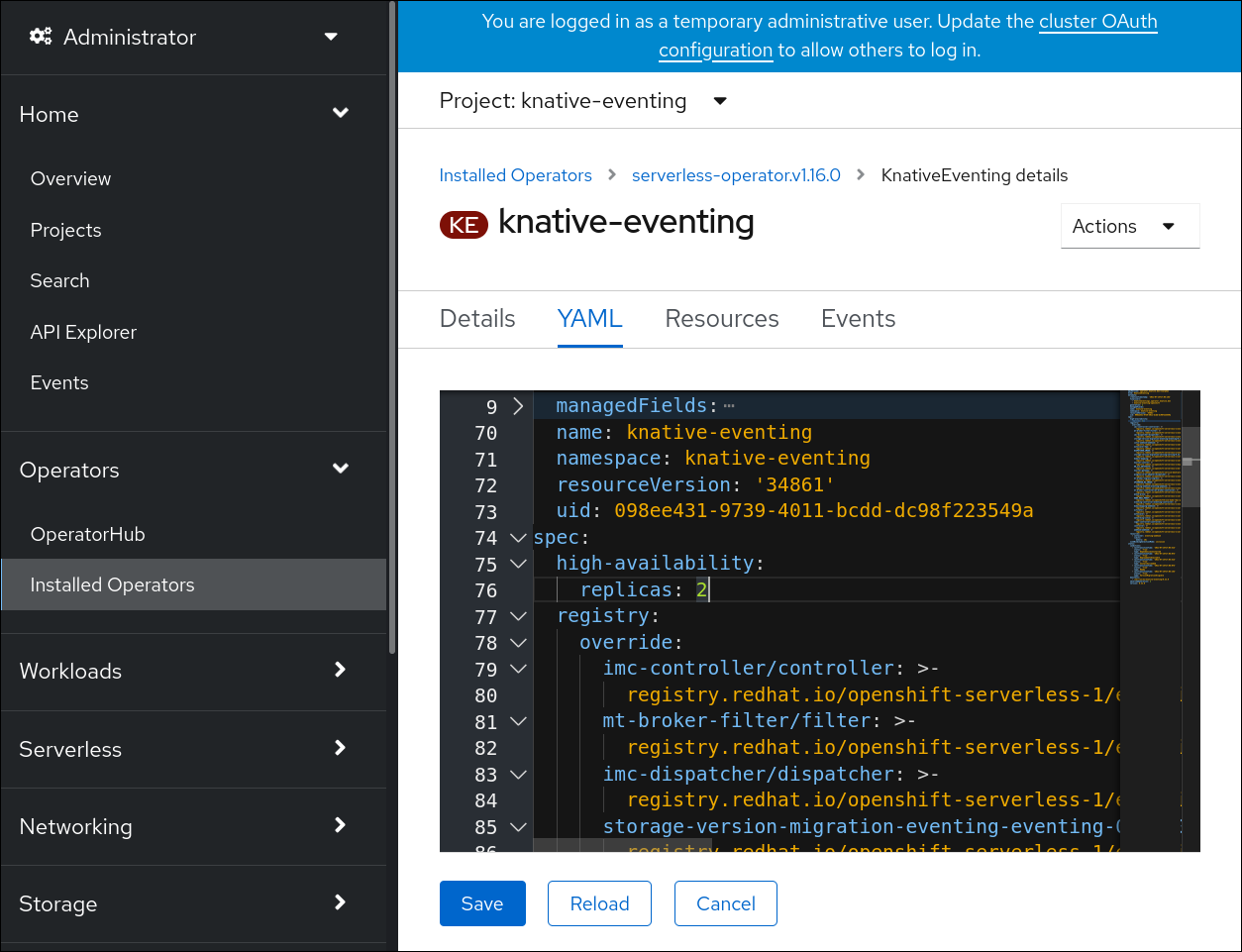

After you install the OpenShift Serverless Operator, you can install Knative Eventing by using the default settings, or configure more advanced settings in the KnativeEventing custom resource (CR). For more information about configuration options for the KnativeEventing CR, see Global configuration.

If you want to use Red Hat OpenShift distributed tracing with OpenShift Serverless, you must install and configure Red Hat OpenShift distributed tracing before you install Knative Eventing.

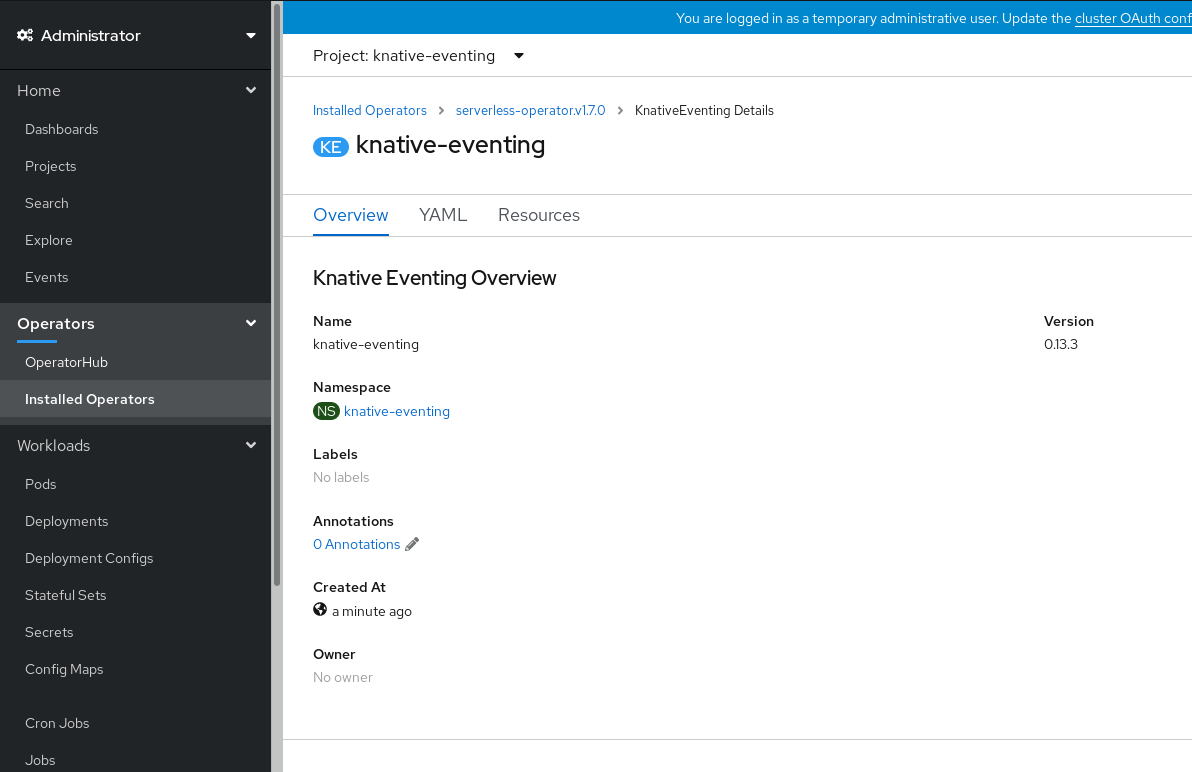

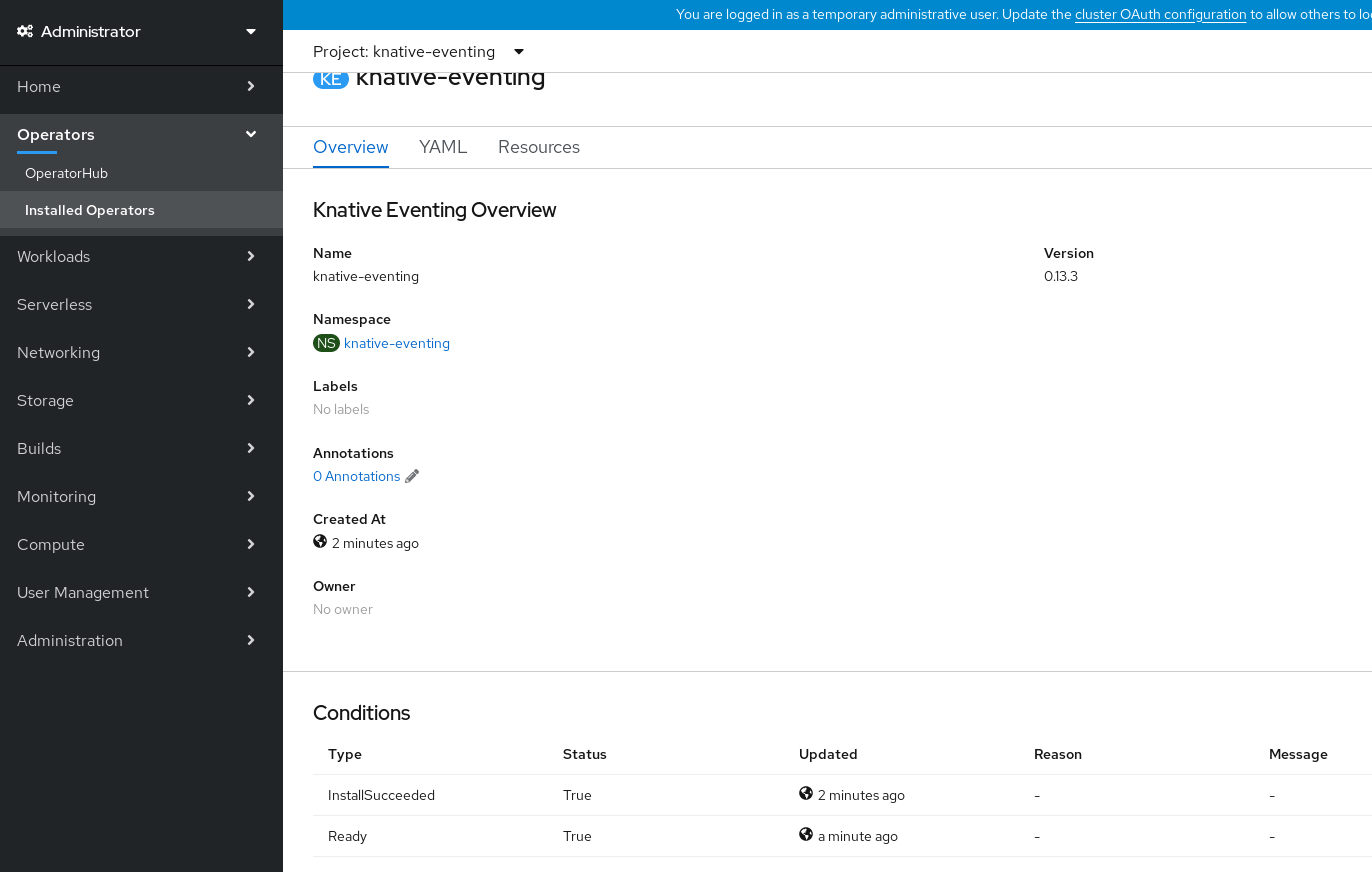

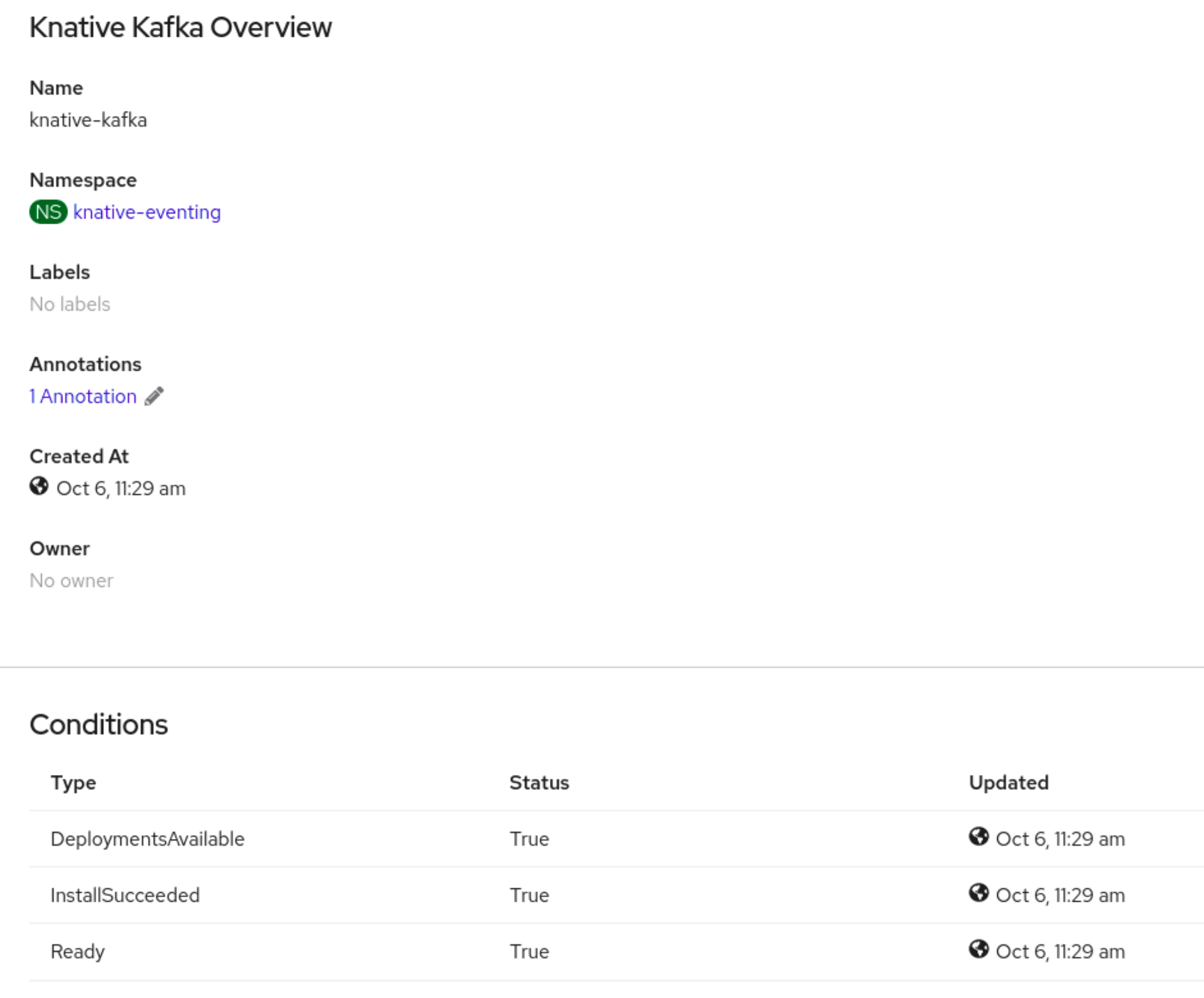

3.3.1. Installing Knative Eventing by using the web console

After you install the OpenShift Serverless Operator, install Knative Eventing by using the OpenShift Container Platform web console. You can install Knative Eventing by using the default settings or configure more advanced settings in the KnativeEventing custom resource (CR).

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have logged in to the OpenShift Container Platform web console.

- You have installed the OpenShift Serverless Operator.

Procedure

- In the Administrator perspective of the OpenShift Container Platform web console, navigate to Operators → Installed Operators.

- Check that the Project dropdown at the top of the page is set to Project: knative-eventing.

- Click Knative Eventing in the list of Provided APIs for the OpenShift Serverless Operator to go to the Knative Eventing tab.

- Click Create Knative Eventing.

- In the Create Knative Eventing page, you can configure the KnativeEventing object either by using the default form provided or by editing the YAML.

  - Using the form is recommended for simpler configurations that do not require full control of KnativeEventing object creation. Optional: If you are configuring the KnativeEventing object by using the form, make any changes that you want to implement for your Knative Eventing deployment, and then click Create.

  - Editing the YAML is recommended for more complex configurations that require full control of KnativeEventing object creation. You can access the YAML by clicking the edit YAML link in the top right of the Create Knative Eventing page. Optional: If you are configuring the KnativeEventing object by editing the YAML, make any changes to the YAML that you want to implement for your Knative Eventing deployment.

- Click Create.

- After you have installed Knative Eventing, the KnativeEventing object is created, and you are automatically directed to the Knative Eventing tab. You will see the knative-eventing custom resource in the list of resources.

Verification

- Click the knative-eventing custom resource in the Knative Eventing tab. You are automatically directed to the Knative Eventing Overview page.

- Scroll down to look at the list of Conditions.

You should see a list of conditions with a status of True, as shown in the example image.

Note

It may take a few seconds for the Knative Eventing resources to be created. You can check their status in the Resources tab.

- If the conditions have a status of Unknown or False, wait a few moments and then check again after you have confirmed that the resources have been created.

3.3.2. Installing Knative Eventing by using YAML

After you install the OpenShift Serverless Operator, you can install Knative Eventing by using the default settings, or configure more advanced settings in the KnativeEventing custom resource (CR). You can use the following procedure to install Knative Eventing by using YAML files and the oc CLI.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have installed the OpenShift Serverless Operator.

- Install the OpenShift CLI (oc).

Procedure

- Create a file named eventing.yaml. Copy the following sample YAML into eventing.yaml:

      apiVersion: operator.knative.dev/v1beta1
      kind: KnativeEventing
      metadata:
        name: knative-eventing
        namespace: knative-eventing

- Optional. Make any changes to the YAML that you want to implement for your Knative Eventing deployment.

- Apply the eventing.yaml file by entering:

      $ oc apply -f eventing.yaml

Verification

- Verify the installation is complete by entering the following command and observing the output:

      $ oc get knativeeventing.operator.knative.dev/knative-eventing \
        -n knative-eventing \
        --template='{{range .status.conditions}}{{printf "%s=%s\n" .type .status}}{{end}}'

  Example output

      InstallSucceeded=True
      Ready=True

  Note

  It may take a few seconds for the Knative Eventing resources to be created.

- If the conditions have a status of Unknown or False, wait a few moments and then check again after you have confirmed that the resources have been created.

- Check that the Knative Eventing resources have been created by entering:

      $ oc get pods -n knative-eventing

  Example output

      NAME                                   READY   STATUS    RESTARTS   AGE
      broker-controller-58765d9d49-g9zp6     1/1     Running   0          7m21s
      eventing-controller-65fdd66b54-jw7bh   1/1     Running   0          7m31s
      eventing-webhook-57fd74b5bd-kvhlz      1/1     Running   0          7m31s
      imc-controller-5b75d458fc-ptvm2        1/1     Running   0          7m19s
      imc-dispatcher-64f6d5fccb-kkc4c        1/1     Running   0          7m18s
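Because the conditions can take a short while to settle, the "wait a few moments and then check again" step can be wrapped in a polling loop. This is a sketch under stated assumptions: wait_for_eventing_ready is a hypothetical function name, the attempt count and delay are illustrative defaults, and the oc command is the same one used in the verification step.

```shell
# Hypothetical helper: polls the KnativeEventing CR until it reports Ready=True
# or the attempts run out. $1 = max attempts (default 30), $2 = delay in seconds (default 10).
wait_for_eventing_ready() {
  attempts=${1:-30}
  delay=${2:-10}
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # Same command as in the verification step; errors are ignored and retried.
    status=$(oc get knativeeventing.operator.knative.dev/knative-eventing \
      -n knative-eventing \
      --template='{{range .status.conditions}}{{printf "%s=%s\n" .type .status}}{{end}}' 2>/dev/null)
    # Match the whole line so that only an exact "Ready=True" counts.
    if printf '%s\n' "$status" | grep -qx 'Ready=True'; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}
```

For example, wait_for_eventing_ready 30 10 checks up to 30 times at 10-second intervals and returns a non-zero status if the resource never becomes ready.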

3.3.3. Next steps

- If you want to use Knative services, you can install Knative Serving.

3.4. Removing OpenShift Serverless

If you need to remove OpenShift Serverless from your cluster, you can do so by manually removing the OpenShift Serverless Operator and other OpenShift Serverless components. Before you can remove the OpenShift Serverless Operator, you must remove Knative Serving and Knative Eventing.

3.4.1. Uninstalling Knative Serving

Before you can remove the OpenShift Serverless Operator, you must remove Knative Serving. To uninstall Knative Serving, you must remove the KnativeServing custom resource (CR) and delete the knative-serving namespace.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- Install the OpenShift CLI (oc).

Procedure

- Delete the KnativeServing CR:

      $ oc delete knativeservings.operator.knative.dev knative-serving -n knative-serving

- After the command has completed and all pods have been removed from the knative-serving namespace, delete the namespace:

      $ oc delete namespace knative-serving

3.4.2. Uninstalling Knative Eventing

Before you can remove the OpenShift Serverless Operator, you must remove Knative Eventing. To uninstall Knative Eventing, you must remove the KnativeEventing custom resource (CR) and delete the knative-eventing namespace.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- Install the OpenShift CLI (oc).

Procedure

- Delete the KnativeEventing CR:

      $ oc delete knativeeventings.operator.knative.dev knative-eventing -n knative-eventing

- After the command has completed and all pods have been removed from the knative-eventing namespace, delete the namespace:

      $ oc delete namespace knative-eventing
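Both uninstall procedures delete the namespace only after all pods have been removed from it. A minimal sketch of that wait follows. It assumes a hypothetical helper name, wait_for_no_pods, and illustrative retry defaults. It relies on oc get pods -o name printing one line per pod and nothing when the namespace has no pods.

```shell
# Hypothetical helper: waits until no pods remain in the given namespace.
# $1 = namespace, $2 = max attempts (default 30), $3 = delay in seconds (default 10).
wait_for_no_pods() {
  ns=$1
  attempts=${2:-30}
  delay=${3:-10}
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # Succeed only if the query itself worked and returned no pod names.
    if pods=$(oc get pods -n "$ns" -o name 2>/dev/null) && [ -z "$pods" ]; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}
```

One possible use is to run wait_for_no_pods knative-eventing after deleting the KnativeEventing CR, and to run oc delete namespace knative-eventing only if the helper succeeds. The same pattern applies to the knative-serving namespace.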

3.4.3. Removing the OpenShift Serverless Operator

After you have removed Knative Serving and Knative Eventing, you can remove the OpenShift Serverless Operator. You can do this by using the OpenShift Container Platform web console or the oc CLI.

3.4.3.1. Deleting Operators from a cluster using the web console

Cluster administrators can delete installed Operators from a selected namespace by using the web console.

Prerequisites

- Access to an OpenShift Container Platform cluster web console using an account with cluster-admin permissions.

Procedure

- From the Operators → Installed Operators page, scroll or type a keyword into the Filter by name field to find the Operator you want, and then click it.

- On the right side of the Operator Details page, select Uninstall Operator from the Actions list.

An Uninstall Operator? dialog box is displayed, reminding you that:

Removing the Operator will not remove any of its custom resource definitions or managed resources. If your Operator has deployed applications on the cluster or configured off-cluster resources, these will continue to run and need to be cleaned up manually.

This action removes the Operator as well as the Operator deployments and pods, if any. Any Operands, and resources managed by the Operator, including CRDs and CRs, are not removed. The web console enables dashboards and navigation items for some Operators. To remove these after uninstalling the Operator, you might need to manually delete the Operator CRDs.

- Select Uninstall. This Operator stops running and no longer receives updates.

3.4.3.2. Deleting Operators from a cluster using the CLI

Cluster administrators can delete installed Operators from a selected namespace by using the CLI.

Prerequisites

- Access to an OpenShift Container Platform cluster using an account with cluster-admin permissions.

- oc command installed on workstation.

Procedure

- Check the current version of the subscribed Operator (for example, jaeger) in the currentCSV field:

      $ oc get subscription jaeger -n openshift-operators -o yaml | grep currentCSV

  Example output

      currentCSV: jaeger-operator.v1.8.2

- Delete the subscription (for example, jaeger):

      $ oc delete subscription jaeger -n openshift-operators

  Example output

      subscription.operators.coreos.com "jaeger" deleted

- Delete the CSV for the Operator in the target namespace using the currentCSV value from the previous step:

      $ oc delete clusterserviceversion jaeger-operator.v1.8.2 -n openshift-operators

  Example output

      clusterserviceversion.operators.coreos.com "jaeger-operator.v1.8.2" deleted
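The currentCSV value has to be read before the subscription is deleted, because the later clusterserviceversion deletion depends on it. As a small sketch, the value can be captured in a variable instead of being copied by hand. The function name current_csv_from_yaml is hypothetical, and the example assumes the subscription YAML contains a currentCSV: line as in the example output above.

```shell
# Hypothetical helper: prints the value of the first "currentCSV: <name>" line on stdin.
# Matching the whole first field skips look-alike keys such as "f:currentCSV:".
current_csv_from_yaml() {
  awk '$1 == "currentCSV:" { print $2; exit }'
}
```

For example, csv=$(oc get subscription jaeger -n openshift-operators -o yaml | current_csv_from_yaml) stores the name, which can then be passed to oc delete clusterserviceversion "$csv" -n openshift-operators after the subscription has been deleted.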

3.4.3.3. Refreshing failing subscriptions

In Operator Lifecycle Manager (OLM), if you subscribe to an Operator that references images that are not accessible on your network, you can find jobs in the openshift-marketplace namespace that are failing with the following errors:

Example output

    ImagePullBackOff for
    Back-off pulling image "example.com/openshift4/ose-elasticsearch-operator-bundle@sha256:6d2587129c846ec28d384540322b40b05833e7e00b25cca584e004af9a1d292e"

Example output

    rpc error: code = Unknown desc = error pinging docker registry example.com: Get "https://example.com/v2/": dial tcp: lookup example.com on 10.0.0.1:53: no such host

As a result, the subscription is stuck in this failing state and the Operator is unable to install or upgrade.

You can refresh a failing subscription by deleting the subscription, cluster service version (CSV), and other related objects. After you recreate the subscription, OLM reinstalls the correct version of the Operator.

Prerequisites

- You have a failing subscription that is unable to pull an inaccessible bundle image.

- You have confirmed that the correct bundle image is accessible.

Procedure

- Get the names of the Subscription and ClusterServiceVersion objects from the namespace where the Operator is installed:

      $ oc get sub,csv -n <namespace>

  Example output

      NAME                                                       PACKAGE                  SOURCE             CHANNEL
      subscription.operators.coreos.com/elasticsearch-operator   elasticsearch-operator   redhat-operators   5.0

      NAME                                                                         DISPLAY                            VERSION    REPLACES   PHASE
      clusterserviceversion.operators.coreos.com/elasticsearch-operator.5.0.0-65   OpenShift Elasticsearch Operator   5.0.0-65              Succeeded

- Delete the subscription:

      $ oc delete subscription <subscription_name> -n <namespace>

- Delete the cluster service version: