
Chapter 3. Setting up a Router

3.1. Router Overview

3.1.1. About Routers

There are many ways to get traffic into the cluster. The most common approach is to use the OpenShift Container Platform router as the ingress point for external traffic destined for services in your OpenShift Container Platform installation.

OpenShift Container Platform provides and supports the following router plug-ins:

- The HAProxy template router is the default plug-in. It uses the openshift3/ose-haproxy-router image to run an HAProxy instance alongside the template router plug-in inside a container on OpenShift Container Platform. It currently supports HTTP(S) traffic and TLS-enabled traffic via SNI. The router’s container listens on the host network interface, unlike most containers that listen only on private IPs. The router proxies external requests for route names to the IPs of actual pods identified by the service associated with the route.

- The F5 router integrates with an existing F5 BIG-IP® system in your environment to synchronize routes. F5 BIG-IP® version 11.4 or newer is required in order to have the F5 iControl REST API.

- Deploying a Default HAProxy Router

- Deploying a Custom HAProxy Router

- Configuring the HAProxy Router to Use PROXY Protocol

- Configuring Route Timeouts

3.1.2. Router Service Account

Before deploying a router, you must have a service account for it. This service account, named router, is created automatically during cluster installation and has permissions to a security context constraint (SCC) that allows it to specify host ports.

3.1.2.1. Permission to Access Labels

When namespace labels are used, for example in creating router shards, the service account for the router must have cluster-reader permission.

$ oc adm policy add-cluster-role-to-user \

cluster-reader \

system:serviceaccount:default:router

With a service account in place, you can proceed to installing a default HAProxy Router or a customized HAProxy Router.

3.2. Using the Default HAProxy Router

3.2.1. Overview

The oc adm router command is provided with the administrator CLI to simplify the tasks of setting up routers in a new installation. The oc adm router command creates the service and deployment configuration objects. Use the --service-account option to specify the service account the router will use to contact the master.

The router service account can be created in advance or created by the oc adm router --service-account command.

Every form of communication between OpenShift Container Platform components is secured by TLS and uses various certificates and authentication methods. You can supply a default certificate as a .pem format file with the --default-cert option; otherwise, one is created by the oc adm router command. When routes are created, the user can provide route certificates that the router will use when handling the route.

When deleting a router, ensure the deployment configuration, service, and secret are deleted as well.

Routers are deployed on specific nodes. This makes it easier for the cluster administrator and external network manager to coordinate which IP address will run a router and which traffic the router will handle. The routers are deployed on specific nodes by using node selectors.

Routers use host networking by default, and they directly attach to port 80 and 443 on all interfaces on a host. Restrict routers to hosts where ports 80/443 are available and not being consumed by another service, and set this using node selectors and the scheduler configuration. As an example, you can achieve this by dedicating infrastructure nodes to run services such as routers.

It is recommended to use a separate, distinct openshift-router service account with your router. You can specify it with the --service-account flag of the oc adm router command.

$ oc adm router --dry-run --service-account=router

Router pods created using oc adm router have default resource requests that a node must satisfy for the router pod to be deployed. To increase the reliability of infrastructure components, the default resource requests raise the QoS tier of the router pods above pods without resource requests. The default values represent the observed minimum resources required for a basic router to be deployed. You can edit them in the router's deployment configuration, and you may want to increase them based on the load of the router.

3.2.2. Creating a Router

If the router does not exist, run the following to create a router:

$ oc adm router <router_name> --replicas=<number> --service-account=router --extended-logging=true

--replicas is usually 1 unless a high availability configuration is being created.

--extended-logging=true configures the router to forward logs that are generated by HAProxy to the syslog container.

To find the host IP address of the router:

$ oc get po <router-pod> --template={{.status.hostIP}}

You can also use router shards to ensure that the router is filtered to specific namespaces or routes, or set any environment variables after router creation. In this case, create a router for each shard.

3.2.3. Other Basic Router Commands

- Checking the Default Router

- The default router service account, named router, is automatically created during cluster installations. To verify that this account already exists:

$ oc adm router --dry-run --service-account=router

- Viewing the Default Router

- To see what the default router would look like if created:

$ oc adm router --dry-run -o yaml --service-account=router

- Configuring the Router to Forward HAProxy Logs

- You can configure the router to forward logs that are generated by HAProxy to an rsyslog sidecar container. The --extended-logging=true parameter appends the syslog container to forward HAProxy logs to standard output.

$ oc adm router --extended-logging=true

The following example is the configuration for a router that uses --extended-logging=true:

$ oc get pod router-1-xhdb9 -o yaml
apiVersion: v1
kind: Pod
spec:
  containers:
  - env:
    ....
    - name: ROUTER_SYSLOG_ADDRESS
      value: /var/lib/rsyslog/rsyslog.sock
    ....
  - command:
    - /sbin/rsyslogd
    - -n
    - -i
    - /tmp/rsyslog.pid
    - -f
    - /etc/rsyslog/rsyslog.conf
    image: registry.redhat.io/openshift3/ose-haproxy-router:v3.11.188
    imagePullPolicy: IfNotPresent
    name: syslog

Use the following commands to view the HAProxy logs:

$ oc set env dc/test-router ROUTER_LOG_LEVEL=info

$ oc logs -f <pod-name> -c syslog

The HAProxy logs take the following form:

2020-04-14T03:05:36.629527+00:00 test-311-node-1 haproxy[43]: 10.0.151.166:59594 [14/Apr/2020:03:05:36.627] fe_no_sni~ be_secure:openshift-console:console/pod:console-b475748cb-t6qkq:console:10.128.0.5:8443 0/0/1/1/2 200 393 - - --NI 2/1/0/1/0 0/0 "HEAD / HTTP/1.1"

2020-04-14T03:05:36.633024+00:00 test-311-node-1 haproxy[43]: 10.0.151.166:59594 [14/Apr/2020:03:05:36.528] public_ssl be_no_sni/fe_no_sni 95/1/104 2793 -- 1/1/0/0/0 0/0

- Deploying the Router to a Labeled Node

- To deploy the router to any node(s) that match a specified node label:

$ oc adm router <router_name> --replicas=<number> --selector=<label> \

--service-account=router

For example, if you want to create a router named router and have it placed on a node labeled with node-role.kubernetes.io/infra=true:

$ oc adm router router --replicas=1 --selector='node-role.kubernetes.io/infra=true' \

--service-account=router

During cluster installation, the openshift_router_selector and openshift_registry_selector Ansible settings are set to node-role.kubernetes.io/infra=true by default. The default router and registry will only be automatically deployed if a node exists that matches the node-role.kubernetes.io/infra=true label.

For information on updating labels, see Updating Labels on Nodes.

Multiple instances are created on different hosts according to the scheduler policy.

- Using a Different Router Image

- To use a different router image and view the router configuration that would be used:

$ oc adm router <router_name> -o <format> --images=<image> \

--service-account=router

For example:

$ oc adm router region-west -o yaml --images=myrepo/somerouter:mytag \

--service-account=router

3.2.4. Filtering Routes to Specific Routers

Using the ROUTE_LABELS environment variable, you can filter routes so that they are used only by specific routers.

For example, if you have multiple routers, and 100 routes, you can attach labels to the routes so that a portion of them are handled by one router, whereas the rest are handled by another.

After creating a router, use the ROUTE_LABELS environment variable to tag the router:

$ oc set env dc/<router_name> ROUTE_LABELS="key=value"

Add the label to the desired routes:

$ oc label route <route_name> key=value

To verify that the label has been attached to the route, check the route configuration:

$ oc describe route/<route_name>

- Setting the Maximum Number of Concurrent Connections

- The router can handle a maximum number of 20000 connections by default. You can change that limit depending on your needs. Having too few connections prevents the health check from working, which causes unnecessary restarts. You need to configure the system to support the maximum number of connections. The limits shown in 'sysctl fs.nr_open' and 'sysctl fs.file-max' must be large enough. Otherwise, HAProxy will not start.

When the router is created, the --max-connections= option sets the desired limit:

$ oc adm router --max-connections=10000 ....

Edit the ROUTER_MAX_CONNECTIONS environment variable in the router’s deployment configuration to change the value. The router pods are restarted with the new value. If ROUTER_MAX_CONNECTIONS is not present, the default value of 20000 is used.

A connection includes the frontend and internal backend. This counts as two connections. Be sure to set ROUTER_MAX_CONNECTIONS to double the number of connections you intend to create.
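As a rough sketch of the doubling rule above, the following POSIX shell helper derives a ROUTER_MAX_CONNECTIONS value from the number of client connections you expect to serve. The function name router_max_connections is hypothetical and is not part of the oc CLI.

```shell
# Hypothetical helper (not part of oc): each client connection is counted
# twice, once on the frontend and once on the internal backend.
router_max_connections() {
    intended=$1
    echo $((intended * 2))
}

router_max_connections 10000   # prints 20000
```

The printed value could then be applied to an existing router by setting ROUTER_MAX_CONNECTIONS with oc set env on its deployment configuration.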

3.2.5. HAProxy Strict SNI

The HAProxy strict-sni option can be controlled through the ROUTER_STRICT_SNI environment variable in the router’s deployment configuration. It can also be set when the router is created by using the --strict-sni command line option.

$ oc adm router --strict-sni

3.2.6. TLS Cipher Suites

Set the router cipher suite using the --ciphers option when creating a router:

$ oc adm router --ciphers=modern ....

The values are: modern, intermediate, or old, with intermediate as the default. Alternatively, a set of ":" separated ciphers can be provided. The ciphers must be from the set displayed by:

$ openssl ciphers

Alternatively, use the ROUTER_CIPHERS environment variable for an existing router.

3.2.7. Mutual TLS Authentication

Client access to the router and the backend services can be restricted using mutual TLS authentication. The router will reject requests from clients not in its authenticated set. Mutual TLS authentication is implemented on client certificates and can be controlled based on the certifying authorities (CAs) issuing the certificates, the certificate revocation list and/or any certificate subject filters. Use the mutual tls config options --mutual-tls-auth, --mutual-tls-auth-ca, --mutual-tls-auth-crl and --mutual-tls-auth-filter when creating a router:

$ oc adm router --mutual-tls-auth=required \

--mutual-tls-auth-ca=/local/path/to/cacerts.pem ....

The --mutual-tls-auth values are required, optional, or none, with none as the default. The --mutual-tls-auth-ca value specifies a file containing one or more CA certificates. These CA certificates are used by the router to verify a client’s certificate.

The --mutual-tls-auth-crl option can be used to specify the certificate revocation list to handle cases where certificates (issued by valid certifying authorities) have been revoked.

$ oc adm router --mutual-tls-auth=required \

--mutual-tls-auth-ca=/local/path/to/cacerts.pem \

--mutual-tls-auth-filter='^/CN=my.org/ST=CA/C=US/O=Security/OU=OSE$' \

....

The --mutual-tls-auth-filter value can be used for fine-grained access control based on the certificate subject. The value is a regular expression, which is used to match the certificate’s subject.

The mutual TLS authentication filter example above shows you a restrictive regular expression (regex) — anchored with ^ and $ — that exactly matches a certificate subject. If you decide to use a less restrictive regular expression, please be aware that this can potentially match certificates issued by any CAs you have deemed to be valid. It is recommended to also use the --mutual-tls-auth-ca option so that you have finer control over the issued certificates.
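To illustrate why anchoring matters, here is a minimal sketch that compares the anchored filter from the example above with a looser, unanchored one. It uses grep -E purely as a stand-in regular expression engine; the router's own matching may differ in detail. The helper name subject_filter_result, the loose filter, and the second subject are hypothetical.

```shell
# Hypothetical helper: report whether a certificate subject matches a filter.
# grep -E is only a stand-in for the router's regular expression matching.
subject_filter_result() {
    filter=$1
    subject=$2
    if printf '%s\n' "$subject" | grep -Eq -- "$filter"; then
        echo match
    else
        echo no-match
    fi
}

strict='^/CN=my.org/ST=CA/C=US/O=Security/OU=OSE$'   # filter from the example above
loose='O=Security'                                   # hypothetical unanchored filter

subject_filter_result "$strict" '/CN=my.org/ST=CA/C=US/O=Security/OU=OSE'      # match
subject_filter_result "$strict" '/CN=other.org/ST=CA/C=US/O=Security/OU=OSE'   # no-match
subject_filter_result "$loose"  '/CN=other.org/ST=CA/C=US/O=Security/OU=OSE'   # match
```

The unanchored filter accepts any subject that merely contains O=Security, which is why the set of trusted CAs should be kept narrow when a loose filter is used.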

Using --mutual-tls-auth=required ensures that you only allow authenticated clients access to the backend resources. This means that the client is always required to provide authentication information (that is, a client certificate). To make mutual TLS authentication optional, use --mutual-tls-auth=optional, or use none (the default) to disable it. Here, optional means that a client is not required to present any authentication information; if the client does provide it, it is simply passed on to the backend in the X-SSL* HTTP headers.

$ oc adm router --mutual-tls-auth=optional \

--mutual-tls-auth-ca=/local/path/to/cacerts.pem \

....

When mutual TLS authentication support is enabled (either using the required or optional value for the --mutual-tls-auth flag), the client authentication information is passed to the backend in the form of X-SSL* HTTP headers.

Examples of the X-SSL* HTTP headers:

- X-SSL-Client-DN: the full distinguished name (DN) of the certificate subject.

- X-SSL-Client-NotBefore: the client certificate start date in YYMMDDhhmmss[Z] format.

- X-SSL-Client-NotAfter: the client certificate end date in YYMMDDhhmmss[Z] format.

- X-SSL-Client-SHA1: the SHA-1 fingerprint of the client certificate.

- X-SSL-Client-DER: provides full access to the client certificate. Contains the DER formatted client certificate encoded in base-64 format.

3.2.8. Highly-Available Routers

You can set up a highly-available router on your OpenShift Container Platform cluster using IP failover. This setup has multiple replicas on different nodes so the failover software can switch to another replica if the current one fails.

3.2.9. Customizing the Router Service Ports

You can customize the service ports that a template router binds to by setting the environment variables ROUTER_SERVICE_HTTP_PORT and ROUTER_SERVICE_HTTPS_PORT. This can be done by creating a template router, then editing its deployment configuration.

The following example creates a router deployment with 0 replicas and customizes the router service HTTP and HTTPS ports, then scales it appropriately (to 1 replica).

$ oc adm router --replicas=0 --ports='10080:10080,10443:10443'

$ oc set env dc/router ROUTER_SERVICE_HTTP_PORT=10080 \

ROUTER_SERVICE_HTTPS_PORT=10443

$ oc scale dc/router --replicas=1

The --ports option ensures exposed ports are appropriately set for routers that use the container networking mode --host-network=false.

If you do customize the template router service ports, you will also need to ensure that the nodes where the router pods run have those custom ports opened in the firewall (either via Ansible or iptables, or any other custom method that you use via firewall-cmd).

The following is an example using iptables to open the custom router service ports.

$ iptables -A OS_FIREWALL_ALLOW -p tcp --dport 10080 -j ACCEPT

$ iptables -A OS_FIREWALL_ALLOW -p tcp --dport 10443 -j ACCEPT

3.2.10. Working With Multiple Routers

An administrator can create multiple routers with the same definition to serve the same set of routes. Each router will be on a different node and will have a different IP address. The network administrator will need to get the desired traffic to each node.

Multiple routers can be grouped to distribute routing load in the cluster and separate tenants to different routers or shards. Each router or shard in the group admits routes based on the selectors in the router. An administrator can create shards over the whole cluster using ROUTE_LABELS. A user can create shards over a namespace (project) by using NAMESPACE_LABELS.

3.2.11. Adding a Node Selector to a Deployment Configuration

Making specific routers deploy on specific nodes requires two steps:

Add a label to the desired node:

$ oc label node 10.254.254.28 "router=first"

Add a node selector to the router deployment configuration:

$ oc edit dc <deploymentConfigName>

Add the template.spec.nodeSelector field with a key and value corresponding to the label:

...
  template:
    metadata:
      creationTimestamp: null
      labels:
        router: router1
    spec:
      nodeSelector:
        router: "first"
...

The key and value are router and first, respectively, corresponding to the router=first label.

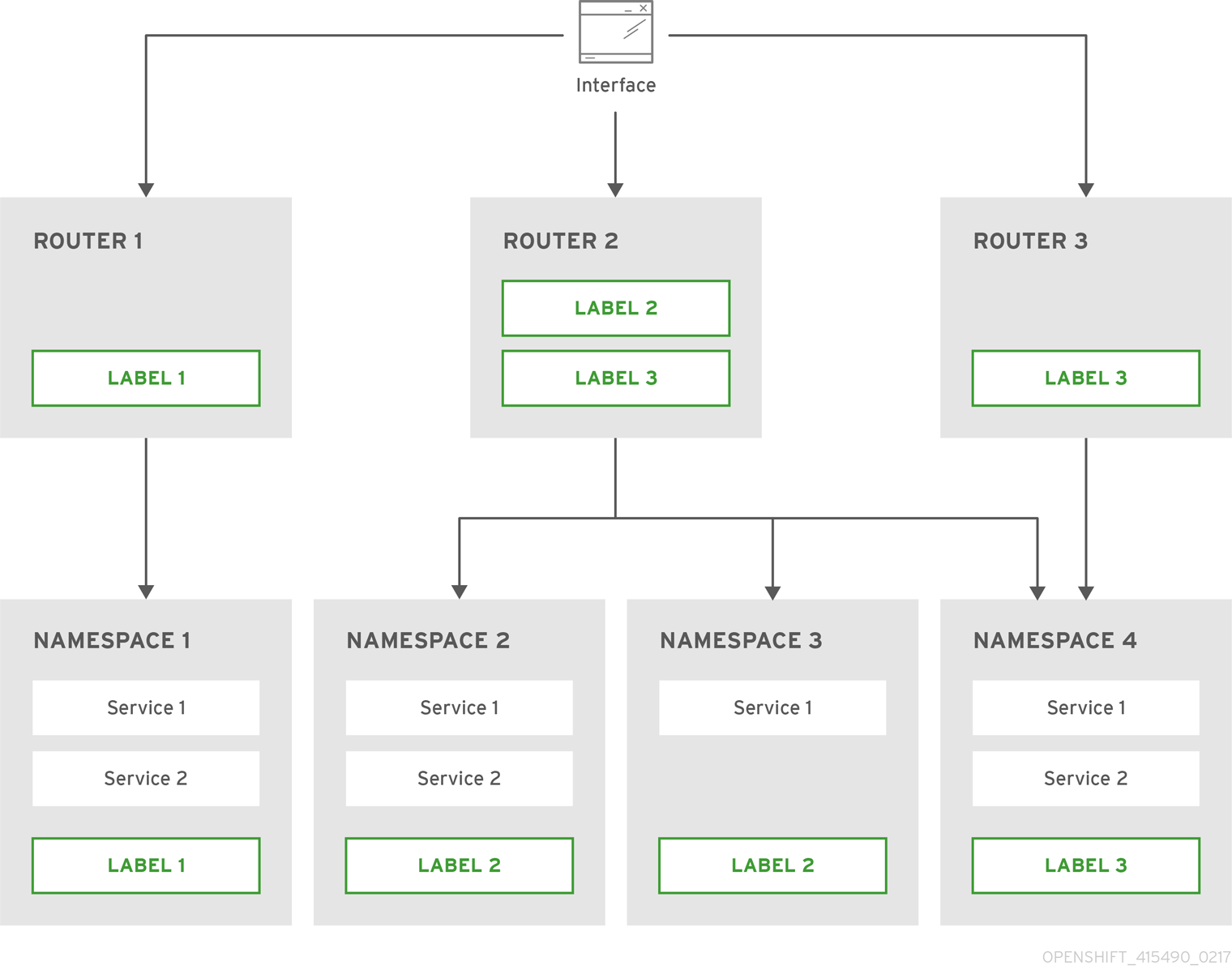

3.2.12. Using Router Shards

Router sharding uses NAMESPACE_LABELS and ROUTE_LABELS, to filter router namespaces and routes. This enables you to distribute subsets of routes over multiple router deployments. By using non-overlapping subsets, you can effectively partition the set of routes. Alternatively, you can define shards comprising overlapping subsets of routes.

By default, a router selects all routes from all projects (namespaces). Sharding involves adding labels to routes or namespaces and label selectors to routers. Each router shard comprises the routes that are selected by a specific set of label selectors or belong to the namespaces that are selected by a specific set of label selectors.

The router service account must have the cluster-reader permission set to allow access to labels in other namespaces.

Router Sharding and DNS

Because an external DNS server is needed to route requests to the desired shard, the administrator is responsible for making a separate DNS entry for each router in a project. A router will not forward unknown routes to another router.

Consider the following example:

- Router A lives on host 192.168.0.5 and has routes with *.foo.com.

- Router B lives on host 192.168.1.9 and has routes with *.example.com.

Separate DNS entries must resolve *.foo.com to the node hosting Router A and *.example.com to the node hosting Router B:

- *.foo.com IN A 192.168.0.5

- *.example.com IN A 192.168.1.9

Router Sharding Examples

This section describes router sharding using namespace and route labels.

Figure 3.1. Router Sharding Based on Namespace Labels

Configure a router with a namespace label selector:

$ oc set env dc/router NAMESPACE_LABELS="router=r1"

Because the router has a selector on the namespace, the router will handle routes only for matching namespaces. In order to make this selector match a namespace, label the namespace accordingly:

$ oc label namespace default "router=r1"

Now, if you create a route in the default namespace, the route is available in the default router:

$ oc create -f route1.yaml

Create a new project (namespace) and create a route, route2:

$ oc new-project p1

$ oc create -f route2.yaml

Notice the route is not available in your router.

Label namespace p1 with router=r1:

$ oc label namespace p1 "router=r1"

Adding this label makes the route available in the router.

- Example

A router deployment finops-router is configured with the label selector NAMESPACE_LABELS="name in (finance, ops)", and a router deployment dev-router is configured with the label selector NAMESPACE_LABELS="name=dev".

If all routes are in namespaces labeled name=finance, name=ops, and name=dev, then this configuration effectively distributes your routes between the two router deployments.

In the above scenario, sharding becomes a special case of partitioning, with no overlapping subsets. Routes are divided between router shards.

The criteria for route selection govern how the routes are distributed. It is possible to have overlapping subsets of routes across router deployments.

- Example

In addition to finops-router and dev-router in the example above, you also have devops-router, which is configured with a label selector NAMESPACE_LABELS="name in (dev, ops)".

The routes in namespaces labeled name=dev or name=ops now are serviced by two different router deployments. This becomes a case in which you have defined overlapping subsets of routes, as illustrated in the procedure in Router Sharding Based on Namespace Labels.

In addition, this enables you to create more complex routing rules, allowing the diversion of higher priority traffic to the dedicated finops-router while sending lower priority traffic to devops-router.
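The overlap described in the examples above can be sketched with a small POSIX shell model. It hard-codes the three NAMESPACE_LABELS selectors from the examples and reports which router deployments would service a namespace with a given name label. The helper names in_set and routers_for_namespace are hypothetical, and the model is only an illustration of set membership, not of how the router evaluates selectors internally.

```shell
# Succeed if VALUE ($1) is one of the space-separated MEMBERS ($2).
in_set() {
    case " $2 " in
        *" $1 "*) return 0 ;;
        *) return 1 ;;
    esac
}

# Print the router deployments whose selector admits a namespace labeled name=$1.
routers_for_namespace() {
    name=$1
    out=
    in_set "$name" "finance ops" && out="$out finops-router"   # name in (finance, ops)
    in_set "$name" "dev"         && out="$out dev-router"      # name=dev
    in_set "$name" "dev ops"     && out="$out devops-router"   # name in (dev, ops)
    printf '%s\n' "${out# }"
}

routers_for_namespace finance   # prints finops-router
routers_for_namespace ops       # prints finops-router devops-router
routers_for_namespace dev       # prints dev-router devops-router
```

Namespaces labeled name=ops or name=dev appear under two routers, which is the overlapping case; name=finance is serviced by finops-router alone.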

Router Sharding Based on Route Labels

NAMESPACE_LABELS allows filtering of the projects to service and selecting all the routes from those projects, but you may want to partition routes based on other criteria associated with the routes themselves. The ROUTE_LABELS selector allows you to slice-and-dice the routes themselves.

- Example

A router deployment prod-router is configured with the label selector ROUTE_LABELS="mydeployment=prod", and a router deployment devtest-router is configured with the label selector ROUTE_LABELS="mydeployment in (dev, test)".

This configuration partitions routes between the two router deployments according to the routes' labels, irrespective of their namespaces.

The example assumes you have all the routes you want to be serviced tagged with a label "mydeployment=<tag>".

3.2.12.1. Creating Router Shards

This section describes an advanced example of router sharding. Suppose there are 26 routes, named a — z, with various labels:

Possible labels on routes

| SLA | Geo | HW | Dept |
|---|---|---|---|
| sla=high | geo=east | hw=modest | dept=finance |
| sla=medium | geo=west | hw=strong | dept=dev |
| sla=low | | | dept=ops |

These labels express concepts including service level agreement, geographical location, hardware requirements, and department. The routes can have at most one label from each column. Some routes may have other labels or no labels at all.

| Name(s) | SLA | Geo | HW | Dept | Other Labels |

|---|---|---|---|---|---|


Here is a convenience script mkshard that illustrates how oc adm router, oc set env, and oc scale can be used together to make a router shard.

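#!/bin/bash
# Usage: mkshard ID SELECTION-EXPRESSION
id=$1
sel="$2"
router=router-shard-$id                # The created router is named router-shard-<id>.
oc adm router "$router" --replicas=0   # Create the router with no replicas for now.
dc=dc/router-shard-$id                 # The router's deployment configuration.
oc set env "$dc" ROUTE_LABELS="$sel"   # Set the selection expression.
oc scale "$dc" --replicas=3            # Scale it up.

Running mkshard several times creates several routers: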

| Router | Selection Expression | Routes |

|---|---|---|


3.2.12.2. Modifying Router Shards

Because a router shard is a construct based on labels, you can modify either the labels (via oc label) or the selection expression (via oc set env).

This section extends the example started in the Creating Router Shards section, demonstrating how to change the selection expression.

Here is a convenience script modshard that modifies an existing router to use a new selection expression:

#!/bin/bash
# Usage: modshard ID SELECTION-EXPRESSION...
id=$1
shift
router=router-shard-$id      # The modified router has name router-shard-<id>.
dc=dc/$router                # The deployment configuration where the modifications occur.
oc scale $dc --replicas=0    # Scale it down.
oc set env $dc "$@"          # Set the new selection expression using oc set env.
oc scale $dc --replicas=3    # Scale it back up.

Unlike mkshard from the Creating Router Shards section, the selection expression specified as the non-ID arguments to modshard must include the environment variable name as well as its value.

In modshard, the oc scale commands are not necessary if the deployment strategy for router-shard-<id> is Rolling.

For example, to expand the department for router-shard-3 to include ops as well as dev:

$ modshard 3 ROUTE_LABELS='dept in (dev, ops)'

The result is that router-shard-3 now selects routes g — s (the combined sets of g — k and l — s).

This example takes into account that there are only three departments in this example scenario, and specifies a department to leave out of the shard, thus achieving the same result as the preceding example:

$ modshard 3 ROUTE_LABELS='dept != finance'

This example specifies three comma-separated qualities, and results in only route b being selected:

$ modshard 3 ROUTE_LABELS='hw=strong,type=dynamic,geo=west'
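A comma-separated selection expression is a logical AND: a route is selected only if it carries every listed label. The following POSIX shell sketch models that rule for simple key=value requirements only (it does not handle in (...) or != expressions). The helper names labels_match and selects, and the sample label sets, are hypothetical.

```shell
# Hypothetical sketch: succeed only if every comma-separated key=value
# requirement in SELECTOR ($1) appears in the space-separated LABELS ($2).
labels_match() {
    selector=$1
    labels=$2
    old_ifs=$IFS
    IFS=,
    set -f                       # do not glob-expand selector pieces
    for req in $selector; do
        case " $labels " in
            *" $req "*) ;;       # requirement satisfied, check the next one
            *) IFS=$old_ifs; set +f; return 1 ;;
        esac
    done
    IFS=$old_ifs
    set +f
    return 0
}

# Print a human-readable verdict for one route's labels.
selects() {
    if labels_match "$1" "$2"; then echo selected; else echo skipped; fi
}

sel='hw=strong,type=dynamic,geo=west'
selects "$sel" 'geo=west hw=strong type=dynamic'   # selected
selects "$sel" 'geo=west type=dynamic'             # skipped (no hw=strong)
```

Because all three requirements must hold at once, adding more comma-separated terms can only narrow the set of selected routes.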

Similarly to ROUTE_LABELS, which involves a route’s labels, you can select routes based on the labels of the route’s namespace using the NAMESPACE_LABELS environment variable. This example modifies router-shard-3 to serve routes whose namespace has the label frequency=weekly:

$ modshard 3 NAMESPACE_LABELS='frequency=weekly'

The last example combines ROUTE_LABELS and NAMESPACE_LABELS to select routes with label sla=low and whose namespace has the label frequency=weekly:

$ modshard 3 \

NAMESPACE_LABELS='frequency=weekly' \

ROUTE_LABELS='sla=low'

3.2.13. Finding the Host Name of the Router

When exposing a service, a user can use the same route from the DNS name that external users use to access the application. The network administrator of the external network must make sure the host name resolves to the name of a router that has admitted the route. The user can set up their DNS with a CNAME that points to this host name. However, the user may not know the host name of the router. When it is not known, the cluster administrator can provide it.

The cluster administrator can use the --router-canonical-hostname option with the router’s canonical host name when creating the router. For example:

# oc adm router myrouter --router-canonical-hostname="rtr.example.com"

This creates the ROUTER_CANONICAL_HOSTNAME environment variable in the router’s deployment configuration containing the host name of the router.

For routers that already exist, the cluster administrator can edit the router’s deployment configuration and add the ROUTER_CANONICAL_HOSTNAME environment variable:

spec:
  template:
    spec:
      containers:
      - env:
        - name: ROUTER_CANONICAL_HOSTNAME
          value: rtr.example.com

The ROUTER_CANONICAL_HOSTNAME value is displayed in the route status for all routers that have admitted the route. The route status is refreshed every time the router is reloaded.

When a user creates a route, all of the active routers evaluate the route and, if conditions are met, admit it. When a router that defines the ROUTER_CANONICAL_HOSTNAME environment variable admits the route, the router places the value in the routerCanonicalHostname field in the route status. The user can examine the route status to determine which, if any, routers have admitted the route, select a router from the list, and find the host name of the router to pass along to the network administrator.

status:
  ingress:
  - conditions:
    - lastTransitionTime: 2016-12-07T15:20:57Z
      status: "True"
      type: Admitted
    host: hello.in.mycloud.com
    routerCanonicalHostname: rtr.example.com
    routerName: myrouter
    wildcardPolicy: None

oc describe includes the host name when available:

$ oc describe route/hello-route3

...

Requested Host: hello.in.mycloud.com exposed on router myrouter (host rtr.example.com) 12 minutes ago

Using the above information, the user can ask the DNS administrator to set up a CNAME from the route’s host, hello.in.mycloud.com, to the router’s canonical hostname, rtr.example.com. This results in any traffic to hello.in.mycloud.com reaching the user’s application.

3.2.14. Customizing the Default Routing Subdomain

You can customize the suffix used as the default routing subdomain for your environment by modifying the master configuration file (the /etc/origin/master/master-config.yaml file by default). Routes that do not specify a host name would have one generated using this default routing subdomain.
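As an illustration of the generated host name, the following sketch joins a route name, its namespace, and the routing subdomain in the <name>-<namespace>.<subdomain> form that this section's example uses. The function default_route_host is hypothetical and only mimics the naming pattern; it is not how the master generates the name internally.

```shell
# Hypothetical helper: build <route-name>-<namespace>.<subdomain>.
default_route_host() {
    printf '%s-%s.%s\n' "$1" "$2" "$3"
}

default_route_host no-route-hostname mynamespace v3.openshift.test
# prints no-route-hostname-mynamespace.v3.openshift.test
```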

The following example shows how you can set the configured suffix to v3.openshift.test:

routingConfig:
  subdomain: v3.openshift.test

This change requires a restart of the master if it is running.

With the OpenShift Container Platform master(s) running the above configuration, the generated host name for the example of a route named no-route-hostname without a host name added to a namespace mynamespace would be:

no-route-hostname-mynamespace.v3.openshift.test

3.2.15. Forcing Route Host Names to a Custom Routing Subdomain

If an administrator wants to restrict all routes to a specific routing subdomain, they can pass the --force-subdomain option to the oc adm router command. This forces the router to override any host names specified in a route and generate one based on the template provided to the --force-subdomain option.
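To make the template substitution concrete, here is a minimal sketch that expands the ${name} and ${namespace} placeholders of such a template with sed. The function expand_host_template and the sample route name and namespace are hypothetical; the sketch assumes both values are plain DNS labels, so they contain no characters that are special to sed.

```shell
# Hypothetical helper: expand ${name} and ${namespace} in a host name template.
# Assumes name and namespace are plain DNS labels (no "/" or "&").
expand_host_template() {
    template=$1
    name=$2
    namespace=$3
    printf '%s\n' "$template" |
        sed -e 's/[$]{name}/'"$name"'/g' \
            -e 's/[$]{namespace}/'"$namespace"'/g'
}

# Single quotes keep the shell from expanding the placeholders itself.
expand_host_template '${name}-${namespace}.apps.example.com' hello myproject
# prints hello-myproject.apps.example.com
```

The same quoting concern applies on the command line: the template passed to --force-subdomain must be single-quoted so that the shell leaves ${name} and ${namespace} intact.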

The following example runs a router, which overrides the route host names using a custom subdomain template ${name}-${namespace}.apps.example.com.

$ oc adm router --force-subdomain='${name}-${namespace}.apps.example.com'

3.2.16. Using Wildcard Certificates

A TLS-enabled route that does not include a certificate uses the router’s default certificate instead. In most cases, this certificate should be provided by a trusted certificate authority, but for convenience you can use the OpenShift Container Platform CA to create the certificate. For example:

$ CA=/etc/origin/master

$ oc adm ca create-server-cert --signer-cert=$CA/ca.crt \

--signer-key=$CA/ca.key --signer-serial=$CA/ca.serial.txt \

--hostnames='*.cloudapps.example.com' \

--cert=cloudapps.crt --key=cloudapps.key

The oc adm ca create-server-cert command generates a certificate that is valid for two years. This can be altered with the --expire-days option, but for security reasons, it is recommended to not make it greater than this value.

Run oc adm commands only from the first master listed in the Ansible host inventory file, by default /etc/ansible/hosts.

The router expects the certificate and key to be in PEM format in a single file:

$ cat cloudapps.crt cloudapps.key $CA/ca.crt > cloudapps.router.pem

From there you can use the --default-cert flag:

$ oc adm router --default-cert=cloudapps.router.pem --service-account=router

Browsers only consider wildcards valid for subdomains one level deep. So in this example, the certificate would be valid for a.cloudapps.example.com but not for a.b.cloudapps.example.com.
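The one-level rule can be sketched as a small POSIX shell check: the host must consist of exactly one extra label in front of the wildcard's domain. The helper names wildcard_cert_matches and cert_check are hypothetical, and the sketch models only this depth rule, not full certificate validation.

```shell
# Hypothetical helper: succeed if HOST ($2) is exactly one label below the
# domain of a "*.domain" wildcard PATTERN ($1).
wildcard_cert_matches() {
    pattern=$1
    host=$2
    case $pattern in
        '*.'*) suffix=${pattern#?} ;;   # drop the leading "*", keep ".domain"
        *) return 1 ;;                  # not a wildcard pattern
    esac
    case $host in
        *"$suffix") label=${host%"$suffix"} ;;   # what the "*" would stand for
        *) return 1 ;;
    esac
    case $label in
        ''|*.*) return 1 ;;             # empty, or more than one level deep
        *) return 0 ;;
    esac
}

# Print a human-readable verdict.
cert_check() {
    if wildcard_cert_matches "$1" "$2"; then echo valid; else echo invalid; fi
}

cert_check '*.cloudapps.example.com' a.cloudapps.example.com     # valid
cert_check '*.cloudapps.example.com' a.b.cloudapps.example.com   # invalid
```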

3.2.17. Manually Redeploy Certificates

To manually redeploy the router certificates:

Check to see if a secret containing the default router certificate was added to the router:

$ oc set volume dc/router
deploymentconfigs/router
  secret/router-certs as server-certificate
    mounted at /etc/pki/tls/private

If the certificate is added, skip the following step and overwrite the secret.

Make sure that you have a default certificate directory set for the following variable DEFAULT_CERTIFICATE_DIR:

$ oc set env dc/router --list
DEFAULT_CERTIFICATE_DIR=/etc/pki/tls/private

If not, set the directory using the following command:

$ oc set env dc/router DEFAULT_CERTIFICATE_DIR=/etc/pki/tls/private

Export the certificate to PEM format. Write the result to a new file, because redirecting to custom-router.crt would truncate it before it is read:

$ cat custom-router.key custom-router.crt custom-ca.crt > custom-router.pem

Overwrite or create a router certificate secret:

If the certificate secret was added to the router, overwrite the secret. If not, create a new secret.

To overwrite the secret, run the following command:

$ oc create secret generic router-certs --from-file=tls.crt=custom-router.pem --from-file=tls.key=custom-router.key --type=kubernetes.io/tls -o json --dry-run | oc replace -f -

To create a new secret, run the following commands:

$ oc create secret generic router-certs --from-file=tls.crt=custom-router.pem --from-file=tls.key=custom-router.key --type=kubernetes.io/tls

$ oc set volume dc/router --add --mount-path=/etc/pki/tls/private --secret-name='router-certs' --name router-certs

Deploy the router.

$ oc rollout latest dc/router

3.2.18. Using Secured Routes

Currently, password protected key files are not supported. HAProxy prompts for a password upon starting and does not have a way to automate this process. To remove a passphrase from a keyfile, you can run:

# openssl rsa -in <passwordProtectedKey.key> -out <new.key>

Here is an example of how to use a secure edge terminated route with TLS termination occurring on the router before traffic is proxied to the destination. The secure edge terminated route specifies the TLS certificate and key information. The TLS certificate is served by the router front end.

First, start up a router instance:

# oc adm router --replicas=1 --service-account=router

Next, create a private key, csr and certificate for our edge secured route. The instructions on how to do that would be specific to your certificate authority and provider. For a simple self-signed certificate for a domain named www.example.test, see the example shown below:

# sudo openssl genrsa -out example-test.key 2048

# sudo openssl req -new -key example-test.key -out example-test.csr \
    -subj "/C=US/ST=CA/L=Mountain View/O=OS3/OU=Eng/CN=www.example.test"

# sudo openssl x509 -req -days 366 -in example-test.csr \
    -signkey example-test.key -out example-test.crt

Generate a route using the above certificate and key.

$ oc create route edge --service=my-service \
    --hostname=www.example.test \
    --key=example-test.key --cert=example-test.crt
route "my-service" created

Look at its definition.

$ oc get route/my-service -o yaml

apiVersion: v1
kind: Route
metadata:
  name: my-service
spec:
  host: www.example.test
  to:
    kind: Service
    name: my-service
  tls:
    termination: edge
    key: |
      -----BEGIN PRIVATE KEY-----
      [...]
      -----END PRIVATE KEY-----
    certificate: |
      -----BEGIN CERTIFICATE-----
      [...]
      -----END CERTIFICATE-----

Make sure your DNS entry for www.example.test points to your router instance(s) and the route to your domain should be available. The example below uses curl along with a local resolver to simulate the DNS lookup:

# routerip="4.1.1.1"  # replace with IP address of one of your router instances.
# curl -k --resolve www.example.test:443:$routerip https://www.example.test/

3.2.19. Using Wildcard Routes (for a Subdomain)

The HAProxy router has support for wildcard routes, which are enabled by setting the ROUTER_ALLOW_WILDCARD_ROUTES environment variable to true. Any routes with a wildcard policy of Subdomain that pass the router admission checks will be serviced by the HAProxy router. Then, the HAProxy router exposes the associated service (for the route) per the route’s wildcard policy.

To change a route’s wildcard policy, you must remove the route and recreate it with the updated wildcard policy. Editing only the route’s wildcard policy in a route’s .yaml file does not work.

$ oc adm router --replicas=0 ...
$ oc set env dc/router ROUTER_ALLOW_WILDCARD_ROUTES=true
$ oc scale dc/router --replicas=1

Learn how to configure the web console for wildcard routes.

Using a Secure Wildcard Edge Terminated Route

This example reflects TLS termination occurring on the router before traffic is proxied to the destination. Traffic sent to any hosts in the subdomain example.org (*.example.org) is proxied to the exposed service.

The secure edge terminated route specifies the TLS certificate and key information. The TLS certificate is served by the router front end for all hosts that match the subdomain (*.example.org).

Start up a router instance:

$ oc adm router --replicas=0 --service-account=router
$ oc set env dc/router ROUTER_ALLOW_WILDCARD_ROUTES=true
$ oc scale dc/router --replicas=1

Create a private key, certificate signing request (CSR), and certificate for the edge secured route.

The instructions on how to do this are specific to your certificate authority and provider. For a simple self-signed certificate for a domain named *.example.test, see this example:

# sudo openssl genrsa -out example-test.key 2048

# sudo openssl req -new -key example-test.key -out example-test.csr \
    -subj "/C=US/ST=CA/L=Mountain View/O=OS3/OU=Eng/CN=*.example.test"

# sudo openssl x509 -req -days 366 -in example-test.csr \
    -signkey example-test.key -out example-test.crt

Generate a wildcard route using the above certificate and key:

$ cat > route.yaml <<REOF
apiVersion: v1
kind: Route
metadata:
  name: my-service
spec:
  host: www.example.test
  wildcardPolicy: Subdomain
  to:
    kind: Service
    name: my-service
  tls:
    termination: edge
    key: "$(perl -pe 's/\n/\\n/' example-test.key)"
    certificate: "$(perl -pe 's/\n/\\n/' example-test.crt)"
REOF
$ oc create -f route.yaml

Ensure your DNS entry for *.example.test points to your router instance(s) and the route to your domain is available.

This example uses curl with a local resolver to simulate the DNS lookup:

# routerip="4.1.1.1"  # replace with IP address of one of your router instances.
# curl -k --resolve www.example.test:443:$routerip https://www.example.test/
# curl -k --resolve abc.example.test:443:$routerip https://abc.example.test/
# curl -k --resolve anyname.example.test:443:$routerip https://anyname.example.test/

For routers that allow wildcard routes (ROUTER_ALLOW_WILDCARD_ROUTES set to true), there are some caveats to the ownership of a subdomain associated with a wildcard route.

Prior to wildcard routes, ownership was based on the claims made for a host name, with the namespace that had the oldest route winning against any other claimants. For example, route r1 in namespace ns1 with a claim for one.example.test would win over another route r2 in namespace ns2 for the same host name one.example.test if route r1 was older than route r2.

In addition, routes in other namespaces were allowed to claim non-overlapping hostnames. For example, route rone in namespace ns1 could claim www.example.test and another route rtwo in namespace ns2 could claim c3po.example.test.

This is still the case if there are no wildcard routes claiming that same subdomain (example.test in the above example).

However, a wildcard route needs to claim all of the host names within a subdomain (host names of the form *.example.test). A wildcard route’s claim is allowed or denied based on whether or not the oldest route for that subdomain (example.test) is in the same namespace as the wildcard route. The oldest route can be either a regular route or a wildcard route.

For example, if a route eldest already exists in the ns1 namespace with a claim for the host owner.example.test and, at a later point in time, a new wildcard route wildthing requesting routes in that subdomain (example.test) is added, the claim by the wildcard route is only allowed if it is in the same namespace (ns1) as the owning route.
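
The ownership rule described above can be sketched as a pair of shell helpers. This is only an illustration of the rule, not how the router implements it. The function names and the input format (one route per line as "<creation_epoch> <namespace> <host>") are assumptions made for the sketch, as is the choice to allow a wildcard claim when no route exists in the subdomain yet.

```shell
# subdomain_owner SUBDOMAIN
# Hypothetical helper. Reads routes on stdin, one per line, in the assumed
# format "<creation_epoch> <namespace> <host>", and prints the namespace of
# the oldest route whose host is directly inside SUBDOMAIN.
subdomain_owner() {
    awk -v sub_domain="$1" '
        {
            parent = $3
            # Strip the first label: "owner.example.test" -> "example.test"
            sub(/^[^.]*\./, "", parent)
            if (parent == sub_domain && (!seen || $1 + 0 < oldest)) {
                oldest = $1 + 0
                ns = $2
                seen = 1
            }
        }
        END { if (seen) print ns }
    '
}

# wildcard_claim_allowed NAMESPACE SUBDOMAIN
# Succeeds when the oldest route in SUBDOMAIN belongs to NAMESPACE.
# Assumption: with no existing route in SUBDOMAIN, the claim is allowed.
wildcard_claim_allowed() {
    owner=$(subdomain_owner "$2")
    [ -z "$owner" ] || [ "$owner" = "$1" ]
}
```

Fed the two lines "100 ns1 owner.example.test" and "200 ns2 second.example.test", a wildcard claim for example.test from ns1 is allowed and one from any other namespace is denied.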

The following examples illustrate various scenarios in which claims for wildcard routes will succeed or fail.

In the example below, a router that allows wildcard routes will allow non-overlapping claims for hosts in the subdomain example.test as long as a wildcard route has not claimed a subdomain.

$ oc adm router ...

$ oc set env dc/router ROUTER_ALLOW_WILDCARD_ROUTES=true

$ oc project ns1

$ oc expose service myservice --hostname=owner.example.test

$ oc expose service myservice --hostname=aname.example.test

$ oc expose service myservice --hostname=bname.example.test

$ oc project ns2

$ oc expose service anotherservice --hostname=second.example.test

$ oc expose service anotherservice --hostname=cname.example.test

$ oc project otherns

$ oc expose service thirdservice --hostname=emmy.example.test

$ oc expose service thirdservice --hostname=webby.example.test

In the example below, a router that allows wildcard routes will not allow claims from other namespaces for owner.example.test or aname.example.test to succeed, because the owning namespace is ns1.

$ oc adm router ...

$ oc set env dc/router ROUTER_ALLOW_WILDCARD_ROUTES=true

$ oc project ns1

$ oc expose service myservice --hostname=owner.example.test

$ oc expose service myservice --hostname=aname.example.test

$ oc project ns2

$ oc expose service secondservice --hostname=bname.example.test

$ oc expose service secondservice --hostname=cname.example.test

$ # Router will not allow this claim with a different path name `/p1` as

$ # namespace `ns1` has an older route claiming host `aname.example.test`.

$ oc expose service secondservice --hostname=aname.example.test --path="/p1"

$ # Router will not allow this claim as namespace `ns1` has an older route

$ # claiming host name `owner.example.test`.

$ oc expose service secondservice --hostname=owner.example.test

$ oc project otherns

$ # Router will not allow this claim as namespace `ns1` has an older route

$ # claiming host name `aname.example.test`.

$ oc expose service thirdservice --hostname=aname.example.test

In the example below, a router that allows wildcard routes will allow the claim for *.example.test to succeed since the owning namespace is ns1 and the wildcard route belongs to that same namespace.

$ oc adm router ...

$ oc set env dc/router ROUTER_ALLOW_WILDCARD_ROUTES=true

$ oc project ns1

$ oc expose service myservice --hostname=owner.example.test

$ # Reusing the route.yaml from the previous example.

$ # spec:

$ # host: www.example.test

$ # wildcardPolicy: Subdomain

$ oc create -f route.yaml # router will allow this claim.

In the example below, a router that allows wildcard routes will not allow the claim for *.example.test to succeed since the owning namespace is ns1 and the wildcard route belongs to another namespace cyclone.

$ oc adm router ...

$ oc set env dc/router ROUTER_ALLOW_WILDCARD_ROUTES=true

$ oc project ns1

$ oc expose service myservice --hostname=owner.example.test

$ # Switch to a different namespace/project.

$ oc project cyclone

$ # Reusing the route.yaml from a prior example.

$ # spec:

$ # host: www.example.test

$ # wildcardPolicy: Subdomain

$ oc create -f route.yaml # router will deny (_NOT_ allow) this claim.

Similarly, once a namespace with a wildcard route claims a subdomain, only routes within that namespace can claim any hosts in that same subdomain.

In the example below, once a route in namespace ns1 with a wildcard route claims subdomain example.test, only routes in the namespace ns1 are allowed to claim any hosts in that same subdomain.

$ oc adm router ...

$ oc set env dc/router ROUTER_ALLOW_WILDCARD_ROUTES=true

$ oc project ns1

$ oc expose service myservice --hostname=owner.example.test

$ oc project otherns

$ # namespace `otherns` is allowed to claim for other.example.test

$ oc expose service otherservice --hostname=other.example.test

$ oc project ns1

$ # Reusing the route.yaml from the previous example.

$ # spec:

$ # host: www.example.test

$ # wildcardPolicy: Subdomain

$ oc create -f route.yaml # Router will allow this claim.

$ # In addition, route in namespace otherns will lose its claim to host

$ # `other.example.test` due to the wildcard route claiming the subdomain.

$ # namespace `ns1` is allowed to claim for deux.example.test

$ oc expose service mysecondservice --hostname=deux.example.test

$ # namespace `ns1` is allowed to claim for deux.example.test with path /p1

$ oc expose service mythirdservice --hostname=deux.example.test --path="/p1"

$ oc project otherns

$ # namespace `otherns` is not allowed to claim for deux.example.test

$ # with a different path '/otherpath'

$ oc expose service otherservice --hostname=deux.example.test --path="/otherpath"

$ # namespace `otherns` is not allowed to claim for owner.example.test

$ oc expose service yetanotherservice --hostname=owner.example.test

$ # namespace `otherns` is not allowed to claim for unclaimed.example.test

$ oc expose service yetanotherservice --hostname=unclaimed.example.test

In the example below, different scenarios are shown, in which the owner routes are deleted and ownership is passed within and across namespaces. While a route claiming host eldest.example.test in the namespace ns1 exists, wildcard routes in that namespace can claim subdomain example.test. When the route for host eldest.example.test is deleted, the next oldest route senior.example.test becomes the oldest route and does not affect any other routes. Once the route for host senior.example.test is deleted, the next oldest route junior.example.test becomes the oldest route and blocks the wildcard route claimant.

$ oc adm router ...

$ oc set env dc/router ROUTER_ALLOW_WILDCARD_ROUTES=true

$ oc project ns1

$ oc expose service myservice --hostname=eldest.example.test

$ oc expose service seniorservice --hostname=senior.example.test

$ oc project otherns

$ # namespace `otherns` is allowed to claim for junior.example.test

$ oc expose service juniorservice --hostname=junior.example.test

$ oc project ns1

$ # Reusing the route.yaml from the previous example.

$ # spec:

$ # host: www.example.test

$ # wildcardPolicy: Subdomain

$ oc create -f route.yaml # Router will allow this claim.

$ # In addition, route in namespace otherns will lose its claim to host

$ # `junior.example.test` due to the wildcard route claiming the subdomain.

$ # namespace `ns1` is allowed to claim for dos.example.test

$ oc expose service mysecondservice --hostname=dos.example.test

$ # Delete route for host `eldest.example.test`, the next oldest route is

$ # the one claiming `senior.example.test`, so route claims are unaffected.

$ oc delete route myservice

$ # Delete route for host `senior.example.test`, the next oldest route is

$ # the one claiming `junior.example.test` in another namespace, so claims

$ # for a wildcard route would be affected. The route for the host

$ # `dos.example.test` would be unaffected as there are no other wildcard

$ # claimants blocking it.

$ oc delete route seniorservice

3.2.20. Using the Container Network Stack

The OpenShift Container Platform router runs inside a container and the default behavior is to use the network stack of the host (i.e., the node where the router container runs). This default behavior benefits performance because network traffic from remote clients does not need to take multiple hops through user space to reach the target service and container.

Additionally, this default behavior enables the router to get the actual source IP address of the remote connection rather than getting the node’s IP address. This is useful for defining ingress rules based on the originating IP, supporting sticky sessions, and monitoring traffic, among other uses.

This host network behavior is controlled by the --host-network router command line option, and the default behavior is the equivalent of using --host-network=true. If you wish to run the router with the container network stack, use the --host-network=false option when creating the router. For example:

$ oc adm router --service-account=router --host-network=false

Internally, this means the router container must publish the 80 and 443 ports in order for the external network to communicate with the router.

Running with the container network stack means that the router sees the source IP address of a connection to be the NATed IP address of the node, rather than the actual remote IP address.

On OpenShift Container Platform clusters using multi-tenant network isolation, routers on a non-default namespace with the --host-network=false option will load all routes in the cluster, but routes across the namespaces will not be reachable due to network isolation. With the --host-network=true option, the router bypasses the container network and can access any pod in the cluster. If isolation is needed in this case, then do not add routes across the namespaces.

3.2.21. Using the Dynamic Configuration Manager

You can configure the HAProxy router to support the dynamic configuration manager.

The dynamic configuration manager brings certain types of routes online without requiring HAProxy reload downtime. It handles any route and endpoint life-cycle events, such as the addition, deletion, or update of routes and endpoints.

Enable the dynamic configuration manager by setting the ROUTER_HAPROXY_CONFIG_MANAGER environment variable to true:

$ oc set env dc/<router_name> ROUTER_HAPROXY_CONFIG_MANAGER='true'

If the dynamic configuration manager cannot dynamically configure HAProxy, it rewrites the configuration and reloads the HAProxy process. This happens, for example, if a new route contains custom annotations, such as custom timeouts, or if the route requires custom TLS configuration.

The dynamic configuration internally uses the HAProxy socket and configuration API with a pool of pre-allocated routes and back end servers. The pre-allocated pool of routes is created using route blueprints. The default set of blueprints supports unsecured routes, edge secured routes without any custom TLS configuration, and passthrough routes.

Re-encrypt routes require custom TLS configuration information, so extra configuration is needed in order to use them with the dynamic configuration manager.

Extend the blueprints that the dynamic configuration manager can use by setting the ROUTER_BLUEPRINT_ROUTE_NAMESPACE and optionally the ROUTER_BLUEPRINT_ROUTE_LABELS environment variables.

All routes, or the routes that match the route labels, in the blueprint route namespace are processed as custom blueprints similar to the default set of blueprints. This includes re-encrypt routes or routes that use custom annotations or routes with custom TLS configuration.

The following procedure assumes you have created three route objects: reencrypt-blueprint, annotated-edge-blueprint, and annotated-unsecured-blueprint. See Route Types for an example of the different route type objects.

Procedure

Create a new project:

$ oc new-project namespace_name

Create a new route. This method exposes an existing service:

$ oc create route edge edge_route_name --key=/path/to/key.pem \
    --cert=/path/to/cert.pem --service=<service> --port=8443

Label the route:

$ oc label route edge_route_name type=route_label_1

Create three different routes from route object definitions. All have the label type=route_label_1:

$ oc create -f reencrypt-blueprint.yaml
$ oc create -f annotated-edge-blueprint.yaml
$ oc create -f annotated-unsecured-blueprint.json

You can also remove a label from a route, which prevents it from being used as a blueprint route. For example, to prevent the annotated-unsecured-blueprint from being used as a blueprint route:

$ oc label route annotated-unsecured-blueprint type-

Create a new router to be used for the blueprint pool:

$ oc adm router

Set the environment variables for the new router:

$ oc set env dc/router ROUTER_HAPROXY_CONFIG_MANAGER=true \
    ROUTER_BLUEPRINT_ROUTE_NAMESPACE=namespace_name \
    ROUTER_BLUEPRINT_ROUTE_LABELS="type=route_label_1"

All routes in the namespace or project namespace_name with label type=route_label_1 can be processed and used as custom blueprints.

Note that you can also add, update, or remove blueprints by managing the routes as you would normally in that namespace namespace_name. The dynamic configuration manager watches for changes to routes in the namespace namespace_name similar to how the router watches for routes and services.

The pool sizes of the pre-allocated routes and back end servers can be controlled with the ROUTER_BLUEPRINT_ROUTE_POOL_SIZE, which defaults to 10, and ROUTER_MAX_DYNAMIC_SERVERS, which defaults to 5, environment variables. You can also control how often changes made by the dynamic configuration manager are committed to disk, which is when the HAProxy configuration is re-written and the HAProxy process is reloaded. The default is one hour, or 3600 seconds, or when the dynamic configuration manager runs out of pool space. The COMMIT_INTERVAL environment variable controls this setting:

$ oc set env dc/router -c router ROUTER_BLUEPRINT_ROUTE_POOL_SIZE=20 \
    ROUTER_MAX_DYNAMIC_SERVERS=3 COMMIT_INTERVAL=6h

The example increases the pool size for each blueprint route to 20, reduces the number of dynamic servers to 3, and increases the commit interval to 6 hours.

3.2.22. Exposing Router Metrics

The HAProxy router metrics are, by default, exposed or published in Prometheus format for consumption by external metrics collection and aggregation systems (e.g. Prometheus, statsd). Metrics are also available directly from the HAProxy router in its own HTML format for viewing in a browser or CSV download. These metrics include the HAProxy native metrics and some controller metrics.

When you create a router using the following command, OpenShift Container Platform makes metrics available in Prometheus format on the stats port, by default 1936.

$ oc adm router --service-account=router

To extract the raw statistics in Prometheus format run the following command:

curl <user>:<password>@<router_IP>:<STATS_PORT>

For example:

$ curl admin:sLzdR6SgDJ@10.254.254.35:1936/metrics

You can get the information you need to access the metrics from the router service annotations:

$ oc edit service <router-name>

apiVersion: v1
kind: Service
metadata:
  annotations:
    prometheus.io/port: "1936"
    prometheus.io/scrape: "true"
    prometheus.openshift.io/password: IImoDqON02
    prometheus.openshift.io/username: admin

The prometheus.io/port is the stats port, by default 1936. You might need to configure your firewall to permit access. Use the previous user name and password to access the metrics. The path is /metrics.

$ curl <user>:<password>@<router_IP>:<STATS_PORT>
for example:
$ curl admin:sLzdR6SgDJ@10.254.254.35:1936/metrics
...
# HELP haproxy_backend_connections_total Total number of connections.
# TYPE haproxy_backend_connections_total gauge
haproxy_backend_connections_total{backend="http",namespace="default",route="hello-route"} 0
haproxy_backend_connections_total{backend="http",namespace="default",route="hello-route-alt"} 0
haproxy_backend_connections_total{backend="http",namespace="default",route="hello-route01"} 0
...
# HELP haproxy_exporter_server_threshold Number of servers tracked and the current threshold value.
# TYPE haproxy_exporter_server_threshold gauge
haproxy_exporter_server_threshold{type="current"} 11
haproxy_exporter_server_threshold{type="limit"} 500
...
# HELP haproxy_frontend_bytes_in_total Current total of incoming bytes.
# TYPE haproxy_frontend_bytes_in_total gauge
haproxy_frontend_bytes_in_total{frontend="fe_no_sni"} 0
haproxy_frontend_bytes_in_total{frontend="fe_sni"} 0
haproxy_frontend_bytes_in_total{frontend="public"} 119070
...
# HELP haproxy_server_bytes_in_total Current total of incoming bytes.
# TYPE haproxy_server_bytes_in_total gauge
haproxy_server_bytes_in_total{namespace="",pod="",route="",server="fe_no_sni",service=""} 0
haproxy_server_bytes_in_total{namespace="",pod="",route="",server="fe_sni",service=""} 0
haproxy_server_bytes_in_total{namespace="default",pod="docker-registry-5-nk5fz",route="docker-registry",server="10.130.0.89:5000",service="docker-registry"} 0
haproxy_server_bytes_in_total{namespace="default",pod="hello-rc-vkjqx",route="hello-route",server="10.130.0.90:8080",service="hello-svc-1"} 0
...

To get metrics in a browser:

Delete the following environment variables from the router deployment configuration file:

$ oc edit dc router

- name: ROUTER_LISTEN_ADDR
  value: 0.0.0.0:1936
- name: ROUTER_METRICS_TYPE
  value: haproxy

Patch the router readiness probe to use the same path as the liveness probe as it is now served by the haproxy router:

$ oc patch dc router -p '"spec": {"template": {"spec": {"containers": [{"name": "router","readinessProbe": {"httpGet": {"path": "/healthz"}}}]}}}'

Launch the stats window using the following URL in a browser, where the STATS_PORT value is 1936 by default:

http://admin:<Password>@<router_IP>:<STATS_PORT>

You can get the stats in CSV format by adding ;csv to the URL:

For example:

http://admin:<Password>@<router_IP>:1936;csv

To get the router IP, admin name, and password:

oc describe pod <router_pod>

To suppress metrics collection:

$ oc adm router --service-account=router --stats-port=0
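
As a rough illustration of consuming the Prometheus-format output shown earlier in this section, the following sketch totals every sample of one metric across its label sets. The function name sum_metric is hypothetical; it relies only on awk and on each sample line having the form "name{labels} value" or "name value".

```shell
# sum_metric NAME
# Hypothetical helper. Reads Prometheus text exposition on stdin and prints
# the sum of all samples whose metric name is exactly NAME.
# Comment lines ("# HELP", "# TYPE") never match because they start with "#".
sum_metric() {
    awk -v name="$1" '
        index($0, name "{") == 1 || index($0, name " ") == 1 { total += $NF }
        END { print total + 0 }
    '
}
```

It could be fed from the curl command described above, for example: curl <user>:<password>@<router_IP>:<STATS_PORT>/metrics | sum_metric haproxy_frontend_bytes_in_total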

3.2.23. ARP Cache Tuning for Large-scale Clusters

In OpenShift Container Platform clusters with large numbers of routes (greater than the value of net.ipv4.neigh.default.gc_thresh3, which is 65536 by default), you must increase the default values of sysctl variables on each node in the cluster running the router pod to allow more entries in the ARP cache.

When the problem is occurring, the kernel messages are similar to the following:

[ 1738.811139] net_ratelimit: 1045 callbacks suppressed

[ 1743.823136] net_ratelimit: 293 callbacks suppressed

When this issue occurs, the oc commands might start to fail with the following error:

Unable to connect to the server: dial tcp: lookup <hostname> on <ip>:<port>: write udp <ip>:<port>-><ip>:<port>: write: invalid argument

To verify the actual amount of ARP entries for IPv4, run the following:

# ip -4 neigh show nud all | wc -l

If the number begins to approach the net.ipv4.neigh.default.gc_thresh3 threshold, increase the values. Get the current value by running:

# sysctl net.ipv4.neigh.default.gc_thresh1

net.ipv4.neigh.default.gc_thresh1 = 128

# sysctl net.ipv4.neigh.default.gc_thresh2

net.ipv4.neigh.default.gc_thresh2 = 512

# sysctl net.ipv4.neigh.default.gc_thresh3

net.ipv4.neigh.default.gc_thresh3 = 1024

The following sysctl sets the variables to the OpenShift Container Platform current default values.

# sysctl net.ipv4.neigh.default.gc_thresh1=8192

# sysctl net.ipv4.neigh.default.gc_thresh2=32768

# sysctl net.ipv4.neigh.default.gc_thresh3=65536

To make these settings permanent, see this document.
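
The check described above, comparing the ARP entry count against gc_thresh3, can be sketched as a small helper. The function name arp_headroom_low and the 80 percent default are assumptions for illustration; the text does not define what "begins to approach" means numerically.

```shell
# arp_headroom_low COUNT LIMIT [PERCENT]
# Hypothetical helper. Succeeds (exit 0) when COUNT has reached PERCENT
# percent of LIMIT. PERCENT defaults to 80, an arbitrary value chosen
# for this sketch.
arp_headroom_low() {
    count=$1
    limit=$2
    percent=${3:-80}
    [ $((count * 100)) -ge $((limit * percent)) ]
}

# Possible usage on a node running the router pod:
#   count=$(ip -4 neigh show nud all | wc -l)
#   limit=$(sysctl -n net.ipv4.neigh.default.gc_thresh3)
#   if arp_headroom_low "$count" "$limit"; then
#       echo "ARP cache is near gc_thresh3; consider raising the thresholds"
#   fi
```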

3.2.24. Protecting Against DDoS Attacks

Add timeout http-request to the default HAProxy router image to protect the deployment against distributed denial-of-service (DDoS) attacks (for example, slowloris):

# and the haproxy stats socket is available at /var/run/haproxy.stats
global
  stats socket ./haproxy.stats level admin

defaults
  option http-server-close
  mode http
  timeout http-request 5s
  timeout connect 5s
  timeout server 10s
  timeout client 30s

timeout http-request is set to 5 seconds. HAProxy gives a client 5 seconds to send its whole HTTP request. Otherwise, HAProxy shuts the connection with an error.

Also, when the environment variable ROUTER_SLOWLORIS_TIMEOUT is set, it limits the amount of time a client has to send the whole HTTP request. Otherwise, HAProxy will shut down the connection.

Setting the environment variable allows information to be captured as part of the router’s deployment configuration and does not require manual modification of the template, whereas manually adding the HAProxy setting requires you to rebuild the router pod and maintain your router template file.

Using annotations implements basic DDoS protections in the HAProxy template router, including the ability to limit the:

- number of concurrent TCP connections

- rate at which a client can request TCP connections

- rate at which HTTP requests can be made

These are enabled on a per route basis because applications can have extremely different traffic patterns.

| Setting | Description |
|---|---|
| | Enables the settings to be configured (when set to true, for example). |
| | The number of concurrent TCP connections that can be made by the same IP address on this route. |
| | The number of TCP connections that can be opened by a client IP. |
| | The number of HTTP requests that a client IP can make in a 3-second period. |

3.2.25. Enable HAProxy Threading

Enable threading with the --threads flag. This flag specifies the number of threads that the HAProxy router will use.

3.3. Deploying a Customized HAProxy Router

3.3.1. Overview

The default HAProxy router is intended to satisfy the needs of most users. However, it does not expose all of the capability of HAProxy. Therefore, users may need to modify the router for their own needs.

You may need to implement new features within the application back-ends, or modify the current operation. The router plug-in provides all the facilities necessary to make this customization.

The router pod uses a template file to create the needed HAProxy configuration file. The template file is a golang template. When processing the template, the router has access to OpenShift Container Platform information, including the router’s deployment configuration, the set of admitted routes, and some helper functions.

When the router pod starts, and every time it reloads, it creates an HAProxy configuration file, and then it starts HAProxy. The HAProxy configuration manual describes all of the features of HAProxy and how to construct a valid configuration file.

A configMap can be used to add the new template to the router pod. With this approach, the router deployment configuration is modified to mount the configMap as a volume in the router pod. The TEMPLATE_FILE environment variable is set to the full path name of the template file in the router pod.

It is not guaranteed that router template customizations will still work after you upgrade OpenShift Container Platform.

Also, router template customizations must be applied to the template version of the router that is running.

Alternatively, you can build a custom router image and use it when deploying some or all of your routers. There is no need for all routers to run the same image. To do this, modify the haproxy-template.config file, and rebuild the router image. The new image is pushed to the cluster’s Docker repository, and the router’s deployment configuration image: field is updated with the new name. When the cluster is updated, the image needs to be rebuilt and pushed.

In either case, the router pod starts with the template file.

3.3.2. Obtaining the Router Configuration Template

The HAProxy template file is fairly large and complex. For some changes, it may be easier to modify the existing template rather than writing a complete replacement. You can obtain a haproxy-config.template file from a running router by running this on master, referencing the router pod:

# oc get po

NAME READY STATUS RESTARTS AGE

router-2-40fc3 1/1 Running 0 11d

# oc exec router-2-40fc3 cat haproxy-config.template > haproxy-config.template

# oc exec router-2-40fc3 cat haproxy.config > haproxy.config

Alternatively, you can log onto the node that is running the router:

# docker run --rm --interactive=true --tty --entrypoint=cat \
    registry.redhat.io/openshift3/ose-haproxy-router:v{product-version} haproxy-config.template

The image name is from container images.

Save this content to a file for use as the basis of your customized template. The saved haproxy.config shows what is actually running.

3.3.3. Modifying the Router Configuration Template

3.3.3.1. Background

The template is based on the golang template. It can reference any of the environment variables in the router’s deployment configuration, any configuration information that is described below, and router provided helper functions.

The structure of the template file mirrors the resulting HAProxy configuration file. As the template is processed, anything not surrounded by {{" something "}} is directly copied to the configuration file. Passages that are surrounded by {{" something "}} are evaluated. The resulting text, if any, is copied to the configuration file.

3.3.3.2. Go Template Actions

The define action names the file that will contain the processed template.

{{define "/var/lib/haproxy/conf/haproxy.config"}}pipeline{{end}}

| Function | Meaning |
|---|---|
| | Returns the list of valid endpoints. When action is "shuffle", the order of endpoints is randomized. |
| | Tries to get the named environment variable from the pod. If it is not defined or empty, it returns the optional second argument. Otherwise, it returns an empty string. |
| | The first argument is a string that contains the regular expression, the second argument is the variable to test. Returns a Boolean value indicating whether the regular expression provided as the first argument matches the string provided as the second argument. |
| | Determines if a given variable is an integer. |
| | Compares a given string to a list of allowed strings. Returns first match scanning left to right through the list. |
| | Compares a given string to a list of allowed strings. Returns "true" if the string is an allowed value, otherwise returns false. |
| | Generates a regular expression matching the route hosts (and paths). The first argument is the host name, the second is the path, and the third is a wildcard Boolean. |
| | Generates host name to use for serving/matching certificates. First argument is the host name and the second is the wildcard Boolean. |
| | Determines if a given variable contains "true". |

These functions are provided by the HAProxy template router plug-in.
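As a rough illustration of how helpers like these reach a template, the sketch below registers approximations of env, matchPattern, and matchValues in a Go text/template function map. The function bodies are written from the descriptions in the table and are not the router's source; the whole-string anchoring in matchPattern and the name helperFuncs are assumptions of this sketch.

```go
package main

import (
	"fmt"
	"os"
	"regexp"
	"text/template"
)

// env returns the value of the named environment variable, or the first
// non-empty default when the variable is unset or empty.
func env(name string, defaults ...string) string {
	if v := os.Getenv(name); v != "" {
		return v
	}
	for _, d := range defaults {
		if d != "" {
			return d
		}
	}
	return ""
}

// matchPattern reports whether s matches pattern as a whole.
// Anchoring the pattern is an assumption of this sketch.
func matchPattern(pattern, s string) bool {
	re, err := regexp.Compile("^(?:" + pattern + ")$")
	if err != nil {
		return false
	}
	return re.MatchString(s)
}

// matchValues reports whether s equals one of the allowed values.
func matchValues(s string, allowed ...string) bool {
	for _, a := range allowed {
		if s == a {
			return true
		}
	}
	return false
}

// helperFuncs is a hypothetical name for the map handed to the template.
var helperFuncs = template.FuncMap{
	"env":          env,
	"matchPattern": matchPattern,
	"matchValues":  matchValues,
}

func main() {
	// The template can call the registered helpers by name.
	tmpl := template.Must(template.New("demo").Funcs(helperFuncs).Parse(
		`{{ if matchValues "leastconn" "roundrobin" "leastconn" "source" }}ok{{ end }}` + "\n"))
	if err := tmpl.Execute(os.Stdout, nil); err != nil {
		fmt.Fprintln(os.Stderr, err)
	}
}
```

Helpers must be registered with Funcs before Parse is called, because the parser rejects calls to function names it does not know.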

3.3.3.3. Router Provided Information

This section reviews the OpenShift Container Platform information that the router makes available to the template. The router configuration parameters are the set of data that the HAProxy router plug-in is given. The fields are accessed by (dot) .Fieldname.

The tables below the Router Configuration Parameters expand on the definitions of the various fields. In particular, .State has the set of admitted routes.

| Field | Type | Description |
|---|---|---|
| | string | The directory that files will be written to, defaults to /var/lib/containers/router |
| State | | The routes. |
| | | The service lookup. |
| | string | Full path name to the default certificate in pem format. |
| | | Peers. |
| | string | User name to expose stats with (if the template supports it). |
| | string | Password to expose stats with (if the template supports it). |
| | int | Port to expose stats with (if the template supports it). |
| | bool | Whether the router should bind the default ports. |

| Field | Type | Description |
|---|---|---|
| | string | The user-specified name of the route. |
| | string | The namespace of the route. |
| | string | The host name. For example, |
| | string | Optional path. For example, |
| | | The termination policy for this back-end; drives the mapping files and router configuration. |
| | | Certificates used for securing this back-end. Keyed by the certificate ID. |
| | | Indicates the status of configuration that needs to be persisted. |
| | string | Indicates the port the user wants to expose. If empty, a port will be selected for the service. |
| | | Indicates desired behavior for insecure connections to an edge-terminated route: |
| | string | Hash of the route + namespace name used to obscure the cookie ID. |
| | bool | Indicates this service unit needing wildcard support. |
| Annotations | | Annotations attached to this route. |
| | | Collection of services that support this route, keyed by service name and valued on the weight attached to it with respect to other entries in the map. |
| | int | Count of the |

A ServiceAliasConfig represents a route for a service and is uniquely identified by host + path. The default template iterates over routes using {{range $cfgIdx, $cfg := .State }}. Within such a {{range}} block, the template can refer to any field of the current ServiceAliasConfig using $cfg.Field.

| Field | Type | Description |
|---|---|---|
| | string | Name corresponds to a service name + namespace. Uniquely identifies the |
| | | Endpoints that back the service. This translates into a final back-end implementation for routers. |

ServiceUnit is an encapsulation of a service, the endpoints that back that service, and the routes that point to the service. This is the data that drives the creation of the router configuration files.

| Field | Type |
|---|---|
| | string |
| | string |
| | string |
| | string |
| | string |
| | string |
| | bool |

Endpoint is an internal representation of a Kubernetes endpoint.

| Field | Type | Description |
|---|---|---|
| | string | Represents a public/private key pair. It is identified by an ID, which will become the file name. A CA certificate will not have a |
| | string | Indicates that the necessary files for this configuration have been persisted to disk. Valid values: "saved", "". |

| Field | Type | Description |
|---|---|---|
| ID | string | |
| Contents | string | The certificate. |
| PrivateKey | string | The private key. |

| Field | Type | Description |
|---|---|---|
| | string | Dictates where the secure communication will stop. |
| | string | Indicates the desired behavior for insecure connections to a route. While each router may make its own decisions on which ports to expose, this is normally port 80. |

TLSTerminationType and InsecureEdgeTerminationPolicyType dictate where the secure communication will stop.

| Constant | Value | Meaning |
|---|---|---|
| | | Terminate encryption at the edge router. |
| | | Terminate encryption at the destination, the destination is responsible for decrypting traffic. |
| | | Terminate encryption at the edge router and re-encrypt it with a new certificate supplied by the destination. |

| Type | Meaning |
|---|---|
| | Traffic is sent to the server on the insecure port (default). |
| Disable | No traffic is allowed on the insecure port. |
| | Clients are redirected to the secure port. |

None ("") is the same as Disable.

3.3.3.4. Annotations

Each route can have annotations attached. Each annotation is just a name and a value.

apiVersion: v1
kind: Route
metadata:
  annotations:
    haproxy.router.openshift.io/timeout: 5500ms
[...]

The name can be anything that does not conflict with existing annotations. The value is any string. The string can have multiple tokens separated by a space, for example, aa bb cc. The template uses {{index}} to extract the value of an annotation. For example:

{{$balanceAlgo := index $cfg.Annotations "haproxy.router.openshift.io/balance"}}

This is an example of how this could be used for mutual client authorization.

{{ with $cnList := index $cfg.Annotations "whiteListCertCommonName" }}
  {{ if ne $cnList "" }}
    acl test ssl_c_s_dn(CN) -m str {{ $cnList }}
    http-request deny if !test
  {{ end }}
{{ end }}

Then, you can handle the white-listed CNs with this command.

$ oc annotate route <route-name> --overwrite whiteListCertCommonName="CN1 CN2 CN3"

See Route-specific Annotations for more information.

3.3.3.5. Environment Variables

The template can use any environment variables that exist in the router pod. The environment variables can be set in the deployment configuration. New environment variables can be added.

They are referenced by the env function:

{{env "ROUTER_MAX_CONNECTIONS" "20000"}}

The first string is the variable name, and the second string is the default used when the variable is not set or is empty. When ROUTER_MAX_CONNECTIONS is not set or is empty, 20000 is used. Environment variables form a map where the key is the environment variable name and the content is the value of the variable.

See Route-specific Environment variables for more information.

3.3.3.6. Example Usage

Here is a simple template based on the HAProxy template file.

Start with a comment:

{{/*

Here is a small example of how to work with templates

taken from the HAProxy template file.

*/}}

The template can create any number of output files. Use a define construct to create an output file. The file name is specified as an argument to define, and everything inside the define block up to the matching end is written as the contents of that file.

{{ define "/var/lib/haproxy/conf/haproxy.config" }}

global

{{ end }}

The above will copy global to the /var/lib/haproxy/conf/haproxy.config file, and then close the file.
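To see what a define block yields, the following sketch parses the snippet with Go's text/template package and renders the named template into a buffer. It only illustrates the template mechanics: the helper renderDefined and the template set name are hypothetical, and how the router itself writes the result to disk is not shown here.

```go
package main

import (
	"bytes"
	"fmt"
	"text/template"
)

// configTemplate holds one define block; the block's name is the output path.
const configTemplate = `{{ define "/var/lib/haproxy/conf/haproxy.config" }}
global
{{ end }}`

// renderDefined (a hypothetical helper) parses src and renders the template
// that was defined under the given name.
func renderDefined(src, name string) (string, error) {
	tmpl, err := template.New("haproxy-config.template").Parse(src)
	if err != nil {
		return "", err
	}
	var buf bytes.Buffer
	if err := tmpl.ExecuteTemplate(&buf, name, nil); err != nil {
		return "", err
	}
	return buf.String(), nil
}

func main() {
	out, err := renderDefined(configTemplate, "/var/lib/haproxy/conf/haproxy.config")
	if err != nil {
		panic(err)
	}
	// %q makes the newlines around "global" visible.
	fmt.Printf("%q\n", out)
}
```

Everything between the define action and its matching end, including the line breaks, becomes the content associated with that name.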

Set up logging based on environment variables.

{{ with (env "ROUTER_SYSLOG_ADDRESS" "") }}

log {{.}} {{env "ROUTER_LOG_FACILITY" "local1"}} {{env "ROUTER_LOG_LEVEL" "warning"}}

{{ end }}

The env function extracts the value for the environment variable. If the environment variable is not defined or is empty, the second argument is returned.

The with construct sets the value of "." (dot) within the with block to whatever value is provided as an argument to with. The with action tests that value for emptiness (for example, nil or an empty string). If it is not empty, the clause is processed up to the end. In the above, assume ROUTER_SYSLOG_ADDRESS contains /var/log/msg, ROUTER_LOG_FACILITY is not defined, and ROUTER_LOG_LEVEL contains info. The following will be copied to the output file:

log /var/log/msg local1 info
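The logging example can be reproduced outside the router with a short Go program. This is a minimal sketch: the env helper here reads from a plain map that stands in for the pod environment, and renderLogLine is a hypothetical name, so nothing below is the router's own code.

```go
package main

import (
	"bytes"
	"fmt"
	"text/template"
)

// logTemplate is the logging snippet on one line, so the output carries no
// surrounding whitespace.
const logTemplate = `{{ with (env "ROUTER_SYSLOG_ADDRESS" "") }}log {{ . }} {{ env "ROUTER_LOG_FACILITY" "local1" }} {{ env "ROUTER_LOG_LEVEL" "warning" }}{{ end }}`

// renderLogLine (a hypothetical helper) renders logTemplate against vars,
// a map standing in for the router pod's environment.
func renderLogLine(vars map[string]string) (string, error) {
	// Stand-in for the env helper: return the variable's value, or the
	// first non-empty default when the variable is unset or empty.
	env := func(name string, defaults ...string) string {
		if v := vars[name]; v != "" {
			return v
		}
		for _, d := range defaults {
			if d != "" {
				return d
			}
		}
		return ""
	}
	tmpl, err := template.New("log").Funcs(template.FuncMap{"env": env}).Parse(logTemplate)
	if err != nil {
		return "", err
	}
	var buf bytes.Buffer
	if err := tmpl.Execute(&buf, nil); err != nil {
		return "", err
	}
	return buf.String(), nil
}

func main() {
	out, err := renderLogLine(map[string]string{
		"ROUTER_SYSLOG_ADDRESS": "/var/log/msg",
		"ROUTER_LOG_LEVEL":      "info",
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(out) // log /var/log/msg local1 info
}
```

With an empty map, the with block is skipped entirely and nothing is written, which matches the behavior described for an unset ROUTER_SYSLOG_ADDRESS.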

Each admitted route ends up generating lines in the configuration file. Use range to go through the admitted routes:

{{ range $cfgIdx, $cfg := .State }}

backend be_http_{{$cfgIdx}}

{{end}}

.State is a map of ServiceAliasConfig, where the key is the route name. range steps through the map and, for each pass, it sets $cfgIdx to the key and sets $cfg to point to the ServiceAliasConfig that describes the route. If there are two routes named myroute and hisroute, the above will copy the following to the output file. Go templates visit map keys in sorted order, so hisroute comes first:

backend be_http_hisroute

backend be_http_myroute
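The iteration can be tried in isolation with the sketch below. The types ServiceAliasConfig and templateData are trimmed, hypothetical stand-ins that model only what the snippet needs. Note that Go's text/template visits the keys of a string-keyed map in sorted order, which determines the order of the generated lines.

```go
package main

import (
	"bytes"
	"fmt"
	"text/template"
)

// ServiceAliasConfig is a trimmed, hypothetical stand-in for the router's type.
type ServiceAliasConfig struct {
	Host string
}

// templateData mimics the data handed to the template; only State is modeled.
type templateData struct {
	State map[string]ServiceAliasConfig
}

// backendTemplate emits one backend line per admitted route.
const backendTemplate = "{{ range $cfgIdx, $cfg := .State }}backend be_http_{{ $cfgIdx }}\n{{ end }}"

// renderBackends (a hypothetical helper) renders backendTemplate for state.
func renderBackends(state map[string]ServiceAliasConfig) (string, error) {
	tmpl, err := template.New("backends").Parse(backendTemplate)
	if err != nil {
		return "", err
	}
	var buf bytes.Buffer
	if err := tmpl.Execute(&buf, templateData{State: state}); err != nil {
		return "", err
	}
	return buf.String(), nil
}

func main() {
	out, err := renderBackends(map[string]ServiceAliasConfig{
		"myroute":  {Host: "www.example.com"},
		"hisroute": {Host: "api.example.com"},
	})
	if err != nil {
		panic(err)
	}
	// Keys are visited in sorted order: hisroute, then myroute.
	fmt.Print(out)
}
```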

Route Annotations, $cfg.Annotations, is also a map with the annotation name as the key and the content string as the value. The route can have as many annotations as desired and the use is defined by the template author. The user codes the annotation into the route and the template author customizes the HAProxy template to handle the annotation.

The common usage is to index the annotation to get the value.

{{$balanceAlgo := index $cfg.Annotations "haproxy.router.openshift.io/balance"}}

The index extracts the value for the given annotation, if any. Therefore, $balanceAlgo will contain the string associated with the annotation, or an empty string if the annotation is not set. As above, you can test for a non-empty string and act on it with the with construct.

{{ with $balanceAlgo }}
  balance {{ $balanceAlgo }}
{{ end }}

Here, when $balanceAlgo is not empty, balance followed by the value of $balanceAlgo is copied to the output file.
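A small Go program shows how index and with behave together when the annotation is present and when it is missing. This is a sketch only: routeConfig and renderBalance are hypothetical names, and the annotations are assumed to be a map from string to string, as described above.

```go
package main

import (
	"bytes"
	"fmt"
	"text/template"
)

// routeConfig is a hypothetical stand-in that carries only the annotations.
type routeConfig struct {
	Annotations map[string]string
}

// balanceTemplate looks up the annotation and emits a balance line only when
// the looked-up value is not empty.
const balanceTemplate = `{{ $balanceAlgo := index .Annotations "haproxy.router.openshift.io/balance" }}{{ with $balanceAlgo }}balance {{ . }}{{ end }}`

// renderBalance (a hypothetical helper) renders balanceTemplate for cfg.
func renderBalance(cfg routeConfig) (string, error) {
	tmpl, err := template.New("balance").Parse(balanceTemplate)
	if err != nil {
		return "", err
	}
	var buf bytes.Buffer
	if err := tmpl.Execute(&buf, cfg); err != nil {
		return "", err
	}
	return buf.String(), nil
}

func main() {
	withAnnotation := routeConfig{Annotations: map[string]string{
		"haproxy.router.openshift.io/balance": "leastconn",
	}}
	out, err := renderBalance(withAnnotation)
	if err != nil {
		panic(err)
	}
	fmt.Printf("%q\n", out) // "balance leastconn"

	// A missing key makes index return the empty string, so with skips the block.
	out, err = renderBalance(routeConfig{Annotations: map[string]string{}})
	if err != nil {
		panic(err)
	}
	fmt.Printf("%q\n", out) // ""
}
```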

In a second example, you want to set a server timeout based on a timeout value set in an annotation.

{{ $value := index $cfg.Annotations "haproxy.router.openshift.io/timeout" }}

The $value can now be evaluated to make sure it contains a properly constructed string. The matchPattern function accepts a regular expression and returns true if the argument satisfies the expression.

matchPattern "[1-9][0-9]*(us|ms|s|m|h|d)?" $value

This would accept 5000ms but not 7y. The results can be used in a test.

{{if (matchPattern "[1-9][0-9]*(us|ms|s|m|h|d)?" $value) }}
  timeout server {{$value}}
{{ end }}

It can also be used to match tokens:

matchPattern "roundrobin|leastconn|source" $balanceAlgo

Alternatively, matchValues can be used to match tokens:

matchValues $balanceAlgo "roundrobin" "leastconn" "source"

3.3.4. Using a ConfigMap to Replace the Router Configuration Template

You can use a ConfigMap to customize the router instance without rebuilding the router image. The haproxy-config.template, reload-haproxy, and other scripts can be modified, and router environment variables can be created and modified.

- Copy the haproxy-config.template that you want to modify as described above. Modify it as desired.

- Create a ConfigMap:

  $ oc create configmap customrouter --from-file=haproxy-config.template

  The customrouter ConfigMap now contains a copy of the modified haproxy-config.template file.

- Modify the router deployment configuration to mount the ConfigMap as a file and point the TEMPLATE_FILE environment variable to it. This can be done via oc set env and oc set volume commands, or alternatively by editing the router deployment configuration.

  - Using oc commands

    $ oc set volume dc/router --add --overwrite \
        --name=config-volume \
        --mount-path=/var/lib/haproxy/conf/custom \
        --source='{"configMap": { "name": "customrouter"}}'
    $ oc set env dc/router \
        TEMPLATE_FILE=/var/lib/haproxy/conf/custom/haproxy-config.template

  - Editing the Router Deployment Configuration

Use