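Monitoring

Configuring and using the monitoring stack in OpenShift Container Platform

Abstract

Chapter 1. Monitoring overview

1.1. About OpenShift Container Platform monitoring

OpenShift Container Platform includes a preconfigured, preinstalled, and self-updating monitoring stack that provides monitoring for core platform components. You also have the option to enable monitoring for user-defined projects.

A cluster administrator can configure the monitoring stack with the supported configurations. OpenShift Container Platform delivers monitoring best practices out of the box.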

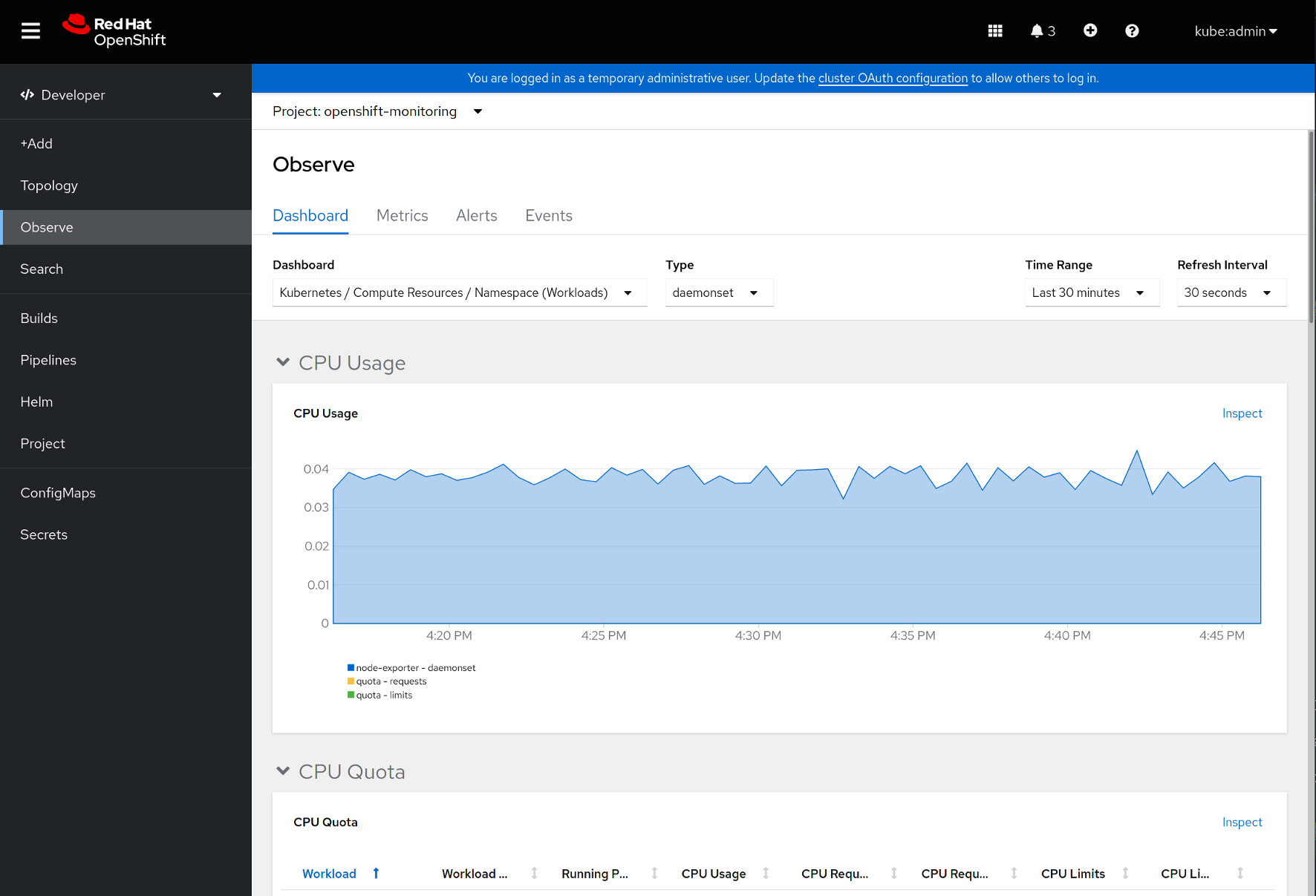

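A set of alerts is included by default that immediately notifies administrators about issues with a cluster. Default dashboards in the OpenShift Container Platform web console include visual representations of cluster metrics to help you quickly understand the state of your cluster. With the OpenShift Container Platform web console, you can view and manage metrics and alerts, and review monitoring dashboards.

In the Observe section of the OpenShift Container Platform web console, you can access and manage monitoring features such as metrics, alerts, monitoring dashboards, and metrics targets.

After installing OpenShift Container Platform, cluster administrators can optionally enable monitoring for user-defined projects. By using this feature, cluster administrators, developers, and other users can specify how services and pods are monitored in their own projects. In Troubleshooting monitoring issues, cluster administrators can find answers to common problems such as user metrics being unavailable and Prometheus consuming a large amount of disk space.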

1.2. モニタリングスタックについて

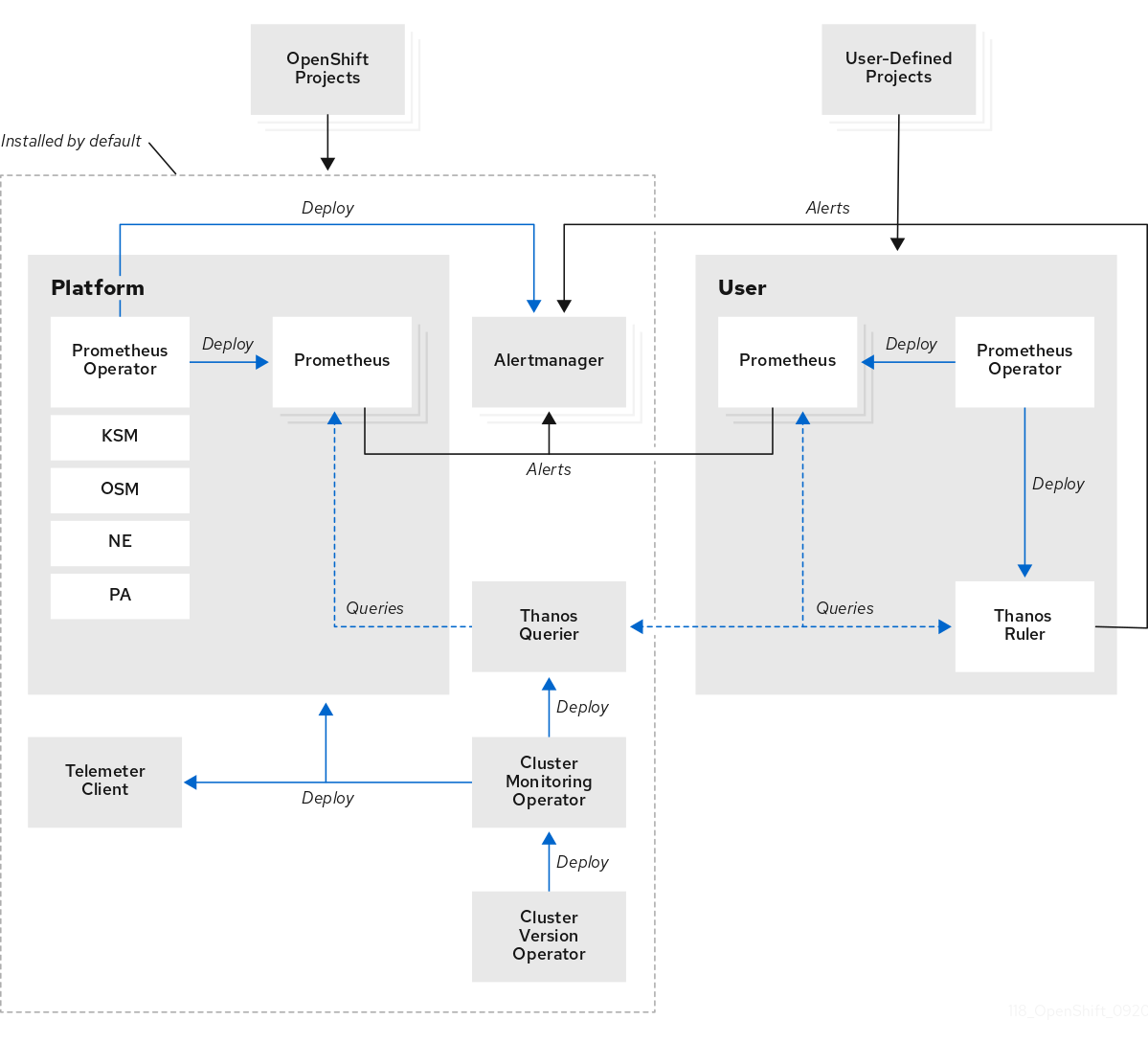

OpenShift Container Platform モニタリングスタックは、Prometheus オープンソースプロジェクトおよびその幅広いエコシステムをベースとしています。モニタリングスタックには、以下のコンポーネントが含まれます。

-

デフォルトのプラットフォームモニタリングコンポーネント。プラットフォームモニタリングコンポーネントのセットは、OpenShift Container Platform のインストール時にデフォルトで

openshift-monitoringプロジェクトにインストールされます。これにより、Kubernetes サービスを含む OpenShift Container Platform のコアコンポーネントのモニタリング機能が提供されます。デフォルトのモニタリングスタックは、クラスターのリモートのヘルスモニタリングも有効にします。これらのコンポーネントは、以下の図の Installed by default (デフォルトのインストール) セクションで説明されています。 -

ユーザー定義のプロジェクトをモニターするためのコンポーネント。オプションでユーザー定義プロジェクトのモニタリングを有効にした後に、追加のモニタリングコンポーネントは

openshift-user-workload-monitoringプロジェクトにインストールされます。これにより、ユーザー定義プロジェクトのモニタリング機能が提供されます。これらのコンポーネントは、以下の図の User (ユーザー) セクションで説明されています。

1.2.1. Default monitoring components

By default, the OpenShift Container Platform 4.11 monitoring stack includes the following components:

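| Component | Description |
|---|---|
| Cluster Monitoring Operator | The Cluster Monitoring Operator (CMO) is a central component of the monitoring stack. It deploys, manages, and automatically updates Prometheus and Alertmanager instances, Thanos Querier, Telemeter Client, and metrics targets. The CMO is deployed by the Cluster Version Operator (CVO). |
| Prometheus Operator | The Prometheus Operator (PO) in the openshift-monitoring project creates, configures, and manages platform Prometheus instances and Alertmanager instances. It also automatically generates monitoring target configurations based on Kubernetes label queries. |
| Prometheus | Prometheus is the monitoring system on which the OpenShift Container Platform monitoring stack is based. Prometheus is a time-series database and a rule evaluation engine for metrics. Prometheus sends alerts to Alertmanager for processing. |
| Prometheus Adapter | The Prometheus Adapter (PA in the preceding diagram) translates Kubernetes node and pod queries for use in Prometheus. The resource metrics that are translated include CPU and memory utilization metrics. The Prometheus Adapter exposes the cluster resource metrics API for horizontal pod autoscaling. |
| Alertmanager | The Alertmanager service handles alerts received from Prometheus. Alertmanager is also responsible for sending the alerts to external notification systems. |
| openshift-state-metrics | Adds metrics for OpenShift Container Platform-specific resources. |
| Thanos Querier | Thanos Querier aggregates and optionally deduplicates core OpenShift Container Platform metrics and metrics for user-defined projects under a single, multi-tenant interface. |
| Telemeter Client | Telemeter Client sends a subsection of the data from platform Prometheus instances to Red Hat to facilitate remote health monitoring for clusters. |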

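All of the components in the monitoring stack are monitored by the stack and are automatically updated when OpenShift Container Platform is updated.

All components of the monitoring stack use the TLS security profile settings that are centrally configured by a cluster administrator. If you configure a monitoring stack component that uses TLS security settings, the component uses the TLS security profile settings that already exist in the tlsSecurityProfile field of the global OpenShift Container Platform apiservers.config.openshift.io/cluster resource.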
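For orientation, the following is a minimal sketch of what the global resource might look like when a cluster administrator has selected a predefined profile. The `Intermediate` profile type is an assumption chosen for illustration; this section does not state which profile a given cluster uses.

```yaml
# Sketch only: the global APIServer resource that monitoring components read.
# The profile type shown here (Intermediate) is an illustrative assumption.
apiVersion: config.openshift.io/v1
kind: APIServer
metadata:
  name: cluster
spec:
  tlsSecurityProfile:
    type: Intermediate
    intermediate: {}
```

Because the monitoring components read this field rather than carrying their own TLS settings, a change made here is the single place where the profile is expected to be adjusted.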

1.2.2. Default monitoring targets

In addition to the components of the stack itself, the default monitoring stack monitors:

- CoreDNS

- Elasticsearch (if Logging is installed)

- etcd

- Fluentd (if Logging is installed)

- HAProxy

- Image registry

- Kubelets

- Kubernetes API server

- Kubernetes controller manager

- Kubernetes scheduler

- OpenShift API server

- OpenShift Controller Manager

- Operator Lifecycle Manager (OLM)

Each OpenShift Container Platform component is responsible for its own monitoring configuration. For problems with the monitoring of an OpenShift Container Platform component, open a Jira issue against that specific component, not against the general monitoring component.

Other OpenShift Container Platform framework components might also expose metrics. For details, see their respective documentation.

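1.2.3. Components for monitoring user-defined projects

OpenShift Container Platform 4.11 includes an optional enhancement to the monitoring stack that enables you to monitor services and pods in user-defined projects. This feature includes the following components:

| Component | Description |
|---|---|
| Prometheus Operator | |
| Prometheus | Prometheus is the monitoring system through which monitoring is provided for user-defined projects. Prometheus sends alerts to Alertmanager for processing. |
| Thanos Ruler | Thanos Ruler is a rule evaluation engine for Prometheus that is deployed as a separate process. In OpenShift Container Platform 4.11, Thanos Ruler provides rule and alerting evaluation for the monitoring of user-defined projects. |
| Alertmanager | The Alertmanager service handles alerts received from Prometheus and Thanos Ruler. Alertmanager is also responsible for sending user-defined alerts to external notification systems. Deploying this service is optional. |

The components in the preceding table are deployed after monitoring is enabled for user-defined projects.

All of the components in the monitoring stack are monitored by the stack and are automatically updated when OpenShift Container Platform is updated.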

1.2.4. Monitoring targets for user-defined projects

When monitoring is enabled for user-defined projects, you can monitor:

- Metrics provided through service endpoints in user-defined projects.

- Pods running in user-defined projects.
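As an illustration of how a service endpoint in a user-defined project can be declared as a monitoring target, the following sketch uses a ServiceMonitor resource from the Prometheus Operator API. The project name `ns1`, the object name, the `app: example-app` label, and the `web` port name are all hypothetical and must match your own service.

```yaml
# Sketch only: scrape a service endpoint in a user-defined project.
# All names, labels, and the port are hypothetical placeholders.
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: example-app-monitor
  namespace: ns1
spec:
  endpoints:
  - interval: 30s   # how often the endpoint is scraped
    port: web       # must match a named port on the target Service
    scheme: http
  selector:
    matchLabels:
      app: example-app   # selects the Service objects to scrape
```

The object lives in the user-defined project itself, not in an openshift-* project, which is consistent with the support considerations described later in this document.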

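1.3. Glossary of common terms for OpenShift Container Platform monitoring

This glossary defines common terms that are used in OpenShift Container Platform architecture.

- Alertmanager

  Alertmanager handles alerts received from Prometheus. Alertmanager is also responsible for sending the alerts to external notification systems.

- Alerting rules

  Alerting rules contain a set of conditions that outline a particular state within a cluster. Alerts are triggered when those conditions are true. An alerting rule can be assigned a severity that defines how the alerts are routed.

- Cluster Monitoring Operator

  The Cluster Monitoring Operator (CMO) is a central component of the monitoring stack. It deploys and manages Prometheus instances, Thanos Querier, Telemeter Client, and metrics targets, and ensures that they are up to date. The CMO is deployed by the Cluster Version Operator (CVO).

- Cluster Version Operator

  The Cluster Version Operator (CVO) manages the lifecycle of cluster Operators, many of which are installed in OpenShift Container Platform by default.

- Config map

  A config map provides a way to inject configuration data into pods. You can reference the data stored in a config map in a volume of type ConfigMap. Applications running in a pod can use this data.

- Container

  A container is a lightweight and executable image that includes software and all of its dependencies. Containers virtualize the operating system. As a result, you can run containers anywhere, from a data center to a public or private cloud to a developer's laptop.

- Custom resource (CR)

  A CR is an extension of the Kubernetes API. You can create custom resources.

- etcd

  etcd is the key-value store for OpenShift Container Platform, which stores the state of all resource objects.

- Fluentd

  Fluentd gathers logs from nodes and feeds them to Elasticsearch.

- Kubelets

  Kubelets run on nodes and read the container manifests. They ensure that the defined containers have started and are running.

- Kubernetes API server

  The Kubernetes API server validates and configures data for the API objects.

- Kubernetes controller manager

  The Kubernetes controller manager governs the state of the cluster.

- Kubernetes scheduler

  The Kubernetes scheduler allocates pods to nodes.

- Labels

  Labels are key-value pairs that you can use to organize and select subsets of objects such as pods.

- Node

  A worker machine in the OpenShift Container Platform cluster. A node is either a virtual machine (VM) or a physical machine.

- Operator

  The preferred method of packaging, deploying, and managing a Kubernetes application in an OpenShift Container Platform cluster. An Operator takes human operational knowledge and encodes it into software that is packaged and shared with customers.

- Operator Lifecycle Manager (OLM)

  OLM helps you install, update, and manage the lifecycle of Kubernetes native applications. OLM is an open source toolkit designed to manage Operators in an effective, automated, and scalable way.

- Persistent storage

  Stores the data even after the device is shut down. Kubernetes uses persistent volumes to store the application data.

- Persistent volume claim (PVC)

  You can use a PVC to mount a PersistentVolume into a pod. You can access the storage without knowing the details of the cloud environment.

- Pod

  The pod is the smallest logical unit in Kubernetes. A pod consists of one or more containers that run on a worker node.

- Prometheus

  Prometheus is the monitoring system on which the OpenShift Container Platform monitoring stack is based. Prometheus is a time-series database and a rule evaluation engine for metrics. Prometheus sends alerts to Alertmanager for processing.

- Prometheus Adapter

  The Prometheus Adapter translates Kubernetes node and pod queries for use in Prometheus. The resource metrics that are translated include CPU and memory utilization. The Prometheus Adapter exposes the cluster resource metrics API for horizontal pod autoscaling.

- Prometheus Operator

  The Prometheus Operator (PO) in the openshift-monitoring project creates, configures, and manages platform Prometheus and Alertmanager instances. It also automatically generates monitoring target configurations based on Kubernetes label queries.

- Silences

  A silence can be applied to an alert to prevent notifications from being sent when the conditions for the alert are true. You can mute an alert after the initial notification while you work on resolving the underlying issue.

- Storage

  OpenShift Container Platform supports many types of storage, both for on-premise and cloud providers. You can manage container storage for persistent and non-persistent data in an OpenShift Container Platform cluster.

- Thanos Ruler

  Thanos Ruler is a rule evaluation engine for Prometheus that is deployed as a separate process. In OpenShift Container Platform, Thanos Ruler provides rule and alerting evaluation for the monitoring of user-defined projects.

- Web console

  A user interface (UI) to manage OpenShift Container Platform.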

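1.5. Next steps

Chapter 2. Configuring the monitoring stack

The OpenShift Container Platform 4 installation program provides only a small number of configuration options before installation. Configuring most OpenShift Container Platform framework components, including the cluster monitoring stack, happens after the installation.

This section explains what configuration is supported, shows how to configure the monitoring stack, and demonstrates several common configuration scenarios.

2.1. Prerequisites

- The monitoring stack imposes additional resource requirements. Consult the computing resources recommendations in Scaling the Cluster Monitoring Operator and verify that you have sufficient resources.

2.2. Maintenance and support for monitoring

The supported way of configuring OpenShift Container Platform monitoring is to use the options described in this document. Do not use other configurations, as they are unsupported. Configuration paradigms might change across Prometheus releases, and such changes can only be handled gracefully if all configuration possibilities are controlled. If you use configurations other than those described in this section, your changes will be lost because the cluster-monitoring-operator reconciles any differences. The Operator resets everything to the defined state by default.

2.2.1. Support considerations for monitoring

The following modifications are explicitly not supported: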

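- Creating additional ServiceMonitor, PodMonitor, and PrometheusRule objects in the openshift-* and kube-* projects.

- Modifying any resources or objects deployed in the openshift-monitoring or openshift-user-workload-monitoring projects. The resources created by the OpenShift Container Platform monitoring stack are not meant to be used by any other resources, as there are no guarantees about their backward compatibility.

  Note: The Alertmanager configuration is deployed as a secret resource in the openshift-monitoring namespace. If you have enabled a separate Alertmanager instance for user-defined alert routing, an Alertmanager configuration is also deployed as a secret resource in the openshift-user-workload-monitoring namespace. To configure additional routes for any instance of Alertmanager, you need to decode, modify, and then encode that secret. This procedure is a supported exception to the preceding statement.

- Modifying resources of the stack. The OpenShift Container Platform monitoring stack ensures that its resources are always in the state it expects them to be. If they are modified, the stack resets them.

- Deploying user-defined workloads to openshift-* and kube-* projects. These projects are reserved for Red Hat provided components and must not be used for user-defined workloads.

- Installing custom Prometheus instances on OpenShift Container Platform. A custom instance is a Prometheus custom resource (CR) managed by the Prometheus Operator.

- Enabling symptom-based monitoring by using the Probe custom resource definition (CRD) in Prometheus Operator.

Backward compatibility for metrics, recording rules, or alerting rules is not guaranteed.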

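2.2.2. Support policy for monitoring Operators

Monitoring Operators ensure that OpenShift Container Platform monitoring resources function as designed and tested. If Cluster Version Operator (CVO) control of an Operator is overridden, the Operator does not respond to configuration changes, reconcile the intended state of cluster objects, or receive updates.

While overriding CVO control for an Operator can be helpful during debugging, this is unsupported, and the cluster administrator assumes full control of the individual component configurations and upgrades.

Overriding the Cluster Version Operator

The spec.overrides parameter can be added to the configuration for the CVO to allow administrators to provide a list of overrides to the behavior of the CVO for a component. Setting the spec.overrides[].unmanaged parameter to true for a component blocks cluster upgrades and alerts the administrator after a CVO override has been set:

    Disabling ownership via cluster version overrides prevents upgrades. Please remove overrides before continuing.

Setting a CVO override puts the entire cluster in an unsupported state and prevents the monitoring stack from being reconciled to its intended state. This impacts the reliability features built into Operators and prevents updates from being received. Reported issues must be reproduced after removing any overrides for support to proceed.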
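To make the shape of spec.overrides concrete, the following is a sketch of an override entry, shown only so that you can recognize one in an existing cluster. The choice of the cluster-monitoring-operator deployment as the overridden component is an illustrative assumption. As described above, applying such an override is unsupported and blocks upgrades.

```yaml
# Sketch only, for recognition purposes: an override like this puts the
# cluster in an unsupported state. The target component is an assumption.
apiVersion: config.openshift.io/v1
kind: ClusterVersion
metadata:
  name: version
spec:
  overrides:
  - kind: Deployment
    group: apps
    namespace: openshift-monitoring
    name: cluster-monitoring-operator
    unmanaged: true   # the CVO stops managing this component
```

Removing the entry from spec.overrides returns the component to CVO management.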

2.3. Preparing to configure the monitoring stack

You can configure the monitoring stack by creating and updating monitoring config maps.

2.3.1. Creating a cluster monitoring config map

To configure core OpenShift Container Platform monitoring components, you must create the cluster-monitoring-config ConfigMap object in the openshift-monitoring project.

When you save your changes to the cluster-monitoring-config ConfigMap object, some or all of the pods in the openshift-monitoring project might be redeployed. It can sometimes take a while for these components to redeploy.

Prerequisites

- You have access to the cluster as a user with the cluster-admin cluster role.

- You have installed the OpenShift CLI (oc).

Procedure

1. Check whether the cluster-monitoring-config ConfigMap object exists:

    $ oc -n openshift-monitoring get configmap cluster-monitoring-config

2. If the ConfigMap object does not exist, create the following YAML manifest. In this example, the file is called cluster-monitoring-config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |

3. Apply the configuration to create the ConfigMap object:

    $ oc apply -f cluster-monitoring-config.yaml

2.3.2. Creating a user-defined workload monitoring config map

To configure the components that monitor user-defined projects, you must create the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project.

When you save your changes to the user-workload-monitoring-config ConfigMap object, some or all of the pods in the openshift-user-workload-monitoring project might be redeployed. It can sometimes take a while for these components to redeploy. You can create and configure the config map before you first enable monitoring for user-defined projects, which avoids having to redeploy the pods repeatedly.

Prerequisites

- You have access to the cluster as a user with the cluster-admin cluster role.

- You have installed the OpenShift CLI (oc).

Procedure

1. Check whether the user-workload-monitoring-config ConfigMap object exists:

    $ oc -n openshift-user-workload-monitoring get configmap user-workload-monitoring-config

2. If the user-workload-monitoring-config ConfigMap object does not exist, create the following YAML manifest. In this example, the file is called user-workload-monitoring-config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |

3. Apply the configuration to create the ConfigMap object:

    $ oc apply -f user-workload-monitoring-config.yaml

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.
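Settings in user-workload-monitoring-config take effect only after a cluster administrator enables monitoring for user-defined projects. The following is a minimal sketch of how that is commonly done, assuming the `enableUserWorkload` key in the cluster-monitoring-config config map; that key is not described in this section, so verify it against the documentation for your release.

```yaml
# Sketch only: enable monitoring for user-defined projects.
# The enableUserWorkload key is an assumption not covered in this section.
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    enableUserWorkload: true
```

Note that this setting belongs in the core config map in the openshift-monitoring project, not in the user workload config map.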

2.4. Configuring the monitoring stack

In OpenShift Container Platform 4.11, you can configure the monitoring stack by using the cluster-monitoring-config or user-workload-monitoring-config ConfigMap objects. Config maps configure the Cluster Monitoring Operator (CMO), which in turn configures the components of the stack.

Prerequisites

- If you are configuring core OpenShift Container Platform monitoring components:

  - You have access to the cluster as a user with the cluster-admin cluster role.

  - You have created the cluster-monitoring-config ConfigMap object.

- If you are configuring components that monitor user-defined projects:

  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.

  - You have created the user-workload-monitoring-config ConfigMap object.

- You have installed the OpenShift CLI (oc).

Procedure

1. Edit the ConfigMap object.

To configure core OpenShift Container Platform monitoring components:

a. Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

b. Add your configuration under data/config.yaml as a key-value pair <component_name>: <component_configuration>:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        <component>:
          <configuration_for_the_component>

Substitute <component> and <configuration_for_the_component> accordingly.

The following example ConfigMap object configures a persistent volume claim (PVC) for Prometheus. This relates to the Prometheus instance that monitors core OpenShift Container Platform components only. The prometheusK8s line defines the Prometheus component, and the subsequent lines define its configuration:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          volumeClaimTemplate:
            spec:
              storageClassName: fast
              volumeMode: Filesystem
              resources:
                requests:
                  storage: 40Gi

To configure components that monitor user-defined projects:

a. Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

b. Add your configuration under data/config.yaml as a key-value pair <component_name>: <component_configuration>:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        <component>:
          <configuration_for_the_component>

Substitute <component> and <configuration_for_the_component> accordingly.

The following example ConfigMap object configures a data retention period and minimum container resource requests for Prometheus. This relates to the Prometheus instance that monitors user-defined projects only:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          retention: 24h
          resources:
            requests:
              cpu: 200m
              memory: 2Gi

Note: The Prometheus config map component is called prometheusK8s in the cluster-monitoring-config ConfigMap object and prometheus in the user-workload-monitoring-config ConfigMap object.

2. Save the file to apply the changes to the ConfigMap object. The pods affected by the new configuration are restarted automatically.

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Warning: When changes are saved to a monitoring config map, the pods and other resources in the related project might be redeployed. The running monitoring processes in that project might also be restarted.

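2.5. Configurable monitoring components

The following table shows the monitoring components that you can configure and the keys used to specify the components in the cluster-monitoring-config and user-workload-monitoring-config ConfigMap objects:

| Component | cluster-monitoring-config config map key | user-workload-monitoring-config config map key |
|---|---|---|
| Prometheus Operator | prometheusOperator | prometheusOperator |
| Prometheus | prometheusK8s | prometheus |
| Alertmanager | alertmanagerMain | alertmanager |
| kube-state-metrics | kubeStateMetrics | |
| openshift-state-metrics | openshiftStateMetrics | |
| Telemeter Client | telemeterClient | |
| Prometheus Adapter | k8sPrometheusAdapter | |
| Thanos Querier | thanosQuerier | |
| Thanos Ruler | | thanosRuler |

The Prometheus key is called prometheusK8s in the cluster-monitoring-config ConfigMap object and prometheus in the user-workload-monitoring-config ConfigMap object.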
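Several component keys can sit side by side under data/config.yaml in the same config map. The following sketch combines two keys that appear elsewhere in this document, prometheusK8s and alertmanagerMain; the retention value and the `nodetype: infra` label are illustrative assumptions.

```yaml
# Sketch only: two component keys configured in one config map.
# The values shown are illustrative, not recommendations.
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    prometheusK8s:
      retention: 24h
    alertmanagerMain:
      nodeSelector:
        nodetype: infra
```

Each top-level key under config.yaml is read independently, so adding or removing one component's block does not change the settings of the others.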

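2.6. Using node selectors to move monitoring components

By using the nodeSelector constraint with labeled nodes, you can move any of the monitoring stack components to specific nodes. This lets you control the placement and distribution of the monitoring components across a cluster.

By controlling the placement and distribution of monitoring components, you can optimize system resource use, improve performance, and segregate workloads based on specific requirements or policies.

2.6.1. How node selectors work with other constraints

If you move monitoring components by using node selector constraints, be aware that other constraints to control pod scheduling might exist for a cluster:

- Topology spread constraints might be in place to control pod placement.

- Hard anti-affinity rules are in place for Prometheus, Thanos Querier, Alertmanager, and other monitoring components to ensure that multiple pods for these components are always spread across different nodes and are therefore always highly available.

When scheduling pods onto nodes, the pod scheduler tries to satisfy all existing constraints when determining pod placement. That is, all constraints are combined when the pod scheduler determines which pods are placed on which nodes.

Therefore, if you configure a node selector constraint but the existing constraints cannot all be met, the pod scheduler cannot match all constraints and does not schedule the pod for placement onto a node.

To maintain resilience and high availability for monitoring components, ensure that enough nodes are available and match all constraints when you configure a node selector constraint to move a component.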

2.6.2. Moving monitoring components to different nodes

To specify the nodes in your cluster on which monitoring stack components run, configure the nodeSelector constraint in the component's ConfigMap object to match labels assigned to the nodes.

You cannot add a node selector constraint directly to an existing scheduled pod.

Prerequisites

- If you are configuring core OpenShift Container Platform monitoring components:

  - You have access to the cluster as a user with the cluster-admin cluster role.

  - You have created the cluster-monitoring-config ConfigMap object.

- If you are configuring components that monitor user-defined projects:

  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.

  - You have created the user-workload-monitoring-config ConfigMap object.

- You have installed the OpenShift CLI (oc).

Procedure

1. If you have not done so yet, add a label to the nodes on which you want to run the monitoring components:

    $ oc label nodes <node-name> <node-label>

2. Edit the ConfigMap object.

To move a component that monitors core OpenShift Container Platform projects:

a. Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

b. Specify the node labels for the nodeSelector constraint for the component under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        <component>:
          nodeSelector:
            <node-label-1>
            <node-label-2>
            <...>

Note: If monitoring components remain in a Pending state after you configure the nodeSelector constraint, check the pod events for errors relating to taints and tolerations.

To move a component that monitors user-defined projects:

a. Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

b. Specify the node labels for the nodeSelector constraint for the component under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        <component>:
          nodeSelector:
            <node-label-1>
            <node-label-2>
            <...>

Note: If monitoring components remain in a Pending state after you configure the nodeSelector constraint, check the pod events for errors relating to taints and tolerations.

3. Save the file to apply the changes. The components specified in the new configuration are moved to the new nodes automatically.

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Warning: When you save changes to a monitoring config map, the pods and other resources in the project might be redeployed. The running monitoring processes in that project might also restart.
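As a concrete instance of the placeholders in the procedure above, the following sketch pins the core Prometheus instance to nodes that carry a `nodetype=infra` label. The label key and value are hypothetical; they must match a label that you have actually applied with `oc label nodes`.

```yaml
# Sketch only: schedule prometheusK8s onto nodes labeled nodetype=infra.
# The label is a hypothetical example.
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    prometheusK8s:
      nodeSelector:
        nodetype: infra
```

Because Prometheus runs multiple replicas under hard anti-affinity rules, at least as many nodes as replicas need to carry the label, otherwise some pods are expected to stay in the Pending state.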

2.7. Assigning tolerations to monitoring components

You can assign tolerations to any of the monitoring stack components to enable moving them to tainted nodes.

Prerequisites

- If you are configuring core OpenShift Container Platform monitoring components:

  - You have access to the cluster as a user with the cluster-admin cluster role.

  - You have created the cluster-monitoring-config ConfigMap object.

- If you are configuring components that monitor user-defined projects:

  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.

  - You have created the user-workload-monitoring-config ConfigMap object.

- You have installed the OpenShift CLI (oc).

Procedure

1. Edit the ConfigMap object.

To assign tolerations to a component that monitors core OpenShift Container Platform projects:

a. Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

b. Specify tolerations for the component:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        <component>:
          tolerations:
            <toleration_specification>

Substitute <component> and <toleration_specification> accordingly.

For example, oc adm taint nodes node1 key1=value1:NoSchedule adds a taint to node1 with the key key1 and the value value1. This prevents monitoring components from deploying pods on node1 unless a toleration is configured for that taint. The following example configures the alertmanagerMain component to tolerate the example taint:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        alertmanagerMain:
          tolerations:
          - key: "key1"
            operator: "Equal"
            value: "value1"
            effect: "NoSchedule"

To assign tolerations to a component that monitors user-defined projects:

a. Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

b. Specify tolerations for the component:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        <component>:
          tolerations:
            <toleration_specification>

Substitute <component> and <toleration_specification> accordingly.

For example, oc adm taint nodes node1 key1=value1:NoSchedule adds a taint to node1 with the key key1 and the value value1. This prevents monitoring components from deploying pods on node1 unless a toleration is configured for that taint. The following example configures the thanosRuler component to tolerate the example taint:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        thanosRuler:
          tolerations:
          - key: "key1"
            operator: "Equal"
            value: "value1"
            effect: "NoSchedule"

2. Save the file to apply the changes. The new component placement configuration is applied automatically.

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Warning: When changes are saved to a monitoring config map, the pods and other resources in the related project might be redeployed. The running monitoring processes in that project might also be restarted.
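The examples above match a taint by exact key and value. Kubernetes tolerations also accept the `Exists` operator, which matches any value for a key. The following sketch assumes nodes tainted with the hypothetical key `node-role.kubernetes.io/infra` and the NoSchedule effect.

```yaml
# Sketch only: tolerate a taint by key regardless of its value.
# The taint key is a hypothetical example.
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    prometheusK8s:
      tolerations:
      - key: "node-role.kubernetes.io/infra"
        operator: "Exists"   # no value field is given with Exists
        effect: "NoSchedule"
```

A toleration only permits scheduling onto the tainted nodes. To require that placement, combine it with a nodeSelector constraint for the same component.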

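2.8. Setting the body size limit for metrics scraping

By default, no limit exists for the uncompressed body size of data returned from scraped metrics targets. You can set a body size limit to help avoid situations in which Prometheus consumes excessive amounts of memory when scraped targets return a response that contains a large amount of data. In addition, setting a body size limit reduces the impact that a malicious target might have on Prometheus and on the cluster as a whole.

After you set a value for enforcedBodySizeLimit, the alert PrometheusScrapeBodySizeLimitHit fires when at least one Prometheus scrape target replies with a response body larger than the configured value.

If metrics data scraped from a target has an uncompressed body size exceeding the configured size limit, the scrape fails. Prometheus then considers this target to be down and sets its up metric value to 0, which can trigger the TargetDown alert.

Prerequisites

- You have access to the cluster as a user with the cluster-admin cluster role.

- You have installed the OpenShift CLI (oc).

Procedure

1. Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring namespace:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

2. Add a value for enforcedBodySizeLimit to data/config.yaml/prometheusK8s to limit the body size that can be accepted per target scrape:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |-
        prometheusK8s:
          enforcedBodySizeLimit: 40MB

The enforcedBodySizeLimit value specifies the maximum body size for scraped metrics targets. This example limits the uncompressed size per target scrape to 40 megabytes. Valid numeric values use the Prometheus data size format: B (bytes), KB (kilobytes), MB (megabytes), GB (gigabytes), TB (terabytes), PB (petabytes), and EB (exabytes). The default value is 0, which specifies no limit. You can also set the value to automatic to calculate the limit automatically based on cluster capacity.

3. Save the file to apply the changes automatically.

Warning: When you save changes to the cluster-monitoring-config config map, the pods and other resources in the openshift-monitoring project might be redeployed. The running monitoring processes in that project might also restart.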

2.9. Configuring dedicated service monitors

You can configure OpenShift Container Platform core platform monitoring to use dedicated service monitors to collect metrics for the resource metrics pipeline.

When enabled, a dedicated service monitor exposes two additional metrics from the kubelet endpoint and sets the value of the honorTimestamps field to true.

By enabling a dedicated service monitor, you can improve the consistency of Prometheus Adapter-based CPU usage measurements used by, for example, the oc adm top pod command or the Horizontal Pod Autoscaler.

2.9.1. Enabling a dedicated service monitor

You can configure core platform monitoring to use a dedicated service monitor by configuring the dedicatedServiceMonitors key in the cluster-monitoring-config ConfigMap object in the openshift-monitoring namespace.

Prerequisites

- You have installed the OpenShift CLI (oc).

- You have access to the cluster as a user with the cluster-admin cluster role.

- You have created the cluster-monitoring-config ConfigMap object.

Procedure

1. Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring namespace:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

2. Add an enabled: true key-value pair as shown in the following sample. Setting the enabled field to true deploys a dedicated service monitor that exposes the kubelet /metrics/resource endpoint:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        k8sPrometheusAdapter:
          dedicatedServiceMonitors:
            enabled: true

3. Save the file to apply the changes automatically.

Warning: When you save changes to the cluster-monitoring-config config map, the pods and other resources in the openshift-monitoring project might be redeployed. The running monitoring processes in that project might also restart.

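2.10. Configuring persistent storage

Running cluster monitoring with persistent storage means that your metrics are stored on a persistent volume (PV) and survive a pod being restarted or recreated. This is ideal if you require your metrics or alerting data to be guarded against data loss. For production environments, it is highly recommended to configure persistent storage. Because of the high IO demands, it is advantageous to use local storage.

2.10.1. Persistent storage prerequisites

- Dedicate sufficient local persistent storage to ensure that the disk does not become full. How much storage you need depends on the number of pods.

- Verify that you have a persistent volume (PV) ready to be claimed by the persistent volume claim (PVC), one PV for each replica. Because Prometheus and Alertmanager both have two replicas, you need four PVs to support the entire monitoring stack. The PVs are available from the Local Storage Operator, but not if you have enabled dynamically provisioned storage.

- Use Filesystem as the storage type value for the volumeMode parameter when you configure the persistent volume.

  Note: If you use a local volume for persistent storage, do not use a raw block volume, which is described with volumeMode: Block in the LocalVolume object. Prometheus cannot use raw block volumes.

  Important: Prometheus does not support file systems that are not POSIX compliant. For example, some NFS file system implementations are not POSIX compliant. If you want to use an NFS file system for storage, verify with the vendor that their NFS implementation is fully POSIX compliant.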
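To illustrate the volumeMode requirement, the following is a sketch of a LocalVolume object for the Local Storage Operator that provisions filesystem volumes rather than raw block volumes. The object name, the `openshift-local-storage` namespace, the `xfs` file system type, and the device path placeholder are assumptions; only the `local-storage` storage class name and the `volumeMode: Filesystem` requirement come from this document.

```yaml
# Sketch only: a LocalVolume that yields Filesystem-mode PVs for Prometheus.
# Name, namespace, fsType, and device path are illustrative assumptions.
apiVersion: local.storage.openshift.io/v1
kind: LocalVolume
metadata:
  name: local-disks
  namespace: openshift-local-storage
spec:
  storageClassDevices:
  - storageClassName: local-storage
    volumeMode: Filesystem          # not Block: Prometheus cannot use raw block volumes
    fsType: xfs
    devicePaths:
    - /dev/disk/by-id/<device_id>   # replace with a real device on your nodes
```

One PV is needed per replica, so the devices listed across your nodes need to cover every Prometheus and Alertmanager replica that will claim storage.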

2.10.2. Configuring a local persistent volume claim

For monitoring components to use a persistent volume (PV), you must configure a persistent volume claim (PVC).

Prerequisites

- If you are configuring core OpenShift Container Platform monitoring components:

  - You have access to the cluster as a user with the cluster-admin cluster role.

  - You have created the cluster-monitoring-config ConfigMap object.

- If you are configuring components that monitor user-defined projects:

  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.

  - You have created the user-workload-monitoring-config ConfigMap object.

- You have installed the OpenShift CLI (oc).

Procedure

1. Edit the ConfigMap object.

To configure a PVC for a component that monitors core OpenShift Container Platform projects:

a. Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

b. Add your PVC configuration for the component under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        <component>:
          volumeClaimTemplate:
            spec:
              storageClassName: <storage_class>
              resources:
                requests:
                  storage: <amount_of_storage>

See the Kubernetes documentation on PersistentVolumeClaims for information on how to specify volumeClaimTemplate.

The following example configures a PVC that claims local persistent storage for the Prometheus instance that monitors core OpenShift Container Platform components:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          volumeClaimTemplate:
            spec:
              storageClassName: local-storage
              resources:
                requests:
                  storage: 40Gi

In the preceding example, the storage class created by the Local Storage Operator is called local-storage.

The following example configures a PVC that claims local persistent storage for Alertmanager:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        alertmanagerMain:
          volumeClaimTemplate:
            spec:
              storageClassName: local-storage
              resources:
                requests:
                  storage: 10Gi

To configure a PVC for a component that monitors user-defined projects:

a. Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

b. Add your PVC configuration for the component under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        <component>:
          volumeClaimTemplate:
            spec:
              storageClassName: <storage_class>
              resources:
                requests:
                  storage: <amount_of_storage>

See the Kubernetes documentation on PersistentVolumeClaims for information on how to specify volumeClaimTemplate.

The following example configures a PVC that claims local persistent storage for the Prometheus instance that monitors user-defined projects:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          volumeClaimTemplate:
            spec:
              storageClassName: local-storage
              resources:
                requests:
                  storage: 40Gi

In the preceding example, the storage class created by the Local Storage Operator is called local-storage.

The following example configures a PVC that claims local persistent storage for Thanos Ruler:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        thanosRuler:
          volumeClaimTemplate:
            spec:
              storageClassName: local-storage
              resources:
                requests:
                  storage: 10Gi

Note: Storage requirements for the thanosRuler component depend on the number of rules that are evaluated and how many samples each rule generates.

2. Save the file to apply the changes. The pods affected by the new configuration are restarted automatically and the new storage configuration is applied.

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Warning: When changes are saved to a monitoring config map, the pods and other resources in the related project might be redeployed. The running monitoring processes in that project might also be restarted.

2.10.3. 永続ストレージボリュームのサイズ変更

OpenShift Container Platform は、使用される基礎となる StorageClass リソースが永続的なボリュームのサイズ変更をサポートしている場合でも、StatefulSet リソースによって使用される既存の永続的なストレージボリュームのサイズ変更をサポートしません。したがって、既存の永続ボリューム要求 (PVC) の storage フィールドをより大きなサイズで更新しても、この設定は関連する永続ボリューム (PV) に反映されません。

ただし、手動プロセスを使用して PV のサイズを変更することは可能です。Prometheus、Thanos Ruler、Alertmanager などのモニタリングコンポーネントの PV のサイズを変更する場合は、コンポーネントが設定されている適切な設定マップを更新できます。次に、PVC にパッチを適用し、Pod を削除して孤立させます。Pod を孤立させると、StatefulSet リソースがすぐに再作成され、Pod にマウントされたボリュームのサイズが新しい PVC 設定で自動的に更新されます。このプロセス中にサービスの中断は発生しません。

前提条件

-

OpenShift CLI (

oc) がインストールされている。 OpenShift Container Platform のコアモニタリングコンポーネントを設定する場合、以下を実行します。

-

cluster-adminクラスターロールを持つユーザーとしてクラスターにアクセスできます。 -

cluster-monitoring-configConfigMapオブジェクトを作成している。 - コア OpenShift Container Platform モニタリングコンポーネント用に少なくとも 1 つの PVC を設定しました。

-

ユーザー定義のプロジェクトをモニターするコンポーネントを設定する場合:

-

cluster-adminクラスターロールを持つユーザーとして、またはopenshift-user-workload-monitoringプロジェクトのuser-workload-monitoring-config-editロールを持つユーザーとして、クラスターにアクセスできる。 -

user-workload-monitoring-configConfigMapオブジェクトを作成している。 - ユーザー定義プロジェクトを監視するコンポーネント用に少なくとも 1 つの PVC を設定しました。

-

手順

ConfigMapオブジェクトを編集します。OpenShift Container Platform のコアプロジェクトをモニターするコンポーネントの PVC サイズを変更するには、以下を実行します。

openshift-monitoringプロジェクトでcluster-monitoring-configConfigMapオブジェクトを編集します。$ oc -n openshift-monitoring edit configmap cluster-monitoring-configdata/config.yamlの下に、コンポーネントの PVC 設定用の新しいストレージサイズを追加します。apiVersion: v1 kind: ConfigMap metadata: name: cluster-monitoring-config namespace: openshift-monitoring data: config.yaml: | <component>:1 volumeClaimTemplate: spec: storageClassName: <storage_class>2 resources: requests: storage: <amount_of_storage>3 以下の例では、コア OpenShift Container Platform コンポーネントをモニターする Prometheus インスタンスのローカル永続ストレージを 100 ギガバイトに設定する PVC を設定します。

apiVersion: v1 kind: ConfigMap metadata: name: cluster-monitoring-config namespace: openshift-monitoring data: config.yaml: | prometheusK8s: volumeClaimTemplate: spec: storageClassName: local-storage resources: requests: storage: 100Gi次の例では、Alertmanager のローカル永続ストレージを 40 ギガバイトに設定する PVC を設定します。

apiVersion: v1 kind: ConfigMap metadata: name: cluster-monitoring-config namespace: openshift-monitoring data: config.yaml: | alertmanagerMain: volumeClaimTemplate: spec: storageClassName: local-storage resources: requests: storage: 40Gi

To resize a PVC for a component that monitors user-defined projects:

Note: You can resize the volumes for the Thanos Ruler and Prometheus instances that monitor user-defined projects.

Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

Update the PVC configuration for the monitoring component under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        <component>:
          volumeClaimTemplate:
            spec:
              storageClassName: <storage_class>
              resources:
                requests:
                  storage: <amount_of_storage>

The following example sets the PVC size to 100 gigabytes for the Prometheus instance that monitors user-defined projects:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          volumeClaimTemplate:
            spec:
              storageClassName: local-storage
              resources:
                requests:
                  storage: 100Gi

The following example sets the PVC size for Thanos Ruler to 20 gigabytes:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        thanosRuler:
          volumeClaimTemplate:
            spec:
              storageClassName: local-storage
              resources:
                requests:
                  storage: 20Gi

Note: Storage requirements for the thanosRuler component depend on the number of rules that are evaluated and on the number of samples that each rule generates.

Save the file to apply the changes. Pods affected by the new configuration restart automatically.

Warning: When you save changes to a monitoring config map, the pods and other resources in the related project might be redeployed. The monitoring processes running in that project might also restart.

Manually patch every PVC with the updated storage request. The following example resizes the storage for the Prometheus component in the openshift-monitoring namespace to 100Gi:

    $ for p in $(oc -n openshift-monitoring get pvc -l app.kubernetes.io/name=prometheus -o jsonpath='{range .items[*]}{.metadata.name} {end}'); do \
      oc -n openshift-monitoring patch pvc/${p} --patch '{"spec": {"resources": {"requests": {"storage":"100Gi"}}}}'; \
      done

Delete the underlying StatefulSet with the --cascade=orphan parameter:

    $ oc -n openshift-monitoring delete statefulset -l app.kubernetes.io/name=prometheus --cascade=orphan

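2.10.4. Modifying the retention time and size for Prometheus metrics data

By default, Prometheus automatically retains metrics data for 15 days. You can change how soon data is deleted by specifying a time value in the retention field. You can also set the maximum amount of disk space that retained metrics data uses by specifying a size value in the retentionSize field. When the data reaches this size limit, Prometheus deletes the oldest data first until the disk space used is back under the limit.

Note the following behaviors of these data retention settings:

- The size-based retention policy applies to all data block directories in the /prometheus directory, including persistent blocks, write-ahead log (WAL) data, and m-mapped chunks.
- Data in the /wal and /head_chunks directories counts toward the retention size limit, but Prometheus never purges data from these directories based on size-based or time-based retention policies. Therefore, if you set a retention size limit that is lower than the maximum size set for the /wal and /head_chunks directories, you have configured the system not to retain any data blocks in the /prometheus data directory.
- The size-based retention policy is applied only when Prometheus cuts a new data block, which occurs every two hours after the WAL contains at least three hours of data.
- If you do not explicitly define a value for either retention or retentionSize, the retention time defaults to 15 days and the retention size is not set.
- If you define values for both retention and retentionSize, both values apply. If any data blocks exceed the defined retention time or the defined size limit, Prometheus purges these data blocks.
- If you define a value for retentionSize and do not define retention, only the retentionSize value applies.
- If you do not define a value for retentionSize and define only a value for retention, only the retention value applies.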
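As a minimal sketch of the size-only case described in the list above, the following config map sets retentionSize for the platform Prometheus instance and leaves retention undefined, so only the size limit applies. The 10GB value is an arbitrary illustration, not a recommendation; any real limit should be larger than the space that the /wal and /head_chunks directories are expected to use.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    prometheusK8s:
      # Illustrative value only. retention is omitted on purpose, so the
      # size limit is the only retention policy that applies.
      retentionSize: 10GB
```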

Prerequisites

- If you are configuring core OpenShift Container Platform monitoring components:
  - You have access to the cluster as a user with the cluster-admin cluster role.
  - You have created the cluster-monitoring-config ConfigMap object.
- If you are configuring components that monitor user-defined projects:
  - A cluster administrator has enabled monitoring for user-defined projects.
  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
  - You have created the user-workload-monitoring-config ConfigMap object.
- You have installed the OpenShift CLI (oc).

Warning: Saving changes to a monitoring config map might redeploy the pods and other resources in the related project and restart the monitoring processes running in that project.

Procedure

Edit the ConfigMap object.

To modify the retention time and size for the Prometheus instance that monitors core OpenShift Container Platform projects:

Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Add the retention time and size configuration under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          retention: <time_specification>
          retentionSize: <size_specification>

The following example sets the retention time to 24 hours and the retention size to 10 gigabytes for the Prometheus instance that monitors core OpenShift Container Platform components:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          retention: 24h
          retentionSize: 10GB

To modify the retention time and size for the Prometheus instance that monitors user-defined projects:

Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

Add the retention time and size configuration under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          retention: <time_specification>
          retentionSize: <size_specification>

The following example sets the retention time to 24 hours and the retention size to 10 gigabytes for the Prometheus instance that monitors user-defined projects:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          retention: 24h
          retentionSize: 10GB

Save the file to apply the changes. Pods affected by the new configuration restart automatically.

2.10.5. Modifying the retention time for Thanos Ruler metrics data

By default, for user-defined projects, Thanos Ruler automatically retains metrics data for 24 hours. You can change how long this data is retained by specifying a time value in the user-workload-monitoring-config config map in the openshift-user-workload-monitoring namespace.

Prerequisites

- You have installed the OpenShift CLI (oc).
- A cluster administrator has enabled monitoring for user-defined projects.
- You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
- You have created the user-workload-monitoring-config ConfigMap object.

Warning: Saving changes to a monitoring config map might redeploy the pods and other resources in the related project and restart the monitoring processes running in that project.

Procedure

Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

Add the retention time configuration under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        thanosRuler:
          retention: <time_specification> # (1)

(1) Specify the retention time as a number directly followed by ms (milliseconds), s (seconds), m (minutes), h (hours), d (days), w (weeks), or y (years). You can also combine time values for a specific duration, such as 1h30m15s. The default is 24h.

The following example sets the retention time for Thanos Ruler data to 10 days:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        thanosRuler:
          retention: 10d

Save the file to apply the changes. Pods affected by the new configuration restart automatically.

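2.11. Configuring remote write storage

You can configure remote write storage so that Prometheus sends ingested metrics to a remote system for long-term storage. Doing so has no impact on how or for how long Prometheus stores metrics.

Prerequisites

- If you are configuring core OpenShift Container Platform monitoring components:
  - You have access to the cluster as a user with the cluster-admin cluster role.
  - You have created the cluster-monitoring-config ConfigMap object.
- If you are configuring components that monitor user-defined projects:
  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
  - You have created the user-workload-monitoring-config ConfigMap object.
- You have installed the OpenShift CLI (oc).
- You have set up a remote write compatible endpoint (such as Thanos) and know the endpoint URL. For information about endpoints that are compatible with the remote write feature, see the Prometheus remote endpoints and storage documentation.
- You have set up authentication credentials in a Secret object for the remote write endpoint. You must create the secret in the same namespace as the Prometheus object for which you configure remote write: the openshift-monitoring namespace for default platform monitoring, or the openshift-user-workload-monitoring namespace for user workload monitoring.

Important: To reduce security risks, use HTTPS and authentication to send metrics to an endpoint.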

Procedure

Follow these steps to configure remote write for default platform monitoring in the cluster-monitoring-config config map in the openshift-monitoring namespace.

Note: If you configure remote write for the Prometheus instance that monitors user-defined projects, make similar edits to the user-workload-monitoring-config config map in the openshift-user-workload-monitoring namespace. The Prometheus component is called prometheus in the user-workload-monitoring-config ConfigMap object, not prometheusK8s as it is in the cluster-monitoring-config ConfigMap object.

Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Add a remoteWrite: section under data/config.yaml/prometheusK8s. Add the endpoint URL and authentication credentials in this section:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://remote-write-endpoint.example.com"
            <endpoint_authentication_credentials>

Add write relabel configuration values after the authentication credentials:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://remote-write-endpoint.example.com"
            <endpoint_authentication_credentials>
            <write_relabel_configs> # (1)

(1) The write relabel configuration settings. Substitute <write_relabel_configs> with a list of write relabel configurations for the metrics that you want to send to the remote endpoint.

The following example shows how to forward a single metric called my_metric:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://remote-write-endpoint.example.com"
            writeRelabelConfigs:
            - sourceLabels: [__name__]
              regex: 'my_metric'
              action: keep

For information about write relabel configuration options, see the Prometheus relabel_config documentation.

Save the file to apply the changes to the ConfigMap object. Pods affected by the new configuration restart automatically.

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Warning: Saving changes to a monitoring ConfigMap object might redeploy the pods and other resources in the related project. Saving changes might also restart the monitoring processes running in that project.

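2.11.1. Supported remote write authentication settings

You can use different methods to authenticate with a remote write endpoint. The currently supported authentication methods are AWS Signature Version 4, basic authentication, authentication using HTTP in an Authorization request header, OAuth 2.0, and TLS client. The following table provides details about the supported authentication methods for use with remote write.

| Authentication method | Config map field | Description |
|---|---|---|
| AWS Signature Version 4 | sigv4 | This method uses AWS Signature Version 4 authentication to sign requests. You cannot use this method at the same time as authorization, OAuth 2.0, or basic authentication. |
| Basic authentication | basicAuth | Basic authentication sets the authorization header on every remote write request with the configured username and password. |
| Authorization | authorization | Authorization sets the Authorization header on every remote write request using the configured token. |
| OAuth 2.0 | oauth2 | An OAuth 2.0 configuration uses the client credentials grant type. Prometheus fetches an access token from tokenUrl with the specified client ID and client secret to access the remote write endpoint. |
| TLS client | tlsConfig | A TLS client configuration specifies the CA certificate, the client certificate, and the client key file information used to authenticate with the remote write endpoint server using TLS. The sample configuration assumes that you have already created a CA certificate file, a client certificate file, and a client key file. |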

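2.11.1.1. Config map location for authentication settings

The following shows the location of the authentication configuration in the ConfigMap object for default platform monitoring.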

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://remote-write-endpoint.example.com"
            <endpoint_authentication_details>

If you configure remote write for the Prometheus instance that monitors user-defined projects, edit the user-workload-monitoring-config config map in the openshift-user-workload-monitoring namespace. The Prometheus component is called prometheus in the user-workload-monitoring-config ConfigMap object, not prometheusK8s as it is in the cluster-monitoring-config ConfigMap object.
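For comparison, the same location in the user workload config map might look like the following sketch. It reuses the placeholder URL and the <endpoint_authentication_details> placeholder from the platform example; the only intended differences are the config map name, the namespace, and the prometheus component key.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: user-workload-monitoring-config
  namespace: openshift-user-workload-monitoring
data:
  config.yaml: |
    # The component key is prometheus here, not prometheusK8s.
    prometheus:
      remoteWrite:
      - url: "https://remote-write-endpoint.example.com"
        <endpoint_authentication_details>
```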

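2.11.1.2. Example remote write authentication settings

The following samples show different authentication settings that you can use to connect to a remote write endpoint. Each sample also shows how to configure a corresponding Secret object that contains authentication credentials and other relevant settings. Each sample configures authentication for use with default platform monitoring in the openshift-monitoring namespace.

Sample YAML for AWS Signature Version 4 authentication

The following shows the settings for a sigv4 secret named sigv4-credentials in the openshift-monitoring namespace.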

    apiVersion: v1
    kind: Secret
    metadata:
      name: sigv4-credentials
      namespace: openshift-monitoring
    stringData:
      accessKey: <AWS_access_key>
      secretKey: <AWS_secret_key>
    type: Opaque

The following shows sample AWS Signature Version 4 remote write authentication settings that use a Secret object named sigv4-credentials in the openshift-monitoring namespace.

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://authorization.example.com/api/write"
            sigv4:
              region: <AWS_region>
              accessKey:
                name: sigv4-credentials
                key: accessKey
              secretKey:
                name: sigv4-credentials
                key: secretKey
              profile: <AWS_profile_name>
              roleArn: <AWS_role_arn>

Sample YAML for basic authentication

The following shows sample basic authentication settings for a Secret object named rw-basic-auth in the openshift-monitoring namespace.

    apiVersion: v1
    kind: Secret
    metadata:
      name: rw-basic-auth
      namespace: openshift-monitoring
    stringData:
      user: <basic_username>
      password: <basic_password>
    type: Opaque

The following sample shows a basicAuth remote write configuration that uses a Secret object named rw-basic-auth in the openshift-monitoring namespace. It assumes that you have already set up authentication credentials for the endpoint.

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://basicauth.example.com/api/write"
            basicAuth:
              username:
                name: rw-basic-auth
                key: user
              password:
                name: rw-basic-auth
                key: password

Sample YAML for authentication with a bearer token using a Secret object

The following shows bearer token settings for a Secret object named rw-bearer-auth in the openshift-monitoring namespace.

    apiVersion: v1
    kind: Secret
    metadata:
      name: rw-bearer-auth
      namespace: openshift-monitoring
    stringData:
      token: <authentication_token> # (1)
    type: Opaque

(1) The authentication token.

The following shows sample bearer token config map settings that use a Secret object named rw-bearer-auth in the openshift-monitoring namespace.

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        enableUserWorkload: true
        prometheusK8s:
          remoteWrite:
          - url: "https://authorization.example.com/api/write"
            authorization:
              type: Bearer
              credentials:
                name: rw-bearer-auth
                key: token

Sample YAML for OAuth 2.0 authentication

The following shows sample OAuth 2.0 settings for a Secret object named oauth2-credentials in the openshift-monitoring namespace.

    apiVersion: v1
    kind: Secret
    metadata:
      name: oauth2-credentials
      namespace: openshift-monitoring
    stringData:
      id: <oauth2_id>
      secret: <oauth2_secret>
      token: <oauth2_authentication_token>
    type: Opaque

The following shows sample oauth2 remote write authentication settings that use a Secret object named oauth2-credentials in the openshift-monitoring namespace.

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://test.example.com/api/write"
            oauth2:
              clientId:
                secret:
                  name: oauth2-credentials # (1)
                  key: id # (2)
              clientSecret:
                name: oauth2-credentials # (3)
                key: secret # (4)
              tokenUrl: https://example.com/oauth2/token # (5)
              scopes: # (6)
              - <scope_1>
              - <scope_2>
              endpointParams: # (7)
                param1: <parameter_1>
                param2: <parameter_2>

(1), (3) The name of the corresponding Secret object. Note that clientId can alternatively refer to a ConfigMap object, although clientSecret must refer to a Secret object.

(2), (4) The key that contains the OAuth 2.0 credentials in the specified Secret object.

(5) The URL used to fetch a token with the specified clientId and clientSecret.

(6) The OAuth 2.0 scopes for the authorization request. These scopes limit what data the tokens can access.

(7) The OAuth 2.0 authorization request parameters required for the authorization server.

Sample YAML for TLS client authentication

The following shows sample TLS client settings for a tls Secret object named mtls-bundle in the openshift-monitoring namespace.

    apiVersion: v1
    kind: Secret
    metadata:
      name: mtls-bundle
      namespace: openshift-monitoring
    data:
      ca.crt: <ca_cert>
      client.crt: <client_cert>
      client.key: <client_key>
    type: tls

The following sample shows a tlsConfig remote write authentication configuration that uses a TLS Secret object named mtls-bundle.

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://remote-write-endpoint.example.com"
            tlsConfig:
              ca:
                secret:
                  name: mtls-bundle
                  key: ca.crt
              cert:
                secret:
                  name: mtls-bundle
                  key: client.crt
              keySecret:
                name: mtls-bundle
                key: client.key

2.12. Adding cluster ID labels to metrics

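If you manage multiple OpenShift Container Platform clusters and use the remote write feature to send metrics data from these clusters to an external storage location, you can add cluster ID labels to identify the metrics data coming from different clusters. You can then query these labels to identify the source cluster for a metric and distinguish that data from similar metrics data sent by other clusters.

For example, if you manage many clusters for multiple customers and send metrics data to a single centralized storage system, you can use cluster ID labels to query metrics for a particular cluster or customer.

Creating and using cluster ID labels involves three general steps:

- Configuring the write relabel settings for remote write storage.
- Adding cluster ID labels to the metrics.
- Querying these labels to identify the source cluster or customer for a metric.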

2.12.1. Creating cluster ID labels for metrics

You can create cluster ID labels for metrics for default platform monitoring and for user workload monitoring.

For default platform monitoring, you add cluster ID labels for metrics in the write_relabel settings for remote write storage in the cluster-monitoring-config config map in the openshift-monitoring namespace.

For user workload monitoring, you edit the settings in the user-workload-monitoring-config config map in the openshift-user-workload-monitoring namespace.

When Prometheus scrapes user workload targets that expose a namespace label, the system stores this label as exported_namespace. This behavior ensures that the final namespace label value is equal to the namespace of the target pod. You cannot override this default by setting the value of the honorLabels field to true for PodMonitor or ServiceMonitor objects.

Prerequisites

- You have installed the OpenShift CLI (oc).
- You have configured remote write storage.
- If you are configuring default platform monitoring components:
  - You have access to the cluster as a user with the cluster-admin cluster role.
  - You have created the cluster-monitoring-config ConfigMap object.
- If you are configuring components that monitor user-defined projects:
  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
  - You have created the user-workload-monitoring-config ConfigMap object.

Procedure

Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Note: If you configure cluster ID labels for metrics for the Prometheus instance that monitors user-defined projects, edit the user-workload-monitoring-config config map in the openshift-user-workload-monitoring namespace. The Prometheus component is called prometheus in that config map, not prometheusK8s, which is the name used in the cluster-monitoring-config config map.

In the writeRelabelConfigs: section under data/config.yaml/prometheusK8s/remoteWrite, add cluster ID relabel configuration values:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://remote-write-endpoint.example.com"
            <endpoint_authentication_credentials>
            writeRelabelConfigs:
            - <relabel_config>

The following sample shows how to forward a metric with the cluster ID label cluster_id in default platform monitoring:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://remote-write-endpoint.example.com"
            writeRelabelConfigs:
            - sourceLabels:
              - __tmp_openshift_cluster_id__ # (1)
              targetLabel: cluster_id # (2)
              action: replace # (3)

(1) The system initially applies a temporary cluster ID source label named __tmp_openshift_cluster_id__. This temporary label is replaced by the cluster ID label name that you specify.

(2) Specify the name of the cluster ID label for metrics sent to remote write storage. If you use a label name that already exists for a metric, that value is overwritten with the name of this cluster ID label. Do not use __tmp_openshift_cluster_id__ for the label name. The final relabeling step removes labels that use this name.

(3) The replace write relabel action replaces the temporary label with the target label for outgoing metrics. This action is the default and is applied if no action is specified.

Save the file to apply the changes to the ConfigMap object. Pods affected by the updated configuration restart automatically.

Warning: Saving changes to a monitoring ConfigMap object might redeploy the pods and other resources in the related project. Saving changes might also restart the monitoring processes running in that project.
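For user workload monitoring, the equivalent configuration might look like the following sketch. It assumes that the same relabel values shown for platform monitoring carry over unchanged, and it differs only in the config map name, the namespace, and the prometheus component key. The endpoint URL is the same placeholder used in the platform example.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: user-workload-monitoring-config
  namespace: openshift-user-workload-monitoring
data:
  config.yaml: |
    # The component key is prometheus here, not prometheusK8s.
    prometheus:
      remoteWrite:
      - url: "https://remote-write-endpoint.example.com"
        writeRelabelConfigs:
        - sourceLabels:
          - __tmp_openshift_cluster_id__
          targetLabel: cluster_id
          action: replace
```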

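2.13. Controlling the impact of unbound metrics attributes in user-defined projects

Developers can create labels to define attributes for metrics in the form of key-value pairs. The number of potential key-value pairs corresponds to the number of possible values for an attribute. An attribute that has an unlimited number of potential values is called an unbound attribute. For example, a customer_id attribute is unbound because it has an infinite number of possible values.

Every assigned key-value pair has a unique time series. Using many unbound attributes in labels can result in an exponential increase in the number of time series that are created. This can impact Prometheus performance and the available disk space.

Cluster administrators can use the following measures to control the impact of unbound metrics attributes in user-defined projects:

- Limit the number of samples that can be accepted per target scrape in user-defined projects.
- Limit the number of scraped labels, the length of label names, and the length of label values.
- Create alerts that fire when a scrape sample threshold is reached or when the target cannot be scraped.

To prevent issues caused by adding many unbound attributes, limit the number of scrape samples, label names, and unbound attributes that you define for metrics. Also reduce the number of potential key-value pair combinations by using attributes that are bound to a limited set of possible values.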

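2.13.1. Setting scrape sample and label limits for user-defined projects

You can limit the number of samples that can be accepted per target scrape in user-defined projects. You can also limit the number of scraped labels, the length of label names, and the length of label values.

If you set sample or label limits, no further sample data is ingested for that target scrape after the limit is reached.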

Prerequisites

- You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
- You have enabled monitoring for user-defined projects.
- You have installed the OpenShift CLI (oc).

Procedure

Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

Add the enforcedSampleLimit configuration to data/config.yaml to limit the number of samples that can be accepted per target scrape in user-defined projects:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          enforcedSampleLimit: 50000 # (1)

(1) A value is required if this parameter is specified. This enforcedSampleLimit example limits the number of samples that can be accepted per target scrape in user-defined projects to 50,000.

Add the enforcedLabelLimit, enforcedLabelNameLengthLimit, and enforcedLabelValueLengthLimit configurations to data/config.yaml to limit the number of scraped labels, the length of label names, and the length of label values in user-defined projects:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          enforcedLabelLimit: 500
          enforcedLabelNameLengthLimit: 50
          enforcedLabelValueLengthLimit: 600

Save the file to apply the changes. The limits are applied automatically.

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Warning: When changes are saved to the user-workload-monitoring-config ConfigMap object, the pods and other resources in the openshift-user-workload-monitoring project might be redeployed. The running monitoring processes in that project might also restart.
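Because all four limits belong to the same prometheus component, they can presumably be set together in one edit. The following sketch simply merges the two examples from this procedure into a single config map, with the same illustrative values.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: user-workload-monitoring-config
  namespace: openshift-user-workload-monitoring
data:
  config.yaml: |
    prometheus:
      # Sample limit per target scrape, as in the first example.
      enforcedSampleLimit: 50000
      # Label count and length limits, as in the second example.
      enforcedLabelLimit: 500
      enforcedLabelNameLengthLimit: 50
      enforcedLabelValueLengthLimit: 600
```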

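2.13.2. Creating scrape sample alerts

You can create alerts that notify you when:

- The target cannot be scraped or is not available for the specified for duration.
- A scrape sample threshold is reached or exceeded for the specified for duration.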

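Prerequisites

- You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
- You have enabled monitoring for user-defined projects.
- You have created the user-workload-monitoring-config ConfigMap object.
- You have limited the number of samples that can be accepted per target scrape in user-defined projects by using enforcedSampleLimit.
- You have installed the OpenShift CLI (oc).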

Procedure

Create a YAML file with alerts that inform you when the targets are down and when the enforced sample limit is approaching. The file in this example is called monitoring-stack-alerts.yaml:

    apiVersion: monitoring.coreos.com/v1
    kind: PrometheusRule
    metadata:
      labels:
        prometheus: k8s
        role: alert-rules
      name: monitoring-stack-alerts # (1)
      namespace: ns1 # (2)
    spec:
      groups:
      - name: general.rules
        rules:
        - alert: TargetDown # (3)
          annotations:
            message: '{{ printf "%.4g" $value }}% of the {{ $labels.job }}/{{ $labels.service }} targets in {{ $labels.namespace }} namespace are down.' # (4)
          expr: 100 * (count(up == 0) BY (job, namespace, service) / count(up) BY (job, namespace, service)) > 10
          for: 10m # (5)
          labels:
            severity: warning # (6)
        - alert: ApproachingEnforcedSamplesLimit # (7)
          annotations:
            message: '{{ $labels.container }} container of the {{ $labels.pod }} pod in the {{ $labels.namespace }} namespace consumes {{ $value | humanizePercentage }} of the samples limit budget.' # (8)
          expr: scrape_samples_scraped/50000 > 0.8 # (9)
          for: 10m # (10)
          labels:
            severity: warning # (11)

(1) Defines the name of the alerting rule.

(2) Specifies the user-defined project where the alerting rule is deployed.

(3) The TargetDown alert fires if the target cannot be scraped or is not available for the for duration.

(4) The message that is output when the TargetDown alert fires.

(5) The conditions for the TargetDown alert must be true for this duration before the alert fires.

(6) Defines the severity for the TargetDown alert.

(7) The ApproachingEnforcedSamplesLimit alert fires when the defined scrape sample threshold is reached or exceeded for the specified for duration.

(8) The message that is output when the ApproachingEnforcedSamplesLimit alert fires.

(9) The threshold for the ApproachingEnforcedSamplesLimit alert. In this example, the alert fires when the number of samples per target scrape exceeds 80% of the enforced sample limit of 50000. The for duration must also have passed before the alert fires. The <number> in the expression scrape_samples_scraped/<number> > <threshold> must match the enforcedSampleLimit value defined in the user-workload-monitoring-config ConfigMap object.

(10) The conditions for the ApproachingEnforcedSamplesLimit alert must be true for this duration before the alert fires.

(11) Defines the severity for the ApproachingEnforcedSamplesLimit alert.

Apply the configuration to the user-defined project:

    $ oc apply -f monitoring-stack-alerts.yaml

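Chapter 3. Configuring external Alertmanager instances

The OpenShift Container Platform monitoring stack includes a local Alertmanager instance that routes alerts from Prometheus. You can add external Alertmanager instances by configuring the cluster-monitoring-config config map in the openshift-monitoring project or the user-workload-monitoring-config config map in the openshift-user-workload-monitoring project.

If you add the same external Alertmanager configuration for multiple clusters and disable the local instance for each cluster, you can manage alert routing for multiple clusters by using a single external Alertmanager instance.

Prerequisites

- You have installed the OpenShift CLI (oc).
- If you are configuring core OpenShift Container Platform monitoring components in the openshift-monitoring project:
  - You have access to the cluster as a user with the cluster-admin cluster role.
  - You have created the cluster-monitoring-config config map.
- If you are configuring components that monitor user-defined projects:
  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
  - You have created the user-workload-monitoring-config config map.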

Procedure

Edit the ConfigMap object.

To configure additional Alertmanagers for routing alerts from core OpenShift Container Platform projects:

Edit the cluster-monitoring-config config map in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Add an additionalAlertmanagerConfigs: section under data/config.yaml/prometheusK8s. Add the configuration details for additional Alertmanagers in this section:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          additionalAlertmanagerConfigs:
          - <alertmanager_specification>

For <alertmanager_specification>, substitute authentication and other configuration details for additional Alertmanager instances. Currently supported authentication methods are bearer token (bearerToken) and client TLS (tlsConfig). The following sample config map configures an additional Alertmanager using a bearer token with client TLS authentication:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          additionalAlertmanagerConfigs:
          - scheme: https
            pathPrefix: /
            timeout: "30s"
            apiVersion: v1
            bearerToken:
              name: alertmanager-bearer-token
              key: token
            tlsConfig:
              key:
                name: alertmanager-tls
                key: tls.key
              cert:
                name: alertmanager-tls
                key: tls.crt
              ca:
                name: alertmanager-tls
                key: tls.ca
            staticConfigs:
            - external-alertmanager1-remote.com
            - external-alertmanager1-remote2.com

To configure additional Alertmanager instances for routing alerts from user-defined projects:

Edit the user-workload-monitoring-config config map in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

Add a <component>/additionalAlertmanagerConfigs: section under data/config.yaml/. Add the configuration details for additional Alertmanagers in this section:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        <component>:
          additionalAlertmanagerConfigs:
          - <alertmanager_specification>

For <component>, substitute one of the two supported external Alertmanager components: prometheus or thanosRuler. For <alertmanager_specification>, substitute authentication and other configuration details for additional Alertmanager instances. Currently supported authentication methods are bearer token (bearerToken) and client TLS (tlsConfig). The following sample config map configures an additional Alertmanager for Thanos Ruler with a bearer token and client TLS authentication:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        thanosRuler:
          additionalAlertmanagerConfigs:
          - scheme: https
            pathPrefix: /
            timeout: "30s"
            apiVersion: v1
            bearerToken:
              name: alertmanager-bearer-token
              key: token
            tlsConfig:
              key:
                name: alertmanager-tls
                key: tls.key
              cert:
                name: alertmanager-tls
                key: tls.crt
              ca:
                name: alertmanager-tls
                key: tls.ca
            staticConfigs:
            - external-alertmanager1-remote.com
            - external-alertmanager1-remote2.com

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Save the file to apply the changes to the ConfigMap object. The new configuration is applied automatically.

3.1. Attaching additional labels to your time series and alerts

By using the external labels feature of Prometheus, you can attach custom labels to all time series and alerts leaving Prometheus.

Prerequisites

- If you are configuring core OpenShift Container Platform monitoring components:
  - You have access to the cluster as a user with the cluster-admin cluster role.
  - You have created the cluster-monitoring-config ConfigMap object.
- If you are configuring components that monitor user-defined projects:
  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
  - You have created the user-workload-monitoring-config ConfigMap object.
- You have installed the OpenShift CLI (oc).

Procedure

Edit the ConfigMap object.

To attach custom labels to all time series and alerts leaving the Prometheus instance that monitors core OpenShift Container Platform projects:

Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Define a map of labels that you want to add for every metric under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          externalLabels:
            <key>: <value> # (1)

(1) Substitute <key>: <value> with a map of key-value pairs, where <key> is a unique name for the new label and <value> is its value.

Warning: Do not use prometheus or prometheus_replica as key names, because they are reserved and will be overwritten.

For example, to add metadata about the region and environment to all time series and alerts, use:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          externalLabels:
            region: eu
            environment: prod

To attach custom labels to all time series and alerts leaving the Prometheus instance that monitors user-defined projects:

Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

Define a map of labels that you want to add for every metric under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          externalLabels:
            <key>: <value> # (1)

(1) Substitute <key>: <value> with a map of key-value pairs, where <key> is a unique name for the new label and <value> is its value.

Warning: Do not use prometheus or prometheus_replica as key names, because they are reserved and will be overwritten.

Note: In the openshift-user-workload-monitoring project, Prometheus handles metrics and Thanos Ruler handles alerting and recording rules. Setting externalLabels for prometheus in the user-workload-monitoring-config ConfigMap object configures external labels only for metrics, not for any rules.

For example, to add metadata about the region and environment to all time series and alerts related to user-defined projects, use:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          externalLabels:
            region: eu
            environment: prod

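Save the file to apply the changes. The new configuration is applied automatically.

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Warning: When changes are saved to a monitoring config map, the pods and other resources in the related project might be redeployed. The running monitoring processes in that project might also restart.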

3.2. Setting log levels for monitoring components

You can configure the log level for Alertmanager, Prometheus Operator, Prometheus, Thanos Querier, and Thanos Ruler.

The following log levels can be applied to the relevant component in the cluster-monitoring-config and user-workload-monitoring-config ConfigMap objects:

- debug: Log debug, informational, warning, and error messages.
- info: Log informational, warning, and error messages.
- warn: Log warning and error messages only.
- error: Log error messages only.

The default log level is info.

Prerequisites

- If you are setting a log level for Alertmanager, Prometheus Operator, Prometheus, or Thanos Querier in the openshift-monitoring project:
  - You have access to the cluster as a user with the cluster-admin cluster role.
  - You have created the cluster-monitoring-config ConfigMap object.
- If you are setting a log level for Prometheus Operator, Prometheus, or Thanos Ruler in the openshift-user-workload-monitoring project:
  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
  - You have created the user-workload-monitoring-config ConfigMap object.
- You have installed the OpenShift CLI (oc).

Procedure

Edit the ConfigMap object.

To set a log level for a component in the openshift-monitoring project:

Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Add logLevel: <log_level> for the component under data/config.yaml. Replace <component> with the monitoring stack component whose log level you are setting, and <log_level> with one of the log levels listed earlier:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        <component>:
          logLevel: <log_level>

To set a log level for a component in the openshift-user-workload-monitoring project:

Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

Add logLevel: <log_level> for the component under data/config.yaml, using the same placeholders as above:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        <component>:
          logLevel: <log_level>

Save the file to apply the changes. The pods for the component restart automatically when you apply the log level change.

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Warning: When changes are saved to a monitoring config map, the pods and other resources in the related project might be redeployed. The running monitoring processes in that project might also be restarted.

Confirm that the log level has been applied by reviewing the deployment or pod configuration in the related project. The following example checks the log level in the prometheus-operator deployment in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring get deploy prometheus-operator -o yaml | grep "log-level"

Example output

    - --log-level=debug

Check that the pods for the component are running. The following example lists the status of pods in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring get pods

Note: If an unrecognized logLevel value is included in the ConfigMap object, the pods for the component might not restart successfully.
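The procedure above uses placeholders for the component and the log level. As a minimal sketch of what filled-in config maps could look like, the following example sets debug logging for platform Prometheus and warn logging for user workload Prometheus. The component keys prometheusK8s and prometheus are taken from other examples in this document; the chosen levels are illustrative assumptions, not recommendations.

```yaml
# Platform monitoring: log debug, info, warning, and error messages
# for the Prometheus instance in openshift-monitoring.
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    prometheusK8s:
      logLevel: debug
---
# User workload monitoring: log only warning and error messages
# for the Prometheus instance in openshift-user-workload-monitoring.
apiVersion: v1
kind: ConfigMap
metadata:
  name: user-workload-monitoring-config
  namespace: openshift-user-workload-monitoring
data:
  config.yaml: |
    prometheus:
      logLevel: warn
```

If you edit an existing config map, add only the component block under data/config.yaml and keep the other settings that are already there.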

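3.3. Enabling the query log file for Prometheus

You can configure Prometheus to write all queries that have been run by the engine to a log file. You can do so for default platform monitoring and for user-defined workload monitoring.

Because log rotation is not supported, enable this feature only temporarily when you need to troubleshoot an issue. After you finish troubleshooting, disable query logging by reverting the changes that you made to the ConfigMap object to enable the feature.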

Prerequisites

- You have installed the OpenShift CLI (oc).
- If you are enabling the query log file feature for Prometheus in the openshift-monitoring project:
  - You have access to the cluster as a user with the cluster-admin cluster role.
  - You have created the cluster-monitoring-config ConfigMap object.
- If you are enabling the query log file feature for Prometheus in the openshift-user-workload-monitoring project:
  - You have access to the cluster as a user with the cluster-admin cluster role, or as a user with the user-workload-monitoring-config-edit role in the openshift-user-workload-monitoring project.
  - You have created the user-workload-monitoring-config ConfigMap object.

Procedure

To set the query log file for Prometheus in the openshift-monitoring project:

Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Add queryLogFile: <path> for prometheusK8s under data/config.yaml, where <path> is the full path to the file in which queries will be logged:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          queryLogFile: <path>

Save the file to apply the changes.

Warning: When you save changes to a monitoring config map, the pods and other resources in the related project might be redeployed. The running monitoring processes in that project might also be restarted.

Check that the pods for the component are running. The following example command lists the status of pods in the openshift-monitoring project:

    $ oc -n openshift-monitoring get pods

Read the query log:

    $ oc -n openshift-monitoring exec prometheus-k8s-0 -- cat <path>

Important: Revert the setting in the config map after you have examined the logged query information.

To set the query log file for Prometheus in the openshift-user-workload-monitoring project:

Edit the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring edit configmap user-workload-monitoring-config

Add queryLogFile: <path> for prometheus under data/config.yaml, where <path> is the full path to the file in which queries will be logged:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-workload-monitoring-config
      namespace: openshift-user-workload-monitoring
    data:
      config.yaml: |
        prometheus:
          queryLogFile: <path>

Save the file to apply the changes.

Note: Configurations applied to the user-workload-monitoring-config ConfigMap object are not activated unless a cluster administrator has enabled monitoring for user-defined projects.

Warning: When you save changes to a monitoring config map, the pods and other resources in the related project might be redeployed. The running monitoring processes in that project might also be restarted.

Check that the pods for the component are running. The following example lists the status of pods in the openshift-user-workload-monitoring project:

    $ oc -n openshift-user-workload-monitoring get pods

Read the query log:

    $ oc -n openshift-user-workload-monitoring exec prometheus-user-workload-0 -- cat <path>

Important: Revert the setting in the config map after you have examined the logged query information.
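To make the <path> placeholder concrete, here is a minimal sketch for platform monitoring. The path /tmp/promql-queries.log is a hypothetical value chosen only for illustration; this document does not state which locations are writable inside the Prometheus container, so confirm a suitable path for your release before using it.

```yaml
# Temporary troubleshooting configuration: write every query run by
# the platform Prometheus engine to a file.
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    prometheusK8s:
      queryLogFile: /tmp/promql-queries.log  # hypothetical path, an assumption
```

With this value, the read step would use cat /tmp/promql-queries.log in place of cat <path>. Because log rotation is not supported, remove the queryLogFile line again once troubleshooting is finished.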

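3.4. Enabling query logging for Thanos Querier

For default platform monitoring in the openshift-monitoring project, you can enable the Cluster Monitoring Operator to log all queries run by Thanos Querier.

Because log rotation is not supported, enable this feature only temporarily when you need to troubleshoot an issue. After you finish troubleshooting, disable query logging by reverting the changes that you made to the ConfigMap object to enable the feature.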

Prerequisites

- You have installed the OpenShift CLI (oc).
- You have access to the cluster as a user with the cluster-admin cluster role.
- You have created the cluster-monitoring-config ConfigMap object.

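Procedure

You can enable query logging for Thanos Querier in the openshift-monitoring project.

Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Add a thanosQuerier section under data/config.yaml and add values as shown in the following example. Set enableRequestLogging to true to enable query logging, and set logLevel to one of the log levels described in section 3.2:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        thanosQuerier:
          enableRequestLogging: <value>
          logLevel: <value>

Save the file to apply the changes.

Warning: When you save changes to a monitoring config map, the pods and other resources in the related project might be redeployed. The running monitoring processes in that project might also be restarted.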
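The thanosQuerier example in the procedure leaves both values as placeholders. The following sketch shows one possible filled-in form, assuming that you want request logging on and the most verbose level from section 3.2 (debug). Whether a less verbose level still surfaces the query entries is not stated in this document, so treat the logLevel choice as an assumption.

```yaml
# Temporary troubleshooting configuration for Thanos Querier.
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    thanosQuerier:
      enableRequestLogging: true  # set back to false when finished
      logLevel: debug             # assumed level for this sketch
```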

Verification

Verify that the Thanos Querier pods are running. The following example command lists the status of pods in the openshift-monitoring project:

    $ oc -n openshift-monitoring get pods

Run a test query, using the following sample commands as a model:

    $ token=`oc create token prometheus-k8s -n openshift-monitoring`
    $ oc -n openshift-monitoring exec -c prometheus prometheus-k8s-0 -- curl -k -H "Authorization: Bearer $token" 'https://thanos-querier.openshift-monitoring.svc:9091/api/v1/query?query=cluster_version'

Run the following command to read the query log:

    $ oc -n openshift-monitoring logs <thanos_querier_pod_name> -c thanos-query

Note: Because the thanos-querier pods are highly available (HA) pods, you might be able to see logs in only one pod.

After you examine the logged query information, disable query logging by changing the enableRequestLogging value to false in the config map.

Chapter 4. Setting audit log levels for the Prometheus Adapter

In default platform monitoring, you can configure the audit log level for the Prometheus Adapter.

Prerequisites

- You have installed the OpenShift CLI (oc).
- You have access to the cluster as a user with the cluster-admin cluster role.
- You have created the cluster-monitoring-config ConfigMap object.

Procedure

You can set an audit log level for the Prometheus Adapter in the default openshift-monitoring project.

Edit the cluster-monitoring-config ConfigMap object in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Add profile: in the k8sPrometheusAdapter/audit section under data/config.yaml, where <audit_log_level> is the audit log level to apply to the Prometheus Adapter:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        k8sPrometheusAdapter:
          audit:
            profile: <audit_log_level>

Set the audit log level by using one of the following values for the profile: parameter:

- None: Do not log events.
- Metadata: Log only the metadata for the request, such as the user and timestamp. The request text and the response text are not logged. Metadata is the default audit log level.
- Request: Log only the metadata and the request text, but not the response text. This option does not apply to non-resource requests.
- RequestResponse: Log event metadata, request text, and response text. This option does not apply to non-resource requests.

Save the file to apply the changes. The pods for the Prometheus Adapter restart automatically when you apply the change.

Warning: When changes are saved to a monitoring config map, the pods and other resources in the related project might be redeployed. The running monitoring processes in that project might also be restarted.
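As a filled-in illustration of the profile: parameter, the following sketch selects the Request level, which is the same level that the verification steps for this chapter use. Any of the four values listed above could be substituted.

```yaml
# Log request metadata and request text (but not response text)
# for the Prometheus Adapter.
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    k8sPrometheusAdapter:
      audit:
        profile: Request
```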

Verification

In the config map, under k8sPrometheusAdapter/audit/profile, set the log level to Request and save the file.

Confirm that the pods for the Prometheus Adapter are running. The following example lists the status of pods in the openshift-monitoring project:

    $ oc -n openshift-monitoring get pods

Confirm that the audit log level and the audit log file path are correctly configured:

    $ oc -n openshift-monitoring get deploy prometheus-adapter -o yaml

Example output

    ...
      - --audit-policy-file=/etc/audit/request-profile.yaml
      - --audit-log-path=/var/log/adapter/audit.log

Confirm that the correct log level has been applied in the prometheus-adapter deployment in the openshift-monitoring project:

    $ oc -n openshift-monitoring exec deploy/prometheus-adapter -c prometheus-adapter -- cat /etc/audit/request-profile.yaml

Example output

    "apiVersion": "audit.k8s.io/v1"
    "kind": "Policy"
    "metadata":
      "name": "Request"
    "omitStages":
    - "RequestReceived"
    "rules":
    - "level": "Request"

Note: If you enter an unrecognized profile value for the Prometheus Adapter in the ConfigMap object, no changes are made to the Prometheus Adapter and an error is logged by the Cluster Monitoring Operator.

Review the audit log for the Prometheus Adapter. The pod name is passed as the exec target, and -c selects the prometheus-adapter container:

    $ oc -n openshift-monitoring exec <prometheus_adapter_pod_name> -c prometheus-adapter -- cat /var/log/adapter/audit.log

4.1. Disabling the local Alertmanager

A local Alertmanager that routes alerts from Prometheus instances is enabled by default in the openshift-monitoring project of the OpenShift Container Platform monitoring stack.

If you do not need the local Alertmanager, you can disable it by configuring the cluster-monitoring-config config map in the openshift-monitoring project.

Prerequisites

- You have access to the cluster as a user with the cluster-admin cluster role.
- You have created the cluster-monitoring-config config map.
- You have installed the OpenShift CLI (oc).

Procedure

Edit the cluster-monitoring-config config map in the openshift-monitoring project:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Add enabled: false for the alertmanagerMain component under data/config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        alertmanagerMain:
          enabled: false

Save the file to apply the changes. The Alertmanager instance is disabled automatically when you apply the change.

4.2. Next steps

- Enabling monitoring for user-defined projects

- Learn about remote health reporting and, if necessary, opt out of it.

Chapter 5. Enabling monitoring for user-defined projects

In OpenShift Container Platform 4.11, you can enable monitoring for user-defined projects in addition to the default platform monitoring. You can monitor your own projects in OpenShift Container Platform without the need for an additional monitoring solution. Using this feature centralizes monitoring for core platform components and user-defined projects.

Versions of Prometheus Operator installed by using Operator Lifecycle Manager (OLM) are not compatible with user-defined monitoring. Therefore, custom Prometheus instances installed as a Prometheus custom resource (CR) managed by the OLM Prometheus Operator are not supported in OpenShift Container Platform.

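5.1. Enabling monitoring for user-defined projects

Cluster administrators can enable monitoring for user-defined projects by setting the enableUserWorkload: true field in the cluster monitoring ConfigMap object.

In OpenShift Container Platform 4.11, you must remove any custom Prometheus instances before you enable monitoring for user-defined projects.

You must have access to the cluster as a user with the cluster-admin cluster role to enable monitoring for user-defined projects in OpenShift Container Platform. Cluster administrators can then optionally grant users permission to configure the components that monitor user-defined projects.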

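Prerequisites

- You have access to the cluster as a user with the cluster-admin cluster role.
- You have installed the OpenShift CLI (oc).
- You have created the cluster-monitoring-config ConfigMap object.
- You have optionally created the user-workload-monitoring-config ConfigMap object in the openshift-user-workload-monitoring project. You can add configuration options to this ConfigMap object for the components that monitor user-defined projects.

Note: Every time you save configuration changes to the user-workload-monitoring-config ConfigMap object, the pods in the openshift-user-workload-monitoring project are redeployed. It can take a while for these components to redeploy. You can create and configure the ConfigMap object before you first enable monitoring for user-defined projects, so that the pods do not have to be redeployed as often.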
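For the optional prerequisite above, a minimal sketch of a user-workload-monitoring-config ConfigMap object with no settings yet could look like the following. The object name and namespace come from this document; the empty config.yaml body is an assumption about a sensible starting point, to be filled with the component options described in the earlier sections.

```yaml
# Empty starting point: add component settings such as prometheus,
# prometheusOperator, or thanosRuler options under config.yaml later.
apiVersion: v1
kind: ConfigMap
metadata:
  name: user-workload-monitoring-config
  namespace: openshift-user-workload-monitoring
data:
  config.yaml: |
```

Creating and filling in this object before you enable user workload monitoring avoids an extra redeployment of the pods later.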

Procedure

Edit the cluster-monitoring-config ConfigMap object:

    $ oc -n openshift-monitoring edit configmap cluster-monitoring-config

Add enableUserWorkload: true under data/config.yaml. When set to true, the enableUserWorkload parameter enables monitoring for user-defined projects in the cluster:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        enableUserWorkload: true

Save the file to apply the changes. Monitoring for user-defined projects is then enabled automatically.