Operators

Working with Operators in OpenShift Container Platform

Abstract

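Chapter 1. Operator overview

Operators are among the most important components of OpenShift Container Platform. They are the preferred method of packaging, deploying, and managing services on the control plane. They can also provide advantages to applications that users run.

Operators integrate with Kubernetes APIs and CLI tools such as the kubectl and oc commands. They provide the means of monitoring applications, performing health checks, managing over-the-air (OTA) updates, and ensuring that applications remain in your specified state.

While both follow similar Operator concepts and goals, Operators in OpenShift Container Platform are managed by two different systems, depending on their purpose:

- Cluster Operators, which are managed by the Cluster Version Operator (CVO), are installed by default to perform cluster functions.

- Optional add-on Operators, which are managed by Operator Lifecycle Manager (OLM), can be made accessible for users to run in their applications.

With Operators, you can create applications that monitor the services running in the cluster. Operators are designed specifically for your applications. They implement and automate common Day 1 operations, such as installation and configuration, as well as Day 2 operations, such as autoscaling and creating backups. All of these activities are carried out by a piece of software running inside your cluster.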

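1.1. For developers

As a developer, you can perform the following Operator tasks.

1.2. For administrators

As a cluster administrator, you can perform the following Operator tasks.

For details about the cluster Operators that Red Hat provides, see Cluster Operators reference.

1.3. Next steps

To learn more about Operators, see What are Operators?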

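Chapter 2. Understanding Operators

2.1. What are Operators?

Conceptually, Operators take human operational knowledge and encode it into software that is more easily shared with consumers.

Operators are pieces of software that ease the operational complexity of running another piece of software. They act like an extension of the software vendor's engineering team, monitoring a Kubernetes environment (such as OpenShift Container Platform) and using its current state to make decisions in real time. Advanced Operators are designed to handle upgrades seamlessly, react to failures automatically, and not take shortcuts, such as skipping a software backup process to save time.

More technically, Operators are a method of packaging, deploying, and managing a Kubernetes application.

A Kubernetes application is an application that is both deployed on Kubernetes and managed using the Kubernetes APIs and the kubectl or oc tooling. To make the most of Kubernetes, you need a set of cohesive APIs that you can extend in order to service and manage the applications that run on Kubernetes. Think of Operators as the runtime that manages this type of application on Kubernetes.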

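2.1.1. Why use Operators?

Operators provide:

- Repeatability of installation and upgrade.

- Constant health checks of every system component.

- Over-the-air (OTA) updates for OpenShift components and ISV content.

- A place to encapsulate knowledge from field engineers and spread it to all users, not just one or two.

- Why deploy on Kubernetes?

  Kubernetes (and by extension, OpenShift Container Platform) contains all of the primitives needed to build complex distributed systems, such as secret handling, load balancing, service discovery, and autoscaling, that work across on-premises and cloud providers.

- Why manage your application with Kubernetes APIs and kubectl tooling?

  These APIs are feature rich, have clients for all platforms, and plug into the cluster's access control and auditing. An Operator uses the Kubernetes extension mechanism, custom resource definitions (CRDs), so your custom object, for example MongoDB, looks and acts just like the built-in, native Kubernetes objects.

- How do Operators compare with service brokers?

  A service broker is a step towards programmatic discovery and deployment of an application. However, because it is not a long-running process, it cannot execute Day 2 operations such as upgrade, failover, or scaling. Customizations and tunable parameters are provided at install time, whereas an Operator constantly watches the current state of your cluster. Off-cluster services are a good match for a service broker, although Operators exist for these as well.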

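2.1.2. Operator Framework

The Operator Framework is a family of tools and capabilities to deliver on the customer experience described above. It is not just about writing code; testing, delivering, and updating Operators is just as important. The Operator Framework components consist of open source tools to tackle these problems: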

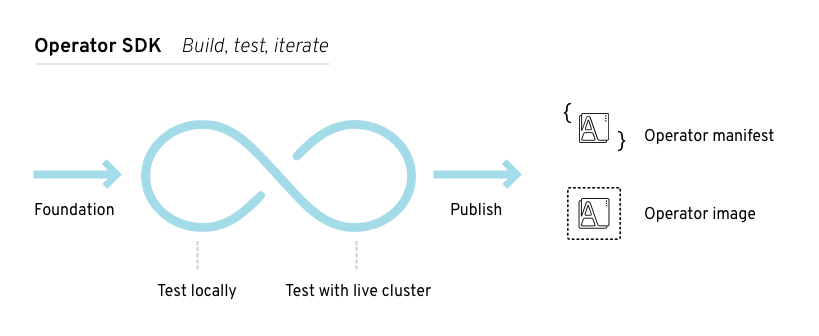

- Operator SDK

  The Operator SDK assists Operator authors in bootstrapping, building, testing, and packaging their own Operator based on their expertise, without requiring knowledge of Kubernetes API complexities.

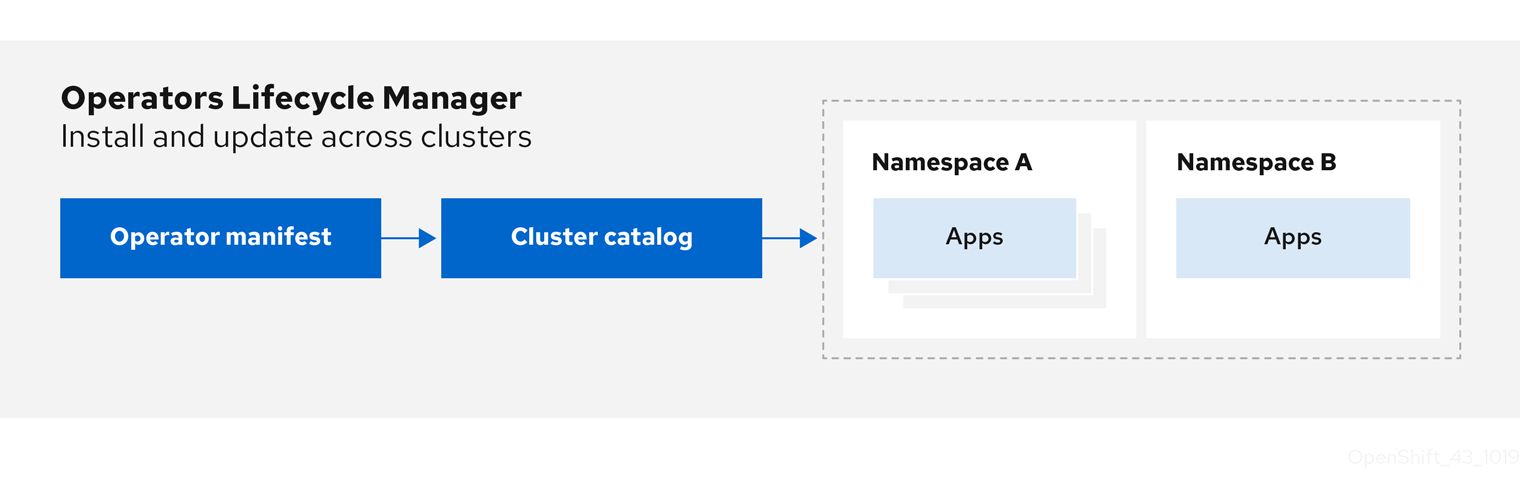

- Operator Lifecycle Manager

  Operator Lifecycle Manager (OLM) controls the installation, upgrade, and role-based access control (RBAC) of Operators in a cluster. It is deployed by default in OpenShift Container Platform 4.11.

- Operator Registry

  The Operator Registry stores cluster service versions (CSVs) and custom resource definitions (CRDs) for creation in a cluster, and stores Operator metadata about packages and channels. It runs in a Kubernetes or OpenShift cluster to provide this Operator catalog data to OLM.

- OperatorHub

  OperatorHub is a web console for cluster administrators to discover and select Operators to install on their cluster. It is deployed by default in OpenShift Container Platform.

These tools are designed to be composable, so you can use any that are useful to you.

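2.1.3. Operator maturity model

The level of sophistication of the management logic encapsulated within an Operator can vary. This logic is also, in general, highly dependent on the type of service represented by the Operator.

However, the maturity of an Operator's encapsulated operations can be generalized for a certain set of capabilities that most Operators include. To this end, the following Operator maturity model defines five phases of maturity for generic Day 2 operations of an Operator.

Figure 2.1. Operator maturity model

The above model also shows how these capabilities can best be developed through the Helm, Go, and Ansible capabilities of the Operator SDK.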

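2.2. Operator Framework packaging format

This guide outlines the packaging format for Operators supported by Operator Lifecycle Manager (OLM) in OpenShift Container Platform.

Support for the legacy package manifest format for Operators is removed in OpenShift Container Platform 4.8 and later. Existing Operator projects in the package manifest format can be migrated to the bundle format by using the Operator SDK pkgman-to-bundle command. For details, see Migrating package manifest projects to bundle format.

2.2.1. Bundle format

The bundle format for Operators is a packaging format introduced by the Operator Framework. To improve scalability and to better enable upstream users to host their own catalogs, the bundle format specification simplifies the distribution of Operator metadata.

An Operator bundle represents a single version of an Operator. On-disk bundle manifests are containerized and shipped as a bundle image, which is a non-runnable container image that stores the Kubernetes manifests and Operator metadata. Storage and distribution of the bundle image is then managed using existing container tools, such as podman and docker, and container registries, such as Quay.

Operator metadata can include:

- Information that identifies the Operator, for example its name and version.

- Additional information that drives the UI, for example its icon and some example custom resources (CRs).

- Required and provided APIs.

- Related images.

When loading manifests into the Operator Registry database, the following requirements are validated:

- The bundle must have at least one channel defined in the annotations.

- Every bundle has exactly one cluster service version (CSV).

- If a CSV owns a custom resource definition (CRD), that CRD must exist in the bundle.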

2.2.1.1. Manifests

Bundle manifests refer to a set of Kubernetes manifests that define the deployment and RBAC model of the Operator.

A bundle includes one CSV per directory and, typically, the CRDs that define the owned APIs of the CSV in its manifests/ directory.

Example bundle format layout

    etcd
    ├── manifests
    │   ├── etcdcluster.crd.yaml
    │   ├── etcdoperator.clusterserviceversion.yaml
    │   ├── secret.yaml
    │   └── configmap.yaml
    └── metadata
        ├── annotations.yaml
        └── dependencies.yaml

Additionally supported objects

The following object types can also be optionally included in the /manifests directory of a bundle:

Supported optional object types

- ClusterRole
- ClusterRoleBinding
- ConfigMap
- ConsoleCLIDownload
- ConsoleLink
- ConsoleQuickStart
- ConsoleYamlSample
- PodDisruptionBudget
- PriorityClass
- PrometheusRule
- Role
- RoleBinding
- Secret
- Service
- ServiceAccount
- ServiceMonitor
- VerticalPodAutoscaler

When these optional objects are included in a bundle, Operator Lifecycle Manager (OLM) can create them from the bundle and manage their lifecycle along with the CSV.

Lifecycle for optional objects

- When the CSV is deleted, OLM deletes the optional object.

- When the CSV is upgraded:

  - If the name of the optional object is the same, OLM updates it in place.

  - If the name of the optional object has changed between versions, OLM deletes and recreates it.

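2.2.1.2. Annotations

A bundle also includes an annotations.yaml file in its metadata/ directory. This file defines higher-level aggregate data that helps describe the format and package information about how the bundle should be added into an index of bundles.

Example annotations.yaml

    annotations:
      operators.operatorframework.io.bundle.mediatype.v1: "registry+v1"
      operators.operatorframework.io.bundle.manifests.v1: "manifests/"
      operators.operatorframework.io.bundle.metadata.v1: "metadata/"
      operators.operatorframework.io.bundle.package.v1: "test-operator"
      operators.operatorframework.io.bundle.channels.v1: "beta,stable"
      operators.operatorframework.io.bundle.channel.default.v1: "stable"

The annotations in this example have the following meanings, in order:

- bundle.mediatype.v1: The media type or format of the Operator bundle. The registry+v1 format means that the bundle contains a CSV and its associated Kubernetes objects.

- bundle.manifests.v1: The path in the image to the directory that contains the Operator manifests. This label is reserved for future use and currently defaults to manifests/. The value manifests.v1 implies that the bundle contains Operator manifests.

- bundle.metadata.v1: The path in the image to the directory that contains metadata files about the bundle. This label is reserved for future use and currently defaults to metadata/. The value metadata.v1 implies that the bundle has Operator metadata.

- bundle.package.v1: The package name of the bundle.

- bundle.channels.v1: The list of channels the bundle subscribes to when it is added to an Operator Registry.

- bundle.channel.default.v1: The default channel an Operator should be subscribed to when installed from a registry.

In case of a mismatch, the annotations.yaml file is authoritative, because the on-cluster Operator Registry that relies on these annotations only has access to this file.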

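2.2.1.3. Dependencies

The dependencies of an Operator are listed in a dependencies.yaml file in the metadata/ folder of a bundle. This file is optional and is currently used only to specify explicit Operator-version dependencies.

The dependency list contains a type field for each item to specify what kind of dependency it is. The following types of Operator dependencies are supported:

- olm.package

  This type indicates a dependency on a specific Operator version. The dependency information must include the package name and the version of the package in semver format. For example, you can specify an exact version such as 0.5.2 or a range of versions such as >0.5.1.

- olm.gvk

  With this type, the author can specify a dependency with group/version/kind (GVK) information, similar to existing CRD and API-based usage in a CSV. This is a path to enable Operator authors to consolidate all dependencies, API or explicit versions, in the same place.

- olm.constraint

  This type declares generic constraints on arbitrary Operator properties.

In the following example, dependencies are specified for a Prometheus Operator and etcd CRDs:

Example dependencies.yaml file

    dependencies:
      - type: olm.package
        value:
          packageName: prometheus
          version: ">0.27.0"
      - type: olm.gvk
        value:
          group: etcd.database.coreos.com
          kind: EtcdCluster
          version: v1beta2

2.2.1.4. About the opm CLI

The opm CLI tool is provided by the Operator Framework for use with the Operator bundle format. This tool allows you to create and maintain catalogs of Operators from a list of Operator bundles, similar to software repositories. The result is a container image that can be stored in a container registry and then installed on a cluster.

A catalog contains a database of pointers to Operator manifest content that can be queried through an included API that is served when the container image is run. On OpenShift Container Platform, Operator Lifecycle Manager (OLM) can reference the image in a catalog source, defined by a CatalogSource object, which polls the image at regular intervals to enable frequent updates to the Operators installed on the cluster.

- See CLI tools for steps on installing the opm CLI.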

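2.2.2. File-based catalogs

File-based catalogs are the latest iteration of the catalog format in Operator Lifecycle Manager (OLM). The format is plain text-based (JSON or YAML), a declarative config evolution of the earlier SQLite database format, and fully backwards compatible. The goal of this format is to enable Operator catalog editing, composability, and extensibility.

- Editing

  With file-based catalogs, users interacting with the contents of a catalog can make direct changes to the format and verify that their changes are valid. Because the format is plain text JSON or YAML, catalog maintainers can easily manipulate catalog metadata by hand or with widely known and supported JSON or YAML tooling, such as the jq CLI. This editability enables the following features and user-defined extensions:

  - Promoting an existing bundle to a new channel

  - Changing the default channel of a package

  - Custom algorithms for adding, updating, and removing upgrade edges

- Composability

  File-based catalogs are stored in an arbitrary directory hierarchy, which enables catalog composition. For example, consider two separate file-based catalog directories: catalogA and catalogB. A catalog maintainer can create a new combined catalog by making a new directory catalogC and copying catalogA and catalogB into it.

  This composability enables decentralized catalogs. The format permits Operator authors to maintain Operator-specific catalogs, and it permits maintainers to trivially build a catalog composed of individual Operator catalogs. File-based catalogs can be composed by combining multiple other catalogs, by extracting subsets of one catalog, or by a combination of both.

  Note: Duplicate packages and duplicate bundles within a package are not permitted. The opm validate command returns an error if any duplicates are found.

  Because Operator authors are most familiar with their Operator, its dependencies, and its upgrade compatibility, they can maintain their own Operator-specific catalog and have direct control over its contents. With file-based catalogs, Operator authors own the task of building and maintaining their packages in a catalog. Composite catalog maintainers, however, own only the task of curating the packages in their catalog and publishing the catalog to users.

- Extensibility

  The file-based catalog specification is a low-level representation of a catalog. While it can be maintained directly in its low-level form, catalog maintainers can build arbitrary extensions on top of it, using their own custom tooling to make any number of mutations.

  For example, a tool could translate a high-level API, such as (mode=semver), down to the low-level, file-based catalog format for upgrade edges. Or a catalog maintainer might need to customize all of the bundle metadata by adding a new property to bundles that meet certain criteria.

  While this extensibility allows additional official tooling to be developed on top of the low-level APIs for future OpenShift Container Platform releases, the major benefit is that catalog maintainers have this capability as well.

As of OpenShift Container Platform 4.11, the default Red Hat-provided Operator catalog is released in the file-based catalog format. The default Red Hat-provided Operator catalogs for OpenShift Container Platform 4.6 through 4.10 were released in the deprecated SQLite database format.

The opm subcommands, flags, and functionality related to the SQLite database format are also deprecated and will be removed in a future release. These features are still supported and must be used for catalogs that use the deprecated SQLite database format.

Many of the opm subcommands and flags for working with the SQLite database format, such as opm index prune, do not work with the file-based catalog format. For more information about working with file-based catalogs, see Managing custom catalogs and Mirroring images for a disconnected installation using the oc-mirror plugin.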

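2.2.2.1. Directory structure

File-based catalogs can be stored in and loaded from directory-based file systems. The opm CLI loads the catalog by walking the root directory and recursing into subdirectories. The CLI attempts to load every file it finds and fails if any errors occur.

Non-catalog files can be ignored by using .indexignore files, which have the same rules for patterns and precedence as .gitignore files.

Example .indexignore file

    # Ignore everything except non-object .json and .yaml files
    **/*
    !*.json
    !*.yaml
    **/objects/*.json
    **/objects/*.yaml

Catalog maintainers have the flexibility to choose their desired layout, but it is recommended to store each package's file-based catalog blobs in separate subdirectories. Each individual file can be either JSON or YAML; it is not necessary for every file in a catalog to use the same format.

Basic recommended structure

    catalog
    ├── packageA
    │   └── index.yaml
    ├── packageB
    │   ├── .indexignore
    │   ├── index.yaml
    │   └── objects
    │       └── packageB.v0.1.0.clusterserviceversion.yaml
    └── packageC
        └── index.json

This recommended structure has the property that each subdirectory in the directory hierarchy is a self-contained catalog, which makes catalog composition, discovery, and navigation trivial file system operations. The catalog can also be included in a parent catalog by copying it into the parent catalog's root directory.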

2.2.2.2. Schemas

File-based catalogs use a format, based on the CUE language specification, that can be extended with arbitrary schemas. The following _Meta CUE schema defines the format that all file-based catalog blobs must adhere to:

_Meta schema

    _Meta: {
      // schema is required and must be a non-empty string
      schema: string & !=""

      // package is optional, but if it's defined, it must be a non-empty string
      package?: string & !=""

      // properties is optional, but if it's defined, it must be a list of 0 or more properties
      properties?: [... #Property]
    }

    #Property: {
      // type is required
      type: string & !=""

      // value is required, and it must not be null
      value: !=null
    }

No CUE schema listed in this specification should be considered exhaustive. The opm validate command has additional validations that are difficult or impossible to express concisely in CUE.

An Operator Lifecycle Manager (OLM) catalog currently uses three schemas (olm.package, olm.channel, and olm.bundle), which correspond to OLM's existing package and bundle concepts.

Each Operator package in a catalog requires exactly one olm.package blob, at least one olm.channel blob, and one or more olm.bundle blobs.

All olm.* schemas are reserved for OLM-defined schemas. Custom schemas must use a unique prefix, such as a domain that you own.
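To make the relationship between the three schemas concrete, the following is a minimal sketch of a catalog file for a single package, for example catalog/example-operator/index.yaml. The package name, bundle names, and image references are hypothetical placeholders and do not come from any real catalog.

```yaml
# Hypothetical example: all names and image references are placeholders.
---
# Exactly one olm.package blob per package.
schema: olm.package
name: example-operator
defaultChannel: stable
---
# At least one olm.channel blob per package.
schema: olm.channel
package: example-operator
name: stable
entries:
  - name: example-operator.v0.1.0
  - name: example-operator.v0.2.0
    replaces: example-operator.v0.1.0
---
# One or more olm.bundle blobs per package.
schema: olm.bundle
package: example-operator
name: example-operator.v0.1.0
image: quay.io/example-org/example-operator-bundle:v0.1.0
properties:
  - type: olm.package
    value:
      packageName: example-operator
      version: 0.1.0
---
schema: olm.bundle
package: example-operator
name: example-operator.v0.2.0
image: quay.io/example-org/example-operator-bundle:v0.2.0
properties:
  - type: olm.package
    value:
      packageName: example-operator
      version: 0.2.0
```

Running the opm validate command against the catalog directory is one way to check that a file like this satisfies the schema requirements.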

2.2.2.2.1. olm.package schema

The olm.package schema defines package-level metadata for an Operator. This includes its name, description, default channel, and icon.

Example 2.1. olm.package schema

    #Package: {
      schema: "olm.package"

      // Package name
      name: string & !=""

      // A description of the package
      description?: string

      // The package's default channel
      defaultChannel: string & !=""

      // An optional icon
      icon?: {
        base64data: string
        mediatype:  string
      }
    }

2.2.2.2.2. olm.channel schema

The olm.channel schema defines a channel within a package, the bundle entries that are members of the channel, and the upgrade edges for those bundles.

A bundle can be included as an entry in multiple olm.channel blobs, but it can have only one entry per channel.

It is valid for an entry's replaces value to reference another bundle name that cannot be found in this catalog or in another catalog. However, all other channel invariants must hold true, such as a channel not having multiple heads.

Example 2.2. olm.channel schema

    #Channel: {
      schema: "olm.channel"
      package: string & !=""
      name: string & !=""
      entries: [...#ChannelEntry]
    }

    #ChannelEntry: {
      // name is required. It is the name of an `olm.bundle` that
      // is present in the channel.
      name: string & !=""

      // replaces is optional. It is the name of bundle that is replaced
      // by this entry. It does not have to be present in the entry list.
      replaces?: string & !=""

      // skips is optional. It is a list of bundle names that are skipped by
      // this entry. The skipped bundles do not have to be present in the
      // entry list.
      skips?: [...string & !=""]

      // skipRange is optional. It is the semver range of bundle versions
      // that are skipped by this entry.
      skipRange?: string & !=""
    }

2.2.2.2.3. olm.bundle schema

Example 2.3. olm.bundle schema

    #Bundle: {
      schema: "olm.bundle"
      package: string & !=""
      name: string & !=""
      image: string & !=""
      properties: [...#Property]
      relatedImages?: [...#RelatedImage]
    }

    #Property: {
      // type is required
      type: string & !=""

      // value is required, and it must not be null
      value: !=null
    }

    #RelatedImage: {
      // image is the image reference
      image: string & !=""

      // name is an optional descriptive name for an image that
      // helps identify its purpose in the context of the bundle
      name?: string & !=""
    }

2.2.2.3. Properties

Properties are arbitrary pieces of metadata that can be attached to file-based catalog schemas. The type field is a string that effectively specifies the semantic and syntactic meaning of the value field. The value can be any arbitrary JSON or YAML.

OLM defines a handful of property types, again using the reserved olm.* prefix.
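As an illustration of how the OLM-defined property types described in this section can appear together, the following sketch shows a single olm.bundle blob that carries one property of each type. The package name, image reference, and provided GVK are hypothetical; the required package and required GVK reuse the Prometheus and etcd values from the dependencies.yaml example earlier in this document.

```yaml
# Hypothetical bundle blob: names, versions, and the provided GVK are placeholders.
schema: olm.bundle
package: example-operator
name: example-operator.v0.2.0
image: quay.io/example-org/example-operator-bundle:v0.2.0
properties:
  # Required on every bundle, exactly once; packageName matches the package field.
  - type: olm.package
    value:
      packageName: example-operator
      version: 0.2.0
  # An API that this bundle provides.
  - type: olm.gvk
    value:
      group: example.com
      version: v1alpha1
      kind: Example
  # Another package that this bundle requires, as a semver range.
  - type: olm.package.required
    value:
      packageName: prometheus
      versionRange: ">0.27.0"
  # An API that this bundle requires some installed Operator to provide.
  - type: olm.gvk.required
    value:
      group: etcd.database.coreos.com
      version: v1beta2
      kind: EtcdCluster
```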

2.2.2.3.1. olm.package property

The olm.package property defines the package name and version. This is a required property on bundles, and there must be exactly one of these properties. The packageName field must match the bundle's first-class package field, and the version field must be a valid semantic version.

Example 2.4. olm.package property

    #PropertyPackage: {
      type: "olm.package"
      value: {
        packageName: string & !=""
        version: string & !=""
      }
    }

2.2.2.3.2. olm.gvk property

The olm.gvk property defines the group/version/kind (GVK) of a Kubernetes API that is provided by this bundle. OLM uses this property to resolve a bundle with this property as a dependency for other bundles that list the same GVK as a required API. The GVK must adhere to Kubernetes GVK validations.

Example 2.5. olm.gvk property

    #PropertyGVK: {
      type: "olm.gvk"
      value: {
        group: string & !=""
        version: string & !=""
        kind: string & !=""
      }
    }

2.2.2.3.3. olm.package.required

The olm.package.required property defines the package name and version range of another package that this bundle requires. For every required package property a bundle lists, OLM ensures that an Operator for the listed package is installed on the cluster in the required version range. The versionRange field must be a valid semantic version (semver) range.

Example 2.6. olm.package.required property

    #PropertyPackageRequired: {
      type: "olm.package.required"
      value: {
        packageName: string & !=""
        versionRange: string & !=""
      }
    }

2.2.2.3.4. olm.gvk.required

The olm.gvk.required property defines the group/version/kind (GVK) of a Kubernetes API that this bundle requires. For every required GVK property a bundle lists, OLM ensures that an Operator that provides it is installed on the cluster. The GVK must adhere to Kubernetes GVK validations.

Example 2.7. olm.gvk.required property

    #PropertyGVKRequired: {
      type: "olm.gvk.required"
      value: {
        group: string & !=""
        version: string & !=""
        kind: string & !=""
      }
    }

2.2.2.4. Example catalog

With file-based catalogs, catalog maintainers can focus on Operator curation and compatibility. Because Operator authors have already produced Operator-specific catalogs for their Operators, catalog maintainers can build their catalog by rendering each Operator catalog into a subdirectory of the catalog's root directory.

There are many possible ways to build a file-based catalog; the following steps outline a simple approach.

Maintain a single configuration file for the catalog, containing an image reference for each Operator in the catalog:

Example catalog configuration file

    name: community-operators
    repo: quay.io/community-operators/catalog
    tag: latest
    references:
    - name: etcd-operator
      image: quay.io/etcd-operator/index@sha256:5891b5b522d5df086d0ff0b110fbd9d21bb4fc7163af34d08286a2e846f6be03
    - name: prometheus-operator
      image: quay.io/prometheus-operator/index@sha256:e258d248fda94c63753607f7c4494ee0fcbe92f1a76bfdac795c9d84101eb317

Run a script that parses the configuration file and creates a new catalog from its references:

Example script

    # Create the catalog root directory and one subdirectory per referenced Operator
    name=$(yq eval '.name' catalog.yaml)
    mkdir "$name"
    yq eval '.name + "/" + .references[].name' catalog.yaml | xargs mkdir
    # Render each referenced Operator catalog image into its subdirectory
    for l in $(yq e '.name as $catalog | .references[] | .image + "|" + $catalog + "/" + .name + "/index.yaml"' catalog.yaml); do
      image=$(echo $l | cut -d'|' -f1)
      file=$(echo $l | cut -d'|' -f2)
      opm render "$image" > "$file"
    done
    # Generate a Dockerfile for the catalog, then build and push the catalog image
    opm alpha generate dockerfile "$name"
    indexImage=$(yq eval '.repo + ":" + .tag' catalog.yaml)
    docker build -t "$indexImage" -f "$name.Dockerfile" .
    docker push "$indexImage"

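2.2.2.5. Guidelines

Consider the following guidelines when maintaining file-based catalogs.

2.2.2.5.1. Immutable bundles

The general advice with Operator Lifecycle Manager (OLM) is that bundle images and their metadata should be treated as immutable.

If a broken bundle has been pushed to a catalog, you must assume that at least one of your users has upgraded to that bundle. Based on that assumption, you must release another bundle with an upgrade edge from the broken bundle, so that users who have the broken bundle installed receive an upgrade. OLM does not reinstall an installed bundle if the contents of that bundle are updated in the catalog.

However, there are some cases where a change in the catalog metadata is preferred:

- Channel promotion: If you already released a bundle and later decide that you would like to add it to another channel, you can add an entry for your bundle in another olm.channel blob.

- New upgrade edges: If you release a new 1.2.z bundle version, for example 1.2.4, but 1.3.0 is already released, you can update the catalog metadata for 1.3.0 to skip 1.2.4.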
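The two metadata changes above can be sketched with olm.channel blobs as follows. This is only an illustration: the package name, the fast channel, and the bundle names are hypothetical, and the bundle blobs themselves stay unchanged.

```yaml
# Hypothetical channel blobs: package, channel, and bundle names are placeholders.
---
schema: olm.channel
package: example-operator
name: stable
entries:
  - name: example-operator.v1.2.3
  - name: example-operator.v1.2.4
    replaces: example-operator.v1.2.3
  - name: example-operator.v1.3.0
    replaces: example-operator.v1.2.3
    # New upgrade edge: added after 1.2.4 was released, so that installations
    # of 1.2.4 can move to 1.3.0 and the channel keeps a single head.
    skips:
      - example-operator.v1.2.4
---
# Channel promotion: the already released 1.3.0 bundle gets an entry
# in a second channel.
schema: olm.channel
package: example-operator
name: fast
entries:
  - name: example-operator.v1.3.0
```

Only the catalog metadata changes in this sketch; the bundle images for 1.2.4 and 1.3.0 are left untouched, which is consistent with treating bundles as immutable.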

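2.2.2.5.2. Source control

Catalog metadata should be stored in source control and treated as the source of truth. Updates to catalog images should include the following steps:

- Update the source-controlled catalog directory with a new commit.

- Build and push the catalog image. Use a consistent tagging taxonomy, such as :latest or :<target_cluster_version>, so that users can receive updates to a catalog as they become available.

2.2.2.6. CLI usage

For instructions about creating file-based catalogs by using the opm CLI, see Managing custom catalogs.

For reference documentation about the opm CLI commands related to managing file-based catalogs, see CLI tools.

2.2.2.7. Automation

Operator authors and catalog maintainers are encouraged to automate their catalog maintenance with CI/CD workflows. Catalog maintainers can further improve on this by building GitOps automation to accomplish the following tasks:

- Check that pull request (PR) authors are permitted to make the requested changes, for example by updating their package's image reference.

- Check that the catalog updates pass the opm validate command.

- Check that the updated bundle or catalog image references exist, that the catalog images run successfully in a cluster, and that Operators from that package can be successfully installed.

- Automatically merge PRs that pass the previous checks.

- Automatically rebuild and republish the catalog image.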

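2.3. Operator Framework glossary of common terms

This topic provides a glossary of common terms related to the Operator Framework, including the packaging format, Operator Lifecycle Manager (OLM), and the Operator SDK.

2.3.1. Common Operator Framework terms

2.3.1.1. Bundle

In the bundle format, a bundle is a collection of an Operator CSV, manifests, and metadata. Together, they form a unique version of an Operator that can be installed onto the cluster.

2.3.1.2. Bundle image

In the bundle format, a bundle image is a container image that is built from Operator manifests and that contains one bundle. Bundle images are stored and distributed by Open Container Initiative (OCI) spec container registries, such as Quay.io or DockerHub.

2.3.1.3. Catalog source

A catalog source represents a store of metadata that OLM can query to discover and install Operators and their dependencies.

2.3.1.4. Channel

A channel defines a stream of updates for an Operator and is used to roll out updates for subscribers. The head points to the latest version of that channel. For example, a stable channel would have all stable versions of an Operator arranged from the earliest to the latest.

An Operator can have several channels, and a subscription bound to a certain channel looks for updates only in that channel.

2.3.1.5. Channel head

A channel head refers to the latest known update in a particular channel.

2.3.1.6. Cluster service version

A cluster service version (CSV) is a YAML manifest created from Operator metadata that assists OLM in running the Operator in a cluster. It is the metadata that accompanies an Operator container image, used to populate user interfaces with information such as its logo, description, and version.

It is also a source of technical information that is required to run the Operator, such as the RBAC rules it requires and which custom resources (CRs) it manages or depends on.

2.3.1.7. Dependency

An Operator may have a dependency on another Operator being present in the cluster. For example, the Vault Operator has a dependency on the etcd Operator for its data persistence layer.

OLM resolves dependencies by ensuring that all specified versions of Operators and CRDs are installed on the cluster during the installation phase. This dependency is resolved by finding and installing an Operator in a catalog that satisfies the required CRD API, and is unrelated to packages or bundles.

2.3.1.8. Index image

In the bundle format, an index image refers to an image of a database (a database snapshot) that contains information about Operator bundles, including the CSVs and CRDs of all versions. This index can host a history of Operators on a cluster and is maintained by adding or removing Operators with the opm CLI tool.

2.3.1.9. Install plan

An install plan is a calculated list of resources to be created to automatically install or upgrade a CSV.

2.3.1.10. Multitenancy

A tenant in OpenShift Container Platform is a user or group of users that share common access and privileges for a set of deployed workloads, typically represented by a namespace or project. You can use tenants to provide a level of isolation between different groups or teams.

When a cluster is shared by multiple users or groups, it is considered a multitenant cluster.

2.3.1.11. Operator group

An Operator group configures all Operators deployed in the same namespace as the OperatorGroup object to watch for their CRs in a list of namespaces or cluster-wide.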
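As a rough illustration of this definition, the following sketch shows an OperatorGroup object that targets an explicit list of namespaces. The object name and both namespace names are hypothetical.

```yaml
# Hypothetical example: the object name and namespaces are placeholders.
apiVersion: operators.coreos.com/v1
kind: OperatorGroup
metadata:
  name: example-operatorgroup
  namespace: example-namespace
spec:
  # Operators deployed in example-namespace watch for their CRs
  # in the namespaces listed here.
  targetNamespaces:
    - example-namespace
    - another-namespace
```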

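2.3.1.12. Package

In the bundle format, a package is a directory that encloses all released history of an Operator, with each version. A released version of an Operator is described in a CSV manifest alongside the CRDs.

2.3.1.13. Registry

A registry is a database that stores bundle images of Operators, each with all of its latest and historical versions in all channels.

2.3.1.14. Subscription

A subscription keeps CSVs up to date by tracking a channel in a package.

2.3.1.15. Update graph

An update graph links versions of CSVs together, similar to the update graph of any other packaged software. Operators can be installed sequentially, or certain versions can be skipped. The update graph is expected to grow only at the head, with newer versions being added.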

2.4. Operator Lifecycle Manager (OLM)

2.4.1. Operator Lifecycle Manager の概念およびリソース

以下で、OpenShift Container Platform での Operator Lifecycle Manager (OLM) に関連する概念について説明します。

2.4.1.1. Operator Lifecycle Manager について

Operator Lifecycle Manager (OLM) を使用することにより、ユーザーは Kubernetes ネイティブアプリケーション (Operator) および OpenShift Container Platform クラスター全体で実行される関連サービスについてインストール、更新、およびそのライフサイクルの管理を実行できます。これは、Operator を効果的かつ自動化された拡張可能な方法で管理するために設計されたオープンソースツールキットの Operator Framework の一部です。

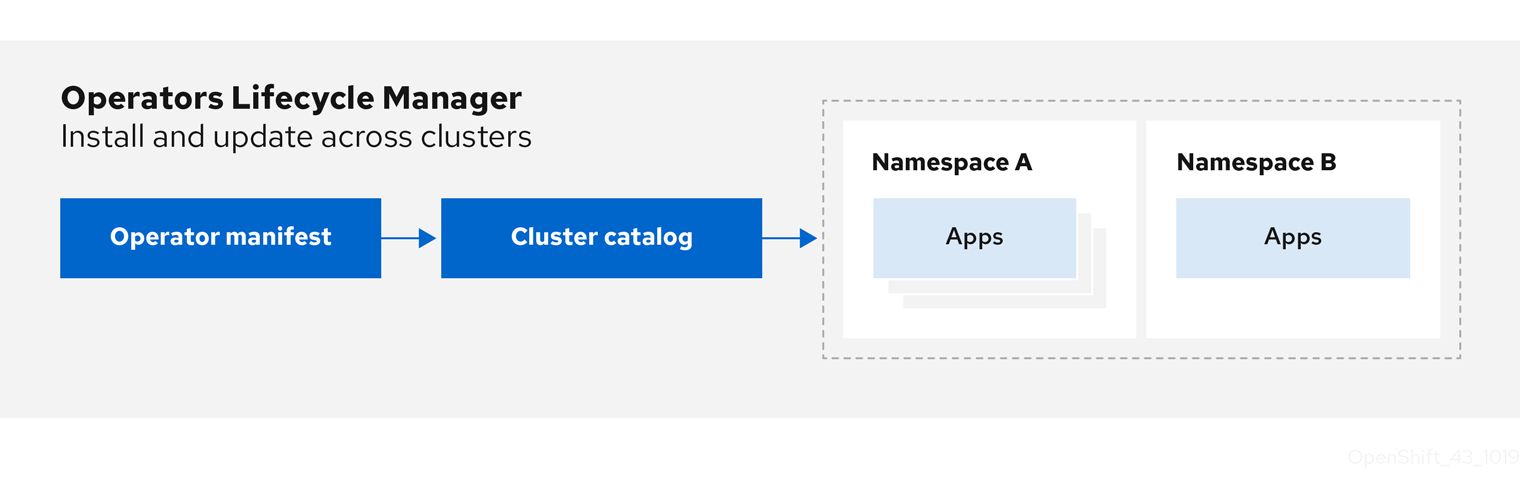

図2.2 Operator Lifecycle Manager ワークフロー

OLM は OpenShift Container Platform 4.11 でデフォルトで実行されます。これは、クラスター管理者がクラスターで実行されている Operator をインストールし、アップグレードし、アクセスをこれに付与するのに役立ちます。OpenShift Container Platform Web コンソールでは、クラスター管理者が Operator をインストールし、特定のプロジェクトアクセスを付与して、クラスターで利用可能な Operator のカタログを使用するための管理画面を利用できます。

開発者の場合は、セルフサービスを使用することで、専門的な知識がなくてもデータベースのインスタンスのプロビジョニングや設定、またモニタリング、ビッグデータサービスなどを実行できます。 Operator にそれらに関するナレッジが織り込まれているためです。

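2.4.1.2. OLM resources

The following custom resource definitions (CRDs) are defined and managed by Operator Lifecycle Manager (OLM):

| Resource | Short name | Description |
|---|---|---|
| ClusterServiceVersion (CSV) | csv | Application metadata. For example: name, version, icon, required resources. |
| CatalogSource | catsrc | A repository of CSVs, CRDs, and packages that define an application. |
| Subscription | sub | Keeps CSVs up to date by tracking a channel in a package. |
| InstallPlan | ip | Calculated list of resources to be created to automatically install or upgrade a CSV. |
| OperatorGroup | og | Configures all Operators deployed in the same namespace as the OperatorGroup object to watch for their custom resource (CR) in a list of namespaces or cluster-wide. |
| OperatorConditions | - | Creates a communication channel between OLM and an Operator it manages. Operators can write to the Status.Conditions array to communicate complex states to OLM. |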

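2.4.1.2.1. Cluster service version

A cluster service version (CSV) represents a specific version of a running Operator on an OpenShift Container Platform cluster. It is a YAML manifest created from Operator metadata that assists Operator Lifecycle Manager (OLM) in running the Operator in the cluster.

OLM requires this metadata about an Operator to ensure that it can be kept running safely on a cluster, and to provide information about how updates should be applied as new versions of the Operator are published. This is similar to packaging software for a traditional operating system; think of the packaging step for OLM as the stage at which you make your rpm, deb, or apk bundle.

A CSV includes the metadata that accompanies an Operator container image, used to populate user interfaces with information such as its name, version, description, labels, repository link, and logo.

A CSV is also a source of technical information required to run the Operator, such as which custom resources (CRs) it manages or depends on, RBAC rules, cluster requirements, and install strategies. This information tells OLM how to create required resources and set up the Operator as a deployment.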
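The kinds of information described above can be seen in the following heavily abbreviated sketch of a CSV. It is not a complete or installable manifest: the Operator name, CRD, service account, RBAC rules, and image reference are all hypothetical placeholders, and real CSVs usually contain many more fields.

```yaml
# Heavily abbreviated, hypothetical CSV: every name and image is a placeholder.
apiVersion: operators.coreos.com/v1alpha1
kind: ClusterServiceVersion
metadata:
  name: example-operator.v1.0.1
  namespace: example-namespace
spec:
  # Metadata used to populate user interfaces
  displayName: Example Operator
  description: Manages instances of a hypothetical Example application.
  version: 1.0.1
  # Custom resources that the Operator manages
  customresourcedefinitions:
    owned:
      - name: examples.example.com
        version: v1alpha1
        kind: Example
  # Install strategy: how OLM sets up the Operator as a deployment
  install:
    strategy: deployment
    spec:
      # RBAC rules that the Operator requires
      permissions:
        - serviceAccountName: example-operator
          rules:
            - apiGroups: [""]
              resources: ["configmaps"]
              verbs: ["get", "list", "watch"]
      deployments:
        - name: example-operator
          spec:
            replicas: 1
            selector:
              matchLabels:
                name: example-operator
            template:
              metadata:
                labels:
                  name: example-operator
              spec:
                serviceAccountName: example-operator
                containers:
                  - name: example-operator
                    image: quay.io/example-org/example-operator:v1.0.1
```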

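2.4.1.2.2. Catalog source

A catalog source represents a store of metadata, typically by referencing an index image stored in a container registry. Operator Lifecycle Manager (OLM) queries catalog sources to discover and install Operators and their dependencies. OperatorHub in the OpenShift Container Platform web console also displays the Operators provided by catalog sources.

Cluster administrators can view the full list of Operators provided by an enabled catalog source on a cluster by using the Administration → Cluster Settings → Configuration → OperatorHub page in the web console.

The spec of a CatalogSource object indicates how to construct a pod or how to communicate with a service that serves the Operator Registry gRPC API.

Example 2.8. Example CatalogSource object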

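    apiVersion: operators.coreos.com/v1alpha1
    kind: CatalogSource
    metadata:
      generation: 1
      name: example-catalog
      namespace: openshift-marketplace
      annotations:
        olm.catalogImageTemplate:
          "quay.io/example-org/example-catalog:v{kube_major_version}.{kube_minor_version}.{kube_patch_version}"
    spec:
      displayName: Example Catalog
      image: quay.io/example-org/example-catalog:v1
      priority: -400
      publisher: Example Org
      sourceType: grpc
      grpcPodConfig:
        nodeSelector:
          custom_label: <label>
        priorityClassName: system-cluster-critical
        tolerations:
          - key: "key1"
            operator: "Equal"
            value: "value1"
            effect: "NoSchedule"
      updateStrategy:
        registryPoll:
          interval: 30m0s
    status:
      connectionState:
        address: example-catalog.openshift-marketplace.svc:50051
        lastConnect: 2021-08-26T18:14:31Z
        lastObservedState: READY
      latestImageRegistryPoll: 2021-08-26T18:46:25Z
      registryService:
        createdAt: 2021-08-26T16:16:37Z
        port: 50051
        protocol: grpc
        serviceName: example-catalog
        serviceNamespace: openshift-marketplace

The fields in this example have the following meanings:

- metadata.name: Name for the CatalogSource object. This value is also used as part of the name for the related pod that is created in the requested namespace.

- metadata.namespace: Namespace in which to create the catalog. To make the catalog available cluster-wide in all namespaces, set this value to openshift-marketplace. The default Red Hat-provided catalog sources also use the openshift-marketplace namespace. Otherwise, set the value to a specific namespace to make the Operator available only in that namespace.

- metadata.annotations: Optional: To avoid cluster upgrades potentially leaving Operator installations in an unsupported state or without a continued update path, you can enable automatically changing the index image version of your Operator catalog as part of cluster upgrades. Set the olm.catalogImageTemplate annotation to your index image name and use one or more of the Kubernetes cluster version variables when constructing the template for the image tag. The annotation overwrites the spec.image field at run time. For details, see the "Image template for custom catalog sources" section.

- spec.displayName: Display name for the catalog in the web console and CLI.

- spec.image: Index image for the catalog. Optionally, this field can be omitted when the olm.catalogImageTemplate annotation is used to set the pull spec at run time.

- spec.priority: Weight for the catalog source. OLM uses the weight for prioritization during dependency resolution. A higher weight indicates that the catalog is preferred over lower-weighted catalogs.

- spec.sourceType: Source types include the following:

  - grpc with an image reference: OLM pulls the image and runs the pod, which is expected to serve a compliant API.

  - grpc with an address field: OLM attempts to contact the gRPC API at the given address. This should not be used in most cases.

  - ConfigMap: OLM parses config map data and runs a pod that can serve the gRPC API over it.

- spec.grpcPodConfig.nodeSelector: Optional: For grpc type catalog sources, overrides the default node selector for the pod that serves the content in spec.image, if defined.

- spec.grpcPodConfig.priorityClassName: Optional: For grpc type catalog sources, overrides the default priority class name for the pod that serves the content in spec.image, if defined. Kubernetes provides the system-cluster-critical and system-node-critical priority classes by default. Setting the field to empty ("") assigns the pod the default priority. Other priority classes can be defined manually.

- spec.grpcPodConfig.tolerations: Optional: For grpc type catalog sources, overrides the default tolerations for the pod that serves the content in spec.image, if defined.

- spec.updateStrategy.registryPoll.interval: Automatically checks for new versions at the given interval to stay up to date.

- status.connectionState.lastObservedState: Last observed state of the catalog connection. For example:

  - READY: A connection is successfully established.

  - CONNECTING: A connection is attempting to establish.

  - TRANSIENT_FAILURE: A temporary problem, such as a timeout, has occurred while attempting to establish a connection. The state eventually switches back to CONNECTING and the connection is retried.

  For details, see States of Connectivity in the gRPC documentation.

- status.latestImageRegistryPoll: Latest time the container registry storing the catalog image was polled to ensure that the image is up to date.

- status.registryService: Status information for the catalog's Operator Registry service.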

Referencing the name of a CatalogSource object in a subscription instructs OLM where to search for a requested Operator:

Example 2.9. Example Subscription object referencing a catalog source

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: example-operator
      namespace: example-namespace
    spec:
      channel: stable
      name: example-operator
      source: example-catalog
      sourceNamespace: openshift-marketplace

2.4.1.2.2.1. Image template for custom catalog sources

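Operator compatibility with the underlying cluster can be expressed by a catalog source in various ways. One way, which is used for the default Red Hat-provided catalog sources, is to identify image tags for index images that are specifically created for a particular platform release, for example OpenShift Container Platform 4.11.

During a cluster upgrade, the index image tag for the default Red Hat-provided catalog sources is updated automatically by the Cluster Version Operator (CVO) so that Operator Lifecycle Manager (OLM) pulls the updated version of the catalog. For example, during an upgrade from OpenShift Container Platform 4.10 to 4.11, the spec.image field in the CatalogSource object for the redhat-operators catalog is updated from:

    registry.redhat.io/redhat/redhat-operator-index:v4.10

to:

    registry.redhat.io/redhat/redhat-operator-index:v4.11

However, the CVO does not automatically update image tags for custom catalogs. To ensure that users are left with a compatible and supported Operator installation after a cluster upgrade, custom catalogs should also be kept updated to reference an updated index image.

Starting in OpenShift Container Platform 4.9, cluster administrators can add the olm.catalogImageTemplate annotation in the CatalogSource object for custom catalogs and set it to an image reference that includes a template. The following Kubernetes version variables are supported for use in the template:

- kube_major_version

- kube_minor_version

- kube_patch_version

Because the OpenShift Container Platform cluster version is not currently available for templating, you must specify the Kubernetes cluster version, not the OpenShift Container Platform cluster version.

Provided that you have created and pushed an index image with a tag that specifies the updated Kubernetes version, setting this annotation enables the index image version of a custom catalog to change automatically after a cluster upgrade. The annotation value is used to set or update the image reference in the spec.image field of the CatalogSource object. This helps avoid cluster upgrades that leave Operator installations in an unsupported state or without a continued update path.

Regardless of the registry in which the index image is stored, you must ensure that the cluster can access the index image with the updated tag at the time of the cluster upgrade.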

Example 2.10. Example catalog source with an image template

    apiVersion: operators.coreos.com/v1alpha1
    kind: CatalogSource
    metadata:
      generation: 1
      name: example-catalog
      namespace: openshift-marketplace
      annotations:
        olm.catalogImageTemplate:
          "quay.io/example-org/example-catalog:v{kube_major_version}.{kube_minor_version}"
    spec:
      displayName: Example Catalog
      image: quay.io/example-org/example-catalog:v1.24
      priority: -400
      publisher: Example Org

If the spec.image field and the olm.catalogImageTemplate annotation are both set, the spec.image field is overwritten by the resolved value from the annotation. If the annotation does not resolve to a usable pull spec, the catalog source falls back to the set spec.image value.

If the spec.image field is not set and the annotation does not resolve to a usable pull spec, OLM stops reconciliation of the catalog source and sets it into a human-readable error condition.

For an OpenShift Container Platform 4.11 cluster, which uses Kubernetes 1.24, the olm.catalogImageTemplate annotation in the preceding example resolves to the following image reference:

    quay.io/example-org/example-catalog:v1.24

For future releases of OpenShift Container Platform, you can create updated index images for your custom catalogs that target the later Kubernetes version used by the later OpenShift Container Platform version. With the olm.catalogImageTemplate annotation set before the upgrade, upgrading the cluster to the later OpenShift Container Platform version then automatically updates the catalog's index image as well.

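2.4.1.2.2.2. Catalog health requirements

Operator catalogs on a cluster are interchangeable from the perspective of installation resolution. A Subscription object might reference a specific catalog, but dependencies are resolved using all catalogs on the cluster.

For example, if Catalog A is unhealthy, a subscription referencing Catalog A could resolve a dependency in Catalog B. The cluster administrator might not expect this, because B normally has a lower catalog priority than A.

As a result, OLM requires that all catalogs with a given global namespace (for example, the default openshift-marketplace namespace or a custom global namespace) are healthy. When a catalog is unhealthy, all Operator installation or update operations within its shared global namespace fail with a CatalogSourcesUnhealthy condition. If these operations were permitted in an unhealthy state, OLM might make resolution and installation decisions that were unexpected to the cluster administrator.

As a cluster administrator, if you observe an unhealthy catalog and want to consider the catalog invalid and resume Operator installations, see the "Removing custom catalogs" or "Disabling the default OperatorHub catalog sources" sections for information about removing the unhealthy catalog.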

2.4.1.2.3. サブスクリプション

サブスクリプション は、Subscription オブジェクトによって定義され、Operator をインストールする意図を表します。これは、Operator をカタログソースに関連付けるカスタムリソースです。

サブスクリプションは、サブスクライブする Operator パッケージのチャネルや、更新を自動または手動で実行するかどうかを記述します。サブスクリプションが自動に設定された場合、Operator Lifecycle Manager (OLM) が Operator を管理し、アップグレードして、最新バージョンがクラスター内で常に実行されるようにします。

Subscription オブジェクトの例

apiVersion: operators.coreos.com/v1alpha1

kind: Subscription

metadata:

name: example-operator

namespace: example-namespace

spec:

channel: stable

name: example-operator

source: example-catalog

sourceNamespace: openshift-marketplace

この Subscription オブジェクトは、Operator の名前および namespace および Operator データのあるカタログを定義します。alpha、beta、または stable などのチャネルは、カタログソースからインストールする必要のある Operator ストリームを判別するのに役立ちます。

サブスクリプションのチャネルの名前は Operator 間で異なる可能性がありますが、命名スキームは指定された Operator 内の一般的な規則に従う必要があります。たとえば、チャネル名は Operator によって提供されるアプリケーションのマイナーリリース更新ストリーム (1.2、1.3) またはリリース頻度 (stable、fast) に基づく可能性があります。

OpenShift Container Platform Web コンソールから簡単に表示されるだけでなく、関連するサブスクリプションのステータスを確認して、Operator の新規バージョンが利用可能になるタイミングを特定できます。currentCSV フィールドに関連付けられる値は OLM に認識される最新のバージョンであり、installedCSV はクラスターにインストールされるバージョンです。
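As a minimal sketch of the comparison described above, the following function checks whether a newer version is known to OLM than the one installed. It assumes the subscription status has already been loaded into a dictionary (for example from `oc get subscription -o json`); the function name is hypothetical.

```python
def update_available(subscription_status):
    """Return True when currentCSV (latest known to OLM) differs from installedCSV."""
    current = subscription_status.get("currentCSV")
    installed = subscription_status.get("installedCSV")
    # Without a currentCSV there is nothing to compare against.
    return current is not None and current != installed
```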

2.4.1.2.4. インストール計画

InstallPlan オブジェクトによって定義される インストール計画 は、Operator Lifecycle Manager(OLM) が特定バージョンの Operator をインストールまたはアップグレードするために作成するリソースのセットを記述します。バージョンはクラスターサービスバージョン (CSV) で定義されます。

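To install an Operator, a cluster administrator, or a user who has been granted Operator installation permissions, must first create a Subscription object. A subscription represents the intent to subscribe to a stream of available versions of an Operator from a catalog source. The subscription then creates an InstallPlan object to facilitate the installation of the resources for the Operator.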

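The install plan must then be approved according to one of the following approval strategies:

- If the subscription's spec.installPlanApproval field is set to Automatic, the install plan is approved automatically.

- If the subscription's spec.installPlanApproval field is set to Manual, the install plan must be manually approved by a cluster administrator or a user with the proper permissions.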

インストール計画が承認されると、OLM は指定されたリソースを作成し、サブスクリプションで指定された namespace に Operator をインストールします。

例2.11 InstallPlan オブジェクトの例

apiVersion: operators.coreos.com/v1alpha1

kind: InstallPlan

metadata:

name: install-abcde

namespace: operators

spec:

approval: Automatic

approved: true

clusterServiceVersionNames:

- my-operator.v1.0.1

generation: 1

status:

...

catalogSources: []

conditions:

- lastTransitionTime: '2021-01-01T20:17:27Z'

lastUpdateTime: '2021-01-01T20:17:27Z'

status: 'True'

type: Installed

phase: Complete

plan:

- resolving: my-operator.v1.0.1

resource:

group: operators.coreos.com

kind: ClusterServiceVersion

manifest: >-

...

name: my-operator.v1.0.1

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1alpha1

status: Created

- resolving: my-operator.v1.0.1

resource:

group: apiextensions.k8s.io

kind: CustomResourceDefinition

manifest: >-

...

name: webservers.web.servers.org

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1beta1

status: Created

- resolving: my-operator.v1.0.1

resource:

group: ''

kind: ServiceAccount

manifest: >-

...

name: my-operator

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1

status: Created

- resolving: my-operator.v1.0.1

resource:

group: rbac.authorization.k8s.io

kind: Role

manifest: >-

...

name: my-operator.v1.0.1-my-operator-6d7cbc6f57

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1

status: Created

- resolving: my-operator.v1.0.1

resource:

group: rbac.authorization.k8s.io

kind: RoleBinding

manifest: >-

...

name: my-operator.v1.0.1-my-operator-6d7cbc6f57

sourceName: redhat-operators

sourceNamespace: openshift-marketplace

version: v1

status: Created

...

2.4.1.2.5. Operator groups

Operator グループ は、 OperatorGroup リソースによって定義され、マルチテナント設定を OLM でインストールされた Operator に提供します。Operator グループは、そのメンバー Operator に必要な RBAC アクセスを生成するために使用するターゲット namespace を選択します。

The set of target namespaces is provided by a comma-delimited string stored in the olm.targetNamespaces annotation of a cluster service version (CSV). This annotation is applied to the CSV instances of member Operators and is projected into their deployments.


2.4.1.2.6. Operator 条件

Operator のライフサイクル管理のロールの一部として、Operator Lifecycle Manager (OLM) は、Operator を定義する Kubernetes リソースの状態から Operator の状態を推測します。このアプローチでは、Operator が特定の状態にあることをある程度保証しますが、推測できない情報を Operator が OLM と通信して提供する必要がある場合も多々あります。続いて、OLM がこの情報を使用して、Operator のライフサイクルをより適切に管理することができます。

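OLM provides a custom resource definition (CRD) called OperatorCondition that allows Operators to communicate conditions to OLM. There is a set of supported conditions that influence how OLM manages the Operator when they are present in the Spec.Conditions array of an OperatorCondition resource.

By default, the Spec.Conditions array is not present in an OperatorCondition object until it is added either by a user or as a result of custom Operator logic.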

2.4.2. Operator Lifecycle Manager アーキテクチャー

以下では、OpenShift Container Platform における Operator Lifecycle Manager (OLM) のコンポーネントのアーキテクチャーを説明します。

2.4.2.1. コンポーネントのロール

Operator Lifecycle Manager (OLM) は、OLM Operator および Catalog Operator の 2 つの Operator で設定されています。

これらの Operator はそれぞれ OLM フレームワークのベースとなるカスタムリソース定義 (CRD) を管理します。

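| Resource | Short name | Owning Operator | Description |
|---|---|---|---|
| ClusterServiceVersion (CSV) | csv | OLM | Application metadata: name, version, icon, required resources, installation, and so on. |
| InstallPlan | ip | Catalog | Calculated list of resources to be created to automatically install or upgrade a CSV. |
| CatalogSource | catsrc | Catalog | A repository of CSVs, CRDs, and packages that define an application. |
| Subscription | sub | Catalog | Used to keep CSVs up to date by tracking a channel in a package. |
| OperatorGroup | og | OLM | Configures all Operators deployed in the same namespace as the OperatorGroup object to watch for their custom resources (CRs) in a list of namespaces or cluster-wide. |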

これらの Operator のそれぞれは以下のリソースの作成も行います。

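| Resource | Owning Operator |
|---|---|
| Deployments | OLM |
| ServiceAccounts | OLM |
| (Cluster)Roles | OLM |
| (Cluster)RoleBindings | OLM |
| CustomResourceDefinitions (CRDs) | Catalog |
| ClusterServiceVersions | Catalog |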

2.4.2.2. OLM Operator

OLM Operator は、CSV で指定された必須リソースがクラスター内にあることが確認された後に CSV リソースで定義されるアプリケーションをデプロイします。

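The OLM Operator is not concerned with the creation of the required resources; you can choose to create these resources manually by using the CLI or by using the Catalog Operator. This separation of concerns lets users adopt the OLM framework incrementally, choosing how much of it to leverage for their application.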

OLM Operator は以下のワークフローを使用します。

- Watch for cluster service versions (CSVs) in a namespace and check that requirements are met.

- If requirements are met, run the install strategy for the CSV.

Note: A CSV must be an active member of an Operator group for the install strategy to run.

2.4.2.3. カタログ Operator

カタログ Operator はクラスターサービスバージョン (CSV) およびそれらが指定する必須リソースを解決し、インストールします。また、カタログソースでチャネル内のパッケージへの更新の有無を確認し、必要な場合はそれらを利用可能な最新バージョンに自動的にアップグレードします。

チャネル内のパッケージを追跡するために、必要なパッケージ、チャネル、および更新のプルに使用する CatalogSource オブジェクトを設定して Subscription オブジェクトを作成できます。更新が見つかると、ユーザーに代わって適切な InstallPlan オブジェクトの namespace への書き込みが行われます。

カタログ Operator は以下のワークフローを使用します。

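- Connect to each catalog source in the cluster.

- Watch for unresolved install plans created by a user, and if found:

  - Find the CSV matching the name requested and add the CSV as a resolved resource.

  - For each managed or required CRD, add the CRD as a resolved resource.

  - For each required CRD, find the CSV that manages it.

- Watch for resolved install plans and create all of the discovered resources for them, if approved by a user or automatically.

- Watch for catalog sources and subscriptions and create install plans based on them.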

2.4.2.4. カタログレジストリー

カタログレジストリーは、クラスター内での作成用に CSV および CRD を保存し、パッケージおよびチャネルについてのメタデータを保存します。

パッケージマニフェスト は、パッケージアイデンティティーを CSV のセットに関連付けるカタログレジストリー内のエントリーです。パッケージ内で、チャネルは特定の CSV を参照します。CSV は置き換え対象の CSV を明示的に参照するため、パッケージマニフェストはカタログ Operator に対し、CSV をチャネル内の最新バージョンに更新するために必要なすべての情報を提供します (各中間バージョンをステップスルー)。

2.4.3. Operator Lifecycle Manager ワークフロー

The following describes the workflow of Operator Lifecycle Manager (OLM) in OpenShift Container Platform.

2.4.3.1. OLM での Operator のインストールおよびアップグレードのワークフロー

Operator Lifecycle Manager (OLM) エコシステムでは、以下のリソースを使用して Operator インストールおよびアップグレードを解決します。

- ClusterServiceVersion (CSV)

- CatalogSource

- Subscription

CSV で定義される Operator メタデータは、カタログソースというコレクションに保存できます。OLM はカタログソースを使用します。これは Operator Registry API を使用して利用可能な Operator やインストールされた Operator のアップグレードについてクエリーします。

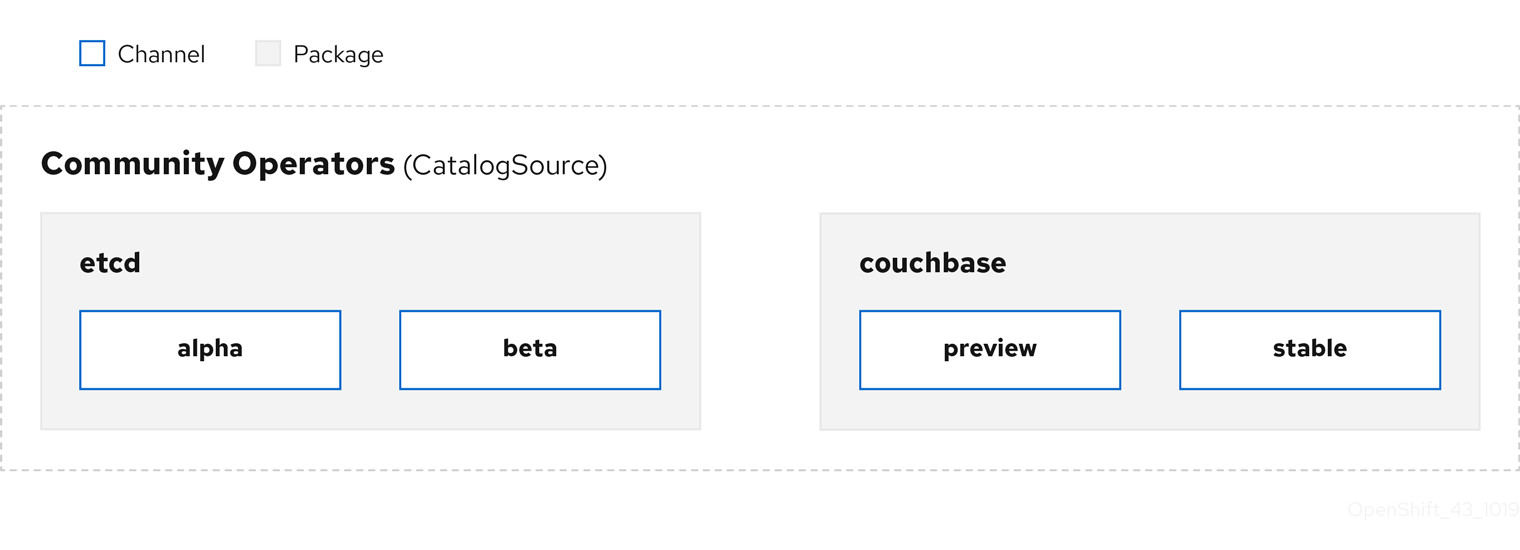

図2.3 カタログソースの概要

カタログソース内で、Operator は パッケージ と チャネル という更新のストリームに編成されます。これは、Web ブラウザーのような継続的なリリースサイクルの OpenShift Container Platform や他のソフトウェアで使用される更新パターンです。

図2.4 カタログソースのパッケージおよびチャネル

ユーザーは サブスクリプション の特定のカタログソースの特定のパッケージおよびチャネルを指定できます (例: etcd パッケージおよびその alpha チャネル)。サブスクリプションが namespace にインストールされていないパッケージに対して作成されると、そのパッケージの最新 Operator がインストールされます。

OLM では、バージョンの比較が意図的に避けられます。そのため、所定の catalog → channel → package パスから利用可能な latest または newest Operator が必ずしも最も高いバージョン番号である必要はありません。これは Git リポジトリーの場合と同様に、チャネルの Head リファレンスとして見なされます。

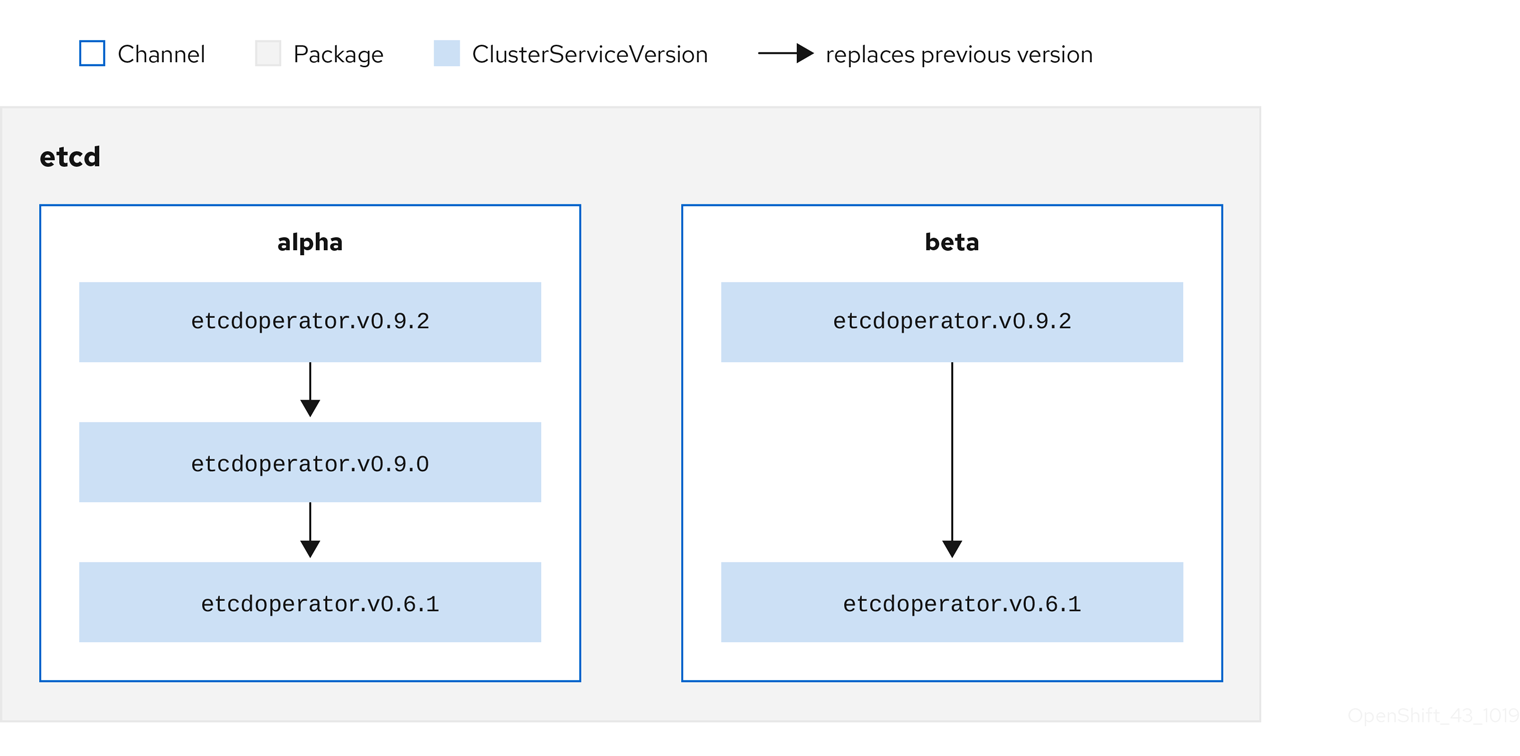

各 CSV には、これが置き換える Operator を示唆する replaces パラメーターがあります。これにより、OLM でクエリー可能な CSV のグラフが作成され、更新がチャネル間で共有されます。チャネルは、更新グラフのエントリーポイントと見なすことができます。

図2.5 利用可能なチャネル更新についての OLM グラフ

パッケージのチャネルの例

packageName: example

channels:

- name: alpha

currentCSV: example.v0.1.2

- name: beta

currentCSV: example.v0.1.3

defaultChannel: alpha

カタログソース、パッケージ、チャネルおよび CSV がある状態で、OLM が更新のクエリーを実行できるようにするには、カタログが入力された CSV の置き換え (replaces) を実行する単一 CSV を明確にかつ確定的に返すことができる必要があります。

2.4.3.1.1. アップグレードパスの例

For an example upgrade scenario, consider an installed Operator corresponding to CSV version 0.1.1. OLM queries the catalog source and detects an upgrade in the subscribed channel with new CSV version 0.1.3, which replaces an older but not-installed CSV version 0.1.2, which in turn replaces the older and installed CSV version 0.1.1.

OLM は、チャネルヘッドから CSV で指定された replaces フィールドで以前のバージョンに戻り、アップグレードパス 0.1.3 → 0.1.2 → 0.1.1 を判別します。矢印の方向は前者が後者を置き換えることを示します。OLM は、チャネルヘッドに到達するまで Operator を 1 バージョンずつアップグレードします。

このシナリオでは、OLM は Operator バージョン 0.1.2 をインストールし、既存の Operator バージョン 0.1.1 を置き換えます。その後、Operator バージョン 0.1.3 をインストールし、直前にインストールされた Operator バージョン 0.1.2 を置き換えます。この時点で、インストールされた Operator のバージョン 0.1.3 はチャネルヘッドに一致し、アップグレードは完了します。
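The walk from the channel head back to the installed version can be sketched as follows. This is an illustrative model only: the `replaces` dictionary stands in for the replaces field of each CSV in the catalog, and the function name is hypothetical.

```python
def upgrade_path(replaces, channel_head, installed):
    """Return the CSVs to install, in order, to move from `installed` to `channel_head`.

    replaces -- dict mapping a CSV name to the CSV name it replaces
    """
    path = []
    seen = set()
    current = channel_head
    # Walk backwards from the channel head through the replaces chain.
    while current != installed:
        if current is None or current in seen:
            raise ValueError("installed CSV is not reachable from the channel head")
        seen.add(current)
        path.append(current)
        current = replaces.get(current)
    # Upgrades are applied one version at a time, oldest first.
    path.reverse()
    return path


graph = {"example.v0.1.3": "example.v0.1.2", "example.v0.1.2": "example.v0.1.1"}
print(upgrade_path(graph, "example.v0.1.3", "example.v0.1.1"))
```

For the scenario above, the function yields 0.1.2 followed by 0.1.3, matching the order in which OLM installs them.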

2.4.3.1.2. アップグレードの省略

OLM のアップグレードの基本パスは以下の通りです。

- カタログソースは Operator への 1 つ以上の更新によって更新されます。

- OLM は、カタログソースに含まれる最新バージョンに到達するまで、Operator のすべてのバージョンを横断します。

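However, sometimes this is not a safe operation to perform. There are cases where a published version of an Operator should never be installed on a cluster that does not already have it, for example because that version introduces a serious vulnerability.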

この場合、OLM は以下の 2 つのクラスターの状態を考慮に入れて、それらの両方に対応する更新グラフを提供する必要があります。

- 問題のある中間 Operator がクラスターによって確認され、かつインストールされている。

- 問題のある中間 Operator がクラスターにまだインストールされていない。

By shipping a new catalog and adding a skipped release, OLM can always get a single unique update regardless of the cluster state and whether it has seen the bad update yet.

省略されたリリースの CSV 例

apiVersion: operators.coreos.com/v1alpha1

kind: ClusterServiceVersion

metadata:

name: etcdoperator.v0.9.2

namespace: placeholder

annotations:

spec:

displayName: etcd

description: Etcd Operator

replaces: etcdoperator.v0.9.0

skips:

- etcdoperator.v0.9.1

Consider the following example of Old CatalogSource and New CatalogSource.

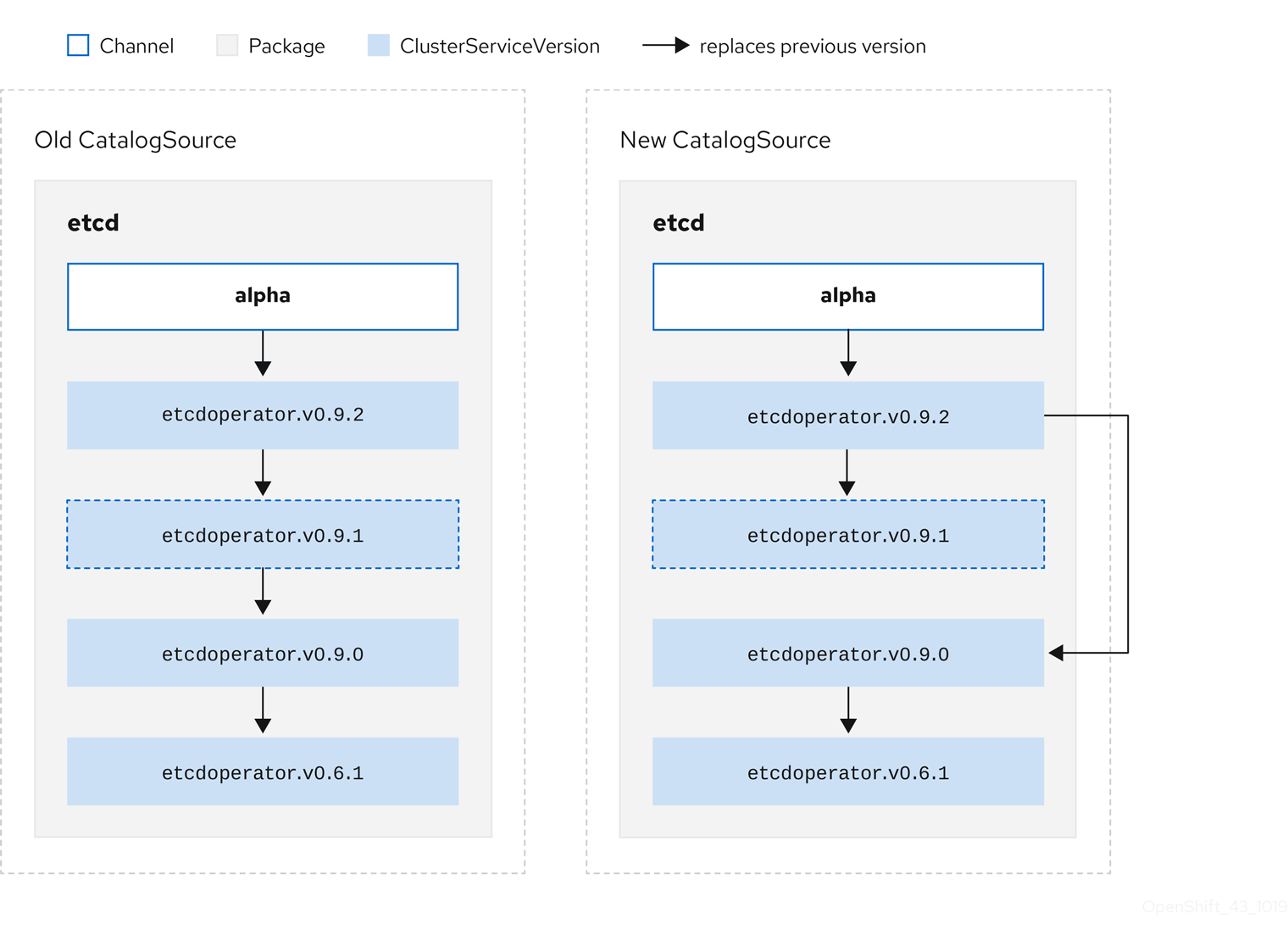

図2.6 更新のスキップ

このグラフは、以下を示しています。

- 古い CatalogSource の Operator には、 新規 CatalogSource の単一の置き換えがある。

- 新規 CatalogSource の Operator には、 新規 CatalogSource の単一の置き換えがある。

- 問題のある更新がインストールされていない場合、これがインストールされることはない。

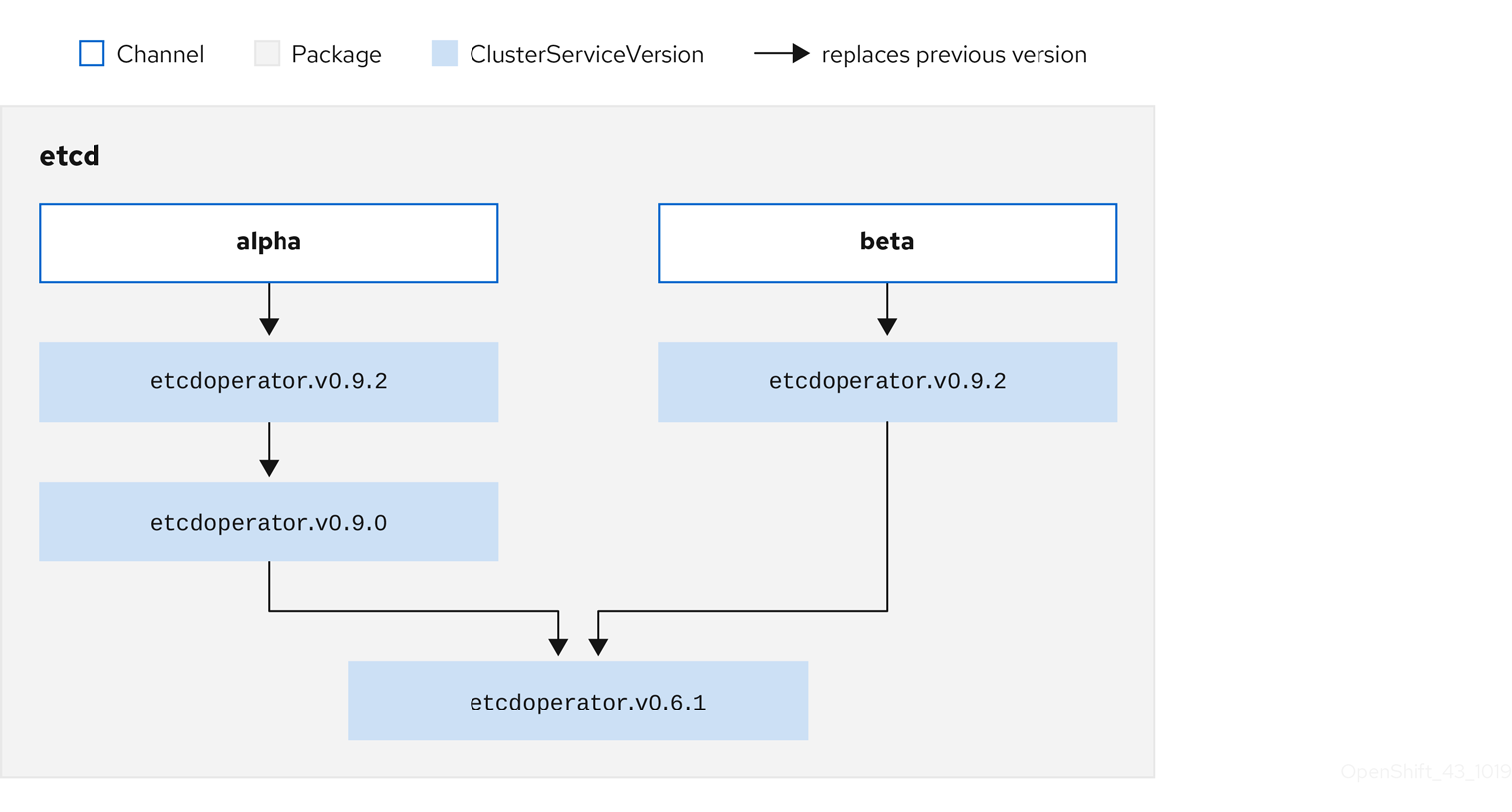

2.4.3.1.3. 複数 Operator の置き換え

To create the New CatalogSource as described, you must publish CSVs that replace one Operator but can skip several versions. You can do this by using the skipRange annotation:

olm.skipRange: <semver_range>

ここで <semver_range> には、semver ライブラリー でサポートされるバージョン範囲の形式が使用されます。

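When searching catalogs for updates, if the head of a channel has a skipRange annotation and the currently installed Operator has a version field that falls in the range, OLM updates to the latest entry in the channel.

The order of precedence is:

- Channel head in the source specified by sourceName on the subscription, if the other criteria for skipping are met.

- The next Operator that replaces the current one, in the source specified by sourceName.

- Channel head in another source that is visible to the subscription, if the other criteria for skipping are met.

- The next Operator that replaces the current one in any source visible to the subscription.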
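The range test behind skipRange can be illustrated with a deliberately small checker. It is a sketch under stated assumptions: it only understands space-separated comparisons on plain `MAJOR.MINOR.PATCH` versions (all clauses must hold), whereas the semver library that OLM relies on supports a richer syntax.

```python
import re

_OPS = {
    ">=": lambda a, b: a >= b,
    "<=": lambda a, b: a <= b,
    ">": lambda a, b: a > b,
    "<": lambda a, b: a < b,
    "=": lambda a, b: a == b,
    "!=": lambda a, b: a != b,
    "!": lambda a, b: a != b,
}

def _parse(version):
    """Turn "4.1.0" into the comparable tuple (4, 1, 0)."""
    return tuple(int(part) for part in version.split("."))

def in_range(version, semver_range):
    """Return True if `version` satisfies every clause of `semver_range`."""
    for clause in semver_range.split():
        match = re.fullmatch(r"(>=|<=|!=|>|<|=|!)?(\d+\.\d+\.\d+)", clause)
        if match is None:
            raise ValueError(f"unsupported clause: {clause!r}")
        op = match.group(1) or "="
        if not _OPS[op](_parse(version), _parse(match.group(2))):
            return False
    return True

def can_skip_to_head(installed_version, head_skip_range):
    """True when the installed version falls inside the channel head's skipRange."""
    return head_skip_range is not None and in_range(installed_version, head_skip_range)
```

With the range `>=4.1.0 <4.1.2`, installed versions 4.1.0 and 4.1.1 can move directly to the channel head, while 4.0.9 cannot.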

skipRange を含む CSV の例

apiVersion: operators.coreos.com/v1alpha1

kind: ClusterServiceVersion

metadata:

name: elasticsearch-operator.v4.1.2

namespace: <namespace>

annotations:

olm.skipRange: '>=4.1.0 <4.1.2'

2.4.3.1.4. z-stream support

z-streamまたはパッチリリースは、同じマイナーバージョンの以前のすべての z-stream リリースを置き換える必要があります。OLM は、メジャー、マイナーまたはパッチバージョンを考慮せず、カタログ内で正確なグラフのみを作成する必要があります。

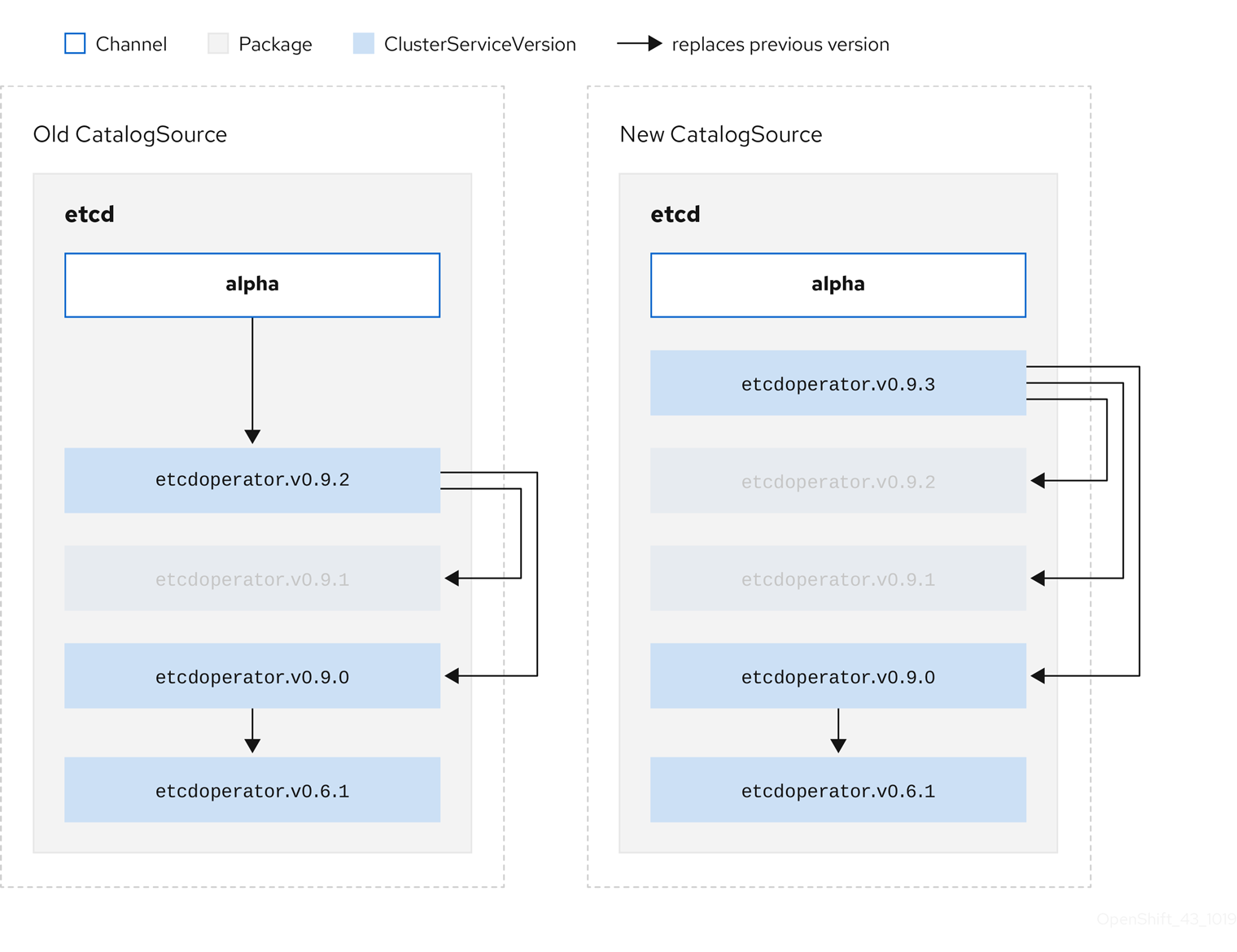

つまり、OLM では 古い CatalogSource のようにグラフを使用し、以前と同様に 新規 CatalogSource にあるようなグラフを生成する必要があります。

図2.7 複数 Operator の置き換え

このグラフは、以下を示しています。

- 古い CatalogSource の Operator には、 新規 CatalogSource の単一の置き換えがある。

- 新規 CatalogSource の Operator には、 新規 CatalogSource の単一の置き換えがある。

- 古い CatalogSource の z-stream リリースは、 新規 CatalogSource の最新 z-stream リリースに更新される。

- 使用不可のリリースは仮想グラフノードと見なされる。それらのコンテンツは存在する必要がなく、レジストリーはグラフが示すように応答することのみが必要になります。

2.4.4. Operator Lifecycle Manager の依存関係の解決

以下で、OpenShift Container Platform の Operator Lifecycle Manager (OLM) での依存関係の解決およびカスタムリソース定義 (CRD) アップグレードライフサイクルについて説明します。

2.4.4.1. 依存関係の解決

Operator Lifecycle Manager (OLM) は、実行中の Operator の依存関係の解決とアップグレードのライフサイクルを管理します。多くの場合、OLM が直面する問題は、 yumやrpmなどの他のシステムまたは言語パッケージマネージャーと同様です。

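However, there is one constraint that similar systems do not generally have that OLM does: because Operators are always running, OLM attempts to ensure that you are never left with a set of Operators that do not work with each other.

As a result, OLM must never create the following scenarios:

- Install a set of Operators that require APIs that cannot be provided

- Update an Operator in a way that breaks another that depends upon it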

これは、次の 2 種類のデータで可能になります。

| プロパティー | Operator に関する型付きのメタデータ。これは、依存関係のリゾルバーで Operator の公開インターフェイスを設定します。例としては、Operator が提供する API の group/version/kind (GVK) や Operator のセマンティックバージョン (semver) などがあります。 |

| 制約または依存関係 | ターゲットクラスターにすでにインストールされているかどうかに関係なく、他の Operator が満たす必要のある Operator の要件。これらは、使用可能なすべての Operator に対するクエリーまたはフィルターとして機能し、依存関係の解決およびインストール中に選択を制限します。クラスターで特定の API が利用できる状態にする必要がある場合や、特定のバージョンに特定の Operator をインストールする必要がある場合など、例として挙げられます。 |

OLM は、これらのプロパティーと制約をブール式のシステムに変換して SAT ソルバーに渡します。これは、ブールの充足可能性を確立するプログラムであり、インストールする Operator を決定する作業を行います。

2.4.4.2. Operator のプロパティー

カタログ内の Operator にはすべて、次のプロパティーが含まれます。

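- olm.package: Includes the name of the package and the version of the Operator.

- olm.gvk: A single property for each provided API from the cluster service version (CSV).

Additional properties can also be directly declared by an Operator author by including a properties.yaml file in the metadata/ directory of the Operator bundle.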

任意のプロパティーの例

properties:

- type: olm.kubeversion

value:

version: "1.16.0"

2.4.4.2.1. Arbitrary properties

Operator の作成者は、Operator バンドルのmetadata/ ディレクトリーにあるproperties.yamlファイルで任意のプロパティーを宣言できます。これらのプロパティーは、実行時に Operator Lifecycle Manager (OLM) リゾルバーへの入力として使用されるマップデータ構造に変換されます。

これらのプロパティーはリゾルバーには不透明です。リゾルバーはプロパティーについて理解しませんが、これらのプロパティーに対する一般的な制約を評価して、プロパティーリストを指定することで制約を満たすことができるかどうかを判断します。

任意のプロパティーの例

properties:

- property:

type: color

value: red

- property:

type: shape

value: square

- property:

type: olm.gvk

value:

group: olm.coreos.io

version: v1alpha1

kind: myresource

This structure can be used to construct a Common Expression Language (CEL) expression for generic constraints.

2.4.4.3. Operator の依存関係

Operator の依存関係は、バンドルの metadata/ フォルダー内の dependencies.yaml ファイルに一覧表示されます。このファイルはオプションであり、現時点では明示的な Operator バージョンの依存関係を指定するためにのみ使用されます。

依存関係の一覧には、依存関係の内容を指定するために各項目の type フィールドが含まれます。次のタイプの Operator 依存関係がサポートされています。

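- olm.package: This type indicates a dependency for a specific Operator version. The dependency information must include the package name and the version of the package in semver format. For example, you can specify an exact version such as 0.5.2 or a range of versions such as >0.5.1.

- olm.gvk: With this type, the author can specify a dependency with group/version/kind (GVK) information, similar to existing CRD and API-based usage in a CSV. This is a path to enable Operator authors to consolidate all dependencies, API or explicit versions, in the same place.

- olm.constraint: This type declares generic constraints on arbitrary Operator properties.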

以下の例では、依存関係は Prometheus Operator および etcd CRD について指定されます。

dependencies.yaml ファイルの例

dependencies:

- type: olm.package

value:

packageName: prometheus

version: ">0.27.0"

- type: olm.gvk

value:

group: etcd.database.coreos.com

kind: EtcdCluster

version: v1beta2

2.4.4.4. Generic constraints

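An olm.constraint property declares a dependency constraint of a particular type, differentiating non-constraint and constraint properties. Its value field is an object containing a failureMessage field that holds a string representation of the constraint message. This message is surfaced as an informative comment to users if the constraint is not satisfiable at runtime.

The following keys denote the available constraint types:

- gvk: Type whose value and interpretation is identical to the olm.gvk type

- package: Type whose value and interpretation is identical to the olm.package type

- cel: A Common Expression Language (CEL) expression evaluated at runtime by the Operator Lifecycle Manager (OLM) resolver over arbitrary bundle properties and cluster information

- all, any, not: Conjunction, disjunction, and negation constraints, respectively, containing one or more concrete constraints, such as gvk or a nested compound constraint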

2.4.4.4.1. Common Expression Language (CEL) の制約

cel 制約型は、式言語としてCommon Expression Language (CEL)をサポートしています。cel 構造には、Operator が制約を満たしているかどうかを判断するために、実行時に Operator プロパティーに対して評価される CEL 式文字列を含む rule フィールドがあります。

cel 制約の例

type: olm.constraint

value:

failureMessage: 'require to have "certified"'

cel:

rule: 'properties.exists(p, p.type == "certified")'

CEL 構文は、AND や OR などの幅広い論理演算子をサポートします。その結果、単一の CEL 式は、これらの論理演算子で相互にリンクされる複数の条件に対して複数のルールを含めることができます。これらのルールは、バンドルまたは任意のソースからの複数の異なるプロパティーのデータセットに対して評価され、出力は、単一の制約内でこれらのルールのすべてを満たす単一のバンドルまたは Operator に対して解決されます。

複数のルールが指定されたcel制約の例

type: olm.constraint

value:

failureMessage: 'require to have "certified" and "stable" properties'

cel:

rule: 'properties.exists(p, p.type == "certified") && properties.exists(p, p.type == "stable")'

2.4.4.4.2. Compound constraints (all, any, not)

複合制約タイプは、論理定義に従って評価されます。

The following is an example of a conjunctive constraint (all) of one package and one GVK. That is, both constraints must be satisfied by installed bundles:

all制約の例

schema: olm.bundle

name: red.v1.0.0

properties:

- type: olm.constraint

value:

failureMessage: All are required for Red because...

all:

constraints:

- failureMessage: Package blue is needed for...

package:

name: blue

versionRange: '>=1.0.0'

- failureMessage: GVK Green/v1 is needed for...

gvk:

group: greens.example.com

version: v1

kind: Green

以下は、同じ GVK の 3 つのバージョンの選言的制約 ( any) の例です。つまり、インストールされたバンドルが少なくとも 1 つの制約を満たす必要があります。

any 制約の例

schema: olm.bundle

name: red.v1.0.0

properties:

- type: olm.constraint

value:

failureMessage: Any are required for Red because...

any:

constraints:

- gvk:

group: blues.example.com

version: v1beta1

kind: Blue

- gvk:

group: blues.example.com

version: v1beta2

kind: Blue

- gvk:

group: blues.example.com

version: v1

kind: Blue

以下は、GVK の 1 つのバージョンの否定制約 (not) の例です。つまり、この結果セットのバンドルでは、この GVK を提供できません。

not の制約例

schema: olm.bundle

name: red.v1.0.0

properties:

- type: olm.constraint

value:

all:

constraints:

- failureMessage: Package blue is needed for...

package:

name: blue

versionRange: '>=1.0.0'

- failureMessage: Cannot be required for Red because...

not:

constraints:

- gvk:

group: greens.example.com

version: v1alpha1

kind: greens

否定のセマンティクスは、not制約のコンテキストで不明確であるように見える場合があります。つまり、この否定では、特定の GVK、あるバージョンのパッケージを含むソリューション、または結果セットからの子の複合制約を満たすソリューションを削除するように、リゾルバーに対して指示を出しています。

当然の結果として、最初に可能な依存関係のセットを選択せずに否定することは意味がないため、複合ではnot制約はallまたはany制約内でのみ使用する必要があります。

2.4.4.4.3. ネストされた複合制約

ネストされた複合制約 (少なくとも 1 つの子複合制約と 0 個以上の単純な制約を含む制約) は、前述の各制約タイプの手順に従って、下から上に評価されます。

The following is an example of a disjunction of conjunctions, where one, the other, or both can satisfy the constraint:

ネストされた複合制約の例

schema: olm.bundle

name: red.v1.0.0

properties:

- type: olm.constraint

value:

failureMessage: Required for Red because...

any:

constraints:

- all:

constraints:

- package:

name: blue

versionRange: '>=1.0.0'

- gvk:

group: blues.example.com

version: v1

kind: Blue

- all:

constraints:

- package:

name: blue

versionRange: '<1.0.0'

- gvk:

group: blues.example.com

version: v1beta1

kind: Blue

olm.constraintタイプの最大 raw サイズは 64KB に設定されており、リソース枯渇攻撃を制限しています。
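The bottom-up evaluation of compound constraints can be sketched with a small recursive evaluator. This is a simplified model, not the OLM resolver: constraints are plain dictionaries shaped like the YAML examples above, the candidate solution is a list of hypothetical bundle dictionaries (`package`, `version`, `gvks`), version ranges are limited to space-separated comparisons, and `cel` constraints are not handled.

```python
import re

def _version(text):
    return tuple(int(part) for part in text.split("."))

def _in_range(version, version_range):
    ops = {">=": lambda a, b: a >= b, "<=": lambda a, b: a <= b,
           ">": lambda a, b: a > b, "<": lambda a, b: a < b,
           "=": lambda a, b: a == b}
    for clause in version_range.split():
        match = re.fullmatch(r"(>=|<=|>|<|=)?(\d+\.\d+\.\d+)", clause)
        if match is None:
            raise ValueError(f"unsupported clause: {clause!r}")
        if not ops[match.group(1) or "="](_version(version), _version(match.group(2))):
            return False
    return True

def satisfied(constraint, bundles):
    """Evaluate one constraint against a candidate set of installed bundles."""
    if "package" in constraint:
        want = constraint["package"]
        return any(b["package"] == want["name"]
                   and _in_range(b["version"], want["versionRange"])
                   for b in bundles)
    if "gvk" in constraint:
        want = constraint["gvk"]
        key = (want["group"], want["version"], want["kind"])
        return any(key in b["gvks"] for b in bundles)
    if "all" in constraint:   # conjunction: every child must hold
        return all(satisfied(c, bundles) for c in constraint["all"]["constraints"])
    if "any" in constraint:   # disjunction: at least one child must hold
        return any(satisfied(c, bundles) for c in constraint["any"]["constraints"])
    if "not" in constraint:   # negation: no child may hold
        return not any(satisfied(c, bundles) for c in constraint["not"]["constraints"])
    raise ValueError("unknown constraint type")
```

Children are evaluated before their parent, so a nested constraint such as any over two all blocks resolves from the innermost constraints outward.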

2.4.4.5. 依存関係の設定

Operator の依存関係を同等に満たすオプションが多数ある場合があります。Operator Lifecycle Manager (OLM) の依存関係リゾルバーは、要求された Operator の要件に最も適したオプションを判別します。Operator の作成者またはユーザーとして、依存関係の解決が明確になるようにこれらの選択方法を理解することは重要です。

2.4.4.5.1. カタログの優先順位

OpenShift Container Platform クラスターでは、OLM はカタログソースを読み取り、インストールに使用できる Operator を確認します。

CatalogSource オブジェクトの例

apiVersion: "operators.coreos.com/v1alpha1"

kind: "CatalogSource"

metadata:

name: "my-operators"

namespace: "operators"

spec:

sourceType: grpc

image: example.com/my/operator-index:v1

displayName: "My Operators"

priority: 100

CatalogSource オブジェクトには priority フィールドがあります。このフィールドは、依存関係のオプションを優先する方法を把握するためにリゾルバーによって使用されます。

カタログ設定を規定する 2 つのルールがあります。

- 優先順位の高いカタログにあるオプションは、優先順位の低いカタログのオプションよりも優先されます。

- 依存オブジェクトと同じカタログにあるオプションは他のカタログよりも優先されます。

2.4.4.5.2. チャネルの順序付け

カタログの Operator パッケージは、ユーザーが OpenShift Container Platform クラスターでサブスクライブできる更新チャネルのコレクションです。チャネルは、マイナーリリース (1.2、1.3) またはリリース頻度 (stable、fast) についての特定の更新ストリームを提供するために使用できます。

同じパッケージの Operator によって依存関係が満たされる可能性がありますが、その場合、異なるチャネルの Operator のバージョンによって満たされる可能性があります。たとえば、Operator のバージョン 1.2 は stable および fast チャネルの両方に存在する可能性があります。

それぞれのパッケージにはデフォルトのチャネルがあり、これは常にデフォルト以外のチャネルよりも優先されます。デフォルトチャネルのオプションが依存関係を満たさない場合には、オプションは、チャネル名の辞書式順序 (lexicographic order) で残りのチャネルから検討されます。

2.4.4.5.3. チャネル内での順序

ほとんどの場合、単一のチャネル内に依存関係を満たすオプションが複数あります。たとえば、1 つのパッケージおよびチャネルの Operator は同じセットの API を提供します。

ユーザーがサブスクリプションを作成すると、それらはどのチャネルから更新を受け取るかを示唆します。これにより、すぐにその 1 つのチャネルだけに検索が絞られます。ただし、チャネル内では、多くの Operator が依存関係を満たす可能性があります。

チャネル内では、更新グラフでより上位にある新規 Operator が優先されます。チャネルのヘッドが依存関係を満たす場合、これがまず試行されます。
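The preference rules for catalogs, channels, and position within a channel can be combined into a single sort key. The sketch below is an assumption-laden illustration: the option fields are hypothetical, and the relative order of the two catalog rules (same catalog first, then priority) is chosen for this example because the text does not state which rule wins.

```python
def preference_key(option, dependent_catalog):
    """Smaller tuples sort first, so smaller means more preferred."""
    return (
        0 if option["catalog"] == dependent_catalog else 1,       # same catalog as the dependent
        -option["priority"],                                      # higher catalog priority first
        0 if option["channel"] == option["default_channel"] else 1,  # default channel first
        option["channel"],                                        # then lexicographic channel order
        option["steps_from_head"],                                # closer to the channel head first
    )

def rank_options(options, dependent_catalog):
    """Return candidate Operators in the order the sketch would try them."""
    return sorted(options, key=lambda o: preference_key(o, dependent_catalog))
```

Encoding the rules as a tuple keeps each rule independent and makes the tie-breaking order explicit.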

2.4.4.5.4. その他の制約

OLM には、パッケージの依存関係で指定される制約のほかに、必要なユーザーの状態を表し、常にメンテナンスする必要のある依存関係の解決を適用するための追加の制約が含まれます。

2.4.4.5.4.1. サブスクリプションの制約

サブスクリプションの制約は、サブスクリプションを満たすことのできる Operator のセットをフィルターします。サブスクリプションは、依存関係リゾルバーについてのユーザー指定の制約です。それらは、クラスター上にない場合は新規 Operator をインストールすることを宣言するか、既存 Operator の更新された状態を維持することを宣言します。

2.4.4.5.4.2. パッケージの制約

namespace 内では、2 つの Operator が同じパッケージから取得されることはありません。

2.4.4.6. CRD のアップグレード

OLM は、単一のクラスターサービスバージョン (CSV) によって所有されている場合にはカスタムリソース定義 (CRD) をすぐにアップグレードします。CRD が複数の CSV によって所有されている場合、CRD は、以下の後方互換性の条件のすべてを満たす場合にアップグレードされます。

- 現行 CRD の既存の有効にされたバージョンすべてが新規 CRD に存在する。

- 検証が新規 CRD の検証スキーマに対して行われる場合、CRD の提供バージョンに関連付けられる既存インスタンスまたはカスタムリソースすべてが有効である。
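The two backward-compatibility conditions can be expressed as a short check. This is a sketch, not OLM code: CRDs are modelled as dictionaries shaped like their manifests, and `is_valid` is a hypothetical callback standing in for validation against the new CRD's schema.

```python
def served_versions(crd):
    """Names of the versions that the CRD currently serves."""
    return {v["name"] for v in crd["spec"]["versions"] if v.get("served", False)}

def crd_upgrade_allowed(current_crd, new_crd, owner_count, instances, is_valid):
    """Decide whether a CRD may be upgraded.

    owner_count -- number of CSVs that own the CRD
    instances   -- existing custom resources of the served versions
    is_valid    -- callable(instance, new_crd) -> bool (assumed helper)
    """
    if owner_count <= 1:
        return True  # a single owning CSV upgrades immediately
    new_names = {v["name"] for v in new_crd["spec"]["versions"]}
    # Condition 1: every served version of the current CRD exists in the new CRD.
    if not served_versions(current_crd) <= new_names:
        return False
    # Condition 2: every existing instance validates against the new schema.
    return all(is_valid(obj, new_crd) for obj in instances)
```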

2.4.4.7. 依存関係のベストプラクティス

依存関係を指定する際には、ベストプラクティスを考慮する必要があります。

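- Depend on APIs or a specific version range of Operators

Operators can add or remove APIs at any time; always specify an olm.gvk dependency on any APIs your Operator requires. The exception to this is if you are specifying olm.package constraints instead.

- Set a minimum version

The Kubernetes documentation on API changes describes what changes are allowed for Kubernetes-style Operators. These versioning conventions allow an Operator to update an API without bumping the API version, as long as the API is backwards-compatible.

For Operator dependencies, this means that knowing the API version of a dependency might not be enough to ensure the dependent Operator works as intended.

For example:

  - TestOperator v1.0.0 provides the v1alpha1 API version of the MyObject resource.

  - TestOperator v1.0.1 adds a new field spec.newfield to MyObject, but still at v1alpha1.

Your Operator might require the ability to write spec.newfield into the MyObject resource. An olm.gvk constraint alone is not enough for OLM to determine that you need TestOperator v1.0.1 and not TestOperator v1.0.0.

Whenever possible, if a specific Operator that provides an API is known ahead of time, specify an additional olm.package constraint to set a minimum.

- Omit a maximum version or allow a very wide range

Because Operators provide cluster-scoped resources such as API services and CRDs, an Operator that specifies a small window for a dependency might unnecessarily constrain updates for other consumers of that dependency.

Whenever possible, do not set a maximum version. Alternatively, set a very wide semantic range to prevent conflicts with other Operators. For example, >1.0.0 <2.0.0.

Unlike with conventional package managers, Operator authors explicitly encode that updates are safe through channels in OLM. If an update is available for an existing subscription, it is assumed that the Operator author is indicating that it can update from the previous version. Setting a maximum version for a dependency overrides the update stream of the author by unnecessarily truncating it at a particular upper bound.

Note: Cluster administrators cannot override dependencies set by an Operator author.

However, maximum versions can and should be set if there are known incompatibilities that must be avoided. Specific versions can be omitted with the version range syntax, for example >1.0.0 !1.2.1.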

2.4.4.8. 依存関係に関する注意事項

依存関係を指定する際には、考慮すべき注意事項があります。

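- No compound constraints (AND)

There is currently no method for specifying an AND relationship between constraints. In other words, there is no way to specify that one Operator depends on another Operator that both provides a given API and has version >1.1.0.

This means that when specifying a dependency such as:

dependencies:
- type: olm.package
  value:
    packageName: etcd
    version: ">3.1.0"
- type: olm.gvk
  value:
    group: etcd.database.coreos.com
    kind: EtcdCluster
    version: v1beta2

It would be possible for OLM to satisfy this with two Operators: one that provides EtcdCluster and one that has version >3.1.0. Whether that happens, or whether an Operator is selected that satisfies both constraints, depends on the order in which potential options are visited. Dependency preferences and ordering options are well-defined and can be reasoned about, but to exercise caution, Operators should stick to one mechanism or the other.

- Cross-namespace compatibility

OLM performs dependency resolution at the namespace scope. It is possible to get into an update deadlock if updating an Operator in one namespace would be an issue for an Operator in another namespace.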

2.4.4.9. 依存関係解決のシナリオ例

以下の例で、プロバイダー は CRD または API サービスを所有する Operator です。

Example: Deprecating dependent APIs

A and B are APIs (CRDs):

- The provider of A depends on B.

- The provider of B has a subscription.

- The provider of B updates to provide C but deprecates B.

This results in:

- B no longer has a provider.

- A no longer works.

This is a case OLM prevents with its upgrade strategy.

例: バージョンのデッドロック

A および B は API である:

- A のプロバイダーは B を必要とする。

- B のプロバイダーは A を必要とする。

- A のプロバイダーは (A2 を提供し、B2 を必要とするように) 更新し、A を非推奨にする。

- B のプロバイダーは (B2 を提供し、A2 を必要とするように) 更新し、B を非推奨にする。

OLM が B を同時に更新せずに A を更新しようとする場合や、その逆の場合、OLM は、新しい互換性のあるセットが見つかったとしても Operator の新規バージョンに進むことができません。

これは OLM がアップグレードストラテジーで回避するもう 1 つのケースです。

2.4.5. Operator グループ

以下では、OpenShift Container Platform で Operator Lifecycle Manager (OLM) を使用した Operator グループの使用について説明します。

2.4.5.1. Operator グループについて

Operator グループ は、 OperatorGroup リソースによって定義され、マルチテナント設定を OLM でインストールされた Operator に提供します。Operator グループは、そのメンバー Operator に必要な RBAC アクセスを生成するために使用するターゲット namespace を選択します。

The set of target namespaces is provided by a comma-delimited string stored in the olm.targetNamespaces annotation of a cluster service version (CSV). This annotation is applied to the CSV instances of member Operators and is projected into their deployments.

2.4.5.2. Operator グループメンバーシップ

Operator は、以下の条件が true の場合に Operator グループの メンバー とみなされます。

- Operator の CSV が Operator グループと同じ namespace にある。

- Operator の CSV のインストールモードは Operator グループがターゲットに設定する namespace のセットをサポートする。

CSV のインストールモードは InstallModeType フィールドおよびブール値の Supported フィールドで構成されます。CSV の仕様には、4 つの固有の InstallModeTypes のインストールモードのセットを含めることができます。

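| InstallMode type | Description |
|---|---|
| OwnNamespace | The Operator can be a member of an Operator group that selects its own namespace. |
| SingleNamespace | The Operator can be a member of an Operator group that selects one namespace. |
| MultiNamespace | The Operator can be a member of an Operator group that selects more than one namespace. |
| AllNamespaces | The Operator can be a member of an Operator group that selects all namespaces (the target namespace set is the empty string ""). |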

CSV の仕様が InstallModeType のエントリーを省略する場合、そのタイプは暗黙的にこれをサポートする既存エントリーによってサポートが示唆されない限り、サポートされないものとみなされます。
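The membership test can be sketched as follows, using the four InstallModeType values from the CSV API (OwnNamespace, SingleNamespace, MultiNamespace, AllNamespaces). This is a simplification for illustration: it maps a target namespace set to exactly one required install mode and ignores the implicit-support rule described above. The dictionary shapes are hypothetical.

```python
def required_install_mode(group_namespace, target_namespaces):
    """Map an Operator group's target namespace set to the install mode it needs."""
    targets = list(target_namespaces)
    if targets == [""]:
        return "AllNamespaces"      # the empty string selects every namespace
    if len(targets) > 1:
        return "MultiNamespace"
    if targets == [group_namespace]:
        return "OwnNamespace"
    return "SingleNamespace"

def is_member(csv, group):
    """A CSV is a member if it shares the group's namespace and supports its targets."""
    if csv["namespace"] != group["namespace"]:
        return False
    supported = {m["type"] for m in csv.get("installModes", []) if m.get("supported")}
    # Omitted install modes are treated as unsupported in this sketch.
    return required_install_mode(group["namespace"], group["targetNamespaces"]) in supported
```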

2.4.5.3. ターゲット namespace の選択

spec.targetNamespaces パラメーターを使用して Operator グループのターゲット namespace に名前を明示的に指定することができます。

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: my-group

namespace: my-namespace

spec:

targetNamespaces:

- my-namespace

Operator Lifecycle Manager (OLM) creates the following cluster roles for each Operator group:

- <operatorgroup_name>-admin

- <operatorgroup_name>-edit

- <operatorgroup_name>-view

When you manually create an Operator group, you must specify a unique name that does not conflict with the existing cluster roles or other Operator groups on the cluster.

または、spec.selector パラメーターでラベルセレクターを使用して namespace を指定することもできます。

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: my-group

namespace: my-namespace

spec:

selector:

cool.io/prod: "true"

spec.targetNamespaces で複数の namespace をリスト表示したり、spec.selector でラベルセレクターを使用したりすることは推奨されません。Operator グループの複数のターゲット namespace のサポートは今後のリリースで取り除かれる可能性があります。

spec.targetNamespaces と spec.selector の両方が定義されている場合、 spec.selector は無視されます。または、spec.selector と spec.targetNamespaces の両方を省略し、global Operator グループを指定できます。これにより、すべての namespace が選択されます。

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: my-group

namespace: my-namespace

The resolved set of selected namespaces is shown in the status.namespaces parameter of an Operator group. The status.namespaces of a global Operator group contains the empty string (""), which signals to a consuming Operator that it should watch all namespaces.
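The selection rules in this section can be summarised in a short function. It is a sketch rather than OLM's logic: the Operator group spec is a plain dictionary, the selector is assumed to use matchLabels, and `namespace_labels` is a hypothetical mapping from namespace name to its labels.

```python
def resolve_target_namespaces(spec, namespace_labels):
    """Return the namespaces an Operator group would report in status.namespaces."""
    targets = spec.get("targetNamespaces")
    if targets:
        # spec.targetNamespaces wins; spec.selector is ignored when both are set.
        return sorted(targets)
    wanted = (spec.get("selector") or {}).get("matchLabels")
    if wanted:
        return sorted(
            name for name, labels in namespace_labels.items()
            if all(labels.get(key) == value for key, value in wanted.items())
        )
    # Neither field set: a global Operator group, represented by the empty string.
    return [""]
```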

2.4.5.4. Operator グループの CSV アノテーション

Operator グループのメンバー CSV には以下のアノテーションがあります。

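| Annotation | Description |
|---|---|
| olm.operatorGroup | Contains the name of the Operator group. |
| olm.operatorNamespace | Contains the namespace of the Operator group. |
| olm.targetNamespaces | Contains a comma-delimited string that lists the target namespace selection of the Operator group. |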

olm.targetNamespaces 以外のすべてのアノテーションがコピーされた CSV と共に含まれます。olm.targetNamespaces アノテーションをコピーされた CSV で省略すると、テナント間のターゲット namespace の重複が回避されます。

2.4.5.5. 提供される API アノテーション

group/version/kind(GVK) は Kubernetes API の一意の識別子です。Operator グループによって提供される GVK についての情報が olm.providedAPIs アノテーションに表示されます。アノテーションの値は、コンマで区切られた <kind>.<version>.<group> で構成される文字列です。Operator グループのすべてのアクティブメンバーの CSV によって提供される CRD および API サービスの GVK が含まれます。
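Because the annotation value is a comma-delimited list of `<kind>.<version>.<group>` entries, it can be split into GVK tuples with a few lines of code. The helper name below is hypothetical; the only assumption is that the kind and version contain no dots, so the group is everything after the second dot.

```python
def parse_provided_apis(annotation):
    """Parse an olm.providedAPIs value into a set of (group, version, kind) tuples."""
    apis = set()
    for entry in annotation.split(","):
        entry = entry.strip()
        if not entry:
            continue
        # Split on the first two dots only; the group itself contains dots.
        kind, version, group = entry.split(".", 2)
        apis.add((group, version, kind))
    return apis


print(parse_provided_apis("PackageManifest.v1alpha1.packages.apps.redhat.com"))
```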

Review the following example of an OperatorGroup object with a single active member CSV that provides the PackageManifest resource:

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

annotations:

olm.providedAPIs: PackageManifest.v1alpha1.packages.apps.redhat.com

name: olm-operators

namespace: local

...

spec:

selector: {}

serviceAccount:

metadata:

creationTimestamp: null

targetNamespaces:

- local

status:

lastUpdated: 2019-02-19T16:18:28Z

namespaces:

- local

2.4.5.6. Role-based access control

When an Operator group is created, three cluster roles are generated. Each contains a single aggregation rule with a cluster role selector set to match a label, as shown below:

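| Cluster role | Label to match |
|---|---|
| <operatorgroup_name>-admin | olm.opgroup.permissions/aggregate-to-admin: <operatorgroup_name> |
| <operatorgroup_name>-edit | olm.opgroup.permissions/aggregate-to-edit: <operatorgroup_name> |
| <operatorgroup_name>-view | olm.opgroup.permissions/aggregate-to-view: <operatorgroup_name> |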


The following RBAC resources are generated when a CSV becomes an active member of an Operator group, as long as the CSV is watching all namespaces with the AllNamespaces install mode and is not in a failed state with reason InterOperatorGroupOwnerConflict:

- CRD からの各 API リソースのクラスターロール

- API サービスからの各 API リソースのクラスターロール

- 追加のロールおよびロールバインディング

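Cluster roles generated for each API resource from a CRD

| Cluster role | Settings |
|---|---|
| <kind>.<group>-<version>-admin | Verbs on <kind>: *. Aggregation labels: rbac.authorization.k8s.io/aggregate-to-admin: true, olm.opgroup.permissions/aggregate-to-admin: <operatorgroup_name> |
| <kind>.<group>-<version>-edit | Verbs on <kind>: create, update, patch, delete. Aggregation labels: rbac.authorization.k8s.io/aggregate-to-edit: true, olm.opgroup.permissions/aggregate-to-edit: <operatorgroup_name> |
| <kind>.<group>-<version>-view | Verbs on <kind>: get, list, watch. Aggregation labels: rbac.authorization.k8s.io/aggregate-to-view: true, olm.opgroup.permissions/aggregate-to-view: <operatorgroup_name> |
| <kind>.<group>-<version>-view-crdview | Verbs on apiextensions.k8s.io customresourcedefinitions <crd-name>: get. Aggregation labels: rbac.authorization.k8s.io/aggregate-to-view: true, olm.opgroup.permissions/aggregate-to-view: <operatorgroup_name> |

Cluster roles generated for each API resource from an API service

| Cluster role | Settings |
|---|---|
| <kind>.<group>-<version>-admin | Verbs on <kind>: *. Aggregation labels: rbac.authorization.k8s.io/aggregate-to-admin: true, olm.opgroup.permissions/aggregate-to-admin: <operatorgroup_name> |
| <kind>.<group>-<version>-edit | Verbs on <kind>: create, update, patch, delete. Aggregation labels: rbac.authorization.k8s.io/aggregate-to-edit: true, olm.opgroup.permissions/aggregate-to-edit: <operatorgroup_name> |
| <kind>.<group>-<version>-view | Verbs on <kind>: get, list, watch. Aggregation labels: rbac.authorization.k8s.io/aggregate-to-view: true, olm.opgroup.permissions/aggregate-to-view: <operatorgroup_name> |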

追加のロールおよびロールバインディング

- If the CSV defines exactly one target namespace that contains *, then a cluster role and corresponding cluster role binding are generated for each permission defined in the permissions field of the CSV. All resources generated are given the olm.owner: <csv_name> and olm.owner.namespace: <csv_namespace> labels.

- If the CSV does not define exactly one target namespace that contains *, then all roles and role bindings in the Operator namespace with the olm.owner: <csv_name> and olm.owner.namespace: <csv_namespace> labels are copied into the target namespace.

2.4.5.7. コピーされる CSV

OLM は、それぞれの Operator グループのターゲット namespace の Operator グループのすべてのアクティブな CSV のコピーを作成します。コピーされる CSV の目的は、ユーザーに対して、特定の Operator が作成されるリソースを監視するように設定されたターゲット namespace について通知することにあります。

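Copied CSVs have a status reason Copied and are updated to match the status of their source CSV. The olm.targetNamespaces annotation is stripped from copied CSVs before they are created on the cluster. Omitting the target namespace selection avoids the duplication of target namespaces between tenants.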

コピーされる CSV はそれらのソース CSV が存在しなくなるか、それらのソース CSV が属する Operator グループが、コピーされた CSV の namespace をターゲットに設定しなくなると削除されます。

デフォルトでは、disableCopiedCSVs フィールドは無効になっています。disableCopiedCSVs フィールドを有効にすると、OLM はクラスター上の既存のコピーされた CSV を削除します。disableCopiedCSVs フィールドが無効になると、OLM はコピーされた CSV を再度追加します。

- Disable the disableCopiedCSVs field:

$ cat << EOF | oc apply -f -
apiVersion: operators.coreos.com/v1
kind: OLMConfig
metadata:
  name: cluster
spec:
  features:
    disableCopiedCSVs: false
EOF

- Enable the disableCopiedCSVs field:

$ cat << EOF | oc apply -f -
apiVersion: operators.coreos.com/v1
kind: OLMConfig
metadata:
  name: cluster
spec:
  features:
    disableCopiedCSVs: true
EOF

2.4.5.8. 静的 Operator グループ

Operator グループはその spec.staticProvidedAPIs フィールドが true に設定されると 静的 になります。その結果、OLM は Operator グループの olm.providedAPIs アノテーションを変更しません。つまり、これを事前に設定することができます。これは、ユーザーが Operator グループを使用して namespace のセットでリソースの競合を防ぐ必要がある場合で、それらのリソースの API を提供するアクティブなメンバーの CSV がない場合に役立ちます。

The following is an example of an Operator group that protects Prometheus resources in all namespaces that carry the something.cool.io/cluster-monitoring: "true" label:

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: cluster-monitoring

namespace: cluster-monitoring

annotations:

olm.providedAPIs: Alertmanager.v1.monitoring.coreos.com,Prometheus.v1.monitoring.coreos.com,PrometheusRule.v1.monitoring.coreos.com,ServiceMonitor.v1.monitoring.coreos.com

spec:

staticProvidedAPIs: true

selector:

matchLabels:

something.cool.io/cluster-monitoring: "true"

2.4.5.9. Operator グループの交差部分

2 つの Operator グループは、それらのターゲット namespace セットの交差部分が空のセットではなく、olm.providedAPIs アノテーションで定義されるそれらの指定 API セットの交差部分が空のセットではない場合に、 交差部分のある指定 API があると見なされます。

これによって生じ得る問題として、交差部分のある指定 API を持つ複数の Operator グループは、一連の交差部分のある namespace で同じリソースに関して競合関係になる可能性があります。

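When checking intersection rules, an Operator group namespace is always included as part of its selected target namespaces.

Rules for intersection

Each time an active member CSV synchronizes, OLM queries the cluster for the set of intersecting provided APIs between the Operator group of the CSV and all others. OLM then checks if that set is an empty set:

- If true and the CSV's provided APIs are a subset of the Operator group's:

  - Continue transitioning.

- If true and the CSV's provided APIs are not a subset of the Operator group's:

  - If the Operator group is static:

    - Clean up any deployments that belong to the CSV.

    - Transition the CSV to a failed state with status reason CannotModifyStaticOperatorGroupProvidedAPIs.

  - If the Operator group is not static:

    - Replace the Operator group's olm.providedAPIs annotation with the union of itself and the CSV's provided APIs.

- If false and the CSV's provided APIs are not a subset of the Operator group's:

  - Clean up any deployments that belong to the CSV.

  - Transition the CSV to a failed state with status reason InterOperatorGroupOwnerConflict.

- If false and the CSV's provided APIs are a subset of the Operator group's:

  - If the Operator group is static:

    - Clean up any deployments that belong to the CSV.

    - Transition the CSV to a failed state with status reason CannotModifyStaticOperatorGroupProvidedAPIs.

  - If the Operator group is not static:

    - Replace the Operator group's olm.providedAPIs annotation with the difference between itself and the CSV's provided APIs.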

Operator グループによって生じる失敗状態は非終了状態です。
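The intersection rules form a small decision table, which can be sketched as a pure function over sets. This is an illustration only: the function name, the string outcomes, and the representation of APIs as sets of strings are assumptions, and deployment cleanup is reduced to the returned failure reason.

```python
def sync_member_csv(intersecting_apis, csv_apis, group_apis, static):
    """Return (outcome, new olm.providedAPIs set) for one CSV synchronization.

    intersecting_apis -- provided APIs shared with other Operator groups
    csv_apis          -- APIs provided by the member CSV
    group_apis        -- current olm.providedAPIs of the Operator group
    static            -- True if spec.staticProvidedAPIs is set
    """
    no_intersection = not intersecting_apis
    is_subset = csv_apis <= group_apis

    if no_intersection and is_subset:
        return "continue", group_apis
    if no_intersection and not is_subset:
        if static:
            return "failed: CannotModifyStaticOperatorGroupProvidedAPIs", group_apis
        return "annotation updated", group_apis | csv_apis  # union
    if not is_subset:
        return "failed: InterOperatorGroupOwnerConflict", group_apis
    if static:
        return "failed: CannotModifyStaticOperatorGroupProvidedAPIs", group_apis
    return "annotation updated", group_apis - csv_apis      # difference
```

Returning the new annotation value instead of mutating the group keeps the four branches easy to compare against the rules above.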

以下のアクションは、Operator グループが同期するたびに実行されます。

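- The set of provided APIs from active member CSVs is calculated from the cluster. Note that copied CSVs are ignored.

- The cluster set is compared to olm.providedAPIs, and if olm.providedAPIs contains any extra APIs, then those APIs are pruned.

- All CSVs that provide the same APIs across all namespaces are requeued. This notifies conflicting CSVs in intersecting groups that their conflict has possibly been resolved, either through resizing or through deletion of the conflicting CSV.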

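2.4.5.10. Limitations for multitenant Operator management

OpenShift Container Platform provides limited support for simultaneously installing different versions of an Operator on the same cluster. Operator Lifecycle Manager (OLM) installs Operators multiple times in different namespaces. One constraint of this is that the Operator's API versions must be the same.

Operators are control plane extensions because they use CustomResourceDefinition objects (CRDs), which are global resources in Kubernetes. Different major versions of an Operator often have incompatible CRDs. This makes them incompatible to install simultaneously in different namespaces on a cluster.

All tenants, or namespaces, share the same control plane of a cluster. Therefore, tenants in a multitenant cluster also share global CRDs, which limits the scenarios in which different instances of the same Operator can be used in parallel on the same cluster.

The supported scenarios are the following:

- Operators of different versions that ship the exact same CRD definition (in the case of versioned CRDs, the exact same set of versions)

- Operators of different versions that do not ship a CRD, and instead have their CRD available in a separate bundle on OperatorHub

All other scenarios are not supported, because the integrity of the cluster data cannot be guaranteed if multiple competing or overlapping CRDs from different Operator versions are reconciled on the same cluster.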
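As a rough illustration of the two supported scenarios, the check below compares the CRDs shipped by two Operator versions. The function name and the dictionary representation (CRD name mapped to its full definition) are assumptions for this sketch; it is not a check that OLM performs.

```python
from typing import Dict


def can_install_side_by_side(crds_a: Dict[str, dict], crds_b: Dict[str, dict]) -> bool:
    """crds_*: CRD name -> full CRD definition shipped by one Operator version."""
    if not crds_a and not crds_b:
        # Neither version ships a CRD; the CRDs come from a separate bundle.
        return True
    # Otherwise both versions must ship exactly the same definitions, which
    # includes the same set of versions for versioned CRDs.
    return crds_a == crds_b


v1_only = {"foos.example.com": {"versions": ["v1"]}}
v1_and_v2 = {"foos.example.com": {"versions": ["v1", "v2"]}}

print(can_install_side_by_side(v1_only, dict(v1_only)))  # identical definitions
print(can_install_side_by_side(v1_only, v1_and_v2))      # different version sets
```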

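2.4.5.11. Troubleshooting Operator groups

Membership

An install plan's namespace must contain only one Operator group. When attempting to generate a cluster service version (CSV) in a namespace, an install plan considers an Operator group invalid in the following scenarios:

- No Operator groups exist in the install plan's namespace.

- Multiple Operator groups exist in the install plan's namespace.

- An incorrect or non-existent service account name is specified in the Operator group.

If an install plan encounters an invalid Operator group, the CSV is not generated and the InstallPlan resource continues to install with a relevant message. For example, the following message is provided if more than one Operator group exists in the same namespace:

    attenuated service account query failed - more than one operator group(s) are managing this namespace count=2

Here, count= specifies the number of Operator groups in the namespace.

If the install modes of a CSV do not support the target namespace selection of the Operator group in its namespace, the CSV transitions to a failed state with the reason UnsupportedOperatorGroup. CSVs in a failed state for this reason transition to pending after either the target namespace selection of the Operator group changes to a supported configuration, or the install modes of the CSV are modified to support the target namespace selection.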
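The three invalid Operator group scenarios can be expressed as a short validation routine. This is a sketch under stated assumptions: the function, the `service_account_name` key, and the two short labels are hypothetical; only the message for multiple Operator groups is taken from the text above.

```python
from typing import Dict, List, Optional, Set

# Message quoted in the text for the multiple Operator groups case.
MULTIPLE_GROUPS_MESSAGE = (
    "attenuated service account query failed - more than one operator group(s) "
    "are managing this namespace count={count}"
)


def invalid_operator_group_reason(
    groups: List[Dict], service_accounts: Set[str]
) -> Optional[str]:
    """Return why the namespace's Operator group is invalid, or None if valid."""
    if not groups:
        return "no-operator-group"  # hypothetical label, not an OLM message
    if len(groups) > 1:
        return MULTIPLE_GROUPS_MESSAGE.format(count=len(groups))
    account = groups[0].get("service_account_name")
    if account is not None and account not in service_accounts:
        return "invalid-service-account"  # hypothetical label, not an OLM message
    return None


print(invalid_operator_group_reason([{"name": "og-1"}, {"name": "og-2"}], set()))
```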

2.4.6. Multitenancy and Operator colocation

This guide outlines multitenancy and Operator colocation in Operator Lifecycle Manager (OLM).

2.4.6.1. Colocation of Operators in a namespace

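Operator Lifecycle Manager (OLM) handles OLM-managed Operators that are installed in the same namespace, meaning that their Subscription resources are colocated in the same namespace, as related Operators. Even if they are not actually related, OLM considers their states, such as their version and update policy, when any one of them is updated.

This default behavior manifests in two ways:

- InstallPlan resources of pending updates include the ClusterServiceVersion (CSV) resources of all other Operators that are in the same namespace.

- All Operators in the same namespace share the same update policy. For example, if one Operator is set to manual updates, the update policies of all other Operators are also set to manual.

These scenarios can lead to the following issues:

- It becomes hard to reason about install plans for Operator updates, because they define many more resources than just the updated Operator.

- It becomes impossible to have some Operators in a namespace update automatically while others are updated manually, which is a common desire for cluster administrators.

These issues usually surface because, when you install Operators with the OpenShift Container Platform web console, the default behavior installs Operators that support the All namespaces install mode into the default openshift-operators global namespace.

As a cluster administrator, you can bypass this default behavior manually by using the following workflow:

- Create a namespace for the installation of the Operator.

- Create a custom global Operator group, which is an Operator group that watches all namespaces. Associating this Operator group with the namespace you just created makes the installation namespace a global namespace, so Operators installed there are available in all namespaces.

- Install the desired Operator in the installation namespace.

If the Operator has dependencies, the dependencies are automatically installed in the pre-created namespace. As a result, it is then valid for the dependency Operators to have the same update policy and shared install plans. For a detailed procedure, see "Installing global Operators in custom namespaces".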
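The three workflow steps correspond to three resources: a namespace, an Operator group, and a subscription. The sketch below builds them as plain dictionaries and prints them as JSON. The namespace name, resource names, package, and channel are hypothetical, and leaving spec.targetNamespaces unset to select all namespaces is an assumption about how a global Operator group is expressed; follow the referenced procedure for the authoritative manifests.

```python
import json

INSTALL_NAMESPACE = "global-operators"  # hypothetical namespace name


def global_install_manifests(package: str, channel: str, source: str) -> list:
    """Build the namespace, Operator group, and subscription for one Operator."""
    namespace = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": INSTALL_NAMESPACE},
    }
    operator_group = {
        "apiVersion": "operators.coreos.com/v1",
        "kind": "OperatorGroup",
        "metadata": {"name": "global-operatorgroup", "namespace": INSTALL_NAMESPACE},
        # Assumption: no spec.targetNamespaces means the group watches all namespaces.
        "spec": {},
    }
    subscription = {
        "apiVersion": "operators.coreos.com/v1alpha1",
        "kind": "Subscription",
        "metadata": {"name": package, "namespace": INSTALL_NAMESPACE},
        "spec": {
            "name": package,
            "channel": channel,
            "source": source,
            "sourceNamespace": "openshift-marketplace",
            # The update policy is shared by Operators in this namespace only.
            "installPlanApproval": "Manual",
        },
    }
    return [namespace, operator_group, subscription]


for manifest in global_install_manifests("example-operator", "stable", "redhat-operators"):
    print(json.dumps(manifest, indent=2))
```

Keeping one Operator, or one group of related Operators, per installation namespace is what lets each one have its own update policy.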

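2.4.7. Operator conditions

This guide outlines how Operator Lifecycle Manager (OLM) uses Operator conditions.

2.4.7.1. About Operator conditions

As part of its role in managing the lifecycle of an Operator, Operator Lifecycle Manager (OLM) infers the state of an Operator from the state of the Kubernetes resources that define the Operator. While this approach provides some assurance that an Operator is in a given state, there are many cases where an Operator needs to communicate information to OLM that cannot be inferred otherwise. OLM can then use this information to better manage the lifecycle of the Operator.

OLM provides a custom resource definition (CRD) called OperatorCondition that allows Operators to communicate conditions to OLM. There is a set of supported conditions that influence how OLM manages the Operator when they are present in the Spec.Conditions array of an OperatorCondition resource.

By default, the Spec.Conditions array is not present in an OperatorCondition object until it is added, either by a user or as a result of custom Operator logic.

2.4.7.2. Supported conditions

Operator Lifecycle Manager (OLM) supports the following Operator conditions.

2.4.7.2.1. Upgradeable condition

The Upgradeable Operator condition prevents an existing cluster service version (CSV) from being replaced by a newer version of the CSV. This condition is useful in the following cases:

- An Operator is about to start a critical process and must not be upgraded until the process is completed.

- An Operator is performing a migration of custom resources (CRs) that must be completed before the Operator is ready to be upgraded.

Setting the Upgradeable Operator condition to the False value does not avoid pod disruption. If you must ensure that your pods are not disrupted, see "Using pod disruption budgets to specify the number of pods that must be up" and "Graceful termination" in the "Additional resources" section.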
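The gating effect of the Upgradeable condition can be sketched as a lookup over the conditions array. The function name and the dictionary layout are assumptions for illustration; the sketch only mirrors the behavior described here and is not how OLM is implemented.

```python
def upgrade_blocked(operator_condition: dict) -> bool:
    """Return True if an Upgradeable condition with status "False" is present."""
    # The conditions array is absent by default, so treat a missing array as empty.
    conditions = operator_condition.get("spec", {}).get("conditions") or []
    for condition in conditions:
        if condition.get("type") == "Upgradeable" and condition.get("status") == "False":
            return True
    return False


migrating = {
    "spec": {
        "conditions": [
            {"type": "Upgradeable", "status": "False", "reason": "migration"},
        ]
    }
}

print(upgrade_blocked(migrating))     # condition present with status "False"
print(upgrade_blocked({"spec": {}}))  # no conditions array
```

Note that the status is the string "False", not a boolean, which matches how condition statuses are written in the resource.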

Example Upgradeable Operator condition

    apiVersion: operators.coreos.com/v1
    kind: OperatorCondition
    metadata:
      name: my-operator
      namespace: operators
    spec:
      conditions:
      - type: Upgradeable
        status: "False"
        reason: "migration"
        message: "The Operator is performing a migration."
        lastTransitionTime: "2020-08-24T23:15:55Z"

2.4.8. Operator Lifecycle Manager metrics

2.4.8.1. Exposed metrics

Operator Lifecycle Manager (OLM) exposes certain OLM-specific resources for use by the Prometheus-based OpenShift Container Platform cluster monitoring stack.

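| Name | Description |
|---|---|
| | Number of catalog sources. |
| | State of a catalog source. The value |
| | When reconciling a cluster service version (CSV), for example when it is not installed, the CSV version |
| | Number of CSVs successfully registered. |
| | When reconciling a CSV, the CSV version |
| | Monotonic count of CSV upgrades. |
| | Number of install plans. |
| | Count of warnings generated by resources, such as deprecated resources, included in an install plan. |
| | The duration of a dependency resolution attempt. |
| | Number of subscriptions. |
| | Monotonic count of subscription syncs. |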

2.4.9. Webhook management in Operator Lifecycle Manager

Webhooks allow Operator authors to intercept, modify, and accept or reject resources before they are saved to the object store and handled by the Operator controller. Operator Lifecycle Manager (OLM) can manage the lifecycle of these webhooks when they are shipped alongside an Operator.

For details on how an Operator developer can define webhooks for an Operator, and for considerations when running on OLM, see Defining cluster service versions (CSVs).

2.5. Understanding OperatorHub

2.5.1. About OperatorHub

OperatorHub is the web console interface in OpenShift Container Platform that cluster administrators use to discover and install Operators. With one click, an Operator can be pulled from its off-cluster source, installed and subscribed on the cluster, and made ready for engineering teams to self-service manage the product across deployment environments by using Operator Lifecycle Manager (OLM).

Cluster administrators can choose from catalogs grouped into the following categories:

| Category | Description |
|---|---|
| Red Hat Operators | Red Hat products packaged and shipped by Red Hat. Supported by Red Hat. |
| Certified Operators | Products from leading independent software vendors (ISVs). Red Hat partners with ISVs to package and ship them. Supported by the ISV. |
| Red Hat Marketplace | Certified software that can be purchased from Red Hat Marketplace. |
| Community Operators | Optionally visible software maintained by relevant representatives in the redhat-openshift-ecosystem/community-operators-prod/operators GitHub repository. No official support. |
| Custom Operators | Operators that you add to the cluster yourself. If you have not added any custom Operators, the Custom category does not appear in OperatorHub in the web console. |

Operators on OperatorHub are packaged to run on OLM. This includes a YAML file called a cluster service version (CSV) that contains all of the CRDs, RBAC rules, deployments, and container images required to install and securely run the Operator. It also contains user-visible information, such as a description of its features and the supported Kubernetes versions.

The Operator SDK can help developers package their Operators for use on OLM and OperatorHub. If you have a commercial application that you want to make accessible to your customers, get it included by using the certification workflow provided on the Red Hat Partner Connect portal at connect.redhat.com.

2.5.2. OperatorHub architecture

The OperatorHub UI component is driven by the Marketplace Operator, which by default runs in the openshift-marketplace namespace on OpenShift Container Platform.

2.5.2.1. OperatorHub custom resource

The Marketplace Operator manages an OperatorHub custom resource (CR) named cluster, which manages the default CatalogSource objects provided with OperatorHub. You can modify this resource to enable or disable the default catalogs, which is useful when configuring OpenShift Container Platform in restricted network environments.
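To illustrate how enabling and disabling default catalogs might combine, the sketch below computes the enabled default catalogs from an OperatorHub spec. It assumes that a per-source entry in sources overrides disableAllDefaultSources, and it assumes the four default catalog names listed in DEFAULT_SOURCES; treat both as assumptions to verify against your cluster version rather than as the Marketplace Operator's implementation.

```python
# Assumed names of the default catalog sources.
DEFAULT_SOURCES = (
    "redhat-operators",
    "certified-operators",
    "redhat-marketplace",
    "community-operators",
)


def enabled_default_sources(spec: dict) -> list:
    """Return the default catalogs left enabled by an OperatorHub spec."""
    disable_all = spec.get("disableAllDefaultSources", False)
    # Assumption: a per-source entry takes precedence over disableAllDefaultSources.
    overrides = {
        source["name"]: source.get("disabled", False)
        for source in spec.get("sources", [])
    }
    return [name for name in DEFAULT_SOURCES if not overrides.get(name, disable_all)]


spec = {
    "disableAllDefaultSources": True,
    "sources": [{"name": "community-operators", "disabled": False}],
}
print(enabled_default_sources(spec))
```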

Example OperatorHub custom resource

    apiVersion: config.openshift.io/v1
    kind: OperatorHub
    metadata:
      name: cluster
    spec:
      disableAllDefaultSources: true
      sources: [
        {
          name: "community-operators",
          disabled: false
        }
      ]

2.6. Red Hat-provided Operator catalogs

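Red Hat provides several Operator catalogs that are included with OpenShift Container Platform by default.

As of OpenShift Container Platform 4.11, the default Red Hat-provided Operator catalogs are released in the file-based catalog format. The default Red Hat-provided Operator catalogs for OpenShift Container Platform 4.6 through 4.10 were released in the deprecated SQLite database format.

The opm subcommands, flags, and functionality related to the SQLite database format are also deprecated and will be removed in a future release. These features are still supported and must be used for catalogs that use the deprecated SQLite database format.

Many of the opm subcommands and flags for working with the SQLite database format, such as opm index prune, do not work with the file-based catalog format. For more information about working with file-based catalogs, see Managing custom catalogs, Operator Framework packaging format, and Mirroring images for a disconnected installation using the oc-mirror plugin.

2.6.1. About Operator catalogs

An Operator catalog is a repository of metadata that Operator Lifecycle Manager (OLM) can query to discover and install Operators and their dependencies on a cluster. OLM always installs Operators from the latest version of a catalog.

An index image, based on the Operator bundle format, is a containerized snapshot of a catalog. It is an immutable artifact that contains the database of pointers to a set of Operator manifest content. A catalog can reference an index image to source its content for OLM on the cluster.

As catalogs are updated, the latest versions of Operators change, and older versions might be removed or altered. In addition, when OLM runs on an OpenShift Container Platform cluster in a restricted network environment, it cannot access the catalogs directly from the internet to pull the latest content.

As a cluster administrator, you can create your own custom index image, either based on a Red Hat-provided catalog or from scratch, and use it to source the catalog content on the cluster. Creating and updating your own index image lets you customize the set of Operators available on the cluster, while also avoiding the restricted network environment issues described above.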