Backup and restore

Backing up and restoring your OpenShift Container Platform cluster

Abstract

Chapter 1. Backup and restore

1.1. Control plane backup and restore operations

As a cluster administrator, you might need to stop an OpenShift Container Platform cluster for a period and restart it later. For example, you might need to perform maintenance on the cluster or want to reduce resource costs. In OpenShift Container Platform, you can perform a graceful shutdown of a cluster so that you can easily restart the cluster later.

You must back up etcd data before shutting down a cluster; etcd is the key-value store for OpenShift Container Platform, which persists the state of all resource objects. An etcd backup plays a crucial role in disaster recovery. In OpenShift Container Platform, you can also replace an unhealthy etcd member.

When you want to get your cluster running again, restart the cluster gracefully.

A cluster’s certificates expire one year after the installation date. You can shut down a cluster and expect it to restart gracefully while the certificates are still valid. Although the cluster automatically retrieves the expired control plane certificates, you must still approve the certificate signing requests (CSRs).

You might run into several situations where OpenShift Container Platform does not work as expected, such as:

- You have a cluster that is not functional after the restart because of unexpected conditions, such as node failure or network connectivity issues.

- You have deleted something critical in the cluster by mistake.

- You have lost the majority of your control plane hosts, leading to etcd quorum loss.

You can always recover from a disaster situation by restoring your cluster to its previous state using the saved etcd snapshots.

1.2. Application backup and restore operations

As a cluster administrator, you can back up and restore applications running on OpenShift Container Platform by using the OpenShift API for Data Protection (OADP).

OADP backs up and restores Kubernetes resources and internal images, at the granularity of a namespace, by using the version of Velero that is appropriate for the version of OADP you install, according to the table in Downloading the Velero CLI tool. OADP backs up and restores persistent volumes (PVs) by using snapshots or Restic. For details, see OADP features.

1.2.1. OADP requirements

OADP has the following requirements:

- You must be logged in as a user with a cluster-admin role.

- You must have object storage for storing backups, such as one of the following storage types:

- OpenShift Data Foundation

- Amazon Web Services

- Microsoft Azure

- Google Cloud

- S3-compatible object storage

- IBM Cloud® Object Storage S3

If you want to use CSI backup on OCP 4.11 and later, install OADP 1.1.x.

OADP 1.0.x does not support CSI backup on OCP 4.11 and later. OADP 1.0.x includes Velero 1.7.x and expects the API group snapshot.storage.k8s.io/v1beta1, which is not present on OCP 4.11 and later.

The CloudStorage API for S3 storage is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

To back up PVs with snapshots, you must have cloud storage that has a native snapshot API or supports Container Storage Interface (CSI) snapshots, such as the following providers:

- Amazon Web Services

- Microsoft Azure

- Google Cloud

- CSI snapshot-enabled cloud storage, such as Ceph RBD or Ceph FS

If you do not want to back up PVs by using snapshots, you can use Restic, which is installed by the OADP Operator by default.

1.2.2. Backing up and restoring applications

You back up applications by creating a Backup custom resource (CR). See Creating a Backup CR. You can configure the following backup options:

- Creating backup hooks to run commands before or after the backup operation

- Scheduling backups

- Backing up applications with File System Backup: Kopia or Restic

You restore application backups by creating a Restore CR. See Creating a Restore CR. You can configure restore hooks to run commands in init containers or in the application container during the restore operation.
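As a rough illustration of what a Backup CR looks like, the following sketch writes a minimal manifest to a file so that you can review it before applying it. The function name, the backup name, and the application namespace are hypothetical. The openshift-adp namespace is assumed to be where OADP is installed, and the spec is deliberately reduced to a single field, so treat this as a starting point rather than a complete configuration.

```shell
#!/usr/bin/env bash
# Sketch only: write a minimal Velero Backup manifest to a file for review.
# Assumptions: OADP runs in the openshift-adp namespace; names are placeholders.
write_backup_manifest() {
  local name="$1"     # name of the Backup CR (hypothetical)
  local app_ns="$2"   # application namespace to back up (hypothetical)
  local out="$3"      # file to write the manifest to
  cat > "$out" <<EOF
apiVersion: velero.io/v1
kind: Backup
metadata:
  name: ${name}
  namespace: openshift-adp
spec:
  includedNamespaces:
  - ${app_ns}
EOF
}
```

After reviewing the generated file, you could create the CR with a command such as oc apply -f <file>.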

Chapter 2. Shutting down the cluster gracefully

This document describes the process to gracefully shut down your cluster. You might need to temporarily shut down your cluster for maintenance reasons, or to save on resource costs.

2.1. Prerequisites

Take an etcd backup prior to shutting down the cluster.

Important

It is important to take an etcd backup before performing this procedure so that your cluster can be restored if you encounter any issues when restarting the cluster.

For example, the following conditions can cause the restarted cluster to malfunction:

- etcd data corruption during shutdown

- Node failure due to hardware

- Network connectivity issues

If your cluster fails to recover, follow the steps to restore to a previous cluster state.

2.2. Shutting down the cluster

You can shut down your cluster in a graceful manner so that it can be restarted at a later date.

You can shut down a cluster until a year from the installation date and expect it to restart gracefully. After a year from the installation date, the cluster certificates expire. However, you might need to manually approve the pending certificate signing requests (CSRs) to recover kubelet certificates when the cluster restarts.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

- You have taken an etcd backup.

Procedure

If you are shutting the cluster down for an extended period, determine the date on which certificates expire by running the following command:

$ oc -n openshift-kube-apiserver-operator get secret kube-apiserver-to-kubelet-signer -o jsonpath='{.metadata.annotations.auth\.openshift\.io/certificate-not-after}'

Example output

2022-08-05T14:37:50Z

To ensure that the cluster can restart gracefully, plan to restart it on or before the specified date. As the cluster restarts, the process might require you to manually approve the pending certificate signing requests (CSRs) to recover kubelet certificates.
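To turn the expiry timestamp into a planning figure, a small helper along the following lines can report how many whole days remain. This is a sketch that assumes GNU date (the -d option); the function name is illustrative and is not part of the oc CLI.

```shell
#!/usr/bin/env bash
# Sketch only: whole days between a reference time and a certificate expiry.
# Assumes GNU date, which accepts ISO 8601 timestamps through -d.
days_until_expiry() {
  local not_after="$1"                                  # for example 2022-08-05T14:37:50Z
  local now="${2:-$(date -u +%Y-%m-%dT%H:%M:%SZ)}"      # defaults to the current UTC time
  local end_s now_s
  end_s=$(date -u -d "$not_after" +%s) || return 1
  now_s=$(date -u -d "$now" +%s) || return 1
  # Integer division truncates toward zero; a negative result means already expired.
  echo $(( (end_s - now_s) / 86400 ))
}
```

For example, you could pass the output of the command above as the first argument and omit the second argument to compare against the current time.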

Mark all the nodes in the cluster as unschedulable. You can do this from your cloud provider’s web console, or by running the following loop:

$ for node in $(oc get nodes -o jsonpath='{.items[*].metadata.name}'); do echo ${node} ; oc adm cordon ${node} ; done

Example output

ci-ln-mgdnf4b-72292-n547t-master-0
node/ci-ln-mgdnf4b-72292-n547t-master-0 cordoned
ci-ln-mgdnf4b-72292-n547t-master-1
node/ci-ln-mgdnf4b-72292-n547t-master-1 cordoned
ci-ln-mgdnf4b-72292-n547t-master-2
node/ci-ln-mgdnf4b-72292-n547t-master-2 cordoned
ci-ln-mgdnf4b-72292-n547t-worker-a-s7ntl
node/ci-ln-mgdnf4b-72292-n547t-worker-a-s7ntl cordoned
ci-ln-mgdnf4b-72292-n547t-worker-b-cmc9k
node/ci-ln-mgdnf4b-72292-n547t-worker-b-cmc9k cordoned
ci-ln-mgdnf4b-72292-n547t-worker-c-vcmtn
node/ci-ln-mgdnf4b-72292-n547t-worker-c-vcmtn cordoned

Evacuate the pods using the following method:

$ for node in $(oc get nodes -l node-role.kubernetes.io/worker -o jsonpath='{.items[*].metadata.name}'); do echo ${node} ; oc adm drain ${node} --delete-emptydir-data --ignore-daemonsets=true --timeout=15s --force ; done

Shut down all of the nodes in the cluster. You can do this from your cloud provider’s web console, or by running the following loop. Shutting down the nodes by using one of these methods allows pods to terminate gracefully, which reduces the chance for data corruption.

Note

Ensure that the control plane node with the API VIP assigned is the last node processed in the loop. Otherwise, the shutdown command fails.

$ for node in $(oc get nodes -o jsonpath='{.items[*].metadata.name}'); do oc debug node/${node} -- chroot /host shutdown -h 1; done

-h 1 indicates how long, in minutes, this process lasts before the control plane nodes are shut down. For large-scale clusters with 10 nodes or more, set to -h 10 or longer to make sure all the compute nodes have time to shut down first.

Example output

Starting pod/ip-10-0-130-169us-east-2computeinternal-debug ...
To use host binaries, run `chroot /host`
Shutdown scheduled for Mon 2021-09-13 09:36:17 UTC, use 'shutdown -c' to cancel.
Removing debug pod ...
Starting pod/ip-10-0-150-116us-east-2computeinternal-debug ...
To use host binaries, run `chroot /host`
Shutdown scheduled for Mon 2021-09-13 09:36:29 UTC, use 'shutdown -c' to cancel.

Note

It is not necessary to drain control plane nodes of the standard pods that ship with OpenShift Container Platform prior to shutdown. Cluster administrators are responsible for ensuring a clean restart of their own workloads after the cluster is restarted. If you drained control plane nodes prior to shutdown because of custom workloads, you must mark the control plane nodes as schedulable before the cluster will be functional again after restart.
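The shutdown loop processes nodes in whatever order oc get nodes returns them, so the requirement that the node holding the API VIP comes last needs explicit handling. One way to sketch this is a helper that moves a named node to the end of the list. The function name is hypothetical, and how you identify the node that currently holds the API VIP depends on your platform and is not covered here.

```shell
#!/usr/bin/env bash
# Sketch only: print node names one per line, moving the node given as the
# first argument to the end of the list. Returns 1 if that node is not listed.
order_with_last() {
  local last="$1" found=0 node
  shift
  for node in "$@"; do
    if [ "$node" = "$last" ]; then
      found=1
    else
      printf '%s\n' "$node"
    fi
  done
  if [ "$found" -eq 1 ]; then
    printf '%s\n' "$last"
  else
    echo "node ${last} not found in list" >&2
    return 1
  fi
}

# Possible use with the shutdown loop (vip_node is a value you determine yourself):
#   nodes=$(oc get nodes -o jsonpath='{.items[*].metadata.name}')
#   for node in $(order_with_last "$vip_node" $nodes); do
#     oc debug node/${node} -- chroot /host shutdown -h 1
#   done
```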

Shut off any cluster dependencies that are no longer needed, such as external storage or an LDAP server. Be sure to consult your vendor’s documentation before doing so.

Important

If you deployed your cluster on a cloud-provider platform, do not shut down, suspend, or delete the associated cloud resources. If you delete the cloud resources of a suspended virtual machine, OpenShift Container Platform might not restore successfully.

Chapter 3. Restarting the cluster gracefully

This document describes the process to restart your cluster after a graceful shutdown.

Even though the cluster is expected to be functional after the restart, the cluster might not recover due to unexpected conditions, for example:

- etcd data corruption during shutdown

- Node failure due to hardware

- Network connectivity issues

If your cluster fails to recover, follow the steps to restore to a previous cluster state.

3.1. Prerequisites

- You have gracefully shut down your cluster.

3.2. Restarting the cluster

You can restart your cluster after it has been shut down gracefully.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

- This procedure assumes that you gracefully shut down the cluster.

Procedure

Turn on the control plane nodes.

If you are using the admin.kubeconfig from the cluster installation and the API virtual IP address (VIP) is up, complete the following steps:

- Set the KUBECONFIG environment variable to the admin.kubeconfig path.

- For each control plane node in the cluster, run the following command:

$ oc adm uncordon <node>

If you do not have access to your admin.kubeconfig credentials, complete the following steps:

- Use SSH to connect to a control plane node.

- Copy the localhost-recovery.kubeconfig file to the /root directory.

- Use that file to run the following command for each control plane node in the cluster:

$ oc adm uncordon <node>

Power on any cluster dependencies, such as external storage or an LDAP server.

Start all cluster machines.

Use the appropriate method for your cloud environment to start the machines, for example, from your cloud provider’s web console.

Wait approximately 10 minutes before continuing to check the status of control plane nodes.

Verify that all control plane nodes are ready.

$ oc get nodes -l node-role.kubernetes.io/master

The control plane nodes are ready if the status is Ready, as shown in the following output:

NAME                           STATUS   ROLES                  AGE   VERSION
ip-10-0-168-251.ec2.internal   Ready    control-plane,master   75m   v1.30.3
ip-10-0-170-223.ec2.internal   Ready    control-plane,master   75m   v1.30.3
ip-10-0-211-16.ec2.internal    Ready    control-plane,master   75m   v1.30.3

If the control plane nodes are not ready, then check whether there are any pending certificate signing requests (CSRs) that must be approved.

Get the list of current CSRs:

$ oc get csr

Review the details of a CSR to verify that it is valid:

$ oc describe csr <csr_name>

<csr_name> is the name of a CSR from the list of current CSRs.

Approve each valid CSR:

$ oc adm certificate approve <csr_name>

After the control plane nodes are ready, verify that all worker nodes are ready.

$ oc get nodes -l node-role.kubernetes.io/worker

The worker nodes are ready if the status is Ready, as shown in the following output:

NAME                           STATUS   ROLES    AGE   VERSION
ip-10-0-179-95.ec2.internal    Ready    worker   64m   v1.30.3
ip-10-0-182-134.ec2.internal   Ready    worker   64m   v1.30.3
ip-10-0-250-100.ec2.internal   Ready    worker   64m   v1.30.3

If the worker nodes are not ready, then check whether there are any pending certificate signing requests (CSRs) that must be approved.

Get the list of current CSRs:

$ oc get csr

Review the details of a CSR to verify that it is valid:

$ oc describe csr <csr_name>

<csr_name> is the name of a CSR from the list of current CSRs.

Approve each valid CSR:

$ oc adm certificate approve <csr_name>
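When many CSRs are listed, it can help to narrow the list to the pending ones before reviewing each with oc describe csr. The following sketch filters the text output of oc get csr --no-headers and assumes that the condition is the last column of that output. The function name is illustrative. It only lists names and does not approve anything, because each CSR should still be reviewed for validity first.

```shell
#!/usr/bin/env bash
# Sketch only: read `oc get csr --no-headers` output on stdin and print the
# names of CSRs whose last column (assumed to be CONDITION) is exactly "Pending".
pending_csrs() {
  awk '$NF == "Pending" { print $1 }'
}

# Possible use:
#   oc get csr --no-headers | pending_csrs
```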

After the control plane and compute nodes are ready, mark all the nodes in the cluster as schedulable by running the following command:

$ for node in $(oc get nodes -o jsonpath='{.items[*].metadata.name}'); do echo ${node} ; oc adm uncordon ${node} ; done

Verify that the cluster started properly.

Check that there are no degraded cluster Operators.

$ oc get clusteroperators

Check that there are no cluster Operators with the DEGRADED condition set to True.

NAME                      VERSION   AVAILABLE   PROGRESSING   DEGRADED   SINCE
authentication            4.17.0    True        False         False      59m
cloud-credential          4.17.0    True        False         False      85m
cluster-autoscaler        4.17.0    True        False         False      73m
config-operator           4.17.0    True        False         False      73m
console                   4.17.0    True        False         False      62m
csi-snapshot-controller   4.17.0    True        False         False      66m
dns                       4.17.0    True        False         False      76m
etcd                      4.17.0    True        False         False      76m
...

Check that all nodes are in the Ready state:

$ oc get nodes

Check that the status for all nodes is Ready.

NAME                           STATUS   ROLES                  AGE   VERSION
ip-10-0-168-251.ec2.internal   Ready    control-plane,master   82m   v1.30.3
ip-10-0-170-223.ec2.internal   Ready    control-plane,master   82m   v1.30.3
ip-10-0-179-95.ec2.internal    Ready    worker                 70m   v1.30.3
ip-10-0-182-134.ec2.internal   Ready    worker                 70m   v1.30.3
ip-10-0-211-16.ec2.internal    Ready    control-plane,master   82m   v1.30.3
ip-10-0-250-100.ec2.internal   Ready    worker                 69m   v1.30.3

If the cluster did not start properly, you might need to restore your cluster using an etcd backup. For more information, see "Restoring to a previous cluster state".
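On clusters with many Operators and nodes, scanning the tables by eye is error-prone. The two filters below are a sketch of how the same checks could be automated against the --no-headers text output. They assume the column order shown in the example output above (DEGRADED in column 5, STATUS in column 2) and that every row has a value in each column. The function names are illustrative.

```shell
#!/usr/bin/env bash
# Sketch only: both filters read command output on stdin.

# Print cluster Operators whose DEGRADED column (assumed to be column 5) is True.
degraded_operators() {
  awk '$5 == "True" { print $1 }'
}

# Print nodes whose STATUS column (assumed to be column 2) is not exactly Ready.
# A cordoned node shows Ready,SchedulingDisabled and is therefore also listed.
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Possible use:
#   oc get clusteroperators --no-headers | degraded_operators
#   oc get nodes --no-headers | not_ready_nodes
```

Empty output from both filters corresponds to the healthy state described above.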

Chapter 4. OADP Application backup and restore

4.1. Introduction to OpenShift API for Data Protection

Use OpenShift API for Data Protection (OADP) to safeguard applications, application-related cluster resources, persistent volumes, and internal images on OpenShift Container Platform. OADP backs up containerized applications and virtual machines (VMs). This helps you ensure disaster recovery.

However, OADP does not serve as a disaster recovery solution for etcd or OpenShift Operators.

OADP support is provided to customer workload namespaces, and cluster scope resources.

Full cluster backup and restore are not supported.

4.1.1. OpenShift API for Data Protection APIs

OpenShift API for Data Protection (OADP) provides APIs that enable multiple approaches to customizing backups and preventing the inclusion of unnecessary or inappropriate resources.

OADP provides the following APIs. See the Additional resources section for more details.

- Backup

- Restore

- Schedule

- BackupStorageLocation

- VolumeSnapshotLocation

4.1.1.1. Support for OpenShift API for Data Protection

Review the OADP support matrix for version compatibility with OpenShift Container Platform releases and lifecycle policy information, including Extended Update Support (EUS) options.

| Version | OpenShift Container Platform version | General availability | Full support ends | Maintenance ends | Extended Update Support (EUS) | Extended Update Support Term 2 (EUS Term 2) |

| 1.4 | | 10 Jul 2024 | Release of 1.5 | Release of 1.6 | 27 Jun 2026 EUS must be on OpenShift Container Platform 4.16 | 27 Jun 2027 EUS Term 2 must be on OpenShift Container Platform 4.16 |

| 1.3 | | 29 Nov 2023 | 10 Jul 2024 | Release of 1.5 | 31 Oct 2025 EUS must be on OpenShift Container Platform 4.14 | 31 Oct 2026 EUS Term 2 must be on OpenShift Container Platform 4.14 |

4.1.1.1.1. Unsupported versions of the OADP Operator

| Version | General availability | Full support ended | Maintenance ended |

| 1.2 | 14 Jun 2023 | 29 Nov 2023 | 10 Jul 2024 |

| 1.1 | 01 Sep 2022 | 14 Jun 2023 | 29 Nov 2023 |

| 1.0 | 09 Feb 2022 | 01 Sep 2022 | 14 Jun 2023 |

For more details about EUS, see Extended Update Support.

For more details about EUS Term 2, see Extended Update Support Term 2.

4.2. OADP release notes

4.2.1. OADP 1.4 release notes

The release notes for OpenShift API for Data Protection (OADP) 1.4 describe new features and enhancements, deprecated features, product recommendations, known issues, and resolved issues.

For additional information about OADP, see OpenShift API for Data Protection (OADP) FAQ.

4.2.1.1. OADP 1.4.9 release notes

The OpenShift API for Data Protection (OADP) 1.4.9 release notes list resolved issues.

4.2.1.1.1. Resolved issues

- File system backups no longer create PodVolumeBackup CRs for excluded PVCs

Before this update, when performing a file system (FS) backup with defaultVolumesToFsBackup: true and explicitly excluding persistentvolumeclaims (PVCs) using includedResources, PodVolumeBackup resources were still created for these excluded PVCs. With this release, the backup logic was updated to correctly identify and skip the creation of PodVolumeBackup resources for persistentvolumeclaims types when they are explicitly excluded from the backup configuration. As a result, PodVolumeBackup CRs are no longer created for excluded PVCs.

- S3 storage uses proxy values with insecureSkipTLSVerify: "true"

Before this update, when running image registry backups to S3 storage in a proxy-required environment, setting insecureSkipTLSVerify: "true" caused the system to ignore the configured proxy, leading to backups hanging or failing. With this release, the backup logic has been updated to properly respect proxy settings. As a result, backups using backupImages: true now complete successfully regardless of whether insecureSkipTLSVerify is set to "true" or "false".

- DPA reconciliation fails with a clear error when a VSL references a missing credential key

Before this update, the DataProtectionApplication (DPA) did not validate that the VolumeSnapshotLocation (VSL) credential.key existed in the referenced secret, so a DPA could reconcile successfully with a wrong key name. This allowed misconfigured VSL credentials to pass reconciliation but later caused backups to fail validation with an error indicating that the secret was missing data for the specified key. With this release, DPA reconciliation checks that the credential.key exists in the referenced secret and fails with a clear error message when it does not exist.

- Restricted permissions for Velero cloud credentials

Before this update, the Velero /credentials/cloud secret was mounted with incorrect permissions, making it world-readable. As a consequence, any process or user with access to the container file system could read sensitive cloud credential data. With this release, the Velero secret default permissions were changed to 0640. As a result, access to the credentials file is limited to the intended owner or group.

- Improved error when imagestream backups run without oadp-<bsl_name>-<bsl_provider>-registry-secret

Before this update, when the backup and the Backup Storage Location (BSL) are managed outside the scope of the Data Protection Application (DPA), the DPA did not create the relevant oadp-<bsl_name>-<bsl_provider>-registry-secret. As a result, the OpenShift Velero plugin panicked on the imagestream backup with the following panic error:

024-02-27T10:46:50.028951744Z time="2024-02-27T10:46:50Z" level=error msg="Error backing up item" backup=openshift-adp/<backup name> error="error executing custom action (groupResource=imagestreams.image.openshift.io, namespace=<BSL Name>, name=postgres): rpc error: code = Aborted desc = plugin panicked: runtime error: index out of range with length 1, stack trace: goroutine 94…

With this release, if the required secret is missing, the plugin returns an error message indicating missing oadp-<bsl_name>-<bsl_provider>-registry-secret.

- Single-node OpenShift clusters no longer crash due to premature CRD sync before API initialization

Before this update, the controller crashed during image-based upgrade (IBU) due to missing OpenShift Container Platform custom resource definitions (CRDs) before they were fully initialized. As a consequence, this failure delayed DataProtectionApplication (DPA) reconciliation during IBU upgrade by 8 minutes. This release resolves this issue by requiring the controller to wait for OpenShift Container Platform CRDs to load before starting in the IBU environment on single-node OpenShift, while also disabling leader election. This change shortens the DPA reconciliation window and improves the overall upgrade duration for single-node OpenShift clusters.

- OADP 1.4.9 fixes the following CVEs

4.2.1.2. OADP 1.4.8 release notes

OpenShift API for Data Protection (OADP) 1.4.8 is a Container Grade Only (CGO) release, which is released to refresh the health grades of the containers. No code was changed in the product itself compared to that of OADP 1.4.7. OADP 1.4.8 fixes several Common Vulnerabilities and Exposures (CVEs).

4.2.1.2.1. Resolved issues

- OADP 1.4.8 fixes the following CVEs

4.2.1.3. OADP 1.4.7 release notes

OpenShift API for Data Protection (OADP) 1.4.7 is a Container Grade Only (CGO) release, which is released to refresh the health grades of the containers. No code was changed in the product itself compared to that of OADP 1.4.6.

4.2.1.4. OADP 1.4.6 release notes

OpenShift API for Data Protection (OADP) 1.4.6 is a Container Grade Only (CGO) release, which is released to refresh the health grades of the containers. No code was changed in the product itself compared to that of OADP 1.4.5.

4.2.1.5. OADP 1.4.5 release notes

The OpenShift API for Data Protection (OADP) 1.4.5 release notes list new features and resolved issues.

4.2.1.5.1. New features

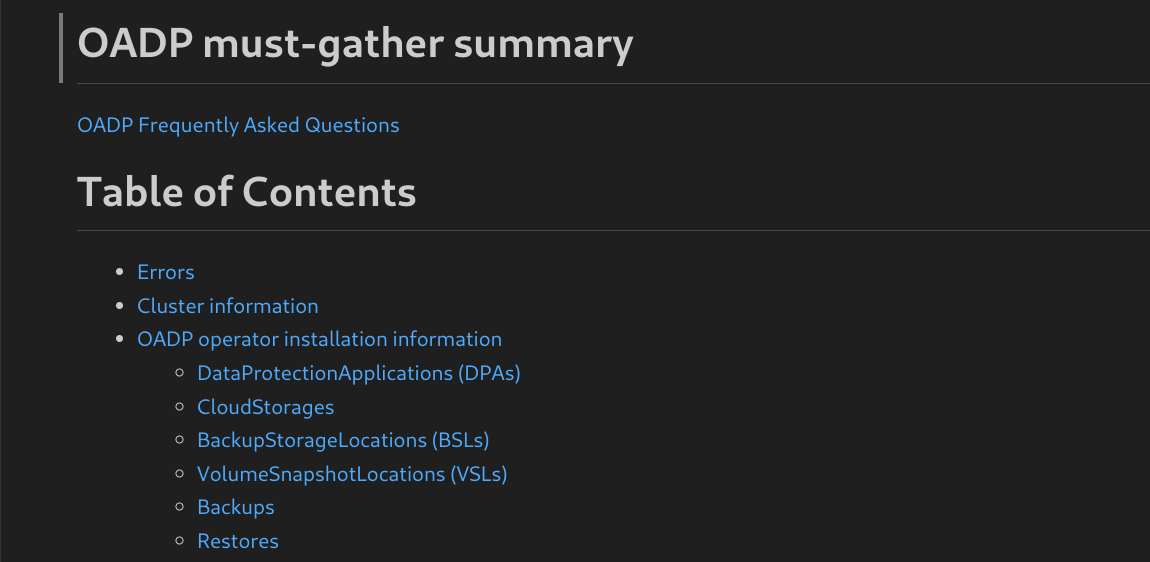

- Collecting logs with the must-gather tool has been improved with a Markdown summary

You can collect logs and information about OpenShift API for Data Protection (OADP) custom resources by using the must-gather tool. The must-gather data must be attached to all customer cases. This tool generates a Markdown output file with the collected information, which is located in the clusters directory of the must-gather logs.

4.2.1.5.2. Resolved issues

- OADP 1.4.5 fixes the following CVEs

4.2.1.6. OADP 1.4.4 release notes

OpenShift API for Data Protection (OADP) 1.4.4 is a Container Grade Only (CGO) release, which is released to refresh the health grades of the containers. No code was changed in the product itself compared to that of OADP 1.4.3. One known issue was identified.

4.2.1.6.1. Known issues

- Issue with restoring stateful applications

When you restore a stateful application that uses the azurefile-csi storage class, the restore operation remains in the Finalizing phase.

4.2.1.7. OADP 1.4.3 release notes

The OpenShift API for Data Protection (OADP) 1.4.3 release notes list the following new feature.

4.2.1.7.1. New features

- Notable changes in the kubevirt velero plugin in version 0.7.1

With this release, the kubevirt velero plugin has been updated to version 0.7.1. Notable improvements include the following bug fix and new features:

- Virtual machine instances (VMIs) are no longer ignored from backup when the owner VM is excluded.

- Object graphs now include all extra objects during backup and restore operations.

- Optionally generated labels are now added to new firmware Universally Unique Identifiers (UUIDs) during restore operations.

- Switching VM run strategies during restore operations is now possible.

- Clearing a MAC address by label is now supported.

- The restore-specific checks during the backup operation are now skipped.

- The VirtualMachineClusterInstancetype and VirtualMachineClusterPreference custom resource definitions (CRDs) are now supported.

4.2.1.8. OADP 1.4.2 release notes

The OpenShift API for Data Protection (OADP) 1.4.2 release notes list new features, resolved issues, and known issues.

4.2.1.8.1. New features

- Backing up different volumes in the same namespace by using the VolumePolicy feature is now possible

With this release, Velero provides resource policies to back up different volumes in the same namespace by using the VolumePolicy feature. The supported VolumePolicy feature to back up different volumes includes skip, snapshot, and fs-backup actions.

- File system backup and data mover can now use short-term credentials

File system backup and data mover can now use short-term credentials such as AWS Security Token Service (STS) and Google Cloud WIF. With this support, backup is successfully completed without any PartiallyFailed status.

4.2.1.8.2. Resolved issues

- DPA now reports errors if VSL contains an incorrect provider value

Previously, if the provider of a Volume Snapshot Location (VSL) spec was incorrect, the Data Protection Application (DPA) reconciled successfully. With this update, DPA reports errors and requests a valid provider value.

- Data Mover restore is successful irrespective of using different OADP namespaces for backup and restore

Previously, when backup operation was executed by using OADP installed in one namespace but was restored by using OADP installed in a different namespace, the Data Mover restore failed. With this update, Data Mover restore is now successful.

- SSE-C backup works with the calculated MD5 of the secret key

Previously, backup failed with the following error:

Requests specifying Server Side Encryption with Customer provided keys must provide the client calculated MD5 of the secret key.

With this update, missing Server-Side Encryption with Customer-Provided Keys (SSE-C) base64 and MD5 hash are now fixed. As a result, SSE-C backup works with the calculated MD5 of the secret key. In addition, incorrect errorhandling for the customerKey size is also fixed.

For a complete list of all issues resolved in this release, see the list of OADP 1.4.2 resolved issues in Jira.

4.2.1.8.3. Known issues

- The nodeSelector spec is not supported for the Data Mover restore action

When a Data Protection Application (DPA) is created with the nodeSelector field set in the nodeAgent parameter, Data Mover restore partially fails instead of completing the restore operation.

- The S3 storage does not use proxy environment when TLS skip verify is specified

In the image registry backup, the S3 storage does not use the proxy environment when the insecureSkipTLSVerify parameter is set to true.

- Kopia does not delete artifacts after backup expiration

Even after you delete a backup, Kopia does not delete the volume artifacts from the ${bucket_name}/kopia/$openshift-adp on the S3 location after the backup expired. For more information, see "About Kopia repository maintenance".

4.2.1.9. OADP 1.4.1 release notes

The OpenShift API for Data Protection (OADP) 1.4.1 release notes list new features, resolved issues, and known issues.

4.2.1.9.1. New features

- New DPA fields to update client qps and burst

You can now change Velero Server Kubernetes API queries per second and burst values by using the new Data Protection Application (DPA) fields. The new DPA fields are spec.configuration.velero.client-qps and spec.configuration.velero.client-burst, which both default to 100.

- Enabling non-default algorithms with Kopia

With this update, you can now configure the hash, encryption, and splitter algorithms in Kopia to select non-default options to optimize performance for different backup workloads.

To configure these algorithms, set the env variable of a velero pod in the podConfig section of the DataProtectionApplication (DPA) configuration. If this variable is not set, or an unsupported algorithm is chosen, Kopia will default to its standard algorithms.

4.2.1.9.2. Resolved issues

- Restoring a backup without pods is now successful

Previously, restoring a backup without pods and having

StorageClass VolumeBindingModeset asWaitForFirstConsumer, resulted in thePartiallyFailedstatus with an error:fail to patch dynamic PV, err: context deadline exceeded. With this update, patching dynamic PV is skipped and restoring a backup is successful without anyPartiallyFailedstatus.- PodVolumeBackup CR now displays correct message

Previously, the

PodVolumeBackupcustom resource (CR) generated an incorrect message, which was:get a podvolumebackup with status "InProgress" during the server starting, mark it as "Failed". With this update, the message produced is now:found a podvolumebackup with status "InProgress" during the server starting, mark it as "Failed".- Overriding imagePullPolicy is now possible with DPA

Previously, OADP set the

imagePullPolicyparameter toAlwaysfor all images. With this update, OADP checks if each image containssha256orsha512digest, then it setsimagePullPolicytoIfNotPresent; otherwiseimagePullPolicyis set toAlways. You can now override this policy by using the newspec.containerImagePullPolicyDPA field.- OADP Velero can now retry updating the restore status if initial update fails

Previously, OADP Velero failed to update the restored CR status. This left the status at

InProgressindefinitely. Components which relied on the backup and restore CR status to determine the completion would fail. With this update, the restore CR status for a restore correctly proceeds to theCompletedorFailedstatus.- Restoring BuildConfig Build from a different cluster is successful without any errors

Previously, when performing a restore of the

BuildConfigBuild resource from a different cluster, the application generated an error on TLS verification to the internal image registry. The resulting error wasfailed to verify certificate: x509: certificate signed by unknown authorityerror. With this update, the restore of theBuildConfigbuild resources to a different cluster can proceed successfully without generating thefailed to verify certificateerror.- Restoring an empty PVC is successful

Previously, downloading data failed while restoring an empty persistent volume claim (PVC). It failed with the following error:

data path restore failed: Failed to run kopia restore: Unable to load snapshot : snapshot not foundWith this update, the downloading of data proceeds to correct conclusion when restoring an empty PVC and the error message is not generated.

- There is no Velero memory leak in CSI and DataMover plugins

Previously, a Velero memory leak was caused by using the CSI and DataMover plugins. When the backup ended, the Velero plugin instance was not deleted and the memory leak consumed memory until an

Out of Memory(OOM) condition was generated in the Velero pod. With this update, there is no resulting Velero memory leak when using the CSI and DataMover plugins.- Post-hook operation does not start before the related PVs are released

Previously, due to the asynchronous nature of the Data Mover operation, a post-hook might be attempted before the Data Mover persistent volume claim (PVC) releases the persistent volumes (PVs) of the related pods. This problem would cause the backup to fail with a

PartiallyFailedstatus. With this update, the post-hook operation is not started until the related PVs are released by the Data Mover PVC, eliminating thePartiallyFailedbackup status.- Deploying a DPA works as expected in namespaces with more than 37 characters

Previously, when you installed the OADP Operator in a namespace with more than 37 characters to create a new DPA, labeling the "cloud-credentials" Secret failed and the DPA reported the following error:

The generated label name is too long.

With this update, creating a DPA does not fail in namespaces with more than 37 characters in the name.

- Restore is successfully completed by overriding the timeout error

Previously, in a large scale environment, the restore operation would result in a PartiallyFailed status with the error: fail to patch dynamic PV, err: context deadline exceeded. With this update, the resourceTimeout Velero server argument is used to override this timeout error, resulting in a successful restore.
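As a rough sketch of how the resourceTimeout value might be raised on an existing DPA, the following shell function applies a merge patch. The function name, the DPA name, and the example value are illustrative assumptions, and the field path spec.configuration.velero.resourceTimeout should be confirmed against the DPA API of your OADP version.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set the Velero resourceTimeout on an existing DPA.
# Arguments: <dpa_name> <timeout, for example 10m> [namespace]
set_resource_timeout() {
  local dpa="$1" timeout="$2" ns="${3:-openshift-adp}" patch
  # Build a merge patch that touches only the resourceTimeout field.
  patch=$(printf '{"spec":{"configuration":{"velero":{"resourceTimeout":"%s"}}}}' "$timeout")
  oc patch dataprotectionapplication "$dpa" -n "$ns" --type merge -p "$patch"
}
```

For example, set_resource_timeout dpa-sample 10m would request a ten-minute timeout on a DPA named dpa-sample.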

For a complete list of all issues resolved in this release, see the list of OADP 1.4.1 resolved issues in Jira.

4.2.1.9.3. Known issues

- Cassandra application pods enter into the CrashLoopBackoff status after restoring OADP

After OADP restores, the Cassandra application pods might enter the CrashLoopBackoff status. To work around this problem, delete the StatefulSet pods that are returning the CrashLoopBackoff error state after restoring OADP. The StatefulSet controller then recreates these pods and they run normally.

- Deployment referencing ImageStream is not restored properly leading to corrupted pod and volume contents

During a File System Backup (FSB) restore operation, a Deployment resource referencing an ImageStream is not restored properly. The restored pod that runs the FSB and the postHook is terminated prematurely.

During the restore operation, the OpenShift Container Platform controller updates the spec.template.spec.containers[0].image field in the Deployment resource with an updated ImageStreamTag hash. The update triggers the rollout of a new pod, terminating the pod on which velero runs the FSB along with the post-hook. For more information about image stream trigger, see Triggering updates on image stream changes.

The workaround for this behavior is a two-step restore process:

Perform a restore excluding the Deployment resources, for example:

$ velero restore create <RESTORE_NAME> \
  --from-backup <BACKUP_NAME> \
  --exclude-resources=deployment.apps

Once the first restore is successful, perform a second restore by including these resources, for example:

$ velero restore create <RESTORE_NAME> \
  --from-backup <BACKUP_NAME> \
  --include-resources=deployment.apps
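The two-step workaround can be scripted so that the second restore starts only after the first one reports Completed. The following is a minimal sketch, not a supported tool: the function names, the restore name suffixes, the NS and POLL_SECONDS variables, and the use of the openshift-adp namespace are assumptions for illustration.

```shell
#!/usr/bin/env bash
# Print the current phase of a Restore CR (empty if not yet set).
restore_phase() {
  oc get restores.velero.io "$1" -n "${NS:-openshift-adp}" -o jsonpath='{.status.phase}'
}

# Poll until the restore is Completed; stop early on a failed phase.
wait_for_restore() {
  local name="$1" tries="${2:-120}" phase
  while [ "$tries" -gt 0 ]; do
    phase=$(restore_phase "$name")
    case "$phase" in
      Completed) return 0 ;;
      Failed|PartiallyFailed|FailedValidation)
        echo "restore $name: $phase" >&2; return 1 ;;
    esac
    sleep "${POLL_SECONDS:-5}"
    tries=$((tries - 1))
  done
  echo "restore $name: timed out" >&2
  return 1
}

# Step 1 restores everything except Deployments; step 2 restores only Deployments.
two_step_restore() {
  local backup="$1" prefix="$2" ns="${NS:-openshift-adp}"
  velero restore create "${prefix}-no-deployments" -n "$ns" \
    --from-backup "$backup" --exclude-resources=deployment.apps || return 1
  wait_for_restore "${prefix}-no-deployments" || return 1
  velero restore create "${prefix}-deployments" -n "$ns" \
    --from-backup "$backup" --include-resources=deployment.apps || return 1
  wait_for_restore "${prefix}-deployments"
}
```

A call such as two_step_restore <BACKUP_NAME> my-restore would create the restores my-restore-no-deployments and my-restore-deployments in sequence.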

4.2.1.10. OADP 1.4.0 release notes

The OpenShift API for Data Protection (OADP) 1.4.0 release notes list resolved issues and known issues.

4.2.1.10.1. Resolved issues

- Restore works correctly in OpenShift Container Platform 4.16

Previously, while restoring the deleted application namespace, the restore operation partially failed with the resource name may not be empty error in OpenShift Container Platform 4.16. With this update, restore works as expected in OpenShift Container Platform 4.16.

- Data Mover backups work properly in the OpenShift Container Platform 4.16 cluster

Previously, Velero was using the earlier version of SDK where the Spec.SourceVolumeMode field did not exist. As a consequence, Data Mover backups failed in the OpenShift Container Platform 4.16 cluster on the external snapshotter with version 4.2. With this update, external snapshotter is upgraded to version 7.0 and later. As a result, backups do not fail in the OpenShift Container Platform 4.16 cluster.

For a complete list of all issues resolved in this release, see the list of OADP 1.4.0 resolved issues in Jira.

4.2.1.10.2. Known issues

- Backup fails when checksumAlgorithm is not set for MCG

While performing a backup of any application with Noobaa as the backup location, if the checksumAlgorithm configuration parameter is not set, backup fails. To fix this problem, if you do not provide a value for checksumAlgorithm in the Backup Storage Location (BSL) configuration, an empty value is added. The empty value is only added for BSLs that are created using Data Protection Application (DPA) custom resource (CR), and this value is not added if BSLs are created using any other method.

For a complete list of all known issues in this release, see the list of OADP 1.4.0 known issues in Jira.

4.2.2. Upgrading OADP 1.3 to 1.4

Learn how to upgrade your existing OADP 1.3 installation to OADP 1.4.

Always upgrade to the next minor version. Do not skip versions. To update to a later version, upgrade only one channel at a time. For example, to upgrade from OpenShift API for Data Protection (OADP) 1.1 to 1.3, upgrade first to 1.2, and then to 1.3.

4.2.2.1. Changes from OADP 1.3 to 1.4

The Velero server has been updated from version 1.12 to 1.14. Although the Data Protection Application (DPA) remains unchanged, several plugins and default configuration values were updated.

The changes are as follows:

- The velero-plugin-for-csi code is now available in the Velero code, which means an init container is no longer required for the plugin.

- Velero changed the client Burst default from 30 to 100 and the QPS default from 20 to 100.

- The velero-plugin-for-aws plugin updated the default value of the spec.config.checksumAlgorithm field in BackupStorageLocation objects (BSLs) from "" (no checksum calculation) to the CRC32 algorithm. For more information, see Velero plugins for AWS Backup Storage Location. The checksum algorithm types are known to work only with AWS. Several S3 providers require the md5sum to be disabled by setting the checksum algorithm to "". Confirm md5sum algorithm support and configuration with your storage provider.

In OADP 1.4, the default value for BSLs created within DPA for this configuration is "". This default value means that the md5sum is not checked, which is consistent with OADP 1.3. For BSLs created within DPA, update it by using the spec.backupLocations[].velero.config.checksumAlgorithm field in the DPA. If your BSLs are created outside DPA, you can update this configuration by using spec.config.checksumAlgorithm in the BSLs.

4.2.2.2. Backing up the DPA configuration

Save your current DataProtectionApplication (DPA) configuration to a file. Backing up your configuration helps ensure you can restore your settings if any issues occur during the upgrade process.

Procedure

Save your current DPA configuration by running the following command:

Example command

$ oc get dpa -n openshift-adp -o yaml > dpa.orig.backup
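If you script this step, it can help to stop when the saved file turns out to be empty. The following is a small sketch under that assumption; the function name and its default arguments are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: save the DPAs in a namespace to a file and
# fail if nothing was written.
# Arguments: [namespace] [output file]
backup_dpa() {
  local ns="${1:-openshift-adp}" out="${2:-dpa.orig.backup}"
  oc get dpa -n "$ns" -o yaml > "$out" || return 1
  # -s is true only when the file exists and is not empty.
  [ -s "$out" ] || { echo "DPA backup $out is empty" >&2; return 1; }
  echo "$out"
}
```

Calling backup_dpa with no arguments writes dpa.orig.backup in the current directory, matching the example command above.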

4.2.2.3. Upgrading the OADP Operator

Upgrade the OpenShift API for Data Protection (OADP) Operator to ensure your installation has the latest enhancements and bug fixes.

Procedure

- Change your subscription channel for the OADP Operator from stable-1.3 to stable-1.4.

- Wait for the Operator and containers to update and restart.
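The channel change can also be made from the command line by patching the Operator Lifecycle Manager Subscription object. This is a sketch only: the function name is hypothetical, and the Subscription name depends on how the Operator was installed, so list the subscriptions in the namespace first to find yours.

```shell
#!/usr/bin/env bash
# Hypothetical helper: point an OLM Subscription at a new channel.
# Find the subscription name with:
#   oc get subscriptions.operators.coreos.com -n openshift-adp
# Arguments: <subscription_name> [channel] [namespace]
switch_oadp_channel() {
  local sub="$1" channel="${2:-stable-1.4}" ns="${3:-openshift-adp}"
  oc patch subscriptions.operators.coreos.com "$sub" -n "$ns" \
    --type merge -p "{\"spec\":{\"channel\":\"${channel}\"}}"
}
```

A merge patch is used so that only spec.channel changes and the rest of the Subscription is left as it is.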

4.2.2.4. Converting DPA to the new version for OADP 1.4.0

To upgrade from OADP 1.3 to 1.4, no Data Protection Application (DPA) changes are required.

4.2.2.5. Verifying the upgrade

Verify the upgrade to ensure the integrity of the installation and the availability of backup storage locations.

Procedure

Verify the installation by viewing the OpenShift API for Data Protection (OADP) resources by running the following command:

$ oc get all -n openshift-adp

Example output

NAME                                                     READY   STATUS    RESTARTS   AGE
pod/oadp-operator-controller-manager-67d9494d47-6l8z8    2/2     Running   0          2m8s
pod/restic-9cq4q                                         1/1     Running   0          94s
pod/restic-m4lts                                         1/1     Running   0          94s
pod/restic-pv4kr                                         1/1     Running   0          95s
pod/velero-588db7f655-n842v                              1/1     Running   0          95s

NAME                                                       TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
service/oadp-operator-controller-manager-metrics-service   ClusterIP   172.30.70.140   <none>        8443/TCP   2m8s

NAME                    DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
daemonset.apps/restic   3         3         3       3            3           <none>          96s

NAME                                               READY   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/oadp-operator-controller-manager   1/1     1            1           2m9s
deployment.apps/velero                             1/1     1            1           96s

NAME                                                          DESIRED   CURRENT   READY   AGE
replicaset.apps/oadp-operator-controller-manager-67d9494d47   1         1         1       2m9s
replicaset.apps/velero-588db7f655                             1         1         1       96s

Verify that the DataProtectionApplication (DPA) is reconciled by running the following command:

$ oc get dpa dpa-sample -n openshift-adp -o jsonpath='{.status}'

Example output

{"conditions":[{"lastTransitionTime":"2023-10-27T01:23:57Z","message":"Reconcile complete","reason":"Complete","status":"True","type":"Reconciled"}]}

- Verify the type is set to Reconciled.

Verify the backup storage location and confirm that the PHASE is Available by running the following command:

$ oc get backupstoragelocations.velero.io -n openshift-adp

Example output

NAME           PHASE       LAST VALIDATED   AGE     DEFAULT
dpa-sample-1   Available   1s               3d16h   true
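The DPA and BSL checks above can be combined into one pass or fail check. The following sketch assumes a single DPA whose name you pass in and treats any BSL phase other than Available as a failure; the function name is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: succeed only if the DPA is reconciled and every BSL is Available.
# Arguments: <dpa_name> [namespace]
verify_oadp() {
  local dpa="$1" ns="${2:-openshift-adp}" reconciled phases phase
  # Read the status of the condition whose type is Reconciled.
  reconciled=$(oc get dpa "$dpa" -n "$ns" \
    -o jsonpath='{.status.conditions[?(@.type=="Reconciled")].status}')
  [ "$reconciled" = "True" ] || { echo "DPA $dpa is not reconciled" >&2; return 1; }

  # Collect the phase of every backup storage location in the namespace.
  phases=$(oc get backupstoragelocations.velero.io -n "$ns" \
    -o jsonpath='{.items[*].status.phase}')
  [ -n "$phases" ] || { echo "no backup storage locations found" >&2; return 1; }
  for phase in $phases; do
    [ "$phase" = "Available" ] || { echo "BSL phase is $phase" >&2; return 1; }
  done
}
```

For the example resources shown above, the call would be verify_oadp dpa-sample.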

4.3. OADP performance

4.3.1. OADP recommended network settings

Keep a stable network across your OpenShift nodes, AWS Simple Storage Service (S3) storage, and cloud environments. Meeting these recommended network settings helps you ensure successful OpenShift API for Data Protection (OADP) backup and restore operations, even when using remote AWS S3 buckets.

4.3.1.1. OADP network requirements

For a supported experience with OpenShift API for Data Protection (OADP), you should have a stable and resilient network across OpenShift nodes, AWS Simple Storage Service (S3)-compatible object storage, and in supported cloud environments that meet OpenShift network requirements.

For deployments that use remote S3 buckets located off-cluster with suboptimal data paths, such as high-latency or geographically distant locations, successful backup and restore operations require specific configurations. Ensure your network settings meet the following minimum requirements:

- Bandwidth (network upload speed to object storage): Greater than 2 Mbps for small backups and 10-100 Mbps depending on the data volume for larger backups.

- Packet loss: 1%

- Packet corruption: 1%

- Latency: 100 ms

Ensure that your OpenShift Container Platform network performs optimally and meets OpenShift Container Platform network requirements.

Although Red Hat provides support for standard backup and restore failures, it does not provide support for failures caused by network settings that do not meet the recommended thresholds.
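To get a feel for what the bandwidth figures mean in practice, the following sketch estimates the raw upload time for a backup of a given size. It is a back-of-the-envelope calculation only: it ignores latency, packet loss, compression, and protocol overhead, and the function name is hypothetical.

```shell
#!/usr/bin/env bash
# Estimate upload time in whole seconds, rounded up.
# Arguments: <size in megabytes (MB)> <bandwidth in megabits per second (Mbps)>
estimate_upload_seconds() {
  local size_mb="$1" mbps="$2"
  # 1 megabyte is 8 megabits.
  local megabits=$((size_mb * 8))
  # Ceiling division with integer arithmetic.
  echo $(( (megabits + mbps - 1) / mbps ))
}
```

For example, estimate_upload_seconds 500 2 prints 2000, so a 500 MB backup needs about 33 minutes at 2 Mbps, while estimate_upload_seconds 50000 100 prints 4000, so a 50 GB backup needs a little over an hour at 100 Mbps.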

4.4. OADP features and plugins

Review OpenShift API for Data Protection (OADP) features and default plugins that integrate Velero with cloud providers to back up and restore OpenShift Container Platform resources. This helps you to select the right plugins and features for your backup and restore environment.

4.4.1. OADP features

Review the backup, restore, and scheduling features of OpenShift API for Data Protection (OADP) for protecting applications on OpenShift Container Platform. This helps you to understand the available capabilities for your data protection strategy.

- Backup

You can use OADP to back up all applications on OpenShift Container Platform, or you can filter the resources by type, namespace, or label.

OADP backs up Kubernetes objects and internal images by saving them as an archive file on object storage. OADP backs up persistent volumes (PVs) by creating snapshots with the native cloud snapshot API or with the Container Storage Interface (CSI). For cloud providers that do not support snapshots, OADP backs up resources and PV data with Restic.

Note: You must exclude Operators from the backup of an application for backup and restore to succeed.

- Restore

You can restore resources and PVs from a backup. You can restore all objects in a backup or filter the objects by namespace, PV, or label.

Note: You must exclude Operators from the backup of an application for backup and restore to succeed.

- Schedule

You can schedule backups at specified intervals.

- Hooks

You can use hooks to run commands in a container on a pod, for example, fsfreeze to freeze a file system. You can configure a hook to run before or after a backup or restore. Restore hooks can run in an init container or in the application container.
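One way to attach backup hooks is through the pod annotations that upstream Velero recognizes. The sketch below adds pre and post backup hooks that freeze and unfreeze a file system. The function name, the container name, and the mount path are assumptions, and the chosen container must provide the fsfreeze binary and have enough privileges to run it.

```shell
#!/usr/bin/env bash
# Sketch: annotate pods with Velero backup hooks that freeze a file system
# before the backup and unfreeze it afterwards.
# Arguments: <namespace> <label selector> <container> <mount path>
annotate_fsfreeze_hooks() {
  local ns="$1" selector="$2" container="$3" mount="$4"
  oc annotate pod -n "$ns" -l "$selector" --overwrite \
    "pre.hook.backup.velero.io/container=${container}" \
    "pre.hook.backup.velero.io/command=[\"/sbin/fsfreeze\", \"--freeze\", \"${mount}\"]" \
    "post.hook.backup.velero.io/container=${container}" \
    "post.hook.backup.velero.io/command=[\"/sbin/fsfreeze\", \"--unfreeze\", \"${mount}\"]"
}
```

Annotations keep the hook definition next to the workload. Hooks can alternatively be declared in the backup or restore specification when they should apply to a single operation only.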

4.4.2. OADP plugins

Review the default Velero plugins provided by OpenShift API for Data Protection (OADP) that integrate with storage providers to support backup and snapshot operations. This helps you to select and configure the right plugins for your cloud environment.

OADP also provides plugins for OpenShift Container Platform resource backups, OpenShift Virtualization resource backups, and Container Storage Interface (CSI) snapshots.

| OADP plugin | Function | Storage location |
|---|---|---|
| aws | Backs up and restores Kubernetes objects. | AWS S3 |
| | Backs up and restores volumes with snapshots. | AWS EBS |
| azure | Backs up and restores Kubernetes objects. | Microsoft Azure Blob storage |
| | Backs up and restores volumes with snapshots. | Microsoft Azure Managed Disks |
| gcp | Backs up and restores Kubernetes objects. | Google Cloud Storage |
| | Backs up and restores volumes with snapshots. | Google Compute Engine Disks |
| openshift | Backs up and restores OpenShift Container Platform resources. [1] | Object store |
| kubevirt | Backs up and restores OpenShift Virtualization resources. [2] | Object store |
| csi | Backs up and restores volumes with CSI snapshots. [3] | Cloud storage that supports CSI snapshots |
| vsm | VolumeSnapshotMover relocates snapshots from the cluster into an object store to be used during a restore process to recover stateful applications, in situations such as cluster deletion. [4] | Object store |

[1] Mandatory.

[2] Virtual machine disks are backed up with CSI snapshots or Restic.

[3] The csi plugin uses the Kubernetes CSI snapshot API.

- OADP 1.1 or later uses snapshot.storage.k8s.io/v1

- OADP 1.0 uses snapshot.storage.k8s.io/v1beta1

[4] OADP 1.2 only.

4.4.3. About OADP Velero plugins

Review how to configure default cloud provider plugins or install custom plugins during the OADP deployment to connect your specific storage solutions. This helps you to successfully back up and restore resources across your environments.

4.4.3.1. Default Velero cloud provider plugins

You can install any of the following default Velero cloud provider plugins when you configure the oadp_v1alpha1_dpa.yaml file during deployment:

- aws (Amazon Web Services)

- gcp (Google Cloud)

- azure (Microsoft Azure)

- openshift (OpenShift Velero plugin)

- csi (Container Storage Interface)

- kubevirt (KubeVirt)

You specify the desired default plugins in the oadp_v1alpha1_dpa.yaml file during deployment.

The following .yaml file installs the openshift, aws, azure, and gcp plugins:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: dpa-sample
spec:
  configuration:
    velero:
      defaultPlugins:
      - openshift
      - aws
      - azure
      - gcp

4.4.3.2. Custom Velero plugins

You can install a custom Velero plugin by specifying the plugin image and name when you configure the oadp_v1alpha1_dpa.yaml file during deployment.

You specify the desired custom plugins in the oadp_v1alpha1_dpa.yaml file during deployment.

The following .yaml file installs the default openshift, azure, and gcp plugins and a custom plugin that has the name custom-plugin-example and the image quay.io/example-repo/custom-velero-plugin:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: dpa-sample
spec:
  configuration:
    velero:
      defaultPlugins:
      - openshift
      - azure
      - gcp
      customPlugins:
      - name: custom-plugin-example
        image: quay.io/example-repo/custom-velero-plugin

4.4.4. Supported architectures for OADP

Review the architectures supported by OpenShift API for Data Protection (OADP). This helps you to verify compatibility with your cluster infrastructure.

- AMD64

- ARM64

- PPC64le

- s390x

OADP 1.2.0 and later versions support the ARM64 architecture.

4.4.5. OADP support for IBM Power and IBM Z

Review OpenShift API for Data Protection (OADP) support and tested backup locations for IBM Power® and IBM Z®. This helps you to verify compatibility and supported configurations for your IBM Power® or IBM Z® environment.

- OADP 1.3.8 was tested successfully against OpenShift Container Platform 4.12, 4.13, 4.14, and 4.15 for both IBM Power® and IBM Z®. The sections that follow give testing and support information for OADP 1.3.8 in terms of backup locations for these systems.

- OADP 1.4.9 was tested successfully against OpenShift Container Platform 4.14, 4.15, 4.16, and 4.17 for both IBM Power® and IBM Z®. The sections that follow give testing and support information for OADP 1.4.9 in terms of backup locations for these systems.

- OADP {oadp-version-1-5} was tested successfully against OpenShift Container Platform 4.19 for both IBM Power® and IBM Z®. The sections that follow give testing and support information for OADP {oadp-version-1-5} in terms of backup locations for these systems.

4.4.5.1. OADP support for target backup locations using IBM Power

Review the tested and supported configurations for running OADP on IBM Power® with various OpenShift Container Platform versions and S3-compatible backup locations. This helps you to verify that your IBM Power® environment is supported before configuring backups.

- IBM Power® running with OpenShift Container Platform 4.12, 4.13, 4.14, and 4.15, and OADP 1.3.8 was tested successfully against an AWS S3 backup location target. Although the test involved only an AWS S3 target, Red Hat also supports running IBM Power® with OpenShift Container Platform 4.13, 4.14, and 4.15, and OADP 1.3.8 against all non-AWS S3 backup location targets.

- IBM Power® running with OpenShift Container Platform 4.14, 4.15, 4.16, and 4.17, and OADP 1.4.9 was tested successfully against an AWS S3 backup location target. Although the test involved only an AWS S3 target, Red Hat also supports running IBM Power® with OpenShift Container Platform 4.14, 4.15, 4.16, and 4.17, and OADP 1.4.9 against all non-AWS S3 backup location targets.

4.4.5.2. OADP testing and support for target backup locations using IBM Z

Review the tested and supported OADP and OpenShift Container Platform version combinations for IBM Z® against S3 backup location targets. This helps you verify that your IBM Z® environment and OADP version are supported for backup operations.

- IBM Z® running with OpenShift Container Platform 4.12, 4.13, 4.14, and 4.15, and OADP 1.3.8 was tested successfully against an AWS S3 backup location target. Although the test involved only an AWS S3 target, Red Hat also supports running IBM Z® with OpenShift Container Platform 4.13, 4.14, and 4.15, and OADP 1.3.8 against all non-AWS S3 backup location targets.

- IBM Z® running with OpenShift Container Platform 4.14, 4.15, 4.16, and 4.17, and OADP 1.4.9 was tested successfully against an AWS S3 backup location target. Although the test involved only an AWS S3 target, Red Hat also supports running IBM Z® with OpenShift Container Platform 4.14, 4.15, 4.16, and 4.17, and OADP 1.4.9 against all non-AWS S3 backup location targets.

4.4.5.2.1. Known issue of OADP using IBM Power® and IBM Z® platforms

Use only NFS storage with File System Backup (FSB) methods such as Kopia or Restic for Single-node OpenShift clusters on IBM Power® and IBM Z® platforms. This helps you to avoid unsupported backup configurations on these platforms.

There is currently no workaround for this restriction.

4.4.6. OADP plugins known issues

The following section describes known issues in OpenShift API for Data Protection (OADP) plugins:

4.4.6.1. Velero plugin panics during imagestream backups due to a missing secret

When the backup and the Backup Storage Location (BSL) are managed outside the scope of the Data Protection Application (DPA), the OADP controller, and therefore the DPA reconciliation, does not create the relevant oadp-<bsl_name>-<bsl_provider>-registry-secret secret. This known issue is present in OADP versions up to 1.4.8, and it is fixed in version 1.4.9.

When the backup is run, the OpenShift Velero plugin panics on the imagestream backup, with the following panic error:

2024-02-27T10:46:50.028951744Z time="2024-02-27T10:46:50Z" level=error msg="Error backing up item"
backup=openshift-adp/<backup name> error="error executing custom action (groupResource=imagestreams.image.openshift.io,
namespace=<BSL Name>, name=postgres): rpc error: code = Aborted desc = plugin panicked:
runtime error: index out of range with length 1, stack trace: goroutine 94…

4.4.6.1.1. Workaround to avoid the panic error

To avoid the Velero plugin panic error, perform the following steps:

Label the custom BSL with the relevant label:

$ oc label backupstoragelocations.velero.io <bsl_name> app.kubernetes.io/component=bsl

After the BSL is labeled, wait until the DPA reconciles.

Note: You can force the reconciliation by making any minor change to the DPA itself.

When the DPA reconciles, confirm that the relevant oadp-<bsl_name>-<bsl_provider>-registry-secret has been created and that the correct registry data has been populated into it:

$ oc -n openshift-adp get secret/oadp-<bsl_name>-<bsl_provider>-registry-secret -o json | jq -r '.data'

4.4.6.2. OpenShift ADP Controller segmentation fault

If you configure a DPA with both cloudstorage and restic enabled, the openshift-adp-controller-manager pod crashes and restarts indefinitely until the pod fails with a crash loop segmentation fault.

You can have either velero or cloudstorage defined, because they are mutually exclusive fields.

- If you have both velero and cloudstorage defined, the openshift-adp-controller-manager fails.

- If you have neither velero nor cloudstorage defined, the openshift-adp-controller-manager fails.

For more information about this issue, see OADP-1054.

4.4.6.2.1. OpenShift ADP Controller segmentation fault workaround

You must define either velero or cloudstorage when you configure a DPA. If you define both APIs in your DPA, the openshift-adp-controller-manager pod fails with a crash loop segmentation fault.

4.4.7. OADP and FIPS

Federal Information Processing Standards (FIPS) are a set of computer security standards developed by the United States federal government in line with the Federal Information Security Management Act (FISMA).

OpenShift API for Data Protection (OADP) has been tested and works on FIPS-enabled OpenShift Container Platform clusters.

4.4.8. Avoiding the Velero plugin panic error

Label a custom Backup Storage Location (BSL) to resolve Velero plugin panic errors during imagestream backups. This helps you to ensure the OADP controller creates the required registry secret when you manage the BSL outside the DataProtectionApplication (DPA) CR.

A missing secret can cause a panic error for the Velero plugin during image stream backups. When the backup and the BSL are managed outside the scope of the DPA, the OADP controller does not create the relevant oadp-<bsl_name>-<bsl_provider>-registry-secret secret.

During the backup operation, the OpenShift Velero plugin panics on the imagestream backup, with the following panic error:

2024-02-27T10:46:50.028951744Z time="2024-02-27T10:46:50Z" level=error msg="Error backing up item"
backup=openshift-adp/<backup name> error="error executing custom action (groupResource=imagestreams.image.openshift.io,
namespace=<BSL Name>, name=postgres): rpc error: code = Aborted desc = plugin panicked:
runtime error: index out of range with length 1, stack trace: goroutine 94…

Procedure

Label the custom BSL with the relevant label by using the following command:

$ oc label backupstoragelocations.velero.io <bsl_name> app.kubernetes.io/component=bsl

After the BSL is labeled, wait until the DPA reconciles.

Note: You can force the reconciliation by making any minor change to the DPA itself.

Verification

After the DPA is reconciled, confirm that the oadp-<bsl_name>-<bsl_provider>-registry-secret secret has been created and that the correct registry data has been populated into it by entering the following command:

$ oc -n openshift-adp get secret/oadp-<bsl_name>-<bsl_provider>-registry-secret -o json | jq -r '.data'
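The labeling step and the check for the registry secret can be chained in a short script. The following is a sketch under a few assumptions: the function names and the POLL_SECONDS variable are hypothetical, the BSL is assumed to live in the openshift-adp namespace, and the script only waits for the secret to appear without inspecting its contents.

```shell
#!/usr/bin/env bash
# Build the name of the registry secret that the OADP controller is
# expected to create for a BSL.
registry_secret_name() {
  local bsl="$1" provider="$2"
  echo "oadp-${bsl}-${provider}-registry-secret"
}

# Label the BSL, then poll until the secret exists or the attempts run out.
# Arguments: <bsl_name> <bsl_provider> [namespace] [attempts]
label_bsl_and_wait() {
  local bsl="$1" provider="$2" ns="${3:-openshift-adp}" tries="${4:-30}" secret
  secret=$(registry_secret_name "$bsl" "$provider")
  oc label backupstoragelocations.velero.io "$bsl" -n "$ns" \
    app.kubernetes.io/component=bsl --overwrite || return 1
  while [ "$tries" -gt 0 ]; do
    oc get secret "$secret" -n "$ns" >/dev/null 2>&1 && return 0
    sleep "${POLL_SECONDS:-10}"
    tries=$((tries - 1))
  done
  echo "secret $secret not found" >&2
  return 1
}
```

If the function times out, forcing a DPA reconciliation as described in the note above and running it again is a reasonable next step.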

4.4.9. Workaround for OpenShift ADP Controller segmentation fault

Define either velero or cloudstorage in your Data Protection Application (DPA) configuration to prevent indefinite pod crashes. This configuration resolves a segmentation fault in the openshift-adp-controller-manager pod that occurs when both components are enabled.

The openshift-adp-controller-manager pod fails with a crash loop segmentation fault due to the following settings:

- If you define both velero and cloudstorage, the openshift-adp-controller-manager fails.

- If you define neither velero nor cloudstorage, the openshift-adp-controller-manager fails.

See OADP-1054 for more information.

4.5. OADP use cases

4.5.1. Backup using OpenShift API for Data Protection and Red Hat OpenShift Data Foundation (ODF)

Following is a use case for using OADP and ODF to back up an application.

4.5.1.1. Backing up an application using OADP and ODF

In this use case, you back up an application by using OADP and store the backup in an object storage provided by Red Hat OpenShift Data Foundation (ODF).

- You create an object bucket claim (OBC) to configure the backup storage location. You use ODF to configure an Amazon S3-compatible object storage bucket. ODF provides MultiCloud Object Gateway (NooBaa MCG) and Ceph Object Gateway, also known as RADOS Gateway (RGW), object storage service. In this use case, you use NooBaa MCG as the backup storage location.

- You use the NooBaa MCG service with OADP by using the aws provider plugin.

- You configure the Data Protection Application (DPA) with the backup storage location (BSL).

- You create a backup custom resource (CR) and specify the application namespace to back up.

- You create and verify the backup.

Prerequisites

- You installed the OADP Operator.

- You installed the ODF Operator.

- You have an application with a database running in a separate namespace.

Procedure

Create an OBC manifest file to request a NooBaa MCG bucket as shown in the following example:

apiVersion: objectbucket.io/v1alpha1
kind: ObjectBucketClaim
metadata:
  name: test-obc
  namespace: openshift-adp
spec:
  storageClassName: openshift-storage.noobaa.io
  generateBucketName: test-backup-bucket

where:

- test-obc: Specifies the name of the object bucket claim.

- test-backup-bucket: Specifies the name of the bucket.

Create the OBC by running the following command:

$ oc create -f <obc_file_name>

where:

- <obc_file_name>: Specifies the file name of the object bucket claim manifest.

When you create an OBC, ODF creates a secret and a config map with the same name as the object bucket claim. The secret has the bucket credentials, and the config map has information to access the bucket. To get the bucket name and bucket host from the generated config map, run the following command:

$ oc extract --to=- cm/test-obc

test-obc is the name of the OBC.

Example output

# BUCKET_NAME
backup-c20...41fd
# BUCKET_PORT
443
# BUCKET_REGION

# BUCKET_SUBREGION

# BUCKET_HOST
s3.openshift-storage.svc

To get the bucket credentials from the generated secret, run the following command:

$ oc extract --to=- secret/test-obc

Example output

# AWS_ACCESS_KEY_ID
ebYR....xLNMc
# AWS_SECRET_ACCESS_KEY
YXf...+NaCkdyC3QPym

Get the public URL for the S3 endpoint from the s3 route in the openshift-storage namespace by running the following command:

$ oc get route s3 -n openshift-storage

Create a cloud-credentials file with the object bucket credentials as shown in the following example:

[default]
aws_access_key_id=<AWS_ACCESS_KEY_ID>
aws_secret_access_key=<AWS_SECRET_ACCESS_KEY>

Create the cloud-credentials secret with the cloud-credentials file content as shown in the following command:

$ oc create secret generic \
  cloud-credentials \
  -n openshift-adp \
  --from-file cloud=cloud-credentials

Configure the Data Protection Application (DPA) as shown in the following example:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: oadp-backup
  namespace: openshift-adp
spec:
  configuration:
    nodeAgent:
      enable: true
      uploaderType: kopia
    velero:
      defaultPlugins:
      - aws
      - openshift
      - csi
      defaultSnapshotMoveData: true
  backupLocations:
  - velero:
      config:
        profile: "default"
        region: noobaa
        s3Url: https://s3.openshift-storage.svc
        s3ForcePathStyle: "true"
        insecureSkipTLSVerify: "true"
      provider: aws
      default: true
      credential:
        key: cloud
        name: cloud-credentials
      objectStorage:
        bucket: <bucket_name>
        prefix: oadp

where:

- defaultSnapshotMoveData: Set to true to use the OADP Data Mover to enable movement of Container Storage Interface (CSI) snapshots to a remote object storage.

- s3Url: Specifies the S3 URL of ODF storage.

- <bucket_name>: Specifies the bucket name.

Create the DPA by running the following command:

$ oc apply -f <dpa_filename>

Verify that the DPA is created successfully by running the following command. In the example output, the status object has the type field set to Reconciled, which means that the DPA is successfully created.

$ oc get dpa -o yaml

Example output

apiVersion: v1
items:
- apiVersion: oadp.openshift.io/v1alpha1
  kind: DataProtectionApplication
  metadata:
    namespace: openshift-adp
    #...#
  spec:
    backupLocations:
    - velero:
        config:
          #...#
  status:
    conditions:
    - lastTransitionTime: "20....9:54:02Z"
      message: Reconcile complete
      reason: Complete
      status: "True"
      type: Reconciled
kind: List
metadata:
  resourceVersion: ""

Verify that the backup storage location (BSL) is available by running the following command:

$ oc get backupstoragelocations.velero.io -n openshift-adp

Example output

NAME           PHASE       LAST VALIDATED   AGE   DEFAULT
dpa-sample-1   Available   3s               15s   true

Configure a backup CR as shown in the following example:

apiVersion: velero.io/v1
kind: Backup
metadata:
  name: test-backup
  namespace: openshift-adp
spec:
  includedNamespaces:
  - <application_namespace>

where:

- <application_namespace>: Specifies the namespace for the application to back up.

Create the backup CR by running the following command:

$ oc apply -f <backup_cr_filename>

Verification

Verify that the backup object is in the Completed phase by running the following command. For more details, see the example output.

$ oc describe backup test-backup -n openshift-adp

Example output

Name:         test-backup
Namespace:    openshift-adp
# ....#
Status:
  Backup Item Operations Attempted:  1
  Backup Item Operations Completed:  1
  Completion Timestamp:              2024-09-25T10:17:01Z
  Expiration:                        2024-10-25T10:16:31Z
  Format Version:                    1.1.0
  Hook Status:
  Phase:  Completed
  Progress:
    Items Backed Up:  34
    Total Items:      34
  Start Timestamp:    2024-09-25T10:16:31Z
  Version:            1
Events:               <none>
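The cloud-credentials file from the procedure above can also be generated directly from the secret that ODF creates for the OBC, which avoids copying the keys by hand. The following is a minimal sketch: the function names are hypothetical, and it assumes that the secret is in the openshift-adp namespace and that the base64 utility accepts the -d option.

```shell
#!/usr/bin/env bash
# Read one base64-encoded key from the OBC-generated secret and decode it.
# Arguments: <obc_name> <key> [namespace]
obc_secret_value() {
  local obc="$1" key="$2" ns="${3:-openshift-adp}"
  oc get secret "$obc" -n "$ns" -o jsonpath="{.data.${key}}" | base64 -d
}

# Print the contents of a cloud-credentials file for the given OBC.
# Arguments: <obc_name> [namespace]
render_cloud_credentials() {
  local obc="$1" ns="${2:-openshift-adp}" id key
  id=$(obc_secret_value "$obc" AWS_ACCESS_KEY_ID "$ns") || return 1
  key=$(obc_secret_value "$obc" AWS_SECRET_ACCESS_KEY "$ns") || return 1
  printf '[default]\naws_access_key_id=%s\naws_secret_access_key=%s\n' "$id" "$key"
}
```

Redirecting the output, for example render_cloud_credentials test-obc > cloud-credentials, produces a file that can then be passed to the oc create secret generic command.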

4.5.2. OpenShift API for Data Protection (OADP) restore use case

Following is a use case for using OADP to restore a backup to a different namespace.

4.5.2.1. Restoring an application to a different namespace using OADP

Restore a backup of an application by using OADP to a new target namespace, test-restore-application. To restore a backup, you create a restore custom resource (CR) as shown in the following example. In the restore CR, the source namespace refers to the application namespace that you included in the backup. You then verify the restore by changing your project to the new restored namespace and verifying the resources.

Prerequisites

- You installed the OADP Operator.

- You have the backup of an application to be restored.

Procedure

Create a restore CR as shown in the following example:

apiVersion: velero.io/v1
kind: Restore
metadata:
  name: test-restore
  namespace: openshift-adp
spec:
  backupName: <backup_name>
  restorePVs: true
  namespaceMapping:
    <application_namespace>: test-restore-application

where:

- test-restore: Specifies the name of the restore CR.

- <backup_name>: Specifies the name of the backup.

- <application_namespace>: Specifies the source application namespace that you included in the backup. namespaceMapping maps the source application namespace to the target application namespace. test-restore-application is the name of the target namespace where you want to restore the backup.

Apply the restore CR by running the following command:

$ oc apply -f <restore_cr_filename>

Verification

Verify that the restore is in the Completed phase by running the following command:

$ oc describe restores.velero.io <restore_name> -n openshift-adp

Change to the restored namespace test-restore-application by running the following command:

$ oc project test-restore-application

Verify the restored resources such as persistent volume claim (pvc), service (svc), deployment, secret, and config map by running the following command:

$ oc get pvc,svc,deployment,secret,configmap

Example output

NAME                          STATUS   VOLUME
persistentvolumeclaim/mysql   Bound    pvc-9b3583db-...-14b86

NAME               TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)    AGE
service/mysql      ClusterIP   172....157   <none>        3306/TCP   2m56s
service/todolist   ClusterIP   172.....15   <none>        8000/TCP   2m56s

NAME                    READY   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/mysql   0/1     1            0           2m55s

NAME                                         TYPE                      DATA   AGE
secret/builder-dockercfg-6bfmd               kubernetes.io/dockercfg   1      2m57s
secret/default-dockercfg-hz9kz               kubernetes.io/dockercfg   1      2m57s
secret/deployer-dockercfg-86cvd              kubernetes.io/dockercfg   1      2m57s
secret/mysql-persistent-sa-dockercfg-rgp9b   kubernetes.io/dockercfg   1      2m57s

NAME                                 DATA   AGE
configmap/kube-root-ca.crt           1      2m57s
configmap/openshift-service-ca.crt   1      2m57s
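If you want to script the phase check from the verification above rather than read the describe output manually, a polling loop is one option. The following is a rough sketch under stated assumptions: the helper names classify_phase and wait_for_restore, the default attempt count, and the polling interval are all hypothetical, and the loop assumes that oc is already logged in to the cluster. It reads the phase from the status.phase field of the restore CR.

```shell
# Classify a Velero phase string.
# Returns 0 for Completed, 1 for a failed terminal phase, 2 for anything else.
classify_phase() {
  case "$1" in
    Completed) return 0 ;;
    Failed|PartiallyFailed|FailedValidation) return 1 ;;
    *) return 2 ;;
  esac
}

# Poll a restore CR until it reaches a terminal phase or the attempts run out.
# $1 = restore name, $2 = attempts (assumed default 30), $3 = seconds between polls (assumed default 10)
wait_for_restore() {
  name="$1"; attempts="${2:-30}"; interval="${3:-10}"
  i=0
  phase=""
  while [ "$i" -lt "$attempts" ]; do
    # An empty phase (for example, on a transient API error) counts as "not finished yet".
    phase="$(oc get restores.velero.io "$name" -n openshift-adp -o jsonpath='{.status.phase}')" || phase=""
    rc=0
    classify_phase "$phase" || rc=$?
    if [ "$rc" -ne 2 ]; then
      echo "restore $name: $phase"
      return "$rc"
    fi
    sleep "$interval"
    i=$((i + 1))
  done
  echo "restore $name: gave up in phase ${phase:-unknown}"
  return 2
}
```

For example, wait_for_restore test-restore returns 0 only after the restore reports the Completed phase, so it can gate the oc project and oc get steps in a script.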

4.5.3. Including a self-signed CA certificate during backup

You can include a self-signed Certificate Authority (CA) certificate in the Data Protection Application (DPA) and then back up an application. You store the backup in a NooBaa bucket provided by Red Hat OpenShift Data Foundation (ODF).

4.5.3.1. Backing up an application and its self-signed CA certificate

The s3.openshift-storage.svc service, provided by ODF, uses a Transport Layer Security protocol (TLS) certificate that is signed with the self-signed service CA.

To prevent a certificate signed by unknown authority error, you must include a self-signed CA certificate in the backup storage location (BSL) section of the DataProtectionApplication custom resource (CR). For this situation, you must complete the following tasks:

- Request a NooBaa bucket by creating an object bucket claim (OBC).

- Extract the bucket details.

- Include a self-signed CA certificate in the DataProtectionApplication CR.

- Back up an application.

Prerequisites

- You installed the OADP Operator.

- You installed the ODF Operator.

- You have an application with a database running in a separate namespace.

Procedure

Create an OBC manifest to request a NooBaa bucket as shown in the following example:

apiVersion: objectbucket.io/v1alpha1
kind: ObjectBucketClaim
metadata:
  name: test-obc
  namespace: openshift-adp
spec:
  storageClassName: openshift-storage.noobaa.io
  generateBucketName: test-backup-bucket

where:

- test-obc: Specifies the name of the object bucket claim.

- test-backup-bucket: Specifies the name of the bucket.

Create the OBC by running the following command:

$ oc create -f <obc_file_name>

When you create an OBC, ODF creates a secret and a ConfigMap with the same name as the object bucket claim. The secret object contains the bucket credentials, and the ConfigMap object contains information to access the bucket.

To get the bucket name and bucket host from the generated config map, run the following command:

$ oc extract --to=- cm/test-obc

test-obc is the name of the OBC.

Example output

# BUCKET_NAME
backup-c20...41fd
# BUCKET_PORT
443
# BUCKET_REGION
# BUCKET_SUBREGION
# BUCKET_HOST
s3.openshift-storage.svc

To get the bucket credentials from the secret object, run the following command:

$ oc extract --to=- secret/test-obc

Example output

# AWS_ACCESS_KEY_ID
ebYR....xLNMc
# AWS_SECRET_ACCESS_KEY
YXf...+NaCkdyC3QPym

Create a cloud-credentials file with the object bucket credentials by using the following example configuration:

[default]
aws_access_key_id=<AWS_ACCESS_KEY_ID>
aws_secret_access_key=<AWS_SECRET_ACCESS_KEY>

Create the cloud-credentials secret with the cloud-credentials file content by running the following command:

$ oc create secret generic \
  cloud-credentials \
  -n openshift-adp \
  --from-file cloud=cloud-credentials

Extract the service CA certificate from the openshift-service-ca.crt config map by running the following command. Ensure that you encode the certificate in Base64 format and note the value to use in the next step.

$ oc get cm/openshift-service-ca.crt \
  -o jsonpath='{.data.service-ca\.crt}' | base64 -w0; echo

Example output

LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0...
....gpwOHMwaG9CRmk5a3....FLS0tLS0K

Configure the DataProtectionApplication CR manifest file with the bucket name and CA certificate as shown in the following example:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: oadp-backup
  namespace: openshift-adp
spec:
  configuration:
    nodeAgent:
      enable: true
      uploaderType: kopia
    velero:
      defaultPlugins:
      - aws
      - openshift
      - csi
      defaultSnapshotMoveData: true
  backupLocations:
    - velero:
        config:
          profile: "default"
          region: noobaa
          s3Url: https://s3.openshift-storage.svc
          s3ForcePathStyle: "true"
          insecureSkipTLSVerify: "false"
        provider: aws
        default: true
        credential:
          key: cloud
          name: cloud-credentials
        objectStorage:
          bucket: <bucket_name>
          prefix: oadp
          caCert: <ca_cert>

where:

- insecureSkipTLSVerify: Specifies whether SSL/TLS security is enabled. If set to true, SSL/TLS security is disabled. If set to false, SSL/TLS security is enabled.

- <bucket_name>: Specifies the name of the bucket extracted in an earlier step.

- <ca_cert>: Specifies the Base64 encoded certificate from the previous step.

Create the DataProtectionApplication CR by running the following command:

$ oc apply -f <dpa_filename>

Verify that the DataProtectionApplication CR is created successfully by running the following command:

$ oc get dpa -o yaml

Example output

apiVersion: v1
items:
- apiVersion: oadp.openshift.io/v1alpha1
  kind: DataProtectionApplication
  metadata:
    namespace: openshift-adp
    #...#
  spec:
    backupLocations:
    - velero:
        config:
          #...#
  status:
    conditions:
    - lastTransitionTime: "20....9:54:02Z"
      message: Reconcile complete
      reason: Complete
      status: "True"
      type: Reconciled
kind: List
metadata:
  resourceVersion: ""

Verify that the backup storage location (BSL) is available by running the following command:

$ oc get backupstoragelocations.velero.io -n openshift-adp

Example output

NAME           PHASE       LAST VALIDATED   AGE   DEFAULT
dpa-sample-1   Available   3s               15s   true

Configure the Backup CR by using the following example:

apiVersion: velero.io/v1
kind: Backup
metadata:
  name: test-backup
  namespace: openshift-adp
spec:
  includedNamespaces:
  - <application_namespace>

where:

- <application_namespace>: Specifies the namespace for the application to back up.

Create the Backup CR by running the following command:

$ oc apply -f <backup_cr_filename>
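Because the caCert value in the procedure above must be the Base64 encoding of the CA certificate, a quick round-trip check before you paste it into the manifest can catch a truncated or double-encoded value. The following is a minimal sketch: the helper name is_pem_cert_b64 is hypothetical, and it assumes the GNU coreutils base64 command, the same tool that the extraction step uses with -w0. It only checks that the decoded data starts with a PEM certificate header, and does not validate the certificate itself.

```shell
# Succeed if the Base64 string in $1 decodes to data that begins with a PEM certificate header.
# Hypothetical helper for illustration; it does not parse or verify the certificate.
is_pem_cert_b64() {
  decoded="$(printf '%s' "$1" | base64 -d 2>/dev/null)" || return 1
  case "$decoded" in
    "-----BEGIN CERTIFICATE-----"*) return 0 ;;
    *) return 1 ;;
  esac
}
```

For example, you could capture the encoded value in a variable with ca_cert="$(oc get cm/openshift-service-ca.crt -o jsonpath='{.data.service-ca\.crt}' | base64 -w0)" and then run is_pem_cert_b64 "$ca_cert" before using it as <ca_cert>.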

Verification

Verify that the Backup object is in the Completed phase by running the following command:

$ oc describe backup test-backup -n openshift-adp

Example output

Name:         test-backup
Namespace:    openshift-adp
# ....#
Status:
  Backup Item Operations Attempted:  1
  Backup Item Operations Completed:  1
  Completion Timestamp:              2024-09-25T10:17:01Z
  Expiration:                        2024-10-25T10:16:31Z
  Format Version:                    1.1.0
  Hook Status:
  Phase:  Completed
  Progress:
    Items Backed Up:  34
    Total Items:      34
  Start Timestamp:    2024-09-25T10:16:31Z
  Version:            1
Events:               <none>

4.5.4. Using the legacy-aws Velero plugin

If you are using an AWS S3-compatible backup storage location, you might get a SignatureDoesNotMatch error while backing up your application. This error occurs because some backup storage locations still use the older versions of the S3 APIs, which are incompatible with the newer AWS SDK for Go V2. To resolve this issue, you can use the legacy-aws Velero plugin in the DataProtectionApplication custom resource (CR). The legacy-aws Velero plugin uses the older AWS SDK for Go V1, which is compatible with the legacy S3 APIs, ensuring successful backups.

4.5.4.1. Using the legacy-aws Velero plugin in the DataProtectionApplication CR

In the following use case, you configure the DataProtectionApplication CR with the legacy-aws Velero plugin and then back up an application.

Depending on the backup storage location you choose, you can use either the legacy-aws or the aws plugin in your DataProtectionApplication CR. If you use both of the plugins in the DataProtectionApplication CR, the following error occurs: aws and legacy-aws can not be both specified in DPA spec.configuration.velero.defaultPlugins.
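Because listing both plugins is rejected, it can be convenient to catch the conflict in the manifest file before you apply it. The following is a rough pre-flight sketch, not an OADP feature: the function name has_conflicting_aws_plugins is hypothetical, and it uses a plain text match on list items rather than parsing the YAML, so it assumes that each plugin is written on its own line as a list entry.

```shell
# Succeed (exit status 0) if the manifest in $1 lists both "- aws" and "- legacy-aws"
# as list items. Lines such as "provider: aws" are not list items and do not match.
has_conflicting_aws_plugins() {
  grep -Eq '^[[:space:]]*-[[:space:]]+aws[[:space:]]*$' "$1" &&
    grep -Eq '^[[:space:]]*-[[:space:]]+legacy-aws[[:space:]]*$' "$1"
}
```

For example, if has_conflicting_aws_plugins <dpa_filename> succeeds, remove one of the two plugins from the manifest before you apply it.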

Prerequisites

- You have installed the OADP Operator.

- You have configured an AWS S3-compatible object storage as a backup location.

- You have an application with a database running in a separate namespace.

Procedure

Configure the DataProtectionApplication CR to use the legacy-aws Velero plugin as shown in the following example:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: oadp-backup
  namespace: openshift-adp
spec:
  configuration:
    nodeAgent:
      enable: true
      uploaderType: kopia
    velero:
      defaultPlugins:
      - legacy-aws
      - openshift
      - csi
      defaultSnapshotMoveData: true
  backupLocations:
    - velero:
        config:
          profile: "default"
          region: noobaa
          s3Url: https://s3.openshift-storage.svc
          s3ForcePathStyle: "true"
          insecureSkipTLSVerify: "true"
        provider: aws
        default: true
        credential:
          key: cloud
          name: cloud-credentials
        objectStorage:
          bucket: <bucket_name>
          prefix: oadp

where:

- legacy-aws: Specifies to use the legacy-aws plugin.

- <bucket_name>: Specifies the bucket name.

Create the DataProtectionApplication CR by running the following command:

$ oc apply -f <dpa_filename>

Verify that the DataProtectionApplication CR is created successfully by running the following command. In the example output, you can see the status object has the type field set to Reconciled and the status field set to "True". That status indicates that the DataProtectionApplication CR is successfully created.

$ oc get dpa -o yaml

Example output

apiVersion: v1
items:
- apiVersion: oadp.openshift.io/v1alpha1
  kind: DataProtectionApplication
  metadata:
    namespace: openshift-adp
    #...#
  spec:
    backupLocations:
    - velero:
        config:
          #...#
  status:
    conditions:
    - lastTransitionTime: "20....9:54:02Z"
      message: Reconcile complete
      reason: Complete
      status: "True"
      type: Reconciled
kind: List
metadata:
  resourceVersion: ""

Verify that the backup storage location (BSL) is available by running the following command:

$ oc get backupstoragelocations.velero.io -n openshift-adp

You should see an output similar to the following example:

NAME           PHASE       LAST VALIDATED   AGE   DEFAULT
dpa-sample-1   Available   3s               15s   true

Configure a Backup CR as shown in the following example:

apiVersion: velero.io/v1
kind: Backup
metadata:
  name: test-backup
  namespace: openshift-adp
spec:
  includedNamespaces:
  - <application_namespace>

where:

- <application_namespace>: Specifies the namespace for the application to back up.

Create the Backup CR by running the following command:

$ oc apply -f <backup_cr_filename>
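The BSL availability check in the procedure above can be scripted so that a backup is only created when the storage location is ready. The following is a minimal sketch that reads the table printed by oc get backupstoragelocations.velero.io from standard input. The function name all_bsl_available is hypothetical, and the sketch assumes the default table layout in which PHASE is the second column.

```shell
# Read the BSL table on stdin. Succeed only if at least one BSL is listed
# and every listed BSL reports "Available" in the PHASE column.
all_bsl_available() {
  awk '
    NR == 1 { next }    # skip the header row
    NF == 0 { next }    # skip blank lines
    { rows++; if ($2 != "Available") bad++ }
    END { exit !(rows > 0 && bad == 0) }
  '
}
```

For example, oc get backupstoragelocations.velero.io -n openshift-adp | all_bsl_available && oc apply -f <backup_cr_filename> creates the Backup CR only when the check passes.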

Verification

Verify that the backup object is in the Completed phase by running the following command. For more details, see the example output.

$ oc describe backups.velero.io test-backup -n openshift-adp

Example output

Name:         test-backup
Namespace:    openshift-adp
# ....#
Status:
  Backup Item Operations Attempted:  1
  Backup Item Operations Completed:  1
  Completion Timestamp:              2024-09-25T10:17:01Z
  Expiration:                        2024-10-25T10:16:31Z
  Format Version:                    1.1.0
  Hook Status:
  Phase:  Completed
  Progress:
    Items Backed Up:  34
    Total Items:      34
  Start Timestamp:    2024-09-25T10:16:31Z
  Version:            1
Events:               <none>
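When you check many backups, reading the full describe output each time gets tedious. The following is a small sketch that condenses the output to the phase and the item progress. The function name backup_summary is hypothetical, and the sketch assumes that the describe output contains the Phase, Items Backed Up, and Total Items fields on separate lines, as in the example output above.

```shell
# Read `oc describe` output for a Backup on stdin and print "<phase> <backed_up>/<total>".
backup_summary() {
  awk '
    $1 == "Phase:"                    { phase = $2 }
    $1 == "Items" && $2 == "Backed"   { backed_up = $NF }
    $1 == "Total" && $2 == "Items:"   { total = $NF }
    END { printf "%s %s/%s\n", phase, backed_up, total }
  '
}
```

For example, oc describe backups.velero.io test-backup -n openshift-adp | backup_summary prints a single line that is easier to compare across backups.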

4.5.5. Backing up workloads on OADP with ROSA STS

To back up and restore workloads on ROSA, you can use OADP. You can create a backup of a workload, restore it from the backup, and verify the restoration. You can also clean up the OADP Operator, backup storage, and AWS resources when they are no longer needed.

4.5.5.1. Example: Performing a backup with OADP and OpenShift Container Platform

Perform a backup by using OpenShift API for Data Protection (OADP) with OpenShift Container Platform. The following example hello-world application has no persistent volumes (PVs) attached.

Either Data Protection Application (DPA) configuration will work.

Procedure

Create a workload to back up by running the following commands:

$ oc create namespace hello-world

$ oc new-app -n hello-world --image=docker.io/openshift/hello-openshift

Expose the route by running the following command:

$ oc expose service/hello-openshift -n hello-world

Check that the application is working by running the following command:

$ curl `oc get route/hello-openshift -n hello-world -o jsonpath='{.spec.host}'`

You should see an output similar to the following example:

Hello OpenShift!

Back up the workload by running the following command: