Installing on Nutanix

Installing OpenShift Container Platform on Nutanix

Chapter 1. Preparing to install on Nutanix

Before you install an OpenShift Container Platform cluster, be sure that your Nutanix environment meets the following requirements.

1.1. Nutanix version requirements

You must install the OpenShift Container Platform cluster to a Nutanix environment that meets the following requirements.

| Component | Required version |
|---|---|
| Nutanix AOS | 6.5.2.7 or later |
| Prism Central | pc.2022.6 or later |
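As a pre-flight aid, the minimum versions in the table can be compared against the versions that your environment reports. The following is a minimal sketch, not part of the installation program: `version_at_least` is a hypothetical helper name, it assumes GNU `sort` with the `-V` (version sort) option, and it assumes that you look up the AOS and Prism Central version strings yourself. It does not query Nutanix.

```shell
# Hypothetical helper: succeeds when version $1 is the same as or later than $2.
# Assumes GNU sort, whose -V option orders version-like strings.
version_at_least() {
  actual=$1
  required=$2
  # The older of the two versions sorts first.
  oldest=$(printf '%s\n%s\n' "$required" "$actual" | sort -V | head -n 1)
  [ "$oldest" = "$required" ]
}

# Example values only; replace them with the versions that your environment reports.
if version_at_least "6.5.3" "6.5.2.7" && version_at_least "pc.2023.1" "pc.2022.6"; then
  echo "Nutanix version requirements met"
fi
```

The comparison is purely textual, so it is only as reliable as the version strings that you pass to it.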

1.2. Environment requirements

Before you install an OpenShift Container Platform cluster, review the following Nutanix AOS environment requirements.

1.2.1. Infrastructure requirements

You can install OpenShift Container Platform on on-premise Nutanix clusters, Nutanix Cloud Clusters (NC2) on Amazon Web Services (AWS), or NC2 on Microsoft Azure.

For more information, see Nutanix Cloud Clusters on AWS and Nutanix Cloud Clusters on Microsoft Azure.

1.2.2. Required account privileges

The installation program requires access to a Nutanix account with the necessary permissions to deploy the cluster and to maintain its daily operation. The following options are available to you:

- You can use a local Prism Central user account with administrative privileges. Using a local account is the quickest way to grant access to an account with the required permissions.

- If your organization’s security policies require that you use a more restrictive set of permissions, use the permissions that are listed in the following table to create a custom Cloud Native role in Prism Central. You can then assign the role to a user account that is a member of a Prism Central authentication directory.

Consider the following when managing this user account:

- When assigning entities to the role, ensure that the user can access only the Prism Element and subnet that are required to deploy the virtual machines.

- Ensure that the user is a member of the project to which it needs to assign virtual machines.

For more information, see the Nutanix documentation about creating a Custom Cloud Native role, assigning a role, and adding a user to a project.

Example 1.1. Required permissions for creating a Custom Cloud Native role

| Nutanix Object | When required | Required permissions in Nutanix API | Description |
|---|---|---|---|
| Categories | Always | | Create, read, and delete categories that are assigned to the OpenShift Container Platform machines. |
| Images | Always | | Create, read, and delete the operating system images used for the OpenShift Container Platform machines. |
| Virtual Machines | Always | | Create, read, and delete the OpenShift Container Platform machines. |
| Clusters | Always | | View the Prism Element clusters that host the OpenShift Container Platform machines. |
| Subnets | Always | | View the subnets that host the OpenShift Container Platform machines. |
| Projects | If you will associate a project with compute machines, control plane machines, or all machines. | | View the projects defined in Prism Central and allow a project to be assigned to the OpenShift Container Platform machines. |
| Tasks | Always | | Fetch and view tasks on the Prism Element that contain OpenShift Container Platform machines and nodes. |
| Hosts | If you use GPUs with compute machines. | | Fetch and view hosts on the Prism Element that have GPUs attached. |

1.2.3. Cluster limits

Available resources vary between clusters. The number of possible clusters within a Nutanix environment is limited primarily by available storage space and by any limitations associated with the resources that the cluster creates and the resources that you require to deploy the cluster, such as IP addresses and networks.

1.2.4. Cluster resources

A minimum of 800 GB of storage is required to use a standard cluster.

When you deploy an OpenShift Container Platform cluster that uses installer-provisioned infrastructure, the installation program must be able to create several resources in your Nutanix instance. Although these resources use 856 GB of storage, the bootstrap node is destroyed as part of the installation process.

A standard OpenShift Container Platform installation creates the following resources:

- 1 label

- Virtual machines:

  - 1 disk image

  - 1 temporary bootstrap node

  - 3 control plane nodes

  - 3 compute machines

1.2.5. Networking requirements

You must use either AHV IP Address Management (IPAM) or Dynamic Host Configuration Protocol (DHCP) for the network and ensure that it is configured to provide persistent IP addresses to the cluster machines. Additionally, create the following networking resources before you install the OpenShift Container Platform cluster:

- IP addresses

- DNS records

Nutanix Flow Virtual Networking is supported for new cluster installations. To use this feature, enable Flow Virtual Networking on your AHV cluster before installing. For more information, see Flow Virtual Networking overview.

It is recommended that each OpenShift Container Platform node in the cluster have access to a Network Time Protocol (NTP) server that is discoverable via DHCP. Installation is possible without an NTP server. However, an NTP server prevents errors typically associated with asynchronous server clocks.

1.2.5.1. Required IP Addresses

An installer-provisioned installation requires two static virtual IP (VIP) addresses:

- A VIP address for the API is required. This address is used to access the cluster API.

- A VIP address for ingress is required. This address is used for cluster ingress traffic.

You specify these IP addresses when you install the OpenShift Container Platform cluster.

1.2.5.2. DNS records

You must create DNS records for two static IP addresses in the appropriate DNS server for the Nutanix instance that hosts your OpenShift Container Platform cluster. In each record, <cluster_name> is the cluster name and <base_domain> is the cluster base domain that you specify when you install the cluster.

If you use your own DNS or DHCP server, you must also create records for each node, including the bootstrap, control plane, and compute nodes.

A complete DNS record takes the form: <component>.<cluster_name>.<base_domain>..

| Component | Record | Description |
|---|---|---|
| API VIP | | This DNS A/AAAA or CNAME record must point to the load balancer for the control plane machines. This record must be resolvable by both clients external to the cluster and from all the nodes within the cluster. |
| Ingress VIP | | A wildcard DNS A/AAAA or CNAME record that points to the load balancer that targets the machines that run the Ingress router pods, which are the worker nodes by default. This record must be resolvable by both clients external to the cluster and from all the nodes within the cluster. |
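The record form described above, `<component>.<cluster_name>.<base_domain>.`, can be assembled with a one-line helper, which is convenient when you prepare names to enter in your DNS server. This is a minimal sketch: `dns_record_name` is a hypothetical helper, and the component, cluster name, and base domain shown are placeholder values chosen for illustration.

```shell
# Hypothetical helper: prints a fully qualified record name in the form
# <component>.<cluster_name>.<base_domain>. (note the trailing dot).
dns_record_name() {
  printf '%s.%s.%s.\n' "$1" "$2" "$3"
}

# Illustrative values only: "api", "mycluster", and "example.com" are placeholders.
dns_record_name "api" "mycluster" "example.com"
```

You can pass the resulting name to a lookup tool of your choice, for example `dig`, to confirm that the record resolves both from outside the cluster and from the cluster nodes.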

1.3. Configuring the Cloud Credential Operator utility

The Cloud Credential Operator (CCO) manages cloud provider credentials as Kubernetes custom resource definitions (CRDs). To install a cluster on Nutanix, you must set the CCO to manual mode as part of the installation process.

To create and manage cloud credentials from outside of the cluster when the Cloud Credential Operator (CCO) is operating in manual mode, extract and prepare the CCO utility (ccoctl) binary.

The ccoctl utility is a Linux binary that must run in a Linux environment.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have installed the OpenShift CLI (oc).

Procedure

Set a variable for the OpenShift Container Platform release image by running the following command:

$ RELEASE_IMAGE=$(./openshift-install version | awk '/release image/ {print $3}')

Obtain the CCO container image from the OpenShift Container Platform release image by running the following command:

$ CCO_IMAGE=$(oc adm release info --image-for='cloud-credential-operator' $RELEASE_IMAGE -a ~/.pull-secret)

Note: Ensure that the architecture of the $RELEASE_IMAGE matches the architecture of the environment in which you will use the ccoctl tool.

Extract the ccoctl binary from the CCO container image within the OpenShift Container Platform release image by running the following command:

$ oc image extract $CCO_IMAGE \
  --file="/usr/bin/ccoctl.<rhel_version>" \
  -a ~/.pull-secret

For <rhel_version>, specify the value that corresponds to the version of Red Hat Enterprise Linux (RHEL) that the host uses. If no value is specified, ccoctl.rhel8 is used by default. The following values are valid:

- rhel8: Specify this value for hosts that use RHEL 8.

- rhel9: Specify this value for hosts that use RHEL 9.

Note: The ccoctl binary is created in the directory from where you executed the command and not in /usr/bin/. You must rename the directory or move the ccoctl.<rhel_version> binary to ccoctl.

Change the permissions to make ccoctl executable by running the following command:

$ chmod 775 ccoctl

Verification

To verify that ccoctl is ready to use, display the help file. Use a relative file name when you run the command, for example:

$ ./ccoctl

Example output

    OpenShift credentials provisioning tool

    Usage:
      ccoctl [command]

    Available Commands:
      aws          Manage credentials objects for AWS cloud
      azure        Manage credentials objects for Azure
      gcp          Manage credentials objects for Google cloud
      help         Help about any command
      ibmcloud     Manage credentials objects for IBM Cloud
      nutanix      Manage credentials objects for Nutanix

    Flags:
      -h, --help   help for ccoctl

    Use "ccoctl [command] --help" for more information about a command.
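The choice of `<rhel_version>` in the extraction step can be derived from the RHEL version of the host. The following is a minimal sketch under stated assumptions: `ccoctl_binary_name` is a hypothetical helper, it takes a version string such as `9.4` as its argument, and it only knows the two values that this procedure lists as valid.

```shell
# Hypothetical helper: maps a RHEL version such as "9.4" to the ccoctl file name
# used in the --file option. Only rhel8 and rhel9 are listed as valid values.
ccoctl_binary_name() {
  case "${1%%.*}" in
    8) echo "ccoctl.rhel8" ;;
    9) echo "ccoctl.rhel9" ;;
    *) echo "unsupported RHEL version: $1" >&2; return 1 ;;
  esac
}

# Example value only; prints the file name for a RHEL 9 host.
ccoctl_binary_name "9.4"
```

On a RHEL host, one possible source for the argument is the `VERSION_ID` field in `/etc/os-release`.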

Chapter 2. Fault tolerant deployments using multiple Prism Elements

By default, the installation program installs control plane and compute machines into a single Nutanix Prism Element (cluster). To improve the fault tolerance of your OpenShift Container Platform cluster, you can specify that these machines be distributed across multiple Nutanix clusters by configuring failure domains.

A failure domain represents an additional Prism Element instance that is available to OpenShift Container Platform machine pools during and after installation.

2.1. Installation method and failure domain configuration

The OpenShift Container Platform installation method determines how and when you configure failure domains:

- If you deploy using installer-provisioned infrastructure, you can configure failure domains in the installation configuration file before deploying the cluster. For more information, see Configuring failure domains.

  You can also configure failure domains after the cluster is deployed. For more information about configuring failure domains post-installation, see Adding failure domains to an existing Nutanix cluster.

- If you deploy using infrastructure that you manage (user-provisioned infrastructure), no additional configuration is required. After the cluster is deployed, you can manually distribute control plane and compute machines across failure domains.

2.2. Adding failure domains to an existing Nutanix cluster

By default, the installation program installs control plane and compute machines into a single Nutanix Prism Element (cluster). After an OpenShift Container Platform cluster is deployed, you can improve its fault tolerance by adding additional Prism Element instances to the deployment using failure domains.

A failure domain represents a single Prism Element instance where new control plane and compute machines can be deployed and existing control plane and compute machines can be distributed.

2.2.1. Failure domain requirements

When planning to use failure domains, consider the following requirements:

- All Nutanix Prism Element instances must be managed by the same instance of Prism Central. A deployment that is comprised of multiple Prism Central instances is not supported.

- The machines that make up the Prism Element clusters must reside on the same Ethernet network for failure domains to be able to communicate with each other.

- A subnet is required in each Prism Element that will be used as a failure domain in the OpenShift Container Platform cluster. These subnets must share the same IP address prefix (CIDR) and should contain the virtual IP addresses that the OpenShift Container Platform cluster uses.
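The subnet requirement can be checked before you configure failure domains. The following is a minimal sketch for IPv4 only: `ip_to_int` and `cidr_contains` are hypothetical helpers, the addresses are placeholder values, and octets are assumed to be written without leading zeros.

```shell
# Hypothetical helper: converts a dotted-quad IPv4 address to an integer.
ip_to_int() {
  # Split the address into its four octets.
  IFS=. read -r o1 o2 o3 o4 <<EOF
$1
EOF
  echo "$(( (o1 << 24) | (o2 << 16) | (o3 << 8) | o4 ))"
}

# Hypothetical helper: succeeds when IPv4 address $2 lies inside CIDR block $1.
cidr_contains() {
  net=${1%/*}
  bits=${1#*/}
  # Build the network mask for the given prefix length.
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$net") & mask )) -eq $(( $(ip_to_int "$2") & mask )) ]
}

# Placeholder subnet and virtual IP address.
if cidr_contains "10.0.0.0/24" "10.0.0.5"; then
  echo "VIP is inside the subnet"
fi
```

Running the check once for the API VIP and once for the ingress VIP against the shared CIDR covers the condition stated in the last requirement.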

2.2.2. Adding failure domains to the Infrastructure CR

You add failure domains to an existing Nutanix cluster by modifying its Infrastructure custom resource (CR) (infrastructures.config.openshift.io).

It is recommended that you configure three failure domains to ensure high availability.

Procedure

Edit the Infrastructure CR by running the following command:

$ oc edit infrastructures.config.openshift.io cluster

Configure the failure domains.

Example Infrastructure CR with Nutanix failure domains

    spec:
      cloudConfig:
        key: config
        name: cloud-provider-config
      #...
      platformSpec:
        nutanix:
          failureDomains:
          - cluster:
              type: UUID
              uuid: <uuid>
            name: <failure_domain_name>
            subnets:
            - type: UUID
              uuid: <network_uuid>
          - cluster:
              type: UUID
              uuid: <uuid>
            name: <failure_domain_name>
            subnets:
            - type: UUID
              uuid: <network_uuid>
          - cluster:
              type: UUID
              uuid: <uuid>
            name: <failure_domain_name>
            subnets:
            - type: UUID
              uuid: <network_uuid>
    # ...

where:

- <uuid>: Specifies the universally unique identifier (UUID) of the Prism Element.

- <failure_domain_name>: Specifies a unique name for the failure domain. The name is limited to 64 or fewer characters, which can include lower-case letters, digits, and a dash (-). The dash cannot be in the leading or ending position of the name.

- <network_uuid>: Specifies the UUID of the Prism Element subnet object. The subnet’s IP address prefix (CIDR) should contain the virtual IP addresses that the OpenShift Container Platform cluster uses. Only one subnet per failure domain (Prism Element) in an OpenShift Container Platform cluster is supported.

- Save the CR to apply the changes.
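The naming rule for failure domains can be checked before you edit the CR. The following is a minimal sketch: `valid_failure_domain_name` is a hypothetical helper that applies only the constraints stated above (64 or fewer characters, lower-case letters, digits, and dashes, with no leading or trailing dash). It does not check that the name is unique.

```shell
# Hypothetical helper: succeeds when $1 satisfies the failure domain naming rule.
valid_failure_domain_name() {
  name=$1
  # The name must be non-empty and at most 64 characters long.
  [ -n "$name" ] && [ "${#name}" -le 64 ] || return 1
  case $name in
    -*|*-) return 1 ;;   # no dash in the leading or ending position
    *[!abcdefghijklmnopqrstuvwxyz0123456789-]*) return 1 ;;   # allowed characters only
  esac
  return 0
}

# Placeholder name.
valid_failure_domain_name "fd-pe-1" && echo "fd-pe-1 is a valid failure domain name"
```

The character list is spelled out instead of using a range such as `a-z` so that the result does not depend on the locale of the shell.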

2.2.3. Distributing control planes across failure domains

You distribute control planes across Nutanix failure domains by modifying the control plane machine set custom resource (CR).

Prerequisites

- You have configured the failure domains in the cluster’s Infrastructure custom resource (CR).

- The control plane machine set custom resource (CR) is in an active state.

For more information on checking the control plane machine set custom resource state, see "Additional resources".

Procedure

Edit the control plane machine set CR by running the following command:

$ oc edit controlplanemachineset.machine.openshift.io cluster -n openshift-machine-api

Configure the control plane machine set to use failure domains by adding a spec.template.machines_v1beta1_machine_openshift_io.failureDomains stanza.

Example control plane machine set with Nutanix failure domains

    apiVersion: machine.openshift.io/v1
    kind: ControlPlaneMachineSet
    metadata:
      creationTimestamp: null
      labels:
        machine.openshift.io/cluster-api-cluster: <cluster_name>
      name: cluster
      namespace: openshift-machine-api
    spec:
      # ...
      template:
        machineType: machines_v1beta1_machine_openshift_io
        machines_v1beta1_machine_openshift_io:
          failureDomains:
            platform: Nutanix
            nutanix:
            - name: <failure_domain_name_1>
            - name: <failure_domain_name_2>
            - name: <failure_domain_name_3>
    # ...

- Save your changes.

By default, the control plane machine set propagates changes to your control plane configuration automatically. If the cluster is configured to use the OnDelete update strategy, you must replace your control planes manually. For more information, see "Additional resources".

2.2.4. Distributing compute machines across failure domains

You can distribute compute machines across Nutanix failure domains in one of the following ways:

- Editing existing compute machine sets allows you to distribute compute machines across Nutanix failure domains as a minimal configuration update.

- Replacing existing compute machine sets ensures that the specification is immutable and all your machines are the same.

2.2.4.1. Editing compute machine sets to implement failure domains

To distribute compute machines across Nutanix failure domains by using an existing compute machine set, you update the compute machine set with your configuration and then use scaling to replace the existing compute machines.

Prerequisites

- You have configured the failure domains in the cluster’s Infrastructure custom resource (CR).

Procedure

Run the following command to view the cluster’s Infrastructure CR.

$ oc describe infrastructures.config.openshift.io cluster

- For each failure domain (platformSpec.nutanix.failureDomains), note the cluster’s UUID, name, and subnet object UUID. These values are required to add a failure domain to a compute machine set.

List the compute machine sets in your cluster by running the following command:

$ oc get machinesets -n openshift-machine-api

Example output

    NAME                   DESIRED   CURRENT   READY   AVAILABLE   AGE
    <machine_set_name_1>   1         1         1       1           55m
    <machine_set_name_2>   1         1         1       1           55m

Edit the first compute machine set by running the following command:

$ oc edit machineset <machine_set_name_1> -n openshift-machine-api

Configure the compute machine set to use the first failure domain by updating the following in the spec.template.spec.providerSpec.value stanza.

Note: Be sure that the values you specify for the cluster and subnets fields match the values that were configured in the failureDomains stanza in the cluster’s Infrastructure CR.

Example compute machine set with Nutanix failure domains

    apiVersion: machine.openshift.io/v1
    kind: MachineSet
    metadata:
      creationTimestamp: null
      labels:
        machine.openshift.io/cluster-api-cluster: <cluster_name>
      name: <machine_set_name_1>
      namespace: openshift-machine-api
    spec:
      replicas: 2
      # ...
      template:
        spec:
          # ...
          providerSpec:
            value:
              apiVersion: machine.openshift.io/v1
              failureDomain:
                name: <failure_domain_name_1>
              cluster:
                type: uuid
                uuid: <prism_element_uuid_1>
              subnets:
              - type: uuid
                uuid: <prism_element_network_uuid_1>
    # ...

- Note the value of spec.replicas, because you need it when scaling the compute machine set to apply the changes.

- Save your changes.

List the machines that are managed by the updated compute machine set by running the following command:

$ oc get -n openshift-machine-api machines \
  -l machine.openshift.io/cluster-api-machineset=<machine_set_name_1>

Example output

    NAME                        PHASE     TYPE   REGION    ZONE              AGE
    <machine_name_original_1>   Running   AHV    Unnamed   Development-STS   4h
    <machine_name_original_2>   Running   AHV    Unnamed   Development-STS   4h

For each machine that is managed by the updated compute machine set, set the delete annotation by running the following command:

$ oc annotate machine/<machine_name_original_1> \
  -n openshift-machine-api \
  machine.openshift.io/delete-machine="true"

To create replacement machines with the new configuration, scale the compute machine set to twice the number of replicas by running the following command:

$ oc scale --replicas=<twice_the_number_of_replicas> \
  machineset <machine_set_name_1> \
  -n openshift-machine-api

For example, if the original number of replicas in the compute machine set is 2, scale the replicas to 4.

List the machines that are managed by the updated compute machine set by running the following command:

$ oc get -n openshift-machine-api machines -l machine.openshift.io/cluster-api-machineset=<machine_set_name_1>

When the new machines are in the Running phase, you can scale the compute machine set to the original number of replicas.

To remove the machines that were created with the old configuration, scale the compute machine set to the original number of replicas by running the following command:

$ oc scale --replicas=<original_number_of_replicas> \
  machineset <machine_set_name_1> \
  -n openshift-machine-api

For example, if the original number of replicas in the compute machine set was 2, scale the replicas to 2.

- As required, continue to modify machine sets to reference the additional failure domains that are available to the deployment.
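The replica arithmetic in this procedure (scale to twice the original count, then back to the original count) can be sketched as a helper that only prints the two `oc scale` commands for review. This is a minimal sketch: `print_scale_plan` is a hypothetical helper, the machine set name and replica count are placeholders, and nothing is executed against the cluster.

```shell
# Hypothetical helper: prints, without running them, the two scaling commands for a
# compute machine set, given its name ($1) and original replica count ($2).
print_scale_plan() {
  machine_set=$1
  replicas=$2
  # First scale up to twice the original count to create replacement machines.
  printf 'oc scale --replicas=%s machineset %s -n openshift-machine-api\n' \
    "$((replicas * 2))" "$machine_set"
  # Then scale back to the original count to remove the annotated machines.
  printf 'oc scale --replicas=%s machineset %s -n openshift-machine-api\n' \
    "$replicas" "$machine_set"
}

# Placeholder machine set name and replica count.
print_scale_plan "worker-fd1" 2
```

Run the second command only after the new machines are in the Running phase, as the procedure describes.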

2.2.4.2. Replacing compute machine sets to implement failure domains

To distribute compute machines across Nutanix failure domains by replacing a compute machine set, you create a new compute machine set with your configuration, wait for the machines that it creates to start, and then delete the old compute machine set.

Prerequisites

- You have configured the failure domains in the cluster’s Infrastructure custom resource (CR).

Procedure

Run the following command to view the cluster’s Infrastructure CR.

$ oc describe infrastructures.config.openshift.io cluster

- For each failure domain (platformSpec.nutanix.failureDomains), note the cluster’s UUID, name, and subnet object UUID. These values are required to add a failure domain to a compute machine set.

List the compute machine sets in your cluster by running the following command:

$ oc get machinesets -n openshift-machine-api

Example output

    NAME                            DESIRED   CURRENT   READY   AVAILABLE   AGE
    <original_machine_set_name_1>   1         1         1       1           55m
    <original_machine_set_name_2>   1         1         1       1           55m

- Note the names of the existing compute machine sets.

Create a YAML file that contains the values for your new compute machine set custom resource (CR) by using one of the following methods:

- Copy an existing compute machine set configuration into a new file by running the following command:

$ oc get machineset <original_machine_set_name_1> \
  -n openshift-machine-api -o yaml > <new_machine_set_name_1>.yaml

  You can edit this YAML file with your preferred text editor.

- Create a blank YAML file named <new_machine_set_name_1>.yaml with your preferred text editor and include the required values for your new compute machine set.

If you are not sure which value to set for a specific field, you can view values of an existing compute machine set CR by running the following command:

$ oc get machineset <original_machine_set_name_1> \
  -n openshift-machine-api -o yaml

Example output

    apiVersion: machine.openshift.io/v1beta1
    kind: MachineSet
    metadata:
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id>
      name: <infrastructure_id>-<role>
      namespace: openshift-machine-api
    spec:
      replicas: 1
      selector:
        matchLabels:
          machine.openshift.io/cluster-api-cluster: <infrastructure_id>
          machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>
      template:
        metadata:
          labels:
            machine.openshift.io/cluster-api-cluster: <infrastructure_id>
            machine.openshift.io/cluster-api-machine-role: <role>
            machine.openshift.io/cluster-api-machine-type: <role>
            machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>
        spec:
          providerSpec:
            ...

where:

- <infrastructure_id>: The cluster infrastructure ID.

- <role>: A default node label.

  Note: For clusters that have user-provisioned infrastructure, a compute machine set can only create machines with a worker or infra role.

- providerSpec: The values in the <providerSpec> section of the compute machine set CR are platform-specific. For more information about <providerSpec> parameters in the CR, see the sample compute machine set CR configuration for your provider.

Configure the new compute machine set to use the first failure domain by updating or adding the following to the spec.template.spec.providerSpec.value stanza in the <new_machine_set_name_1>.yaml file.

Note: Be sure that the values you specify for the cluster and subnets fields match the values that were configured in the failureDomains stanza in the cluster’s Infrastructure CR.

Example compute machine set with Nutanix failure domains

    apiVersion: machine.openshift.io/v1
    kind: MachineSet
    metadata:
      creationTimestamp: null
      labels:
        machine.openshift.io/cluster-api-cluster: <cluster_name>
      name: <new_machine_set_name_1>
      namespace: openshift-machine-api
    spec:
      replicas: 2
      # ...
      template:
        spec:
          # ...
          providerSpec:
            value:
              apiVersion: machine.openshift.io/v1
              failureDomain:
                name: <failure_domain_name_1>
              cluster:
                type: uuid
                uuid: <prism_element_uuid_1>
              subnets:
              - type: uuid
                uuid: <prism_element_network_uuid_1>
    # ...

- Save your changes.

Create a compute machine set CR by running the following command:

$ oc create -f <new_machine_set_name_1>.yaml

- As required, continue to create compute machine sets to reference the additional failure domains that are available to the deployment.

List the machines that are managed by the new compute machine sets by running the following command for each new compute machine set:

$ oc get -n openshift-machine-api machines -l machine.openshift.io/cluster-api-machineset=<new_machine_set_name_1>

Example output

    NAME                   PHASE          TYPE   REGION    ZONE              AGE
    <machine_from_new_1>   Provisioned    AHV    Unnamed   Development-STS   25s
    <machine_from_new_2>   Provisioning   AHV    Unnamed   Development-STS   25s

When the new machines are in the Running phase, you can delete the old compute machine sets that do not include the failure domain configuration.

When you have verified that the new machines are in the Running phase, delete the old compute machine sets by running the following command for each:

$ oc delete machineset <original_machine_set_name_1> -n openshift-machine-api
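Checking that every new machine has reached the Running phase before you delete the old compute machine sets can be scripted. The following is a minimal sketch: `all_machines_running` is a hypothetical helper that reads output in the column layout shown in the example output (header row first, phase in the second column). The sample input is illustrative, not captured from a cluster.

```shell
# Hypothetical helper: reads "oc get machines" style output on standard input and
# succeeds only if there is at least one machine and every machine is Running.
all_machines_running() {
  awk 'NR > 1 { total++; if ($2 != "Running") pending++ }
       END { exit (total == 0 || pending > 0) ? 1 : 0 }'
}

# Sample input in the example output format; in practice, pipe the output of the
# "oc get -n openshift-machine-api machines -l ..." command into the function.
all_machines_running <<'EOF' && echo "all machines are Running"
NAME                   PHASE     TYPE   REGION    ZONE              AGE
<machine_from_new_1>   Running   AHV    Unnamed   Development-STS   5m41s
<machine_from_new_2>   Running   AHV    Unnamed   Development-STS   5m41s
EOF
```

An empty machine list is treated as a failure so that a mistyped label selector does not look like success.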

Verification

To verify that the compute machine sets without the updated configuration are deleted, list the compute machine sets in your cluster by running the following command:

$ oc get machinesets -n openshift-machine-api

Example output

    NAME                       DESIRED   CURRENT   READY   AVAILABLE   AGE
    <new_machine_set_name_1>   1         1         1       1           4m12s
    <new_machine_set_name_2>   1         1         1       1           4m12s

To verify that the compute machines without the updated configuration are deleted, list the machines in your cluster by running the following command:

$ oc get -n openshift-machine-api machines

Example output while deletion is in progress

    NAME                        PHASE      TYPE   REGION    ZONE              AGE
    <machine_from_new_1>        Running    AHV    Unnamed   Development-STS   5m41s
    <machine_from_new_2>        Running    AHV    Unnamed   Development-STS   5m41s
    <machine_from_original_1>   Deleting   AHV    Unnamed   Development-STS   4h
    <machine_from_original_2>   Deleting   AHV    Unnamed   Development-STS   4h

Example output when deletion is complete

    NAME                   PHASE     TYPE   REGION    ZONE              AGE
    <machine_from_new_1>   Running   AHV    Unnamed   Development-STS   6m30s
    <machine_from_new_2>   Running   AHV    Unnamed   Development-STS   6m30s

To verify that a machine created by the new compute machine set has the correct configuration, examine the relevant fields in the CR for one of the new machines by running the following command:

$ oc describe machine <machine_from_new_1> -n openshift-machine-api

Chapter 3. Installing a cluster on Nutanix

In OpenShift Container Platform version 4.17, you can choose one of the following options to install a cluster on your Nutanix instance:

- Using installer-provisioned infrastructure: Use the procedures in the following sections to use installer-provisioned infrastructure. Installer-provisioned infrastructure is ideal for installing in connected or disconnected network environments. The installer-provisioned infrastructure includes an installation program that provisions the underlying infrastructure for the cluster.

- Using the Assisted Installer: The Assisted Installer is hosted at console.redhat.com. The Assisted Installer cannot be used in disconnected environments. The Assisted Installer does not provision the underlying infrastructure for the cluster, so you must provision the infrastructure before you run the Assisted Installer. Installing with the Assisted Installer also provides integration with Nutanix, enabling autoscaling. See Installing an on-premise cluster using the Assisted Installer for additional details.

- Using user-provisioned infrastructure: Complete the relevant steps outlined in the Installing a cluster on any platform documentation.

3.1. Prerequisites

- You have reviewed details about the OpenShift Container Platform installation and update processes.

- The installation program requires access to port 9440 on Prism Central and Prism Element. You verified that port 9440 is accessible.

If you use a firewall, you have met these prerequisites:

- You confirmed that port 9440 is accessible. Control plane nodes must be able to reach Prism Central and Prism Element on port 9440 for the installation to succeed.

- You configured the firewall to grant access to the sites that OpenShift Container Platform requires. This includes the use of Telemetry.

If your Nutanix environment is using the default self-signed SSL certificate, replace it with a certificate that is signed by a CA. The installation program requires a valid CA-signed certificate to access the Prism Central API. For more information about replacing the self-signed certificate, see the Nutanix AOS Security Guide.

If your Nutanix environment uses an internal CA to issue certificates, you must configure a cluster-wide proxy as part of the installation process. For more information, see Configuring a custom PKI.

Important: Use 2048-bit certificates. The installation fails if you use 4096-bit certificates with Prism Central 2022.x.

3.2. Internet access for OpenShift Container Platform

In OpenShift Container Platform 4.17, you require access to the internet to install your cluster.

You must have internet access to perform the following actions:

- Access OpenShift Cluster Manager to download the installation program and perform subscription management. If the cluster has internet access and you do not disable Telemetry, that service automatically entitles your cluster.

- Access Quay.io to obtain the packages that are required to install your cluster.

- Obtain the packages that are required to perform cluster updates.

If your cluster cannot have direct internet access, you can perform a restricted network installation on some types of infrastructure that you provision. During that process, you download the required content and use it to populate a mirror registry with the installation packages. With some installation types, the environment that you install your cluster in will not require internet access. Before you update the cluster, you update the content of the mirror registry.

3.3. Internet access for Prism Central

Prism Central requires internet access to obtain the Red Hat Enterprise Linux CoreOS (RHCOS) image that is required to install the cluster. The RHCOS image for Nutanix is available at rhcos.mirror.openshift.com.

3.4. Generating a key pair for cluster node SSH access

To enable secure, passwordless SSH access to your cluster nodes, provide an SSH public key during the OpenShift Container Platform installation. This ensures that the installation program automatically configures the Red Hat Enterprise Linux CoreOS (RHCOS) nodes for remote authentication through the core user.

The SSH public key gets added to the ~/.ssh/authorized_keys list for the core user on each node. After the key is passed to the Red Hat Enterprise Linux CoreOS (RHCOS) nodes through their Ignition config files, you can use the key pair to SSH in to the RHCOS nodes as the user core. To access the nodes through SSH, the private key identity must be managed by SSH for your local user.

If you want to SSH in to your cluster nodes to perform installation debugging or disaster recovery, you must provide the SSH public key during the installation process. The ./openshift-install gather command also requires the SSH public key to be in place on the cluster nodes.

Do not skip this procedure in production environments, where disaster recovery and debugging are required.

You must use a local key, not one that you configured with platform-specific approaches such as AWS key pairs.

Procedure

If you do not have an existing SSH key pair on your local machine to use for authentication onto your cluster nodes, create one. For example, on a computer that uses a Linux operating system, run the following command:

$ ssh-keygen -t ed25519 -N '' -f <path>/<file_name>

<path>/<file_name> specifies the path and file name, such as ~/.ssh/id_ed25519, of the new SSH key. If you have an existing key pair, ensure that your public key is in your ~/.ssh directory.

Note: If you plan to install an OpenShift Container Platform cluster that uses the RHEL cryptographic libraries that have been submitted to NIST for FIPS 140-2/140-3 Validation on only the x86_64, ppc64le, and s390x architectures, do not create a key that uses the ed25519 algorithm. Instead, create a key that uses the rsa or ecdsa algorithm.

View the public SSH key:

$ cat <path>/<file_name>.pub

For example, run the following to view the ~/.ssh/id_ed25519.pub public key:

$ cat ~/.ssh/id_ed25519.pub

Add the SSH private key identity to the SSH agent for your local user, if it has not already been added. SSH agent management of the key is required for password-less SSH authentication onto your cluster nodes, or if you want to use the ./openshift-install gather command.

Note: On some distributions, default SSH private key identities such as ~/.ssh/id_rsa and ~/.ssh/id_dsa are managed automatically.

If the ssh-agent process is not already running for your local user, start it as a background task:

$ eval "$(ssh-agent -s)"

Example output

Agent pid 31874

Note: If your cluster is in FIPS mode, only use FIPS-compliant algorithms to generate the SSH key. The key must be either RSA or ECDSA.

Add your SSH private key to the ssh-agent:

$ ssh-add <path>/<file_name>

<path>/<file_name> specifies the path and file name for your SSH private key, such as ~/.ssh/id_ed25519.

Example output

Identity added: /home/<you>/<path>/<file_name> (<computer_name>)
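The FIPS notes in this procedure state that the key must be RSA or ECDSA rather than ed25519. The following Python sketch shows one way to check a public key file's algorithm before you supply the key to the installation program. It assumes the usual OpenSSH public key layout, in which the first whitespace-separated field names the algorithm. The function names are hypothetical and are not part of any OpenShift tooling.

```python
# Sketch: decide whether an OpenSSH public key line uses an algorithm that
# the notes above allow for FIPS mode (RSA or ECDSA, not ed25519).
# Assumption: the first whitespace-separated field is the algorithm name,
# for example "ssh-rsa", "ecdsa-sha2-nistp256", or "ssh-ed25519".

FIPS_ALLOWED_PREFIXES = ("ssh-rsa", "ecdsa-sha2-")


def key_algorithm(public_key_line):
    """Return the algorithm field of a single public key line."""
    fields = public_key_line.split()
    if not fields:
        raise ValueError("empty public key line")
    return fields[0]


def usable_in_fips_mode(public_key_line):
    """True if the key algorithm is RSA or ECDSA."""
    return key_algorithm(public_key_line).startswith(FIPS_ALLOWED_PREFIXES)


if __name__ == "__main__":
    sample = "ssh-ed25519 AAAAC3... user@example"
    print(key_algorithm(sample), usable_in_fips_mode(sample))
```

In practice you would read the line from the .pub file that you viewed with the cat command and create a new rsa or ecdsa key if the check fails.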

Next steps

- When you install OpenShift Container Platform, provide the SSH public key to the installation program.

3.5. Obtaining the installation program

Before you install OpenShift Container Platform, download the installation file on the host you are using for installation.

Prerequisites

- You have a computer that runs Linux or macOS, with 500 MB of local disk space.

Procedure

Go to the Cluster Type page on the Red Hat Hybrid Cloud Console. If you have a Red Hat account, log in with your credentials. If you do not, create an account.

- Select your infrastructure provider from the Run it yourself section of the page.

- Select your host operating system and architecture from the dropdown menus under OpenShift Installer and click Download Installer.

Place the downloaded file in the directory where you want to store the installation configuration files.

Important:

- The installation program creates several files on the computer that you use to install your cluster. You must keep the installation program and the files that the installation program creates after you finish installing the cluster. Both the installation program and these files are required to delete the cluster.

- Deleting the files created by the installation program does not remove your cluster, even if the cluster failed during installation. To remove your cluster, complete the OpenShift Container Platform uninstallation procedures for your specific cloud provider.

Extract the installation program. For example, on a computer that uses a Linux operating system, run the following command:

$ tar -xvf openshift-install-linux.tar.gz

Download your installation pull secret from Red Hat OpenShift Cluster Manager. This pull secret allows you to authenticate with the services that are provided by the included authorities, including Quay.io, which serves the container images for OpenShift Container Platform components.

Tip: Alternatively, you can retrieve the installation program from the Red Hat Customer Portal, where you can specify a version of the installation program to download. However, you must have an active subscription to access this page.

3.6. Adding Nutanix root CA certificates to your system trust

Because the installation program requires access to the Prism Central API, you must add your Nutanix trusted root CA certificates to your system trust before you install an OpenShift Container Platform cluster.

Procedure

- From the Prism Central web console, download the Nutanix root CA certificates.

- Extract the compressed file that contains the Nutanix root CA certificates.

Add the files for your operating system to the system trust. For example, on a Fedora operating system, run the following command:

# cp certs/lin/* /etc/pki/ca-trust/source/anchors

Update your system trust. For example, on a Fedora operating system, run the following command:

# update-ca-trust extract

3.7. Creating the installation configuration file

You can customize the OpenShift Container Platform cluster you install on Nutanix.

Prerequisites

- You have the OpenShift Container Platform installation program and the pull secret for your cluster.

- You have verified that you have met the Nutanix networking requirements. For more information, see "Preparing to install on Nutanix".

Procedure

Create the install-config.yaml file.

Change to the directory that contains the installation program and run the following command:

$ ./openshift-install create install-config --dir <installation_directory>

For <installation_directory>, specify the directory name to store the files that the installation program creates.

When specifying the directory:

- Verify that the directory has the execute permission. This permission is required to run Terraform binaries under the installation directory.

- Use an empty directory. Some installation assets, such as bootstrap X.509 certificates, have short expiration intervals, therefore you must not reuse an installation directory. If you want to reuse individual files from another cluster installation, you can copy them into your directory. However, the file names for the installation assets might change between releases. Use caution when copying installation files from an earlier OpenShift Container Platform version.

At the prompts, provide the configuration details for your cloud:

Optional: Select an SSH key to use to access your cluster machines.

Note: For production OpenShift Container Platform clusters on which you want to perform installation debugging or disaster recovery, specify an SSH key that your ssh-agent process uses.

- Select nutanix as the platform to target.

- Enter the Prism Central domain name or IP address.

- Enter the port that is used to log into Prism Central.

Enter the credentials that are used to log into Prism Central.

The installation program connects to Prism Central.

- Select the Prism Element that will manage the OpenShift Container Platform cluster.

- Select the network subnet to use.

- Enter the virtual IP address that you configured for control plane API access.

- Enter the virtual IP address that you configured for cluster ingress.

- Enter the base domain. This base domain must be the same one that you configured in the DNS records.

Enter a descriptive name for your cluster.

The cluster name you enter must match the cluster name you specified when configuring the DNS records.

Optional: Update one or more of the default configuration parameters in the install-config.yaml file to customize the installation.

For more information about the parameters, see "Installation configuration parameters".

Note: If you are installing a three-node cluster, be sure to set the compute.replicas parameter to 0. This ensures that the cluster's control plane nodes are schedulable. For more information, see "Installing a three-node cluster on Nutanix".

Back up the install-config.yaml file so that you can use it to install multiple clusters.

Important: The install-config.yaml file is consumed during the installation process. If you want to reuse the file, you must back it up now.
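The procedure above asks for an installation directory that is empty and has the execute permission. As a minimal sketch, the following Python function checks both conditions before you run the installation program. The function name and the wording of the messages are illustrative assumptions, not part of the installer.

```python
# Sketch: pre-flight check for the installation directory requirements
# listed above (execute permission, empty directory).
import os
import tempfile


def install_dir_problems(path):
    """Return a list of human-readable problems; an empty list means OK."""
    if not os.path.isdir(path):
        return ["%s is not a directory" % path]
    problems = []
    # The execute bit on a directory allows entering it and running
    # binaries that are placed under it.
    if not os.access(path, os.X_OK):
        problems.append("directory lacks execute permission")
    # The directory must not contain assets from an earlier installation.
    if os.listdir(path):
        problems.append("directory is not empty")
    return problems


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as candidate:
        print(install_dir_problems(candidate) or "directory looks usable")
```

A non-empty result means that you should choose or create a different directory instead of reusing the current one.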

3.7.1. Sample customized install-config.yaml file for Nutanix

You can customize the install-config.yaml file to specify more details about your OpenShift Container Platform cluster’s platform or modify the values of the required parameters.

This sample YAML file is provided for reference only. You must obtain your install-config.yaml file by using the installation program and modify it.

apiVersion: v1
baseDomain: example.com
compute:
- hyperthreading: Enabled
  name: worker
  replicas: 3
  platform:
    nutanix:
      cpus: 2
      coresPerSocket: 2
      memoryMiB: 8196
      osDisk:
        diskSizeGiB: 120
      categories:
      - key: <category_key_name>
        value: <category_value>
controlPlane:
  hyperthreading: Enabled
  name: master
  replicas: 3
  platform:
    nutanix:
      cpus: 4
      coresPerSocket: 2
      memoryMiB: 16384
      osDisk:
        diskSizeGiB: 120
      categories:
      - key: <category_key_name>
        value: <category_value>
metadata:
  creationTimestamp: null
  name: test-cluster
networking:
  clusterNetwork:
  - cidr: 10.128.0.0/14
    hostPrefix: 23
  machineNetwork:
  - cidr: 10.0.0.0/16
  networkType: OVNKubernetes
  serviceNetwork:
  - 172.30.0.0/16
platform:
  nutanix:
    apiVIPs:
    - 10.40.142.7
    defaultMachinePlatform:
      bootType: Legacy
      categories:
      - key: <category_key_name>
        value: <category_value>
      project:
        type: name
        name: <project_name>
    ingressVIPs:
    - 10.40.142.8
    prismCentral:
      endpoint:
        address: your.prismcentral.domainname
        port: 9440
      password: <password>
      username: <username>
    prismElements:
    - endpoint:
        address: your.prismelement.domainname
        port: 9440
      uuid: 0005b0f1-8f43-a0f2-02b7-3cecef193712
    subnetUUIDs:
    - c7938dc6-7659-453e-a688-e26020c68e43
    clusterOSImage: http://example.com/images/rhcos-47.83.202103221318-0-nutanix.x86_64.qcow2
credentialsMode: Manual
publish: External
pullSecret: '{"auths": ...}'
fips: false
sshKey: ssh-ed25519 AAAA...

1, 10, 12, 15, 16, 17, 18, 19, 21: Required. The installation program prompts you for this value.

2, 6: The controlPlane section is a single mapping, but the compute section is a sequence of mappings. To meet the requirements of the different data structures, the first line of the compute section must begin with a hyphen, -, and the first line of the controlPlane section must not. Although both sections currently define a single machine pool, it is possible that future versions of OpenShift Container Platform will support defining multiple compute pools during installation. Only one control plane pool is used.

3, 7: Whether to enable or disable simultaneous multithreading, or hyperthreading. By default, simultaneous multithreading is enabled to increase the performance of your machines' cores. You can disable it by setting the parameter value to Disabled. If you disable simultaneous multithreading in some cluster machines, you must disable it in all cluster machines.

Important: If you disable simultaneous multithreading, ensure that your capacity planning accounts for the dramatically decreased machine performance.

4, 8: Optional: Provide additional configuration for the machine pool parameters for the compute and control plane machines.

5, 9, 13: Optional: Provide one or more pairs of a prism category key and a prism category value. These category key-value pairs must exist in Prism Central. You can provide separate categories to compute machines, control plane machines, or all machines.

11: The cluster network plugin to install. The default value OVNKubernetes is the only supported value.

14: Optional: Specify a project with which VMs are associated. Specify either name or uuid for the project type, and then provide the corresponding UUID or project name. You can associate projects to compute machines, control plane machines, or all machines.

20: Optional: By default, the installation program downloads and installs the Red Hat Enterprise Linux CoreOS (RHCOS) image. If Prism Central does not have internet access, you can override the default behavior by hosting the RHCOS image on any HTTP server and pointing the installation program to the image.

22: Whether to enable or disable FIPS mode. By default, FIPS mode is not enabled. If FIPS mode is enabled, the Red Hat Enterprise Linux CoreOS (RHCOS) machines that OpenShift Container Platform runs on bypass the default Kubernetes cryptography suite and use the cryptography modules that are provided with RHCOS instead.

Important: When running Red Hat Enterprise Linux (RHEL) or Red Hat Enterprise Linux CoreOS (RHCOS) booted in FIPS mode, OpenShift Container Platform core components use the RHEL cryptographic libraries that have been submitted to NIST for FIPS 140-2/140-3 Validation on only the x86_64, ppc64le, and s390x architectures.

23: Optional: You can provide the sshKey value that you use to access the machines in your cluster.

Note: For production OpenShift Container Platform clusters on which you want to perform installation debugging or disaster recovery, specify an SSH key that your ssh-agent process uses.
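The clusterNetwork entry in the sample pairs a cidr of 10.128.0.0/14 with a hostPrefix of 23. Because hostPrefix is the prefix length of the subnet that each node receives from the cluster network, the two values together bound how many node subnets exist and how many addresses each one holds. The following Python sketch works through that arithmetic. The function name is hypothetical.

```python
# Sketch: capacity implied by networking.clusterNetwork[].cidr and hostPrefix.
import ipaddress


def node_subnet_capacity(cluster_cidr, host_prefix):
    """Return (number of per-node subnets, addresses in each subnet)."""
    network = ipaddress.ip_network(cluster_cidr)
    if not network.prefixlen <= host_prefix <= network.max_prefixlen:
        raise ValueError("hostPrefix must lie within the cluster network")
    node_subnets = 2 ** (host_prefix - network.prefixlen)
    addresses_per_subnet = 2 ** (network.max_prefixlen - host_prefix)
    return node_subnets, addresses_per_subnet


if __name__ == "__main__":
    # Values taken from the sample install-config.yaml file above.
    print(node_subnet_capacity("10.128.0.0/14", 23))
```

For the sample values, the /14 network divides into 2^(23-14) = 512 node subnets of 2^(32-23) = 512 addresses each.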

3.7.2. Configuring failure domains

Failure domains improve the fault tolerance of an OpenShift Container Platform cluster by distributing control plane and compute machines across multiple Nutanix Prism Elements (clusters).

It is recommended that you configure three failure domains to ensure high availability.

Prerequisites

- You have an installation configuration file (install-config.yaml).

Procedure

Edit the install-config.yaml file and add the following stanza to configure the first failure domain:

apiVersion: v1
baseDomain: example.com
compute:
# ...
platform:
  nutanix:
    failureDomains:
    - name: <failure_domain_name>
      prismElement:
        name: <prism_element_name>
        uuid: <prism_element_uuid>
      subnetUUIDs:
      - <network_uuid>
# ...

where:

<failure_domain_name>: Specifies a unique name for the failure domain. The name is limited to 64 or fewer characters, which can include lower-case letters, digits, and a dash (-). The dash cannot be in the leading or ending position of the name.

<prism_element_name>: Optional. Specifies the name of the Prism Element.

<prism_element_uuid>: Specifies the UUID of the Prism Element.

<network_uuid>: Specifies the UUID of the Prism Element subnet object. The subnet's IP address prefix (CIDR) should contain the virtual IP addresses that the OpenShift Container Platform cluster uses. Only one subnet per failure domain (Prism Element) in an OpenShift Container Platform cluster is supported.

- As required, configure additional failure domains.

To distribute control plane and compute machines across the failure domains, do one of the following:

If compute and control plane machines can share the same set of failure domains, add the failure domain names under the cluster's default machine configuration.

Example of control plane and compute machines sharing a set of failure domains

apiVersion: v1
baseDomain: example.com
compute:
# ...
platform:
  nutanix:
    defaultMachinePlatform:
      failureDomains:
      - failure-domain-1
      - failure-domain-2
      - failure-domain-3
# ...

If compute and control plane machines must use different failure domains, add the failure domain names under the respective machine pools.

Example of control plane and compute machines using different failure domains

apiVersion: v1
baseDomain: example.com
compute:
# ...
controlPlane:
  platform:
    nutanix:
      failureDomains:
      - failure-domain-1
      - failure-domain-2
      - failure-domain-3
# ...
compute:
  platform:
    nutanix:
      failureDomains:
      - failure-domain-1
      - failure-domain-2
# ...

- Save the file.
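The naming rules for <failure_domain_name> (64 or fewer characters, lower-case letters, digits, and dashes, with no leading or trailing dash) translate directly into a regular expression. The following Python sketch checks candidate names and also reports names that a machine pool references but that are not defined under platform.nutanix.failureDomains. Both function names are hypothetical, and the sketch does not read install-config.yaml itself.

```python
# Sketch: validate failure domain names against the rules stated above.
import re

# One alphanumeric character, optionally followed by up to 62 characters
# from [a-z0-9-] and a final alphanumeric character: 1 to 64 in total.
_NAME_RE = re.compile(r"[a-z0-9](?:[a-z0-9-]{0,62}[a-z0-9])?")


def valid_failure_domain_name(name):
    """True if the name satisfies the length and character rules."""
    return _NAME_RE.fullmatch(name) is not None


def undefined_references(defined, referenced):
    """Return referenced names that no failure domain defines."""
    known = set(defined)
    return [name for name in referenced if name not in known]


if __name__ == "__main__":
    defined = ["failure-domain-1", "failure-domain-2"]
    print([valid_failure_domain_name(n) for n in defined])
    print(undefined_references(defined, ["failure-domain-1", "failure-domain-3"]))
```

Running such a check before you save the file can catch a misspelled failure domain name before installation starts.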

3.7.3. Configuring the cluster-wide proxy during installation

To enable internet access in environments that deny direct connections, configure a cluster-wide proxy in the install-config.yaml file. This configuration ensures that the new OpenShift Container Platform cluster routes traffic through the specified HTTP or HTTPS proxy.

Prerequisites

- You have an existing install-config.yaml file.

- You have reviewed the sites that your cluster requires access to and determined whether any of them need to bypass the proxy. By default, all cluster egress traffic is proxied, including calls to hosting cloud provider APIs. You added sites to the Proxy object's spec.noProxy field to bypass the proxy if necessary.

Note: The Proxy object status.noProxy field is populated with the values of the networking.machineNetwork[].cidr, networking.clusterNetwork[].cidr, and networking.serviceNetwork[] fields from your installation configuration.

For installations on Amazon Web Services (AWS), Google Cloud, Microsoft Azure, and Red Hat OpenStack Platform (RHOSP), the Proxy object status.noProxy field is also populated with the instance metadata endpoint (169.254.169.254).

Procedure

Edit your install-config.yaml file and add the proxy settings. For example:

apiVersion: v1
baseDomain: my.domain.com
proxy:
  httpProxy: http://<username>:<pswd>@<ip>:<port>
  httpsProxy: https://<username>:<pswd>@<ip>:<port>
  noProxy: example.com
additionalTrustBundle: |
    -----BEGIN CERTIFICATE-----
    <MY_TRUSTED_CA_CERT>
    -----END CERTIFICATE-----
additionalTrustBundlePolicy: <policy_to_add_additionalTrustBundle>
# ...

where:

proxy.httpProxy: Specifies a proxy URL to use for creating HTTP connections outside the cluster. The URL scheme must be http.

proxy.httpsProxy: Specifies a proxy URL to use for creating HTTPS connections outside the cluster.

proxy.noProxy: Specifies a comma-separated list of destination domain names, IP addresses, or other network CIDRs to exclude from proxying. Preface a domain with . to match subdomains only. For example, .y.com matches x.y.com, but not y.com. Use * to bypass the proxy for all destinations.

additionalTrustBundle: If provided, the installation program generates a config map that is named user-ca-bundle in the openshift-config namespace to hold the additional CA certificates. If you provide additionalTrustBundle and at least one proxy setting, the Proxy object is configured to reference the user-ca-bundle config map in the trustedCA field. The Cluster Network Operator then creates a trusted-ca-bundle config map that merges the contents specified for the trustedCA parameter with the RHCOS trust bundle. The additionalTrustBundle field is required unless the proxy's identity certificate is signed by an authority from the RHCOS trust bundle.

additionalTrustBundlePolicy: Specifies the policy that determines the configuration of the Proxy object to reference the user-ca-bundle config map in the trustedCA field. The allowed values are Proxyonly and Always. Use Proxyonly to reference the user-ca-bundle config map only when http/https proxy is configured. Use Always to always reference the user-ca-bundle config map. The default value is Proxyonly. Optional parameter.

Note: The installation program does not support the proxy readinessEndpoints field.

Note: If the installation program times out, restart and then complete the deployment by using the wait-for command of the installation program. For example:

$ ./openshift-install wait-for install-complete --log-level debug

Save the file and reference it when installing OpenShift Container Platform.

The installation program creates a cluster-wide proxy that is named cluster that uses the proxy settings in the provided install-config.yaml file. If no proxy settings are provided, a cluster Proxy object is still created, but it will have a nil spec.

Note: Only the Proxy object named cluster is supported, and no additional proxies can be created.
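The matching rules described for proxy.noProxy (a leading . matches subdomains only, * matches every destination, and entries can be network CIDRs) can be illustrated with a small matcher. The following Python sketch is a simplified model for reasoning about a noProxy value, not the cluster's actual implementation. In particular, it assumes that a bare domain or IP address entry matches only that exact host, which the text above does not specify.

```python
# Sketch: simplified model of the noProxy matching rules described above.
import ipaddress


def bypasses_proxy(host, no_proxy):
    """Return True if host matches an entry of the comma-separated list."""
    entries = [item.strip() for item in no_proxy.split(",") if item.strip()]
    for entry in entries:
        if entry == "*":
            return True  # bypass the proxy for all destinations
        if entry.startswith("."):
            # ".y.com" matches "x.y.com" but not "y.com" itself
            if host.endswith(entry):
                return True
            continue
        if "/" in entry:
            # CIDR entry: only meaningful when host is an IP address
            try:
                if ipaddress.ip_address(host) in ipaddress.ip_network(entry, strict=False):
                    return True
            except ValueError:
                pass
            continue
        if host == entry:  # assumption: bare entries match exactly
            return True
    return False


if __name__ == "__main__":
    print(bypasses_proxy("x.y.com", ".y.com"), bypasses_proxy("y.com", ".y.com"))
```

The sketch can help you confirm that a planned noProxy value covers, for example, the Prism Central address before you start the installation.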

3.8. Installing the OpenShift CLI on Linux

To manage your cluster and deploy applications from the command line, install the OpenShift CLI (oc) binary on Linux.

If you installed an earlier version of oc, you cannot use it to complete all of the commands in OpenShift Container Platform 4.17. Download and install the new version of oc.

Procedure

- Navigate to the OpenShift Container Platform downloads page on the Red Hat Customer Portal.

- Select the architecture from the Product Variant drop-down list.

- Select the appropriate version from the Version drop-down list.

- Click Download Now next to the OpenShift v4.17 Linux Clients entry and save the file.

Unpack the archive:

$ tar xvf <file>

Place the oc binary in a directory that is on your PATH.

To check your PATH, execute the following command:

$ echo $PATH

Verification

After you install the OpenShift CLI, it is available using the oc command:

$ oc <command>

3.9. Installing the OpenShift CLI on Windows

To manage your cluster and deploy applications from the command line, install the OpenShift CLI (oc) binary on Windows.

If you installed an earlier version of oc, you cannot use it to complete all of the commands in OpenShift Container Platform.

Download and install the new version of oc.

Procedure

- Navigate to the Download OpenShift Container Platform page on the Red Hat Customer Portal.

- Select the appropriate version from the Version list.

- Click Download Now next to the OpenShift v4.17 Windows Client entry and save the file.

- Extract the archive with a ZIP program.

Move the oc binary to a directory that is on your PATH variable.

To check your PATH variable, open the command prompt and execute the following command:

C:\> path

Verification

After you install the OpenShift CLI, it is available using the oc command:

C:\> oc <command>

3.10. Installing the OpenShift CLI on macOS

To manage your cluster and deploy applications from the command line, install the OpenShift CLI (oc) binary on macOS.

If you installed an earlier version of oc, you cannot use it to complete all of the commands in OpenShift Container Platform.

Download and install the new version of oc.

Procedure

- Navigate to the Download OpenShift Container Platform page on the Red Hat Customer Portal.

- Select the architecture from the Product Variant list.

- Select the appropriate version from the Version list.

Click Download Now next to the OpenShift v4.17 macOS Clients entry and save the file.

Note: For macOS arm64, choose the OpenShift v4.17 macOS arm64 Client entry.

- Unpack and unzip the archive.

Move the oc binary to a directory on your PATH variable.

To check your PATH variable, open a terminal and execute the following command:

$ echo $PATH

Verification

Verify your installation by using an oc command:

$ oc <command>
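The Linux, Windows, and macOS procedures all end by confirming that the oc binary is in a directory on your PATH. As a cross-platform sketch, the Python standard library function shutil.which performs the same lookup that the shell does. The wrapper name locate_cli is an illustrative assumption.

```python
# Sketch: confirm that a CLI binary such as oc can be found on PATH.
import shutil


def locate_cli(name="oc"):
    """Return the full path of the named executable, or None if not found."""
    return shutil.which(name)


if __name__ == "__main__":
    location = locate_cli("oc")
    if location is None:
        print("oc was not found; move the binary to a directory on PATH")
    else:
        print("oc found at", location)
```

A None result means that the binary is either missing or located in a directory that PATH does not include.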

3.11. Configuring IAM for Nutanix

Installing the cluster requires that the Cloud Credential Operator (CCO) operate in manual mode. While the installation program configures the CCO for manual mode, you must specify the identity and access management secrets.

Prerequisites

- You have configured the ccoctl binary.

- You have an install-config.yaml file.

Procedure

Create a YAML file that contains the credentials data in the following format:

Credentials data format

credentials:
- type: basic_auth
  data:
    prismCentral:
      username: <username_for_prism_central>
      password: <password_for_prism_central>
    prismElements:
    - name: <name_of_prism_element>
      username: <username_for_prism_element>
      password: <password_for_prism_element>

Set a $RELEASE_IMAGE variable with the release image from your installation file by running the following command:

$ RELEASE_IMAGE=$(./openshift-install version | awk '/release image/ {print $3}')

Extract the list of CredentialsRequest custom resources (CRs) from the OpenShift Container Platform release image by running the following command:

$ oc adm release extract \
    --from=$RELEASE_IMAGE \
    --credentials-requests \
    --included \
    --install-config=<path_to_directory_with_installation_configuration>/install-config.yaml \
    --to=<path_to_directory_for_credentials_requests>

where:

--included: Includes only the manifests that your specific cluster configuration requires.

--install-config: Specify the location of the install-config.yaml file.

--to: Specify the path to the directory where you want to store the CredentialsRequest objects. If the specified directory does not exist, this command creates it.

Sample CredentialsRequest object

apiVersion: cloudcredential.openshift.io/v1
kind: CredentialsRequest
metadata:
  annotations:
    include.release.openshift.io/self-managed-high-availability: "true"
  labels:
    controller-tools.k8s.io: "1.0"
  name: openshift-machine-api-nutanix
  namespace: openshift-cloud-credential-operator
spec:
  providerSpec:
    apiVersion: cloudcredential.openshift.io/v1
    kind: NutanixProviderSpec
  secretRef:
    name: nutanix-credentials
    namespace: openshift-machine-api

Use the ccoctl tool to process all CredentialsRequest objects by running the following command:

$ ccoctl nutanix create-shared-secrets \
    --credentials-requests-dir=<path_to_credentials_requests_directory> \
    --output-dir=<ccoctl_output_dir> \
    --credentials-source-filepath=<path_to_credentials_file>

where:

--credentials-requests-dir: Specify the path to the directory that contains the files for the component CredentialsRequests objects.

--output-dir: Optional: Specify the directory in which you want the ccoctl utility to create objects. By default, the utility creates objects in the directory in which the commands are run.

--credentials-source-filepath: Optional: Specify the directory that contains the credentials data YAML file. By default, ccoctl expects this file to be in <home_directory>/.nutanix/credentials.

Edit the install-config.yaml configuration file so that the credentialsMode parameter is set to Manual.

Example install-config.yaml configuration file

apiVersion: v1
baseDomain: cluster1.example.com
credentialsMode: Manual
...

Add the credentialsMode line to set the credentialsMode parameter to Manual.

Create the installation manifests by running the following command:

$ openshift-install create manifests --dir <installation_directory>

For <installation_directory>, specify the path to the directory that contains the install-config.yaml file for your cluster.

Copy the generated credential files to the target manifests directory by running the following command:

$ cp <ccoctl_output_dir>/manifests/*credentials.yaml ./<installation_directory>/manifests

Verification

Ensure that the appropriate secrets exist in the manifests directory.

$ ls ./<installation_directory>/manifests

Example output

cluster-config.yaml
cluster-dns-02-config.yml
cluster-infrastructure-02-config.yml
cluster-ingress-02-config.yml
cluster-network-01-crd.yml
cluster-network-02-config.yml
cluster-proxy-01-config.yaml
cluster-scheduler-02-config.yml
cvo-overrides.yaml
kube-cloud-config.yaml
kube-system-configmap-root-ca.yaml
machine-config-server-tls-secret.yaml
openshift-config-secret-pull-secret.yaml
openshift-cloud-controller-manager-nutanix-credentials-credentials.yaml
openshift-machine-api-nutanix-credentials-credentials.yaml
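The verification step relies on spotting the credential secrets in a long directory listing. As a small sketch, the following Python function applies the same *credentials.yaml pattern that the cp command uses, so that only the copied secret manifests are reported. The function name is hypothetical, and the sample input is the example output shown above.

```python
# Sketch: pick the credential secret manifests out of a manifests listing,
# using the same glob pattern as the cp command in the procedure.
from fnmatch import fnmatchcase


def credential_manifests(filenames):
    """Return the sorted file names that match *credentials.yaml."""
    return sorted(n for n in filenames if fnmatchcase(n, "*credentials.yaml"))


if __name__ == "__main__":
    listing = [
        "cluster-config.yaml",
        "openshift-config-secret-pull-secret.yaml",
        "openshift-cloud-controller-manager-nutanix-credentials-credentials.yaml",
        "openshift-machine-api-nutanix-credentials-credentials.yaml",
    ]
    for name in credential_manifests(listing):
        print(name)
```

To check a real installation directory, you could pass the result of os.listdir for the manifests directory to the function.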

3.12. Adding config map and secret resources required for Nutanix CCM

Installations on Nutanix require additional ConfigMap and Secret resources to integrate with the Nutanix Cloud Controller Manager (CCM).

Prerequisites

- You have created a manifests directory within your installation directory.

Procedure

Navigate to the manifests directory:

$ cd <path_to_installation_directory>/manifests

Create the cloud-conf ConfigMap file with the name openshift-cloud-controller-manager-cloud-config.yaml and add the following information:

apiVersion: v1
kind: ConfigMap
metadata:
  name: cloud-conf
  namespace: openshift-cloud-controller-manager
data:
  cloud.conf: "{
      \"prismCentral\": {
          \"address\": \"<prism_central_FQDN/IP>\",
          \"port\": 9440,
          \"credentialRef\": {
              \"kind\": \"Secret\",
              \"name\": \"nutanix-credentials\",
              \"namespace\": \"openshift-cloud-controller-manager\"
          }
      },
      \"topologyDiscovery\": {
          \"type\": \"Prism\",
          \"topologyCategories\": null
      },
      \"enableCustomLabeling\": true
  }"

For <prism_central_FQDN/IP>, specify the Prism Central FQDN/IP.

Verify that the file cluster-infrastructure-02-config.yml exists and has the following information:

spec:
  cloudConfig:
    key: config
    name: cloud-provider-config
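The cloud.conf value in the ConfigMap is a JSON document stored as an escaped string, which is easy to break when edited by hand. As a sketch, the following Python function builds the same JSON structure from a Prism Central address so that the quoting is generated rather than typed. The function name and the default port argument are illustrative assumptions. The keys and values mirror the ConfigMap shown above.

```python
# Sketch: generate the JSON payload for the cloud.conf key shown above.
import json


def build_cloud_conf(prism_central_address, port=9440):
    """Return the cloud.conf JSON document as a string."""
    config = {
        "prismCentral": {
            "address": prism_central_address,
            "port": port,
            "credentialRef": {
                "kind": "Secret",
                "name": "nutanix-credentials",
                "namespace": "openshift-cloud-controller-manager",
            },
        },
        "topologyDiscovery": {"type": "Prism", "topologyCategories": None},
        "enableCustomLabeling": True,
    }
    return json.dumps(config, indent=2)


if __name__ == "__main__":
    # "prism-central.example.com" is a placeholder address.
    print(build_cloud_conf("prism-central.example.com"))
```

The output is plain JSON. When you place it in the ConfigMap, it still needs to be embedded as the string value of the cloud.conf key.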

3.13. Services for a user-managed load balancer

To integrate your infrastructure with existing network standards or gain more control over traffic management in OpenShift Container Platform, configure services for a user-managed load balancer.

Configuring a user-managed load balancer depends on your vendor’s load balancer.

The information and examples in this section are for guideline purposes only. Consult the vendor documentation for more specific information about the vendor’s load balancer.

Red Hat supports the following services for a user-managed load balancer:

- Ingress Controller

- OpenShift API

- OpenShift MachineConfig API

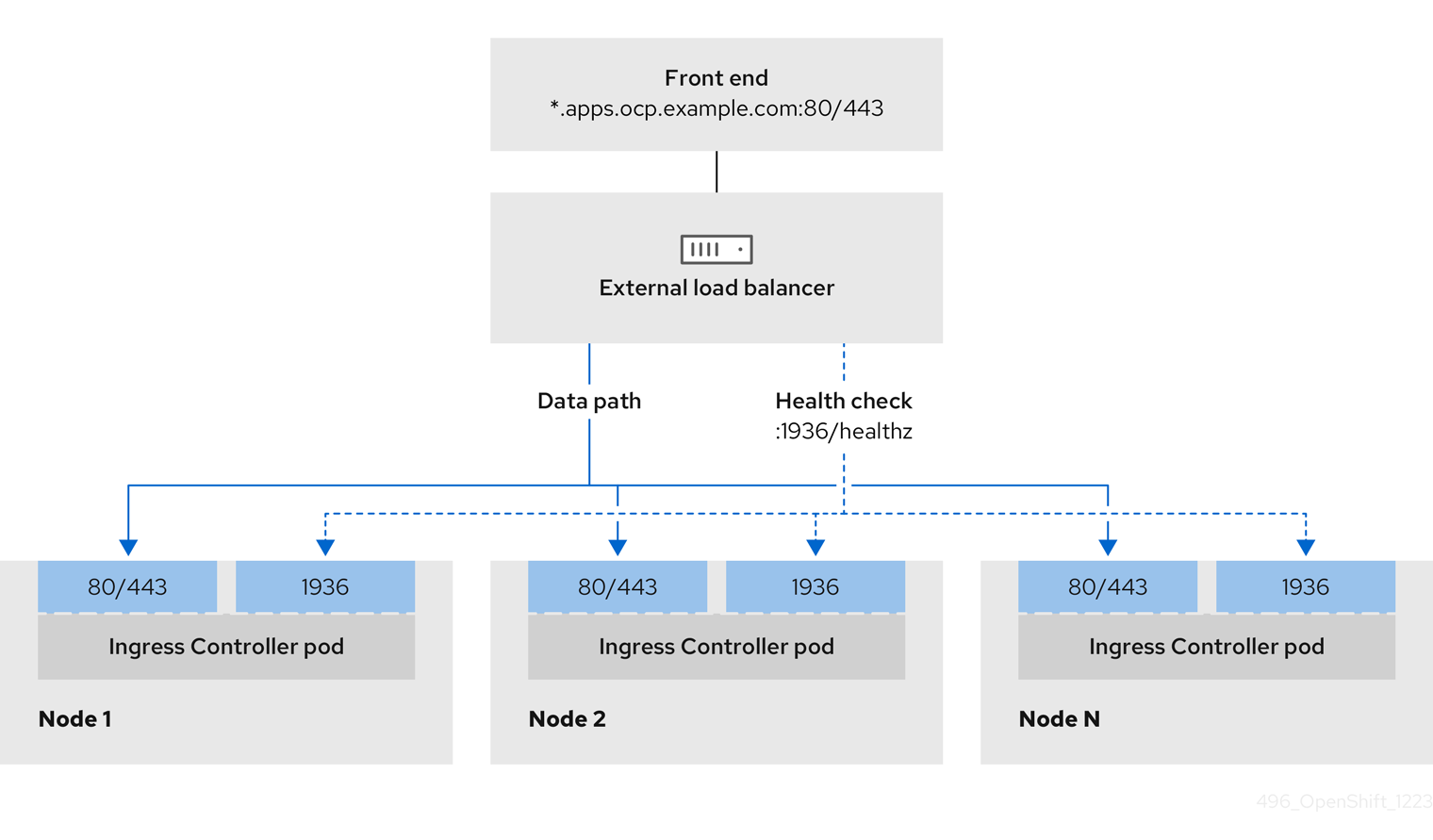

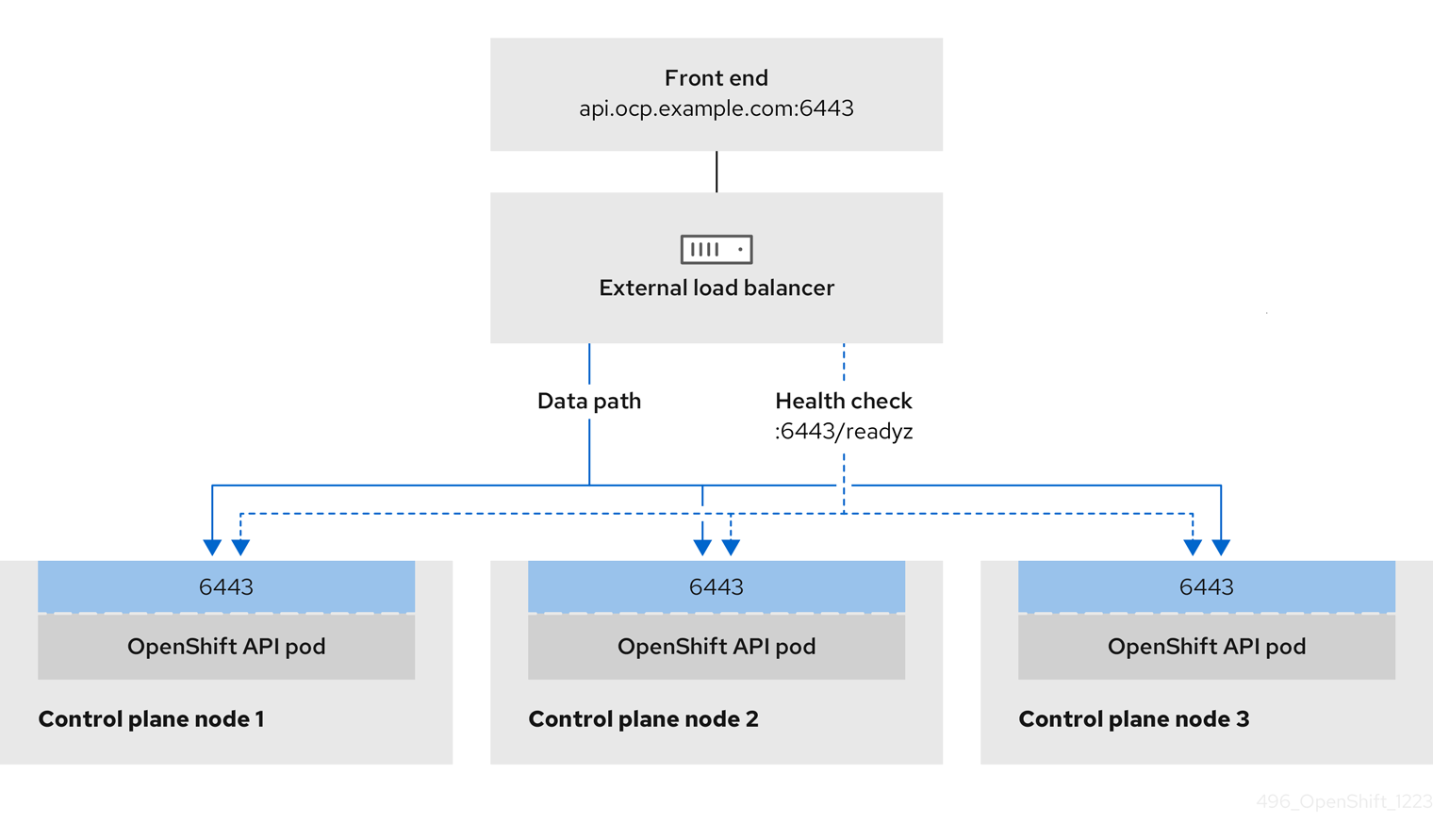

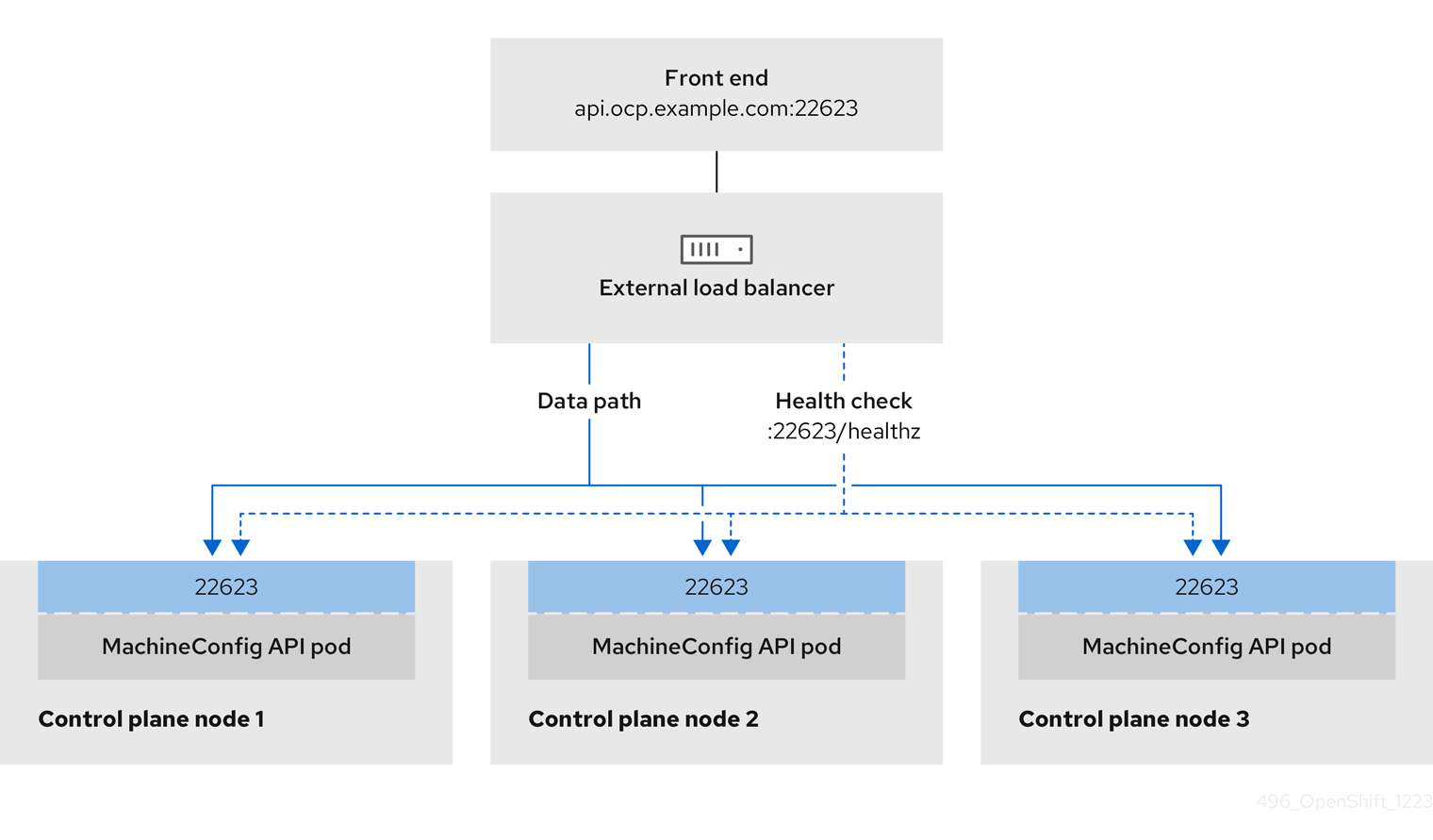

You can choose whether you want to configure one or all of these services for a user-managed load balancer. Configuring only the Ingress Controller service is a common configuration option. To better understand each service, view the following diagrams:

Figure 3.1. Example network workflow that shows an Ingress Controller operating in an OpenShift Container Platform environment

Figure 3.2. Example network workflow that shows an OpenShift API operating in an OpenShift Container Platform environment

Figure 3.3. Example network workflow that shows an OpenShift MachineConfig API operating in an OpenShift Container Platform environment

The following configuration options are supported for user-managed load balancers:

- Use a node selector to map the Ingress Controller to a specific set of nodes. You must assign a static IP address to each node in this set, or configure each node to receive the same IP address from the Dynamic Host Configuration Protocol (DHCP). Infrastructure nodes commonly receive this type of configuration.

- Target all IP addresses on a subnet. This configuration can reduce maintenance overhead, because you can create and destroy nodes within those networks without reconfiguring the load balancer targets. If you deploy your ingress pods by using a machine set on a smaller network, such as a /27 or /28, you can simplify your load balancer targets.

Tip: You can list all IP addresses that exist in a network by checking the machine config pool's resources.

Before you configure a user-managed load balancer for your OpenShift Container Platform cluster, consider the following information:

- For a front-end IP address, you can use the same IP address for the front-end IP address, the Ingress Controller’s load balancer, and API load balancer. Check the vendor’s documentation for this capability.

For a back-end IP address, ensure that an IP address for an OpenShift Container Platform control plane node does not change during the lifetime of the user-managed load balancer. You can achieve this by completing one of the following actions:

- Assign a static IP address to each control plane node.

- Configure each node to receive the same IP address from the DHCP every time the node requests a DHCP lease. Depending on the vendor, the DHCP lease might be in the form of an IP reservation or a static DHCP assignment.

- Manually define each node that runs the Ingress Controller in the user-managed load balancer for the Ingress Controller back-end service. For example, if the Ingress Controller moves to an undefined node, a connection outage can occur.
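The option of targeting all IP addresses on a small subnet, such as a /27 or /28, keeps the load balancer target list short: a /28 holds 14 usable host addresses and a /27 holds 30. The following Python sketch enumerates those addresses with the standard ipaddress module. The subnet 192.168.1.96/28 is a made-up example, and the function name is hypothetical.

```python
# Sketch: list the host addresses of a small subnet that a load balancer
# could target, as described in the configuration options above.
import ipaddress


def load_balancer_targets(subnet_cidr):
    """Return the usable host addresses in the subnet as strings."""
    return [str(ip) for ip in ipaddress.ip_network(subnet_cidr).hosts()]


if __name__ == "__main__":
    targets = load_balancer_targets("192.168.1.96/28")  # example subnet
    print(len(targets), targets[0], targets[-1])
```

Because every address in the subnet is a target, nodes can be created and destroyed within it without changing the load balancer configuration.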

3.13.1. Configuring a user-managed load balancer

To integrate your infrastructure with existing network standards or gain more control over traffic management in OpenShift Container Platform, use a user-managed load balancer in place of the default load balancer.

Before you configure a user-managed load balancer, ensure that you read the "Services for a user-managed load balancer" section.

Read the following prerequisites that apply to the service that you want to configure for your user-managed load balancer.

MetalLB, which runs on a cluster, functions as a user-managed load balancer.

Prerequisites

The following list details OpenShift API prerequisites:

- You defined a front-end IP address.

TCP ports 6443 and 22623 are exposed on the front-end IP address of your load balancer. Check the following items:

- Port 6443 provides access to the OpenShift API service.

- Port 22623 can provide ignition startup configurations to nodes.

- The front-end IP address and port 6443 are reachable by all users of your system with a location external to your OpenShift Container Platform cluster.

- The front-end IP address and port 22623 are reachable only by OpenShift Container Platform nodes.

- The load balancer backend can communicate with OpenShift Container Platform control plane nodes on ports 6443 and 22623.

The following list details Ingress Controller prerequisites:

- You defined a front-end IP address.

- TCP port 443 and port 80 are exposed on the front-end IP address of your load balancer.

- The front-end IP address, port 80 and port 443 are reachable by all users of your system with a location external to your OpenShift Container Platform cluster.

- The front-end IP address, port 80 and port 443 are reachable by all nodes that operate in your OpenShift Container Platform cluster.

- The load balancer backend can communicate with OpenShift Container Platform nodes that run the Ingress Controller on ports 80, 443, and 1936.

The following list details prerequisites for health check URL specifications:

You can configure most load balancers by setting health check URLs that determine if a service is available or unavailable. OpenShift Container Platform provides these health checks for the OpenShift API, Machine Configuration API, and Ingress Controller backend services.
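As a rough illustration of how the threshold values in a health check specification are commonly interpreted, the following Python sketch models a backend that becomes healthy after a number of consecutive successful probes and unhealthy after a number of consecutive failed probes. The `HealthCheck` class and the `track_state` function are hypothetical names used only for this illustration. Exact semantics vary between load balancers, so treat this as a sketch and check the documentation for your load balancer.

```python
from dataclasses import dataclass


@dataclass
class HealthCheck:
    """Hypothetical model of a health check; not part of any product API."""
    healthy_threshold: int = 2    # consecutive successes needed to become healthy
    unhealthy_threshold: int = 2  # consecutive failures needed to become unhealthy


def track_state(check: HealthCheck, probes: list[bool], healthy: bool = False) -> list[bool]:
    """Return the backend state after each probe result (True = probe succeeded)."""
    states = []
    streak = 0  # consecutive probe results that disagree with the current state
    for ok in probes:
        if ok == healthy:
            # The probe agrees with the current state, so any pending change is reset.
            streak = 0
        else:
            streak += 1
            needed = check.healthy_threshold if ok else check.unhealthy_threshold
            if streak >= needed:
                healthy = ok
                streak = 0
        states.append(healthy)
    return states
```

With both thresholds set to 2, a single failed probe between successful probes does not change the backend state; only two consecutive failures mark the backend as unhealthy.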

The following example shows a Kubernetes API health check specification for a backend service:

Path: HTTPS:6443/readyz

Healthy threshold: 2

Unhealthy threshold: 2

Timeout: 10

Interval: 10

The following example shows a Machine Config API health check specification for a backend service:

Path: HTTPS:22623/healthz

Healthy threshold: 2

Unhealthy threshold: 2

Timeout: 10

Interval: 10

The following example shows an Ingress Controller health check specification for a backend service:

Path: HTTP:1936/healthz/ready

Healthy threshold: 2

Unhealthy threshold: 2

Timeout: 5

Interval: 10

Procedure

Configure the HAProxy Ingress Controller, so that you can enable access to the cluster from your load balancer on ports 6443, 22623, 443, and 80. Depending on your needs, you can specify the IP address of a single subnet or IP addresses from multiple subnets in your HAProxy configuration.

Example HAProxy configuration with one listed subnet

# ...
listen my-cluster-api-6443
    bind 192.168.1.100:6443
    mode tcp
    balance roundrobin
    option httpchk
    http-check connect
    http-check send meth GET uri /readyz
    http-check expect status 200
    server my-cluster-master-2 192.168.1.101:6443 check inter 10s rise 2 fall 2
    server my-cluster-master-0 192.168.1.102:6443 check inter 10s rise 2 fall 2
    server my-cluster-master-1 192.168.1.103:6443 check inter 10s rise 2 fall 2

listen my-cluster-machine-config-api-22623
    bind 192.168.1.100:22623
    mode tcp
    balance roundrobin
    option httpchk
    http-check connect
    http-check send meth GET uri /healthz
    http-check expect status 200
    server my-cluster-master-2 192.168.1.101:22623 check inter 10s rise 2 fall 2
    server my-cluster-master-0 192.168.1.102:22623 check inter 10s rise 2 fall 2
    server my-cluster-master-1 192.168.1.103:22623 check inter 10s rise 2 fall 2

listen my-cluster-apps-443
    bind 192.168.1.100:443
    mode tcp
    balance roundrobin
    option httpchk
    http-check connect
    http-check send meth GET uri /healthz/ready
    http-check expect status 200
    server my-cluster-worker-0 192.168.1.111:443 check port 1936 inter 10s rise 2 fall 2
    server my-cluster-worker-1 192.168.1.112:443 check port 1936 inter 10s rise 2 fall 2
    server my-cluster-worker-2 192.168.1.113:443 check port 1936 inter 10s rise 2 fall 2

listen my-cluster-apps-80
    bind 192.168.1.100:80
    mode tcp
    balance roundrobin
    option httpchk
    http-check connect
    http-check send meth GET uri /healthz/ready
    http-check expect status 200
    server my-cluster-worker-0 192.168.1.111:80 check port 1936 inter 10s rise 2 fall 2
    server my-cluster-worker-1 192.168.1.112:80 check port 1936 inter 10s rise 2 fall 2
    server my-cluster-worker-2 192.168.1.113:80 check port 1936 inter 10s rise 2 fall 2
# ...

Example HAProxy configuration with multiple listed subnets

# ...
listen api-server-6443
    bind *:6443
    mode tcp
    server master-00 192.168.83.89:6443 check inter 1s
    server master-01 192.168.84.90:6443 check inter 1s
    server master-02 192.168.85.99:6443 check inter 1s
    server bootstrap 192.168.80.89:6443 check inter 1s

listen machine-config-server-22623
    bind *:22623
    mode tcp
    server master-00 192.168.83.89:22623 check inter 1s
    server master-01 192.168.84.90:22623 check inter 1s
    server master-02 192.168.85.99:22623 check inter 1s
    server bootstrap 192.168.80.89:22623 check inter 1s

listen ingress-router-80
    bind *:80
    mode tcp
    balance source
    server worker-00 192.168.83.100:80 check inter 1s
    server worker-01 192.168.83.101:80 check inter 1s

listen ingress-router-443
    bind *:443
    mode tcp
    balance source
    server worker-00 192.168.83.100:443 check inter 1s
    server worker-01 192.168.83.101:443 check inter 1s

listen ironic-api-6385
    bind *:6385
    mode tcp
    balance source
    server master-00 192.168.83.89:6385 check inter 1s
    server master-01 192.168.84.90:6385 check inter 1s
    server master-02 192.168.85.99:6385 check inter 1s
    server bootstrap 192.168.80.89:6385 check inter 1s

listen inspector-api-5050
    bind *:5050
    mode tcp
    balance source
    server master-00 192.168.83.89:5050 check inter 1s
    server master-01 192.168.84.90:5050 check inter 1s
    server master-02 192.168.85.99:5050 check inter 1s
    server bootstrap 192.168.80.89:5050 check inter 1s
# ...

Use the curl CLI command to verify that the user-managed load balancer and its resources are operational:

Verify that the Kubernetes API server is accessible through the load balancer, by running the following command and observing the response:

$ curl https://<loadbalancer_ip_address>:6443/version --insecure

If the configuration is correct, you receive a JSON object in response:

{
  "major": "1",
  "minor": "11+",
  "gitVersion": "v1.11.0+ad103ed",
  "gitCommit": "ad103ed",
  "gitTreeState": "clean",
  "buildDate": "2019-01-09T06:44:10Z",
  "goVersion": "go1.10.3",
  "compiler": "gc",
  "platform": "linux/amd64"
}

Verify that the Machine Config Server is accessible through the load balancer, by running the following command and observing the output:

$ curl -v https://<loadbalancer_ip_address>:22623/healthz --insecure

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 200 OK
Content-Length: 0

Verify that the Ingress Controller is accessible through the load balancer on port 80, by running the following command and observing the output:

$ curl -I -L -H "Host: console-openshift-console.apps.<cluster_name>.<base_domain>" http://<load_balancer_front_end_IP_address>

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 302 Found
content-length: 0
location: https://console-openshift-console.apps.ocp4.private.opequon.net/
cache-control: no-cache

Verify that the Ingress Controller is accessible through the load balancer on port 443, by running the following command and observing the output:

$ curl -I -L --insecure --resolve console-openshift-console.apps.<cluster_name>.<base_domain>:443:<load_balancer_front_end_IP_address> https://console-openshift-console.apps.<cluster_name>.<base_domain>

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 200 OK
referrer-policy: strict-origin-when-cross-origin
set-cookie: csrf-token=UlYWOyQ62LWjw2h003xtYSKlh1a0Py2hhctw0WmV2YEdhJjFyQwWcGBsja261dGLgaYO0nxzVErhiXt6QepA7g==; Path=/; Secure; SameSite=Lax
x-content-type-options: nosniff
x-dns-prefetch-control: off
x-frame-options: DENY
x-xss-protection: 1; mode=block
date: Wed, 04 Oct 2023 16:29:38 GMT
content-type: text/html; charset=utf-8
set-cookie: 1e2670d92730b515ce3a1bb65da45062=1bf5e9573c9a2760c964ed1659cc1673; path=/; HttpOnly; Secure; SameSite=None
cache-control: private

Configure the DNS records for your cluster to target the front-end IP addresses of the user-managed load balancer. You must update the records on your DNS server for the cluster API and for applications that are reached through the load balancer. The following example shows modified DNS records:

<load_balancer_ip_address> A api.<cluster_name>.<base_domain>
A record pointing to Load Balancer Front End

<load_balancer_ip_address> A apps.<cluster_name>.<base_domain>
A record pointing to Load Balancer Front End

Important

DNS propagation might take some time for each DNS record to become available. Ensure that each DNS record propagates before validating each record.

For your OpenShift Container Platform cluster to use the user-managed load balancer, you must specify the following configuration in your cluster’s install-config.yaml file:

# ...
platform:
  nutanix:
    loadBalancer:
      type: UserManaged
    apiVIPs:
    - <api_ip>
    ingressVIPs:
    - <ingress_ip>
# ...

where:

- loadBalancer.type: Set UserManaged for the type parameter to specify a user-managed load balancer for your cluster. The parameter defaults to OpenShiftManagedDefault, which denotes the default internal load balancer. For services defined in an openshift-kni-infra namespace, a user-managed load balancer can deploy the coredns service to pods in your cluster but ignores keepalived and haproxy services.

- loadBalancer.<api_ip>: Specifies the user-managed load balancer’s public IP address, so that the Kubernetes API can communicate with the user-managed load balancer. Mandatory parameter.

- loadBalancer.<ingress_ip>: Specifies the user-managed load balancer’s public IP address, so that the user-managed load balancer can manage ingress traffic for your cluster. Mandatory parameter.
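If you generate installation files from a script, the load balancer settings described above can be rendered from two IP addresses. The following Python sketch is one possible way to do that. It checks that each value parses as an IP address and then prints the fragment for manual inclusion in install-config.yaml. The function name `render_load_balancer_fragment` is hypothetical, and the indentation assumes that loadBalancer, apiVIPs, and ingressVIPs all sit directly under platform.nutanix.

```python
import ipaddress


def render_load_balancer_fragment(api_ip: str, ingress_ip: str) -> str:
    """Render the user-managed load balancer settings as YAML text.

    Hypothetical helper for illustration; it does not read or write
    install-config.yaml itself.
    """
    for label, value in (("api_ip", api_ip), ("ingress_ip", ingress_ip)):
        try:
            ipaddress.ip_address(value)  # accepts IPv4 and IPv6 literals
        except ValueError as exc:
            raise ValueError(f"{label} is not a valid IP address: {value!r}") from exc
    return "\n".join([
        "platform:",
        "  nutanix:",
        "    loadBalancer:",
        "      type: UserManaged",
        "    apiVIPs:",
        f"    - {api_ip}",
        "    ingressVIPs:",
        f"    - {ingress_ip}",
    ])


if __name__ == "__main__":
    # Example addresses only; replace them with your front-end IP addresses.
    print(render_load_balancer_fragment("192.168.1.100", "192.168.1.100"))
```

Validating the addresses before rendering catches typing mistakes early, before the installation program reads the file.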

Verification

Use the curl CLI command to verify that the user-managed load balancer and DNS record configuration are operational:

Verify that you can access the cluster API, by running the following command and observing the output:

$ curl https://api.<cluster_name>.<base_domain>:6443/version --insecure

If the configuration is correct, you receive a JSON object in response:

{
  "major": "1",
  "minor": "11+",
  "gitVersion": "v1.11.0+ad103ed",
  "gitCommit": "ad103ed",
  "gitTreeState": "clean",
  "buildDate": "2019-01-09T06:44:10Z",
  "goVersion": "go1.10.3",
  "compiler": "gc",
  "platform": "linux/amd64"
}

Verify that you can access the cluster machine configuration, by running the following command and observing the output:

$ curl -v https://api.<cluster_name>.<base_domain>:22623/healthz --insecure

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 200 OK
Content-Length: 0

Verify that you can access each cluster application on port 80, by running the following command and observing the output:

$ curl http://console-openshift-console.apps.<cluster_name>.<base_domain> -I -L --insecure

If the configuration is correct, the output from the command shows the following response. Because the -L option follows the redirect, the output contains two responses:

HTTP/1.1 302 Found
content-length: 0
location: https://console-openshift-console.apps.<cluster_name>.<base_domain>/
cache-control: no-cache

HTTP/1.1 200 OK
referrer-policy: strict-origin-when-cross-origin
set-cookie: csrf-token=39HoZgztDnzjJkq/JuLJMeoKNXlfiVv2YgZc09c3TBOBU4NI6kDXaJH1LdicNhN1UsQWzon4Dor9GWGfopaTEQ==; Path=/; Secure
x-content-type-options: nosniff
x-dns-prefetch-control: off
x-frame-options: DENY
x-xss-protection: 1; mode=block
date: Tue, 17 Nov 2020 08:42:10 GMT
content-type: text/html; charset=utf-8
set-cookie: 1e2670d92730b515ce3a1bb65da45062=9b714eb87e93cf34853e87a92d6894be; path=/; HttpOnly; Secure; SameSite=None
cache-control: private

Verify that you can access each cluster application on port 443, by running the following command and observing the output:

$ curl https://console-openshift-console.apps.<cluster_name>.<base_domain> -I -L --insecure

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 200 OK
referrer-policy: strict-origin-when-cross-origin
set-cookie: csrf-token=UlYWOyQ62LWjw2h003xtYSKlh1a0Py2hhctw0WmV2YEdhJjFyQwWcGBsja261dGLgaYO0nxzVErhiXt6QepA7g==; Path=/; Secure; SameSite=Lax
x-content-type-options: nosniff
x-dns-prefetch-control: off
x-frame-options: DENY
x-xss-protection: 1; mode=block
date: Wed, 04 Oct 2023 16:29:38 GMT
content-type: text/html; charset=utf-8
set-cookie: 1e2670d92730b515ce3a1bb65da45062=1bf5e9573c9a2760c964ed1659cc1673; path=/; HttpOnly; Secure; SameSite=None
cache-control: private
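The four curl checks in this verification can also be scripted. The following Python sketch, which uses only the standard library, builds the same URLs from a cluster name and base domain and reports whether each one finally answers with HTTP 200 after redirects are followed. The function names `build_checks` and `run_check` are hypothetical. The sketch sends GET requests rather than the HEAD requests that curl -I sends, and it skips TLS certificate verification in the same way as the --insecure option, so use it only for this kind of connectivity check.

```python
import ssl
import urllib.error
import urllib.request


def build_checks(cluster_name: str, base_domain: str) -> list[tuple[str, int]]:
    """Return (URL, expected final HTTP status) pairs for the four checks."""
    api = f"api.{cluster_name}.{base_domain}"
    console = f"console-openshift-console.apps.{cluster_name}.{base_domain}"
    return [
        (f"https://{api}:6443/version", 200),   # cluster API
        (f"https://{api}:22623/healthz", 200),  # machine configuration
        (f"http://{console}", 200),             # port 80: redirect, then 200
        (f"https://{console}", 200),            # port 443
    ]


def run_check(url: str, expected: int, timeout: float = 10.0) -> bool:
    """Fetch url without verifying certificates and compare the final status."""
    context = ssl.create_default_context()
    context.check_hostname = False
    context.verify_mode = ssl.CERT_NONE
    try:
        # urlopen follows redirects, similar to curl -L.
        with urllib.request.urlopen(url, timeout=timeout, context=context) as resp:
            return resp.status == expected
    except (urllib.error.URLError, OSError):
        # Connection failures and HTTP error statuses both count as a failed check.
        return False


if __name__ == "__main__":
    # "ocp4" and "example.com" are placeholder values for illustration.
    for url, expected in build_checks("ocp4", "example.com"):
        print(url, "OK" if run_check(url, expected) else "FAILED")
```

Because port 22623 is intended to be reachable only by cluster nodes, the second check is expected to fail when the script runs from a host outside the cluster network.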

3.14. Deploying the cluster

You can install OpenShift Container Platform on a compatible cloud platform.

You can run the create cluster command of the installation program only once, during initial installation.

Prerequisites

- You have the OpenShift Container Platform installation program and the pull secret for your cluster.

- You have verified that the cloud provider account on your host has the correct permissions to deploy the cluster. An account with incorrect permissions causes the installation process to fail with an error message that displays the missing permissions.

Procedure

In the directory that contains the installation program, initialize the cluster deployment by running the following command:

$ ./openshift-install create cluster --dir <installation_directory> \
    --log-level=info
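If you start the deployment from automation, it can help to assemble the command as an argument list instead of a shell string. The following Python sketch builds the command shown above and only prints it. The function name `create_cluster_command` is hypothetical, and the set of accepted log levels (debug, info, warn, error) is an assumption that you should confirm against the help output of your installation program version.

```python
import shlex

# Assumed set of log levels; confirm with ./openshift-install create cluster --help.
VALID_LOG_LEVELS = ("debug", "info", "warn", "error")


def create_cluster_command(installation_directory: str, log_level: str = "info") -> list[str]:
    """Build the argument list for the create cluster command without running it."""
    if not installation_directory:
        raise ValueError("installation_directory must not be empty")
    if log_level not in VALID_LOG_LEVELS:
        raise ValueError(f"unsupported log level: {log_level!r}")
    return [
        "./openshift-install",
        "create",
        "cluster",
        "--dir",
        installation_directory,
        f"--log-level={log_level}",
    ]


if __name__ == "__main__":
    # "ocp-install" is a placeholder directory name for illustration.
    print(shlex.join(create_cluster_command("ocp-install")))
```

The sketch deliberately does not execute the command, because the create cluster command can be run only once during initial installation.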

Verification

When the cluster deployment completes successfully:

- The terminal displays directions for accessing your cluster, including a link to the web console and credentials for the