Installing

Installing and configuring OpenShift Container Platform clusters

Abstract

Chapter 1. OpenShift Container Platform installation overview

1.1. OpenShift Container Platform installation overview

The OpenShift Container Platform installation program offers you flexibility. You can use the installation program to deploy a cluster on infrastructure that the installation program provisions and the cluster maintains or deploy a cluster on infrastructure that you prepare and maintain.

These two basic types of OpenShift Container Platform clusters are frequently called installer-provisioned infrastructure clusters and user-provisioned infrastructure clusters.

Both types of clusters have the following characteristics:

- Highly available infrastructure with no single points of failure is available by default

- Administrators maintain control over what updates are applied and when

You use the same installation program to deploy both types of clusters. The main assets generated by the installation program are the Ignition config files for the bootstrap, master, and worker machines. With these three configurations and correctly configured infrastructure, you can start an OpenShift Container Platform cluster.

The OpenShift Container Platform installation program uses a set of targets and dependencies to manage cluster installation. The installation program has a set of targets that it must achieve, and each target has a set of dependencies. Because each target is only concerned with its own dependencies, the installation program can act to achieve multiple targets in parallel. The ultimate target is a running cluster. By meeting dependencies instead of running commands, the installation program is able to recognize and use existing components instead of running the commands to create them again.

The following diagram shows a subset of the installation targets and dependencies:

Figure 1.1. OpenShift Container Platform installation targets and dependencies

After installation, each cluster machine uses Red Hat Enterprise Linux CoreOS (RHCOS) as the operating system. RHCOS is the immutable container host version of Red Hat Enterprise Linux (RHEL) and features a RHEL kernel with SELinux enabled by default. It includes the kubelet, which is the Kubernetes node agent, and the CRI-O container runtime, which is optimized for Kubernetes.

Every control plane machine in an OpenShift Container Platform 4.8 cluster must use RHCOS, which includes a critical first-boot provisioning tool called Ignition. This tool enables the cluster to configure the machines. Operating system updates are delivered as an Atomic OSTree repository that is embedded in a container image that is rolled out across the cluster by an Operator. Actual operating system changes are made in-place on each machine as an atomic operation by using rpm-ostree. Together, these technologies enable OpenShift Container Platform to manage the operating system like it manages any other application on the cluster, via in-place upgrades that keep the entire platform up-to-date. These in-place updates can reduce the burden on operations teams.

If you use RHCOS as the operating system for all cluster machines, the cluster manages all aspects of its components and machines, including the operating system. Because of this, only the installation program and the Machine Config Operator can change machines. The installation program uses Ignition config files to set the exact state of each machine, and the Machine Config Operator completes more changes to the machines, such as the application of new certificates or keys, after installation.

1.1.1. Installation process

When you install an OpenShift Container Platform cluster, you download the installation program from the appropriate Infrastructure Provider page on the OpenShift Cluster Manager site. This site manages:

- REST API for accounts

- Registry tokens, which are the pull secrets that you use to obtain the required components

- Cluster registration, which associates the cluster identity to your Red Hat account to facilitate the gathering of usage metrics

In OpenShift Container Platform 4.8, the installation program is a Go binary file that performs a series of file transformations on a set of assets. The way you interact with the installation program differs depending on your installation type.

- For clusters with installer-provisioned infrastructure, you delegate the infrastructure bootstrapping and provisioning to the installation program instead of doing it yourself. The installation program creates all of the networking, machines, and operating systems that are required to support the cluster.

- If you provision and manage the infrastructure for your cluster, you must provide all of the cluster infrastructure and resources, including the bootstrap machine, networking, load balancing, storage, and individual cluster machines.

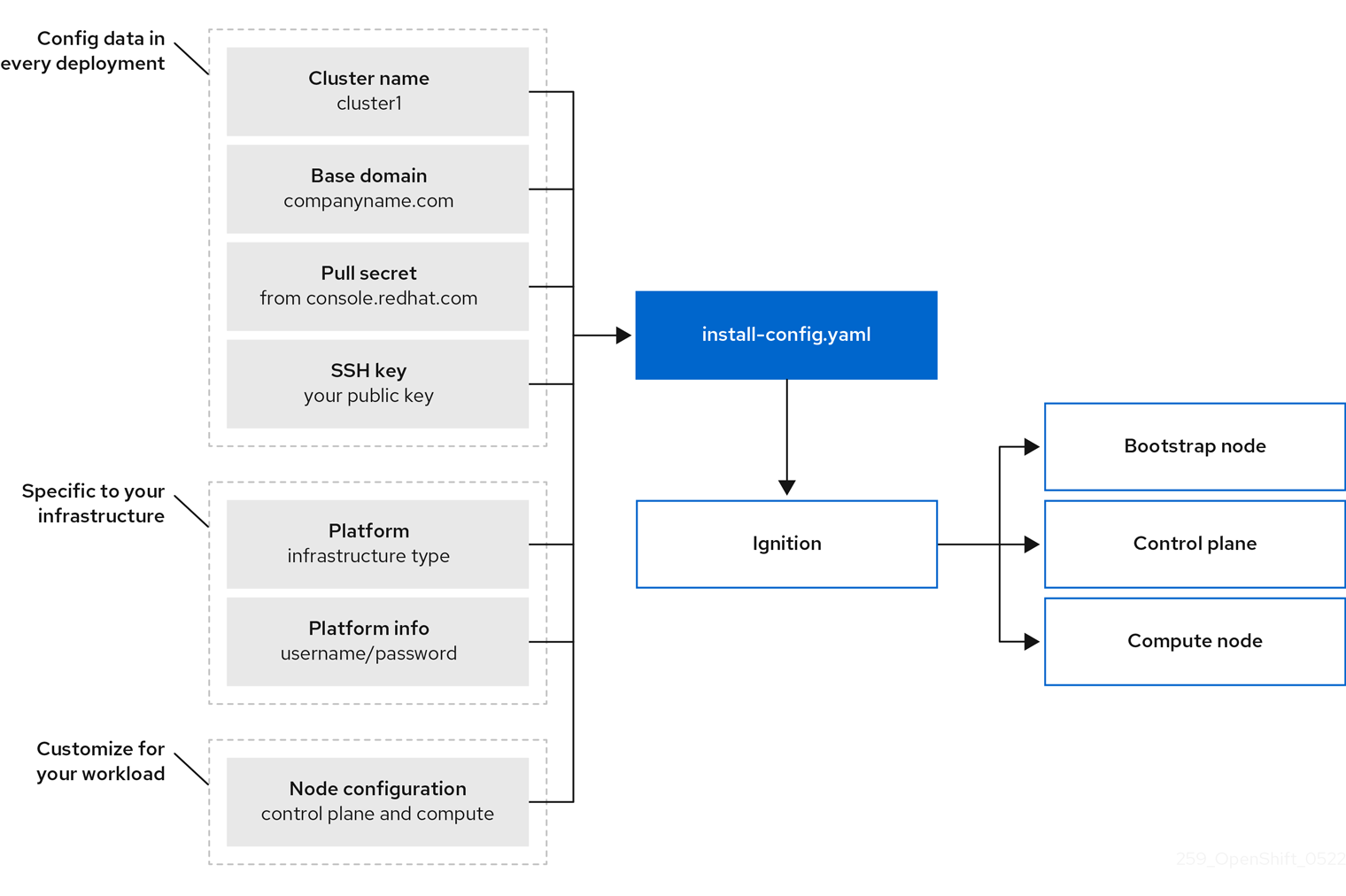

You use three sets of files during installation: an installation configuration file that is named install-config.yaml, Kubernetes manifests, and Ignition config files for your machine types.

It is possible to modify Kubernetes and the Ignition config files that control the underlying RHCOS operating system during installation. However, no validation is available to confirm the suitability of any modifications that you make to these objects. If you modify these objects, you might render your cluster non-functional. Because of this risk, modifying Kubernetes and Ignition config files is not supported unless you are following documented procedures or are instructed to do so by Red Hat support.

The installation configuration file is transformed into Kubernetes manifests, and then the manifests are wrapped into Ignition config files. The installation program uses these Ignition config files to create the cluster.

The installation configuration files are all pruned when you run the installation program, so be sure to back up all configuration files that you want to use again.
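The transformation chain and the backup advice above can be sketched as a small shell helper. This is a minimal sketch, not the documented procedure: the function names `run` and `generate_assets`, the two-directory layout, and the `DRY_RUN` switch are assumptions made for illustration. By default it only prints the `openshift-install` commands instead of running them.

```shell
#!/usr/bin/env bash
# Sketch only: keep a copy of install-config.yaml, then run the two
# transformation stages in order (manifests first, Ignition configs second).
# The helper names and the DRY_RUN switch are hypothetical.

# Print the command instead of executing it unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Usage: generate_assets <installation_directory> <backup_directory>
generate_assets() {
  local dir="$1"
  local backup="$2"
  # The installation program consumes install-config.yaml, so copy it first.
  cp "$dir/install-config.yaml" "$backup/install-config.yaml" || return 1
  run openshift-install create manifests --dir "$dir"
  run openshift-install create ignition-configs --dir "$dir"
}

# Example usage (directory names are placeholders):
#   DRY_RUN=0 generate_assets ./install-dir ./backup
```

Copying the file before the first stage means that a failed or repeated run can start again from the same configuration.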

You cannot modify the parameters that you set during installation, but you can modify many cluster attributes after installation.

The installation process with installer-provisioned infrastructure

The default installation type uses installer-provisioned infrastructure. By default, the installation program acts as an installation wizard, prompting you for values that it cannot determine on its own and providing reasonable default values for the remaining parameters. You can also customize the installation process to support advanced infrastructure scenarios. The installation program provisions the underlying infrastructure for the cluster.

You can install either a standard cluster or a customized cluster. With a standard cluster, you provide minimum details that are required to install the cluster. With a customized cluster, you can specify more details about the platform, such as the number of machines that the control plane uses, the type of virtual machine that the cluster deploys, or the CIDR range for the Kubernetes service network.
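As an illustration of the customizations mentioned above, the following sketch writes an example install-config.yaml for AWS that sets the number of control plane machines, the virtual machine types, and the CIDR range for the Kubernetes service network. Every value in it (domain, cluster name, region, instance types, network ranges) is a placeholder chosen for this example, and the function name `write_sample_install_config` is hypothetical. Treat it as a starting point to compare against the configuration parameters documented for your platform.

```shell
#!/usr/bin/env bash
# Sketch only: write an example install-config.yaml with a few customizations.
# All values are illustrative placeholders, not recommendations.

# Usage: write_sample_install_config <installation_directory>
write_sample_install_config() {
  local dir="$1"
  cat > "$dir/install-config.yaml" <<'EOF'
apiVersion: v1
baseDomain: example.com
metadata:
  name: test-cluster
controlPlane:
  name: master
  replicas: 3            # number of control plane machines
  platform:
    aws:
      type: m5.xlarge    # virtual machine type for the control plane
compute:
- name: worker
  replicas: 3
  platform:
    aws:
      type: m5.large     # virtual machine type for compute machines
networking:
  networkType: OpenShiftSDN
  clusterNetwork:
  - cidr: 10.128.0.0/14
    hostPrefix: 23
  machineNetwork:
  - cidr: 10.0.0.0/16
  serviceNetwork:
  - 172.30.0.0/16        # CIDR range for the Kubernetes service network
platform:
  aws:
    region: us-west-2
pullSecret: '<pull_secret>'
sshKey: '<ssh_public_key>'
EOF
}

# Example usage (the directory name is a placeholder):
#   write_sample_install_config ./install-dir
```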

If possible, use this feature to avoid having to provision and maintain the cluster infrastructure. In all other environments, you use the installation program to generate the assets that you require to provision your cluster infrastructure.

With installer-provisioned infrastructure clusters, OpenShift Container Platform manages all aspects of the cluster, including the operating system itself. Each machine boots with a configuration that references resources hosted in the cluster that it joins. This configuration allows the cluster to manage itself as updates are applied.

The installation process with user-provisioned infrastructure

You can also install OpenShift Container Platform on infrastructure that you provide. You use the installation program to generate the assets that you require to provision the cluster infrastructure, create the cluster infrastructure, and then deploy the cluster to the infrastructure that you provided.

If you do not use infrastructure that the installation program provisioned, you must manage and maintain the cluster resources yourself, including:

- The underlying infrastructure for the control plane and compute machines that make up the cluster

- Load balancers

- Cluster networking, including the DNS records and required subnets

- Storage for the cluster infrastructure and applications

If your cluster uses user-provisioned infrastructure, you have the option of adding RHEL compute machines to your cluster.

Installation process details

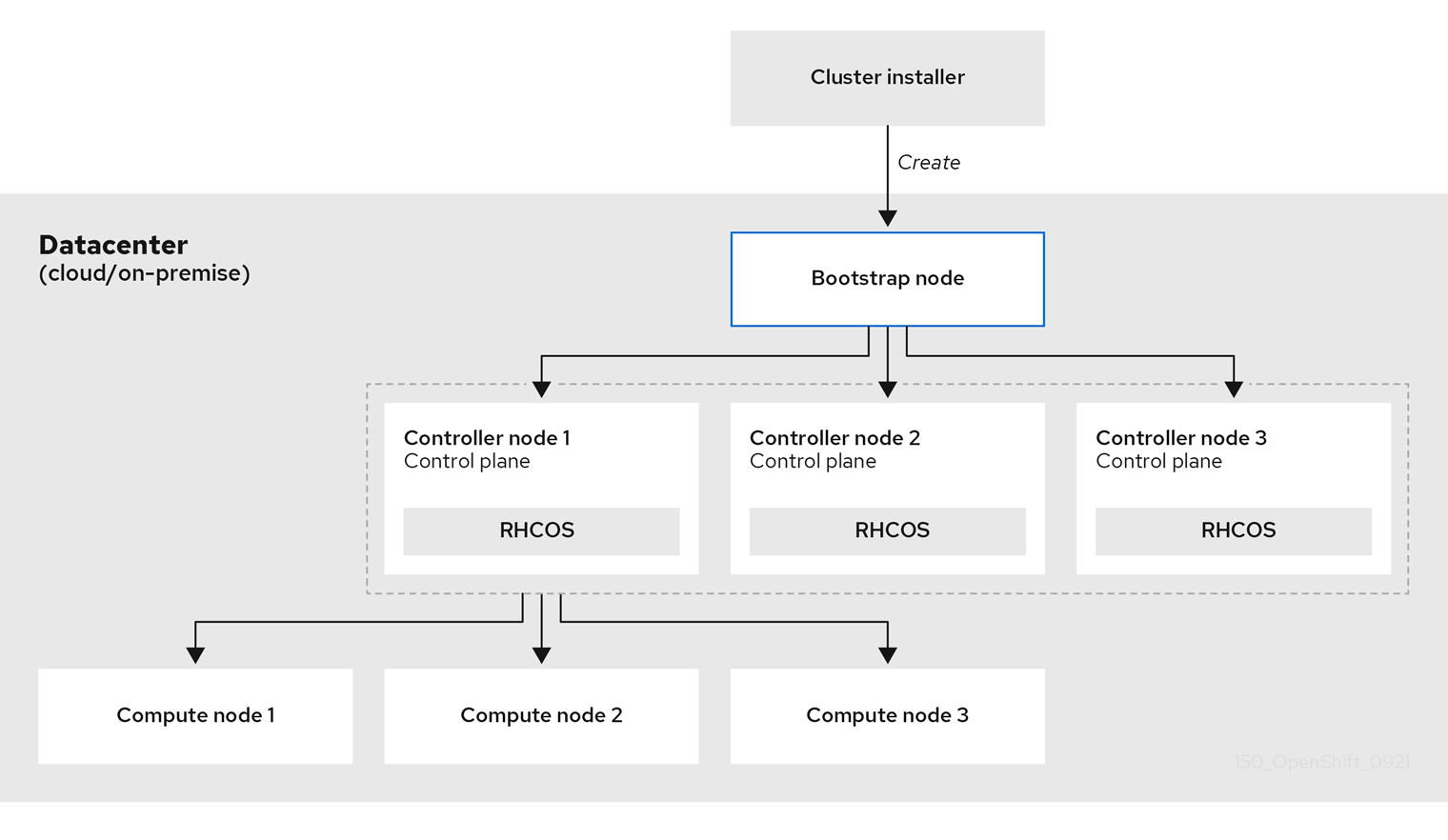

Because each machine in the cluster requires information about the cluster when it is provisioned, OpenShift Container Platform uses a temporary bootstrap machine during initial configuration to provide the required information to the permanent control plane. It boots by using an Ignition config file that describes how to create the cluster. The bootstrap machine creates the control plane machines (also known as the master machines) that make up the control plane. The control plane machines then create the compute machines, which are also known as worker machines. The following figure illustrates this process:

Figure 1.2. Creating the bootstrap, control plane, and compute machines

After the cluster machines initialize, the bootstrap machine is destroyed. All clusters use the bootstrap process to initialize the cluster, but if you provision the infrastructure for your cluster, you must complete many of the steps manually.

- The Ignition config files that the installation program generates contain certificates that expire after 24 hours, which are then renewed at that time. If the cluster is shut down before renewing the certificates and the cluster is later restarted after the 24 hours have elapsed, the cluster automatically recovers the expired certificates. The exception is that you must manually approve the pending node-bootstrapper certificate signing requests (CSRs) to recover kubelet certificates. See the documentation for Recovering from expired control plane certificates for more information.

- It is recommended that you use Ignition config files within 12 hours after they are generated because the 24-hour certificate rotates from 16 to 22 hours after the cluster is installed. By using the Ignition config files within 12 hours, you can avoid installation failure if the certificate update runs during installation.
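The 12-hour guidance can be checked before you start provisioning machines. The following is a minimal sketch, assuming that the Ignition config files (bootstrap.ign, master.ign, and worker.ign) sit directly in the installation directory and that each file's modification time approximates when it was generated. The function name `stale_ignition_configs` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch only: list Ignition config files that are more than 12 hours old.
# Assumption: modification time approximates the time the file was generated.

# Usage: stale_ignition_configs <installation_directory>
stale_ignition_configs() {
  local dir="$1"
  # -mmin +720 matches files last modified more than 720 minutes (12 hours) ago.
  find "$dir" -maxdepth 1 -name '*.ign' -mmin +720
}

# Example usage (the directory name is a placeholder):
#   stale_ignition_configs ./install-dir
```

If the function prints any file names, regenerating the Ignition config files before you continue is the safer choice.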

Bootstrapping a cluster involves the following steps:

- The bootstrap machine boots and starts hosting the remote resources required for the control plane machines to boot. (Requires manual intervention if you provision the infrastructure)

- The bootstrap machine starts a single-node etcd cluster and a temporary Kubernetes control plane.

- The control plane machines fetch the remote resources from the bootstrap machine and finish booting. (Requires manual intervention if you provision the infrastructure)

- The temporary control plane schedules the production control plane to the production control plane machines.

- The Cluster Version Operator (CVO) comes online and installs the etcd Operator. The etcd Operator scales up etcd on all control plane nodes.

- The temporary control plane shuts down and passes control to the production control plane.

- The bootstrap machine injects OpenShift Container Platform components into the production control plane.

- The installation program shuts down the bootstrap machine. (Requires manual intervention if you provision the infrastructure)

- The control plane sets up the compute nodes.

- The control plane installs additional services in the form of a set of Operators.

The result of this bootstrapping process is a running OpenShift Container Platform cluster. The cluster then downloads and configures remaining components needed for the day-to-day operation, including the creation of compute machines in supported environments.

1.1.2. Verifying node state after installation

The OpenShift Container Platform installation completes when the following installation health checks are successful:

- The provisioning host can access the OpenShift Container Platform web console.

- All control plane nodes are ready.

- All cluster Operators are available.

After the installation completes, the specific cluster Operators responsible for the worker nodes continuously attempt to provision all worker nodes. It can take some time before all worker nodes report as READY. For installations on bare metal, wait a minimum of 60 minutes before troubleshooting a worker node. For installations on all other platforms, wait a minimum of 40 minutes before troubleshooting a worker node. A DEGRADED state for the cluster Operators responsible for the worker nodes depends on the Operators' own resources and not on the state of the nodes.

After your installation completes, you can continue to monitor the condition of the nodes in your cluster using the following steps.

Prerequisites

- The installation program resolves successfully in the terminal.

Procedure

- Show the status of all worker nodes:

$ oc get nodes

- Show the phase of all worker machine nodes:

$ oc get machines -A
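The node check above can be turned into a small filter for repeated monitoring. This is a sketch under stated assumptions: it reads the output of `oc get nodes --no-headers` on standard input and relies on the node name being the first column and the status being the second. The function names and the `baremetal` label are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch only: helpers for watching worker nodes after installation.

# Read `oc get nodes --no-headers` output on stdin and print the names of
# nodes whose STATUS column does not start with "Ready".
not_ready_nodes() {
  awk '{ split($2, s, ","); if (s[1] != "Ready") print $1 }'
}

# Print the minimum wait, in minutes, before troubleshooting a worker node:
# 60 for bare metal, 40 for all other platforms.
# The "baremetal" label is an assumed name used only in this sketch.
min_wait_minutes() {
  if [ "$1" = "baremetal" ]; then
    echo 60
  else
    echo 40
  fi
}

# Example usage against a running cluster:
#   oc get nodes --no-headers | not_ready_nodes
```

A status such as `Ready,SchedulingDisabled` is treated as ready here, because only the part before the first comma is compared.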

Installation scope

The scope of the OpenShift Container Platform installation program is intentionally narrow. It is designed for simplicity and ensured success. You can complete many more configuration tasks after installation completes.

1.2. Supported platforms for OpenShift Container Platform clusters

In OpenShift Container Platform 4.8, you can install a cluster that uses installer-provisioned infrastructure on the following platforms:

- Amazon Web Services (AWS)

- Google Cloud Platform (GCP)

- Microsoft Azure

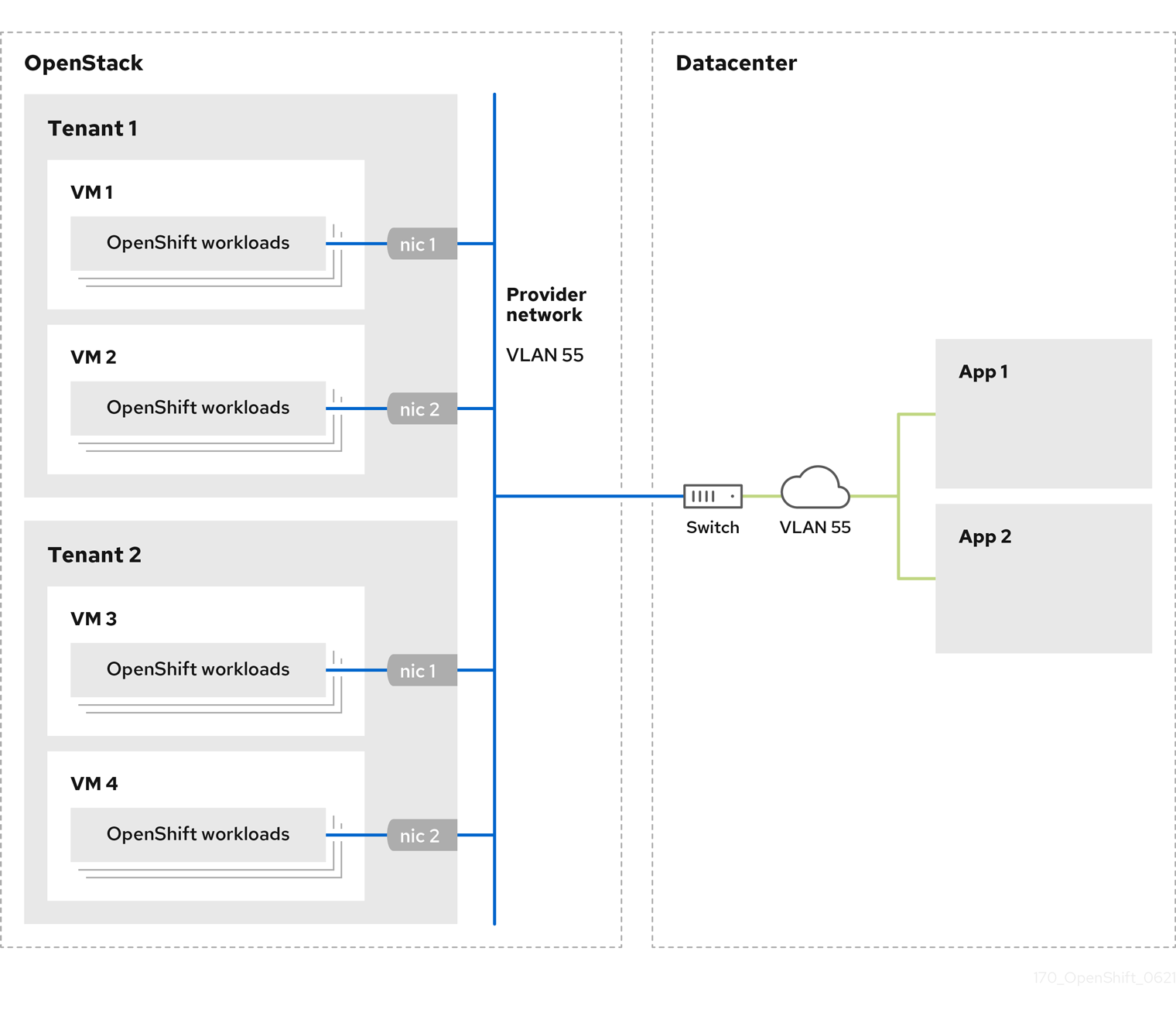

- Red Hat OpenStack Platform (RHOSP) versions 13 and 16

- The latest OpenShift Container Platform release supports both the latest RHOSP long-life release and intermediate release. For complete RHOSP release compatibility, see the OpenShift Container Platform on RHOSP support matrix.

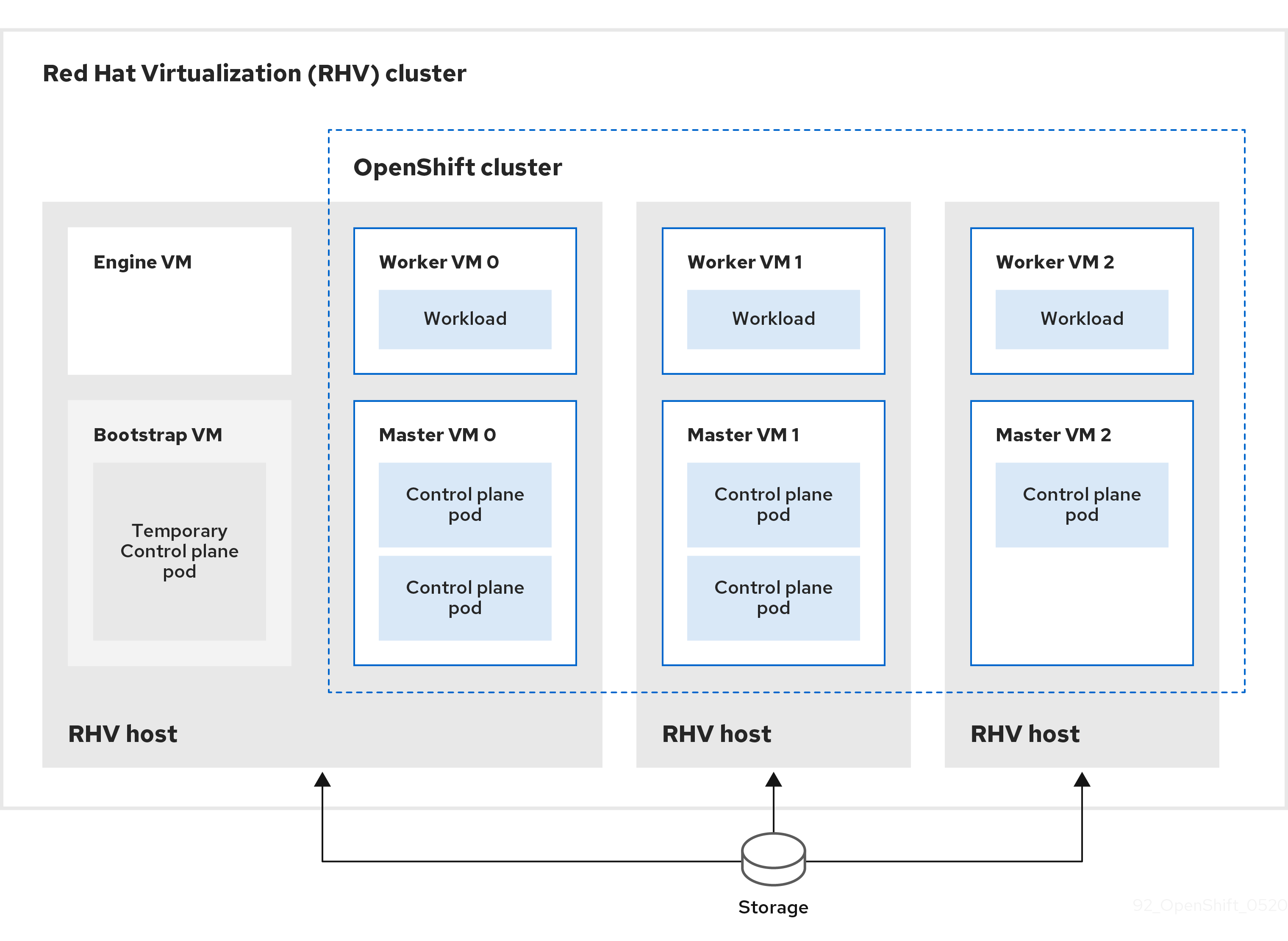

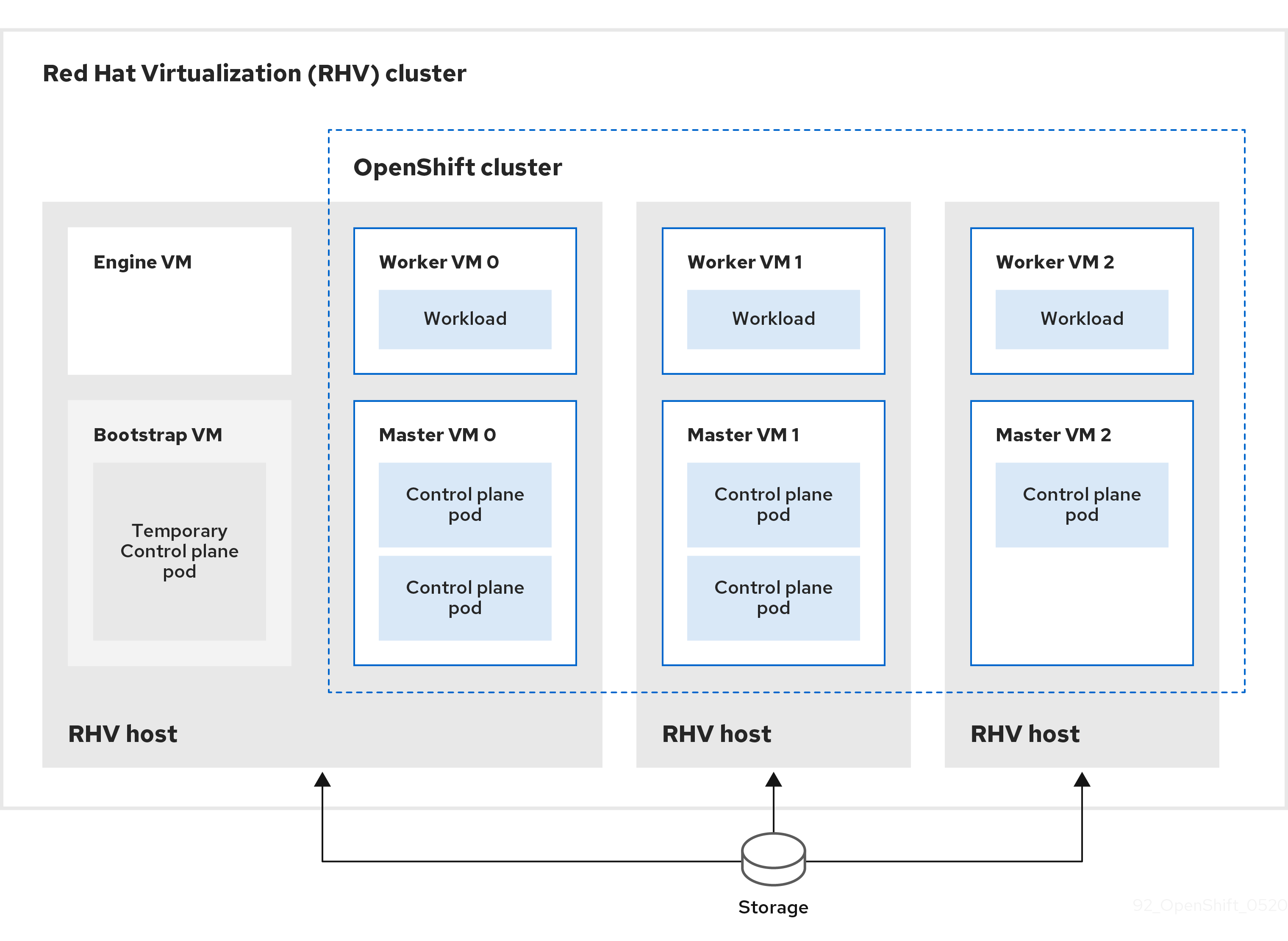

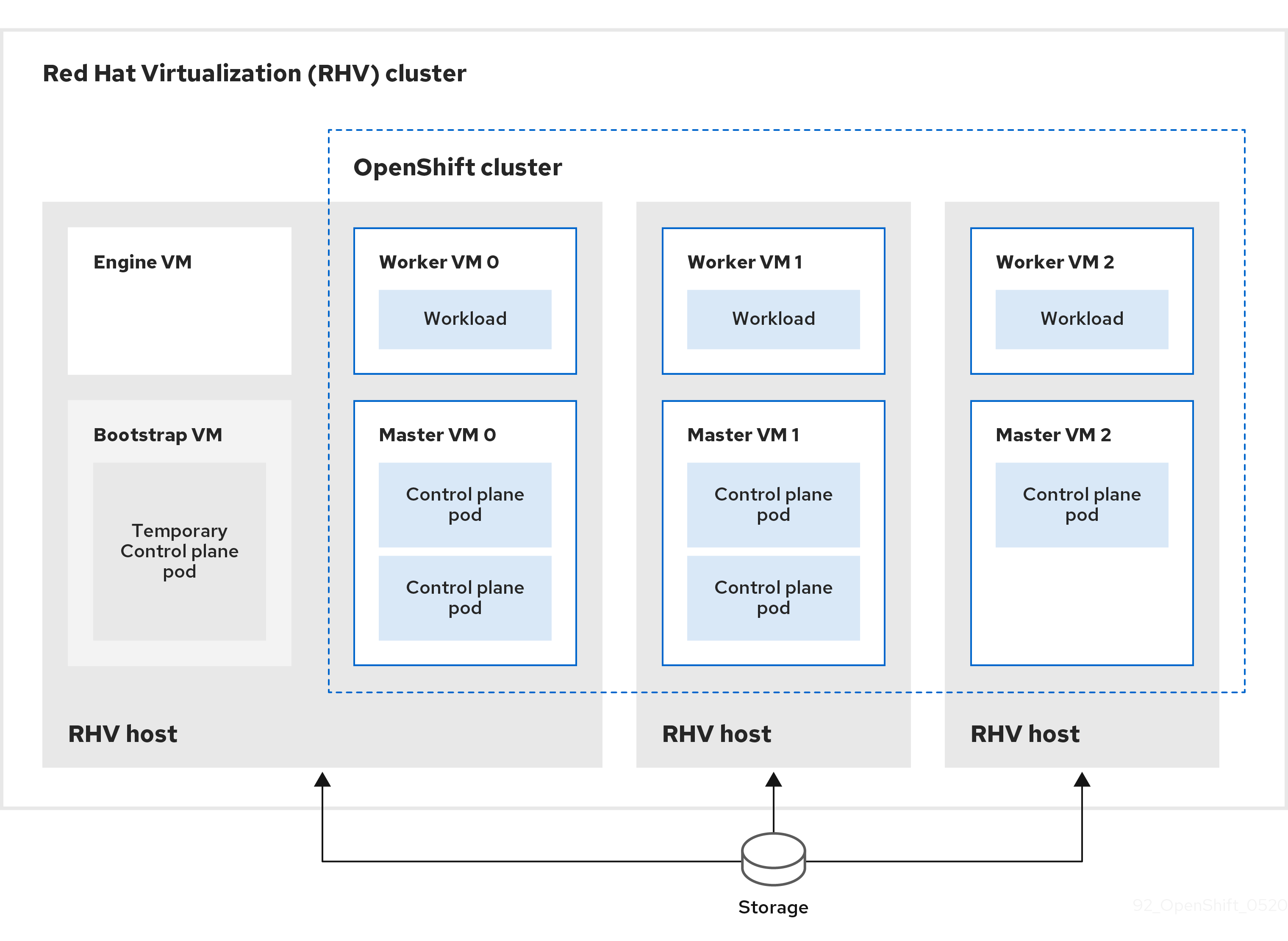

- Red Hat Virtualization (RHV)

- VMware vSphere

- VMware Cloud (VMC) on AWS

- Bare metal

For these clusters, all machines, including the computer that you run the installation process on, must have direct internet access to pull images for platform containers and provide telemetry data to Red Hat.

After installation, the following changes are not supported:

- Mixing cloud provider platforms

- Mixing cloud provider components, such as using a persistent storage framework from a differing platform than what the cluster is installed on

In OpenShift Container Platform 4.8, you can install a cluster that uses user-provisioned infrastructure on the following platforms:

- AWS

- Azure

- GCP

- RHOSP

- RHV

- VMware vSphere

- VMware Cloud on AWS

- Bare metal

- IBM Z or LinuxONE

- IBM Power Systems

Depending on the supported cases for the platform, installations on user-provisioned infrastructure allow you to run machines with full internet access, place your cluster behind a proxy, or perform a restricted network installation. In a restricted network installation, you can download the images that are required to install a cluster, place them in a mirror registry, and use that data to install your cluster. While you require internet access to pull images for platform containers, with a restricted network installation on vSphere or bare metal infrastructure, your cluster machines do not require direct internet access.

The OpenShift Container Platform 4.x Tested Integrations page contains details about integration testing for different platforms.

Chapter 2. Selecting a cluster installation method and preparing it for users

Before you install OpenShift Container Platform, decide what kind of installation process to follow and make sure that you have all of the required resources to prepare the cluster for users.

2.1. Selecting a cluster installation type

Before you install an OpenShift Container Platform cluster, you need to select the best installation instructions to follow. Think about your answers to the following questions to select the best option.

2.1.1. Do you want to install and manage an OpenShift Container Platform cluster yourself?

If you want to install and manage OpenShift Container Platform yourself, you can install it on the following platforms:

- Amazon Web Services (AWS)

- Microsoft Azure

- Google Cloud Platform (GCP)

- Red Hat OpenStack Platform (RHOSP)

- Red Hat Virtualization (RHV)

- IBM Z and LinuxONE

- IBM Z and LinuxONE for Red Hat Enterprise Linux (RHEL) KVM

- IBM Power

- VMware vSphere

- VMware Cloud (VMC) on AWS

- Bare metal or other platform agnostic infrastructure

You can deploy an OpenShift Container Platform 4 cluster to both on-premise hardware and to cloud hosting services, but all of the machines in a cluster must be in the same datacenter or cloud hosting service.

If you want to use OpenShift Container Platform but do not want to manage the cluster yourself, you have several managed service options. If you want a cluster that is fully managed by Red Hat, you can use OpenShift Dedicated or OpenShift Online. You can also use OpenShift as a managed service on Azure, AWS, IBM Cloud, or Google Cloud. For more information about managed services, see the OpenShift Products page.

If you install an OpenShift Container Platform cluster with a cloud virtual machine as a virtual bare metal, the corresponding cloud-based storage is not supported.

2.1.2. Have you used OpenShift Container Platform 3 and want to use OpenShift Container Platform 4?

If you used OpenShift Container Platform 3 and want to try OpenShift Container Platform 4, you need to understand how different OpenShift Container Platform 4 is. OpenShift Container Platform 4 weaves the Operators that package, deploy, and manage Kubernetes applications and the operating system that the platform runs on, Red Hat Enterprise Linux CoreOS (RHCOS), together seamlessly. Instead of deploying machines and configuring their operating systems so that you can install OpenShift Container Platform on them, you deploy the operating system for the cluster machines as part of the installation process, because RHCOS is an integral part of the OpenShift Container Platform cluster. See Comparing OpenShift Container Platform 3 and OpenShift Container Platform 4.

Because you need to provision machines as part of the OpenShift Container Platform cluster installation process, you cannot upgrade an OpenShift Container Platform 3 cluster to OpenShift Container Platform 4. Instead, you must create a new OpenShift Container Platform 4 cluster and migrate your OpenShift Container Platform 3 workloads to it. For more information about migrating, see OpenShift Migration Best Practices. Because you must migrate to OpenShift Container Platform 4, you can use any type of production cluster installation process to create your new cluster.

2.1.3. Do you want to use existing components in your cluster?

Because the operating system is integral to OpenShift Container Platform, it is easier to let the installation program for OpenShift Container Platform stand up all of the infrastructure. These are called installer-provisioned infrastructure installations. In this type of installation, you can provide some existing infrastructure to the cluster, but the installation program deploys all of the machines that your cluster initially needs.

You can deploy an installer-provisioned infrastructure cluster without specifying any customizations to the cluster or its underlying machines to AWS, Azure, GCP, or VMC on AWS. These installation methods are the fastest way to deploy a production-capable OpenShift Container Platform cluster.

If you need to perform basic configuration for your installer-provisioned infrastructure cluster, such as the instance type for the cluster machines, you can customize an installation for AWS, Azure, GCP, or VMC on AWS.

For installer-provisioned infrastructure installations, you can use an existing VPC in AWS, vNet in Azure, or VPC in GCP. You can also reuse part of your networking infrastructure so that your cluster in AWS, Azure, GCP, or VMC on AWS can coexist with existing IP address allocations in your environment and integrate with existing MTU and VXLAN configurations. If you have existing accounts and credentials on these clouds, you can reuse them, but you might need to modify the accounts to have the required permissions to install OpenShift Container Platform clusters on them.

You can use the installer-provisioned infrastructure method to create appropriate machine instances on your hardware for RHOSP, RHOSP with Kuryr, RHV, vSphere, and bare metal.

If you want to reuse extensive cloud infrastructure, you can complete a user-provisioned infrastructure installation. With these installations, you manually deploy the machines that your cluster requires during the installation process. If you perform a user-provisioned infrastructure installation on AWS, Azure, GCP, or VMC on AWS, you can use the provided templates to help you stand up all of the required components. Otherwise, you can use the provider-agnostic installation method to deploy a cluster into other clouds.

You can also complete a user-provisioned infrastructure installation on your existing hardware. If you use RHOSP, RHOSP on SR-IOV, RHV, IBM Z or LinuxONE, IBM Power, or vSphere, use the specific installation instructions to deploy your cluster. If you use other supported hardware, follow the bare metal installation procedure.

2.1.4. Do you need extra security for your cluster?

If you use a user-provisioned installation method, you can configure a proxy for your cluster. The instructions are included in each installation procedure.

If you want to prevent your cluster on a public cloud from exposing endpoints externally, you can deploy a private cluster with installer-provisioned infrastructure on AWS, Azure, or GCP.

If you need to install a cluster that has limited access to the internet, such as a disconnected or restricted network cluster, you can mirror the installation packages and install the cluster from them. Follow detailed instructions for user-provisioned infrastructure installations into restricted networks for AWS, GCP, IBM Z or LinuxONE, IBM Z or LinuxONE with RHEL KVM, IBM Power, vSphere, VMC on AWS, or bare metal. You can also install a cluster into a restricted network using installer-provisioned infrastructure by following detailed instructions for AWS, GCP, VMC on AWS, RHOSP, RHV, and vSphere.

If you need to deploy your cluster to an AWS GovCloud region or Azure government region, you can configure those custom regions during an installer-provisioned infrastructure installation.

You can also configure the cluster machines to use FIPS Validated / Modules in Process cryptographic libraries during installation.

The use of FIPS Validated / Modules in Process cryptographic libraries is only supported on OpenShift Container Platform deployments on the x86_64 architecture.

2.2. Preparing your cluster for users after installation

Some configuration is not required to install the cluster but is recommended before your users access the cluster. You can customize the cluster itself by customizing the Operators that make up your cluster and integrate your cluster with other required systems, such as an identity provider.

For a production cluster, you must configure the following integrations:

2.3. Preparing your cluster for workloads

Depending on your workload needs, you might need to take extra steps before you begin deploying applications. For example, after you prepare infrastructure to support your application build strategy, you might need to make provisions for low-latency workloads or to protect sensitive workloads. You can also configure monitoring for application workloads. If you plan to run Windows workloads, you must enable hybrid networking with OVN-Kubernetes during the installation process; hybrid networking cannot be enabled after your cluster is installed.

2.4. Supported installation methods for different platforms

You can perform different types of installations on different platforms.

Not all installation options are supported for all platforms, as shown in the following tables. A checkmark indicates that the option is supported and links to the relevant section.

| | AWS | Azure | GCP | RHOSP | RHV | Bare metal | vSphere | VMC | IBM Z | IBM Power |
|---|---|---|---|---|---|---|---|---|---|---|
| Default | | | | | | | | | | |
| Custom | | | | | | | | | | |
| Network customization | | | | | | | | | | |
| Restricted network | | | | | | | | | | |
| Private clusters | | | | | | | | | | |
| Existing virtual private networks | | | | | | | | | | |
| Government regions | | | | | | | | | | |

| | AWS | Azure | GCP | RHOSP | RHOSP on SR-IOV | RHV | Bare metal | vSphere | VMC | IBM Z | IBM Z with RHEL KVM | IBM Power | Platform agnostic |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Custom | | | | | | | | | | | | | |
| Network customization | | | | | | | | | | | | | |
| Restricted network | | | | | | | | | | | | | |
| Shared VPC hosted outside of cluster project | | | | | | | | | | | | | |

Chapter 3. Mirroring images for a disconnected installation

You can use the procedures in this section to ensure your clusters only use container images that satisfy your organizational controls on external content. Before you install a cluster on infrastructure that you provision in a restricted network, you must mirror the required container images into that environment. To mirror container images, you must have a registry for mirroring.

You must have access to the internet to obtain the necessary container images. In this procedure, you place your mirror registry on a mirror host that has access to both your network and the internet. If you do not have access to a mirror host, use the Mirroring an Operator catalog procedure to copy images to a device that you can move across network boundaries.

3.1. Prerequisites

You must have a container image registry that supports Docker v2-2 in the location that will host the OpenShift Container Platform cluster, such as one of the following registries:

If you have an entitlement to Red Hat Quay, see the documentation on deploying Red Hat Quay for proof-of-concept purposes or by using the Quay Operator. If you need additional assistance selecting and installing a registry, contact your sales representative or Red Hat support.

- If you do not already have an existing solution for a container image registry, subscribers of OpenShift Container Platform are provided a mirror registry for Red Hat OpenShift. The mirror registry for Red Hat OpenShift is included with your subscription and is a small-scale container registry that can be used to mirror the required container images of OpenShift Container Platform in disconnected installations.

3.2. About the mirror registry

You can mirror the images that are required for OpenShift Container Platform installation and subsequent product updates to a container mirror registry such as Red Hat Quay, JFrog Artifactory, Sonatype Nexus Repository, or Harbor. If you do not have access to a large-scale container registry, you can use the mirror registry for Red Hat OpenShift, a small-scale container registry included with OpenShift Container Platform subscriptions.

You can use any container registry that supports Docker v2-2, such as Red Hat Quay, the mirror registry for Red Hat OpenShift, Artifactory, Sonatype Nexus Repository, or Harbor. Regardless of your chosen registry, the procedure to mirror content from Red Hat hosted sites on the internet to an isolated image registry is the same. After you mirror the content, you configure each cluster to retrieve this content from your mirror registry.

The internal registry of the OpenShift Container Platform cluster cannot be used as the target registry because it does not support pushing without a tag, which is required during the mirroring process.

If you choose a container registry that is not the mirror registry for Red Hat OpenShift, it must be reachable by every machine in the clusters that you provision. If the registry is unreachable, installation, updating, or normal operations such as workload relocation might fail. For that reason, you must run mirror registries in a highly available way, and the mirror registries must at least match the production availability of your OpenShift Container Platform clusters.

When you populate your mirror registry with OpenShift Container Platform images, you can follow two scenarios. If you have a host that can access both the internet and your mirror registry, but not your cluster nodes, you can directly mirror the content from that machine. This process is referred to as connected mirroring. If you have no such host, you must mirror the images to a file system and then bring that host or removable media into your restricted environment. This process is referred to as disconnected mirroring.

For mirrored registries, to view the source of pulled images, you must review the Trying to access log entry in the CRI-O logs. Other methods to view the image pull source, such as using the crictl images command on a node, show the non-mirrored image name, even though the image is pulled from the mirrored location.

Red Hat does not test third party registries with OpenShift Container Platform.

Additional information

For information on viewing the CRI-O logs to view the image source, see Viewing the image pull source.

3.3. Preparing your mirror host

Before you perform the mirror procedure, you must prepare the host to retrieve content and push it to the remote location.

3.3.1. Installing the OpenShift CLI by downloading the binary

You can install the OpenShift CLI (oc) to interact with OpenShift Container Platform from a command-line interface. You can install oc on Linux, Windows, or macOS.

If you installed an earlier version of oc, you cannot use it to complete all of the commands in OpenShift Container Platform 4.8. Download and install the new version of oc.

Installing the OpenShift CLI on Linux

You can install the OpenShift CLI (oc) binary on Linux by using the following procedure.

Procedure

- Navigate to the OpenShift Container Platform downloads page on the Red Hat Customer Portal.

- Select the appropriate version in the Version drop-down menu.

- Click Download Now next to the OpenShift v4.8 Linux Client entry and save the file.

- Unpack the archive:

$ tar xvzf <file>

- Place the oc binary in a directory that is on your PATH.

To check your PATH, execute the following command:

$ echo $PATH
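Instead of reading the `echo $PATH` output by eye, you can test whether a specific directory is on your PATH. The following is a minimal sketch. The function name `dir_on_path` is hypothetical, and the comparison is an exact string match, so a trailing slash or a symbolic link counts as a different directory.

```shell
#!/usr/bin/env bash
# Sketch only: check whether a directory appears in a colon-separated path list.

# Usage: dir_on_path <directory> [path_list]
# The path list defaults to the current value of PATH.
dir_on_path() {
  local dir="$1"
  local list="${2:-$PATH}"
  # Wrap the list in colons so that every entry, including the first and
  # last, is delimited on both sides and partial matches are ruled out.
  case ":$list:" in
    *":$dir:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example usage (the directory is a placeholder):
#   dir_on_path /usr/local/bin && echo "on PATH" || echo "not on PATH"
```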

After you install the OpenShift CLI, it is available using the oc command:

$ oc <command>

Installing the OpenShift CLI on Windows

You can install the OpenShift CLI (oc) binary on Windows by using the following procedure.

Procedure

- Navigate to the OpenShift Container Platform downloads page on the Red Hat Customer Portal.

- Select the appropriate version in the Version drop-down menu.

- Click Download Now next to the OpenShift v4.8 Windows Client entry and save the file.

- Unzip the archive with a ZIP program.

- Move the oc binary to a directory that is on your PATH.

To check your PATH, open the command prompt and execute the following command:

C:\> path

After you install the OpenShift CLI, it is available using the oc command:

C:\> oc <command>

Installing the OpenShift CLI on macOS

You can install the OpenShift CLI (oc) binary on macOS by using the following procedure.

Procedure

- Navigate to the OpenShift Container Platform downloads page on the Red Hat Customer Portal.

- Select the appropriate version in the Version drop-down menu.

- Click Download Now next to the OpenShift v4.8 MacOSX Client entry and save the file.

- Unpack and unzip the archive.

- Move the oc binary to a directory on your PATH.

To check your PATH, open a terminal and execute the following command:

$ echo $PATH

After you install the OpenShift CLI, it is available using the oc command:

$ oc <command>

3.4. Configuring credentials that allow images to be mirrored

Create a container image registry credentials file that allows mirroring images from Red Hat to your mirror.

Do not use this image registry credentials file as the pull secret when you install a cluster. If you provide this file when you install a cluster, all of the machines in the cluster will have write access to your mirror registry.

This process requires that you have write access to a container image registry on the mirror registry and adds the credentials to a registry pull secret.

Prerequisites

- You configured a mirror registry to use in your disconnected environment.

- You identified an image repository location on your mirror registry to mirror images into.

- You provisioned a mirror registry account that allows images to be uploaded to that image repository.

Procedure

Complete the following steps on the installation host:

- Download your registry.redhat.io pull secret from the Red Hat OpenShift Cluster Manager and save it to a .json file.

- Generate the base64-encoded user name and password or token for your mirror registry:

$ echo -n '<user_name>:<password>' | base64 -w0
BGVtbYk3ZHAtqXs=

For <user_name> and <password>, specify the user name and password that you configured for your registry.

- Make a copy of your pull secret in JSON format:

$ cat ./pull-secret.text | jq . > <path>/<pull_secret_file_in_json>

For <path>/<pull_secret_file_in_json>, specify the path to the folder to store the pull secret in and a name for the JSON file that you create.

- Save the file either as ~/.docker/config.json or $XDG_RUNTIME_DIR/containers/auth.json.

- Edit the new file and add a section that describes your registry to it.
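The sketch below shows one way to produce the section for your mirror registry. It assumes the standard container auth file layout, in which a top-level auths object is keyed by registry host and each entry carries a base64-encoded auth value. The registry name, user name, and password are hypothetical placeholders.

```shell
# Hypothetical values for illustration only; substitute your own.
mirror_registry='mirror.example.com:8443'
user_name='myuser'
password='mypassword'

# Encode "<user_name>:<password>" without a trailing newline.
# tr removes any line wrapping, so this also works where base64 has no -w0 option.
auth=$(printf '%s:%s' "$user_name" "$password" | base64 | tr -d '\n')

# Build the entry to add under the top-level "auths" key of the pull secret file.
registry_entry=$(printf '"%s": {\n  "auth": "%s"\n}' "$mirror_registry" "$auth")
printf '%s\n' "$registry_entry"
```

Paste the printed entry alongside the existing registry entries inside auths, and add a comma between it and its neighbor so that the file remains valid JSON.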

3.5. Mirror registry for Red Hat OpenShift

The mirror registry for Red Hat OpenShift is a small and streamlined container registry that you can use as a target for mirroring the required container images of OpenShift Container Platform for disconnected installations.

If you already have a container image registry, such as Red Hat Quay, you can skip these steps and go straight to Mirroring the OpenShift Container Platform image repository.

Prerequisites

- An OpenShift Container Platform subscription.

- Red Hat Enterprise Linux (RHEL) 8 with Podman 3.3 and OpenSSL installed.

- Fully qualified domain name for the Red Hat Quay service, which must resolve through a DNS server.

- Passwordless sudo access on the target host.

- Key-based SSH connectivity on the target host. SSH keys are automatically generated for local installs. For remote hosts, you must generate your own SSH keys.

- 2 or more vCPUs.

- 8 GB of RAM.

- About 8.7 GB for OpenShift Container Platform 4.8 Release images, or about 668 GB for OpenShift Container Platform 4.8 Release images and OpenShift Container Platform 4.8 Red Hat Operator images. Up to 1 TB per stream or more is suggested.

Important

These requirements are based on local testing results with only Release images and Operator images tested. Storage requirements can vary based on your organization’s needs. Some users might require more space, for example, when they mirror multiple z-streams. You can use standard Red Hat Quay functionality to remove unnecessary images and free up space.

3.5.1. Mirror registry for Red Hat OpenShift introduction

For disconnected deployments of OpenShift Container Platform, a container registry is required to carry out the installation of the clusters. To run a production-grade registry service on such a cluster, you must create a separate registry deployment to install the first cluster. The mirror registry for Red Hat OpenShift addresses this need and is included in every OpenShift subscription. It is available for download on the OpenShift console Downloads page.

The mirror registry for Red Hat OpenShift allows users to install a small-scale version of Red Hat Quay and its required components using the mirror-registry command line interface (CLI) tool. The mirror registry for Red Hat OpenShift is deployed automatically with pre-configured local storage and a local database. It also includes auto-generated user credentials and access permissions with a single set of inputs and no additional configuration choices to get started.

The mirror registry for Red Hat OpenShift provides a pre-determined network configuration and reports deployed component credentials and access URLs upon success. A limited set of optional configuration inputs like fully qualified domain name (FQDN) services, superuser name and password, and custom TLS certificates are also provided. This provides users with a container registry so that they can easily create an offline mirror of all OpenShift Container Platform release content when running OpenShift Container Platform in restricted network environments.

The mirror registry for Red Hat OpenShift is limited to hosting images that are required to install a disconnected OpenShift Container Platform cluster, such as Release images or Red Hat Operator images. It uses local storage on your Red Hat Enterprise Linux (RHEL) machine, and storage supported by RHEL is supported by the mirror registry for Red Hat OpenShift. Content built by customers should not be hosted by the mirror registry for Red Hat OpenShift.

Unlike Red Hat Quay, the mirror registry for Red Hat OpenShift is not a highly available registry, and only local file system storage is supported. Using the mirror registry for Red Hat OpenShift with more than one cluster is discouraged, because multiple clusters can create a single point of failure when updating your cluster fleet. Instead, use the mirror registry for Red Hat OpenShift to install a cluster that can host a production-grade, highly available registry such as Red Hat Quay, which can serve OpenShift Container Platform content to other clusters.

Use of the mirror registry for Red Hat OpenShift is optional if another container registry is already available in the install environment.

3.5.2. Mirroring on a local host with mirror registry for Red Hat OpenShift

This procedure explains how to install the mirror registry for Red Hat OpenShift on a local host using the mirror-registry installer tool. By doing so, users can create a local host registry running on port 443 for the purpose of storing a mirror of OpenShift Container Platform images.

Installing the mirror registry for Red Hat OpenShift using the mirror-registry CLI tool makes several changes to your machine. After installation, a /etc/quay-install directory is created, which has installation files, local storage, and the configuration bundle. Trusted SSH keys are generated in case the deployment target is the local host, and systemd files on the host machine are set up to ensure that container runtimes are persistent. Additionally, an initial user named init is created with an automatically generated password. All access credentials are printed at the end of the install routine.

Procedure

- Download the mirror-registry.tar.gz package for the latest version of the mirror registry for Red Hat OpenShift found on the OpenShift console Downloads page.

- Install the mirror registry for Red Hat OpenShift on your local host with your current user account by using the mirror-registry tool. For a full list of available flags, see "mirror registry for Red Hat OpenShift flags".

$ sudo ./mirror-registry install \
  --quayHostname <host_example_com> \
  --quayRoot <example_directory_name>

- Use the user name and password generated during installation to log in to the registry by running the following command:

$ podman login --authfile pull-secret.txt \
  -u init \
  -p <password> \
  <host_example_com>:8443 \
  --tls-verify=false

You can avoid running --tls-verify=false by configuring your system to trust the generated rootCA certificates. See "Using SSL to protect connections to Red Hat Quay" and "Configuring the system to trust the certificate authority" for more information.

Note

You can also log in by accessing the UI at https://<host.example.com>:8443 after installation.

- You can mirror OpenShift Container Platform images after logging in. Depending on your needs, see either the "Mirroring the OpenShift Container Platform image repository" or the "Mirroring an Operator catalog" sections of this document.

Note

If there are issues with images stored by the mirror registry for Red Hat OpenShift due to storage layer problems, you can remirror the OpenShift Container Platform images, or reinstall the mirror registry on more stable storage.

3.5.3. Mirroring on a remote host with mirror registry for Red Hat OpenShift

This procedure explains how to install the mirror registry for Red Hat OpenShift on a remote host using the mirror-registry tool. By doing so, users can create a registry to hold a mirror of OpenShift Container Platform images.

Installing the mirror registry for Red Hat OpenShift using the mirror-registry CLI tool makes several changes to your machine. After installation, a /etc/quay-install directory is created, which has installation files, local storage, and the configuration bundle. Trusted SSH keys are generated in case the deployment target is the local host, and systemd files on the host machine are set up to ensure that container runtimes are persistent. Additionally, an initial user named init is created with an automatically generated password. All access credentials are printed at the end of the install routine.

Procedure

- Download the mirror-registry.tar.gz package for the latest version of the mirror registry for Red Hat OpenShift found on the OpenShift console Downloads page.

- Install the mirror registry for Red Hat OpenShift on your local host with your current user account by using the mirror-registry tool. For a full list of available flags, see "mirror registry for Red Hat OpenShift flags".

- Use the user name and password generated during installation to log in to the mirror registry by running the following command:

$ podman login --authfile pull-secret.txt \
  -u init \
  -p <password> \
  <host_example_com>:8443 \
  --tls-verify=false

You can avoid running --tls-verify=false by configuring your system to trust the generated rootCA certificates. See "Using SSL to protect connections to Red Hat Quay" and "Configuring the system to trust the certificate authority" for more information.

Note

You can also log in by accessing the UI at https://<host.example.com>:8443 after installation.

- You can mirror OpenShift Container Platform images after logging in. Depending on your needs, see either the "Mirroring the OpenShift Container Platform image repository" or the "Mirroring an Operator catalog" sections of this document.

Note

If there are issues with images stored by the mirror registry for Red Hat OpenShift due to storage layer problems, you can remirror the OpenShift Container Platform images, or reinstall the mirror registry on more stable storage.

3.6. Upgrading the mirror registry for Red Hat OpenShift

You can upgrade the mirror registry for Red Hat OpenShift from your local host by running the following command:

$ sudo ./mirror-registry upgrade

Note

- Users who upgrade the mirror registry for Red Hat OpenShift with the ./mirror-registry upgrade command must include the same flags that they used when creating their mirror registry. For example, if you installed the mirror registry for Red Hat OpenShift with --quayHostname <host_example_com> and --quayRoot <example_directory_name>, you must include those flags to properly upgrade the mirror registry.
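One way to keep the install and upgrade invocations consistent is to define the shared flags once and reuse them. The sketch below only prints the resulting command lines and does not run mirror-registry. The host name and directory are hypothetical sample values.

```shell
# Hypothetical values; use the same ones that you passed at install time.
registry_flags='--quayHostname mirror.example.com --quayRoot /opt/quay-install'

# Both commands are built from the same flag string, so they cannot drift apart.
install_cmd="sudo ./mirror-registry install ${registry_flags}"
upgrade_cmd="sudo ./mirror-registry upgrade ${registry_flags}"

printf '%s\n' "$install_cmd" "$upgrade_cmd"
```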

3.6.1. Uninstalling the mirror registry for Red Hat OpenShift

You can uninstall the mirror registry for Red Hat OpenShift from your local host by running the following command:

$ sudo ./mirror-registry uninstall -v \
  --quayRoot <example_directory_name>

Note

- Deleting the mirror registry for Red Hat OpenShift will prompt the user before deletion. You can use --autoApprove to skip this prompt.

- Users who install the mirror registry for Red Hat OpenShift with the --quayRoot flag must include the --quayRoot flag when uninstalling. For example, if you installed the mirror registry for Red Hat OpenShift with --quayRoot example_directory_name, you must include that string to properly uninstall the mirror registry.

3.6.2. Mirror registry for Red Hat OpenShift flags

The following flags are available for the mirror registry for Red Hat OpenShift:

| Flags | Description |
|---|---|
| --autoApprove | A boolean value that disables interactive prompts. If set to |
| | The password of the init user created during Quay installation. Must be at least eight characters and contain no whitespace. |
| | Shows the username of the initial user. Defaults to |
| --quayHostname | The fully-qualified domain name of the mirror registry that clients will use to contact the registry. Equivalent to |
| --quayRoot | The directory where container image layer and configuration data is saved, including |
| | The path of your SSH identity key. Defaults to |
| | The path to the SSL/TLS public key / certificate. Defaults to |
| --sslCheckSkip | Skips the check for the certificate hostname against the |
| | The path to the SSL/TLS private key used for HTTPS communication. Defaults to |
| | The hostname of the target you want to install Quay to. Defaults to |
| | The user on the target host which will be used for SSH. Defaults to |
| | Shows debug logs and Ansible playbook outputs. |
| | Shows the version for the mirror registry for Red Hat OpenShift. |

- --quayHostname must be modified if the public DNS name of your system is different from the local hostname.

- --sslCheckSkip is used in cases when the mirror registry is set behind a proxy and the exposed hostname is different from the internal Quay hostname. It can also be used when users do not want the certificates to be validated against the provided Quay hostname during installation.

3.7. Mirroring the OpenShift Container Platform image repository

Mirror the OpenShift Container Platform image repository to your registry to use during cluster installation or upgrade.

Prerequisites

- Your mirror host has access to the internet.

- You configured a mirror registry to use in your restricted network and can access the certificate and credentials that you configured.

- You downloaded the pull secret from the Red Hat OpenShift Cluster Manager and modified it to include authentication to your mirror repository.

If you use self-signed certificates that do not set a Subject Alternative Name, you must precede the oc commands in this procedure with GODEBUG=x509ignoreCN=0. If you do not set this variable, the oc commands will fail with the following error:

x509: certificate relies on legacy Common Name field, use SANs or temporarily enable Common Name matching with GODEBUG=x509ignoreCN=0
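As a sketch of what preceding a command with the variable means in a POSIX shell: a NAME=value prefix sets the variable only in the environment of that single command. The example uses sh -c as a stand-in for oc so that it runs without a cluster. The stand-in is an assumption made purely for illustration.

```shell
# Start from a clean state so that the comparison is meaningful.
unset GODEBUG

# With the prefix, the child process sees the variable.
with_prefix=$(GODEBUG=x509ignoreCN=0 sh -c 'printf "%s" "${GODEBUG:-unset}"')

# Without the prefix, the next command does not inherit it.
without_prefix=$(sh -c 'printf "%s" "${GODEBUG:-unset}"')

printf 'with prefix: %s\nwithout prefix: %s\n' "$with_prefix" "$without_prefix"
```

In this procedure, the same prefix goes in front of each oc invocation, for example GODEBUG=x509ignoreCN=0 oc adm release mirror followed by the arguments shown in the steps.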

Procedure

Complete the following steps on the mirror host:

- Review the OpenShift Container Platform downloads page to determine the version of OpenShift Container Platform that you want to install and determine the corresponding tag on the Repository Tags page.

- Set the required environment variables:

  - Export the release version:

$ OCP_RELEASE=<release_version>

For <release_version>, specify the tag that corresponds to the version of OpenShift Container Platform to install, such as 4.5.4.

  - Export the local registry name and host port:

$ LOCAL_REGISTRY='<local_registry_host_name>:<local_registry_host_port>'

For <local_registry_host_name>, specify the registry domain name for your mirror repository, and for <local_registry_host_port>, specify the port that it serves content on.

  - Export the local repository name:

$ LOCAL_REPOSITORY='<local_repository_name>'

For <local_repository_name>, specify the name of the repository to create in your registry, such as ocp4/openshift4.

  - Export the name of the repository to mirror:

$ PRODUCT_REPO='openshift-release-dev'

For a production release, you must specify openshift-release-dev.

  - Export the path to your registry pull secret:

$ LOCAL_SECRET_JSON='<path_to_pull_secret>'

For <path_to_pull_secret>, specify the absolute path to and file name of the pull secret for your mirror registry that you created.

  - Export the release mirror:

$ RELEASE_NAME="ocp-release"

For a production release, you must specify ocp-release.

  - Export the type of architecture for your server, such as x86_64:

$ ARCHITECTURE=<server_architecture>

  - Export the path to the directory to host the mirrored images:

$ REMOVABLE_MEDIA_PATH=<path>

For <path>, specify the full path, including the initial forward slash (/) character.
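To see how these variables combine in the mirroring commands, the following sketch expands them into the source and destination image references and prints the result. All values are hypothetical samples. Nothing is pulled or pushed.

```shell
# Hypothetical sample values; substitute the values for your environment.
OCP_RELEASE='4.8.2'
LOCAL_REGISTRY='mirror.example.com:8443'
LOCAL_REPOSITORY='ocp4/openshift4'
PRODUCT_REPO='openshift-release-dev'
RELEASE_NAME='ocp-release'
ARCHITECTURE='x86_64'

# Source release image that the mirror commands read from.
source_image="quay.io/${PRODUCT_REPO}/${RELEASE_NAME}:${OCP_RELEASE}-${ARCHITECTURE}"

# Destination repository and release image tag in the mirror registry.
target_repo="${LOCAL_REGISTRY}/${LOCAL_REPOSITORY}"
target_release_image="${target_repo}:${OCP_RELEASE}-${ARCHITECTURE}"

printf 'from: %s\n' "$source_image"
printf 'to: %s\n' "$target_repo"
printf 'to-release-image: %s\n' "$target_release_image"
```

Printing the expanded references before you run the mirror commands is a quick way to catch an unset or mistyped variable.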

- Mirror the version images to the mirror registry:

  - If your mirror host does not have internet access, take the following actions:

    - Connect the removable media to a system that is connected to the internet.

    - Review the images and configuration manifests to mirror:

$ oc adm release mirror -a ${LOCAL_SECRET_JSON} \
  --from=quay.io/${PRODUCT_REPO}/${RELEASE_NAME}:${OCP_RELEASE}-${ARCHITECTURE} \
  --to=${LOCAL_REGISTRY}/${LOCAL_REPOSITORY} \
  --to-release-image=${LOCAL_REGISTRY}/${LOCAL_REPOSITORY}:${OCP_RELEASE}-${ARCHITECTURE} --dry-run

    - Record the entire imageContentSources section from the output of the previous command. The information about your mirrors is unique to your mirrored repository, and you must add the imageContentSources section to the install-config.yaml file during installation.

    - Mirror the images to a directory on the removable media:

$ oc adm release mirror -a ${LOCAL_SECRET_JSON} --to-dir=${REMOVABLE_MEDIA_PATH}/mirror quay.io/${PRODUCT_REPO}/${RELEASE_NAME}:${OCP_RELEASE}-${ARCHITECTURE}

    - Take the media to the restricted network environment and upload the images to the local container registry:

$ oc image mirror -a ${LOCAL_SECRET_JSON} --from-dir=${REMOVABLE_MEDIA_PATH}/mirror "file://openshift/release:${OCP_RELEASE}*" ${LOCAL_REGISTRY}/${LOCAL_REPOSITORY}

For REMOVABLE_MEDIA_PATH, you must use the same path that you specified when you mirrored the images.

  - If the local container registry is connected to the mirror host, take the following actions:

    - Directly push the release images to the local registry by using the following command:

$ oc adm release mirror -a ${LOCAL_SECRET_JSON} \
  --from=quay.io/${PRODUCT_REPO}/${RELEASE_NAME}:${OCP_RELEASE}-${ARCHITECTURE} \
  --to=${LOCAL_REGISTRY}/${LOCAL_REPOSITORY} \
  --to-release-image=${LOCAL_REGISTRY}/${LOCAL_REPOSITORY}:${OCP_RELEASE}-${ARCHITECTURE}

This command pulls the release information as a digest, and its output includes the imageContentSources data that you require when you install your cluster.

    - Record the entire imageContentSources section from the output of the previous command. The information about your mirrors is unique to your mirrored repository, and you must add the imageContentSources section to the install-config.yaml file during installation.

Note

The image name gets patched to Quay.io during the mirroring process, and the podman images will show Quay.io in the registry on the bootstrap virtual machine.

- To create the installation program that is based on the content that you mirrored, extract it and pin it to the release:

  - If your mirror host does not have internet access, run the following command:

$ oc adm release extract -a ${LOCAL_SECRET_JSON} --command=openshift-install "${LOCAL_REGISTRY}/${LOCAL_REPOSITORY}:${OCP_RELEASE}"

  - If the local container registry is connected to the mirror host, run the following command:

$ oc adm release extract -a ${LOCAL_SECRET_JSON} --command=openshift-install "${LOCAL_REGISTRY}/${LOCAL_REPOSITORY}:${OCP_RELEASE}-${ARCHITECTURE}"

Important

To ensure that you use the correct images for the version of OpenShift Container Platform that you selected, you must extract the installation program from the mirrored content.

You must perform this step on a machine with an active internet connection.

If you are in a disconnected environment, use the --image flag as part of must-gather and point to the payload image.

- For clusters using installer-provisioned infrastructure, run the following command:

$ openshift-install

3.8. The Cluster Samples Operator in a disconnected environment

In a disconnected environment, you must take additional steps after you install a cluster to configure the Cluster Samples Operator. Review the following information in preparation.

3.8.1. Cluster Samples Operator assistance for mirroring

During installation, OpenShift Container Platform creates a config map named imagestreamtag-to-image in the openshift-cluster-samples-operator namespace. The imagestreamtag-to-image config map contains an entry, the populating image, for each image stream tag.

The format of the key for each entry in the data field in the config map is <image_stream_name>_<image_stream_tag_name>.

During a disconnected installation of OpenShift Container Platform, the status of the Cluster Samples Operator is set to Removed. If you choose to change it to Managed, it installs samples.

The use of samples in a network-restricted or disconnected environment may require access to services external to your network. Some example services include GitHub, Maven Central, npm, RubyGems, PyPI, and others. There might be additional steps to take that allow the Cluster Samples Operator's objects to reach the services they require.

You can use this config map as a reference for which images need to be mirrored for your image streams to import.

- While the Cluster Samples Operator is set to Removed, you can create your mirrored registry, or determine which existing mirrored registry you want to use.

- Mirror the samples you want to the mirrored registry using the new config map as your guide.

- Add any of the image streams you did not mirror to the skippedImagestreams list of the Cluster Samples Operator configuration object.

- Set samplesRegistry of the Cluster Samples Operator configuration object to the mirrored registry.

- Then set the Cluster Samples Operator to Managed to install the image streams you have mirrored.
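When you use the config map as a guide, it can help to split each key back into its image stream name and tag name. The sketch below assumes the <image_stream_name>_<image_stream_tag_name> key format described earlier and assumes that image stream names contain no underscore. The sample key is hypothetical.

```shell
# Hypothetical key from the data field of the imagestreamtag-to-image config map.
key='ruby_2.7'

# Split at the first underscore: the image stream name comes before it,
# the image stream tag name after it.
image_stream_name="${key%%_*}"
image_stream_tag_name="${key#*_}"

printf 'image stream: %s, tag: %s\n' "$image_stream_name" "$image_stream_tag_name"
```

Splitting at the first underscore rather than the last keeps tag names that contain underscores intact.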

3.9. Next steps

- Mirror the OperatorHub images for the Operators that you want to install in your cluster.

- Install a cluster on infrastructure that you provision in your restricted network, such as on VMware vSphere, bare metal, or Amazon Web Services.

Chapter 4. Installing on AWS

4.1. Preparing to install on AWS

4.1.1. Prerequisites

- You reviewed details about the OpenShift Container Platform installation and update processes.

- You read the documentation on selecting a cluster installation method and preparing it for users.

4.1.2. Requirements for installing OpenShift Container Platform on AWS

Before installing OpenShift Container Platform on Amazon Web Services (AWS), you must create an AWS account. See Configuring an AWS account for details about configuring an account, account limits, account permissions, IAM user setup, and supported AWS regions.

If the cloud identity and access management (IAM) APIs are not accessible in your environment, or if you do not want to store an administrator-level credential secret in the kube-system namespace, see Manually creating IAM for AWS for other options, including configuring the Cloud Credential Operator (CCO) to use the Amazon Web Services Security Token Service (AWS STS).

4.1.3. Choosing a method to install OpenShift Container Platform on AWS

You can install OpenShift Container Platform on installer-provisioned or user-provisioned infrastructure. The default installation type uses installer-provisioned infrastructure, where the installation program provisions the underlying infrastructure for the cluster. You can also install OpenShift Container Platform on infrastructure that you provision. If you do not use infrastructure that the installation program provisions, you must manage and maintain the cluster resources yourself.

See Installation process for more information about installer-provisioned and user-provisioned installation processes.

4.1.3.1. Installing a cluster on installer-provisioned infrastructure

You can install a cluster on AWS infrastructure that is provisioned by the OpenShift Container Platform installation program, by using one of the following methods:

- Installing a cluster quickly on AWS: You can install OpenShift Container Platform on AWS infrastructure that is provisioned by the OpenShift Container Platform installation program. You can install a cluster quickly by using the default configuration options.

- Installing a customized cluster on AWS: You can install a customized cluster on AWS infrastructure that the installation program provisions. The installation program allows for some customization to be applied at the installation stage. Many other customization options are available post-installation.

- Installing a cluster on AWS with network customizations: You can customize your OpenShift Container Platform network configuration during installation, so that your cluster can coexist with your existing IP address allocations and adhere to your network requirements.

- Installing a cluster on AWS in a restricted network: You can install OpenShift Container Platform on AWS on installer-provisioned infrastructure by using an internal mirror of the installation release content. You can use this method to install a cluster that does not require an active internet connection to obtain the software components.

- Installing a cluster on an existing Virtual Private Cloud: You can install OpenShift Container Platform on an existing AWS Virtual Private Cloud (VPC). You can use this installation method if you have constraints set by the guidelines of your company, such as limits when creating new accounts or infrastructure.

- Installing a private cluster on an existing VPC: You can install a private cluster on an existing AWS VPC. You can use this method to deploy OpenShift Container Platform on an internal network that is not visible to the internet.

- Installing a cluster on AWS into a government or secret region: OpenShift Container Platform can be deployed into AWS regions that are specifically designed for US government agencies at the federal, state, and local level, as well as contractors, educational institutions, and other US customers that must run sensitive workloads in the cloud.

4.1.3.2. Installing a cluster on user-provisioned infrastructure

You can install a cluster on AWS infrastructure that you provision, by using one of the following methods:

- Installing a cluster on AWS infrastructure that you provide: You can install OpenShift Container Platform on AWS infrastructure that you provide. You can use the provided CloudFormation templates to create stacks of AWS resources that represent each of the components required for an OpenShift Container Platform installation.

- Installing a cluster on AWS in a restricted network with user-provisioned infrastructure: You can install OpenShift Container Platform on AWS infrastructure that you provide by using an internal mirror of the installation release content. You can use this method to install a cluster that does not require an active internet connection to obtain the software components. You can also use this installation method to ensure that your clusters only use container images that satisfy your organizational controls on external content. While you can install OpenShift Container Platform by using the mirrored content, your cluster still requires internet access to use the AWS APIs.

4.1.4. Next steps

4.2. Configuring an AWS account

Before you can install OpenShift Container Platform, you must configure an Amazon Web Services (AWS) account.

4.2.1. Configuring Route 53

To install OpenShift Container Platform, the Amazon Web Services (AWS) account you use must have a dedicated public hosted zone in your Route 53 service. This zone must be authoritative for the domain. The Route 53 service provides cluster DNS resolution and name lookup for external connections to the cluster.

Procedure

- Identify your domain, or subdomain, and registrar. You can transfer an existing domain and registrar or obtain a new one through AWS or another source.

  Note: If you purchase a new domain through AWS, it takes time for the relevant DNS changes to propagate. For more information about purchasing domains through AWS, see Registering Domain Names Using Amazon Route 53 in the AWS documentation.

- If you are using an existing domain and registrar, migrate its DNS to AWS. See Making Amazon Route 53 the DNS Service for an Existing Domain in the AWS documentation.

- Create a public hosted zone for your domain or subdomain. See Creating a Public Hosted Zone in the AWS documentation. Use an appropriate root domain, such as openshiftcorp.com, or subdomain, such as clusters.openshiftcorp.com.

- Extract the new authoritative name servers from the hosted zone records. See Getting the Name Servers for a Public Hosted Zone in the AWS documentation.

- Update the registrar records for the AWS Route 53 name servers that your domain uses. For example, if you registered your domain to a Route 53 service in a different account, see the following topic in the AWS documentation: Adding or Changing Name Servers or Glue Records.

- If you are using a subdomain, add its delegation records to the parent domain. This gives Amazon Route 53 responsibility for the subdomain. Follow the delegation procedure outlined by the DNS provider of the parent domain. See Creating a subdomain that uses Amazon Route 53 as the DNS service without migrating the parent domain in the AWS documentation for an example high-level procedure.
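After you update the registrar or parent-domain records, it can help to confirm that they list the same name servers as the hosted zone. The following is a minimal sketch of that comparison only. The function names and the sample name servers are hypothetical, and how you obtain the two lists (for example, from the Route 53 console and from your registrar) is left out.

```python
def normalize_ns(name: str) -> str:
    """Lower-case a name server host name and drop the trailing root dot."""
    return name.strip().lower().rstrip(".")


def delegation_matches(zone_name_servers, registrar_name_servers) -> bool:
    """Return True when both sides list exactly the same name servers."""
    zone = {normalize_ns(ns) for ns in zone_name_servers}
    registrar = {normalize_ns(ns) for ns in registrar_name_servers}
    # An empty hosted-zone list is treated as a mismatch, not a match.
    return bool(zone) and zone == registrar


# Hypothetical values for illustration; these are not real Route 53 name servers.
zone_ns = ["ns-1.example-dns.org.", "ns-2.example-dns.net."]
registrar_ns = ["NS-2.example-dns.net", "ns-1.example-dns.org"]
print(delegation_matches(zone_ns, registrar_ns))
```

The comparison ignores case, ordering, and the trailing dot, because registrars and DNS tools often differ on those details while referring to the same servers.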

4.2.1.1. Ingress Operator endpoint configuration for AWS Route 53

If you install in either the Amazon Web Services (AWS) GovCloud (US) US-West or US-East region, the Ingress Operator uses the us-gov-west-1 region for Route 53 and tagging API clients.

The Ingress Operator uses https://tagging.us-gov-west-1.amazonaws.com as the tagging API endpoint if a tagging custom endpoint is configured that includes the string 'us-gov-east-1'.
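The endpoint rule above can be sketched as a small function. This is an illustrative model of the documented behavior, not the Ingress Operator's own code. The function name is hypothetical, and returning the configured endpoint unchanged in every other case is an assumption.

```python
GOV_WEST_TAGGING = "https://tagging.us-gov-west-1.amazonaws.com"


def effective_tagging_endpoint(custom_endpoint):
    """Model the documented tagging endpoint rule.

    custom_endpoint is the configured tagging URL, or None when none is set.
    """
    # Documented rule: a custom tagging endpoint that mentions us-gov-east-1
    # is replaced by the us-gov-west-1 tagging endpoint.
    if custom_endpoint and "us-gov-east-1" in custom_endpoint:
        return GOV_WEST_TAGGING
    # Assumption: any other value, including None, is passed through as-is.
    return custom_endpoint


print(effective_tagging_endpoint("https://tagging.us-gov-east-1.amazonaws.com"))
```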

For more information on AWS GovCloud (US) endpoints, see Service Endpoints in the AWS documentation about GovCloud (US).

Private, disconnected installations are not supported for AWS GovCloud when you install in the us-gov-east-1 region.

Example Route 53 configuration

- 1: Route 53 defaults to https://route53.us-gov.amazonaws.com for both AWS GovCloud (US) regions.

- 2: Only the US-West region has endpoints for tagging. Omit this parameter if your cluster is in another region.

4.2.2. AWS account limits

The OpenShift Container Platform cluster uses a number of Amazon Web Services (AWS) components, and the default Service Limits affect your ability to install OpenShift Container Platform clusters. If you use certain cluster configurations, deploy your cluster in certain AWS regions, or run multiple clusters from your account, you might need to request additional resources for your AWS account.

The following table summarizes the AWS components whose limits can impact your ability to install and run OpenShift Container Platform clusters.

| Component | Number of clusters available by default | Default AWS limit | Description |
|---|---|---|---|
| Instance Limits | Varies | Varies | By default, each cluster creates the following instances: These instance type counts are within a new account’s default limit. To deploy more worker nodes, enable autoscaling, deploy large workloads, or use a different instance type, review your account limits to ensure that your cluster can deploy the machines that you need. In most regions, the bootstrap and worker machines uses an |
| Elastic IPs (EIPs) | 0 to 1 | 5 EIPs per account | To provision the cluster in a highly available configuration, the installation program creates a public and private subnet for each availability zone within a region. Each private subnet requires a NAT Gateway, and each NAT gateway requires a separate elastic IP. Review the AWS region map to determine how many availability zones are in each region. To take advantage of the default high availability, install the cluster in a region with at least three availability zones. To install a cluster in a region with more than five availability zones, you must increase the EIP limit. Important: To use the |
| Virtual Private Clouds (VPCs) | 5 | 5 VPCs per region | Each cluster creates its own VPC. |
| Elastic Load Balancing (ELB/NLB) | 3 | 20 per region | By default, each cluster creates internal and external network load balancers for the master API server and a single classic elastic load balancer for the router. Deploying more Kubernetes |
| NAT Gateways | 5 | 5 per availability zone | The cluster deploys one NAT gateway in each availability zone. |
| Elastic Network Interfaces (ENIs) | At least 12 | 350 per region | The default installation creates 21 ENIs and an ENI for each availability zone in your region. For example, the Additional ENIs are created for additional machines and elastic load balancers that are created by cluster usage and deployed workloads. |
| VPC Gateway | 20 | 20 per account | Each cluster creates a single VPC Gateway for S3 access. |
| S3 buckets | 99 | 100 buckets per account | Because the installation process creates a temporary bucket and the registry component in each cluster creates a bucket, you can create only 99 OpenShift Container Platform clusters per AWS account. |
| Security Groups | 250 | 2,500 per account | Each cluster creates 10 distinct security groups. |
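The cluster counts in the table above follow from dividing each default limit by what one cluster consumes. The sketch below reproduces that arithmetic for the rows with a fixed per-cluster cost, plus the ENI and EIP rules. The function names are hypothetical, the six-zone region is an assumed example size, and additional ENIs created by workloads are not counted.

```python
# Each entry is (default AWS limit, amount consumed by one cluster),
# copied from the table above.
FIXED_COST_ROWS = {
    "VPCs (per region)": (5, 1),
    "VPC Gateways (per account)": (20, 1),
    "Security Groups (per account)": (2500, 10),
}


def clusters_supported(limit: int, per_cluster: int) -> int:
    """Whole number of clusters that fit under a limit."""
    return limit // per_cluster


def enis_for_default_install(availability_zones: int) -> int:
    """21 ENIs plus one ENI for each availability zone in the region."""
    return 21 + availability_zones


def needs_eip_increase(availability_zones: int, eip_limit: int = 5) -> bool:
    """Each zone needs a NAT gateway, and each NAT gateway needs its own EIP."""
    return availability_zones > eip_limit


for name, (limit, per_cluster) in FIXED_COST_ROWS.items():
    print(name, clusters_supported(limit, per_cluster))

zones = 6  # assumed region size, chosen only for illustration
enis = enis_for_default_install(zones)
print("ENIs per cluster:", enis)
print("Clusters under the 350 ENI limit:", clusters_supported(350, enis))
print("EIP limit increase needed:", needs_eip_increase(zones))
```

Under the six-zone assumption, a default installation uses 27 ENIs, so 12 clusters fit within the 350 ENI regional limit, and the default limit of 5 EIPs must be raised.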

4.2.3. Required AWS permissions for the IAM user

Your IAM user must have the permission tag:GetResources in the region us-east-1 to delete the base cluster resources. As part of the AWS API requirement, the OpenShift Container Platform installation program performs various actions in this region.

When you attach the AdministratorAccess policy to the IAM user that you create in Amazon Web Services (AWS), you grant that user all of the required permissions. To deploy all components of an OpenShift Container Platform cluster, the IAM user requires the following permissions:

Example 4.1. Required EC2 permissions for installation

- ec2:AuthorizeSecurityGroupEgress
- ec2:AuthorizeSecurityGroupIngress
- ec2:CopyImage
- ec2:CreateNetworkInterface
- ec2:AttachNetworkInterface
- ec2:CreateSecurityGroup
- ec2:CreateTags
- ec2:CreateVolume
- ec2:DeleteSecurityGroup
- ec2:DeleteSnapshot
- ec2:DeleteTags
- ec2:DeregisterImage
- ec2:DescribeAccountAttributes
- ec2:DescribeAddresses
- ec2:DescribeAvailabilityZones
- ec2:DescribeDhcpOptions
- ec2:DescribeImages
- ec2:DescribeInstanceAttribute
- ec2:DescribeInstanceCreditSpecifications
- ec2:DescribeInstances
- ec2:DescribeInstanceTypes
- ec2:DescribeInternetGateways
- ec2:DescribeKeyPairs
- ec2:DescribeNatGateways
- ec2:DescribeNetworkAcls
- ec2:DescribeNetworkInterfaces
- ec2:DescribePrefixLists
- ec2:DescribeRegions
- ec2:DescribeRouteTables
- ec2:DescribeSecurityGroups
- ec2:DescribeSubnets
- ec2:DescribeTags
- ec2:DescribeVolumes
- ec2:DescribeVpcAttribute
- ec2:DescribeVpcClassicLink
- ec2:DescribeVpcClassicLinkDnsSupport
- ec2:DescribeVpcEndpoints
- ec2:DescribeVpcs
- ec2:GetEbsDefaultKmsKeyId
- ec2:ModifyInstanceAttribute
- ec2:ModifyNetworkInterfaceAttribute
- ec2:RevokeSecurityGroupEgress
- ec2:RevokeSecurityGroupIngress
- ec2:RunInstances
- ec2:TerminateInstances

Example 4.2. Required permissions for creating network resources during installation

- ec2:AllocateAddress
- ec2:AssociateAddress
- ec2:AssociateDhcpOptions
- ec2:AssociateRouteTable
- ec2:AttachInternetGateway
- ec2:CreateDhcpOptions
- ec2:CreateInternetGateway
- ec2:CreateNatGateway
- ec2:CreateRoute
- ec2:CreateRouteTable
- ec2:CreateSubnet
- ec2:CreateVpc
- ec2:CreateVpcEndpoint
- ec2:ModifySubnetAttribute
- ec2:ModifyVpcAttribute

If you use an existing VPC, your account does not require these permissions for creating network resources.
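The effect of an existing VPC on the required permissions can be sketched as a function that assembles an IAM policy document. This is an illustration under several assumptions: the action lists are abbreviated subsets of the examples above, the function and variable names are hypothetical, and the wildcard Resource is a placeholder that you would normally narrow.

```python
import json

# Abbreviated subsets of the permission lists above, for illustration only.
BASE_EC2_ACTIONS = ["ec2:CreateTags", "ec2:RunInstances", "ec2:TerminateInstances"]
NETWORK_ACTIONS = ["ec2:CreateNatGateway", "ec2:CreateSubnet", "ec2:CreateVpc"]


def build_policy(use_existing_vpc: bool) -> dict:
    """Return a policy document, omitting network creation for an existing VPC."""
    actions = list(BASE_EC2_ACTIONS)
    if not use_existing_vpc:
        # The installation program creates the VPC, so it needs these as well.
        actions += NETWORK_ACTIONS
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": sorted(actions),
                "Resource": "*",  # assumption: placeholder scope
            }
        ],
    }


print(json.dumps(build_policy(use_existing_vpc=True), indent=2))
```

A real policy would include the complete lists from each example in this section rather than the three-item subsets used here.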

Example 4.3. Required Elastic Load Balancing permissions (ELB) for installation

- elasticloadbalancing:AddTags
- elasticloadbalancing:ApplySecurityGroupsToLoadBalancer
- elasticloadbalancing:AttachLoadBalancerToSubnets
- elasticloadbalancing:ConfigureHealthCheck
- elasticloadbalancing:CreateLoadBalancer
- elasticloadbalancing:CreateLoadBalancerListeners
- elasticloadbalancing:DeleteLoadBalancer
- elasticloadbalancing:DeregisterInstancesFromLoadBalancer
- elasticloadbalancing:DescribeInstanceHealth
- elasticloadbalancing:DescribeLoadBalancerAttributes
- elasticloadbalancing:DescribeLoadBalancers
- elasticloadbalancing:DescribeTags
- elasticloadbalancing:ModifyLoadBalancerAttributes
- elasticloadbalancing:RegisterInstancesWithLoadBalancer
- elasticloadbalancing:SetLoadBalancerPoliciesOfListener

Example 4.4. Required Elastic Load Balancing permissions (ELBv2) for installation

- elasticloadbalancing:AddTags
- elasticloadbalancing:CreateListener
- elasticloadbalancing:CreateLoadBalancer
- elasticloadbalancing:CreateTargetGroup
- elasticloadbalancing:DeleteLoadBalancer
- elasticloadbalancing:DeregisterTargets
- elasticloadbalancing:DescribeListeners
- elasticloadbalancing:DescribeLoadBalancerAttributes
- elasticloadbalancing:DescribeLoadBalancers
- elasticloadbalancing:DescribeTargetGroupAttributes
- elasticloadbalancing:DescribeTargetHealth
- elasticloadbalancing:ModifyLoadBalancerAttributes
- elasticloadbalancing:ModifyTargetGroup
- elasticloadbalancing:ModifyTargetGroupAttributes
- elasticloadbalancing:RegisterTargets

Example 4.5. Required IAM permissions for installation

- iam:AddRoleToInstanceProfile
- iam:CreateInstanceProfile
- iam:CreateRole
- iam:DeleteInstanceProfile
- iam:DeleteRole
- iam:DeleteRolePolicy
- iam:GetInstanceProfile
- iam:GetRole
- iam:GetRolePolicy
- iam:GetUser
- iam:ListInstanceProfilesForRole
- iam:ListRoles
- iam:ListUsers
- iam:PassRole
- iam:PutRolePolicy
- iam:RemoveRoleFromInstanceProfile
- iam:SimulatePrincipalPolicy
- iam:TagRole

If you have not created an elastic load balancer (ELB) in your AWS account, the IAM user also requires the iam:CreateServiceLinkedRole permission.

Example 4.6. Required Route 53 permissions for installation

- route53:ChangeResourceRecordSets
- route53:ChangeTagsForResource
- route53:CreateHostedZone
- route53:DeleteHostedZone
- route53:GetChange
- route53:GetHostedZone
- route53:ListHostedZones
- route53:ListHostedZonesByName
- route53:ListResourceRecordSets
- route53:ListTagsForResource
- route53:UpdateHostedZoneComment

Example 4.7. Required S3 permissions for installation

- s3:CreateBucket
- s3:DeleteBucket
- s3:GetAccelerateConfiguration
- s3:GetBucketAcl
- s3:GetBucketCors
- s3:GetBucketLocation
- s3:GetBucketLogging
- s3:GetBucketObjectLockConfiguration
- s3:GetBucketReplication
- s3:GetBucketRequestPayment
- s3:GetBucketTagging
- s3:GetBucketVersioning
- s3:GetBucketWebsite
- s3:GetEncryptionConfiguration
- s3:GetLifecycleConfiguration
- s3:GetReplicationConfiguration
- s3:ListBucket
- s3:PutBucketAcl
- s3:PutBucketTagging
- s3:PutEncryptionConfiguration

Example 4.8. S3 permissions that cluster Operators require

- s3:DeleteObject
- s3:GetObject
- s3:GetObjectAcl
- s3:GetObjectTagging
- s3:GetObjectVersion
- s3:PutObject
- s3:PutObjectAcl
- s3:PutObjectTagging

Example 4.9. Required permissions to delete base cluster resources

- autoscaling:DescribeAutoScalingGroups
- ec2:DeleteNetworkInterface
- ec2:DeleteVolume
- elasticloadbalancing:DeleteTargetGroup
- elasticloadbalancing:DescribeTargetGroups
- iam:DeleteAccessKey
- iam:DeleteUser
- iam:ListAttachedRolePolicies
- iam:ListInstanceProfiles
- iam:ListRolePolicies
- iam:ListUserPolicies
- s3:DeleteObject
- s3:ListBucketVersions
- tag:GetResources

Example 4.10. Required permissions to delete network resources

-

ec2:DeleteDhcpOptions -

ec2:DeleteInternetGateway -

ec2:DeleteNatGateway -

ec2:DeleteRoute -

ec2:DeleteRouteTable -