Storage

Configuring and using storage in OpenShift Container Platform

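Chapter 1. OpenShift Container Platform storage overview

OpenShift Container Platform supports multiple types of storage, both for on-premise and cloud providers. You can manage container storage for persistent and non-persistent data in an OpenShift Container Platform cluster.

1.1. Glossary of common terms for OpenShift Container Platform storage

This glossary defines common terms that are used in the storage content.

- Access modes

  Volume access modes describe volume capabilities. You can use access modes to match a persistent volume claim (PVC) with a persistent volume (PV). The access modes are:

  - ReadWriteOnce (RWO)
  - ReadOnlyMany (ROX)
  - ReadWriteMany (RWX)
  - ReadWriteOncePod (RWOP)

- Cinder

  The Block Storage service for Red Hat OpenStack Platform (RHOSP), which handles the administration, security, and scheduling of all volumes.

- Config map

  A config map provides a way to inject configuration data into pods. You can reference the data stored in a config map in a volume of type ConfigMap. Applications running in a pod can use this data.

- Container Storage Interface (CSI)

  An API specification for managing container storage across different container orchestration (CO) systems.

- Dynamic provisioning

  A framework that creates storage volumes on demand, so that cluster administrators do not need to pre-provision persistent storage.

- Ephemeral storage

  Pods and containers can require temporary or transient local storage for their operation. The lifetime of this ephemeral storage does not extend beyond the life of the individual pod, and this ephemeral storage cannot be shared across pods.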

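- Fibre Channel

  A networking technology that is used to transfer data among data centers, computer servers, switches, and storage.

- FlexVolume

  FlexVolume is an out-of-tree plugin interface that uses an exec-based model to interact with storage drivers. You must install the FlexVolume driver binaries in a pre-defined volume plugin path on each node and, in some cases, on the control plane nodes.

- fsGroup

  The fsGroup defines the file system group ID of a pod.

- iSCSI

  Internet Small Computer Systems Interface (iSCSI) is an Internet Protocol-based storage networking standard for linking data storage facilities. An iSCSI volume allows an existing iSCSI (SCSI over IP) volume to be mounted into your pod.

- hostPath

  A hostPath volume in an OpenShift Container Platform cluster mounts a file or directory from the host node's file system into your pod.

- KMS key

  The Key Management Service (KMS) helps you achieve the required level of encryption of your data across different services. You can use a KMS key to encrypt, decrypt, and re-encrypt data.

- Local volumes

  A local volume represents a mounted local storage device such as a disk, partition, or directory.

- NFS

  A Network File System (NFS) allows remote hosts to mount file systems over a network and interact with those file systems as though they are mounted locally. This enables system administrators to consolidate resources onto centralized servers on the network.

- OpenShift Data Foundation

  A persistent storage provider for OpenShift Container Platform that supports file, block, and object storage, either in-house or in hybrid clouds.

- Persistent storage

  Pods and containers can require permanent storage for their operation. OpenShift Container Platform uses the Kubernetes persistent volume (PV) framework to allow cluster administrators to provision persistent storage for a cluster. Developers can use PVCs to request PV resources without having specific knowledge of the underlying storage infrastructure.

- Persistent volumes (PV)

  OpenShift Container Platform uses the Kubernetes persistent volume (PV) framework to allow cluster administrators to provision persistent storage for a cluster. Developers can use PVCs to request PV resources without having specific knowledge of the underlying storage infrastructure.

- Persistent volume claims (PVCs)

  You can use a PVC to mount a PersistentVolume into a pod. You can access the storage without knowing the details of the cloud environment.

- Pod

  One or more containers with shared resources, such as volumes and IP addresses, running in your OpenShift Container Platform cluster. A pod is the smallest compute unit that is defined, deployed, and managed.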

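- Reclaim policy

  A policy that tells the cluster what to do with a volume after it is released. A volume's reclaim policy can be Retain, Recycle, or Delete.

- Role-based access control (RBAC)

  Role-based access control (RBAC) is a method of regulating access to computer or network resources based on the roles of individual users within your organization.

- Stateless applications

  A stateless application is an application program that does not save client data generated in one session for use in the next session with that client.

- Stateful applications

  A stateful application is an application program that saves data to persistent disk storage. A server, client, and applications can use persistent disk storage. In OpenShift Container Platform, you can use the StatefulSet object to manage the deployment and scaling of a set of pods, and to provide guarantees about the ordering and uniqueness of these pods.

- Static provisioning

  A cluster administrator creates a number of PVs. PVs contain the details of the storage. PVs exist in the Kubernetes API and are available for consumption.

- Storage

  OpenShift Container Platform supports many types of storage, both for on-premise and cloud providers. You can manage container storage for persistent and non-persistent data in an OpenShift Container Platform cluster.

- Storage class

  A storage class provides a way for administrators to describe the classes of storage they offer. Different classes might map to quality-of-service levels, backup policies, or arbitrary policies determined by the cluster administrators.

- VMware vSphere's Virtual Machine Disk (VMDK) volumes

  Virtual Machine Disk (VMDK) is a file format that describes containers for virtual hard disk drives that are used in virtual machines.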

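1.2. Storage types

OpenShift Container Platform storage is broadly classified into two categories: ephemeral storage and persistent storage.

1.2.1. Ephemeral storage

Pods and containers are ephemeral or transient in nature and designed for stateless applications. Ephemeral storage allows administrators and developers to better manage the local storage for some of their operations. For more information about ephemeral storage overview, types, and management, see Understanding ephemeral storage.

1.2.2. Persistent storage

Stateful applications deployed in containers require persistent storage. OpenShift Container Platform uses a pre-provisioned storage framework called persistent volumes (PV) to allow cluster administrators to provision persistent storage. The data inside these volumes can exist beyond the lifecycle of an individual pod. Developers can use persistent volume claims (PVCs) to request storage. For more information about persistent storage overview, configuration, and lifecycle, see Understanding persistent storage.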

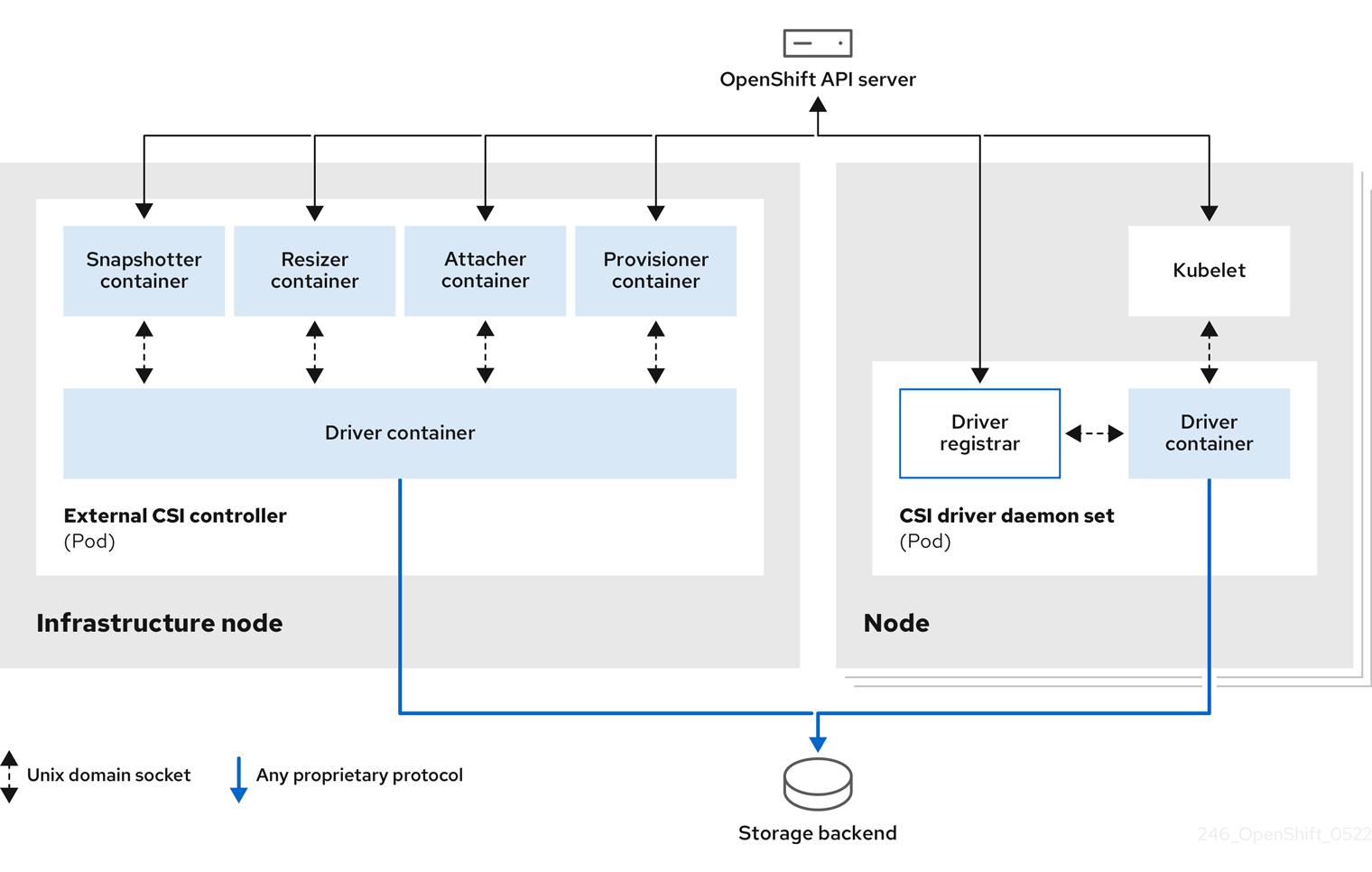

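1.3. Container Storage Interface (CSI)

CSI is an API specification for managing container storage across different container orchestration (CO) systems. You can manage storage volumes within container-native environments without having specific knowledge of the underlying storage infrastructure. With CSI, storage works uniformly across different container orchestration systems, regardless of the storage vendor you use. For more information about CSI, see Using Container Storage Interface (CSI).

1.4. Dynamic provisioning

Dynamic provisioning creates storage volumes on demand, so cluster administrators do not need to pre-provision storage. For more information about dynamic provisioning, see Dynamic provisioning: https://access.redhat.com/documentation/en-us/openshift_container_platform/4.13/html-single/storage/#dynamic-provisioning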

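Chapter 2. Understanding ephemeral storage

2.1. Overview

In addition to persistent storage, pods and containers can require ephemeral or transient local storage for their operation. The lifetime of this ephemeral storage does not extend beyond the life of the individual pod, and this ephemeral storage cannot be shared across pods.

Pods use ephemeral local storage for scratch space, caching, and logs. Issues related to the lack of local storage accounting and isolation include the following:

- Pods cannot detect how much local storage is available to them.

- Pods cannot request guaranteed local storage.

- Local storage is a best-effort resource.

- Pods can be evicted because other pods fill the local storage, after which new pods are not admitted until sufficient storage is reclaimed.

Unlike persistent volumes, ephemeral storage is unstructured, and the space is shared between all pods running on a node, in addition to other uses by the system, the container runtime, and OpenShift Container Platform. The ephemeral storage framework allows pods to specify their transient local storage needs. It also allows OpenShift Container Platform to schedule pods where appropriate, and to protect the node against excessive use of local storage.

While the ephemeral storage framework allows administrators and developers to better manage local storage, I/O throughput and latency are not directly affected.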

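2.2. Types of ephemeral storage

Ephemeral local storage is always made available in the primary partition. There are two basic ways of creating the primary partition: root and runtime.

Root

This partition holds the kubelet root directory, /var/lib/kubelet/ by default, and the /var/log/ directory. This partition can be shared between user pods, the OS, and Kubernetes system daemons. Pods can use this partition through EmptyDir volumes, container logs, image layers, and container-writable layers. The kubelet manages shared access and isolation of this partition. This partition is ephemeral, and applications cannot expect any performance SLAs, such as disk IOPS, from it.

Runtime

This is an optional partition that runtimes can use for overlay file systems. OpenShift Container Platform attempts to identify and provide shared access along with isolation to this partition. Container image layers and writable layers are stored here. If the runtime partition exists, the root partition does not hold any image layer or other writable storage.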

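2.3. Ephemeral storage management

Cluster administrators can manage ephemeral storage within a project by setting quotas that define the limit ranges and number of requests for ephemeral storage across all pods in a non-terminal state. Developers can also set requests and limits on this compute resource at the pod and container level.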
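As an illustration of a project-level quota, the following is a minimal sketch of a ResourceQuota object that caps ephemeral storage requests and limits. The object name, the namespace, and the 10Gi and 20Gi values are assumptions chosen for this example, not values taken from this guide.

```yaml
apiVersion: v1
kind: ResourceQuota
metadata:
  name: ephemeral-storage-quota   # hypothetical name
  namespace: my-project           # hypothetical project
spec:
  hard:
    # Sum of ephemeral-storage requests across pods in the project
    requests.ephemeral-storage: 10Gi
    # Sum of ephemeral-storage limits across pods in the project
    limits.ephemeral-storage: 20Gi
```

With a quota like this in place, pods in the project would typically need to declare ephemeral-storage requests and limits to be admitted.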

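You can manage local ephemeral storage by specifying requests and limits. Each container in a pod can specify the following:

- spec.containers[].resources.limits.ephemeral-storage

- spec.containers[].resources.requests.ephemeral-storage

Limits and requests for ephemeral storage are measured in byte quantities. You can express storage as a plain integer or as a fixed-point number using one of these suffixes: E, P, T, G, M, k. You can also use the power-of-two equivalents: Ei, Pi, Ti, Gi, Mi, Ki. For example, the following quantities all represent approximately the same value: 128974848, 129e6, 129M, and 123Mi. The case of the suffixes is significant. If you specify ephemeral storage of 400m, this requests 0.4 bytes, rather than 400 mebibytes (400Mi) or 400 megabytes (400M), which was probably what was intended.

The following example shows a pod with two containers. Each container requests 2GiB of local ephemeral storage, so the pod has a total request of 4GiB of local ephemeral storage. In the manifest as shown, only the first container (app) sets a limit, of 4GiB of local ephemeral storage; the second container (log-aggregator) sets no limit.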

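    apiVersion: v1
    kind: Pod
    metadata:
      name: frontend
    spec:
      containers:
      - name: app
        image: images.my-company.example/app:v4
        resources:
          requests:
            ephemeral-storage: "2Gi"
          limits:
            ephemeral-storage: "4Gi"
        volumeMounts:
        - name: ephemeral
          mountPath: "/tmp"
      - name: log-aggregator
        image: images.my-company.example/log-aggregator:v6
        resources:
          requests:
            ephemeral-storage: "2Gi"
        volumeMounts:
        - name: ephemeral
          mountPath: "/tmp"
      volumes:
        - name: ephemeral
          emptyDir: {}

This setting in the pod spec affects both how the scheduler makes a decision about scheduling pods and how the kubelet evicts pods. First, the scheduler ensures that the sum of the resource requests of the scheduled containers is less than the capacity of the node. In this case, the pod can be assigned to a node only if the node's available ephemeral storage (allocatable resource) is more than 4GiB.

Second, at the container level, because the first container sets a resource limit, the kubelet eviction manager measures the disk usage of this container and evicts the pod if the storage usage of the container exceeds its limit (4GiB). At the pod level, the kubelet works out an overall pod storage limit by adding up the limits of all the containers in that pod. In this case, the total storage usage at the pod level is the sum of the disk usage from all containers plus the pod's emptyDir volumes. If this total usage exceeds the overall pod storage limit (4GiB), the kubelet also marks the pod for eviction.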

For information about defining quotas for projects, see Quota setting per project.

2.4. Monitoring ephemeral storage

You can use /bin/df as a tool to monitor ephemeral storage usage on the volumes where ephemeral container data is located, which are /var/lib/kubelet and /var/lib/containers. If the cluster administrator has placed /var/lib/containers on a separate disk, the df command shows the space that is available to /var/lib/kubelet alone.

To show the human-readable values of used and available space in /var/lib, enter the following command:

    $ df -h /var/lib

The output shows the ephemeral storage usage in /var/lib.

Example output

    Filesystem                         Size  Used Avail Use% Mounted on
    /dev/disk/by-partuuid/4cd1448a-01   69G   32G   34G  49% /

Chapter 3. Understanding persistent storage

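3.1. Persistent storage overview

Managing storage is a distinct problem from managing compute resources. OpenShift Container Platform uses the Kubernetes persistent volume (PV) framework to allow cluster administrators to provision persistent storage for a cluster. Developers can use persistent volume claims (PVCs) to request PV resources without having specific knowledge of the underlying storage infrastructure.

PVCs are specific to a project, and are created and used by developers as a means to use a PV. PV resources on their own are not scoped to any single project; they can be shared across the entire OpenShift Container Platform cluster and claimed from any project. After a PV is bound to a PVC, that PV cannot be bound to additional PVCs. This has the effect of scoping a bound PV to a single namespace, that of the binding project.

PVs are defined by a PersistentVolume API object, which represents a piece of existing storage in the cluster that was either statically provisioned by the cluster administrator or dynamically provisioned using a StorageClass object. It is a resource in the cluster just like a node is a cluster resource.

PVs are volume plugins like Volumes, but have a lifecycle that is independent of any individual pod that uses the PV. PV objects capture the details of the implementation of the storage, be that NFS, iSCSI, or a cloud-provider-specific storage system.

High availability of storage in the infrastructure is left to the underlying storage provider.

PVCs are defined by a PersistentVolumeClaim API object, which represents a request for storage by a developer. A PVC is similar to a pod in that pods consume node resources and PVCs consume PV resources. For example, pods can request specific levels of resources, such as CPU and memory, while PVCs can request specific storage capacity and access modes. For example, a volume can be mounted once read-write or many times read-only.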

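3.2. Lifecycle of a volume and claim

PVs are resources in the cluster. PVCs are requests for those resources and also act as claim checks to the resource. The interaction between PVs and PVCs has the following lifecycle.

3.2.1. Provision storage

In response to requests from a developer defined in a PVC, a cluster administrator configures one or more dynamic provisioners that provision storage and a matching PV.

Alternatively, a cluster administrator can create a number of PVs in advance that carry the details of the real storage that is available for use. PVs exist in the API and are available for use.

3.2.2. Bind claims

When you create a PVC, you request a specific amount of storage, specify the required access mode, and create a storage class to describe and classify the storage. The control loop in the master watches for new PVCs and binds the new PVC to an appropriate PV. If an appropriate PV does not exist, a provisioner for the storage class creates one.

The size of the PV might exceed the size requested by the PVC. This is especially true with manually provisioned PVs. To minimize the excess, OpenShift Container Platform binds to the smallest PV that matches all other criteria.

Claims remain unbound indefinitely if a matching volume does not exist or cannot be created with any available provisioner servicing a storage class. Claims are bound as matching volumes become available. For example, a cluster with many manually provisioned 50Gi volumes would not match a PVC requesting 100Gi. The PVC can be bound when a 100Gi PV is added to the cluster.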
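To make the 100Gi scenario concrete, the following is a minimal sketch of a claim that would stay unbound in a cluster that only has 50Gi volumes. The claim name is hypothetical, and no storage class is specified here on the assumption that binding is to manually provisioned PVs.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: large-claim        # hypothetical name
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      # No 50Gi PV can satisfy this request; the claim stays unbound
      # until a PV of at least 100Gi with matching access modes exists.
      storage: 100Gi
```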

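3.2.3. Use pods and claimed PVs

Pods use claims as volumes. The cluster inspects the claim to find the bound volume and mounts that volume for a pod. For those volumes that support multiple access modes, you must specify which mode applies when you use the claim as a volume in a pod.

Once you have a claim and that claim is bound, the bound PV belongs to you for as long as you need it. You can schedule pods and access claimed PVs by including persistentVolumeClaim in the pod's volumes block.

If you attach persistent volumes that have high file counts to pods, those pods can fail or can take a long time to start. For more information, see When using Persistent Volumes with high file counts in OpenShift, why do pods fail to start or take an excessive amount of time to achieve "Ready" state?

3.2.4. Storage Object in Use Protection

The Storage Object in Use Protection feature ensures that PVCs in active use by a pod and PVs that are bound to PVCs are not removed from the system, as this can result in data loss.

Storage Object in Use Protection is enabled by default.

A PVC is in active use by a pod when a Pod object exists that uses the PVC.

If a user deletes a PVC that is in active use by a pod, the PVC is not removed immediately. PVC removal is postponed until the PVC is no longer actively used by any pods. Also, if a cluster administrator deletes a PV that is bound to a PVC, the PV is not removed immediately. PV removal is postponed until the PV is no longer bound to a PVC.

3.2.5. Release a persistent volume

When you are finished with a volume, you can delete the PVC object from the API, which allows reclamation of the resource. The volume is considered released when the claim is deleted, but it is not yet available for another claim. The previous claimant's data remains on the volume and must be handled according to policy.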

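3.2.6. Reclaim policy for persistent volumes

The reclaim policy of a persistent volume tells the cluster what to do with the volume after it is released. A volume's reclaim policy can be Retain, Recycle, or Delete.

- The Retain reclaim policy allows manual reclamation of the resource for those volume plugins that support it.

- The Recycle reclaim policy recycles the volume back into the pool of unbound persistent volumes once it is released from its claim.

  The Recycle reclaim policy is deprecated in OpenShift Container Platform 4. Dynamic provisioning is recommended for equivalent and better functionality.

- The Delete reclaim policy deletes both the PersistentVolume object from OpenShift Container Platform and the associated storage asset in external infrastructure, such as Amazon Elastic Block Store (Amazon EBS) or VMware vSphere.

Dynamically provisioned volumes are always deleted.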

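3.2.7. Reclaiming a persistent volume manually

When a persistent volume claim (PVC) is deleted, the persistent volume (PV) still exists and is considered "released". However, the PV is not yet available for another claim because the data of the previous claimant remains on the volume.

Procedure

To manually reclaim the PV as a cluster administrator:

- Delete the PV.

      $ oc delete pv <pv-name>

  The associated storage asset in the external infrastructure, such as an AWS EBS, GCE PD, Azure Disk, or Cinder volume, still exists after the PV is deleted.

- Clean up the data on the associated storage asset.

- Delete the associated storage asset. Alternately, to reuse the same storage asset, create a new PV with the storage asset definition.

The reclaimed PV is now available for use by another PVC.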

3.2.8. Changing the reclaim policy of a persistent volume

To change the reclaim policy of a persistent volume:

- List the persistent volumes in your cluster:

      $ oc get pv

  Example output

      NAME                                       CAPACITY   ACCESSMODES   RECLAIMPOLICY   STATUS   CLAIM            STORAGECLASS   REASON   AGE
      pvc-b6efd8da-b7b5-11e6-9d58-0ed433a7dd94   4Gi        RWO           Delete          Bound    default/claim1   manual                  10s
      pvc-b95650f8-b7b5-11e6-9d58-0ed433a7dd94   4Gi        RWO           Delete          Bound    default/claim2   manual                  6s
      pvc-bb3ca71d-b7b5-11e6-9d58-0ed433a7dd94   4Gi        RWO           Delete          Bound    default/claim3   manual                  3s

- Choose one of your persistent volumes and change its reclaim policy:

      $ oc patch pv <your-pv-name> -p '{"spec":{"persistentVolumeReclaimPolicy":"Retain"}}'

- Verify that your chosen persistent volume has the right policy:

      $ oc get pv

  Example output

      NAME                                       CAPACITY   ACCESSMODES   RECLAIMPOLICY   STATUS   CLAIM            STORAGECLASS   REASON   AGE
      pvc-b6efd8da-b7b5-11e6-9d58-0ed433a7dd94   4Gi        RWO           Delete          Bound    default/claim1   manual                  10s
      pvc-b95650f8-b7b5-11e6-9d58-0ed433a7dd94   4Gi        RWO           Delete          Bound    default/claim2   manual                  6s
      pvc-bb3ca71d-b7b5-11e6-9d58-0ed433a7dd94   4Gi        RWO           Retain          Bound    default/claim3   manual                  3s

  In the preceding output, the volume bound to claim default/claim3 now has a Retain reclaim policy. The volume will not be automatically deleted when a user deletes claim default/claim3.

3.3. Persistent volumes

Each PV contains a spec and status, which is the specification and status of the volume, for example:

PersistentVolume object definition example

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv0001
    spec:
      capacity:
        storage: 5Gi
      accessModes:
        - ReadWriteOnce
      persistentVolumeReclaimPolicy: Retain
      ...
    status:
      ...

3.3.1. Types of PVs

OpenShift Container Platform supports the following persistent volume plugins:

- AliCloud Disk

- AWS Elastic Block Store (EBS)

- AWS Elastic File Store (EFS)

- Azure Disk

- Azure File

- Cinder

- Fibre Channel

- GCP Persistent Disk

- GCP Filestore

- IBM Power Virtual Server Block

- IBM VPC Block

- HostPath

- iSCSI

- Local volume

- NFS

- OpenStack Manila

- Red Hat OpenShift Data Foundation

- VMware vSphere

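3.3.2. Capacity

Generally, a persistent volume (PV) has a specific storage capacity. This is set by using the capacity attribute of the PV.

Currently, storage capacity is the only resource that can be set or requested. Future attributes might include IOPS, throughput, and so on.

3.3.3. Access modes

A persistent volume can be mounted on a host in any way supported by the resource provider. Providers have different capabilities and each PV's access modes are set to the specific modes supported by that particular volume. For example, NFS can support multiple read-write clients, but a specific NFS PV might be exported on the server as read-only. Each PV gets its own set of access modes describing that specific PV's capabilities.

Claims are matched to volumes with similar access modes. The only two matching criteria are access modes and size. A claim's access modes represent a request. Therefore, you might be granted more, but never less. For example, if a claim requests RWO, but the only volume available is an NFS PV (RWO+ROX+RWX), the claim would then match NFS because it supports RWO.

Direct matches are always attempted first. The volume's modes must match or contain more modes than you requested. The size must be greater than or equal to what is expected. If two types of volumes, such as NFS and iSCSI, have the same set of access modes, either of them can match a claim with those modes. There is no ordering between types of volumes and no way to choose one type over another.

All volumes with the same modes are grouped, and then sorted by size, smallest to largest. The binder gets the group with matching modes and iterates over each volume, in size order, until one size matches.

The following table lists the access modes: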

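| Access Mode | CLI abbreviation | Description |
|---|---|---|
| ReadWriteOnce | RWO | The volume can be mounted as read-write by a single node. |
| ReadOnlyMany | ROX | The volume can be mounted as read-only by many nodes. |
| ReadWriteMany | RWX | The volume can be mounted as read-write by many nodes. |

Volume access modes are descriptors of volume capabilities. They are not enforced constraints. The storage provider is responsible for runtime errors resulting from invalid use of the resource.

For example, NFS offers the ReadWriteOnce access mode. If you want to use the volume's ROX capability, you must mark the claim as read-only. Errors in the provider show up at runtime as mount errors.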
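One way to mark a claim as read-only when a pod consumes it is the readOnly flag on the pod's volume source. The following is a minimal sketch under stated assumptions: the pod name, image, mount path, and claim name nfs-claim are hypothetical and do not come from this guide.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: rox-reader                                # hypothetical name
spec:
  containers:
  - name: reader
    image: registry.example.com/reader:latest     # placeholder image
    volumeMounts:
    - name: shared-data
      mountPath: /data
      readOnly: true                              # mount is read-only inside the container
  volumes:
  - name: shared-data
    persistentVolumeClaim:
      claimName: nfs-claim                        # hypothetical, already-bound claim
      readOnly: true                              # consume the claim as read-only
```

Whether the storage back end actually enforces read-only access still depends on the provider, as the text above notes.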

iSCSI and Fibre Channel volumes do not currently have any fencing mechanisms. You must ensure the volumes are only used by one node at a time. In certain situations, such as draining a node, the volumes can be used simultaneously by two nodes. Before draining the node, first ensure that the pods that use these volumes are deleted.

| Volume plugin | ReadWriteOnce [1] | ReadOnlyMany | ReadWriteMany |
|---|---|---|---|
| AliCloud Disk | ✅ | - | - |
| AWS EBS [2] | ✅ | - | - |
| AWS EFS | ✅ | ✅ | ✅ |
| Azure File | ✅ | ✅ | ✅ |
| Azure Disk | ✅ | - | - |
| Cinder | ✅ | - | - |
| Fibre Channel | ✅ | ✅ | - |
| GCP Persistent Disk | ✅ | - | - |
| GCP Filestore | ✅ | ✅ | ✅ |
| HostPath | ✅ | - | - |
| IBM Power Virtual Server Disk | ✅ | ✅ | ✅ |
| IBM VPC Disk | ✅ | - | - |
| iSCSI | ✅ | ✅ | - |
| Local volume | ✅ | - | - |
| NFS | ✅ | ✅ | ✅ |
| OpenStack Manila | - | - | ✅ |
| Red Hat OpenShift Data Foundation | ✅ | - | ✅ |
| VMware vSphere | ✅ | - | ✅ [3] |

[1] ReadWriteOnce (RWO) volumes cannot be mounted on multiple nodes. If a node fails, the system does not allow the attached RWO volume to be mounted on a new node because it is already assigned to the failed node. If you encounter a multi-attach error message as a result, delete the pod on the shut down or crashed node to avoid data loss in critical workloads, such as when dynamic persistent volumes are attached.

[2] Use a recreate deployment strategy for pods that rely on Amazon EBS.

[3] If the underlying vSphere environment supports the vSAN file service, the vSphere Container Storage Interface (CSI) Driver Operator installed by OpenShift Container Platform supports provisioning of ReadWriteMany (RWX) volumes. If you do not have the vSAN file service configured and you request RWX, the volume fails to be created and an error is logged. For more information, see "Using Container Storage Interface" → "VMware vSphere CSI Driver Operator".

3.3.4. Phase

Volumes can be found in one of the following phases:

| Phase | Description |
|---|---|
| Available | A free resource that is not yet bound to a claim. |
| Bound | The volume is bound to a claim. |
| Released | The claim was deleted, but the resource is not yet reclaimed by the cluster. |
| Failed | The volume has failed its automatic reclamation. |

You can view the name of the PVC that is bound to the PV by running the following command:

    $ oc get pv <pv-claim>

3.3.4.1. Mount options

You can specify mount options while mounting a PV by using the mountOptions attribute.

For example:

Mount options example

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv0001
    spec:
      capacity:
        storage: 1Gi
      accessModes:
        - ReadWriteOnce
      mountOptions:
        - nfsvers=4.1
      nfs:
        path: /tmp
        server: 172.17.0.2
      persistentVolumeReclaimPolicy: Retain
      claimRef:
        name: claim1
        namespace: default

The mount options specified in the mountOptions field are used while mounting the PV to the disk.

The following PV types support mount options:

- AWS Elastic Block Store (EBS)

- Azure Disk

- Azure File

- Cinder

- GCE Persistent Disk

- iSCSI

- Local volume

- NFS

- Red Hat OpenShift Data Foundation (Ceph RBD only)

- VMware vSphere

Fibre Channel and HostPath PVs do not support mount options.

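3.4. Persistent volume claims

Each PersistentVolumeClaim object contains a spec and status, which is the specification and status of the persistent volume claim (PVC), for example:

PersistentVolumeClaim object definition example

    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: myclaim
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 8Gi
      storageClassName: gold
    status:
      ...

3.4.1. Storage classes

Claims can optionally request a specific storage class by specifying the storage class's name in the storageClassName attribute. Only PVs of the requested class, that is, PVs with the same storageClassName as the PVC, can be bound to the PVC. The cluster administrator can configure dynamic provisioners to service one or more storage classes. The cluster administrator can create a PV on demand that matches the specifications in the PVC.

The Cluster Storage Operator might install a default storage class depending on the platform in use. This storage class is owned and controlled by the Operator. It cannot be deleted or modified beyond defining annotations and labels. If different behavior is desired, you must define a custom storage class.

The cluster administrator can also set a default storage class for all PVCs. When a default storage class is configured, a PVC must explicitly set the StorageClass or storageClassName annotation to "" to be bound to a PV that has no storage class.

If more than one storage class is marked as default, a PVC can only be created if the storageClassName is explicitly specified. Therefore, only one storage class should be set as the default.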
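The opt-out from the default storage class can be sketched as follows. This is an illustrative claim, with a hypothetical name and size, that sets storageClassName to the empty string so that it is matched only against PVs that have no class.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: classless-claim     # hypothetical name
spec:
  accessModes:
    - ReadWriteOnce
  # Empty string: do not use the default storage class;
  # bind only to a PV that has no storage class set.
  storageClassName: ""
  resources:
    requests:
      storage: 8Gi
```

Omitting the storageClassName field entirely is different from setting it to "": when the field is omitted and a default class exists, the default class is normally applied to the claim.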

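3.4.2. Access modes

Claims use the same conventions as volumes when requesting storage with specific access modes.

3.4.3. Resources

Claims, such as pods, can request specific quantities of a resource. In this case, the request is for storage. The same resource model applies to volumes and claims.

3.4.4. Claims as volumes

Pods access storage by using the claim as a volume. Claims must exist in the same namespace as the pod that uses the claim. The cluster finds the claim in the pod's namespace and uses it to get the PersistentVolume backing the claim. The volume is mounted to the host and into the pod, for example:

Mount volume to the host and into the pod example

    kind: Pod
    apiVersion: v1
    metadata:
      name: mypod
    spec:
      containers:
        - name: myfrontend
          image: dockerfile/nginx
          volumeMounts:
          - mountPath: "/var/www/html"
            name: mypd
      volumes:
        - name: mypd
          persistentVolumeClaim:
            claimName: myclaim

3.5. Block volume support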

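OpenShift Container Platform can statically provision raw block volumes. These volumes do not have a file system, and can provide performance benefits for applications that either write to the disk directly or implement their own storage service.

Raw block volumes are provisioned by specifying volumeMode: Block in the PV and PVC specification.

Pods that use raw block volumes must be configured to allow privileged containers.

The following table displays which volume plugins support block volumes.

| Volume plugin | Manually provisioned | Dynamically provisioned | Fully supported |
|---|---|---|---|
| AliCloud Disk | ✅ | ✅ | ✅ |
| Amazon Elastic Block Store (Amazon EBS) | ✅ | ✅ | ✅ |
| Amazon Elastic File Storage (Amazon EFS) | | | |
| Azure Disk | ✅ | ✅ | ✅ |
| Azure File | | | |
| Cinder | ✅ | ✅ | ✅ |
| Fibre Channel | ✅ | | ✅ |
| GCP | ✅ | ✅ | ✅ |
| HostPath | | | |
| IBM VPC Disk | ✅ | ✅ | ✅ |
| iSCSI | ✅ | | ✅ |
| Local volume | ✅ | | ✅ |
| NFS | | | |
| Red Hat OpenShift Data Foundation | ✅ | ✅ | ✅ |
| VMware vSphere | ✅ | ✅ | ✅ |

Using any of the block volumes that can be provisioned manually, but are not provided as fully supported, is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.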

3.5.1. Block volume examples

PV example

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: block-pv
    spec:
      capacity:
        storage: 10Gi
      accessModes:
        - ReadWriteOnce
      volumeMode: Block
      persistentVolumeReclaimPolicy: Retain
      fc:
        targetWWNs: ["50060e801049cfd1"]
        lun: 0
        readOnly: false

volumeMode must be set to Block to indicate that this PV is a raw block volume.

PVC example

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: block-pvc
    spec:
      accessModes:
        - ReadWriteOnce
      volumeMode: Block
      resources:
        requests:
          storage: 10Gi

volumeMode must be set to Block to indicate that a raw block PVC is requested.

Pod specification example

    apiVersion: v1
    kind: Pod
    metadata:
      name: pod-with-block-volume
    spec:
      containers:
        - name: fc-container
          image: fedora:26
          command: ["/bin/sh", "-c"]
          args: [ "tail -f /dev/null" ]
          volumeDevices:
            - name: data
              devicePath: /dev/xvda
      volumes:
        - name: data
          persistentVolumeClaim:
            claimName: block-pvc

Accepted values for volumeMode

| Value | Default |
|---|---|
| Filesystem | Yes |
| Block | No |

Binding scenarios for block volumes

| PV volumeMode | PVC volumeMode | Binding result |
|---|---|---|
| Filesystem | Filesystem | Bind |
| Unspecified | Unspecified | Bind |
| Filesystem | Unspecified | Bind |
| Unspecified | Filesystem | Bind |
| Block | Block | Bind |
| Unspecified | Block | No Bind |
| Block | Unspecified | No Bind |
| Filesystem | Block | No Bind |
| Block | Filesystem | No Bind |

Unspecified values result in the default value of Filesystem.

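3.6. Using fsGroup to reduce pod timeouts

If a storage volume contains many files (~1TiB or greater), you can experience pod timeouts.

This can occur because, by default, OpenShift Container Platform recursively changes ownership and permissions for the contents of each volume to match the fsGroup specified in a pod's securityContext when that volume is mounted. For large volumes, checking and changing ownership and permissions can be time consuming, slowing pod startup. You can use the fsGroupChangePolicy field inside a securityContext to control the way that OpenShift Container Platform checks and manages ownership and permissions for a volume.

fsGroupChangePolicy defines behavior for changing ownership and permission of the volume before being exposed inside a pod. This field only applies to volume types that support fsGroup-controlled ownership and permissions. This field has two possible values:

- OnRootMismatch: Only change permissions and ownership if the permission and ownership of the root directory do not match the expected permissions of the volume. This can help shorten the time it takes to change ownership and permission of a volume, which reduces pod timeouts.

- Always: Always change permission and ownership of the volume when a volume is mounted.

fsGroupChangePolicy example

    securityContext:
      runAsUser: 1000
      runAsGroup: 3000
      fsGroup: 2000
      fsGroupChangePolicy: "OnRootMismatch"
      ...

OnRootMismatch specifies skipping the recursive permission change, which helps to avoid pod timeout problems.

The fsGroupChangePolicy field has no effect on ephemeral volume types, such as secret, configMap, and emptydir.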
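For context, the securityContext fragment above sits at the pod level of a manifest. The following is a minimal sketch of a complete pod that combines it with a PVC-backed volume; the pod name, image, mount path, and claim name are assumptions made for this illustration.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: fsgroup-demo                            # hypothetical name
spec:
  securityContext:                              # pod-level security context
    runAsUser: 1000
    runAsGroup: 3000
    fsGroup: 2000
    # Skip the recursive ownership change when the volume root already matches
    fsGroupChangePolicy: "OnRootMismatch"
  containers:
  - name: app
    image: registry.example.com/app:latest      # placeholder image
    volumeMounts:
    - name: data
      mountPath: /data
  volumes:
  - name: data
    persistentVolumeClaim:
      claimName: myclaim                        # assumes an existing, bound claim
```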

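Chapter 4. Configuring persistent storage

4.1. Persistent storage using AWS Elastic Block Store

OpenShift Container Platform supports Amazon Elastic Block Store (EBS) volumes. You can provision your OpenShift Container Platform cluster with persistent storage by using Amazon EC2.

The Kubernetes persistent volume framework allows administrators to provision a cluster with persistent storage and gives users a way to request those resources without having any knowledge of the underlying infrastructure. You can dynamically provision Amazon EBS volumes. Persistent volumes are not bound to a single project or namespace; they can be shared across the OpenShift Container Platform cluster. Persistent volume claims are specific to a project or namespace and can be requested by users. You can define a KMS key to encrypt container-persistent volumes on AWS. By default, newly created clusters using OpenShift Container Platform version 4.10 and later use gp3 storage and the AWS EBS CSI driver.

High availability of storage in the infrastructure is left to the underlying storage provider.

For OpenShift Container Platform, automatic migration from the AWS EBS in-tree plugin to the Container Storage Interface (CSI) driver is available as a Technology Preview (TP) feature. With migration enabled, volumes provisioned using the existing in-tree driver are automatically migrated to use the AWS EBS CSI driver. For more information, see CSI automatic migration feature.

4.1.1. Creating the EBS storage class

Storage classes are used to differentiate and delineate storage levels and usages. By defining a storage class, users can obtain dynamically provisioned persistent volumes.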
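As an illustration, a storage class for dynamically provisioned gp3 volumes might look like the following minimal sketch. The class name and the choice of reclaimPolicy, volumeBindingMode, and allowVolumeExpansion are assumptions for this example; the provisioner name and the gp3 volume type are the ones mentioned elsewhere in this chapter.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ebs-gp3-example            # hypothetical name
provisioner: ebs.csi.aws.com       # AWS EBS CSI driver
parameters:
  type: gp3                        # EBS volume type
reclaimPolicy: Delete
# Delay provisioning until a pod that uses the claim is scheduled
volumeBindingMode: WaitForFirstConsumer
allowVolumeExpansion: true
```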

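4.1.2. Creating the persistent volume claim

Prerequisites

Storage must exist in the underlying infrastructure before it can be mounted as a volume in OpenShift Container Platform.

Procedure

- In the OpenShift Container Platform console, click Storage → Persistent Volume Claims.

- In the persistent volume claims overview, click Create Persistent Volume Claim.

- Define the desired options on the page that appears.

  - Select the previously created storage class from the drop-down menu.

  - Enter a unique name for the storage claim.

  - Select the access mode. This selection determines the read and write access for the storage claim.

  - Define the size of the storage claim.

- Click Create to create the persistent volume claim and generate a persistent volume.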
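The console steps above correspond roughly to creating a PersistentVolumeClaim object. The following is a minimal sketch of such an object; the claim name, the storage class name, and the 10Gi size are hypothetical values chosen for the example.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ebs-claim                     # unique name for the storage claim (hypothetical)
spec:
  storageClassName: ebs-gp3-example   # hypothetical, previously created storage class
  accessModes:
    - ReadWriteOnce                   # access mode for the claim
  resources:
    requests:
      storage: 10Gi                   # size of the storage claim
```

A manifest like this could be applied with oc create -f <file>, in the same way as the other manifests in this guide.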

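4.1.3. Volume format

Before OpenShift Container Platform mounts the volume and passes it to a container, it checks that the volume contains a file system as specified by the fsType parameter in the persistent volume definition. If the device is not formatted with the file system, all data from the device is erased and the device is automatically formatted with the given file system.

This verification enables you to use unformatted AWS volumes as persistent volumes, because OpenShift Container Platform formats them before the first use.

4.1.4. Maximum number of EBS volumes on a node

By default, OpenShift Container Platform supports a maximum of 39 EBS volumes attached to one node. This limit is consistent with the AWS volume limits. The volume limit depends on the instance type.

As a cluster administrator, you must use either in-tree or Container Storage Interface (CSI) volumes and their respective storage classes, but never both volume types at the same time. The maximum attached EBS volume number is counted separately for in-tree and CSI volumes, which means you could have up to 39 EBS volumes of each type.

For information about accessing additional storage options, such as volume snapshots, that are not possible with in-tree volume plugins, see AWS Elastic Block Store CSI Driver Operator.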

4.1.5. Encrypting container persistent volumes on AWS with a KMS key

Defining a KMS key to encrypt container-persistent volumes on AWS is useful when you have explicit compliance and security guidelines when deploying to AWS.

Prerequisites

- Underlying infrastructure must contain storage.

- You must create a customer KMS key on AWS.

Procedure

- Create a storage class:

      $ cat << EOF | oc create -f -
      apiVersion: storage.k8s.io/v1
      kind: StorageClass
      metadata:
        name: <storage-class-name> # (1)
      parameters:
        fsType: ext4 # (2)
        encrypted: "true"
        kmsKeyId: keyvalue # (3)
      provisioner: ebs.csi.aws.com
      reclaimPolicy: Delete
      volumeBindingMode: WaitForFirstConsumer
      EOF

  (1) Specifies the name of the storage class.

  (2) File system that is created on provisioned volumes.

  (3) Specifies the full Amazon Resource Name (ARN) of the key to use when encrypting the container-persistent volume. If you do not provide any key, but the encrypted field is set to true, then the default KMS key is used. See Finding the key ID and key ARN on AWS in the AWS documentation.

- Create a persistent volume claim (PVC) with the storage class specifying the KMS key:

      $ cat << EOF | oc create -f -
      apiVersion: v1
      kind: PersistentVolumeClaim
      metadata:
        name: mypvc
      spec:
        accessModes:
          - ReadWriteOnce
        volumeMode: Filesystem
        storageClassName: <storage-class-name>
        resources:
          requests:
            storage: 1Gi
      EOF

- Create workload containers to consume the PVC:

      $ cat << EOF | oc create -f -
      apiVersion: v1
      kind: Pod
      metadata:
        name: mypod
      spec:
        containers:
          - name: httpd
            image: quay.io/centos7/httpd-24-centos7
            ports:
              - containerPort: 80
            volumeMounts:
              - mountPath: /mnt/storage
                name: data
        volumes:
          - name: data
            persistentVolumeClaim:
              claimName: mypvc
      EOF

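4.2. Persistent storage using Azure

OpenShift Container Platform supports Microsoft Azure Disk volumes. You can provision your OpenShift Container Platform cluster with persistent storage by using Azure. Some familiarity with Kubernetes and Azure is assumed. The Kubernetes persistent volume framework allows administrators to provision a cluster with persistent storage and gives users a way to request those resources without having any knowledge of the underlying infrastructure. Azure Disk volumes can be provisioned dynamically. Persistent volumes are not bound to a single project or namespace; they can be shared across the OpenShift Container Platform cluster. Persistent volume claims are specific to a project or namespace and can be requested by users.

OpenShift Container Platform defaults to using an in-tree (non-CSI) plugin to provision Azure Disk storage.

In future OpenShift Container Platform versions, volumes provisioned using existing in-tree plugins are planned for migration to their equivalent CSI driver. CSI automatic migration should be seamless. Migration does not change how you use existing API objects, such as persistent volumes, persistent volume claims, and storage classes. For more information about migration, see CSI automatic migration.

After full migration, in-tree plugins will eventually be removed in future versions of OpenShift Container Platform.

High availability of storage in the infrastructure is left to the underlying storage provider.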

4.2.1. Creating the Azure storage class

Storage classes are used to differentiate and delineate storage levels and usages. By defining a storage class, users can obtain dynamically provisioned persistent volumes.

Procedure

- In the OpenShift Container Platform console, click Storage → Storage Classes.

- In the storage class overview, click Create Storage Class.

- Define the desired options on the page that appears.

  - Enter a name to reference the storage class.

  - Enter an optional description.

  - Select the reclaim policy.

  - Select kubernetes.io/azure-disk from the drop-down list.

    - Enter the storage account type. This corresponds to your Azure storage account SKU tier. Valid options are Premium_LRS, Standard_LRS, StandardSSD_LRS, and UltraSSD_LRS.

    - Enter the kind of account. Valid options are shared, dedicated, and managed.

      Important: Red Hat only supports the use of kind: Managed in the storage class. With Shared and Dedicated, Azure creates unmanaged disks, while OpenShift Container Platform creates a managed disk for machine OS (root) disks. Because Azure Disk does not allow the use of both managed and unmanaged disks on a node, unmanaged disks created with Shared or Dedicated cannot be attached to OpenShift Container Platform nodes.

  - Enter additional parameters for the storage class as desired.

- Click Create to create the storage class.
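The options selected in the console map onto a StorageClass object. The following is a minimal sketch of an equivalent manifest for the in-tree Azure Disk provisioner; the class name and the choice of Premium_LRS, Delete, and WaitForFirstConsumer are assumptions made for this example.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: azure-managed-premium          # hypothetical name
provisioner: kubernetes.io/azure-disk  # in-tree Azure Disk provisioner
parameters:
  storageaccounttype: Premium_LRS      # Azure storage account SKU tier
  kind: managed                        # managed disks are the only supported kind
reclaimPolicy: Delete
volumeBindingMode: WaitForFirstConsumer
```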

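4.2.2. Creating the persistent volume claim

Prerequisites

Storage must exist in the underlying infrastructure before it can be mounted as a volume in OpenShift Container Platform.

Procedure

- In the OpenShift Container Platform console, click Storage → Persistent Volume Claims.

- In the persistent volume claims overview, click Create Persistent Volume Claim.

- Define the desired options on the page that appears.

  - Select the previously created storage class from the drop-down menu.

  - Enter a unique name for the storage claim.

  - Select the access mode. This selection determines the read and write access for the storage claim.

  - Define the size of the storage claim.

- Click Create to create the persistent volume claim and generate a persistent volume.

4.2.3. Volume format

Before OpenShift Container Platform mounts the volume and passes it to a container, it checks that the volume contains a file system as specified by the fsType parameter in the persistent volume definition. If the device is not formatted with the file system, all data from the device is erased and the device is automatically formatted with the given file system.

This allows you to use unformatted Azure volumes as persistent volumes, because OpenShift Container Platform formats them before the first use.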

4.2.4. Machine sets that deploy machines with ultra disks using PVCs

You can create a machine set running on Azure that deploys machines with ultra disks. Ultra disks are high-performance storage that is intended for use with the most demanding data workloads.

Both the in-tree plugin and the CSI driver support using PVCs to enable ultra disks. You can also deploy machines with ultra disks as data disks without creating a PVC.

4.2.4.1. Creating machines with ultra disks by using machine sets

You can deploy machines with ultra disks on Azure by editing your machine set YAML file.

Prerequisites

- Have an existing Microsoft Azure cluster.

Procedure

- Copy an existing Azure MachineSet custom resource (CR) and edit it by running the following command:

      $ oc edit machineset <machine-set-name>

  where <machine-set-name> is the machine set that you want to provision machines with ultra disks.

- Add the following lines in the positions indicated:

      apiVersion: machine.openshift.io/v1beta1
      kind: MachineSet
      spec:
        template:
          spec:
            metadata:
              labels:
                disk: ultrassd
            providerSpec:
              value:
                ultraSSDCapability: Enabled

- Create a machine set using the updated configuration by running the following command:

      $ oc create -f <machine-set-name>.yaml

- Create a storage class that contains the following YAML definition:

      apiVersion: storage.k8s.io/v1
      kind: StorageClass
      metadata:
        name: ultra-disk-sc
      parameters:
        cachingMode: None
        diskIopsReadWrite: "2000"
        diskMbpsReadWrite: "320"
        kind: managed
        skuname: UltraSSD_LRS
      provisioner: disk.csi.azure.com
      reclaimPolicy: Delete
      volumeBindingMode: WaitForFirstConsumer

- Create a persistent volume claim (PVC) that references the ultra-disk-sc storage class and contains the following YAML definition:

      apiVersion: v1
      kind: PersistentVolumeClaim
      metadata:
        name: ultra-disk
      spec:
        accessModes:
        - ReadWriteOnce
        storageClassName: ultra-disk-sc
        resources:
          requests:
            storage: 4Gi

- Create a pod that contains the following YAML definition:

      apiVersion: v1
      kind: Pod
      metadata:
        name: nginx-ultra
      spec:
        nodeSelector:
          disk: ultrassd
        containers:
        - name: nginx-ultra
          image: alpine:latest
          command:
            - "sleep"
            - "infinity"
          volumeMounts:
          - mountPath: "/mnt/azure"
            name: volume
        volumes:
          - name: volume
            persistentVolumeClaim:
              claimName: ultra-disk

Verification

- Validate that the machines are created by running the following command:

      $ oc get machines

  The machines should be in the Running state.

- For a machine that is running and has a node attached, validate the partition by running the following command:

      $ oc debug node/<node-name> -- chroot /host lsblk

  In this command, oc debug node/<node-name> starts a debugging shell on the node <node-name> and passes a command with --. The passed command chroot /host provides access to the underlying host OS binaries, and lsblk shows the block devices that are attached to the host OS machine.

Next steps

To use an ultra disk from within a pod, create a workload that uses the mount point. Create a YAML file similar to the following example:

    apiVersion: v1
    kind: Pod
    metadata:
      name: ssd-benchmark1
    spec:
      containers:
      - name: ssd-benchmark1
        image: nginx
        ports:
          - containerPort: 80
            name: "http-server"
        volumeMounts:
        - name: lun0p1
          mountPath: "/tmp"
      volumes:
        - name: lun0p1
          hostPath:
            path: /var/lib/lun0p1
            type: DirectoryOrCreate
      nodeSelector:
        disktype: ultrassd

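4.2.4.2. Troubleshooting resources for machine sets that enable ultra disks

Use the information in this section to understand and recover from issues you might encounter.

4.2.4.2.1. Unable to mount a persistent volume claim backed by an ultra disk

If there is an issue mounting a persistent volume claim backed by an ultra disk, the pod becomes stuck in the ContainerCreating state and an alert is triggered.

For example, if the additionalCapabilities.ultraSSDEnabled parameter is not set on the machine that backs the node that hosts the pod, the following error message appears:

    StorageAccountType UltraSSD_LRS can be used only when additionalCapabilities.ultraSSDEnabled is set.

To resolve this issue, describe the pod by running the following command:

    $ oc -n <stuck_pod_namespace> describe pod <stuck_pod_name>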

4.3. Azure File을 사용하는 영구 스토리지

OpenShift Container Platform은 Microsoft Azure File 볼륨을 지원합니다. Azure를 사용하여 영구 스토리지로 OpenShift Container Platform 클러스터를 프로비저닝할 수 있습니다. Kubernetes 및 Azure에 대해 어느 정도 익숙한 것으로 가정합니다.

Kubernetes 영구 볼륨 프레임워크를 사용하면 관리자는 영구 스토리지로 클러스터를 프로비저닝하고 사용자가 기본 인프라에 대한 지식이 없어도 해당 리소스를 요청할 수 있습니다. Azure File 볼륨을 동적으로 프로비저닝할 수 있습니다.
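As an illustration of dynamic provisioning, the following is a minimal sketch of a storage class for Azure File. It assumes the in-tree kubernetes.io/azure-file provisioner; the class name azure-file-sc matches the name used in the manual example later in this section, while the location and skuName values are placeholders chosen for illustration, not values taken from this document.

```yaml
# Sketch only: a storage class for dynamically provisioned Azure File shares.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: azure-file-sc            # name reused from the manual PV example
provisioner: kubernetes.io/azure-file
parameters:
  location: eastus               # assumed Azure region
  skuName: Standard_LRS          # assumed storage account SKU
reclaimPolicy: Delete
volumeBindingMode: Immediate
```

A PVC that sets storageClassName: azure-file-sc and omits volumeName would then request a dynamically created share instead of binding to a pre-created PV.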

영구 볼륨은 단일 프로젝트 또는 네임스페이스에 바인딩되지 않으며 OpenShift Container Platform 클러스터에서 공유할 수 있습니다. 영구 볼륨 클레임은 프로젝트 또는 네임스페이스에 고유하며 사용자가 애플리케이션에서 사용하도록 요청할 수 있습니다.

인프라의 스토리지의 고가용성은 기본 스토리지 공급자가 담당합니다.

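Azure File volumes use the Server Message Block (SMB) protocol.

In future OpenShift Container Platform versions, volumes provisioned using existing in-tree plugins are planned for migration to their equivalent CSI driver. CSI automatic migration should be seamless. Migration does not change how you use existing API objects, such as persistent volumes, persistent volume claims, and storage classes. For more information about migration, see CSI automatic migration.

After full migration, in-tree plugins will be removed in a future version of OpenShift Container Platform.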


4.3.1. Azure File 공유 영구 볼륨 클레임 생성

영구 볼륨 클레임을 생성하려면 먼저 Azure 계정 및 키가 포함된 Secret 오브젝트를 정의해야 합니다. 이 시크릿은 PersistentVolume 정의에 사용되며 애플리케이션에서 사용하기 위해 영구 볼륨 클레임에 의해 참조됩니다.

사전 요구 사항

- Azure File 공유가 있습니다.

- 이 공유에 액세스할 수 있는 인증 정보(특히 스토리지 계정 및 키)를 사용할 수 있습니다.

절차

Create a Secret object that contains the Azure File credentials:

$ oc create secret generic <secret-name> --from-literal=azurestorageaccountname=<storage-account> \
    --from-literal=azurestorageaccountkey=<storage-account-key>

Create a PersistentVolume object that references the Secret object you created:

apiVersion: "v1"
kind: "PersistentVolume"
metadata:
  name: "pv0001"
spec:
  capacity:
    storage: "5Gi"
  accessModes:
    - "ReadWriteOnce"
  storageClassName: azure-file-sc
  azureFile:
    secretName: <secret-name>
    shareName: share-1
    readOnly: false

Create a PersistentVolumeClaim object that maps to the persistent volume you created:

apiVersion: "v1"
kind: "PersistentVolumeClaim"
metadata:
  name: "claim1"
spec:
  accessModes:
    - "ReadWriteOnce"
  resources:
    requests:
      storage: "5Gi"
  storageClassName: azure-file-sc
  volumeName: "pv0001"

4.3.2. Pod에서 Azure 파일 공유 마운트

영구 볼륨 클레임을 생성한 후 애플리케이션에 의해 내부에서 사용될 수 있습니다. 다음 예시는 Pod 내부에서 이 공유를 마운트하는 방법을 보여줍니다.

사전 요구 사항

- 기본 Azure File 공유에 매핑된 영구 볼륨 클레임이 있습니다.

절차

Create a pod that mounts the existing persistent volume claim:

apiVersion: v1
kind: Pod
metadata:
  name: pod-name
spec:
  containers:
    ...
    volumeMounts:
    - mountPath: "/data"
      name: azure-file-share
  volumes:
    - name: azure-file-share
      persistentVolumeClaim:
        claimName: claim1

4.4. Cinder를 사용하는 영구 스토리지

OpenShift Container Platform은 OpenStack Cinder를 지원합니다. Kubernetes 및 OpenStack에 대해 어느 정도 익숙한 것으로 가정합니다.

Cinder 볼륨은 동적으로 프로비저닝할 수 있습니다. 영구 볼륨은 단일 프로젝트 또는 네임스페이스에 바인딩되지 않으며, OpenShift Container Platform 클러스터에서 공유할 수 있습니다. 영구 볼륨 클레임은 프로젝트 또는 네임스페이스에 고유하며 사용자가 요청할 수 있습니다.

OpenShift Container Platform은 기본적으로 in-tree (비 CSI) 플러그인을 사용하여 Cinder 스토리지를 프로비저닝합니다.
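To make the dynamic provisioning path concrete, the following is a minimal sketch of a storage class that uses the in-tree Cinder provisioner. The class name cinder-standard is hypothetical, and the type and availability values are assumptions that depend on the volume types and availability zones defined in your RHOSP environment.

```yaml
# Sketch only: a storage class for dynamically provisioned Cinder volumes.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: cinder-standard          # hypothetical name
provisioner: kubernetes.io/cinder
parameters:
  type: fast                     # assumed Cinder volume type
  availability: nova             # assumed availability zone
  fsType: ext4
```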

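In future OpenShift Container Platform versions, volumes provisioned using existing in-tree plugins are planned for migration to their equivalent CSI driver. CSI automatic migration should be seamless. Migration does not change how you use existing API objects, such as persistent volumes, persistent volume claims, and storage classes. For more information about migration, see CSI automatic migration.

After full migration, in-tree plugins will be removed in a future version of OpenShift Container Platform.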

4.4.1. Cinder를 사용한 수동 프로비저닝

OpenShift Container Platform에서 볼륨으로 마운트하기 전에 기본 인프라에 스토리지가 있어야 합니다.

사전 요구 사항

- RHOSP(Red Hat OpenStack Platform)용으로 구성된 OpenShift Container Platform

- Cinder 볼륨 ID

4.4.1.1. 영구 볼륨 생성

OpenShift Container Platform에서 생성하기 전에 오브젝트 정의에서 PV(영구 볼륨)를 정의해야 합니다.

절차

Save the object definition to a file.

cinder-persistentvolume.yaml

apiVersion: "v1"
kind: "PersistentVolume"
metadata:
  name: "pv0001"
spec:
  capacity:
    storage: "5Gi"
  accessModes:
    - "ReadWriteOnce"
  cinder:
    fsType: "ext3"
    volumeID: "f37a03aa-6212-4c62-a805-9ce139fab180"

Important: Do not change the fsType parameter value after the volume is formatted and provisioned. Changing this value can result in data loss and pod failure.

Create the persistent volume from the object definition file that you saved in the previous step:

$ oc create -f cinder-persistentvolume.yaml
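A claim is still needed before a pod can use the manually created volume. The following is a minimal sketch of a PVC that binds to the pv0001 volume defined above; the claim name cinder-claim is hypothetical, and the empty storageClassName is an assumption intended to keep a default storage class from provisioning a new volume instead.

```yaml
# Sketch only: a claim that binds to the statically created Cinder PV.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: cinder-claim             # hypothetical name
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi               # matches the capacity of pv0001
  volumeName: pv0001             # bind to this specific PV
  storageClassName: ""           # skip dynamic provisioning
```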

4.4.1.2. 영구 볼륨 포맷

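You can use unformatted Cinder volumes as PVs because OpenShift Container Platform formats them before the first use.

Before OpenShift Container Platform mounts the volume and passes it to a container, the system checks that the volume contains a file system as specified by the fsType parameter in the PV definition. If the device is not formatted with the file system, all data from the device is erased and the device is automatically formatted with the given file system.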

4.4.1.3. Cinder 볼륨 보안

애플리케이션에서 Cinder PV를 사용하는 경우 배포 구성에 대한 보안을 구성합니다.

사전 요구 사항

- An SCC must be created that uses the appropriate fsGroup strategy.

Procedure

Create a service account and add it to the SCC:

$ oc create serviceaccount <service_account>
$ oc adm policy add-scc-to-user <new_scc> -z <service_account> -n <project>

In your application's deployment configuration, provide the service account name and securityContext:

apiVersion: v1
kind: ReplicationController
metadata:
  name: frontend-1
spec:
  replicas: 1
  selector:
    name: frontend
  template:
    metadata:
      labels:
        name: frontend
    spec:
      containers:
      - image: openshift/hello-openshift
        name: helloworld
        ports:
        - containerPort: 8080
          protocol: TCP
      restartPolicy: Always
      serviceAccountName: <service_account>
      securityContext:
        fsGroup: 7777

4.5. 파이버 채널을 사용하는 영구 스토리지

OpenShift Container Platform supports Fibre Channel, allowing you to provision your OpenShift Container Platform cluster with persistent storage using Fibre Channel volumes. Some familiarity with Kubernetes and Fibre Channel is assumed.

파이버 채널을 사용하는 영구 스토리지는 ARM 아키텍처 기반 인프라에서 지원되지 않습니다.

Kubernetes 영구 볼륨 프레임워크를 사용하면 관리자는 영구 스토리지로 클러스터를 프로비저닝하고 사용자가 기본 인프라에 대한 지식이 없어도 해당 리소스를 요청할 수 있습니다. 영구 볼륨은 단일 프로젝트 또는 네임스페이스에 바인딩되지 않으며, OpenShift Container Platform 클러스터에서 공유할 수 있습니다. 영구 볼륨 클레임은 프로젝트 또는 네임스페이스에 고유하며 사용자가 요청할 수 있습니다.

인프라의 스토리지의 고가용성은 기본 스토리지 공급자가 담당합니다.

4.5.1. 프로비저닝

PersistentVolume API를 사용하여 파이버 채널 볼륨을 프로비저닝하려면 다음을 사용할 수 있어야 합니다.

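- The targetWWNs (an array of Fibre Channel target World Wide Names).
- A valid LUN number.
- The file system type.

A persistent volume and a LUN have a one-to-one mapping between them.

Prerequisites

- Fibre Channel LUNs must exist in the underlying infrastructure.

PersistentVolume object definition

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv0001
spec:
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteOnce
  fc:
    wwids: [scsi-3600508b400105e210000900000490000]  # (1)
    targetWWNs: ['500a0981891b8dc5', '500a0981991b8dc5']  # (2)
    lun: 2  # (3)
    fsType: ext4

(1) World wide identifiers (WWIDs). Either FC wwids or a combination of FC targetWWNs and lun must be set, but not both simultaneously. The FC WWID identifier is recommended over the WWNs target because it is guaranteed to be unique for every storage device, and independent of the path that is used to access the device. The WWID identifier can be obtained by issuing a SCSI Inquiry to retrieve the Device Identification Vital Product Data (page 0x83) or Unit Serial Number (page 0x80). FC WWIDs are identified as /dev/disk/by-id/ to reference the data on the disk, even if the path to the device changes and even when accessing the device from different systems.

(2) (3) Fibre Channel WWNs are identified as /dev/disk/by-path/pci-<IDENTIFIER>-fc-0x<WWN>-lun-<LUN#>, but you do not need to provide any part of the path leading up to the WWN, including the 0x, and anything after, including the - (hyphen).

Important: Changing the value of the fsType parameter after the volume has been formatted and provisioned can result in data loss and pod failure.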

4.5.1.1. 디스크 할당량 강제 적용

LUN 파티션을 사용하여 디스크 할당량 및 크기 제약 조건을 강제 적용합니다. 각 LUN은 단일 영구 볼륨에 매핑되며 고유한 이름을 영구 볼륨에 사용해야 합니다.

이렇게 하면 최종 사용자가 10Gi와 같은 특정 용량에 의해 영구 스토리지를 요청하고 해당 볼륨과 동등한 용량과 일치시킬 수 있습니다.
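The capacity-based matching described above can be sketched as a claim that asks for 10Gi. This is an illustrative example, not part of the original procedure: the claim name fc-claim is hypothetical, and the empty storageClassName is an assumption so that the claim matches a manually created PV rather than triggering a default storage class.

```yaml
# Sketch only: request 10Gi; the claim binds to a PV of equal or greater capacity.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: fc-claim                 # hypothetical name
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi
  storageClassName: ""           # match statically provisioned PVs only
```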

4.5.1.2. 파이버 채널 볼륨 보안

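Users request storage with a persistent volume claim. This claim only lives in the user's namespace, and can only be referenced by a pod within that same namespace. Any attempt to access a persistent volume across a namespace causes the pod to fail.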

각 파이버 채널 LUN은 클러스터의 모든 노드에서 액세스할 수 있어야 합니다.

4.6. FlexVolume을 사용하는 영구 스토리지

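FlexVolume is a deprecated feature. Deprecated functionality is still included in OpenShift Container Platform and continues to be supported. However, it will be removed in a future release and is not recommended for new deployments.

An out-of-tree Container Storage Interface (CSI) driver is the recommended way to write volume drivers in OpenShift Container Platform. Maintainers of FlexVolume drivers should implement a CSI driver and move users of FlexVolume to CSI. Users of FlexVolume should move their workloads to a CSI driver.

For the most recent list of major functionality that has been deprecated or removed within OpenShift Container Platform, refer to the Deprecated and removed features section of the OpenShift Container Platform release notes.

OpenShift Container Platform supports FlexVolume, an out-of-tree plugin that uses an executable model to interface with drivers.

To use storage from a back end that does not have a built-in plugin, you can extend OpenShift Container Platform through FlexVolume drivers and provide persistent storage to applications.

Pods interact with FlexVolume drivers through the flexvolume in-tree plugin.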

4.6.1. FlexVolume 드라이버 정보

FlexVolume 드라이버는 클러스터의 모든 노드의 올바르게 정의된 디렉터리에 위치하는 실행 파일입니다. flexVolume을 갖는 PersistentVolume 오브젝트가 소스로 표시되는 볼륨을 마운트하거나 마운트 해제해야 할 때마다 OpenShift Container Platform이 FlexVolume 드라이버를 호출합니다.

FlexVolume용 OpenShift Container Platform에서는 연결 및 분리 작업이 지원되지 않습니다.

4.6.2. FlexVolume 드라이버 예

FlexVolume 드라이버의 첫 번째 명령줄 인수는 항상 작업 이름입니다. 다른 매개 변수는 각 작업에 따라 다릅니다. 대부분의 작업에서는 JSON(JavaScript Object Notation) 문자열을 매개변수로 사용합니다. 이 매개변수는 전체 JSON 문자열이며 JSON 데이터가 있는 파일 이름은 아닙니다.

The JSON parameter passed to the FlexVolume driver contains:

- All flexVolume.options.
- Some options from flexVolume prefixed by kubernetes.io/, such as fsType and readwrite.
- The content of the referenced secret, if specified, prefixed by kubernetes.io/secret/.

FlexVolume driver JSON input example

{
	"fooServer": "192.168.0.1:1234",
	"fooVolumeName": "bar",
	"kubernetes.io/fsType": "ext4",
	"kubernetes.io/readwrite": "ro",
	"kubernetes.io/secret/<key name>": "<key value>",
	"kubernetes.io/secret/<another key name>": "<another key value>"
}

OpenShift Container Platform expects JSON data on standard output of the driver. Unless otherwise specified, the output describes the result of the operation.

FlexVolume 드라이버 기본 출력 예

{

"status": "<Success/Failure/Not supported>",

"message": "<Reason for success/failure>"

}

드라이버의 종료 코드는 성공의 경우 0이고 오류의 경우 1이어야 합니다.

작업은 idempotent여야 합니다. 즉, 이미 마운트된 볼륨의 마운트의 경우 작업이 성공적으로 수행되어야 합니다.

4.6.3. FlexVolume 드라이버 설치

OpenShift Container Platform 확장에 사용되는 FlexVolume 드라이버는 노드에서만 실행됩니다. FlexVolumes를 구현하려면 호출할 작업 목록 및 설치 경로만 있으면 됩니다.

사전 요구 사항

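The FlexVolume driver must implement the following operations:

init
Initializes the driver. It is called during initialization of all nodes.

- Arguments: none
- Executed on: node
- Expected output: default JSON

mount
Mounts a volume to a directory. This can include anything that is necessary to mount the volume, including finding the device and then mounting the device.

- Arguments: <mount-dir> <json>
- Executed on: node
- Expected output: default JSON

unmount
Unmounts a volume from a directory. This can include anything that is necessary to clean up the volume after unmounting.

- Arguments: <mount-dir>
- Executed on: node
- Expected output: default JSON

mountdevice
Mounts a volume's device to a directory where individual pods can then bind mount.

This call-out does not pass "secrets" specified in the FlexVolume spec. If your driver requires secrets, do not implement this call-out.

- Arguments: <mount-dir> <json>
- Executed on: node
- Expected output: default JSON

unmountdevice
Unmounts a volume's device from a directory.

- Arguments: <mount-dir>
- Executed on: node
- Expected output: default JSON

All other operations should return JSON with {"status": "Not supported"} and an exit code of 1.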

절차

FlexVolume 드라이버를 설치하려면 다음을 수행합니다.

- Ensure that the executable file exists on all nodes in the cluster.

- Place the executable file at the volume plugin path: /etc/kubernetes/kubelet-plugins/volume/exec/<vendor>~<driver>/<driver>.

For example, to install the FlexVolume driver for the storage foo, place the executable file at /etc/kubernetes/kubelet-plugins/volume/exec/openshift.com~foo/foo.

4.6.4. FlexVolume 드라이버를 사용한 스토리지 사용

OpenShift Container Platform의 각 PersistentVolume 오브젝트는 볼륨과 같이 스토리지 백엔드에서 1개의 스토리지 자산을 나타냅니다.

절차

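- Use the PersistentVolume object to reference the installed storage.

Persistent volume object definition using FlexVolume drivers example

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv0001  # (1)
spec:
  capacity:
    storage: 1Gi  # (2)
  accessModes:
    - ReadWriteOnce
  flexVolume:
    driver: openshift.com/foo  # (3)
    fsType: "ext4"  # (4)
    secretRef:  # (5)
      name: foo-secret
    readOnly: true  # (6)
    options:  # (7)
      fooServer: 192.168.0.1:1234
      fooVolumeName: bar

(1) The name of the volume. This is how it is identified through persistent volume claims or from pods. This name can be different from the name of the volume on back-end storage.
(2) The amount of storage allocated to this volume.
(3) The name of the driver. This field is mandatory.
(4) The file system that is present on the volume. This field is optional.
(5) The reference to a secret. Keys and values from this secret are provided to the FlexVolume driver on invocation. This field is optional.
(6) The read-only flag. This field is optional.
(7) The additional options for the FlexVolume driver.

In addition to the flags specified by the user in the options field, the following flags are also passed to the executable:

"fsType":"<FS type>",
"readwrite":"<rw>",
"secret/key1":"<secret1>"
...
"secret/keyN":"<secretN>"

Secrets are passed only to mount or unmount call-outs.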

4.7. GCE 영구 디스크를 사용하는 스토리지

OpenShift Container Platform은 gcePD(GCE 영구 디스크 볼륨)를 지원합니다. GCE를 사용하여 영구 스토리지로 OpenShift Container Platform 클러스터를 프로비저닝할 수 있습니다. Kubernetes 및 GCE에 대해 어느 정도 익숙한 것으로 가정합니다.

Kubernetes 영구 볼륨 프레임워크를 사용하면 관리자는 영구 스토리지로 클러스터를 프로비저닝하고 사용자가 기본 인프라에 대한 지식이 없어도 해당 리소스를 요청할 수 있습니다.

GCE 영구 디스크 볼륨은 동적으로 프로비저닝할 수 있습니다.

영구 볼륨은 단일 프로젝트 또는 네임스페이스에 바인딩되지 않으며, OpenShift Container Platform 클러스터에서 공유할 수 있습니다. 영구 볼륨 클레임은 프로젝트 또는 네임스페이스에 고유하며 사용자가 요청할 수 있습니다.

OpenShift Container Platform은 기본적으로 in-tree (비 CSI) 플러그인을 사용하여 gcePD 스토리지를 프로비저닝합니다.

향후 OpenShift Container Platform 버전에서는 기존 in-tree 플러그인을 사용하여 프로비저닝된 볼륨이 동등한 CSI 드라이버로 마이그레이션될 예정입니다. CSI 자동 마이그레이션이 원활해야 합니다. 마이그레이션은 영구 볼륨, 영구 볼륨 클레임 및 스토리지 클래스와 같은 기존 API 오브젝트를 사용하는 방법을 변경하지 않습니다. 마이그레이션에 대한 자세한 내용은 CSI 자동 마이그레이션 을 참조하십시오.

전체 마이그레이션 후 in-tree 플러그인은 향후 OpenShift Container Platform 버전에서 제거됩니다.

인프라의 스토리지의 고가용성은 기본 스토리지 공급자가 담당합니다.

4.7.1. GCE 스토리지 클래스 생성

스토리지 클래스는 스토리지 수준 및 사용량을 구분하고 조정하는 데 사용됩니다. 스토리지 클래스를 정의하면 사용자는 동적으로 프로비저닝된 영구 볼륨을 얻을 수 있습니다.
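This section does not include a definition, so the following is a minimal sketch of what a GCE persistent disk storage class could look like. It assumes the in-tree kubernetes.io/gce-pd provisioner mentioned earlier in this chapter; the class name gce-standard and the parameter values are illustrative assumptions.

```yaml
# Sketch only: a storage class for dynamically provisioned GCE persistent disks.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: gce-standard             # hypothetical name
provisioner: kubernetes.io/gce-pd
parameters:
  type: pd-standard              # assumed disk type; pd-ssd is another option
  replication-type: none
reclaimPolicy: Delete
volumeBindingMode: WaitForFirstConsumer
allowVolumeExpansion: true
```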

4.7.2. 영구 볼륨 클레임 생성

사전 요구 사항

OpenShift Container Platform에서 볼륨으로 마운트하기 전에 기본 인프라에 스토리지가 있어야 합니다.

절차

- OpenShift Container Platform 콘솔에서 스토리지 → 영구 볼륨 클레임을 클릭합니다.

- 영구 볼륨 클레임 생성 개요에서 영구 볼륨 클레임 생성을 클릭합니다.

표시되는 페이지에 원하는 옵션을 정의합니다.

- 드롭다운 메뉴에서 이전에 생성한 스토리지 클래스를 선택합니다.

- 스토리지 클레임의 고유한 이름을 입력합니다.

- 액세스 모드를 선택합니다. 이 선택에서는 스토리지 클레임에 대한 읽기 및 쓰기 액세스 권한을 결정합니다.

- 스토리지 클레임의 크기를 정의합니다.

- 만들기를 클릭하여 영구 볼륨 클레임을 생성하고 영구 볼륨을 생성합니다.
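The console steps above correspond roughly to creating a PVC manifest. The following sketch shows an equivalent claim under stated assumptions: the claim name gce-claim and the storage class name gce-standard are hypothetical and should be replaced with the names that exist in your cluster.

```yaml
# Sketch only: a claim equivalent to the choices made in the web console form.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: gce-claim                # unique name for the storage claim
spec:
  storageClassName: gce-standard # previously created storage class (assumed name)
  accessModes:
    - ReadWriteOnce              # selected access mode
  resources:
    requests:
      storage: 10Gi              # size of the storage claim
```

Saving this to a file and running oc create -f <file>.yaml would create the claim from the CLI instead of the console.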

4.7.3. 볼륨 형식

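Before OpenShift Container Platform mounts the volume and passes it to a container, it checks that the volume contains a file system as specified by the fsType parameter in the persistent volume definition. If the device is not formatted with the file system, all data from the device is erased and the device is automatically formatted with the given file system.

This verification enables you to use unformatted GCE volumes as persistent volumes, because OpenShift Container Platform formats them before the first use.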

4.8. iSCSI를 사용하는 영구 스토리지

iSCSI를 사용하여 영구 스토리지로 OpenShift Container Platform 클러스터를 프로비저닝할 수 있습니다. Kubernetes 및 iSCSI에 대해 어느 정도 익숙한 것으로 가정합니다.

Kubernetes 영구 볼륨 프레임워크를 사용하면 관리자는 영구 스토리지로 클러스터를 프로비저닝하고 사용자가 기본 인프라에 대한 지식이 없어도 해당 리소스를 요청할 수 있습니다.

인프라의 스토리지의 고가용성은 기본 스토리지 공급자가 담당합니다.

Amazon Web Services에서 iSCSI를 사용하는 경우 iSCSI 포트의 노드 간 TCP 트래픽을 포함하도록 기본 보안 정책을 업데이트해야 합니다. 기본적으로 해당 포트는 860 및 3260입니다.

사용자는 iscsi-initiator-utils 패키지를 설치하고 이니시에이터 이름을 /etc/iscsi/initiatorname.iscsi 에 구성하여 iSCSI 이니시에이터가 모든 OpenShift Container Platform 노드에 이미 구성되었는지 확인해야 합니다. iscsi-initiator-utils 패키지는 RHCOS(Red Hat Enterprise Linux CoreOS)를 사용하는 배포에 이미 설치되어 있습니다.

자세한 내용은 스토리지 장치 관리를 참조하십시오.

4.8.1. 프로비저닝

OpenShift Container Platform에서 볼륨으로 마운트하기 전에 기본 인프라에 스토리지가 있는지 확인합니다. iSCSI에는 iSCSI 대상 포털, 유효한 IQN(iSCSI Qualified Name), 유효한 LUN 번호, 파일 시스템 유형 및 PersistentVolume API만 있으면 됩니다.

PersistentVolume object definition

apiVersion: v1
kind: PersistentVolume
metadata:
  name: iscsi-pv
spec:
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteOnce
  iscsi:
     targetPortal: 10.16.154.81:3260
     iqn: iqn.2014-12.example.server:storage.target00
     lun: 0
     fsType: 'ext4'

4.8.2. Enforcing disk quotas

LUN 파티션을 사용하여 디스크 할당량 및 크기 제약 조건을 강제 적용합니다. 각 LUN은 1개의 영구 볼륨입니다. Kubernetes는 영구 볼륨에 고유 이름을 강제 적용합니다.

이렇게 하면 최종 사용자가 특정 용량(예: 10Gi)에 따라 영구 스토리지를 요청하고 해당 볼륨과 동등한 용량과 일치시킬 수 있습니다.

4.8.3. iSCSI 볼륨 보안

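Users request storage with a PersistentVolumeClaim object. This claim only lives in the user's namespace and can only be referenced by a pod within that same namespace. Any attempt to access a persistent volume claim across a namespace causes the pod to fail.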

각 iSCSI LUN은 클러스터의 모든 노드에서 액세스할 수 있어야 합니다.

4.8.3.1. CHAP(Challenge Handshake Authentication Protocol) 구성

선택적으로 OpenShift Container Platform은 CHAP을 사용하여 iSCSI 대상에 자신을 인증할 수 있습니다.

apiVersion: v1
kind: PersistentVolume
metadata:
  name: iscsi-pv
spec:
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteOnce
  iscsi:
    targetPortal: 10.0.0.1:3260
    iqn: iqn.2016-04.test.com:storage.target00
    lun: 0
    fsType: ext4
    chapAuthDiscovery: true
    chapAuthSession: true
    secretRef:
      name: chap-secret

4.8.4. iSCSI multipathing

iSCSI 기반 스토리지의 경우 두 개 이상의 대상 포털 IP 주소에 동일한 IQN을 사용하여 여러 경로를 구성할 수 있습니다. 경로의 구성 요소 중 하나 이상에 실패하면 다중 경로를 통해 영구 볼륨에 액세스할 수 있습니다.

To specify multiple paths in the pod specification, use the portals field. For example:

apiVersion: v1
kind: PersistentVolume
metadata:
  name: iscsi-pv
spec:
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteOnce
  iscsi:
    targetPortal: 10.0.0.1:3260
    portals: ['10.0.2.16:3260', '10.0.2.17:3260', '10.0.2.18:3260']  # (1)
    iqn: iqn.2016-04.test.com:storage.target00
    lun: 0
    fsType: ext4
    readOnly: false

(1) Add additional target portals using the portals field.

4.8.5. iSCSI 사용자 정의 이니시에이터 IQN

Configure a custom initiator iSCSI Qualified Name (IQN) if the iSCSI targets are restricted to certain IQNs and the nodes that the iSCSI PVs are attached to are not guaranteed to have these IQNs.

To specify a custom initiator IQN, use the initiatorName field.

apiVersion: v1
kind: PersistentVolume
metadata:
  name: iscsi-pv
spec:
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteOnce
  iscsi:
    targetPortal: 10.0.0.1:3260
    portals: ['10.0.2.16:3260', '10.0.2.17:3260', '10.0.2.18:3260']
    iqn: iqn.2016-04.test.com:storage.target00
    lun: 0
    initiatorName: iqn.2016-04.test.com:custom.iqn  # (1)
    fsType: ext4
    readOnly: false

(1) Specify the name of the initiator.

4.9. Persistent storage using NFS

OpenShift Container Platform 클러스터는 NFS를 사용하는 영구 스토리지와 함께 프로비저닝될 수 있습니다. PV(영구 볼륨) 및 PVC(영구 볼륨 클레임)는 프로젝트 전체에서 볼륨을 공유하는 편리한 방법을 제공합니다. PV 정의에 포함된 NFS 관련 정보는 Pod 정의에서 직접 정의될 수 있지만, 이렇게 하면 볼륨이 별도의 클러스터 리소스로 생성되지 않아 볼륨에서 충돌이 발생할 수 있습니다.

4.9.1. 프로비저닝

OpenShift Container Platform에서 볼륨으로 마운트하기 전에 기본 인프라에 스토리지가 있어야 합니다. NFS 볼륨을 프로비저닝하려면, NFS 서버 목록 및 내보내기 경로만 있으면 됩니다.

절차

Create an object definition for the PV:

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv0001  # (1)
spec:
  capacity:
    storage: 5Gi  # (2)
  accessModes:
  - ReadWriteOnce  # (3)
  nfs:  # (4)
    path: /tmp  # (5)
    server: 172.17.0.2  # (6)
  persistentVolumeReclaimPolicy: Retain  # (7)

(1) The name of the volume. This is the PV identity in various oc <command> pod commands.
(2) The amount of storage allocated to this volume.
(3) Though this appears to be related to controlling access to the volume, it is actually used similarly to labels and used to match a PVC to a PV. Currently, no access rules are enforced based on the accessModes.
(4) The volume type being used, in this case the nfs plugin.
(5) The path that is exported by the NFS server.
(6) The hostname or IP address of the NFS server.
(7) The reclaim policy for the PV. This defines what happens to a volume when released.

Note: Each NFS volume must be mountable by all schedulable nodes in the cluster.

Verify that the PV was created:

$ oc get pv

Example output

NAME     LABELS    CAPACITY     ACCESSMODES   STATUS      CLAIM  REASON    AGE
pv0001   <none>    5Gi          RWO           Available                    31s

Create a persistent volume claim that binds to the new PV:

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs-claim1
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi
  volumeName: pv0001
  storageClassName: ""

Verify that the persistent volume claim was created:

$ oc get pvc

Example output

NAME         STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
nfs-claim1   Bound    pv0001   5Gi        RWO                           2m
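Once the claim is bound, a pod can mount it. The following is a minimal sketch of such a pod; it is not part of the original procedure. The pod name, container name, image, and mount path are assumptions chosen for illustration, while claimName refers to the nfs-claim1 claim created above.

```yaml
# Sketch only: a pod that mounts the NFS-backed claim at /data.
apiVersion: v1
kind: Pod
metadata:
  name: nfs-client-example       # hypothetical name
spec:
  containers:
  - name: app                    # hypothetical container name
    image: registry.access.redhat.com/ubi9/ubi-minimal   # assumed image
    command: ["sleep", "infinity"]
    volumeMounts:
    - name: nfs-data
      mountPath: /data           # assumed mount path inside the container
  volumes:
  - name: nfs-data
    persistentVolumeClaim:
      claimName: nfs-claim1      # the claim created in the previous step
```

Whether the container can actually read and write under /data depends on the permission and SELinux considerations covered in the NFS volume security section.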

4.9.2. 디스크 할당량 강제 적용

디스크 파티션을 사용하여 디스크 할당량 및 크기 제약 조건을 적용할 수 있습니다. 각 파티션은 자체 내보내기일 수 있습니다. 각 내보내기는 1개의 PV입니다. OpenShift Container Platform은 PV에 고유한 이름을 적용하지만 NFS 볼륨 서버와 경로의 고유성은 관리자에 따라 다릅니다.

이렇게 하면 개발자가 10Gi와 같은 특정 용량에 의해 영구 스토리지를 요청하고 해당 볼륨과 동등한 용량과 일치시킬 수 있습니다.

4.9.3. NFS 볼륨 보안

이 섹션에서는 일치하는 권한 및 SELinux 고려 사항을 포함하여 NFS 볼륨 보안에 대해 설명합니다. 사용자는 POSIX 권한, 프로세스 UID, 추가 그룹 및 SELinux의 기본 사항을 이해하고 있어야 합니다.

개발자는 Pod 정의의 volumes 섹션에서 직접 PVC 또는 NFS 볼륨 플러그인을 참조하여 NFS 스토리지를 요청합니다.

NFS 서버의 /etc/exports 파일에 액세스할 수 있는 NFS 디렉터리가 있습니다. 대상 NFS 디렉터리에는 POSIX 소유자 및 그룹 ID가 있습니다. OpenShift Container Platform NFS 플러그인은 내보낸 NFS 디렉터리에 있는 동일한 POSIX 소유권 및 권한을 사용하여 컨테이너의 NFS 디렉터리를 마운트합니다. 그러나 컨테이너는 원하는 동작인 NFS 마운트의 소유자와 동일한 유효 UID로 실행되지 않습니다.

As an example, if the target NFS directory appears on the NFS server as:

$ ls -lZ /opt/nfs -d

Example output

drwxrws---. nfsnobody 5555 unconfined_u:object_r:usr_t:s0   /opt/nfs

$ id nfsnobody

Example output

uid=65534(nfsnobody) gid=65534(nfsnobody) groups=65534(nfsnobody)

Then the container must match SELinux labels, and either run with a UID of 65534, the nfsnobody owner, or with 5555 in its supplemental groups to access the directory.

The owner ID of 65534 is used as an example. Even though NFS's root_squash maps root, UID 0, to nfsnobody, UID 65534, NFS exports can have arbitrary owner IDs. Owner 65534 is not required for NFS exports.

4.9.3.1. 그룹 ID

NFS 내보내기 권한 변경 옵션이 아닐 경우 NFS 액세스를 처리하는 권장 방법은 추가 그룹을 사용하는 것입니다. OpenShift Container Platform의 추가 그룹은 공유 스토리지에 사용되며 NFS가 그 예입니다. 반면 iSCSI와 같은 블록 스토리지는 Pod의 securityContext에 있는 fsGroup SCC 전략과 fsGroup 값을 사용합니다.

To gain access to persistent storage, it is generally preferable to use supplemental group IDs rather than user IDs.

Because the group ID on the example target NFS directory is 5555, the pod can define that group ID using supplementalGroups under the securityContext definition of the pod. For example:

spec:
  containers:
    - name:
    ...
  securityContext:
    supplementalGroups: [5555]

Pod 요구사항을 충족할 수 있는 사용자 지정 SCC가 없는 경우 Pod는 restricted SCC와 일치할 수 있습니다. 이 SCC에는 supplementalGroups 전략이 RunAsAny로 설정되어 있으므로, 범위를 확인하지 않고 제공되는 그룹 ID가 승인됩니다.

그 결과 위의 Pod에서 승인이 전달 및 실행됩니다. 그러나 그룹 ID 범위 확인이 필요한 경우에는 사용자 지정 SCC를 사용하는 것이 좋습니다. 사용자 지정 SCC를 생성하면 최소 및 최대 그룹 ID가 정의되고, 그룹 ID 범위 확인이 적용되며, 5555 그룹 ID가 허용될 수 있습니다.

사용자 정의 SCC를 사용하려면 먼저 적절한 서비스 계정에 추가해야 합니다. 예를 들어, Pod 사양에 다른 값이 지정된 경우를 제외하고 지정된 프로젝트에서 기본 서비스 계정을 사용하십시오.
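As a rough illustration of the custom SCC described above, the following sketch enforces a supplemental group range that includes 5555. The SCC name nfs-group-scc and the 5000 to 6000 range are assumptions chosen for this example, and the remaining fields are conservative defaults rather than values from this document; review them against your own security requirements.

```yaml
# Sketch only: a custom SCC that range-checks supplemental group IDs.
apiVersion: security.openshift.io/v1
kind: SecurityContextConstraints
metadata:
  name: nfs-group-scc            # hypothetical name
allowHostDirVolumePlugin: false
allowHostIPC: false
allowHostNetwork: false
allowHostPID: false
allowHostPorts: false
allowPrivilegedContainer: false
readOnlyRootFilesystem: false
runAsUser:
  type: MustRunAsRange           # UID range is taken from the project
seLinuxContext:
  type: MustRunAs
fsGroup:
  type: MustRunAs
supplementalGroups:
  type: MustRunAs
  ranges:
  - min: 5000                    # assumed range that contains group ID 5555
    max: 6000
volumes:
- configMap
- emptyDir
- persistentVolumeClaim
- secret
```

The SCC can then be granted to a service account with the oc adm policy add-scc-to-user <scc_name> -z <service_account> -n <project> form shown in the Cinder volume security procedure.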

4.9.3.2. 사용자 ID

사용자 ID는 컨테이너 이미지 또는 Pod 정의에 정의할 수 있습니다.

일반적으로 사용자 ID를 사용하는 대신 추가 그룹 ID를 사용하여 영구 스토리지에 대한 액세스 권한을 얻는 것이 좋습니다.

In the example target NFS directory shown above, the container needs its UID set to 65534, ignoring group IDs for the moment, so the following can be added to the pod definition:

spec:
  containers:
  - name:
  ...
    securityContext:
      runAsUser: 65534

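Assuming that the project is default and the SCC is restricted, the user ID of 65534 as requested by the pod is not allowed. Therefore, the pod fails for the following reasons:

- It requests 65534 as its user ID.
- All SCCs available to the pod are examined to see which SCC allows a user ID of 65534. While all policies of the SCCs are checked, the focus here is on user ID.
- Because all available SCCs use MustRunAsRange for their runAsUser strategy, UID range checking is required.
- 65534 is not included in the SCC or project's user ID range.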

일반적으로 사전 정의된 SCC를 수정하지 않는 것이 좋습니다. 이 상황을 해결하기 위해 선호되는 방법은 사용자 정의 SCC를 생성하는 것입니다. 따라서 최소 및 최대 사용자 ID가 정의되고 UID 범위 검사가 여전히 적용되며, 65534의 UID가 허용됩니다.

사용자 정의 SCC를 사용하려면 먼저 적절한 서비스 계정에 추가해야 합니다. 예를 들어, Pod 사양에 다른 값이 지정된 경우를 제외하고 지정된 프로젝트에서 기본 서비스 계정을 사용하십시오.
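The custom SCC for the user ID case can be sketched in the same way. In this illustrative example, the SCC name nfs-uid-scc and the UID range are assumptions; the range is chosen only so that 65534 falls inside it while range checking stays enforced.

```yaml
# Sketch only: a custom SCC that range-checks the user ID and allows 65534.
apiVersion: security.openshift.io/v1
kind: SecurityContextConstraints
metadata:
  name: nfs-uid-scc              # hypothetical name
allowHostDirVolumePlugin: false
allowHostIPC: false
allowHostNetwork: false
allowHostPID: false
allowHostPorts: false
allowPrivilegedContainer: false
readOnlyRootFilesystem: false
runAsUser:
  type: MustRunAsRange
  uidRangeMin: 65534             # assumed range that contains UID 65534
  uidRangeMax: 65634
seLinuxContext:
  type: MustRunAs
fsGroup:
  type: MustRunAs
supplementalGroups:
  type: RunAsAny
volumes:
- configMap
- emptyDir
- persistentVolumeClaim
- secret
```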

4.9.3.3. SELinux

RHEL(Red Hat Enterprise Linux) 및 RHCOS(Red Hat Enterprise Linux CoreOS) 시스템은 기본적으로 원격 NFS 서버에서 SELinux를 사용하도록 구성됩니다.

RHEL 이외 및 비RHCOS 시스템의 경우 SELinux는 Pod에서 원격 NFS 서버로 쓰기를 허용하지 않습니다. NFS 볼륨이 올바르게 마운트되지만 읽기 전용입니다. 다음 절차에 따라 올바른 SELinux 권한을 활성화해야 합니다.

사전 요구 사항

- The container-selinux package must be installed. This package provides the virt_use_nfs SELinux boolean.

Procedure

Enable the virt_use_nfs boolean using the following command. The -P option makes this boolean persistent across reboots.

# setsebool -P virt_use_nfs 1

4.9.3.4. 내보내기 설정

To enable container users to read and write the volume, each exported volume on the NFS server should conform to the following conditions:

- Every export must be exported using the following format:

  /<example_fs> *(rw,root_squash)

- The firewall must be configured to allow traffic to the mount point.

  For NFSv4, configure the default port 2049 (nfs).

  NFSv4

  # iptables -I INPUT 1 -p tcp --dport 2049 -j ACCEPT

  For NFSv3, there are three ports to configure: 2049 (nfs), 20048 (mountd), and 111 (portmapper).

  NFSv3

  # iptables -I INPUT 1 -p tcp --dport 2049 -j ACCEPT
  # iptables -I INPUT 1 -p tcp --dport 20048 -j ACCEPT
  # iptables -I INPUT 1 -p tcp --dport 111 -j ACCEPT

- The NFS export and directory must be set up so that they are accessible by the target pods. Either set the export to be owned by the container's primary UID, or supply the pod group access using supplementalGroups, as shown in the group IDs section above.

4.9.4. 리소스 회수

NFS는 OpenShift Container Platform Recyclable 플러그인 인터페이스를 구현합니다. 자동 프로세스는 각 영구 볼륨에 설정된 정책에 따라 복구 작업을 처리합니다.

기본적으로 PV는 Retain으로 설정됩니다.

PVC에 대한 클레임이 삭제되고 PV가 해제되면 PV 오브젝트를 재사용해서는 안 됩니다. 대신 원래 볼륨과 동일한 기본 볼륨 세부 정보를 사용하여 새 PV를 생성해야 합니다.

예를 들어, 관리자가 이름이 nfs1인 PV를 생성합니다.

apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs1
spec:
  capacity:
    storage: 1Mi
  accessModes:
    - ReadWriteMany
  nfs:
    server: 192.168.1.1
    path: "/"

사용자가 PVC1을 생성하여 nfs1에 바인딩합니다. 그리고 사용자가 PVC1에서 nfs1 클레임을 해제합니다. 그러면 nfs1이 Released 상태가 됩니다. 관리자가 동일한 NFS 공유를 사용하려면 동일한 NFS 서버 세부 정보를 사용하여 새 PV를 생성해야 하지만 다른 PV 이름을 사용해야 합니다.

apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs2
spec:
  capacity:
    storage: 1Mi
  accessModes:
    - ReadWriteMany
  nfs:
    server: 192.168.1.1
    path: "/"

원래 PV를 삭제하고 동일한 이름으로 다시 생성하는 것은 권장되지 않습니다. Released에서 Available로 PV의 상태를 수동으로 변경하면 오류가 발생하거나 데이터가 손실될 수 있습니다.

4.9.5. 추가 구성 및 문제 해결

사용되는 NFS 버전과 구성 방법에 따라 적절한 내보내기 및 보안 매핑에 필요한 추가 구성 단계가 있을 수 있습니다. 다음은 적용되는 몇 가지입니다.

- File ownership on NFSv4 mounts
- Disabling ID mapping on NFSv4

4.10. Red Hat OpenShift Data Foundation

Red Hat OpenShift Data Foundation은 사내 또는 하이브리드 클라우드에서 파일, 블록 및 오브젝트 스토리지를 지원하는 OpenShift Container Platform의 영구 스토리지 공급자입니다. Red Hat 스토리지 솔루션인 Red Hat OpenShift Data Foundation은 배포, 관리 및 모니터링을 위해 OpenShift Container Platform과 완전히 통합되어 있습니다.

Red Hat OpenShift Data Foundation은 자체 문서 라이브러리를 제공합니다. Red Hat OpenShift Data Foundation 문서의 전체 세트는 https://access.redhat.com/documentation/en-us/red_hat_openshift_data_foundation 에서 확인할 수 있습니다.

OpenShift Container Platform과 함께 설치된 가상 머신을 호스팅하는 하이퍼컨버지드 노드를 사용하는 가상화용 RHHI(Red Hat Hyperconverged Infrastructure) 상단에 있는 OpenShift Data Foundation은 지원되는 구성이 아닙니다. 지원되는 플랫폼에 대한 자세한 내용은 Red Hat OpenShift Data Foundation 지원 및 상호 운용성 가이드를 참조하십시오.

4.11. VMware vSphere 볼륨을 사용하는 영구 스토리지

OpenShift Container Platform을 사용하면 VMware vSphere의 VMDK(가상 머신 디스크) 볼륨을 사용할 수 있습니다. VMware vSphere를 사용하여 영구 스토리지로 OpenShift Container Platform 클러스터를 프로비저닝할 수 있습니다. Kubernetes 및 VMware vSphere에 대해 어느 정도 익숙한 것으로 가정합니다.

VMware vSphere 볼륨은 동적으로 프로비저닝할 수 있습니다. OpenShift Container Platform은 vSphere에서 디스크를 생성하고 이 디스크를 올바른 이미지에 연결합니다.

OpenShift Container Platform은 새 볼륨을 독립 영구 디스크로 프로비저닝하여 클러스터의 모든 노드에서 볼륨을 자유롭게 연결 및 분리합니다. 결과적으로 스냅샷을 사용하는 볼륨을 백업하거나 스냅샷에서 볼륨을 복원할 수 없습니다. 자세한 내용은 스냅 샷 제한 을 참조하십시오.

Kubernetes 영구 볼륨 프레임워크를 사용하면 관리자는 영구 스토리지로 클러스터를 프로비저닝하고 사용자가 기본 인프라에 대한 지식이 없어도 해당 리소스를 요청할 수 있습니다.

영구 볼륨은 단일 프로젝트 또는 네임스페이스에 바인딩되지 않으며, OpenShift Container Platform 클러스터에서 공유할 수 있습니다. 영구 볼륨 클레임은 프로젝트 또는 네임스페이스에 고유하며 사용자가 요청할 수 있습니다.

OpenShift Container Platform은 기본적으로 in-tree (비 CSI) 플러그인을 사용하여 vSphere 스토리지를 프로비저닝합니다.

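In future OpenShift Container Platform versions, volumes provisioned using existing in-tree plugins are planned for migration to their equivalent CSI driver. CSI automatic migration should be seamless. Migration does not change how you use existing API objects, such as persistent volumes, persistent volume claims, and storage classes. For more information about migration, see CSI automatic migration.

After full migration, in-tree plugins will be removed in a future version of OpenShift Container Platform.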

4.11.1. 동적으로 VMware vSphere 볼륨 프로비저닝

동적으로 VMware vSphere 볼륨 프로비저닝은 권장되는 방법입니다.

4.11.2. 사전 요구 사항

- 사용하는 구성 요소의 요구사항을 충족하는 VMware vSphere 버전 6 인스턴스에 OpenShift Container Platform 클러스터가 설치되어 있어야 합니다. vSphere 버전 지원에 대한 자세한 내용은 vSphere에 클러스터 설치를 참조하십시오.

다음 절차 중 하나를 사용하여 기본 스토리지 클래스를 사용하여 이러한 볼륨을 동적으로 프로비저닝할 수 있습니다.

4.11.2.1. UI를 사용하여 VMware vSphere 볼륨을 동적으로 프로비저닝

OpenShift Container Platform은 볼륨을 프로비저닝하기 위해 thin 디스크 형식을 사용하는 이름이 thin인 기본 스토리지 클래스를 설치합니다.

사전 요구 사항

- OpenShift Container Platform에서 볼륨으로 마운트하기 전에 기본 인프라에 스토리지가 있어야 합니다.

절차

- OpenShift Container Platform 콘솔에서 스토리지 → 영구 볼륨 클레임을 클릭합니다.

- 영구 볼륨 클레임 생성 개요에서 영구 볼륨 클레임 생성을 클릭합니다.

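Define the required options on the resulting page.

- Select the thin storage class.
- Enter a unique name for the storage claim.
- Select the access mode to determine the read and write access for the created storage claim.
- Define the size of the storage claim.

- Click Create to create the persistent volume claim and generate a persistent volume.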

4.11.2.2. CLI를 사용하여 VMware vSphere 볼륨을 동적으로 프로비저닝

OpenShift Container Platform은 볼륨 프로비저닝에 thin 디스크 형식을 사용하는 이름이 thin인 기본 StorageClass를 설치합니다.

사전 요구 사항

- OpenShift Container Platform에서 볼륨으로 마운트하기 전에 기본 인프라에 스토리지가 있어야 합니다.

절차(CLI)

You can define a VMware vSphere PersistentVolumeClaim by creating a file, pvc.yaml, with the following contents:

kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: pvc
spec:
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi

Create the PersistentVolumeClaim object from the file:

$ oc create -f pvc.yaml
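For reference, a storage class like the default thin class mentioned in this section could be sketched as follows. This is an assumption-based illustration using the in-tree kubernetes.io/vsphere-volume provisioner, not a dump of the class that OpenShift Container Platform actually installs; the reclaim policy and binding mode shown are assumed values.

```yaml
# Sketch only: a storage class that provisions thin-format vSphere disks.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: thin
provisioner: kubernetes.io/vsphere-volume
parameters:
  diskformat: thin               # thin disk format, as described above
reclaimPolicy: Delete            # assumed value
volumeBindingMode: Immediate     # assumed value
```

Running oc get storageclass thin -o yaml on a cluster shows the definition that is actually in use.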

4.11.3. 정적으로 프로비저닝 VMware vSphere 볼륨

VMware vSphere 볼륨을 정적으로 프로비저닝하려면 영구 볼륨 프레임워크를 참조하기 위해 가상 머신 디스크를 생성해야 합니다.

사전 요구 사항

- OpenShift Container Platform에서 볼륨으로 마운트하기 전에 기본 인프라에 스토리지가 있어야 합니다.

절차

Create the virtual machine disks. Virtual machine disks (VMDKs) must be created manually before statically provisioning VMware vSphere volumes. Use either of the following methods:

Create using vmkfstools. Access ESX through Secure Shell (SSH) and then use the following command to create a VMDK volume:

$ vmkfstools -c <size> /vmfs/volumes/<datastore-name>/volumes/<disk-name>.vmdk

Create using vmware-vdiskmanager:

$ vmware-vdiskmanager -c -t 0 -s <size> -a lsilogic <disk-name>.vmdk

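Create a persistent volume that references the VMDKs. Create a file, pv1.yaml, with the PersistentVolume object definition:

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv1  # (1)
spec:
  capacity:
    storage: 1Gi  # (2)
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  vsphereVolume:  # (3)
    volumePath: "[datastore1] volumes/myDisk"  # (4)
    fsType: ext4  # (5)

(1) The name of the volume. This name is how it is identified by persistent volume claims or pods.
(2) The amount of storage allocated to this volume.
(3) The volume type used, with vsphereVolume for vSphere volumes. The label is used to mount a vSphere VMDK volume into pods. The contents of a volume are preserved when it is unmounted. The volume type supports VMFS and VSAN datastores.
(4) The existing VMDK volume to use. If you used vmkfstools, you must enclose the datastore name in square brackets, [], in the volume definition, as shown previously.
(5) The file system type to mount. For example, ext4, xfs, or other file systems.

Important: Changing the value of the fsType parameter after the volume is formatted and provisioned can result in data loss or pod failure.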

Create the PersistentVolume object from the file:

$ oc create -f pv1.yaml

Create a persistent volume claim that maps to the persistent volume you created in the previous step. Create a file, pvc1.yaml, with the PersistentVolumeClaim object definition:

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc1
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: "1Gi"
  volumeName: pv1

Create the PersistentVolumeClaim object from the file:

$ oc create -f pvc1.yaml

4.11.3.1. VMware vSphere 볼륨 포맷

OpenShift Container Platform이 볼륨을 마운트하고 컨테이너에 전달하기 전에 PersistentVolume(PV) 정의에 있는 fsType 매개변수 값에 의해 지정된 파일 시스템이 볼륨에 포함되어 있는지 확인합니다. 장치가 파일 시스템으로 포맷되지 않으면 장치의 모든 데이터가 삭제되고 장치는 지정된 파일 시스템에서 자동으로 포맷됩니다.

Because OpenShift Container Platform formats them before the first use, you can use unformatted vSphere volumes as PVs.

4.12. Persistent storage using local storage

4.12.1. Persistent storage using local volumes

OpenShift Container Platform은 로컬 볼륨을 사용하여 영구 스토리지를 통해 프로비저닝될 수 있습니다. 로컬 영구 볼륨을 사용하면 표준 영구 볼륨 클레임 인터페이스를 사용하여 디스크 또는 파티션과 같은 로컬 스토리지 장치에 액세스할 수 있습니다.

시스템에서 볼륨 노드 제약 조건을 인식하고 있기 때문에 로컬 볼륨은 노드에 수동으로 Pod를 예약하지 않고 사용할 수 있습니다. 그러나 로컬 볼륨은 여전히 기본 노드의 가용성에 따라 달라지며 일부 애플리케이션에는 적합하지 않습니다.

로컬 볼륨은 정적으로 생성된 영구 볼륨으로 사용할 수 있습니다.
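Because local volumes are consumed through the standard claim interface, a workload requests one the same way as any other PV. The following is a minimal sketch under stated assumptions: the claim name local-pvc-example and the requested size are hypothetical, and local-sc is the storage class name used in the LocalVolume example later in this section.

```yaml
# Sketch only: a claim for a statically created local persistent volume.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: local-pvc-example        # hypothetical name
spec:
  accessModes:
  - ReadWriteOnce
  volumeMode: Filesystem
  resources:
    requests:
      storage: 100Gi             # assumed size; must not exceed the local disk
  storageClassName: local-sc     # storage class created for the local volumes
```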

4.12.1.1. Local Storage Operator 설치

Local Storage Operator는 기본적으로 OpenShift Container Platform에 설치되지 않습니다. 다음 절차에 따라 이 Operator를 설치하고 구성하여 클러스터에서 로컬 볼륨을 활성화합니다.

사전 요구 사항

- OpenShift Container Platform 웹 콘솔 또는 CLI(명령줄 인터페이스)에 액세스할 수 있습니다.

절차

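Create the openshift-local-storage project:

$ oc adm new-project openshift-local-storage

Optional: Allow local storage creation on infrastructure nodes.

You might want to use the Local Storage Operator to create volumes on infrastructure nodes in support of components such as logging and monitoring.

You must adjust the default node selector so that the Local Storage Operator includes the infrastructure nodes, and not just worker nodes.

To block the Local Storage Operator from inheriting the cluster-wide default selector, enter the following command:

$ oc annotate namespace openshift-local-storage openshift.io/node-selector=''

Optional: Allow local storage to run on the management pool of CPUs in a single-node deployment.

You can use the Local Storage Operator in single-node deployments and allow it to use CPUs that belong to the management pool. Perform this step on single-node installations that use management workload partitioning.

To allow the Local Storage Operator to run on the management CPU pool, run the following command:

$ oc annotate namespace openshift-local-storage workload.openshift.io/allowed='management'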

UI에서

웹 콘솔에서 Local Storage Operator를 설치하려면 다음 단계를 따르십시오.

- OpenShift Container Platform 웹 콘솔에 로그인합니다.

- Operators → OperatorHub로 이동합니다.

- Local Storage를 필터 상자에 입력하여 Local Storage Operator를 찾습니다.

- 설치를 클릭합니다.

- Operator 설치 페이지에서 클러스터의 특정 네임스페이스를 선택합니다. 드롭다운 메뉴에서 openshift-local-storage를 선택합니다.

- 업데이트 채널 및 승인 전략 값을 원하는 값으로 조정합니다.

- 설치를 클릭합니다.

완료되면 Local Storage Operator가 웹 콘솔의 설치된 Operator 섹션에 나열됩니다.

CLI에서

CLI에서 Local Storage Operator를 설치합니다.

Create an object YAML file to define an Operator group and subscription for the Local Storage Operator, such as openshift-local-storage.yaml:

Example: openshift-local-storage.yaml

apiVersion: operators.coreos.com/v1
kind: OperatorGroup
metadata:
  name: local-operator-group
  namespace: openshift-local-storage
spec:
  targetNamespaces:
    - openshift-local-storage
---
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: local-storage-operator
  namespace: openshift-local-storage
spec:
  channel: stable
  installPlanApproval: Automatic  # (1)
  name: local-storage-operator
  source: redhat-operators
  sourceNamespace: openshift-marketplace

(1) The user approval policy for an install plan.

Create the Local Storage Operator object by entering the following command:

$ oc apply -f openshift-local-storage.yaml

At this point, the Operator Lifecycle Manager (OLM) is aware of the Local Storage Operator. A ClusterServiceVersion (CSV) for the Operator should appear in the target namespace, and APIs provided by the Operator should be available for creation.

Verify local storage installation by checking that all pods and the Local Storage Operator have been created.

Check that all the required pods have been created:

$ oc -n openshift-local-storage get pods

Example output

NAME                                      READY   STATUS    RESTARTS   AGE
local-storage-operator-746bf599c9-vlt5t   1/1     Running   0          19m

Check the ClusterServiceVersion (CSV) YAML manifest to see that the Local Storage Operator is available in the openshift-local-storage project:

$ oc get csvs -n openshift-local-storage

Example output

NAME                                         DISPLAY         VERSION               REPLACES   PHASE
local-storage-operator.4.2.26-202003230335   Local Storage   4.2.26-202003230335              Succeeded

모든 확인이 통과되면 Local Storage Operator가 성공적으로 설치됩니다.

4.12.1.2. Local Storage Operator를 사용하여 로컬 볼륨을 프로비저닝

동적 프로비저닝을 통해 로컬 볼륨을 생성할 수 없습니다. 대신 Local Storage Operator에서 영구 볼륨을 생성할 수 있습니다. 로컬 볼륨 프로비저너는 정의된 리소스에 지정된 경로에서 모든 파일 시스템 또는 블록 볼륨 장치를 찾습니다.

사전 요구 사항

- Local Storage Operator가 설치되어 있습니다.

다음 조건을 충족하는 로컬 디스크가 있습니다.

- 노드에 연결되어 있습니다.

- 마운트되지 않았습니다.

- 파티션이 포함되어 있지 않습니다.

절차

로컬 볼륨 리소스를 생성합니다. 이 리소스는 로컬 볼륨에 대한 노드 및 경로를 정의해야 합니다.

참고동일한 장치에 다른 스토리지 클래스 이름을 사용하지 마십시오. 이렇게 하면 여러 영구 볼륨(PV)이 생성됩니다.

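Example: Filesystem

    apiVersion: "local.storage.openshift.io/v1"
    kind: "LocalVolume"
    metadata:
      name: "local-disks"
      namespace: "openshift-local-storage" # (1)
    spec:
      nodeSelector: # (2)
        nodeSelectorTerms:
        - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
              - ip-10-0-140-183
              - ip-10-0-158-139
              - ip-10-0-164-33
      storageClassDevices:
        - storageClassName: "local-sc" # (3)
          volumeMode: Filesystem # (4)
          fsType: xfs # (5)
          devicePaths: # (6)
            - /path/to/device # (7)

- (1) The namespace where the Local Storage Operator is installed.

- (2) Optional: A node selector containing a list of nodes where the local storage volumes are attached. This example uses the node hostnames obtained from oc get node. If a value is not defined, the Local Storage Operator looks for matching disks on all available nodes.

- (3) The name of the storage class to use when creating persistent volume objects. The Local Storage Operator automatically creates the storage class if it does not exist. Be sure to use a storage class that uniquely identifies this set of local volumes.

- (4) The volume mode, either Filesystem or Block, that defines the type of local volumes.

  Note: A raw block volume (volumeMode: Block) is not formatted with a file system. Use this mode only if every application running on the pod can use raw block devices.

- (5) The file system that is created when the local volume is mounted for the first time.

- (6) The path containing a list of local storage devices to choose from.

- (7) Replace this value with the actual by-id file path of your local disk for the LocalVolume resource, such as /dev/disk/by-id/wwn. PVs are created for these local disks when the provisioner is deployed successfully.

  Note: If you are running OpenShift Container Platform with RHEL KVM, you must assign a serial number to your VM disk. Otherwise, the VM disk cannot be identified after a reboot. You can use the virsh edit <VM> command to add the <serial>mydisk</serial> definition.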
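The LocalVolume fields described above can also be generated programmatically. The sketch below is illustrative only: `build_local_volume` is a hypothetical helper, not part of any OpenShift client library, and it assumes the manifest is written out as JSON and then passed to `oc create -f`, which accepts JSON as well as YAML. It omits `fsType` for Block mode because a raw block volume is not formatted, and omits the node selector when no nodes are given.

```python
import json


def build_local_volume(name, storage_class, device_paths, nodes=None,
                       volume_mode="Filesystem", fs_type="xfs",
                       namespace="openshift-local-storage"):
    """Return a LocalVolume manifest as a plain dict (hypothetical helper)."""
    if volume_mode not in ("Filesystem", "Block"):
        raise ValueError("volumeMode must be 'Filesystem' or 'Block'")
    if not device_paths:
        raise ValueError("at least one device path is required")

    device_class = {
        "storageClassName": storage_class,
        "volumeMode": volume_mode,
        "devicePaths": list(device_paths),
    }
    # fsType only makes sense when the device is formatted with a file system.
    if volume_mode == "Filesystem":
        device_class["fsType"] = fs_type

    spec = {"storageClassDevices": [device_class]}
    # The node selector is optional; without it, matching disks are looked
    # for on all available nodes.
    if nodes:
        spec["nodeSelector"] = {
            "nodeSelectorTerms": [{
                "matchExpressions": [{
                    "key": "kubernetes.io/hostname",
                    "operator": "In",
                    "values": list(nodes),
                }]
            }]
        }

    return {
        "apiVersion": "local.storage.openshift.io/v1",
        "kind": "LocalVolume",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


manifest = build_local_volume(
    "local-disks",
    "local-sc",
    ["/path/to/device"],  # placeholder path, replace with a real by-id path
    nodes=["ip-10-0-140-183", "ip-10-0-158-139", "ip-10-0-164-33"],
)
print(json.dumps(manifest, indent=2))
```

Calling the helper with `volume_mode="Block"` produces the raw block variant with no `fsType` entry.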

Example: Block

    apiVersion: "local.storage.openshift.io/v1"
    kind: "LocalVolume"
    metadata:
      name: "local-disks"
      namespace: "openshift-local-storage" # (1)
    spec:
      nodeSelector: # (2)
        nodeSelectorTerms:
        - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
              - ip-10-0-136-143
              - ip-10-0-140-255
              - ip-10-0-144-180
      storageClassDevices:
        - storageClassName: "localblock-sc" # (3)
          volumeMode: Block # (4)
          devicePaths: # (5)
            - /path/to/device # (6)

- (1) The namespace where the Local Storage Operator is installed.

- (2) Optional: A node selector containing a list of nodes where the local storage volumes are attached. This example uses the node hostnames obtained from oc get node. If a value is not defined, the Local Storage Operator looks for matching disks on all available nodes.

- (3) The name of the storage class to use when creating persistent volume objects.

- (4) The volume mode, either Filesystem or Block, that defines the type of local volumes.

- (5) The path containing a list of local storage devices to choose from.

- (6) Replace this value with the actual by-id file path of your local disk for the LocalVolume resource, such as /dev/disk/by-id/wwn. PVs are created for these local disks when the provisioner is deployed successfully.

Note: If you are running OpenShift Container Platform with RHEL KVM, you must assign a serial number to your VM disk. Otherwise, the VM disk cannot be identified after a reboot. You can use the virsh edit <VM> command to add the <serial>mydisk</serial> definition.

Create the local volume resource in your OpenShift Container Platform cluster. Specify the file you just created:

    $ oc create -f <local-volume>.yaml

Verify that the provisioner and the corresponding daemon sets were created:

    $ oc get all -n openshift-local-storage

Example output

    NAME                                          READY   STATUS    RESTARTS   AGE
    pod/diskmaker-manager-9wzms                   1/1     Running   0          5m43s
    pod/diskmaker-manager-jgvjp                   1/1     Running   0          5m43s
    pod/diskmaker-manager-tbdsj                   1/1     Running   0          5m43s
    pod/local-storage-operator-7db4bd9f79-t6k87   1/1     Running   0          14m

    NAME                                     TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)             AGE
    service/local-storage-operator-metrics   ClusterIP   172.30.135.36   <none>        8383/TCP,8686/TCP   14m

    NAME                               DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
    daemonset.apps/diskmaker-manager   3         3         3       3            3           <none>          5m43s

    NAME                                     READY   UP-TO-DATE   AVAILABLE   AGE
    deployment.apps/local-storage-operator   1/1     1            1           14m

    NAME                                                DESIRED   CURRENT   READY   AGE
    replicaset.apps/local-storage-operator-7db4bd9f79   1         1         1       14m

Note the desired and current number of daemon set processes. A desired count of 0 indicates that the label selectors were invalid.

Verify that the persistent volumes were created:

    $ oc get pv

Example output

    NAME                CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM   STORAGECLASS   REASON   AGE
    local-pv-1cec77cf   100Gi      RWO            Delete           Available           local-sc                88m
    local-pv-2ef7cd2a   100Gi      RWO            Delete           Available           local-sc                82m
    local-pv-3fa1c73    100Gi      RWO            Delete           Available           local-sc                48m

Editing the LocalVolume object does not change the fsType or volumeMode of existing persistent volumes, because doing so could be a destructive operation.
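The two verification steps in this procedure, a nonzero desired daemon set count and PVs in the expected storage class, can be checked from JSON output. This is a sketch under assumptions: the input dicts are taken to be the parsed results of `oc get daemonset -n openshift-local-storage -o json` and `oc get pv -o json`, and the helper names are hypothetical. The desired count is read from `status.desiredNumberScheduled`.

```python
def daemonsets_without_targets(ds_list: dict) -> list:
    """Names of daemon sets whose desired pod count is 0.

    A desired count of 0 suggests that the node selector matched no nodes.
    """
    return [
        item["metadata"]["name"]
        for item in ds_list.get("items", [])
        if item.get("status", {}).get("desiredNumberScheduled", 0) == 0
    ]


def available_pvs(pv_list: dict, storage_class: str) -> list:
    """Names of PVs in `storage_class` that are in the Available phase."""
    return [
        item["metadata"]["name"]
        for item in pv_list.get("items", [])
        if item.get("spec", {}).get("storageClassName") == storage_class
        and item.get("status", {}).get("phase") == "Available"
    ]


# Offline sample data shaped like the example output above.
daemonsets = {
    "items": [
        {"metadata": {"name": "diskmaker-manager"},
         "status": {"desiredNumberScheduled": 3, "currentNumberScheduled": 3}},
    ]
}
volumes = {
    "items": [
        {"metadata": {"name": "local-pv-1cec77cf"},
         "spec": {"storageClassName": "local-sc"},
         "status": {"phase": "Available"}},
        {"metadata": {"name": "local-pv-2ef7cd2a"},
         "spec": {"storageClassName": "local-sc"},
         "status": {"phase": "Available"}},
    ]
}

print(daemonsets_without_targets(daemonsets))
print(available_pvs(volumes, "local-sc"))
```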

4.12.1.3. Provisioning local volumes without the Local Storage Operator

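Local volumes cannot be created by dynamic provisioning. Instead, you can create persistent volumes by defining the persistent volume (PV) in an object definition. The local volume provisioner looks for any file system or block volume devices at the paths specified in the defined resource.

Manually provisioning PVs carries a risk of data leaking across PV reuse when PVCs are deleted. The Local Storage Operator is recommended for automating the life cycle of devices when provisioning local PVs.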

Prerequisites

- Local disks are attached to the OpenShift Container Platform nodes.

Procedure

Define the PV. Create a file, such as example-pv-filesystem.yaml or example-pv-block.yaml, with the PersistentVolume object definition. This resource must define the nodes and paths to the local volumes.

Note: Do not use different storage class names for the same device. Doing so creates multiple PVs.

example-pv-filesystem.yaml

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: example-pv-filesystem
    spec:
      capacity:
        storage: 100Gi
      volumeMode: Filesystem
      accessModes:
      - ReadWriteOnce
      persistentVolumeReclaimPolicy: Delete
      storageClassName: local-storage
      local:
        path: /dev/xvdf
      nodeAffinity:
        required:
          nodeSelectorTerms:
          - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
              - example-node

Note: A raw block volume (volumeMode: Block) is not formatted with a file system. Use this mode only if every application running on the pod can use raw block devices.

example-pv-block.yaml

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: example-pv-block
    spec:
      capacity:
        storage: 100Gi
      volumeMode: Block
      accessModes:
      - ReadWriteOnce
      persistentVolumeReclaimPolicy: Delete
      storageClassName: local-storage
      local:
        path: /dev/xvdf
      nodeAffinity:
        required:
          nodeSelectorTerms:
          - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
              - example-node

Create the PV resource in your OpenShift Container Platform cluster. Specify the file you just created:

    $ oc create -f <example-pv>.yaml

Verify that the local PV was created:

    $ oc get pv

Example output

    NAME                    CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM                STORAGECLASS    REASON   AGE
    example-pv-filesystem   100Gi      RWO            Delete           Available                        local-storage            3m47s
    example-pv1             1Gi        RWO            Delete           Bound       local-storage/pvc1   local-storage            12h
    example-pv2             1Gi        RWO            Delete           Bound       local-storage/pvc2   local-storage            12h
    example-pv3             1Gi        RWO            Delete           Bound       local-storage/pvc3   local-storage            12h
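When PVs are written by hand for several devices, a small generator can reduce copy-and-paste mistakes. The following sketch uses a hypothetical helper, `build_local_pv`, and assumes the output is saved as JSON for `oc create -f`. It rejects any `volumeMode` other than the case-sensitive values Filesystem and Block.

```python
import json

VALID_VOLUME_MODES = ("Filesystem", "Block")  # values are case-sensitive


def build_local_pv(name, path, node, capacity="100Gi",
                   storage_class="local-storage", volume_mode="Filesystem"):
    """Return a local PersistentVolume manifest as a dict (hypothetical helper)."""
    if volume_mode not in VALID_VOLUME_MODES:
        raise ValueError(
            f"volumeMode must be one of {VALID_VOLUME_MODES}, got {volume_mode!r}"
        )
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolume",
        "metadata": {"name": name},
        "spec": {
            "capacity": {"storage": capacity},
            "volumeMode": volume_mode,
            "accessModes": ["ReadWriteOnce"],
            "persistentVolumeReclaimPolicy": "Delete",
            "storageClassName": storage_class,
            "local": {"path": path},
            # A local PV must be pinned to the node that holds the device.
            "nodeAffinity": {
                "required": {
                    "nodeSelectorTerms": [{
                        "matchExpressions": [{
                            "key": "kubernetes.io/hostname",
                            "operator": "In",
                            "values": [node],
                        }]
                    }]
                }
            },
        },
    }


pv = build_local_pv("example-pv-block", "/dev/xvdf", "example-node",
                    volume_mode="Block")
print(json.dumps(pv, indent=2))
```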

4.12.1.4. Creating the local volume persistent volume claim

Local volumes must be statically created as a persistent volume claim (PVC) before a pod can access them.

Prerequisites

- Persistent volumes have been created using the local volume provisioner.

Procedure

Create the PVC using the corresponding storage class:

    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: local-pvc-name
    spec:
      accessModes:
      - ReadWriteOnce
      volumeMode: Filesystem
      resources:
        requests:
          storage: 100Gi
      storageClassName: local-sc

Create the PVC in the OpenShift Container Platform cluster, specifying the file you just created:

    $ oc create -f <local-pvc>.yaml
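A claim only binds to a volume whose properties are compatible with it. The sketch below is a deliberately simplified approximation of that matching, not the actual binding logic: it compares the storage class, volume mode, access modes, and capacity, and ignores selectors and node affinity. `to_bytes` and `can_bind` are hypothetical helpers, and only binary suffixes (Ki, Mi, Gi, Ti) are parsed.

```python
_UNITS = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}


def to_bytes(quantity: str) -> int:
    """Convert a quantity such as '100Gi' to bytes (binary suffixes only)."""
    for suffix, factor in _UNITS.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)  # no suffix: treat as a plain byte count


def can_bind(pvc: dict, pv: dict) -> bool:
    """Simplified check of whether `pv` could satisfy `pvc`."""
    claim, volume = pvc["spec"], pv["spec"]
    return (
        claim.get("storageClassName") == volume.get("storageClassName")
        # volumeMode defaults to Filesystem when it is omitted.
        and claim.get("volumeMode", "Filesystem") == volume.get("volumeMode", "Filesystem")
        and set(claim["accessModes"]) <= set(volume["accessModes"])
        and to_bytes(claim["resources"]["requests"]["storage"])
        <= to_bytes(volume["capacity"]["storage"])
    )


pvc = {
    "spec": {
        "accessModes": ["ReadWriteOnce"],
        "volumeMode": "Filesystem",
        "resources": {"requests": {"storage": "100Gi"}},
        "storageClassName": "local-sc",
    }
}
pv = {
    "spec": {
        "accessModes": ["ReadWriteOnce"],
        "volumeMode": "Filesystem",
        "capacity": {"storage": "100Gi"},
        "storageClassName": "local-sc",
    }
}
print(can_bind(pvc, pv))
```

A claim that names a different storage class, or requests more capacity than the volume offers, fails this check.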

4.12.1.5. Attaching the local claim

After a local volume has been mapped to a persistent volume claim, it can be specified inside a resource.

Prerequisites

- A persistent volume claim exists in the same namespace.

Procedure

Include the defined claim in the resource spec. The following example declares the persistent volume claim inside a pod:

    apiVersion: v1
    kind: Pod
    spec:
      ...
      containers:
        volumeMounts:
        - name: local-disks
          mountPath: /data
      volumes:
      - name: local-disks
        persistentVolumeClaim:
          claimName: local-pvc-name

Create the resource in the OpenShift Container Platform cluster, specifying the file you just created:

    $ oc create -f <local-pod>.yaml
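A volume mount refers to a volume by name, so each `name` under `volumeMounts` has to match an entry under `volumes` in the same pod spec. The following sketch checks a pod manifest, loaded as a dict, for mounts with no matching volume. `unmatched_mounts` is a hypothetical helper, and the container name and image in the sample are placeholders.

```python
def unmatched_mounts(pod: dict) -> list:
    """Return (container, mount name) pairs that reference no declared volume."""
    spec = pod.get("spec", {})
    declared = {volume["name"] for volume in spec.get("volumes", [])}
    missing = []
    for container in spec.get("containers", []):
        for mount in container.get("volumeMounts", []):
            if mount["name"] not in declared:
                missing.append((container.get("name", "<unnamed>"), mount["name"]))
    return missing


pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "local-pod"},  # placeholder name
    "spec": {
        "containers": [{
            "name": "app",  # placeholder container
            "image": "registry.example.com/app:latest",  # placeholder image
            "volumeMounts": [{"name": "local-disks", "mountPath": "/data"}],
        }],
        "volumes": [{
            "name": "local-disks",
            "persistentVolumeClaim": {"claimName": "local-pvc-name"},
        }],
    },
}
print(unmatched_mounts(pod))
```

An empty list means every mount resolves to a declared volume.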

4.12.1.6. Automating discovery and provisioning for local storage devices

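The Local Storage Operator automates local storage discovery and provisioning. This feature simplifies installation when dynamic provisioning is not available during deployment, such as with bare metal, VMware, or AWS store instances with attached devices.

Automatic discovery and provisioning is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

However, automatic discovery and provisioning is fully supported when used to deploy Red Hat OpenShift Data Foundation on bare metal.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

Use the following procedure to automatically discover local devices and to automatically provision local volumes for selected devices.

Use the LocalVolumeSet object with caution. When you automatically provision persistent volumes (PVs) from local disks, the local PVs might claim all devices that match. If you are using a LocalVolumeSet object, make sure that the Local Storage Operator is the only entity managing local devices on the node. Creating multiple instances of a LocalVolumeSet that target a node more than once is not supported.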
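Because a node must not be targeted by more than one LocalVolumeSet, it can help to check planned sets for overlap before creating them. This is a minimal sketch with an assumed input: a mapping from each planned set name to the node hostnames it would select. The set names, node names, and the `overlapping_nodes` helper are all hypothetical.

```python
from collections import defaultdict


def overlapping_nodes(targets: dict) -> dict:
    """Return {node: [set names]} for nodes selected by more than one set."""
    by_node = defaultdict(list)
    for set_name, nodes in targets.items():
        for node in set(nodes):  # ignore duplicates within one set
            by_node[node].append(set_name)
    return {
        node: sorted(names)
        for node, names in by_node.items()
        if len(names) > 1
    }


planned = {
    "fast-disks": ["worker-0", "worker-1"],
    "bulk-disks": ["worker-1", "worker-2"],
}
print(overlapping_nodes(planned))
```

Any node that appears in the result would be targeted more than once and should be removed from all but one set.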

Prerequisites

- You have cluster administrator permissions.

- You have installed the Local Storage Operator.

- You have attached local disks to OpenShift Container Platform nodes.

- You have access to the OpenShift Container Platform web console and the oc command-line interface (CLI).

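Procedure

To enable automatic discovery of local devices from the web console:

- As an administrator, navigate to Operators → Installed Operators and click the Local Volume Discovery tab.

- Click Create Local Volume Discovery.

- Select either All nodes or Select nodes, depending on whether you want to discover available disks on all nodes or on specific nodes.

  Note: Only worker nodes are available, regardless of whether you filter using All nodes or Select nodes.

- Click Create.

A local volume discovery instance named auto-discover-devices is displayed.

To display the list of available devices on a node:

- Log in to the OpenShift Container Platform web console.

- Navigate to Compute → Nodes.

- Click the name of the node that you want to open. The "Node Details" page is displayed.

- Select the Disks tab to display the list of the selected devices.

The device list is updated continuously as local disks are added or removed. You can filter the devices by name, status, type, model, capacity, and mode.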

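To automatically provision local volumes for the discovered devices from the web console:

- Navigate to Operators → Installed Operators and select Local Storage from the list of Operators.

- Select Local Volume Set → Create Local Volume Set.

- Enter a volume set name and a storage class name.

- Select All nodes or Select nodes to apply filters accordingly.

  Note: Only worker nodes are available, regardless of whether you filter using All nodes or Select nodes.

- Select the disk type, mode, size, and limit that you want to apply to the local volume set, and click Create.

After several minutes, a message is displayed indicating that the “Operator reconciled successfully.”

Alternatively, to provision local volumes for the discovered devices from the CLI: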

다음 예와 같이