Virtualization

OpenShift Virtualization installation, usage, and release notes

Chapter 1. About OpenShift Virtualization

Learn about OpenShift Virtualization’s capabilities and support scope.

1.1. What you can do with OpenShift Virtualization

OpenShift Virtualization is an add-on to OpenShift Container Platform that allows you to run and manage virtual machine workloads alongside container workloads.

OpenShift Virtualization adds new objects into your OpenShift Container Platform cluster by using Kubernetes custom resources to enable virtualization tasks. These tasks include:

- Creating and managing Linux and Windows virtual machines (VMs)

- Running pod and VM workloads alongside each other in a cluster

- Connecting to virtual machines through a variety of consoles and CLI tools

- Importing and cloning existing virtual machines

- Managing network interface controllers and storage disks attached to virtual machines

- Live migrating virtual machines between nodes

An enhanced web console provides a graphical portal to manage these virtualized resources alongside the OpenShift Container Platform cluster containers and infrastructure.

OpenShift Virtualization is designed and tested to work well with Red Hat OpenShift Data Foundation features.

When you deploy OpenShift Virtualization with OpenShift Data Foundation, you must create a dedicated storage class for Windows virtual machine disks. See Optimizing ODF PersistentVolumes for Windows VMs for details.

You can use OpenShift Virtualization with the OVN-Kubernetes, OpenShift SDN, or one of the other certified network plugins listed in Certified OpenShift CNI Plug-ins.

You can check your OpenShift Virtualization cluster for compliance issues by installing the Compliance Operator and running a scan with the ocp4-moderate and ocp4-moderate-node profiles. The Compliance Operator uses OpenSCAP, a NIST-certified tool, to scan and enforce security policies.

1.1.1. OpenShift Virtualization supported cluster version

The latest stable release of OpenShift Virtualization 4.13 is 4.13.11.

OpenShift Virtualization 4.13 is supported for use on OpenShift Container Platform 4.13 clusters. To use the latest z-stream release of OpenShift Virtualization, you must first upgrade to the latest version of OpenShift Container Platform.

1.2. About storage volumes for virtual machine disks

If you use the storage API with known storage providers, volume and access modes are selected automatically. However, if you use a storage class that does not have a storage profile, you must select the volume and access mode.

For best results, use accessMode: ReadWriteMany and volumeMode: Block. This is important for the following reasons:

- The ReadWriteMany (RWX) access mode is required for live migration.

- The Block volume mode performs significantly better than the Filesystem volume mode. This is because the Filesystem volume mode uses more storage layers, including a file system layer and a disk image file. These layers are not necessary for VM disk storage. For example, if you use Red Hat OpenShift Data Foundation, Ceph RBD volumes are preferable to CephFS volumes.

Important: You cannot live migrate virtual machines that use:

- A storage volume with ReadWriteOnce (RWO) access mode

- Passthrough features such as GPUs

Do not set the evictionStrategy field to LiveMigrate for these virtual machines.
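The rules in this section can be sketched as a small check. This is only an illustration in Python: the `VmDiskConfig` class and the function names are hypothetical and are not part of any OpenShift Virtualization API. Only the access modes, volume modes, and the LiveMigrate value come from the text above.

```python
from dataclasses import dataclass


@dataclass
class VmDiskConfig:
    """Hypothetical summary of the settings discussed in this section."""
    access_mode: str                # "ReadWriteMany" or "ReadWriteOnce"
    volume_mode: str                # "Block" or "Filesystem"
    uses_passthrough: bool = False  # for example, a GPU passed through to the VM


def can_live_migrate(vm: VmDiskConfig) -> bool:
    # RWX is required for live migration; passthrough devices rule it out.
    return vm.access_mode == "ReadWriteMany" and not vm.uses_passthrough


def uses_recommended_modes(vm: VmDiskConfig) -> bool:
    # The recommended combination is RWX access with Block volume mode.
    return vm.access_mode == "ReadWriteMany" and vm.volume_mode == "Block"


def eviction_strategy_is_valid(vm: VmDiskConfig, eviction_strategy) -> bool:
    # LiveMigrate must not be set on a VM that cannot live migrate.
    return eviction_strategy != "LiveMigrate" or can_live_migrate(vm)
```

For example, a VM with a ReadWriteOnce disk fails `can_live_migrate`, so `eviction_strategy_is_valid` rejects LiveMigrate for it.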

1.3. Single-node OpenShift differences

You can install OpenShift Virtualization on single-node OpenShift.

However, single-node OpenShift does not support the following features:

- High availability

- Pod disruption

- Live migration

- Virtual machines or templates that have an eviction strategy configured

Chapter 2. Supported limits

You can refer to tested object maximums when planning your OpenShift Container Platform environment for OpenShift Virtualization. However, approaching the maximum values can reduce performance and increase latency. Ensure that you plan for your specific use case and consider all factors that can impact cluster scaling.

For more information about cluster configuration and options that impact performance, see the OpenShift Virtualization - Tuning & Scaling Guide in the Red Hat Knowledgebase.

2.1. Tested maximums for OpenShift Virtualization

The following limits apply to a large-scale OpenShift Virtualization 4.x environment. They are based on a single cluster of the largest possible size. When you plan an environment, remember that multiple smaller clusters might be the best option for your use case.

2.1.1. Virtual machine maximums

The following maximums apply to virtual machines (VMs) running on OpenShift Virtualization. These values are subject to the limits specified in Virtualization limits for Red Hat Enterprise Linux with KVM.

| Objective (per VM) | Tested limit | Theoretical limit |

|---|---|---|

| Virtual CPUs | 216 vCPUs | 255 vCPUs |

| Memory | 6 TB | 16 TB |

| Single disk size | 20 TB | 100 TB |

| Hot-pluggable disks | 255 disks | N/A |

Each VM must have at least 512 MB of memory.
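When planning VM sizes, it can help to compare a requested value with both columns of the table above. The following Python sketch does that; the dictionary keys and function names are hypothetical, and the numbers are copied from the table and the minimum memory statement.

```python
# Values copied from the table above; None marks a limit listed as N/A.
VM_LIMITS = {
    "vcpus":         {"tested": 216, "theoretical": 255},
    "memory_tb":     {"tested": 6,   "theoretical": 16},
    "disk_tb":       {"tested": 20,  "theoretical": 100},
    "hotplug_disks": {"tested": 255, "theoretical": None},
}
MIN_MEMORY_MB = 512  # each VM must have at least this much memory


def classify(metric: str, value: float) -> str:
    """Place a requested value relative to the tested and theoretical limits."""
    limits = VM_LIMITS[metric]
    if value <= limits["tested"]:
        return "within tested limit"
    if limits["theoretical"] is None:
        return "beyond tested limit, no theoretical limit listed"
    if value <= limits["theoretical"]:
        return "beyond tested limit, within theoretical limit"
    return "beyond theoretical limit"


def memory_is_sufficient(memory_mb: int) -> bool:
    return memory_mb >= MIN_MEMORY_MB
```

A request for 240 vCPUs, for instance, falls between the tested and theoretical limits, which signals a configuration that has not been validated at that size.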

2.1.2. Host maximums

The following maximums apply to the OpenShift Container Platform hosts used for OpenShift Virtualization.

| Objective (per host) | Tested limit | Theoretical limit |

|---|---|---|

| Logical CPU cores or threads | Same as Red Hat Enterprise Linux (RHEL) | N/A |

| RAM | Same as RHEL | N/A |

| Simultaneous live migrations | Defaults to 2 outbound migrations per node, and 5 concurrent migrations per cluster | Depends on NIC bandwidth |

| Live migration bandwidth | No default limit | Depends on NIC bandwidth |
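The two default migration limits interact: a node can have at most 2 outbound migrations, and the cluster as a whole at most 5 concurrent migrations. The Python sketch below illustrates that interplay for a queue of pending migrations. It is not how OpenShift Virtualization schedules migrations internally; the function name and the input shape are assumptions made for illustration.

```python
from collections import Counter


def admit_migrations(pending, per_node=2, per_cluster=5):
    """Pick migrations to start from a queue of (vm_name, source_node) pairs.

    Hypothetical helper: honors a per-node outbound limit and a
    cluster-wide concurrency limit, processing the queue in order.
    """
    outbound = Counter()
    admitted = []
    for vm, node in pending:
        if len(admitted) >= per_cluster:
            break                      # cluster-wide limit reached
        if outbound[node] >= per_node:
            continue                   # this source node is already at its limit
        outbound[node] += 1
        admitted.append((vm, node))
    return admitted
```

With three pending migrations from one node, only two are admitted from that node, and the cluster limit caps the total at five regardless of how many nodes have pending work.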

2.1.3. Cluster maximums

The following maximums apply to objects defined in OpenShift Virtualization.

| Objective (per cluster) | Tested limit | Theoretical limit |

|---|---|---|

| Number of attached PVs per node | N/A | CSI storage provider dependent |

| Maximum PV size | N/A | CSI storage provider dependent |

| Hosts | 500 hosts (100 or fewer recommended) [1] | Same as OpenShift Container Platform |

| Defined VMs | 10,000 VMs [2] | Same as OpenShift Container Platform |

[1] If you use more than 100 nodes, consider using Red Hat Advanced Cluster Management (RHACM) to manage multiple clusters instead of scaling out a single control plane. Larger clusters add complexity, take longer to update, and, depending on node size and total object density, can increase control plane stress.

Using multiple clusters can be beneficial in areas like per-cluster isolation and high availability.

[2] The maximum number of VMs per node depends on the host hardware and resource capacity. It is also limited by the following parameters:

- Settings that limit the number of pods that can be scheduled to a node. For example: maxPods.

- The default number of KVM devices. For example: devices.kubevirt.io/kvm: 1k.
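Because each VM runs inside a pod, the two parameters above combine into a simple upper bound on VMs per node. The following Python sketch computes it; the function names, the `other_pods` parameter, and the example numbers are assumptions made for illustration, and the real capacity also depends on host hardware.

```python
def parse_count(quantity: str) -> int:
    """Parse a plain integer or a 'k'-suffixed count such as '1k' (1000)."""
    quantity = quantity.strip()
    if quantity.endswith("k"):
        return int(quantity[:-1]) * 1000
    return int(quantity)


def max_vms_per_node(max_pods: int, kvm_devices: str, other_pods: int = 0) -> int:
    """Upper bound on VMs for one node.

    max_pods:    the node's pod limit (the maxPods setting)
    kvm_devices: the devices.kubevirt.io/kvm value, for example "1k"
    other_pods:  pods on the node that are not VMs (hypothetical input)
    """
    pod_slots = max_pods - other_pods
    return max(0, min(pod_slots, parse_count(kvm_devices)))
```

For example, `max_vms_per_node(250, "1k", other_pods=30)` is bounded by the pod limit, while a node with a much larger pod limit is bounded by the KVM device count instead.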

Chapter 3. OpenShift Virtualization architecture

Learn about OpenShift Virtualization architecture.

3.1. How OpenShift Virtualization architecture works

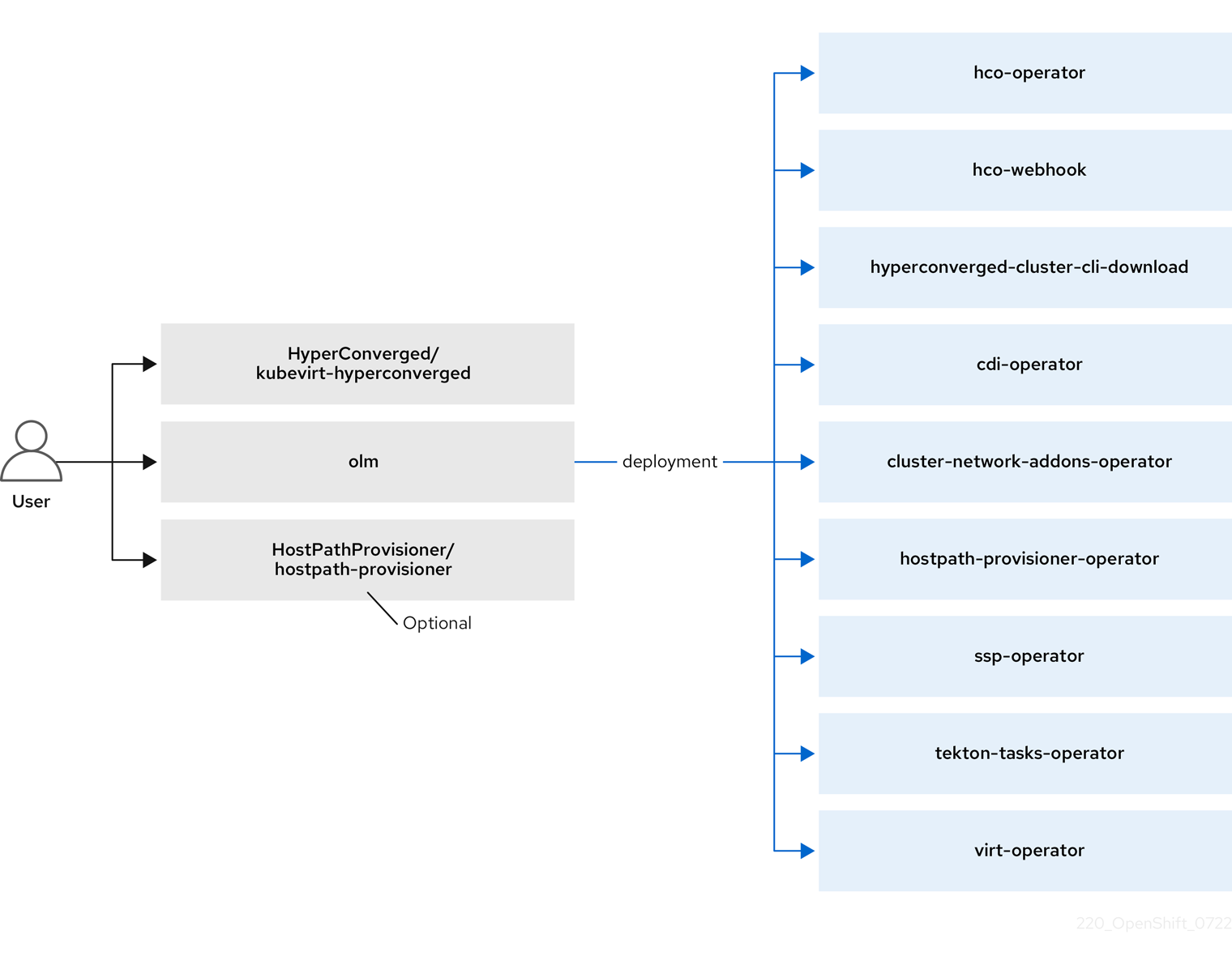

After you install OpenShift Virtualization, the Operator Lifecycle Manager (OLM) deploys operator pods for each component of OpenShift Virtualization:

- Compute: virt-operator

- Storage: cdi-operator

- Network: cluster-network-addons-operator

- Scaling: ssp-operator

- Templating: tekton-tasks-operator

OLM also deploys the hyperconverged-cluster-operator pod, which is responsible for the deployment, configuration, and life cycle of other components, and the helper pods hco-webhook and hyperconverged-cluster-cli-download.

After all operator pods are successfully deployed, you should create the HyperConverged custom resource (CR). The configurations set in the HyperConverged CR serve as the single source of truth and the entrypoint for OpenShift Virtualization, and guide the behavior of the CRs.

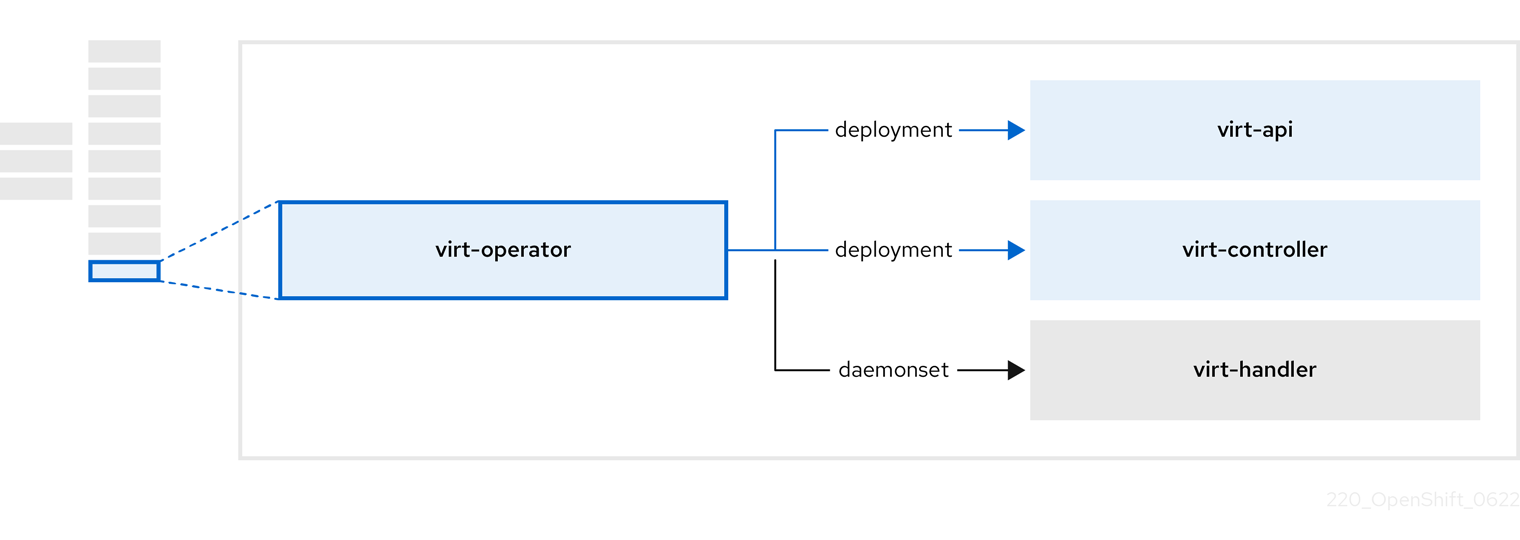

The HyperConverged CR creates corresponding CRs for the operators of all other components within its reconciliation loop. Each operator then creates resources such as daemon sets, config maps, and additional components for the OpenShift Virtualization control plane. For example, when the hco-operator creates the KubeVirt CR, the virt-operator reconciles it and creates additional resources such as virt-controller, virt-handler, and virt-api.
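The cascade described above can be pictured as a toy reconciliation pass. The Python sketch below is a simplified model, not the operators' actual code: the data structures and the `reconcile` function are hypothetical. The KubeVirt example comes from the paragraph above, and the CDI and SSP pairings are assumed from the operator names and the hco-operator component table.

```python
# CR kind -> operator assumed to reconcile it (illustrative subset).
COMPONENT_CRS = {
    "KubeVirt": "virt-operator",
    "CDI": "cdi-operator",
    "SSP": "ssp-operator",
}

# Resources an operator creates; only the virt-operator example is named in the text.
OPERATOR_RESOURCES = {
    "virt-operator": ["virt-controller", "virt-handler", "virt-api"],
}


def reconcile(cluster: set) -> set:
    """Return the objects that should exist after one reconciliation pass."""
    desired = set(cluster)
    if "HyperConverged" in desired:
        # The HyperConverged CR leads to one CR per component.
        desired |= set(COMPONENT_CRS)
    for cr_kind, operator in COMPONENT_CRS.items():
        if cr_kind in desired:
            # Each operator reacts to its own CR by creating its resources.
            desired |= set(OPERATOR_RESOURCES.get(operator, []))
    return desired
```

Running `reconcile` on a cluster that contains only the HyperConverged CR yields the component CRs plus the virt-operator resources, and running it again changes nothing, which is the idempotent behavior expected of a reconciliation loop.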

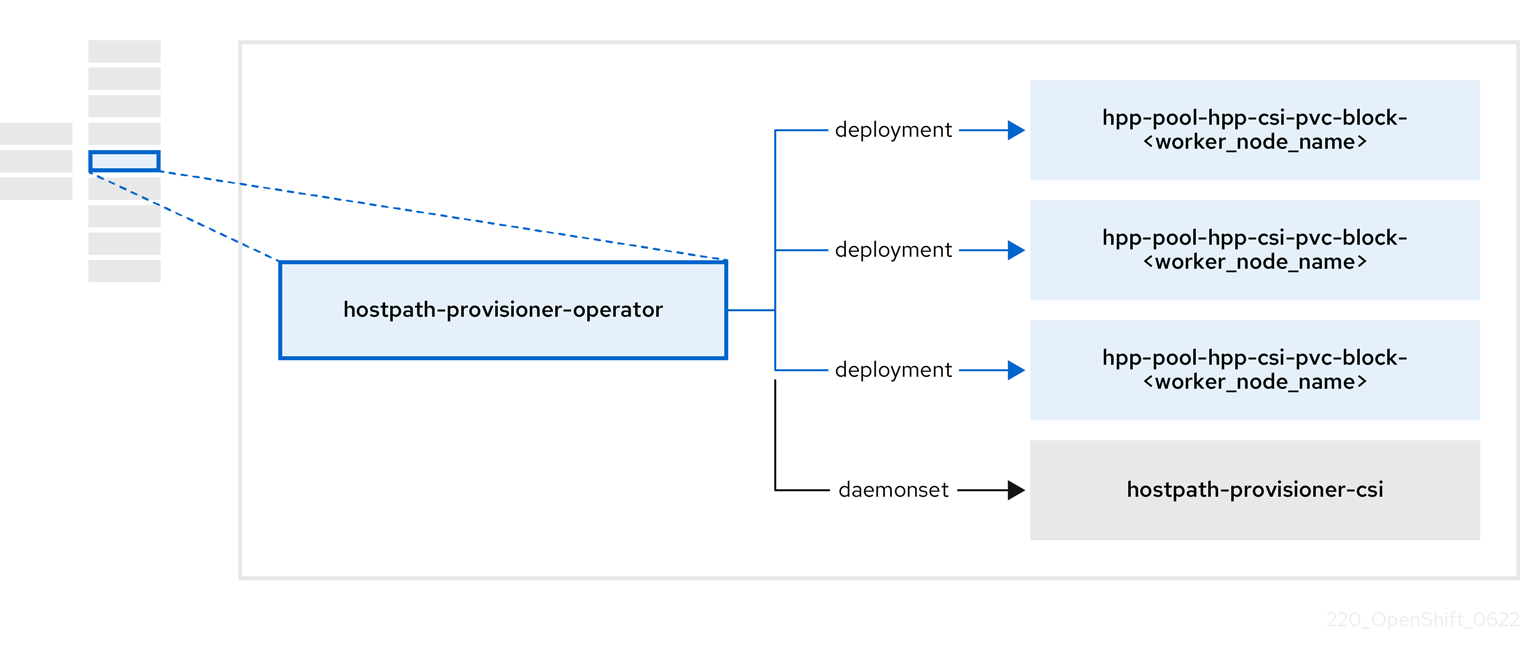

The OLM deploys the hostpath-provisioner-operator, but it is not functional until you create a hostpath provisioner (HPP) CR.

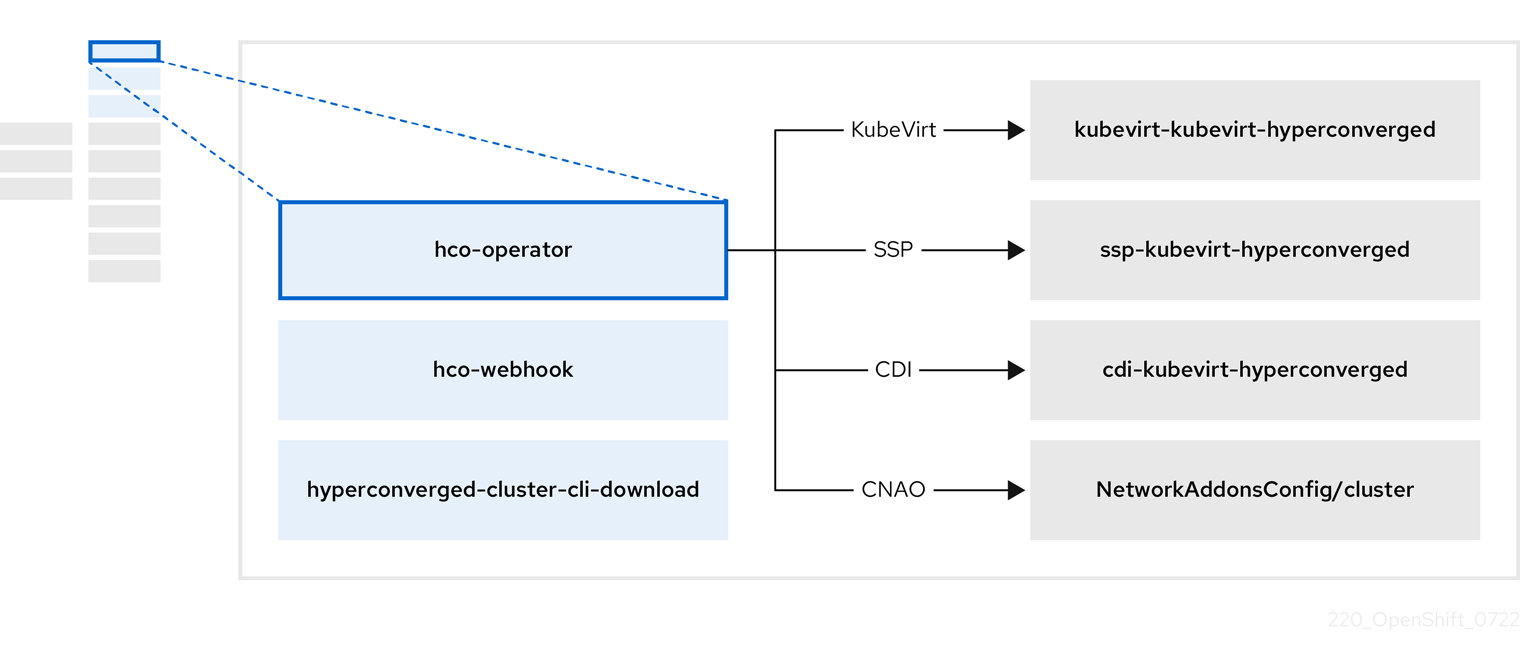

3.2. About the hco-operator

The hco-operator (HCO) provides a single entry point for deploying and managing OpenShift Virtualization and several helper operators with opinionated defaults. It also creates custom resources (CRs) for those operators.

| Component | Description |

|---|---|

| | Validates the |

| | Provides the |

| | Contains all operators, CRs, and objects needed by OpenShift Virtualization. |

| | An SSP CR. This is automatically created by the HCO. |

| | A CDI CR. This is automatically created by the HCO. |

| | A CR that instructs and is managed by the |

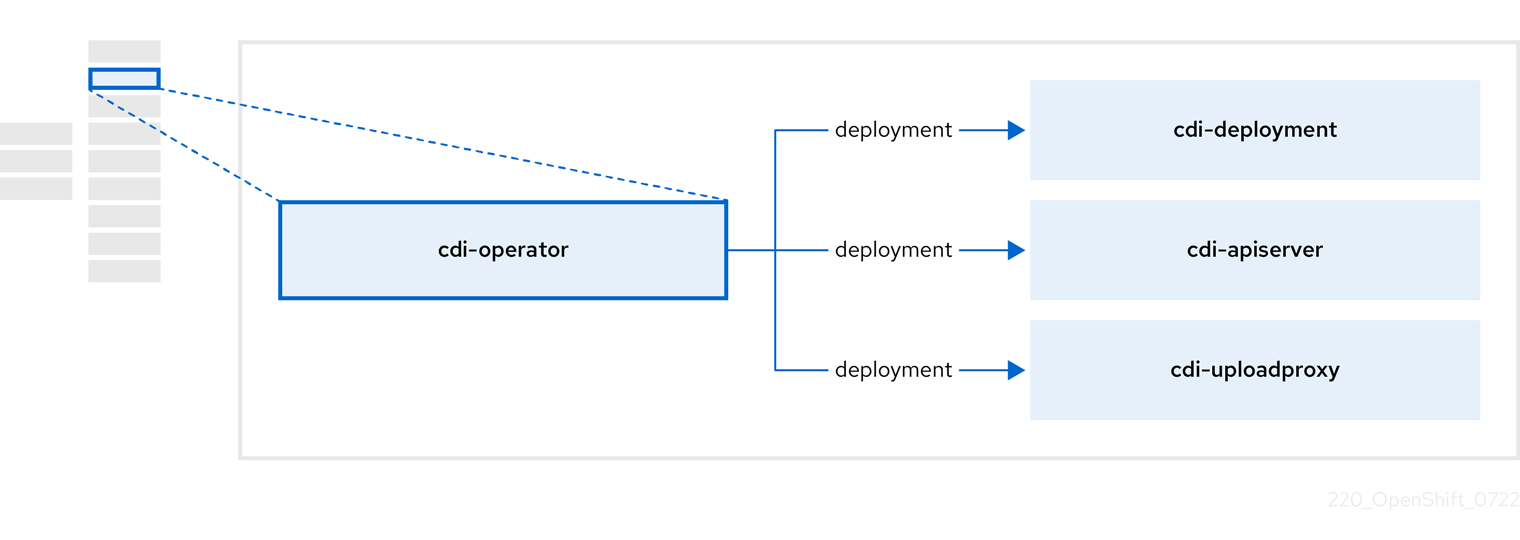

3.3. About the cdi-operator

The cdi-operator manages the Containerized Data Importer (CDI) and its related resources. CDI imports a virtual machine (VM) image into a persistent volume claim (PVC) by using a data volume.

| Component | Description |

|---|---|

| | Manages the authorization to upload VM disks into PVCs by issuing secure upload tokens. |

| | Directs external disk upload traffic to the appropriate upload server pod so that it can be written to the correct PVC. Requires a valid upload token. |

| | Helper pod that imports a virtual machine image into a PVC when creating a data volume. |

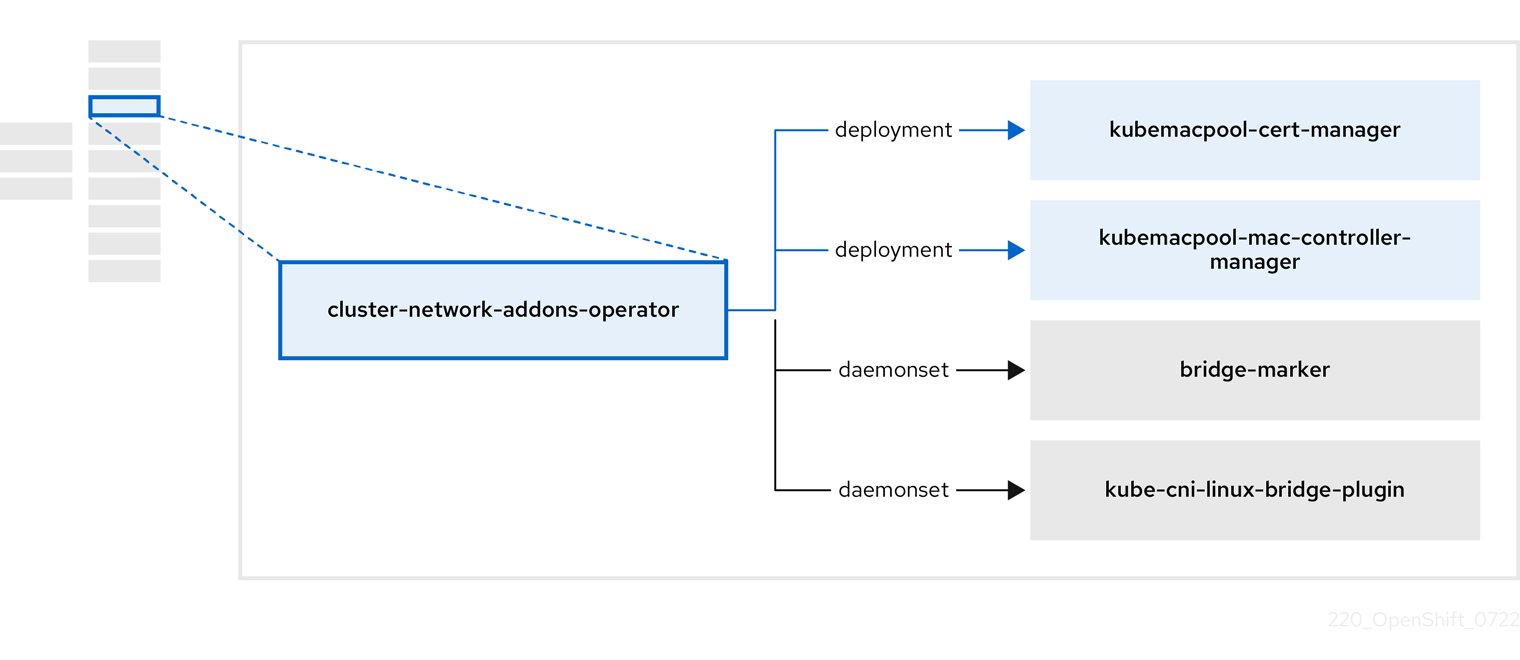

3.4. About the cluster-network-addons-operator

The cluster-network-addons-operator deploys networking components on a cluster and manages the related resources for extended network functionality.

| Component | Description |

|---|---|

| | Manages TLS certificates of Kubemacpool’s webhooks. |

| | Provides a MAC address pooling service for virtual machine (VM) network interface cards (NICs). |

| | Marks network bridges available on nodes as node resources. |

| | Installs CNI plugins on cluster nodes, enabling the attachment of VMs to Linux bridges through network attachment definitions. |

3.5. About the hostpath-provisioner-operator

The hostpath-provisioner-operator deploys and manages the multi-node hostpath provisioner (HPP) and related resources.

| Component | Description |

|---|---|

| | Provides a worker for each node where the hostpath provisioner (HPP) is designated to run. The pods mount the specified backing storage on the node. |

| | Implements the Container Storage Interface (CSI) driver interface of the HPP. |

| | Implements the legacy driver interface of the HPP. |

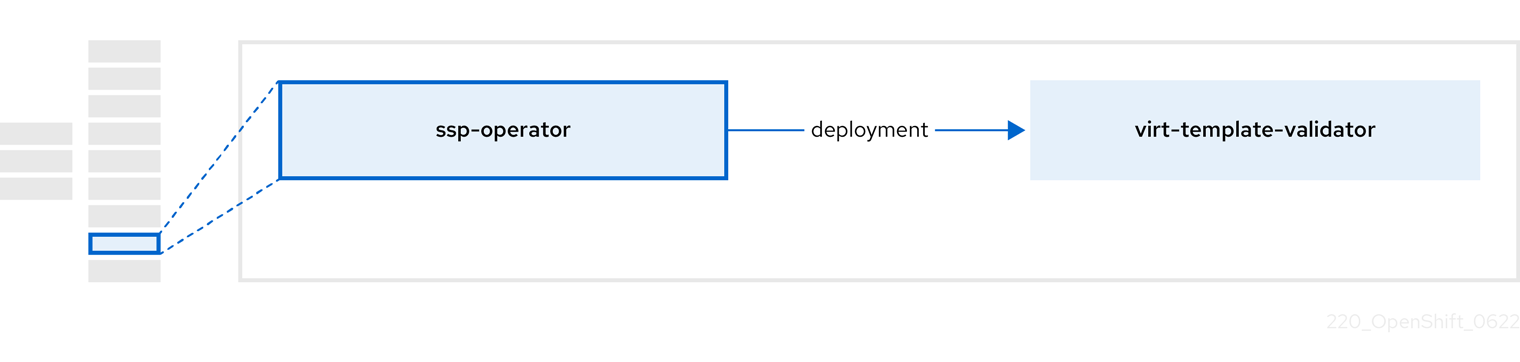

3.6. About the ssp-operator

The ssp-operator deploys the common templates, the related default boot sources, and the template validator.

| Component | Description |

|---|---|

| | Checks |

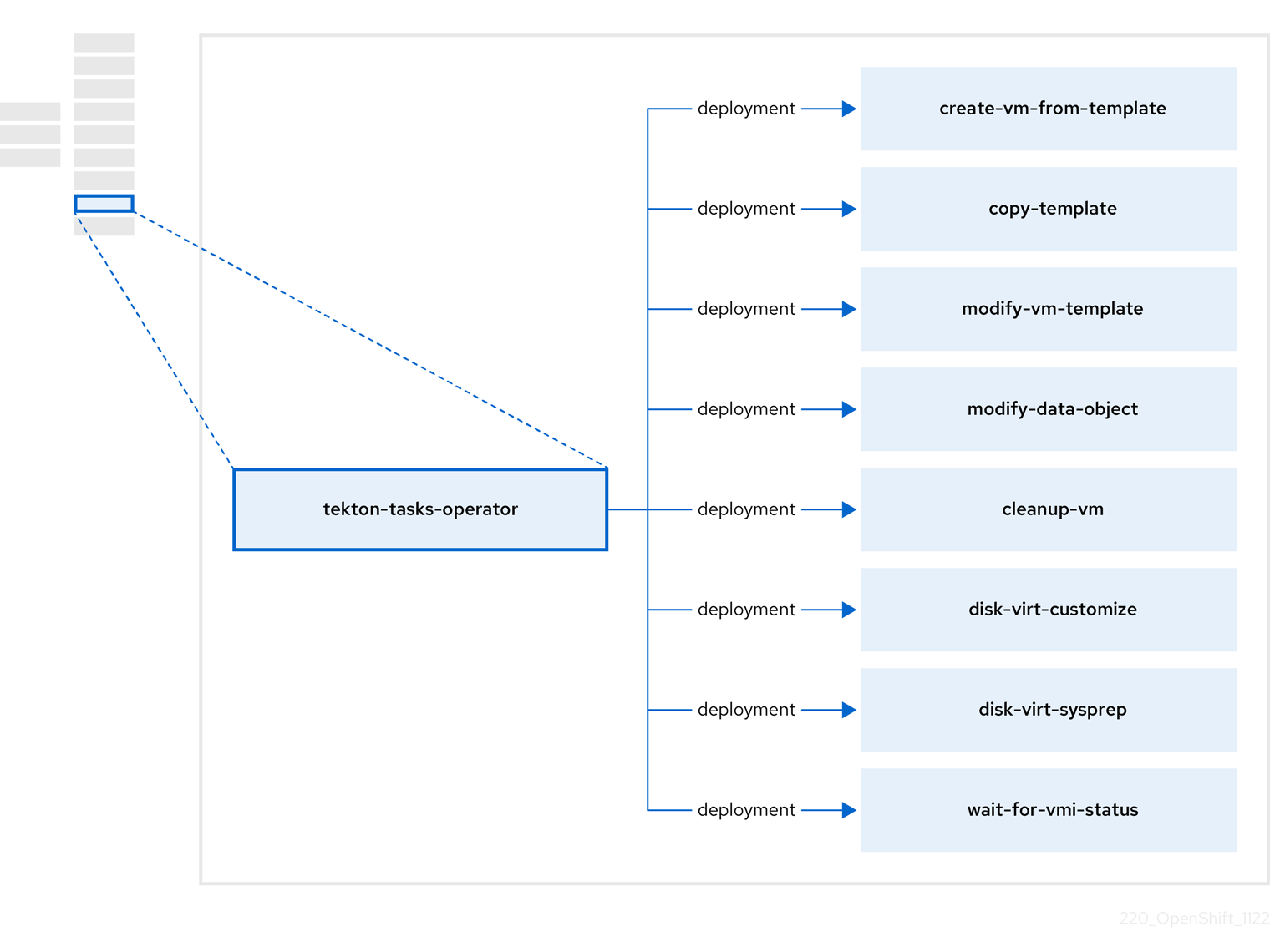

3.7. About the tekton-tasks-operator

The tekton-tasks-operator deploys example pipelines showing the usage of OpenShift Pipelines for VMs. It also deploys additional OpenShift Pipeline tasks that allow users to create VMs from templates, copy and modify templates, and create data volumes.

| Component | Description |

|---|---|

| | Creates a VM from a template. |

| | Copies a VM template. |

| | Creates or removes a VM template. |

| | Creates or removes data volumes or data sources. |

| | Runs a script or a command on a VM, then stops or deletes the VM afterward. |

| | Runs a |

| | Runs a |

| | Waits for a specific VMI status, then fails or succeeds according to that status. |

3.8. About the virt-operator

The virt-operator deploys, upgrades, and manages OpenShift Virtualization without disrupting current virtual machine (VM) workloads.

| Component | Description |

|---|---|

| | HTTP API server that serves as the entry point for all virtualization-related flows. |

| | Observes the creation of a new VM instance object and creates a corresponding pod. When the pod is scheduled on a node, |

| | Monitors any changes to a VM and instructs |

| | Contains the VM that was created by the user as implemented by |

Chapter 4. Getting started with OpenShift Virtualization

You can explore the features and functionalities of OpenShift Virtualization by installing and configuring a basic environment.

Cluster configuration procedures require cluster-admin privileges.

4.1. Planning and installing OpenShift Virtualization

Plan and install OpenShift Virtualization on an OpenShift Container Platform cluster:

Planning and installation resources

4.2. Creating and managing virtual machines

Create virtual machines (VMs) by using the web console:

Connect to VMs:

- Connect to the serial console or VNC console of a VM by using the web console.

- Connect to a VM by using SSH.

- Connect to a Windows VM by using RDP.

Manage VMs:

4.3. Next steps

Connect the VMs to secondary networks:

- Connect a VM to a Linux bridge network.

- Connect a VM to an SR-IOV network.

Note: VMs are connected to the pod network by default. You must configure a secondary network, such as Linux bridge or SR-IOV, and then add the network to the VM configuration.

- Live migrate VMs.

- Back up and restore VMs.

- Tune and scale your cluster.

Chapter 5. Web console overview

The Virtualization section of the OpenShift Container Platform web console contains the following pages for managing and monitoring your OpenShift Virtualization environment.

| Page | Description |

|---|---|

| Overview page | Manage and monitor the OpenShift Virtualization environment. |

| Catalog page | Create VirtualMachines from a catalog of templates. |

| VirtualMachines page | Configure and monitor VirtualMachines. |

| Templates page | Create and manage templates. |

| | Create and manage DataSources for VirtualMachine boot sources. |

| | Create and manage MigrationPolicies for workloads. |

| Icon | Description |

|---|---|

| | Edit icon |

| | Link icon |

5.1. Overview page

The Overview page displays resources, metrics, migration progress, and cluster-level settings.

Example 5.1. Overview page

| Element | Description |

|---|---|

| Download virtctl | Download the |

| Overview tab | Resources, usage, alerts, and status |

| Top consumers tab | Top consumers of CPU, memory, and storage resources |

| Migrations tab | Status of live migrations |

| Settings tab | Cluster-wide settings, including live migration limits and user permissions |

5.1.1. Overview tab

The Overview tab displays resources, usage, alerts, and status.

Example 5.2. Overview tab

| Element | Description |

|---|---|

| Getting started resources card | |

| VirtualMachines tile | Number of VirtualMachines, with a chart showing the last 7 days' trend |

| vCPU usage tile | vCPU usage, with a chart showing the last 7 days' trend |

| Memory tile | Memory usage, with a chart showing the last 7 days' trend |

| Storage tile | Storage usage, with a chart showing the last 7 days' trend |

| Alerts tile | OpenShift Virtualization alerts, grouped by severity |

| VirtualMachine statuses tile | Number of VirtualMachines, grouped by status |

| VirtualMachines per template chart | Number of VirtualMachines created from templates, grouped by template name |

5.1.2. Top consumers tab

The Top consumers tab displays the top consumers of CPU, memory, and storage.

Example 5.3. Top consumers tab

| Element | Description |

|---|---|

| View virtualization dashboard | Link to Observe → Dashboards, which displays the top consumers for OpenShift Virtualization |

| Time period list | Select a time period to filter the results. |

| Top consumers list | Select the number of top consumers to filter the results. |

| CPU chart | VirtualMachines with the highest CPU usage |

| Memory chart | VirtualMachines with the highest memory usage |

| Memory swap traffic chart | VirtualMachines with the highest memory swap traffic |

| vCPU wait chart | VirtualMachines with the highest vCPU wait periods |

| Storage throughput chart | VirtualMachines with the highest storage throughput usage |

| Storage IOPS chart | VirtualMachines with the highest storage input/output operations per second usage |

5.1.3. Migrations tab

The Migrations tab displays the status of VirtualMachineInstance migrations.

Example 5.4. Migrations tab

| Element | Description |

|---|---|

| Time period list | Select a time period to filter VirtualMachineInstanceMigrations. |

| VirtualMachineInstanceMigrations table | List of VirtualMachineInstance migrations |

5.1.4. Settings tab

The Settings tab displays cluster-wide settings on the following tabs:

| Tab | Description |

|---|---|

| General tab | OpenShift Virtualization version and update status |

| Live migration tab | Live migration limits and network settings |

| Templates project tab | Project for Red Hat templates |

| User permissions tab | Cluster-wide user permissions |

5.1.4.1. General tab

The General tab displays the OpenShift Virtualization version and update status.

Example 5.5. General tab

| Label | Description |

|---|---|

| Service name | OpenShift Virtualization |

| Provider | Red Hat |

| Installed version | 4.13.11 |

| Update status | Example: |

| Channel | Channel selected for updates |

5.1.4.2. Live migration tab

You can configure live migration on the Live migration tab.

Example 5.6. Live migration tab

| Element | Description |

|---|---|

| Max. migrations per cluster field | Select the maximum number of live migrations per cluster. |

| Max. migrations per node field | Select the maximum number of live migrations per node. |

| Live migration network list | Select a dedicated secondary network for live migration. |

5.1.4.3. Templates project tab

You can select a project for templates on the Templates project tab.

Example 5.7. Templates project tab

| Element | Description |

|---|---|

| Project list | Select a project in which to store Red Hat templates. The default template project is If you want to define multiple template projects, you must clone the templates on the Templates page for each project. |

5.1.4.4. User permissions tab

The User permissions tab displays cluster-wide user permissions for tasks.

Example 5.8. User permissions tab

| Element | Description |

|---|---|

| User permissions table | List of tasks, such as Share templates, and permissions |

5.2. Catalog page

You can create a VirtualMachine by selecting a template or boot source on the Catalog page.

Example 5.9. Catalog page

| Element | Description |

|---|---|

| Template catalog tab | Select a template to create a VirtualMachine from. |

5.2.1. Template catalog tab

| Element | Description |

|---|---|

| Template project list | Select the project in which your templates are located. By default, Red Hat templates are stored in the |

| All items\|Default templates | Click Default templates to display only default templates. |

| Boot source available checkbox | Select the checkbox to display templates with an available boot source. |

| Operating system checkboxes | Select checkboxes to display templates with selected operating systems. |

| Workload checkboxes | Select checkboxes to display templates with selected workloads. |

| Search field | Search templates by keyword. |

| Template tiles | Click a template tile to view template details and to create a VirtualMachine. |

5.3. VirtualMachines page

You can create and manage VirtualMachines on the VirtualMachines page.

Example 5.10. VirtualMachines page

| Element | Description |

|---|---|

| Create → From template | Create a VirtualMachine on the Catalog page → Template catalog tab. |

| Create → From YAML | Create a VirtualMachine by editing a YAML configuration file. |

| Filter field | Filter VirtualMachines by status, template, operating system, or node. |

| Search field | Search for VirtualMachines by name or by label. |

| VirtualMachines table | List of VirtualMachines. Click the Options menu. Click a VirtualMachine to navigate to the VirtualMachine details page. |

5.3.1. VirtualMachine details page

You can configure a VirtualMachine on the VirtualMachine details page.

Example 5.11. VirtualMachine details page

| Element | Description |

|---|---|

| Actions menu | Click the Actions menu to select Stop, Restart, Pause, Clone, Migrate, Copy SSH command, Edit labels, Edit annotations, or Delete. |

| Overview tab | Resource usage, alerts, disks, and devices |

| Details tab | VirtualMachine details and configurations |

| Metrics tab | Memory, CPU, storage, network, and migration metrics |

| YAML tab | VirtualMachine YAML configuration file |

| Configuration tab | Contains the Scheduling, Environment, Network interfaces, Disks, and Scripts tabs |

| Scheduling tab | Scheduling a VirtualMachine to run on specific nodes |

| Environment tab | Config map, secret, and service account management |

| Network interfaces tab | Network interfaces |

| Disks tab | Disks |

| Scripts tab | Cloud-init settings, SSH key for Linux VirtualMachines, Sysprep answer file for Windows VirtualMachines |

| Events tab | VirtualMachine event stream |

| Console tab | Console session management |

| Snapshots tab | Snapshot management |

| Diagnostics tab | Status conditions and volume snapshot status |

5.3.1.1. Overview tab

The Overview tab displays resource usage, alerts, and configuration information.

Example 5.12. Overview tab

| Element | Description |

|---|---|

| Details tile | General VirtualMachine information |

| Utilization tile | CPU, Memory, Storage, and Network transfer charts. By default, Network transfer displays the sum of all networks. To view the breakdown for a specific network, click Breakdown by network. |

| Hardware devices tile | GPU and host devices |

| Alerts tile | OpenShift Virtualization alerts, grouped by severity |

| Snapshots tile | Take snapshot |

| Network interfaces tile | Network interfaces table |

| Disks tile | Disks table |

5.3.1.2. Details tab

You can view information about the VirtualMachine and edit labels, annotations, and other metadata on the Details tab.

Example 5.13. Details tab

| Element | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Name | VirtualMachine name |

| Namespace | VirtualMachine namespace |

| Labels | Click the edit icon to edit the labels. |

| Annotations | Click the edit icon to edit the annotations. |

| Description | Click the edit icon to enter a description. |

| Operating system | Operating system name |

| CPU\|Memory | Click the edit icon to edit the CPU\|Memory request. The number of CPUs is calculated by using the following formula: |

| Machine type | VirtualMachine machine type |

| Boot mode | Click the edit icon to edit the boot mode. |

| Start in pause mode | Click the edit icon to enable this setting. |

| Template | Name of the template used to create the VirtualMachine |

| Created at | VirtualMachine creation date |

| Owner | VirtualMachine owner |

| Status | VirtualMachine status |

| Pod | |

| VirtualMachineInstance | VirtualMachineInstance name |

| Boot order | Click the edit icon to select a boot source. |

| IP address | IP address of the VirtualMachine |

| Hostname | Hostname of the VirtualMachine |

| Time zone | Time zone of the VirtualMachine |

| Node | Node on which the VirtualMachine is running |

| Workload profile | Click the edit icon to edit the workload profile. |

| SSH using virtctl | Click the copy icon to copy the |

| SSH service type options | Select SSH over LoadBalancer or SSH over NodePort. |

| GPU devices | Click the edit icon to add a GPU device. |

| Host devices | Click the edit icon to add a host device. |

| Headless mode | Click the edit icon to enable headless mode. |

| Services section | Displays services if QEMU guest agent is installed. |

| Active users section | Displays active users if QEMU guest agent is installed. |

5.3.1.3. Metrics tab

The Metrics tab displays memory, CPU, storage, network, and migration usage charts.

Example 5.14. Metrics tab

| Element | Description |

|---|---|

| Time range list | Select a time range to filter the results. |

| Virtualization dashboard | Link to the Workloads tab of the current project |

| Utilization section | Memory and CPU charts |

| Storage section | Storage total read/write and Storage IOPS total read/write charts |

| Network section | Network in, Network out, Network bandwidth, and Network interface charts. Select All networks or a specific network from the Network interface dropdown. |

| Migration section | Migration and KV data transfer rate charts |

5.3.1.4. YAML tab

You can configure the VirtualMachine by editing the YAML file on the YAML tab.

Example 5.15. YAML tab

| Element | Description |

|---|---|

| Save button | Save changes to the YAML file. |

| Reload button | Discard your changes and reload the YAML file. |

| Cancel button | Exit the YAML tab. |

| Download button | Download the YAML file to your local machine. |

5.3.1.5. Configuration tab

You can configure scheduling, network interfaces, disks, and other options on the Configuration tab.

Example 5.16. Tabs on the Configuration tab

| Tab | Description |

|---|---|

| Scheduling tab | Scheduling a VirtualMachine to run on specific nodes |

| Environment tab | Config maps, secrets, and service accounts |

| Network interfaces tab | Network interfaces |

| Disks tab | Disks |

| Scripts tab | Cloud-init settings, SSH key for Linux VirtualMachines, Sysprep answer file for Windows VirtualMachines |

5.3.1.5.1. Scheduling tab

You can configure VirtualMachines to run on specific nodes on the Scheduling tab.

Example 5.17. Scheduling tab

| Setting | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Node selector | Click the edit icon to add a label to specify qualifying nodes. |

| Tolerations | Click the edit icon to add a toleration to specify qualifying nodes. |

| Affinity rules | Click the edit icon to add an affinity rule. |

| Descheduler switch | Enable or disable the descheduler. The descheduler evicts a running pod so that the pod can be rescheduled onto a more suitable node. |

| Dedicated resources | Click the edit icon to select Schedule this workload with dedicated resources (guaranteed policy). |

| Eviction strategy | Click the edit icon to select LiveMigrate as the VirtualMachineInstance eviction strategy. |

5.3.1.5.2. Environment tab

You can manage config maps, secrets, and service accounts on the Environment tab.

Example 5.18. Environment tab

| Element | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Add Config Map, Secret or Service Account | Click the link and select a config map, secret, or service account from the resource list. |

5.3.1.5.3. Network interfaces tab

You can manage network interfaces on the Network interfaces tab.

Example 5.19. Network interfaces tab

| Setting | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Add network interface button | Add a network interface to the VirtualMachine. |

| Filter field | Filter by interface type. |

| Search field | Search for a network interface by name or by label. |

| Network interface table | List of network interfaces. Click the Options menu |

5.3.1.5.4. Disks tab

You can manage disks on the Disks tab.

Example 5.20. Disks tab

| Setting | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Add disk button | Add a disk to the VirtualMachine. |

| Filter field | Filter by disk type. |

| Search field | Search for a disk by name. |

| Mount Windows drivers disk checkbox | Select to mount an ephemeral container disk as a CD-ROM. |

| Disks table | List of VirtualMachine disks. Click the Options menu |

| File systems table | List of VirtualMachine file systems if QEMU guest agent is installed |

5.3.1.5.5. Scripts tab

You can configure cloud-init, add an SSH key for a Linux VirtualMachine, and upload a Sysprep answer file for a Windows VirtualMachine on the Scripts tab.

Example 5.21. Scripts tab

| Element | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Cloud-init | Click the edit icon to edit the cloud-init settings. |

| Authorized SSH Key | Click the edit icon to create a new secret or to attach an existing secret. |

| Sysprep | Click the edit icon to upload an |

5.3.1.6. Events tab

The Events tab displays a list of VirtualMachine events.

5.3.1.7. Console tab

You can open a console session to the VirtualMachine on the Console tab.

Example 5.22. Console tab

| Element | Description |

|---|---|

| Guest login credentials section | Expand Guest login credentials to view the credentials created with |

| Console list | Select VNC console or Serial console. You can select Desktop viewer to connect to Windows VirtualMachines by using Remote Desktop Protocol (RDP). You must install an RDP client on a machine on the same network. |

| Send key list | Select a key-stroke combination to send to the console. |

| Disconnect button | Disconnect the console connection. You must manually disconnect the console connection if you open a new console session. Otherwise, the first console session continues to run in the background. |

| Paste button | You can paste a string from your client’s clipboard into the guest when using the VNC console. |

5.3.1.8. Snapshots tab

You can create snapshots and restore VirtualMachines from snapshots on the Snapshots tab.

Example 5.23. Snapshots tab

| Element | Description |

|---|---|

| Take snapshot button | Create a snapshot. |

| Filter field | Filter snapshots by status. |

| Search field | Search for snapshots by name or by label. |

| Snapshot table | List of snapshots. Click the snapshot name to edit the labels or annotations. Click the Options menu |

5.3.1.9. Diagnostics tab

You can view the status conditions and volume snapshot status on the Diagnostics tab.

Example 5.24. Diagnostics tab

| Element | Description |

|---|---|

| Status conditions table | Display a list of conditions that are reported for all aspects of a VM. |

| Filter field | Filter status conditions by category and condition. |

| Search field | Search status conditions by reason. |

| Manage columns icon | Select columns to display. |

| Volume snapshot table | List of volumes, their snapshot enablement status, and reason |

5.4. Templates page

You can create, edit, and clone VirtualMachine templates on the Templates page.

You cannot edit a Red Hat template. You can clone a Red Hat template and edit it to create a custom template.

Example 5.25. Templates page

| Element | Description |

|---|---|

| Create Template button | Create a template by editing a YAML configuration file. |

| Filter field | Filter templates by type, boot source, template provider, or operating system. |

| Search field | Search for templates by name or by label. |

| Templates table | List of templates. Click the Options menu |

5.4.1. Template details page

You can view template settings and edit custom templates on the Template details page.

Example 5.26. Template details page

| Element | Description |

|---|---|

| Actions menu | Click the Actions menu to select Edit, Clone, Edit boot source, Edit boot source reference, Edit labels, Edit annotations, or Delete. |

| Details tab | Template settings and configurations |

| YAML tab | YAML configuration file |

| Scheduling tab | Scheduling configurations |

| | Network interface management |

| | Disk management |

| | Cloud-init, SSH key, and Sysprep management |

| | Parameters |

5.4.1.1. Details tab

You can configure a custom template on the Details tab.

Example 5.27. Details tab

| Element | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Name | Template name |

| Namespace | Template namespace |

| Labels | Click the edit icon to edit the labels. |

| Annotations | Click the edit icon to edit the annotations. |

| Display name | Click the edit icon to edit the display name. |

| Description | Click the edit icon to enter a description. |

| Operating system | Operating system name |

| CPU \| Memory | Click the edit icon to edit the CPU \| Memory request. The number of CPUs is calculated by using the following formula: |

| Machine type | Template machine type |

| Boot mode | Click the edit icon to edit the boot mode. |

| Base template | Name of the base template used to create this template |

| Created at | Template creation date |

| Owner | Template owner |

| Boot order | Template boot order |

| Boot source | Boot source availability |

| Provider | Template provider |

| Support | Template support level |

| GPU devices | Click the edit icon to add a GPU device. |

| Host devices | Click the edit icon to add a host device. |

5.4.1.2. YAML tab

You can configure a custom template by editing the YAML file on the YAML tab.

Example 5.28. YAML tab

| Element | Description |

|---|---|

| Save button | Save changes to the YAML file. |

| Reload button | Discard your changes and reload the YAML file. |

| Cancel button | Exit the YAML tab. |

| Download button | Download the YAML file to your local machine. |

5.4.1.3. Scheduling tab

You can configure scheduling on the Scheduling tab.

Example 5.29. Scheduling tab

| Setting | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Node selector | Click the edit icon to add a label to specify qualifying nodes. |

| Tolerations | Click the edit icon to add a toleration to specify qualifying nodes. |

| Affinity rules | Click the edit icon to add an affinity rule. |

| Descheduler switch | Enable or disable the descheduler. The descheduler evicts a running pod so that the pod can be rescheduled onto a more suitable node. |

| Dedicated resources | Click the edit icon to select Schedule this workload with dedicated resources (guaranteed policy). |

| Eviction strategy | Click the edit icon to select LiveMigrate as the VirtualMachineInstance eviction strategy. |

5.4.1.4. Network interfaces tab

You can manage network interfaces on the Network interfaces tab.

Example 5.30. Network interfaces tab

| Setting | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Add network interface button | Add a network interface to the template. |

| Filter field | Filter by interface type. |

| Search field | Search for a network interface by name or by label. |

| Network interface table | List of network interfaces. Click the Options menu |

5.4.1.5. Disks tab

You can manage disks on the Disks tab.

Example 5.31. Disks tab

| Setting | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Add disk button | Add a disk to the template. |

| Filter field | Filter by disk type. |

| Search field | Search for a disk by name. |

| Disks table | List of template disks. Click the Options menu |

5.4.1.6. Scripts tab

You can manage the cloud-init settings, SSH keys, and Sysprep answer files on the Scripts tab.

Example 5.32. Scripts tab

| Element | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| Cloud-init | Click the edit icon to edit the cloud-init settings. |

| Authorized SSH Key | Click the edit icon to create a new secret or to attach an existing secret. |

| Sysprep | Click the edit icon to upload a Sysprep answer file. |

5.4.1.7. Parameters tab

You can edit selected template settings on the Parameters tab.

Example 5.33. Parameters tab

| Element | Description |

|---|---|

| YAML switch | Set to ON to view your live changes in the YAML configuration file. |

| VM name | Select Generated (expression) for a generated value, Value to set a default value, or None from the Default value type list. |

| DataSource name | Select Generated (expression) for a generated value, Value to set a default value, or None from the Default value type list. |

| DataSource namespace | Select Generated (expression) for a generated value, Value to set a default value, or None from the Default value type list. |

| Cloud user password | Select Generated (expression) for a generated value, Value to set a default value, or None from the Default value type list. |

5.5. DataSources page

You can create and configure DataSources for VirtualMachine boot sources on the DataSources page.

When you create a DataSource, a DataImportCron resource defines a cron job to poll and import the disk image unless you disable automatic boot source updates.

Example 5.34. DataSources page

| Element | Description |

|---|---|

| Create DataSource → With form | Create a DataSource by entering the registry URL, disk size, number of revisions, and cron expression in a form. |

| Create DataSource → With YAML | Create a DataSource by editing a YAML configuration file. |

| Filter field | Filter DataSources by attributes such as DataImportCron available. |

| Search field | Search for a DataSource by name or by label. |

| DataSources table | List of DataSources. Click the Options menu |

Click a DataSource to view the DataSource details page.

5.5.1. DataSource details page

You can configure a DataSource on the DataSource details page.

Example 5.35. DataSource details page

| Element | Description |

|---|---|

| Details tab | Configure a DataSource by editing a form. |

| YAML tab | Configure a DataSource by editing a YAML configuration file. |

| Actions menu | Select Edit labels, Edit annotations, Delete, or Manage source. |

| Name | DataSource name |

| Namespace | DataSource namespace |

| DataImportCron | DataSource DataImportCron |

| Labels | Click the edit icon to edit the labels. |

| Annotations | Click the edit icon to edit the annotations. |

| Conditions | Displays the status conditions of the DataSource. |

| Created at | DataSource creation date |

| Owner | DataSource owner |

5.6. MigrationPolicies page

You can manage MigrationPolicies for your workloads on the MigrationPolicies page.

Example 5.36. MigrationPolicies page

| Element | Description |

|---|---|

| Create MigrationPolicy → With form | Create a MigrationPolicy by entering configurations and labels in a form. |

| Create MigrationPolicy → With YAML | Create a MigrationPolicy by editing a YAML configuration file. |

| Name \| Label search field | Search for a MigrationPolicy by name or by label. |

| MigrationPolicies table | List of MigrationPolicies. Click the Options menu |

Click a MigrationPolicy to view the MigrationPolicy details page.

5.6.1. MigrationPolicy details page

You can configure a MigrationPolicy on the MigrationPolicy details page.

Example 5.37. MigrationPolicy details page

| Element | Description |

|---|---|

| Details tab | Configure a MigrationPolicy by editing a form. |

| YAML tab | Configure a MigrationPolicy by editing a YAML configuration file. |

| Actions menu | Select Edit or Delete. |

| Name | MigrationPolicy name |

| Description | MigrationPolicy description |

| Configurations | Click the edit icon to update the MigrationPolicy configurations. |

| Bandwidth per migration | Bandwidth request per migration. For unlimited bandwidth, set the value to |

| Auto converge | Auto converge policy |

| Post-copy | Post-copy policy |

| Completion timeout | Completion timeout value in seconds |

| Project labels | Click Edit to edit the project labels. |

| VirtualMachine labels | Click Edit to edit the VirtualMachine labels. |

Chapter 6. OpenShift Virtualization release notes

6.1. About Red Hat OpenShift Virtualization

Red Hat OpenShift Virtualization enables you to bring traditional virtual machines (VMs) into OpenShift Container Platform where they run alongside containers, and are managed as native Kubernetes objects.

OpenShift Virtualization is represented by the ![]() icon.

You can use OpenShift Virtualization with either the OVN-Kubernetes or the OpenShift SDN default Container Network Interface (CNI) network provider.

Learn more about what you can do with OpenShift Virtualization.

Learn more about OpenShift Virtualization architecture and deployments.

Prepare your cluster for OpenShift Virtualization.

6.1.1. OpenShift Virtualization supported cluster version

The latest stable release of OpenShift Virtualization 4.13 is 4.13.11.

OpenShift Virtualization 4.13 is supported for use on OpenShift Container Platform 4.13 clusters. To use the latest z-stream release of OpenShift Virtualization, you must first upgrade to the latest version of OpenShift Container Platform.

Updating to OpenShift Virtualization 4.13 from OpenShift Virtualization 4.12.2 is not supported.

6.1.2. Supported guest operating systems

To view the supported guest operating systems for OpenShift Virtualization, see Certified Guest Operating Systems in Red Hat OpenStack Platform, Red Hat Virtualization, OpenShift Virtualization and Red Hat Enterprise Linux with KVM.

6.2. New and changed features

- OpenShift Virtualization is FIPS ready. However, OpenShift Container Platform 4.13 is based on Red Hat Enterprise Linux (RHEL) 9.2. RHEL 9.2 has not yet been submitted for FIPS validation. Red Hat expects, though cannot commit to a specific timeframe, to obtain FIPS validation for RHEL 9.0 and RHEL 9.2 modules, and later even minor releases of RHEL 9.x. Updates will be available in Compliance Activities and Government Standards.

OpenShift Virtualization is certified in Microsoft’s Windows Server Virtualization Validation Program (SVVP) to run Windows Server workloads.

The SVVP Certification applies to:

- Red Hat Enterprise Linux CoreOS workers. In the Microsoft SVVP Catalog, they are named Red Hat OpenShift Container Platform 4.13.

- Intel and AMD CPUs.

- OpenShift Virtualization now adheres to the restricted Kubernetes pod security standards profile. To learn more, see the OpenShift Virtualization security policies documentation.

OpenShift Virtualization is now based on Red Hat Enterprise Linux (RHEL) 9.

- There is a new RHEL 9 machine type for VMs (machineType: pc-q35-rhel9.2.0). All VM templates that are included with OpenShift Virtualization now use this machine type by default.

- For more information, see OpenShift Virtualization on RHEL 9.

- You can now obtain the VirtualMachine, ConfigMap, and Secret manifests from the export server after you export a VM or snapshot. For more information, see accessing exported VM manifests.

- The "Logging, events, and monitoring" documentation is now called Support. The monitoring tools documentation has been moved to Monitoring.

- You can view and filter aggregated OpenShift Virtualization logs in the web console by using the LokiStack.

6.2.1. Quick starts

- Quick start tours are available for several OpenShift Virtualization features. To view the tours, click the Help icon ? in the menu bar on the header of the OpenShift Virtualization console and then select Quick Starts. You can filter the available tours by entering the virtualization keyword in the Filter field.

6.2.2. Networking

- You can now send unfragmented jumbo frame packets between two virtual machines (VMs) that are connected on the default pod network when you use the OVN-Kubernetes CNI plugin.

6.2.3. Storage

- OpenShift Virtualization storage resources now migrate automatically to the beta API versions. Alpha API versions are no longer supported.

6.2.4. Web console

- On the VirtualMachine details page, the Scheduling, Environment, Network interfaces, Disks, and Scripts tabs are displayed on the new Configuration tab.

- You can now paste a string from your client’s clipboard into the guest when using the VNC console.

- The VirtualMachine details → Details tab now provides a new SSH service type SSH over LoadBalancer to expose the SSH service over a load balancer.

- The option to make a hot-plug volume a persistent volume is added to the Disks tab.

- There is now a VirtualMachine details → Diagnostics tab where you can view the status conditions of VMs and the snapshot status of volumes.

- You can now enable headless mode for high performance VMs in the web console.

6.3. Deprecated and removed features

6.3.1. Deprecated features

Deprecated features are included and supported in the current release. However, they will be removed in a future release and are not recommended for new deployments.

- Support for virtctl command line tool installation for Red Hat Enterprise Linux (RHEL) 7 and RHEL 9 by an RPM is deprecated and is planned to be removed in a future release.

6.3.2. Removed features

Removed features are not supported in the current release.

- Red Hat Enterprise Linux 6 is no longer supported on OpenShift Virtualization.

- Support for the legacy HPP custom resource, and the associated storage class, has been removed for all new deployments. In OpenShift Virtualization 4.13, the HPP Operator uses the Kubernetes Container Storage Interface (CSI) driver to configure local storage. A legacy HPP custom resource is supported only if it had been installed on a previous version of OpenShift Virtualization.

6.4. Technology Preview features

Some features in this release are currently in Technology Preview. These experimental features are not intended for production use. Note the following scope of support on the Red Hat Customer Portal for these features:

Technology Preview Features Support Scope

You can now use Prometheus to monitor the following metrics:

- kubevirt_vmi_cpu_system_usage_seconds returns the physical system CPU time consumed by the hypervisor.

- kubevirt_vmi_cpu_user_usage_seconds returns the physical user CPU time consumed by the hypervisor.

- kubevirt_vmi_cpu_usage_seconds returns the total CPU time used in seconds by calculating the sum of the vCPU and the hypervisor usage.

- You can now run a checkup to verify if your OpenShift Container Platform cluster node can run a virtual machine with a Data Plane Development Kit (DPDK) workload with zero packet loss.

- You can configure your virtual machine to run DPDK workloads to achieve lower latency and higher throughput for faster packet processing in the user space.

- You can now access a VM that is attached to a secondary network interface from outside the cluster by using its fully qualified domain name (FQDN).

- You can now create OpenShift Container Platform clusters with worker nodes that are hosted by OpenShift Virtualization VMs. For more information, see Managing hosted control plane clusters on OpenShift Virtualization in the Red Hat Advanced Cluster Management (RHACM) documentation.

- You can now use Microsoft Windows 11 as a guest operating system. However, OpenShift Virtualization 4.13 does not support USB disks, which are required for a critical function of BitLocker recovery. To protect recovery keys, use other methods described in the BitLocker recovery guide.

6.5. Bug fix

- The virtual machine snapshot restore operation no longer hangs indefinitely due to some persistent volume claim (PVC) annotations created by the Containerized Data Importer (CDI). (BZ#2070366)

6.6. Known issues

With the release of the RHSA-2023:3722 advisory, the TLS Extended Master Secret (EMS) extension (RFC 7627) is mandatory for TLS 1.2 connections on FIPS-enabled RHEL 9 systems. This is in accordance with FIPS-140-3 requirements. TLS 1.3 is not affected.

Legacy OpenSSL clients that do not support EMS or TLS 1.3 now cannot connect to FIPS servers running on RHEL 9. Similarly, RHEL 9 clients in FIPS mode cannot connect to servers that only support TLS 1.2 without EMS. In practice, this means that these clients cannot connect to servers on RHEL 6, RHEL 7, and non-RHEL legacy operating systems, because the legacy 1.0.x versions of OpenSSL do not support EMS or TLS 1.3. For more information, see TLS Extension "Extended Master Secret" enforced with Red Hat Enterprise Linux 9.2.

- As a workaround, upgrade legacy OpenSSL clients to a version that supports TLS 1.3 and configure OpenShift Virtualization to use TLS 1.3, with the Modern TLS security profile type, for FIPS mode.

If you enabled the DisableMDEVConfiguration feature gate by editing the HyperConverged custom resource in OpenShift Virtualization 4.12.4, you must re-enable the feature gate after you upgrade to versions 4.13.0 or 4.13.1 by creating a JSON Patch annotation (BZ#2184439):

    $ oc annotate --overwrite -n openshift-cnv hyperconverged kubevirt-hyperconverged \
      kubevirt.kubevirt.io/jsonpatch='[{"op": "add","path": "/spec/configuration/developerConfiguration/featureGates/-", "value": "DisableMDEVConfiguration"}]'
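The annotation value in the command above is a JSON Patch document, and it must reach the cluster as valid JSON. The following is a minimal Python sketch, not part of the product tooling, that builds the same value with json.dumps and prints a one-line oc command. The variable names are illustrative assumptions; the patch content is copied from the command above.

```python
import json
import shlex

# JSON Patch operation copied from the documented command: append the
# feature gate name to the featureGates array of the HyperConverged CR.
patch = [
    {
        "op": "add",
        "path": "/spec/configuration/developerConfiguration/featureGates/-",
        "value": "DisableMDEVConfiguration",
    }
]

# Serialize on a single line so that no backslash or newline ends up
# inside the quoted annotation value.
annotation_value = json.dumps(patch)

# shlex.quote wraps the key=value pair in single quotes for the shell.
command = " ".join(
    [
        "oc annotate --overwrite -n openshift-cnv",
        "hyperconverged kubevirt-hyperconverged",
        shlex.quote("kubevirt.kubevirt.io/jsonpatch=" + annotation_value),
    ]
)
print(command)
```

Generating the value this way avoids hand-editing quotes and line continuations inside the JSON string.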

OpenShift Virtualization versions 4.12.2 and earlier are not compatible with OpenShift Container Platform 4.13. Updating OpenShift Container Platform to 4.13 is blocked by design in OpenShift Virtualization 4.12.1 and 4.12.2, but this restriction could not be added to OpenShift Virtualization 4.12.0. If you have OpenShift Virtualization 4.12.0, ensure that you do not update OpenShift Container Platform to 4.13.

Important: Your cluster becomes unsupported if you run incompatible versions of OpenShift Container Platform and OpenShift Virtualization.

- Enabling descheduler evictions on a virtual machine is a Technology Preview feature and might cause failed migrations and unstable scheduling.

- You cannot run OpenShift Virtualization on a single-stack IPv6 cluster. (BZ#2193267)

When you use two pods with different SELinux contexts, VMs with the ocs-storagecluster-cephfs storage class fail to migrate and the VM status changes to Paused. This is because both pods try to access the shared ReadWriteMany CephFS volume at the same time. (BZ#2092271)

- As a workaround, use the ocs-storagecluster-ceph-rbd storage class to live migrate VMs on a cluster that uses Red Hat Ceph Storage.

If you clone more than 100 VMs using the csi-clone cloning strategy, then the Ceph CSI might not purge the clones. Manually deleting the clones might also fail. (BZ#2055595)

- As a workaround, you can restart the ceph-mgr to purge the VM clones.

- If you stop a node on a cluster and then use the Node Health Check Operator to bring the node back up, connectivity to Multus might be lost. (OCPBUGS-8398)

The TopoLVM provisioner name string has changed in OpenShift Virtualization 4.12. As a result, the automatic import of operating system images might fail with the following error message (BZ#2158521):

    DataVolume.storage spec is missing accessMode and volumeMode, cannot get access mode from StorageProfile.

As a workaround:

- Update the claimPropertySets array of the storage profile:

      $ oc patch storageprofile <storage_profile> --type=merge -p '{"spec": {"claimPropertySets": [{"accessModes": ["ReadWriteOnce"], "volumeMode": "Block"}, {"accessModes": ["ReadWriteOnce"], "volumeMode": "Filesystem"}]}}'

- Delete the affected data volumes in the openshift-virtualization-os-images namespace. They are recreated with the access mode and volume mode from the updated storage profile.
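The merge patch passed to oc patch in the workaround above is a small JSON document. As an illustration only, the following Python sketch builds that body programmatically; the function name claim_property_sets_patch and its parameter are hypothetical, while the field names and values are taken from the command above.

```python
import json


def claim_property_sets_patch(volume_modes=("Block", "Filesystem")):
    """Build the merge patch body for the storage profile.

    Each entry pairs the ReadWriteOnce access mode with one volume mode,
    matching the claimPropertySets array shown in the workaround.
    """
    return {
        "spec": {
            "claimPropertySets": [
                {"accessModes": ["ReadWriteOnce"], "volumeMode": mode}
                for mode in volume_modes
            ]
        }
    }


# Single-line JSON that can be passed to: oc patch ... --type=merge -p '<body>'
patch_body = json.dumps(claim_property_sets_patch())
print(patch_body)
```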

When restoring a VM snapshot for storage whose binding mode is WaitForFirstConsumer, the restored PVCs remain in the Pending state and the restore operation does not progress.

- As a workaround, start the restored VM, stop it, and then start it again. The VM will be scheduled, the PVCs will be in the Bound state, and the restore operation will complete. (BZ#2149654)

- VMs created from common templates on a Single Node OpenShift (SNO) cluster display a VMCannotBeEvicted alert because the template’s default eviction strategy is LiveMigrate. You can ignore this alert or remove the alert by updating the VM’s eviction strategy. (BZ#2092412)

- Uninstalling OpenShift Virtualization does not remove the feature.node.kubevirt.io node labels created by OpenShift Virtualization. You must remove the labels manually. (CNV-22036)

- Windows 11 virtual machines do not boot on clusters running in FIPS mode. Windows 11 requires a TPM (trusted platform module) device by default. However, the swtpm (software TPM emulator) package is incompatible with FIPS. (BZ#2089301)

If your OpenShift Container Platform cluster uses OVN-Kubernetes as the default Container Network Interface (CNI) provider, you cannot attach a Linux bridge or bonding device to a host’s default interface because of a change in the host network topology of OVN-Kubernetes. (BZ#1885605)

- As a workaround, you can use a secondary network interface connected to your host, or switch to the OpenShift SDN default CNI provider.

In some instances, multiple virtual machines can mount the same PVC in read-write mode, which might result in data corruption. (BZ#1992753)

- As a workaround, avoid using a single PVC in read-write mode with multiple VMs.

The Pod Disruption Budget (PDB) prevents pod disruptions for migratable virtual machine images. If the PDB detects pod disruption, then openshift-monitoring sends a PodDisruptionBudgetAtLimit alert every 60 minutes for virtual machine images that use the LiveMigrate eviction strategy. (BZ#2026733)

- As a workaround, silence alerts.

OpenShift Virtualization links a service account token in use by a pod to that specific pod. OpenShift Virtualization implements a service account volume by creating a disk image that contains a token. If you migrate a VM, then the service account volume becomes invalid. (BZ#2037611)

- As a workaround, use user accounts rather than service accounts because user account tokens are not bound to a specific pod.

- In a heterogeneous cluster with different compute nodes, virtual machines that have HyperV Reenlightenment enabled cannot be scheduled on nodes that do not support timestamp-counter scaling (TSC) or have the appropriate TSC frequency. (BZ#2151169)

- If you deploy OpenShift Virtualization with Red Hat OpenShift Data Foundation, you must create a dedicated storage class for Windows virtual machine disks. See Optimizing ODF PersistentVolumes for Windows VMs for details.

VMs that use logical volume management (LVM) with block storage devices require additional configuration to avoid conflicts with Red Hat Enterprise Linux CoreOS (RHCOS) hosts.

- As a workaround, you can create a VM, provision an LVM, and restart the VM. This creates an empty system.lvmdevices file. (OCPBUGS-5223)

Chapter 7. Installing

7.1. Preparing your cluster for OpenShift Virtualization

Review this section before you install OpenShift Virtualization to ensure that your cluster meets the requirements.

You can use any installation method, including user-provisioned, installer-provisioned, or assisted installer, to deploy OpenShift Container Platform. However, the installation method and the cluster topology might affect OpenShift Virtualization functionality, such as snapshots or live migration.

IPv6

You cannot run OpenShift Virtualization on a single-stack IPv6 cluster. (BZ#2193267)

7.1.1. Hardware and operating system requirements

Review the following hardware and operating system requirements for OpenShift Virtualization.

Supported platforms

- On-premise bare metal servers

- Amazon Web Services bare metal instances. See Deploy OpenShift Virtualization on AWS Bare Metal Nodes for details.

- IBM Cloud Bare Metal Servers. See Deploy OpenShift Virtualization on IBM Cloud Bare Metal Nodes for details.

Installing OpenShift Virtualization on AWS bare metal instances or on IBM Cloud Bare Metal Servers is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

- Bare metal instances or servers offered by other cloud providers are not supported.

CPU requirements

- Supported by Red Hat Enterprise Linux (RHEL) 9

- Support for AMD and Intel 64-bit architectures (x86-64-v2)

- Support for Intel 64 or AMD64 CPU extensions

- Intel VT or AMD-V hardware virtualization extensions enabled

- NX (no execute) flag enabled

Storage requirements

- Supported by OpenShift Container Platform

If you deploy OpenShift Virtualization with Red Hat OpenShift Data Foundation, you must create a dedicated storage class for Windows virtual machine disks. See Optimizing ODF PersistentVolumes for Windows VMs for details.

Operating system requirements

Red Hat Enterprise Linux CoreOS (RHCOS) installed on worker nodes

Note: RHEL worker nodes are not supported.

- If your cluster uses worker nodes with different CPUs, live migration failures can occur because different CPUs have different capabilities. To avoid such failures, use CPUs with appropriate capacity for each node and set node affinity on your virtual machines to ensure successful migration. See Configuring a required node affinity rule for more information.

7.1.2. Physical resource overhead requirements

OpenShift Virtualization is an add-on to OpenShift Container Platform and imposes additional overhead that you must account for when planning a cluster. Each cluster machine must accommodate the following overhead requirements in addition to the OpenShift Container Platform requirements. Oversubscribing the physical resources in a cluster can affect performance.

The numbers noted in this documentation are based on Red Hat’s test methodology and setup. These numbers can vary based on your own individual setup and environments.

7.1.2.1. Memory overhead

Calculate the memory overhead values for OpenShift Virtualization by using the equations below.

Cluster memory overhead

Memory overhead per infrastructure node ≈ 150 MiB

Memory overhead per worker node ≈ 360 MiB

Additionally, OpenShift Virtualization environment resources require a total of 2179 MiB of RAM that is spread across all infrastructure nodes.

Virtual machine memory overhead

Memory overhead per virtual machine ≈ (1.002 × requested memory)
    + 218 MiB (1)
    + 8 MiB × (number of vCPUs) (2)
    + 16 MiB × (number of graphics devices) (3)
    + (additional memory overhead) (4)

1. Required for the processes that run in the virt-launcher pod.

2. Number of virtual CPUs requested by the virtual machine.

3. Number of virtual graphics cards requested by the virtual machine.

4. Additional memory overhead: If your environment includes a Single Root I/O Virtualization (SR-IOV) network device or a Graphics Processing Unit (GPU), allocate 1 GiB additional memory overhead for each device.
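The per-VM memory equation above can be evaluated with a short script. The following Python sketch is an illustration under stated assumptions, not an official sizing tool: the function name and parameters are hypothetical, all values are in MiB, and each SR-IOV or GPU device is counted as 1024 MiB of additional overhead.

```python
def vm_memory_overhead_mib(requested_memory_mib, vcpus,
                           graphics_devices=0, passthrough_devices=0):
    """Evaluate the per-VM memory equation from this section, in MiB.

    passthrough_devices counts SR-IOV network devices and GPUs; each one
    adds 1 GiB (1024 MiB) of additional memory overhead.
    """
    additional = 1024 * passthrough_devices
    return (
        1.002 * requested_memory_mib   # scaled requested memory
        + 218                          # virt-launcher pod processes
        + 8 * vcpus                    # per-vCPU term
        + 16 * graphics_devices        # per-graphics-device term
        + additional
    )


# Hypothetical VM: 1 GiB of requested memory, 2 vCPUs, no extra devices.
estimate = vm_memory_overhead_mib(requested_memory_mib=1024, vcpus=2)
print(f"{estimate:.2f} MiB")  # prints 1260.05 MiB
```

As noted earlier in this section, the figures are based on Red Hat's test setup, so treat the result as an estimate for planning.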

7.1.2.2. CPU overhead

Calculate the cluster processor overhead requirements for OpenShift Virtualization by using the equation below. The CPU overhead per virtual machine depends on your individual setup.

Cluster CPU overhead

CPU overhead for infrastructure nodes ≈ 4 cores

OpenShift Virtualization increases the overall utilization of cluster level services such as logging, routing, and monitoring. To account for this workload, ensure that nodes that host infrastructure components have capacity allocated for 4 additional cores (4000 millicores) distributed across those nodes.

CPU overhead for worker nodes ≈ 2 cores + CPU overhead per virtual machine

Each worker node that hosts virtual machines must have capacity for 2 additional cores (2000 millicores) for OpenShift Virtualization management workloads in addition to the CPUs required for virtual machine workloads.

Virtual machine CPU overhead

If dedicated CPUs are requested, there is a 1:1 impact on the cluster CPU overhead requirement. Otherwise, there are no specific rules about how many CPUs a virtual machine requires.
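As a rough illustration of the worker node equation, the following Python sketch adds the 2-core management overhead to the dedicated CPUs requested by the VMs on a node, using the 1:1 rule stated above. The names are hypothetical, and VMs without dedicated CPUs are left out because the text gives no fixed rule for them.

```python
# Figures quoted in this section.
INFRA_OVERHEAD_CORES = 4   # distributed across the infrastructure nodes
WORKER_OVERHEAD_CORES = 2  # per worker node that hosts virtual machines


def worker_node_cpu_cores(dedicated_vm_cpus):
    """Estimate cores needed on one worker node.

    dedicated_vm_cpus: iterable with the dedicated CPU count of each VM
    scheduled on the node (1:1 impact on the node's CPU requirement).
    """
    return WORKER_OVERHEAD_CORES + sum(dedicated_vm_cpus)


# Hypothetical node hosting two VMs with 4 and 2 dedicated CPUs.
cores = worker_node_cpu_cores([4, 2])
print(cores)  # prints 8
```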

7.1.2.3. Storage overhead

Use the guidelines below to estimate storage overhead requirements for your OpenShift Virtualization environment.

Cluster storage overhead

Aggregated storage overhead per node ≈ 10 GiB

10 GiB is the estimated on-disk storage impact for each node in the cluster when you install OpenShift Virtualization.

Virtual machine storage overhead

Storage overhead per virtual machine depends on specific requests for resource allocation within the virtual machine. The request could be for ephemeral storage on the node or storage resources hosted elsewhere in the cluster. OpenShift Virtualization does not currently allocate any additional ephemeral storage for the running container itself.

7.1.2.4. Example

As a cluster administrator, if you plan to host 10 virtual machines in the cluster, each with 1 GiB of RAM and 2 vCPUs, the memory impact across the cluster is 11.68 GiB. The estimated on-disk storage impact for each node in the cluster is 10 GiB and the CPU impact for worker nodes that host virtual machine workloads is a minimum of 2 cores.

7.1.3. About storage volumes for virtual machine disks

If you use the storage API with known storage providers, volume and access modes are selected automatically. However, if you use a storage class that does not have a storage profile, you must select the volume and access mode.

For best results, use accessMode: ReadWriteMany and volumeMode: Block. This is important for the following reasons:

- The ReadWriteMany (RWX) access mode is required for live migration.

- The Block volume mode performs significantly better in comparison to the Filesystem volume mode. This is because the Filesystem volume mode uses more storage layers, including a file system layer and a disk image file. These layers are not necessary for VM disk storage.

  For example, if you use Red Hat OpenShift Data Foundation, Ceph RBD volumes are preferable to CephFS volumes.

Important: You cannot live migrate virtual machines that use:

- A storage volume with ReadWriteOnce (RWO) access mode

- Passthrough features such as GPUs

Do not set the evictionStrategy field to LiveMigrate for these virtual machines.
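The live migration restrictions above can be expressed as a simple check. The following Python sketch is a hypothetical helper for planning purposes only; it does not query a cluster, and the function name and parameters are assumptions.

```python
def can_live_migrate(access_modes, uses_passthrough_devices):
    """Return True if a VM meets the storage and device conditions above.

    access_modes: access modes of the VM disk volume, for example
        ["ReadWriteMany"] or ["ReadWriteOnce"].
    uses_passthrough_devices: True if the VM uses passthrough features
        such as GPUs.
    """
    return "ReadWriteMany" in access_modes and not uses_passthrough_devices


# A VM on an RWX volume without passthrough devices qualifies.
print(can_live_migrate(["ReadWriteMany"], False))  # prints True
# A VM on an RWO volume does not, so LiveMigrate should not be set for it.
print(can_live_migrate(["ReadWriteOnce"], False))  # prints False
```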

7.1.4. Object maximums

You must consider the following tested object maximums when planning your cluster:

7.1.5. Restricted network environments

If you install OpenShift Virtualization in a restricted environment with no internet connectivity, you must configure Operator Lifecycle Manager for restricted networks.

If you have limited internet connectivity, you can configure proxy support in Operator Lifecycle Manager to access the Red Hat-provided OperatorHub.

7.1.6. Live migration

Live migration has the following requirements:

- Shared storage with ReadWriteMany (RWX) access mode.

- Sufficient RAM and network bandwidth.

- If the virtual machine uses a host model CPU, the nodes must support the virtual machine’s host model CPU.

You must ensure that there is enough memory request capacity in the cluster to support node drains that result in live migrations. You can determine the approximate required spare memory by using the following calculation:

Product of (Maximum number of nodes that can drain in parallel) and (Highest total VM memory request allocations across nodes)

The default number of migrations that can run in parallel in the cluster is 5.
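The spare memory calculation above can be sketched in a few lines of Python. The function name, the node names, and the memory figures below are hypothetical and serve only to show the arithmetic: sum the VM memory requests per node, take the highest total, and multiply it by the number of nodes that can drain in parallel.

```python
def required_spare_memory_gib(max_parallel_drains, vm_memory_requests_by_node):
    """Approximate spare memory needed to absorb node drains, in GiB.

    vm_memory_requests_by_node maps a node name to the list of memory
    requests (GiB) of the VMs that run on that node.
    """
    highest_node_total = max(
        (sum(requests) for requests in vm_memory_requests_by_node.values()),
        default=0,
    )
    return max_parallel_drains * highest_node_total


# Hypothetical cluster: worker-1 has the highest total request (16 GiB).
requests = {
    "worker-0": [4, 8],
    "worker-1": [16],
    "worker-2": [2, 2],
}
print(required_spare_memory_gib(2, requests))  # prints 32
```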

7.1.7. Cluster high-availability options

You can configure one of the following high-availability (HA) options for your cluster:

- Automatic high availability for installer-provisioned infrastructure (IPI) is available by deploying machine health checks.

  Note: In OpenShift Container Platform clusters installed using installer-provisioned infrastructure and with MachineHealthCheck properly configured, if a node fails the MachineHealthCheck and becomes unavailable to the cluster, it is recycled. What happens next with VMs that ran on the failed node depends on a series of conditions. See About RunStrategies for virtual machines for more detailed information about the potential outcomes and how RunStrategies affect those outcomes.

- Automatic high availability for both IPI and non-IPI is available by using the Node Health Check Operator on the OpenShift Container Platform cluster to deploy the NodeHealthCheck controller. The controller identifies unhealthy nodes and uses a remediation provider, such as the Self Node Remediation Operator or Fence Agents Remediation Operator, to remediate the unhealthy nodes. For more information on remediation, fencing, and maintaining nodes, see the Workload Availability for Red Hat OpenShift documentation.

- High availability for any platform is available by using either a monitoring system or a qualified human to monitor node availability. When a node is lost, shut it down and run oc delete node <lost_node>.

  Note: Without an external monitoring system or a qualified human monitoring node health, virtual machines lose high availability.

7.2. Specifying nodes for OpenShift Virtualization components

Specify the nodes where you want to deploy OpenShift Virtualization Operators, workloads, and controllers by configuring node placement rules.

You can configure node placement for some components after installing OpenShift Virtualization, but there must not be virtual machines present if you want to configure node placement for workloads.

7.2.1. About node placement for virtualization components

You might want to customize where OpenShift Virtualization deploys its components to ensure that:

- Virtual machines only deploy on nodes that are intended for virtualization workloads.

- Operators only deploy on infrastructure nodes.

- Certain nodes are unaffected by OpenShift Virtualization. For example, you have workloads unrelated to virtualization running on your cluster, and you want those workloads to be isolated from OpenShift Virtualization.

7.2.1.1. How to apply node placement rules to virtualization components

You can specify node placement rules for a component by editing the corresponding object directly or by using the web console.

- For the OpenShift Virtualization Operators that Operator Lifecycle Manager (OLM) deploys, edit the OLM Subscription object directly. Currently, you cannot configure node placement rules for the Subscription object by using the web console.

- For components that the OpenShift Virtualization Operators deploy, edit the HyperConverged object directly or configure it by using the web console during OpenShift Virtualization installation.

- For the hostpath provisioner, edit the HostPathProvisioner object directly or configure it by using the web console.

Warning: You must schedule the hostpath provisioner and the virtualization components on the same nodes. Otherwise, virtualization pods that use the hostpath provisioner cannot run.

Depending on the object, you can use one or more of the following rule types:

- nodeSelector: Allows pods to be scheduled on nodes that are labeled with the key-value pair or pairs that you specify in this field. The node must have labels that exactly match all listed pairs.

- affinity: Enables you to use more expressive syntax to set rules that match nodes with pods. Affinity also allows for more nuance in how the rules are applied. For example, you can specify that a rule is a preference, rather than a hard requirement, so that pods are still scheduled if the rule is not satisfied.

- tolerations: Allows pods to be scheduled on nodes that have matching taints. If a taint is applied to a node, that node only accepts pods that tolerate the taint.
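To make the nodeSelector and tolerations semantics concrete, here is a minimal Python sketch of the matching logic described above. It is a simplified model for illustration, not the Kubernetes scheduler's implementation: the Taint and Toleration classes and the function names are hypothetical, and only the Equal and Exists toleration operators are modeled.

```python
from dataclasses import dataclass

# Taint effects that keep a non-tolerating pod from being scheduled on a node.
BLOCKING_EFFECTS = {"NoSchedule", "NoExecute"}


@dataclass(frozen=True)
class Taint:
    key: str
    value: str
    effect: str


@dataclass(frozen=True)
class Toleration:
    key: str
    operator: str = "Equal"
    value: str = ""
    effect: str = ""  # an empty effect tolerates any effect


def selector_matches(node_labels: dict[str, str], node_selector: dict[str, str]) -> bool:
    """The node must carry every listed key-value pair exactly."""
    return all(node_labels.get(k) == v for k, v in node_selector.items())


def tolerates(toleration: Toleration, taint: Taint) -> bool:
    """Return True if this toleration matches the given taint."""
    if toleration.effect and toleration.effect != taint.effect:
        return False
    if toleration.key != taint.key:
        return False
    if toleration.operator == "Exists":
        return True
    return toleration.operator == "Equal" and toleration.value == taint.value


def node_accepts(node_labels, node_taints, node_selector, tolerations) -> bool:
    """A node is eligible if the selector matches and every blocking taint is tolerated."""
    if not selector_matches(node_labels, node_selector):
        return False
    blocking = [t for t in node_taints if t.effect in BLOCKING_EFFECTS]
    return all(any(tolerates(tol, taint) for tol in tolerations) for taint in blocking)


labels = {"example.io/example-infra-key": "example-infra-value"}
taints = [Taint("key", "virtualization", "NoSchedule")]
selector = {"example.io/example-infra-key": "example-infra-value"}
tols = [Toleration("key", "Equal", "virtualization", "NoSchedule")]

print(node_accepts(labels, taints, selector, tols))  # True
print(node_accepts(labels, taints, selector, []))    # False: taint not tolerated
```

Note that a toleration only permits scheduling on a tainted node. It does not attract pods to that node, which is why tolerations are often combined with a nodeSelector or affinity rule.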

7.2.1.2. Node placement in the OLM Subscription object

To specify the nodes where OLM deploys the OpenShift Virtualization Operators, edit the Subscription object during OpenShift Virtualization installation. You can include node placement rules in the spec.config field, as shown in the following example:

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: hco-operatorhub
      namespace: openshift-cnv
    spec:
      source: redhat-operators
      sourceNamespace: openshift-marketplace
      name: kubevirt-hyperconverged
      startingCSV: kubevirt-hyperconverged-operator.v4.13.11
      channel: "stable"
      config:

The config field supports nodeSelector and tolerations, but it does not support affinity.

7.2.1.3. Node placement in the HyperConverged object

To specify the nodes where OpenShift Virtualization deploys its components, you can include the nodePlacement object in the HyperConverged Cluster custom resource (CR) file that you create during OpenShift Virtualization installation. You can include nodePlacement under the spec.infra and spec.workloads fields, as shown in the following example:

    apiVersion: hco.kubevirt.io/v1beta1
    kind: HyperConverged
    metadata:
      name: kubevirt-hyperconverged
      namespace: openshift-cnv
    spec:
      infra:
        nodePlacement:
        ...
      workloads:
        nodePlacement:
        ...

The nodePlacement fields support nodeSelector, affinity, and tolerations fields.
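Of these rule types, affinity is the one that distinguishes hard requirements from preferences. The following Python sketch models that distinction for node affinity: required terms filter nodes, and preferred terms only influence ordering. It is a simplified illustration, not the scheduler's actual scoring, which combines many other factors. The function names and node names are hypothetical, and only the In and Gt operators are modeled.

```python
def expr_matches(labels: dict[str, str], expr: dict) -> bool:
    """Evaluate one matchExpressions entry against a node's labels."""
    value = labels.get(expr["key"])
    operator = expr["operator"]
    if operator == "In":
        return value in expr["values"]
    if operator == "Gt":
        # Gt compares the label value and the single listed value as integers.
        try:
            return value is not None and int(value) > int(expr["values"][0])
        except ValueError:
            return False
    raise ValueError(f"operator not modeled in this sketch: {operator}")


def term_matches(labels: dict[str, str], term: dict) -> bool:
    """All expressions within one term must match."""
    return all(expr_matches(labels, e) for e in term["matchExpressions"])


def rank_nodes(nodes: dict[str, dict[str, str]], required_terms: list, preferred: list) -> list[str]:
    """Filter by required terms (any term may match), then order by preference weight."""
    eligible = [
        name for name, labels in nodes.items()
        if any(term_matches(labels, t) for t in required_terms)
    ]

    def score(name: str) -> int:
        return sum(p["weight"] for p in preferred if term_matches(nodes[name], p["preference"]))

    return sorted(eligible, key=lambda name: (-score(name), name))


nodes = {
    "node-a": {"example.io/example-workloads-key": "example-workloads-value", "example.io/num-cpus": "16"},
    "node-b": {"example.io/example-workloads-key": "example-workloads-value", "example.io/num-cpus": "4"},
    "node-c": {"example.io/num-cpus": "32"},
}
required = [{"matchExpressions": [
    {"key": "example.io/example-workloads-key", "operator": "In", "values": ["example-workloads-value"]},
]}]
preferred = [{"weight": 1, "preference": {"matchExpressions": [
    {"key": "example.io/num-cpus", "operator": "Gt", "values": ["8"]},
]}}]

print(rank_nodes(nodes, required, preferred))  # ['node-a', 'node-b']
```

In this model, node-c is excluded because it fails the required rule even though it has the most CPUs, while node-b stays eligible despite missing the preference.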

7.2.1.4. Node placement in the HostPathProvisioner object

You can configure node placement rules in the spec.workload field of the HostPathProvisioner object that you create when you install the hostpath provisioner.

    apiVersion: hostpathprovisioner.kubevirt.io/v1beta1
    kind: HostPathProvisioner
    metadata:
      name: hostpath-provisioner
    spec:
      imagePullPolicy: IfNotPresent
      pathConfig:
        path: "</path/to/backing/directory>"
        useNamingPrefix: false
      workload:

The workload field supports nodeSelector, affinity, and tolerations fields.

7.2.2. Example manifests

The following example YAML files use nodePlacement, affinity, and tolerations objects to customize node placement for OpenShift Virtualization components.

7.2.2.1. Operator Lifecycle Manager Subscription object

7.2.2.1.1. Example: Node placement with nodeSelector in the OLM Subscription object

In this example, nodeSelector is configured so that OLM places the OpenShift Virtualization Operators on nodes that are labeled with example.io/example-infra-key = example-infra-value.

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: hco-operatorhub
      namespace: openshift-cnv
    spec:
      source: redhat-operators
      sourceNamespace: openshift-marketplace
      name: kubevirt-hyperconverged
      startingCSV: kubevirt-hyperconverged-operator.v4.13.11
      channel: "stable"
      config:
        nodeSelector:
          example.io/example-infra-key: example-infra-value

7.2.2.1.2. Example: Node placement with tolerations in the OLM Subscription object

In this example, nodes that are reserved for OLM to deploy OpenShift Virtualization Operators have the key=virtualization:NoSchedule taint. Only pods with a matching toleration are scheduled to these nodes.

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: hco-operatorhub
      namespace: openshift-cnv
    spec:
      source: redhat-operators
      sourceNamespace: openshift-marketplace
      name: kubevirt-hyperconverged
      startingCSV: kubevirt-hyperconverged-operator.v4.13.11
      channel: "stable"
      config:
        tolerations:
        - key: "key"
          operator: "Equal"
          value: "virtualization"
          effect: "NoSchedule"

7.2.2.2. HyperConverged object

7.2.2.2.1. Example: Node placement with nodeSelector in the HyperConverged Cluster CR

In this example, nodeSelector is configured so that infrastructure resources are placed on nodes that are labeled with example.io/example-infra-key = example-infra-value and workloads are placed on nodes labeled with example.io/example-workloads-key = example-workloads-value.

    apiVersion: hco.kubevirt.io/v1beta1
    kind: HyperConverged
    metadata:
      name: kubevirt-hyperconverged
      namespace: openshift-cnv
    spec:
      infra:
        nodePlacement:
          nodeSelector:
            example.io/example-infra-key: example-infra-value
      workloads:
        nodePlacement:
          nodeSelector:
            example.io/example-workloads-key: example-workloads-value

7.2.2.2.2. Example: Node placement with affinity in the HyperConverged Cluster CR

In this example, affinity is configured so that infrastructure resources are placed on nodes that are labeled with example.io/example-infra-key = example-infra-value and workloads are placed on nodes labeled with example.io/example-workloads-key = example-workloads-value. Nodes that have more than eight CPUs are preferred for workloads, but if they are not available, pods are still scheduled.

    apiVersion: hco.kubevirt.io/v1beta1
    kind: HyperConverged
    metadata:
      name: kubevirt-hyperconverged
      namespace: openshift-cnv
    spec:
      infra:
        nodePlacement:
          affinity:
            nodeAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                nodeSelectorTerms:
                - matchExpressions:
                  - key: example.io/example-infra-key
                    operator: In
                    values:
                    - example-infra-value
      workloads:
        nodePlacement:
          affinity:
            nodeAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                nodeSelectorTerms:
                - matchExpressions:
                  - key: example.io/example-workloads-key
                    operator: In
                    values:
                    - example-workloads-value
              preferredDuringSchedulingIgnoredDuringExecution:
              - weight: 1
                preference:
                  matchExpressions:
                  - key: example.io/num-cpus
                    operator: Gt
                    values:
                    - "8"  # values are strings, so the number is quoted

7.2.2.2.3. Example: Node placement with tolerations in the HyperConverged Cluster CR

In this example, nodes that are reserved for OpenShift Virtualization components have the key=virtualization:NoSchedule taint. Only pods with a matching toleration are scheduled to these nodes.

    apiVersion: hco.kubevirt.io/v1beta1
    kind: HyperConverged
    metadata:
      name: kubevirt-hyperconverged
      namespace: openshift-cnv
    spec:
      workloads:
        nodePlacement:
          tolerations:
          - key: "key"
            operator: "Equal"
            value: "virtualization"
            effect: "NoSchedule"

7.2.2.3. HostPathProvisioner object

7.2.2.3.1. Example: Node placement with nodeSelector in the HostPathProvisioner object

In this example, nodeSelector is configured so that workloads are placed on nodes labeled with example.io/example-workloads-key = example-workloads-value.

apiVersion: hostpathprovisioner.kubevirt.io/v1beta1

kind: HostPathProvisioner

metadata:

name: hostpath-provisioner

spec:

imagePullPolicy: IfNotPresent

pathConfig:

path: "</path/to/backing/directory>"

useNamingPrefix: false

workload:

nodeSelector: