Chapter 4. Additional Concepts

4.1. Authentication

4.1.1. Overview

The authentication layer identifies the user associated with requests to the OpenShift Container Platform API. The authorization layer then uses information about the requesting user to determine if the request should be allowed.

As an administrator, you can configure authentication using a master configuration file.

4.1.2. Users and Groups

A user in OpenShift Container Platform is an entity that can make requests to the OpenShift Container Platform API. Typically, this represents the account of a developer or administrator that is interacting with OpenShift Container Platform.

A user can be assigned to one or more groups, each of which represents a certain set of users. Groups let you grant permissions to multiple users at once through authorization policies, for example access to objects within a project, instead of granting them to each user individually.

In addition to explicitly defined groups, there are also system groups, or virtual groups, that are automatically provisioned by OpenShift. These can be seen when viewing cluster bindings.

In the default set of virtual groups, note the following in particular:

| Virtual Group | Description |
|---|---|
| system:authenticated | Automatically associated with all authenticated users. |
| system:authenticated:oauth | Automatically associated with all users authenticated with an OAuth access token. |
| system:unauthenticated | Automatically associated with all unauthenticated users. |

4.1.3. API Authentication

Requests to the OpenShift Container Platform API are authenticated using the following methods:

- OAuth Access Tokens
  - Obtained from the OpenShift Container Platform OAuth server using the <master>/oauth/authorize and <master>/oauth/token endpoints.
  - Sent as an Authorization: Bearer… header.
  - Sent as an access_token=… query parameter for websocket requests prior to OpenShift Container Platform server version 3.6.
  - Sent as a websocket subprotocol header in the form base64url.bearer.authorization.k8s.io.<base64url-encoded-token> for websocket requests in OpenShift Container Platform server version 3.6 and later.
- X.509 Client Certificates
  - Requires an HTTPS connection to the API server.
  - Verified by the API server against a trusted certificate authority bundle.
  - The API server creates and distributes certificates to controllers to authenticate themselves.
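The two token transports for current server versions can be sketched in a few lines. This is an illustrative sketch only, not client code shipped with the platform: the function names are hypothetical, and stripping the base64 padding is an assumption made because "=" is not a valid character in a websocket subprotocol name.

```python
import base64

# Prefix taken from the list above; the encoded token is appended to it.
SUBPROTOCOL_PREFIX = "base64url.bearer.authorization.k8s.io."


def bearer_subprotocol(token: str) -> str:
    """Return the websocket subprotocol value that carries an OAuth token."""
    encoded = base64.urlsafe_b64encode(token.encode("utf-8")).decode("ascii")
    # Assumption: trailing "=" padding is removed before sending.
    return SUBPROTOCOL_PREFIX + encoded.rstrip("=")


def authorization_header(token: str) -> dict:
    """Return the header used for ordinary (non-websocket) API requests."""
    return {"Authorization": "Bearer " + token}
```

A websocket client would pass the value returned by bearer_subprotocol as one of its requested subprotocols, while plain HTTPS requests only need the header from authorization_header.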

Any request with an invalid access token or an invalid certificate is rejected by the authentication layer with a 401 error.

If no access token or certificate is presented, the authentication layer assigns the system:anonymous virtual user and the system:unauthenticated virtual group to the request. This allows the authorization layer to determine which requests, if any, an anonymous user is allowed to make.

4.1.3.1. Impersonation

A request to the OpenShift Container Platform API can include an Impersonate-User header, which indicates that the requester wants to have the request handled as though it came from the specified user. You impersonate a user by adding the --as=<user> flag to requests.

Before User A can impersonate User B, User A is authenticated. Then, an authorization check occurs to ensure that User A is allowed to impersonate the user named User B. If User A is requesting to impersonate a service account, system:serviceaccount:namespace:name, OpenShift Container Platform confirms that User A can impersonate the serviceaccount named name in namespace. If the check fails, the request fails with a 403 (Forbidden) error code.
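To make the service account case concrete, the following sketch splits an impersonation target of the form system:serviceaccount:namespace:name into the namespace and name that the authorization check is performed against. The helper name is hypothetical and the parsing rules are an assumption based only on the format described above.

```python
from typing import Optional, Tuple

SERVICE_ACCOUNT_PREFIX = "system:serviceaccount:"


def parse_service_account(username: str) -> Optional[Tuple[str, str]]:
    """Return (namespace, name) for a service account user name, else None."""
    if not username.startswith(SERVICE_ACCOUNT_PREFIX):
        return None  # a regular user, not a service account
    parts = username[len(SERVICE_ACCOUNT_PREFIX):].split(":")
    # Exactly two non-empty components are expected: namespace and name.
    if len(parts) != 2 or not all(parts):
        return None
    return parts[0], parts[1]
```

For example, system:serviceaccount:myproject:proxy yields the namespace myproject and the name proxy, whereas a plain user name such as alice yields None and is checked as a regular user.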

By default, project administrators and editors can impersonate service accounts in their namespace. The sudoer role allows a user to impersonate system:admin, which in turn has cluster administrator permissions. Requiring impersonation of system:admin protects someone administering the cluster against typos, but it is not a security measure. For example, running oc delete nodes --all fails, but running oc delete nodes --all --as=system:admin succeeds. You can grant a user that permission by running this command:

$ oc create clusterrolebinding <any_valid_name> --clusterrole=sudoer --user=<username>

If you need to create a project request on behalf of a user, include the --as=<user> --as-group=<group1> --as-group=<group2> flags in your command. Because system:authenticated:oauth is the only bootstrap group that can create project requests, you must impersonate that group, as shown in the following example:

$ oc new-project <project> --as=<user> \
    --as-group=system:authenticated --as-group=system:authenticated:oauth

4.1.4. OAuth

The OpenShift Container Platform master includes a built-in OAuth server. Users obtain OAuth access tokens to authenticate themselves to the API.

When a person requests a new OAuth token, the OAuth server uses the configured identity provider to determine the identity of the person making the request.

It then determines what user that identity maps to, creates an access token for that user, and returns the token for use.

4.1.4.1. OAuth Clients

Every request for an OAuth token must specify the OAuth client that will receive and use the token. The following OAuth clients are automatically created when starting the OpenShift Container Platform API:

| OAuth Client | Usage |
|---|---|
| openshift-web-console | Requests tokens for the web console. |
| openshift-browser-client | Requests tokens at |
| openshift-challenging-client | Requests tokens with a user-agent that can handle |

To register additional clients:

$ oc create -f <(echo '
kind: OAuthClient
apiVersion: oauth.openshift.io/v1
metadata:
  name: demo
secret: "..."
redirectURIs:
  - "http://www.example.com/"
grantMethod: prompt
')

- The name of the OAuth client is used as the client_id parameter when making requests to <master>/oauth/authorize and <master>/oauth/token.
- The secret is used as the client_secret parameter when making requests to <master>/oauth/token.
- The redirect_uri parameter specified in requests to <master>/oauth/authorize and <master>/oauth/token must be equal to (or prefixed by) one of the URIs in redirectURIs.
- The grantMethod is used to determine what action to take when this client requests tokens and has not yet been granted access by the user. Uses the same values seen in Grant Options.
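The "equal to (or prefixed by)" rule for redirect_uri can be illustrated with a short check. This is a simplified sketch with a hypothetical function name; the OAuth server may apply additional validation that is not described here.

```python
from typing import Iterable


def redirect_uri_allowed(redirect_uri: str, registered_uris: Iterable[str]) -> bool:
    """Return True if redirect_uri equals, or starts with, a registered URI."""
    return any(
        redirect_uri == uri or redirect_uri.startswith(uri)
        for uri in registered_uris
    )
```

With the demo client above, http://www.example.com/callback would be accepted because it is prefixed by the registered http://www.example.com/, while a URI on a different host would be rejected.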

4.1.4.2. Service Accounts as OAuth Clients

A service account can be used as a constrained form of OAuth client. Service accounts can only request a subset of scopes that allow access to some basic user information and role-based power inside of the service account’s own namespace:

- user:info
- user:check-access
- role:<any_role>:<serviceaccount_namespace>
- role:<any_role>:<serviceaccount_namespace>:!

When using a service account as an OAuth client:

- client_id is system:serviceaccount:<serviceaccount_namespace>:<serviceaccount_name>.
- client_secret can be any of the API tokens for that service account. For example:

  $ oc sa get-token <serviceaccount_name>

- To get WWW-Authenticate challenges, set a serviceaccounts.openshift.io/oauth-want-challenges annotation on the service account to true.
- redirect_uri must match an annotation on the service account. Redirect URIs for Service Accounts as OAuth Clients provides more information.

4.1.4.3. Redirect URIs for Service Accounts as OAuth Clients

Annotation keys must have the prefix serviceaccounts.openshift.io/oauth-redirecturi. or serviceaccounts.openshift.io/oauth-redirectreference. such as:

serviceaccounts.openshift.io/oauth-redirecturi.<name>

In its simplest form, the annotation can be used to directly specify valid redirect URIs. For example:

"serviceaccounts.openshift.io/oauth-redirecturi.first": "https://example.com"

"serviceaccounts.openshift.io/oauth-redirecturi.second": "https://other.com"

The first and second postfixes in the above example are used to separate the two valid redirect URIs.

In more complex configurations, static redirect URIs may not be enough. For example, perhaps you want all ingresses for a route to be considered valid. This is where dynamic redirect URIs via the serviceaccounts.openshift.io/oauth-redirectreference. prefix come into play.

For example:

"serviceaccounts.openshift.io/oauth-redirectreference.first": "{\"kind\":\"OAuthRedirectReference\",\"apiVersion\":\"v1\",\"reference\":{\"kind\":\"Route\",\"name\":\"jenkins\"}}"

Since the value for this annotation contains serialized JSON data, it is easier to see in an expanded format:

{
  "kind": "OAuthRedirectReference",
  "apiVersion": "v1",
  "reference": {
    "kind": "Route",
    "name": "jenkins"
  }
}

The expanded form shows that the OAuthRedirectReference references the route named jenkins, so all ingresses for that route are now considered valid. The full specification for an OAuthRedirectReference is:

{
  "kind": "OAuthRedirectReference",
  "apiVersion": "v1",
  "reference": {
    "kind": ...,
    "name": ...,
    "group": ...
  }
}

- kind refers to the type of the object being referenced. Currently, only route is supported.
- name refers to the name of the object. The object must be in the same namespace as the service account.
- group refers to the group of the object. Leave this blank, as the group for a route is the empty string.

Both annotation prefixes can be combined to override the data provided by the reference object. For example:

"serviceaccounts.openshift.io/oauth-redirecturi.first": "custompath"

"serviceaccounts.openshift.io/oauth-redirectreference.first": "{\"kind\":\"OAuthRedirectReference\",\"apiVersion\":\"v1\",\"reference\":{\"kind\":\"Route\",\"name\":\"jenkins\"}}"

The first postfix is used to tie the annotations together. Assuming that the jenkins route had an ingress of https://example.com, now https://example.com/custompath is considered valid, but https://example.com is not. The format for partially supplying override data is as follows:

| Type | Syntax |

|---|---|

| Scheme | "https://" |

| Hostname | "//website.com" |

| Port | "//:8000" |

| Path | "examplepath" |

Specifying a host name override will replace the host name data from the referenced object, which is not likely to be desired behavior.

Any combination of the above syntax can be combined using the following format:

<scheme:>//<hostname><:port>/<path>

The same object can be referenced more than once for more flexibility:

"serviceaccounts.openshift.io/oauth-redirecturi.first": "custompath"

"serviceaccounts.openshift.io/oauth-redirectreference.first": "{\"kind\":\"OAuthRedirectReference\",\"apiVersion\":\"v1\",\"reference\":{\"kind\":\"Route\",\"name\":\"jenkins\"}}"

"serviceaccounts.openshift.io/oauth-redirecturi.second": "//:8000"

"serviceaccounts.openshift.io/oauth-redirectreference.second": "{\"kind\":\"OAuthRedirectReference\",\"apiVersion\":\"v1\",\"reference\":{\"kind\":\"Route\",\"name\":\"jenkins\"}}"

Assuming that the route named jenkins has an ingress of https://example.com, then both https://example.com:8000 and https://example.com/custompath are considered valid.
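The way a partial override combines with the ingress of the referenced route can be sketched as follows. This is not the server's implementation: the function name is hypothetical, and the merge logic is an assumption that only aims to reproduce the override syntax and examples given above.

```python
from urllib.parse import urlsplit, urlunsplit


def apply_override(ingress_url: str, override: str) -> str:
    """Overlay a partial override (scheme, host, port, or path) on an ingress URL."""
    base = urlsplit(ingress_url)
    over = urlsplit(override)

    # Each component of the override wins if present; otherwise keep the ingress value.
    scheme = over.scheme or base.scheme
    host = over.hostname or base.hostname or ""
    port = over.port or base.port
    path = over.path or base.path
    if path and not path.startswith("/"):
        path = "/" + path  # "custompath" becomes "/custompath"

    netloc = host if port is None else f"{host}:{port}"
    return urlunsplit((scheme, netloc, path, "", ""))
```

Under these assumptions, an ingress of https://example.com combined with the override custompath gives https://example.com/custompath, and combined with //:8000 gives https://example.com:8000, matching the two results stated above.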

Static and dynamic annotations can be used at the same time to achieve the desired behavior:

"serviceaccounts.openshift.io/oauth-redirectreference.first": "{\"kind\":\"OAuthRedirectReference\",\"apiVersion\":\"v1\",\"reference\":{\"kind\":\"Route\",\"name\":\"jenkins\"}}"

"serviceaccounts.openshift.io/oauth-redirecturi.second": "https://other.com"

4.1.4.3.1. API Events for OAuth

In some cases the API server returns an unexpected condition error message that is difficult to debug without direct access to the API master log. The underlying reason for the error is purposely obscured in order to avoid providing an unauthenticated user with information about the server’s state.

A subset of these errors is related to service account OAuth configuration issues. These issues are captured in events that can be viewed by non-administrator users. When encountering an unexpected condition server error during OAuth, run oc get events to view these events under ServiceAccount.

The following example warns of a service account that is missing a proper OAuth redirect URI:

$ oc get events | grep ServiceAccount

1m 1m 1 proxy ServiceAccount Warning NoSAOAuthRedirectURIs service-account-oauth-client-getter system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>

Running oc describe sa/<service-account-name> reports any OAuth events associated with the given service account name.

$ oc describe sa/proxy | grep -A5 Events

Events:

FirstSeen LastSeen Count From SubObjectPath Type Reason Message

--------- -------- ----- ---- ------------- -------- ------ -------

3m 3m 1 service-account-oauth-client-getter Warning NoSAOAuthRedirectURIs system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>

The following is a list of the possible event errors:

No redirect URI annotations or an invalid URI is specified

| Reason | Message |
|---|---|
| NoSAOAuthRedirectURIs | system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference> |

Invalid route specified

| Reason | Message |
|---|---|
| NoSAOAuthRedirectURIs | [routes.route.openshift.io "<name>" not found, system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>] |

Invalid reference type specified

| Reason | Message |
|---|---|
| NoSAOAuthRedirectURIs | [no kind "<name>" is registered for version "v1", system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>] |

Missing SA tokens

| Reason | Message |
|---|---|
| NoSAOAuthTokens | system:serviceaccount:myproject:proxy has no tokens |

4.1.4.3.1.1. Sample API Event Caused by a Possible Misconfiguration

The following steps represent one way a user could get into a broken state and how to debug or fix the issue:

Create a project utilizing a service account as an OAuth client.

Create YAML for a proxy service account object and ensure it uses the route proxy:

vi serviceaccount.yaml

Add the following sample code:

apiVersion: v1
kind: ServiceAccount
metadata:
  name: proxy
  annotations:
    serviceaccounts.openshift.io/oauth-redirectreference.primary: '{"kind":"OAuthRedirectReference","apiVersion":"v1","reference":{"kind":"Route","name":"proxy"}}'

Create YAML for a route object to create a secure connection to the proxy:

vi route.yaml

Add the following sample code:

apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: proxy
spec:
  to:
    name: proxy
  tls:
    termination: Reencrypt
---
apiVersion: v1
kind: Service
metadata:
  name: proxy
  annotations:
    service.alpha.openshift.io/serving-cert-secret-name: proxy-tls
spec:
  ports:
  - name: proxy
    port: 443
    targetPort: 8443
  selector:
    app: proxy

Create a YAML for a deployment configuration to launch a proxy as a sidecar:

vi proxysidecar.yaml

Add the following sample code:

apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: proxy
spec:
  replicas: 1
  selector:
    matchLabels:
      app: proxy
  template:
    metadata:
      labels:
        app: proxy
    spec:
      serviceAccountName: proxy
      containers:
      - name: oauth-proxy
        image: openshift3/oauth-proxy
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 8443
          name: public
        args:
        - --https-address=:8443
        - --provider=openshift
        - --openshift-service-account=proxy
        - --upstream=http://localhost:8080
        - --tls-cert=/etc/tls/private/tls.crt
        - --tls-key=/etc/tls/private/tls.key
        - --cookie-secret=SECRET
        volumeMounts:
        - mountPath: /etc/tls/private
          name: proxy-tls
      - name: app
        image: openshift/hello-openshift:latest
      volumes:
      - name: proxy-tls
        secret:
          secretName: proxy-tls

Create the objects:

oc create -f serviceaccount.yaml
oc create -f route.yaml
oc create -f proxysidecar.yaml

Run oc edit sa/proxy to edit the service account and change the serviceaccounts.openshift.io/oauth-redirectreference annotation to point to a Route that does not exist.

apiVersion: v1
imagePullSecrets:
- name: proxy-dockercfg-08d5n
kind: ServiceAccount
metadata:
  annotations:
    serviceaccounts.openshift.io/oauth-redirectreference.primary: '{"kind":"OAuthRedirectReference","apiVersion":"v1","reference":{"kind":"Route","name":"notexist"}}'
...

Review the OAuth log for the service to locate the server error:

The authorization server encountered an unexpected condition that prevented it from fulfilling the request.

Run oc get events to view the ServiceAccount event:

oc get events | grep ServiceAccount

23m 23m 1 proxy ServiceAccount Warning NoSAOAuthRedirectURIs service-account-oauth-client-getter [routes.route.openshift.io "notexist" not found, system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>]

4.1.4.4. Integrations

All requests for OAuth tokens involve a request to <master>/oauth/authorize. Most authentication integrations place an authenticating proxy in front of this endpoint, or configure OpenShift Container Platform to validate credentials against a backing identity provider. Requests to <master>/oauth/authorize can come from user-agents that cannot display interactive login pages, such as the CLI. Therefore, OpenShift Container Platform supports authenticating using a WWW-Authenticate challenge in addition to interactive login flows.

If an authenticating proxy is placed in front of the <master>/oauth/authorize endpoint, it should send unauthenticated, non-browser user-agents WWW-Authenticate challenges, rather than displaying an interactive login page or redirecting to an interactive login flow.

To prevent cross-site request forgery (CSRF) attacks against browser clients, Basic authentication challenges should only be sent if an X-CSRF-Token header is present on the request. Clients that expect to receive Basic WWW-Authenticate challenges should set this header to a non-empty value.
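For an authenticating proxy, the CSRF rule for Basic challenges amounts to a header check before the challenge is issued. The sketch below assumes the request headers are available as a plain dictionary; the function name is hypothetical, and it also requires a non-empty value, which is the value clients are told to send.

```python
from typing import Mapping


def may_send_basic_challenge(request_headers: Mapping[str, str]) -> bool:
    """Return True only if the request carries a non-empty X-CSRF-Token header."""
    for name, value in request_headers.items():
        # HTTP header names are case-insensitive.
        if name.lower() == "x-csrf-token" and value:
            return True
    return False
```

A request sent with -H "X-CSRF-Token: xxx" would pass this check, while a browser request without the header would not receive a Basic challenge.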

If the authenticating proxy cannot support WWW-Authenticate challenges, or if OpenShift Container Platform is configured to use an identity provider that does not support WWW-Authenticate challenges, users can visit <master>/oauth/token/request using a browser to obtain an access token manually.

4.1.4.5. OAuth Server Metadata

Applications running in OpenShift Container Platform may need to discover information about the built-in OAuth server. For example, they may need to discover what the address of the <master> server is without manual configuration. To aid in this, OpenShift Container Platform implements the IETF OAuth 2.0 Authorization Server Metadata draft specification.

Thus, any application running inside the cluster can issue a GET request to https://openshift.default.svc/.well-known/oauth-authorization-server to fetch the following information:

{
  "issuer": "https://<master>",
  "authorization_endpoint": "https://<master>/oauth/authorize",
  "token_endpoint": "https://<master>/oauth/token",
  "scopes_supported": [
    "user:full",
    "user:info",
    "user:check-access",
    "user:list-scoped-projects",
    "user:list-projects"
  ],
  "response_types_supported": [
    "code",
    "token"
  ],
  "grant_types_supported": [
    "authorization_code",
    "implicit"
  ],
  "code_challenge_methods_supported": [
    "plain",
    "S256"
  ]
}

- issuer: The authorization server’s issuer identifier, which is a URL that uses the https scheme and has no query or fragment components. This is the location where .well-known RFC 5785 resources containing information about the authorization server are published.
- authorization_endpoint: URL of the authorization server’s authorization endpoint. See RFC 6749.
- token_endpoint: URL of the authorization server’s token endpoint. See RFC 6749.
- scopes_supported: JSON array containing a list of the OAuth 2.0 RFC 6749 scope values that this authorization server supports. Note that not all supported scope values are advertised.
- response_types_supported: JSON array containing a list of the OAuth 2.0 response_type values that this authorization server supports. The array values used are the same as those used with the response_types parameter defined by "OAuth 2.0 Dynamic Client Registration Protocol" in RFC 7591.
- grant_types_supported: JSON array containing a list of the OAuth 2.0 grant type values that this authorization server supports. The array values used are the same as those used with the grant_types parameter defined by "OAuth 2.0 Dynamic Client Registration Protocol" in RFC 7591.
- code_challenge_methods_supported: JSON array containing a list of PKCE RFC 7636 code challenge methods supported by this authorization server. Code challenge method values are used in the code_challenge_method parameter defined in Section 4.3 of RFC 7636. The valid code challenge method values are those registered in the IANA PKCE Code Challenge Methods registry. See IANA OAuth Parameters.
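An application that has fetched this metadata document can use it to build its authorization request instead of hard-coding the master address. The following sketch works on an already-parsed document; the function name is hypothetical and the sample host master.example.com is a placeholder, not a value from this guide.

```python
import json
from urllib.parse import urlencode

# Trimmed sample of the metadata document; the host name is a placeholder.
SAMPLE_METADATA = json.loads("""
{
  "issuer": "https://master.example.com",
  "authorization_endpoint": "https://master.example.com/oauth/authorize",
  "token_endpoint": "https://master.example.com/oauth/token",
  "response_types_supported": ["code", "token"]
}
""")


def build_authorize_url(metadata: dict, client_id: str, response_type: str) -> str:
    """Build an authorization request URL from discovered server metadata."""
    if response_type not in metadata.get("response_types_supported", []):
        raise ValueError(f"response_type {response_type!r} is not advertised")
    query = urlencode({"client_id": client_id, "response_type": response_type})
    return metadata["authorization_endpoint"] + "?" + query
```

Checking response_types_supported first lets the application fail early with a clear message rather than sending a request the server does not advertise support for.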

4.1.4.6. Obtaining OAuth Tokens

The OAuth server supports standard authorization code grant and the implicit grant OAuth authorization flows.

Run the following command to request an OAuth token by using the authorization code grant method:

$ curl -H "X-Remote-User: <username>" \
    --cacert /etc/origin/master/ca.crt \
    --cert /etc/origin/master/admin.crt \
    --key /etc/origin/master/admin.key \
    -I https://<master-address>/oauth/authorize?response_type=token\&client_id=openshift-challenging-client | grep -oP "access_token=\K[^&]*"

When requesting an OAuth token using the implicit grant flow (response_type=token) with a client_id configured to request WWW-Authenticate challenges (like openshift-challenging-client), these are the possible server responses from /oauth/authorize, and how they should be handled:

| Status | Content | Client response |
|---|---|---|
| 302 | | Use the |
| 302 | | Fail, optionally surfacing the |
| 302 | Other | Follow the redirect, and process the result using these rules |
| 401 | | Respond to challenge if type is recognized (e.g. |
| 401 | | No challenge authentication is possible. Fail and show response body (which might contain links or details on alternate methods to obtain an OAuth token) |
| Other | Other | Fail, optionally surfacing response body to the user |

To request an OAuth token using the implicit grant flow:

$ curl -u <username>:<password> \
    'https://<master-address>:8443/oauth/authorize?client_id=openshift-challenging-client&response_type=token' -skv \
    -H "X-CSRF-Token: xxx"

* Trying 10.64.33.43...

* Connected to 10.64.33.43 (10.64.33.43) port 8443 (#0)

* found 148 certificates in /etc/ssl/certs/ca-certificates.crt

* found 592 certificates in /etc/ssl/certs

* ALPN, offering http/1.1

* SSL connection using TLS1.2 / ECDHE_RSA_AES_128_GCM_SHA256

* server certificate verification SKIPPED

* server certificate status verification SKIPPED

* common name: 10.64.33.43 (matched)

* server certificate expiration date OK

* server certificate activation date OK

* certificate public key: RSA

* certificate version: #3

* subject: CN=10.64.33.43

* start date: Thu, 09 Aug 2018 04:00:39 GMT

* expire date: Sat, 08 Aug 2020 04:00:40 GMT

* issuer: CN=openshift-signer@1531109367

* compression: NULL

* ALPN, server accepted to use http/1.1

* Server auth using Basic with user 'developer'

> GET /oauth/authorize?client_id=openshift-challenging-client&response_type=token HTTP/1.1

> Host: 10.64.33.43:8443

> Authorization: Basic ZGV2ZWxvcGVyOmRzc2Zkcw==

> User-Agent: curl/7.47.0

> Accept: */*

> X-CSRF-Token: xxx

>

< HTTP/1.1 302 Found

< Cache-Control: no-cache, no-store, max-age=0, must-revalidate

< Expires: Fri, 01 Jan 1990 00:00:00 GMT

< Location:

https://10.64.33.43:8443/oauth/token/implicit#access_token=gzTwOq_mVJ7ovHliHBTgRQEEXa1aCZD9lnj7lSw3ekQ&expires_in=86400&scope=user%3Afull&token_type=Bearer

< Pragma: no-cache

< Set-Cookie: ssn=MTUzNTk0OTc1MnxIckVfNW5vNFlLSlF5MF9GWEF6Zm55Vl95bi1ZNE41S1NCbFJMYnN1TWVwR1hwZmlLMzFQRklzVXRkc0RnUGEzdnBEa0NZZndXV2ZUVzN1dmFPM2dHSUlzUmVXakQ3Q09rVXpxNlRoVmVkQU5DYmdLTE9SUWlyNkJJTm1mSDQ0N2pCV09La3gzMkMzckwxc1V1QXpybFlXT2ZYSmI2R2FTVEZsdDBzRjJ8vk6zrQPjQUmoJCqb8Dt5j5s0b4wZlITgKlho9wlKAZI=; Path=/; HttpOnly; Secure

< Date: Mon, 03 Sep 2018 04:42:32 GMT

< Content-Length: 0

< Content-Type: text/plain; charset=utf-8

<

* Connection #0 to host 10.64.33.43 left intact

To view only the OAuth token value, run the following command:

$ curl -u <username>:<password> \
    'https://<master-address>:8443/oauth/authorize?client_id=openshift-challenging-client&response_type=token' \
    -skv -H "X-CSRF-Token: xxx" --stderr - | grep -oP "access_token=\K[^&]*"

hvqxe5aMlAzvbqfM2WWw3D6tR0R2jCQGKx0viZBxwmc
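The grep expression above pulls the access_token value out of the Location header of the 302 response. A client written in code can do the same by parsing the URL fragment, as in this minimal sketch; the function name is hypothetical, and obtaining the Location header from the HTTP response is left to the caller.

```python
from typing import Optional
from urllib.parse import parse_qs, urlsplit


def token_from_location(location: str) -> Optional[str]:
    """Return the access_token carried in the URL fragment, or None if absent."""
    fragment = urlsplit(location).fragment
    values = parse_qs(fragment).get("access_token")
    return values[0] if values else None
```

A None result means the redirect did not carry a token in its fragment, in which case the client should treat the response according to the other cases rather than assume success.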

You can also use the authorization code grant method to request a token.

4.1.4.7. Authentication Metrics for Prometheus

OpenShift Container Platform captures the following Prometheus system metrics during authentication attempts:

- openshift_auth_basic_password_count counts the number of oc login user name and password attempts.
- openshift_auth_basic_password_count_result counts the number of oc login user name and password attempts by result (success or error).
- openshift_auth_form_password_count counts the number of web console login attempts.
- openshift_auth_form_password_count_result counts the number of web console login attempts by result (success or error).
- openshift_auth_password_total counts the total number of oc login and web console login attempts.

4.2. Authorization

4.2.1. Overview

Role-based Access Control (RBAC) objects determine whether a user is allowed to perform a given action within a project.

This allows platform administrators to use the cluster roles and bindings to control who has various access levels to OpenShift Container Platform itself and all projects.

It allows developers to use local roles and bindings to control who has access to their projects. Note that authorization is a separate step from authentication, which is more about determining the identity of who is taking the action.

Authorization is managed using:

| Rules | Sets of permitted verbs on a set of objects. For example, whether something can |
| Roles | Collections of rules. Users and groups can be associated with, or bound to, multiple roles at the same time. |
| Bindings | Associations between users and/or groups with a role. |
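The relationship between rules, roles, and bindings can be modeled in a few lines. This is a conceptual sketch only: the class and function names are hypothetical, and it ignores projects, API groups, resource names, and the other details that the real authorizer evaluates.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Iterable, List, Set


@dataclass(frozen=True)
class Rule:
    verbs: FrozenSet[str]       # for example {"get", "list", "watch"}
    resources: FrozenSet[str]   # for example {"pods"}


@dataclass
class Role:
    name: str
    rules: List[Rule]           # a role is a collection of rules


@dataclass
class Binding:
    role: Role                  # a binding ties users and groups to one role
    users: Set[str] = field(default_factory=set)
    groups: Set[str] = field(default_factory=set)


def is_allowed(bindings: Iterable[Binding], user: str, groups: Iterable[str],
               verb: str, resource: str) -> bool:
    """Return True if any binding that covers the user grants verb on resource."""
    user_groups = set(groups)
    for binding in bindings:
        if user in binding.users or binding.groups & user_groups:
            for rule in binding.role.rules:
                if verb in rule.verbs and resource in rule.resources:
                    return True
    return False
```

Because a user can be covered by several bindings, the check succeeds as soon as any one bound role contains a matching rule; there is no rule that takes permission away in this model.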

Cluster administrators can visualize rules, roles, and bindings using the CLI.

For example, consider the following excerpt that shows the rule sets for the admin and basic-user default cluster roles:

$ oc describe clusterrole.rbac admin basic-user

Name: admin

Labels: <none>

Annotations: openshift.io/description=A user that has edit rights within the project and can change the project's membership.

rbac.authorization.kubernetes.io/autoupdate=true

PolicyRule:

Resources Non-Resource URLs Resource Names Verbs

--------- ----------------- -------------- -----

appliedclusterresourcequotas [] [] [get list watch]

appliedclusterresourcequotas.quota.openshift.io [] [] [get list watch]

bindings [] [] [get list watch]

buildconfigs [] [] [create delete deletecollection get list patch update watch]

buildconfigs.build.openshift.io [] [] [create delete deletecollection get list patch update watch]

buildconfigs/instantiate [] [] [create]

buildconfigs.build.openshift.io/instantiate [] [] [create]

buildconfigs/instantiatebinary [] [] [create]

buildconfigs.build.openshift.io/instantiatebinary [] [] [create]

buildconfigs/webhooks [] [] [create delete deletecollection get list patch update watch]

buildconfigs.build.openshift.io/webhooks [] [] [create delete deletecollection get list patch update watch]

buildlogs [] [] [create delete deletecollection get list patch update watch]

buildlogs.build.openshift.io [] [] [create delete deletecollection get list patch update watch]

builds [] [] [create delete deletecollection get list patch update watch]

builds.build.openshift.io [] [] [create delete deletecollection get list patch update watch]

builds/clone [] [] [create]

builds.build.openshift.io/clone [] [] [create]

builds/details [] [] [update]

builds.build.openshift.io/details [] [] [update]

builds/log [] [] [get list watch]

builds.build.openshift.io/log [] [] [get list watch]

configmaps [] [] [create delete deletecollection get list patch update watch]

cronjobs.batch [] [] [create delete deletecollection get list patch update watch]

daemonsets.extensions [] [] [get list watch]

deploymentconfigrollbacks [] [] [create]

deploymentconfigrollbacks.apps.openshift.io [] [] [create]

deploymentconfigs [] [] [create delete deletecollection get list patch update watch]

deploymentconfigs.apps.openshift.io [] [] [create delete deletecollection get list patch update watch]

deploymentconfigs/instantiate [] [] [create]

deploymentconfigs.apps.openshift.io/instantiate [] [] [create]

deploymentconfigs/log [] [] [get list watch]

deploymentconfigs.apps.openshift.io/log [] [] [get list watch]

deploymentconfigs/rollback [] [] [create]

deploymentconfigs.apps.openshift.io/rollback [] [] [create]

deploymentconfigs/scale [] [] [create delete deletecollection get list patch update watch]

deploymentconfigs.apps.openshift.io/scale [] [] [create delete deletecollection get list patch update watch]

deploymentconfigs/status [] [] [get list watch]

deploymentconfigs.apps.openshift.io/status [] [] [get list watch]

deployments.apps [] [] [create delete deletecollection get list patch update watch]

deployments.extensions [] [] [create delete deletecollection get list patch update watch]

deployments.extensions/rollback [] [] [create delete deletecollection get list patch update watch]

deployments.apps/scale [] [] [create delete deletecollection get list patch update watch]

deployments.extensions/scale [] [] [create delete deletecollection get list patch update watch]

deployments.apps/status [] [] [create delete deletecollection get list patch update watch]

endpoints [] [] [create delete deletecollection get list patch update watch]

events [] [] [get list watch]

horizontalpodautoscalers.autoscaling [] [] [create delete deletecollection get list patch update watch]

horizontalpodautoscalers.extensions [] [] [create delete deletecollection get list patch update watch]

imagestreamimages [] [] [create delete deletecollection get list patch update watch]

imagestreamimages.image.openshift.io [] [] [create delete deletecollection get list patch update watch]

imagestreamimports [] [] [create]

imagestreamimports.image.openshift.io [] [] [create]

imagestreammappings [] [] [create delete deletecollection get list patch update watch]

imagestreammappings.image.openshift.io [] [] [create delete deletecollection get list patch update watch]

imagestreams [] [] [create delete deletecollection get list patch update watch]

imagestreams.image.openshift.io [] [] [create delete deletecollection get list patch update watch]

imagestreams/layers [] [] [get update]

imagestreams.image.openshift.io/layers [] [] [get update]

imagestreams/secrets [] [] [create delete deletecollection get list patch update watch]

imagestreams.image.openshift.io/secrets [] [] [create delete deletecollection get list patch update watch]

imagestreams/status [] [] [get list watch]

imagestreams.image.openshift.io/status [] [] [get list watch]

imagestreamtags [] [] [create delete deletecollection get list patch update watch]

imagestreamtags.image.openshift.io [] [] [create delete deletecollection get list patch update watch]

jenkins.build.openshift.io [] [] [admin edit view]

jobs.batch [] [] [create delete deletecollection get list patch update watch]

limitranges [] [] [get list watch]

localresourceaccessreviews [] [] [create]

localresourceaccessreviews.authorization.openshift.io [] [] [create]

localsubjectaccessreviews [] [] [create]

localsubjectaccessreviews.authorization.k8s.io [] [] [create]

localsubjectaccessreviews.authorization.openshift.io [] [] [create]

namespaces [] [] [get list watch]

namespaces/status [] [] [get list watch]

networkpolicies.extensions [] [] [create delete deletecollection get list patch update watch]

persistentvolumeclaims [] [] [create delete deletecollection get list patch update watch]

pods [] [] [create delete deletecollection get list patch update watch]

pods/attach [] [] [create delete deletecollection get list patch update watch]

pods/exec [] [] [create delete deletecollection get list patch update watch]

pods/log [] [] [get list watch]

pods/portforward [] [] [create delete deletecollection get list patch update watch]

pods/proxy [] [] [create delete deletecollection get list patch update watch]

pods/status [] [] [get list watch]

podsecuritypolicyreviews [] [] [create]

podsecuritypolicyreviews.security.openshift.io [] [] [create]

podsecuritypolicyselfsubjectreviews [] [] [create]

podsecuritypolicyselfsubjectreviews.security.openshift.io [] [] [create]

podsecuritypolicysubjectreviews [] [] [create]

podsecuritypolicysubjectreviews.security.openshift.io [] [] [create]

processedtemplates [] [] [create delete deletecollection get list patch update watch]

processedtemplates.template.openshift.io [] [] [create delete deletecollection get list patch update watch]

projects [] [] [delete get patch update]

projects.project.openshift.io [] [] [delete get patch update]

replicasets.extensions [] [] [create delete deletecollection get list patch update watch]

replicasets.extensions/scale [] [] [create delete deletecollection get list patch update watch]

replicationcontrollers [] [] [create delete deletecollection get list patch update watch]

replicationcontrollers/scale [] [] [create delete deletecollection get list patch update watch]

replicationcontrollers.extensions/scale [] [] [create delete deletecollection get list patch update watch]

replicationcontrollers/status [] [] [get list watch]

resourceaccessreviews [] [] [create]

resourceaccessreviews.authorization.openshift.io [] [] [create]

resourcequotas [] [] [get list watch]

resourcequotas/status [] [] [get list watch]

resourcequotausages [] [] [get list watch]

rolebindingrestrictions [] [] [get list watch]

rolebindingrestrictions.authorization.openshift.io [] [] [get list watch]

rolebindings [] [] [create delete deletecollection get list patch update watch]

rolebindings.authorization.openshift.io [] [] [create delete deletecollection get list patch update watch]

rolebindings.rbac.authorization.k8s.io [] [] [create delete deletecollection get list patch update watch]

roles [] [] [create delete deletecollection get list patch update watch]

roles.authorization.openshift.io [] [] [create delete deletecollection get list patch update watch]

roles.rbac.authorization.k8s.io [] [] [create delete deletecollection get list patch update watch]

routes [] [] [create delete deletecollection get list patch update watch]

routes.route.openshift.io [] [] [create delete deletecollection get list patch update watch]

routes/custom-host [] [] [create]

routes.route.openshift.io/custom-host [] [] [create]

routes/status [] [] [get list watch update]

routes.route.openshift.io/status [] [] [get list watch update]

scheduledjobs.batch [] [] [create delete deletecollection get list patch update watch]

secrets [] [] [create delete deletecollection get list patch update watch]

serviceaccounts [] [] [create delete deletecollection get list patch update watch impersonate]

services [] [] [create delete deletecollection get list patch update watch]

services/proxy [] [] [create delete deletecollection get list patch update watch]

statefulsets.apps [] [] [create delete deletecollection get list patch update watch]

subjectaccessreviews [] [] [create]

subjectaccessreviews.authorization.openshift.io [] [] [create]

subjectrulesreviews [] [] [create]

subjectrulesreviews.authorization.openshift.io [] [] [create]

templateconfigs [] [] [create delete deletecollection get list patch update watch]

templateconfigs.template.openshift.io [] [] [create delete deletecollection get list patch update watch]

templateinstances [] [] [create delete deletecollection get list patch update watch]

templateinstances.template.openshift.io [] [] [create delete deletecollection get list patch update watch]

templates [] [] [create delete deletecollection get list patch update watch]

templates.template.openshift.io [] [] [create delete deletecollection get list patch update watch]

Name: basic-user

Labels: <none>

Annotations: openshift.io/description=A user that can get basic information about projects.

rbac.authorization.kubernetes.io/autoupdate=true

PolicyRule:

Resources Non-Resource URLs Resource Names Verbs

--------- ----------------- -------------- -----

clusterroles [] [] [get list]

clusterroles.authorization.openshift.io [] [] [get list]

clusterroles.rbac.authorization.k8s.io [] [] [get list watch]

projectrequests [] [] [list]

projectrequests.project.openshift.io [] [] [list]

projects [] [] [list watch]

projects.project.openshift.io [] [] [list watch]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews [] [] [create]

selfsubjectrulesreviews.authorization.openshift.io [] [] [create]

storageclasses.storage.k8s.io [] [] [get list]

users [] [~] [get]

users.user.openshift.io [] [~] [get]

The following excerpt from viewing local role bindings shows the above roles bound to various users and groups:

$ oc describe rolebinding.rbac admin basic-user -n alice-project

Name: admin

Labels: <none>

Annotations: <none>

Role:

Kind: ClusterRole

Name: admin

Subjects:

Kind Name Namespace

---- ---- ---------

User system:admin

User alice

Name: basic-user

Labels: <none>

Annotations: <none>

Role:

Kind: ClusterRole

Name: basic-user

Subjects:

Kind Name Namespace

---- ---- ---------

User joe

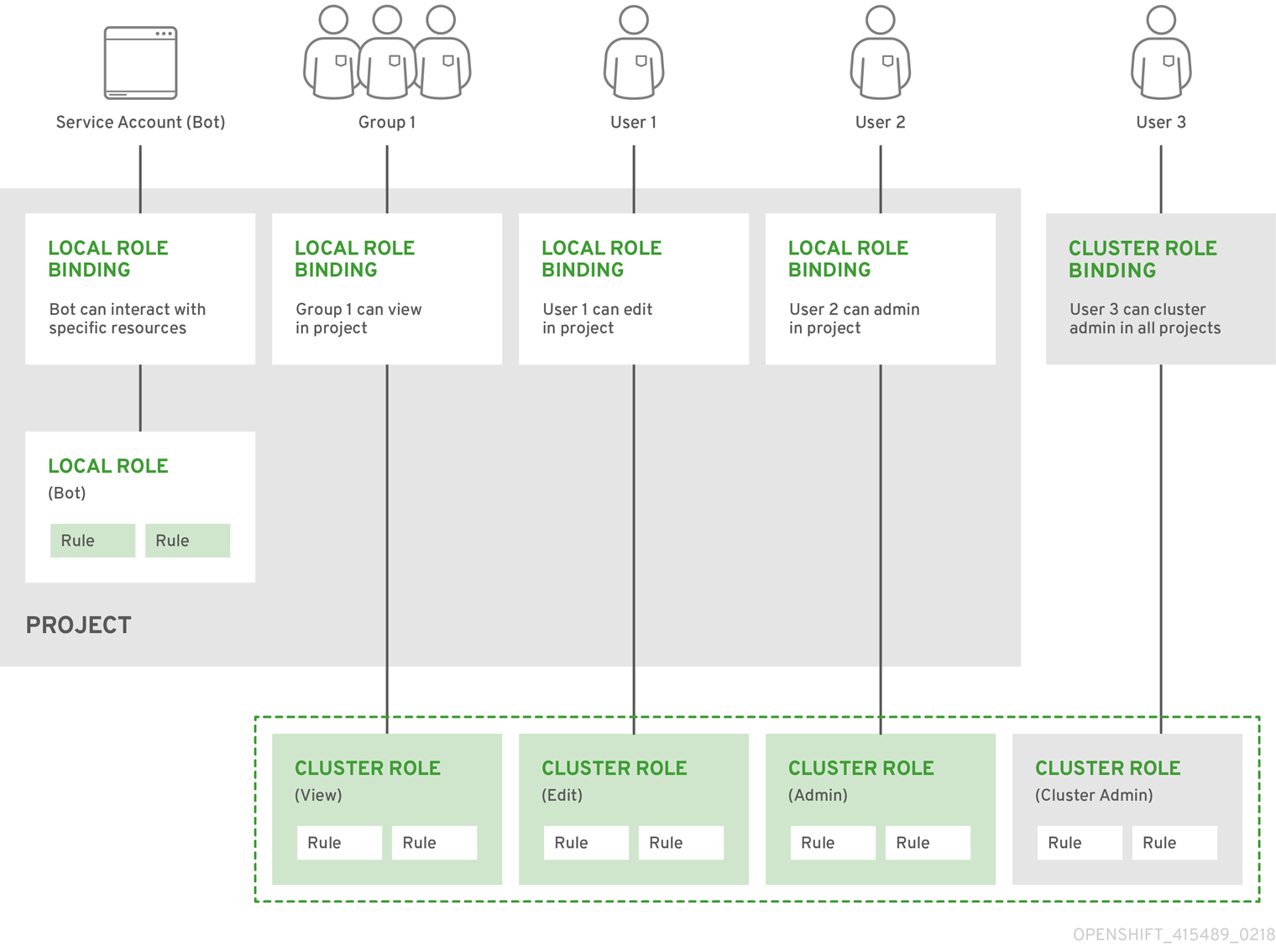

Group develThe relationships between cluster roles, local roles, cluster role bindings, local role bindings, users, groups and service accounts are illustrated below.

4.2.2. Evaluating Authorization

Several factors are combined to make the decision when OpenShift Container Platform evaluates authorization:

| Identity | In the context of authorization, both the user name and list of groups the user belongs to. |

| Action | The action being performed. |

| Bindings | The full list of bindings. |

OpenShift Container Platform evaluates authorizations using the following steps:

- The identity and the project-scoped action are used to find all bindings that apply to the user or their groups.

- Bindings are used to locate all the roles that apply.

- Roles are used to find all the rules that apply.

- The action is checked against each rule to find a match.

- If no matching rule is found, the action is then denied by default.
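The evaluation steps above can be sketched in Python as follows. This is a minimal illustration, not OpenShift Container Platform's implementation: the `Rule`, `Role`, and `Binding` classes and the `is_allowed` function are hypothetical names, and the action is simplified to a verb and a resource.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Rule:
    verbs: frozenset
    resources: frozenset


@dataclass
class Role:
    name: str
    rules: list


@dataclass
class Binding:
    role: Role
    users: set = field(default_factory=set)
    groups: set = field(default_factory=set)


def is_allowed(user, groups, verb, resource, bindings):
    """Return True if any rule reachable from the identity's bindings matches."""
    # Step 1: find the bindings that apply to the user or any of their groups.
    applicable = [
        b for b in bindings if user in b.users or b.groups & set(groups)
    ]
    # Steps 2 and 3: bindings lead to roles, and roles lead to rules.
    rules = [rule for b in applicable for rule in b.role.rules]
    # Step 4: check the action against each rule.
    for rule in rules:
        if verb in rule.verbs and resource in rule.resources:
            return True
    # Step 5: no matching rule was found, so deny by default.
    return False


# Hypothetical data: a cut-down "view" role bound to the "devel" group.
view = Role("view", [Rule(frozenset({"get", "list", "watch"}), frozenset({"pods"}))])
bindings = [Binding(role=view, groups={"devel"})]

print(is_allowed("joe", ["devel"], "get", "pods", bindings))     # True
print(is_allowed("joe", ["devel"], "delete", "pods", bindings))  # False
```

The sketch has no "deny" rules: access is granted only when some rule matches, which mirrors the deny-by-default behavior described above.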

4.2.3. Cluster and Local RBAC

There are two levels of RBAC roles and bindings that control authorization:

| Cluster RBAC | Roles and bindings that are applicable across all projects. Roles that exist cluster-wide are considered cluster roles. Cluster role bindings can only reference cluster roles. |

| Local RBAC | Roles and bindings that are scoped to a given project. Roles that exist only in a project are considered local roles. Local role bindings can reference both cluster and local roles. |

This two-level hierarchy allows re-usability over multiple projects through the cluster roles while allowing customization inside of individual projects through local roles.

During evaluation, both the cluster role bindings and the local role bindings are used. For example:

- Cluster-wide "allow" rules are checked.

- Locally-bound "allow" rules are checked.

- Deny by default.

4.2.4. Cluster Roles and Local Roles

Roles are collections of policy rules, which are sets of permitted verbs that can be performed on a set of resources. OpenShift Container Platform includes a set of default cluster roles that can be bound to users and groups cluster-wide or locally.

| Default Cluster Role | Description |

|---|---|

| admin | A project manager. If used in a local binding, an admin user will have rights to view any resource in the project and modify any resource in the project except for quota. |

| basic-user | A user that can get basic information about projects and users. |

| cluster-admin | A super-user that can perform any action in any project. When bound to a user with a local binding, they have full control over quota and every action on every resource in the project. |

| cluster-status | A user that can get basic cluster status information. |

| edit | A user that can modify most objects in a project, but does not have the power to view or modify roles or bindings. |

| self-provisioner | A user that can create their own projects. |

| view | A user who cannot make any modifications, but can see most objects in a project. They cannot view or modify roles or bindings. |

Remember that users and groups can be associated with, or bound to, multiple roles at the same time.

Project administrators can visualize roles, including a matrix of the verbs and resources each role is associated with, by using the CLI to view local roles and bindings.

The cluster role bound to the project administrator is limited in a project via a local binding. It is not bound cluster-wide like the cluster roles granted to the cluster-admin or system:admin.

Cluster roles are roles defined at the cluster level, but can be bound either at the cluster level or at the project level.

Learn how to create a local role for a project.

4.2.4.1. Updating Cluster Roles

After any OpenShift Container Platform cluster upgrade, the default roles are updated and automatically reconciled when the server is started. During reconciliation, any permissions that are missing from the default roles are added. If you added more permissions to the role, they are not removed.

If you customized the default roles and configured them to prevent automatic role reconciliation, you must manually update policy definitions when you upgrade OpenShift Container Platform.

4.2.4.2. Applying Custom Roles and Permissions

To add or update custom roles and permissions, it is strongly recommended to use the following command:

# oc auth reconcile -f FILE

This command ensures that new permissions are applied properly in a way that will not break other clients. This is done internally by computing logical covers operations between rule sets, which is something you cannot do via a JSON merge on RBAC resources.

4.2.4.3. Cluster Role Aggregation

The default admin, edit, and view cluster roles support cluster role aggregation, where the cluster rules for each role are dynamically updated as new rules are created. This feature is relevant only if you extend the Kubernetes API by creating custom resources.

4.2.5. Security Context Constraints

In addition to the RBAC resources that control what a user can do, OpenShift Container Platform provides security context constraints (SCC) that control the actions that a pod can perform and what it has the ability to access. Administrators can manage SCCs using the CLI.

SCCs are also very useful for managing access to persistent storage.

SCCs are objects that define a set of conditions that a pod must run with in order to be accepted into the system. They allow an administrator to control the following:

- Running of privileged containers.

- Capabilities a container can request to be added.

- Use of host directories as volumes.

- The SELinux context of the container.

- The user ID.

- The use of host namespaces and networking.

- Allocating an FSGroup that owns the pod’s volumes

- Configuring allowable supplemental groups

- Requiring the use of a read only root file system

- Controlling the usage of volume types

- Configuring allowable seccomp profiles

Seven SCCs are added to the cluster by default, and are viewable by cluster administrators using the CLI:

$ oc get scc

NAME PRIV CAPS SELINUX RUNASUSER FSGROUP SUPGROUP PRIORITY READONLYROOTFS VOLUMES

anyuid false [] MustRunAs RunAsAny RunAsAny RunAsAny 10 false [configMap downwardAPI emptyDir persistentVolumeClaim secret]

hostaccess false [] MustRunAs MustRunAsRange MustRunAs RunAsAny <none> false [configMap downwardAPI emptyDir hostPath persistentVolumeClaim secret]

hostmount-anyuid false [] MustRunAs RunAsAny RunAsAny RunAsAny <none> false [configMap downwardAPI emptyDir hostPath nfs persistentVolumeClaim secret]

hostnetwork false [] MustRunAs MustRunAsRange MustRunAs MustRunAs <none> false [configMap downwardAPI emptyDir persistentVolumeClaim secret]

nonroot false [] MustRunAs MustRunAsNonRoot RunAsAny RunAsAny <none> false [configMap downwardAPI emptyDir persistentVolumeClaim secret]

privileged true [*] RunAsAny RunAsAny RunAsAny RunAsAny <none> false [*]

restricted false [] MustRunAs MustRunAsRange MustRunAs RunAsAny <none> false [configMap downwardAPI emptyDir persistentVolumeClaim secret]

Do not modify the default SCCs. Customizing the default SCCs can lead to issues when OpenShift Container Platform is upgraded. Instead, create new SCCs.

The definition for each SCC is also viewable by cluster administrators using the CLI. For example, for the privileged SCC:

# oc get -o yaml --export scc/privileged

allowHostDirVolumePlugin: true

allowHostIPC: true

allowHostNetwork: true

allowHostPID: true

allowHostPorts: true

allowPrivilegedContainer: true

allowedCapabilities:

- '*'

apiVersion: v1

defaultAddCapabilities: []

fsGroup:

type: RunAsAny

groups:

- system:cluster-admins

- system:nodes

kind: SecurityContextConstraints

metadata:

annotations:

kubernetes.io/description: 'privileged allows access to all privileged and host

features and the ability to run as any user, any group, any fsGroup, and with

any SELinux context. WARNING: this is the most relaxed SCC and should be used

only for cluster administration. Grant with caution.'

creationTimestamp: null

name: privileged

priority: null

readOnlyRootFilesystem: false

requiredDropCapabilities: []

runAsUser:

type: RunAsAny

seLinuxContext:

type: RunAsAny

seccompProfiles:

- '*'

supplementalGroups:

type: RunAsAny

users:

- system:serviceaccount:default:registry

- system:serviceaccount:default:router

- system:serviceaccount:openshift-infra:build-controller

volumes:

- '*'

The fields in this definition have the following meanings:

- allowedCapabilities: A list of capabilities that can be requested by a pod. An empty list means that none of the capabilities can be requested, while the special symbol * allows any capabilities.

- defaultAddCapabilities: A list of additional capabilities that will be added to any pod.

- fsGroup: The FSGroup strategy, which dictates the allowable values for the Security Context.

- groups: The groups that have access to this SCC.

- requiredDropCapabilities: A list of capabilities that will be dropped from a pod.

- runAsUser: The run as user strategy type, which dictates the allowable values for the Security Context.

- seLinuxContext: The SELinux context strategy type, which dictates the allowable values for the Security Context.

- supplementalGroups: The supplemental groups strategy, which dictates the allowable supplemental groups for the Security Context.

- users: The users who have access to this SCC.

The users and groups fields on the SCC control which SCCs can be used. By default, cluster administrators, nodes, and the build controller are granted access to the privileged SCC. All authenticated users are granted access to the restricted SCC.

Docker has a default list of capabilities that are allowed for each container of a pod. The containers use the capabilities from this default list, but pod manifest authors can alter it by requesting additional capabilities or dropping some of the defaults. The allowedCapabilities, defaultAddCapabilities, and requiredDropCapabilities fields are used to control such requests from the pods, and to dictate which capabilities can be requested, which ones must be added to each container, and which ones must be forbidden.
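As a rough illustration of how the three capability fields interact, the following Python sketch checks a container's requested capabilities against an SCC. The function name `check_capabilities` and its return shape are hypothetical. The sketch also assumes that a capability listed in defaultAddCapabilities may be requested explicitly, which the text above does not state.

```python
def check_capabilities(requested_add, allowed, default_add, required_drop):
    """Return (ok, add, drop) for a container's capability request.

    requested_add: capabilities the pod manifest asks to add
    allowed:       the SCC's allowedCapabilities ("*" allows anything)
    default_add:   the SCC's defaultAddCapabilities
    required_drop: the SCC's requiredDropCapabilities
    """
    requested = set(requested_add)
    # Capabilities that must be dropped are forbidden and cannot be requested.
    if requested & set(required_drop):
        return False, set(), set()
    # Without the wildcard, every requested capability must be permitted.
    # Assumption: default-added capabilities count as permitted.
    if "*" not in allowed:
        permitted = set(allowed) | set(default_add)
        if not requested <= permitted:
            return False, set(), set()
    # Default capabilities are added to every container.
    return True, requested | set(default_add), set(required_drop)


ok, add, drop = check_capabilities(
    requested_add=["NET_ADMIN"],
    allowed=["NET_ADMIN"],
    default_add=["CHOWN"],
    required_drop=["KILL"],
)
print(ok, sorted(add), sorted(drop))  # True ['CHOWN', 'NET_ADMIN'] ['KILL']
```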

The privileged SCC:

- allows privileged pods.

- allows host directories to be mounted as volumes.

- allows a pod to run as any user.

- allows a pod to run with any MCS label.

- allows a pod to use the host’s IPC namespace.

- allows a pod to use the host’s PID namespace.

- allows a pod to use any FSGroup.

- allows a pod to use any supplemental group.

- allows a pod to use any seccomp profiles.

- allows a pod to request any capabilities.

The restricted SCC:

- ensures pods cannot run as privileged.

- ensures pods cannot use host directory volumes.

- requires that a pod run as a user in a pre-allocated range of UIDs.

- requires that a pod run with a pre-allocated MCS label.

- allows a pod to use any FSGroup.

- allows a pod to use any supplemental group.

For more information about each SCC, see the kubernetes.io/description annotation available on the SCC.

SCCs are comprised of settings and strategies that control the security features a pod has access to. These settings fall into three categories:

| Controlled by a boolean | Fields of this type default to the most restrictive value. |

| Controlled by an allowable set | Fields of this type are checked against the set to ensure their value is allowed. |

| Controlled by a strategy | Items that have a strategy to generate a value. The available strategies are described in SCC Strategies. |

4.2.5.1. SCC Strategies

4.2.5.1.1. RunAsUser

- MustRunAs - Requires a runAsUser to be configured. Uses the configured runAsUser as the default. Validates against the configured runAsUser.

- MustRunAsRange - Requires minimum and maximum values to be defined if not using pre-allocated values. Uses the minimum as the default. Validates against the entire allowable range.

- MustRunAsNonRoot - Requires that the pod be submitted with a non-zero runAsUser or have the USER directive defined in the image. No default provided.

- RunAsAny - No default provided. Allows any runAsUser to be specified.
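The default and validation behavior of the four RunAsUser strategies can be sketched in Python as follows. The function names and parameters are hypothetical, and the sketch only models numeric user IDs: the MustRunAsNonRoot case where the image supplies a USER directive is not covered.

```python
def default_run_as_user(strategy, uid=None, uid_min=None):
    """Return the UID a strategy generates by default, or None if it has none."""
    if strategy == "MustRunAs":
        return uid          # the configured runAsUser
    if strategy == "MustRunAsRange":
        return uid_min      # the minimum of the range
    if strategy in ("MustRunAsNonRoot", "RunAsAny"):
        return None         # no default provided
    raise ValueError(f"unknown strategy: {strategy}")


def validate_run_as_user(strategy, requested, uid=None, uid_min=None, uid_max=None):
    """Return True if the requested UID is acceptable under the strategy."""
    if strategy == "MustRunAs":
        return requested == uid
    if strategy == "MustRunAsRange":
        return uid_min <= requested <= uid_max
    if strategy == "MustRunAsNonRoot":
        return requested is not None and requested != 0
    if strategy == "RunAsAny":
        return True
    raise ValueError(f"unknown strategy: {strategy}")


# Hypothetical range values, chosen only for illustration.
print(default_run_as_user("MustRunAsRange", uid_min=1000000000))  # 1000000000
print(validate_run_as_user("MustRunAsRange", 1000000500,
                           uid_min=1000000000, uid_max=1000009999))  # True
print(validate_run_as_user("MustRunAsNonRoot", 0))  # False
```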

4.2.5.1.2. SELinuxContext

- MustRunAs - Requires seLinuxOptions to be configured if not using pre-allocated values. Uses seLinuxOptions as the default. Validates against seLinuxOptions.

- RunAsAny - No default provided. Allows any seLinuxOptions to be specified.

4.2.5.1.3. SupplementalGroups

- MustRunAs - Requires at least one range to be specified if not using pre-allocated values. Uses the minimum value of the first range as the default. Validates against all ranges.

- RunAsAny - No default provided. Allows any supplementalGroups to be specified.

4.2.5.1.4. FSGroup

- MustRunAs - Requires at least one range to be specified if not using pre-allocated values. Uses the minimum value of the first range as the default. Validates against the first ID in the first range.

- RunAsAny - No default provided. Allows any fsGroup ID to be specified.

4.2.5.2. Controlling Volumes

The usage of specific volume types can be controlled by setting the volumes field of the SCC. The allowable values of this field correspond to the volume sources that are defined when creating a volume:

- azureFile

- azureDisk

- flocker

- flexVolume

- hostPath

- emptyDir

- gcePersistentDisk

- awsElasticBlockStore

- gitRepo

- secret

- nfs

- iscsi

- glusterfs

- persistentVolumeClaim

- rbd

- cinder

- cephFS

- downwardAPI

- fc

- configMap

- vsphereVolume

- quobyte

- photonPersistentDisk

- projected

- portworxVolume

- scaleIO

- storageos

- * (a special value to allow the use of all volume types)

- none (a special value to disallow the use of all volume types. Exists only for backwards compatibility)

The recommended minimum set of allowed volumes for new SCCs is configMap, downwardAPI, emptyDir, persistentVolumeClaim, secret, and projected.

The list of allowable volume types is not exhaustive because new types are added with each release of OpenShift Container Platform.

For backwards compatibility, the usage of allowHostDirVolumePlugin overrides settings in the volumes field. For example, if allowHostDirVolumePlugin is set to false but hostPath is allowed in the volumes field, then the hostPath value will be removed from volumes.
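The override described above amounts to a filter on the volumes list. The following Python sketch shows only the case the text states (allowHostDirVolumePlugin set to false); the function name `effective_volumes` is hypothetical, and the behavior when the flag is true is assumed to leave the list unchanged.

```python
def effective_volumes(volumes, allow_host_dir_volume_plugin):
    """Apply the allowHostDirVolumePlugin override to an SCC's volumes list."""
    if not allow_host_dir_volume_plugin:
        # hostPath is removed even though the volumes field lists it.
        return [v for v in volumes if v != "hostPath"]
    # Assumption: with the flag set to true, the list is used as written.
    return list(volumes)


print(effective_volumes(["configMap", "hostPath", "secret"], False))
# ['configMap', 'secret']
```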

4.2.5.3. Restricting Access to FlexVolumes

OpenShift Container Platform provides additional control of FlexVolumes based on their driver. When an SCC allows the usage of FlexVolumes, pods can request any FlexVolumes. However, when the cluster administrator specifies driver names in the AllowedFlexVolumes field, pods can use only FlexVolumes with these drivers.

Example of Limiting Access to Only Two FlexVolumes

volumes:

- flexVolume

allowedFlexVolumes:

- driver: example/lvm

- driver: example/cifs

4.2.5.4. Seccomp

SeccompProfiles lists the allowed profiles that can be set for the pod or container’s seccomp annotations. An unset (nil) or empty value means that no profiles are specified by the pod or container. Use the wildcard * to allow all profiles. When used to generate a value for a pod, the first non-wildcard profile is used as the default.

Refer to the seccomp documentation for more information about configuring and using custom profiles.

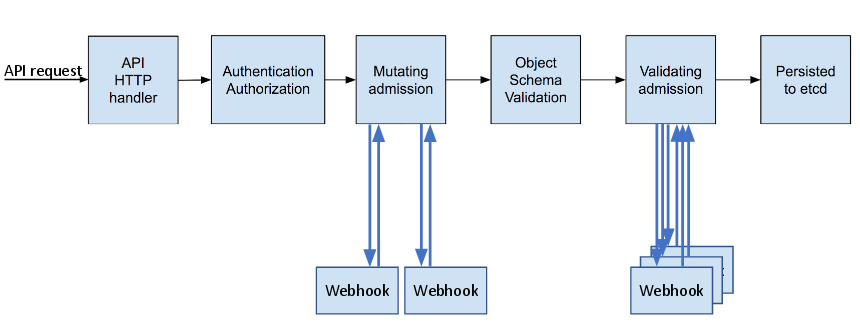

4.2.5.5. Admission

Admission control with SCCs allows for control over the creation of resources based on the capabilities granted to a user.

In terms of the SCCs, this means that an admission controller can inspect the user information made available in the context to retrieve an appropriate set of SCCs. Doing so ensures the pod is authorized to make requests about its operating environment or to generate a set of constraints to apply to the pod.

The set of SCCs that admission uses to authorize a pod are determined by the user identity and groups that the user belongs to. Additionally, if the pod specifies a service account, the set of allowable SCCs includes any constraints accessible to the service account.

Admission uses the following approach to create the final security context for the pod:

- Retrieve all SCCs available for use.

- Generate field values for security context settings that were not specified on the request.

- Validate the final settings against the available constraints.

If a matching set of constraints is found, then the pod is accepted. If the request cannot be matched to an SCC, the pod is rejected.

A pod must validate every field against the SCC. The following are examples for just two of the fields that must be validated:

These examples are in the context of a strategy using the preallocated values.

An FSGroup SCC Strategy of MustRunAs

If the pod defines an fsGroup ID, then that ID must equal the default fsGroup ID. Otherwise, the pod is not validated by that SCC and the next SCC is evaluated.

If the SecurityContextConstraints.fsGroup field has value RunAsAny and the pod specification omits the Pod.spec.securityContext.fsGroup, then this field is considered valid. Note that it is possible that during validation, other SCC settings will reject other pod fields and thus cause the pod to fail.

A SupplementalGroups SCC Strategy of MustRunAs

If the pod specification defines one or more supplementalGroups IDs, then the pod’s IDs must equal one of the IDs in the namespace’s openshift.io/sa.scc.supplemental-groups annotation. Otherwise, the pod is not validated by that SCC and the next SCC is evaluated.

If the SecurityContextConstraints.supplementalGroups field has value RunAsAny and the pod specification omits the Pod.spec.securityContext.supplementalGroups, then this field is considered valid. Note that it is possible that during validation, other SCC settings will reject other pod fields and thus cause the pod to fail.

4.2.5.5.1. SCC Prioritization

SCCs have a priority field that affects the ordering when the admission controller attempts to validate a request. A higher priority SCC is moved to the front of the set when sorting. When the complete set of available SCCs is determined, they are ordered by:

- Highest priority first, nil is considered a 0 priority

- If priorities are equal, the SCCs will be sorted from most restrictive to least restrictive

- If both priorities and restrictions are equal the SCCs will be sorted by name
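The three ordering rules can be expressed as a single sort key, as in the following Python sketch. The `restriction_rank` field is a hypothetical stand-in for however restrictiveness is compared (lower means more restrictive here), and the rank values in the example are invented for illustration.

```python
def sort_sccs(sccs):
    """Order SCCs: highest priority first, then most restrictive, then by name."""
    return sorted(
        sccs,
        key=lambda scc: (
            -(scc.get("priority") or 0),  # nil priority counts as 0
            scc["restriction_rank"],      # hypothetical: lower is more restrictive
            scc["name"],
        ),
    )


sccs = [
    {"name": "privileged", "priority": None, "restriction_rank": 9},
    {"name": "restricted", "priority": None, "restriction_rank": 0},
    {"name": "anyuid", "priority": 10, "restriction_rank": 5},
]
print([scc["name"] for scc in sort_sccs(sccs)])
# ['anyuid', 'restricted', 'privileged']
```

With these inputs, anyuid sorts first because of its priority of 10, which matches the default behavior described for cluster administrators.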

By default, the anyuid SCC granted to cluster administrators is given priority in their SCC set. This allows cluster administrators to run pods as any user without specifying a RunAsUser on the pod’s SecurityContext. The administrator may still specify a RunAsUser if they wish.

4.2.5.5.2. Understanding Pre-allocated Values and Security Context Constraints

The admission controller is aware of certain conditions in the security context constraints that trigger it to look up pre-allocated values from a namespace and populate the security context constraint before processing the pod. Each SCC strategy is evaluated independently of other strategies, with the pre-allocated values (where allowed) for each policy aggregated with pod specification values to make the final values for the various IDs defined in the running pod.

The following SCCs cause the admission controller to look for pre-allocated values when no ranges are defined in the pod specification:

- A RunAsUser strategy of MustRunAsRange with no minimum or maximum set. Admission looks for the openshift.io/sa.scc.uid-range annotation to populate range fields.

- An SELinuxContext strategy of MustRunAs with no level set. Admission looks for the openshift.io/sa.scc.mcs annotation to populate the level.

- An FSGroup strategy of MustRunAs. Admission looks for the openshift.io/sa.scc.supplemental-groups annotation.

- A SupplementalGroups strategy of MustRunAs. Admission looks for the openshift.io/sa.scc.supplemental-groups annotation.

During the generation phase, the security context provider will default any values that are not specifically set in the pod. Defaulting is based on the strategy being used:

- RunAsAny and MustRunAsNonRoot strategies do not provide default values. Thus, if the pod needs a field defined (for example, a group ID), this field must be defined inside the pod specification.

- MustRunAs (single value) strategies provide a default value which is always used. As an example, for group IDs: even if the pod specification defines its own ID value, the namespace’s default field will also appear in the pod’s groups.

- MustRunAsRange and MustRunAs (range-based) strategies provide the minimum value of the range. As with a single value MustRunAs strategy, the namespace’s default value will appear in the running pod. If a range-based strategy is configurable with multiple ranges, it will provide the minimum value of the first configured range.

FSGroup and SupplementalGroups strategies fall back to the openshift.io/sa.scc.uid-range annotation if the openshift.io/sa.scc.supplemental-groups annotation does not exist on the namespace. If neither exists, the SCC will fail to create.

By default, the annotation-based FSGroup strategy configures itself with a single range based on the minimum value for the annotation. For example, if your annotation reads 1/3, the FSGroup strategy will configure itself with a minimum and maximum of 1. If you want to allow more groups to be accepted for the FSGroup field, you can configure a custom SCC that does not use the annotation.

The openshift.io/sa.scc.supplemental-groups annotation accepts a comma-delimited list of blocks in the format of <start>/<length> or <start>-<end>. The openshift.io/sa.scc.uid-range annotation accepts only a single block.
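To make the block formats concrete, the following Python sketch parses an annotation value into inclusive ranges. The function names are hypothetical. The sketch assumes that `<start>/<length>` covers `length` consecutive IDs beginning at `start`, and that the annotation-based FSGroup strategy takes its single value from the first block, which is consistent with the 1/3 example above.

```python
def parse_block(block):
    """Parse '<start>/<length>' or '<start>-<end>' into an inclusive (min, max)."""
    if "/" in block:
        start, length = (int(part) for part in block.split("/"))
        # Assumption: the block spans `length` IDs starting at `start`.
        return start, start + length - 1
    start, end = (int(part) for part in block.split("-"))
    return start, end


def parse_supplemental_groups(annotation):
    """Parse a comma-delimited list of blocks into a list of ranges."""
    return [parse_block(block.strip()) for block in annotation.split(",")]


def fsgroup_range(annotation):
    """Return the single-value range the annotation-based FSGroup strategy uses."""
    start, _ = parse_supplemental_groups(annotation)[0]
    return start, start  # minimum and maximum are both the block's start


print(parse_supplemental_groups("1/3, 10-12"))  # [(1, 3), (10, 12)]
print(fsgroup_range("1/3"))                     # (1, 1)
```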

4.2.6. Determining What You Can Do as an Authenticated User

From within your OpenShift Container Platform project, you can determine what verbs you can perform against all namespace-scoped resources (including third-party resources). Run:

$ oc policy can-i --list --loglevel=8

The output will help you to determine what API request to make to gather the information.

To receive information back in a user-readable format, run:

$ oc policy can-i --list

The output will provide a full list.

To determine if you can perform specific verbs, run:

$ oc policy can-i <verb> <resource>

User scopes can provide more information about a given scope. For example:

$ oc policy can-i <verb> <resource> --scopes=user:info

4.3. Persistent Storage

4.3.1. Overview

Managing storage is a distinct problem from managing compute resources. OpenShift Container Platform uses the Kubernetes persistent volume (PV) framework to allow cluster administrators to provision persistent storage for a cluster. Developers can use persistent volume claims (PVCs) to request PV resources without having specific knowledge of the underlying storage infrastructure.

PVCs are specific to a project and are created and used by developers as a means to use a PV. PV resources on their own are not scoped to any single project; they can be shared across the entire OpenShift Container Platform cluster and claimed from any project. After a PV is bound to a PVC, however, that PV cannot then be bound to additional PVCs. This has the effect of scoping a bound PV to a single namespace (that of the binding project).

PVs are defined by a PersistentVolume API object, which represents a piece of existing, networked storage in the cluster that was provisioned by the cluster administrator. It is a resource in the cluster just like a node is a cluster resource. PVs are volume plug-ins like Volumes but have a lifecycle that is independent of any individual pod that uses the PV. PV objects capture the details of the implementation of the storage, be that NFS, iSCSI, or a cloud-provider-specific storage system.

High availability of storage in the infrastructure is left to the underlying storage provider.

PVCs are defined by a PersistentVolumeClaim API object, which represents a request for storage by a developer. It is similar to a pod in that pods consume node resources and PVCs consume PV resources. For example, pods can request specific levels of resources (e.g., CPU and memory), while PVCs can request specific storage capacity and access modes (e.g., they can be mounted once read/write or many times read-only).

4.3.2. Lifecycle of a volume and claim

PVs are resources in the cluster. PVCs are requests for those resources and also act as claim checks to the resource. The interaction between PVs and PVCs has the following lifecycle.

4.3.2.1. Provision storage

In response to requests from a developer defined in a PVC, a cluster administrator configures one or more dynamic provisioners that provision storage and a matching PV.

Alternatively, a cluster administrator can create a number of PVs in advance that carry the details of the real storage that is available for use. PVs exist in the API and are available for use.

4.3.2.2. Bind claims

When you create a PVC, you request a specific amount of storage, specify the required access mode, and create a storage class to describe and classify the storage. The control loop in the master watches for new PVCs and binds the new PVC to an appropriate PV. If an appropriate PV does not exist, a provisioner for the storage class creates one.

The PV volume might exceed your requested volume. This is especially true with manually provisioned PVs. To minimize the excess, OpenShift Container Platform binds to the smallest PV that matches all other criteria.

Claims remain unbound indefinitely if a matching volume does not exist or cannot be created with any available provisioner servicing a storage class. Claims are bound as matching volumes become available. For example, a cluster with many manually provisioned 50Gi volumes would not match a PVC requesting 100Gi. The PVC can be bound when a 100Gi PV is added to the cluster.
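The matching behavior described in this section can be sketched in Python as follows. This is an illustrative model only, not the control loop's actual logic: the `PV` class, the `find_pv` function, and the exact set of matching criteria (capacity, access mode, storage class) are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class PV:
    name: str
    capacity_gi: int
    access_modes: frozenset
    storage_class: str = ""
    bound: bool = False


def find_pv(pvs, request_gi, access_mode, storage_class=""):
    """Return the smallest unbound PV that satisfies the claim, or None."""
    candidates = [
        pv for pv in pvs
        if not pv.bound
        and pv.capacity_gi >= request_gi
        and access_mode in pv.access_modes
        and pv.storage_class == storage_class
    ]
    # Choosing the smallest candidate minimizes the excess capacity.
    return min(candidates, key=lambda pv: pv.capacity_gi, default=None)


rwo = frozenset({"ReadWriteOnce"})
pvs = [PV("pv-a", 50, rwo), PV("pv-b", 50, rwo)]

# Only 50Gi volumes exist, so a 100Gi claim stays unbound.
print(find_pv(pvs, 100, "ReadWriteOnce"))  # None

# Once suitable volumes are added, the smallest one that fits is chosen.
pvs += [PV("pv-c", 200, rwo), PV("pv-d", 100, rwo)]
print(find_pv(pvs, 100, "ReadWriteOnce").name)  # pv-d
```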

4.3.2.3. Use pods and claimed PVs

Pods use claims as volumes. The cluster inspects the claim to find the bound volume and mounts that volume for a pod. For those volumes that support multiple access modes, you must specify which mode applies when you use the claim as a volume in a pod.

After you have a claim and that claim is bound, the bound PV belongs to you for as long as you need it. You can schedule pods and access claimed PVs by including persistentVolumeClaim in the pod’s volumes block. See below for syntax details.

4.3.2.4. PVC protection

PVC protection is enabled by default.

4.3.2.5. Release volumes

When you are finished with a volume, you can delete the PVC object from the API, which allows reclamation of the resource. The volume is considered "released" when the claim is deleted, but it is not yet available for another claim. The previous claimant’s data remains on the volume and must be handled according to policy.

4.3.2.6. Reclaim volumes

The reclaim policy of a PersistentVolume tells the cluster what to do with the volume after it is released. A volume’s reclaim policy can be Retain, Recycle, or Delete.

Retain reclaim policy allows manual reclamation of the resource for those volume plug-ins that support it. Delete reclaim policy deletes both the PersistentVolume object from OpenShift Container Platform and the associated storage asset in external infrastructure, such as AWS EBS, GCE PD, or Cinder volume.

Dynamically provisioned volumes have a default ReclaimPolicy value of Delete. Manually provisioned volumes have a default ReclaimPolicy value of Retain.
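The policy can also be stated explicitly on a PV instead of relying on the default. The following sketch shows a manually provisioned NFS PV that sets Retain; the PV name, server, and export path are hypothetical placeholders.

```yaml
# Manually provisioned PV with an explicit reclaim policy (illustrative values).
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-retain-example                  # hypothetical name
spec:
  capacity:
    storage: 5Gi
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain    # keep the data after the claim is deleted
  nfs:
    server: nfs.example.com                # hypothetical NFS server
    path: /exports/data                    # hypothetical export path
```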

4.3.2.6.1. Recycle volumes

If supported by the appropriate volume plug-in, recycling performs a basic scrub (rm -rf /thevolume/*) on the volume and makes it available again for a claim.

The recycle reclaim policy was deprecated and removed in favor of dynamic provisioning starting in OpenShift Container Platform 3.6.

You can configure a custom recycler pod template by using the controller manager command line arguments as described in the ControllerArguments section. The custom recycler pod template must contain a volumes specification, for example:

Custom recycler pod template example

    apiVersion: v1
    kind: Pod
    metadata:
      name: pv-recycler-
      namespace: openshift-infra  # 1
    spec:
      restartPolicy: Never
      serviceAccount: pv-recycler-controller  # 2
      volumes:
      - name: nfsvol
        nfs:
          server: any-server-it-will-be-replaced  # 3
          path: any-path-it-will-be-replaced  # 4
      containers:
      - name: pv-recycler
        image: "gcr.io/google_containers/busybox"
        command: ["/bin/sh", "-c", "test -e /scrub && rm -rf /scrub/..?* /scrub/.[!.]* /scrub/* && test -z \"$(ls -A /scrub)\" || exit 1"]
        volumeMounts:
        - name: nfsvol
          mountPath: /scrub

- 1: Namespace where the recycler pod runs. openshift-infra is the recommended namespace, as it already has a pv-recycler-controller service account that can recycle volumes.

- 2: Name of the service account that is allowed to mount NFS volumes. It must exist in the specified namespace. A pv-recycler-controller account is recommended, as it is automatically created in the openshift-infra namespace and has all the required permissions.

- 3, 4: The server and path values specified in the volumes part of the custom recycler pod template are replaced with the corresponding values from the PV that is being recycled.

4.3.3. Persistent volumes

Each PV contains a spec and status, which are the specification and status of the volume, for example:

PV object definition example

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv0003
    spec:
      capacity:
        storage: 5Gi
      accessModes:
      - ReadWriteOnce
      persistentVolumeReclaimPolicy: Recycle
      nfs:
        path: /tmp
        server: 172.17.0.2

4.3.3.1. Types of PVs

OpenShift Container Platform supports the following PersistentVolume plug-ins:

4.3.3.2. Capacity

Generally, a PV has a specific storage capacity. This is set by using the PV’s capacity attribute.

Currently, storage capacity is the only resource that can be set or requested. Future attributes may include IOPS, throughput, and so on.

4.3.3.3. Access modes

A PersistentVolume can be mounted on a host in any way supported by the resource provider. Providers will have different capabilities and each PV’s access modes are set to the specific modes supported by that particular volume. For example, NFS can support multiple read/write clients, but a specific NFS PV might be exported on the server as read-only. Each PV gets its own set of access modes describing that specific PV’s capabilities.

Claims are matched to volumes with similar access modes. The only two matching criteria are access modes and size. A claim’s access modes represent a request. Therefore, you might be granted more, but never less. For example, if a claim requests RWO, but the only volume available is an NFS PV (RWO+ROX+RWX), the claim would then match NFS because it supports RWO.

Direct matches are always attempted first. The volume’s modes must match or contain more modes than you requested. The size must be greater than or equal to what is expected. If two types of volumes (NFS and iSCSI, for example) have the same set of access modes, either of them can match a claim with those modes. There is no ordering between types of volumes and no way to choose one type over another.

All volumes with the same modes are grouped, and then sorted by size (smallest to largest). The binder gets the group with matching modes and iterates over each (in size order) until one size matches.
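To make the matching rules concrete, the following sketch pairs a PV that offers all three access modes with a claim that requests only RWO and a smaller size. The names, server, and path are hypothetical, and the sketch assumes that no default storage class is configured and that no smaller matching PV exists. Under those assumptions the claim would be expected to bind to this PV, because the PV's modes contain the requested mode and its capacity is at least the requested size.

```yaml
# PV offering RWO, ROX, and RWX (illustrative values).
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv-example           # hypothetical name
spec:
  capacity:
    storage: 10Gi                # at least as large as the request below
  accessModes:
  - ReadWriteOnce
  - ReadOnlyMany
  - ReadWriteMany
  nfs:
    server: nfs.example.com      # hypothetical NFS server
    path: /exports/shared        # hypothetical export path
---
# Claim requesting only RWO; a PV with more modes can still satisfy it.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: rwo-claim-example        # hypothetical name
spec:
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 8Gi
```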

The following table lists the access modes:

| Access Mode | CLI abbreviation | Description |
|---|---|---|
| ReadWriteOnce | RWO | The volume can be mounted as read-write by a single node. |
| ReadOnlyMany | ROX | The volume can be mounted read-only by many nodes. |
| ReadWriteMany | RWX | The volume can be mounted as read-write by many nodes. |

A volume’s AccessModes are descriptors of the volume’s capabilities. They are not enforced constraints. The storage provider is responsible for runtime errors resulting from invalid use of the resource.

For example, Ceph offers ReadWriteOnce access mode. You must mark the claims as read-only if you want to use the volume’s ROX capability. Errors in the provider show up at runtime as mount errors.
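One way to mark a claim as read-only when it is used in a pod is the readOnly field of the persistentVolumeClaim volume source. The following is a minimal sketch; the pod name, image, mount path, and claim name are hypothetical.

```yaml
# Pod that consumes a claim read-only (illustrative values).
apiVersion: v1
kind: Pod
metadata:
  name: reader-example                          # hypothetical name
spec:
  containers:
  - name: reader
    image: registry.example.com/reader:latest   # hypothetical image
    volumeMounts:
    - name: shared-data
      mountPath: /data
      readOnly: true                            # mount read-only inside the container
  volumes:
  - name: shared-data
    persistentVolumeClaim:
      claimName: shared-claim                   # hypothetical claim
      readOnly: true                            # use the claim as a read-only volume
```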

iSCSI and Fibre Channel volumes do not currently have any fencing mechanisms. You must ensure the volumes are only used by one node at a time. In certain situations, such as draining a node, the volumes can be used simultaneously by two nodes. Before draining the node, first ensure the pods that use these volumes are deleted.

The following table lists the access modes supported by different PVs:

| Volume Plug-in | ReadWriteOnce | ReadOnlyMany | ReadWriteMany |
|---|---|---|---|
| AWS EBS | ✅ | - | - |
| Azure File | ✅ | ✅ | ✅ |
| Azure Disk | ✅ | - | - |
| Ceph RBD | ✅ | ✅ | - |
| Fibre Channel | ✅ | ✅ | - |
| GCE Persistent Disk | ✅ | - | - |
| GlusterFS | ✅ | ✅ | ✅ |
| HostPath | ✅ | - | - |
| iSCSI | ✅ | ✅ | - |
| NFS | ✅ | ✅ | ✅ |
| OpenStack Cinder | ✅ | - | - |
| VMware vSphere | ✅ | - | - |
| Local | ✅ | - | - |

Use a recreate deployment strategy for pods that rely on AWS EBS, GCE Persistent Disks, or OpenStack Cinder PVs.
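A recreate strategy can be selected in the deployment configuration, as in the sketch below. All names, the image, and the claim are hypothetical, and the apiVersion shown is an assumption that may need to be v1 on older releases. With this strategy the old pod is stopped before the new one starts, which suits volumes that the table above lists as ReadWriteOnce only.

```yaml
# Deployment configuration using the Recreate strategy (illustrative values).
apiVersion: apps.openshift.io/v1    # assumed API group; older releases may use v1
kind: DeploymentConfig
metadata:
  name: myapp                       # hypothetical name
spec:
  replicas: 1
  strategy:
    type: Recreate                  # stop the old pod before starting the new one
  selector:
    app: myapp
  template:
    metadata:
      labels:
        app: myapp
    spec:
      containers:
      - name: myapp
        image: registry.example.com/myapp:latest   # hypothetical image
        volumeMounts:
        - name: data
          mountPath: /data
      volumes:
      - name: data
        persistentVolumeClaim:
          claimName: myclaim        # hypothetical claim backed by an RWO-only PV
```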

4.3.3.4. Reclaim policy

The following table lists current reclaim policies:

| Reclaim policy | Description |
|---|---|
| Retain | Manual reclamation |
| Recycle | Basic scrub (e.g., rm -rf /thevolume/*) |

Currently, only NFS and HostPath support the 'Recycle' reclaim policy.

The recycle reclaim policy was deprecated and removed in favor of dynamic provisioning starting in OpenShift Container Platform 3.6.

4.3.3.5. Phase

Volumes can be found in one of the following phases:

| Phase | Description |

|---|---|

| Available | A free resource not yet bound to a claim. |

| Bound | The volume is bound to a claim. |

| Released | The claim was deleted, but the resource is not yet reclaimed by the cluster. |

| Failed | The volume has failed its automatic reclamation. |

The CLI shows the name of the PVC bound to the PV.
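In the API object itself, the phase and the bound claim appear in the PV's status and spec. The excerpt below is an illustrative sketch of a bound PV with hypothetical claim values; it omits all other fields.

```yaml
# Excerpt of a bound PV (illustrative values, other fields omitted).
spec:
  claimRef:
    kind: PersistentVolumeClaim
    name: myclaim            # hypothetical claim name
    namespace: default       # hypothetical namespace
status:
  phase: Bound
```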

4.3.3.6. Mount options

You can specify mount options while mounting a persistent volume by using the annotation volume.beta.kubernetes.io/mount-options.

For example:

Mount options example

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv0001
      annotations:
        volume.beta.kubernetes.io/mount-options: rw,nfsvers=4,noexec  # 1
    spec:
      capacity:
        storage: 1Gi
      accessModes:
      - ReadWriteOnce
      nfs:
        path: /tmp
        server: 172.17.0.2
      persistentVolumeReclaimPolicy: Recycle
      claimRef:
        name: claim1
        namespace: default

- 1: Specified mount options are used while mounting the PV to the disk.

The following persistent volume types support mount options:

- NFS

- GlusterFS

- Ceph RBD

- OpenStack Cinder

- AWS Elastic Block Store (EBS)

- GCE Persistent Disk

- iSCSI

- Azure Disk

- Azure File

- VMware vSphere

Fibre Channel and HostPath persistent volumes do not support mount options.

4.3.4. Persistent volume claims

Each PVC contains a spec and status, which are the specification and status of the claim, for example:

PVC object definition example

    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: myclaim
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 8Gi
      storageClassName: gold

4.3.4.1. Storage classes

Claims can optionally request a specific storage class by specifying the storage class’s name in the storageClassName attribute. Only PVs of the requested class, ones with the same storageClassName as the PVC, can be bound to the PVC. The cluster administrator can configure dynamic provisioners to service one or more storage classes. The cluster administrator can create a PV on demand that matches the specifications in the PVC.

The cluster administrator can also set a default storage class for all PVCs. When a default storage class is configured, a PVC that should be bound to a PV without a storage class must explicitly request this by setting StorageClass or storageClassName to "".
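A claim that opts out of the default storage class might look like the following sketch. The claim name and size are hypothetical; the empty string is the part that matters.

```yaml
# Claim that asks for a PV without any storage class (illustrative values).
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: no-class-claim       # hypothetical name
spec:
  storageClassName: ""       # empty string: do not apply the default storage class
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
```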

4.3.4.2. Access modes

Claims use the same conventions as volumes when requesting storage with specific access modes.

4.3.4.3. Resources

Claims, like pods, can request specific quantities of a resource. In this case, the request is for storage. The same resource model applies to volumes and claims.

4.3.4.4. Claims as volumes

Pods access storage by using the claim as a volume. Claims must exist in the same namespace as the pod that uses the claim. The cluster finds the claim in the pod’s namespace and uses it to get the PersistentVolume backing the claim. The volume is mounted to the host and into the pod, for example:

Mount volume to the host and into the pod example

    kind: Pod
    apiVersion: v1
    metadata:
      name: mypod
    spec:
      containers:
      - name: myfrontend
        image: dockerfile/nginx
        volumeMounts:
        - mountPath: "/var/www/html"
          name: mypd
      volumes:
      - name: mypd
        persistentVolumeClaim:
          claimName: myclaim

4.3.5. Block volume support