Migrating from version 3 to 4

Migrating to OpenShift Container Platform 4

Abstract

Chapter 1. Migration from OpenShift Container Platform 3 to 4 overview

OpenShift Container Platform 4 clusters are different from OpenShift Container Platform 3 clusters. OpenShift Container Platform 4 clusters contain new technologies and functionality that result in a cluster that is self-managing, flexible, and automated. To learn more about migrating from OpenShift Container Platform 3 to 4, see About migrating from OpenShift Container Platform 3 to 4.

1.1. Differences between OpenShift Container Platform 3 and 4

Before migrating from OpenShift Container Platform 3 to 4, you can check differences between OpenShift Container Platform 3 and 4. Review the following information:

1.2. Planning network considerations

Before migrating from OpenShift Container Platform 3 to 4, review the differences between OpenShift Container Platform 3 and 4 for information about the following areas:

You can migrate stateful application workloads from OpenShift Container Platform 3 to 4 at the granularity of a namespace. To learn more about MTC, see Understanding MTC.

If you are migrating from OpenShift Container Platform 3, see About migrating from OpenShift Container Platform 3 to 4 and Installing the legacy Migration Toolkit for Containers Operator on OpenShift Container Platform 3.

1.3. Installing MTC

Review the following tasks to install the MTC:

1.4. Upgrading MTC

You upgrade the Migration Toolkit for Containers (MTC) on OpenShift Container Platform 4.16 by using OLM. You upgrade MTC on OpenShift Container Platform 3 by reinstalling the legacy Migration Toolkit for Containers Operator.

1.5. Reviewing premigration checklists

Before you migrate your application workloads with the Migration Toolkit for Containers (MTC), review the premigration checklists.

1.6. Migrating applications

You can migrate your applications by using the MTC web console or the command line.

1.7. Advanced migration options

You can automate your migrations and modify MTC custom resources to improve the performance of large-scale migrations by using the following options:

1.8. Troubleshooting migrations

You can perform the following troubleshooting tasks:

- Viewing migration plan resources by using the MTC web console

- Viewing the migration plan aggregated log file

- Using the migration log reader

- Accessing performance metrics

- Using the must-gather tool

- Using the Velero CLI to debug Backup and Restore CRs

- Using MTC custom resources for troubleshooting

- Checking common issues and concerns

1.9. Rolling back a migration

You can roll back a migration by using the MTC web console, by using the CLI, or manually.

1.10. Uninstalling MTC and deleting resources

You can uninstall the MTC and delete its resources to clean up the cluster.

Chapter 2. About migrating from OpenShift Container Platform 3 to 4

OpenShift Container Platform 4 contains new technologies and functionality that result in a cluster that is self-managing, flexible, and automated. OpenShift Container Platform 4 clusters are deployed and managed very differently from OpenShift Container Platform 3.

The most effective way to migrate from OpenShift Container Platform 3 to 4 is by using a CI/CD pipeline to automate deployments in an application lifecycle management framework.

If you do not have a CI/CD pipeline or if you are migrating stateful applications, you can use the Migration Toolkit for Containers (MTC) to migrate your application workloads.

You can use Red Hat Advanced Cluster Management for Kubernetes to help you import and manage your OpenShift Container Platform 3 clusters easily, enforce policies, and redeploy your applications. Take advantage of the free subscription to use Red Hat Advanced Cluster Management to simplify your migration process.

To successfully transition to OpenShift Container Platform 4, review the following information:

- Differences between OpenShift Container Platform 3 and 4

- Architecture

- Installation and upgrade

- Storage, network, logging, security, and monitoring considerations

- About the Migration Toolkit for Containers

- Workflow

- File system and snapshot copy methods for persistent volumes (PVs)

- Direct volume migration

- Direct image migration

- Advanced migration options

- Automating your migration with migration hooks

- Using the MTC API

- Excluding resources from a migration plan

- Configuring the MigrationController custom resource for large-scale migrations

- Enabling automatic PV resizing for direct volume migration

- Enabling cached Kubernetes clients for improved performance

For new features and enhancements, technical changes, and known issues, see the MTC release notes.

Chapter 3. Differences between OpenShift Container Platform 3 and 4

OpenShift Container Platform 4.16 introduces architectural changes and enhancements. The procedures that you used to manage your OpenShift Container Platform 3 cluster might not apply to OpenShift Container Platform 4.

For information on configuring your OpenShift Container Platform 4 cluster, review the appropriate sections of the OpenShift Container Platform documentation. For information on new features and other notable technical changes, review the OpenShift Container Platform 4.16 release notes.

It is not possible to upgrade your existing OpenShift Container Platform 3 cluster to OpenShift Container Platform 4. You must start with a new OpenShift Container Platform 4 installation. Tools are available to assist in migrating your control plane settings and application workloads.

3.1. Architecture

With OpenShift Container Platform 3, administrators individually deployed Red Hat Enterprise Linux (RHEL) hosts, and then installed OpenShift Container Platform on top of these hosts to form a cluster. Administrators were responsible for properly configuring these hosts and performing updates.

OpenShift Container Platform 4 represents a significant change in the way that OpenShift Container Platform clusters are deployed and managed. OpenShift Container Platform 4 includes new technologies and functionality, such as Operators, machine sets, and Red Hat Enterprise Linux CoreOS (RHCOS), which are core to the operation of the cluster. This technology shift enables clusters to self-manage some functions previously performed by administrators. This also ensures platform stability and consistency, and simplifies installation and scaling.

Beginning with OpenShift Container Platform 4.13, RHCOS uses Red Hat Enterprise Linux (RHEL) 9.2 packages. This enhancement enables the latest fixes and features as well as the latest hardware support and driver updates. For more information about how this upgrade to RHEL 9.2 might affect your options configuration and services as well as driver and container support, see RHCOS now uses RHEL 9.2 in the OpenShift Container Platform 4.13 release notes.

For more information, see OpenShift Container Platform architecture.

3.1.1. Immutable infrastructure

OpenShift Container Platform 4 uses Red Hat Enterprise Linux CoreOS (RHCOS), which is designed to run containerized applications, and provides efficient installation, Operator-based management, and simplified upgrades. RHCOS is an immutable container host, rather than a customizable operating system like RHEL. RHCOS enables OpenShift Container Platform 4 to manage and automate the deployment of the underlying container host. RHCOS is a part of OpenShift Container Platform, which means that everything runs inside a container and is deployed using OpenShift Container Platform.

In OpenShift Container Platform 4, control plane nodes must run RHCOS, ensuring that full-stack automation is maintained for the control plane. This makes rolling out updates and upgrades a much easier process than in OpenShift Container Platform 3.

For more information, see Red Hat Enterprise Linux CoreOS (RHCOS).

3.1.2. Operators

Operators are a method of packaging, deploying, and managing a Kubernetes application. Operators ease the operational complexity of running another piece of software. They watch over your environment and use the current state to make decisions in real time. Advanced Operators are designed to upgrade and react to failures automatically.

For more information, see Understanding Operators.

3.2. Installation and upgrade

3.2.1. Installation process

To install OpenShift Container Platform 3.11, you prepared your Red Hat Enterprise Linux (RHEL) hosts, set all of the configuration values your cluster needed, and then ran an Ansible playbook to install and set up your cluster.

In OpenShift Container Platform 4.16, you use the OpenShift installation program to create a minimum set of resources required for a cluster. After the cluster is running, you use Operators to further configure your cluster and to install new services. After first boot, Red Hat Enterprise Linux CoreOS (RHCOS) systems are managed by the Machine Config Operator (MCO) that runs in the OpenShift Container Platform cluster.

For more information, see Installation process.

If you want to add Red Hat Enterprise Linux (RHEL) worker machines to your OpenShift Container Platform 4.16 cluster, you use an Ansible playbook to join the RHEL worker machines after the cluster is running. For more information, see Adding RHEL compute machines to an OpenShift Container Platform cluster.

3.2.2. Infrastructure options

In OpenShift Container Platform 3.11, you installed your cluster on infrastructure that you prepared and maintained. In addition to providing your own infrastructure, OpenShift Container Platform 4 offers an option to deploy a cluster on infrastructure that the OpenShift Container Platform installation program provisions and the cluster maintains.

For more information, see OpenShift Container Platform installation overview.

3.2.3. Upgrading your cluster

In OpenShift Container Platform 3.11, you upgraded your cluster by running Ansible playbooks. In OpenShift Container Platform 4.16, the cluster manages its own updates, including updates to Red Hat Enterprise Linux CoreOS (RHCOS) on cluster nodes. You can upgrade your cluster by using the web console or by using the oc adm upgrade command from the OpenShift CLI, and the Operators upgrade themselves automatically. If your OpenShift Container Platform 4.16 cluster has RHEL worker machines, you must still run an Ansible playbook to upgrade those worker machines.

For more information, see Updating clusters.
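The command-line update path can be sketched as a few small shell functions. This is a minimal sketch, assuming a logged-in oc session with cluster administrator rights. The function names and the OC variable are hypothetical helpers introduced only for illustration; the oc adm upgrade invocations are the parts that come from the CLI.

```shell
# Dry-run friendly: set OC=echo to print the commands instead of running them.
OC="${OC:-oc}"

# Show the current version, the update channel, and any available updates.
show_updates() {
  "$OC" adm upgrade
}

# Start an update to the newest version available in the current channel.
update_to_latest() {
  "$OC" adm upgrade --to-latest=true
}

# Start an update to a specific version passed as $1 (for example 4.16.5,
# a placeholder value, not a recommendation).
update_to_version() {
  "$OC" adm upgrade --to="$1"
}
```

Running `OC=echo update_to_latest` prints the command without contacting a cluster, which can be useful for reviewing the invocation before running it for real.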

3.3. Migration considerations

Review the changes and other considerations that might affect your transition from OpenShift Container Platform 3.11 to OpenShift Container Platform 4.

3.3.1. Storage considerations

Review the following storage changes to consider when transitioning from OpenShift Container Platform 3.11 to OpenShift Container Platform 4.16.

3.3.1.1. Local volume persistent storage

In OpenShift Container Platform 4.16, local storage is supported only through the Local Storage Operator. The local provisioner method from OpenShift Container Platform 3.11 is not supported.

For more information, see Persistent storage using local volumes.
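As an illustration of what replaces the 3.11 local provisioner, the following sketch renders a LocalVolume resource for the Local Storage Operator. It assumes that the Operator is already installed in the openshift-local-storage namespace. The function name, the resource name local-disks, the xfs file system, and the arguments are hypothetical placeholders to adapt to your environment.

```shell
# Print a LocalVolume manifest to standard output.
# $1: storage class name to create (placeholder)
# $2: device path on the node, ideally a /dev/disk/by-id/ path (placeholder)
render_local_volume() {
  cat <<EOF
apiVersion: local.storage.openshift.io/v1
kind: LocalVolume
metadata:
  name: local-disks
  namespace: openshift-local-storage
spec:
  storageClassDevices:
    - storageClassName: $1
      volumeMode: Filesystem
      fsType: xfs
      devicePaths:
        - $2
EOF
}
```

After reviewing the output, you could pipe it to the cluster, for example `render_local_volume local-sc <device-path> | oc apply -f -`.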

3.3.1.2. FlexVolume persistent storage

The FlexVolume plugin location changed from OpenShift Container Platform 3.11. The new location in OpenShift Container Platform 4.16 is /etc/kubernetes/kubelet-plugins/volume/exec. Attachable FlexVolume plugins are no longer supported.

For more information, see Persistent storage using FlexVolume.

3.3.1.3. Container Storage Interface (CSI) persistent storage

Persistent storage using the Container Storage Interface (CSI) was Technology Preview in OpenShift Container Platform 3.11. OpenShift Container Platform 4.16 ships with several CSI drivers. You can also install your own driver.

For more information, see Persistent storage using the Container Storage Interface (CSI).

3.3.1.4. Red Hat OpenShift Data Foundation

OpenShift Container Storage 3, which is available for use with OpenShift Container Platform 3.11, uses Red Hat Gluster Storage as the backing storage.

Red Hat OpenShift Data Foundation 4, which is available for use with OpenShift Container Platform 4, uses Red Hat Ceph Storage as the backing storage.

For more information, see Persistent storage using Red Hat OpenShift Data Foundation and the interoperability matrix article.

3.3.1.5. Unsupported persistent storage options

Support for the following persistent storage options from OpenShift Container Platform 3.11 has changed in OpenShift Container Platform 4.16:

- GlusterFS is no longer supported.

- CephFS as a standalone product is no longer supported.

- Ceph RBD as a standalone product is no longer supported.

If you used one of these in OpenShift Container Platform 3.11, you must choose a different persistent storage option for full support in OpenShift Container Platform 4.16.

For more information, see Understanding persistent storage.

3.3.1.6. Migration of in-tree volumes to CSI drivers

OpenShift Container Platform 4 is migrating in-tree volume plugins to their Container Storage Interface (CSI) counterparts. In OpenShift Container Platform 4.16, CSI drivers are the new default for the following in-tree volume types:

- Amazon Web Services (AWS) Elastic Block Storage (EBS)

- Azure Disk

- Azure File

- Google Cloud Persistent Disk (GCP PD)

- OpenStack Cinder

- VMware vSphere

Note: As of OpenShift Container Platform 4.13, VMware vSphere is not available by default. However, you can opt into VMware vSphere.

All aspects of volume lifecycle, such as creation, deletion, mounting, and unmounting, are handled by the CSI driver.

For more information, see CSI automatic migration.

3.3.2. Networking considerations

Review the following networking changes to consider when transitioning from OpenShift Container Platform 3.11 to OpenShift Container Platform 4.16.

3.3.2.1. Network isolation mode

The default network isolation mode for OpenShift Container Platform 3.11 was ovs-subnet, though users frequently switched to ovs-multitenant. The default network isolation mode for OpenShift Container Platform 4.16 is controlled by a network policy.

If your OpenShift Container Platform 3.11 cluster used the ovs-subnet or ovs-multitenant mode, it is recommended to switch to a network policy for your OpenShift Container Platform 4.16 cluster. Network policies are supported upstream, are more flexible, and they provide the functionality that ovs-multitenant does. If you want to maintain the ovs-multitenant behavior while using a network policy in OpenShift Container Platform 4.16, follow the steps to configure multitenant isolation using network policy.

For more information, see About network policy.
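To make the ovs-multitenant equivalence concrete, the following sketch renders two NetworkPolicy objects: one that allows traffic only between pods in the same project, and one that still admits traffic from the OpenShift Ingress Controller. It is a partial sketch, not the complete documented procedure; a real cluster might also need policies for monitoring and other platform namespaces. The function name is hypothetical.

```shell
# Print NetworkPolicy manifests that approximate ovs-multitenant isolation
# for a single project. Apply them in each project that needs isolation.
render_multitenant_policies() {
  cat <<'EOF'
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-same-namespace
spec:
  podSelector: {}
  ingress:
    - from:
        - podSelector: {}
---
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-from-openshift-ingress
spec:
  podSelector: {}
  policyTypes:
    - Ingress
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              policy-group.network.openshift.io/ingress: ""
EOF
}
```

The output can be applied to one project at a time, for example `render_multitenant_policies | oc apply -n <project> -f -`.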

3.3.2.2. OVN-Kubernetes as the default networking plugin in Red Hat OpenShift Networking

In OpenShift Container Platform 3.11, OpenShift SDN was the default networking plugin in Red Hat OpenShift Networking. In OpenShift Container Platform 4.16, OVN-Kubernetes is now the default networking plugin.

For information on migrating to OVN-Kubernetes from OpenShift SDN, see Migrating from the OpenShift SDN network plugin.

It is not possible to upgrade a cluster to OpenShift Container Platform 4.17 if it is using the OpenShift SDN network plugin. You must migrate to the OVN-Kubernetes plugin before upgrading to OpenShift Container Platform 4.17.

3.3.3. Logging considerations

Review the following logging changes to consider when transitioning from OpenShift Container Platform 3.11 to OpenShift Container Platform 4.16.

3.3.3.1. Deploying OpenShift Logging

OpenShift Container Platform 4 provides a simple deployment mechanism for OpenShift Logging, by using a Cluster Logging custom resource.

For more information, see Installing OpenShift Logging.

3.3.3.2. Aggregated logging data

You cannot transition your aggregated logging data from OpenShift Container Platform 3.11 into your new OpenShift Container Platform 4 cluster.

For more information, see About OpenShift Logging.

3.3.3.3. Unsupported logging configurations

Some logging configurations that were available in OpenShift Container Platform 3.11 are no longer supported in OpenShift Container Platform 4.16.

For more information on the explicitly unsupported logging cases, see the logging support documentation.

3.3.4. Security considerations

Review the following security changes to consider when transitioning from OpenShift Container Platform 3.11 to OpenShift Container Platform 4.16.

3.3.4.1. Unauthenticated access to discovery endpoints

In OpenShift Container Platform 3.11, an unauthenticated user could access the discovery endpoints (for example, /api/* and /apis/*). For security reasons, unauthenticated access to the discovery endpoints is no longer allowed in OpenShift Container Platform 4.16. If you do need to allow unauthenticated access, you can configure the RBAC settings as necessary; however, be sure to consider the security implications as this can expose internal cluster components to the external network.

3.3.4.2. Identity providers

Configuration for identity providers has changed for OpenShift Container Platform 4, including the following notable changes:

- The request header identity provider in OpenShift Container Platform 4.16 requires mutual TLS, whereas in OpenShift Container Platform 3.11 it did not.

- The configuration of the OpenID Connect identity provider was simplified in OpenShift Container Platform 4.16. It now obtains data, which previously had to be specified in OpenShift Container Platform 3.11, from the provider’s /.well-known/openid-configuration endpoint.

For more information, see Understanding identity provider configuration.
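The simplified OpenID Connect configuration can be illustrated with a short OAuth custom resource in which only the issuer URL, the client ID, and a client secret reference are supplied, and the endpoints are discovered from the issuer. This is a minimal sketch: the function name, the provider name oidc-example, and all argument values are hypothetical, and the referenced secret is assumed to exist in the openshift-config namespace.

```shell
# Print an OAuth custom resource with one OpenID Connect identity provider.
# $1: issuer URL, $2: client ID, $3: name of the secret holding the client secret
render_oidc_oauth() {
  cat <<EOF
apiVersion: config.openshift.io/v1
kind: OAuth
metadata:
  name: cluster
spec:
  identityProviders:
    - name: oidc-example
      mappingMethod: claim
      type: OpenID
      openID:
        clientID: $2
        clientSecret:
          name: $3
        claims:
          preferredUsername:
            - preferred_username
          name:
            - name
          email:
            - email
        issuer: $1
EOF
}
```

Because the OAuth resource named cluster holds every configured identity provider, review the rendered output against the existing resource before applying it so that other providers are not overwritten.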

3.3.4.3. OAuth token storage format

Newly created OAuth HTTP bearer tokens no longer match the names of their OAuth access token objects. The object names are now a hash of the bearer token and are no longer sensitive. This reduces the risk of leaking sensitive information.

3.3.4.4. Default security context constraints

Unlike the restricted SCC in OpenShift Container Platform 3.11, the restricted security context constraints (SCC) in OpenShift Container Platform 4 can no longer be accessed by any authenticated user. Broad authenticated access is now granted to the restricted-v2 SCC, which is more restrictive than the old restricted SCC. The restricted SCC still exists; users who want to use it must be explicitly granted permission to do so.

For more information, see Managing security context constraints.
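If a migrated workload depends on the older restricted SCC, one way to grant access is to bind the SCC to the service account that runs the workload. The following is a sketch under assumptions: the function name and the OC variable are hypothetical helpers, and the service account and namespace are placeholders that you supply.

```shell
# Dry-run friendly: set OC=echo to print the command instead of running it.
OC="${OC:-oc}"

# Grant the restricted SCC to a service account.
# $1: service account name, $2: namespace of the service account
grant_restricted_scc() {
  "$OC" adm policy add-scc-to-user restricted -z "$1" -n "$2"
}
```

Where possible, adjusting the workload so that it runs under restricted-v2 avoids the extra grant entirely.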

3.3.5. Monitoring considerations

Review the following monitoring changes when transitioning from OpenShift Container Platform 3.11 to OpenShift Container Platform 4.16. You cannot migrate Hawkular configurations and metrics to Prometheus.

3.3.5.1. Alert for monitoring infrastructure availability

The default alert that triggers to ensure the availability of the monitoring infrastructure was called DeadMansSwitch in OpenShift Container Platform 3.11. This was renamed to Watchdog in OpenShift Container Platform 4. If you had PagerDuty integration set up with this alert in OpenShift Container Platform 3.11, you must set up the PagerDuty integration for the Watchdog alert in OpenShift Container Platform 4.

For more information, see Configuring alert routing for default platform alerts.
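The renamed alert can be routed to PagerDuty with an Alertmanager configuration along the following lines. This is a sketch, not a complete configuration: the function name, the receiver names, and the repeat interval are assumptions, and the integration key is a placeholder. The service_key field is used here; PagerDuty integrations based on Events API v2 use routing_key instead.

```shell
# Print a minimal Alertmanager configuration that sends the Watchdog alert
# to a PagerDuty receiver. $1: PagerDuty integration key (placeholder)
render_alertmanager_config() {
  cat <<EOF
route:
  receiver: default
  routes:
    - matchers:
        - "alertname=Watchdog"
      repeat_interval: 2m
      receiver: watchdog-pagerduty
receivers:
  - name: default
  - name: watchdog-pagerduty
    pagerduty_configs:
      - service_key: "$1"
EOF
}
```

The rendered file would replace the alertmanager.yaml content stored in the alertmanager-main secret in the openshift-monitoring namespace, so merge it with any routes you already have rather than overwriting them.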

Chapter 4. Network considerations

Review the strategies for redirecting your application network traffic after migration.

4.1. DNS considerations

The DNS domain of the target cluster is different from the domain of the source cluster. By default, applications get FQDNs of the target cluster after migration.

To preserve the source DNS domain of migrated applications, select one of the two options described below.

4.1.1. Isolating the DNS domain of the target cluster from the clients

You can allow the clients' requests sent to the DNS domain of the source cluster to reach the DNS domain of the target cluster without exposing the target cluster to the clients.

Procedure

- Place an exterior network component, such as an application load balancer or a reverse proxy, between the clients and the target cluster.

- Update the application FQDN on the source cluster in the DNS server to return the IP address of the exterior network component.

- Configure the network component to send requests received for the application in the source domain to the load balancer in the target cluster domain.

- Create a wildcard DNS record for the *.apps.source.example.com domain that points to the IP address of the load balancer of the source cluster.

- Create a DNS record for each application that points to the IP address of the exterior network component in front of the target cluster. A specific DNS record has higher priority than a wildcard record, so no conflict arises when the application FQDN is resolved.

- The exterior network component must terminate all secure TLS connections. If the connections pass through to the target cluster load balancer, the FQDN of the target application is exposed to the client and certificate errors occur.

- The applications must not return links referencing the target cluster domain to the clients. Otherwise, parts of the application might not load or work properly.

4.1.2. Setting up the target cluster to accept the source DNS domain

You can set up the target cluster to accept requests for a migrated application in the DNS domain of the source cluster.

Procedure

For both non-secure HTTP access and secure HTTPS access, perform the following steps:

Create a route in the target cluster’s project that is configured to accept requests addressed to the application’s FQDN in the source cluster:

$ oc expose svc <app1-svc> --hostname <app1.apps.source.example.com> -n <app1-namespace>

With this new route in place, the server accepts any request for that FQDN and sends it to the corresponding application pods. In addition, when you migrate the application, another route is created in the target cluster domain. Requests reach the migrated application using either of these hostnames.

Create a DNS record with your DNS provider that points the application’s FQDN in the source cluster to the IP address of the default load balancer of the target cluster. This will redirect traffic away from your source cluster to your target cluster.

The FQDN of the application resolves to the load balancer of the target cluster. The default Ingress Controller router accepts requests for that FQDN because a route for that hostname is exposed.

For secure HTTPS access, perform the following additional step:

- Replace the x509 certificate of the default Ingress Controller created during the installation process with a custom certificate.

Configure this certificate to include the wildcard DNS domains for both the source and target clusters in the subjectAltName field.

The new certificate is valid for securing connections made using either DNS domain.
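The certificate replacement can be sketched in two parts: requesting a certificate whose subjectAltName covers both wildcard domains, and pointing the default Ingress Controller at the signed result. This sketch assumes OpenSSL 1.1.1 or later (for the -addext option) and that your own CA signs the request. The function names, the OC variable, the file names, the example domains, and the secret name custom-ingress-cert are hypothetical.

```shell
# Dry-run friendly: set OC=echo to print the oc commands instead of running them.
OC="${OC:-oc}"

# Create a private key and a CSR that lists both wildcard domains as SANs.
make_ingress_csr() {
  openssl req -new -newkey rsa:2048 -nodes \
    -keyout ingress.key -out ingress.csr \
    -subj "/CN=*.apps.target.example.com" \
    -addext "subjectAltName=DNS:*.apps.source.example.com,DNS:*.apps.target.example.com"
}

# Store the signed certificate and make it the default Ingress Controller
# certificate. $1: path to the signed certificate, $2: path to the private key
install_ingress_cert() {
  "$OC" create secret tls custom-ingress-cert \
    --cert="$1" --key="$2" -n openshift-ingress
  "$OC" patch ingresscontroller.operator default --type=merge \
    -p '{"spec":{"defaultCertificate":{"name":"custom-ingress-cert"}}}' \
    -n openshift-ingress-operator
}
```

Calling `OC=echo install_ingress_cert ingress.crt ingress.key` prints both commands for review before they are run against the target cluster.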

4.2. Network traffic redirection strategies

After a successful migration, you must redirect network traffic of your stateless applications from the source cluster to the target cluster.

The strategies for redirecting network traffic are based on the following assumptions:

- The application pods are running on both the source and target clusters.

- Each application has a route that contains the source cluster hostname.

- The route with the source cluster hostname contains a CA certificate.

- For HTTPS, the target router CA certificate contains a Subject Alternative Name for the wildcard DNS record of the source cluster.

Consider the following strategies and select the one that meets your objectives.

Redirecting all network traffic for all applications at the same time

Change the wildcard DNS record of the source cluster to point to the target cluster router’s virtual IP address (VIP).

This strategy is suitable for simple applications or small migrations.

Redirecting network traffic for individual applications

Create a DNS record for each application with the source cluster hostname pointing to the target cluster router’s VIP. This DNS record takes precedence over the source cluster wildcard DNS record.

Redirecting network traffic gradually for individual applications

- Create a proxy that can direct traffic to both the source cluster router’s VIP and the target cluster router’s VIP, for each application.

- Create a DNS record for each application with the source cluster hostname pointing to the proxy.

- Configure the proxy entry for the application to route a percentage of the traffic to the target cluster router’s VIP and the rest of the traffic to the source cluster router’s VIP.

- Gradually increase the percentage of traffic that you route to the target cluster router’s VIP until all the network traffic is redirected.
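The gradual strategy can be sketched with a weighted proxy backend. The example below assumes HAProxy as the proxy, which the procedure does not prescribe, and uses TCP mode so that TLS passes through to the routers. The function name, the backend and server names, and the VIP arguments are hypothetical. Server weights split new connections in proportion to the requested percentage.

```shell
# Print an HAProxy backend that splits traffic between the two routers.
# $1: percentage of traffic for the target cluster (integer, 0 to 100)
# $2: source cluster router VIP, $3: target cluster router VIP
render_app_backend() {
  target_pct=$1
  case "$target_pct" in
    ''|*[!0-9]*) echo "percentage must be an integer" >&2; return 1 ;;
  esac
  if [ "$target_pct" -gt 100 ]; then
    echo "percentage must be between 0 and 100" >&2
    return 1
  fi
  source_pct=$((100 - target_pct))
  cat <<EOF
backend app1_clusters
    mode tcp
    balance roundrobin
    server source_router $2:443 weight $source_pct
    server target_router $3:443 weight $target_pct
EOF
}
```

Rendering the backend again with a larger first argument and reloading the proxy moves more traffic to the target cluster; a value of 100 gives the source router a weight of 0, so it receives no new connections.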

User-based redirection of traffic for individual applications

Using this strategy, you can filter TCP/IP headers of user requests to redirect network traffic for predefined groups of users. This allows you to test the redirection process on specific populations of users before redirecting the entire network traffic.

- Create a proxy that can direct traffic to both the source cluster router’s VIP and the target cluster router’s VIP, for each application.

- Create a DNS record for each application with the source cluster hostname pointing to the proxy.

- Configure the proxy entry for the application to route traffic matching a given header pattern, such as test customers, to the target cluster router’s VIP and the rest of the traffic to the source cluster router’s VIP.

- Redirect traffic to the target cluster router’s VIP in stages until all the traffic is on the target cluster router’s VIP.

Chapter 5. About the Migration Toolkit for Containers

The Migration Toolkit for Containers (MTC) enables you to migrate stateful application workloads from OpenShift Container Platform 3 to 4.16 at the granularity of a namespace.

Before you begin your migration, be sure to review the differences between OpenShift Container Platform 3 and 4.

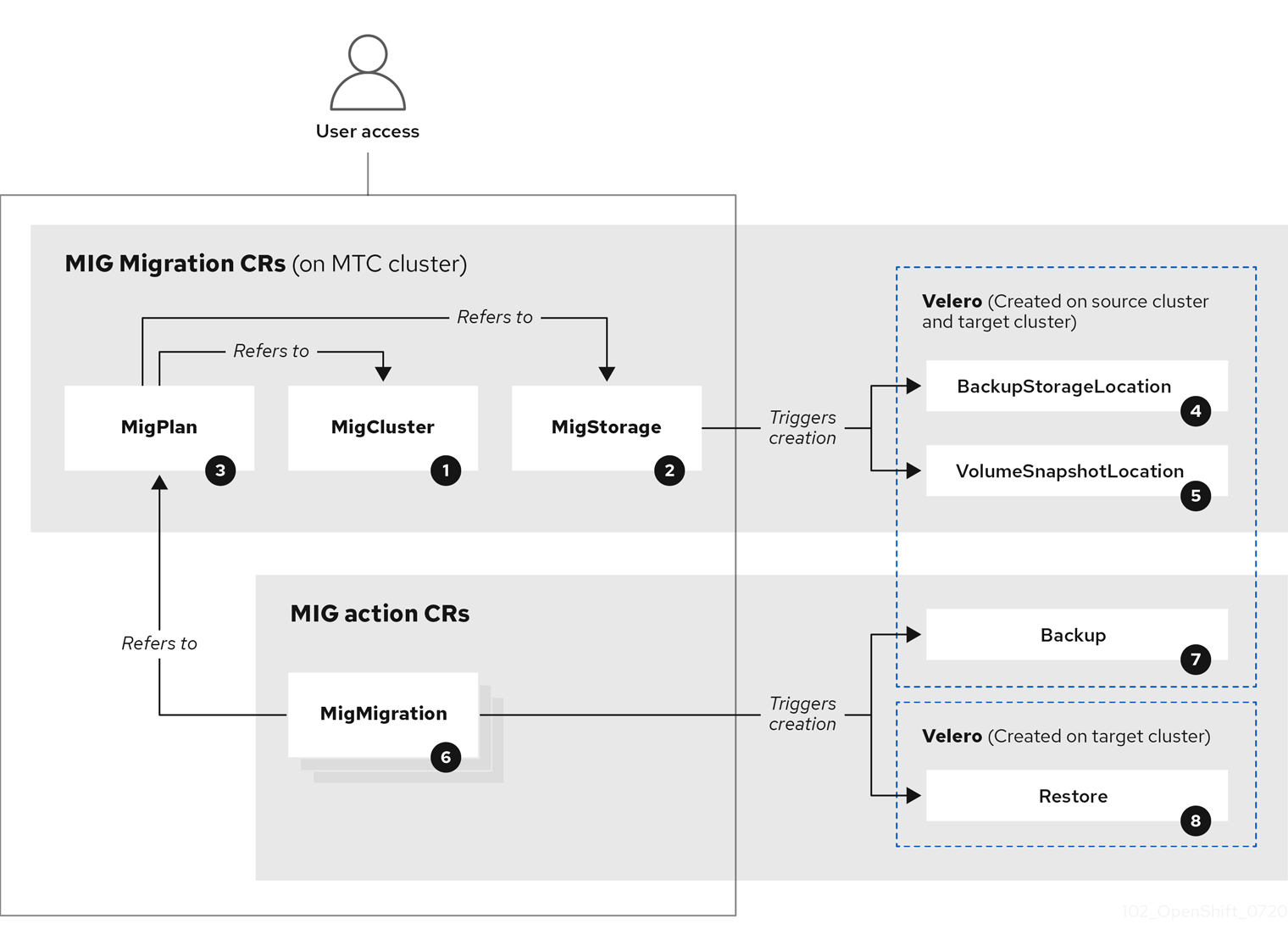

MTC provides a web console and an API, based on Kubernetes custom resources, to help you control the migration and minimize application downtime.

The MTC console is installed on the target cluster by default. You can configure the Migration Toolkit for Containers Operator to install the console on an OpenShift Container Platform 3 source cluster or on a remote cluster.

MTC supports the file system and snapshot data copy methods for migrating data from the source cluster to the target cluster. You can select a method that is suited for your environment and is supported by your storage provider.

The service catalog is deprecated in OpenShift Container Platform 4. You can migrate workload resources provisioned with the service catalog from OpenShift Container Platform 3 to 4 but you cannot perform service catalog actions such as provision, deprovision, or update on these workloads after migration. The MTC console displays a message if the service catalog resources cannot be migrated.

5.1. Terminology

| Term | Definition |

|---|---|

| Source cluster | Cluster from which the applications are migrated. |

| Destination cluster[1] | Cluster to which the applications are migrated. |

| Replication repository | Object storage used for copying images, volumes, and Kubernetes objects during indirect migration or for Kubernetes objects during direct volume migration or direct image migration. The replication repository must be accessible to all clusters. |

| Host cluster |

Cluster on which the The host cluster does not require an exposed registry route for direct image migration. |

| Remote cluster | A remote cluster is usually the source cluster but this is not required.

A remote cluster requires a A remote cluster requires an exposed secure registry route for direct image migration. |

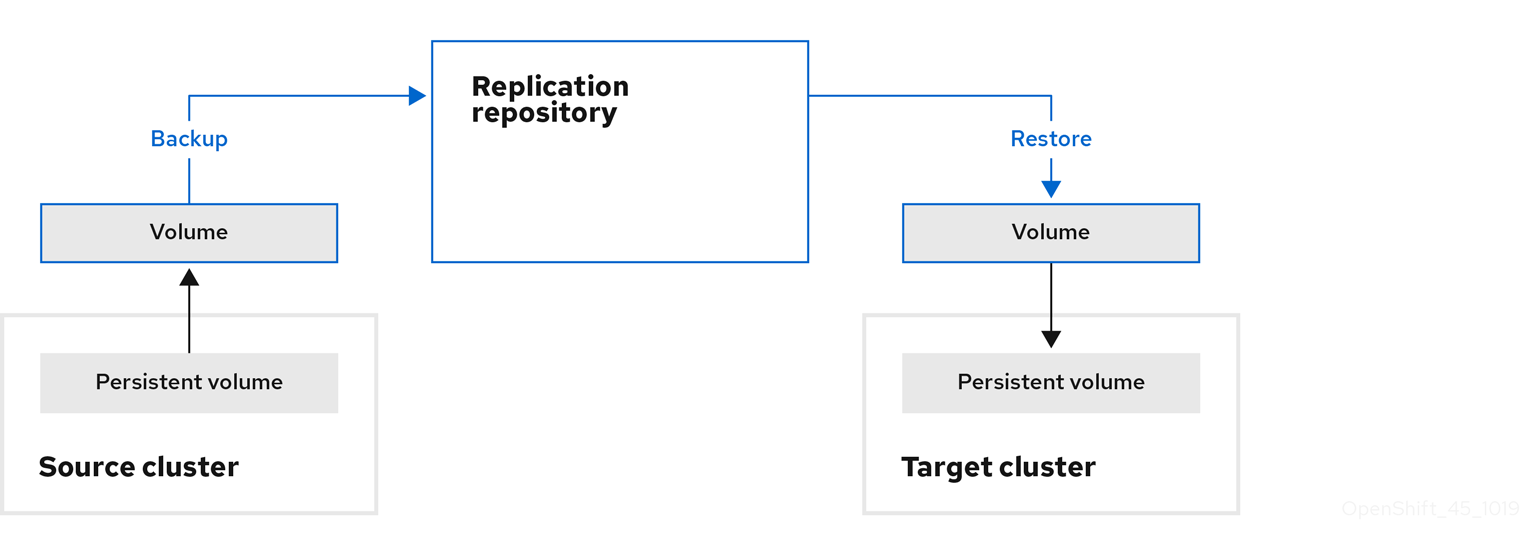

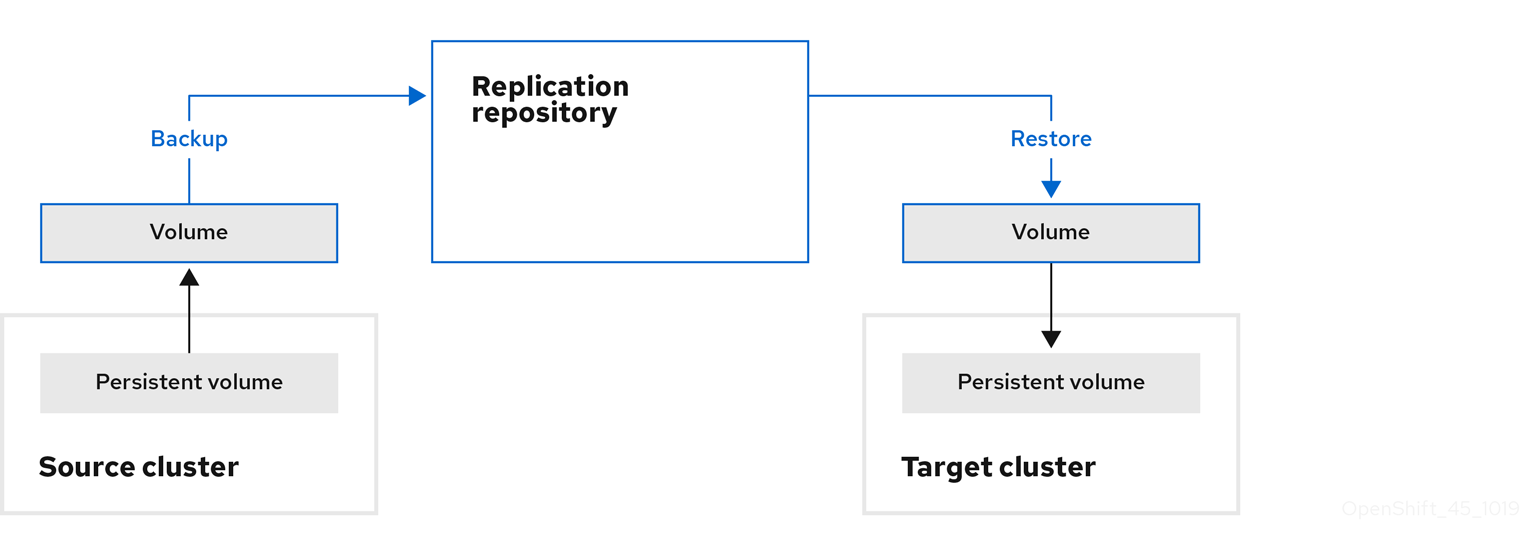

| Indirect migration | Images, volumes, and Kubernetes objects are copied from the source cluster to the replication repository and then from the replication repository to the destination cluster. |

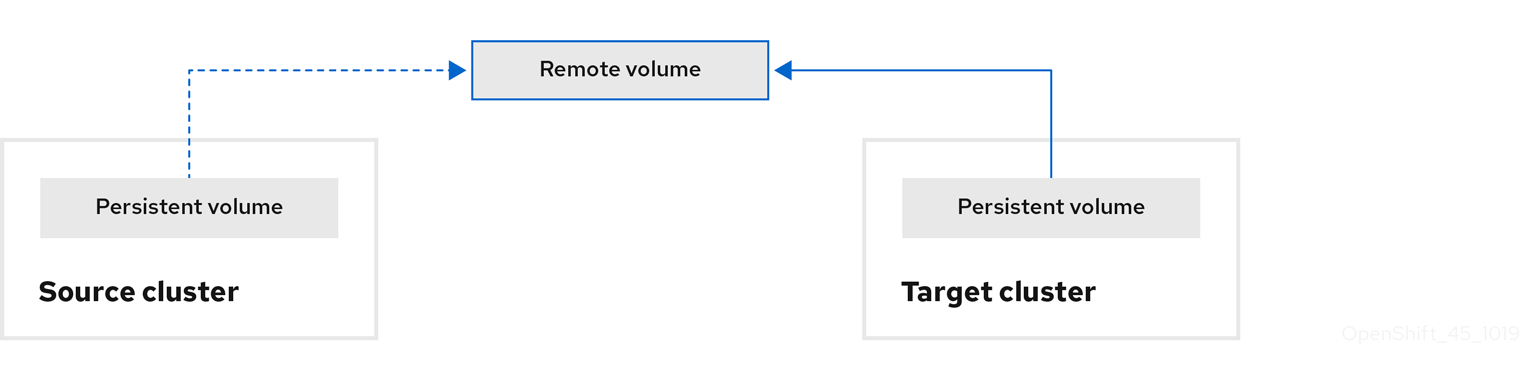

| Direct volume migration | Persistent volumes are copied directly from the source cluster to the destination cluster. |

| Direct image migration | Images are copied directly from the source cluster to the destination cluster. |

| Stage migration | Data is copied to the destination cluster without stopping the application. Running a stage migration multiple times reduces the duration of the cutover migration. |

| Cutover migration | The application is stopped on the source cluster and its resources are migrated to the destination cluster. |

| State migration | Application state is migrated by copying specific persistent volume claims to the destination cluster. |

| Rollback migration | Rollback migration rolls back a completed migration. |

1 Called the target cluster in the MTC web console.

5.2. MTC workflow

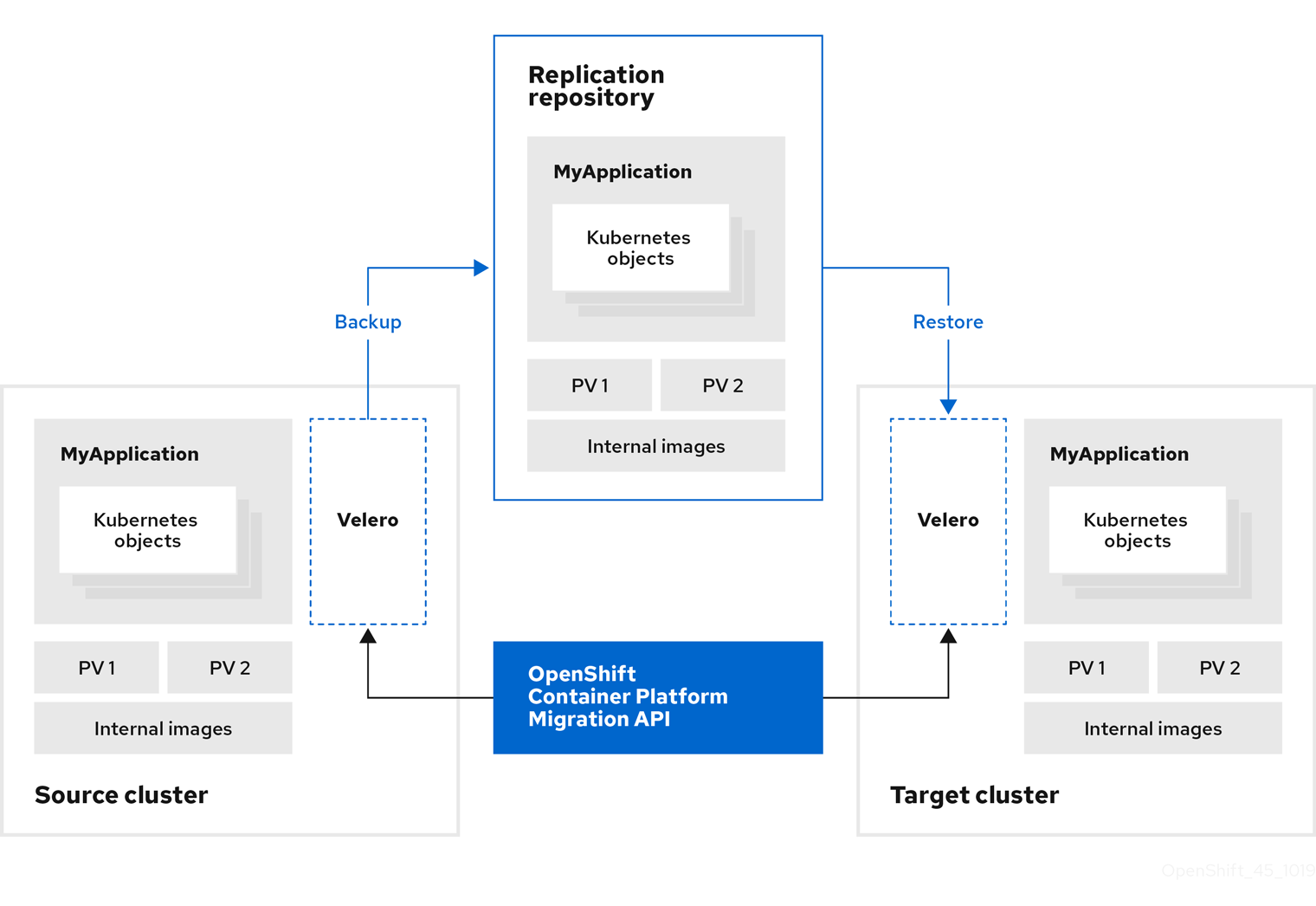

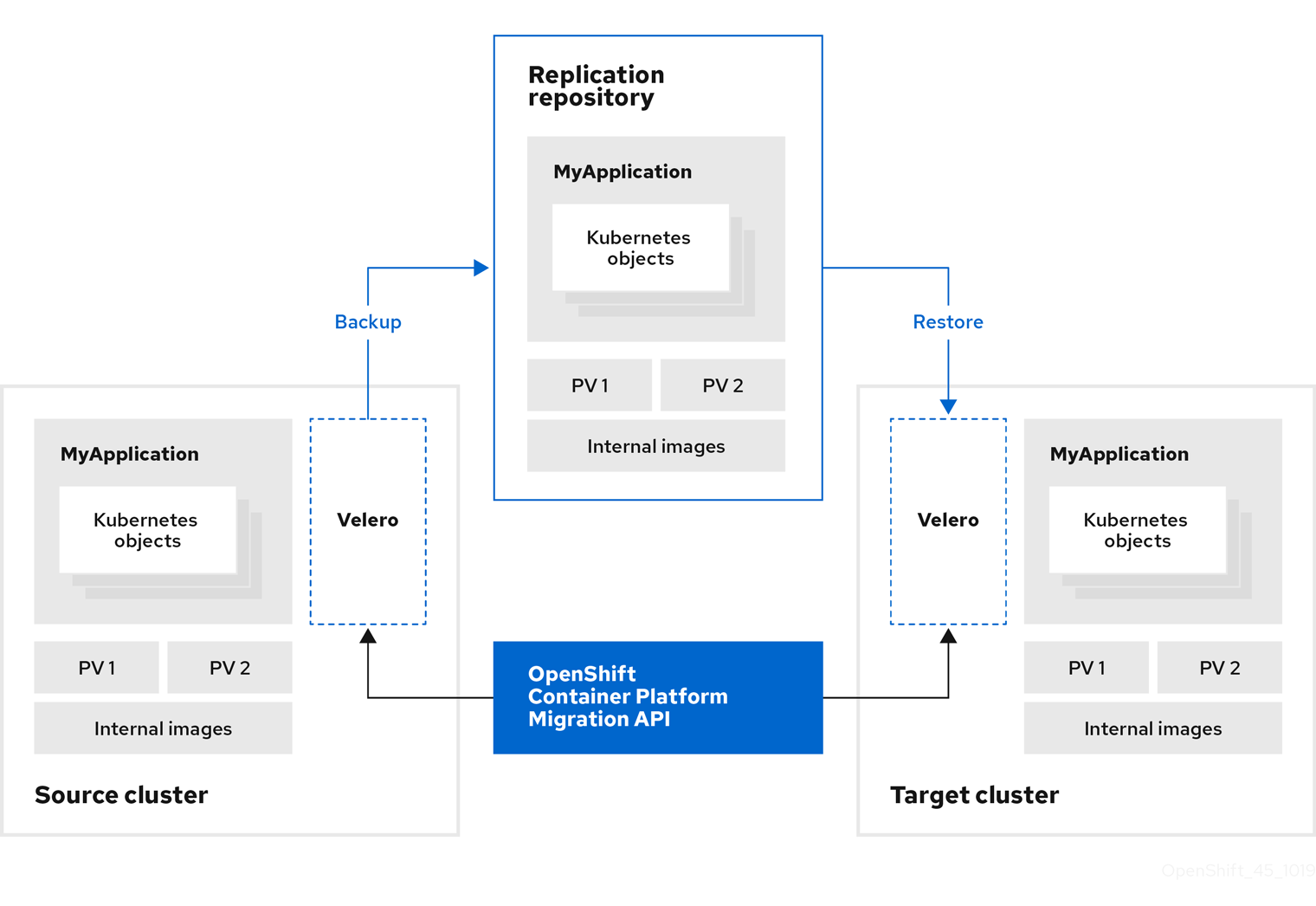

You can migrate Kubernetes resources, persistent volume data, and internal container images to OpenShift Container Platform 4.16 by using the Migration Toolkit for Containers (MTC) web console or the Kubernetes API.

MTC migrates the following resources:

- A namespace specified in a migration plan.

- Namespace-scoped resources: When the MTC migrates a namespace, it migrates all the objects and resources associated with that namespace, such as services or pods. Additionally, if a resource that exists in the namespace but not at the cluster level depends on a resource that exists at the cluster level, the MTC migrates both resources.

For example, a security context constraint (SCC) is a resource that exists at the cluster level and a service account (SA) is a resource that exists at the namespace level. If an SA exists in a namespace that the MTC migrates, the MTC automatically locates any SCCs that are linked to the SA and also migrates those SCCs. Similarly, the MTC migrates persistent volumes that are linked to the persistent volume claims of the namespace.

Note: Cluster-scoped resources might have to be migrated manually, depending on the resource.

- Custom resources (CRs) and custom resource definitions (CRDs): MTC automatically migrates CRs and CRDs at the namespace level.

Migrating an application with the MTC web console involves the following steps:

Install the Migration Toolkit for Containers Operator on all clusters.

You can install the Migration Toolkit for Containers Operator in a restricted environment with limited or no internet access. The source and target clusters must have network access to each other and to a mirror registry.

Configure the replication repository, an intermediate object storage that MTC uses to migrate data.

The source and target clusters must have network access to the replication repository during migration. If you are using a proxy server, you must configure it to allow network traffic between the replication repository and the clusters.

- Add the source cluster to the MTC web console.

- Add the replication repository to the MTC web console.

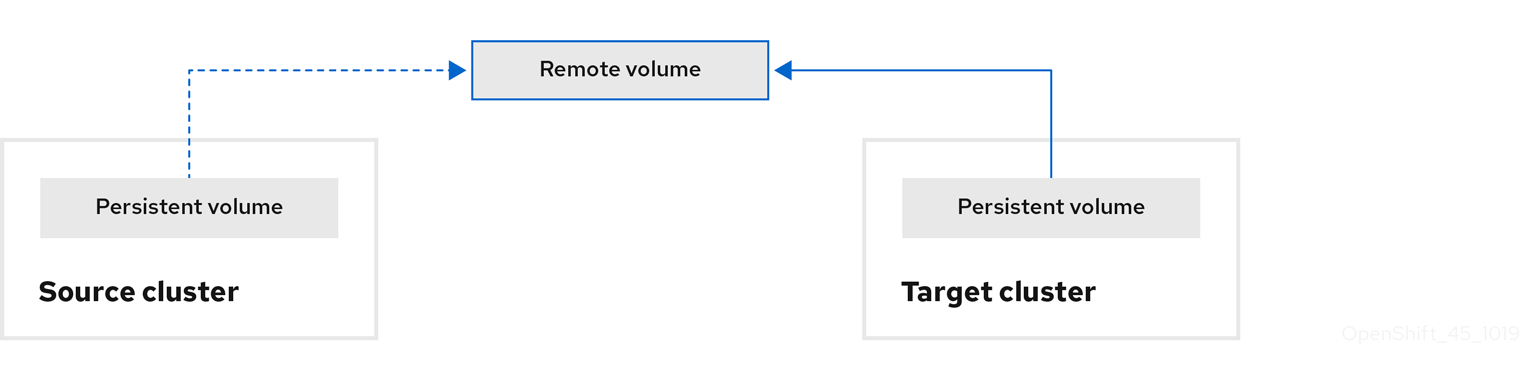

Create a migration plan, with one of the following data migration options:

Copy: MTC copies the data from the source cluster to the replication repository, and from the replication repository to the target cluster.

Note: If you are using direct image migration or direct volume migration, the images or volumes are copied directly from the source cluster to the target cluster.

Move: MTC unmounts a remote volume, for example, NFS, from the source cluster, creates a PV resource on the target cluster pointing to the remote volume, and then mounts the remote volume on the target cluster. Applications running on the target cluster use the same remote volume that the source cluster was using. The remote volume must be accessible to the source and target clusters.

Note: Although the replication repository does not appear in this diagram, it is required for migration.

Run the migration plan, with one of the following options:

Stage copies data to the target cluster without stopping the application.

A stage migration can be run multiple times so that most of the data is copied to the target before migration. Running one or more stage migrations reduces the duration of the cutover migration.

Cutover stops the application on the source cluster and moves the resources to the target cluster.

Optional: You can clear the Halt transactions on the source cluster during migration checkbox.

5.3. About data copy methods

The Migration Toolkit for Containers (MTC) supports the file system and snapshot data copy methods for migrating data from the source cluster to the target cluster. You can select a method that is suited for your environment and is supported by your storage provider.

5.3.1. File system copy method

MTC copies data files from the source cluster to the replication repository, and from there to the target cluster.

The file system copy method uses Restic for indirect migration or Rsync for direct volume migration.

| Benefits | Limitations |

|---|---|

|

|

Restic and Rsync PV migration supports only PVs with volumeMode=filesystem. Using volumeMode=Block for file system migration is not supported.

5.3.2. Snapshot copy method

MTC copies a snapshot of the source cluster data to the replication repository of a cloud provider. The data is restored on the target cluster.

The snapshot copy method can be used with Amazon Web Services, Google Cloud, and Microsoft Azure.

| Benefits | Limitations |

|---|---|

|

|

5.4. Direct volume migration and direct image migration

You can use direct image migration (DIM) and direct volume migration (DVM) to migrate images and data directly from the source cluster to the target cluster.

If you run DVM with nodes that are in different availability zones, the migration might fail because the migrated pods cannot access the persistent volume claim.

DIM and DVM have significant performance benefits because the intermediate steps of backing up files from the source cluster to the replication repository and restoring files from the replication repository to the target cluster are skipped. The data is transferred with Rsync.

DIM and DVM have additional prerequisites.

Chapter 6. Installing the Migration Toolkit for Containers

You can install the Migration Toolkit for Containers (MTC) on OpenShift Container Platform 3 and 4.

After you install the Migration Toolkit for Containers Operator on OpenShift Container Platform 4.16 by using the Operator Lifecycle Manager, you manually install the legacy Migration Toolkit for Containers Operator on OpenShift Container Platform 3.

By default, the MTC web console and the Migration Controller pod run on the target cluster. You can configure the Migration Controller custom resource manifest to run the MTC web console and the Migration Controller pod on a source cluster or on a remote cluster.

After you have installed MTC, you must configure an object storage to use as a replication repository.

To uninstall MTC, see Uninstalling MTC and deleting resources.

6.1. Compatibility guidelines

You must install the Migration Toolkit for Containers (MTC) Operator that is compatible with your OpenShift Container Platform version.

Definitions

- control cluster: The cluster that runs the MTC controller and GUI.

- remote cluster: A source or destination cluster for a migration that runs Velero. The control cluster communicates with remote clusters by using the Velero API to drive migrations.

You must use the compatible MTC version for migrating your OpenShift Container Platform clusters. For the migration to succeed, both your source cluster and the destination cluster must use the same version of MTC.

MTC 1.7 supports migrations from OpenShift Container Platform 3.11 to 4.16.

MTC 1.8 only supports migrations from OpenShift Container Platform 4.14 and later.

| Details | OpenShift Container Platform 3.11 | OpenShift Container Platform 4.14 or later |
|---|---|---|
| Stable MTC version | MTC v.1.7.z | MTC v.1.8.z |
| Installation | As described in this guide | Install with OLM, release channel |

Edge cases exist where network restrictions prevent OpenShift Container Platform 4 clusters from connecting to other clusters involved in the migration. For example, when migrating from an OpenShift Container Platform 3.11 cluster on premises to an OpenShift Container Platform 4 cluster in the cloud, the OpenShift Container Platform 4 cluster might have trouble connecting to the OpenShift Container Platform 3.11 cluster. In this case, you can designate the OpenShift Container Platform 3.11 cluster as the control cluster and push workloads to the remote OpenShift Container Platform 4 cluster.

6.2. Installing the legacy Migration Toolkit for Containers Operator on OpenShift Container Platform 3

You can install the legacy Migration Toolkit for Containers Operator manually on OpenShift Container Platform 3.

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

- You must have access to registry.redhat.io.

- You must have podman installed.

- You must create an image stream secret and copy it to each node in the cluster.

Procedure

- Log in to registry.redhat.io with your Red Hat Customer Portal credentials:

      $ podman login registry.redhat.io

- Download the operator.yml file by entering the following command:

      $ podman cp $(podman create registry.redhat.io/rhmtc/openshift-migration-legacy-rhel8-operator:v1.7):/operator.yml ./

- Download the controller.yml file by entering the following command:

      $ podman cp $(podman create registry.redhat.io/rhmtc/openshift-migration-legacy-rhel8-operator:v1.7):/controller.yml ./

- Log in to your OpenShift Container Platform source cluster.

- Verify that the cluster can authenticate with registry.redhat.io:

      $ oc run test --image registry.redhat.io/ubi9 --command sleep infinity

- Create the Migration Toolkit for Containers Operator object:

      $ oc create -f operator.yml

  Example output

      namespace/openshift-migration created
      rolebinding.rbac.authorization.k8s.io/system:deployers created
      serviceaccount/migration-operator created
      customresourcedefinition.apiextensions.k8s.io/migrationcontrollers.migration.openshift.io created
      role.rbac.authorization.k8s.io/migration-operator created
      rolebinding.rbac.authorization.k8s.io/migration-operator created
      clusterrolebinding.rbac.authorization.k8s.io/migration-operator created
      deployment.apps/migration-operator created
      Error from server (AlreadyExists): error when creating "./operator.yml": rolebindings.rbac.authorization.k8s.io "system:image-builders" already exists
      Error from server (AlreadyExists): error when creating "./operator.yml": rolebindings.rbac.authorization.k8s.io "system:image-pullers" already exists

  You can ignore Error from server (AlreadyExists) messages. They are caused by the Migration Toolkit for Containers Operator creating resources for earlier versions of OpenShift Container Platform 4 that are provided in later releases.

- Create the MigrationController object:

      $ oc create -f controller.yml

- Verify that the MTC pods are running:

      $ oc get pods -n openshift-migration
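The verification step can be scripted. The following is a minimal sketch, not part of MTC: the helper name count_not_ready is hypothetical, and it assumes the default table output of oc get pods, where the third column is STATUS. It counts pods that are neither Running nor Completed, so a result of 0 suggests that the MTC pods are up.

```shell
# Hypothetical helper: reads `oc get pods` table output on stdin and prints
# the number of pods whose STATUS column is neither Running nor Completed.
# The first line is skipped because it is the column header.
count_not_ready() {
  awk 'NR > 1 && $3 != "Running" && $3 != "Completed" { n++ } END { print n + 0 }'
}

# Usage against a live cluster (not run here):
#   oc get pods -n openshift-migration | count_not_ready
```

A nonzero result only tells you that some pod is in another state. Inspect the oc get pods output itself to see which one.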

6.3. Installing the Migration Toolkit for Containers Operator on OpenShift Container Platform 4.16

You install the Migration Toolkit for Containers Operator on OpenShift Container Platform 4.16 by using the Operator Lifecycle Manager.

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

Procedure

- In the OpenShift Container Platform web console, click Operators → OperatorHub.

- Use the Filter by keyword field to find the Migration Toolkit for Containers Operator.

- Select the Migration Toolkit for Containers Operator and click Install.

Click Install.

On the Installed Operators page, the Migration Toolkit for Containers Operator appears in the openshift-migration project with the status Succeeded.

- Click Migration Toolkit for Containers Operator.

- Under Provided APIs, locate the Migration Controller tile, and click Create Instance.

- Click Create.

- Click Workloads → Pods to verify that the MTC pods are running.

6.4. Proxy configuration

For OpenShift Container Platform 4.1 and earlier versions, you must configure proxies in the MigrationController custom resource (CR) manifest after you install the Migration Toolkit for Containers Operator because these versions do not support a cluster-wide proxy object.

For OpenShift Container Platform 4.2 to 4.16, the MTC inherits the cluster-wide proxy settings. You can change the proxy parameters if you want to override the cluster-wide proxy settings.

6.4.1. Direct volume migration

Direct Volume Migration (DVM) was introduced in MTC 1.4.2. DVM supports only one proxy. The source cluster cannot access the route of the target cluster if the target cluster is also behind a proxy.

If you want to perform a DVM from a source cluster behind a proxy, you must configure a TCP proxy that works at the transport layer and forwards the SSL connections transparently without decrypting and re-encrypting them with their own SSL certificates. A Stunnel proxy is an example of such a proxy.

6.4.1.1. TCP proxy setup for DVM

You can set up a direct connection between the source and the target cluster through a TCP proxy and configure the stunnel_tcp_proxy variable in the MigrationController CR to use the proxy:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigrationController
    metadata:
      name: migration-controller
      namespace: openshift-migration
    spec:
      [...]
      stunnel_tcp_proxy: http://username:password@ip:port

Direct volume migration (DVM) supports only basic authentication for the proxy. Moreover, DVM works only from behind proxies that can tunnel a TCP connection transparently. HTTP/HTTPS proxies in man-in-the-middle mode do not work. The existing cluster-wide proxies might not support this behavior. As a result, the proxy settings for DVM are intentionally kept different from the usual proxy configuration in MTC.
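As an alternative to editing the manifest by hand, the stunnel_tcp_proxy value can be set with a merge patch. The sketch below only builds the patch body. The helper name stunnel_proxy_patch and the sample credentials, address, and port are hypothetical, and the CR name migration-controller is taken from the manifest above.

```shell
# Hypothetical helper: prints a JSON merge patch that sets stunnel_tcp_proxy.
# Arguments: user, password, host, port. Values are inserted as-is, so any
# characters that are special in URLs or JSON must be escaped by the caller.
stunnel_proxy_patch() {
  printf '{"spec":{"stunnel_tcp_proxy":"http://%s:%s@%s:%s"}}\n' "$1" "$2" "$3" "$4"
}

# Usage against a live cluster (not run here):
#   oc patch migrationcontroller migration-controller -n openshift-migration \
#     --type merge -p "$(stunnel_proxy_patch proxyuser secret 192.0.2.10 3128)"
```

Because DVM supports only basic authentication, the user name and password are embedded in the URL. Treat the resulting CR as sensitive.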

6.4.1.2. Why use a TCP proxy instead of an HTTP/HTTPS proxy?

You can enable DVM by running Rsync between the source and the target cluster over an OpenShift route. Traffic is encrypted using Stunnel, a TCP proxy. The Stunnel running on the source cluster initiates a TLS connection with the target Stunnel and transfers data over an encrypted channel.

Cluster-wide HTTP/HTTPS proxies in OpenShift are usually configured in man-in-the-middle mode where they negotiate their own TLS session with the outside servers. However, this does not work with Stunnel. Stunnel requires that its TLS session be untouched by the proxy, essentially making the proxy a transparent tunnel which simply forwards the TCP connection as-is. Therefore, you must use a TCP proxy.

6.4.1.3. Known issue

Migration fails with error Upgrade request required

The Migration Controller uses the SPDY protocol to execute commands within remote pods. If the remote cluster is behind a proxy or a firewall that does not support the SPDY protocol, the Migration Controller fails to execute remote commands. The migration fails with the error message Upgrade request required. Workaround: Use a proxy that supports the SPDY protocol.

In addition to supporting the SPDY protocol, the proxy or firewall also must pass the Upgrade HTTP header to the API server. The client uses this header to open a websocket connection with the API server. If the Upgrade header is blocked by the proxy or firewall, the migration fails with the error message Upgrade request required. Workaround: Ensure that the proxy forwards the Upgrade header.

6.4.2. Tuning network policies for migrations

OpenShift supports restricting traffic to or from pods using NetworkPolicy or EgressFirewalls based on the network plugin used by the cluster. If any of the source namespaces involved in a migration use such mechanisms to restrict network traffic to pods, the restrictions might inadvertently stop traffic to Rsync pods during migration.

Rsync pods running on both the source and the target clusters must connect to each other over an OpenShift Route. Existing NetworkPolicy or EgressNetworkPolicy objects can be configured to automatically exempt Rsync pods from these traffic restrictions.

6.4.2.1. NetworkPolicy configuration

6.4.2.1.1. Egress traffic from Rsync pods

You can use the unique labels of Rsync pods to allow egress traffic to pass from them if the NetworkPolicy configuration in the source or destination namespaces blocks this type of traffic. The following policy allows all egress traffic from Rsync pods in the namespace:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: allow-all-egress-from-rsync-pods
    spec:
      podSelector:
        matchLabels:
          owner: directvolumemigration
          app: directvolumemigration-rsync-transfer
      egress:
      - {}
      policyTypes:
      - Egress

6.4.2.1.2. Ingress traffic to Rsync pods

The following policy allows all ingress traffic to Rsync pods in the namespace:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: allow-all-ingress-to-rsync-pods
    spec:
      podSelector:
        matchLabels:
          owner: directvolumemigration
          app: directvolumemigration-rsync-transfer
      ingress:
      - {}
      policyTypes:
      - Ingress

6.4.2.2. EgressNetworkPolicy configuration

The EgressNetworkPolicy object or Egress Firewalls are OpenShift constructs designed to block egress traffic leaving the cluster.

Unlike the NetworkPolicy object, the Egress Firewall works at a project level because it applies to all pods in the namespace. Therefore, the unique labels of Rsync pods cannot be used to exempt only Rsync pods from the restrictions. However, you can add the CIDR ranges of the source or target cluster to the Allow rule of the policy so that a direct connection can be set up between the two clusters.
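To illustrate how an Allow rule for the other cluster sits alongside a general Deny rule, the following sketch prints a complete EgressNetworkPolicy manifest. The helper name egress_policy_for_peer, the policy name allow-peer-cluster, and the catch-all 0.0.0.0/0 Deny rule are assumptions made for illustration. Egress firewall rules are evaluated in order, so the Allow rule is listed first.

```shell
# Hypothetical helper: prints an EgressNetworkPolicy that allows one CIDR and
# denies all other egress. Arguments: namespace, CIDR of the other cluster.
egress_policy_for_peer() {
  cat <<EOF
apiVersion: network.openshift.io/v1
kind: EgressNetworkPolicy
metadata:
  name: allow-peer-cluster
  namespace: $1
spec:
  egress:
  - type: Allow
    to:
      cidrSelector: $2
  - type: Deny
    to:
      cidrSelector: 0.0.0.0/0
EOF
}

# Usage (not run here): review the manifest before applying it.
#   egress_policy_for_peer my-namespace 203.0.113.0/24 > egress-policy.yaml
```

A project can have only one EgressNetworkPolicy object, so in a namespace that already has one you would add the Allow rule to the existing object rather than create a new one.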

Based on which cluster the Egress Firewall is present in, you can add the CIDR range of the other cluster to allow egress traffic between the two:

    apiVersion: network.openshift.io/v1
    kind: EgressNetworkPolicy
    metadata:
      name: test-egress-policy
      namespace: <namespace>
    spec:
      egress:
      - to:
          cidrSelector: <cidr_of_source_or_target_cluster>
        type: Allow

6.4.2.3. Choosing alternate endpoints for data transfer

By default, DVM uses an OpenShift Container Platform route as an endpoint to transfer PV data to destination clusters. You can choose another type of supported endpoint, if cluster topologies allow.

For each cluster, you can configure an endpoint by setting the rsync_endpoint_type variable on the appropriate destination cluster in your MigrationController CR:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigrationController
    metadata:
      name: migration-controller
      namespace: openshift-migration
    spec:
      [...]
      rsync_endpoint_type: [NodePort|ClusterIP|Route]

6.4.2.4. Configuring supplemental groups for Rsync pods

When your PVCs use shared storage, you can configure access to that storage by adding supplemental groups to the Rsync pod definitions so that the pods can access it:

| Variable | Type | Default | Description |
|---|---|---|---|
| src_supplemental_groups | string | Not set | Comma-separated list of supplemental groups for source Rsync pods |
| target_supplemental_groups | string | Not set | Comma-separated list of supplemental groups for target Rsync pods |

Example usage

The MigrationController CR can be updated to set values for these supplemental groups:

    spec:
      src_supplemental_groups: "1000,2000"
      target_supplemental_groups: "2000,3000"

6.4.3. Configuring proxies

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

Procedure

- Get the MigrationController CR manifest:

      $ oc get migrationcontroller <migration_controller> -n openshift-migration

- Update the proxy parameters:

      apiVersion: migration.openshift.io/v1alpha1
      kind: MigrationController
      metadata:
        name: <migration_controller>
        namespace: openshift-migration
      ...
      spec:
        stunnel_tcp_proxy: http://<username>:<password>@<ip>:<port>
        noProxy: example.com

  In the noProxy value, preface a domain with . to match subdomains only. For example, .y.com matches x.y.com, but not y.com. Use * to bypass proxy for all destinations. If you scale up workers that are not included in the network defined by the networking.machineNetwork[].cidr field from the installation configuration, you must add them to this list to prevent connection issues.

  This field is ignored if neither the httpProxy nor the httpsProxy field is set.

- Save the manifest as migration-controller.yaml.

- Apply the updated manifest:

      $ oc replace -f migration-controller.yaml -n openshift-migration

For more information, see Configuring the cluster-wide proxy.
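The noProxy matching rules described in this procedure can be illustrated with a small shell function. This is a sketch of the stated rules only, not the proxy implementation: the name no_proxy_matches is hypothetical, IP address and CIDR entries are not handled, and the behavior for an entry without a leading dot (matching the domain and its subdomains) is an assumption inferred from the phrase "subdomains only".

```shell
# Hypothetical helper: returns 0 if host $1 is covered by noProxy entry $2.
no_proxy_matches() {
  case $2 in
    '*')
      return 0 ;;                 # "*" bypasses the proxy for all destinations
    .*)
      case $1 in
        *"$2") return 0 ;;        # leading dot: matches subdomains only
        *) return 1 ;;
      esac ;;
    *)
      case $1 in
        "$2"|*".$2") return 0 ;;  # assumption: the domain and its subdomains
        *) return 1 ;;
      esac ;;
  esac
}

# Usage:
#   no_proxy_matches x.y.com .y.com && echo "bypasses the proxy"
#   no_proxy_matches y.com .y.com   || echo "goes through the proxy"
```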

6.5. Configuring a replication repository

You must configure an object storage to use as a replication repository. The Migration Toolkit for Containers (MTC) copies data from the source cluster to the replication repository, and then from the replication repository to the target cluster.

MTC supports the file system and snapshot data copy methods for migrating data from the source cluster to the target cluster. You can select a method that is suited for your environment and is supported by your storage provider.

The following storage providers are supported:

- Multicloud Object Gateway

- Amazon Web Services S3

- Google Cloud

- Microsoft Azure Blob

- Generic S3 object storage, for example, Minio or Ceph S3

6.5.1. Prerequisites

- All clusters must have uninterrupted network access to the replication repository.

- If you use a proxy server with an internally hosted replication repository, you must ensure that the proxy allows access to the replication repository.

6.5.2. Retrieving Multicloud Object Gateway credentials

Retrieve the Multicloud Object Gateway (MCG) credentials and S3 endpoint. You need them to create a Secret custom resource (CR) and to configure MCG as a replication repository for the Migration Toolkit for Containers (MTC).

Although the MCG Operator is deprecated, the MCG plugin is still available for OpenShift Data Foundation. To download the plugin, browse to Download Red Hat OpenShift Data Foundation and download the appropriate MCG plugin for your operating system.

Prerequisites

- You must deploy OpenShift Data Foundation by using the appropriate Red Hat OpenShift Data Foundation deployment guide.

Procedure

- Obtain the S3 endpoint, AWS_ACCESS_KEY_ID, and the AWS_SECRET_ACCESS_KEY value by running the oc describe command for the NooBaa CR.

  You use these credentials to add MCG as a replication repository.

6.5.3. Configuring Amazon Web Services

Configure Amazon Web Services (AWS) S3 storage and Identity and Access Management (IAM) credentials for backup storage with Migration Toolkit for Containers (MTC). This provides the necessary permissions and storage infrastructure for data protection operations.

Prerequisites

- You must have the AWS CLI installed.

- The AWS S3 storage bucket must be accessible to the source and target clusters.

If you are using the snapshot copy method:

- You must have access to EC2 Elastic Block Storage (EBS).

- The source and target clusters must be in the same region.

- The source and target clusters must have the same storage class.

- The storage class must be compatible with snapshots.

Procedure

- Set the BUCKET variable:

      $ BUCKET=<your_bucket>

- Set the REGION variable:

      $ REGION=<your_region>

- Create an AWS S3 bucket:

      $ aws s3api create-bucket \
          --bucket $BUCKET \
          --region $REGION \
          --create-bucket-configuration LocationConstraint=$REGION

  where:

  LocationConstraint: Specifies the bucket configuration location constraint. us-east-1 does not support LocationConstraint. If your region is us-east-1, omit --create-bucket-configuration LocationConstraint=$REGION.

- Create an IAM user:

      $ aws iam create-user --user-name velero

  where:

  velero: Specifies the user name. If you want to use Velero to back up multiple clusters with multiple S3 buckets, create a unique user name for each cluster.

- Create a velero-policy.json file:

      $ cat > velero-policy.json <<EOF
      {
          "Version": "2012-10-17",
          "Statement": [
              {
                  "Effect": "Allow",
                  "Action": [
                      "ec2:DescribeVolumes",
                      "ec2:DescribeSnapshots",
                      "ec2:CreateTags",
                      "ec2:CreateVolume",
                      "ec2:CreateSnapshot",
                      "ec2:DeleteSnapshot"
                  ],
                  "Resource": "*"
              },
              {
                  "Effect": "Allow",
                  "Action": [
                      "s3:GetObject",
                      "s3:DeleteObject",
                      "s3:PutObject",
                      "s3:AbortMultipartUpload",
                      "s3:ListMultipartUploadParts"
                  ],
                  "Resource": [
                      "arn:aws:s3:::${BUCKET}/*"
                  ]
              },
              {
                  "Effect": "Allow",
                  "Action": [
                      "s3:ListBucket",
                      "s3:GetBucketLocation",
                      "s3:ListBucketMultipartUploads"
                  ],
                  "Resource": [
                      "arn:aws:s3:::${BUCKET}"
                  ]
              }
          ]
      }
      EOF

- Attach the policies to give the velero user the minimum necessary permissions:

      $ aws iam put-user-policy \
          --user-name velero \
          --policy-name velero \
          --policy-document file://velero-policy.json

- Create an access key for the velero user:

      $ aws iam create-access-key --user-name velero

  Example output

      {
        "AccessKey": {
              "UserName": "velero",
              "Status": "Active",
              "CreateDate": "2017-07-31T22:24:41.576Z",
              "SecretAccessKey": <AWS_SECRET_ACCESS_KEY>,
              "AccessKeyId": <AWS_ACCESS_KEY_ID>
        }
      }

- Record the AWS_SECRET_ACCESS_KEY and the AWS_ACCESS_KEY_ID. You use the credentials to add AWS as a replication repository.
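The us-east-1 exception in the bucket creation step is easy to miss in scripts. The following sketch shows one way to handle it. The helper name bucket_config_args is hypothetical. It prints the extra create-bucket arguments for a region, and prints nothing for us-east-1.

```shell
# Hypothetical helper: prints the extra create-bucket arguments for a region.
# us-east-1 gets none; every other region gets a LocationConstraint.
bucket_config_args() {
  if [ "$1" != "us-east-1" ]; then
    printf '%s%s\n' '--create-bucket-configuration LocationConstraint=' "$1"
  fi
}

# Usage (not run here). The command substitution is left unquoted on purpose
# so that the two words are passed as separate arguments:
#   aws s3api create-bucket --bucket "$BUCKET" --region "$REGION" \
#     $(bucket_config_args "$REGION")
```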

6.5.4. Configuring Google Cloud

You configure a Google Cloud storage bucket as a replication repository for the Migration Toolkit for Containers (MTC).

Prerequisites

- You must have the gcloud and gsutil CLI tools installed. See the Google Cloud documentation for details.

- The Google Cloud storage bucket must be accessible to the source and target clusters.

If you are using the snapshot copy method:

- The source and target clusters must be in the same region.

- The source and target clusters must have the same storage class.

- The storage class must be compatible with snapshots.

Procedure

- Log in to Google Cloud:

      $ gcloud auth login

- Set the BUCKET variable:

      $ BUCKET=<bucket>

  where:

  bucket: Specifies the bucket name.

- Create the storage bucket:

      $ gsutil mb gs://$BUCKET/

- Set the PROJECT_ID variable to your active project:

      $ PROJECT_ID=$(gcloud config get-value project)

- Create a service account:

      $ gcloud iam service-accounts create velero \
          --display-name "Velero service account"

- List your service accounts:

      $ gcloud iam service-accounts list

- Set the SERVICE_ACCOUNT_EMAIL variable to match its email value:

      $ SERVICE_ACCOUNT_EMAIL=$(gcloud iam service-accounts list \
          --filter="displayName:Velero service account" \
          --format 'value(email)')

- Define the permissions that give the velero service account the minimum necessary access:

      $ ROLE_PERMISSIONS=(
          compute.disks.get
          compute.disks.create
          compute.disks.createSnapshot
          compute.snapshots.get
          compute.snapshots.create
          compute.snapshots.useReadOnly
          compute.snapshots.delete
          compute.zones.get
          storage.objects.create
          storage.objects.delete
          storage.objects.get
          storage.objects.list
          iam.serviceAccounts.signBlob
      )

- Create the velero.server custom role:

      $ gcloud iam roles create velero.server \
          --project $PROJECT_ID \
          --title "Velero Server" \
          --permissions "$(IFS=","; echo "${ROLE_PERMISSIONS[*]}")"

- Add IAM policy binding to the project:

      $ gcloud projects add-iam-policy-binding $PROJECT_ID \
          --member serviceAccount:$SERVICE_ACCOUNT_EMAIL \
          --role projects/$PROJECT_ID/roles/velero.server

- Update the IAM service account:

      $ gsutil iam ch serviceAccount:$SERVICE_ACCOUNT_EMAIL:objectAdmin gs://${BUCKET}

- Save the IAM service account keys to the credentials-velero file in the current directory:

      $ gcloud iam service-accounts keys create credentials-velero \
          --iam-account $SERVICE_ACCOUNT_EMAIL

  You use the credentials-velero file to add Google Cloud as a replication repository.
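The --permissions argument in the custom role step relies on a shell idiom: with IFS set to a comma, the "[*]" array expansion joins the elements with commas. The following sketch shows the same join with positional parameters, where "$*" behaves the same way, so that it also runs in shells without arrays. The helper name join_by_comma is hypothetical.

```shell
# Hypothetical helper: joins its arguments with commas. The function body is
# a subshell, so the IFS change does not leak into the calling shell.
join_by_comma() (
  IFS=,
  printf '%s\n' "$*"
)

# Usage:
#   join_by_comma storage.objects.get storage.objects.list
# In bash, the array form from the procedure is equivalent:
#   join_by_comma "${ROLE_PERMISSIONS[@]}"
```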

6.5.5. Configuring Microsoft Azure

Configure Microsoft Azure storage and service principal credentials for backup storage with Migration Toolkit for Containers (MTC). This provides the necessary authentication and storage infrastructure for data protection operations.

Prerequisites

- You must have the Azure CLI installed.

- The Azure Blob storage container must be accessible to the source and target clusters.

If you are using the snapshot copy method:

- The source and target clusters must be in the same region.

- The source and target clusters must have the same storage class.

- The storage class must be compatible with snapshots.

6.6. Uninstalling MTC and deleting resources

You can uninstall the Migration Toolkit for Containers (MTC) and delete its resources to clean up the cluster.

Deleting the velero custom resource definitions (CRDs) removes Velero from the cluster.

Prerequisites

- You have logged in to the OpenShift Container Platform cluster as a cluster administrator.

Procedure

- Delete the MigrationController custom resource (CR) on all clusters:

      $ oc delete migrationcontroller <migration_controller>

- Uninstall the Migration Toolkit for Containers Operator on OpenShift Container Platform 4 by using the Operator Lifecycle Manager (OLM).

- Delete cluster-scoped resources on all clusters by running the following commands:

  - migration custom resource definitions (CRDs):

        $ oc delete $(oc get crds -o name | grep 'migration.openshift.io')

  - velero CRDs:

        $ oc delete $(oc get crds -o name | grep 'velero')

  - migration cluster roles:

        $ oc delete $(oc get clusterroles -o name | grep 'migration.openshift.io')

  - migration-operator cluster role:

        $ oc delete clusterrole migration-operator

  - velero cluster roles:

        $ oc delete $(oc get clusterroles -o name | grep 'velero')

  - migration cluster role bindings:

        $ oc delete $(oc get clusterrolebindings -o name | grep 'migration.openshift.io')

  - migration-operator cluster role bindings:

        $ oc delete clusterrolebindings migration-operator

  - velero cluster role bindings:

        $ oc delete $(oc get clusterrolebindings -o name | grep 'velero')
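Before deleting cluster-scoped resources, it can help to preview what the grep patterns select. The following sketch is one way to do that. The helper name select_mtc_resources is hypothetical, and it combines the two patterns from the commands above into a single filter with the dots escaped.

```shell
# Hypothetical helper: reads resource names on stdin (as printed by
# `oc get <type> -o name`) and prints those that belong to MTC or Velero.
# `|| true` keeps the exit status at 0 when nothing matches.
select_mtc_resources() {
  grep -E 'migration\.openshift\.io|velero' || true
}

# Usage against a live cluster (not run here):
#   oc get crds -o name | select_mtc_resources            # preview only
#   oc get clusterroles -o name | select_mtc_resources    # preview only
```

If the preview prints nothing, skip the corresponding delete command, because oc delete with an empty command substitution has no resources to act on and reports an error.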

Chapter 7. Installing the Migration Toolkit for Containers in a restricted network environment

You can install the Migration Toolkit for Containers (MTC) on OpenShift Container Platform 3 and 4 in a restricted network environment by performing the following procedures:

- Create a mirrored Operator catalog.

  This process creates a mapping.txt file, which contains the mapping between the registry.redhat.io image and your mirror registry image. The mapping.txt file is required for installing the Operator on the source cluster.

- Install the Migration Toolkit for Containers Operator on the OpenShift Container Platform 4.16 target cluster by using Operator Lifecycle Manager.

  By default, the MTC web console and the Migration Controller pod run on the target cluster. You can configure the Migration Controller custom resource manifest to run the MTC web console and the Migration Controller pod on a source cluster or on a remote cluster.

- Install the legacy Migration Toolkit for Containers Operator on the OpenShift Container Platform 3 source cluster from the command-line interface.

- Configure object storage to use as a replication repository.

To uninstall MTC, see Uninstalling MTC and deleting resources.

7.1. Compatibility guidelines

You must install the Migration Toolkit for Containers (MTC) Operator that is compatible with your OpenShift Container Platform version.

Definitions

- control cluster: The cluster that runs the MTC controller and GUI.

- remote cluster: A source or destination cluster for a migration that runs Velero. The control cluster communicates with remote clusters by using the Velero API to drive migrations.

You must use the compatible MTC version for migrating your OpenShift Container Platform clusters. For the migration to succeed, both your source cluster and the destination cluster must use the same version of MTC.

MTC 1.7 supports migrations from OpenShift Container Platform 3.11 to 4.16.

MTC 1.8 only supports migrations from OpenShift Container Platform 4.14 and later.

| Details | OpenShift Container Platform 3.11 | OpenShift Container Platform 4.14 or later |
|---|---|---|
| Stable MTC version | MTC v.1.7.z | MTC v.1.8.z |
| Installation | As described in this guide | Install with OLM, release channel |

Edge cases exist where network restrictions prevent OpenShift Container Platform 4 clusters from connecting to other clusters involved in the migration. For example, when migrating from an OpenShift Container Platform 3.11 cluster on premises to an OpenShift Container Platform 4 cluster in the cloud, the OpenShift Container Platform 4 cluster might have trouble connecting to the OpenShift Container Platform 3.11 cluster. In this case, you can designate the OpenShift Container Platform 3.11 cluster as the control cluster and push workloads to the remote OpenShift Container Platform 4 cluster.

7.2. Installing the Migration Toolkit for Containers Operator on OpenShift Container Platform 4.16

You install the Migration Toolkit for Containers Operator on OpenShift Container Platform 4.16 by using the Operator Lifecycle Manager.

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

- You must create an Operator catalog from a mirror image in a local registry.

Procedure

- In the OpenShift Container Platform web console, click Operators → OperatorHub.

- Use the Filter by keyword field to find the Migration Toolkit for Containers Operator.

- Select the Migration Toolkit for Containers Operator and click Install.

Click Install.

On the Installed Operators page, the Migration Toolkit for Containers Operator appears in the openshift-migration project with the status Succeeded.

- Click Migration Toolkit for Containers Operator.

- Under Provided APIs, locate the Migration Controller tile, and click Create Instance.

- Click Create.

- Click Workloads → Pods to verify that the MTC pods are running.

7.3. Installing the legacy Migration Toolkit for Containers Operator on OpenShift Container Platform 3

You can install the legacy Migration Toolkit for Containers Operator manually on OpenShift Container Platform 3.

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

- You must have access to registry.redhat.io.

- You must have podman installed.

- You must create an image stream secret and copy it to each node in the cluster.

- You must have a Linux workstation with network access in order to download files from registry.redhat.io.

- You must create a mirror image of the Operator catalog.

- You must install the Migration Toolkit for Containers Operator from the mirrored Operator catalog on OpenShift Container Platform 4.16.

Procedure

Log in to

registry.redhat.iowith your Red Hat Customer Portal credentials:$ podman login registry.redhat.ioDownload the

operator.ymlfile by entering the following command:podman cp $(podman create registry.redhat.io/rhmtc/openshift-migration-legacy-rhel8-operator:v1.7):/operator.yml ./Download the

controller.ymlfile by entering the following command:podman cp $(podman create registry.redhat.io/rhmtc/openshift-migration-legacy-rhel8-operator:v1.7):/controller.yml ./Obtain the Operator image mapping by running the following command:

$ grep openshift-migration-legacy-rhel8-operator ./mapping.txt | grep rhmtcThe

mapping.txtfile was created when you mirrored the Operator catalog. The output shows the mapping between theregistry.redhat.ioimage and your mirror registry image.Example output

registry.redhat.io/rhmtc/openshift-migration-legacy-rhel8-operator@sha256:468a6126f73b1ee12085ca53a312d1f96ef5a2ca03442bcb63724af5e2614e8a=<registry.apps.example.com>/rhmtc/openshift-migration-legacy-rhel8-operatorUpdate the

imagevalues for theansibleandoperatorcontainers and theREGISTRYvalue in theoperator.ymlfile:containers: - name: ansible image: <registry.apps.example.com>/rhmtc/openshift-migration-legacy-rhel8-operator@sha256:<468a6126f73b1ee12085ca53a312d1f96ef5a2ca03442bcb63724af5e2614e8a>1 ... - name: operator image: <registry.apps.example.com>/rhmtc/openshift-migration-legacy-rhel8-operator@sha256:<468a6126f73b1ee12085ca53a312d1f96ef5a2ca03442bcb63724af5e2614e8a>2 ... env: - name: REGISTRY value: <registry.apps.example.com>3 - Log in to your OpenShift Container Platform source cluster.

Create the Migration Toolkit for Containers Operator object:

$ oc create -f operator.yml

Example output

    namespace/openshift-migration created
    rolebinding.rbac.authorization.k8s.io/system:deployers created
    serviceaccount/migration-operator created
    customresourcedefinition.apiextensions.k8s.io/migrationcontrollers.migration.openshift.io created
    role.rbac.authorization.k8s.io/migration-operator created
    rolebinding.rbac.authorization.k8s.io/migration-operator created
    clusterrolebinding.rbac.authorization.k8s.io/migration-operator created
    deployment.apps/migration-operator created
    Error from server (AlreadyExists): error when creating "./operator.yml": rolebindings.rbac.authorization.k8s.io "system:image-builders" already exists
    Error from server (AlreadyExists): error when creating "./operator.yml": rolebindings.rbac.authorization.k8s.io "system:image-pullers" already exists

You can ignore Error from server (AlreadyExists) messages. They are caused by the Migration Toolkit for Containers Operator creating resources for earlier versions of OpenShift Container Platform 4 that are provided in later releases.

Create the MigrationController object:

$ oc create -f controller.yml

Verify that the MTC pods are running:

$ oc get pods -n openshift-migration
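The image update step in the procedure above lends itself to scripting. The following is a minimal sketch, not taken from the MTC documentation, that splits one mapping.txt line into the mirror image reference and the registry host by using POSIX shell parameter expansion. The sample line reuses the example output shown earlier with the placeholder brackets removed; the variable names are illustrative.

```shell
#!/bin/sh
# Sample mapping line in the "<source image>=<mirror repository>" form.
# In practice, read it from mapping.txt with the grep command from the procedure.
mapping_line='registry.redhat.io/rhmtc/openshift-migration-legacy-rhel8-operator@sha256:468a6126f73b1ee12085ca53a312d1f96ef5a2ca03442bcb63724af5e2614e8a=registry.apps.example.com/rhmtc/openshift-migration-legacy-rhel8-operator'

source_ref=${mapping_line%%=*}      # text before the first "="
mirror_repo=${mapping_line#*=}      # text after the first "="
digest=${source_ref##*@}            # "sha256:<hex>" part of the source reference
mirror_registry=${mirror_repo%%/*}  # registry host, used for the REGISTRY value
mirror_image="${mirror_repo}@${digest}"

echo "image:    ${mirror_image}"
echo "REGISTRY: ${mirror_registry}"
```

The two printed values correspond to the image and REGISTRY entries that you edit in operator.yml. The sketch assumes that the mapping line contains exactly one = character.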

7.4. Proxy configuration

For OpenShift Container Platform 4.1 and earlier versions, you must configure proxies in the MigrationController custom resource (CR) manifest after you install the Migration Toolkit for Containers Operator because these versions do not support a cluster-wide proxy object.

For OpenShift Container Platform 4.2 to 4.16, the MTC inherits the cluster-wide proxy settings. You can change the proxy parameters if you want to override the cluster-wide proxy settings.
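The version rule above can be expressed as a small check. This is only a sketch: the function name proxy_mode and its output strings are illustrative, and versions later than 4.16 are outside what this section describes.

```shell
#!/bin/sh
# Print "manual" when proxies must be set in the MigrationController CR
# (OpenShift Container Platform 4.1 and earlier) and "inherited" otherwise.
# Expects a version in <major>.<minor> form, for example 4.16.
proxy_mode() {
    major=${1%%.*}
    rest=${1#*.}
    minor=${rest%%.*}
    if [ "$major" -lt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -lt 2 ]; }; then
        echo manual
    else
        echo inherited
    fi
}

proxy_mode 3.11
proxy_mode 4.1
proxy_mode 4.16
```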

7.4.1. Direct volume migration

Direct Volume Migration (DVM) was introduced in MTC 1.4.2. DVM supports only one proxy. The source cluster cannot access the route of the target cluster if the target cluster is also behind a proxy.

If you want to perform a DVM from a source cluster behind a proxy, you must configure a TCP proxy that works at the transport layer and forwards the SSL connections transparently without decrypting and re-encrypting them with their own SSL certificates. A Stunnel proxy is an example of such a proxy.

7.4.1.1. TCP proxy setup for DVM

You can set up a direct connection between the source and the target cluster through a TCP proxy and configure the stunnel_tcp_proxy variable in the MigrationController CR to use the proxy:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigrationController
    metadata:
      name: migration-controller
      namespace: openshift-migration
    spec:
      [...]
      stunnel_tcp_proxy: http://username:password@ip:port

Direct volume migration (DVM) supports only basic authentication for the proxy. Moreover, DVM works only from behind proxies that can tunnel a TCP connection transparently. HTTP/HTTPS proxies in man-in-the-middle mode do not work. The existing cluster-wide proxies might not support this behavior. As a result, the proxy settings for DVM are intentionally kept different from the usual proxy configuration in MTC.
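As an alternative to editing the manifest by hand, the stunnel_tcp_proxy value can be set with a merge patch. The following sketch assembles the URL in the http://username:password@ip:port form and prints an oc patch command for review without running it. The helper names, credentials, and address are placeholders, and the CR name migration-controller is taken from the example above.

```shell
#!/bin/sh
# Build the stunnel_tcp_proxy value from user, password, IP address, and port.
stunnel_proxy_url() {
    printf 'http://%s:%s@%s:%s\n' "$1" "$2" "$3" "$4"
}

# Wrap the URL in a JSON merge patch for the MigrationController spec.
stunnel_proxy_patch() {
    printf '{"spec":{"stunnel_tcp_proxy":"%s"}}\n' "$1"
}

# Placeholder credentials and a documentation-range IP address.
url=$(stunnel_proxy_url proxyuser s3cret 192.0.2.10 3128)
patch=$(stunnel_proxy_patch "$url")

# Print the command for review instead of running it.
echo "oc patch migrationcontroller migration-controller -n openshift-migration --type merge -p '${patch}'"
```

The sketch does no escaping, so a user name or password that contains characters such as @, :, or a double quotation mark would need URL encoding and JSON escaping first.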

7.4.1.2. Why use a TCP proxy instead of an HTTP/HTTPS proxy?

You can enable DVM by running Rsync between the source and the target cluster over an OpenShift route. Traffic is encrypted using Stunnel, a TCP proxy. The Stunnel running on the source cluster initiates a TLS connection with the target Stunnel and transfers data over an encrypted channel.

Cluster-wide HTTP/HTTPS proxies in OpenShift are usually configured in man-in-the-middle mode where they negotiate their own TLS session with the outside servers. However, this does not work with Stunnel. Stunnel requires that its TLS session be untouched by the proxy, essentially making the proxy a transparent tunnel which simply forwards the TCP connection as-is. Therefore, you must use a TCP proxy.

7.4.1.3. Known issue

Migration fails with error Upgrade request required

The migration controller uses the SPDY protocol to execute commands within remote pods. If the remote cluster is behind a proxy or a firewall that does not support the SPDY protocol, the migration controller fails to execute remote commands. The migration fails with the error message Upgrade request required. Workaround: Use a proxy that supports the SPDY protocol.

In addition to supporting the SPDY protocol, the proxy or firewall also must pass the Upgrade HTTP header to the API server. The client uses this header to open a websocket connection with the API server. If the Upgrade header is blocked by the proxy or firewall, the migration fails with the error message Upgrade request required. Workaround: Ensure that the proxy forwards the Upgrade header.

7.4.2. Tuning network policies for migrations

OpenShift supports restricting traffic to or from pods using NetworkPolicy or EgressFirewalls based on the network plugin used by the cluster. If any of the source namespaces involved in a migration use such mechanisms to restrict network traffic to pods, the restrictions might inadvertently stop traffic to Rsync pods during migration.

Rsync pods running on both the source and the target clusters must connect to each other over an OpenShift Route. Existing NetworkPolicy or EgressNetworkPolicy objects can be configured to automatically exempt Rsync pods from these traffic restrictions.

7.4.2.1. NetworkPolicy configuration

7.4.2.1.1. Egress traffic from Rsync pods

You can use the unique labels of Rsync pods to allow egress traffic to pass from them if the NetworkPolicy configuration in the source or destination namespaces blocks this type of traffic. The following policy allows all egress traffic from Rsync pods in the namespace:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: allow-all-egress-from-rsync-pods
    spec:
      podSelector:
        matchLabels:
          owner: directvolumemigration
          app: directvolumemigration-rsync-transfer
      egress:
      - {}
      policyTypes:
      - Egress

7.4.2.1.2. Ingress traffic to Rsync pods

The following policy allows all ingress traffic to Rsync pods in the namespace:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: allow-all-ingress-to-rsync-pods
    spec:
      podSelector:
        matchLabels:
          owner: directvolumemigration
          app: directvolumemigration-rsync-transfer
      ingress:
      - {}
      policyTypes:
      - Ingress

7.4.2.2. EgressNetworkPolicy configuration

EgressNetworkPolicy objects, also called Egress Firewalls, are OpenShift constructs designed to block egress traffic leaving the cluster.

Unlike the NetworkPolicy object, the Egress Firewall works at a project level because it applies to all pods in the namespace. Therefore, you cannot use the unique labels of Rsync pods to exempt only those pods from the restrictions. However, you can add the CIDR ranges of the source or target cluster to the Allow rule of the policy so that a direct connection can be set up between the two clusters.

Based on which cluster the Egress Firewall is present in, you can add the CIDR range of the other cluster to allow egress traffic between the two:

    apiVersion: network.openshift.io/v1
    kind: EgressNetworkPolicy
    metadata:
      name: test-egress-policy
      namespace: <namespace>
    spec:
      egress:
      - to:
          cidrSelector: <cidr_of_source_or_target_cluster>
        type: Allow

7.4.2.3. Choosing alternate endpoints for data transfer

By default, DVM uses an OpenShift Container Platform route as an endpoint to transfer PV data to destination clusters. You can choose another type of supported endpoint, if cluster topologies allow.

For each cluster, you can configure an endpoint by setting the rsync_endpoint_type variable on the appropriate destination cluster in your MigrationController CR:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigrationController
    metadata:
      name: migration-controller
      namespace: openshift-migration
    spec:
      [...]
      rsync_endpoint_type: [NodePort|ClusterIP|Route]

7.4.2.4. Configuring supplemental groups for Rsync pods

When your PVCs use shared storage, you can configure access to that storage by adding supplemental groups to the Rsync pod definitions so that the pods can access it:

| Variable | Type | Default | Description |
|---|---|---|---|
| src_supplemental_groups | string | Not set | Comma-separated list of supplemental groups for source Rsync pods |
| target_supplemental_groups | string | Not set | Comma-separated list of supplemental groups for target Rsync pods |

Example usage

The MigrationController CR can be updated to set values for these supplemental groups:

    spec:
      src_supplemental_groups: "1000,2000"
      target_supplemental_groups: "2000,3000"

7.4.3. Configuring proxies

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

Procedure

Get the MigrationController CR manifest:

$ oc get migrationcontroller <migration_controller> -n openshift-migration

Update the proxy parameters:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigrationController
    metadata:
      name: <migration_controller>
      namespace: openshift-migration
    ...
    spec:
      stunnel_tcp_proxy: http://<username>:<password>@<ip>:<port>
      noProxy: example.com

In the noProxy value, preface a domain with . to match subdomains only. For example, .y.com matches x.y.com, but not y.com. Use * to bypass the proxy for all destinations. If you scale up workers that are not included in the network defined by the networking.machineNetwork[].cidr field from the installation configuration, you must add them to this list to prevent connection issues.

This field is ignored if neither the httpProxy nor the httpsProxy field is set.

Save the manifest as migration-controller.yaml.

Apply the updated manifest:

$ oc replace -f migration-controller.yaml -n openshift-migration

For more information, see Configuring the cluster-wide proxy.
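The domain matching rules for noProxy described in this procedure can be illustrated with a short sketch. The function name no_proxy_entry_matches is hypothetical, and the sketch covers only domain entries and the * wildcard, not IP addresses or CIDR ranges. Treating a bare domain as matching itself and its subdomains is an assumption inferred from the "subdomains only" wording, not something this section states.

```shell
#!/bin/sh
# Return 0 if host is covered by a single noProxy entry, 1 otherwise.
no_proxy_entry_matches() {
    entry=$1
    host=$2
    case "$entry" in
        '*')    # "*" bypasses the proxy for all destinations
            return 0 ;;
        .*)     # leading ".": subdomains only, so .y.com does not match y.com
            case "$host" in
                *"$entry") return 0 ;;
                *) return 1 ;;
            esac ;;
        *)      # assumption: a bare domain matches itself and its subdomains
            case "$host" in
                "$entry"|*."$entry") return 0 ;;
                *) return 1 ;;
            esac ;;
    esac
}

no_proxy_entry_matches .y.com x.y.com && echo "x.y.com bypasses the proxy"
no_proxy_entry_matches .y.com y.com || echo "y.com goes through the proxy"
```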

7.5. Configuring a replication repository

The Multicloud Object Gateway is the only supported option for a restricted network environment.

MTC supports the file system and snapshot data copy methods for migrating data from the source cluster to the target cluster. You can select a method that is suited for your environment and is supported by your storage provider.

7.5.1. Prerequisites

- All clusters must have uninterrupted network access to the replication repository.

- If you use a proxy server with an internally hosted replication repository, you must ensure that the proxy allows access to the replication repository.

7.5.2. Retrieving Multicloud Object Gateway credentials

Although the MCG Operator is deprecated, the MCG plugin is still available for OpenShift Data Foundation. To download the plugin, browse to Download Red Hat OpenShift Data Foundation and download the appropriate MCG plugin for your operating system.

Prerequisites

- You must deploy OpenShift Data Foundation by using the appropriate Red Hat OpenShift Data Foundation deployment guide.

7.6. Uninstalling MTC and deleting resources

You can uninstall the Migration Toolkit for Containers (MTC) and delete its resources to clean up the cluster.

Deleting the velero custom resource definitions (CRDs) removes Velero from the cluster.

Prerequisites

- You have logged in to the OpenShift Container Platform cluster as a cluster administrator.

Procedure

Delete the MigrationController custom resource (CR) on all clusters:

$ oc delete migrationcontroller <migration_controller>

Uninstall the Migration Toolkit for Containers Operator on OpenShift Container Platform 4 by using the Operator Lifecycle Manager (OLM).

Delete cluster-scoped resources on all clusters by running the following commands:

- migration custom resource definitions (CRDs):

$ oc delete $(oc get crds -o name | grep 'migration.openshift.io')

- velero CRDs:

$ oc delete $(oc get crds -o name | grep 'velero')

- migration cluster roles:

$ oc delete $(oc get clusterroles -o name | grep 'migration.openshift.io')

- migration-operator cluster role:

$ oc delete clusterrole migration-operator

- velero cluster roles:

$ oc delete $(oc get clusterroles -o name | grep 'velero')

- migration cluster role bindings:

$ oc delete $(oc get clusterrolebindings -o name | grep 'migration.openshift.io')

- migration-operator cluster role bindings:

$ oc delete clusterrolebindings migration-operator

- velero cluster role bindings:

$ oc delete $(oc get clusterrolebindings -o name | grep 'velero')
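Because each delete command acts on whatever the inner grep returns, it can help to preview the matches first. The following sketch defines a filter, with the illustrative name select_mtc_resources, that keeps only names containing migration.openshift.io or velero, and runs it on sample input. The sample names stand in for oc get crds -o name output; the last one is made up to show a non-match.

```shell
#!/bin/sh
# Keep only resource names that belong to MTC or Velero.
# Reads "oc get <kind> -o name" style output on stdin; -F treats the dots literally.
select_mtc_resources() {
    grep -F -e 'migration.openshift.io' -e 'velero'
}

# Sample input; the widgets.example.com entry is a made-up non-match.
sample_crds='customresourcedefinition.apiextensions.k8s.io/migrationcontrollers.migration.openshift.io
customresourcedefinition.apiextensions.k8s.io/backups.velero.io
customresourcedefinition.apiextensions.k8s.io/widgets.example.com'

printf '%s\n' "$sample_crds" | select_mtc_resources
```

On a cluster, you might pipe oc get crds -o name into the filter and review the list before you run the delete commands. The migration-operator cluster role and cluster role binding are deleted by name in the procedure, so the filter does not cover them.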

Chapter 8. Upgrading the Migration Toolkit for Containers