Network Observability

Configuring and using the Network Observability Operator in OpenShift Container Platform

Abstract

Chapter 1. Network Observability Operator release notes

Review new features, enhancements, fixed issues, and known issues for the Network Observability Operator. These release notes provide information to help you understand changes and security advisories in the latest operator release.

The Network Observability Operator enables administrators to observe and analyze network traffic flows for OpenShift Container Platform clusters.

These release notes track the development of the Network Observability Operator in the OpenShift Container Platform.

1.1. Network Observability Operator 1.11.2 advisory

You can review the advisory for Network Observability Operator 1.11.2 release.

1.2. Network Observability Operator 1.11.1 advisory

You can review the advisory for Network Observability Operator 1.11.1 release.

1.3. Network Observability Operator 1.11.1 fixed issues

The Network Observability Operator 1.11.1 release contains several fixed issues that improve eBPF agent performance and the user experience in the OpenShift Container Platform web console.

- eBPF agent memory usage optimization

Before this update, a regression in the eBPF agent caused kernel memory to be reserved unintentionally for disabled features. This resulted in higher than expected memory usage for the agent.

With this update, the eBPF agent ensures that reserved kernel memory is kept to a minimum when features are disabled. As a result, the agent’s memory footprint is reduced and resource allocation is more efficient.

- Improved DNS graph availability in Prometheus-only mode

Before this update, when Loki was disabled and DNS tracking was enabled, the OpenShift Container Platform console plugin failed to display any DNS graphs. Instead, it displayed an error stating the request could not be performed with Prometheus metrics, even for graphs that had valid Prometheus data.

With this update, the error is only displayed for specific graphs that lack configured metrics. As a result, all valid DNS graphs are now correctly displayed in the web console.

- Improved error handling in Topology view

Before this update, in installations without Loki, certain queries in the Topology view could result in errors due to missing Prometheus metrics. These errors sometimes persisted until you cleared the browser cache.

With this update, the console no longer displays invalid scopes caused by missing metrics. Additionally, error handling in the console has been updated to provide more actionable information, eliminating the need for manual cache clears in these scenarios.

1.4. Network Observability Operator 1.11 advisory

You can review the advisory for Network Observability Operator 1.11 release.

1.5. Network Observability Operator 1.11 new features and enhancements

Learn about the new features and enhancements in the Network Observability Operator 1.11 release, including hierarchical governance with the FlowCollectorSlice resource, a new Service deployment model, and the general availability of health rules.

- Per-tenant hierarchical governance with the FlowCollectorSlice resource

This release introduces the

FlowCollectorSliceAPI to support hierarchical governance, allowing project administrators to independently manage sampling and subnet labeling for their specific namespaces.This feature was implemented to reduce global processing overhead and provide tenant autonomy in large-scale environments where individual teams require self-service visibility without cluster-wide configuration changes. As a result, organizations can selectively collect traffic and delegate data enrichment tasks to the project level while maintaining centralized cluster control.

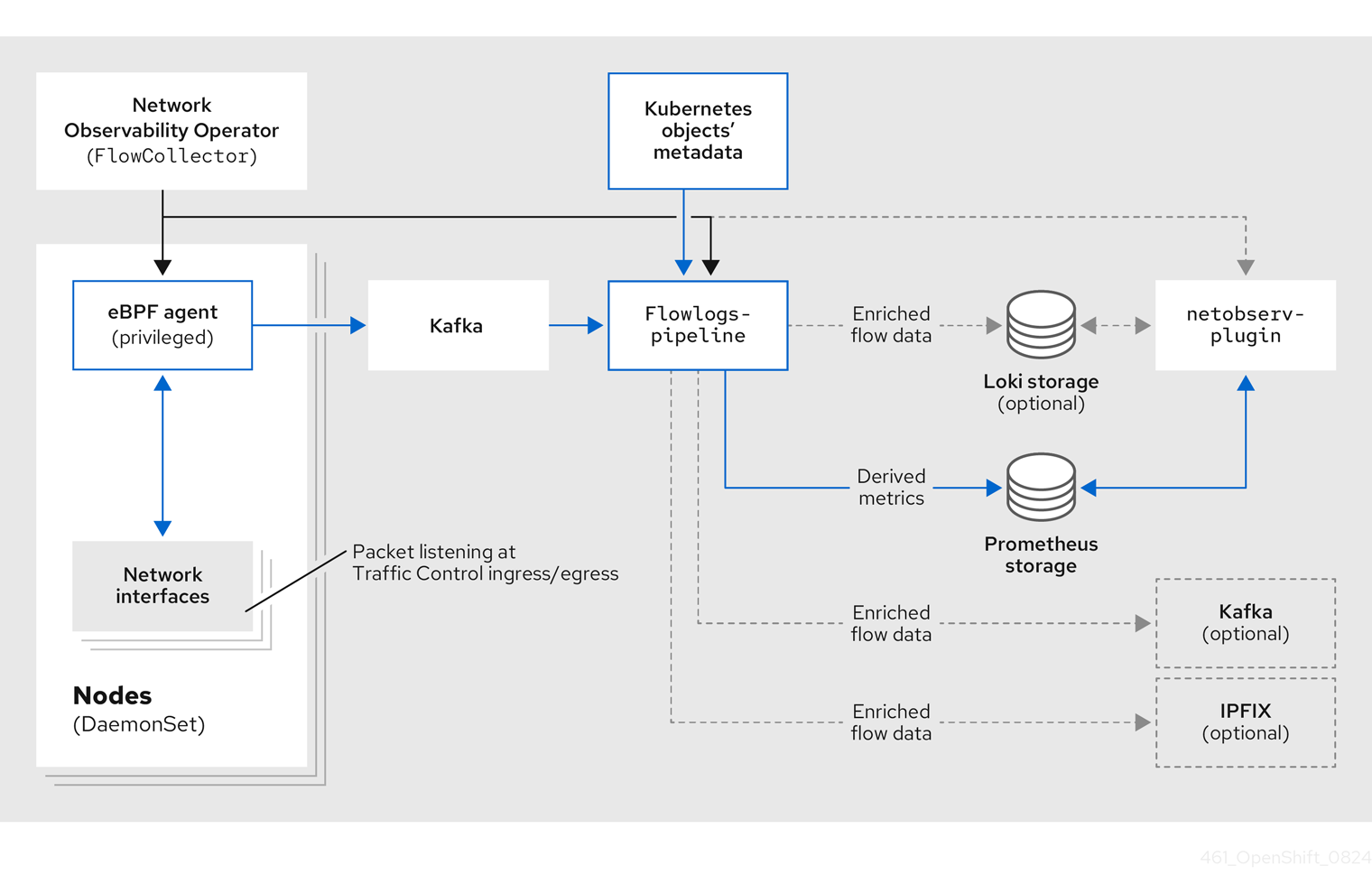

- New Service deployment model for the

FlowCollectorresource This release introduces a new

Servicedeployment model in theFlowCollectorcustom resource. This model provides an intermediate option between theDirectandKafkamodels. In theServicemodel, the eBPF agent is deployed as adaemonset, and theflowlogs-pipelinecomponent is deployed as a scalable service.This model offers improved performance in large clusters by reducing cache duplication across component instances.

- Health rules are generally available

The health alerts feature, introduced in previous versions as a Technology Preview feature, is fully supported as health rules in the Network Observability Operator 1.11 release.

ImportantNetwork Observability health rules are available on OpenShift Container Platform 4.16 and later.

This eBPF-based system correlates network metrics with infrastructure metadata to provide proactive notifications and automated insights into cluster health, such as traffic surges or latency trends. As a result, you can use the Network Health dashboard in the OpenShift Container Platform web console to manage categorized alerts, customize thresholds, and create recording rules for improved visualization performance.

- Enhanced network traffic visualization and filtering

This release introduces enhanced visualization and filtering tools in the OpenShift Container Platform web console.

- Inline filter editing: You can now edit filter chips directly within the filter input field. This enhancement provides a more efficient method for modifying long filter values that were previously truncated, eliminating the need to manually copy and paste values. This update adopts an inline editing convention consistent with the Saved filters feature.

- External traffic quick filters: New quick filters allow you to monitor external ingress and egress traffic actively. This enhancement streamlines network management, enabling you to identify and address issues related to external network communication quickly.

- Intuitive resource iconography: The OpenShift Container Platform console now uses specific icons for Kubernetes kinds, groups, and filters. These icons provide a more intuitive and visually consistent experience, making it easier to navigate the network topology and identify applied filters at a glance.

- DNS resolution analysis

This release includes eBPF-based DNS tracking to enrich network flow records with domain names.

This feature was implemented to reduce the mean time to identify (MTTI) by allowing administrators to immediately distinguish between network routing failures and service discovery issues, such as

NXDOMAINerrors.- Integration with Gateway API

This release introduces automatic integration between the Network Observability Operator and the Gateway API when a

GatewayClassresource is created. This feature provides high-level traffic attribution for cluster ingress and egress traffic without requiring manual configuration of theFlowCollectorresource.ImportantIntegration with Gateway API is available on OpenShift Container Platform 4.19 and later.

You can verify the automated mapping of network flows to Gateway API resources in the Observe → Network Traffic view of the OpenShift Container Platform web console. The Owner column displays the Gateway name, providing a direct link to the associated Gateway resource page.

- Improved data resilience in the Overview and Topology views

With this release, functional data remains visible in the Overview and Topology views even if some background queries fail. This enhancement ensures that the scope and group drop-down menus in the Topology view remain accessible during partial service disruptions.

Additionally, the Overview page now displays active error messages to assist with troubleshooting, providing better visibility into system health without interrupting the monitoring workflow.

- Improved categorization of unknown network flows

With this release, network flows from unknown sources are categorized into four distinct groups: external, unknown service, unknown node, and unknown pod.

This enhancement uses subnet labels to separate unknown IP subnets, providing a clearer network topology. This improved visibility helps to identify potential security threats and allows for a more targeted analysis of unknown elements within the cluster.

- Improved performance for new Network Observability installations

The default performance of the Network Observability Operator is improved for new installations. The default value for

cacheActiveTimeoutis increased from 5 to 15 seconds, and thecacheMaxFlowsvalue is increased from 100,000 to 120,000 to accommodate higher flow volumes.ImportantThese new default values apply only to new installations; existing installations retain their current configurations.

These changes reduce CPU load by up to 40%.

- Improved LokiStack status monitoring and reporting

With this release, the Network Observability Operator monitors the status of the

LokiStackresource and reports errors or configuration issues. The Network Observability Operator verifiesLokiStackconditions, including pending or failed pods and specific warning conditions.This enhancement provides more actionable information in the

FlowCollectorstatus, allowing for more effective troubleshooting of theLokiStackcomponent within network observability.- Visual indicators for Loki indexed fields in the filter menu

With this release, functional data remains visible in the Overview and Topology views even if some background queries fail. This enhancement ensures that the scope and group drop-down menus in the Topology view remain accessible during partial service disruptions.

This enhancement improves query performance by indicating which fields are indexed for faster data retrieval. Using indexed fields when filtering data reduces the time required to browse and analyze network flows within the console.

1.6. Network Observability Operator 1.11 known issues

The following known issues affect the Network Observability Operator 1.11 release.

- Health rules do not trigger when the sampling rate increases because of

lowVolumeThreshold Network observability alerts might not trigger when an elevated sampling rate causes the volume to fall below the

lowVolumeThresholdfilter. This results in fewer alerts being evaluated or displayed.To work around this problem, adjust the

lowVolumeThresholdvalue to align with the sampling rate to ensure consistent alert evaluation.- DNS metrics unavailable when Loki is disabled

When the

DNSTrackingfeature is enabled in a "Loki-less" installation, the required metrics for DNS graphs are unavailable. As a consequence, you cannot view DNS latency and response codes in the dashboard.To work around this problem, you must either disable the

DNSTrackingoption or enable Loki in theFlowCollectorresource by settingspec.loki.enableto true.

1.7. Network Observability Operator 1.11 fixed issues

The Network Observability Operator 1.11 release contains several fixed issues that improve performance and the user experience.

- Missing dates in charts

Before this update, the chart tooltip date was not displayed as intended, due to a breaking change in a dependency. As a consequence, users experienced missing date information in the OpenShift Container Platform web console plugin’s Overview tab chart, affecting data context.

With this release, the chart tooltip date display is restored.

- Warning message for Direct mode not refreshed after upscaling

Before this update, cluster information was not refreshed after scaling, causing a warning message to persist in large clusters, not updating with changes.

With this release, cluster information is now refreshed when it changes, resulting in the warning message for large clusters in

Directmode updating with changes in cluster size, improving user visibility.- Unenriched OVN IPs

Before this update, some IPs declared by OVN-Kubernetes were not enriched, causing unenriched IPs like

100.64.0.xto not appear inMachinesnetwork. As a consequence, IPs not enriched caused the wrong network visibility for users.With this release, missing IPs in OVN-Kubernetes are now enriched. As a result, IPs declared by OVN-Kubernetes are correctly enriched and appear in the

Machinesnetwork improving the visibility of network traffic sources in theMachinesnetwork.- Improved Operator API discovery reliability

Before this update, a race condition during Network Observability Operator startup could cause API discovery to fail silently. As a consequence, the operator could fail to recognize the OpenShift Container Platform cluster, leading to missing mandatory

ClusterRoleBindingresources and preventing components from functioning correctly.With this release, the Network Observability Operator continues to check for API availability over time and reconciliation is blocked if discovery fails. As a result, the operator correctly identifies the environment and ensures all required roles are created.

- Added missing translation fields to IPFIX exports

Before this update, some network flow fields were missing translations during the IPFIX export process. As a result, exported IPFIX data was incomplete or difficult to interpret in external collectors.

With this release, the missing translation fields (xlat) have been added to the

flowlogs-pipelineIPFIX exporter. IPFIX exports now provide a complete set of translated fields for consistent network observability.- Fixed FlowMetric form creation link and defaults

Before this update, the link to create a

FlowMetriccustom resource incorrectly directed users to a YAML editor instead of the intended form view. Additionally, the editor was pre-filled with incorrect default values.With this release, the link correctly leads to the

FlowMetricresource creation form with the expected default settings. As a result, users can now easily createFlowMetricresources through the user interface.- Virtual machine resource type icon in Topology view

Before this update, virtual machine (VM) owner types incorrectly displayed a generic question mark (?) icon in the Topology view.

With this release, the user interface now includes a specific icon for VM resources. As a result, users can more easily identify and distinguish VM traffic within the network topology.

- DNS optimization, update DNS Alerts

Before this update, many DNS "NXDOMAIN" errors were returned due to ambiguous URLs being used in network observability.

With this release, these URLs have been disambiguated, resulting in a more optimal use of DNS.

Chapter 2. Network Observability Operator release notes archive

2.1. Network Observability Operator release notes archive

These release notes track past developments of the Network Observability Operator in the OpenShift Container Platform. They are for reference purposes only.

The Network Observability Operator enables administrators to observe and analyze network traffic flows for OpenShift Container Platform clusters.

2.1.1. Network Observability Operator 1.10.1 advisory

You can review the advisory for Network Observability Operator 1.10.1 release.

2.1.2. Network Observability Operator 1.10.1 CVEs

You can review the CVEs for the Network Observability Operator 1.10.1 release.

2.1.3. Network Observability Operator 1.10.1 fixed issues

The Network Observability Operator 1.10.1 release contains several fixed issues that improve performance and the user experience.

- Warning Generated for Direct Mode on Clusters Over 15 Nodes

Before this update, the recommendation against using the

Directdeployment model on large clusters was only available in the documentation.With this release, the Network Observability Operator now generates a warning when the Direct deployment mode is used on a cluster exceeding 15 nodes.

- Network policy deployment disabled on OpenShiftSDN

Before this update, when OpenShift SDN was the cluster network plugin, enabling the

FlowCollectornetwork policy would break communication between network observability pods. This issue does not occur with OVN-Kubernetes, which is the default supported network plugin.With this release, the Network Observability Operator no longer attempts to deploy the network policy when OpenShift SDN is detected; a warning is displayed instead. Additionally, the default value for enabling the network policy is modified: it is now enabled by default only when OVN-Kubernetes is detected as the cluster network plugin.

- Validation added for subnet label characters

Before this update, there were no restrictions on characters allowed in the subnet labels "name" configuration, meaning users could enter text containing spaces or special characters. This generated errors in the web console plugin when users tried to apply filters, and clicking the filter icon for a subnet label often failed.

With this release, the configured subnet label name is validated immediately when configured in the

FlowCollectorcustom resource. The validation ensures the name contains only alphanumeric characters,:,_, and-. As a result, filtering on subnet labels from the web console plugin now works as expected.- Network Observability CLI uses unique temporary directory per run

Before this update, the Network Observability CLI created or reused a single temporary (

tmp) directory in the current working directory. This could lead to conflicts or data corruption between separate runs.With this release, the Network Observability CLI now creates a unique temporary directory for each run, preventing potential conflicts and improving file management hygiene.

2.1.4. Network Observability Operator 1.10 advisory

You can review the advisory for Network Observability Operator 1.10 release.

2.1.5. Network Observability Operator 1.10 new features and enhancements

The Network Observability Operator 1.10 release enhances security, improves performance, and introduces new CLI UI tools for better network flow management.

2.1.5.1. Network policy updates

The Network Observability Operator now supports configuring both ingress and egress network policies to control pod traffic. This enhancement improves security.

By default, the spec.NetworkPolicy.enable specification is now set to true. This means that if you use Loki or Kafka, it is recommended that you deploy the Loki Operator and Kafka instances into dedicated namespaces. This ensures that the network policies can be configured correctly to allow communication between all components.

2.1.5.2. Network Observability Operator CLI UI updates

This release brings the following new features and updates to the Network Observability Operator CLI (oc netobserv) user interface (UI):

Table view enhancements

- Customizable columns: Click Manage Columns to select which columns to display, and tailor the table to your needs.

- Smart filtering: Live filters now include auto-suggestions, making it easier to select the right keys and values.

-

Packet preview: When capturing packets, click a row to inspect the

pcapcontent directly.

Terminal-based line charts enhancements

- Metrics visualization: Real-time graphs are rendered directly in the CLI.

- Panel selection: Choose from predefined views or customize views by using the Manage Panels pop-up menu to selectively view charts of specific metrics.

2.1.5.3. Network observability console improvements

The network observability console plugin includes a new view to configure the FlowCollector custom resource (CR). From this view, you can complete the following tasks:

-

Configure the

FlowCollectorCR. - Calculate your resource footprint.

- Gain increased of issues such as configuration warnings or high metrics cardinality.

2.1.5.4. Performance improvements

Network Observability Operator 1.10 has improved the performance and memory footprint of the Operator, especially visible on large clusters.

2.1.6. Network Observability Operator 1.10 Technology Preview features

You can review the Technology Preview features for Network Observability Operator 1.10 release.

2.1.6.1. Network Observability Operator custom alerts (Technology Preview)

This release introduces new alert functionality, and custom alert configuration. These capabilities are Technology Preview features, and must be explicitly enabled.

To view the new alerts, in the OpenShift Container Platform web console, click Observe → Alerting → Alerting rules.

2.1.6.2. Network Observability Operator Network Health dashboard (Technology Preview)

When you enable the Technology Preview alerts functionality in the Network Observability Operator, you can view a new Network Health dashboard in the OpenShift Container Platform web console by clicking Observe.

The Network Health dashboard provides a summary of triggered alerts, distinguishing between critical, warning, and minor issues, and also shows pending alerts.

2.1.7. Network Observability Operator 1.10 removed features

Review the removed features that might affect your use of the Network Observability Operator 1.10 release.

2.1.7.1. FlowCollector API version v1beta1 has been removed

The FlowCollector custom resource (CR) API version v1beta1 has been removed and is no longer supported. Use the v1beta2 version.

2.1.8. Network Observability Operator 1.10 known issues

Review the following known issues and their recommended workarounds (where available) that might affect your use of the Network Observability Operator 1.10 release.

2.1.8.1. Upgrading to 1.10 fails on OpenShift Container Platform 4.14 and earlier

Upgrading to the Network Observability Operator 1.10 on OpenShift Container Platform 4.14 and earlier can fail due to a FlowCollector custom resource definition (CRD) validation error in the software catalog.

To workaround this problem, you must:

Uninstall both versions of the Network Observability Operator from the software catalog in the OpenShift Container Platform web console.

-

Keep the

FlowCollectorCRD installed so that it doesn’t cause any disruption in the flow collection process.

-

Keep the

Check the current name of the

FlowCollectorCRD by running the following command:$ oc get crd flowcollectors.flows.netobserv.io -o jsonpath='{.spec.versions[0].name}'Expected output:

v1beta1Check the current serving status of the

FlowCollectorCRD by running the following command:$ oc get crd flowcollectors.flows.netobserv.io -o jsonpath='{.spec.versions[0].served}'Expected output:

trueSet the

servedflag for thev1beta1version tofalseby running the following command:$ oc patch crd flowcollectors.flows.netobserv.io --type='json' -p "[{'op': 'replace', 'path': '/spec/versions/0/served', 'value': false}]"Verify that the

servedflag is set tofalseby running the following command:$ oc get crd flowcollectors.flows.netobserv.io -o jsonpath='{.spec.versions[0].served}'Expected output:

false- Install Network Observability Operator 1.10.

2.1.8.2. eBPF agent compatibility with older OpenShift Container Platform versions

The eBPF agent used in the Network Observability Command Line Interface (CLI) packet capture feature is incompatible with OpenShift Container Platform versions 4.16 and older.

This limitation prevents the eBPF-based Packet Capture Agent (PCA) from functioning correctly on those older clusters.

To work around this problem, you must manually configure PCA to use an older, compatible eBPF agent container image. For more information, see the Red Hat Knowledgebase Solution eBPF agent compatibility with older Openshift versions in Network Observability CLI 1.10+.

2.1.8.3. eBPF Agent fails to send flows with OpenShiftSDN when NetworkPolicy is enabled

When running Network Observability Operator 1.10 on OpenShift Container Platform 4.14 clusters that use the OpenShiftSDN CNI plugin, the eBPF agent is unable to send flow records to the flowlogs-pipeline component. This occurs when the FlowCollector custom resource is created with NetworkPolicy enabled (spec.networkPolicy.enable: true).

As a consequence, flow data is not processed by the flowlogs-pipeline component and does not appear in the Network Traffic dashboard or the configured storage (Loki). The eBPF agent pod logs show i/o timeout errors when attempting to connect to the collector:

time="2025-10-17T13:53:44Z" level=error msg="couldn't send flow records to collector" collector="10.0.68.187:2055" component=exporter/GRPCProto error="rpc error: code = Unavailable desc = connection error: desc = \"transport: Error while dialing: dial tcp 10.0.68.187:2055: i/o timeout\""

To work around this problem, set spec.networkPolicy.enable to false to disable NetworkPolicy in the FlowCollector resource for Network Observability Operator 1.10.

This will allow the eBPF agent to communicate with the flowlogs-pipeline component without interference from the automatically deployed network policy.

2.1.9. Network Observability Operator 1.10 fixed issues

The Network Observability Operator 1.10 release contains several fixed issues that improve performance and the user experience.

2.1.9.1. MetricName and Remap fields are validated

Before this update, users could create a FlowMetric custom resource (CR) with an invalid metric name. Although the FlowMetric CR was successfully created, the underlying metric would fail silently without providing any error feedback to the user.

With this release, the FlowMetric, metricName, and remap fields are now validated before creation, so users are immediately notified if they enter an invalid name.

2.1.9.2. Improved html-to-image export performance

Before this update, performance issues in the underlying library caused the html-to-image export function to take a long time, leading to browser freezing.

With this release, the performance of the html-to-image library has been improved, reducing export wait times and eliminating browser freezing during image generation.

2.1.9.3. Improved warnings for eBPF privileged mode

Before this update, when users selected eBPF features that require privileged mode, the features would often fail without clearly informing the user that privileged mode was missing or needed to be enabled.

With this release, a validation hook immediately warns the user if the configuration is inconsistent. This improves user understanding and prevents misconfiguration.

2.1.9.4. Subnet labels added to OpenTelemetry exporter

Before this update, the OpenTelemetry metrics exporter was missing the network flow labels SrcSubnetLabel and DstSubnetLabel, causing them to show as empty.

With this release, these labels are now correctly provided by the exporter. They have also been renamed to source.subnet.label and destination.subnet.label for improved clarity and consistency with OpenTelemetry standards.

2.1.9.5. Reduced default tolerations for network observability components

Before this update, a default toleration was set on all network observability components to allow them to be scheduled on any node, including those tainted with NoSchedule. This could potentially block cluster upgrades.

With this release, the default toleration is now only maintained for the eBPF agents and the Flowlogs-Pipeline when configured in Direct mode. The toleration has been removed from the OpenShift Container Platform web console plugin and the Flowlogs-Pipeline when configured in Kafka mode.

Additionally, while tolerations were always configurable in the FlowCollector custom resource (CR), it was previously impossible to replace the tolerations with an empty list. It is now possible to replace the tolerations with an empty list.

2.1.10. Network Observability Operator 1.9.3 advisory

You can review the advisory for Network Observability Operator 1.9.3 release.

2.1.11. Network Observability Operator 1.9.2 advisory

You can review the advisory for Network Observability Operator 1.9.2 release.

2.1.12. Network observability 1.9.2 bug fixes

You can review the fixed issues for the Network Observability Operator 1.9.1 release.

-

Before this update, OpenShift Container Platform versions 4.15 and earlier did not support the

TC_ATTACH_MODEconfiguration. This led to command-line interface (CLI) errors and prevented the observation of packets and flows. With this release, the Traffic Control eXtension (TCX) hook attachment mode has been adjusted for these older versions. This eliminatestcxhook errors and enables flow and packet observation.

2.1.13. Network Observability Operator 1.9.1 advisory

You can review the advisory for the Network Observability Operator 1.9.1 release.

The following advisory is available for the Network Observability Operator 1.9.1:

2.1.14. Network Observability Operator 1.9.1 fixed issues

You can review the fixed issues for the Network Observability Operator 1.9.1 release.

-

Before this update, network flows were not observed on OpenShift Container Platform 4.15 due to an incorrect attach mode setting. This stopped users from monitoring network flows correctly, especially with certain catalogs. With this release, the default attach mode for OpenShift Container Platform versions older than 4.16.0 is set to

tc, so flows are now observed on OpenShift Container Platform 4.15. (NETOBSERV-2333) - Before this update, if an IPFIX collector restarted, configuring an IPFIX exporter could lose its connection and stop sending network flows to the collector. With this release, the connection is restored, and network flows continue to be sent to the collector. (NETOBSERV-2315)

- Before this update, when you configured an IPFIX exporter, flows without port information (such as ICMP traffic) were ignored, which caused errors in logs. TCP flags and ICMP data were also missing from IPFIX exports. With this release, these details are now included. Missing fields (like ports) no longer cause errors and are part of the exported data. (NETOBSERV-2307)

- Before this update, the User Defined Networks (UDN) Mapping feature showed a configuration issue and warning on OpenShift Container Platform 4.18 because the OpenShift version was incorrectly set in the code. This impacted the user experience. With this release, UDN Mapping now supports OpenShift Container Platform 4.18 without warnings, making the user experience smooth. (NETOBSERV-2305)

-

Before this update, the expand function on the Network Traffic page had compatibility problems with OpenShift Container Platform Console 4.19. This resulted in empty menu space when expanding and an inconsistent user interface. With this release, the compatibility problem in the

NetflowTrafficpart andtheme hookis resolved. The side menu in the Network Traffic view is now properly managed, which improves how you interact with the user interface. (NETOBSERV-2304)

2.1.15. Network Observability Operator 1.9.0 advisory

You can review the advisory for the Network Observability Operator 1.9.0 release.

2.1.16. Network Observability Operator 1.9.0 new features and enhancements

You can review the new features and enhancements for the Network Observability Operator 1.9.0 release.

2.1.16.1. User-defined networks with network observability

With this release, user-defined networks (UDN) feature is generally available with network observability. When the UDNMapping feature is enabled in network observability, the Traffic flow table has a UDN labels column. You can filter logs on Source Network Name and Destination Network Name information.

2.1.16.2. Filter flowlogs at ingestion

With this release, you can create filters to reduce the number of generated network flows and the resource usage of network observability components. The following filters can be configured:

- eBPF Agent filters

- Flowlogs-pipeline filters

2.1.16.3. IPsec support

This update brings the following enhancements to network observability when IPsec is enabled on OpenShift Container Platform:

- A new column named IPsec Status is displayed in the network observability Traffic flows view to show whether a flow was successfully IPsec-encrypted or if there was an error during encryption/decryption.

- A new dashboard showing the percentage of encrypted traffic is generated.

2.1.16.4. Network Observability CLI

The following filtering options are now available for packets, flows, and metrics capture:

-

Configure the ratio of packets being sampled by using the

--samplingoption. -

Filter flows using a custom query by using the

--queryoption. -

Specify interfaces to monitor by using the

--interfacesoption. -

Specify interfaces to exclude by using the

--exclude_interfacesoption. -

Specify metric names to generate by using the

--include_listoption.

For more information, see:

2.1.17. Network Observability Operator release notes 1.9.0 notable technical changes

You can review the notable technical changes for the Network Observability Operator 1.6.0 release.

-

The

NetworkEventsfeature in network observability 1.9 has been updated to work with the newer Linux kernel of OpenShift Container Platform 4.19. This update breaks compatibility with older kernels. As a result, theNetworkEventsfeature can only be used with OpenShift Container Platform 4.19. If you are using this feature with network observability 1.8 and OpenShift Container Platform 4.18, consider avoiding a network observability upgrade or upgrade to network observability 1.9 and OpenShift Container Platform to 4.19. -

The

netobserv-readercluster role has been renamed tonetobserv-loki-reader. - Improved CPU performance of the eBPF agents.

2.1.18. Network Observability Operator 1.9.0 Technology Preview features

You can review the Technology Preview features for the Network Observability Operator 1.9.0 release.

Some features in this release are currently in Technology Preview. These experimental features are not intended for production use. Note the following scope of support on the Red Hat Customer Portal for these features:

Technology Preview Features Support Scope

2.1.18.1. eBPF Manager Operator with network observability

The eBPF Manager Operator reduces the attack surface and ensures compliance, security, and conflict prevention by managing all eBPF programs. Network observability can use the eBPF Manager Operator to load hooks. This eliminates the need to provide the eBPF Agent with privileged mode or additional Linux capabilities like CAP_BPF and CAP_PERFMON. The eBPF Manager Operator with network observability is only supported on 64-bit AMD architecture.

2.1.19. Network Observability Operator 1.9.0 CVEs

You can review the CVEs for the Network Observability Operator 1.9.0 release.

2.1.20. Network Observability Operator 1.9.0 fixed issues

You can review the fixed issues for the Network Observability Operator 1.9.0 release.

-

Previously, when filtering by source or destination IP from the console plugin, using a Classless Inter-Domain Routing (CIDR) notation such as

10.128.0.0/24did not work, returning results that should be filtered out. With this update, it is now possible to use a CIDR notation, with the results being filtered as expected. (NETOBSERV-2276) -

Previously, network flows might have incorrectly identified the network interfaces in use, especially with a risk of mixing up

eth0andens5. This issue only occurred when the eBPF agents were configured asPrivileged. With this update, it has been fixed partially, and almost all network interfaces are correctly identified. Refer to the known issues below for more details. (NETOBSERV-2257) - Previously, when the Operator checked for available Kubernetes APIs in order to adapt its behavior, if there was a stale API, this resulted in an error that prevented the Operator from starting normally. With this update, the Operator ignores error on unrelated APIs, logs errors on related APIs, and continues to run normally. (NETOBSERV-2240)

- Previously, users could not sort flows by Bytes or Packets in the Traffic flows view of the Console plugin. With this update, users can sort flows by Bytes and Packets. (NETOBSERV-2239)

-

Previously, when configuring the

FlowCollectorresource with an IPFIX exporter, MAC addresses in the IPFIX flows were truncated to their 2 first bytes. With this update, MAC addresses are fully represented in the IPFIX flows. (NETOBSERV-2208) - Previously, some of the warnings sent from the Operator validation webhook could lack clarity on what needed to be done. With this update, some of these messages have been reviewed and amended to make them more actionable. (NETOBSERV-2178)

-

Previously, it was not obvious to figure out there was an issue when referencing a

LokiStackfrom theFlowCollectorresource, such as in case of typing error. With this update, theFlowCollectorstatus clearly states that the referencedLokiStackis not found in that case. (NETOBSERV-2174) - Previously, in the console plugin Traffic flows view, in case of text overflow, text ellipses sometimes hid much of the text to be displayed. With this update, it displays as much text as possible. (NETOBSERV-2119)

- Previously, the console plugin for network observability 1.8.1 and earlier did not work with the OpenShift Container Platform 4.19 web console, making the Network Traffic page inaccessible. With this update, the console plugin is compatible and the Network Traffic page is accessible in network observability 1.9.0. (NETOBSERV-2046)

-

Previously, when using conversation tracking (

logTypes: ConversationsorlogTypes: Allin theFlowCollectorresource), the Traffic rates metrics visible in the dashboards were flawed, wrongly showing an out-of-control increase in traffic. Now, the metrics show more accurate traffic rates. However, note that inConversationsandEndedConversationsmodes, these metrics are still not completely accurate as they do not include long-standing connections. This information has been added to the documentation. The default modelogTypes: Flowsis recommended to avoid these inaccuracy. (NETOBSERV-1955)

2.1.21. Network Observability Operator 1.9.0 known issues

You can review the known issues for the Network Observability Operator 1.9.0 release.

- The user-defined network (UDN) feature displays a configuration issue and a warning when used with OpenShift Container Platform 4.18, even though it is supported. This warning can be ignored. (NETOBSERV-2305)

-

In some rare cases, the eBPF agent is unable to appropriately correlate flows with the involved interfaces when running in

privilegedmodes with several network namespaces. A large part of these issues have been identified and resolved in this release, but some inconsistencies remain, especially with theens5interface. (NETOBSERV-2287)

2.1.22. Network Observability Operator 1.8.1 advisory

You can review the advisory for the Network Observability Operator 1.8.1 release.

2.1.23. Network Observability Operator 1.8.1 CVEs

You can review the CVEs for the Network Observability Operator 1.8.1 release.

2.1.24. Network Observability Operator 1.8.1 fixed issues

You can review the fixed issues for the Network Observability Operator 1.8.1 release.

- This fix ensures that the Observe menu appears only once in future versions of OpenShift Container Platform. (NETOBSERV-2139)

2.1.25. Network Observability Operator 1.8.0 advisory

You can review the advisory for the Network Observability Operator 1.8.0 release.

2.1.26. Network Observability Operator 1.8.0 new features and enhancements

You can review the new features and enhancements for the Network Observability Operator 1.8.0 release.

2.1.26.1. Packet translation

You can now enrich network flows with translated endpoint information, showing not only the service but also the specific backend pod, so you can see which pod served a request.

For more information, see:

2.1.26.2. eBPF performance improvements in 1.8

- Network observability now uses hash maps instead of per-CPU maps. This means that network flows data is now tracked in the kernel space and new packets are also aggregated there. The de-duplication of network flows can now occur in the kernel, so the size of data transfer between the kernel and the user spaces yields better performance. With these eBPF performance improvements, there is potential to observe a CPU resource reduction between 40% and 57% in the eBPF Agent.

2.1.26.3. Network Observability CLI

The following new features, options, and filters are added to the Network Observability CLI for this release:

-

Capture metrics with filters enabled by running the

oc netobserv metricscommand. -

Run the CLI in the background by using the

--backgroundoption with flows and packets capture and runningoc netobserv followto see the progress of the background run andoc netobserv copyto download the generated logs. -

Enrich flows and metrics capture with Machines, Pods, and Services subnets by using the

--get-subnetsoption. New filtering options available with packets, flows, and metrics capture:

- eBPF filters on IPs, Ports, Protocol, Action, TCP Flags and more

-

Custom nodes using

--node-selector -

Drops only using

--drops -

Any field using

--regexes

For more information, see Network Observability CLI reference.

2.1.27. Network Observability Operator release notes 1.8.0 fixed issues

You can review the fixed issues for the Network Observability Operator 1.8.0 release.

- Previously, the Network Observability Operator came with a "kube-rbac-proxy" container to manage RBAC for its metrics server. Since this external component is deprecated, it was necessary to remove it. It is now replaced with direct TLS and RBAC management through Kubernetes controller-runtime, without the need for a side-car proxy. (NETOBSERV-1999)

- Previously in the OpenShift Container Platform console plugin, filtering on a key that was not equal to multiple values would not filter anything. With this fix, the expected results are returned, which is all flows not having any of the filtered values. (NETOBSERV-1990)

- Previously in the OpenShift Container Platform console plugin with disabled Loki, it was very likely to generate a "Can’t build query" error due to selecting an incompatible set of filters and aggregations. Now this error is avoided avoid by automatically disabling incompatible filters while still making the user aware of the filter incompatibility. (NETOBSERV-1977)

- Previously, when viewing flow details from the console plugin, the ICMP info was always displayed in the side panel, showing "undefined" values for non-ICMP flows. With this fix, ICMP info is not displayed for non-ICMP flows. (NETOBSERV-1969)

- Previously, the "Export data" link from the Traffic flows view did not work as intended, generating empty CSV reports. Now, the export feature is restored, generating non-empty CSV data. (NETOBSERV-1958)

-

Previously, it was possible to configure the

FlowCollectorwithprocessor.logTypesConversations,EndedConversationsorAllwithloki.enableset tofalse, despite the conversation logs being only useful when Loki is enabled. This resulted in resource usage waste. Now, this configuration is invalid and is rejected by the validation webhook. (NETOBSERV-1957) -

Configuring the

FlowCollectorwithprocessor.logTypesset toAllconsumes much more resources, such as CPU, memory and network bandwidth, than the other options. This was previously not documented. It is now documented, and triggers a warning from the validation webhook. (NETOBSERV-1956) - Previously, under high stress, some flows generated by the eBPF agent were mistakenly dismissed, resulting in traffic bandwidth under-estimation. Now, those generated flows are not dismissed. (NETOBSERV-1954)

-

Previously, when enabling the network policy in the

FlowCollectorconfiguration, the traffic to the Operator webhooks was blocked, breaking theFlowMetricsAPI validation. Now traffic to the webhooks is allowed. (NETOBSERV-1934) -

Previously, when deploying the default network policy, namespaces

openshift-consoleandopenshift-monitoringwere set by default in theadditionalNamespacesfield, resulting in duplicated rules. Now there is no additional namespace set by default, which helps avoid getting duplicated rules.(NETOBSERV-1933) - Previously from the OpenShift Container Platform console plugin, filtering on TCP flags would match flows having only the exact desired flag. Now, any flow having at least the desired flag appears in filtered flows. (NETOBSERV-1890)

- When the eBPF agent runs in privileged mode and pods are continuously added or deleted, a file descriptor (FD) leak occurs. The fix ensures proper closure of the FD when a network namespace is deleted. (NETOBSERV-2063)

-

Previously, the CLI agent

DaemonSetdid not deploy on master nodes. Now, a toleration is added on the agentDaemonSetto schedule on every node when taints are set. Now, CLI agentDaemonSetpods run on all nodes. (NETOBSERV-2030) - Previously, the Source Resource and Source Destination filters autocomplete were not working when using Prometheus storage only. Now this issue is fixed and suggestions displays as expected. (NETOBSERV-1885)

- Previously, a resource using multiple IPs was displayed separately in the Topology view. Now, the resource shows as a single topology node in the view. (NETOBSERV-1818)

- Previously, the console refreshed the Network traffic table view contents when the mouse pointer hovered over the columns. Now, the display is fixed, so row height remains constant with a mouse hover. (NETOBSERV-2049)

2.1.28. Network Observability Operator release notes 1.8.0 known issues

You can review the known issues for the Network Observability Operator 1.8.0 release.

- If there is traffic that uses overlapping subnets in your cluster, there is a small risk that the eBPF Agent mixes up the flows from overlapped IPs. This can happen if different connections happen to have the exact same source and destination IPs and if ports and protocol are within a 5 seconds time frame and happening on the same node. This should not be possible unless you configured secondary networks or UDN. Even in that case, it is still very unlikely in usual traffic, as source ports are usually a good differentiator. (NETOBSERV-2115)

-

After selecting a type of exporter to configure in the

FlowCollectorresourcespec.exporterssection from the OpenShift Container Platform web console form view, the detailed configuration for that type does not show up in the form. The workaround is to configure directly the YAML. (NETOBSERV-1981)

2.1.29. Network Observability Operator 1.7.0 advisory

You can review the advisory for the Network Observability Operator 1.7.0 release.

2.1.30. Network Observability Operator 1.7.0 new features and enhancements

You can review the following new features and enhancements for the Network Observability Operator 1.7.0 release.

2.1.30.1. OpenTelemetry support

You can now export enriched network flows to a compatible OpenTelemetry endpoint, such as the Red Hat build of OpenTelemetry.

For more information, see:

2.1.30.2. Network observability Developer perspective

You can now use network observability in the Developer perspective.

For more information, see:

2.1.30.3. TCP flags filtering

You can now use the tcpFlags filter to limit the volume of packets processed by the eBPF program.

For more information, see:

2.1.30.4. Network observability for OpenShift Virtualization

You can observe networking patterns on an OpenShift Virtualization setup by identifying eBPF-enriched network flows coming from VMs that are connected to secondary networks, such as through Open Virtual Network (OVN)-Kubernetes.

For more information, see:

2.1.30.5. Network policy deploys in the FlowCollector custom resource (CR)

With this release, you can configure the FlowCollector custom resource (CR) to deploy a network policy for network observability. Previously, if you wanted a network policy, you had to manually create one. The option to manually create a network policy is still available.

For more information, see:

2.1.30.6. FIPS compliance

You can install and use the Network Observability Operator in an OpenShift Container Platform cluster running in FIPS mode.

ImportantTo enable FIPS mode for your cluster, you must run the installation program from a Red Hat Enterprise Linux (RHEL) computer configured to operate in FIPS mode. For more information about configuring FIPS mode on RHEL, see Switching RHEL to FIPS mode.

When running Red Hat Enterprise Linux (RHEL) or Red Hat Enterprise Linux CoreOS (RHCOS) booted in FIPS mode, OpenShift Container Platform core components use the RHEL cryptographic libraries that have been submitted to NIST for FIPS 140-2/140-3 Validation on only the x86_64, ppc64le, and s390x architectures.

2.1.30.7. eBPF agent enhancements

The following enhancements are available for the eBPF agent:

-

If the DNS service maps to a different port than

53, you can specify this DNS tracking port usingspec.agent.ebpf.advanced.env.DNS_TRACKING_PORT. - You can now use two ports for transport protocols (TCP, UDP, or SCTP) filtering rules.

- You can now filter on transport ports with a wildcard protocol by leaving the protocol field empty.

For more information, see:

2.1.30.8. Network Observability CLI

The Network Observability CLI (oc netobserv), is now generally available. The following enhancements have been made since the 1.6 Technology Preview release:

- There are now eBPF enrichment filters for packet capture similar to flow capture.

-

You can now use filter

tcp_flagswith both flow and packets capture. - The auto-teardown option is available when max-bytes or max-time is reached.

For more information, see:

2.1.31. Network Observability Operator 1.7.0 fixed issues

You can review the following fixed issues for the Network Observability Operator 1.7.0 release.

-

Previously, when using a RHEL 9.2 real-time kernel, some of the webhooks did not work. Now, a fix is in place to check whether this RHEL 9.2 real-time kernel is being used. If the kernel is being used, a warning is displayed about the features that do not work, such as packet drop and neither Round-trip Time when using

s390xarchitecture. The fix is in OpenShift 4.16 and later. (NETOBSERV-1808) - Previously, in the Manage panels dialog in the Overview tab, filtering on total, bar, donut, or line did not show a result. Now the available panels are correctly filtered. (NETOBSERV-1540)

-

Previously, under high stress, the eBPF agents were susceptible to enter into a state where they generated a high number of small flows, almost not aggregated. With this fix, the aggregation process is still maintained under high stress, resulting in less flows being created. This fix improves the resource consumption not only in the eBPF agent but also in

flowlogs-pipelineand Loki. (NETOBSERV-1564) -

Previously, when the

workload_flows_totalmetric was enabled instead of thenamespace_flows_totalmetric, the health dashboard stopped showingBy namespaceflow charts. With this fix, the health dashboard now shows the flow charts when theworkload_flows_totalis enabled. (NETOBSERV-1746) -

Previously, when you used the

FlowMetricsAPI to generate a custom metric and later modified its labels, such as by adding a new label, the metric stopped populating and an error was shown in theflowlogs-pipelinelogs. With this fix, you can modify the labels, and the error is no longer raised in theflowlogs-pipelinelogs. (NETOBSERV-1748) -

Previously, there was an inconsistency with the default Loki

WriteBatchSizeconfiguration: it was set to 100 KB in theFlowCollectorCRD default, and 10 MB in the OLM sample or default configuration. Both are now aligned to 10 MB, which generally provides better performances and less resource footprint. (NETOBSERV-1766) - Previously, the eBPF flow filter on ports was ignored if you did not specify a protocol. With this fix, you can set eBPF flow filters independently on ports and or protocols. (NETOBSERV-1779)

- Previously, traffic from Pods to Services was hidden from the Topology view. Only the return traffic from Services to Pods was visible. With this fix, that traffic is correctly displayed. (NETOBSERV-1788)

- Previously, non-cluster administrator users that had access to Network Observability saw an error in the console plugin when they tried to filter for something that triggered auto-completion, such as a namespace. With this fix, no error is displayed, and the auto-completion returns the expected results. (NETOBSERV-1798)

- When the secondary interface support was added, you had to iterate multiple times to register the per network namespace with the netlink to learn about interface notifications. At the same time, unsuccessful handlers caused a leaking file descriptor because with TCX hook, unlike TC, handlers needed to be explicitly removed when the interface went down. Furthermore, when the network namespace was deleted, there was no Go close channel event to terminate the netlink goroutine socket, which caused go threads to leak. Now, there are no longer leaking file descriptors or go threads when you create or delete pods. (NETOBSERV-1805)

- Previously, the ICMP type and value were displaying 'n/a' in the Traffic flows table even when related data was available in the flow JSON. With this fix, ICMP columns display related values as expected in the flow table. (NETOBSERV-1806)

- Previously in the console plugin, it wasn’t always possible to filter for unset fields, such as unset DNS latency. With this fix, filtering on unset fields is now possible. (NETOBSERV-1816)

- Previously, when you cleared filters in the OpenShift web console plugin, sometimes the filters reappeared after you navigated to another page and returned to the page with filters. With this fix, filters do not unexpectedly reappear after they are cleared. (NETOBSERV-1733)

2.1.32. Network Observability Operator 1.7.0 known issues

You can review the following known issues for the Network Observability Operator 1.7.0 release.

- When you use the must-gather tool with network observability, logs are not collected when the cluster has FIPS enabled. (NETOBSERV-1830)

When the

spec.networkPolicyis enabled in theFlowCollector, which installs a network policy on thenetobservnamespace, it is impossible to use theFlowMetricsAPI. The network policy blocks calls to the validation webhook. As a workaround, use the following network policy:kind: NetworkPolicy apiVersion: networking.k8s.io/v1 metadata: name: allow-from-hostnetwork namespace: netobserv spec: podSelector: matchLabels: app: netobserv-operator ingress: - from: - namespaceSelector: matchLabels: policy-group.network.openshift.io/host-network: '' policyTypes: - Ingress

2.1.33. Network Observability Operator release notes 1.6.2 advisory

You can review the advisory for the Network Observability Operator 1.6.2 release.

2.1.34. Network Observability Operator release notes 1.6.2 CVEs

You can review the CVEs for the Network Observability Operator 1.6.2 release.

2.1.35. Network Observability Operator release notes 1.6.2 fixed issues

You can review the fixed issues for the Network Observability Operator 1.6.2 release.

- When the secondary interface support was added, there was a need to iterate multiple times to register the per network namespace with the netlink to learn about interface notifications. At the same time, unsuccessful handlers caused a leaking file descriptor because with TCX hook, unlike TC, handlers needed to be explicitly removed when the interface went down. Now, there are no longer leaking file descriptors when creating and deleting pods. (NETOBSERV-1805)

2.1.36. Network Observability Operator release notes 1.6.2 known issues

You can review the known issues for the Network Observability Operator 1.6.2 release.

- There was a compatibility issue with console plugins that would have prevented network observability from being installed on future versions of an OpenShift Container Platform cluster. By upgrading to 1.6.2, the compatibility issue is resolved and network observability can be installed as expected. (NETOBSERV-1737)

2.1.37. Network Observability Operator release notes 1.6.1 advisory

You can review the advisory for the Network Observability Operator 1.6.1 release.

2.1.38. Network Observability Operator release notes 1.6.1 CVEs

You can review the CVEs for the Network Observability Operator 1.6.1 release.

2.1.39. Network Observability Operator release notes 1.6.1 fixed issues

You can review the fixed issues for the Network Observability Operator 1.6.1 release.

- Previously, information about packet drops, such as the cause and TCP state, was only available in the Loki datastore and not in Prometheus. For that reason, the drop statistics in the OpenShift web console plugin Overview was only available with Loki. With this fix, information about packet drops is also added to metrics, so you can view drops statistics when Loki is disabled. (NETOBSERV-1649)

-

When the eBPF agent

PacketDropfeature was enabled, and sampling was configured to a value greater than1, reported dropped bytes and dropped packets ignored the sampling configuration. While this was done on purpose, so as not to miss any drops, a side effect was that the reported proportion of drops compared with non-drops became biased. For example, at a very high sampling rate, such as1:1000, it was likely that almost all the traffic appears to be dropped when observed from the console plugin. With this fix, the sampling configuration is honored with dropped bytes and packets. (NETOBSERV-1676) - Previously, the SR-IOV secondary interface was not detected if the interface was created first and then the eBPF agent was deployed. It was only detected if the agent was deployed first and then the SR-IOV interface was created. With this fix, the SR-IOV secondary interface is detected no matter the sequence of the deployments. (NETOBSERV-1697)

- Previously, when Loki was disabled, the Topology view in the OpenShift web console displayed the Cluster and Zone aggregation options in the slider beside the network topology diagram, even when the related features were not enabled. With this fix, the slider now only displays options according to the enabled features. (NETOBSERV-1705)

-

Previously, when Loki was disabled, and the OpenShift web console was first loading, an error would occur:

Request failed with status code 400 Loki is disabled. With this fix, the errors no longer occur. (NETOBSERV-1706) - Previously, in the Topology view of the OpenShift web console, when clicking on the Step into icon next to any graph node, the filters were not applied as required in order to set the focus to the selected graph node, resulting in showing a wide view of the Topology view in the OpenShift web console. With this fix, the filters are correctly set, effectively narrowing down the Topology. As part of this change, clicking the Step into icon on a Node now brings you to the Resource scope instead of the Namespaces scope. (NETOBSERV-1720)

- Previously, when Loki was disabled, in the Topology view of the OpenShift web console with the Scope set to Owner, clicking on the Step into icon next to any graph node would bring the Scope to Resource, which is not available without Loki, so an error message was shown. With this fix, the Step into icon is hidden in the Owner scope when Loki is disabled, so this scenario no longer occurs. (NETOBSERV-1721)

- Previously, when Loki was disabled, an error was displayed in the Topology view of the OpenShift web console when a group was set, but then the scope was changed so that the group becomes invalid. With this fix, the invalid group is removed, preventing the error. (NETOBSERV-1722)

-

When creating a

FlowCollectorresource from the OpenShift web console Form view, as opposed to the YAML view, the following settings were incorrectly managed by the web console:agent.ebpf.metrics.enableandprocessor.subnetLabels.openShiftAutoDetect. These settings can only be disabled in the YAML view, not in the Form view. To avoid any confusion, these settings have been removed from the Form view. They are still accessible in the YAML view. (NETOBSERV-1731) - Previously, the eBPF agent was unable to clean up traffic control flows installed before an ungraceful crash, for example a crash due to a SIGTERM signal. This led to the creation of multiple traffic control flow filters with the same name, since the older ones were not removed. With this fix, all previously installed traffic control flows are cleaned up when the agent starts, before installing new ones. (NETOBSERV-1732)

- Previously, when configuring custom subnet labels and keeping the OpenShift subnets auto-detection enabled, OpenShift subnets would take precedence over the custom ones, preventing the definition of custom labels for in cluster subnets. With this fix, custom defined subnets take precedence, allowing the definition of custom labels for in cluster subnets. (NETOBSERV-1734)

2.1.40. Network Observability Operator release notes 1.6.0 advisory

You can review the advisory for the Network Observability Operator 1.6.0 release.

2.1.41. Network Observability Operator 1.6.0 new features and enhancements

You can review the following new features and enhancements for the Network Observability Operator 1.6.0.

2.1.41.1. Enhanced use of Network Observability Operator without Loki

You can now use Prometheus metrics and rely less on Loki for storage when using the Network Observability Operator.

For more information, see:

2.1.41.2. Custom metrics API

You can create custom metrics out of flowlogs data by using the FlowMetrics API. Flowlogs data can be used with Prometheus labels to customize cluster information on your dashboards. You can add custom labels for any subnet that you want to identify in your flows and metrics. This enhancement can also be used to more easily identify external traffic by using the new labels SrcSubnetLabel and DstSubnetLabel, which exists both in flow logs and in metrics. Those fields are empty when there is external traffic, which gives a way to identify it.

For more information, see:

2.1.41.3. eBPF performance enhancements

Experience improved performances of the eBPF agent, in terms of CPU and memory, with the following updates:

- The eBPF agent now uses TCX webhooks instead of TC.

The NetObserv / Health dashboard has a new section that shows eBPF metrics.

- Based on the new eBPF metrics, an alert notifies you when the eBPF agent is dropping flows.

- Loki storage demand decreases significantly now that duplicated flows are removed. Instead of having multiple, individual duplicated flows per network interface, there is one de-duplicated flow with a list of related network interfaces.

With the duplicated flows update, the Interface and Interface Direction fields in the Network Traffic table are renamed to Interfaces and Interface Directions, so any bookmarked Quick filter queries using these fields need to be updated to interfaces and ifdirections.

For more information, see:

2.1.41.4. eBPF collection rule-based filtering

You can use rule-based filtering to reduce the volume of created flows. When this option is enabled, the Netobserv / Health dashboard for eBPF agent statistics has the Filtered flows rate view.

For more information, see:

2.1.42. Network Observability Operator 1.6.0 fixed issues

You can review the following fixed issues for the Network Observability Operator 1.6.0.

-

Previously, a dead link to the OpenShift Container Platform documentation was displayed in the Operator Lifecycle Manager (OLM) form for the

FlowMetricsAPI creation. Now the link has been updated to point to a valid page. (NETOBSERV-1607) - Previously, the Network Observability Operator description in the Operator Hub displayed a broken link to the documentation. With this fix, this link is restored. (NETOBSERV-1544)

-

Previously, if Loki was disabled and the Loki

Modewas set toLokiStack, or if Loki manual TLS configuration was configured, the Network Observability Operator still tried to read the Loki CA certificates. With this fix, when Loki is disabled, the Loki certificates are not read, even if there are settings in the Loki configuration. (NETOBSERV-1647) -

Previously, the

ocmust-gatherplugin for the Network Observability Operator was only working on theamd64architecture and failing on all others because the plugin was usingamd64for theocbinary. Now, the Network Observability Operatorocmust-gatherplugin collects logs on any architecture platform. -

Previously, when filtering on IP addresses using

not equal to, the Network Observability Operator would return a request error. Now, the IP filtering works in bothequalandnot equal tocases for IP addresses and ranges. (NETOBSERV-1630) -

Previously, when a user was not an admin, the error messages were not consistent with the selected tab of the Network Traffic view in the web console. Now, the

user not adminerror displays on any tab with improved display.(NETOBSERV-1621)

2.1.43. Network Observability Operator 1.6.0 known issues

You can review the following known issues for the Network Observability Operator 1.6.0.

-

When the eBPF agent

PacketDropfeature is enabled, and sampling is configured to a value greater than1, reported dropped bytes and dropped packets ignore the sampling configuration. While this is done on purpose to not miss any drops, a side effect is that the reported proportion of drops compared to non-drops becomes biased. For example, at a very high sampling rate, such as1:1000, it is likely that almost all the traffic appears to be dropped when observed from the console plugin. (NETOBSERV-1676) - In the Manage panels window in the Overview tab, filtering on total, bar, donut, or line does not show any result. (NETOBSERV-1540)

- The SR-IOV secondary interface is not detected if the interface was created first and then the eBPF agent was deployed. It is only detected if the agent was deployed first and then the SR-IOV interface is created. (NETOBSERV-1697)

- When Loki is disabled, the Topology view in the OpenShift web console always shows the Cluster and Zone aggregation options in the slider beside the network topology diagram, even when the related features are not enabled. There is no specific workaround, besides ignoring these slider options. (NETOBSERV-1705)

-

When Loki is disabled, and the OpenShift web console first loads, it might display an error:

Request failed with status code 400 Loki is disabled. As a workaround, you can continue switching content on the Network Traffic page, such as clicking between the Topology and the Overview tabs. The error should disappear. (NETOBSERV-1706)

2.1.44. Network Observability Operator 1.5.0 advisory

You can view the following advisory for the Network Observability Operator 1.5 release.

2.1.45. Network Observability Operator 1.5.0 new features and enhancements

You can view the following new features and enhancements for the Network Observability Operator 1.5 release.

2.1.45.1. DNS tracking enhancements

In 1.5, the TCP protocol is now supported in addition to UDP. New dashboards are also added to the Overview view of the Network Traffic page.

For more information, see:

2.1.45.2. Round-trip time (RTT)

You can use TCP handshake Round-Trip Time (RTT) captured from the fentry/tcp_rcv_established Extended Berkeley Packet Filter (eBPF) hookpoint to read smoothed round-trip time (SRTT) and analyze network flows. In the Overview, Network Traffic, and Topology pages in web console, you can monitor network traffic and troubleshoot with RTT metrics, filtering, and edge labeling.

For more information, see:

2.1.45.3. Metrics, dashboards, and alerts enhancements

The network observability metrics dashboards in Observe → Dashboards → NetObserv have new metrics types you can use to create Prometheus alerts. You can now define available metrics in the includeList specification. In previous releases, these metrics were defined in the ignoreTags specification.

For a complete list of these metrics, see:

2.1.45.4. Improvements for network observability without Loki

You can create Prometheus alerts for the Netobserv dashboard using DNS, Packet drop, and RTT metrics, even if you don’t use Loki. In the previous version of network observability, 1.4, these metrics were only available for querying and analysis in the Network Traffic, Overview, and Topology views, which are not available without Loki.

For more information, see:

2.1.45.5. Availability zones

You can configure the FlowCollector resource to collect information about the cluster availability zones. This configuration enriches the network flow data with the topology.kubernetes.io/zone label value applied to the nodes.

For more information, see:

2.1.45.6. Notable enhancements

The 1.5 release of the Network Observability Operator adds improvements and new capabilities to the OpenShift Container Platform web console plugin and the Operator configuration.

2.1.45.7. Performance enhancements

The

spec.agent.ebpf.kafkaBatchSizedefault is changed from10MBto1MBto enhance eBPF performance when using Kafka.ImportantWhen upgrading from an existing installation, this new value is not set automatically in the configuration. If you monitor a performance regression with the eBPF Agent memory consumption after upgrading, you might consider reducing the

kafkaBatchSizeto the new value.

2.1.45.8. Web console enhancements:

- There are new panels added to the Overview view for DNS and RTT: Min, Max, P90, P99.

There are new panel display options added:

- Focus on one panel while keeping others viewable but with smaller focus.

- Switch graph type.

- Show Top and Overall.

- A collection latency warning is shown in the Custom time range window.

- There is enhanced visibility for the contents of the Manage panels and Manage columns pop-up windows.

- The Differentiated Services Code Point (DSCP) field for egress QoS is available for filtering QoS DSCP in the web console Network Traffic page.

2.1.45.9. Configuration enhancements:

-

The

LokiStackmode in thespec.loki.modespecification simplifies installation by automatically setting URLs, TLS, cluster roles and a cluster role binding, as well as theauthTokenvalue. TheManualmode allows more control over configuration of these settings. -

The API version changes from

flows.netobserv.io/v1beta1toflows.netobserv.io/v1beta2.

2.1.46. Network Observability Operator 1.5.0 fixed issues

You can view the following fixed issues for the Network Observability Operator 1.5 release.

-

Previously, it was not possible to register the console plugin manually in the web console interface if the automatic registration of the console plugin was disabled. If the

spec.console.registervalue was set tofalsein theFlowCollectorresource, the Operator would override and erase the plugin registration. With this fix, setting thespec.console.registervalue tofalsedoes not impact the console plugin registration or registration removal. As a result, the plugin can be safely registered manually. (NETOBSERV-1134) -

Previously, using the default metrics settings, the NetObserv/Health dashboard was showing an empty graph named Flows Overhead. This metric was only available by removing "namespaces-flows" and "namespaces" from the

ignoreTagslist. With this fix, this metric is visible when you use the default metrics setting. (NETOBSERV-1351) - Previously, the node on which the eBPF Agent was running would not resolve with a specific cluster configuration. This resulted in cascading consequences that culminated in a failure to provide some of the traffic metrics. With this fix, the eBPF agent’s node IP is safely provided by the Operator, inferred from the pod status. Now, the missing metrics are restored. (NETOBSERV-1430)

- Previously, the Loki error 'Input size too long' error for the Loki Operator did not include additional information to troubleshoot the problem. With this fix, help is directly displayed in the web console next to the error with a direct link for more guidance. (NETOBSERV-1464)

-

Previously, the console plugin read timeout was forced to 30s. With the

FlowCollectorv1beta2API update, you can configure thespec.loki.readTimeoutspecification to update this value according to the Loki OperatorqueryTimeoutlimit. (NETOBSERV-1443) -

Previously, the Operator bundle did not display some of the supported features by CSV annotations as expected, such as

features.operators.openshift.io/…With this fix, these annotations are set in the CSV as expected. (NETOBSERV-1305) -

Previously, the

FlowCollectorstatus sometimes oscillated betweenDeploymentInProgressandReadystates during reconciliation. With this fix, the status only becomesReadywhen all of the underlying components are fully ready. (NETOBSERV-1293)

2.1.47. Network Observability Operator 1.5.0 known issues

You can view the following known issues for the Network Observability Operator 1.5 release.

-

When trying to access the web console, cache issues on OCP 4.14.10 prevent access to the Observe view. The web console shows the error message:

Failed to get a valid plugin manifest from /api/plugins/monitoring-plugin/. The recommended workaround is to update the cluster to the latest minor version. If this does not work, you need to apply the workarounds described in this Red Hat Knowledgebase article.(NETOBSERV-1493) -

Since the 1.3.0 release of the Network Observability Operator, installing the Operator causes a warning kernel taint to appear. The reason for this error is that the network observability eBPF agent has memory constraints that prevent preallocating the entire hashmap table. The Operator eBPF agent sets the

BPF_F_NO_PREALLOCflag so that pre-allocation is disabled when the hashmap is too memory expansive.

2.1.48. Network Observability Operator 1.4.2 advisory

The following advisory is available for the Network Observability Operator 1.4.2:

2.1.49. Network Observability Operator 1.4.2 CVEs

You can review the following CVEs in the Network Observability Operator 1.4.2 release.

2.1.50. Network Observability Operator 1.4.1 advisory

You can review the following advisory for the Network Observability Operator 1.4.1.

2.1.51. Network Observability Operator release 1.4.1 CVEs

You can review the following CVEs in the Network Observability Operator 1.4.1 release.

2.1.52. Network Observability Operator release notes 1.4.1 fixed issues

You can review the following fixed issues in the Network Observability Operator 1.4.1 release.

- In 1.4, there was a known issue when sending network flow data to Kafka. The Kafka message key was ignored, causing an error with connection tracking. Now the key is used for partitioning, so each flow from the same connection is sent to the same processor. (NETOBSERV-926)

-

In 1.4, the

Innerflow direction was introduced to account for flows between pods running on the same node. Flows with theInnerdirection were not taken into account in the generated Prometheus metrics derived from flows, resulting in under-evaluated bytes and packets rates. Now, derived metrics are including flows with theInnerdirection, providing correct bytes and packets rates. (NETOBSERV-1344)

2.1.53. Network observability release notes 1.4.0 advisory

You can review the following advisory for the Network Observability Operator 1.4.0 release.

2.1.54. Network observability release notes 1.4.0 new features and enhancements

You can review the following new features and enhancements in the Network Observability Operator 1.4.0 release.

2.1.54.1. Notable enhancements

The 1.4 release of the Network Observability Operator adds improvements and new capabilities to the OpenShift Container Platform web console plugin and the Operator configuration.

2.1.54.2. Web console enhancements:

- In the Query Options, the Duplicate flows checkbox is added to choose whether or not to show duplicated flows.

-

You can now filter source and destination traffic with

One-way,

One-way,

Back-and-forth, and Swap filters.

Back-and-forth, and Swap filters.

The network observability metrics dashboards in Observe → Dashboards → NetObserv and NetObserv / Health are modified as follows:

- The NetObserv dashboard shows top bytes, packets sent, packets received per nodes, namespaces, and workloads. Flow graphs are removed from this dashboard.

- The NetObserv / Health dashboard shows flows overhead as well as top flow rates per nodes, namespaces, and workloads.

- Infrastructure and Application metrics are shown in a split-view for namespaces and workloads.

For more information, see:

2.1.54.3. Configuration enhancements:

- You now have the option to specify different namespaces for any configured ConfigMap or Secret reference, such as in certificates configuration.

-

The

spec.processor.clusterNameparameter is added so that the name of the cluster appears in the flows data. This is useful in a multi-cluster context. When using OpenShift Container Platform, leave empty to make it automatically determined.

For more information, see:

2.1.54.4. Network observability without Loki