Networking

Configuring and managing cluster networking

Abstract

Chapter 1. About networking

Red Hat OpenShift Networking is an ecosystem of features, plugins and advanced networking capabilities that extend Kubernetes networking with the advanced networking-related features that your cluster needs to manage its network traffic for one or multiple hybrid clusters. This ecosystem of networking capabilities integrates ingress, egress, load balancing, high-performance throughput, security, inter- and intra-cluster traffic management and provides role-based observability tooling to reduce its natural complexities.

OpenShift SDN CNI is deprecated as of OpenShift Container Platform 4.14. As of OpenShift Container Platform 4.15, the network plugin is not an option for new installations. In a subsequent future release, the OpenShift SDN network plugin is planned to be removed and no longer supported. Red Hat will provide bug fixes and support for this feature until it is removed, but this feature will no longer receive enhancements. As an alternative to OpenShift SDN CNI, you can use OVN Kubernetes CNI instead. For more information, see OpenShift SDN CNI removal.

The following list highlights some of the most commonly used Red Hat OpenShift Networking features available on your cluster:

Primary cluster network provided by either of the following Container Network Interface (CNI) plugins:

- OVN-Kubernetes network plugin, the default plugin

- OpenShift SDN network plugin

- Certified 3rd-party alternative primary network plugins

- Cluster Network Operator for network plugin management

- Ingress Operator for TLS encrypted web traffic

- DNS Operator for name assignment

- MetalLB Operator for traffic load balancing on bare metal clusters

- IP failover support for high-availability

- Additional hardware network support through multiple CNI plugins, including for macvlan, ipvlan, and SR-IOV hardware networks

- IPv4, IPv6, and dual stack addressing

- Hybrid Linux-Windows host clusters for Windows-based workloads

- Red Hat OpenShift Service Mesh for discovery, load balancing, service-to-service authentication, failure recovery, metrics, and monitoring of services

- Single-node OpenShift

- Network Observability Operator for network debugging and insights

- Submariner for inter-cluster networking

- Red Hat Service Interconnect for layer 7 inter-cluster networking

Chapter 2. Understanding networking

Cluster Administrators have several options for exposing applications that run inside a cluster to external traffic and securing network connections:

- Service types, such as node ports or load balancers

-

API resources, such as

IngressandRoute

By default, Kubernetes allocates each pod an internal IP address for applications running within the pod. Pods and their containers can network, but clients outside the cluster do not have networking access. When you expose your application to external traffic, giving each pod its own IP address means that pods can be treated like physical hosts or virtual machines in terms of port allocation, networking, naming, service discovery, load balancing, application configuration, and migration.

Some cloud platforms offer metadata APIs that listen on the 169.254.169.254 IP address, a link-local IP address in the IPv4 169.254.0.0/16 CIDR block.

This CIDR block is not reachable from the pod network. Pods that need access to these IP addresses must be given host network access by setting the spec.hostNetwork field in the pod spec to true.

If you allow a pod host network access, you grant the pod privileged access to the underlying network infrastructure.

2.1. OpenShift Container Platform DNS

If you are running multiple services, such as front-end and back-end services for use with multiple pods, environment variables are created for user names, service IPs, and more so the front-end pods can communicate with the back-end services. If the service is deleted and recreated, a new IP address can be assigned to the service, and requires the front-end pods to be recreated to pick up the updated values for the service IP environment variable. Additionally, the back-end service must be created before any of the front-end pods to ensure that the service IP is generated properly, and that it can be provided to the front-end pods as an environment variable.

For this reason, OpenShift Container Platform has a built-in DNS so that the services can be reached by the service DNS as well as the service IP/port.

2.2. OpenShift Container Platform Ingress Operator

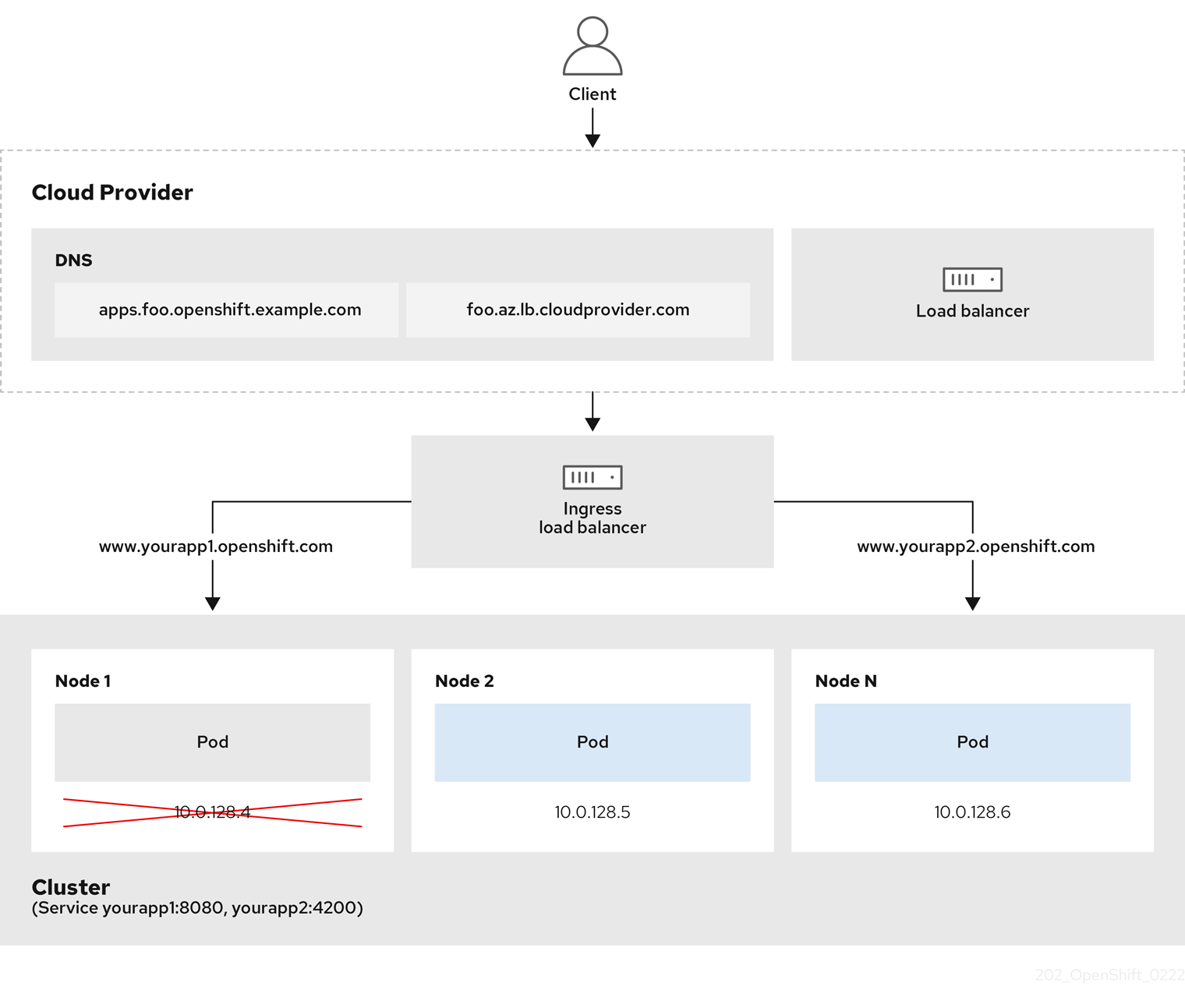

When you create your OpenShift Container Platform cluster, pods and services running on the cluster are each allocated their own IP addresses. The IP addresses are accessible to other pods and services running nearby but are not accessible to outside clients.

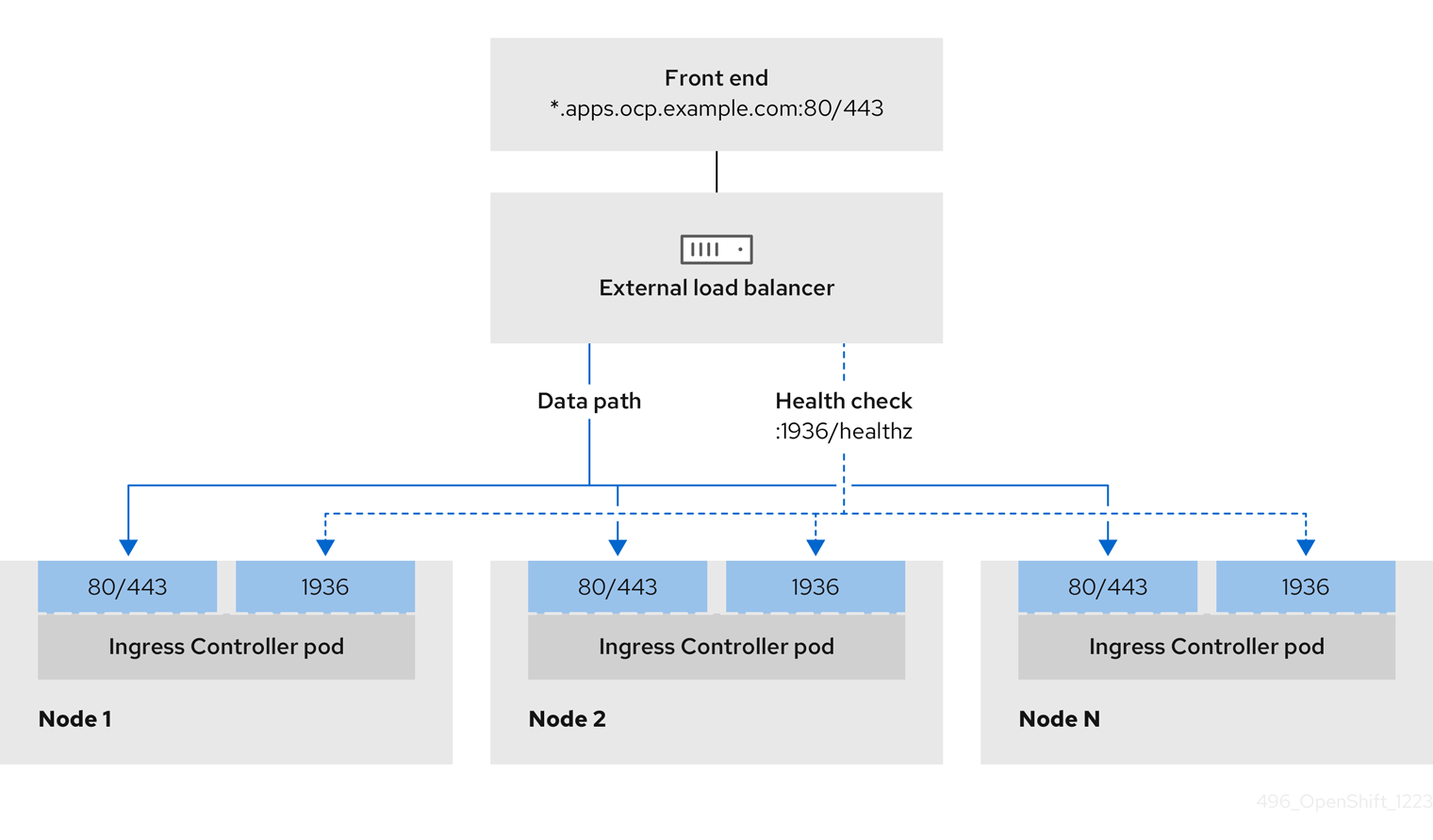

The Ingress Operator makes it possible for external clients to access your service by deploying and managing one or more HAProxy-based Ingress Controllers to handle routing. You can use the Ingress Operator to route traffic by specifying OpenShift Container Platform Route and Kubernetes Ingress resources. Configurations within the Ingress Controller, such as the ability to define endpointPublishingStrategy type and internal load balancing, provide ways to publish Ingress Controller endpoints.

2.2.1. Comparing routes and Ingress

The Kubernetes Ingress resource in OpenShift Container Platform implements the Ingress Controller with a shared router service that runs as a pod inside the cluster. The most common way to manage Ingress traffic is with the Ingress Controller. You can scale and replicate this pod like any other regular pod. This router service is based on HAProxy, which is an open source load balancer solution.

The OpenShift Container Platform route provides Ingress traffic to services in the cluster. Routes provide advanced features that might not be supported by standard Kubernetes Ingress Controllers, such as TLS re-encryption, TLS passthrough, and split traffic for blue-green deployments.

Ingress traffic accesses services in the cluster through a route. Routes and Ingress are the main resources for handling Ingress traffic. Ingress provides features similar to a route, such as accepting external requests and delegating them based on the route. However, with Ingress you can only allow certain types of connections: HTTP/2, HTTPS and server name identification (SNI), and TLS with certificate. In OpenShift Container Platform, routes are generated to meet the conditions specified by the Ingress resource.

2.3. Glossary of common terms for OpenShift Container Platform networking

This glossary defines common terms that are used in the networking content.

- authentication

- To control access to an OpenShift Container Platform cluster, a cluster administrator can configure user authentication and ensure only approved users access the cluster. To interact with an OpenShift Container Platform cluster, you must authenticate to the OpenShift Container Platform API. You can authenticate by providing an OAuth access token or an X.509 client certificate in your requests to the OpenShift Container Platform API.

- AWS Load Balancer Operator

-

The AWS Load Balancer (ALB) Operator deploys and manages an instance of the

aws-load-balancer-controller. - Cluster Network Operator

- The Cluster Network Operator (CNO) deploys and manages the cluster network components in an OpenShift Container Platform cluster. This includes deployment of the Container Network Interface (CNI) network plugin selected for the cluster during installation.

- config map

-

A config map provides a way to inject configuration data into pods. You can reference the data stored in a config map in a volume of type

ConfigMap. Applications running in a pod can use this data. - custom resource (CR)

- A CR is extension of the Kubernetes API. You can create custom resources.

- DNS

- Cluster DNS is a DNS server which serves DNS records for Kubernetes services. Containers started by Kubernetes automatically include this DNS server in their DNS searches.

- DNS Operator

- The DNS Operator deploys and manages CoreDNS to provide a name resolution service to pods. This enables DNS-based Kubernetes Service discovery in OpenShift Container Platform.

- deployment

- A Kubernetes resource object that maintains the life cycle of an application.

- domain

- Domain is a DNS name serviced by the Ingress Controller.

- egress

- The process of data sharing externally through a network’s outbound traffic from a pod.

- External DNS Operator

- The External DNS Operator deploys and manages ExternalDNS to provide the name resolution for services and routes from the external DNS provider to OpenShift Container Platform.

- HTTP-based route

- An HTTP-based route is an unsecured route that uses the basic HTTP routing protocol and exposes a service on an unsecured application port.

- Ingress

- The Kubernetes Ingress resource in OpenShift Container Platform implements the Ingress Controller with a shared router service that runs as a pod inside the cluster.

- Ingress Controller

- The Ingress Operator manages Ingress Controllers. Using an Ingress Controller is the most common way to allow external access to an OpenShift Container Platform cluster.

- installer-provisioned infrastructure

- The installation program deploys and configures the infrastructure that the cluster runs on.

- kubelet

- A primary node agent that runs on each node in the cluster to ensure that containers are running in a pod.

- Kubernetes NMState Operator

- The Kubernetes NMState Operator provides a Kubernetes API for performing state-driven network configuration across the OpenShift Container Platform cluster’s nodes with NMState.

- kube-proxy

- Kube-proxy is a proxy service which runs on each node and helps in making services available to the external host. It helps in forwarding the request to correct containers and is capable of performing primitive load balancing.

- load balancers

- OpenShift Container Platform uses load balancers for communicating from outside the cluster with services running in the cluster.

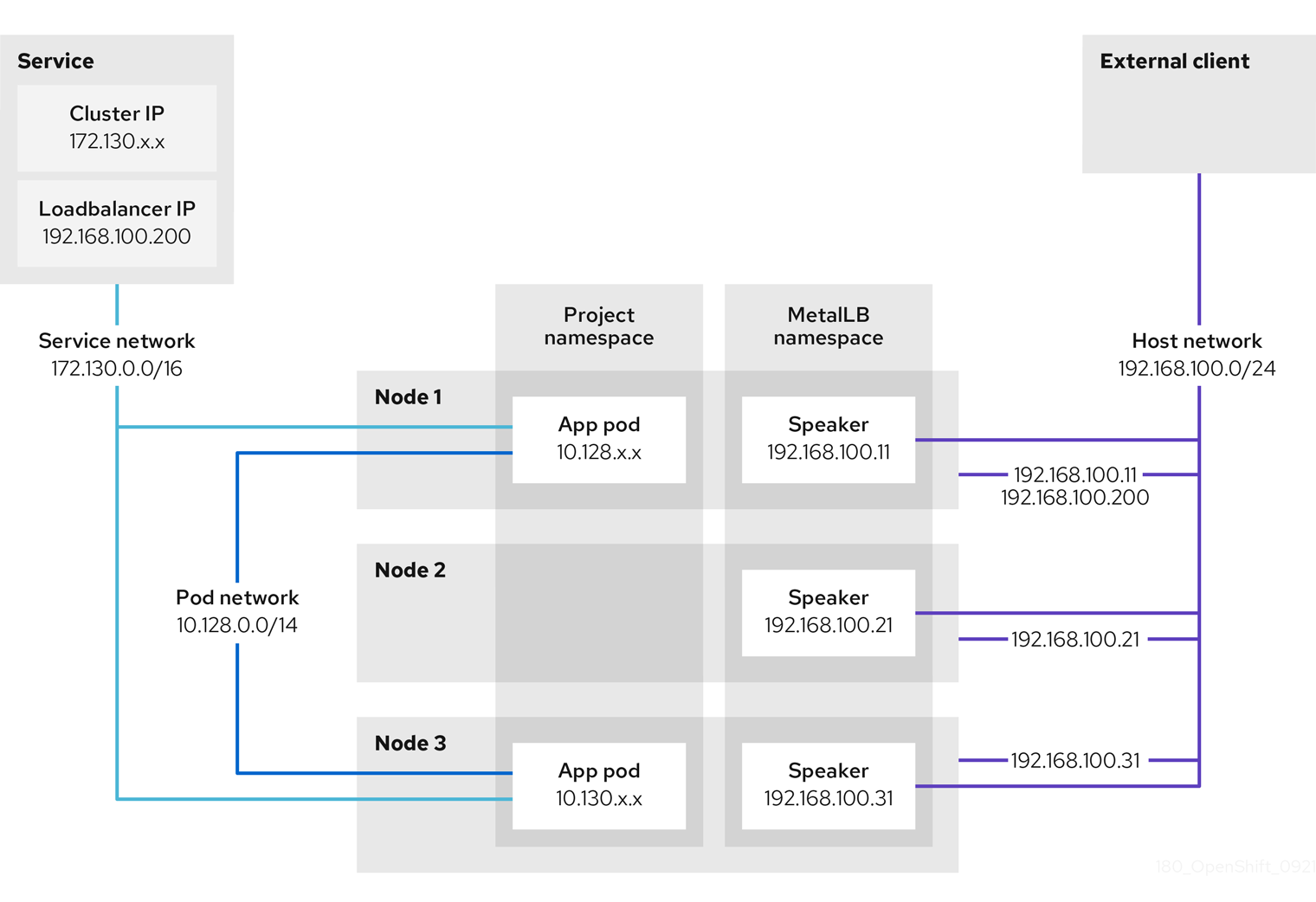

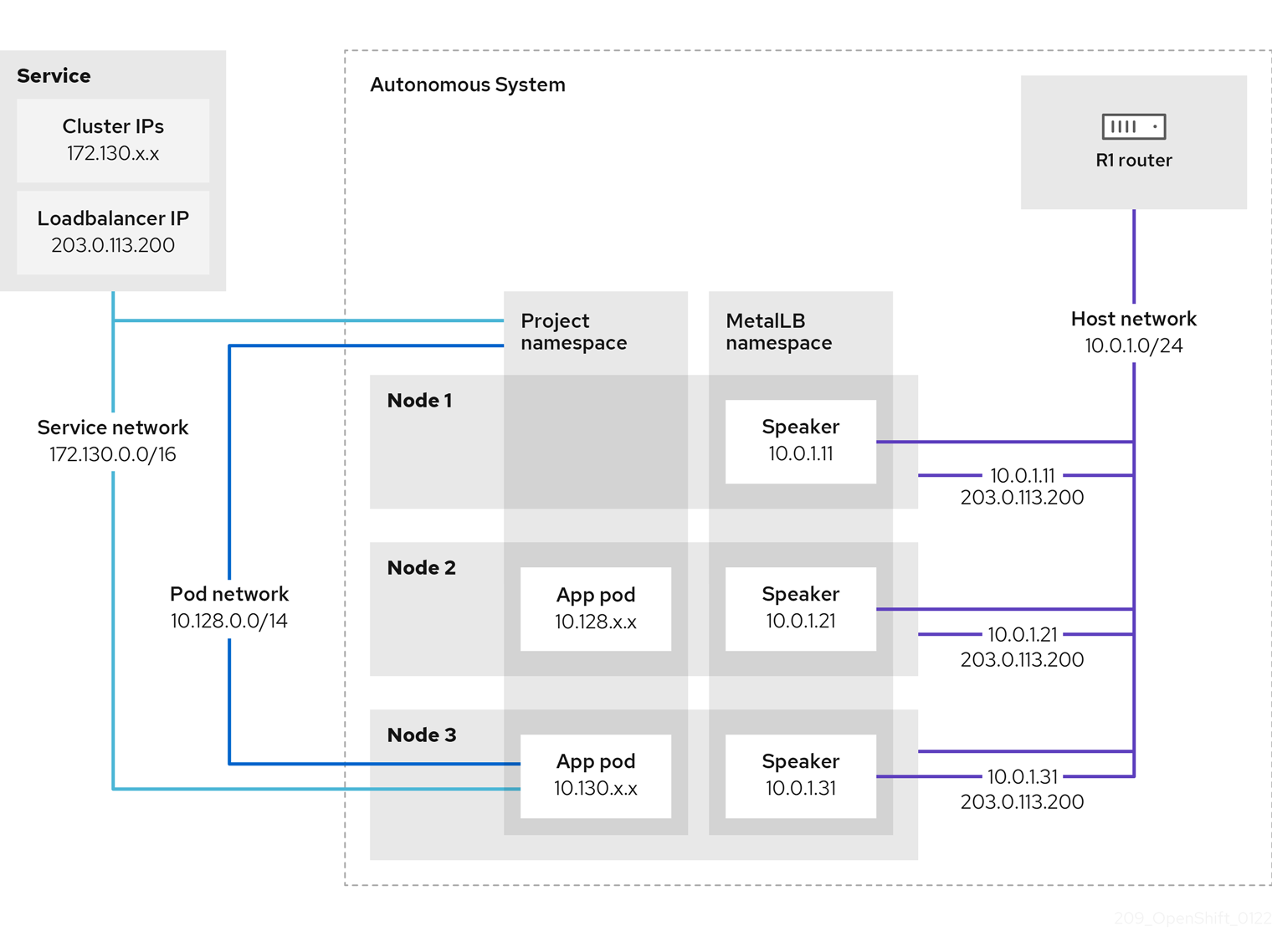

- MetalLB Operator

-

As a cluster administrator, you can add the MetalLB Operator to your cluster so that when a service of type

LoadBalanceris added to the cluster, MetalLB can add an external IP address for the service. - multicast

- With IP multicast, data is broadcast to many IP addresses simultaneously.

- namespaces

- A namespace isolates specific system resources that are visible to all processes. Inside a namespace, only processes that are members of that namespace can see those resources.

- networking

- Network information of a OpenShift Container Platform cluster.

- node

- A worker machine in the OpenShift Container Platform cluster. A node is either a virtual machine (VM) or a physical machine.

- OpenShift Container Platform Ingress Operator

-

The Ingress Operator implements the

IngressControllerAPI and is the component responsible for enabling external access to OpenShift Container Platform services. - pod

- One or more containers with shared resources, such as volume and IP addresses, running in your OpenShift Container Platform cluster. A pod is the smallest compute unit defined, deployed, and managed.

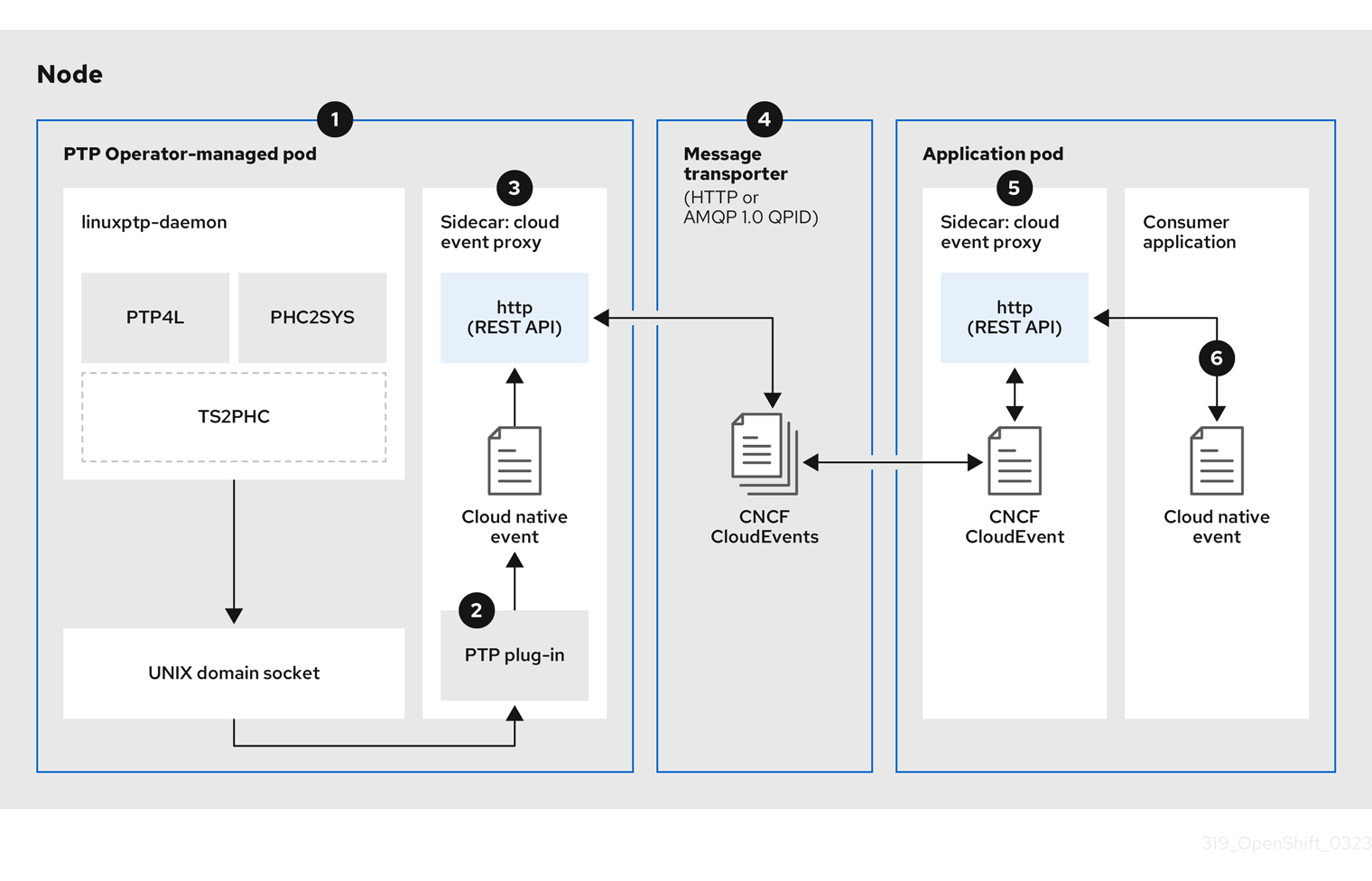

- PTP Operator

-

The PTP Operator creates and manages the

linuxptpservices. - route

- The OpenShift Container Platform route provides Ingress traffic to services in the cluster. Routes provide advanced features that might not be supported by standard Kubernetes Ingress Controllers, such as TLS re-encryption, TLS passthrough, and split traffic for blue-green deployments.

- scaling

- Increasing or decreasing the resource capacity.

- service

- Exposes a running application on a set of pods.

- Single Root I/O Virtualization (SR-IOV) Network Operator

- The Single Root I/O Virtualization (SR-IOV) Network Operator manages the SR-IOV network devices and network attachments in your cluster.

- software-defined networking (SDN)

- OpenShift Container Platform uses a software-defined networking (SDN) approach to provide a unified cluster network that enables communication between pods across the OpenShift Container Platform cluster.

OpenShift SDN CNI is deprecated as of OpenShift Container Platform 4.14. As of OpenShift Container Platform 4.15, the network plugin is not an option for new installations. In a subsequent future release, the OpenShift SDN network plugin is planned to be removed and no longer supported. Red Hat will provide bug fixes and support for this feature until it is removed, but this feature will no longer receive enhancements. As an alternative to OpenShift SDN CNI, you can use OVN Kubernetes CNI instead. For more information, see OpenShift SDN CNI removal.

- Stream Control Transmission Protocol (SCTP)

- SCTP is a reliable message based protocol that runs on top of an IP network.

- taint

- Taints and tolerations ensure that pods are scheduled onto appropriate nodes. You can apply one or more taints on a node.

- toleration

- You can apply tolerations to pods. Tolerations allow the scheduler to schedule pods with matching taints.

- web console

- A user interface (UI) to manage OpenShift Container Platform.

Chapter 3. Accessing hosts

Learn how to create a bastion host to access OpenShift Container Platform instances and access the control plane nodes with secure shell (SSH) access.

3.1. Accessing hosts on Amazon Web Services in an installer-provisioned infrastructure cluster

The OpenShift Container Platform installer does not create any public IP addresses for any of the Amazon Elastic Compute Cloud (Amazon EC2) instances that it provisions for your OpenShift Container Platform cluster. To be able to SSH to your OpenShift Container Platform hosts, you must follow this procedure.

Procedure

-

Create a security group that allows SSH access into the virtual private cloud (VPC) created by the

openshift-installcommand. - Create an Amazon EC2 instance on one of the public subnets the installer created.

Associate a public IP address with the Amazon EC2 instance that you created.

Unlike with the OpenShift Container Platform installation, you should associate the Amazon EC2 instance you created with an SSH keypair. It does not matter what operating system you choose for this instance, as it will simply serve as an SSH bastion to bridge the internet into your OpenShift Container Platform cluster’s VPC. The Amazon Machine Image (AMI) you use does matter. With Red Hat Enterprise Linux CoreOS (RHCOS), for example, you can provide keys via Ignition, like the installer does.

After you provisioned your Amazon EC2 instance and can SSH into it, you must add the SSH key that you associated with your OpenShift Container Platform installation. This key can be different from the key for the bastion instance, but does not have to be.

NoteDirect SSH access is only recommended for disaster recovery. When the Kubernetes API is responsive, run privileged pods instead.

-

Run

oc get nodes, inspect the output, and choose one of the nodes that is a master. The hostname looks similar toip-10-0-1-163.ec2.internal. From the bastion SSH host you manually deployed into Amazon EC2, SSH into that control plane host. Ensure that you use the same SSH key you specified during the installation:

$ ssh -i <ssh-key-path> core@<master-hostname>

Chapter 4. Networking dashboards

Networking metrics are viewable in dashboards within the OpenShift Container Platform web console, under Observe → Dashboards.

4.1. Network Observability Operator

If you have the Network Observability Operator installed, you can view network traffic metrics dashboards by selecting the Netobserv dashboard from the Dashboards drop-down list. For more information about metrics available in this Dashboard, see Network Observability metrics dashboards.

4.2. Networking and OVN-Kubernetes dashboard

You can view both general networking metrics as well as OVN-Kubernetes metrics from the dashboard.

To view general networking metrics, select Networking/Linux Subsystem Stats from the Dashboards drop-down list. You can view the following networking metrics from the dashboard: Network Utilisation, Network Saturation, and Network Errors.

To view OVN-Kubernetes metrics select Networking/Infrastructure from the Dashboards drop-down list. You can view the following OVN-Kuberenetes metrics: Networking Configuration, TCP Latency Probes, Control Plane Resources, and Worker Resources.

4.3. Ingress Operator dashboard

You can view networking metrics handled by the Ingress Operator from the dashboard. This includes metrics like the following:

- Incoming and outgoing bandwidth

- HTTP error rates

- HTTP server response latency

To view these Ingress metrics, select Networking/Ingress from the Dashboards drop-down list. You can view Ingress metrics for the following categories: Top 10 Per Route, Top 10 Per Namespace, and Top 10 Per Shard.

Chapter 5. Networking Operators

5.1. Kubernetes NMState Operator

The Kubernetes NMState Operator provides a Kubernetes API for performing state-driven network configuration across the OpenShift Container Platform cluster’s nodes with NMState. The Kubernetes NMState Operator provides users with functionality to configure various network interface types, DNS, and routing on cluster nodes. Additionally, the daemons on the cluster nodes periodically report on the state of each node’s network interfaces to the API server.

Red Hat supports the Kubernetes NMState Operator in production environments on bare-metal, IBM Power®, IBM Z®, IBM® LinuxONE, VMware vSphere, and OpenStack installations.

Before you can use NMState with OpenShift Container Platform, you must install the Kubernetes NMState Operator. After you install the Kubernetes NMState Operator, you can complete the following tasks:

- Observing and updating the node network state and configuration

-

Creating a manifest object that includes a customized

br-exbridge For more information on these tasks, see the Additional resources section

Before you can use NMState with OpenShift Container Platform, you must install the Kubernetes NMState Operator.

The Kubernetes NMState Operator updates the network configuration of a secondary NIC. The Operator cannot update the network configuration of the primary NIC, or update the br-ex bridge on most on-premise networks.

On a bare-metal platform, using the Kubernetes NMState Operator to update the br-ex bridge network configuration is only supported if you set the br-ex bridge as the interface in a machine config manifest file. To update the br-ex bridge as a postinstallation task, you must set the br-ex bridge as the interface in the NMState configuration of the NodeNetworkConfigurationPolicy custom resource (CR) for your cluster. For more information, see Creating a manifest object that includes a customized br-ex bridge in Postinstallation configuration.

OpenShift Container Platform uses nmstate to report on and configure the state of the node network. This makes it possible to modify the network policy configuration, such as by creating a Linux bridge on all nodes, by applying a single configuration manifest to the cluster.

Node networking is monitored and updated by the following objects:

NodeNetworkState- Reports the state of the network on that node.

NodeNetworkConfigurationPolicy-

Describes the requested network configuration on nodes. You update the node network configuration, including adding and removing interfaces, by applying a

NodeNetworkConfigurationPolicyCR to the cluster. NodeNetworkConfigurationEnactment- Reports the network policies enacted upon each node.

Do not make configuration changes to the br-ex bridge or its underlying interfaces as a postinstallation task.

5.1.1. Installing the Kubernetes NMState Operator

You can install the Kubernetes NMState Operator by using the web console or the CLI.

5.1.1.1. Installing the Kubernetes NMState Operator by using the web console

You can install the Kubernetes NMState Operator by using the web console. After you install the Kubernetes NMState Operator, the Operator has deployed the NMState State Controller as a daemon set across all of the cluster nodes.

Prerequisites

-

You are logged in as a user with

cluster-adminprivileges.

Procedure

- Select Operators → OperatorHub.

-

In the search field below All Items, enter

nmstateand click Enter to search for the Kubernetes NMState Operator. - Click on the Kubernetes NMState Operator search result.

- Click on Install to open the Install Operator window.

- Click Install to install the Operator.

- After the Operator finishes installing, click View Operator.

-

Under Provided APIs, click Create Instance to open the dialog box for creating an instance of

kubernetes-nmstate. In the Name field of the dialog box, ensure the name of the instance is

nmstate.NoteThe name restriction is a known issue. The instance is a singleton for the entire cluster.

- Accept the default settings and click Create to create the instance.

5.1.1.2. Installing the Kubernetes NMState Operator by using the CLI

You can install the Kubernetes NMState Operator by using the OpenShift CLI (oc). After it is installed, the Operator deploys the NMState State Controller as a daemon set across all of the cluster nodes to manage the node network state and configuration.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You are logged in as a user with

cluster-adminprivileges.

Procedure

Create the

nmstateOperator namespace:$ cat << EOF | oc apply -f - apiVersion: v1 kind: Namespace metadata: name: openshift-nmstate spec: finalizers: - kubernetes EOFCreate the

OperatorGroup:$ cat << EOF | oc apply -f - apiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: name: openshift-nmstate namespace: openshift-nmstate spec: targetNamespaces: - openshift-nmstate EOFSubscribe to the

nmstateOperator:$ cat << EOF| oc apply -f - apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: kubernetes-nmstate-operator namespace: openshift-nmstate spec: channel: stable installPlanApproval: Automatic name: kubernetes-nmstate-operator source: redhat-operators sourceNamespace: openshift-marketplace EOFConfirm the

ClusterServiceVersion(CSV) status for thenmstateOperator deployment equalsSucceeded:$ oc get clusterserviceversion -n openshift-nmstate \ -o custom-columns=Name:.metadata.name,Phase:.status.phaseCreate an instance of the

nmstateOperator:$ cat << EOF | oc apply -f - apiVersion: nmstate.io/v1 kind: NMState metadata: name: nmstate EOFIf your cluster has problems with the DNS health check probe because of DNS connectivity issues, you can add the following DNS host name configuration to the

NMStateCRD to build in health checks that can resolve these issues:apiVersion: nmstate.io/v1 kind: NMState metadata: name: nmstate spec: probeConfiguration: dns: host: redhat.com # ...Apply the DNS host name configuration to your cluster network by running the following command. Ensure that you replace

<filename>with the name of your CRD file.$ oc apply -f <filename>.yamlMonitor the

nmstateCRD until the resource reaches theAvailablecondition by running the following command. Ensure that you set a value for the--timeoutoption so that if theAvailablecondition is not met within this set maximum waiting time, the command times out and generates an error message.$ oc wait --for=condition=Available nmstate/nmstate --timeout=600s

Verification

Verify that all pods for the NMState Operator have the

Runningstatus by entering the following command:$ oc get pod -n openshift-nmstate

5.1.2. Uninstalling the Kubernetes NMState Operator

Remove the Kubernetes NMState Operator and related resources when they are no longer needed.

You can use the Operator Lifecycle Manager (OLM) to uninstall the Kubernetes NMState Operator, but by design OLM does not delete any associated custom resource definitions (CRDs), custom resources (CRs), or API Services.

Before you uninstall the Kubernetes NMState Operator from the Subcription resource used by OLM, identify what Kubernetes NMState Operator resources to delete. This identification ensures that you can delete resources without impacting your running cluster.

If you need to reinstall the Kubernetes NMState Operator, see "Installing the Kubernetes NMState Operator by using the CLI" or "Installing the Kubernetes NMState Operator by using the web console".

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have installed the

jqCLI tool. -

You are logged in as a user with

cluster-adminprivileges.

Procedure

Unsubscribe the Kubernetes NMState Operator from the

Subcriptionresource by running the following command:$ oc delete --namespace openshift-nmstate subscription kubernetes-nmstate-operatorFind the

ClusterServiceVersion(CSV) resource that associates with the Kubernetes NMState Operator:$ oc get --namespace openshift-nmstate clusterserviceversionExample output that lists a CSV resource

NAME DISPLAY VERSION REPLACES PHASE kubernetes-nmstate-operator.v4.18.0 Kubernetes NMState Operator 4.18.0 SucceededDelete the CSV resource. After you delete the file, OLM deletes certain resources, such as

RBAC, that it created for the Operator.$ oc delete --namespace openshift-nmstate clusterserviceversion kubernetes-nmstate-operator.v4.18.0Delete the

nmstateCR and any associatedDeploymentresources by running the following commands:$ oc -n openshift-nmstate delete nmstate nmstate$ oc delete --all deployments --namespace=openshift-nmstateAfter you deleted the

nmstateCR, remove thenmstate-console-pluginconsole plugin name from theconsole.operator.openshift.io/clusterCR.Store the position of the

nmstate-console-pluginentry that exists among the list of enable plugins by running the following command. The following command uses thejqCLI tool to store the index of the entry in an environment variable namedINDEX:INDEX=$(oc get console.operator.openshift.io cluster -o json | jq -r '.spec.plugins | to_entries[] | select(.value == "nmstate-console-plugin") | .key')Remove the

nmstate-console-pluginentry from theconsole.operator.openshift.io/clusterCR by running the following patch command:$ oc patch console.operator.openshift.io cluster --type=json -p "[{\"op\": \"remove\", \"path\": \"/spec/plugins/$INDEX\"}]"-

INDEXis an auxiliary variable. You can specify a different name for this variable.

-

Optional: To preserve CR instances so that you can restore them after you delete CRDs, enter the following command:

$ oc get -A nncp -o yaml > cluster-nncp.yamlImportantTo reuse preserved CRs, such as NNCPs, you must uninstall the Kubernetes NMState Operator, reinstall the Kubernetes NMState Operator, and then run the following command to restore the CRs:

$ oc apply -f cluster-nncp.yamlDelete all the CRDs, such as

nmstates.nmstate.io, by running the following commands:$ oc delete crd nmstates.nmstate.io$ oc delete crd nodenetworkconfigurationenactments.nmstate.io$ oc delete crd nodenetworkstates.nmstate.io$ oc delete crd nodenetworkconfigurationpolicies.nmstate.ioDelete the namespace:

$ oc delete namespace kubernetes-nmstate

5.2. AWS Load Balancer Operator

5.2.1. AWS Load Balancer Operator release notes

The AWS Load Balancer (ALB) Operator deploys and manages an instance of the AWSLoadBalancerController resource.

The AWS Load Balancer (ALB) Operator is only supported on the x86_64 architecture.

These release notes track the development of the AWS Load Balancer Operator in OpenShift Container Platform.

For an overview of the AWS Load Balancer Operator, see AWS Load Balancer Operator in OpenShift Container Platform.

AWS Load Balancer Operator currently does not support AWS GovCloud.

5.2.1.1. AWS Load Balancer Operator 1.2.0

The following advisory is available for the AWS Load Balancer Operator version 1.2.0:

5.2.1.1.1. Notable changes

- This release supports the AWS Load Balancer Controller version 2.8.2.

-

With this release, the platform tags defined in the

Infrastructureresource will now be added to all AWS objects created by the controller.

5.2.1.2. AWS Load Balancer Operator 1.1.1

The following advisory is available for the AWS Load Balancer Operator version 1.1.1:

5.2.1.3. AWS Load Balancer Operator 1.1.0

The AWS Load Balancer Operator version 1.1.0 supports the AWS Load Balancer Controller version 2.4.4.

The following advisory is available for the AWS Load Balancer Operator version 1.1.0:

5.2.1.3.1. Notable changes

- This release uses the Kubernetes API version 0.27.2.

5.2.1.3.2. New features

- The AWS Load Balancer Operator now supports a standardized Security Token Service (STS) flow by using the Cloud Credential Operator.

5.2.1.3.3. Bug fixes

A FIPS-compliant cluster must use TLS version 1.2. Previously, webhooks for the AWS Load Balancer Controller only accepted TLS 1.3 as the minimum version, resulting in an error such as the following on a FIPS-compliant cluster:

remote error: tls: protocol version not supportedNow, the AWS Load Balancer Controller accepts TLS 1.2 as the minimum TLS version, resolving this issue. (OCPBUGS-14846)

5.2.1.4. AWS Load Balancer Operator 1.0.1

The following advisory is available for the AWS Load Balancer Operator version 1.0.1:

5.2.1.5. AWS Load Balancer Operator 1.0.0

The AWS Load Balancer Operator is now generally available with this release. The AWS Load Balancer Operator version 1.0.0 supports the AWS Load Balancer Controller version 2.4.4.

The following advisory is available for the AWS Load Balancer Operator version 1.0.0:

The AWS Load Balancer (ALB) Operator version 1.x.x cannot upgrade automatically from the Technology Preview version 0.x.x. To upgrade from an earlier version, you must uninstall the ALB operands and delete the aws-load-balancer-operator namespace.

5.2.1.5.1. Notable changes

-

This release uses the new

v1API version.

5.2.1.5.2. Bug fixes

- Previously, the controller provisioned by the AWS Load Balancer Operator did not properly use the configuration for the cluster-wide proxy. These settings are now applied appropriately to the controller. (OCPBUGS-4052, OCPBUGS-5295)

5.2.1.6. Earlier versions

The two earliest versions of the AWS Load Balancer Operator are available as a Technology Preview. These versions should not be used in a production cluster. For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

The following advisory is available for the AWS Load Balancer Operator version 0.2.0:

The following advisory is available for the AWS Load Balancer Operator version 0.0.1:

5.2.2. AWS Load Balancer Operator in OpenShift Container Platform

The AWS Load Balancer Operator deploys and manages the AWS Load Balancer Controller. You can install the AWS Load Balancer Operator from OperatorHub by using OpenShift Container Platform web console or CLI.

5.2.2.1. AWS Load Balancer Operator considerations

Review the following limitations before installing and using the AWS Load Balancer Operator:

- The IP traffic mode only works on AWS Elastic Kubernetes Service (EKS). The AWS Load Balancer Operator disables the IP traffic mode for the AWS Load Balancer Controller. As a result of disabling the IP traffic mode, the AWS Load Balancer Controller cannot use the pod readiness gate.

-

The AWS Load Balancer Operator adds command-line flags such as

--disable-ingress-class-annotationand--disable-ingress-group-name-annotationto the AWS Load Balancer Controller. Therefore, the AWS Load Balancer Operator does not allow using thekubernetes.io/ingress.classandalb.ingress.kubernetes.io/group.nameannotations in theIngressresource. -

You have configured the AWS Load Balancer Operator so that the SVC type is

NodePort(notLoadBalancerorClusterIP).

5.2.2.2. AWS Load Balancer Operator

The AWS Load Balancer Operator can tag the public subnets if the kubernetes.io/role/elb tag is missing. Also, the AWS Load Balancer Operator detects the following information from the underlying AWS cloud:

- The ID of the virtual private cloud (VPC) on which the cluster hosting the Operator is deployed in.

- Public and private subnets of the discovered VPC.

The AWS Load Balancer Operator supports the Kubernetes service resource of type LoadBalancer by using Network Load Balancer (NLB) with the instance target type only.

Procedure

To deploy the AWS Load Balancer Operator on-demand from OperatorHub, create a

Subscriptionobject by running the following command:$ oc -n aws-load-balancer-operator get sub aws-load-balancer-operator --template='{{.status.installplan.name}}{{"\n"}}'Check if the status of an install plan is

Completeby running the following command:$ oc -n aws-load-balancer-operator get ip <install_plan_name> --template='{{.status.phase}}{{"\n"}}'View the status of the

aws-load-balancer-operator-controller-managerdeployment by running the following command:$ oc get -n aws-load-balancer-operator deployment/aws-load-balancer-operator-controller-managerExample output

NAME READY UP-TO-DATE AVAILABLE AGE aws-load-balancer-operator-controller-manager 1/1 1 1 23h

5.2.2.3. Using the AWS Load Balancer Operator in an AWS VPC cluster extended into an Outpost

You can configure the AWS Load Balancer Operator to provision an AWS Application Load Balancer in an AWS VPC cluster extended into an Outpost. AWS Outposts does not support AWS Network Load Balancers. As a result, the AWS Load Balancer Operator cannot provision Network Load Balancers in an Outpost.

You can create an AWS Application Load Balancer either in the cloud subnet or in the Outpost subnet. An Application Load Balancer in the cloud can attach to cloud-based compute nodes and an Application Load Balancer in the Outpost can attach to edge compute nodes. You must annotate Ingress resources with the Outpost subnet or the VPC subnet, but not both.

Prerequisites

- You have extended an AWS VPC cluster into an Outpost.

-

You have installed the OpenShift CLI (

oc). - You have installed the AWS Load Balancer Operator and created the AWS Load Balancer Controller.

Procedure

Configure the

Ingressresource to use a specified subnet:Example

Ingressresource configurationapiVersion: networking.k8s.io/v1 kind: Ingress metadata: name: <application_name> annotations: alb.ingress.kubernetes.io/subnets: <subnet_id>1 spec: ingressClassName: alb rules: - http: paths: - path: / pathType: Exact backend: service: name: <application_name> port: number: 80- 1

- Specifies the subnet to use.

- To use the Application Load Balancer in an Outpost, specify the Outpost subnet ID.

- To use the Application Load Balancer in the cloud, you must specify at least two subnets in different availability zones.

5.2.3. Preparing an AWS STS cluster for the AWS Load Balancer Operator

You can install the Amazon Web Services (AWS) Load Balancer Operator on a cluster that uses the Security Token Service (STS). Follow these steps to prepare your cluster before installing the Operator.

The AWS Load Balancer Operator relies on the CredentialsRequest object to bootstrap the Operator and the AWS Load Balancer Controller. The AWS Load Balancer Operator waits until the required secrets are created and available.

5.2.3.1. Prerequisites

-

You installed the OpenShift CLI (

oc). You know the infrastructure ID of your cluster. To show this ID, run the following command in your CLI:

$ oc get infrastructure cluster -o=jsonpath="{.status.infrastructureName}"You know the OpenID Connect (OIDC) DNS information for your cluster. To show this information, enter the following command in your CLI:

$ oc get authentication.config cluster -o=jsonpath="{.spec.serviceAccountIssuer}"1 - 1

- An OIDC DNS example is

https://rh-oidc.s3.us-east-1.amazonaws.com/28292va7ad7mr9r4he1fb09b14t59t4f.

-

You logged into the AWS Web Console, navigated to IAM → Access management → Identity providers, and located the OIDC Amazon Resource Name (ARN) information. An OIDC ARN example is

arn:aws:iam::777777777777:oidc-provider/<oidc_dns_url>.

5.2.3.2. Creating an IAM role for the AWS Load Balancer Operator

An additional Amazon Web Services (AWS) Identity and Access Management (IAM) role is required to successfully install the AWS Load Balancer Operator on a cluster that uses STS. The IAM role is required to interact with subnets and Virtual Private Clouds (VPCs). The AWS Load Balancer Operator generates the CredentialsRequest object with the IAM role to bootstrap itself.

You can create the IAM role by using the following options:

-

Using the Cloud Credential Operator utility (

ccoctl) and a predefinedCredentialsRequestobject. - Using the AWS CLI and predefined AWS manifests.

Use the AWS CLI if your environment does not support the ccoctl command.

5.2.3.2.1. Creating an AWS IAM role by using the Cloud Credential Operator utility

You can use the Cloud Credential Operator utility (ccoctl) to create an AWS IAM role for the AWS Load Balancer Operator. An AWS IAM role interacts with subnets and Virtual Private Clouds (VPCs).

Prerequisites

-

You must extract and prepare the

ccoctlbinary.

Procedure

Download the

CredentialsRequestcustom resource (CR) and store it in a directory by running the following command:$ curl --create-dirs -o <credentials_requests_dir>/operator.yaml https://raw.githubusercontent.com/openshift/aws-load-balancer-operator/main/hack/operator-credentials-request.yamlUse the

ccoctlutility to create an AWS IAM role by running the following command:$ ccoctl aws create-iam-roles \ --name <name> \ --region=<aws_region> \ --credentials-requests-dir=<credentials_requests_dir> \ --identity-provider-arn <oidc_arn>Example output

2023/09/12 11:38:57 Role arn:aws:iam::777777777777:role/<name>-aws-load-balancer-operator-aws-load-balancer-operator created1 2023/09/12 11:38:57 Saved credentials configuration to: /home/user/<credentials_requests_dir>/manifests/aws-load-balancer-operator-aws-load-balancer-operator-credentials.yaml 2023/09/12 11:38:58 Updated Role policy for Role <name>-aws-load-balancer-operator-aws-load-balancer-operator created- 1

- Note the Amazon Resource Name (ARN) of an AWS IAM role that was created for the AWS Load Balancer Operator, such as

arn:aws:iam::777777777777:role/<name>-aws-load-balancer-operator-aws-load-balancer-operator.

NoteThe length of an AWS IAM role name must be less than or equal to 12 characters.

5.2.3.2.2. Creating an AWS IAM role by using the AWS CLI

You can use the AWS Command Line Interface to create an IAM role for the AWS Load Balancer Operator. The IAM role is used to interact with subnets and Virtual Private Clouds (VPCs).

Prerequisites

-

You must have access to the AWS Command Line Interface (

aws).

Procedure

Generate a trust policy file by using your identity provider by running the following command:

$ cat <<EOF > albo-operator-trust-policy.json { "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Principal": { "Federated": "<oidc_arn>"1 }, "Action": "sts:AssumeRoleWithWebIdentity", "Condition": { "StringEquals": { "<cluster_oidc_endpoint>:sub": "system:serviceaccount:aws-load-balancer-operator:aws-load-balancer-operator-controller-manager"2 } } } ] } EOF- 1

- Specifies the Amazon Resource Name (ARN) of the OIDC identity provider, such as

arn:aws:iam::777777777777:oidc-provider/rh-oidc.s3.us-east-1.amazonaws.com/28292va7ad7mr9r4he1fb09b14t59t4f. - 2

- Specifies the service account for the AWS Load Balancer Controller. An example of

<cluster_oidc_endpoint>isrh-oidc.s3.us-east-1.amazonaws.com/28292va7ad7mr9r4he1fb09b14t59t4f.

Create the IAM role with the generated trust policy by running the following command:

$ aws iam create-role --role-name albo-operator --assume-role-policy-document file://albo-operator-trust-policy.jsonExample output

ROLE arn:aws:iam::<aws_account_number>:role/albo-operator 2023-08-02T12:13:22Z1 ASSUMEROLEPOLICYDOCUMENT 2012-10-17 STATEMENT sts:AssumeRoleWithWebIdentity Allow STRINGEQUALS system:serviceaccount:aws-load-balancer-operator:aws-load-balancer-controller-manager PRINCIPAL arn:aws:iam:<aws_account_number>:oidc-provider/<cluster_oidc_endpoint>- 1

- Note the ARN of the created AWS IAM role that was created for the AWS Load Balancer Operator, such as

arn:aws:iam::777777777777:role/albo-operator.

Download the permission policy for the AWS Load Balancer Operator by running the following command:

$ curl -o albo-operator-permission-policy.json https://raw.githubusercontent.com/openshift/aws-load-balancer-operator/main/hack/operator-permission-policy.jsonAttach the permission policy for the AWS Load Balancer Controller to the IAM role by running the following command:

$ aws iam put-role-policy --role-name albo-operator --policy-name perms-policy-albo-operator --policy-document file://albo-operator-permission-policy.json

5.2.3.3. Configuring the ARN role for the AWS Load Balancer Operator

You can configure the Amazon Resource Name (ARN) role for the AWS Load Balancer Operator as an environment variable. You can configure the ARN role by using the CLI.

Prerequisites

-

You have installed the OpenShift CLI (

oc).

Procedure

Create the

aws-load-balancer-operatorproject by running the following command:$ oc new-project aws-load-balancer-operatorCreate the

OperatorGroupobject by running the following command:$ cat <<EOF | oc apply -f - apiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: name: aws-load-balancer-operator namespace: aws-load-balancer-operator spec: targetNamespaces: [] EOFCreate the

Subscriptionobject by running the following command:$ cat <<EOF | oc apply -f - apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: aws-load-balancer-operator namespace: aws-load-balancer-operator spec: channel: stable-v1 name: aws-load-balancer-operator source: redhat-operators sourceNamespace: openshift-marketplace config: env: - name: ROLEARN value: "<albo_role_arn>"1 EOF- 1

- Specifies the ARN role to be used in the

CredentialsRequestto provision the AWS credentials for the AWS Load Balancer Operator. An example for<albo_role_arn>isarn:aws:iam::<aws_account_number>:role/albo-operator.

NoteThe AWS Load Balancer Operator waits until the secret is created before moving to the

Availablestatus.

5.2.3.4. Creating an IAM role for the AWS Load Balancer Controller

The CredentialsRequest object for the AWS Load Balancer Controller must be set with a manually provisioned IAM role.

You can create the IAM role by using the following options:

-

Using the Cloud Credential Operator utility (

ccoctl) and a predefinedCredentialsRequestobject. - Using the AWS CLI and predefined AWS manifests.

Use the AWS CLI if your environment does not support the ccoctl command.

5.2.3.4.1. Creating an AWS IAM role for the controller by using the Cloud Credential Operator utility

You can use the Cloud Credential Operator utility (ccoctl) to create an AWS IAM role for the AWS Load Balancer Controller. An AWS IAM role is used to interact with subnets and Virtual Private Clouds (VPCs).

Prerequisites

-

You must extract and prepare the

ccoctlbinary.

Procedure

Download the

CredentialsRequestcustom resource (CR) and store it in a directory by running the following command:$ curl --create-dirs -o <credentials_requests_dir>/controller.yaml https://raw.githubusercontent.com/openshift/aws-load-balancer-operator/main/hack/controller/controller-credentials-request.yamlUse the

ccoctlutility to create an AWS IAM role by running the following command:$ ccoctl aws create-iam-roles \ --name <name> \ --region=<aws_region> \ --credentials-requests-dir=<credentials_requests_dir> \ --identity-provider-arn <oidc_arn>Example output

2023/09/12 11:38:57 Role arn:aws:iam::777777777777:role/<name>-aws-load-balancer-operator-aws-load-balancer-controller created1 2023/09/12 11:38:57 Saved credentials configuration to: /home/user/<credentials_requests_dir>/manifests/aws-load-balancer-operator-aws-load-balancer-controller-credentials.yaml 2023/09/12 11:38:58 Updated Role policy for Role <name>-aws-load-balancer-operator-aws-load-balancer-controller created- 1

- Note the Amazon Resource Name (ARN) of an AWS IAM role that was created for the AWS Load Balancer Controller, such as

arn:aws:iam::777777777777:role/<name>-aws-load-balancer-operator-aws-load-balancer-controller.

NoteThe length of an AWS IAM role name must be less than or equal to 12 characters.

5.2.3.4.2. Creating an AWS IAM role for the controller by using the AWS CLI

You can use the AWS command-line interface to create an AWS IAM role for the AWS Load Balancer Controller. An AWS IAM role is used to interact with subnets and Virtual Private Clouds (VPCs).

Prerequisites

-

You must have access to the AWS command-line interface (

aws).

Procedure

Generate a trust policy file using your identity provider by running the following command:

$ cat <<EOF > albo-controller-trust-policy.json { "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Principal": { "Federated": "<oidc_arn>"1 }, "Action": "sts:AssumeRoleWithWebIdentity", "Condition": { "StringEquals": { "<cluster_oidc_endpoint>:sub": "system:serviceaccount:aws-load-balancer-operator:aws-load-balancer-operator-controller-manager"2 } } } ] } EOF- 1

- Specifies the Amazon Resource Name (ARN) of the OIDC identity provider, such as

arn:aws:iam::777777777777:oidc-provider/rh-oidc.s3.us-east-1.amazonaws.com/28292va7ad7mr9r4he1fb09b14t59t4f. - 2

- Specifies the service account for the AWS Load Balancer Controller. An example of

<cluster_oidc_endpoint>isrh-oidc.s3.us-east-1.amazonaws.com/28292va7ad7mr9r4he1fb09b14t59t4f.

Create an AWS IAM role with the generated trust policy by running the following command:

$ aws iam create-role --role-name albo-controller --assume-role-policy-document file://albo-controller-trust-policy.jsonExample output

ROLE arn:aws:iam::<aws_account_number>:role/albo-controller 2023-08-02T12:13:22Z1 ASSUMEROLEPOLICYDOCUMENT 2012-10-17 STATEMENT sts:AssumeRoleWithWebIdentity Allow STRINGEQUALS system:serviceaccount:aws-load-balancer-operator:aws-load-balancer-operator-controller-manager PRINCIPAL arn:aws:iam:<aws_account_number>:oidc-provider/<cluster_oidc_endpoint>- 1

- Note the ARN of an AWS IAM role for the AWS Load Balancer Controller, such as

arn:aws:iam::777777777777:role/albo-controller.

Download the permission policy for the AWS Load Balancer Controller by running the following command:

$ curl -o albo-controller-permission-policy.json https://raw.githubusercontent.com/openshift/aws-load-balancer-operator/main/assets/iam-policy.jsonAttach the permission policy for the AWS Load Balancer Controller to an AWS IAM role by running the following command:

$ aws iam put-role-policy --role-name albo-controller --policy-name perms-policy-albo-controller --policy-document file://albo-controller-permission-policy.jsonCreate a YAML file that defines the

AWSLoadBalancerControllerobject:Example

sample-aws-lb-manual-creds.yamlfileapiVersion: networking.olm.openshift.io/v1 kind: AWSLoadBalancerController1 metadata: name: cluster2 spec: credentialsRequestConfig: stsIAMRoleARN: <albc_role_arn>3 - 1

- Defines the

AWSLoadBalancerControllerobject. - 2

- Defines the AWS Load Balancer Controller name. All related resources use this instance name as a suffix.

- 3

- Specifies the ARN role for the AWS Load Balancer Controller. The

CredentialsRequestobject uses this ARN role to provision the AWS credentials. An example of<albc_role_arn>isarn:aws:iam::777777777777:role/albo-controller.

5.2.4. Installing the AWS Load Balancer Operator

The AWS Load Balancer Operator deploys and manages the AWS Load Balancer Controller. You can install the AWS Load Balancer Operator from the OperatorHub by using OpenShift Container Platform web console or CLI.

5.2.4.1. Installing the AWS Load Balancer Operator by using the web console

You can install the AWS Load Balancer Operator by using the web console.

Prerequisites

-

You have logged in to the OpenShift Container Platform web console as a user with

cluster-adminpermissions. - Your cluster is configured with AWS as the platform type and cloud provider.

- If you are using a security token service (STS) or user-provisioned infrastructure, follow the related preparation steps. For example, if you are using AWS Security Token Service, see "Preparing for the AWS Load Balancer Operator on a cluster using the AWS Security Token Service (STS)".

Procedure

- Navigate to Operators → OperatorHub in the OpenShift Container Platform web console.

- Select the AWS Load Balancer Operator. You can use the Filter by keyword text box or use the filter list to search for the AWS Load Balancer Operator from the list of Operators.

-

Select the

aws-load-balancer-operatornamespace. On the Install Operator page, select the following options:

- Update the channel as stable-v1.

- Installation mode as All namespaces on the cluster (default).

-

Installed Namespace as

aws-load-balancer-operator. If theaws-load-balancer-operatornamespace does not exist, it gets created during the Operator installation. - Select Update approval as Automatic or Manual. By default, the Update approval is set to Automatic. If you select automatic updates, the Operator Lifecycle Manager (OLM) automatically upgrades the running instance of your Operator without any intervention. If you select manual updates, the OLM creates an update request. As a cluster administrator, you must then manually approve that update request to update the Operator updated to the new version.

- Click Install.

Verification

- Verify that the AWS Load Balancer Operator shows the Status as Succeeded on the Installed Operators dashboard.

5.2.4.2. Installing the AWS Load Balancer Operator by using the CLI

You can install the AWS Load Balancer Operator by using the CLI.

Prerequisites

-

You are logged in to the OpenShift Container Platform web console as a user with

cluster-adminpermissions. - Your cluster is configured with AWS as the platform type and cloud provider.

-

You are logged into the OpenShift CLI (

oc).

Procedure

Create a

Namespaceobject:Create a YAML file that defines the

Namespaceobject:Example

namespace.yamlfileapiVersion: v1 kind: Namespace metadata: name: aws-load-balancer-operatorCreate the

Namespaceobject by running the following command:$ oc apply -f namespace.yaml

Create an

OperatorGroupobject:Create a YAML file that defines the

OperatorGroupobject:Example

operatorgroup.yamlfileapiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: name: aws-lb-operatorgroup namespace: aws-load-balancer-operator spec: upgradeStrategy: DefaultCreate the

OperatorGroupobject by running the following command:$ oc apply -f operatorgroup.yaml

Create a

Subscriptionobject:Create a YAML file that defines the

Subscriptionobject:Example

subscription.yamlfileapiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: aws-load-balancer-operator namespace: aws-load-balancer-operator spec: channel: stable-v1 installPlanApproval: Automatic name: aws-load-balancer-operator source: redhat-operators sourceNamespace: openshift-marketplaceCreate the

Subscriptionobject by running the following command:$ oc apply -f subscription.yaml

Verification

Get the name of the install plan from the subscription:

$ oc -n aws-load-balancer-operator \ get subscription aws-load-balancer-operator \ --template='{{.status.installplan.name}}{{"\n"}}'Check the status of the install plan:

$ oc -n aws-load-balancer-operator \ get ip <install_plan_name> \ --template='{{.status.phase}}{{"\n"}}'The output must be

Complete.

5.2.4.3. Creating the AWS Load Balancer Controller

You can install only a single instance of the AWSLoadBalancerController object in a cluster. You can create the AWS Load Balancer Controller by using CLI. The AWS Load Balancer Operator reconciles only the cluster named resource.

Prerequisites

-

You have created the

echoservernamespace. -

You have access to the OpenShift CLI (

oc).

Procedure

Create a YAML file that defines the

AWSLoadBalancerControllerobject:Example

sample-aws-lb.yamlfileapiVersion: networking.olm.openshift.io/v1 kind: AWSLoadBalancerController1 metadata: name: cluster2 spec: subnetTagging: Auto3 additionalResourceTags:4 - key: example.org/security-scope value: staging ingressClass: alb5 config: replicas: 26 enabledAddons:7 - AWSWAFv28 - 1

- Defines the

AWSLoadBalancerControllerobject. - 2

- Defines the AWS Load Balancer Controller name. This instance name gets added as a suffix to all related resources.

- 3

- Configures the subnet tagging method for the AWS Load Balancer Controller. The following values are valid:

-

Auto: The AWS Load Balancer Operator determines the subnets that belong to the cluster and tags them appropriately. The Operator cannot determine the role correctly if the internal subnet tags are not present on internal subnet. -

Manual: You manually tag the subnets that belong to the cluster with the appropriate role tags. Use this option if you installed your cluster on user-provided infrastructure.

-

- 4

- Defines the tags used by the AWS Load Balancer Controller when it provisions AWS resources.

- 5

- Defines the ingress class name. The default value is

alb. - 6

- Specifies the number of replicas of the AWS Load Balancer Controller.

- 7

- Specifies annotations as an add-on for the AWS Load Balancer Controller.

- 8

- Enables the

alb.ingress.kubernetes.io/wafv2-acl-arnannotation.

Create the

AWSLoadBalancerControllerobject by running the following command:$ oc create -f sample-aws-lb.yamlCreate a YAML file that defines the

Deploymentresource:Example

sample-aws-lb.yamlfileapiVersion: apps/v1 kind: Deployment1 metadata: name: <echoserver>2 namespace: echoserver spec: selector: matchLabels: app: echoserver replicas: 33 template: metadata: labels: app: echoserver spec: containers: - image: openshift/origin-node command: - "/bin/socat" args: - TCP4-LISTEN:8080,reuseaddr,fork - EXEC:'/bin/bash -c \"printf \\\"HTTP/1.0 200 OK\r\n\r\n\\\"; sed -e \\\"/^\r/q\\\"\"' imagePullPolicy: Always name: echoserver ports: - containerPort: 8080Create a YAML file that defines the

Serviceresource:Example

service-albo.yamlfileapiVersion: v1 kind: Service1 metadata: name: <echoserver>2 namespace: echoserver spec: ports: - port: 80 targetPort: 8080 protocol: TCP type: NodePort selector: app: echoserverCreate a YAML file that defines the

Ingressresource:Example

ingress-albo.yamlfileapiVersion: networking.k8s.io/v1 kind: Ingress metadata: name: <name>1 namespace: echoserver annotations: alb.ingress.kubernetes.io/scheme: internet-facing alb.ingress.kubernetes.io/target-type: instance spec: ingressClassName: alb rules: - http: paths: - path: / pathType: Exact backend: service: name: <echoserver>2 port: number: 80

Verification

Save the status of the

Ingressresource in theHOSTvariable by running the following command:$ HOST=$(oc get ingress -n echoserver echoserver --template='{{(index .status.loadBalancer.ingress 0).hostname}}')Verify the status of the

Ingressresource by running the following command:$ curl $HOST

5.2.5. Configuring the AWS Load Balancer Operator

5.2.5.1. Trusting the certificate authority of the cluster-wide proxy

You can configure the cluster-wide proxy in the AWS Load Balancer Operator. After configuring the cluster-wide proxy, Operator Lifecycle Manager (OLM) automatically updates all the deployments of the Operators with the environment variables such as HTTP_PROXY, HTTPS_PROXY, and NO_PROXY. These variables are populated to the managed controller by the AWS Load Balancer Operator.

Create the config map to contain the certificate authority (CA) bundle in the

aws-load-balancer-operatornamespace by running the following command:$ oc -n aws-load-balancer-operator create configmap trusted-caTo inject the trusted CA bundle into the config map, add the

config.openshift.io/inject-trusted-cabundle=truelabel to the config map by running the following command:$ oc -n aws-load-balancer-operator label cm trusted-ca config.openshift.io/inject-trusted-cabundle=trueUpdate the AWS Load Balancer Operator subscription to access the config map in the AWS Load Balancer Operator deployment by running the following command:

$ oc -n aws-load-balancer-operator patch subscription aws-load-balancer-operator --type='merge' -p '{"spec":{"config":{"env":[{"name":"TRUSTED_CA_CONFIGMAP_NAME","value":"trusted-ca"}],"volumes":[{"name":"trusted-ca","configMap":{"name":"trusted-ca"}}],"volumeMounts":[{"name":"trusted-ca","mountPath":"/etc/pki/tls/certs/albo-tls-ca-bundle.crt","subPath":"ca-bundle.crt"}]}}}'After the AWS Load Balancer Operator is deployed, verify that the CA bundle is added to the

aws-load-balancer-operator-controller-managerdeployment by running the following command:$ oc -n aws-load-balancer-operator exec deploy/aws-load-balancer-operator-controller-manager -c manager -- bash -c "ls -l /etc/pki/tls/certs/albo-tls-ca-bundle.crt; printenv TRUSTED_CA_CONFIGMAP_NAME"Example output

-rw-r--r--. 1 root 1000690000 5875 Jan 11 12:25 /etc/pki/tls/certs/albo-tls-ca-bundle.crt trusted-caOptional: Restart deployment of the AWS Load Balancer Operator every time the config map changes by running the following command:

$ oc -n aws-load-balancer-operator rollout restart deployment/aws-load-balancer-operator-controller-manager

5.2.5.2. Adding TLS termination on the AWS Load Balancer

You can route the traffic for the domain to pods of a service and add TLS termination on the AWS Load Balancer.

Prerequisites

-

You have an access to the OpenShift CLI (

oc).

Procedure

Create a YAML file that defines the

AWSLoadBalancerControllerresource:Example

add-tls-termination-albc.yamlfileapiVersion: networking.olm.openshift.io/v1 kind: AWSLoadBalancerController metadata: name: cluster spec: subnetTagging: Auto ingressClass: tls-termination1 - 1

- Defines the ingress class name. If the ingress class is not present in your cluster the AWS Load Balancer Controller creates one. The AWS Load Balancer Controller reconciles the additional ingress class values if

spec.controlleris set toingress.k8s.aws/alb.

Create a YAML file that defines the

Ingressresource:Example

add-tls-termination-ingress.yamlfileapiVersion: networking.k8s.io/v1 kind: Ingress metadata: name: <example>1 annotations: alb.ingress.kubernetes.io/scheme: internet-facing2 alb.ingress.kubernetes.io/certificate-arn: arn:aws:acm:us-west-2:xxxxx3 spec: ingressClassName: tls-termination4 rules: - host: example.com5 http: paths: - path: / pathType: Exact backend: service: name: <example_service>6 port: number: 80- 1

- Specifies the ingress name.

- 2

- The controller provisions the load balancer for ingress in a public subnet to access the load balancer over the internet.

- 3

- The Amazon Resource Name (ARN) of the certificate that you attach to the load balancer.

- 4

- Defines the ingress class name.

- 5

- Defines the domain for traffic routing.

- 6

- Defines the service for traffic routing.

5.2.5.3. Creating multiple ingress resources through a single AWS Load Balancer

You can route the traffic to different services with multiple ingress resources that are part of a single domain through a single AWS Load Balancer. Each ingress resource provides different endpoints of the domain.

Prerequisites

-

You have an access to the OpenShift CLI (

oc).

Procedure

Create an

IngressClassParamsresource YAML file, for example,sample-single-lb-params.yaml, as follows:apiVersion: elbv2.k8s.aws/v1beta11 kind: IngressClassParams metadata: name: single-lb-params2 spec: group: name: single-lb3 Create the

IngressClassParamsresource by running the following command:$ oc create -f sample-single-lb-params.yamlCreate the

IngressClassresource YAML file, for example,sample-single-lb-class.yaml, as follows:apiVersion: networking.k8s.io/v11 kind: IngressClass metadata: name: single-lb2 spec: controller: ingress.k8s.aws/alb3 parameters: apiGroup: elbv2.k8s.aws4 kind: IngressClassParams5 name: single-lb-params6 - 1

- Defines the API group and version of the

IngressClassresource. - 2

- Specifies the ingress class name.

- 3

- Defines the controller name. The

ingress.k8s.aws/albvalue denotes that all ingress resources of this class should be managed by the AWS Load Balancer Controller. - 4

- Defines the API group of the

IngressClassParamsresource. - 5

- Defines the resource type of the

IngressClassParamsresource. - 6

- Defines the

IngressClassParamsresource name.

Create the

IngressClassresource by running the following command:$ oc create -f sample-single-lb-class.yamlCreate the

AWSLoadBalancerControllerresource YAML file, for example,sample-single-lb.yaml, as follows:apiVersion: networking.olm.openshift.io/v1 kind: AWSLoadBalancerController metadata: name: cluster spec: subnetTagging: Auto ingressClass: single-lb1 - 1

- Defines the name of the

IngressClassresource.

Create the

AWSLoadBalancerControllerresource by running the following command:$ oc create -f sample-single-lb.yamlCreate the

Ingressresource YAML file, for example,sample-multiple-ingress.yaml, as follows:apiVersion: networking.k8s.io/v1 kind: Ingress metadata: name: example-11 annotations: alb.ingress.kubernetes.io/scheme: internet-facing2 alb.ingress.kubernetes.io/group.order: "1"3 alb.ingress.kubernetes.io/target-type: instance4 spec: ingressClassName: single-lb5 rules: - host: example.com6 http: paths: - path: /blog7 pathType: Prefix backend: service: name: example-18 port: number: 809 --- apiVersion: networking.k8s.io/v1 kind: Ingress metadata: name: example-2 annotations: alb.ingress.kubernetes.io/scheme: internet-facing alb.ingress.kubernetes.io/group.order: "2" alb.ingress.kubernetes.io/target-type: instance spec: ingressClassName: single-lb rules: - host: example.com http: paths: - path: /store pathType: Prefix backend: service: name: example-2 port: number: 80 --- apiVersion: networking.k8s.io/v1 kind: Ingress metadata: name: example-3 annotations: alb.ingress.kubernetes.io/scheme: internet-facing alb.ingress.kubernetes.io/group.order: "3" alb.ingress.kubernetes.io/target-type: instance spec: ingressClassName: single-lb rules: - host: example.com http: paths: - path: / pathType: Prefix backend: service: name: example-3 port: number: 80- 1

- Specifies the ingress name.

- 2

- Indicates the load balancer to provision in the public subnet to access the internet.

- 3

- Specifies the order in which the rules from the multiple ingress resources are matched when the request is received at the load balancer.

- 4

- Indicates that the load balancer will target OpenShift Container Platform nodes to reach the service.

- 5

- Specifies the ingress class that belongs to this ingress.

- 6

- Defines a domain name used for request routing.

- 7

- Defines the path that must route to the service.

- 8

- Defines the service name that serves the endpoint configured in the

Ingressresource. - 9

- Defines the port on the service that serves the endpoint.

Create the

Ingressresource by running the following command:$ oc create -f sample-multiple-ingress.yaml

5.2.5.4. AWS Load Balancer Operator logs

You can view the AWS Load Balancer Operator logs by using the oc logs command.

Procedure

View the logs of the AWS Load Balancer Operator by running the following command:

$ oc logs -n aws-load-balancer-operator deployment/aws-load-balancer-operator-controller-manager -c manager

5.3. External DNS Operator

5.3.1. External DNS Operator release notes

The External DNS Operator deploys and manages ExternalDNS to provide name resolution for services and routes. This enables your external DNS provider to resolve hostnames directly to OpenShift Container Platform resources.

The External DNS Operator is only supported on the x86_64 architecture.

These release notes track the development of the External DNS Operator in OpenShift Container Platform.

5.3.1.1. External DNS Operator 1.2

The External DNS Operator 1.2 release notes summarize all new features and enhancements, notable technical changes, major corrections from previous versions, and any known bugs upon general availability.

- External DNS Operator 1.2.0

The following advisory is available for the External DNS Operator version 1.2.0:

RHEA-2022:5867 ExternalDNS Operator 1.2 Operator or operand containers

New features:

The External DNS Operator now supports AWS shared VPC. For more information, "Creating DNS records in a different AWS Account using a shared VPC".

Bug fixes

-

The update strategy for the operand changed from

RollingtoRecreate. (OCPBUGS-3630)

5.3.1.2. External DNS Operator 1.1

The External DNS Operator 1.1 release notes summarize all new features and enhancements, notable technical changes, major corrections from previous versions, and any known bugs upon general availability.

- External DNS Operator 1.1.1

The following advisory is available for the External DNS Operator version 1.1.1:

- External DNS Operator 1.1.0

This release included a rebase of the operand from the upstream project version 0.13.1. The following advisory is available for the External DNS Operator version 1.1.0:

RHEA-2022:9086-01 ExternalDNS Operator 1.1 Operator or operand containers

Bug fixes:

-

Previously, the ExternalDNS Operator enforced an empty

defaultModevalue for volumes, which caused constant updates due to a conflict with the OpenShift API. Now, thedefaultModevalue is not enforced and operand deployment does not update constantly. (OCPBUGS-2793)

5.3.1.3. External DNS Operator 1.0

The External DNS Operator 1.0 release notes summarize all new features and enhancements, notable technical changes, major corrections from previous versions, and any known bugs upon general availability.

- External DNS Operator 1.0.1

The following advisory is available for the External DNS Operator version 1.0.1:

- External DNS Operator 1.0.0

The following advisory is available for the External DNS Operator version 1.0.0:

RHEA-2022:5867 ExternalDNS Operator 1.0 Operator or operand containers

Bug fixes:

- Previously, the External DNS Operator issued a warning about the violation of the restricted SCC policy during ExternalDNS operand pod deployments. This issue has been resolved. (BZ#2086408)

5.3.2. Understanding the External DNS Operator

To provide name resolution for services and routes from an External DNS provider to OpenShift Container Platform, use the External DNS Operator. This Operator deploys and manages ExternalDNS to synchronize your cluster resources with the external provider.

5.3.2.1. External DNS Operator domain name limitations

To prevent configuration errors when deploying the ExternalDNS resource, review the domain name limitations enforced by the External DNS Operator. Understanding these constraints ensures that your requested hostnames and domains are compatible with your underlying DNS provider.

The External DNS Operator uses the TXT registry that adds the prefix for TXT records. This reduces the maximum length of the domain name for TXT records. A DNS record cannot be present without a corresponding TXT record, so the domain name of the DNS record must follow the same limit as the TXT records. For example, a DNS record of <domain_name_from_source> results in a TXT record of external-dns-<record_type>-<domain_name_from_source>.

The domain name of the DNS records generated by the External DNS Operator has the following limitations:

| Record type | Number of characters |

|---|---|

| CNAME | 44 |

| Wildcard CNAME records on AzureDNS | 42 |

| A | 48 |

| Wildcard A records on AzureDNS | 46 |

The following error shows in the External DNS Operator logs if the generated domain name exceeds any of the domain name limitations:

time="2022-09-02T08:53:57Z" level=error msg="Failure in zone test.example.io. [Id: /hostedzone/Z06988883Q0H0RL6UMXXX]"

time="2022-09-02T08:53:57Z" level=error msg="InvalidChangeBatch: [FATAL problem: DomainLabelTooLong (Domain label is too long) encountered with 'external-dns-a-hello-openshift-aaaaaaaaaa-bbbbbbbbbb-ccccccc']\n\tstatus code: 400, request id: e54dfd5a-06c6-47b0-bcb9-a4f7c3a4e0c6"5.3.2.2. Deploying the External DNS Operator

You can deploy the External DNS Operator on-demand from the Software Catalog. Deploying the External DNS Operator creates a Subscription object.

The External DNS Operator implements the External DNS API from the olm.openshift.io API group. The External DNS Operator updates services, routes, and external DNS providers.

Prerequisites

-

You have installed the

yqCLI tool.

Procedure

Check the name of an install plan, such as

install-zcvlr, by running the following command:$ oc -n external-dns-operator get sub external-dns-operator -o yaml | yq '.status.installplan.name'Check if the status of an install plan is

Completeby running the following command:$ oc -n external-dns-operator get ip <install_plan_name> -o yaml | yq '.status.phase'View the status of the

external-dns-operatordeployment by running the following command:$ oc get -n external-dns-operator deployment/external-dns-operatorExample output

NAME READY UP-TO-DATE AVAILABLE AGE external-dns-operator 1/1 1 1 23h

5.3.2.3. Viewing External DNS Operator logs

To troubleshoot DNS configuration issues, view the External DNS Operator logs. Use the oc logs command to retrieve diagnostic information directly from the Operator pod.

Procedure

View the logs of the External DNS Operator by running the following command:

$ oc logs -n external-dns-operator deployment/external-dns-operator -c external-dns-operator

5.3.3. Installing the External DNS Operator

To manage DNS records on your cloud infrastructure, install the External DNS Operator. This Operator supports deployment on major cloud providers, including Amazon Web Services (AWS), Microsoft Azure, and Google Cloud.

5.3.3.1. Installing the External DNS Operator with OperatorHub

You can install the External DNS Operator by using the OpenShift Container Platform OperatorHub. You can then manage the Operator lifecycle directly from the web console.

Procedure

- Click Operators → OperatorHub in the OpenShift Container Platform web console.

- Click External DNS Operator. You can use the Filter by keyword text box or the filter list to search for External DNS Operator from the list of Operators.

-

Select the

external-dns-operatornamespace. - On the External DNS Operator page, click Install.

On the Install Operator page, ensure that you selected the following options:

- Update the channel as stable-v1.

- Installation mode as A specific name on the cluster.

-

Installed namespace as

external-dns-operator. If namespaceexternal-dns-operatordoes not exist, the Operator gets created during the Operator installation. - Select Approval Strategy as Automatic or Manual. The Approval Strategy defaults to Automatic.

Click Install.

If you select Automatic updates, the Operator Lifecycle Manager (OLM) automatically upgrades the running instance of your Operator without any intervention.

If you select Manual updates, the OLM creates an update request. As a cluster administrator, you must then manually approve that update request to have the Operator updated to the new version.

Verification

- Verify that the External DNS Operator shows the Status as Succeeded on the Installed Operators dashboard.

5.3.3.2. Installing the External DNS Operator by using the CLI

You can use the OpenShift CLI (oc) to install the External DNS Operator. The Operator manages the installation process directly from your terminal without you having to use the web console.

Prerequisites

-

You are logged in to the OpenShift CLI (

oc).

Procedure

Create a

Namespaceobject:Create a YAML file that defines the

Namespaceobject:Example

namespace.yamlfileapiVersion: v1 kind: Namespace metadata: name: external-dns-operator # ...Create the

Namespaceobject by running the following command:$ oc apply -f namespace.yaml

Create an

OperatorGroupobject:Create a YAML file that defines the

OperatorGroupobject:Example

operatorgroup.yamlfileapiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: name: external-dns-operator namespace: external-dns-operator spec: upgradeStrategy: Default targetNamespaces: - external-dns-operator # ...Create the

OperatorGroupobject by running the following command:$ oc apply -f operatorgroup.yaml

Create a

Subscriptionobject:Create a YAML file that defines the

Subscriptionobject:Example

subscription.yamlfileapiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: external-dns-operator namespace: external-dns-operator spec: channel: stable-v1 installPlanApproval: Automatic name: external-dns-operator source: redhat-operators sourceNamespace: openshift-marketplace # ...Create the

Subscriptionobject by running the following command:$ oc apply -f subscription.yaml

Verification

Get the name of the install plan from the subscription by running the following command:

$ oc -n external-dns-operator \ get subscription external-dns-operator \ --template='{{.status.installplan.name}}{{"\n"}}'Verify that the status of the install plan is

Completeby running the following command:$ oc -n external-dns-operator \ get ip <install_plan_name> \ --template='{{.status.phase}}{{"\n"}}'Verify that the status of the

external-dns-operatorpod isRunningby running the following command:$ oc -n external-dns-operator get podExample output

NAME READY STATUS RESTARTS AGE external-dns-operator-5584585fd7-5lwqm 2/2 Running 0 11mVerify that the catalog source of the subscription is

redhat-operatorsby running the following command:$ oc -n external-dns-operator get subscriptionCheck the

external-dns-operatorversion by running the following command:$ oc -n external-dns-operator get csv

5.3.4. External DNS Operator configuration parameters

To customize the behavior of the External DNS Operator, configure the available parameters in the ExternalDNS custom resource (CR). By configuraing parameters, you can control how the Operator synchronizes services and routes with your external DNS provider.

5.3.4.1. External DNS Operator configuration parameters

To customize the behavior of the External DNS Operator, configure the available parameters in the ExternalDNS custom resource (CR). By configuring parameters, you can control how the Operator synchronizes services and routes with your external DNS provider.

| Parameter | Description |

|---|---|

|

| Enables the type of a cloud provider.

|

|

|

Enables you to specify DNS zones by their domains. If you do not specify zones, the

|

|

|

Enables you to specify AWS zones by their domains. If you do not specify domains, the

|

|

|

Enables you to specify the source for the DNS records,

|

5.3.5. Creating DNS records on AWS

To create DNS records on AWS and AWS GovCloud, use the External DNS Operator. The Operator manages external name resolution for your cluster services directly through the Operator.

5.3.5.1. Creating DNS records on a public hosted zone for AWS by using Red Hat External DNS Operator

You can create DNS records on a public hosted zone for AWS by using the Red Hat External DNS Operator. You can use the same instructions to create DNS records on a hosted zone for AWS GovCloud.

Procedure

Check the user profile by running the following command. The profile, such as

system:admin, must have access to thekube-systemnamespace. If you do not have the credentials, you can fetch the credentials from thekube-systemnamespace to use the cloud provider client by running the following command:$ oc whoamiFetch the values from the