Chapter 2. Migrating from OpenShift Container Platform 4.1

2.1. Migration tools and prerequisites

You can migrate application workloads from OpenShift Container Platform 4.1 to 4.5 with the Migration Toolkit for Containers (MTC). MTC enables you to control the migration and to minimize application downtime.

You can migrate between OpenShift Container Platform clusters of the same version, for example, from 4.1 to 4.1, as long as the source and target clusters are configured correctly.

The MTC web console and API, based on Kubernetes custom resources, enable you to migrate stateful and stateless application workloads at the granularity of a namespace.

MTC supports the file system and snapshot data copy methods for migrating data from the source cluster to the target cluster. You can select a method that is suited for your environment and is supported by your storage provider.

You can use migration hooks to run Ansible playbooks at certain points during the migration. The hooks are added when you create a migration plan.

2.1.1. Migration Toolkit for Containers workflow

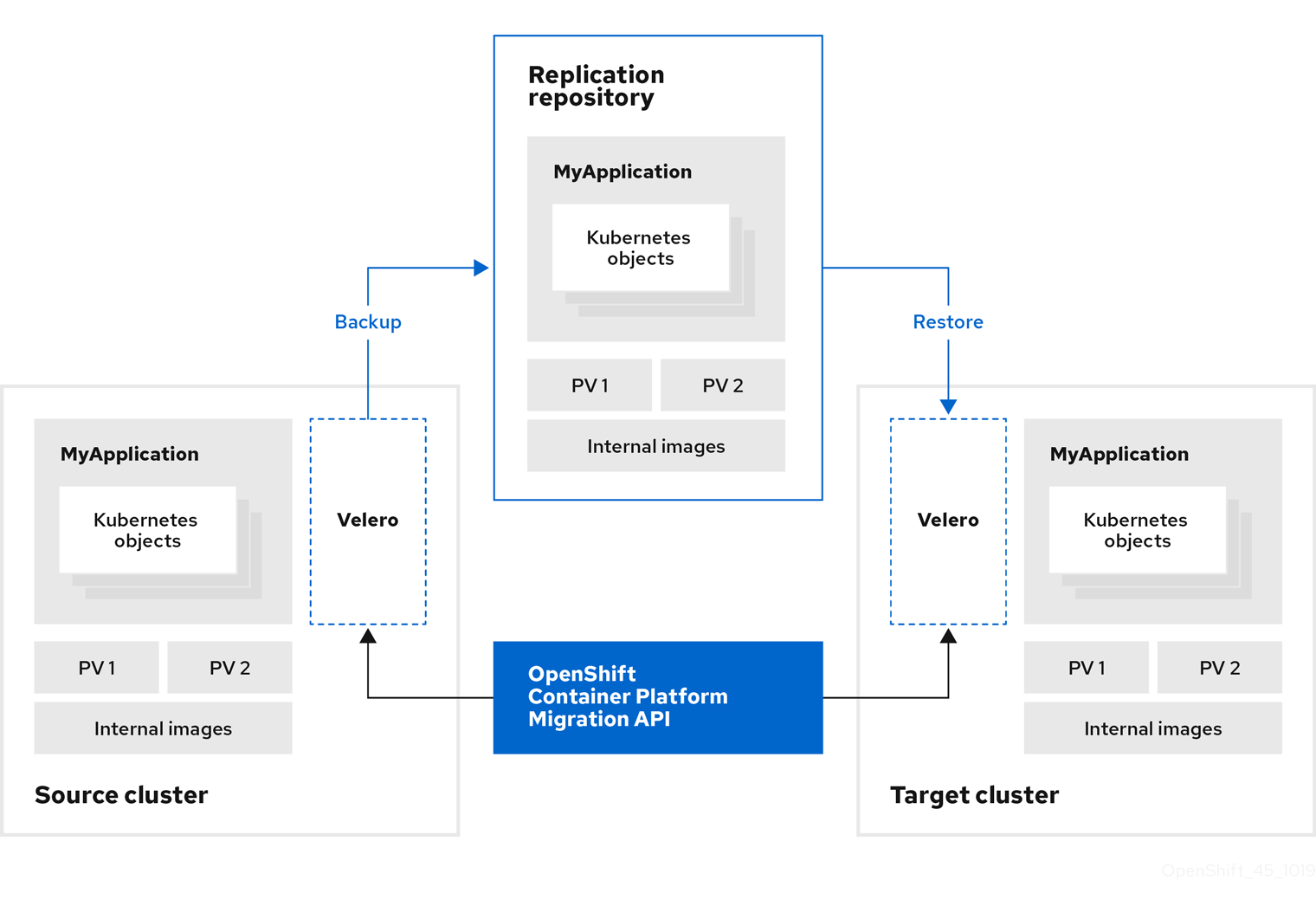

You use the Migration Toolkit for Containers (MTC) to migrate Kubernetes resources, persistent volume data, and internal container images from an OpenShift Container Platform source cluster to an OpenShift Container Platform 4.5 target cluster by using the MTC web console or the Kubernetes API.

The MTC migrates the following resources:

- A namespace specified in a migration plan.

- Namespace-scoped resources: When the MTC migrates a namespace, it migrates all the objects and resources associated with that namespace, such as services or pods. Additionally, if a namespace-scoped resource depends on a cluster-scoped resource, the MTC migrates both resources.

For example, a security context constraint (SCC) is a resource that exists at the cluster level and a service account (SA) is a resource that exists at the namespace level. If an SA exists in a namespace that the MTC migrates, the MTC automatically locates any SCCs that are linked to the SA and also migrates those SCCs. Similarly, the MTC migrates persistent volume claims that are linked to the persistent volumes of the namespace.

- Custom resources (CRs) and custom resource definitions (CRDs): The MTC automatically migrates any CRs that exist at the namespace level as well as the CRDs that are linked to those CRs.
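The service account and SCC example above can be pictured as a lookup from namespace-scoped objects to the cluster-scoped objects that reference them. The following is a minimal sketch, not the MTC implementation: the function name `linked_sccs` and the sample data are hypothetical. It assumes that an SCC is linked to a service account when its `users` list contains the `system:serviceaccount:<namespace>:<name>` identity.

```python
def linked_sccs(namespace, service_accounts, sccs):
    """Return the names of SCCs whose users list references an SA in the namespace.

    sccs maps an SCC name to its list of user identities (hypothetical sample shape).
    """
    wanted = {f"system:serviceaccount:{namespace}:{sa}" for sa in service_accounts}
    return sorted(name for name, users in sccs.items() if wanted & set(users))


# Hypothetical cluster-scoped SCCs and the identities they grant access to.
sample_sccs = {
    "restricted": ["system:serviceaccount:other:builder"],
    "app-scc": ["system:serviceaccount:shop:frontend"],
}

# Only "app-scc" references a service account in the "shop" namespace.
print(linked_sccs("shop", ["frontend", "default"], sample_sccs))
```

In this sketch, migrating the `shop` namespace would also pull in `app-scc`, while `restricted` is left alone because no service account in `shop` is linked to it.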

Migrating an application with the MTC web console involves the following steps:

- Install the Migration Toolkit for Containers Operator on all clusters.

  You can install the Migration Toolkit for Containers Operator in a restricted environment with limited or no internet access. The source and target clusters must have network access to each other and to a mirror registry.

- Configure the replication repository, an intermediate object storage that MTC uses to migrate data.

  The source and target clusters must have network access to the replication repository during migration. In a restricted environment, you can use an internally hosted S3 storage repository. If you are using a proxy server, you must configure it to allow network traffic between the replication repository and the clusters.

- Add the source cluster to the MTC web console.

- Add the replication repository to the MTC web console.

Create a migration plan, with one of the following data migration options:

Copy: MTC copies the data from the source cluster to the replication repository, and from the replication repository to the target cluster.

NoteIf you are using direct image migration or direct volume migration, the images or volumes are copied directly from the source cluster to the target cluster.

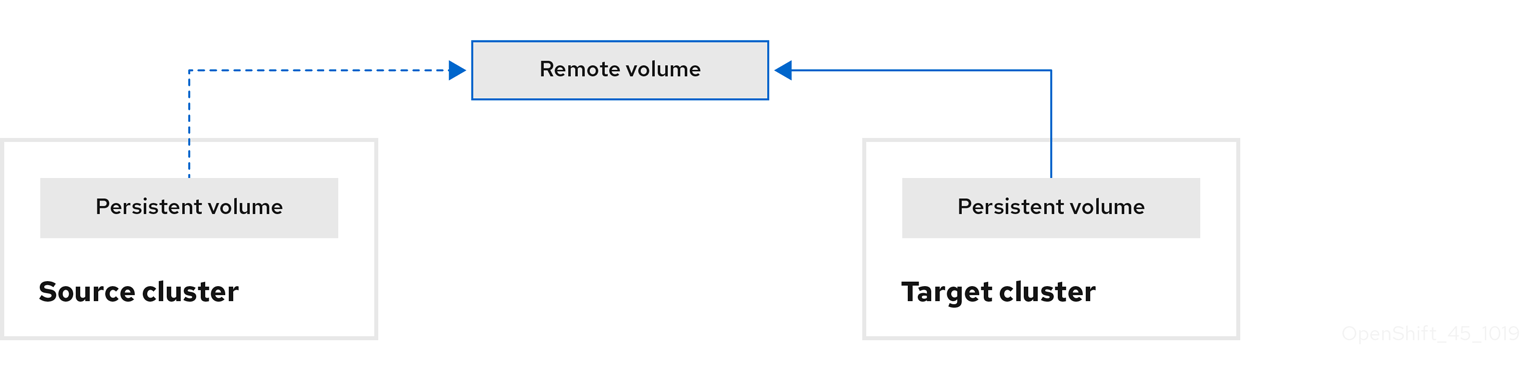

Move: MTC unmounts a remote volume, for example, NFS, from the source cluster, creates a PV resource on the target cluster pointing to the remote volume, and then mounts the remote volume on the target cluster. Applications running on the target cluster use the same remote volume that the source cluster was using. The remote volume must be accessible to the source and target clusters.

NoteAlthough the replication repository does not appear in this diagram, it is required for migration.

- Run the migration plan, with one of the following options:

  - Stage (optional) copies data to the target cluster without stopping the application.

    Staging can be run multiple times so that most of the data is copied to the target before migration. This minimizes the duration of the migration and application downtime.

  - Migrate stops the application on the source cluster and recreates its resources on the target cluster. Optionally, you can migrate the workload without stopping the application.

2.1.2. Migration Toolkit for Containers custom resources

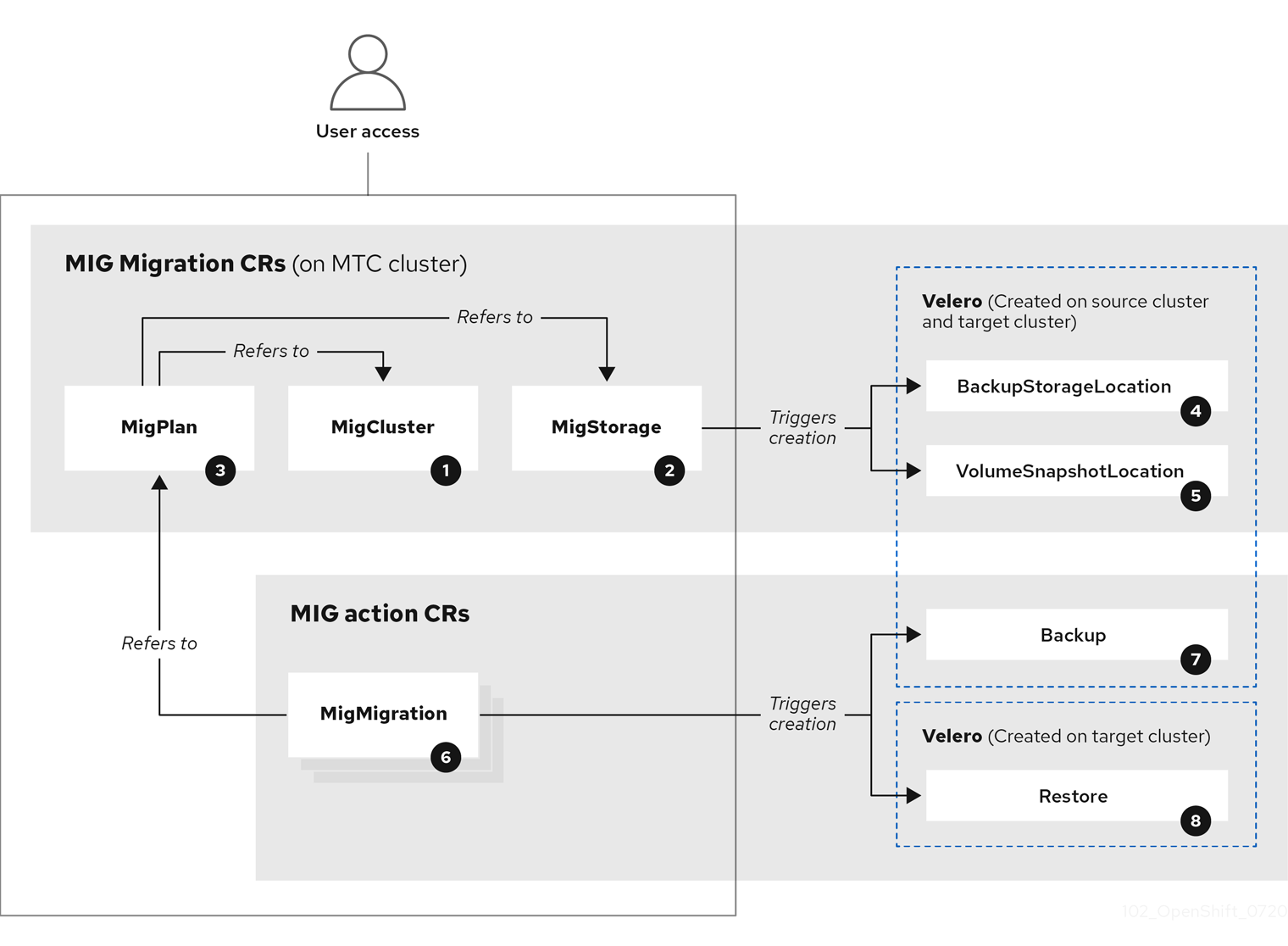

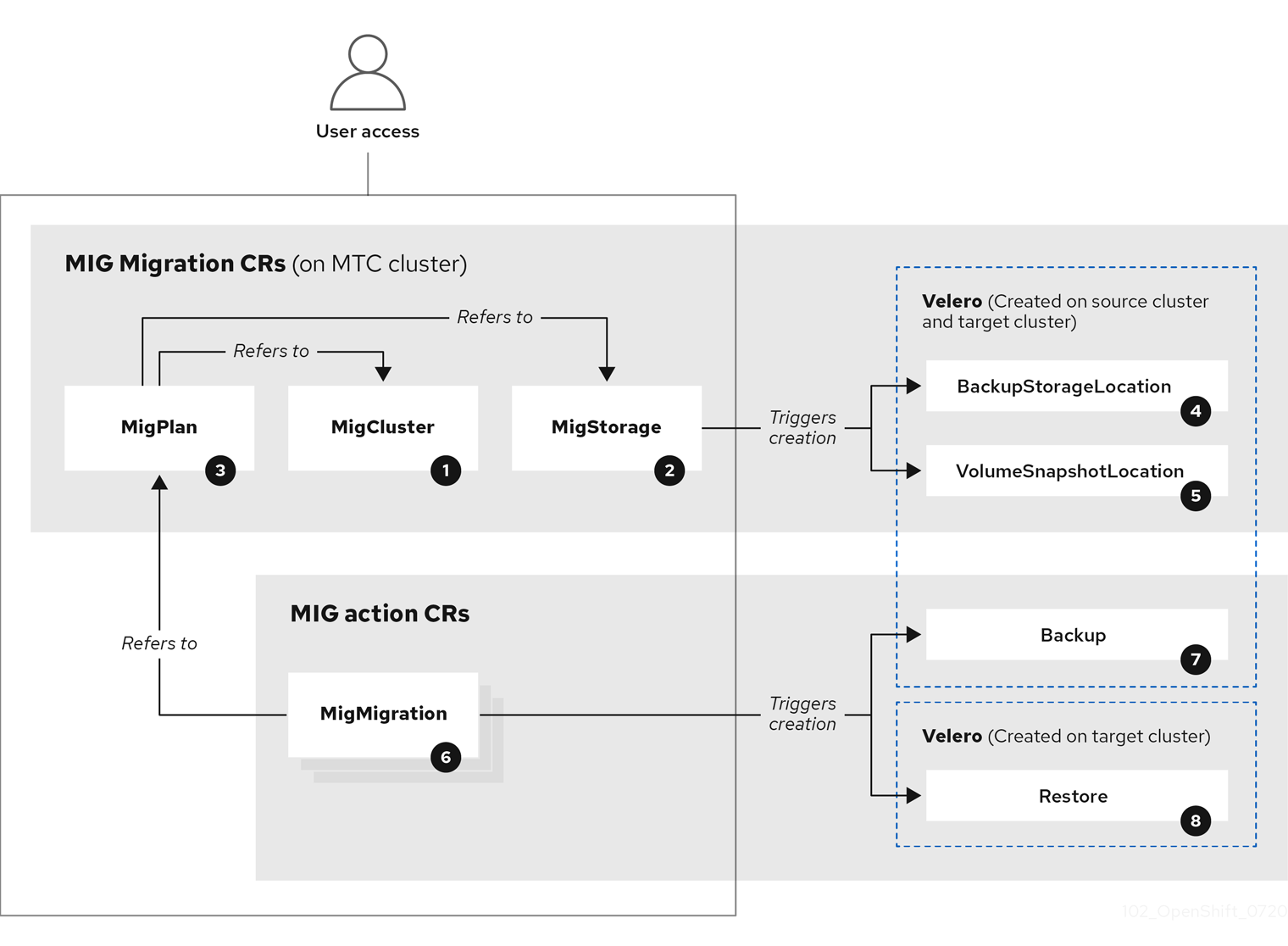

The Migration Toolkit for Containers (MTC) creates the following custom resources (CRs):

- MigCluster (configuration, MTC cluster): Cluster definition

- MigStorage (configuration, MTC cluster): Storage definition

- MigPlan (configuration, MTC cluster): Migration plan

  The MigPlan CR describes the source and target clusters, replication repository, and namespaces being migrated. It is associated with 0, 1, or many MigMigration CRs.

  Deleting a MigPlan CR deletes the associated MigMigration CRs.

- BackupStorageLocation (configuration, MTC cluster): Location of Velero backup objects

- VolumeSnapshotLocation (configuration, MTC cluster): Location of Velero volume snapshots

- MigMigration (action, MTC cluster): Migration, created every time you stage or migrate data. Each MigMigration CR is associated with a MigPlan CR.

- Backup (action, source cluster): When you run a migration plan, the MigMigration CR creates two Velero backup CRs on each source cluster:

  - Backup CR #1 for Kubernetes objects

  - Backup CR #2 for PV data

- Restore (action, target cluster): When you run a migration plan, the MigMigration CR creates two Velero restore CRs on the target cluster:

  - Restore CR #1 (using Backup CR #2) for PV data

  - Restore CR #2 (using Backup CR #1) for Kubernetes objects
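The pairing between the two backup CRs and the two restore CRs is easy to misread, because the restore order is the reverse of the backup order. The following is a small illustrative model of that pairing, not MTC or Velero code: the `VeleroStep` class and `pairing_is_consistent` function are hypothetical names used only to make the relationship explicit.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VeleroStep:
    kind: str                          # "Backup" or "Restore"
    number: int                        # CR number as used in the list above
    cluster: str                       # "source" or "target"
    content: str                       # what the CR carries
    uses_backup: Optional[int] = None  # for restores: the backup CR they consume


# Order in which the CRs are listed for one run of a migration plan.
STEPS = [
    VeleroStep("Backup", 1, "source", "Kubernetes objects"),
    VeleroStep("Backup", 2, "source", "PV data"),
    VeleroStep("Restore", 1, "target", "PV data", uses_backup=2),
    VeleroStep("Restore", 2, "target", "Kubernetes objects", uses_backup=1),
]


def pairing_is_consistent(steps):
    """Check that every restore carries the same content as the backup it uses."""
    backups = {s.number: s for s in steps if s.kind == "Backup"}
    restores = [s for s in steps if s.kind == "Restore"]
    return all(backups[r.uses_backup].content == r.content for r in restores)


print(pairing_is_consistent(STEPS))
```

Read this way, PV data is restored on the target cluster before the Kubernetes objects that refer to it.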

2.1.3. About data copy methods

The Migration Toolkit for Containers (MTC) supports the file system and snapshot data copy methods for migrating data from the source cluster to the target cluster. You can select a method that is suited for your environment and is supported by your storage provider.

2.1.3.1. File system copy method

MTC copies data files from the source cluster to the replication repository, and from there to the target cluster.

| Benefits | Limitations |
|---|---|
| | |

2.1.3.2. Snapshot copy method

MTC copies a snapshot of the source cluster data to the replication repository of a cloud provider. The data is restored on the target cluster.

AWS, Google Cloud Provider, and Microsoft Azure support the snapshot copy method.

| Benefits | Limitations |
|---|---|
| | |

2.1.4. About migration hooks

You can use migration hooks to run custom code at certain points during a migration with the Migration Toolkit for Containers (MTC). You can add up to four migration hooks to a single migration plan, with each hook running at a different phase of the migration.

Migration hooks perform tasks such as customizing application quiescence, manually migrating unsupported data types, and updating applications after migration.

A migration hook runs on a source or a target cluster at one of the following migration steps:

- PreBackup: Before resources are backed up on the source cluster

- PostBackup: After resources are backed up on the source cluster

- PreRestore: Before resources are restored on the target cluster

- PostRestore: After resources are restored on the target cluster
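The constraints on hooks described above (at most four per migration plan, each at a different phase) can be checked before a plan is created. The following is a minimal sketch under stated assumptions: `validate_hooks` and the hook names are hypothetical and are not part of the MTC API. The phase table records the cluster on which the backup or restore operation for that phase takes place.

```python
# Phase name -> cluster whose resources are backed up or restored in that phase.
PHASE_CLUSTER = {
    "PreBackup": "source",
    "PostBackup": "source",
    "PreRestore": "target",
    "PostRestore": "target",
}


def validate_hooks(hooks):
    """Validate a list of (hook_name, phase) pairs for a single migration plan.

    Returns a dict mapping each used phase to (hook_name, cluster).
    Raises ValueError for more than four hooks, an unknown phase,
    or two hooks assigned to the same phase.
    """
    if len(hooks) > len(PHASE_CLUSTER):
        raise ValueError("a migration plan accepts at most four hooks")
    plan = {}
    for name, phase in hooks:
        if phase not in PHASE_CLUSTER:
            raise ValueError(f"unknown phase: {phase}")
        if phase in plan:
            raise ValueError(f"phase {phase} already has a hook")
        plan[phase] = (name, PHASE_CLUSTER[phase])
    return plan


# Hypothetical hooks: quiesce the application first, verify it at the end.
print(validate_hooks([("quiesce-app", "PreBackup"), ("verify-app", "PostRestore")]))
```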

You can create a hook by using an Ansible playbook or a custom hook container.

Ansible playbook

The Ansible playbook is mounted on a hook container as a config map. The hook container runs as a job, using the cluster, service account, and namespace specified in the MigPlan custom resource (CR). The job continues to run until it reaches the default limit of 6 retries or a successful completion. This continues even if the initial pod is evicted or killed.

The default Ansible runtime image is registry.redhat.io/rhmtc/openshift-migration-hook-runner-rhel7:1.4. This image is based on the Ansible Runner image and includes python-openshift for Ansible Kubernetes resources and an updated oc binary.

Optional: You can use a custom Ansible runtime image containing additional Ansible modules or tools instead of the default image.

Custom hook container

You can create a custom hook container that includes Ansible playbooks or custom code.

2.2. Installing and upgrading the Migration Toolkit for Containers

You can install the Migration Toolkit for Containers on an OpenShift Container Platform 4.5 target cluster and on a 4.1 source cluster.

MTC is installed on the target cluster by default. You can install the MTC on an OpenShift Container Platform 3 cluster or on a remote cluster.

2.2.1. Installing the Migration Toolkit for Containers in a connected environment

You can install the Migration Toolkit for Containers (MTC) in a connected environment.

You must install the same MTC version on all clusters.

2.2.1.1. Installing the Migration Toolkit for Containers on an OpenShift Container Platform 4.5 target cluster

You can install the Migration Toolkit for Containers (MTC) on an OpenShift Container Platform 4.5 target cluster.

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

Procedure

- In the OpenShift Container Platform web console, click Operators → OperatorHub.

- Use the Filter by keyword field to find the Migration Toolkit for Containers Operator.

- Select the Migration Toolkit for Containers Operator and click Install.

  Note: Do not change the subscription approval option to Automatic. The Migration Toolkit for Containers version must be the same on the source and the target clusters.

- Click Install.

  On the Installed Operators page, the Migration Toolkit for Containers Operator appears in the openshift-migration project with the status Succeeded.

- Click Migration Toolkit for Containers Operator.

- Under Provided APIs, locate the Migration Controller tile, and click Create Instance.

- Click Create.

- Click Workloads → Pods to verify that the MTC pods are running.

2.2.1.2. Installing the Migration Toolkit for Containers on an OpenShift Container Platform 4.1 source cluster

You can install the Migration Toolkit for Containers (MTC) on an OpenShift Container Platform 4.1 source cluster.

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

Procedure

- In the OpenShift Container Platform web console, click Catalog → OperatorHub.

- Use the Filter by keyword field to find the Migration Toolkit for Containers Operator.

- Select the Migration Toolkit for Containers Operator and click Install.

  Note: Do not change the subscription approval option to Automatic. The Migration Toolkit for Containers version must be the same on the source and the target clusters.

- Click Install.

  On the Installed Operators page, the Migration Toolkit for Containers Operator appears in the openshift-migration project with the status Succeeded.

- Click Migration Toolkit for Containers Operator.

- Under Provided APIs, locate the Migration Controller tile, and click Create Instance.

- Update the following parameters in the migration-controller custom resource manifest:

      spec:
        ...
        migration_controller: false
        migration_ui: false
        ...
        deprecated_cors_configuration: true

  Add the deprecated_cors_configuration parameter and its value.

- Click Create.

- Click Workloads → Pods to verify that the MTC pods are running.

2.2.2. Installing the Migration Toolkit for Containers in a restricted environment

You can install the Migration Toolkit for Containers (MTC) in a restricted environment.

You must install the same MTC version on all clusters.

You can build a custom Operator catalog image for OpenShift Container Platform 4, push it to a local mirror image registry, and configure Operator Lifecycle Manager (OLM) to install the Migration Toolkit for Containers Operator from the local registry.

2.2.2.1. Building an Operator catalog image

Cluster administrators can build a custom Operator catalog image based on the Package Manifest Format to be used by Operator Lifecycle Manager (OLM). The catalog image can be pushed to a container image registry that supports Docker v2-2. For a cluster on a restricted network, this registry can be a registry that the cluster has network access to, such as a mirror registry created during a restricted network cluster installation.

The internal registry of the OpenShift Container Platform cluster cannot be used as the target registry because it does not support pushing without a tag, which is required during the mirroring process.

For this example, the procedure assumes use of a mirror registry that has access to both your network and the Internet.

Only the Linux version of the oc client can be used for this procedure, because the Windows and macOS versions do not provide the oc adm catalog build command.

Prerequisites

- Workstation with unrestricted network access

- oc version 4.3.5+ Linux client

- podman version 1.4.4+

- Access to mirror registry that supports Docker v2-2

- If you are working with private registries, set the REG_CREDS environment variable to the file path of your registry credentials for use in later steps. For example, for the podman CLI:

      $ REG_CREDS=${XDG_RUNTIME_DIR}/containers/auth.json

- If you are working with private namespaces that your quay.io account has access to, you must set a Quay authentication token. Set the AUTH_TOKEN environment variable for use with the --auth-token flag by making a request against the login API using your quay.io credentials:

      $ AUTH_TOKEN=$(curl -sH "Content-Type: application/json" \
          -XPOST https://quay.io/cnr/api/v1/users/login -d '
          {
              "user": {
                  "username": "'"<quay_username>"'",
                  "password": "'"<quay_password>"'"
              }
          }' | jq -r '.token')

Procedure

- On the workstation with unrestricted network access, authenticate with the target mirror registry:

      $ podman login <registry_host_name>

  Also authenticate with registry.redhat.io so that the base image can be pulled during the build:

      $ podman login registry.redhat.io

- Build a catalog image based on the redhat-operators catalog from Quay.io, tagging and pushing it to your mirror registry:

      $ oc adm catalog build \
          --appregistry-org redhat-operators \
          --from=registry.redhat.io/openshift4/ose-operator-registry:v4.5 \
          --filter-by-os="linux/amd64" \
          --to=<registry_host_name>:<port>/olm/redhat-operators:v1 \
          [-a ${REG_CREDS}] \
          [--insecure] \
          [--auth-token "${AUTH_TOKEN}"]

  where:

  - --appregistry-org: Organization (namespace) to pull from an App Registry instance.

  - --from: Set to the ose-operator-registry base image using the tag that matches the target OpenShift Container Platform cluster major and minor version.

  - --filter-by-os: Set to the operating system and architecture to use for the base image, which must match the target OpenShift Container Platform cluster. Valid values are linux/amd64, linux/ppc64le, and linux/s390x.

  - --to: Name your catalog image and include a tag, for example, v1.

  - -a: Optional: If required, specify the location of your registry credentials file.

  - --insecure: Optional: If you do not want to configure trust for the target registry, add the --insecure flag.

  - --auth-token: Optional: If other application registry catalogs are used that are not public, specify a Quay authentication token.

  Example output

      INFO[0013] loading Bundles dir=/var/folders/st/9cskxqs53ll3wdn434vw4cd80000gn/T/300666084/manifests-829192605
      ...
      Pushed sha256:f73d42950021f9240389f99ddc5b0c7f1b533c054ba344654ff1edaf6bf827e3 to example_registry:5000/olm/redhat-operators:v1

  Sometimes invalid manifests are accidentally introduced into catalogs provided by Red Hat; when this happens, you might see some errors:

  Example output with errors

      ...
      INFO[0014] directory dir=/var/folders/st/9cskxqs53ll3wdn434vw4cd80000gn/T/300666084/manifests-829192605 file=4.2 load=package
      W1114 19:42:37.876180 34665 builder.go:141] error building database: error loading package into db: fuse-camel-k-operator.v7.5.0 specifies replacement that couldn't be found
      Uploading ... 244.9kB/s

  These errors are usually non-fatal, and if the Operator package mentioned does not contain an Operator you plan to install or a dependency of one, then they can be ignored.
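The --from tag in the build command must match the major and minor version of the target cluster. As an illustration only, the following sketch derives that tag from a cluster version string. The helper names `registry_tag` and `base_image` are hypothetical and are not part of the oc client; the image path is the one used in the command above.

```python
def registry_tag(cluster_version):
    """Turn a version string such as "4.5.3" into the matching image tag "v4.5"."""
    major, minor = cluster_version.split(".")[:2]
    return f"v{int(major)}.{int(minor)}"


def base_image(cluster_version):
    """Build the ose-operator-registry image reference for the --from flag."""
    return (
        "registry.redhat.io/openshift4/ose-operator-registry:"
        + registry_tag(cluster_version)
    )


# A 4.5.z target cluster uses the v4.5 base image, whatever the patch level.
print(base_image("4.5.3"))
```

Only the major and minor components are used, so a patch release of the target cluster does not change the base image tag.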

2.2.2.2. Configuring OperatorHub for restricted networks

Cluster administrators can configure OLM and OperatorHub to use local content in a restricted network environment using a custom Operator catalog image. For this example, the procedure uses a custom redhat-operators catalog image previously built and pushed to a supported registry.

Prerequisites

- Workstation with unrestricted network access

- A custom Operator catalog image pushed to a supported registry

- oc version 4.3.5+

- podman version 1.4.4+

- Access to mirror registry that supports Docker v2-2

- If you are working with private registries, set the REG_CREDS environment variable to the file path of your registry credentials for use in later steps. For example, for the podman CLI:

      $ REG_CREDS=${XDG_RUNTIME_DIR}/containers/auth.json

Procedure

- The oc adm catalog mirror command extracts the contents of your custom Operator catalog image to generate the manifests required for mirroring. You can choose to either:

  - Allow the default behavior of the command to automatically mirror all of the image content to your mirror registry after generating manifests, or

  - Add the --manifests-only flag to only generate the manifests required for mirroring, but do not actually mirror the image content to a registry yet. This can be useful for reviewing what will be mirrored, and it allows you to make any changes to the mapping list if you only require a subset of the content. You can then use that file with the oc image mirror command to mirror the modified list of images in a later step.

  On your workstation with unrestricted network access, run the following command:

      $ oc adm catalog mirror \
          <registry_host_name>:<port>/olm/redhat-operators:v1 \
          <registry_host_name>:<port> \
          [-a ${REG_CREDS}] \
          [--insecure] \
          --filter-by-os='.*' \
          [--manifests-only]

  where:

  - <registry_host_name>:<port>/olm/redhat-operators:v1: Specify your Operator catalog image.

  - -a: Optional: If required, specify the location of your registry credentials file.

  - --insecure: Optional: If you do not want to configure trust for the target registry, add the --insecure flag.

  - --filter-by-os: This flag is currently required due to a known issue with multiple architecture support.

  - --manifests-only: Optional: Only generate the manifests required for mirroring and do not actually mirror the image content to a registry.

  Warning: If the --filter-by-os flag remains unset or set to any value other than .*, the command filters out different architectures, which changes the digest of the manifest list, also known as a multi-arch image. The incorrect digest causes deployments of those images and Operators on disconnected clusters to fail. For more information, see BZ#1890951.

  Example output

      using database path mapping: /:/tmp/190214037
      wrote database to /tmp/190214037
      using database at: /tmp/190214037/bundles.db
      ...

  The bundles.db path in the last line is the temporary database generated by the command.

  After you run the command, it creates an <image_name>-manifests/ directory in the current directory that contains the following files:

  - The imageContentSourcePolicy.yaml file defines an ImageContentSourcePolicy object that can configure nodes to translate between the image references stored in Operator manifests and the mirrored registry.

  - The mapping.txt file contains all of the source images and where to map them in the target registry. This file is compatible with the oc image mirror command and can be used to further customize the mirroring configuration.

- If you used the --manifests-only flag in the previous step and want to mirror only a subset of the content:

  - Modify the list of images in your mapping.txt file to your specifications. If you are unsure of the exact names and versions of the subset of images you want to mirror, use the following steps to find them:

    - Run the sqlite3 tool against the temporary database that was generated by the oc adm catalog mirror command to retrieve a list of images matching a general search query. The output helps inform how you will later edit your mapping.txt file.

      For example, to retrieve a list of images that are similar to the string clusterlogging.4.3:

          $ echo "select * from related_image \
              where operatorbundle_name like 'clusterlogging.4.3%';" \
              | sqlite3 -line /tmp/190214037/bundles.db

      Refer to the previous output of the oc adm catalog mirror command to find the path of the database file.

      Example output

          image = registry.redhat.io/openshift4/ose-logging-kibana5@sha256:aa4a8b2a00836d0e28aa6497ad90a3c116f135f382d8211e3c55f34fb36dfe61
          operatorbundle_name = clusterlogging.4.3.33-202008111029.p0

          image = registry.redhat.io/openshift4/ose-oauth-proxy@sha256:6b4db07f6e6c962fc96473d86c44532c93b146bbefe311d0c348117bf759c506
          operatorbundle_name = clusterlogging.4.3.33-202008111029.p0
          ...

    - Use the results from the previous step to edit the mapping.txt file to only include the subset of images you want to mirror.

      For example, you can use the image values from the previous example output to find that the following matching lines exist in your mapping.txt file:

      Matching image mappings in mapping.txt

          registry.redhat.io/openshift4/ose-logging-kibana5@sha256:aa4a8b2a00836d0e28aa6497ad90a3c116f135f382d8211e3c55f34fb36dfe61=<registry_host_name>:<port>/openshift4-ose-logging-kibana5:a767c8f0
          registry.redhat.io/openshift4/ose-oauth-proxy@sha256:6b4db07f6e6c962fc96473d86c44532c93b146bbefe311d0c348117bf759c506=<registry_host_name>:<port>/openshift4-ose-oauth-proxy:3754ea2b

      In this example, if you only want to mirror these images, you would then remove all other entries in the mapping.txt file and leave only the above two lines.

  - Still on your workstation with unrestricted network access, use your modified mapping.txt file to mirror the images to your registry using the oc image mirror command:

        $ oc image mirror \
            [-a ${REG_CREDS}] \
            --filter-by-os='.*' \
            -f ./redhat-operators-manifests/mapping.txt

    Warning: If the --filter-by-os flag remains unset or set to any value other than .*, the command filters out different architectures, which changes the digest of the manifest list, also known as a multi-arch image. The incorrect digest causes deployments of those images and Operators on disconnected clusters to fail.

- Apply the ImageContentSourcePolicy object:

      $ oc apply -f ./redhat-operators-manifests/imageContentSourcePolicy.yaml

- Create a CatalogSource object that references your catalog image.

  - Modify the following to your specifications and save it as a catalogsource.yaml file:

        apiVersion: operators.coreos.com/v1alpha1
        kind: CatalogSource
        metadata:
          name: my-operator-catalog
          namespace: openshift-marketplace
        spec:
          sourceType: grpc
          image: <registry_host_name>:<port>/olm/redhat-operators:v1
          displayName: My Operator Catalog
          publisher: grpc

    For the image parameter, specify your custom Operator catalog image.

  - Use the file to create the CatalogSource object:

        $ oc create -f catalogsource.yaml

- Verify the following resources are created successfully.

  - Check the pods:

        $ oc get pods -n openshift-marketplace

    Example output

        NAME                                    READY   STATUS    RESTARTS  AGE
        my-operator-catalog-6njx6               1/1     Running   0         28s
        marketplace-operator-d9f549946-96sgr    1/1     Running   0         26h

  - Check the catalog source:

        $ oc get catalogsource -n openshift-marketplace

    Example output

        NAME                  DISPLAY               TYPE PUBLISHER  AGE
        my-operator-catalog   My Operator Catalog   grpc            5s

  - Check the package manifest:

        $ oc get packagemanifest -n openshift-marketplace

    Example output

        NAME    CATALOG              AGE
        etcd    My Operator Catalog  34s

You can now install the Operators from the OperatorHub page on your restricted network OpenShift Container Platform cluster web console.
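Trimming mapping.txt to a subset of images, as described in the procedure above, can also be scripted. The following is a minimal sketch, not part of the oc tooling: `filter_mapping` is a hypothetical helper, and the registry host and digests in the sample data are placeholders. It relies only on the mapping.txt line format shown earlier, in which each line is `<source_image>=<target_image>`.

```python
def filter_mapping(lines, wanted_sources):
    """Keep only the mapping lines whose source image is in wanted_sources."""
    wanted = set(wanted_sources)
    kept = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        # The source image is everything before the first "=".
        source, _, _target = line.partition("=")
        if source in wanted:
            kept.append(line)
    return kept


# Placeholder entries; real files contain full sha256 digests.
sample_lines = [
    "registry.redhat.io/openshift4/ose-logging-kibana5@sha256:aaaa=mirror.example.com:5000/openshift4-ose-logging-kibana5:a767c8f0",
    "registry.redhat.io/openshift4/ose-oauth-proxy@sha256:bbbb=mirror.example.com:5000/openshift4-ose-oauth-proxy:3754ea2b",
    "registry.redhat.io/openshift4/ose-other@sha256:cccc=mirror.example.com:5000/openshift4-ose-other:11111111",
]

# Image values as they would be reported by the sqlite3 query.
wanted_images = [
    "registry.redhat.io/openshift4/ose-logging-kibana5@sha256:aaaa",
    "registry.redhat.io/openshift4/ose-oauth-proxy@sha256:bbbb",
]

for entry in filter_mapping(sample_lines, wanted_images):
    print(entry)
```

Writing the kept lines back to a file gives a reduced mapping list that can be passed to oc image mirror with the -f flag.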

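2.2.2.3. Installing the Migration Toolkit for Containers on an OpenShift Container Platform 4.5 target cluster in a restricted environment

You can install the Migration Toolkit for Containers (MTC) on an OpenShift Container Platform 4.5 target cluster.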

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

- You must create a custom Operator catalog and push it to a mirror registry.

- You must configure Operator Lifecycle Manager to install the Migration Toolkit for Containers Operator from the mirror registry.

Procedure

- In the OpenShift Container Platform web console, click Operators → OperatorHub.

- Use the Filter by keyword field to find the Migration Toolkit for Containers Operator.

- Select the Migration Toolkit for Containers Operator and click Install.

  Note: Do not change the subscription approval option to Automatic. The Migration Toolkit for Containers version must be the same on the source and the target clusters.

- Click Install.

  On the Installed Operators page, the Migration Toolkit for Containers Operator appears in the openshift-migration project with the status Succeeded.

- Click Migration Toolkit for Containers Operator.

- Under Provided APIs, locate the Migration Controller tile, and click Create Instance.

- Click Create.

- Click Workloads → Pods to verify that the MTC pods are running.

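2.2.2.4. Installing the Migration Toolkit for Containers on an OpenShift Container Platform 4.1 source cluster in a restricted environment

You can install the Migration Toolkit for Containers (MTC) on an OpenShift Container Platform 4.1 source cluster.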

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

- You must create a custom Operator catalog and push it to a mirror registry.

- You must configure Operator Lifecycle Manager to install the Migration Toolkit for Containers Operator from the mirror registry.

Procedure

- In the OpenShift Container Platform web console, click Catalog → OperatorHub.

- Use the Filter by keyword field to find the Migration Toolkit for Containers Operator.

- Select the Migration Toolkit for Containers Operator and click Install.

  Note: Do not change the subscription approval option to Automatic. The Migration Toolkit for Containers version must be the same on the source and the target clusters.

- Click Install.

  On the Installed Operators page, the Migration Toolkit for Containers Operator appears in the openshift-migration project with the status Succeeded.

- Click Migration Toolkit for Containers Operator.

- Under Provided APIs, locate the Migration Controller tile, and click Create Instance.

- Click Create.

- Click Workloads → Pods to verify that the MTC pods are running.

2.2.3. Upgrading the Migration Toolkit for Containers

You can upgrade the Migration Toolkit for Containers (MTC) by using the OpenShift Container Platform web console.

You must ensure that the same MTC version is installed on all clusters.

If you are upgrading MTC version 1.3, you must perform an additional procedure to update the MigPlan custom resource (CR).

2.2.3.1. Upgrading the Migration Toolkit for Containers on an OpenShift Container Platform 4 cluster

You can upgrade the Migration Toolkit for Containers (MTC) on an OpenShift Container Platform 4 cluster by using the OpenShift Container Platform web console.

Prerequisites

- You must be logged in as a user with cluster-admin privileges.

Procedure

- In the OpenShift Container Platform console, navigate to Operators → Installed Operators.

  Operators that have a pending upgrade display an Upgrade available status.

- Click Migration Toolkit for Containers Operator.

- Click the Subscription tab. Any upgrades requiring approval are displayed next to Upgrade Status. For example, it might display 1 requires approval.

- Click 1 requires approval, then click Preview Install Plan.

- Review the resources that are listed as available for upgrade and click Approve.

- Navigate back to the Operators → Installed Operators page to monitor the progress of the upgrade. When complete, the status changes to Succeeded and Up to date.

- Click Migration Toolkit for Containers Operator.

- Under Provided APIs, locate the Migration Controller tile, and click Create Instance.

- If you are upgrading MTC on a source cluster, update the following parameters in the MigrationController custom resource (CR) manifest:

      spec:
        ...
        migration_controller: false
        migration_ui: false
        ...
        deprecated_cors_configuration: true

  You do not need to update the MigrationController CR manifest on the target cluster.

- Click Create.

- Click Workloads → Pods to verify that the MTC pods are running.

2.2.3.2. Upgrading MTC 1.3 to 1.4

If you are upgrading Migration Toolkit for Containers (MTC) version 1.3.x to 1.4, you must update the MigPlan custom resource (CR) manifest on the cluster on which the MigrationController pod is running.

Because the indirectImageMigration and indirectVolumeMigration parameters do not exist in MTC 1.3, their default value in version 1.4 is false, which means that direct image migration and direct volume migration are enabled. Because the direct migration requirements are not fulfilled, the migration plan cannot reach a Ready state unless these parameter values are changed to true.

Prerequisites

- You must have MTC 1.3 installed.

- You must be logged in as a user with cluster-admin privileges.

Procedure

- Log in to the cluster on which the MigrationController pod is running.

- Get the MigPlan CR manifest:

      $ oc get migplan <migplan> -o yaml -n openshift-migration

- Update the following parameter values and save the file as migplan.yaml:

      ...
      spec:
        indirectImageMigration: true
        indirectVolumeMigration: true

- Replace the MigPlan CR manifest to apply the changes:

      $ oc replace -f migplan.yaml -n openshift-migration

- Get the updated MigPlan CR manifest to verify the changes:

      $ oc get migplan <migplan> -o yaml -n openshift-migration
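The manifest change in this procedure amounts to setting two boolean fields under spec. The following sketch shows that change on a MigPlan manifest that has already been loaded into a Python dictionary. It is an illustration only: `force_indirect_migration` is a hypothetical helper, the sample manifest is abbreviated, and loading or saving the YAML file is left out.

```python
import copy


def force_indirect_migration(migplan):
    """Return a copy of a MigPlan manifest with both indirect parameters set to true.

    The input dictionary is not modified.
    """
    updated = copy.deepcopy(migplan)
    spec = updated.setdefault("spec", {})
    spec["indirectImageMigration"] = True
    spec["indirectVolumeMigration"] = True
    return updated


# Abbreviated, hypothetical manifest as it might look after an upgrade from 1.3:
# the two parameters are absent, so they default to false.
plan_from_1_3 = {
    "kind": "MigPlan",
    "metadata": {"name": "example-plan", "namespace": "openshift-migration"},
    "spec": {"namespaces": ["shop"]},
}

updated_plan = force_indirect_migration(plan_from_1_3)
print(updated_plan["spec"])
```

Working on a copy keeps the original manifest available for comparison when you verify the change.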

2.3. Configuring object storage for a replication repository

You must configure an object storage to use as a replication repository. The Migration Toolkit for Containers (MTC) copies data from the source cluster to the replication repository, and then from the replication repository to the target cluster.

MTC supports the file system and snapshot data copy methods for migrating data from the source cluster to the target cluster. You can select a method that is suited for your environment and is supported by your storage provider.

The following storage providers are supported:

- Multi-Cloud Object Gateway (MCG)

- Amazon Web Services (AWS) S3

- Google Cloud Provider (GCP)

- Microsoft Azure

- Generic S3 object storage, for example, Minio or Ceph S3

In a restricted environment, you can create an internally hosted replication repository.

Prerequisites

- All clusters must have uninterrupted network access to the replication repository.

- If you use a proxy server with an internally hosted replication repository, you must ensure that the proxy allows access to the replication repository.

2.3.1. Configuring a Multi-Cloud Object Gateway storage bucket as a replication repository

You can install the OpenShift Container Storage Operator and configure a Multi-Cloud Object Gateway (MCG) storage bucket as a replication repository for the Migration Toolkit for Containers (MTC).

2.3.1.1. Installing the OpenShift Container Storage Operator

You can install the OpenShift Container Storage Operator from OperatorHub.

Procedure

- In the OpenShift Container Platform web console, click Operators → OperatorHub.

- Use Filter by keyword (in this case, OCS) to find the OpenShift Container Storage Operator.

- Select the OpenShift Container Storage Operator and click Install.

- Select an Update Channel, Installation Mode, and Approval Strategy.

- Click Install.

  On the Installed Operators page, the OpenShift Container Storage Operator appears in the openshift-storage project with the status Succeeded.

2.3.1.2. Creating the Multi-Cloud Object Gateway storage bucket

You can create the Multi-Cloud Object Gateway (MCG) storage bucket’s custom resources (CRs).

Procedure

Log in to the OpenShift Container Platform cluster:

    $ oc login

Create the NooBaa CR configuration file, noobaa.yml, with the following content:

    apiVersion: noobaa.io/v1alpha1
    kind: NooBaa
    metadata:
      name: noobaa
      namespace: openshift-storage
    spec:
      dbResources:
        requests:
          cpu: 0.5
          memory: 1Gi
      coreResources:
        requests:
          cpu: 0.5
          memory: 1Gi

Create the NooBaa object:

    $ oc create -f noobaa.yml

Create the BackingStore CR configuration file, bs.yml, with the following content:

    apiVersion: noobaa.io/v1alpha1
    kind: BackingStore
    metadata:
      finalizers:
      - noobaa.io/finalizer
      labels:
        app: noobaa
      name: mcg-pv-pool-bs
      namespace: openshift-storage
    spec:
      pvPool:
        numVolumes: 3
        resources:
          requests:
            storage: 50Gi
        storageClass: gp2
      type: pv-pool

Create the BackingStore object:

    $ oc create -f bs.yml

Create the BucketClass CR configuration file, bc.yml, with the following content:

    apiVersion: noobaa.io/v1alpha1
    kind: BucketClass
    metadata:
      labels:
        app: noobaa
      name: mcg-pv-pool-bc
      namespace: openshift-storage
    spec:
      placementPolicy:
        tiers:
        - backingStores:
          - mcg-pv-pool-bs
          placement: Spread

Create the BucketClass object:

    $ oc create -f bc.yml

Create the ObjectBucketClaim CR configuration file, obc.yml, with the following content:

    apiVersion: objectbucket.io/v1alpha1
    kind: ObjectBucketClaim
    metadata:
      name: migstorage
      namespace: openshift-storage
    spec:
      bucketName: migstorage
      storageClassName: openshift-storage.noobaa.io
      additionalConfig:
        bucketclass: mcg-pv-pool-bc

Record the bucket name (bucketName) for adding the replication repository to the MTC web console.

Create the ObjectBucketClaim object:

    $ oc create -f obc.yml

Watch the resource creation process to verify that the ObjectBucketClaim status is Bound:

    $ watch -n 30 'oc get -n openshift-storage objectbucketclaim migstorage -o yaml'

This process can take five to ten minutes.

Obtain and record the following values, which are required when you add the replication repository to the MTC web console:

S3 endpoint:

    $ oc get route -n openshift-storage s3

S3 provider access key:

    $ oc get secret -n openshift-storage migstorage -o go-template='{{ .data.AWS_ACCESS_KEY_ID }}' | base64 --decode

S3 provider secret access key:

    $ oc get secret -n openshift-storage migstorage -o go-template='{{ .data.AWS_SECRET_ACCESS_KEY }}' | base64 --decode
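The two secret commands pipe their output through base64 --decode because the .data fields of a Secret are stored base64-encoded. The following is a minimal sketch of that decode step on its own, so that it can be tried without a cluster. The helper name decode_secret_value and the sample value are illustrative assumptions, not part of MTC or of a real credential.

```shell
# Hypothetical helper: decode one base64-encoded value taken from the
# .data section of a Secret.
decode_secret_value() {
    printf '%s' "$1" | base64 --decode
}

# "bWlnc3RvcmFnZQ==" is the base64 encoding of the sample string "migstorage".
decode_secret_value "bWlnc3RvcmFnZQ=="
```

If a recorded key still looks like base64 text, it was probably copied before this decode step was applied.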

2.3.2. Configuring an AWS S3 storage bucket as a replication repository

You can configure an AWS S3 storage bucket as a replication repository for the Migration Toolkit for Containers (MTC).

Prerequisites

- The AWS S3 storage bucket must be accessible to the source and target clusters.

- You must have the AWS CLI installed.

If you are using the snapshot copy method:

- You must have access to EC2 Elastic Block Storage (EBS).

- The source and target clusters must be in the same region.

- The source and target clusters must have the same storage class.

- The storage class must be compatible with snapshots.

Procedure

Create an AWS S3 bucket:

    $ aws s3api create-bucket \
        --bucket <bucket_name> \
        --region <bucket_region>

Create the IAM user velero:

    $ aws iam create-user --user-name velero

Create an EC2 EBS snapshot policy:

    $ cat > velero-ec2-snapshot-policy.json <<EOF
    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": [
            "ec2:DescribeVolumes",
            "ec2:DescribeSnapshots",
            "ec2:CreateTags",
            "ec2:CreateVolume",
            "ec2:CreateSnapshot",
            "ec2:DeleteSnapshot"
          ],
          "Resource": "*"
        }
      ]
    }
    EOF

Create an AWS S3 access policy for one or for all S3 buckets:

    $ cat > velero-s3-policy.json <<EOF
    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": [
            "s3:GetObject",
            "s3:DeleteObject",
            "s3:PutObject",
            "s3:AbortMultipartUpload",
            "s3:ListMultipartUploadParts"
          ],
          "Resource": [
            "arn:aws:s3:::<bucket_name>/*"
          ]
        },
        {
          "Effect": "Allow",
          "Action": [
            "s3:ListBucket",
            "s3:GetBucketLocation",
            "s3:ListBucketMultipartUploads"
          ],
          "Resource": [
            "arn:aws:s3:::<bucket_name>"
          ]
        }
      ]
    }
    EOF

To grant access to a single S3 bucket, specify the bucket name. To grant access to all S3 buckets, specify * in place of the bucket name, as in the following example:

Example output

    "Resource": [
        "arn:aws:s3:::*"

Attach the EC2 EBS policy to velero:

    $ aws iam put-user-policy \
        --user-name velero \
        --policy-name velero-ebs \
        --policy-document file://velero-ec2-snapshot-policy.json

Attach the AWS S3 policy to velero:

    $ aws iam put-user-policy \
        --user-name velero \
        --policy-name velero-s3 \
        --policy-document file://velero-s3-policy.json

Create an access key for velero:

    $ aws iam create-access-key --user-name velero
    {
      "AccessKey": {
        "UserName": "velero",
        "Status": "Active",
        "CreateDate": "2017-07-31T22:24:41.576Z",
        "SecretAccessKey": <AWS_SECRET_ACCESS_KEY>,
        "AccessKeyId": <AWS_ACCESS_KEY_ID>
      }
    }
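The S3 access policy in this procedure differs only in its Resource entries, depending on whether it covers one bucket or all buckets. The sketch below shows one possible way to generate both variants from a single template. The function name s3_policy is a hypothetical helper, not an AWS or MTC command, and the all-buckets form follows the "arn:aws:s3:::*" example shown in the procedure.

```shell
# Hypothetical helper: print the Velero S3 access policy for one bucket,
# or for all buckets when called with "*".
s3_policy() {
    bucket="$1"
    if [ "$bucket" = "*" ]; then
        object_arn="arn:aws:s3:::*"
    else
        object_arn="arn:aws:s3:::${bucket}/*"
    fi
    cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "s3:GetObject",
        "s3:DeleteObject",
        "s3:PutObject",
        "s3:AbortMultipartUpload",
        "s3:ListMultipartUploadParts"
      ],
      "Resource": [
        "${object_arn}"
      ]
    },
    {
      "Effect": "Allow",
      "Action": [
        "s3:ListBucket",
        "s3:GetBucketLocation",
        "s3:ListBucketMultipartUploads"
      ],
      "Resource": [
        "arn:aws:s3:::${bucket}"
      ]
    }
  ]
}
EOF
}
```

For example, s3_policy my-bucket > velero-s3-policy.json writes a single-bucket policy (my-bucket is a placeholder), and s3_policy '*' writes the all-buckets variant. The quotes around * keep the shell from expanding it to file names.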

2.3.3. Configuring a Google Cloud Provider storage bucket as a replication repository

You can configure a Google Cloud Provider (GCP) storage bucket as a replication repository for the Migration Toolkit for Containers (MTC).

Prerequisites

- The GCP storage bucket must be accessible to the source and target clusters.

- You must have gsutil installed.

If you are using the snapshot copy method:

- The source and target clusters must be in the same region.

- The source and target clusters must have the same storage class.

- The storage class must be compatible with snapshots.

Procedure

Log in to gsutil:

    $ gsutil init

Example output

    Welcome! This command will take you through the configuration of gcloud.

    Your current configuration has been set to: [default]

    To continue, you must login. Would you like to login (Y/n)?

Set the BUCKET variable:

    $ BUCKET=<bucket_name>

Replace <bucket_name> with your bucket name.

Create a storage bucket:

    $ gsutil mb gs://$BUCKET/

Set the PROJECT_ID variable to your active project:

    $ PROJECT_ID=`gcloud config get-value project`

Create a velero IAM service account:

    $ gcloud iam service-accounts create velero \
        --display-name "Velero Storage"

Create the SERVICE_ACCOUNT_EMAIL variable:

    $ SERVICE_ACCOUNT_EMAIL=`gcloud iam service-accounts list \
        --filter="displayName:Velero Storage" \
        --format 'value(email)'`

Create the ROLE_PERMISSIONS variable:

    $ ROLE_PERMISSIONS=(
        compute.disks.get
        compute.disks.create
        compute.disks.createSnapshot
        compute.snapshots.get
        compute.snapshots.create
        compute.snapshots.useReadOnly
        compute.snapshots.delete
        compute.zones.get
    )

Create the velero.server custom role:

    $ gcloud iam roles create velero.server \
        --project $PROJECT_ID \
        --title "Velero Server" \
        --permissions "$(IFS=","; echo "${ROLE_PERMISSIONS[*]}")"

Add IAM policy binding to the project:

    $ gcloud projects add-iam-policy-binding $PROJECT_ID \
        --member serviceAccount:$SERVICE_ACCOUNT_EMAIL \
        --role projects/$PROJECT_ID/roles/velero.server

Update the IAM service account:

    $ gsutil iam ch serviceAccount:$SERVICE_ACCOUNT_EMAIL:objectAdmin gs://${BUCKET}

Save the IAM service account keys to the credentials-velero file in the current directory:

    $ gcloud iam service-accounts keys create credentials-velero \
        --iam-account $SERVICE_ACCOUNT_EMAIL
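The --permissions argument in the custom role step joins the ROLE_PERMISSIONS array into one comma-separated string by setting IFS inside a command substitution. The sketch below isolates that joining idiom. It uses positional parameters and "$*", which follow the same first-character-of-IFS rule as "${ROLE_PERMISSIONS[*]}", so that it also runs in a plain POSIX shell. The function name join_with_commas is a hypothetical helper.

```shell
# Hypothetical helper: join all arguments with commas.
# The parentheses run the body in a subshell, so the changed IFS does not
# leak into the calling shell.
join_with_commas() {
    ( IFS=","; printf '%s\n' "$*" )
}

join_with_commas compute.disks.get compute.disks.create compute.snapshots.get
# prints: compute.disks.get,compute.disks.create,compute.snapshots.get
```

Keeping the IFS change inside a subshell or command substitution matters because a changed IFS in the interactive shell would alter how later unquoted variables are split.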

2.3.4. Configuring a Microsoft Azure Blob storage container as a replication repository

You can configure a Microsoft Azure Blob storage container as a replication repository for the Migration Toolkit for Containers (MTC).

Prerequisites

- You must have an Azure storage account.

- You must have the Azure CLI installed.

- The Azure Blob storage container must be accessible to the source and target clusters.

If you are using the snapshot copy method:

- The source and target clusters must be in the same region.

- The source and target clusters must have the same storage class.

- The storage class must be compatible with snapshots.

Procedure

Set the AZURE_RESOURCE_GROUP variable:

    $ AZURE_RESOURCE_GROUP=Velero_Backups

Create an Azure resource group:

    $ az group create -n $AZURE_RESOURCE_GROUP --location <CentralUS>

Replace <CentralUS> with your location.

Set the AZURE_STORAGE_ACCOUNT_ID variable:

    $ AZURE_STORAGE_ACCOUNT_ID=velerobackups

Create an Azure storage account:

    $ az storage account create \
        --name $AZURE_STORAGE_ACCOUNT_ID \
        --resource-group $AZURE_RESOURCE_GROUP \
        --sku Standard_GRS \
        --encryption-services blob \
        --https-only true \
        --kind BlobStorage \
        --access-tier Hot

Set the BLOB_CONTAINER variable:

    $ BLOB_CONTAINER=velero

Create an Azure Blob storage container:

    $ az storage container create \
        -n $BLOB_CONTAINER \
        --public-access off \
        --account-name $AZURE_STORAGE_ACCOUNT_ID

Create a service principal and credentials for velero:

    $ AZURE_SUBSCRIPTION_ID=`az account list --query '[?isDefault].id' -o tsv` \
      AZURE_TENANT_ID=`az account list --query '[?isDefault].tenantId' -o tsv` \
      AZURE_CLIENT_SECRET=`az ad sp create-for-rbac --name "velero" --role "Contributor" --query 'password' -o tsv` \
      AZURE_CLIENT_ID=`az ad sp list --display-name "velero" --query '[0].appId' -o tsv`

Save the service principal credentials in the credentials-velero file:

    $ cat << EOF > ./credentials-velero
    AZURE_SUBSCRIPTION_ID=${AZURE_SUBSCRIPTION_ID}
    AZURE_TENANT_ID=${AZURE_TENANT_ID}
    AZURE_CLIENT_ID=${AZURE_CLIENT_ID}
    AZURE_CLIENT_SECRET=${AZURE_CLIENT_SECRET}
    AZURE_RESOURCE_GROUP=${AZURE_RESOURCE_GROUP}
    AZURE_CLOUD_NAME=AzurePublicCloud
    EOF
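Because the credentials-velero file is written from shell variables, any az command that failed earlier leaves an empty value in the file without raising an error. The following sketch is one possible sanity check before the file contents are pasted into the MTC web console. The function name check_velero_credentials is a hypothetical helper, and the key list is taken from the here-document in the last step of this procedure.

```shell
# Hypothetical helper: succeed only if every expected key in the
# credentials file is present with a non-empty value.
check_velero_credentials() {
    file="$1"
    for key in AZURE_SUBSCRIPTION_ID AZURE_TENANT_ID AZURE_CLIENT_ID \
               AZURE_CLIENT_SECRET AZURE_RESOURCE_GROUP AZURE_CLOUD_NAME; do
        # "..*" requires at least one character after the equals sign.
        if ! grep -q "^${key}=..*" "$file"; then
            echo "missing or empty: ${key}" >&2
            return 1
        fi
    done
}
```

A typical call is check_velero_credentials ./credentials-velero. A non-zero exit status names the first variable that needs to be set again.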

2.4. Migrating your applications

You can migrate your applications by using the Migration Toolkit for Containers (MTC) web console or on the command line.

2.4.1. Prerequisites

The Migration Toolkit for Containers (MTC) has the following prerequisites:

- You must be logged in as a user with cluster-admin privileges on all clusters.

- The MTC version must be the same on all clusters.

Clusters:

- The source cluster must be upgraded to the latest MTC z-stream release.

- The cluster on which the migration-controller pod is running must have unrestricted network access to the other clusters.

- The clusters must have unrestricted network access to each other.

- The clusters must have unrestricted network access to the replication repository.

- The clusters must be able to communicate using OpenShift routes on port 443.

- The clusters must have no critical conditions.

- The clusters must be in a ready state.

Volume migration:

- The persistent volumes (PVs) must be valid.

- The PVs must be bound to persistent volume claims.

- If you copy the PVs by using the move method, the clusters must have unrestricted network access to the remote volume.

If you copy the PVs by using the snapshot copy method, the following prerequisites apply:

- The cloud provider must support snapshots.

- The volumes must have the same cloud provider.

- The volumes must be located in the same geographic region.

- The volumes must have the same storage class.

- If you perform a direct volume migration in a proxy environment, you must configure an Stunnel TCP proxy.

- If you perform a direct image migration, you must expose the internal registry of the source cluster to external traffic.

2.4.1.1. Creating a CA certificate bundle file

If you use a self-signed certificate to secure a cluster or a replication repository for the Migration Toolkit for Containers (MTC), certificate verification might fail with the following error message: Certificate signed by unknown authority.

You can create a custom CA certificate bundle file and upload it in the MTC web console when you add a cluster or a replication repository.
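The procedure in this section pipes the openssl s_client output through a sed range expression that keeps only the PEM certificate blocks. The sketch below applies the same sed expression to simulated input so that the filtering can be observed without a network connection. The function name extract_certificates and the sample lines are illustrative assumptions, and the certificate body is a placeholder, not a valid certificate.

```shell
# Hypothetical helper: keep only the PEM certificate blocks from stdin,
# using the same sed range expression as the procedure.
extract_certificates() {
    sed -ne '/-BEGIN CERTIFICATE-/,/-END CERTIFICATE-/p'
}

# Simulated s_client output: diagnostic lines surrounding one certificate.
printf '%s\n' \
    'CONNECTED(00000003)' \
    '-----BEGIN CERTIFICATE-----' \
    'MIIBsampleonly' \
    '-----END CERTIFICATE-----' \
    'Verify return code: 0 (ok)' | extract_certificates
```

Only the three lines from BEGIN CERTIFICATE through END CERTIFICATE are printed. The surrounding diagnostic lines are dropped, which is why the resulting file can be uploaded as a CA bundle.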

Procedure

Download a CA certificate from a remote endpoint and save it as a CA bundle file:

    $ echo -n | openssl s_client -connect <host_FQDN>:<port> \
      | sed -ne '/-BEGIN CERTIFICATE-/,/-END CERTIFICATE-/p' > <ca_bundle.cert>

2.4.1.2. Configuring a proxy for direct volume migration

If you are performing direct volume migration from a source cluster behind a proxy, you must configure an Stunnel proxy in the MigrationController custom resource (CR). Stunnel creates a transparent tunnel between the source and target clusters for the TCP connection without changing the certificates.

Direct volume migration supports only one proxy. The source cluster cannot access the route of the target cluster if the target cluster is also behind a proxy.

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

Procedure

- Log in to the cluster on which the MigrationController pod runs.

Get the MigrationController CR manifest:

    $ oc get migrationcontroller <migration_controller> -n openshift-migration

Add the stunnel_tcp_proxy parameter:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigrationController
    metadata:
      name: migration-controller
      namespace: openshift-migration
    ...
    spec:
      stunnel_tcp_proxy: <stunnel_proxy>

For <stunnel_proxy>, specify the Stunnel proxy: http://<user_name>:<password>@<ip_address>:<port>.

- Save the manifest as migration-controller.yaml.

Apply the updated manifest:

    $ oc replace -f migration-controller.yaml -n openshift-migration

2.4.1.3. Writing an Ansible playbook for a migration hook

You can write an Ansible playbook to use as a migration hook. The hook is added to a migration plan by using the MTC web console or by specifying values for the spec.hooks parameters in the MigPlan custom resource (CR) manifest.

The Ansible playbook is mounted onto a hook container as a config map. The hook container runs as a job, using the cluster, service account, and namespace specified in the MigPlan CR. The hook container uses a specified service account token so that the tasks do not require authentication before they run in the cluster.

2.4.1.3.1. Ansible modules

You can use the Ansible shell module to run oc commands.

Example shell module

    - hosts: localhost
      gather_facts: false
      tasks:
      - name: get pod name
        shell: oc get po --all-namespaces

You can use kubernetes.core modules, such as k8s_info, to interact with Kubernetes resources.

Example k8s_info module

    - hosts: localhost
      gather_facts: false
      tasks:
      - name: Get pod
        k8s_info:
          kind: pods
          api: v1
          namespace: openshift-migration
          name: "{{ lookup( 'env', 'HOSTNAME') }}"
        register: pods

      - name: Print pod name
        debug:
          msg: "{{ pods.resources[0].metadata.name }}"

You can use the fail module to produce a non-zero exit status in cases where a non-zero exit status would not normally be produced, ensuring that the success or failure of a hook is detected. Hooks run as jobs and the success or failure status of a hook is based on the exit status of the job container.

Example fail module

    - hosts: localhost
      gather_facts: false
      tasks:
      - name: Set a boolean
        set_fact:
          do_fail: true

      - name: "fail"
        fail:
          msg: "Cause a failure"
        when: do_fail

2.4.1.3.2. Environment variables

The MigPlan CR name and migration namespaces are passed as environment variables to the hook container. These variables are accessed by using the lookup plug-in.

Example environment variables

    - hosts: localhost
      gather_facts: false
      tasks:
      - set_fact:
          namespaces: "{{ (lookup( 'env', 'migration_namespaces')).split(',') }}"

      - debug:
          msg: "{{ item }}"
        with_items: "{{ namespaces }}"

      - debug:
          msg: "{{ lookup( 'env', 'migplan_name') }}"

2.4.1.4. Additional resources

2.4.2. Migrating your applications using the MTC web console

You can configure clusters and a replication repository by using the MTC web console. Then, you can create and run a migration plan.

2.4.2.1. Launching the MTC web console

You can launch the Migration Toolkit for Containers (MTC) web console in a browser.

Prerequisites

- The MTC web console must have network access to the OpenShift Container Platform web console.

- The MTC web console must have network access to the OAuth authorization server.

Procedure

- Log in to the OpenShift Container Platform cluster on which you have installed MTC.

Obtain the MTC web console URL by entering the following command:

    $ oc get -n openshift-migration route/migration -o go-template='https://{{ .spec.host }}'

The output resembles the following: https://migration-openshift-migration.apps.cluster.openshift.com.

- Launch a browser and navigate to the MTC web console.

Note: If you try to access the MTC web console immediately after installing the Migration Toolkit for Containers Operator, the console might not load because the Operator is still configuring the cluster. Wait a few minutes and retry.

- If you are using self-signed CA certificates, you will be prompted to accept the CA certificate of the source cluster API server. The web page guides you through the process of accepting the remaining certificates.

- Log in with your OpenShift Container Platform username and password.

2.4.2.2. Adding a cluster to the Migration Toolkit for Containers web console

You can add a cluster to the Migration Toolkit for Containers (MTC) web console.

Prerequisites

If you are using Azure snapshots to copy data:

- You must specify the Azure resource group name for the cluster.

- The clusters must be in the same Azure resource group.

- The clusters must be in the same geographic location.

Procedure

- Log in to the cluster.

Obtain the migration-controller service account token:

    $ oc sa get-token migration-controller -n openshift-migration

Example output

    eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJtaWciLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlY3JldC5uYW1lIjoibWlnLXRva2VuLWs4dDJyIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6Im1pZyIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6ImE1YjFiYWMwLWMxYmYtMTFlOS05Y2NiLTAyOWRmODYwYjMwOCIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDptaWc6bWlnIn0.xqeeAINK7UXpdRqAtOj70qhBJPeMwmgLomV9iFxr5RoqUgKchZRG2J2rkqmPm6vr7K-cm7ibD1IBpdQJCcVDuoHYsFgV4mp9vgOfn9osSDp2TGikwNz4Az95e81xnjVUmzh-NjDsEpw71DH92iHV_xt2sTwtzftS49LpPW2LjrV0evtNBP_t_RfskdArt5VSv25eORl7zScqfe1CiMkcVbf2UqACQjo3LbkpfN26HAioO2oH0ECPiRzT0Xyh-KwFutJLS9Xgghyw-LD9kPKcE_xbbJ9Y4Rqajh7WdPYuB0Jd9DPVrslmzK-F6cgHHYoZEv0SvLQi-PO0rpDrcjOEQQ

- In the MTC web console, click Clusters.

- Click Add cluster.

Fill in the following fields:

- Cluster name: The cluster name can contain lower-case letters (a-z) and numbers (0-9). It must not contain spaces or international characters.

- URL: Specify the API server URL, for example, https://<www.example.com>:8443.

- Service account token: Paste the migration-controller service account token.

- Exposed route host to image registry: If you are using direct image migration, specify the exposed route to the image registry of the source cluster, for example, www.example.apps.cluster.com.

  You can specify a port. The default port is 5000.

- Azure cluster: You must select this option if you use Azure snapshots to copy your data.

- Azure resource group: This field is displayed if Azure cluster is selected. Specify the Azure resource group.

- Require SSL verification: Optional: Select this option to verify SSL connections to the cluster.

- CA bundle file: This field is displayed if Require SSL verification is selected. If you created a custom CA certificate bundle file for self-signed certificates, click Browse, select the CA bundle file, and upload it.

Click Add cluster.

The cluster appears in the Clusters list.
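The Cluster name field in this procedure accepts lower-case letters and numbers and rejects spaces and international characters. The sketch below is one way to check a candidate name against that stated rule before entering it. The function name is_valid_cluster_name is a hypothetical helper, and the assumption that no other characters are allowed comes only from the field description above, not from the MTC source code.

```shell
# Hypothetical helper: succeed if the name is non-empty and contains only
# the characters a-z and 0-9. The characters are listed explicitly so the
# check does not depend on locale-specific range handling.
is_valid_cluster_name() {
    case "$1" in
        ''|*[!abcdefghijklmnopqrstuvwxyz0123456789]*) return 1 ;;
        *) return 0 ;;
    esac
}

if is_valid_cluster_name "sourcecluster41"; then
    echo "name accepted"
fi
```

A name such as "Source Cluster" fails this check because of the upper-case letters and the space.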

2.4.2.3. Adding a replication repository to the MTC web console

You can add an object storage bucket as a replication repository to the Migration Toolkit for Containers (MTC) web console.

Prerequisites

- You must configure an object storage bucket for migrating the data.

Procedure

- In the MTC web console, click Replication repositories.

- Click Add repository.

Select a Storage provider type and fill in the following fields:

AWS for AWS S3, MCG, and generic S3 providers:

- Replication repository name: Specify the replication repository name in the MTC web console.

- S3 bucket name: Specify the name of the S3 bucket you created.

- S3 bucket region: Specify the S3 bucket region. Required for AWS S3. Optional for other S3 providers.

- S3 endpoint: Specify the URL of the S3 service, not the bucket, for example, https://<s3-storage.apps.cluster.com>. Required for a generic S3 provider. You must use the https:// prefix.

- S3 provider access key: Specify the <AWS_ACCESS_KEY_ID> for AWS or the S3 provider access key for MCG.

- S3 provider secret access key: Specify the <AWS_SECRET_ACCESS_KEY> for AWS or the S3 provider secret access key for MCG.

- Require SSL verification: Clear this check box if you are using a generic S3 provider.

- If you use a custom CA bundle, click Browse and browse to the Base64-encoded CA bundle file.

GCP:

- Replication repository name: Specify the replication repository name in the MTC web console.

- GCP bucket name: Specify the name of the GCP bucket.

- GCP credential JSON blob: Specify the string in the credentials-velero file.

Azure:

- Replication repository name: Specify the replication repository name in the MTC web console.

- Azure resource group: Specify the resource group of the Azure Blob storage.

- Azure storage account name: Specify the Azure Blob storage account name.

- Azure credentials - INI file contents: Specify the string in the credentials-velero file.

- Click Add repository and wait for connection validation.

Click Close.

The new repository appears in the Replication repositories list.

2.4.2.4. Creating a migration plan in the MTC web console

You can create a migration plan in the Migration Toolkit for Containers (MTC) web console.

Prerequisites

- You must be logged in as a user with cluster-admin privileges on all clusters.

- You must ensure that the same MTC version is installed on all clusters.

- You must add the clusters and the replication repository to the MTC web console.

- If you want to use the move data copy method to migrate a persistent volume (PV), the source and target clusters must have uninterrupted network access to the remote volume.

- If you want to use direct image migration, the MigCluster custom resource manifest of the source cluster must specify the exposed route of the internal image registry.

Procedure

- In the MTC web console, click Migration plans.

- Click Add migration plan.

Enter the Plan name and click Next.

The migration plan name must not exceed 253 lower-case alphanumeric characters (a-z, 0-9) and must not contain spaces or underscores (_).

- Select a Source cluster.

- Select a Target cluster.

- Select a Replication repository.

- Select the projects to be migrated and click Next.

- Select a Source cluster, a Target cluster, and a Repository, and click Next.

- On the Namespaces page, select the projects to be migrated and click Next.

On the Persistent volumes page, click a Migration type for each PV:

- The Copy option copies the data from the PV of a source cluster to the replication repository and then restores the data on a newly created PV, with similar characteristics, in the target cluster.

- The Move option unmounts a remote volume, for example, NFS, from the source cluster, creates a PV resource on the target cluster pointing to the remote volume, and then mounts the remote volume on the target cluster. Applications running on the target cluster use the same remote volume that the source cluster was using.

- Click Next.

On the Copy options page, select a Copy method for each PV:

- Snapshot copy backs up and restores data using the cloud provider’s snapshot functionality. It is significantly faster than Filesystem copy.

Filesystem copy backs up the files on the source cluster and restores them on the target cluster.

The file system copy method is required for direct volume migration.

- You can select Verify copy to verify data migrated with Filesystem copy. Data is verified by generating a checksum for each source file and checking the checksum after restoration. Data verification significantly reduces performance.

Select a Target storage class.

If you selected Filesystem copy, you can change the target storage class.

- Click Next.

On the Migration options page, the Direct image migration option is selected if you specified an exposed image registry route for the source cluster. The Direct PV migration option is selected if you are migrating data with Filesystem copy.

The direct migration options copy images and files directly from the source cluster to the target cluster. This option is much faster than copying images and files from the source cluster to the replication repository and then from the replication repository to the target cluster.

- Click Next.

Optional: On the Hooks page, click Add Hook to add a hook to the migration plan.

A hook runs custom code. You can add up to four hooks to a single migration plan. Each hook runs during a different migration step.

- Enter the name of the hook to display in the web console.

- If the hook is an Ansible playbook, select Ansible playbook and click Browse to upload the playbook or paste the contents of the playbook in the field.

- Optional: Specify an Ansible runtime image if you are not using the default hook image.

If the hook is not an Ansible playbook, select Custom container image and specify the image name and path.

A custom container image can include Ansible playbooks.

- Select Source cluster or Target cluster.

- Enter the Service account name and the Service account namespace.

Select the migration step for the hook:

- preBackup: Before the application workload is backed up on the source cluster

- postBackup: After the application workload is backed up on the source cluster

- preRestore: Before the application workload is restored on the target cluster

- postRestore: After the application workload is restored on the target cluster

- Click Add.

Click Finish.

The migration plan is displayed in the Migration plans list.

2.4.2.5. Running a migration plan in the MTC web console

You can stage or migrate applications and data with the migration plan you created in the Migration Toolkit for Containers (MTC) web console.

During migration, MTC sets the reclaim policy of migrated persistent volumes (PVs) to Retain on the target cluster.

The Backup custom resource contains a PVOriginalReclaimPolicy annotation that indicates the original reclaim policy. You can manually restore the reclaim policy of the migrated PVs.
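Restoring the reclaim policy manually amounts to patching the spec.persistentVolumeReclaimPolicy field of each migrated PV. The sketch below only builds the patch document for a chosen policy. The function name reclaim_policy_patch is a hypothetical helper, and the policy to restore has to be read from the PVOriginalReclaimPolicy annotation described above, whose exact key is not shown in this section.

```shell
# Hypothetical helper: print a JSON patch body that sets the reclaim policy
# of a persistent volume. Only the standard Kubernetes policies are accepted.
reclaim_policy_patch() {
    case "$1" in
        Retain|Delete|Recycle)
            printf '{"spec":{"persistentVolumeReclaimPolicy":"%s"}}\n' "$1"
            ;;
        *)
            echo "unsupported reclaim policy: $1" >&2
            return 1
            ;;
    esac
}

reclaim_policy_patch Delete
# prints: {"spec":{"persistentVolumeReclaimPolicy":"Delete"}}
```

The output can be passed to the patch command on the target cluster, for example oc patch pv <pv_name> -p "$(reclaim_policy_patch Delete)", where <pv_name> is the migrated volume.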

Prerequisites

The MTC web console must contain the following:

- Source cluster in a Ready state

- Target cluster in a Ready state

- Replication repository

- Valid migration plan

Procedure

- Log in to the source cluster.

Delete old images:

    $ oc adm prune images

- Log in to the MTC web console and click Migration plans.

- Click the Options menu next to a migration plan and select Stage to copy data from the source cluster to the target cluster without stopping the application.

  You can run Stage multiple times to reduce the actual migration time.

- When you are ready to migrate the application workload, click the Options menu beside a migration plan and select Migrate.

- Optional: In the Migrate window, you can select Do not stop applications on the source cluster during migration.

- Click Migrate.

When the migration is complete, verify that the application migrated successfully in the OpenShift Container Platform web console:

- Click Home → Projects.

- Click the migrated project to view its status.

- In the Routes section, click Location to verify that the application is functioning, if applicable.

- Click Workloads → Pods to verify that the pods are running in the migrated namespace.

- Click Storage → Persistent volumes to verify that the migrated persistent volume is correctly provisioned.

2.4.3. Migrating your applications from the command line

You can migrate your applications on the command line by using the MTC custom resources (CRs).

You can migrate applications from a local cluster to a remote cluster, from a remote cluster to a local cluster, and between remote clusters.

MTC terminology

The following terms are relevant for configuring clusters:

- host cluster:

  - The migration-controller pod runs on the host cluster.

  - A host cluster does not require an exposed secure registry route for direct image migration.

- Local cluster: The local cluster is often the same as the host cluster but this is not a requirement.

- Remote cluster:

  - A remote cluster must have an exposed secure registry route for direct image migration.

  - A remote cluster must have a Secret CR containing the migration-controller service account token.

The following terms are relevant for performing a migration:

- Source cluster: Cluster from which the applications are migrated.

- Destination cluster: Cluster to which the applications are migrated.

2.4.3.1. Migrating your applications with the Migration Toolkit for Containers API

You can migrate your applications on the command line with the Migration Toolkit for Containers (MTC) API.

You can migrate applications from a local cluster to a remote cluster, from a remote cluster to a local cluster, and between remote clusters.

This procedure describes how to perform indirect migration and direct migration:

- Indirect migration: Images, volumes, and Kubernetes objects are copied from the source cluster to the replication repository and then from the replication repository to the destination cluster.

- Direct migration: Images or volumes are copied directly from the source cluster to the destination cluster. Direct image migration and direct volume migration have significant performance benefits.

You create the following custom resources (CRs) to perform a migration:

- MigCluster CR: Defines a host, local, or remote cluster

  The migration-controller pod runs on the host cluster.

- Secret CR: Contains credentials for a remote cluster or storage

- MigStorage CR: Defines a replication repository

  Different storage providers require different parameters in the MigStorage CR manifest.

- MigPlan CR: Defines a migration plan

- MigMigration CR: Performs a migration defined in an associated MigPlan

  You can create multiple MigMigration CRs for a single MigPlan CR for the following purposes:

  - To perform stage migrations, which copy most of the data without stopping the application, before running a migration. Stage migrations improve the performance of the migration.

  - To cancel a migration in progress

  - To roll back a completed migration

Prerequisites

- You must have cluster-admin privileges for all clusters.

- You must install the OpenShift Container Platform CLI (oc).

- You must install the Migration Toolkit for Containers Operator on all clusters.

- The version of the installed Migration Toolkit for Containers Operator must be the same on all clusters.

- You must configure an object storage as a replication repository.

- If you are using direct image migration, you must expose a secure registry route on all remote clusters.

- If you are using direct volume migration, the source cluster must not have an HTTP proxy configured.

Procedure

Create a MigCluster CR manifest for the host cluster called host-cluster.yaml:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigCluster
    metadata:
      name: host
      namespace: openshift-migration
    spec:
      isHostCluster: true

Create a MigCluster CR for the host cluster:

    $ oc create -f host-cluster.yaml -n openshift-migration

Create a Secret CR manifest for each remote cluster called cluster-secret.yaml:

    apiVersion: v1
    kind: Secret
    metadata:
      name: <cluster_secret>
      namespace: openshift-config
    type: Opaque
    data:
      saToken: <sa_token>

For <sa_token>, specify the base64-encoded migration-controller service account (SA) token of the remote cluster.

You can obtain the SA token by running the following command:

    $ oc sa get-token migration-controller -n openshift-migration | base64 -w 0

Create a Secret CR for each remote cluster:

    $ oc create -f cluster-secret.yaml

Create a MigCluster CR manifest for each remote cluster called remote-cluster.yaml:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigCluster
    metadata:
      name: <remote_cluster>
      namespace: openshift-migration
    spec:
      exposedRegistryPath: <exposed_registry_route>
      insecure: false
      isHostCluster: false
      serviceAccountSecretRef:
        name: <remote_cluster_secret>
        namespace: openshift-config
      url: <remote_cluster_url>

- exposedRegistryPath: Optional: Specify the exposed registry route, for example, docker-registry-default.apps.example.com, if you are using direct image migration.

- insecure: SSL verification is enabled if false. CA certificates are not required or checked if true.

- serviceAccountSecretRef.name: Specify the Secret CR of the remote cluster.

- url: Specify the URL of the remote cluster.

Create a MigCluster CR for each remote cluster:

    $ oc create -f remote-cluster.yaml -n openshift-migration

Verify that all clusters are in a Ready state:

    $ oc describe migcluster <cluster_name> -n openshift-migration

Create a Secret CR manifest for the replication repository called storage-secret.yaml:

    apiVersion: v1
    kind: Secret
    metadata:
      namespace: openshift-config
      name: <migstorage_creds>
    type: Opaque
    data:
      aws-access-key-id: <key_id_base64>
      aws-secret-access-key: <secret_key_base64>

AWS credentials are base64-encoded by default. If you are using another storage provider, you must encode your credentials by running the following command with each key:

    $ echo -n "<key>" | base64 -w 0 # (1)

- (1) Specify the key ID or the secret key. Both keys must be base64-encoded.
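The encoding step can be sketched as a pair of shell helpers. The function names and the value my-key are hypothetical placeholders, and the -w 0 and -d options assume GNU coreutils base64. The sketch uses printf '%s' for the same reason the command above uses echo -n: a trailing newline would otherwise be encoded into the credential.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Encode a value as single-line base64 without a trailing newline in the input.
encode_credential() {
  printf '%s' "$1" | base64 -w 0
}

# Decode a base64 value, used here only to check the round trip.
decode_credential() {
  printf '%s' "$1" | base64 -d
}

key_id="my-key"   # hypothetical placeholder, not a real credential
encoded="$(encode_credential "${key_id}")"
echo "${encoded}"   # prints bXkta2V5

# Confirm that decoding returns the original value.
[ "$(decode_credential "${encoded}")" = "${key_id}" ] && echo "round trip OK"
```

Running the round-trip check before you paste a value into storage-secret.yaml is a cheap way to catch a stray newline or a double-encoded key.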

Create the Secret CR for the replication repository:

    $ oc create -f storage-secret.yaml

Create a MigStorage CR manifest for the replication repository called migstorage.yaml:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigStorage
    metadata:
      name: <storage_name>
      namespace: openshift-migration
    spec:
      backupStorageConfig:
        awsBucketName: <bucket_name> # (1)
        credsSecretRef:
          name: <storage_secret_ref> # (2)
          namespace: openshift-config
      backupStorageProvider: <storage_provider_name> # (3)
      volumeSnapshotConfig:
        credsSecretRef:
          name: <storage_secret_ref> # (4)
          namespace: openshift-config
      volumeSnapshotProvider: <storage_provider_name> # (5)

- (1) Specify the bucket name.

- (2) Specify the Secret CR of the object storage. You must ensure that the credentials stored in the Secret CR of the object storage are correct.

- (3) Specify the storage provider.

- (4) Optional: If you are copying data by using snapshots, specify the Secret CR of the object storage. You must ensure that the credentials stored in the Secret CR of the object storage are correct.

- (5) Optional: If you are copying data by using snapshots, specify the storage provider.

Create the MigStorage CR:

    $ oc create -f migstorage.yaml -n openshift-migration

Verify that the MigStorage CR is in a Ready state:

    $ oc describe migstorage <migstorage_name> -n openshift-migration

Create a MigPlan CR manifest called migplan.yaml:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigPlan
    metadata:
      name: <migration_plan>
      namespace: openshift-migration
    spec:
      destMigClusterRef:
        name: host
        namespace: openshift-migration
      indirectImageMigration: true
      indirectVolumeMigration: true
      migStorageRef:
        name: <migstorage_ref>
        namespace: openshift-migration
      namespaces:
      - <application_namespace>
      srcMigClusterRef:
        name: <remote_cluster_ref>
        namespace: openshift-migration

Create the MigPlan CR:

    $ oc create -f migplan.yaml -n openshift-migration

View the MigPlan instance to verify that it is in a Ready state:

    $ oc describe migplan <migplan_name> -n openshift-migration

Create a MigMigration CR manifest called migmigration.yaml:

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigMigration
    metadata:
      name: <migmigration_name>
      namespace: openshift-migration
    spec:
      migPlanRef:
        name: <migplan_name>
        namespace: openshift-migration
      quiescePods: true
      stage: false
      rollback: false

Create the MigMigration CR to start the migration defined in the MigPlan CR:

    $ oc create -f migmigration.yaml -n openshift-migration

Verify the progress of the migration by inspecting the MigMigration CR:

    $ oc describe migmigration <migmigration_name> -n openshift-migration

The output resembles the following:

Example output

    Name:         c8b034c0-6567-11eb-9a4f-0bc004db0fbc
    Namespace:    openshift-migration
    Labels:       migration.openshift.io/migplan-name=django
    Annotations:  openshift.io/touch: e99f9083-6567-11eb-8420-0a580a81020c
    API Version:  migration.openshift.io/v1alpha1
    Kind:         MigMigration
    ...
    Spec:
      Mig Plan Ref:
        Name:       my_application
        Namespace:  openshift-migration
      Stage:        false
    Status:
      Conditions:
        Category:              Advisory
        Last Transition Time:  2021-02-02T15:04:09Z
        Message:               Step: 19/47
        Reason:                InitialBackupCreated
        Status:                True
        Type:                  Running
        Category:              Required
        Last Transition Time:  2021-02-02T15:03:19Z
        Message:               The migration is ready.
        Status:                True
        Type:                  Ready
        Category:              Required
        Durable:               true
        Last Transition Time:  2021-02-02T15:04:05Z
        Message:               The migration registries are healthy.
        Status:                True
        Type:                  RegistriesHealthy
      Itinerary:               Final
      Observed Digest:         7fae9d21f15979c71ddc7dd075cb97061895caac5b936d92fae967019ab616d5
      Phase:                   InitialBackupCreated
      Pipeline:
        Completed:  2021-02-02T15:04:07Z
        Message:    Completed
        Name:       Prepare
        Started:    2021-02-02T15:03:18Z
        Message:    Waiting for initial Velero backup to complete.
        Name:       Backup
        Phase:      InitialBackupCreated
        Progress:
          Backup openshift-migration/c8b034c0-6567-11eb-9a4f-0bc004db0fbc-wpc44: 0 out of estimated total of 0 objects backed up (5s)
        Started:        2021-02-02T15:04:07Z
        Message:        Not started
        Name:           StageBackup
        Message:        Not started
        Name:           StageRestore
        Message:        Not started
        Name:           DirectImage
        Message:        Not started
        Name:           DirectVolume
        Message:        Not started
        Name:           Restore
        Message:        Not started
        Name:           Cleanup
      Start Timestamp:  2021-02-02T15:03:18Z
    Events:
      Type    Reason   Age                 From                     Message
      ----    ------   ----                ----                     -------
      Normal  Running  57s                 migmigration_controller  Step: 2/47
      Normal  Running  57s                 migmigration_controller  Step: 3/47
      Normal  Running  57s (x3 over 57s)   migmigration_controller  Step: 4/47
      Normal  Running  54s                 migmigration_controller  Step: 5/47
      Normal  Running  54s                 migmigration_controller  Step: 6/47
      Normal  Running  52s (x2 over 53s)   migmigration_controller  Step: 7/47
      Normal  Running  51s (x2 over 51s)   migmigration_controller  Step: 8/47
      Normal  Ready    50s (x12 over 57s)  migmigration_controller  The migration is ready.
      Normal  Running  50s                 migmigration_controller  Step: 9/47
      Normal  Running  50s                 migmigration_controller  Step: 10/47
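The Running condition in the example output reports progress as a message such as Step: 19/47. The following shell helper is a minimal sketch that converts such a message into a whole-number percentage, assuming the message keeps exactly this format. The function name step_percent is hypothetical. The commented oc get command assumes that the conditions are exposed under .status.conditions with type and message fields, which the example output suggests but does not confirm.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Convert a message such as "Step: 19/47" into a whole-number percentage.
# Returns a non-zero status if the message does not match that format.
step_percent() {
  local message="$1"
  local pattern='^Step: ([0-9]+)/([0-9]+)$'
  if [[ "${message}" =~ ${pattern} ]]; then
    # Force base 10 so that values with leading zeros are not read as octal.
    local current=$(( 10#${BASH_REMATCH[1]} ))
    local total=$(( 10#${BASH_REMATCH[2]} ))
    if (( total == 0 )); then
      return 1
    fi
    echo $(( current * 100 / total ))
  else
    return 1
  fi
}

# Assumed way to read the message from the cluster (not run here):
# msg="$(oc get migmigration <migmigration_name> -n openshift-migration \
#   -o jsonpath='{.status.conditions[?(@.type=="Running")].message}')"

step_percent "Step: 19/47"   # prints 40
```

The percentage is rounded down by integer division, so it reaches 100 only when the last step is reported.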

2.4.3.2. MTC custom resource manifests

Migration Toolkit for Containers (MTC) uses the following custom resource (CR) manifests to create CRs for migrating applications.

2.4.3.2.1. DirectImageMigration

The DirectImageMigration CR copies images directly from the source cluster to the destination cluster.

    apiVersion: migration.openshift.io/v1alpha1
    kind: DirectImageMigration
    metadata:
      labels:
        controller-tools.k8s.io: "1.0"
      name: <directimagemigration_name>
    spec:
      srcMigClusterRef:
        name: <source_cluster_ref>
        namespace: openshift-migration
      destMigClusterRef:
        name: <destination_cluster_ref>
        namespace: openshift-migration
      namespaces:
      - <namespace>

2.4.3.2.2. DirectImageStreamMigration

The DirectImageStreamMigration CR copies image stream references directly from the source cluster to the destination cluster.

    apiVersion: migration.openshift.io/v1alpha1
    kind: DirectImageStreamMigration
    metadata:
      labels:
        controller-tools.k8s.io: "1.0"
      name: directimagestreammigration_name
    spec:
      srcMigClusterRef:
        name: <source_cluster_ref>
        namespace: openshift-migration
      destMigClusterRef:
        name: <destination_cluster_ref>
        namespace: openshift-migration
      imageStreamRef:
        name: <image_stream_name>
        namespace: <source_image_stream_namespace>
      destNamespace: <destination_image_stream_namespace>

2.4.3.2.3. DirectVolumeMigration

The DirectVolumeMigration CR copies persistent volumes (PVs) directly from the source cluster to the destination cluster.

    apiVersion: migration.openshift.io/v1alpha1
    kind: DirectVolumeMigration
    metadata:
      name: <directvolumemigration_name>
      namespace: openshift-migration
    spec:
      createDestinationNamespaces: false # (1)
      deleteProgressReportingCRs: false # (2)
      destMigClusterRef:
        name: host # (3)
        namespace: openshift-migration
      persistentVolumeClaims:
      - name: <pvc_name> # (4)
        namespace: <pvc_namespace> # (5)
      srcMigClusterRef:
        name: <source_cluster_ref> # (6)
        namespace: openshift-migration

- (1) Namespaces are created for the PVs on the destination cluster if true.

- (2) The DirectVolumeMigrationProgress CRs are deleted after migration if true. The default value is false so that DirectVolumeMigrationProgress CRs are retained for troubleshooting.

- (3) Update the cluster name if the destination cluster is not the host cluster.

- (4) Specify one or more PVCs to be migrated with direct volume migration.

- (5) Specify the namespace of each PVC.

- (6) Specify the MigCluster CR name of the source cluster.
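Because persistentVolumeClaims accepts one or more entries, a small shell helper can generate the list for several PVCs in the same namespace. This is only a sketch: the function name render_pvc_entries, the namespace my-app, and the PVC names db-data and uploads are hypothetical, and the output is indented to sit under spec in the manifest above.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Print one persistentVolumeClaims entry per PVC name.
# Usage: render_pvc_entries <namespace> <pvc_name>...
render_pvc_entries() {
  local namespace="$1"
  shift
  local pvc
  for pvc in "$@"; do
    printf '  - name: %s\n    namespace: %s\n' "${pvc}" "${namespace}"
  done
}

# Emit the key followed by two hypothetical PVC entries.
echo "  persistentVolumeClaims:"
render_pvc_entries "my-app" "db-data" "uploads"
```

You can paste the output into the spec section of a DirectVolumeMigration manifest in place of the single-PVC example.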

2.4.3.2.4. DirectVolumeMigrationProgress

The DirectVolumeMigrationProgress CR shows the progress of the DirectVolumeMigration CR.

    apiVersion: migration.openshift.io/v1alpha1
    kind: DirectVolumeMigrationProgress
    metadata:
      labels:
        controller-tools.k8s.io: "1.0"
      name: directvolumemigrationprogress_name
    spec:
      clusterRef:
        name: source_cluster
        namespace: openshift-migration
      podRef:
        name: rsync_pod
        namespace: openshift-migration

2.4.3.2.5. MigAnalytic

The MigAnalytic CR collects the number of images, Kubernetes resources, and the PV capacity from an associated MigPlan CR.

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigAnalytic
    metadata:
      annotations:
        migplan: <migplan_name> # (1)
      name: miganalytic_name
      namespace: openshift-migration
      labels:
        migplan: <migplan_name> # (2)
    spec:
      analyzeImageCount: true # (3)
      analyzeK8SResources: true # (4)
      analyzePVCapacity: true # (5)
      listImages: false # (6)
      listImagesLimit: 50 # (7)
      migPlanRef:
        name: migplan_name # (8)
        namespace: openshift-migration

- (1) Specify the MigPlan CR name associated with the MigAnalytic CR.

- (2) Specify the MigPlan CR name associated with the MigAnalytic CR.

- (3) Optional: The number of images is returned if true.

- (4) Optional: Returns the number, kind, and API version of the Kubernetes resources if true.

- (5) Optional: Returns the PV capacity if true.

- (6) Returns a list of image names if true. Default is false so that the output is not excessively long.

- (7) Optional: Specify the maximum number of image names to return if listImages is true.

- (8) Specify the MigPlan CR name associated with the MigAnalytic CR.

2.4.3.2.6. MigCluster

The MigCluster CR defines a host, local, or remote cluster.

    apiVersion: migration.openshift.io/v1alpha1
    kind: MigCluster
    metadata:
      labels:
        controller-tools.k8s.io: "1.0"
      name: host # (1)
      namespace: openshift-migration
    spec:
      isHostCluster: true # (2)
      azureResourceGroup: <azure_resource_group>
      caBundle: <ca_bundle_base64>
      insecure: false
      refresh: false
      # The 'restartRestic' parameter is relevant for a source cluster.
      # restartRestic: true
      # The following parameters are relevant for a remote cluster.
      # isHostCluster: false
      # exposedRegistryPath:
      # url: <destination_cluster_url>
      # serviceAccountSecretRef:
      #   name: <source_secret_ref>
      #   namespace: openshift-config

- (1) Optional: Update the cluster name if the migration-controller pod is not running on this cluster.

- (2) The migration-controller pod runs on this cluster if true.

- 3