Chapter 6. Creating and managing serverless applications

6.1. Serverless applications using Knative services

To deploy a serverless application using OpenShift Serverless, you must create a Knative service. A Knative service is a Kubernetes custom resource, defined by a route and a configuration, and described in a YAML file.

Example Knative service YAML

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: hello
  namespace: default
spec:
  template:
    spec:
      containers:
        - image: docker.io/openshift/hello-openshift
          env:
            - name: RESPONSE
              value: "Hello Serverless!"

You can create a serverless application by using one of the following methods:

- Create a Knative service from the OpenShift Container Platform web console.

- Create a Knative service using the kn CLI.

- Create and apply a YAML file.

6.2. Creating serverless applications using the OpenShift Container Platform web console

You can create a serverless application using either the Developer or Administrator perspective in the OpenShift Container Platform web console.

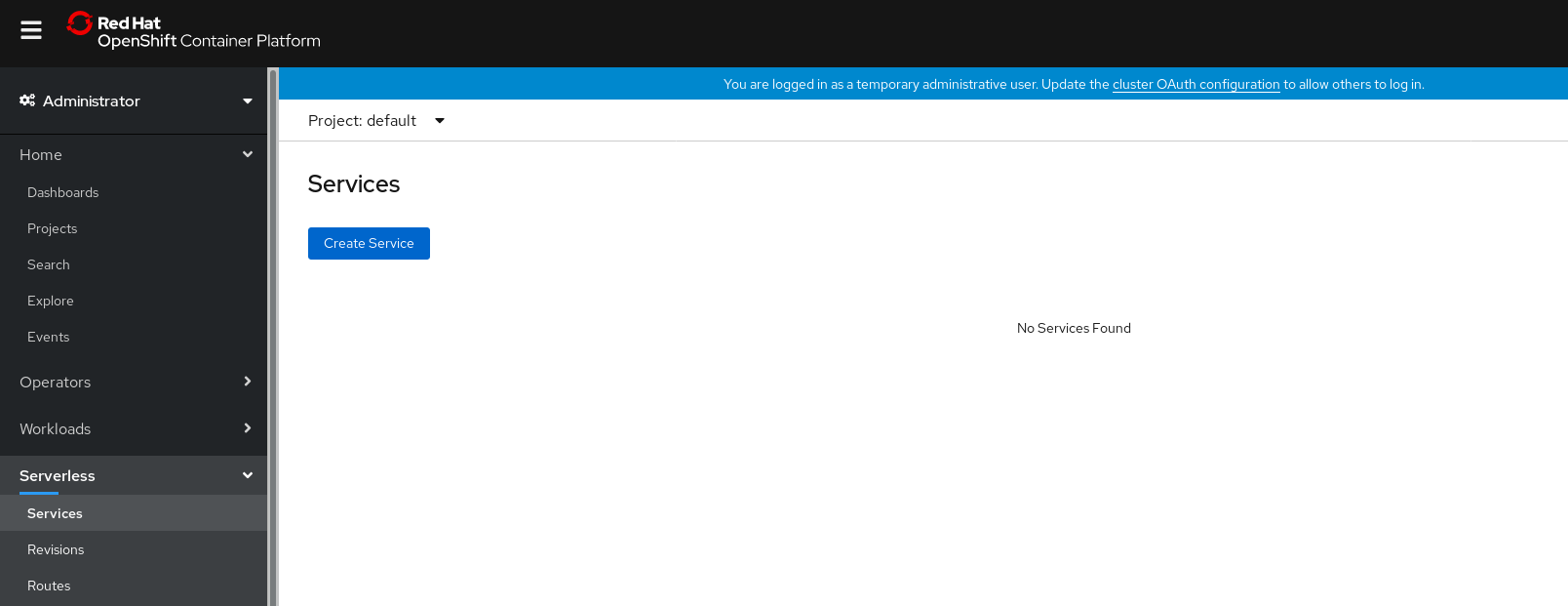

6.2.1. Creating serverless applications using the Administrator perspective

Prerequisites

To create serverless applications using the Administrator perspective, ensure that you have completed the following steps.

- The OpenShift Serverless Operator and Knative Serving are installed.

- You have logged in to the web console and are in the Administrator perspective.

Procedure

- Navigate to the Serverless → Services page.

- Click Create Service.

- Enter the YAML or JSON definition manually, or drag and drop a file into the editor.

- Click Create.
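Because the editor accepts JSON as well as YAML, it can help to see the example service in JSON form. The following is a minimal sketch that writes a JSON equivalent of the example Knative service to a file. The file name hello-service.json is an arbitrary choice for this illustration and does not come from the product documentation.

```shell
# Write a JSON equivalent of the example Knative service to a file.
# The file name hello-service.json is an arbitrary choice for this sketch.
cat > hello-service.json <<'EOF'
{
  "apiVersion": "serving.knative.dev/v1",
  "kind": "Service",
  "metadata": {
    "name": "hello",
    "namespace": "default"
  },
  "spec": {
    "template": {
      "spec": {
        "containers": [
          {
            "image": "docker.io/openshift/hello-openshift",
            "env": [
              { "name": "RESPONSE", "value": "Hello Serverless!" }
            ]
          }
        ]
      }
    }
  }
}
EOF
```

The resulting file can be dragged into the editor, or its contents pasted in manually.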

6.2.2. Creating serverless applications using the Developer perspective

For more information about creating applications using the Developer perspective in OpenShift Container Platform, see the documentation on Creating applications using the Developer perspective.

6.3. Creating serverless applications using the kn CLI

The following procedure describes how you can create a basic serverless application using the kn CLI.

Prerequisites

- OpenShift Serverless Operator and Knative Serving are installed on your cluster.

- You have installed the kn CLI.

Procedure

Create the Knative service by entering the following command:

$ kn service create <service_name> --image <image> --env <key=value>

Example command

$ kn service create hello --image docker.io/openshift/hello-openshift --env RESPONSE="Hello Serverless!"

Example output

Creating service 'hello' in namespace 'default':

  0.271s The Route is still working to reflect the latest desired specification.
  0.580s Configuration "hello" is waiting for a Revision to become ready.
  3.857s ...
  3.861s Ingress has not yet been reconciled.
  4.270s Ready to serve.

Service 'hello' created with latest revision 'hello-bxshg-1' and URL:
http://hello-default.apps-crc.testing
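If you create services repeatedly, the command can be wrapped in a small shell function. The following is a minimal sketch rather than part of the documented procedure. The function name create_hello_service is hypothetical, and the sketch assumes that your version of kn supports the url output format of kn service describe.

```shell
# Hypothetical helper: create a Knative service and print its URL.
# Usage: create_hello_service <service_name> <image> <key=value>
create_hello_service() {
  name=$1
  image=$2
  env_pair=$3

  # Create the service with the same flags as the example command.
  kn service create "$name" --image "$image" --env "$env_pair" || return 1

  # Print only the URL of the service (assumes kn supports "-o url").
  kn service describe "$name" -o url
}
```

For example, calling create_hello_service hello docker.io/openshift/hello-openshift 'RESPONSE=Hello Serverless!' would run the example command and then print the service URL on its own line.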

6.4. Creating serverless applications using YAML

To create a serverless application by using YAML, you must create a YAML file that defines a Service, then apply it by using oc apply.

Procedure

Create a YAML file, then copy the following example into the file:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: hello
  namespace: default
spec:
  template:
    spec:
      containers:
        - image: docker.io/openshift/hello-openshift
          env:
            - name: RESPONSE
              value: "Hello Serverless!"

Navigate to the directory where the YAML file is contained, and deploy the application by applying the YAML file:

$ oc apply -f <filename>

After the Service is created and the application is deployed, Knative creates an immutable Revision for this version of the application.

Knative also performs network programming to create a Route, Ingress, Service, and load balancer for your application, and automatically scales your pods up and down based on traffic, including scaling down inactive pods.
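The YAML procedure can be sketched as a single shell function that writes the example manifest, applies it, and waits for the service to become ready. This is an illustration under stated assumptions, not a documented procedure. The function name deploy_hello, the default file name hello-service.yaml, and the 120 second timeout are all hypothetical choices, and the sketch assumes that the Knative service reports a Ready condition that oc wait can observe.

```shell
# Hypothetical helper: write the example manifest, apply it, and wait for readiness.
# Usage: deploy_hello [manifest_file]
deploy_hello() {
  manifest=${1:-hello-service.yaml}   # file name is an arbitrary choice

  # Write the example Knative service definition from this section.
  cat > "$manifest" <<'EOF'
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: hello
  namespace: default
spec:
  template:
    spec:
      containers:
        - image: docker.io/openshift/hello-openshift
          env:
            - name: RESPONSE
              value: "Hello Serverless!"
EOF

  # Apply the manifest, then block until the service reports the Ready condition.
  oc apply -f "$manifest" || return 1
  oc wait --for=condition=Ready ksvc/hello -n default --timeout=120s
}
```

Waiting on the Ready condition avoids sending requests before the Route and Revision have been reconciled.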

6.5. Verifying your serverless application deployment

To verify that your serverless application has been deployed successfully, you must get the application URL created by Knative, and then send a request to that URL and observe the output.

OpenShift Serverless supports the use of both HTTP and HTTPS URLs; however, the output from oc get ksvc <service_name> always prints URLs using the http:// format.

Procedure

Find the application URL by entering:

$ oc get ksvc <service_name>

Example output

NAME    URL                                LATESTCREATED   LATESTREADY   READY   REASON
hello   http://hello-default.example.com   hello-4wsd2     hello-4wsd2   True

Make a request to your cluster and observe the output.

Example HTTP request

$ curl http://hello-default.example.com

Example HTTPS request

$ curl https://hello-default.example.com

Example output

Hello Serverless!

Optional. If you receive an error relating to a self-signed certificate in the certificate chain, you can add the --insecure flag to the curl command to ignore the error.

Important

Self-signed certificates must not be used in a production deployment. This method is only for testing purposes.

Example command

$ curl https://hello-default.example.com --insecure

Example output

Hello Serverless!

Optional. If your OpenShift Container Platform cluster is configured with a certificate that is signed by a certificate authority (CA) but not yet globally configured for your system, you can pass the path to the certificate to the curl command by using the --cacert flag.

Example command

$ curl https://hello-default.example.com --cacert <file>

Example output

Hello Serverless!
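The two verification steps, finding the URL and sending a request, can be combined in a short shell function. The following is a minimal sketch with a hypothetical function name, check_ksvc. It assumes that the Knative service exposes its URL in the status.url field, which the sketch reads by using a jsonpath output expression.

```shell
# Hypothetical helper: look up the URL of a Knative service and request it.
# Usage: check_ksvc <service_name>
check_ksvc() {
  name=$1

  # Read the URL from the status of the Knative service.
  url=$(oc get ksvc "$name" -o jsonpath='{.status.url}') || return 1
  if [ -z "$url" ]; then
    echo "no URL reported for service $name" >&2
    return 1
  fi

  # -s hides the progress meter; -f makes curl fail on HTTP errors (4xx/5xx).
  curl -sf "$url"
}
```

Because oc get ksvc prints URLs in the http:// format, this sketch sends a plain HTTP request. To test HTTPS, the URL scheme and any certificate flags would need to be adjusted as described in the optional steps above.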

6.6. Interacting with a serverless application using HTTP2 and gRPC

OpenShift Serverless supports only insecure or edge-terminated routes.

Insecure or edge-terminated routes do not support HTTP2 on OpenShift Container Platform. These routes also do not support gRPC because gRPC is transported by HTTP2.

If you use these protocols in your application, you must call the application using the ingress gateway directly. To do this, you must find the ingress gateway’s public address and the application’s specific host.

Procedure

- Find the application host. See the instructions in Verifying your serverless application deployment.

- Find the ingress gateway’s public address:

$ oc -n knative-serving-ingress get svc kourier

Example output

NAME      TYPE           CLUSTER-IP      EXTERNAL-IP                                                             PORT(S)                      AGE
kourier   LoadBalancer   172.30.51.103   a83e86291bcdd11e993af02b7a65e514-33544245.us-east-1.elb.amazonaws.com   80:31380/TCP,443:31390/TCP   67m

The public address is surfaced in the EXTERNAL-IP field. In this case, it would be a83e86291bcdd11e993af02b7a65e514-33544245.us-east-1.elb.amazonaws.com.

- Manually set the host header of your HTTP request to the application’s host, but direct the request itself against the public address of the ingress gateway.

The following example uses the information obtained from the steps in Verifying your serverless application deployment:

Example command

$ curl -H "Host: hello-default.example.com" a83e86291bcdd11e993af02b7a65e514-33544245.us-east-1.elb.amazonaws.com

Example output

Hello Serverless!

You can also make a gRPC request by setting the authority to the application’s host, while directing the request against the ingress gateway directly:

grpc.Dial(
    "a83e86291bcdd11e993af02b7a65e514-33544245.us-east-1.elb.amazonaws.com:80",
    grpc.WithAuthority("hello-default.example.com:80"),
    grpc.WithInsecure(),
)

Note

Ensure that you append the respective port, 80 by default, to both hosts as shown in the previous example.
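The HTTP variant of this procedure can be sketched as a shell function that looks up the gateway address and sends a request with the host header set. This is an illustration only. The function name call_via_gateway is hypothetical, and the sketch assumes that the load balancer reports a host name, as in the example output above. On platforms where the load balancer reports an IP address instead, the jsonpath expression would need to read the ip field.

```shell
# Hypothetical helper: call an application through the ingress gateway.
# Usage: call_via_gateway <application_host>
call_via_gateway() {
  app_host=$1

  # Look up the load balancer host name of the kourier service.
  # On platforms that report an IP address instead, query ".ip".
  gateway=$(oc -n knative-serving-ingress get svc kourier \
    -o jsonpath='{.status.loadBalancer.ingress[0].hostname}') || return 1
  if [ -z "$gateway" ]; then
    echo "no public address reported for the ingress gateway" >&2
    return 1
  fi

  # Send the request to the gateway, with the Host header set to the application host.
  curl -s -H "Host: $app_host" "http://$gateway"
}
```

With the values from this section, calling call_via_gateway hello-default.example.com sends the same request as the example curl command.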