Networking

Configuring and managing cluster networking

Abstract

Chapter 1. Understanding networking

Cluster administrators have several options for exposing applications that run inside a cluster to external traffic and securing network connections:

- Service types, such as node ports or load balancers

- API resources, such as Ingress and Route

By default, Kubernetes allocates each pod an internal IP address for applications running within the pod. Pods and their containers can communicate with each other over the network, but clients outside the cluster do not have networking access. When you expose your application to external traffic, giving each pod its own IP address means that pods can be treated like physical hosts or virtual machines in terms of port allocation, networking, naming, service discovery, load balancing, application configuration, and migration.

Some cloud platforms offer metadata APIs that listen on the 169.254.169.254 IP address, a link-local IP address in the IPv4 169.254.0.0/16 CIDR block.

This CIDR block is not reachable from the pod network. Pods that need access to these IP addresses must be given host network access by setting the spec.hostNetwork field in the pod spec to true.

If you allow a pod host network access, you grant the pod privileged access to the underlying network infrastructure.
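
As a minimal sketch of the setting described above, the following pod manifest enables host network access. Only the spec.hostNetwork field comes from the text; the pod name, container name, image, and command are hypothetical placeholders.

```yaml
# Illustrative only: metadata.name, the container name, the image,
# and the command are hypothetical placeholders.
apiVersion: v1
kind: Pod
metadata:
  name: metadata-client
spec:
  hostNetwork: true   # the pod shares the network namespace of its node
  containers:
  - name: client
    image: registry.example.com/tools/curl:latest
    command: ["sleep", "3600"]
```

Because host network access is privileged, whether a pod like this is admitted depends on the security policy that applies to the account creating it.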

1.1. OpenShift Container Platform DNS

If you are running multiple services, such as front-end and back-end services for use with multiple pods, environment variables are created for user names, service IPs, and more so the front-end pods can communicate with the back-end services. If the service is deleted and recreated, a new IP address can be assigned to the service, which requires the front-end pods to be recreated to pick up the updated values for the service IP environment variable. Additionally, the back-end service must be created before any of the front-end pods to ensure that the service IP is generated properly, and that it can be provided to the front-end pods as an environment variable.

For this reason, OpenShift Container Platform has a built-in DNS so that the services can be reached by the service DNS as well as the service IP/port.
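
To illustrate the difference, the following sketch shows a front-end deployment that addresses the back-end service by its DNS name instead of an injected service IP. It assumes a service named backend in a project named my-project that listens on port 8080, and a cluster domain of cluster.local; all names, the image, and the port are hypothetical.

```yaml
# Illustrative only: names, namespace, image, and port are hypothetical.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: frontend
  namespace: my-project
spec:
  replicas: 1
  selector:
    matchLabels:
      app: frontend
  template:
    metadata:
      labels:
        app: frontend
    spec:
      containers:
      - name: frontend
        image: registry.example.com/my-project/frontend:latest
        env:
        # Service DNS name pattern: <service>.<namespace>.svc.<cluster-domain>
        - name: BACKEND_URL
          value: http://backend.my-project.svc.cluster.local:8080
```

Because the name is resolved at connection time, the front-end pods do not need to be recreated if the back-end service is deleted and recreated with a new IP address.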

1.2. OpenShift Container Platform Ingress Operator

When you create your OpenShift Container Platform cluster, pods and services running on the cluster are each allocated their own IP addresses. The IP addresses are accessible to other pods and services running nearby but are not accessible to outside clients. The Ingress Operator implements the IngressController API and is the component responsible for enabling external access to OpenShift Container Platform cluster services.

The Ingress Operator makes it possible for external clients to access your service by deploying and managing one or more HAProxy-based Ingress Controllers to handle routing. You can use the Ingress Operator to route traffic by specifying OpenShift Container Platform Route and Kubernetes Ingress resources. Configurations within the Ingress Controller, such as the ability to define endpointPublishingStrategy type and internal load balancing, provide ways to publish Ingress Controller endpoints.

1.2.1. Comparing routes and Ingress

The Kubernetes Ingress resource in OpenShift Container Platform implements the Ingress Controller with a shared router service that runs as a pod inside the cluster. The most common way to manage Ingress traffic is with the Ingress Controller. You can scale and replicate this pod like any other regular pod. This router service is based on HAProxy, which is an open source load balancer solution.

The OpenShift Container Platform route provides Ingress traffic to services in the cluster. Routes provide advanced features that might not be supported by standard Kubernetes Ingress Controllers, such as TLS re-encryption, TLS passthrough, and split traffic for blue-green deployments.

Ingress traffic accesses services in the cluster through a route. Routes and Ingress are the main resources for handling Ingress traffic. Ingress provides features similar to a route, such as accepting external requests and delegating them based on the route. However, with Ingress you can only allow certain types of connections: HTTP/2, HTTPS and server name indication (SNI), and TLS with certificate. In OpenShift Container Platform, routes are generated to meet the conditions specified by the Ingress resource.
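
The route-specific features mentioned above, TLS re-encryption and split traffic, can be sketched in a single Route manifest. This is an illustration rather than a recommended configuration: the route name, host, service names, named target port, and weights are all hypothetical.

```yaml
# Illustrative only: route name, host, service names, target port,
# and weights are hypothetical.
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: frontend
spec:
  host: www.example.com
  to:
    kind: Service
    name: frontend-blue
    weight: 90            # share of traffic sent to the current version
  alternateBackends:
  - kind: Service
    name: frontend-green
    weight: 10            # share of traffic sent to the new version
  port:
    targetPort: https
  tls:
    termination: reencrypt
```

A re-encrypt route usually also needs a destination CA certificate so that the router can validate the certificate presented by the back-end service; it is omitted here for brevity.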

Chapter 2. Accessing hosts

Learn how to create a bastion host to access OpenShift Container Platform instances and access the control plane nodes (also known as the master nodes) with secure shell (SSH) access.

2.1. Accessing hosts on Amazon Web Services in an installer-provisioned infrastructure cluster

The OpenShift Container Platform installer does not create any public IP addresses for any of the Amazon Elastic Compute Cloud (Amazon EC2) instances that it provisions for your OpenShift Container Platform cluster. To be able to SSH to your OpenShift Container Platform hosts, you must follow this procedure.

Procedure

- Create a security group that allows SSH access into the virtual private cloud (VPC) created by the openshift-install command.

- Create an Amazon EC2 instance on one of the public subnets the installer created.

- Associate a public IP address with the Amazon EC2 instance that you created.

Unlike with the OpenShift Container Platform installation, you should associate the Amazon EC2 instance you created with an SSH keypair. It does not matter what operating system you choose for this instance, as it will simply serve as an SSH bastion to bridge the internet into your OpenShift Container Platform cluster’s VPC. The Amazon Machine Image (AMI) you use does matter. With Red Hat Enterprise Linux CoreOS (RHCOS), for example, you can provide keys via Ignition, like the installer does.

- After you provision your Amazon EC2 instance and can SSH into it, you must add the SSH key that you associated with your OpenShift Container Platform installation. This key can be different from the key for the bastion instance, but does not have to be.

Note: Direct SSH access is only recommended for disaster recovery. When the Kubernetes API is responsive, run privileged pods instead.

- Run oc get nodes, inspect the output, and choose one of the nodes that is a master. The hostname looks similar to ip-10-0-1-163.ec2.internal.

- From the bastion SSH host you manually deployed into Amazon EC2, SSH into that control plane host (also known as the master host). Ensure that you use the same SSH key you specified during the installation:

$ ssh -i <ssh-key-path> core@<master-hostname>

Chapter 3. Networking Operators overview

OpenShift Container Platform supports multiple types of networking Operators. You can manage the cluster networking using these networking Operators.

3.1. Cluster Network Operator

The Cluster Network Operator (CNO) deploys and manages the cluster network components in an OpenShift Container Platform cluster. This includes deployment of the Container Network Interface (CNI) default network provider plug-in selected for the cluster during installation. For more information, see Cluster Network Operator in OpenShift Container Platform.

3.2. DNS Operator

The DNS Operator deploys and manages CoreDNS to provide a name resolution service to pods. This enables DNS-based Kubernetes Service discovery in OpenShift Container Platform. For more information, see DNS Operator in OpenShift Container Platform.

3.3. Ingress Operator

When you create your OpenShift Container Platform cluster, pods and services running on the cluster are each allocated IP addresses. The IP addresses are accessible to other pods and services running nearby but are not accessible to external clients. The Ingress Operator implements the Ingress Controller API and is responsible for enabling external access to OpenShift Container Platform cluster services. For more information, see Ingress Operator in OpenShift Container Platform.

Chapter 4. Cluster Network Operator in OpenShift Container Platform

The Cluster Network Operator (CNO) deploys and manages the cluster network components on an OpenShift Container Platform cluster, including the Container Network Interface (CNI) default network provider plug-in selected for the cluster during installation.

4.1. Cluster Network Operator

The Cluster Network Operator implements the network API from the operator.openshift.io API group. The Operator deploys the OpenShift SDN default Container Network Interface (CNI) network provider plug-in, or the default network provider plug-in that you selected during cluster installation, by using a daemon set.

Procedure

The Cluster Network Operator is deployed during installation as a Kubernetes Deployment.

Run the following command to view the Deployment status:

$ oc get -n openshift-network-operator deployment/network-operator

Example output

NAME               READY   UP-TO-DATE   AVAILABLE   AGE
network-operator   1/1     1            1           56m

Run the following command to view the state of the Cluster Network Operator:

$ oc get clusteroperator/network

Example output

NAME      VERSION   AVAILABLE   PROGRESSING   DEGRADED   SINCE
network   4.5.4     True        False         False      50m

The following fields provide information about the status of the operator: AVAILABLE, PROGRESSING, and DEGRADED. The AVAILABLE field is True when the Cluster Network Operator reports an available status condition.

4.2. Viewing the cluster network configuration

Every new OpenShift Container Platform installation has a network.config object named cluster.

Procedure

Use the oc describe command to view the cluster network configuration:

$ oc describe network.config/cluster

Example output

Name:         cluster
Namespace:
Labels:       <none>
Annotations:  <none>
API Version:  config.openshift.io/v1
Kind:         Network
Metadata:
  Self Link:  /apis/config.openshift.io/v1/networks/cluster
Spec:
  Cluster Network:
    Cidr:         10.128.0.0/14
    Host Prefix:  23
  Network Type:   OpenShiftSDN
  Service Network:
    172.30.0.0/16
Status:
  Cluster Network:
    Cidr:               10.128.0.0/14
    Host Prefix:        23
  Cluster Network MTU:  8951
  Network Type:         OpenShiftSDN
  Service Network:
    172.30.0.0/16
Events:  <none>

4.3. Viewing Cluster Network Operator status

You can inspect the status and view the details of the Cluster Network Operator using the oc describe command.

Procedure

Run the following command to view the status of the Cluster Network Operator:

$ oc describe clusteroperators/network

4.4. Viewing Cluster Network Operator logs

You can view Cluster Network Operator logs by using the oc logs command.

Procedure

Run the following command to view the logs of the Cluster Network Operator:

$ oc logs --namespace=openshift-network-operator deployment/network-operator

4.5. Cluster Network Operator configuration

The configuration for the cluster network is specified as part of the Cluster Network Operator (CNO) configuration and stored in a custom resource (CR) object that is named cluster. The CR specifies the fields for the Network API in the operator.openshift.io API group.

The CNO configuration inherits the following fields during cluster installation from the Network API in the Network.config.openshift.io API group and these fields cannot be changed:

- clusterNetwork: IP address pools from which pod IP addresses are allocated.

- serviceNetwork: IP address pool for services.

- defaultNetwork.type: Cluster network provider, such as OpenShift SDN or OVN-Kubernetes.

After cluster installation, you cannot modify the fields listed in the previous section.

You can specify the cluster network provider configuration for your cluster by setting the fields for the defaultNetwork object in the CNO object named cluster.

4.5.1. Cluster Network Operator configuration object

The fields for the Cluster Network Operator (CNO) are described in the following table:

| Field | Type | Description |
|---|---|---|
| metadata.name | string | The name of the CNO object. This name is always cluster. |
| spec.clusterNetwork | array | A list specifying the blocks of IP addresses from which pod IP addresses are allocated and the subnet prefix length assigned to each individual node in the cluster. For example, cidr: 10.128.0.0/14 with hostPrefix: 23. This value is read-only and inherited from the Network.config.openshift.io object named cluster during cluster installation. |
| spec.serviceNetwork | array | A block of IP addresses for services. The OpenShift SDN and OVN-Kubernetes Container Network Interface (CNI) network providers support only a single IP address block for the service network. For example, 172.30.0.0/16. This value is read-only and inherited from the Network.config.openshift.io object named cluster during cluster installation. |
| spec.defaultNetwork | object | Configures the Container Network Interface (CNI) cluster network provider for the cluster network. |
| spec.kubeProxyConfig | object | The fields for this object specify the kube-proxy configuration. If you are using the OVN-Kubernetes cluster network provider, the kube-proxy configuration has no effect. |

defaultNetwork object configuration

The values for the defaultNetwork object are defined in the following table:

| Field | Type | Description |
|---|---|---|
| type | string | Either OpenShiftSDN or OVNKubernetes. Note: OpenShift Container Platform uses the OpenShift SDN Container Network Interface (CNI) cluster network provider by default. |
| openshiftSDNConfig | object | This object is only valid for the OpenShift SDN cluster network provider. |
| ovnKubernetesConfig | object | This object is only valid for the OVN-Kubernetes cluster network provider. |

Configuration for the OpenShift SDN CNI cluster network provider

The following table describes the configuration fields for the OpenShift SDN Container Network Interface (CNI) cluster network provider.

| Field | Type | Description |
|---|---|---|
| mode | string | The network isolation mode for OpenShift SDN. |
| mtu | integer | The maximum transmission unit (MTU) for the VXLAN overlay network. This value is normally configured automatically. |
| vxlanPort | integer | The port to use for all VXLAN packets. The default value is 4789. |

You can only change the configuration for your cluster network provider during cluster installation.

Example OpenShift SDN configuration

defaultNetwork:
  type: OpenShiftSDN
  openshiftSDNConfig:
    mode: NetworkPolicy
    mtu: 1450
    vxlanPort: 4789

Configuration for the OVN-Kubernetes CNI cluster network provider

The following table describes the configuration fields for the OVN-Kubernetes CNI cluster network provider.

| Field | Type | Description |
|---|---|---|
| mtu | integer | The maximum transmission unit (MTU) for the Geneve (Generic Network Virtualization Encapsulation) overlay network. This value is normally configured automatically. |
| genevePort | integer | The UDP port for the Geneve overlay network. |
| ipsecConfig | object | If the field is present, IPsec is enabled for the cluster. |

You can only change the configuration for your cluster network provider during cluster installation.

Example OVN-Kubernetes configuration

defaultNetwork:
  type: OVNKubernetes
  ovnKubernetesConfig:
    mtu: 1400
    genevePort: 6081
    ipsecConfig: {}

kubeProxyConfig object configuration

The values for the kubeProxyConfig object are defined in the following table:

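| Field | Type | Description |
|---|---|---|
| iptablesSyncPeriod | string | The refresh period for iptables rules. Note: Because of performance improvements introduced in OpenShift Container Platform 4.3 and greater, adjusting the iptablesSyncPeriod parameter is no longer necessary. |
| proxyArguments.iptables-min-sync-period | array | The minimum duration before refreshing iptables rules. |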

4.5.2. Cluster Network Operator example configuration

A complete CNO configuration is specified in the following example:

Example Cluster Network Operator object

apiVersion: operator.openshift.io/v1
kind: Network
metadata:
  name: cluster
spec:
  clusterNetwork:
  - cidr: 10.128.0.0/14
    hostPrefix: 23
  serviceNetwork:
  - 172.30.0.0/16
  defaultNetwork:
    type: OpenShiftSDN
    openshiftSDNConfig:
      mode: NetworkPolicy
      mtu: 1450
      vxlanPort: 4789
  kubeProxyConfig:
    iptablesSyncPeriod: 30s
    proxyArguments:
      iptables-min-sync-period:
      - 0s

Chapter 5. DNS Operator in OpenShift Container Platform

The DNS Operator deploys and manages CoreDNS to provide a name resolution service to pods, enabling DNS-based Kubernetes Service discovery in OpenShift.

5.1. DNS Operator

The DNS Operator implements the dns API from the operator.openshift.io API group. The Operator deploys CoreDNS using a daemon set, creates a service for the daemon set, and configures the kubelet to instruct pods to use the CoreDNS service IP address for name resolution.

Procedure

The DNS Operator is deployed during installation with a Deployment object.

Use the oc get command to view the deployment status:

$ oc get -n openshift-dns-operator deployment/dns-operator

Example output

NAME           READY   UP-TO-DATE   AVAILABLE   AGE
dns-operator   1/1     1            1           23h

Use the oc get command to view the state of the DNS Operator:

$ oc get clusteroperator/dns

Example output

NAME   VERSION      AVAILABLE   PROGRESSING   DEGRADED   SINCE
dns    4.1.0-0.11   True        False         False      92m

AVAILABLE, PROGRESSING, and DEGRADED provide information about the status of the operator. AVAILABLE is True when at least 1 pod from the CoreDNS daemon set reports an Available status condition.

5.2. View the default DNS

Every new OpenShift Container Platform installation has a dns.operator named default.

Procedure

Use the oc describe command to view the default dns:

$ oc describe dns.operator/default

Example output

Name:         default
Namespace:
Labels:       <none>
Annotations:  <none>
API Version:  operator.openshift.io/v1
Kind:         DNS
...
Status:
  Cluster Domain:  cluster.local
  Cluster IP:      172.30.0.10
...

To find the service CIDR of your cluster, use the oc get command:

$ oc get networks.config/cluster -o jsonpath='{$.status.serviceNetwork}'

Example output

[172.30.0.0/16]

5.3. Using DNS forwarding

You can use DNS forwarding to override the forwarding configuration identified in /etc/resolv.conf on a per-zone basis by specifying which name server should be used for a given zone. If the forwarded zone is the Ingress domain managed by OpenShift Container Platform, then the upstream name server must be authorized for the domain.

Procedure

Modify the DNS Operator object named default:

$ oc edit dns.operator/default

This allows the Operator to create and update the ConfigMap named dns-default with additional server configuration blocks based on Server. If none of the servers has a zone that matches the query, then name resolution falls back to the name servers that are specified in /etc/resolv.conf.

Sample DNS

apiVersion: operator.openshift.io/v1
kind: DNS
metadata:
  name: default
spec:
  servers:
  - name: foo-server
    zones:
    - example.com
    forwardPlugin:
      upstreams:
      - 1.1.1.1
      - 2.2.2.2:5353
  - name: bar-server
    zones:
    - bar.com
    - example.com
    forwardPlugin:
      upstreams:
      - 3.3.3.3
      - 4.4.4.4:5454

Note: If servers is undefined or invalid, the ConfigMap only contains the default server.

View the ConfigMap:

$ oc get configmap/dns-default -n openshift-dns -o yaml

Sample DNS ConfigMap based on previous sample DNS

apiVersion: v1
data:
  Corefile: |
    example.com:5353 {
        forward . 1.1.1.1 2.2.2.2:5353
    }
    bar.com:5353 example.com:5353 {
        forward . 3.3.3.3 4.4.4.4:5454
    }
    .:5353 {
        errors
        health
        kubernetes cluster.local in-addr.arpa ip6.arpa {
            pods insecure
            upstream
            fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf {
            policy sequential
        }
        cache 30
        reload
    }
kind: ConfigMap
metadata:
  labels:
    dns.operator.openshift.io/owning-dns: default
  name: dns-default
  namespace: openshift-dns

Changes to the forwardPlugin trigger a rolling update of the CoreDNS daemon set.

5.4. DNS Operator status

You can inspect the status and view the details of the DNS Operator using the oc describe command.

Procedure

View the status of the DNS Operator:

$ oc describe clusteroperators/dns

5.5. DNS Operator logs

You can view DNS Operator logs by using the oc logs command.

Procedure

View the logs of the DNS Operator:

$ oc logs -n openshift-dns-operator deployment/dns-operator -c dns-operator

Chapter 6. Ingress Operator in OpenShift Container Platform

6.1. OpenShift Container Platform Ingress Operator

When you create your OpenShift Container Platform cluster, pods and services running on the cluster are each allocated their own IP addresses. The IP addresses are accessible to other pods and services running nearby but are not accessible to outside clients. The Ingress Operator implements the IngressController API and is the component responsible for enabling external access to OpenShift Container Platform cluster services.

The Ingress Operator makes it possible for external clients to access your service by deploying and managing one or more HAProxy-based Ingress Controllers to handle routing. You can use the Ingress Operator to route traffic by specifying OpenShift Container Platform Route and Kubernetes Ingress resources. Configurations within the Ingress Controller, such as the ability to define endpointPublishingStrategy type and internal load balancing, provide ways to publish Ingress Controller endpoints.

6.2. The Ingress configuration asset

The installation program generates an asset with an Ingress resource in the config.openshift.io API group, cluster-ingress-02-config.yml.

YAML Definition of the Ingress resource

apiVersion: config.openshift.io/v1
kind: Ingress
metadata:
  name: cluster
spec:
  domain: apps.openshiftdemos.com

The installation program stores this asset in the cluster-ingress-02-config.yml file in the manifests/ directory. This Ingress resource defines the cluster-wide configuration for Ingress. This Ingress configuration is used as follows:

- The Ingress Operator uses the domain from the cluster Ingress configuration as the domain for the default Ingress Controller.

- The OpenShift API Server Operator uses the domain from the cluster Ingress configuration. This domain is also used when generating a default host for a Route resource that does not specify an explicit host.

6.3. Ingress controller configuration parameters

The ingresscontrollers.operator.openshift.io resource offers the following configuration parameters.

| Parameter | Description |

|---|---|

|

|

The

If empty, the default value is |

|

|

|

|

|

If not set, the default value is based on

The |

|

|

The

The secret must contain the following keys and data: *

If not set, a wildcard certificate is automatically generated and used. The certificate is valid for the Ingress controller The in-use certificate, whether generated or user-specified, is automatically integrated with OpenShift Container Platform built-in OAuth server. |

|

|

|

|

|

|

|

|

If not set, the defaults values are used. Note

The |

|

|

If not set, the default value is based on the

When using the

The minimum TLS version for Ingress controllers is Important

The HAProxy Ingress controller image does not support TLS

The Ingress Operator also converts the TLS

OpenShift Container Platform router enables Red Hat-distributed OpenSSL default set of TLS Note

Ciphers and the minimum TLS version of the configured security profile are reflected in the |

|

|

|

|

|

|

|

|

By setting the

By default, the policy is set to

|

All parameters are optional.

6.3.1. Ingress Controller TLS security profiles

TLS security profiles provide a way for servers to regulate which ciphers a connecting client can use when connecting to the server.

6.3.1.1. Understanding TLS security profiles

You can use a TLS (Transport Layer Security) security profile to define which TLS ciphers are required by various OpenShift Container Platform components. The OpenShift Container Platform TLS security profiles are based on Mozilla recommended configurations.

You can specify one of the following TLS security profiles for each component:

| Profile | Description |

|---|---|

|

| This profile is intended for use with legacy clients or libraries. The profile is based on the Old backward compatibility recommended configuration.

The Note For the Ingress Controller, the minimum TLS version is converted from 1.0 to 1.1. |

|

| This profile is the recommended configuration for the majority of clients. It is the default TLS security profile for the Ingress Controller and control plane. The profile is based on the Intermediate compatibility recommended configuration.

The |

|

| This profile is intended for use with modern clients that have no need for backwards compatibility. This profile is based on the Modern compatibility recommended configuration.

The Note

In OpenShift Container Platform 4.6, 4.7, and 4.8, the Important

The |

|

| This profile allows you to define the TLS version and ciphers to use. Warning

Use caution when using a Note

OpenShift Container Platform router enables Red Hat-distributed OpenSSL default set of TLS |

When using one of the predefined profile types, the effective profile configuration is subject to change between releases. For example, given a specification to use the Intermediate profile deployed on release X.Y.Z, an upgrade to release X.Y.Z+1 might cause a new profile configuration to be applied, resulting in a rollout.

6.3.1.2. Configuring the TLS security profile for the Ingress Controller

To configure a TLS security profile for an Ingress Controller, edit the IngressController custom resource (CR) to specify a predefined or custom TLS security profile. If a TLS security profile is not configured, the default value is based on the TLS security profile set for the API server.

Sample IngressController CR that configures the Old TLS security profile

apiVersion: operator.openshift.io/v1
kind: IngressController
 ...
spec:
  tlsSecurityProfile:
    old: {}
    type: Old
 ...

The TLS security profile defines the minimum TLS version and the TLS ciphers for TLS connections for Ingress Controllers.

You can see the ciphers and the minimum TLS version of the configured TLS security profile in the IngressController custom resource (CR) under Status.Tls Profile and the configured TLS security profile under Spec.Tls Security Profile. For the Custom TLS security profile, the specific ciphers and minimum TLS version are listed under both parameters.

The HAProxy Ingress Controller image does not support TLS 1.3. Because the Modern profile requires TLS 1.3, it is not supported, and the Ingress Operator converts the Modern profile to Intermediate. The Ingress Operator also converts the TLS 1.0 of an Old or Custom profile to 1.1, and TLS 1.3 of a Custom profile to 1.2.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

Procedure

- Edit the IngressController CR in the openshift-ingress-operator project to configure the TLS security profile:

$ oc edit IngressController default -n openshift-ingress-operator

- Add the spec.tlsSecurityProfile field:

Sample IngressController CR for a Custom profile

apiVersion: operator.openshift.io/v1
kind: IngressController
 ...
spec:
  tlsSecurityProfile:
    type: Custom
    custom:
      ciphers:
      - ECDHE-ECDSA-CHACHA20-POLY1305
      - ECDHE-RSA-CHACHA20-POLY1305
      - ECDHE-RSA-AES128-GCM-SHA256
      - ECDHE-ECDSA-AES128-GCM-SHA256
      minTLSVersion: VersionTLS11
 ...

- Save the file to apply the changes.

Verification

Verify that the profile is set in the IngressController CR:

$ oc describe IngressController default -n openshift-ingress-operator

Example output

Name:         default
Namespace:    openshift-ingress-operator
Labels:       <none>
Annotations:  <none>
API Version:  operator.openshift.io/v1
Kind:         IngressController
...
Spec:
...
  Tls Security Profile:
    Custom:
      Ciphers:
        ECDHE-ECDSA-CHACHA20-POLY1305
        ECDHE-RSA-CHACHA20-POLY1305
        ECDHE-RSA-AES128-GCM-SHA256
        ECDHE-ECDSA-AES128-GCM-SHA256
      Min TLS Version:  VersionTLS11
    Type:               Custom
...

6.3.2. Ingress controller endpoint publishing strategy

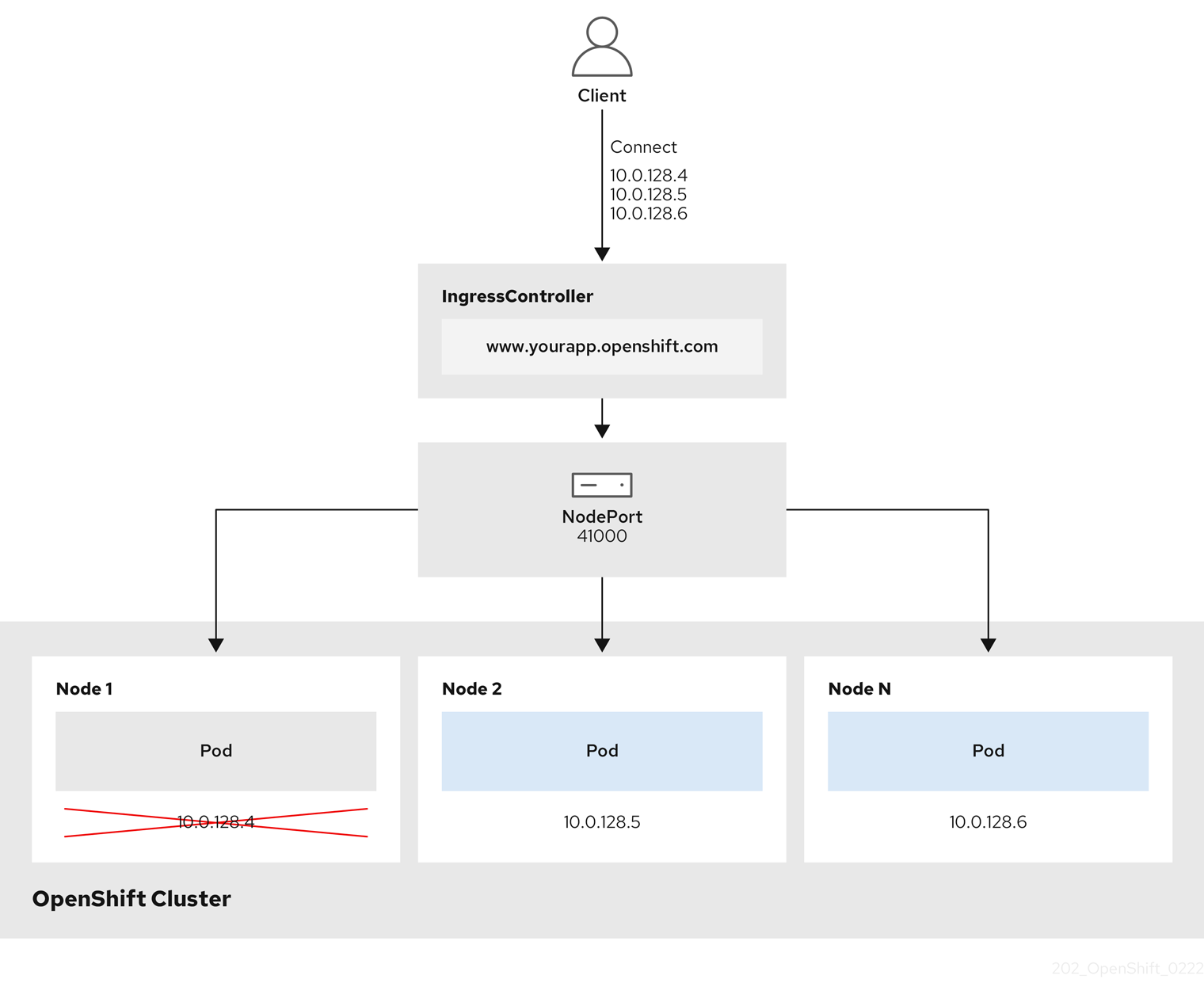

NodePortService endpoint publishing strategy

The NodePortService endpoint publishing strategy publishes the Ingress Controller using a Kubernetes NodePort service.

In this configuration, the Ingress Controller deployment uses container networking. A NodePortService is created to publish the deployment. The specific node ports are dynamically allocated by OpenShift Container Platform; however, to support static port allocations, your changes to the node port field of the managed NodePortService are preserved.

Figure 6.1. Diagram of NodePortService

The preceding graphic shows the following concepts pertaining to OpenShift Container Platform Ingress NodePort endpoint publishing strategy:

- All the available nodes in the cluster have their own, externally accessible IP addresses. The service running in the cluster is bound to the unique NodePort for all the nodes.

- When the client connects to a node that is down, for example, by connecting to the 10.0.128.4 IP address in the graphic, the node port directly connects the client to an available node that is running the service. In this scenario, no load balancing is required. As the image shows, the 10.0.128.4 address is down and another IP address must be used instead.

The Ingress Operator ignores any updates to .spec.ports[].nodePort fields of the service.

By default, ports are allocated automatically and you can access the port allocations for integrations. However, sometimes static port allocations are necessary to integrate with existing infrastructure which may not be easily reconfigured in response to dynamic ports. To achieve integrations with static node ports, you can update the managed service resource directly.

For more information, see the Kubernetes Services documentation on NodePort.
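
As a sketch of how this strategy is selected, the following IngressController manifest sets the endpoint publishing strategy type to NodePortService. The controller name and domain are hypothetical; the namespace is the one used by the commands elsewhere in this chapter.

```yaml
# Illustrative only: the controller name and domain are hypothetical.
apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: internal
  namespace: openshift-ingress-operator
spec:
  domain: internal.apps.example.com
  endpointPublishingStrategy:
    type: NodePortService   # publish the controller through a NodePort service
```

After the controller is created, the dynamically allocated node ports can be read from the managed service, for example by listing the services in the openshift-ingress namespace.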

HostNetwork endpoint publishing strategy

The HostNetwork endpoint publishing strategy publishes the Ingress Controller on node ports where the Ingress Controller is deployed.

An Ingress controller with the HostNetwork endpoint publishing strategy can have only one pod replica per node. If you want n replicas, you must use at least n nodes where those replicas can be scheduled. Because each pod replica requests ports 80 and 443 on the node host where it is scheduled, a replica cannot be scheduled to a node if another pod on the same node is using those ports.
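
The replica constraint can be made concrete with a short sketch. The following IngressController manifest requests two replicas with the HostNetwork strategy, so it assumes at least two nodes on which ports 80 and 443 are free. The controller name and domain are hypothetical.

```yaml
# Illustrative only: the controller name and domain are hypothetical.
apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: edge
  namespace: openshift-ingress-operator
spec:
  domain: edge.apps.example.com
  replicas: 2                # needs at least 2 nodes with ports 80 and 443 free
  endpointPublishingStrategy:
    type: HostNetwork
```

If fewer suitable nodes are available than the requested number of replicas, the extra replicas cannot be scheduled.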

6.4. View the default Ingress Controller

The Ingress Operator is a core feature of OpenShift Container Platform and is enabled out of the box.

Every new OpenShift Container Platform installation has an ingresscontroller named default. It can be supplemented with additional Ingress Controllers. If the default ingresscontroller is deleted, the Ingress Operator will automatically recreate it within a minute.

Procedure

View the default Ingress Controller:

$ oc describe --namespace=openshift-ingress-operator ingresscontroller/default

6.5. View Ingress Operator status

You can view and inspect the status of your Ingress Operator.

Procedure

View your Ingress Operator status:

$ oc describe clusteroperators/ingress

6.6. View Ingress Controller logs

You can view your Ingress Controller logs.

Procedure

View your Ingress Controller logs:

$ oc logs --namespace=openshift-ingress-operator deployments/ingress-operator

6.7. View Ingress Controller status

You can view the status of a particular Ingress Controller.

Procedure

View the status of an Ingress Controller:

$ oc describe --namespace=openshift-ingress-operator ingresscontroller/<name>

6.8. Configuring the Ingress Controller

6.8.1. Setting a custom default certificate

As an administrator, you can configure an Ingress Controller to use a custom certificate by creating a Secret resource and editing the IngressController custom resource (CR).

Prerequisites

- You must have a certificate/key pair in PEM-encoded files, where the certificate is signed by a trusted certificate authority or by a private trusted certificate authority that you configured in a custom PKI.

- Your certificate meets the following requirements:

  - The certificate is valid for the ingress domain.

  - The certificate uses the subjectAltName extension to specify a wildcard domain, such as *.apps.ocp4.example.com.

- You must have an IngressController CR. You may use the default one:

$ oc --namespace openshift-ingress-operator get ingresscontrollers

Example output

NAME      AGE
default   10m

If you have intermediate certificates, they must be included in the tls.crt file of the secret containing a custom default certificate. Order matters when specifying a certificate; list your intermediate certificate(s) after any server certificate(s).

Procedure

The following assumes that the custom certificate and key pair are in the tls.crt and tls.key files in the current working directory. Substitute the actual path names for tls.crt and tls.key. You also may substitute another name for custom-certs-default when creating the Secret resource and referencing it in the IngressController CR.

This action will cause the Ingress Controller to be redeployed, using a rolling deployment strategy.

Create a Secret resource containing the custom certificate in the openshift-ingress namespace using the tls.crt and tls.key files.

$ oc --namespace openshift-ingress create secret tls custom-certs-default --cert=tls.crt --key=tls.key

Update the IngressController CR to reference the new certificate secret:

$ oc patch --type=merge --namespace openshift-ingress-operator ingresscontrollers/default \
  --patch '{"spec":{"defaultCertificate":{"name":"custom-certs-default"}}}'

Verify the update was effective:

$ echo Q |\
  openssl s_client -connect console-openshift-console.apps.<domain>:443 -showcerts 2>/dev/null |\
  openssl x509 -noout -subject -issuer -enddate

where:

<domain>: Specifies the base domain name for your cluster.

Example output

subject=C = US, ST = NC, L = Raleigh, O = RH, OU = OCP4, CN = *.apps.example.com
issuer=C = US, ST = NC, L = Raleigh, O = RH, OU = OCP4, CN = example.com
notAfter=May 10 08:32:45 2022 GMT

The certificate secret name should match the value used to update the CR.

Once the IngressController CR has been modified, the Ingress Operator updates the Ingress Controller’s deployment to use the custom certificate.

6.8.2. Removing a custom default certificate

As an administrator, you can remove a custom certificate that you configured an Ingress Controller to use.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

- You have installed the OpenShift CLI (oc).

- You previously configured a custom default certificate for the Ingress Controller.

Procedure

To remove the custom certificate and restore the certificate that ships with OpenShift Container Platform, enter the following command:

$ oc patch -n openshift-ingress-operator ingresscontrollers/default \
  --type json -p $'- op: remove\n  path: /spec/defaultCertificate'

There can be a delay while the cluster reconciles the new certificate configuration.

Verification

To confirm that the original cluster certificate is restored, enter the following command:

$ echo Q | \
  openssl s_client -connect console-openshift-console.apps.<domain>:443 -showcerts 2>/dev/null | \
  openssl x509 -noout -subject -issuer -enddate

where:

<domain>: Specifies the base domain name for your cluster.

Example output

subject=CN = *.apps.<domain>
issuer=CN = ingress-operator@1620633373
notAfter=May 10 10:44:36 2023 GMT

6.8.3. Scaling an Ingress Controller

Manually scale an Ingress Controller to meet routing performance or availability requirements, such as the requirement to increase throughput. Use oc commands to scale the IngressController resource. The following procedure provides an example for scaling up the default IngressController.

Procedure

View the current number of available replicas for the default IngressController:

$ oc get -n openshift-ingress-operator ingresscontrollers/default -o jsonpath='{$.status.availableReplicas}'

Example output

2

Scale the default IngressController to the desired number of replicas using the oc patch command. The following example scales the default IngressController to 3 replicas:

$ oc patch -n openshift-ingress-operator ingresscontroller/default --patch '{"spec":{"replicas": 3}}' --type=merge

Example output

ingresscontroller.operator.openshift.io/default patched

Verify that the default IngressController scaled to the number of replicas that you specified:

$ oc get -n openshift-ingress-operator ingresscontrollers/default -o jsonpath='{$.status.availableReplicas}'

Example output

3

Scaling is not an immediate action, as it takes time to create the desired number of replicas.
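Because the replica count takes time to converge, a script might poll the verification command rather than run it once. The following is a minimal sketch of such a polling loop. The function name wait_for_value and its arguments are hypothetical, and the oc invocation appears only as a comment because it needs a logged-in cluster.

```shell
#!/bin/sh
# wait_for_value EXPECTED ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Hypothetical helper: re-runs COMMAND until its output equals EXPECTED
# or ATTEMPTS tries have been made. Returns 0 on a match, 1 otherwise.
wait_for_value() {
    expected=$1
    attempts=$2
    delay=$3
    shift 3
    i=0
    while [ "$i" -lt "$attempts" ]; do
        # A failing command is treated the same as "not ready yet".
        actual=$("$@" 2>/dev/null) || actual=""
        if [ "$actual" = "$expected" ]; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}

# Possible usage against a cluster (requires oc and an active login):
# wait_for_value 3 30 10 oc get -n openshift-ingress-operator \
#     ingresscontrollers/default -o jsonpath='{$.status.availableReplicas}'
```

The attempt count and delay are arbitrary illustration values; choose them to suit how long your cluster typically takes to schedule new router pods.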

6.8.4. Configuring Ingress access logging

You can configure the Ingress Controller to enable access logs. If you have clusters that do not receive much traffic, then you can log to a sidecar. If you have high traffic clusters, to avoid exceeding the capacity of the logging stack or to integrate with a logging infrastructure outside of OpenShift Container Platform, you can forward logs to a custom syslog endpoint. You can also specify the format for access logs.

Container logging is useful to enable access logs on low-traffic clusters when there is no existing Syslog logging infrastructure, or for short-term use while diagnosing problems with the Ingress Controller.

Syslog is needed for high-traffic clusters where access logs could exceed the OpenShift Logging stack’s capacity, or for environments where any logging solution needs to integrate with an existing Syslog logging infrastructure. The Syslog use-cases can overlap.

Prerequisites

- Log in as a user with cluster-admin privileges.

Procedure

Configure Ingress access logging to a sidecar.

To configure Ingress access logging, you must specify a destination using spec.logging.access.destination. To specify logging to a sidecar container, you must specify Container for spec.logging.access.destination.type. The following example is an Ingress Controller definition that logs to a Container destination:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  replicas: 2
  logging:
    access:
      destination:
        type: Container

When you configure the Ingress Controller to log to a sidecar, the operator creates a container named logs inside the Ingress Controller Pod:

$ oc -n openshift-ingress logs deployment.apps/router-default -c logs

Example output

2020-05-11T19:11:50.135710+00:00 router-default-57dfc6cd95-bpmk6 router-default-57dfc6cd95-bpmk6 haproxy[108]: 174.19.21.82:39654 [11/May/2020:19:11:50.133] public be_http:hello-openshift:hello-openshift/pod:hello-openshift:hello-openshift:10.128.2.12:8080 0/0/1/0/1 200 142 - - --NI 1/1/0/0/0 0/0 "GET / HTTP/1.1"

Configure Ingress access logging to a Syslog endpoint.

To configure Ingress access logging, you must specify a destination using spec.logging.access.destination. To specify logging to a Syslog endpoint destination, you must specify Syslog for spec.logging.access.destination.type. If the destination type is Syslog, you must also specify a destination endpoint using spec.logging.access.destination.syslog.address and spec.logging.access.destination.syslog.port, and you can specify a facility using spec.logging.access.destination.syslog.facility. The following example is an Ingress Controller definition that logs to a Syslog destination:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  replicas: 2
  logging:
    access:
      destination:
        type: Syslog
        syslog:
          address: 1.2.3.4
          port: 10514

Note

The syslog destination port must be UDP.

Configure Ingress access logging with a specific log format.

You can specify spec.logging.access.httpLogFormat to customize the log format. The following example is an Ingress Controller definition that logs to a syslog endpoint with IP address 1.2.3.4 and port 10514:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  replicas: 2
  logging:
    access:
      destination:
        type: Syslog
        syslog:
          address: 1.2.3.4
          port: 10514
      httpLogFormat: '%ci:%cp [%t] %ft %b/%s %B %bq %HM %HU %HV'

Disable Ingress access logging.

To disable Ingress access logging, leave spec.logging or spec.logging.access empty:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  replicas: 2
  logging:
    access: null
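The sidecar example output earlier in this procedure can be reduced to a few fields for quick inspection. The following is a minimal sketch that prints the client address, HTTP status code, and byte count from each line. The function name summarize_access_log is hypothetical, and the field positions are an assumption based on that single example line; a custom httpLogFormat changes them.

```shell
#!/bin/sh
# summarize_access_log
# Hypothetical helper: reads access log lines on stdin and prints
# "<client_ip:port> <status> <bytes>" for each line.
# Assumes the whitespace-separated layout of the example output in this
# section, where field 5 is the client, field 10 the status code, and
# field 11 the number of bytes sent.
summarize_access_log() {
    awk '{ print $5, $10, $11 }'
}

# Possible usage against a cluster (requires oc and an active login):
# oc -n openshift-ingress logs deployment.apps/router-default -c logs \
#     | summarize_access_log
```

Treat this only as a starting point for ad hoc diagnosis; for anything long-lived, a syslog destination with a known log format is easier to parse reliably.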

6.8.5. Ingress Controller sharding

As the primary mechanism for traffic to enter the cluster, the demands on the Ingress Controller, or router, can be significant. As a cluster administrator, you can shard the routes to:

- Balance Ingress Controllers, or routers, with several routes to speed up responses to changes.

- Allocate certain routes to have different reliability guarantees than other routes.

- Allow certain Ingress Controllers to have different policies defined.

- Allow only specific routes to use additional features.

- Expose different routes on different addresses so that internal and external users can see different routes, for example.

An Ingress Controller can use either route labels or namespace labels as a sharding method.

6.8.5.1. Configuring Ingress Controller sharding by using route labels

Ingress Controller sharding by using route labels means that the Ingress Controller serves any route in any namespace that is selected by the route selector.

Ingress Controller sharding is useful when balancing incoming traffic load among a set of Ingress Controllers and when isolating traffic to a specific Ingress Controller. For example, company A goes to one Ingress Controller and company B to another.

Procedure

Edit the router-internal.yaml file:

# cat router-internal.yaml
apiVersion: v1
items:
- apiVersion: operator.openshift.io/v1
  kind: IngressController
  metadata:
    name: sharded
    namespace: openshift-ingress-operator
  spec:
    domain: <apps-sharded.basedomain.example.net>
    nodePlacement:
      nodeSelector:
        matchLabels:
          node-role.kubernetes.io/worker: ""
    routeSelector:
      matchLabels:
        type: sharded
  status: {}
kind: List
metadata:
  resourceVersion: ""
  selfLink: ""

Apply the Ingress Controller router-internal.yaml file:

# oc apply -f router-internal.yaml

The Ingress Controller selects routes in any namespace that have the label type: sharded.
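For a route to be picked up by this shard, it needs the matching label. The following is a minimal sketch that writes a Route manifest carrying the type: sharded label. The function name write_sharded_route, the output file name, and the route and service names in the usage note are hypothetical, and the manifest omits optional fields such as host and tls.

```shell
#!/bin/sh
# write_sharded_route FILE ROUTE_NAME SERVICE_NAME
# Hypothetical helper: writes a Route manifest whose "type: sharded" label
# matches the routeSelector in the IngressController example above.
write_sharded_route() {
    cat > "$1" <<EOF
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: $2
  labels:
    type: sharded
spec:
  to:
    kind: Service
    name: $3
EOF
}
```

For example, `write_sharded_route route-sharded.yaml hello-openshift hello-openshift` followed by `oc apply -f route-sharded.yaml` would create a labeled route, assuming a service named hello-openshift exists in the current project.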

6.8.5.2. Configuring Ingress Controller sharding by using namespace labels

Ingress Controller sharding by using namespace labels means that the Ingress Controller serves any route in any namespace that is selected by the namespace selector.

Ingress Controller sharding is useful when balancing incoming traffic load among a set of Ingress Controllers and when isolating traffic to a specific Ingress Controller. For example, company A goes to one Ingress Controller and company B to another.

If you deploy the Keepalived Ingress VIP, do not deploy a non-default Ingress Controller with value HostNetwork for the endpointPublishingStrategy parameter. Doing so might cause issues. Use value NodePort instead of HostNetwork for endpointPublishingStrategy.

Procedure

Edit the router-internal.yaml file:

# cat router-internal.yaml

Example output

apiVersion: v1
items:
- apiVersion: operator.openshift.io/v1
  kind: IngressController
  metadata:
    name: sharded
    namespace: openshift-ingress-operator
  spec:
    domain: <apps-sharded.basedomain.example.net>
    nodePlacement:
      nodeSelector:
        matchLabels:
          node-role.kubernetes.io/worker: ""
    namespaceSelector:
      matchLabels:
        type: sharded
  status: {}
kind: List
metadata:
  resourceVersion: ""
  selfLink: ""

Apply the Ingress Controller router-internal.yaml file:

# oc apply -f router-internal.yaml

The Ingress Controller selects routes in any namespace that has the label type: sharded.

6.8.6. Configuring an Ingress Controller to use an internal load balancer

When creating an Ingress Controller on cloud platforms, the Ingress Controller is published by a public cloud load balancer by default. As an administrator, you can create an Ingress Controller that uses an internal cloud load balancer.

If your cloud provider is Microsoft Azure, you must have at least one public load balancer that points to your nodes. If you do not, all of your nodes will lose egress connectivity to the internet.

If you want to change the scope for an IngressController object, you must delete and then recreate that IngressController object. You cannot change the .spec.endpointPublishingStrategy.loadBalancer.scope parameter after the custom resource (CR) is created.

Figure 6.2. Diagram of LoadBalancer

The preceding graphic shows the following concepts pertaining to OpenShift Container Platform Ingress LoadBalancerService endpoint publishing strategy:

- You can load balance externally, using the cloud provider load balancer, or internally, using the OpenShift Ingress Controller Load Balancer.

- You can use the single IP address of the load balancer and more familiar ports, such as 8080 and 4200 as shown on the cluster depicted in the graphic.

- Traffic from the external load balancer is directed at the pods, and managed by the load balancer, as depicted in the instance of a down node. See the Kubernetes Services documentation for implementation details.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create an IngressController custom resource (CR) in a file named <name>-ingress-controller.yaml, such as in the following example:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  namespace: openshift-ingress-operator
  name: <name>
spec:
  domain: <domain>
  endpointPublishingStrategy:
    type: LoadBalancerService
    loadBalancer:
      scope: Internal

Create the Ingress Controller defined in the previous step by running the following command:

$ oc create -f <name>-ingress-controller.yaml

Replace <name> with the name of the IngressController object.

Optional: Confirm that the Ingress Controller was created by running the following command:

$ oc --all-namespaces=true get ingresscontrollers

6.8.7. Configuring the default Ingress Controller for your cluster to be internal

You can configure the default Ingress Controller for your cluster to be internal by deleting and recreating it.

If your cloud provider is Microsoft Azure, you must have at least one public load balancer that points to your nodes. If you do not, all of your nodes will lose egress connectivity to the internet.

If you want to change the scope for an IngressController object, you must delete and then recreate that IngressController object. You cannot change the .spec.endpointPublishingStrategy.loadBalancer.scope parameter after the custom resource (CR) is created.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Configure the default Ingress Controller for your cluster to be internal by deleting and recreating it.

$ oc replace --force --wait --filename - <<EOF
apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  namespace: openshift-ingress-operator
  name: default
spec:
  endpointPublishingStrategy:
    type: LoadBalancerService
    loadBalancer:
      scope: Internal
EOF

6.8.8. Configuring the route admission policy

Administrators and application developers can run applications in multiple namespaces with the same domain name. This is for organizations where multiple teams develop microservices that are exposed on the same hostname.

Allowing claims across namespaces should only be enabled for clusters with trust between namespaces, otherwise a malicious user could take over a hostname. For this reason, the default admission policy disallows hostname claims across namespaces.

Prerequisites

- Cluster administrator privileges.

Procedure

Edit the .spec.routeAdmission field of the ingresscontroller resource variable using the following command:

$ oc -n openshift-ingress-operator patch ingresscontroller/default --patch '{"spec":{"routeAdmission":{"namespaceOwnership":"InterNamespaceAllowed"}}}' --type=merge

Sample Ingress Controller configuration

spec:
  routeAdmission:
    namespaceOwnership: InterNamespaceAllowed
...

6.8.9. Using wildcard routes

The HAProxy Ingress Controller has support for wildcard routes. The Ingress Operator uses wildcardPolicy to configure the ROUTER_ALLOW_WILDCARD_ROUTES environment variable of the Ingress Controller.

The default behavior of the Ingress Controller is to admit routes with a wildcard policy of None, which is backwards compatible with existing IngressController resources.

Procedure

Configure the wildcard policy.

Use the following command to edit the IngressController resource:

$ oc edit IngressController

Under spec, set the wildcardPolicy field to WildcardsDisallowed or WildcardsAllowed:

spec:
  routeAdmission:
    wildcardPolicy: WildcardsDisallowed # or WildcardsAllowed

6.8.10. Using X-Forwarded headers

You can configure the HAProxy Ingress Controller to specify a policy for how to handle HTTP headers including Forwarded and X-Forwarded-For. The Ingress Operator uses the HTTPHeaders field to configure the ROUTER_SET_FORWARDED_HEADERS environment variable of the Ingress Controller.

Procedure

Configure the HTTPHeaders field for the Ingress Controller.

Use the following command to edit the IngressController resource:

$ oc edit IngressController

Under spec, set the HTTPHeaders policy field to Append, Replace, IfNone, or Never:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  httpHeaders:
    forwardedHeaderPolicy: Append

Example use cases

As a cluster administrator, you can:

- Configure an external proxy that injects the X-Forwarded-For header into each request before forwarding it to an Ingress Controller.

  To configure the Ingress Controller to pass the header through unmodified, you specify the never policy. The Ingress Controller then never sets the headers, and applications receive only the headers that the external proxy provides.

- Configure the Ingress Controller to pass the X-Forwarded-For header that your external proxy sets on external cluster requests through unmodified.

  To configure the Ingress Controller to set the X-Forwarded-For header on internal cluster requests, which do not go through the external proxy, specify the if-none policy. If an HTTP request already has the header set through the external proxy, then the Ingress Controller preserves it. If the header is absent because the request did not come through the proxy, then the Ingress Controller adds the header.

As an application developer, you can:

- Configure an application-specific external proxy that injects the X-Forwarded-For header.

  To configure an Ingress Controller to pass the header through unmodified for an application’s Route, without affecting the policy for other Routes, add an annotation haproxy.router.openshift.io/set-forwarded-headers: if-none or haproxy.router.openshift.io/set-forwarded-headers: never on the Route for the application.

Note

You can set the haproxy.router.openshift.io/set-forwarded-headers annotation on a per route basis, independent from the globally set value for the Ingress Controller.
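The effect of the four policy values can be sketched as a small model of what an application would see in the X-Forwarded-For header. This is a simplified illustration, not the HAProxy configuration that the Ingress Controller generates. The function name forwarded_for is hypothetical, and the Append and Replace behavior shown is an assumption based on the policy names, because this section describes only the never and if-none cases in detail.

```shell
#!/bin/sh
# forwarded_for POLICY EXISTING_HEADER CLIENT_IP
# Hypothetical model: prints the X-Forwarded-For value an application would
# receive. EXISTING_HEADER is the value set by an external proxy, or an
# empty string if the request did not pass through one.
forwarded_for() {
    policy=$1
    existing=$2
    client=$3
    case $policy in
        Append)
            # Assumed behavior: keep any existing value and add the client.
            if [ -n "$existing" ]; then
                printf '%s, %s\n' "$existing" "$client"
            else
                printf '%s\n' "$client"
            fi
            ;;
        Replace)
            # Assumed behavior: discard any existing value.
            printf '%s\n' "$client"
            ;;
        IfNone)
            # Preserve the proxy's header; set one only when it is absent.
            if [ -n "$existing" ]; then
                printf '%s\n' "$existing"
            else
                printf '%s\n' "$client"
            fi
            ;;
        Never)
            # Pass through whatever arrived, possibly nothing.
            printf '%s\n' "$existing"
            ;;
        *)
            echo "unknown policy: $policy" >&2
            return 1
            ;;
    esac
}
```

The addresses you pass in are placeholders. The model only contrasts the policies and ignores the other forwarded headers, such as Forwarded and X-Forwarded-Proto.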

6.8.11. Enabling HTTP/2 Ingress connectivity

You can enable transparent end-to-end HTTP/2 connectivity in HAProxy. It allows application owners to make use of HTTP/2 protocol capabilities, including single connection, header compression, binary streams, and more.

You can enable HTTP/2 connectivity for an individual Ingress Controller or for the entire cluster.

To enable the use of HTTP/2 for the connection from the client to HAProxy, a route must specify a custom certificate. A route that uses the default certificate cannot use HTTP/2. This restriction is necessary to avoid problems from connection coalescing, where the client re-uses a connection for different routes that use the same certificate.

The connection from HAProxy to the application pod can use HTTP/2 only for re-encrypt routes and not for edge-terminated or insecure routes. This restriction is because HAProxy uses Application-Level Protocol Negotiation (ALPN), which is a TLS extension, to negotiate the use of HTTP/2 with the back-end. The implication is that end-to-end HTTP/2 is possible with passthrough and re-encrypt and not with insecure or edge-terminated routes.

Using WebSockets with a re-encrypt route and with HTTP/2 enabled on an Ingress Controller requires WebSocket support over HTTP/2. WebSockets over HTTP/2 is a feature of HAProxy 2.4, which is unsupported in OpenShift Container Platform at this time.

For non-passthrough routes, the Ingress Controller negotiates its connection to the application independently of the connection from the client. This means a client may connect to the Ingress Controller and negotiate HTTP/1.1, and the Ingress Controller may then connect to the application, negotiate HTTP/2, and forward the request from the client HTTP/1.1 connection using the HTTP/2 connection to the application. This poses a problem if the client subsequently tries to upgrade its connection from HTTP/1.1 to the WebSocket protocol, because the Ingress Controller cannot forward WebSocket to HTTP/2 and cannot upgrade its HTTP/2 connection to WebSocket. Consequently, if you have an application that is intended to accept WebSocket connections, it must not allow negotiating the HTTP/2 protocol or else clients will fail to upgrade to the WebSocket protocol.
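The route-type rule described above can be written down as a simple lookup. The following is a minimal sketch. The function name end_to_end_http2 is hypothetical, and the label insecure is used here only as shorthand for a route without TLS termination.

```shell
#!/bin/sh
# end_to_end_http2 ROUTE_TYPE
# Hypothetical helper: returns 0 when the route type permits end-to-end
# HTTP/2 according to the rules in this section, 1 when it does not, and
# 2 for an unrecognized route type.
end_to_end_http2() {
    case $1 in
        passthrough|reencrypt)
            return 0
            ;;
        edge|insecure)
            # HAProxy negotiates HTTP/2 with the back end through ALPN,
            # which requires TLS on the back-end connection.
            return 1
            ;;
        *)
            echo "unknown route type: $1" >&2
            return 2
            ;;
    esac
}
```

The check does not cover the separate requirement that a route specify a custom certificate, or the WebSocket limitation described above.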

Procedure

Enable HTTP/2 on a single Ingress Controller.

To enable HTTP/2 on an Ingress Controller, enter the oc annotate command:

$ oc -n openshift-ingress-operator annotate ingresscontrollers/<ingresscontroller_name> ingress.operator.openshift.io/default-enable-http2=true

Replace <ingresscontroller_name> with the name of the Ingress Controller to annotate.

Enable HTTP/2 on the entire cluster.

To enable HTTP/2 for the entire cluster, enter the oc annotate command:

$ oc annotate ingresses.config/cluster ingress.operator.openshift.io/default-enable-http2=true

6.8.12. Specifying an alternative cluster domain using the appsDomain option

As a cluster administrator, you can specify an alternative to the default cluster domain for user-created routes by configuring the appsDomain field. The appsDomain field is an optional domain for OpenShift Container Platform to use instead of the default, which is specified in the domain field. If you specify an alternative domain, it overrides the default cluster domain for the purpose of determining the default host for a new route.

For example, you can use the DNS domain for your company as the default domain for routes and ingresses for applications running on your cluster.

Prerequisites

- You deployed an OpenShift Container Platform cluster.

- You installed the oc command line interface.

Procedure

Configure the appsDomain field by specifying an alternative default domain for user-created routes.

Edit the ingress cluster resource:

$ oc edit ingresses.config/cluster -o yaml

Edit the YAML file:

Sample appsDomain configuration to test.example.com

apiVersion: config.openshift.io/v1
kind: Ingress
metadata:
  name: cluster
spec:
  domain: apps.example.com
  appsDomain: <test.example.com>

Verify that an existing route contains the domain name specified in the appsDomain field by exposing the route and verifying the route domain change:

Note

Wait for the openshift-apiserver to finish rolling updates before exposing the route.

Expose the route:

$ oc expose service hello-openshift
route.route.openshift.io/hello-openshift exposed

Example output:

$ oc get routes
NAME              HOST/PORT                                        PATH   SERVICES          PORT       TERMINATION   WILDCARD
hello-openshift   hello_openshift-<my_project>.test.example.com          hello-openshift   8080-tcp                 None

Chapter 7. Verifying connectivity to an endpoint

The Cluster Network Operator (CNO) runs a controller, the connectivity check controller, that performs a connection health check between resources within your cluster. By reviewing the results of the health checks, you can diagnose connection problems or eliminate network connectivity as the cause of an issue that you are investigating.

7.1. Connection health checks performed

To verify that cluster resources are reachable, a TCP connection is made to each of the following cluster API services:

- Kubernetes API server service

- Kubernetes API server endpoints

- OpenShift API server service

- OpenShift API server endpoints

- Load balancers

To verify that services and service endpoints are reachable on every node in the cluster, a TCP connection is made to each of the following targets:

- Health check target service

- Health check target endpoints

7.2. Implementation of connection health checks

The connectivity check controller orchestrates connection verification checks in your cluster. The results for the connection tests are stored in PodNetworkConnectivityCheck objects in the openshift-network-diagnostics namespace. Connection tests are performed every minute in parallel.

The Cluster Network Operator (CNO) deploys several resources to the cluster to send and receive connectivity health checks:

- Health check source

  This program deploys in a single pod replica set managed by a Deployment object. The program consumes PodNetworkConnectivityCheck objects and connects to the spec.targetEndpoint specified in each object.

- Health check target

  A pod deployed as part of a daemon set on every node in the cluster. The pod listens for inbound health checks. The presence of this pod on every node allows for the testing of connectivity to each node.

7.3. PodNetworkConnectivityCheck object fields

The PodNetworkConnectivityCheck object fields are described in the following tables.

| Field | Type | Description |
|---|---|---|
| metadata.name | string | The name of the object in the following format: <source>-to-<target>. |
| metadata.namespace | string | The namespace that the object is associated with. This value is always openshift-network-diagnostics. |
| spec.sourcePod | string | The name of the pod where the connection check originates, such as network-check-source-7c88f6d9f-hmg2f. |
| spec.targetEndpoint | string | The target of the connection check, such as 10.0.0.4:6443. |
| spec.tlsClientCert | object | Configuration for the TLS certificate to use. |
| spec.tlsClientCert.name | string | The name of the TLS certificate used, if any. The default value is an empty string. |
| status | object | An object representing the condition of the connection test and logs of recent connection successes and failures. |
| status.conditions | array | The latest status of the connection check and any previous statuses. |
| status.failures | array | Connection test logs from unsuccessful attempts. |
| status.outages | array | Connection test logs covering the time periods of any outages. |
| status.successes | array | Connection test logs from successful attempts. |

The following table describes the fields for objects in the status.conditions array:

| Field | Type | Description |
|---|---|---|
| lastTransitionTime | string | The time that the condition of the connection transitioned from one status to another. |
| message | string | The details about the last transition in a human readable format. |
| reason | string | The last status of the transition in a machine readable format. |
| status | string | The status of the condition. |
| type | string | The type of the condition. |

The following table describes the fields for objects in the status.outages array:

| Field | Type | Description |
|---|---|---|
| end | string | The timestamp from when the connection failure is resolved. |
| endLogs | array | Connection log entries, including the log entry related to the successful end of the outage. |
| message | string | A summary of outage details in a human readable format. |
| start | string | The timestamp from when the connection failure is first detected. |
| startLogs | array | Connection log entries, including the original failure. |

Connection log fields

The fields for a connection log entry are described in the following table. The object is used in the following fields:

- status.failures[]

- status.successes[]

- status.outages[].startLogs[]

- status.outages[].endLogs[]

| Field | Type | Description |
|---|---|---|
| latency | string | Records the duration of the action. |
| message | string | Provides the status in a human readable format. |
| reason | string | Provides the reason for status in a machine readable format, such as TCPConnect or TCPConnectError. |
| success | boolean | Indicates if the log entry is a success or failure. |
| time | string | The start time of the connection check. |

7.4. Verifying network connectivity for an endpoint

As a cluster administrator, you can verify the connectivity of an endpoint, such as an API server, load balancer, service, or pod.

Prerequisites

- Install the OpenShift CLI (oc).

- Access to the cluster as a user with the cluster-admin role.

Procedure

To list the current PodNetworkConnectivityCheck objects, enter the following command:

$ oc get podnetworkconnectivitycheck -n openshift-network-diagnostics

Example output

NAME                                                                                                                                AGE
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0   75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-1   73m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-2   75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-service-cluster                               75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-default-service-cluster                                 75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-load-balancer-api-external                                         75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-load-balancer-api-internal                                         75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-master-0            75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-master-1            75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-master-2            75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh      74m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-worker-c-n8mbf      74m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-worker-d-4hnrz      74m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-service-cluster                               75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-openshift-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0    75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-openshift-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-1    75m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-openshift-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-2    74m
network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-openshift-apiserver-service-cluster                                75m

View the connection test logs:

- From the output of the previous command, identify the endpoint that you want to review the connectivity logs for.

To view the object, enter the following command:

$ oc get podnetworkconnectivitycheck <name> \
  -n openshift-network-diagnostics -o yaml

where <name> specifies the name of the PodNetworkConnectivityCheck object.

Example output

apiVersion: controlplane.operator.openshift.io/v1alpha1
kind: PodNetworkConnectivityCheck
metadata:
  name: network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0
  namespace: openshift-network-diagnostics
  ...
spec:
  sourcePod: network-check-source-7c88f6d9f-hmg2f
  targetEndpoint: 10.0.0.4:6443
  tlsClientCert:
    name: ""
status:
  conditions:
  - lastTransitionTime: "2021-01-13T20:11:34Z"
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnectSuccess
    status: "True"
    type: Reachable
  failures:
  - latency: 2.241775ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
    reason: TCPConnectError
    success: false
    time: "2021-01-13T20:10:34Z"
  - latency: 2.582129ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
    reason: TCPConnectError
    success: false
    time: "2021-01-13T20:09:34Z"
  - latency: 3.483578ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
    reason: TCPConnectError
    success: false
    time: "2021-01-13T20:08:34Z"
  outages:
  - end: "2021-01-13T20:11:34Z"
    endLogs:
    - latency: 2.032018ms
      message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
      reason: TCPConnect
      success: true
      time: "2021-01-13T20:11:34Z"
    - latency: 2.241775ms
      message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
      reason: TCPConnectError
      success: false
      time: "2021-01-13T20:10:34Z"
    - latency: 2.582129ms
      message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
      reason: TCPConnectError
      success: false
      time: "2021-01-13T20:09:34Z"
    - latency: 3.483578ms
      message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
      reason: TCPConnectError
      success: false
      time: "2021-01-13T20:08:34Z"
    message: Connectivity restored after 2m59.999789186s
    start: "2021-01-13T20:08:34Z"
    startLogs:
    - latency: 3.483578ms
      message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
      reason: TCPConnectError
      success: false
      time: "2021-01-13T20:08:34Z"
  successes:
  - latency: 2.845865ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:14:34Z"
  - latency: 2.926345ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:13:34Z"
  - latency: 2.895796ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:12:34Z"
  - latency: 2.696844ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:11:34Z"
  - latency: 1.502064ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:10:34Z"
  - latency: 1.388857ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:09:34Z"
  - latency: 1.906383ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:08:34Z"
  - latency: 2.089073ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:07:34Z"
  - latency: 2.156994ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:06:34Z"
  - latency: 1.777043ms
    message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
    reason: TCPConnect
    success: true
    time: "2021-01-13T21:05:34Z"
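The outages entry in the example output carries both a start and an end timestamp, so the length of an outage can be recomputed from those two fields. The following is a minimal sketch in Python, assuming the timestamps always use the UTC, second-precision layout shown in the output. The helper names parse_timestamp and outage_seconds are hypothetical and are not part of any OpenShift tooling.

```python
from datetime import datetime, timezone

# Layout of the status timestamps in the example output (UTC, second precision).
TIMESTAMP_FORMAT = "%Y-%m-%dT%H:%M:%SZ"


def parse_timestamp(value: str) -> datetime:
    """Parse a status timestamp such as "2021-01-13T20:08:34Z" as UTC."""
    return datetime.strptime(value, TIMESTAMP_FORMAT).replace(tzinfo=timezone.utc)


def outage_seconds(outage: dict) -> float:
    """Return the length of one outages entry in seconds.

    Hypothetical helper: expects a dict with the "start" and "end" keys
    that appear in the example output.
    """
    delta = parse_timestamp(outage["end"]) - parse_timestamp(outage["start"])
    return delta.total_seconds()


# Values copied from the outages entry in the example output.
outage = {"start": "2021-01-13T20:08:34Z", "end": "2021-01-13T20:11:34Z"}
print(outage_seconds(outage))  # 180.0
```

The result is 180 seconds, which is consistent with the "Connectivity restored after 2m59.999789186s" message once the timestamps are rounded to whole seconds.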

Chapter 8. Configuring the node port service range

As a cluster administrator, you can expand the available node port range. If your cluster uses a large number of node ports, you might need to increase the number of available ports.

The default port range is 30000-32767. You can never reduce the port range, even if you first expand it beyond the default range.

8.1. Prerequisites

- Your cluster infrastructure must allow access to the ports that you specify within the expanded range. For example, if you expand the node port range to 30000-32900, the inclusive port range of 32768-32900 must be allowed by your firewall or packet filtering configuration.
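The arithmetic behind this prerequisite can be sketched in Python. The sketch assumes the default range of 30000-32767 stated in this chapter and a range string in the same low-high form as the examples. The function names parse_port_range and extra_ports_to_allow are hypothetical.

```python
from typing import List, Tuple

# Default node port range, as stated in this chapter.
DEFAULT_MIN, DEFAULT_MAX = 30000, 32767


def parse_port_range(value: str) -> Tuple[int, int]:
    """Parse a "low-high" string such as "30000-32900" into two integers."""
    low_text, high_text = value.split("-")
    low, high = int(low_text), int(high_text)
    if not (0 < low <= high <= 65535):
        raise ValueError(f"invalid port range: {value!r}")
    return low, high


def extra_ports_to_allow(new_range: str) -> List[Tuple[int, int]]:
    """Return the inclusive sub-ranges of new_range that lie outside the default range.

    These are the ports that a firewall or packet filter would need to
    allow in addition to the default node port range.
    """
    low, high = parse_port_range(new_range)
    extra = []
    if low < DEFAULT_MIN:
        extra.append((low, min(high, DEFAULT_MIN - 1)))
    if high > DEFAULT_MAX:
        extra.append((max(low, DEFAULT_MAX + 1), high))
    return extra


print(extra_ports_to_allow("30000-32900"))  # [(32768, 32900)]
```

For the example range of 30000-32900, the only additional ports are 32768 through 32900, which matches the range named in the prerequisite.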

8.2. Expanding the node port range

You can expand the node port range for the cluster.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in to the cluster with a user with cluster-admin privileges.

Procedure

To expand the node port range, enter the following command. Replace <port> with the largest port number in the new range.

$ oc patch network.config.openshift.io cluster --type=merge -p \
  '{
    "spec":
      { "serviceNodePortRange": "30000-<port>" }
  }'

Example output

network.config.openshift.io/cluster patched

To confirm that the configuration is active, enter the following command. It can take several minutes for the update to apply.

$ oc get configmaps -n openshift-kube-apiserver config \
  -o jsonpath="{.data['config\.yaml']}" | \
  grep -Eo '"service-node-port-range":["[[:digit:]]+-[[:digit:]]+"]'

Example output

"service-node-port-range":["30000-33000"]

Chapter 9. Using the Stream Control Transmission Protocol (SCTP) on a bare metal cluster

As a cluster administrator, you can use the Stream Control Transmission Protocol (SCTP) on a cluster.

9.1. Support for Stream Control Transmission Protocol (SCTP) on OpenShift Container Platform

As a cluster administrator, you can enable SCTP on the hosts in the cluster. On Red Hat Enterprise Linux CoreOS (RHCOS), the SCTP module is disabled by default.

SCTP is a reliable, message-based protocol that runs on top of an IP network.

When SCTP is enabled, you can use it as a protocol with pods, services, and network policy. A Service object must be defined with the type parameter set to either the ClusterIP or NodePort value.
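The service type constraint can be expressed as a small pre-flight check on a manifest. The following Python sketch assumes that the Service manifest has already been loaded into a dictionary, for example from YAML. The function name sctp_service_problems is hypothetical, and the check covers only the rule stated in this section.

```python
from typing import List

# Service types that this section lists as valid for SCTP.
SCTP_SERVICE_TYPES = {"ClusterIP", "NodePort"}


def sctp_service_problems(service: dict) -> List[str]:
    """Return a list of problems for a Service manifest that uses SCTP.

    An empty list means the manifest satisfies the type constraint.
    """
    spec = service.get("spec") or {}
    # Kubernetes treats a Service without spec.type as ClusterIP.
    service_type = spec.get("type", "ClusterIP")
    ports = spec.get("ports") or []
    uses_sctp = any(port.get("protocol") == "SCTP" for port in ports)
    if uses_sctp and service_type not in SCTP_SERVICE_TYPES:
        return [f"type {service_type} is not ClusterIP or NodePort"]
    return []


# Hypothetical manifest that breaks the constraint.
manifest = {
    "kind": "Service",
    "spec": {
        "type": "LoadBalancer",
        "ports": [{"name": "sctpserver", "protocol": "SCTP", "port": 30100}],
    },
}
print(sctp_service_problems(manifest))
```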

9.1.1. Example configurations using SCTP protocol

You can configure a pod or service to use SCTP by setting the protocol parameter to the SCTP value in the pod or service object.

In the following example, a pod is configured to use SCTP:

apiVersion: v1
kind: Pod
metadata:
  namespace: project1
  name: example-pod
spec:
  containers:
    - name: example-pod
...
      ports:
        - containerPort: 30100
          name: sctpserver
          protocol: SCTP

In the following example, a service is configured to use SCTP:

apiVersion: v1
kind: Service
metadata:
  namespace: project1
  name: sctpserver
spec:
...
  ports:
    - name: sctpserver
      protocol: SCTP
      port: 30100
      targetPort: 30100
  type: ClusterIP

In the following example, a NetworkPolicy object is configured to allow SCTP network traffic on port 80 to any pods with a specific label:

kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-sctp-on-http
spec:
  podSelector:
    matchLabels:
      role: web
  ingress:
  - ports:
    - protocol: SCTP
      port: 80

9.2. Enabling Stream Control Transmission Protocol (SCTP)

As a cluster administrator, you can load and enable the blacklisted SCTP kernel module on worker nodes in your cluster.

Prerequisites

- Install the OpenShift CLI (oc).

- Access to the cluster as a user with the cluster-admin role.

Procedure

Create a file named load-sctp-module.yaml that contains the following YAML definition:

apiVersion: machineconfiguration.openshift.io/v1
kind: MachineConfig
metadata:
  name: load-sctp-module
  labels:
    machineconfiguration.openshift.io/role: worker
spec:
  config:
    ignition:
      version: 3.2.0
    storage:
      files:
        - path: /etc/modprobe.d/sctp-blacklist.conf
          mode: 0644
          overwrite: true
          contents:
            source: data:,
        - path: /etc/modules-load.d/sctp-load.conf
          mode: 0644
          overwrite: true
          contents:
            source: data:,sctp

To create the MachineConfig object, enter the following command:

$ oc create -f load-sctp-module.yaml

Optional: To watch the status of the nodes while the MachineConfig Operator applies the configuration change, enter the following command. When the status of a node transitions to Ready, the configuration update is applied.

$ oc get nodes
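The two source values in the MachineConfig definition are data URLs that carry the file contents inline. As a rough illustration, the following Python sketch shows how such a URL maps to file contents, assuming the plain, percent-encoded form of data URL used in the definition. The helper names to_data_url and from_data_url are hypothetical.

```python
from urllib.parse import quote, unquote

DATA_URL_PREFIX = "data:,"


def to_data_url(contents: str) -> str:
    """Encode file contents as a plain data URL with percent-encoding."""
    return DATA_URL_PREFIX + quote(contents)


def from_data_url(url: str) -> str:
    """Decode a plain data URL back into the file contents it carries."""
    if not url.startswith(DATA_URL_PREFIX):
        raise ValueError(f"not a plain data URL: {url!r}")
    return unquote(url[len(DATA_URL_PREFIX):])


# An empty payload produces an empty file, which leaves no blacklist entry
# in /etc/modprobe.d/sctp-blacklist.conf.
print(repr(from_data_url("data:,")))      # ''
# The second file contains only the module name to load at boot.
print(repr(from_data_url("data:,sctp")))  # 'sctp'
```

Read this way, the first file entry overwrites the blacklist file with empty contents, and the second entry writes a modules-load file that names the sctp module.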

9.3. Verifying Stream Control Transmission Protocol (SCTP) is enabled

You can verify that SCTP is working on a cluster by creating a pod with an application that listens for SCTP traffic, associating it with a service, and then connecting to the exposed service.
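As a quicker local complement to this end-to-end procedure, a process can ask the kernel directly whether it accepts an SCTP socket. The following Python sketch is not part of the documented procedure. It assumes a Linux host or container with Python 3 available, and the function name sctp_available is hypothetical.

```python
import socket

# 132 is the IANA-assigned protocol number for SCTP. Fall back to it on
# Python builds that do not expose socket.IPPROTO_SCTP.
IPPROTO_SCTP = getattr(socket, "IPPROTO_SCTP", 132)


def sctp_available() -> bool:
    """Return True if the kernel allows creating a one-to-one SCTP socket."""
    try:
        sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM, IPPROTO_SCTP)
    except OSError:
        # Typically raised when the SCTP module cannot be loaded or is not supported.
        return False
    sock.close()
    return True


print(sctp_available())
```

A result of False only indicates that the local kernel refused the socket. It does not test services or network policy, which the procedure below covers.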

Prerequisites

- Access to the Internet from the cluster to install the nc package.

- Install the OpenShift CLI (oc).

- Access to the cluster as a user with the cluster-admin role.

Procedure

Create a pod that starts an SCTP listener:

Create a file named sctp-server.yaml that defines a pod with the following YAML:

apiVersion: v1
kind: Pod
metadata:
  name: sctpserver
  labels:
    app: sctpserver
spec:
  containers:
    - name: sctpserver
      image: registry.access.redhat.com/ubi8/ubi
      command: ["/bin/sh", "-c"]
      args: ["dnf install -y nc && sleep inf"]
      ports:
        - containerPort: 30102
          name: sctpserver
          protocol: SCTP

Create the pod by entering the following command:

$ oc create -f sctp-server.yaml

Create a service for the SCTP listener pod.

Create a file named sctp-service.yaml that defines a service with the following YAML:

apiVersion: v1
kind: Service
metadata:
  name: sctpservice
  labels:
    app: sctpserver
spec:
  type: NodePort
  selector:
    app: sctpserver
  ports:
    - name: sctpserver
      protocol: SCTP
      port: 30102
      targetPort: 30102

To create the service, enter the following command:

$ oc create -f sctp-service.yaml

Create a pod for the SCTP client.

Create a file named sctp-client.yaml with the following YAML:

apiVersion: v1
kind: Pod
metadata:
  name: sctpclient
  labels:
    app: sctpclient
spec:
  containers:
    - name: sctpclient
      image: registry.access.redhat.com/ubi8/ubi
      command: ["/bin/sh", "-c"]
      args: ["dnf install -y nc && sleep inf"]

To create the Pod object, enter the following command:

$ oc apply -f sctp-client.yaml

Run an SCTP listener on the server.

To connect to the server pod, enter the following command:

$ oc rsh sctpserver

To start the SCTP listener, enter the following command:

$ nc -l 30102 --sctp

Connect to the SCTP listener on the server.

- Open a new terminal window or tab in your terminal program.

Obtain the IP address of the sctpservice service. Enter the following command:

$ oc get services sctpservice -o go-template='{{.spec.clusterIP}}{{"\n"}}'

To connect to the client pod, enter the following command:

$ oc rsh sctpclient

To start the SCTP client, enter the following command. Replace <cluster_IP> with the cluster IP address of the sctpservice service.

# nc <cluster_IP> 30102 --sctp

Chapter 10. Configuring PTP hardware

Precision Time Protocol (PTP) hardware is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see https://access.redhat.com/support/offerings/techpreview/.

10.1. About PTP hardware

OpenShift Container Platform includes the capability to use Precision Time Protocol (PTP) hardware on your nodes. You can configure linuxptp services on nodes in your cluster that have PTP-capable hardware.

The PTP Operator works with PTP-capable devices on clusters provisioned only on bare metal infrastructure.

You can use the OpenShift Container Platform console to install PTP by deploying the PTP Operator. The PTP Operator creates and manages the linuxptp services. The Operator provides the following features:

- Discovery of the PTP-capable devices in a cluster.

- Management of the configuration of linuxptp services.

10.2. Automated discovery of PTP network devices