ノード

OpenShift Container Platform でのノードの設定および管理

概要

第1章 ノードの概要

1.1. ノードについて

ノードは、Kubernetes クラスター内の仮想マシンまたはベアメタルマシンです。ワーカーノードは、Pod としてグループ化されたアプリケーションコンテナーをホストします。コントロールプレーンノードは、Kubernetes クラスターを制御するために必要なサービスを実行します。OpenShift Container Platform では、コントロールプレーンノードには、OpenShift ContainerPlatform クラスターを管理するための Kubernetes サービス以上のものが含まれています。

クラスター内に安定した正常なノードを持つことは、ホストされたアプリケーションがスムーズに機能するための基本です。OpenShift Container Platform では、ノードを表す Node オブジェクトを介して Node にアクセス、管理、およびモニターできます。OpenShift CLI (oc) または Web コンソールを使用して、ノードで以下の操作を実行できます。

読み取り操作

読み取り操作により、管理者または開発者は OpenShift ContainerPlatform クラスター内のノードに関する情報を取得できます。

- クラスター内のすべてのノードを一覧表示します。

- メモリーと CPU の使用率、ヘルス、ステータス、経過時間など、ノードに関する情報を取得します。

- ノードで実行されている Pod を一覧表示します。

管理操作

管理者は、次のいくつかのタスクを通じて、OpenShift ContainerPlatform クラスター内のノードを簡単に管理できます。

-

ノードラベルを追加または更新します。ラベルは、

Nodeオブジェクトに適用されるキーと値のペアです。ラベルを使用して Pod のスケジュールを制御できます。 -

カスタムリソース定義 (CRD) または

kubeletConfigオブジェクトを使用してノード設定を変更します。 -

Pod のスケジューリングを許可または禁止するようにノードを設定します。ステータスが

Readyの正常なワーカーノードでは、デフォルトで Pod の配置が許可されますが、コントロールプレーンノードでは許可されません。このデフォルトの動作を変更するには、ワーカーノードをスケジュール不可に設定 し、コントロールプレーンノードをスケジュール可能に設定 します。 -

system-reserved設定を使用して、ノードにリソースを割り当てます。OpenShift Container Platform がノードに最適なsystem-reservedCPU およびメモリーリソースを自動的に決定できるようにするか、ノードに最適なリソースを手動で決定および設定することができます。 - ノード上のプロセッサーコアの数、ハード制限、またはその両方に基づいて、ノード上で実行できる Pod の数を設定 します。

- Pod の非アフィニティー を使用して、ノードを正常に再起動します。

- マシンセットを使用してクラスターをスケールダウンすることにより、クラスターからノードを削除 します。ベアメタルクラスターからノードを削除するには、最初にノード上のすべての Pod をドレインしてから、手動でノードを削除する必要があります。

エンハンスメント操作

OpenShift Container Platform を使用すると、ノードへのアクセスと管理以上のことができます。管理者は、ノードで次のタスクを実行して、クラスターをより効率的でアプリケーションに適したものにし、開発者により良い環境を提供できます。

- Node Tuning Operator を使用 して、ある程度のカーネルチューニングを必要とする高性能アプリケーションのノードレベルのチューニングを管理します。

- デーモンセットを使用してノードでバックグラウンドタスクを自動的に実行 します。デーモンセットを作成して使用し、共有ストレージを作成したり、すべてのノードでロギング Pod を実行したり、すべてのノードに監視エージェントをデプロイしたりできます。

- ガベージコレクションを使用してノードリソースを解放 します。終了したコンテナーと、実行中の Pod によって参照されていないイメージを削除することで、ノードが効率的に実行されていることを確認できます。

- カーネル引数をノードのセットに追加 します。

- ネットワークエッジにワーカーノード (リモートワーカーノード) を持つように OpenShift ContainerPlatform クラスターを設定します。OpenShift Container Platform クラスターにリモートワーカーノードを配置する際の課題と、リモートワーカーノードで Pod を管理するための推奨されるアプローチについては、ネットワークエッジでのリモートワーカーノードの使用 を参照してください。

1.2. Pod について

Pod は、ノードに一緒にデプロイされる 1 つ以上のコンテナーです。クラスター管理者は、Pod を定義し、スケジューリングの準備ができている正常なノードで実行するように割り当て、管理することができます。コンテナーが実行されている限り、Pod は実行されます。Pod を定義して実行すると、Pod を変更することはできません。Pod を操作するときに実行できる操作は次のとおりです。

読み取り操作

管理者は、次のタスクを通じてプロジェクト内の Pod に関する情報を取得できます。

- レプリカと再起動の数、現在のステータス、経過時間などの情報を含む、プロジェクトに関連付けられている Pod を一覧表示 します。

- CPU、メモリー、ストレージ消費量などの Pod 使用統計を表示 します。

管理操作

以下のタスクのリストは、管理者が OpenShift ContainerPlatform クラスターで Pod を管理する方法の概要を示しています。

OpenShift Container Platform で利用可能な高度なスケジューリング機能を使用して、Pod のスケジューリングを制御します。

- Pod アフィニティー、ノードアフィニティー、非アフィニティー などのノード間バインディングルール。

- ノードラベルとセレクター。

- テイントおよび容認 (Toleration)。

- Pod トポロジー分散制約。

- カスタムスケジューラー。

- 特定のストラテジーに基づいて Pod をエビクトするように descheduler を設定 して、スケジューラーが Pod をより適切なノードに再スケジュールするようにします。

- Pod コントローラーと再起動ポリシーを使用して、再起動後の Pod の動作を設定します。

- Pod の egress トラフィック と ingress トラフィックの両方を制限 します。

- Pod テンプレートを持つオブジェクトとの間でボリュームを追加および削除 します。ボリュームは、Pod 内のすべてのコンテナーで使用できるマウントされたファイルシステムです。コンテナーの保管はエフェメラルなものです。ボリュームを使用して、コンテナーデータを永続化できます。

エンハンスメント操作

OpenShift Container Platform で利用可能なさまざまなツールと機能を使用して、Pod をより簡単かつ効率的に操作できます。次の操作では、これらのツールと機能を使用して Pod をより適切に管理します。

| 操作 | ユーザー | 詳細情報 |

|---|---|---|

| Horizontal Pod Autoscaler を作成して使用。 | 開発者 | Horizontal Pod Autoscaler を使用して、実行する Pod の最小数と最大数、および Pod がターゲットとする CPU 使用率またはメモリー使用率を指定できます。水平 Pod オートスケーラーを使用すると、Pod を 自動的にスケーリング できます。 |

| 管理者および開発者 | 管理者は、垂直 Pod オートスケーラーを使用して、リソースとワークロードのリソース要件を監視することにより、クラスターリソースをより適切に使用します。 開発者は、垂直 Pod オートスケーラーを使用して、各 Pod に十分なリソースがあるノードに Pod をスケジュールすることにより、需要が高い時に Pod が稼働し続けるようにします。 | |

| デバイスプラグインを使用して外部リソースへのアクセスを提供します。 | Administrator | デバイスプラグイン は、ノード (kubelet の外部) で実行される gRPC サービスであり、特定のハードウェアリソースを管理します。デバイスプラグインを導入 して、クラスター全体でハードウェアデバイスを使用するための一貫性のあるポータブルソリューションを提供できます。 |

|

| Administrator |

一部のアプリケーションでは、パスワードやユーザー名などの機密情報が必要です。 |

1.3. コンテナーについて

コンテナーは、OpenShift Container Platform アプリケーションの基本ユニットであり、依存関係、ライブラリー、およびバイナリーとともにパッケージ化されたアプリケーションコードで設定されます。コンテナーは、複数の環境、および物理サーバー、仮想マシン (VM)、およびプライベートまたはパブリッククラウドなどの複数のデプロイメントターゲット間に一貫性をもたらします。

Linux コンテナーテクノロジーは、実行中のプロセスを分離し、指定されたリソースのみへのアクセスを制限するための軽量メカニズムです。管理者は、Linux コンテナーで次のようなさまざまなタスクを実行できます。

- コンテナーとの間でファイルをコピー します。

- コンテナーによる API オブジェクトの消費を許可 します。

- コンテナー内でリモートコマンドを実行 します。

- ポート転送を使用してコンテナー内のアプリケーションにアクセス します。

OpenShift Container Platform は、Init コンテナー と呼ばれる特殊なコンテナーを提供します。Init コンテナーは、アプリケーションコンテナーの前に実行され、アプリケーションイメージに存在しないユーティリティーまたはセットアップスクリプトを含めることができます。Pod の残りの部分がデプロイされる前に、Init コンテナーを使用してタスクを実行できます。

ノード、Pod、およびコンテナーで特定のタスクを実行する以外に、OpenShift Container Platform クラスター全体を操作して、クラスターの効率とアプリケーション Pod の高可用性を維持できます。

第2章 Pod の使用

2.1. Pod の使用

Pod は 1 つのホストにデプロイされる 1 つ以上のコンテナーであり、定義され、デプロイされ、管理される最小のコンピュート単位です。

2.1.1. Pod について

Pod はコンテナーに対してマシンインスタンス (物理または仮想) とほぼ同じ機能を持ちます。各 Pod は独自の内部 IP アドレスで割り当てられるため、そのポートスペース全体を所有し、Pod 内のコンテナーはそれらのローカルストレージおよびネットワークを共有できます。

Pod にはライフサイクルがあります。それらは定義された後にノードで実行されるために割り当てられ、コンテナーが終了するまで実行されるか、その他の理由でコンテナーが削除されるまで実行されます。ポリシーおよび終了コードによっては、Pod は終了後に削除されるか、コンテナーのログへのアクセスを有効にするために保持される可能性があります。

OpenShift Container Platform は Pod をほとんどがイミュータブルなものとして処理します。Pod が実行中の場合は Pod に変更を加えることができません。OpenShift Container Platform は既存 Pod を終了し、これを変更された設定、ベースイメージのいずれかまたはその両方で再作成して変更を実装します。Pod は拡張可能なものとしても処理されますが、再作成時に状態を維持しません。そのため、通常 Pod はユーザーから直接管理されるのでははく、ハイレベルのコントローラーで管理される必要があります。

OpenShift Container Platform ノードホストごとの Pod の最大数については、クラスターの制限について参照してください。

レプリケーションコントローラーによって管理されないベア Pod はノードの中断時に再スケジュールされません。

2.1.2. Pod 設定の例

OpenShift Container Platform は、Pod の Kubernetes の概念を活用しています。これはホスト上に共にデプロイされる 1 つ以上のコンテナーであり、定義され、デプロイされ、管理される最小のコンピュート単位です。

以下は、Rails アプリケーションからの Pod の定義例です。これは数多くの Pod の機能を示していますが、それらのほとんどは他のトピックで説明されるため、ここではこれらについて簡単に説明します。

Pod オブジェクト定義 (YAML)

kind: Pod

apiVersion: v1

metadata:

name: example

namespace: default

selfLink: /api/v1/namespaces/default/pods/example

uid: 5cc30063-0265780783bc

resourceVersion: '165032'

creationTimestamp: '2019-02-13T20:31:37Z'

labels:

app: hello-openshift

annotations:

openshift.io/scc: anyuid

spec:

restartPolicy: Always

serviceAccountName: default

imagePullSecrets:

- name: default-dockercfg-5zrhb

priority: 0

schedulerName: default-scheduler

terminationGracePeriodSeconds: 30

nodeName: ip-10-0-140-16.us-east-2.compute.internal

securityContext:

seLinuxOptions:

level: 's0:c11,c10'

containers:

- resources: {}

terminationMessagePath: /dev/termination-log

name: hello-openshift

securityContext:

capabilities:

drop:

- MKNOD

procMount: Default

ports:

- containerPort: 8080

protocol: TCP

imagePullPolicy: Always

volumeMounts:

- name: default-token-wbqsl

readOnly: true

mountPath: /var/run/secrets/kubernetes.io/serviceaccount

terminationMessagePolicy: File

image: registry.redhat.io/openshift4/ose-ogging-eventrouter:v4.3

serviceAccount: default

volumes:

- name: default-token-wbqsl

secret:

secretName: default-token-wbqsl

defaultMode: 420

dnsPolicy: ClusterFirst

status:

phase: Pending

conditions:

- type: Initialized

status: 'True'

lastProbeTime: null

lastTransitionTime: '2019-02-13T20:31:37Z'

- type: Ready

status: 'False'

lastProbeTime: null

lastTransitionTime: '2019-02-13T20:31:37Z'

reason: ContainersNotReady

message: 'containers with unready status: [hello-openshift]'

- type: ContainersReady

status: 'False'

lastProbeTime: null

lastTransitionTime: '2019-02-13T20:31:37Z'

reason: ContainersNotReady

message: 'containers with unready status: [hello-openshift]'

- type: PodScheduled

status: 'True'

lastProbeTime: null

lastTransitionTime: '2019-02-13T20:31:37Z'

hostIP: 10.0.140.16

startTime: '2019-02-13T20:31:37Z'

containerStatuses:

- name: hello-openshift

state:

waiting:

reason: ContainerCreating

lastState: {}

ready: false

restartCount: 0

image: openshift/hello-openshift

imageID: ''

qosClass: BestEffort- 1

- Pod には 1 つまたは複数のラベルでタグ付けすることができ、このラベルを使用すると、一度の操作で Pod グループの選択や管理が可能になります。これらのラベルは、キー/値形式で

metadataハッシュに保存されます。 - 2

- Pod 再起動ポリシーと使用可能な値の

Always、OnFailure、およびNeverです。デフォルト値はAlwaysです。 - 3

- OpenShift Container Platform は、コンテナーが特権付きコンテナーとして実行されるか、選択したユーザーとして実行されるかどうかを指定するセキュリティーコンテキストを定義します。デフォルトのコンテキストには多くの制限がありますが、管理者は必要に応じてこれを変更できます。

- 4

containersは、1 つ以上のコンテナー定義の配列を指定します。- 5

- コンテナーは外部ストレージボリュームがコンテナー内にマウントされるかどうかを指定します。この場合、OpenShift Container Platform API に対して要求を行うためにレジストリーが必要とする認証情報へのアクセスを保存するためにボリュームがあります。

- 6

- Pod に提供するボリュームを指定します。ボリュームは指定されたパスにマウントされます。コンテナーのルート (

/) や、ホストとコンテナーで同じパスにはマウントしないでください。これは、コンテナーに十分な特権が付与されている場合、ホストシステムを破壊する可能性があります (例: ホストの/dev/ptsファイル)。ホストをマウントするには、/hostを使用するのが安全です。 - 7

- Pod 内の各コンテナーは、独自のコンテナーイメージからインスタンス化されます。

- 8

- OpenShift Container Platform API に対して要求する Pod は一般的なパターンです。この場合、

serviceAccountフィールドがあり、これは要求を行う際に Pod が認証する必要のあるサービスアカウントユーザーを指定するために使用されます。これにより、カスタムインフラストラクチャーコンポーネントの詳細なアクセス制御が可能になります。 - 9

- Pod は、コンテナーで使用できるストレージボリュームを定義します。この場合、デフォルトのサービスアカウントトークンを含む

secretボリュームのエフェメラルボリュームを提供します。ファイル数が多い永続ボリュームを Pod に割り当てる場合、それらの Pod は失敗するか、または起動に時間がかかる場合があります。詳細は、When using Persistent Volumes with high file counts in OpenShift, why do pods fail to start or take an excessive amount of time to achieve "Ready" state? を参照してください。

この Pod 定義には、Pod が作成され、ライフサイクルが開始された後に OpenShift Container Platform によって自動的に設定される属性が含まれません。Kubernetes Pod ドキュメント には、Pod の機能および目的についての詳細が記載されています。

2.2. Pod の表示

管理者として、クラスターで Pod を表示し、それらの Pod および全体としてクラスターの正常性を判別することができます。

2.2.1. Pod について

OpenShift Container Platform は、Pod の Kubernetes の概念を活用しています。これはホスト上に共にデプロイされる 1 つ以上のコンテナーであり、定義され、デプロイされ、管理される最小のコンピュート単位です。Pod はコンテナーに対するマシンインスタンス (物理または仮想) とほぼ同等のものです。

特定のプロジェクトに関連付けられた Pod の一覧を表示したり、Pod についての使用状況の統計を表示したりすることができます。

2.2.2. プロジェクトでの Pod の表示

レプリカの数、Pod の現在のステータス、再起動の数および年数を含む、現在のプロジェクトに関連付けられた Pod の一覧を表示できます。

手順

プロジェクトで Pod を表示するには、以下を実行します。

プロジェクトに切り替えます。

$ oc project <project-name>以下のコマンドを実行します。

$ oc get pods以下に例を示します。

$ oc get pods -n openshift-console出力例

NAME READY STATUS RESTARTS AGE console-698d866b78-bnshf 1/1 Running 2 165m console-698d866b78-m87pm 1/1 Running 2 165m-o wideフラグを追加して、Pod の IP アドレスと Pod があるノードを表示します。$ oc get pods -o wide出力例

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE console-698d866b78-bnshf 1/1 Running 2 166m 10.128.0.24 ip-10-0-152-71.ec2.internal <none> console-698d866b78-m87pm 1/1 Running 2 166m 10.129.0.23 ip-10-0-173-237.ec2.internal <none>

2.2.3. Pod の使用状況についての統計の表示

コンテナーのランタイム環境を提供する、Pod についての使用状況の統計を表示できます。これらの使用状況の統計には CPU、メモリー、およびストレージの消費量が含まれます。

前提条件

-

使用状況の統計を表示するには、

cluster-readerパーミッションがなければなりません。 - 使用状況の統計を表示するには、メトリクスをインストールしている必要があります。

手順

使用状況の統計を表示するには、以下を実行します。

以下のコマンドを実行します。

$ oc adm top pods以下に例を示します。

$ oc adm top pods -n openshift-console出力例

NAME CPU(cores) MEMORY(bytes) console-7f58c69899-q8c8k 0m 22Mi console-7f58c69899-xhbgg 0m 25Mi downloads-594fcccf94-bcxk8 3m 18Mi downloads-594fcccf94-kv4p6 2m 15Miラベルを持つ Pod の使用状況の統計を表示するには、以下のコマンドを実行します。

$ oc adm top pod --selector=''フィルターに使用するセレクター (ラベルクエリー) を選択する必要があります。

=、==、および!=をサポートします。

2.2.4. リソースログの表示

OpenShift CLI (oc) および Web コンソールで、各種リソースのログを表示できます。ログの末尾から読み取られるログ。

前提条件

- OpenShift CLI (oc) へのアクセス。

手順 (UI)

OpenShift Container Platform コンソールで Workloads → Pods に移動するか、または調査するリソースから Pod に移動します。

注記ビルドなどの一部のリソースには、直接クエリーする Pod がありません。このような場合には、リソースについて Details ページで Logs リンクを特定できます。

- ドロップダウンメニューからプロジェクトを選択します。

- 調査する Pod の名前をクリックします。

- Logs をクリックします。

手順 (CLI)

特定の Pod のログを表示します。

$ oc logs -f <pod_name> -c <container_name>ここでは、以下のようになります。

-f- オプション: ログに書き込まれている内容に沿って出力することを指定します。

<pod_name>- Pod の名前を指定します。

<container_name>- オプション: コンテナーの名前を指定します。Pod に複数のコンテナーがある場合、コンテナー名を指定する必要があります。

以下に例を示します。

$ oc logs ruby-58cd97df55-mww7r$ oc logs -f ruby-57f7f4855b-znl92 -c rubyログファイルの内容が出力されます。

特定のリソースのログを表示します。

$ oc logs <object_type>/<resource_name>1 - 1

- リソースタイプおよび名前を指定します。

以下に例を示します。

$ oc logs deployment/rubyログファイルの内容が出力されます。

2.3. OpenShift Container Platform クラスターでの Pod の設定

管理者として、Pod に対して効率的なクラスターを作成し、維持することができます。

クラスターの効率性を維持することにより、1 回のみ実行するように設計された Pod をいつ再起動するか、Pod が利用できる帯域幅をいつ制限するか、中断時に Pod をどのように実行させ続けるかなど、Pod が終了するときの動作をツールとして使って必要な数の Pod が常に実行されるようにし、開発者により良い環境を提供することができます。

2.3.1. 再起動後の Pod の動作方法の設定

Pod 再起動ポリシーは、Pod のコンテナーの終了時に OpenShift Container Platform が応答する方法を決定します。このポリシーは Pod のすべてのコンテナーに適用されます。

以下の値を使用できます。

-

Always: Pod が再起動するまで、Pod で正常に終了したコンテナーの継続的な再起動を、指数関数のバックオフ遅延 (10 秒、20 秒、40 秒) で試行します。デフォルトはAlwaysです。 -

OnFailure: Pod で失敗したコンテナーの継続的な再起動を、5 分を上限として指数関数のバックオフ遅延 (10 秒、20 秒、40 秒) で試行します。 -

Never: Pod で終了したコンテナーまたは失敗したコンテナーの再起動を試行しません。Pod はただちに失敗し、終了します。

いったんノードにバインドされた Pod は別のノードにはバインドされなくなります。これは、Pod がのノードの失敗後も存続するにはコントローラーが必要であることを示しています。

| 条件 | コントローラーのタイプ | 再起動ポリシー |

|---|---|---|

| (バッチ計算など) 終了することが予想される Pod | ジョブ |

|

| (Web サービスなど) 終了しないことが予想される Pod | レプリケーションコントローラー |

|

| マシンごとに 1 回実行される Pod | デーモンセット | すべて |

Pod のコンテナーが失敗し、再起動ポリシーが OnFailure に設定される場合、Pod はノード上に留まり、コンテナーが再起動します。コンテナーを再起動させない場合には、再起動ポリシーの Never を使用します。

Pod 全体が失敗すると、OpenShift Container Platform は新規 Pod を起動します。開発者は、アプリケーションが新規 Pod で再起動される可能性に対応しなくてはなりません。とくに、アプリケーションは、一時的なファイル、ロック、以前の実行で生じた未完成の出力などを処理する必要があります。

Kubernetes アーキテクチャーでは、クラウドプロバイダーからの信頼性のあるエンドポイントが必要です。クラウドプロバイダーが停止している場合、kubelet は OpenShift Container Platform が再起動されないようにします。

基礎となるクラウドプロバイダーのエンドポイントに信頼性がない場合は、クラウドプロバイダー統合を使用してクラスターをインストールしないでください。クラスターを、非クラウド環境で実行する場合のようにインストールします。インストール済みのクラスターで、クラウドプロバイダー統合をオンまたはオフに切り替えることは推奨されていません。

OpenShift Container Platform が失敗したコンテナーについて再起動ポリシーを使用する方法の詳細は、Kubernetes ドキュメントの State の例 を参照してください。

2.3.2. Pod で利用可能な帯域幅の制限

QoS (Quality-of-Service) トラフィックシェーピングを Pod に適用し、その利用可能な帯域幅を効果的に制限することができます。(Pod からの) Egress トラフィックは、設定したレートを超えるパケットを単純にドロップするポリシングによって処理されます。(Pod への) Ingress トラフィックは、データを効果的に処理できるようシェーピングでパケットをキューに入れて処理されます。Pod に設定する制限は、他の Pod の帯域幅には影響を与えません。

手順

Pod の帯域幅を制限するには、以下を実行します。

オブジェクト定義 JSON ファイルを作成し、

kubernetes.io/ingress-bandwidthおよびkubernetes.io/egress-bandwidthアノテーションを使用してデータトラフィックの速度を指定します。たとえば、 Pod の egress および ingress の両方の帯域幅を 10M/s に制限するには、以下を実行します。制限が設定された

Podオブジェクト定義{ "kind": "Pod", "spec": { "containers": [ { "image": "openshift/hello-openshift", "name": "hello-openshift" } ] }, "apiVersion": "v1", "metadata": { "name": "iperf-slow", "annotations": { "kubernetes.io/ingress-bandwidth": "10M", "kubernetes.io/egress-bandwidth": "10M" } } }オブジェクト定義を使用して Pod を作成します。

$ oc create -f <file_or_dir_path>

2.3.3. Pod の Disruption Budget (停止状態の予算) を使って起動している Pod の数を指定する方法

Pod の Disruption Budget は Kubernetes API の一部であり、他のオブジェクトタイプのように oc コマンドで管理できます。この設定により、メンテナンスのためのノードのドレイン (解放) などの操作時に Pod への安全面の各種の制約を指定できます。

PodDisruptionBudget は、同時に起動している必要のあるレプリカの最小数またはパーセンテージを指定する API オブジェクトです。これらをプロジェクトに設定することは、ノードのメンテナンス (クラスターのスケールダウンまたはクラスターのアップグレードなどの実行) 時に役立ち、この設定は (ノードの障害時ではなく) 自発的なエビクションの場合にのみ許可されます。

PodDisruptionBudget オブジェクトの設定は、以下の主要な部分で設定されています。

- 一連の Pod に対するラベルのクエリー機能であるラベルセレクター。

同時に利用可能にする必要のある Pod の最小数を指定する可用性レベル。

-

minAvailableは、中断時にも常に利用可能である必要のある Pod 数です。 -

maxUnavailableは、中断時に利用不可にできる Pod 数です。

-

maxUnavailable の 0% または 0 あるいは minAvailable の 100%、ないしはレプリカ数に等しい値は許可されますが、これによりノードがドレイン (解放) されないようにブロックされる可能性があります。

以下を実行して、Pod の Disruption Budget をすべてのプロジェクトで確認することができます。

$ oc get poddisruptionbudget --all-namespaces出力例

NAMESPACE NAME MIN-AVAILABLE SELECTOR

another-project another-pdb 4 bar=foo

test-project my-pdb 2 foo=bar

PodDisruptionBudget は、最低でも minAvailable Pod がシステムで実行されている場合は正常であるとみなされます。この制限を超えるすべての Pod はエビクションの対象となります。

Pod の優先順位およびプリエンプションの設定に基づいて、優先順位の低い Pod は Pod の Disruption Budget の要件を無視して削除される可能性があります。

2.3.3.1. Pod の Disruption Budget を使って起動している Pod 数の指定

同時に起動している必要のあるレプリカの最小数またはパーセンテージは、PodDisruptionBudget オブジェクトを使って指定します。

手順

Pod の Disruption Budget を設定するには、以下を実行します。

YAML ファイルを以下のようなオブジェクト定義で作成します。

apiVersion: policy/v1beta11 kind: PodDisruptionBudget metadata: name: my-pdb spec: minAvailable: 22 selector:3 matchLabels: foo: barまたは、以下を実行します。

apiVersion: policy/v1beta11 kind: PodDisruptionBudget metadata: name: my-pdb spec: maxUnavailable: 25%2 selector:3 matchLabels: foo: bar以下のコマンドを実行してオブジェクトをプロジェクトに追加します。

$ oc create -f </path/to/file> -n <project_name>

2.3.4. Critical Pod の使用による Pod の削除の防止

クラスターを十分に機能させるために不可欠であるのに、マスターノードではなく通常のクラスターノードで実行される重要なコンポーネントは多数あります。重要なアドオンをエビクトすると、クラスターが正常に動作しなくなる可能性があります。

Critical とマークされている Pod はエビクトできません。

手順

Pod を Citical にするには、以下を実行します。

Pod仕様を作成するか、または既存の Pod を編集してsystem-cluster-critical優先順位クラスを含めます。spec: template: metadata: name: critical-pod priorityClassName: system-cluster-critical1 - 1

- ノードからエビクトすべきではない Pod のデフォルトの優先順位クラス。

または、クラスターにとって重要だが、必要に応じて削除できる Pod に

system-node-criticalを指定することもできます。Pod を作成します。

$ oc create -f <file-name>.yaml

2.4. Horizontal Pod Autoscaler での Pod の自動スケーリング

開発者として、Horizontal Pod Autoscaler (HPA) を使って、レプリケーションコントローラーに属する Pod から収集されるメトリクスまたはデプロイメント設定に基づき、OpenShift Container Platform がレプリケーションコントローラーまたはデプロイメント設定のスケールを自動的に増減する方法を指定できます。

2.4.1. Horizontal Pod Autoscaler について

Horizontal Pod Autoscaler を作成することで、実行する Pod の最小数と最大数を指定するだけでなく、Pod がターゲットに設定する CPU の使用率またはメモリー使用率を指定することができます。

Horizontal Pod Autoscaler を作成すると、OpenShift Container Platform は Pod で CPU またはメモリーリソースのメトリクスのクエリーを開始します。メトリクスが利用可能になると、Horizontal Pod Autoscaler は必要なメトリクスの使用率に対する現在のメトリクスの使用率の割合を計算し、随時スケールアップまたはスケールダウンを実行します。クエリーとスケーリングは一定間隔で実行されますが、メトリクスが利用可能になるでに 1 分から 2 分の時間がかかる場合があります。

レプリケーションコントローラーの場合、このスケーリングはレプリケーションコントローラーのレプリカに直接対応します。デプロイメント設定の場合、スケーリングはデプロイメント設定のレプリカ数に直接対応します。自動スケーリングは Complete フェーズの最新デプロイメントにのみ適用されることに注意してください。

OpenShift Container Platform はリソースに自動的に対応し、起動時などのリソースの使用が急増した場合など必要のない自動スケーリングを防ぎます。unready 状態の Pod には、スケールアップ時の使用率が 0 CPU と指定され、Autoscaler はスケールダウン時にはこれらの Pod を無視します。既知のメトリクスのない Pod にはスケールアップ時の使用率が 0% CPU、スケールダウン時に 100% CPU となります。これにより、HPA の決定時に安定性が増します。この機能を使用するには、readiness チェックを設定して新規 Pod が使用可能であるかどうかを判別します。

Horizontal Pod Autoscaler を使用するには、クラスターの管理者はクラスターメトリクスを適切に設定している必要があります。

2.4.1.1. サポートされるメトリクス

以下のメトリクスは Horizontal Pod Autoscaler でサポートされています。

| メトリクス | 説明 | API バージョン |

|---|---|---|

| CPU の使用率 | 使用されている CPU コアの数。Pod の要求される CPU の割合の計算に使用されます。 |

|

| メモリーの使用率 | 使用されているメモリーの量。Pod の要求されるメモリーの割合の計算に使用されます。 |

|

メモリーベースの自動スケーリングでは、メモリー使用量がレプリカ数と比例して増減する必要があります。平均的には以下のようになります。

- レプリカ数が増えると、Pod ごとのメモリー (作業セット) の使用量が全体的に減少します。

- レプリカ数が減ると、Pod ごとのメモリー使用量が全体的に増加します。

OpenShift Container Platform Web コンソールを使用して、アプリケーションのメモリー動作を確認し、メモリーベースの自動スケーリングを使用する前にアプリケーションがそれらの要件を満たしていることを確認します。

以下の例は、image-registry DeploymentConfig オブジェクトの自動スケーリングを示しています。最初のデプロイメントでは 3 つの Pod が必要です。HPA オブジェクトは最小で 5 まで増加され、Pod 上の CPU 使用率が 75% に達すると、Pod を最大 7 まで増やします。

$ oc autoscale dc/image-registry --min=5 --max=7 --cpu-percent=75出力例

horizontalpodautoscaler.autoscaling/image-registry autoscaledminReplicas が 3 に設定された image-registryDeploymentConfig オブジェクトのサンプル HPA

apiVersion: autoscaling/v1

kind: HorizontalPodAutoscaler

metadata:

name: image-registry

namespace: default

spec:

maxReplicas: 7

minReplicas: 3

scaleTargetRef:

apiVersion: apps.openshift.io/v1

kind: DeploymentConfig

name: image-registry

targetCPUUtilizationPercentage: 75

status:

currentReplicas: 5

desiredReplicas: 0デプロイメントの新しい状態を表示します。

$ oc get dc image-registryデプロイメントには 5 つの Pod があります。

出力例

NAME REVISION DESIRED CURRENT TRIGGERED BY image-registry 1 5 5 config

2.4.1.2. スケーリングポリシー

autoscaling/v2beta2 API を使用すると、スケーリングポリシー を Horizontal Pod Autoscaler に追加できます。スケーリングポリシーは、OpenShift Container Platform の Horizontal Pod Autoscaler (HPA) が Pod をスケーリングする方法を制御します。スケーリングポリシーにより、特定の期間にスケーリングするように特定の数または特定のパーセンテージを設定して、HPA が Pod をスケールアップまたはスケールダウンするレートを制限できます。固定化ウィンドウ (stabilization window) を定義することもできます。これはメトリクスが変動する場合に、先に計算される必要な状態を使用してスケーリングを制御します。同じスケーリングの方向に複数のポリシーを作成し、変更の量に応じて使用するポリシーを判別することができます。タイミングが調整された反復によりスケーリングを制限することもできます。HPA は反復時に Pod をスケーリングし、その後の反復で必要に応じてスケーリングを実行します。

スケーリングポリシーを適用するサンプル HPA オブジェクト

apiVersion: autoscaling/v2beta2

kind: HorizontalPodAutoscaler

metadata:

name: hpa-resource-metrics-memory

namespace: default

spec:

behavior:

scaleDown:

policies:

- type: Pods

value: 4

periodSeconds: 60

- type: Percent

value: 10

periodSeconds: 60

selectPolicy: Min

stabilizationWindowSeconds: 300

scaleUp:

policies:

- type: Pods

value: 5

periodSeconds: 70

- type: Percent

value: 12

periodSeconds: 80

selectPolicy: Max

stabilizationWindowSeconds: 0

...- 1

scaleDownまたはscaleUpのいずれかのスケーリングポリシーの方向を指定します。この例では、スケールダウンのポリシーを作成します。- 2

- スケーリングポリシーを定義します。

- 3

- ポリシーが反復時に特定の Pod の数または Pod のパーセンテージに基づいてスケーリングするかどうかを決定します。デフォルト値は

podsです。 - 4

- 反復ごとに Pod の数または Pod のパーセンテージのいずれかでスケーリングの量を決定します。Pod 数でスケールダウンする際のデフォルト値はありません。

- 5

- スケーリングの反復の長さを決定します。デフォルト値は

15秒です。 - 6

- パーセンテージでのスケールダウンのデフォルト値は 100% です。

- 7

- 複数のポリシーが定義されている場合は、最初に使用するポリシーを決定します。最大限の変更を許可するポリシーを使用するように

Maxを指定するか、最小限の変更を許可するポリシーを使用するようにMinを指定するか、または HPA がポリシーの方向でスケーリングしないようにDisabledを指定します。デフォルト値はMaxです。 - 8

- HPA が必要とされる状態で遡る期間を決定します。デフォルト値は

0です。 - 9

- この例では、スケールアップのポリシーを作成します。

- 10

- Pod 数によるスケールアップの量。Pod 数をスケールアップするためのデフォルト値は 4% です。

- 11

- Pod のパーセンテージによるスケールアップの量。パーセンテージでスケールアップするためのデフォルト値は 100% です。

スケールダウンポリシーの例

apiVersion: autoscaling/v2beta2

kind: HorizontalPodAutoscaler

metadata:

name: hpa-resource-metrics-memory

namespace: default

spec:

...

minReplicas: 20

...

behavior:

scaleDown:

stabilizationWindowSeconds: 300

policies:

- type: Pods

value: 4

periodSeconds: 30

- type: Percent

value: 10

periodSeconds: 60

selectPolicy: Max

scaleUp:

selectPolicy: Disabled

この例では、Pod の数が 40 より大きい場合、パーセントベースのポリシーがスケールダウンに使用されます。このポリシーでは、 selectPolicy による要求により、より大きな変更が生じるためです。

80 の Pod レプリカがある場合、初回の反復で HPA は Pod を 8 Pod 減らします。これは、1 分間 (periodSeconds: 60) の (type: Percent および value: 10 パラメーターに基づく) 80 Pod の 10% に相当します。次回の反復では、Pod 数は 72 になります。HPA は、残りの Pod の 10% が 7.2 であると計算し、これを 8 に丸め、8 Pod をスケールダウンします。後続の反復ごとに、スケーリングされる Pod 数は残りの Pod 数に基づいて再計算されます。Pod の数が 40 未満の場合、Pod ベースの数がパーセントベースの数よりも大きくなるため、Pod ベースのポリシーが適用されます。HPA は、残りのレプリカ (minReplicas) が 20 になるまで、30 秒 (periodSeconds: 30) で一度に 4 Pod (type: Pods および value: 4) を減らします。

selectPolicy: Disabled パラメーターは HPA による Pod のスケールアップを防ぎます。必要な場合は、レプリカセットまたはデプロイメントセットでレプリカの数を調整して手動でスケールアップできます。

設定されている場合、oc edit コマンドを使用してスケーリングポリシーを表示できます。

$ oc edit hpa hpa-resource-metrics-memory出力例

apiVersion: autoscaling/v1

kind: HorizontalPodAutoscaler

metadata:

annotations:

autoscaling.alpha.kubernetes.io/behavior:\

'{"ScaleUp":{"StabilizationWindowSeconds":0,"SelectPolicy":"Max","Policies":[{"Type":"Pods","Value":4,"PeriodSeconds":15},{"Type":"Percent","Value":100,"PeriodSeconds":15}]},\

"ScaleDown":{"StabilizationWindowSeconds":300,"SelectPolicy":"Min","Policies":[{"Type":"Pods","Value":4,"PeriodSeconds":60},{"Type":"Percent","Value":10,"PeriodSeconds":60}]}}'

...2.4.2. Web コンソールを使用した Horizontal Pod Autoscaler の作成

Web コンソールから、デプロイメントで実行する Pod の最小および最大数を指定する Horizontal Pod Autoscaler (HPA) を作成できます。Pod がターゲットに設定する CPU またはメモリー使用量を定義することもできます。

HPA は、Operator がサポートするサービス、Knative サービス、または Helm チャートの一部であるデプロイメントに追加することはできません。

手順

Web コンソールで HPA を作成するには、以下を実行します。

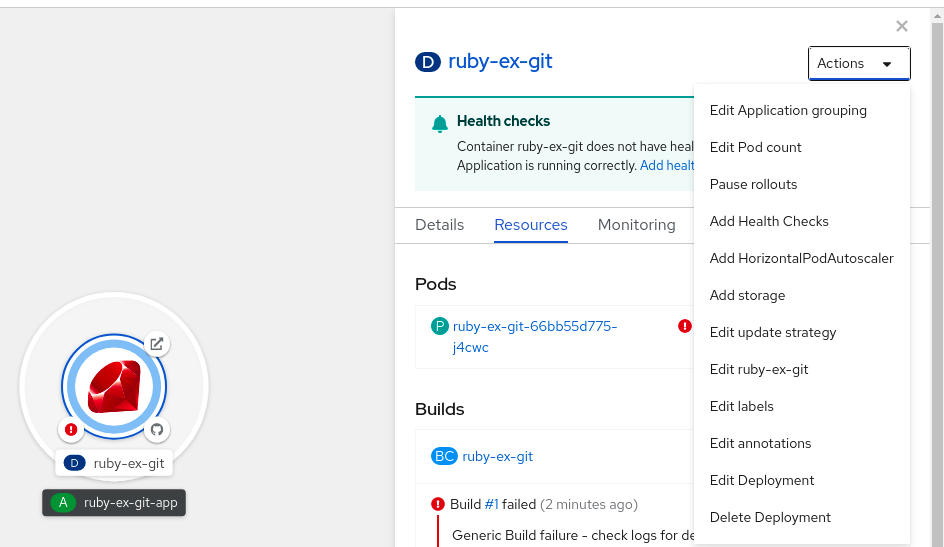

- Topology ビューで、ノードをクリックしてサイドペインを表示します。

Actions ドロップダウンリストから、Add HorizontalPodAutoscaler を選択して Add HorizontalPodAutoscaler フォームを開きます。

図2.1 Horizontal Pod Autoscaler の追加

Add HorizontalPodAutoscaler フォームから、名前、最小および最大の Pod 制限、CPU およびメモリーの使用状況を定義し、Save をクリックします。

注記CPU およびメモリー使用量の値のいずれかが見つからない場合は、警告が表示されます。

Web コンソールで HPA を編集するには、以下を実行します。

- Topology ビューで、ノードをクリックしてサイドペインを表示します。

- Actions ドロップダウンリストから、Edit HorizontalPodAutoscaler を選択し、 Horizontal Pod Autoscaler フォームを開きます。

- Edit Horizontal Pod Autoscaler フォームから、最小および最大の Pod 制限および CPU およびメモリー使用量を編集し、Save をクリックします。

Web コンソールで Horizontal Pod Autoscaler を作成または編集する際に、Form view から YAML viewに切り替えることができます。

Web コンソールで HPA を削除するには、以下を実行します。

- Topology ビューで、ノードをクリックし、サイドパネルを表示します。

- Actions ドロップダウンリストから、Remove HorizontalPodAutoscaler を選択します。

- 確認のポップアップウィンドウで、Remove をクリックして HPA を削除します。

2.4.3. CLI を使用した CPU 使用率向けの Horizontal Pod Autoscaler の作成

既存の Deployment、DeploymentConfig、ReplicaSet、ReplicationController 、または StatefulSet オブジェクトの水平 Pod オートスケーラー (HPA) を作成して、そのオブジェクトに関連付けられた Pod を自動的にスケーリングし、指定した CPU 使用率を維持できます。

HPA は、すべての Pod で指定された CPU 使用率を維持するために、最小数と最大数の間でレプリカ数を増減します。

CPU 使用率について自動スケーリングを行う際に、oc autoscale コマンドを使用し、実行する必要のある Pod の最小数および最大数と Pod がターゲットとして設定する必要のある平均 CPU 使用率を指定することができます。最小値を指定しない場合、Pod には OpenShift Container Platform サーバーからのデフォルト値が付与されます。特定の CPU 値について自動スケーリングを行うには、ターゲット CPU および Pod の制限のある HorizontalPodAutoscaler オブジェクトを作成します。

前提条件

Horizontal Pod Autoscaler を使用するには、クラスターの管理者はクラスターメトリクスを適切に設定している必要があります。メトリクスが設定されているかどうかは、oc describe PodMetrics <pod-name> コマンドを使用して判断できます。メトリクスが設定されている場合、出力は以下の Usage の下にある Cpu と Memory のように表示されます。

$ oc describe PodMetrics openshift-kube-scheduler-ip-10-0-135-131.ec2.internal出力例

Name: openshift-kube-scheduler-ip-10-0-135-131.ec2.internal

Namespace: openshift-kube-scheduler

Labels: <none>

Annotations: <none>

API Version: metrics.k8s.io/v1beta1

Containers:

Name: wait-for-host-port

Usage:

Memory: 0

Name: scheduler

Usage:

Cpu: 8m

Memory: 45440Ki

Kind: PodMetrics

Metadata:

Creation Timestamp: 2019-05-23T18:47:56Z

Self Link: /apis/metrics.k8s.io/v1beta1/namespaces/openshift-kube-scheduler/pods/openshift-kube-scheduler-ip-10-0-135-131.ec2.internal

Timestamp: 2019-05-23T18:47:56Z

Window: 1m0s

Events: <none>手順

CPU 使用率のための Horizontal Pod Autoscaler を作成するには、以下を実行します。

以下のいずれかを実行します。

CPU 使用率のパーセントに基づいてスケーリングするには、既存のオブジェクトとして

HorizontalPodAutoscalerオブジェクトを作成します。$ oc autoscale <object_type>/<name> \1 --min <number> \2 --max <number> \3 --cpu-percent=<percent>4 - 1

- 自動スケーリングするオブジェクトのタイプと名前を指定します。オブジェクトが存在し、

Deployment、DeploymentConfig/dc、ReplicaSet/rs、ReplicationController/rc、またはStatefulSetである必要があります。 - 2

- オプションで、スケールダウン時のレプリカの最小数を指定します。

- 3

- スケールアップ時のレプリカの最大数を指定します。

- 4

- 要求された CPU のパーセントで表示された、すべての Pod に対する目標の平均 CPU 使用率を指定します。指定しない場合または負の値の場合、デフォルトの自動スケーリングポリシーが使用されます。

たとえば、次のコマンドが示すように、

image-registryDeploymentConfigオブジェクトの自動スケーリング。最初のデプロイメントでは 3 つの Pod が必要です。HPA オブジェクトは最小で 5 まで増加され、Pod 上の CPU 使用率が 75% に達すると、Pod を最大 7 まで増やします。$ oc autoscale dc/image-registry --min=5 --max=7 --cpu-percent=75特定の CPU 値に合わせてスケーリングするには、既存のオブジェクトに対して次のような YAML ファイルを作成します。

以下のような YAML ファイルを作成します。

apiVersion: autoscaling/v2beta21 kind: HorizontalPodAutoscaler metadata: name: cpu-autoscale2 namespace: default spec: scaleTargetRef: apiVersion: apps/v13 kind: ReplicaSet4 name: example5 minReplicas: 16 maxReplicas: 107 metrics:8 - type: Resource resource: name: cpu9 target: type: AverageValue10 averageValue: 500m11 - 1

autoscaling/v2beta2API を使用します。- 2

- この Horizontal Pod Autoscaler オブジェクトの名前を指定します。

- 3

- スケーリングするオブジェクトの API バージョンを指定します。

-

ReplicationControllerの場合は、v1を使用します。 -

DeploymentConfigの場合は、apps.openshift.io/v1を使用します。 -

Deployment、ReplicaSet、Statefulsetオブジェクトの場合は、apps/v1を使用します。

-

- 4

- オブジェクトのタイプを指定します。オブジェクトは、

Deployment、DeploymentConfig/dc、ReplicaSet/rs、ReplicationController/rc、またはStatefulSetである必要があります。 - 5

- スケーリングするオブジェクトの名前を指定します。オブジェクトが存在する必要があります。

- 6

- スケールダウン時のレプリカの最小数を指定します。

- 7

- スケールアップ時のレプリカの最大数を指定します。

- 8

- メモリー使用率に

metricsパラメーターを使用します。 - 9

- CPU 使用率に

cpuを指定します。 - 10

AverageValueに設定します。- 11

- ターゲットに設定された CPU 値で

averageValueに設定します。

Horizontal Pod Autoscaler を作成します。

$ oc create -f <file-name>.yaml

Horizontal Pod Autoscaler が作成されていることを確認します。

$ oc get hpa cpu-autoscale出力例

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE cpu-autoscale ReplicationController/example 173m/500m 1 10 1 20m

2.4.4. CLI を使用したメモリー使用率向けの Horizontal Pod Autoscaler オブジェクトの作成

直接の値または要求されるメモリーのパーセンテージのいずれかで指定する平均のメモリー使用率を維持するために、オブジェクトに関連付けられた Pod を自動的にスケーリングする既存の DeploymentConfig オブジェクトまたは ReplicationController オブジェクトの Horizontal Pod Autoscaler (HPA) を作成できます。

HPA は、すべての Pod で指定のメモリー使用率を維持するために、最小数と最大数の間のレプリカ数を増減します。

メモリー使用率については、Pod の最小数および最大数と、Pod がターゲットとする平均のメモリー使用率を指定することができます。最小値を指定しない場合、Pod には OpenShift Container Platform サーバーからのデフォルト値が付与されます。

前提条件

Horizontal Pod Autoscaler を使用するには、クラスターの管理者はクラスターメトリクスを適切に設定している必要があります。メトリクスが設定されているかどうかは、oc describe PodMetrics <pod-name> コマンドを使用して判断できます。メトリクスが設定されている場合、出力は以下の Usage の下にある Cpu と Memory のように表示されます。

$ oc describe PodMetrics openshift-kube-scheduler-ip-10-0-129-223.compute.internal -n openshift-kube-scheduler出力例

Name: openshift-kube-scheduler-ip-10-0-129-223.compute.internal

Namespace: openshift-kube-scheduler

Labels: <none>

Annotations: <none>

API Version: metrics.k8s.io/v1beta1

Containers:

Name: scheduler

Usage:

Cpu: 2m

Memory: 41056Ki

Name: wait-for-host-port

Usage:

Memory: 0

Kind: PodMetrics

Metadata:

Creation Timestamp: 2020-02-14T22:21:14Z

Self Link: /apis/metrics.k8s.io/v1beta1/namespaces/openshift-kube-scheduler/pods/openshift-kube-scheduler-ip-10-0-129-223.compute.internal

Timestamp: 2020-02-14T22:21:14Z

Window: 5m0s

Events: <none>手順

メモリー使用率の Horizontal Pod Autoscaler を作成するには、以下を実行します。

以下のいずれか 1 つを含む YAML ファイルを作成します。

特定のメモリー値に合わせてスケーリングするには、既存の

ReplicationControllerオブジェクトまたはレプリケーションコントローラーに対して、次のようなHorizontalPodAutoscalerオブジェクトを作成します。出力例

apiVersion: autoscaling/v2beta21 kind: HorizontalPodAutoscaler metadata: name: hpa-resource-metrics-memory2 namespace: default spec: scaleTargetRef: apiVersion: v13 kind: ReplicationController4 name: example5 minReplicas: 16 maxReplicas: 107 metrics:8 - type: Resource resource: name: memory9 target: type: AverageValue10 averageValue: 500Mi11 behavior:12 scaleDown: stabilizationWindowSeconds: 300 policies: - type: Pods value: 4 periodSeconds: 60 - type: Percent value: 10 periodSeconds: 60 selectPolicy: Max- 1

autoscaling/v2beta2API を使用します。- 2

- この Horizontal Pod Autoscaler オブジェクトの名前を指定します。

- 3

- スケーリングするオブジェクトの API バージョンを指定します。

-

レプリケーションコントローラーについては、

v1を使用します。 -

DeploymentConfigオブジェクトについては、apps.openshift.io/v1を使用します。

-

レプリケーションコントローラーについては、

- 4

- スケーリングするオブジェクトの種類 (

ReplicationControllerまたはDeploymentConfigのいずれか) を指定します。 - 5

- スケーリングするオブジェクトの名前を指定します。オブジェクトが存在する必要があります。

- 6

- スケールダウン時のレプリカの最小数を指定します。

- 7

- スケールアップ時のレプリカの最大数を指定します。

- 8

- メモリー使用率に

metricsパラメーターを使用します。 - 9

- メモリー使用率の

memoryを指定します。 - 10

- タイプを

AverageValueに設定します。 - 11

averageValueおよび特定のメモリー値を指定します。- 12

- オプション: スケールアップまたはスケールダウンのレートを制御するスケーリングポリシーを指定します。

パーセンテージについてスケーリングするには、以下のように

HorizontalPodAutoscalerオブジェクトを作成します。出力例

apiVersion: autoscaling/v2beta21 kind: HorizontalPodAutoscaler metadata: name: memory-autoscale2 namespace: default spec: scaleTargetRef: apiVersion: apps.openshift.io/v13 kind: DeploymentConfig4 name: example5 minReplicas: 16 maxReplicas: 107 metrics:8 - type: Resource resource: name: memory9 target: type: Utilization10 averageUtilization: 5011 behavior:12 scaleUp: stabilizationWindowSeconds: 180 policies: - type: Pods value: 6 periodSeconds: 120 - type: Percent value: 10 periodSeconds: 120 selectPolicy: Max- 1

autoscaling/v2beta2API を使用します。- 2

- この Horizontal Pod Autoscaler オブジェクトの名前を指定します。

- 3

- スケーリングするオブジェクトの API バージョンを指定します。

-

レプリケーションコントローラーについては、

v1を使用します。 -

DeploymentConfigオブジェクトについては、apps.openshift.io/v1を使用します。

-

レプリケーションコントローラーについては、

- 4

- スケーリングするオブジェクトの種類 (

ReplicationControllerまたはDeploymentConfigのいずれか) を指定します。 - 5

- スケーリングするオブジェクトの名前を指定します。オブジェクトが存在する必要があります。

- 6

- スケールダウン時のレプリカの最小数を指定します。

- 7

- スケールアップ時のレプリカの最大数を指定します。

- 8

- メモリー使用率に

metricsパラメーターを使用します。 - 9

- メモリー使用率の

memoryを指定します。 - 10

Utilizationに設定します。- 11

averageUtilizationおよび ターゲットに設定する平均メモリー使用率をすべての Pod に対して指定します (要求されるメモリーのパーセントで表す)。ターゲット Pod にはメモリー要求が設定されている必要があります。- 12

- オプション: スケールアップまたはスケールダウンのレートを制御するスケーリングポリシーを指定します。

Horizontal Pod Autoscaler を作成します。

$ oc create -f <file-name>.yaml以下に例を示します。

$ oc create -f hpa.yaml出力例

horizontalpodautoscaler.autoscaling/hpa-resource-metrics-memory createdHorizontal Pod Autoscaler が作成されていることを確認します。

$ oc get hpa hpa-resource-metrics-memory出力例

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE hpa-resource-metrics-memory ReplicationController/example 2441216/500Mi 1 10 1 20m$ oc describe hpa hpa-resource-metrics-memory出力例

Name: hpa-resource-metrics-memory Namespace: default Labels: <none> Annotations: <none> CreationTimestamp: Wed, 04 Mar 2020 16:31:37 +0530 Reference: ReplicationController/example Metrics: ( current / target ) resource memory on pods: 2441216 / 500Mi Min replicas: 1 Max replicas: 10 ReplicationController pods: 1 current / 1 desired Conditions: Type Status Reason Message ---- ------ ------ ------- AbleToScale True ReadyForNewScale recommended size matches current size ScalingActive True ValidMetricFound the HPA was able to successfully calculate a replica count from memory resource ScalingLimited False DesiredWithinRange the desired count is within the acceptable range Events: Type Reason Age From Message ---- ------ ---- ---- ------- Normal SuccessfulRescale 6m34s horizontal-pod-autoscaler New size: 1; reason: All metrics below target

2.4.5. CLI を使用した Horizontal Pod Autoscaler の状態条件について

状態条件セットを使用して、Horizontal Pod Autoscaler (HPA) がスケーリングできるかどうかや、現時点でこれがいずれかの方法で制限されているかどうかを判別できます。

HPA の状態条件は、自動スケーリング API の v2beta1 バージョンで利用できます。

HPA は、以下の状態条件で応答します。

AbleToScale条件では、HPA がメトリクスを取得して更新できるか、またバックオフ関連の条件によりスケーリングが回避されるかどうかを指定します。-

True条件はスケーリングが許可されることを示します。 -

False条件は指定される理由によりスケーリングが許可されないことを示します。

-

ScalingActive条件は、HPA が有効にされており (ターゲットのレプリカ数がゼロでない)、必要なメトリクスを計算できるかどうかを示します。-

True条件はメトリクスが適切に機能していることを示します。 -

False条件は通常フェッチするメトリクスに関する問題を示します。

-

ScalingLimited条件は、必要とするスケールが Horizontal Pod Autoscaler の最大値または最小値によって制限されていたことを示します。-

True条件は、スケーリングするためにレプリカの最小または最大数を引き上げるか、または引き下げる必要があることを示します。 False条件は、要求されたスケーリングが許可されることを示します。$ oc describe hpa cm-test出力例

Name: cm-test Namespace: prom Labels: <none> Annotations: <none> CreationTimestamp: Fri, 16 Jun 2017 18:09:22 +0000 Reference: ReplicationController/cm-test Metrics: ( current / target ) "http_requests" on pods: 66m / 500m Min replicas: 1 Max replicas: 4 ReplicationController pods: 1 current / 1 desired Conditions:1 Type Status Reason Message ---- ------ ------ ------- AbleToScale True ReadyForNewScale the last scale time was sufficiently old as to warrant a new scale ScalingActive True ValidMetricFound the HPA was able to successfully calculate a replica count from pods metric http_request ScalingLimited False DesiredWithinRange the desired replica count is within the acceptable range Events:- 1

- Horizontal Pod Autoscaler の状況メッセージです。

-

以下は、スケーリングできない Pod の例です。

出力例

Conditions:

Type Status Reason Message

---- ------ ------ -------

AbleToScale False FailedGetScale the HPA controller was unable to get the target's current scale: no matches for kind "ReplicationController" in group "apps"

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedGetScale 6s (x3 over 36s) horizontal-pod-autoscaler no matches for kind "ReplicationController" in group "apps"以下は、スケーリングに必要なメトリクスを取得できなかった Pod の例です。

出力例

Conditions:

Type Status Reason Message

---- ------ ------ -------

AbleToScale True SucceededGetScale the HPA controller was able to get the target's current scale

ScalingActive False FailedGetResourceMetric the HPA was unable to compute the replica count: failed to get cpu utilization: unable to get metrics for resource cpu: no metrics returned from resource metrics API以下は、要求される自動スケーリングが要求される最小数よりも小さい場合の Pod の例です。

出力例

Conditions:

Type Status Reason Message

---- ------ ------ -------

AbleToScale True ReadyForNewScale the last scale time was sufficiently old as to warrant a new scale

ScalingActive True ValidMetricFound the HPA was able to successfully calculate a replica count from pods metric http_request

ScalingLimited False DesiredWithinRange the desired replica count is within the acceptable range2.4.5.1. CLI を使用した Horizontal Pod Autoscaler の状態条件の表示

Pod に設定された状態条件は、Horizontal Pod Autoscaler (HPA) で表示することができます。

Horizontal Pod Autoscaler の状態条件は、自動スケーリング API の v2beta1 バージョンで利用できます。

前提条件

Horizontal Pod Autoscaler を使用するには、クラスターの管理者はクラスターメトリクスを適切に設定している必要があります。メトリクスが設定されているかどうかは、oc describe PodMetrics <pod-name> コマンドを使用して判断できます。メトリクスが設定されている場合、出力は以下の Usage の下にある Cpu と Memory のように表示されます。

$ oc describe PodMetrics openshift-kube-scheduler-ip-10-0-135-131.ec2.internal出力例

Name: openshift-kube-scheduler-ip-10-0-135-131.ec2.internal

Namespace: openshift-kube-scheduler

Labels: <none>

Annotations: <none>

API Version: metrics.k8s.io/v1beta1

Containers:

Name: wait-for-host-port

Usage:

Memory: 0

Name: scheduler

Usage:

Cpu: 8m

Memory: 45440Ki

Kind: PodMetrics

Metadata:

Creation Timestamp: 2019-05-23T18:47:56Z

Self Link: /apis/metrics.k8s.io/v1beta1/namespaces/openshift-kube-scheduler/pods/openshift-kube-scheduler-ip-10-0-135-131.ec2.internal

Timestamp: 2019-05-23T18:47:56Z

Window: 1m0s

Events: <none>手順

Pod の状態条件を表示するには、Pod の名前と共に以下のコマンドを使用します。

$ oc describe hpa <pod-name>以下に例を示します。

$ oc describe hpa cm-test

条件は、出力の Conditions フィールドに表示されます。

出力例

Name: cm-test

Namespace: prom

Labels: <none>

Annotations: <none>

CreationTimestamp: Fri, 16 Jun 2017 18:09:22 +0000

Reference: ReplicationController/cm-test

Metrics: ( current / target )

"http_requests" on pods: 66m / 500m

Min replicas: 1

Max replicas: 4

ReplicationController pods: 1 current / 1 desired

Conditions:

Type Status Reason Message

---- ------ ------ -------

AbleToScale True ReadyForNewScale the last scale time was sufficiently old as to warrant a new scale

ScalingActive True ValidMetricFound the HPA was able to successfully calculate a replica count from pods metric http_request

ScalingLimited False DesiredWithinRange the desired replica count is within the acceptable range2.5. Vertical Pod Autoscaler を使用した Pod リソースレベルの自動調整

OpenShift Container Platform の Vertical Pod Autoscaler Operator (VPA) は、Pod 内のコンテナーの履歴および現在の CPU とメモリーリソースを自動的に確認し、把握する使用値に基づいてリソース制限および要求を更新できます。VPA は個別のカスタムリソース (CR) を使用して、プロジェクトの Deployment、Deployment Config、StatefulSet、Job、DaemonSet、ReplicaSet、または ReplicationController などのワークロードオブジェクトに関連付けられたすべての Pod を更新します。

VPA は、Pod に最適な CPU およびメモリーの使用状況を理解するのに役立ち、Pod のライフサイクルを通じて Pod のリソースを自動的に維持します。

Vertical Pod Autoscaler はテクノロジープレビュー機能としてのみご利用いただけます。テクノロジープレビュー機能は Red Hat の実稼働環境でのサービスレベルアグリーメント (SLA) ではサポートされていないため、Red Hat では実稼働環境での使用を推奨していません。Red Hat は実稼働環境でこれらを使用することを推奨していません。テクノロジープレビューの機能は、最新の製品機能をいち早く提供して、開発段階で機能のテストを行いフィードバックを提供していただくことを目的としています。

Red Hat のテクノロジープレビュー機能のサポート範囲についての詳細は、テクノロジープレビュー機能のサポート範囲 を参照してください。

2.5.1. Vertical Pod Autoscaler Operator について

Vertical Pod Autoscaler Operator (VPA) は、API リソースおよびカスタムリソース (CR) として実装されます。CR は、プロジェクトのデーモンセット、レプリケーションコントローラーなどの特定のワークロードオブジェクトに関連付けられた Pod について Vertical Pod Autoscaler Operator が取るべき動作を判別します。

VPA は、それらの Pod 内のコンテナーの履歴および現在の CPU とメモリーの使用状況を自動的に計算し、このデータを使用して、最適化されたリソース制限および要求を判別し、これらの Pod が常時効率的に動作していることを確認することができます。たとえば、VPA は使用している量よりも多くのリソースを要求する Pod のリソースを減らし、十分なリソースを要求していない Pod のリソースを増やします。

VPA は、一度に 1 つずつ推奨値で調整されていない Pod を自動的に削除するため、アプリケーションはダウンタイムなしに継続して要求を提供できます。ワークロードオブジェクトは、元のリソース制限および要求で Pod を再デプロイします。VPA は変更用の受付 Webhook を使用して、Pod がノードに許可される前に最適化されたリソース制限および要求で Pod を更新します。VPA が Pod を削除する必要がない場合は、VPA リソース制限および要求を表示し、必要に応じて Pod を手動で更新できます。

たとえば、CPU の 50% を使用する Pod が 10% しか要求しない場合、VPA は Pod が要求よりも多くの CPU を消費すると判別してその Pod を削除します。レプリカセットなどのワークロードオブジェクトは Pod を再起動し、VPA は推奨リソースで新しい Pod を更新します。

開発者の場合、VPA を使用して、Pod を各 Pod に適したリソースを持つノードにスケジュールし、Pod の需要の多い期間でも稼働状態を維持することができます。

管理者は、VPA を使用してクラスターリソースをより適切に活用できます。たとえば、必要以上の CPU リソースを Pod が予約できないようにします。VPA は、ワークロードが実際に使用しているリソースをモニターし、他のワークロードで容量を使用できるようにリソース要件を調整します。VPA は、初期のコンテナー設定で指定される制限と要求の割合をそのまま維持します。

VPA の実行を停止するか、またはクラスターの特定の VPA CR を削除する場合、VPA によってすでに変更された Pod のリソース要求は変更されません。新規 Pod は、VPA による以前の推奨事項ではなく、ワークロードオブジェクトで定義されたリソースを取得します。

2.5.2. Vertical Pod Autoscaler Operator のインストール

OpenShift Container Platform Web コンソールを使って Vertical Pod Autoscaler Operator (VPA) をインストールすることができます。

手順

- OpenShift Container Platform Web コンソールで、Operators → OperatorHub をクリックします。

- 利用可能な Operator の一覧から VerticalPodAutoscaler を選択し、Install をクリックします。

-

Install Operator ページで、Operator recommended namespace オプションが選択されていることを確認します。これにより、Operator が必須の

openshift-vertical-pod-autoscalernamespace にインストールされます。この namespace は存在しない場合は、自動的に作成されます。 - Install をクリックします。

VPA Operator コンポーネントを一覧表示して、インストールを確認します。

- Workloads → Pods に移動します。

-

ドロップダウンメニューから

openshift-vertical-pod-autoscalerプロジェクトを選択し、4 つの Pod が実行されていることを確認します。 - Workloads → Deploymentsに移動し、4 つの デプロイメントが実行されていることを確認します。

オプション:以下のコマンドを使用して、OpenShift Container Platform CLI でインストールを確認します。

$ oc get all -n openshift-vertical-pod-autoscaler出力には、4 つの Pod と 4 つのデプロイメントが表示されます。

出力例

NAME READY STATUS RESTARTS AGE pod/vertical-pod-autoscaler-operator-85b4569c47-2gmhc 1/1 Running 0 3m13s pod/vpa-admission-plugin-default-67644fc87f-xq7k9 1/1 Running 0 2m56s pod/vpa-recommender-default-7c54764b59-8gckt 1/1 Running 0 2m56s pod/vpa-updater-default-7f6cc87858-47vw9 1/1 Running 0 2m56s NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE service/vpa-webhook ClusterIP 172.30.53.206 <none> 443/TCP 2m56s NAME READY UP-TO-DATE AVAILABLE AGE deployment.apps/vertical-pod-autoscaler-operator 1/1 1 1 3m13s deployment.apps/vpa-admission-plugin-default 1/1 1 1 2m56s deployment.apps/vpa-recommender-default 1/1 1 1 2m56s deployment.apps/vpa-updater-default 1/1 1 1 2m56s NAME DESIRED CURRENT READY AGE replicaset.apps/vertical-pod-autoscaler-operator-85b4569c47 1 1 1 3m13s replicaset.apps/vpa-admission-plugin-default-67644fc87f 1 1 1 2m56s replicaset.apps/vpa-recommender-default-7c54764b59 1 1 1 2m56s replicaset.apps/vpa-updater-default-7f6cc87858 1 1 1 2m56s

2.5.3. Vertical Pod Autoscaler Operator の使用について

Vertical Pod Autoscaler Operator (VPA) を使用するには、クラスター内にワークロードオブジェクトの VPA カスタムリソース (CR) を作成します。VPA は、そのワークロードオブジェクトに関連付けられた Pod に最適な CPU およびメモリーリソースを確認し、適用します。VPA は、デプロイメント、ステートフルセット、ジョブ、デーモンセット、レプリカセット、またはレプリケーションコントローラーのワークロードオブジェクトと共に使用できます。VPA CR はモニターする必要のある Pod と同じプロジェクトになければなりません。

VPA CR を使用してワークロードオブジェクトを関連付け、VPA が動作するモードを指定します。

-

AutoおよびRecreateモードは、Pod の有効期間中は VPA CPU およびメモリーの推奨事項を自動的に適用します。VPA は、推奨値で調整されていないプロジェクトの Pod を削除します。ワークロードオブジェクトによって再デプロイされる場合、VPA はその推奨内容で新規 Pod を更新します。 -

Initialモードは、Pod の作成時にのみ VPA の推奨事項を自動的に適用します。 -

Offモードは、推奨されるリソース制限および要求のみを提供するので、推奨事項を手動で適用することができます。offモードは Pod を更新しません。

CR を使用して、VPA 評価および更新から特定のコンテナーをオプトアウトすることもできます。

たとえば、Pod には以下の制限および要求があります。

resources:

limits:

cpu: 1

memory: 500Mi

requests:

cpu: 500m

memory: 100Mi

auto に設定された VPA を作成すると、VPA はリソースの使用状況を確認して Pod を削除します。再デプロイ時に、Pod は新規のリソース制限および要求を使用します。

resources:

limits:

cpu: 50m

memory: 1250Mi

requests:

cpu: 25m

memory: 262144k以下のコマンドを実行して、VPA の推奨事項を表示できます。

$ oc get vpa <vpa-name> --output yaml数分後に、出力には、以下のような CPU およびメモリー要求の推奨内容が表示されます。

出力例

...

status:

...

recommendation:

containerRecommendations:

- containerName: frontend

lowerBound:

cpu: 25m

memory: 262144k

target:

cpu: 25m

memory: 262144k

uncappedTarget:

cpu: 25m

memory: 262144k

upperBound:

cpu: 262m

memory: "274357142"

- containerName: backend

lowerBound:

cpu: 12m

memory: 131072k

target:

cpu: 12m

memory: 131072k

uncappedTarget:

cpu: 12m

memory: 131072k

upperBound:

cpu: 476m

memory: "498558823"

...

出力には、target (推奨リソース)、lowerBound (最小推奨リソース)、upperBound (最大推奨リソース)、および uncappedTarget (最新の推奨リソース) が表示されます。

VPA は lowerBound および upperBound の値を使用して、Pod の更新が必要であるかどうかを判別します。Pod のリソース要求が lowerBound 値を下回るか、upperBound 値を上回る場合は、VPA は終了し、target 値で Pod を再作成します。

2.5.3.1. VPA の推奨事項の自動適用

VPA を使用して Pod を自動的に更新するには、updateMode が Auto または Recreate に設定された特定のワークロードオブジェクトの VPA CR を作成します。

Pod がワークロードオブジェクト用に作成されると、VPA はコンテナーを継続的にモニターして、CPU およびメモリーのニーズを分析します。VPA は、CPU およびメモリーについての VPA の推奨値を満たさない Pod を削除します。再デプロイ時に、Pod は VPA の推奨値に基づいて新規のリソース制限および要求を使用し、アプリケーションに設定された Pod の Disruption Budget (停止状態の予算) を反映します。この推奨事項は、参照用に VPA CR の status フィールドに追加されます。

ワークロードオブジェクトは、VPA が Pod を監視し、更新できるようにレプリカを 2 つ以上指定する必要があります。ワークロードオブジェクトが 1 つのレプリカを指定する場合、VPA はアプリケーションのダウンタイムを防ぐために Pod を削除しません。Pod を手動で削除し、推奨リソースを使用することができます。

Auto モードの VPA CR の例

apiVersion: autoscaling.k8s.io/v1

kind: VerticalPodAutoscaler

metadata:

name: vpa-recommender

spec:

targetRef:

apiVersion: "apps/v1"

kind: Deployment

name: frontend

updatePolicy:

updateMode: "Auto" - 1 1

- この VPA CR が管理するワークロードオブジェクトのタイプ。

- 2

- この VPA CR が管理するワークロードオブジェクトの名前。

- 3

- モードを

AutoまたはRecreateに設定します。-

Auto:VPA は、Pod の作成時にリソース要求を割り当て、要求されるリソースが新規の推奨事項と大きく異なる場合に、それらを終了して既存の Pod を更新します。 -

Recreate:VPA は、Pod の作成時にリソース要求を割り当て、要求されるリソースが新規の推奨事項と大きく異なる場合に、それらを終了して既存の Pod を更新します。このモードはほとんど使用されることはありません。リソース要求が変更される際に Pod が再起動されていることを確認する必要がある場合にのみ使用します。

-

VPA が推奨リソースを判別し、新規 Pod に推奨事項を割り当てる前に、プロジェクトに動作中の Pod がなければなりません。

2.5.3.2. Pod 作成時における VPA 推奨の自動適用

VPA を使用して、Pod が最初にデプロイされる場合にのみ推奨リソースを適用するには、updateMode が Initial に設定された特定のワークロードオブジェクトの VPA CR を作成します。

次に、VPA の推奨値を使用する必要のあるワークロードオブジェクトに関連付けられた Pod を手動で削除します。Initial モードで、VPA は新しいリソースの推奨内容を確認する際に Pod を削除したり、更新したりしません。

Initial モードの VPA CR の例

apiVersion: autoscaling.k8s.io/v1

kind: VerticalPodAutoscaler

metadata:

name: vpa-recommender

spec:

targetRef:

apiVersion: "apps/v1"

kind: Deployment

name: frontend

updatePolicy:

updateMode: "Initial" VPA が推奨リソースを判別し、新規 Pod に推奨事項を割り当てる前に、プロジェクトに動作中の Pod がなければなりません。

2.5.3.3. VPA の推奨事項の手動適用

CPU およびメモリーの推奨値を判別するためだけに VPA を使用するには、updateMode を off に設定した特定のワークロードオブジェクトの VPA CR を作成します。

Pod がワークロードオブジェクト用に作成されると、VPA はコンテナーの CPU およびメモリーのニーズを分析し、VPA CR の status フィールドにそれらの推奨事項を記録します。VPA は、新しい推奨リソースを判別する際に Pod を更新しません。

Off モードの VPA CR の例

apiVersion: autoscaling.k8s.io/v1

kind: VerticalPodAutoscaler

metadata:

name: vpa-recommender

spec:

targetRef:

apiVersion: "apps/v1"

kind: Deployment

name: frontend

updatePolicy:

updateMode: "Off" 以下のコマンドを使用して、推奨事項を表示できます。

$ oc get vpa <vpa-name> --output yamlこの推奨事項により、ワークロードオブジェクトを編集して CPU およびメモリー要求を追加し、推奨リソースを使用して Pod を削除および再デプロイできます。

VPA が推奨リソースを判別する前に、プロジェクトに動作中の Pod がなければなりません。

2.5.3.4. VPA の推奨事項をすべてのコンテナーに適用しないようにする

ワークロードオブジェクトに複数のコンテナーがあり、VPA がすべてのコンテナーを評価および実行対象としないようにするには、特定のワークロードオブジェクトの VPA CR を作成し、resourcePolicy を追加して特定のコンテナーをオプトアウトします。

VPA が推奨リソースで Pod を更新すると、resourcePolicy が設定されたコンテナーは更新されず、VPA は Pod 内のそれらのコンテナーの推奨事項を提示しません。

apiVersion: autoscaling.k8s.io/v1

kind: VerticalPodAutoscaler

metadata:

name: vpa-recommender

spec:

targetRef:

apiVersion: "apps/v1"

kind: Deployment

name: frontend

updatePolicy:

updateMode: "Auto"

resourcePolicy:

containerPolicies:

- containerName: my-opt-sidecar

mode: "Off"たとえば、Pod には同じリソース要求および制限の 2 つのコンテナーがあります。

# ...

spec:

containers:

- name: frontend

resources:

limits:

cpu: 1

memory: 500Mi

requests:

cpu: 500m

memory: 100Mi

- name: backend

resources:

limits:

cpu: "1"

memory: 500Mi

requests:

cpu: 500m

memory: 100Mi

# ...

backend コンテナーがオプトアウトに設定された VPA CR を起動した後、VPA は Pod を終了し、frontend コンテナーのみに適用される推奨リソースで Pod を再作成します。

...

spec:

containers:

name: frontend

resources:

limits:

cpu: 50m

memory: 1250Mi

requests:

cpu: 25m

memory: 262144k

...

name: backend

resources:

limits:

cpu: "1"

memory: 500Mi

requests:

cpu: 500m

memory: 100Mi

...2.5.4. Vertical Pod Autoscaler Operator の使用

VPA カスタムリソース (CR) を作成して、Vertical Pod Autoscaler Operator (VPA) を使用できます。CR は、分析すべき Pod を示し、VPA がそれらの Pod について実行するアクションを判別します。

手順

特定のワークロードオブジェクトの VPA CR を作成するには、以下を実行します。

スケーリングするワークロードオブジェクトがあるプロジェクトに切り替えます。

VPA CR YAML ファイルを作成します。

apiVersion: autoscaling.k8s.io/v1 kind: VerticalPodAutoscaler metadata: name: vpa-recommender spec: targetRef: apiVersion: "apps/v1" kind: Deployment1 name: frontend2 updatePolicy: updateMode: "Auto"3 resourcePolicy:4 containerPolicies: - containerName: my-opt-sidecar mode: "Off"- 1

- この VPA が管理するワークロードオブジェクトのタイプ (

Deployment、StatefulSet、Job、DaemonSet、ReplicaSet、またはReplicationController) を指定します。 - 2

- この VPA が管理する既存のワークロードオブジェクトの名前を指定します。

- 3

- VPA モードを指定します。

-

autoは、コントローラーに関連付けられた Pod に推奨リソースを自動的に適用します。VPA は既存の Pod を終了し、推奨されるリソース制限および要求で新規 Pod を作成します。 -

recreateは、ワークロードオブジェクトに関連付けられた Pod に推奨リソースを自動的に適用します。VPA は既存の Pod を終了し、推奨されるリソース制限および要求で新規 Pod を作成します。recreateモードはほとんど使用されることはありません。リソース要求が変更される際に Pod が再起動されていることを確認する必要がある場合にのみ使用します。 -

initialは、ワークロードオブジェクトに関連付けられた Pod が作成される際に、推奨リソースを自動的に適用します。VPA は、新しい推奨リソースを確認する際に Pod を更新しません。 -

offは、ワークロードオブジェクトに関連付けられた Pod の推奨リソースのみを生成します。VPA は、新しい推奨リソースを確認する際に Pod を更新しません。また、新規 Pod に推奨事項を適用しません。

-

- 4

- オプション:オプトアウトするコンテナーを指定し、モードを

Offに設定します。

VPA CR を作成します。

$ oc create -f <file-name>.yamlしばらくすると、VPA はワークロードオブジェクトに関連付けられた Pod 内のコンテナーのリソース使用状況を確認します。

以下のコマンドを実行して、VPA の推奨事項を表示できます。

$ oc get vpa <vpa-name> --output yaml出力には、以下のような CPU およびメモリー要求の推奨事項が表示されます。

出力例

... status: ... recommendation: containerRecommendations: - containerName: frontend lowerBound:1 cpu: 25m memory: 262144k target:2 cpu: 25m memory: 262144k uncappedTarget:3 cpu: 25m memory: 262144k upperBound:4 cpu: 262m memory: "274357142" - containerName: backend lowerBound: cpu: 12m memory: 131072k target: cpu: 12m memory: 131072k uncappedTarget: cpu: 12m memory: 131072k upperBound: cpu: 476m memory: "498558823" ...

2.5.5. Vertical Pod Autoscaler Operator のアンインストール

Vertical Pod Autoscaler Operator (VPA) を OpenShift Container Platform クラスターから削除できます。アンインストール後、既存の VPA CR によってすでに変更された Pod のリソース要求は変更されません。新規 Pod は、Vertical Pod Autoscaler Operator による以前の推奨事項ではなく、ワークロードオブジェクトで定義されるリソースを取得します。

oc delete vpa <vpa-name> コマンドを使用して、特定の VPA を削除できます。Vertical Pod Autoscaler のアンインストール時と同じアクションがリソース要求に対して適用されます。

VPA Operator を削除した後、潜在的な問題を回避するために、Operator に関連する他のコンポーネントを削除することをお勧めします。

前提条件

- Vertical Pod Autoscaler Operator がインストールされていること。

手順

- OpenShift Container Platform Web コンソールで、Operators → Installed Operators をクリックします。

- openshift-vertical-pod-autoscaler プロジェクトに切り替えます。

- VerticalPodAutoscaler Operator を検索し、Options メニューをクリックします。Uninstall Operator を選択します。

- ダイアログボックスで、Uninstall をクリックします。

- オプション: 演算子に関連付けられているすべてのオペランドを削除するには、ダイアログボックスで、Delete all operand instances for this operatorチェックボックスをオンにします。

- Uninstall をクリックします。

オプション: OpenShift CLI を使用して VPA コンポーネントを削除します。

VPA の変更用 Webhook 設定を削除します。

$ oc delete mutatingwebhookconfigurations/vpa-webhook-configVPA カスタムリソースを一覧表示します。

$ oc get verticalpodautoscalercheckpoints.autoscaling.k8s.io,verticalpodautoscalercontrollers.autoscaling.openshift.io,verticalpodautoscalers.autoscaling.k8s.io -o wide --all-namespaces出力例

NAMESPACE NAME AGE my-project verticalpodautoscalercheckpoint.autoscaling.k8s.io/vpa-recommender-httpd 5m46s NAMESPACE NAME AGE openshift-vertical-pod-autoscaler verticalpodautoscalercontroller.autoscaling.openshift.io/default 11m NAMESPACE NAME MODE CPU MEM PROVIDED AGE my-project verticalpodautoscaler.autoscaling.k8s.io/vpa-recommender Auto 93m 262144k True 9m15s一覧表示された VPA カスタムリソースを削除します。以下に例を示します。

$ oc delete verticalpodautoscalercheckpoint.autoscaling.k8s.io/vpa-recommender-httpd -n my-project$ oc delete verticalpodautoscalercontroller.autoscaling.openshift.io/default -n openshift-vertical-pod-autoscaler$ oc delete verticalpodautoscaler.autoscaling.k8s.io/vpa-recommender -n my-projectVPA カスタムリソース定義 (CRD) を一覧表示します。

$ oc get crd出力例

NAME CREATED AT ... verticalpodautoscalercheckpoints.autoscaling.k8s.io 2022-02-07T14:09:20Z verticalpodautoscalercontrollers.autoscaling.openshift.io 2022-02-07T14:09:20Z verticalpodautoscalers.autoscaling.k8s.io 2022-02-07T14:09:20Z ...一覧表示された VPA CRD を削除します。

$ oc delete crd verticalpodautoscalercheckpoints.autoscaling.k8s.io verticalpodautoscalercontrollers.autoscaling.openshift.io verticalpodautoscalers.autoscaling.k8s.ioCRD を削除すると、関連付けられたロール、クラスターロール、およびロールバインディングが削除されます。ただし、手動で削除する必要のあるクラスターロールがいくつかあります。

VPA クラスターロールを一覧表示します。

$ oc get clusterrole | grep openshift-vertical-pod-autoscaler出力例

openshift-vertical-pod-autoscaler-6896f-admin 2022-02-02T15:29:55Z openshift-vertical-pod-autoscaler-6896f-edit 2022-02-02T15:29:55Z openshift-vertical-pod-autoscaler-6896f-view 2022-02-02T15:29:55Z一覧表示された VPA クラスターロールを削除します。以下に例を示します。

$ oc delete clusterrole openshift-vertical-pod-autoscaler-6896f-admin openshift-vertical-pod-autoscaler-6896f-edit openshift-vertical-pod-autoscaler-6896f-viewVPA Operator を削除します。

$ oc delete operator/vertical-pod-autoscaler.openshift-vertical-pod-autoscaler

2.6. Pod への機密性の高いデータの提供

アプリケーションによっては、パスワードやユーザー名など開発者に使用させない秘密情報が必要になります。

管理者として シークレット オブジェクトを使用すると、この情報を平文で公開することなく提供することが可能です。

2.6.1. シークレットについて

Secret オブジェクトタイプはパスワード、OpenShift Container Platform クライアント設定ファイル、プライベートソースリポジトリーの認証情報などの機密情報を保持するメカニズムを提供します。シークレットは機密内容を Pod から切り離します。シークレットはボリュームプラグインを使用してコンテナーにマウントすることも、システムが Pod の代わりにシークレットを使用して各種アクションを実行することもできます。

キーのプロパティーには以下が含まれます。

- シークレットデータはその定義とは別に参照できます。

- シークレットデータのボリュームは一時ファイルストレージ機能 (tmpfs) でサポートされ、ノードで保存されることはありません。

- シークレットデータは namespace 内で共有できます。

YAML Secret オブジェクト定義

apiVersion: v1

kind: Secret

metadata:

name: test-secret

namespace: my-namespace

type: Opaque

data:

username: dmFsdWUtMQ0K

password: dmFsdWUtMg0KDQo=

stringData:

hostname: myapp.mydomain.com シークレットに依存する Pod を作成する前に、シークレットを作成する必要があります。

シークレットの作成時に以下を実行します。

- シークレットデータでシークレットオブジェクトを作成します。

- Pod のサービスアカウントをシークレットの参照を許可するように更新します。

-

シークレットを環境変数またはファイルとして使用する Pod を作成します (

secretボリュームを使用)。

2.6.1.1. シークレットの種類

type フィールドの値で、シークレットのキー名と値の構造を指定します。このタイプを使用して、シークレットオブジェクトにユーザー名とキーの配置を実行できます。検証の必要がない場合には、デフォルト設定の opaque タイプを使用してください。

以下のタイプから 1 つ指定して、サーバー側で最小限の検証をトリガーし、シークレットデータに固有のキー名が存在することを確認します。

-

kubernetes.io/service-account-token。サービスアカウントトークンを使用します。 -

kubernetes.io/basic-auth。Basic 認証で使用します。 -

kubernetes.io/ssh-auth.SSH キー認証で使用します。 -

kubernetes.io/tls。TLS 認証局で使用します。

検証が必要ない場合には type: Opaque と指定します。これは、シークレットがキー名または値の規則に準拠しないという意味です。opaque シークレットでは、任意の値を含む、体系化されていない key:value ペアも利用できます。

example.com/my-secret-type などの他の任意のタイプを指定できます。これらのタイプはサーバー側では実行されませんが、シークレットの作成者がその種類のキー/値の要件に従う意図があることを示します。

シークレットのさまざまなタイプの例については、シークレットの使用 に関連するコードのサンプルを参照してください。

2.6.1.2. シークレットデータキー

シークレットキーは DNS サブドメインになければなりません。

2.6.2. シークレットの作成方法

管理者は、開発者がシークレットに依存する Pod を作成できるよう事前にシークレットを作成しておく必要があります。

シークレットの作成時に以下を実行します。

秘密にしておきたいデータを含む秘密オブジェクトを作成します。各シークレットタイプに必要な特定のデータは、以下のセクションで非表示になります。

不透明なシークレットを作成する YAML オブジェクトの例

apiVersion: v1 kind: Secret metadata: name: test-secret type: Opaque1 data:2 username: dmFsdWUtMQ0K password: dmFsdWUtMQ0KDQo= stringData:3 hostname: myapp.mydomain.com secret.properties: | property1=valueA property2=valueBdataフィールドまたはstringdataフィールドの両方ではなく、いずれかを使用してください。Pod のサービスアカウントをシークレットを参照するように更新します。

シークレットを使用するサービスアカウントの YAML

apiVersion: v1 kind: ServiceAccount ... secrets: - name: test-secretシークレットを環境変数またはファイルとして使用する Pod を作成します (

secretボリュームを使用)。シークレットデータと共にボリュームのファイルが設定された Pod の YAML

apiVersion: v1 kind: Pod metadata: name: secret-example-pod spec: containers: - name: secret-test-container image: busybox command: [ "/bin/sh", "-c", "cat /etc/secret-volume/*" ] volumeMounts:1 - name: secret-volume mountPath: /etc/secret-volume2 readOnly: true3 volumes: - name: secret-volume secret: secretName: test-secret4 restartPolicy: Neverシークレットデータと共に環境変数が設定された Pod の YAML

apiVersion: v1 kind: Pod metadata: name: secret-example-pod spec: containers: - name: secret-test-container image: busybox command: [ "/bin/sh", "-c", "export" ] env: - name: TEST_SECRET_USERNAME_ENV_VAR valueFrom: secretKeyRef:1 name: test-secret key: username restartPolicy: Never- 1

- シークレットキーを使用する環境変数を指定します。

シークレットデータと環境変数が設定されたビルド設定の YAML

apiVersion: build.openshift.io/v1 kind: BuildConfig metadata: name: secret-example-bc spec: strategy: sourceStrategy: env: - name: TEST_SECRET_USERNAME_ENV_VAR valueFrom: secretKeyRef:1 name: test-secret key: username- 1

- シークレットキーを使用する環境変数を指定します。

2.6.2.1. シークレットの作成に関する制限

シークレットを使用するには、Pod がシークレットを参照できる必要があります。シークレットは、以下の 3 つの方法で Pod で使用されます。

- コンテナーの環境変数を事前に設定するために使用される。

- 1 つ以上のコンテナーにマウントされるボリュームのファイルとして使用される。

- Pod のイメージをプルする際に kubelet によって使用される。

ボリュームタイプのシークレットは、ボリュームメカニズムを使用してデータをファイルとしてコンテナーに書き込みます。イメージプルシークレットは、シークレットを namespace のすべての Pod に自動的に挿入するためにサービスアカウントを使用します。

テンプレートにシークレット定義が含まれる場合、テンプレートで指定のシークレットを使用できるようにするには、シークレットのボリュームソースを検証し、指定されるオブジェクト参照が Secret オブジェクトを実際に参照していることを確認できる必要があります。そのため、シークレットはこれに依存する Pod の作成前に作成されている必要があります。最も効果的な方法として、サービスアカウントを使用してシークレットを自動的に挿入することができます。

シークレット API オブジェクトは namespace にあります。それらは同じ namespace の Pod によってのみ参照されます。

個々のシークレットは 1MB のサイズに制限されます。これにより、apiserver および kubelet メモリーを使い切るような大規模なシークレットの作成を防ぐことができます。ただし、小規模なシークレットであってもそれらを数多く作成するとメモリーの消費につながります。

2.6.2.2. 不透明なシークレットの作成

管理者は、不透明なシークレットを作成できます。これにより、任意の値を含むことができる非構造化 key:value のペアを格納できます。

手順

コントロールプレーンノードの YAML ファイルに

Secretオブジェクトを作成します。以下に例を示します。

apiVersion: v1 kind: Secret metadata: name: mysecret type: Opaque1 data: username: dXNlci1uYW1l password: cGFzc3dvcmQ=- 1

- 不透明なシークレットを指定します。

以下のコマンドを使用して

Secretオブジェクトを作成します。$ oc create -f <filename>.yamlPod でシークレットを使用するには、以下を実行します。

- シークレットの作成方法についてセクションに示すように、Pod のサービスアカウントを更新してシークレットを参照します。

-

シークレットの作成方法についてに示すように、シークレットを環境変数またはファイル (

secretボリュームを使用) として使用する Pod を作成します。

2.6.2.3. サービスアカウントトークンシークレットの作成

管理者は、サービスアカウントトークンシークレットを作成できます。これにより、API に対して認証する必要のあるアプリケーションにサービスアカウントトークンを配布できます。

サービスアカウントトークンシークレットを使用する代わりに、TokenRequest API を使用してバインドされたサービスアカウントトークンを取得することをお勧めします。TokenRequest API から取得したトークンは、有効期間が制限されており、他の API クライアントが読み取れないため、シークレットに保存されているトークンよりも安全です。

TokenRequest API を使用できず、読み取り可能な API オブジェクトで有効期限が切れていないトークンのセキュリティーエクスポージャーが許容できる場合にのみ、サービスアカウントトークンシークレットを作成する必要があります。

バインドされたサービスアカウントトークンの作成に関する詳細は、以下の追加リソースセクションを参照してください。

手順

コントロールプレーンノードの YAML ファイルに

Secretオブジェクトを作成します。secretオブジェクトの例:apiVersion: v1 kind: Secret metadata: name: secret-sa-sample annotations: kubernetes.io/service-account.name: "sa-name"1 type: kubernetes.io/service-account-token2 以下のコマンドを使用して

Secretオブジェクトを作成します。$ oc create -f <filename>.yamlPod でシークレットを使用するには、以下を実行します。

- シークレットの作成方法についてセクションに示すように、Pod のサービスアカウントを更新してシークレットを参照します。

-

シークレットの作成方法についてに示すように、シークレットを環境変数またはファイル (

secretボリュームを使用) として使用する Pod を作成します。

2.6.2.4. Basic 認証シークレットの作成

管理者は Basic 認証シークレットを作成できます。これにより、Basic 認証に必要な認証情報を保存できます。このシークレットタイプを使用する場合は、Secret オブジェクトの data パラメーターには、base64 形式でエンコードされた以下のキーが含まれている必要があります。

-

username: 認証用のユーザー名 -

password: 認証のパスワードまたはトークン

stringData パラメーターを使用して、クリアテキストコンテンツを使用できます。

手順

コントロールプレーンノードの YAML ファイルに

Secretオブジェクトを作成します。secretオブジェクトの例apiVersion: v1 kind: Secret metadata: name: secret-basic-auth type: kubernetes.io/basic-auth1 data: stringData:2 username: admin password: t0p-Secret以下のコマンドを使用して

Secretオブジェクトを作成します。$ oc create -f <filename>.yamlPod でシークレットを使用するには、以下を実行します。

- シークレットの作成方法についてセクションに示すように、Pod のサービスアカウントを更新してシークレットを参照します。

-

シークレットの作成方法についてに示すように、シークレットを環境変数またはファイル (

secretボリュームを使用) として使用する Pod を作成します。

2.6.2.5. SSH 認証シークレットの作成

管理者は、SSH 認証シークレットを作成できます。これにより、SSH 認証に使用されるデータを保存できます。このシークレットタイプを使用する場合、Secret オブジェクトの data パラメーターには、使用する SSH 認証情報が含まれている必要があります。

手順

コントロールプレーンノードの YAML ファイルに

Secretオブジェクトを作成します。secretオブジェクトの例:apiVersion: v1 kind: Secret metadata: name: secret-ssh-auth type: kubernetes.io/ssh-auth1 data: ssh-privatekey: |2 MIIEpQIBAAKCAQEAulqb/Y ...以下のコマンドを使用して

Secretオブジェクトを作成します。$ oc create -f <filename>.yamlPod でシークレットを使用するには、以下を実行します。

- シークレットの作成方法についてセクションに示すように、Pod のサービスアカウントを更新してシークレットを参照します。

-

シークレットの作成方法についてに示すように、シークレットを環境変数またはファイル (

secretボリュームを使用) として使用する Pod を作成します。

2.6.2.6. Docker 設定シークレットの作成

管理者は Docker 設定シークレットを作成できます。これにより、コンテナーイメージレジストリーにアクセスするための認証情報を保存できます。

-

kubernetes.io/dockercfg.このシークレットタイプを使用してローカルの Docker 設定ファイルを保存します。secretオブジェクトのdataパラメーターには、base64 形式でエンコードされた.dockercfgファイルの内容が含まれている必要があります。 -

kubernetes.io/dockerconfigjson.このシークレットタイプを使用して、ローカルの Docker 設定 JSON ファイルを保存します。secretオブジェクトのdataパラメーターには、base64 形式でエンコードされた.docker/config.jsonファイルの内容が含まれている必要があります。

手順

コントロールプレーンノードの YAML ファイルに

Secretオブジェクトを作成します。Docker 設定の

secretオブジェクトの例apiVersion: v1 kind: Secret metadata: name: secret-docker-cfg namespace: my-project type: kubernetes.io/dockerconfig1 data: .dockerconfig:bm5ubm5ubm5ubm5ubm5ubm5ubm5ubmdnZ2dnZ2dnZ2dnZ2dnZ2dnZ2cgYXV0aCBrZXlzCg==2 Docker 設定の JSON

secretオブジェクトの例apiVersion: v1 kind: Secret metadata: name: secret-docker-json namespace: my-project type: kubernetes.io/dockerconfig1 data: .dockerconfigjson:bm5ubm5ubm5ubm5ubm5ubm5ubm5ubmdnZ2dnZ2dnZ2dnZ2dnZ2dnZ2cgYXV0aCBrZXlzCg==2 以下のコマンドを使用して

Secretオブジェクトを作成します。$ oc create -f <filename>.yamlPod でシークレットを使用するには、以下を実行します。

- シークレットの作成方法についてセクションに示すように、Pod のサービスアカウントを更新してシークレットを参照します。

-

シークレットの作成方法についてに示すように、シークレットを環境変数またはファイル (

secretボリュームを使用) として使用する Pod を作成します。

2.6.3. シークレットの更新方法

シークレットの値を変更する場合、値 (すでに実行されている Pod で使用される値) は動的に変更されません。シークレットを変更するには、元の Pod を削除してから新規の Pod を作成する必要があります (同じ PodSpec を使用する場合があります)。

シークレットの更新は、新規コンテナーイメージのデプロイメントと同じワークフローで実行されます。kubectl rolling-update コマンドを使用できます。

シークレットの resourceVersion 値は参照時に指定されません。したがって、シークレットが Pod の起動と同じタイミングで更新される場合、Pod に使用されるシークレットのバージョンは定義されません。

現時点で、Pod の作成時に使用されるシークレットオブジェクトのリソースバージョンを確認することはできません。コントローラーが古い resourceVersion を使用して Pod を再起動できるように、Pod がこの情報を報告できるようにすることが予定されています。それまでは既存シークレットのデータを更新せずに別の名前で新規のシークレットを作成します。

2.6.4. シークレットで署名証明書を使用する方法

サービスの通信を保護するため、プロジェクト内のシークレットに追加可能な、署名されたサービス証明書/キーペアを生成するように OpenShift Container Platform を設定することができます。

サービス提供証明書のシークレット は、追加設定なしの証明書を必要とする複雑なミドルウェアアプリケーションをサポートするように設計されています。これにはノードおよびマスターの管理者ツールで生成されるサーバー証明書と同じ設定が含まれます。

サービス提供証明書のシークレット用に設定されるサービス Pod 仕様

apiVersion: v1

kind: Service

metadata:

name: registry

annotations:

service.beta.openshift.io/serving-cert-secret-name: registry-cert

# ...- 1

- 証明書の名前を指定します。

他の Pod は Pod に自動的にマウントされる /var/run/secrets/kubernetes.io/serviceaccount/service-ca.crt ファイルの CA バンドルを使用して、クラスターで作成される証明書 (内部 DNS 名の場合にのみ署名される) を信頼できます。

この機能の署名アルゴリズムは x509.SHA256WithRSA です。ローテーションを手動で実行するには、生成されたシークレットを削除します。新規の証明書が作成されます。

2.6.4.1. シークレットで使用する署名証明書の生成

署名されたサービス証明書/キーペアを Pod で使用するには、サービスを作成または編集して service.beta.openshift.io/serving-cert-secret-name アノテーションを追加した後に、シークレットを Pod に追加します。

手順

サービス提供証明書のシークレット を作成するには、以下を実行します。

-

サービスの

Pod仕様を編集します。 シークレットに使用する名前に

service.beta.openshift.io/serving-cert-secret-nameアノテーションを追加します。kind: Service apiVersion: v1 metadata: name: my-service annotations: service.beta.openshift.io/serving-cert-secret-name: my-cert1 spec: selector: app: MyApp ports: - protocol: TCP port: 80 targetPort: 9376証明書およびキーは PEM 形式であり、それぞれ

tls.crtおよびtls.keyに保存されます。サービスを作成します。

$ oc create -f <file-name>.yamlシークレットを表示して、作成されていることを確認します。

すべてのシークレットの一覧を表示します。

$ oc get secrets出力例

NAME TYPE DATA AGE my-cert kubernetes.io/tls 2 9mシークレットの詳細を表示します。

$ oc describe secret my-cert出力例

Name: my-cert Namespace: openshift-console Labels: <none> Annotations: service.beta.openshift.io/expiry: 2023-03-08T23:22:40Z service.beta.openshift.io/originating-service-name: my-service service.beta.openshift.io/originating-service-uid: 640f0ec3-afc2-4380-bf31-a8c784846a11 service.beta.openshift.io/expiry: 2023-03-08T23:22:40Z Type: kubernetes.io/tls Data ==== tls.key: 1679 bytes tls.crt: 2595 bytes

このシークレットを使って

Pod仕様を編集します。apiVersion: v1 kind: Pod metadata: name: my-service-pod spec: containers: - name: mypod image: redis volumeMounts: - name: foo mountPath: "/etc/foo" volumes: - name: foo secret: secretName: my-cert items: - key: username path: my-group/my-username mode: 511これが利用可能な場合、Pod が実行されます。この証明書は内部サービス DNS 名、

<service.name>.<service.namespace>.svcに適しています。証明書/キーのペアは有効期限に近づくと自動的に置換されます。シークレットの

service.beta.openshift.io/expiryアノテーションで RFC3339 形式の有効期限の日付を確認します。注記ほとんどの場合、サービス DNS 名

<service.name>.<service.namespace>.svcは外部にルーティング可能ではありません。<service.name>.<service.namespace>.svcの主な使用方法として、クラスターまたはサービス間の通信用として、 re-encrypt ルートで使用されます。

2.6.5. シークレットのトラブルシューティング

サービス証明書の生成は以下を出して失敗します (サービスの service.beta.openshift.io/serving-cert-generation-error アノテーションには以下が含まれます)。

secret/ssl-key references serviceUID 62ad25ca-d703-11e6-9d6f-0e9c0057b608, which does not match 77b6dd80-d716-11e6-9d6f-0e9c0057b60

証明書を生成したサービスがすでに存在しないか、またはサービスに異なる serviceUID があります。古いシークレットを削除し、サービスのアノテーション (service.beta.openshift.io/serving-cert-generation-error、service.beta.openshift.io/serving-cert-generation-error-num) をクリアして証明書の再生成を強制的に実行する必要があります。

シークレットを削除します。

$ oc delete secret <secret_name>アノテーションをクリアします。

$ oc annotate service <service_name> service.beta.openshift.io/serving-cert-generation-error-$ oc annotate service <service_name> service.beta.openshift.io/serving-cert-generation-error-num-

アノテーションを削除するコマンドでは、削除するアノテーション名の後に - を付けます。

2.7. 設定マップの作成および使用

以下のセクションでは、設定マップおよびそれらを作成し、使用する方法を定義します。

2.7.1. 設定マップについて

数多くのアプリケーションには、設定ファイル、コマンドライン引数、および環境変数の組み合わせを使用した設定が必要です。OpenShift Container Platform では、これらの設定アーティファクトは、コンテナー化されたアプリケーションを移植可能な状態に保つためにイメージコンテンツから切り離されます。

ConfigMap オブジェクトは、コンテナーを OpenShift Container Platform に依存させないようにする一方で、コンテナーに設定データを挿入するメカニズムを提供します。設定マップは、個々のプロパティーなどの粒度の細かい情報や、設定ファイル全体または JSON Blob などの粒度の荒い情報を保存するために使用できます。

ConfigMap API オブジェクトは、Pod で使用したり、コントローラーなどのシステムコンポーネントの設定データを保存するために使用できる設定データのキーと値のペアを保持します。以下に例を示します。

ConfigMap オブジェクト定義

kind: ConfigMap

apiVersion: v1

metadata:

creationTimestamp: 2016-02-18T19:14:38Z

name: example-config

namespace: default

data:

example.property.1: hello

example.property.2: world

example.property.file: |-

property.1=value-1

property.2=value-2

property.3=value-3

binaryData:

bar: L3Jvb3QvMTAw

イメージなどのバイナリーファイルから設定マップを作成する場合に、binaryData フィールドを使用できます。

設定データはさまざまな方法で Pod 内で使用できます。設定マップは以下を実行するために使用できます。

- コンテナーへの環境変数値の設定

- コンテナーのコマンドライン引数の設定

- ボリュームの設定ファイルの設定

ユーザーとシステムコンポーネントの両方が設定データを設定マップに保存できます。

設定マップはシークレットに似ていますが、機密情報を含まない文字列の使用をより効果的にサポートするように設計されています。

設定マップの制限

設定マップは、コンテンツを Pod で使用される前に作成する必要があります。

コントローラーは、設定データが不足していても、その状況を許容して作成できます。ケースごとに設定マップを使用して設定される個々のコンポーネントを参照してください。

ConfigMap オブジェクトはプロジェクト内にあります。

それらは同じプロジェクトの Pod によってのみ参照されます。

Kubelet は、API サーバーから取得する Pod の設定マップの使用のみをサポートします。

これには、CLI を使用して作成された Pod、またはレプリケーションコントローラーから間接的に作成された Pod が含まれます。これには、OpenShift Container Platform ノードの --manifest-url フラグ、その --config フラグ、またはその REST API を使用して作成された Pod は含まれません (これらは Pod を作成する一般的な方法ではありません)。

2.7.2. OpenShift Container Platform Web コンソールでの設定マップの作成

OpenShift Container Platform Web コンソールで設定マップを作成できます。

手順

クラスター管理者として設定マップを作成するには、以下を実行します。

-

Administrator パースペクティブで

Workloads→Config Mapsを選択します。 - ページの右上にある Create Config Map を選択します。

- 設定マップの内容を入力します。

- Create を選択します。

-

Administrator パースペクティブで

開発者として設定マップを作成するには、以下を実行します。

-

開発者パースペクティブで、

Config Mapsを選択します。 - ページの右上にある Create Config Map を選択します。

- 設定マップの内容を入力します。

- Create を選択します。

-

開発者パースペクティブで、

2.7.3. CLI を使用して設定マップを作成する

以下のコマンドを使用して、ディレクトリー、特定のファイルまたはリテラル値から設定マップを作成できます。

手順

設定マップの作成

$ oc create configmap <configmap_name> [options]

2.7.3.1. ディレクトリーからの設定マップの作成

ディレクトリーから設定マップを作成できます。この方法では、ディレクトリー内の複数のファイルを使用して設定マップを作成できます。

手順

以下の例の手順は、ディレクトリーから設定マップを作成する方法を説明しています。

設定マップの設定に必要なデータがすでに含まれるファイルのあるディレクトリーについて見てみましょう。

$ ls example-files出力例

game.properties ui.properties$ cat example-files/game.properties出力例

enemies=aliens lives=3 enemies.cheat=true enemies.cheat.level=noGoodRotten secret.code.passphrase=UUDDLRLRBABAS secret.code.allowed=true secret.code.lives=30$ cat example-files/ui.properties出力例

color.good=purple color.bad=yellow allow.textmode=true how.nice.to.look=fairlyNice次のコマンドを入力して、このディレクトリー内の各ファイルの内容を保持する設定マップを作成します。

$ oc create configmap game-config \ --from-file=example-files/--from-fileオプションがディレクトリーを参照する場合、そのディレクトリーに直接含まれる各ファイルが ConfigMap でキーを設定するために使用されます。 このキーの名前はファイル名であり、キーの値はファイルの内容になります。たとえば、前のコマンドは次の設定マップを作成します。

$ oc describe configmaps game-config出力例

Name: game-config Namespace: default Labels: <none> Annotations: <none> Data game.properties: 158 bytes ui.properties: 83 bytesマップにある 2 つのキーが、コマンドで指定されたディレクトリーのファイル名に基づいて作成されていることに気づかれることでしょう。それらのキーの内容のサイズは大きくなる可能性があるため、

oc describeの出力はキーの名前とキーのサイズのみを表示します。-oオプションを使用してオブジェクトのoc getコマンドを入力し、キーの値を表示します。$ oc get configmaps game-config -o yaml出力例

apiVersion: v1 data: game.properties: |- enemies=aliens lives=3 enemies.cheat=true enemies.cheat.level=noGoodRotten secret.code.passphrase=UUDDLRLRBABAS secret.code.allowed=true secret.code.lives=30 ui.properties: | color.good=purple color.bad=yellow allow.textmode=true how.nice.to.look=fairlyNice kind: ConfigMap metadata: creationTimestamp: 2016-02-18T18:34:05Z name: game-config namespace: default resourceVersion: "407" selflink: /api/v1/namespaces/default/configmaps/game-config uid: 30944725-d66e-11e5-8cd0-68f728db1985

2.7.3.2. ファイルから設定マップを作成する

ファイルから設定マップを作成できます。

手順

以下の手順例では、ファイルから設定マップを作成する方法を説明します。

ファイルから設定マップを作成する場合、UTF8 以外のデータを破損することなく、UTF8 以外のデータを含むファイルをこの新規フィールドに配置できます。OpenShift Container Platform はバイナリーファイルを検出し、ファイルを MIME として透過的にエンコーディングします。サーバーでは、データを破損することなく MIME ペイロードがデコーディングされ、保存されます。

--from-file オプションを CLI に複数回渡すことができます。以下の例を実行すると、ディレクトリーからの作成の例と同等の結果を出すことができます。

特定のファイルを指定して設定マップを作成します。

$ oc create configmap game-config-2 \ --from-file=example-files/game.properties \ --from-file=example-files/ui.properties結果を確認します。

$ oc get configmaps game-config-2 -o yaml出力例

apiVersion: v1 data: game.properties: |- enemies=aliens lives=3 enemies.cheat=true enemies.cheat.level=noGoodRotten secret.code.passphrase=UUDDLRLRBABAS secret.code.allowed=true secret.code.lives=30 ui.properties: | color.good=purple color.bad=yellow allow.textmode=true how.nice.to.look=fairlyNice kind: ConfigMap metadata: creationTimestamp: 2016-02-18T18:52:05Z name: game-config-2 namespace: default resourceVersion: "516" selflink: /api/v1/namespaces/default/configmaps/game-config-2 uid: b4952dc3-d670-11e5-8cd0-68f728db1985

ファイルからインポートされたコンテンツの設定マップで設定するキーを指定できます。これは、key=value 式を --from-file オプションに渡すことで設定できます。以下に例を示します。

キーと値のペアを指定して、設定マップを作成します。

$ oc create configmap game-config-3 \ --from-file=game-special-key=example-files/game.properties結果を確認します。

$ oc get configmaps game-config-3 -o yaml出力例

apiVersion: v1 data: game-special-key: |-1 enemies=aliens lives=3 enemies.cheat=true enemies.cheat.level=noGoodRotten secret.code.passphrase=UUDDLRLRBABAS secret.code.allowed=true secret.code.lives=30 kind: ConfigMap metadata: creationTimestamp: 2016-02-18T18:54:22Z name: game-config-3 namespace: default resourceVersion: "530" selflink: /api/v1/namespaces/default/configmaps/game-config-3 uid: 05f8da22-d671-11e5-8cd0-68f728db1985- 1

- これは、先の手順で設定したキーです。

2.7.3.3. リテラル値からの設定マップの作成

設定マップにリテラル値を指定することができます。

手順

--from-literal オプションは、リテラル値をコマンドラインに直接指定できる key=value 構文を取ります。

リテラル値を指定して設定マップを作成します。

$ oc create configmap special-config \ --from-literal=special.how=very \ --from-literal=special.type=charm結果を確認します。

$ oc get configmaps special-config -o yaml出力例

apiVersion: v1 data: special.how: very special.type: charm kind: ConfigMap metadata: creationTimestamp: 2016-02-18T19:14:38Z name: special-config namespace: default resourceVersion: "651" selflink: /api/v1/namespaces/default/configmaps/special-config uid: dadce046-d673-11e5-8cd0-68f728db1985

2.7.4. ユースケース: Pod で設定マップを使用する

以下のセクションでは、Pod で ConfigMap オブジェクトを使用する際のいくつかのユースケースについて説明します。

2.7.4.1. 設定マップの使用によるコンテナーでの環境変数の設定

設定マップはコンテナーで個別の環境変数を設定するために使用したり、有効な環境変数名を生成するすべてのキーを使用してコンテナーで環境変数を設定するために使用したりすることができます。

例として、以下の設定マップについて見てみましょう。

2 つの環境変数を含む ConfigMap

apiVersion: v1

kind: ConfigMap

metadata:

name: special-config

namespace: default

data:

special.how: very

special.type: charm 1 つの環境変数を含む ConfigMap

apiVersion: v1

kind: ConfigMap

metadata:

name: env-config

namespace: default

data:

log_level: INFO 手順

configMapKeyRefセクションを使用して、Pod のこのConfigMapのキーを使用できます。特定の環境変数を挿入するように設定されている

Pod仕様のサンプルapiVersion: v1 kind: Pod metadata: name: dapi-test-pod spec: containers: - name: test-container image: gcr.io/google_containers/busybox command: [ "/bin/sh", "-c", "env" ] env:1 - name: SPECIAL_LEVEL_KEY2 valueFrom: configMapKeyRef: name: special-config3 key: special.how4 - name: SPECIAL_TYPE_KEY valueFrom: configMapKeyRef: name: special-config5 key: special.type6 optional: true7 envFrom:8 - configMapRef: name: env-config9 restartPolicy: Neverこの Pod が実行されると、Pod のログには以下の出力が含まれます。

SPECIAL_LEVEL_KEY=very log_level=INFO

SPECIAL_TYPE_KEY=charm は出力例に一覧表示されません。optional: true が設定されているためです。

2.7.4.2. 設定マップを使用したコンテナーコマンドのコマンドライン引数の設定

設定マップを使用して、コンテナー内のコマンドまたは引数の値を設定することもできます。これは、Kubernetes 置換構文 $(VAR_NAME) を使用して実行できます。次の設定マップを検討してください。

apiVersion: v1

kind: ConfigMap

metadata:

name: special-config

namespace: default

data:

special.how: very

special.type: charm手順

値をコンテナーのコマンドに挿入するには、環境変数で ConfigMap を使用する場合のように環境変数として使用する必要のあるキーを使用する必要があります。次に、

$(VAR_NAME)構文を使用してコンテナーのコマンドでそれらを参照することができます。特定の環境変数を挿入するように設定されている

Pod仕様のサンプルapiVersion: v1 kind: Pod metadata: name: dapi-test-pod spec: containers: - name: test-container image: gcr.io/google_containers/busybox command: [ "/bin/sh", "-c", "echo $(SPECIAL_LEVEL_KEY) $(SPECIAL_TYPE_KEY)" ]1 env: - name: SPECIAL_LEVEL_KEY valueFrom: configMapKeyRef: name: special-config key: special.how - name: SPECIAL_TYPE_KEY valueFrom: configMapKeyRef: name: special-config key: special.type restartPolicy: Never- 1

- 環境変数として使用するキーを使用して、コンテナーのコマンドに値を挿入します。

この Pod が実行されると、test-container コンテナーで実行される echo コマンドの出力は以下のようになります。

very charm

2.7.4.3. 設定マップの使用によるボリュームへのコンテンツの挿入

設定マップを使用して、コンテンツをボリュームに挿入することができます。

ConfigMap カスタムリソース (CR) の例

apiVersion: v1

kind: ConfigMap

metadata:

name: special-config

namespace: default

data:

special.how: very

special.type: charm手順

設定マップを使用してコンテンツをボリュームに挿入するには、2 つの異なるオプションを使用できます。

設定マップを使用してコンテンツをボリュームに挿入するための最も基本的な方法は、キーがファイル名であり、ファイルの内容がキーの値になっているファイルでボリュームを設定する方法です。

apiVersion: v1 kind: Pod metadata: name: dapi-test-pod spec: containers: - name: test-container image: gcr.io/google_containers/busybox command: [ "/bin/sh", "cat", "/etc/config/special.how" ] volumeMounts: - name: config-volume mountPath: /etc/config volumes: - name: config-volume configMap: name: special-config1 restartPolicy: Never- 1

- キーを含むファイル。

この Pod が実行されると、cat コマンドの出力は以下のようになります。

very設定マップキーが投影されるボリューム内のパスを制御することもできます。