Chapter 4. Pipelines

4.1. Red Hat OpenShift Pipelines release notes

Red Hat OpenShift Pipelines is a cloud-native CI/CD experience based on the Tekton project which provides:

- Standard Kubernetes-native pipeline definitions (CRDs).

- Serverless pipelines with no CI server management overhead.

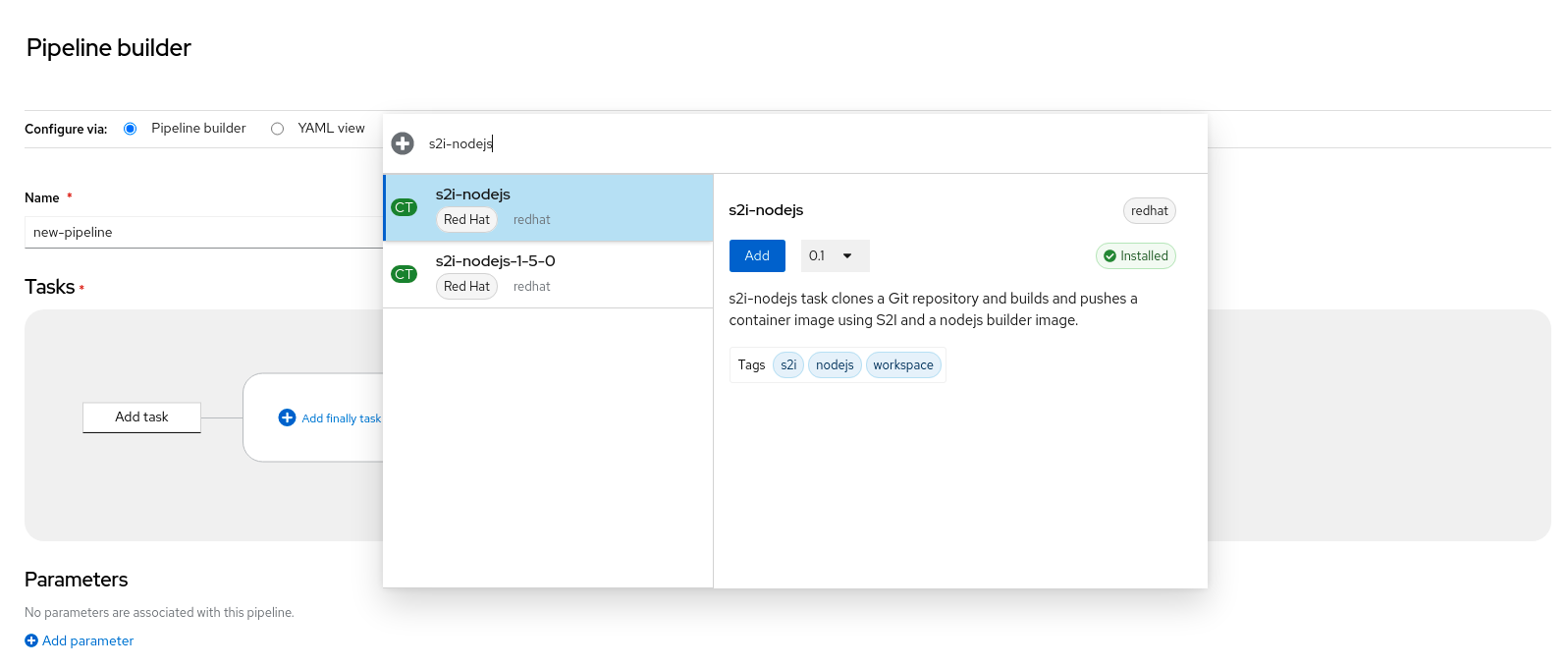

- Extensibility to build images using any Kubernetes tool, such as S2I, Buildah, JIB, and Kaniko.

- Portability across any Kubernetes distribution.

- Powerful CLI for interacting with pipelines.

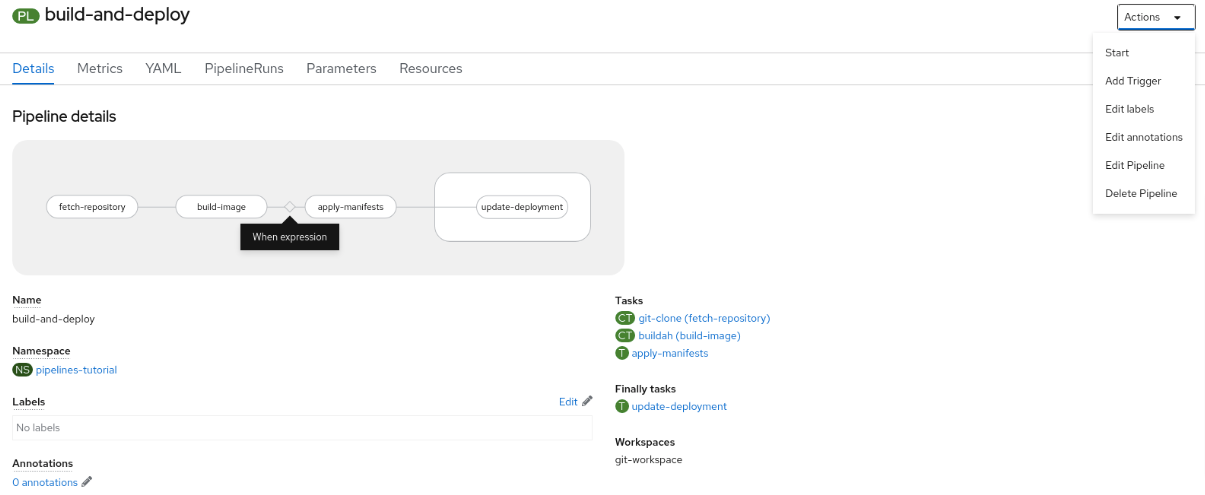

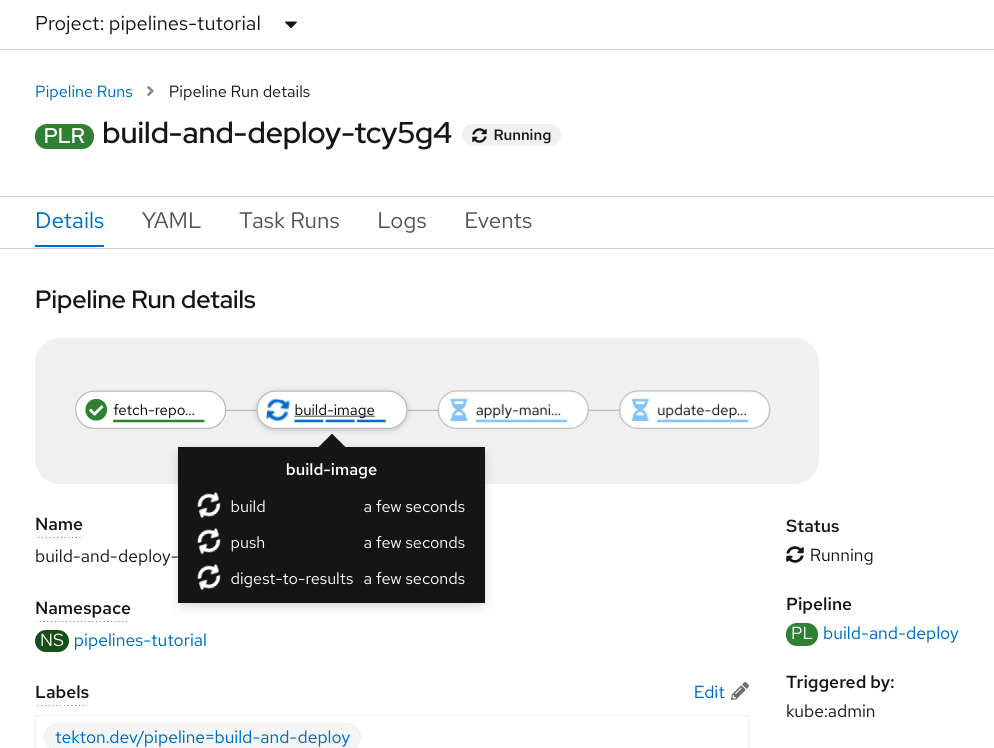

- Integrated user experience with the Developer perspective of the OpenShift Container Platform web console.

For an overview of Red Hat OpenShift Pipelines, see Understanding OpenShift Pipelines.

4.1.1. Compatibility and support matrix

Some features in this release are currently in Technology Preview. These experimental features are not intended for production use.

In the table, features are marked with the following statuses:

| Status | Meaning |
|---|---|
| TP | Technology Preview |
| GA | General Availability |

| Red Hat OpenShift Pipelines Operator version | Pipelines | Triggers | CLI | Catalog | Chains | Hub | Pipelines as Code | OpenShift version | Support status |
|---|---|---|---|---|---|---|---|---|---|
| 1.7 | 0.33.x | 0.19.x | 0.23.x | 0.33 | 0.8.0 (TP) | 1.7.0 (TP) | 0.5.x (TP) | 4.9, 4.10, 4.11 | GA |
| 1.6 | 0.28.x | 0.16.x | 0.21.x | 0.28 | N/A | N/A | N/A | 4.9 | GA |
| 1.5 | 0.24.x | 0.14.x (TP) | 0.19.x | 0.24 | N/A | N/A | N/A | 4.8 | GA |
| 1.4 | 0.22.x | 0.12.x (TP) | 0.17.x | 0.22 | N/A | N/A | N/A | 4.7 | GA |

In Red Hat OpenShift Pipelines 1.6, Triggers 0.16.x transitioned to GA status. In earlier versions, Triggers was available as a technology preview feature.

For questions and feedback, you can send an email to the product team at pipelines-interest@redhat.com.

4.1.2. Making open source more inclusive

Red Hat is committed to replacing problematic language in our code, documentation, and web properties. We are beginning with these four terms: master, slave, blacklist, and whitelist. Because of the enormity of this endeavor, these changes will be implemented gradually over several upcoming releases. For more details, see our CTO Chris Wright’s message.

4.1.3. Release notes for Red Hat OpenShift Pipelines General Availability 1.7

With this update, Red Hat OpenShift Pipelines General Availability (GA) 1.7 is available on OpenShift Container Platform 4.9, 4.10, and 4.11.

4.1.3.1. New features

In addition to the fixes and stability improvements, the following sections highlight what is new in Red Hat OpenShift Pipelines 1.7.

4.1.3.1.1. Pipelines

- With this update, `pipelines-<version>` is the default channel to install the Red Hat OpenShift Pipelines Operator. For example, the default channel to install the Pipelines Operator version `1.7` is `pipelines-1.7`. Cluster administrators can also use the `latest` channel to install the most recent stable version of the Operator.

  Note: The `preview` and `stable` channels will be deprecated and removed in a future release.

- When you run a command in a user namespace, your container runs as `root` (user id `0`) but has user privileges on the host. With this update, to run pods in the user namespace, you must pass the annotations that CRI-O expects.

  - To add these annotations for all users, run the `oc edit clustertask buildah` command and edit the `buildah` cluster task.

  - To add the annotations to a specific namespace, export the cluster task as a task to that namespace.

- Before this update, if certain conditions were not met, the `when` expression skipped a `Task` object and its dependent tasks. With this update, you can scope the `when` expression to guard the `Task` object only, not its dependent tasks. To enable this update, set the `scope-when-expressions-to-task` flag to `true` in the `TektonConfig` CRD.

  Note: The `scope-when-expressions-to-task` flag is deprecated and will be removed in a future release. As a best practice for Pipelines, use `when` expressions scoped to the guarded `Task` only.

- With this update, you can use variable substitution in the `subPath` field of a workspace within a task.

- With this update, you can reference parameters and results by using a bracket notation with single or double quotes. Prior to this update, you could only use the dot notation. For example, the following are now equivalent: `$(param.myparam)`, `$(param['myparam'])`, and `$(param["myparam"])`.

  You can use single or double quotes to enclose parameter names that contain problematic characters, such as `"."`. For example, `$(param['my.param'])` and `$(param["my.param"])`.

- With this update, you can include the `onError` parameter of a step in the task definition without enabling the `enable-api-fields` flag.
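The equivalence of the dot and bracket notations described in the list above can be illustrated with a small sketch. This is not Tekton code: `param_name` is a hypothetical helper that only mirrors the three reference forms quoted in this section, and the rule that dot notation cannot carry a name containing `.` is an assumption made for illustration.

```python
import re

# Dot notation: $(param.myparam). Names containing "." are not accepted here,
# which is the ambiguity that bracket notation avoids (illustrative assumption).
_DOT = re.compile(r"^\$\(param\.([A-Za-z_][\w-]*)\)$")

# Bracket notation with matching single or double quotes:
# $(param['my.param']) or $(param["my.param"]).
_BRACKET = re.compile(r"""^\$\(param\[(['"])(.+?)\1\]\)$""")


def param_name(reference: str) -> str:
    """Return the parameter name that a reference string points to."""
    for pattern, group in ((_DOT, 1), (_BRACKET, 2)):
        match = pattern.match(reference)
        if match:
            return match.group(group)
    raise ValueError(f"not a recognized parameter reference: {reference!r}")


if __name__ == "__main__":
    for ref in ("$(param.myparam)", "$(param['myparam'])", '$(param["my.param"])'):
        print(ref, "->", param_name(ref))
```

All three forms of the same name resolve identically, while a dotted name such as `my.param` is only reachable through the quoted forms in this sketch.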

4.1.3.1.2. Triggers

- With this update, the `feature-flags-triggers` config map has a new field `labels-exclusion-pattern`. You can set the value of this field to a regular expression (regex) pattern. The controller filters out labels that match the regex pattern from propagating from the event listener to the resources created for the event listener.

- With this update, the `TriggerGroups` field is added to the `EventListener` specification. Using this field, you can specify a set of interceptors to run before selecting and running a group of triggers. To enable this feature, set the `enable-api-fields` flag in the `feature-flags-triggers` config map to `alpha`.

- With this update, `Trigger` resources support custom runs defined by a `TriggerTemplate` template.

- With this update, Triggers support emitting Kubernetes events from an `EventListener` pod.

- With this update, count metrics are available for the following objects: `ClusterInterceptor`, `EventListener`, `TriggerTemplate`, `ClusterTriggerBinding`, and `TriggerBinding`.

- This update adds the `ServicePort` specification to the Kubernetes resource. You can use this specification to modify which port exposes the event listener service. The default port is `8080`.

- With this update, you can use the `targetURI` field in the `EventListener` specification to send cloud events during trigger processing. To enable this feature, set the `enable-api-fields` flag in the `feature-flags-triggers` config map to `alpha`.

- With this update, the `tekton-triggers-eventlistener-roles` object now has a `patch` verb, in addition to the `create` verb that already exists.

- With this update, the `securityContext.runAsUser` parameter is removed from event listener deployment.
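The effect of the `labels-exclusion-pattern` field described in the list above can be sketched as a filter over a label map. This is an illustration only, not the controller's implementation: `propagated_labels` is a hypothetical function, the pattern is assumed to be tested against label keys with search semantics, and Python's `re` module stands in for the regex engine that the controller actually uses.

```python
import re
from typing import Dict


def propagated_labels(labels: Dict[str, str], exclusion_pattern: str) -> Dict[str, str]:
    """Return the labels whose keys do not match the exclusion pattern.

    Assumption: an empty pattern disables filtering.
    """
    if not exclusion_pattern:
        return dict(labels)
    regex = re.compile(exclusion_pattern)
    return {key: value for key, value in labels.items() if not regex.search(key)}


if __name__ == "__main__":
    listener_labels = {
        "team": "payments",
        "internal.example.com/owner": "ci",  # hypothetical label to exclude
    }
    print(propagated_labels(listener_labels, r"^internal\.example\.com/"))
```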

4.1.3.1.3. CLI

- With this update, the `tkn [pipeline | pipelinerun] export` command exports a pipeline or pipeline run as a YAML file. For example:

  - Export a pipeline named `test_pipeline` in the `openshift-pipelines` namespace:

        $ tkn pipeline export test_pipeline -n openshift-pipelines

  - Export a pipeline run named `test_pipeline_run` in the `openshift-pipelines` namespace:

        $ tkn pipelinerun export test_pipeline_run -n openshift-pipelines

- With this update, the `--grace` option is added to the `tkn pipelinerun cancel` command. Use the `--grace` option to terminate a pipeline run gracefully instead of forcing the termination. To enable this feature, set the `enable-api-fields` flag in the `feature-flags` config map to `alpha`.

- This update adds the Operator and Chains versions to the output of the `tkn version` command.

  Important: Tekton Chains is a Technology Preview feature.

- With this update, the `tkn pipelinerun describe` command displays all canceled task runs when you cancel a pipeline run. Before this fix, only one task run was displayed.

- With this update, when you run the `tkn [t | p | ct] start` command with the `--skip-optional-workspace` flag, you can skip supplying the specifications for optional workspaces. You can also skip them when running in interactive mode.

- With this update, you can use the `tkn chains` command to manage Tekton Chains. You can also use the `--chains-namespace` option to specify the namespace where you want to install Tekton Chains.

  Important: Tekton Chains is a Technology Preview feature.

4.1.3.1.4. Operator

- With this update, you can use the Red Hat OpenShift Pipelines Operator to install and deploy Tekton Hub and Tekton Chains.

  Important: Tekton Chains and deployment of Tekton Hub on a cluster are Technology Preview features.

- With this update, you can find and use Pipelines as Code (PAC) as an add-on option.

  Important: Pipelines as Code is a Technology Preview feature.

- With this update, you can now disable the installation of community cluster tasks by setting the `communityClusterTasks` parameter to `false`. For example:

      ...
      spec:
        profile: all
        targetNamespace: openshift-pipelines
        addon:
          params:
          - name: clusterTasks
            value: "true"
          - name: pipelineTemplates
            value: "true"
          - name: communityClusterTasks
            value: "false"
      ...

- With this update, you can disable the integration of Tekton Hub with the Developer perspective by setting the `enable-devconsole-integration` flag in the `TektonConfig` custom resource to `false`. For example:

      ...
      hub:
        params:
          - name: enable-devconsole-integration
            value: "false"
      ...

- With this update, the `operator-config.yaml` config map enables the output of the `tkn version` command to display the Operator version.

- With this update, the version of the `argocd-task-sync-and-wait` task is modified to `v0.2`.

- With this update to the `TektonConfig` CRD, the `oc get tektonconfig` command displays the Operator version.

- With this update, service monitor is added to the Triggers metrics.

4.1.3.1.5. Hub

Deploying Tekton Hub on a cluster is a Technology Preview feature.

Tekton Hub helps you discover, search, and share reusable tasks and pipelines for your CI/CD workflows. A public instance of Tekton Hub is available at hub.tekton.dev.

Starting with Red Hat OpenShift Pipelines 1.7, cluster administrators can also install and deploy a custom instance of Tekton Hub on enterprise clusters. You can curate a catalog with reusable tasks and pipelines specific to your organization.

4.1.3.1.6. Chains

Tekton Chains is a Technology Preview feature.

Tekton Chains is a Kubernetes Custom Resource Definition (CRD) controller. You can use it to manage the supply chain security of the tasks and pipelines created using Red Hat OpenShift Pipelines.

By default, Tekton Chains monitors the task runs in your OpenShift Container Platform cluster. Chains takes snapshots of completed task runs, converts them to one or more standard payload formats, and signs and stores all artifacts.

Tekton Chains supports the following features:

- You can sign task runs, task run results, and OCI registry images with cryptographic key types and services such as `cosign`.

- You can use attestation formats such as `in-toto`.

- You can securely store signatures and signed artifacts using OCI repository as a storage backend.

4.1.3.1.7. Pipelines as Code (PAC)

Pipelines as Code is a Technology Preview feature.

With Pipelines as Code, cluster administrators and users with the required privileges can define pipeline templates as part of source code Git repositories. When triggered by a source code push or a pull request for the configured Git repository, the feature runs the pipeline and reports status.

Pipelines as Code supports the following features:

- Pull request status. When iterating over a pull request, the status and control of the pull request are exercised on the platform hosting the Git repository.

- GitHub Checks API to set the status of a pipeline run, including rechecks.

- GitHub pull request and commit events.

- Pull request actions in comments, such as `/retest`.

- Git events filtering, and a separate pipeline for each event.

- Automatic task resolution in Pipelines for local tasks, Tekton Hub, and remote URLs.

- Use of GitHub blobs and objects API for retrieving configurations.

- Access Control List (ACL) over a GitHub organization, or using a Prow-style `OWNER` file.

- The `tkn-pac` plugin for the `tkn` CLI tool, which you can use to manage Pipelines as Code repositories and bootstrapping.

- Support for GitHub Application, GitHub Webhook, Bitbucket Server, and Bitbucket Cloud.

4.1.3.2. Deprecated features

- Breaking change: This update removes the `disable-working-directory-overwrite` and `disable-home-env-overwrite` fields from the `TektonConfig` custom resource (CR). As a result, the `TektonConfig` CR no longer automatically sets the `$HOME` environment variable and `workingDir` parameter. You can still set the `$HOME` environment variable and `workingDir` parameter by using the `env` and `workingDir` fields in the `Task` custom resource definition (CRD).

- The `Conditions` custom resource definition (CRD) type is deprecated and planned to be removed in a future release. Instead, use the recommended `When` expression.

- Breaking change: The `Triggers` resource validates the templates and generates an error if you do not specify the `EventListener` and `TriggerBinding` values.

4.1.3.3. Known issues

- When you run Maven and Jib-Maven cluster tasks, the default container image is supported only on Intel (x86) architecture. Therefore, tasks will fail on IBM Power Systems (ppc64le), IBM Z, and LinuxONE (s390x) clusters. As a workaround, you can specify a custom image by setting the `MAVEN_IMAGE` parameter value to `maven:3.6.3-adoptopenjdk-11`.

  Tip: Before you install tasks based on the Tekton Catalog on IBM Power Systems (ppc64le), IBM Z, and LinuxONE (s390x) using `tkn hub`, verify if the task can be executed on these platforms. To check if `ppc64le` and `s390x` are listed in the "Platforms" section of the task information, you can run the following command: `tkn hub info task <name>`

- On IBM Power Systems, IBM Z, and LinuxONE, the `s2i-dotnet` cluster task is unsupported.

- You cannot use the `nodejs:14-ubi8-minimal` image stream because doing so generates the following errors:

      STEP 7: RUN /usr/libexec/s2i/assemble
      /bin/sh: /usr/libexec/s2i/assemble: No such file or directory
      subprocess exited with status 127
      subprocess exited with status 127
      error building at STEP "RUN /usr/libexec/s2i/assemble": exit status 127
      time="2021-11-04T13:05:26Z" level=error msg="exit status 127"

- Implicit parameter mapping incorrectly passes parameters from the top-level `Pipeline` or `PipelineRun` definitions to the `taskRef` tasks. Mapping should only occur from a top-level resource to tasks with in-line `taskSpec` specifications. This issue only affects users who have set the `enable-api-fields` feature flag to `alpha`.

4.1.3.4. Fixed issues

- With this update, if metadata such as `labels` and `annotations` are present in both `Pipeline` and `PipelineRun` object definitions, the values in the `PipelineRun` type take precedence. You can observe similar behavior for `Task` and `TaskRun` objects.

- With this update, if the `timeouts.tasks` field or the `timeouts.finally` field is set to `0`, then the `timeouts.pipeline` is also set to `0`.

- With this update, the `-x` set flag is removed from scripts that do not use a shebang. The fix reduces potential data leak from script execution.

- With this update, any backslash character present in the usernames in Git credentials is escaped with an additional backslash in the `.gitconfig` file.

- With this update, the `finalizer` property of the `EventListener` object is not necessary for cleaning up logging and config maps.

- With this update, the default HTTP client associated with the event listener server is removed, and a custom HTTP client added. As a result, the timeouts have improved.

- With this update, the Triggers cluster role now works with owner references.

- With this update, the race condition in the event listener does not happen when multiple interceptors return extensions.

- With this update, the `tkn pr delete` command does not delete the pipeline runs with the `ignore-running` flag.

- With this update, the Operator pods do not continue restarting when you modify any add-on parameters.

- With this update, the `tkn serve` CLI pod is scheduled on infra nodes, if not configured in the subscription and config custom resources.

- With this update, cluster tasks with specified versions are not deleted during upgrade.
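The metadata precedence rule from the first item in the list above can be read as a dictionary merge in which the run's values override the definition's values. The following is a minimal sketch of that reading, not the controller's code; `effective_metadata` is a hypothetical name.

```python
from typing import Dict


def effective_metadata(pipeline_meta: Dict[str, str], run_meta: Dict[str, str]) -> Dict[str, str]:
    """Merge labels or annotations so that PipelineRun values win on conflicts."""
    merged = dict(pipeline_meta)  # start from the Pipeline definition
    merged.update(run_meta)       # PipelineRun values override duplicate keys
    return merged


if __name__ == "__main__":
    pipeline_labels = {"team": "platform", "tier": "ci"}
    run_labels = {"team": "payments"}
    print(effective_metadata(pipeline_labels, run_labels))
```

Keys that appear only in the `Pipeline` definition are kept, and the same merge applies to `Task` and `TaskRun` metadata under the behavior described above.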

4.1.3.5. Release notes for Red Hat OpenShift Pipelines General Availability 1.7.1

With this update, Red Hat OpenShift Pipelines General Availability (GA) 1.7.1 is available on OpenShift Container Platform 4.9, 4.10, and 4.11.

4.1.3.5.1. Fixed issues

- Before this update, upgrading the Red Hat OpenShift Pipelines Operator deleted the data in the database associated with Tekton Hub and installed a new database. With this update, an Operator upgrade preserves the data.

- Before this update, only cluster administrators could access pipeline metrics in the OpenShift Container Platform console. With this update, users with other cluster roles also can access the pipeline metrics.

- Before this update, pipeline runs failed for pipelines containing tasks that emit large termination messages. The pipeline runs failed because the total size of termination messages of all containers in a pod cannot exceed 12 KB. With this update, the `place-tools` and `step-init` initialization containers that use the same image are merged to reduce the number of containers running in each task’s pod. The solution reduces the chance of failed pipeline runs by minimizing the number of containers running in a task’s pod. However, it does not remove the limitation of the maximum allowed size of a termination message.

- Before this update, attempts to access resource URLs directly from the Tekton Hub web console resulted in an Nginx `404` error. With this update, the Tekton Hub web console image is fixed to allow accessing resource URLs directly from the Tekton Hub web console.

- Before this update, for each namespace the resource pruner job created a separate container to prune resources. With this update, the resource pruner job runs commands for all namespaces as a loop in one container.
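To see why fewer containers in a pod leave more room for each termination message, consider the following sketch. It assumes that the 12 KB total mentioned above is 12 × 1024 bytes, that it is divided equally among the containers in a pod, and that each container is additionally capped at 4096 bytes. The equal split and the per-container cap reflect general Kubernetes behavior as understood here and are not stated in these release notes; `termination_budget` is a hypothetical helper.

```python
TOTAL_LIMIT_BYTES = 12 * 1024    # total across all containers in a pod (from the text above)
PER_CONTAINER_CAP_BYTES = 4096   # assumed per-container ceiling, not stated in the release notes


def termination_budget(container_count: int) -> int:
    """Approximate bytes available to each container's termination message."""
    if container_count < 1:
        raise ValueError("a pod has at least one container")
    return min(PER_CONTAINER_CAP_BYTES, TOTAL_LIMIT_BYTES // container_count)


if __name__ == "__main__":
    # Merging two init containers into one turns, for example, 6 containers into 5.
    for count in (6, 5):
        print(count, "containers ->", termination_budget(count), "bytes each")
```

Under these assumptions, removing one container from a six-container pod raises each remaining container's share, which matches the note that the fix reduces, but does not remove, the size limitation.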

4.1.3.6. Release notes for Red Hat OpenShift Pipelines General Availability 1.7.2

With this update, Red Hat OpenShift Pipelines General Availability (GA) 1.7.2 is available on OpenShift Container Platform 4.9, 4.10, and the upcoming version.

4.1.3.6.1. Known issues

- The `chains-config` config map for Tekton Chains in the `openshift-pipelines` namespace is automatically reset to default after upgrading the Red Hat OpenShift Pipelines Operator. Currently, there is no workaround for this issue.

4.1.3.6.2. Fixed issues

- Before this update, tasks on Pipelines 1.7.1 failed on using `init` as the first argument, followed by two or more arguments. With this update, the flags are parsed correctly and the task runs are successful.

- Before this update, installation of the Red Hat OpenShift Pipelines Operator on OpenShift Container Platform 4.9 and 4.10 failed due to invalid role binding, with the following error message:

      error updating rolebinding openshift-operators-prometheus-k8s-read-binding: RoleBinding.rbac.authorization.k8s.io "openshift-operators-prometheus-k8s-read-binding" is invalid: roleRef: Invalid value: rbac.RoleRef{APIGroup:"rbac.authorization.k8s.io", Kind:"Role", Name:"openshift-operator-read"}: cannot change roleRef

  With this update, the Red Hat OpenShift Pipelines Operator installs with distinct role binding namespaces to avoid conflict with installation of other Operators.

- Before this update, upgrading the Operator triggered a reset of the `signing-secrets` secret key for Tekton Chains to its default value. With this update, the custom secret key persists after you upgrade the Operator.

  Note: Upgrading to Red Hat OpenShift Pipelines 1.7.2 resets the key. However, when you upgrade to future releases, the key is expected to persist.

- Before this update, all S2I build tasks failed with an error similar to the following message:

      Error: error writing "0 0 4294967295\n" to /proc/22/uid_map: write /proc/22/uid_map: operation not permitted
      time="2022-03-04T09:47:57Z" level=error msg="error writing \"0 0 4294967295\\n\" to /proc/22/uid_map: write /proc/22/uid_map: operation not permitted"
      time="2022-03-04T09:47:57Z" level=error msg="(unable to determine exit status)"

  With this update, the `pipelines-scc` security context constraint (SCC) is compatible with the `SETFCAP` capability necessary for `Buildah` and `S2I` cluster tasks. As a result, the `Buildah` and `S2I` build tasks can run successfully.

  To successfully run the `Buildah` cluster task and `S2I` build tasks for applications written in various languages and frameworks, add the following snippet for appropriate `steps` objects such as `build` and `push`:

      securityContext:
        capabilities:
          add: ["SETFCAP"]

4.1.3.7. Release notes for Red Hat OpenShift Pipelines General Availability 1.7.3

With this update, Red Hat OpenShift Pipelines General Availability (GA) 1.7.3 is available on OpenShift Container Platform 4.9, 4.10, and 4.11.

4.1.3.7.1. Fixed issues

- Before this update, the Operator failed when creating RBAC resources if any namespace was in a `Terminating` state. With this update, the Operator ignores namespaces in a `Terminating` state and creates the RBAC resources.

- Previously, upgrading the Red Hat OpenShift Pipelines Operator caused the `pipeline` service account to be recreated, which meant that the secrets linked to the service account were lost. This update fixes the issue. During upgrades, the Operator no longer recreates the `pipeline` service account. As a result, secrets attached to the `pipeline` service account persist after upgrades, and the resources (tasks and pipelines) continue to work correctly.

4.1.4. Release notes for Red Hat OpenShift Pipelines General Availability 1.6

With this update, Red Hat OpenShift Pipelines General Availability (GA) 1.6 is available on OpenShift Container Platform 4.9.

4.1.4.1. New features

In addition to the fixes and stability improvements, the following sections highlight what is new in Red Hat OpenShift Pipelines 1.6.

- With this update, you can configure a pipeline or task `start` command to return a YAML or JSON-formatted string by using the `--output <string>` option, where `<string>` is `yaml` or `json`. Otherwise, without the `--output` option, the `start` command returns a human-friendly message that is hard for other programs to parse. Returning a YAML or JSON-formatted string is useful for continuous integration (CI) environments. For example, after a resource is created, you can use `yq` or `jq` to parse the YAML or JSON-formatted message about the resource and wait until that resource is terminated without using the `showlog` option.

- With this update, you can authenticate to a registry using the `auth.json` authentication file of Podman. For example, you can use `tkn bundle push` to push to a remote registry using Podman instead of Docker CLI.

- With this update, if you use the `tkn [taskrun | pipelinerun] delete --all` command, you can preserve runs that are younger than a specified number of minutes by using the new `--keep-since <minutes>` option. For example, to keep runs that are less than five minutes old, you enter `tkn [taskrun | pipelinerun] delete --all --keep-since 5`.

- With this update, when you delete task runs or pipeline runs, you can use the `--parent-resource` and `--keep-since` options together. For example, the `tkn pipelinerun delete --pipeline pipelinename --keep-since 5` command preserves pipeline runs whose parent resource is named `pipelinename` and whose age is five minutes or less. The `tkn tr delete -t <taskname> --keep-since 5` and `tkn tr delete --clustertask <taskname> --keep-since 5` commands work similarly for task runs.

- This update adds support for the triggers resources to work with `v1beta1` resources.

- This update adds an `ignore-running` option to the `tkn pipelinerun delete` and `tkn taskrun delete` commands.

- This update adds a `create` subcommand to the `tkn task` and `tkn clustertask` commands.

- With this update, when you use the `tkn pipelinerun delete --all` command, you can use the new `--label <string>` option to filter the pipeline runs by label. Optionally, you can use the `--label` option with `=` and `==` as equality operators, or `!=` as an inequality operator. For example, the `tkn pipelinerun delete --all --label asdf` and `tkn pipelinerun delete --all --label==asdf` commands both delete all the pipeline runs that have the `asdf` label.

- With this update, you can fetch the version of installed Tekton components from the config map or, if the config map is not present, from the deployment controller.

- With this update, triggers support the `feature-flags` and `config-defaults` config map to configure feature flags and to set default values respectively.

- This update adds a new metric, `eventlistener_event_count`, that you can use to count events received by the `EventListener` resource.

- This update adds `v1beta1` Go API types. With this update, triggers now support the `v1beta1` API version.

  With the current release, the `v1alpha1` features are now deprecated and will be removed in a future release. Begin using the `v1beta1` features instead.

- In the current release, auto-pruning of resources is enabled by default. In addition, you can configure auto-pruning of task run and pipeline run for each namespace separately, by using the following new annotations:

  - `operator.tekton.dev/prune.schedule`: If the value of this annotation is different from the value specified at the `TektonConfig` custom resource definition, a new cron job in that namespace is created.

  - `operator.tekton.dev/prune.skip`: When set to `true`, the namespace for which it is configured will not be pruned.

  - `operator.tekton.dev/prune.resources`: This annotation accepts a comma-separated list of resources. To prune a single resource such as a pipeline run, set this annotation to `"pipelinerun"`. To prune multiple resources, such as task run and pipeline run, set this annotation to `"taskrun, pipelinerun"`.

  - `operator.tekton.dev/prune.keep`: Use this annotation to retain a resource without pruning.

  - `operator.tekton.dev/prune.keep-since`: Use this annotation to retain resources based on their age. The value for this annotation must be equal to the age of the resource in minutes. For example, to retain resources which were created not more than five days ago, set `keep-since` to `7200`.

    Note: The `keep` and `keep-since` annotations are mutually exclusive. For any resource, you must configure only one of them.

  - `operator.tekton.dev/prune.strategy`: Set the value of this annotation to either `keep` or `keep-since`.

- Administrators can disable the creation of the `pipeline` service account for the entire cluster, and prevent privilege escalation by misusing the associated SCC, which is very similar to `anyuid`.

- You can now configure feature flags and components by using the `TektonConfig` custom resource (CR) and the CRs for individual components, such as `TektonPipeline` and `TektonTriggers`. This level of granularity helps customize and test alpha features such as the Tekton OCI bundle for individual components.

- You can now configure the optional `Timeouts` field for the `PipelineRun` resource. For example, you can configure timeouts separately for a pipeline run, each task run, and the `finally` tasks.

- The pods generated by the `TaskRun` resource now set the `activeDeadlineSeconds` field of the pods. This enables OpenShift to consider them as terminating, and allows you to use a specifically scoped `ResourceQuota` object for the pods.

- You can use config maps to eliminate metrics tags or labels type on a task run, pipeline run, task, and pipeline. In addition, you can configure different types of metrics for measuring duration, such as a histogram, gauge, or last value.

- You can define requests and limits on a pod coherently, as Tekton now fully supports the `LimitRange` object by considering the `Min`, `Max`, `Default`, and `DefaultRequest` fields.

- The following alpha features are introduced:

  - A pipeline run can now stop after running the `finally` tasks, rather than the previous behavior of stopping the execution of all task runs directly. This update adds the following `spec.status` values:

    - `StoppedRunFinally` will stop the currently running tasks after they are completed, and then run the `finally` tasks.

    - `CancelledRunFinally` will immediately cancel the running tasks, and then run the `finally` tasks.

    - `Cancelled` will retain the previous behavior provided by the `PipelineRunCancelled` status.

    Note: The `Cancelled` status replaces the deprecated `PipelineRunCancelled` status, which will be removed in the `v1` version.

  - You can now use the `oc debug` command to put a task run into debug mode, which pauses the execution and allows you to inspect specific steps in a pod.

  - When you set the `onError` field of a step to `continue`, the exit code for the step is recorded and passed on to subsequent steps. However, the task run does not fail and the execution of the rest of the steps in the task continues. To retain the existing behavior, you can set the value of the `onError` field to `stopAndFail`.

  - Tasks can now accept more parameters than are actually used. When the alpha feature flag is enabled, the parameters can implicitly propagate to inlined specs. For example, an inlined task can access parameters of its parent pipeline run, without explicitly defining each parameter for the task.

  - If you enable the flag for the alpha features, the conditions under `When` expressions apply only to the task with which they are directly associated, and not to the dependents of the task. To apply the `When` expressions to the associated task and its dependents, you must associate the expression with each dependent task separately. Note that, going forward, this will be the default behavior of the `When` expressions in any new API versions of Tekton. The existing default behavior will be deprecated in favor of this update.

- The current release enables you to configure node selection by specifying the `nodeSelector` and `tolerations` values in the `TektonConfig` custom resource (CR). The Operator adds these values to all the deployments that it creates.

  - To configure node selection for the Operator’s controller and webhook deployment, you edit the `config.nodeSelector` and `config.tolerations` fields in the specification for the `Subscription` CR, after installing the Operator.

  - To deploy the rest of the control plane pods of OpenShift Pipelines on an infrastructure node, update the `TektonConfig` CR with the `nodeSelector` and `tolerations` fields. The modifications are then applied to all the pods created by Operator.
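The `operator.tekton.dev/prune.keep-since` annotation and the `--keep-since` CLI option described earlier in this section both take an age in minutes. The following sketch shows the arithmetic behind the five-day example (`7200`) and one plausible reading of the retention rule. The helper names are hypothetical, and the exact boundary handling in the pruner is an assumption.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def days_to_keep_since(days: int) -> int:
    """Convert a retention period in days to the minutes value that keep-since expects."""
    return days * 24 * 60


def runs_to_prune(created_at: Dict[str, datetime], keep_since_minutes: int, now: datetime) -> List[str]:
    """Return the names of runs older than the retention window (assumed rule)."""
    cutoff = now - timedelta(minutes=keep_since_minutes)
    return sorted(name for name, created in created_at.items() if created < cutoff)


if __name__ == "__main__":
    now = datetime(2022, 1, 10, tzinfo=timezone.utc)
    runs = {
        "build-old": datetime(2022, 1, 1, tzinfo=timezone.utc),     # nine days old
        "build-recent": datetime(2022, 1, 8, tzinfo=timezone.utc),  # two days old
    }
    print(days_to_keep_since(5))
    print(runs_to_prune(runs, days_to_keep_since(5), now))
```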


4.1.4.2. Deprecated features

- In CLI 0.21.0, support for all `v1alpha1` resources for `clustertask`, `task`, `taskrun`, `pipeline`, and `pipelinerun` commands is deprecated. These resources will be removed in a future release.

- In Tekton Triggers v0.16.0, the redundant `status` label is removed from the metrics for the `EventListener` resource.

  Important: Breaking change: The `status` label has been removed from the `eventlistener_http_duration_seconds_*` metric. Remove queries that are based on the `status` label.

- With the current release, the `v1alpha1` features are now deprecated and will be removed in a future release. With this update, you can begin using the `v1beta1` Go API types instead. Triggers now supports the `v1beta1` API version.

- With the current release, the `EventListener` resource sends a response before the triggers finish processing.

  Important: Breaking change: With this change, the `EventListener` resource stops responding with a `201 Created` status code when it creates resources. Instead, it responds with a `202 Accepted` response code.

- The current release removes the `podTemplate` field from the `EventListener` resource.

  Important: Breaking change: The `podTemplate` field, which was deprecated as part of #1100, has been removed.

- The current release removes the deprecated `replicas` field from the specification for the `EventListener` resource.

  Important: Breaking change: The deprecated `replicas` field has been removed.

- In Red Hat OpenShift Pipelines 1.6, the values of `HOME="/tekton/home"` and `workingDir="/workspace"` are removed from the specification of the `Step` objects.

  Instead, Red Hat OpenShift Pipelines sets `HOME` and `workingDir` to the values defined by the containers running the `Step` objects. You can override these values in the specification of your `Step` objects.

  To use the older behavior, you can change the `disable-working-directory-overwrite` and `disable-home-env-overwrite` fields in the `TektonConfig` CR to `false`:

      apiVersion: operator.tekton.dev/v1alpha1
      kind: TektonConfig
      metadata:
        name: config
      spec:
        pipeline:
          disable-working-directory-overwrite: false
          disable-home-env-overwrite: false
        ...

  Important: The `disable-working-directory-overwrite` and `disable-home-env-overwrite` fields in the `TektonConfig` CR are now deprecated and will be removed in a future release.

4.1.4.3. Known issues

- When you run Maven and Jib-Maven cluster tasks, the default container image is supported only on Intel (x86) architecture. Therefore, tasks will fail on IBM Power Systems (ppc64le), IBM Z, and LinuxONE (s390x) clusters. As a workaround, you can specify a custom image by setting the `MAVEN_IMAGE` parameter value to `maven:3.6.3-adoptopenjdk-11`.

- On IBM Power Systems, IBM Z, and LinuxONE, the `s2i-dotnet` cluster task is unsupported.

- Before you install tasks based on the Tekton Catalog on IBM Power Systems (ppc64le), IBM Z, and LinuxONE (s390x) using `tkn hub`, verify if the task can be executed on these platforms. To check if `ppc64le` and `s390x` are listed in the "Platforms" section of the task information, you can run the following command: `tkn hub info task <name>`

- You cannot use the `nodejs:14-ubi8-minimal` image stream because doing so generates the following errors:

      STEP 7: RUN /usr/libexec/s2i/assemble
      /bin/sh: /usr/libexec/s2i/assemble: No such file or directory
      subprocess exited with status 127
      subprocess exited with status 127
      error building at STEP "RUN /usr/libexec/s2i/assemble": exit status 127
      time="2021-11-04T13:05:26Z" level=error msg="exit status 127"

4.1.4.4. Fixed issues

- The `tkn hub` command is now supported on IBM Power Systems, IBM Z, and LinuxONE.

- Before this update, the terminal was not available after the user ran a `tkn` command, and the pipeline run was done, even if `retries` were specified. Specifying a timeout in the task run or pipeline run had no effect. This update fixes the issue so that the terminal is available after running the command.

- Before this update, running `tkn pipelinerun delete --all` would delete all resources. This update prevents the resources in the running state from getting deleted.

- Before this update, using the `tkn version --component=<component>` command did not return the component version. This update fixes the issue so that this command returns the component version.

- Before this update, when you used the `tkn pr logs` command, it displayed the pipelines output logs in the wrong task order. This update resolves the issue so that logs of completed `PipelineRuns` are listed in the appropriate `TaskRun` execution order.

- Before this update, editing the specification of a running pipeline might prevent the pipeline run from stopping when it was complete. This update fixes the issue by fetching the definition only once and then using the specification stored in the status for verification. This change reduces the probability of a race condition when a `PipelineRun` or a `TaskRun` refers to a `Pipeline` or `Task` that changes while it is running.

- `When` expression values can now have array parameter references, such as: `values: [$(params.arrayParam[*])]`.
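As a rough illustration of the array parameter reference in `when` expression values, the following sketch guards a pipeline task so that it runs only when a string parameter matches one of the entries of an array parameter. The pipeline, parameter, and task names (`guarded-deploy`, `branch`, `allowedBranches`, `deploy-task`) are hypothetical and are not taken from the release notes.

```yaml
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: guarded-deploy              # hypothetical pipeline name
spec:
  params:
    - name: branch                  # hypothetical string parameter
      type: string
    - name: allowedBranches         # hypothetical array parameter
      type: array
  tasks:
    - name: deploy
      when:
        - input: "$(params.branch)"
          operator: in
          # The array parameter is expanded into the list of values.
          values: ["$(params.allowedBranches[*])"]
      taskRef:
        name: deploy-task           # hypothetical task
```

With this shape, the list of accepted values can be supplied at pipeline run time instead of being hard-coded in the `when` expression.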

4.1.4.5. Release notes for Red Hat OpenShift Pipelines General Availability 1.6.1

4.1.4.5.1. Known issues

After upgrading to Red Hat OpenShift Pipelines 1.6.1 from an older version, Pipelines might enter an inconsistent state where you are unable to perform any operations (create/delete/apply) on Tekton resources (tasks and pipelines). For example, while deleting a resource, you might encounter the following error:

Error from server (InternalError): Internal error occurred: failed calling webhook "validation.webhook.pipeline.tekton.dev": Post "https://tekton-pipelines-webhook.openshift-pipelines.svc:443/resource-validation?timeout=10s": service "tekton-pipelines-webhook" not found.

4.1.4.5.2. Fixed issues

- The `SSL_CERT_DIR` environment variable (`/tekton-custom-certs`) set by Red Hat OpenShift Pipelines will not override the following default system directories with certificate files:

  - `/etc/pki/tls/certs`
  - `/etc/ssl/certs`
  - `/system/etc/security/cacerts`

- The Horizontal Pod Autoscaler can manage the replica count of deployments controlled by the Red Hat OpenShift Pipelines Operator. From this release onward, if the count is changed by an end user or an on-cluster agent, the Red Hat OpenShift Pipelines Operator will not reset the replica count of deployments managed by it. However, the replicas will be reset when you upgrade the Red Hat OpenShift Pipelines Operator.

- The pod serving the `tkn` CLI will now be scheduled on nodes, based on the node selector and toleration limits specified in the `TektonConfig` custom resource.

4.1.4.6. Release notes for Red Hat OpenShift Pipelines General Availability 1.6.2

4.1.4.6.1. Known issues

- When you create a new project, the creation of the `pipeline` service account is delayed, and removal of existing cluster tasks and pipeline templates takes more than 10 minutes.

4.1.4.6.2. Fixed issues

- Before this update, multiple instances of Tekton installer sets were created for a pipeline after upgrading to Red Hat OpenShift Pipelines 1.6.1 from an older version. With this update, the Operator ensures that only one instance of each type of `TektonInstallerSet` exists after an upgrade.

- Before this update, all the reconcilers in the Operator used the component version to decide resource recreation during an upgrade to Red Hat OpenShift Pipelines 1.6.1 from an older version. As a result, resources whose component versions did not change in the upgrade were not recreated. With this update, the Operator uses the Operator version instead of the component version to decide resource recreation during an upgrade.

- Before this update, the pipelines webhook service was missing in the cluster after an upgrade. This was due to an upgrade deadlock on the config maps. With this update, a mechanism is added to disable webhook validation if the config maps are absent in the cluster. As a result, the pipelines webhook service persists in the cluster after an upgrade.

- Before this update, cron jobs for auto-pruning got recreated after any configuration change to the namespace. With this update, cron jobs for auto-pruning get recreated only if there is a relevant annotation change in the namespace.

- The upstream version of Tekton Pipelines is revised to `v0.28.3`, which has the following fixes:

  - Fix `PipelineRun` or `TaskRun` objects to allow label or annotation propagation.
  - For implicit params:
    - Do not apply the `PipelineSpec` parameters to the `TaskRefs` object.
    - Disable implicit param behavior for the `Pipeline` objects.


4.1.4.7. Release notes for Red Hat OpenShift Pipelines General Availability 1.6.3

4.1.4.7.1. Fixed issues

Before this update, the Red Hat OpenShift Pipelines Operator installed pod security policies from components such as Pipelines and Triggers. However, the pod security policies shipped as part of the components were deprecated in an earlier release. With this update, the Operator stops installing pod security policies from components. As a result, the following upgrade paths are affected:

- Upgrading from Pipelines 1.6.1 or 1.6.2 to Pipelines 1.6.3 deletes the pod security policies, including those from the Pipelines and Triggers components.

- Upgrading from Pipelines 1.5.x to 1.6.3 retains the pod security policies installed from components. As a cluster administrator, you can delete them manually.

Note: When you upgrade to future releases, the Red Hat OpenShift Pipelines Operator will automatically delete all obsolete pod security policies.

- Before this update, only cluster administrators could access pipeline metrics in the OpenShift Container Platform console. With this update, users with other cluster roles also can access the pipeline metrics.

- Before this update, role-based access control (RBAC) issues with the Pipelines Operator caused problems upgrading or installing components. This update improves the reliability and consistency of installing various Red Hat OpenShift Pipelines components.

- Before this update, setting the `clusterTasks` and `pipelineTemplates` fields to `false` in the `TektonConfig` CR slowed the removal of cluster tasks and pipeline templates. This update improves the speed of lifecycle management of Tekton resources such as cluster tasks and pipeline templates.

4.1.4.8. Release notes for Red Hat OpenShift Pipelines General Availability 1.6.4

4.1.4.8.1. Known issues

After upgrading from Red Hat OpenShift Pipelines 1.5.2 to 1.6.4, accessing the event listener routes returns a `503` error.

Workaround: Modify the target port in the YAML file for the event listener’s route.

- Extract the route name for the relevant namespace.

      $ oc get route -n <namespace>

- Edit the route to modify the value of the `targetPort` field.

      $ oc edit route -n <namespace> <el-route_name>

  Example: Existing event listener route

      ...
      spec:
        host: el-event-listener-q8c3w5-test-upgrade1.apps.ve49aws.aws.ospqa.com
        port:
          targetPort: 8000
        to:
          kind: Service
          name: el-event-listener-q8c3w5
          weight: 100
        wildcardPolicy: None
      ...

  Example: Modified event listener route

      ...
      spec:
        host: el-event-listener-q8c3w5-test-upgrade1.apps.ve49aws.aws.ospqa.com
        port:
          targetPort: http-listener
        to:
          kind: Service
          name: el-event-listener-q8c3w5
          weight: 100
        wildcardPolicy: None
      ...

4.1.4.8.2. Fixed issues

- Before this update, the Operator failed when creating RBAC resources if any namespace was in a `Terminating` state. With this update, the Operator ignores namespaces in a `Terminating` state and creates the RBAC resources.

- Before this update, the task runs failed or restarted due to the absence of an annotation specifying the release version of the associated Tekton controller. With this update, the inclusion of the appropriate annotations is automated, and the tasks run without failure or restarts.

4.1.5. Release notes for Red Hat OpenShift Pipelines General Availability 1.5

Red Hat OpenShift Pipelines General Availability (GA) 1.5 is now available on OpenShift Container Platform 4.8.

4.1.5.1. Compatibility and support matrix

Some features in this release are currently in Technology Preview. These experimental features are not intended for production use.

In the table, features are marked with the following statuses:

| TP | Technology Preview |

| GA | General Availability |

Note the following scope of support on the Red Hat Customer Portal for these features:

| Feature | Version | Support Status |

|---|---|---|

| Pipelines | 0.24 | GA |

| CLI | 0.19 | GA |

| Catalog | 0.24 | GA |

| Triggers | 0.14 | TP |

| Pipeline resources | - | TP |

For questions and feedback, you can send an email to the product team at pipelines-interest@redhat.com.

4.1.5.2. New features

In addition to the fixes and stability improvements, the following sections highlight what is new in Red Hat OpenShift Pipelines 1.5.

- Pipeline runs and task runs will be automatically pruned by a cron job in the target namespace. The cron job uses the `IMAGE_JOB_PRUNER_TKN` environment variable to get the value of `tkn image`. With this enhancement, the following fields are introduced to the `TektonConfig` custom resource:

      ...
      pruner:
        resources:
          - pipelinerun
          - taskrun
        schedule: "*/5 * * * *" # cron schedule
        keep: 2 # delete all keeping n
      ...

- In OpenShift Container Platform, you can customize the installation of the Tekton Add-ons component by modifying the values of the new parameters `clusterTasks` and `pipelineTemplates` in the `TektonConfig` custom resource:

      apiVersion: operator.tekton.dev/v1alpha1
      kind: TektonConfig
      metadata:
        name: config
      spec:
        profile: all
        targetNamespace: openshift-pipelines
        addon:
          params:
          - name: clusterTasks
            value: "true"
          - name: pipelineTemplates
            value: "true"
      ...

  The customization is allowed if you create the add-on using `TektonConfig`, or directly by using Tekton Add-ons. However, if the parameters are not passed, the controller adds parameters with default values.

  Note:

  - If the add-on is created using the `TektonConfig` custom resource, and you change the parameter values later in the `Addon` custom resource, then the values in the `TektonConfig` custom resource overwrite the changes.
  - You can set the value of the `pipelineTemplates` parameter to `true` only when the value of the `clusterTasks` parameter is `true`.

- The `enableMetrics` parameter is added to the `TektonConfig` custom resource. You can use it to disable the service monitor, which is part of Tekton Pipelines for OpenShift Container Platform.

      apiVersion: operator.tekton.dev/v1alpha1
      kind: TektonConfig
      metadata:
        name: config
      spec:
        profile: all
        targetNamespace: openshift-pipelines
        pipeline:
          params:
          - name: enableMetrics
            value: "true"
      ...

- EventListener OpenCensus metrics, which capture metrics at the process level, are added.

- Triggers now has label selectors; you can configure triggers for an event listener using labels.

- The `ClusterInterceptor` custom resource definition for registering interceptors is added, which allows you to register new `Interceptor` types that you can plug in. In addition, the following relevant changes are made:

  - In the trigger specifications, you can configure interceptors using a new API that includes a `ref` field to refer to a cluster interceptor. In addition, you can use the `params` field to add parameters that pass on to the interceptors for processing.
  - The bundled interceptors CEL, GitHub, GitLab, and BitBucket, have been migrated. They are implemented using the new `ClusterInterceptor` custom resource definition.
  - Core interceptors are migrated to the new format, and any new triggers created using the old syntax automatically switch to the new `ref` or `params` based syntax.

- To disable prefixing the name of the task or step while displaying logs, use the `--prefix` option for `log` commands.

- To display the version of a specific component, use the new `--component` flag in the `tkn version` command.

- The `tkn hub check-upgrade` command is added, and other commands are revised to be based on the pipeline version. In addition, catalog names are displayed in the `search` command output.

- Support for optional workspaces is added to the `start` command.

- If the plugins are not present in the `plugins` directory, they are searched in the current path.

- The `tkn [task | clustertask | pipeline] start` command starts interactively and asks for the `params` value, even when the default parameters are specified. To stop the interactive prompts, pass the `--use-param-defaults` flag at the time of invoking the command. For example:

      $ tkn pipeline start build-and-deploy \
        -w name=shared-workspace,volumeClaimTemplateFile=https://raw.githubusercontent.com/openshift/pipelines-tutorial/pipelines-1.7/01_pipeline/03_persistent_volume_claim.yaml \
        -p deployment-name=pipelines-vote-api \
        -p git-url=https://github.com/openshift/pipelines-vote-api.git \
        -p IMAGE=image-registry.openshift-image-registry.svc:5000/pipelines-tutorial/pipelines-vote-api \
        --use-param-defaults

- The `version` field is added in the `tkn task describe` command.

- The option to automatically select resources such as `TriggerTemplate`, or `TriggerBinding`, or `ClusterTriggerBinding`, or `Eventlistener`, is added in the `describe` command, if only one is present.

- In the `tkn pr describe` command, a section for skipped tasks is added.

- Support for the `tkn clustertask logs` command is added.

- The YAML merge and variable from `config.yaml` are removed. In addition, the `release.yaml` file can now be more easily consumed by tools such as `kustomize` and `ytt`.

- The support for resource names to contain the dot character (".") is added.

- The `hostAliases` array in the `PodTemplate` specification is added to the pod-level override of hostname resolution. It is achieved by modifying the `/etc/hosts` file.

- A variable `$(tasks.status)` is introduced to access the aggregate execution status of tasks.

- An entry-point binary build for Windows is added.
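The `$(tasks.status)` variable listed above could be consumed as in the following sketch, which passes the aggregate status to a `finally` task and prints it. Upstream Tekton documents this variable for use in `finally` tasks. The pipeline name, the `build-task` reference, and the container image are assumptions made for illustration only.

```yaml
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: build-with-status-report    # hypothetical pipeline name
spec:
  tasks:
    - name: build
      taskRef:
        name: build-task            # hypothetical task
  finally:
    - name: report-status
      params:
        # Aggregate execution status of the tasks in the pipeline.
        - name: aggregateTasksStatus
          value: "$(tasks.status)"
      taskSpec:
        params:
          - name: aggregateTasksStatus
        steps:
          - name: print-status
            image: registry.access.redhat.com/ubi8/ubi-minimal   # assumed image
            script: |
              echo "Aggregate status: $(params.aggregateTasksStatus)"
```

A `finally` task of this kind is a convenient place to send a notification or trigger cleanup based on whether the preceding tasks succeeded.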

4.1.5.3. Deprecated features

- In the `when` expressions, support for fields written in PascalCase is removed. The `when` expressions only support fields written in lowercase.

  Note: If you had applied a pipeline with `when` expressions in Tekton Pipelines `v0.16` (Operator `v1.2.x`), you have to reapply it.

- When you upgrade the Red Hat OpenShift Pipelines Operator to `v1.5`, the `openshift-client` and the `openshift-client-v-1-5-0` cluster tasks have the `SCRIPT` parameter. However, the `ARGS` parameter and the `git` resource are removed from the specification of the `openshift-client` cluster task. This is a breaking change, and only those cluster tasks that do not have a specific version in the `name` field of the `ClusterTask` resource upgrade seamlessly.

  To prevent the pipeline runs from breaking, use the `SCRIPT` parameter after the upgrade because it moves the values previously specified in the `ARGS` parameter into the `SCRIPT` parameter of the cluster task. For example:

      ...
      - name: deploy
        params:
        - name: SCRIPT
          value: oc rollout status <deployment-name>
        runAfter:
          - build
        taskRef:
          kind: ClusterTask
          name: openshift-client
      ...

- When you upgrade from Red Hat OpenShift Pipelines Operator `v1.4` to `v1.5`, the profile names in which the `TektonConfig` custom resource is installed now change.

  Table 4.3. Profiles for TektonConfig custom resource

  | Profiles in Pipelines 1.5 | Corresponding profile in Pipelines 1.4 | Installed Tekton components |
  |---|---|---|
  | All (default profile) | All (default profile) | Pipelines, Triggers, Add-ons |
  | Basic | Default | Pipelines, Triggers |
  | Lite | Basic | Pipelines |

  Note: If you used `profile: all` in the `config` instance of the `TektonConfig` custom resource, no change is necessary in the resource specification.

  However, if the installed Operator is either in the Default or the Basic profile before the upgrade, you must edit the `config` instance of the `TektonConfig` custom resource after the upgrade. For example, if the configuration was `profile: basic` before the upgrade, ensure that it is `profile: lite` after upgrading to Pipelines 1.5.

- The `disable-home-env-overwrite` and `disable-working-dir-overwrite` fields are now deprecated and will be removed in a future release. For this release, the default value of these flags is set to `true` for backward compatibility.

  Note: In the next release (Red Hat OpenShift Pipelines 1.6), the `HOME` environment variable will not be automatically set to `/tekton/home`, and the default working directory will not be set to `/workspace` for task runs. These defaults collide with any value set by the image Dockerfile of the step.

- The `ServiceType` and `podTemplate` fields are removed from the `EventListener` spec.

- The controller service account no longer requests cluster-wide permission to list and watch namespaces.

- The status of the `EventListener` resource has a new condition called `Ready`.

  Note: In the future, the other status conditions for the `EventListener` resource will be deprecated in favor of the `Ready` status condition.

- The `eventListener` and `namespace` fields in the `EventListener` response are deprecated. Use the `eventListenerUID` field instead.

- The `replicas` field is deprecated from the `EventListener` spec. Instead, the `spec.replicas` field is moved to `spec.resources.kubernetesResource.replicas` in the `KubernetesResource` spec.

  Note: The `replicas` field will be removed in a future release.

- The old method of configuring the core interceptors is deprecated. However, it continues to work until it is removed in a future release. Instead, interceptors in a `Trigger` resource are now configured using a new `ref` and `params` based syntax. The resulting default webhook automatically switches the usages of the old syntax to the new syntax for new triggers.

- Use `rbac.authorization.k8s.io/v1` instead of the deprecated `rbac.authorization.k8s.io/v1beta1` for the `ClusterRoleBinding` resource.

- In cluster roles, the cluster-wide write access to resources such as `serviceaccounts`, `secrets`, `configmaps`, and `limitranges` is removed. In addition, cluster-wide access to resources such as `deployments`, `statefulsets`, and `deployment/finalizers` is removed.

- The `image` custom resource definition in the `caching.internal.knative.dev` group is not used by Tekton anymore, and is excluded in this release.
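To make two of the changes in this list more concrete, the following sketch shows a `Trigger` that configures the CEL interceptor with the `ref` and `params` based syntax, and an `EventListener` that sets the replica count under `spec.resources.kubernetesResource.replicas`. All resource names (`push-trigger`, `push-binding`, `push-template`, `example-listener`) and the CEL filter expression are hypothetical; treat this as an illustration under those assumptions, not as a migration recipe.

```yaml
apiVersion: triggers.tekton.dev/v1alpha1
kind: Trigger
metadata:
  name: push-trigger                # hypothetical name
spec:
  interceptors:
    # New syntax: refer to a cluster interceptor and pass parameters to it.
    - ref:
        name: cel
      params:
        - name: filter
          value: "header.match('X-GitHub-Event', 'push')"   # example filter
  bindings:
    - ref: push-binding             # hypothetical TriggerBinding
  template:
    ref: push-template              # hypothetical TriggerTemplate
---
apiVersion: triggers.tekton.dev/v1alpha1
kind: EventListener
metadata:
  name: example-listener            # hypothetical name
spec:
  serviceAccountName: pipeline
  resources:
    kubernetesResource:
      replicas: 2                   # replaces the deprecated spec.replicas field
  triggers:
    - triggerRef: push-trigger
```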

4.1.5.4. Known issues

The `git-cli` cluster task is built off the alpine/git base image, which expects `/root` as the user’s home directory. However, this is not explicitly set in the `git-cli` cluster task.

In Tekton, the default home directory is overwritten with `/tekton/home` for every step of a task, unless otherwise specified. This overwriting of the `$HOME` environment variable of the base image causes the `git-cli` cluster task to fail.

This issue is expected to be fixed in the upcoming releases. For Red Hat OpenShift Pipelines 1.5 and earlier versions, you can use any one of the following workarounds to avoid the failure of the `git-cli` cluster task:

Set the `$HOME` environment variable in the steps, so that it is not overwritten.

- [OPTIONAL] If you installed Red Hat OpenShift Pipelines using the Operator, then clone the `git-cli` cluster task into a separate task. This approach ensures that the Operator does not overwrite the changes made to the cluster task.

- Execute the `oc edit clustertasks git-cli` command.

- Add the expected `HOME` environment variable to the YAML of the step:

      ...
      steps:
        - name: git
          env:
          - name: HOME
            value: /root
          image: $(params.BASE_IMAGE)
          workingDir: $(workspaces.source.path)
      ...

  Warning: For Red Hat OpenShift Pipelines installed by the Operator, if you do not clone the `git-cli` cluster task into a separate task before changing the `HOME` environment variable, then the changes are overwritten during Operator reconciliation.


Disable overwriting the `HOME` environment variable in the `feature-flags` config map.

- Execute the `oc edit -n openshift-pipelines configmap feature-flags` command.

- Set the value of the `disable-home-env-overwrite` flag to `true`.

  Warning:

  - If you installed Red Hat OpenShift Pipelines using the Operator, then the changes are overwritten during Operator reconciliation.
  - Modifying the default value of the `disable-home-env-overwrite` flag can break other tasks and cluster tasks, as it changes the default behavior for all tasks.


Use a different service account for the `git-cli` cluster task, as the overwriting of the `HOME` environment variable happens when the default service account for pipelines is used.

- Create a new service account.

- Link your Git secret to the service account you just created.

- Use the service account while executing a task or a pipeline.

- On IBM Power Systems, IBM Z, and LinuxONE, the `s2i-dotnet` cluster task and the `tkn hub` command are unsupported.

- When you run Maven and Jib-Maven cluster tasks, the default container image is supported only on Intel (x86) architecture. Therefore, tasks will fail on IBM Power Systems (ppc64le), IBM Z, and LinuxONE (s390x) clusters. As a workaround, you can specify a custom image by setting the `MAVEN_IMAGE` parameter value to `maven:3.6.3-adoptopenjdk-11`.

4.1.5.5. Fixed issues

- The `when` expressions in `dag` tasks are not allowed to specify the context variable accessing the execution status (`$(tasks.<pipelineTask>.status)`) of any other task.

- Use Owner UIDs instead of Owner names, as it helps avoid race conditions created by deleting a `volumeClaimTemplate` PVC, in situations where a `PipelineRun` resource is quickly deleted and then recreated.

- A new Dockerfile is added for `pullrequest-init` for `build-base` image triggered by non-root users.

- When a pipeline or task is executed with the `-f` option and the `param` in its definition does not have a `type` defined, a validation error is generated instead of the pipeline or task run failing silently.

- For the `tkn [task | pipeline | clustertask] start` commands, the description of the `--workspace` flag is now consistent.

- While parsing the parameters, if an empty array is encountered, the corresponding interactive help is now displayed as an empty string.

4.1.6. Release notes for Red Hat OpenShift Pipelines General Availability 1.4

Red Hat OpenShift Pipelines General Availability (GA) 1.4 is now available on OpenShift Container Platform 4.7.

In addition to the stable and preview Operator channels, the Red Hat OpenShift Pipelines Operator 1.4.0 comes with the ocp-4.6, ocp-4.5, and ocp-4.4 deprecated channels. These deprecated channels and support for them will be removed in the following release of Red Hat OpenShift Pipelines.

4.1.6.1. Compatibility and support matrix

Some features in this release are currently in Technology Preview. These experimental features are not intended for production use.

In the table, features are marked with the following statuses:

| TP | Technology Preview |

| GA | General Availability |

Note the following scope of support on the Red Hat Customer Portal for these features:

| Feature | Version | Support Status |

|---|---|---|

| Pipelines | 0.22 | GA |

| CLI | 0.17 | GA |

| Catalog | 0.22 | GA |

| Triggers | 0.12 | TP |

| Pipeline resources | - | TP |

For questions and feedback, you can send an email to the product team at pipelines-interest@redhat.com.

4.1.6.2. New features

In addition to the fixes and stability improvements, the following sections highlight what is new in Red Hat OpenShift Pipelines 1.4.

The custom tasks have the following enhancements:

- Pipeline results can now refer to results produced by custom tasks.

- Custom tasks can now use workspaces, service accounts, and pod templates to build more complex custom tasks.

The `finally` task has the following enhancements:

- The `when` expressions are supported in `finally` tasks, which provides efficient guarded execution and improved reusability of tasks.

- A `finally` task can be configured to consume the results of any task within the same pipeline.

Note: Support for `when` expressions and `finally` tasks is unavailable in the OpenShift Container Platform 4.7 web console.


- Support for multiple secrets of the type `dockercfg` or `dockerconfigjson` is added for authentication at runtime.

- Functionality to support sparse-checkout with the `git-clone` task is added. This enables you to clone only a subset of the repository as your local copy, and helps you to restrict the size of the cloned repositories.

- You can create pipeline runs in a pending state without actually starting them. In clusters that are under heavy load, this allows operators to have control over the start time of the pipeline runs.

- Ensure that you set the `SYSTEM_NAMESPACE` environment variable manually for the controller; this was previously set by default.

- A non-root user is now added to the build-base image of pipelines so that `git-init` can clone repositories as a non-root user.

- Support to validate dependencies between resolved resources before a pipeline run starts is added. For the pipeline to start running, all result variables in the pipeline must be valid, and optional workspaces from a pipeline can only be passed to tasks that expect them.

- The controller and webhook run as a non-root group, and their superfluous capabilities have been removed to make them more secure.

- You can use the `tkn pr logs` command to see the log streams for retried task runs.

- You can use the `--clustertask` option in the `tkn tr delete` command to delete all the task runs associated with a particular cluster task.

- Support for using Knative service with the `EventListener` resource is added by introducing a new `customResource` field.

- An error message is displayed when an event payload does not use the JSON format.

- The source control interceptors such as GitLab, BitBucket, and GitHub, now use the new `InterceptorRequest` or `InterceptorResponse` type interface.

- A new CEL function `marshalJSON` is implemented so that you can encode a JSON object or an array to a string.

- An HTTP handler for serving the CEL and the source control core interceptors is added. It packages four core interceptors into a single HTTP server that is deployed in the `tekton-pipelines` namespace. The `EventListener` object forwards events over the HTTP server to the interceptor. Each interceptor is available at a different path. For example, the CEL interceptor is available on the `/cel` path.

- The `pipelines-scc` Security Context Constraint (SCC) is used with the default `pipeline` service account for pipelines. This new SCC is similar to `anyuid`, but with a minor difference as defined in the YAML for SCC of OpenShift Container Platform 4.7:

      fsGroup:
        type: MustRunAs
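As an illustration of the pending state mentioned in this list, the following sketch creates a pipeline run that is not started until its `spec.status` field is cleared. The `PipelineRunPending` value is the one used by upstream Tekton Pipelines; the run and pipeline names are hypothetical.

```yaml
apiVersion: tekton.dev/v1beta1
kind: PipelineRun
metadata:
  name: build-and-deploy-pending    # hypothetical name
spec:
  pipelineRef:
    name: build-and-deploy          # hypothetical pipeline
  # The run is created but not started while this value is set.
  status: "PipelineRunPending"
```

Removing the `status` field from the specification later allows the pipeline run to start.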

4.1.6.3. Deprecated features

- The `build-gcs` sub-type in the pipeline resource storage, and the `gcs-fetcher` image, are not supported.

- In the `taskRun` field of cluster tasks, the label `tekton.dev/task` is removed.

- For webhooks, the value `v1beta1` corresponding to the field `admissionReviewVersions` is removed.

- The `creds-init` helper image for building and deploying is removed.

- In the triggers spec and binding, the deprecated field `template.name` is removed in favor of `template.ref`. You should update all `eventListener` definitions to use the `ref` field.

  Note: Upgrading from Pipelines 1.3.x and earlier versions to Pipelines 1.4.0 breaks event listeners because of the unavailability of the `template.name` field. For such cases, use Pipelines 1.4.1, which restores the `template.name` field.

- For `EventListener` custom resources/objects, the fields `PodTemplate` and `ServiceType` are deprecated in favor of `Resource`.

- The deprecated spec style embedded bindings is removed.

- The `spec` field is removed from the `triggerSpecBinding`.

- The event ID representation is changed from a five-character random string to a UUID.

4.1.6.4. Known issues

- In the Developer perspective, the pipeline metrics and triggers features are available only on OpenShift Container Platform 4.7.6 or later versions.

- On IBM Power Systems, IBM Z, and LinuxONE, the `tkn hub` command is not supported.

- When you run Maven and Jib Maven cluster tasks on IBM Power Systems (ppc64le), IBM Z, and LinuxONE (s390x) clusters, set the `MAVEN_IMAGE` parameter value to `maven:3.6.3-adoptopenjdk-11`.

- Triggers throw an error resulting from bad handling of the JSON format, if you have the following configuration in the trigger binding:

      params:
        - name: github_json
          value: $(body)

  To resolve the issue:

  - If you are using triggers v0.11.0 and above, use the `marshalJSON` CEL function, which takes a JSON object or array and returns the JSON encoding of that object or array as a string.
  - If you are using an older triggers version, add the following annotation in the trigger template:

        annotations:
          triggers.tekton.dev/old-escape-quotes: "true"


- When upgrading from Pipelines 1.3.x to 1.4.x, you must recreate the routes.

4.1.6.5. Fixed issues

- Previously, the `tekton.dev/task` label was removed from the task runs of cluster tasks, and the `tekton.dev/clusterTask` label was introduced. The problems resulting from that change are resolved by fixing the `clustertask describe` and `delete` commands. In addition, the `lastrun` function for tasks is modified, to fix the issue of the `tekton.dev/task` label being applied to the task runs of both tasks and cluster tasks in older versions of pipelines.

- When doing an interactive `tkn pipeline start pipelinename`, a `PipelineResource` is created interactively. The `tkn p start` command prints the resource status if the resource status is not `nil`.

- Previously, the `tekton.dev/task=name` label was removed from the task runs created from cluster tasks. This fix modifies the `tkn clustertask start` command with the `--last` flag to check for the `tekton.dev/task=name` label in the created task runs.

- When a task uses an inline task specification, the corresponding task run now gets embedded in the pipeline when you run the `tkn pipeline describe` command, and the task name is returned as embedded.

- The `tkn version` command is fixed to display the version of the installed Tekton CLI tool, without a configured `kubeConfiguration namespace` or access to a cluster.

- If an argument is unexpected or more than one argument is used, the `tkn completion` command gives an error.

Previously, pipeline runs with the

finallytasks nested in a pipeline specification would lose thosefinallytasks, when converted to thev1alpha1version and restored back to thev1beta1version. This error occurring during conversion is fixed to avoid potential data loss. Pipeline runs with thefinallytasks nested in a pipeline specification is now serialized and stored on the alpha version, only to be deserialized later. -

Previously, there was an error in the pod generation when a service account had the

secretsfield as{}. The task runs failed withCouldntGetTaskbecause the GET request with an empty secret name returned an error, indicating that the resource name may not be empty. This issue is fixed by avoiding an empty secret name in thekubeclientGET request. -

Pipelines with the

v1beta1API versions can now be requested along with thev1alpha1version, without losing thefinallytasks. Applying the returnedv1alpha1version will store the resource asv1beta1, with thefinallysection restored to its original state. -

Previously, an unset

selfLinkfield in the controller caused an error in the Kubernetes v1.20 clusters. As a temporary fix, theCloudEventsource field is set to a value that matches the current source URI, without the value of the auto-populatedselfLinkfield. -

Previously, a secret name with dots such as

gcr.ioled to a task run creation failure. This happened because of the secret name being used internally as part of a volume mount name. The volume mount name conforms to the RFC1123 DNS label and disallows dots as part of the name. This issue is fixed by replacing the dot with a dash that results in a readable name. -

Context variables are now validated in the

finallytasks. -

Previously, when the task run reconciler was passed a task run that did not have a previous status update containing the name of the pod it created, the task run reconciler listed the pods associated with the task run. The task run reconciler used the labels of the task run, which were propagated to the pod, to find the pod. Changing these labels while the task run was running, caused the code to not find the existing pod. As a result, duplicate pods were created. This issue is fixed by changing the task run reconciler to only use the

tekton.dev/taskRunTekton-controlled label when finding the pod. - Previously, when a pipeline accepted an optional workspace and passed it to a pipeline task, the pipeline run reconciler stopped with an error if the workspace was not provided, even if a missing workspace binding is a valid state for an optional workspace. This issue is fixed by ensuring that the pipeline run reconciler does not fail to create a task run, even if an optional workspace is not provided.

- The sorted order of step statuses matches the order of step containers.

- Previously, the task run status was set to `unknown` when a pod encountered the `CreateContainerConfigError` reason, which meant that the task and the pipeline ran until the pod timed out. This issue is fixed by setting the task run status to `false`, so that the task is set as failed when the pod encounters the `CreateContainerConfigError` reason.

- Previously, pipeline results were resolved on the first reconciliation, after a pipeline run was completed. The resolution could fail, resulting in the `Succeeded` condition of the pipeline run being overwritten. As a result, the final status information was lost, potentially confusing any services watching the pipeline run conditions. This issue is fixed by moving the resolution of pipeline results to the end of a reconciliation, when the pipeline run is put into a `Succeeded` or `True` condition.

- Execution status variable is now validated. This avoids validating task results while validating context variables to access execution status.

- Previously, a pipeline result that contained an invalid variable would be added to the pipeline run with the literal expression of the variable intact. Therefore, it was difficult to assess whether the results were populated correctly. This issue is fixed by filtering out the pipeline run results that reference failed task runs. Now, a pipeline result that contains an invalid variable will not be emitted by the pipeline run at all.

- The `tkn eventlistener describe` command is fixed to avoid crashing without a template. It also displays the details about trigger references.

- Upgrades from Pipelines 1.3.x and earlier versions to Pipelines 1.4.0 break event listeners because of the unavailability of `template.name`. In Pipelines 1.4.1, the `template.name` has been restored to avoid breaking event listeners in triggers.

- In Pipelines 1.4.1, the `ConsoleQuickStart` custom resource has been updated to align with OpenShift Container Platform 4.7 capabilities and behavior.
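Several of the fixes above concern pipelines that declare `finally` tasks or pass an optional workspace to a pipeline task. The following sketch shows what such a pipeline might look like. The pipeline, task, and workspace names are hypothetical, and the referenced tasks are assumed to exist in the same namespace.

```yaml
# Minimal sketch, assuming the v1beta1 API and two existing tasks.
# example-build-task and example-cleanup-task are hypothetical names.
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: example-pipeline
spec:
  workspaces:
    - name: cache
      optional: true        # a pipeline run may omit this workspace
  tasks:
    - name: build
      taskRef:
        name: example-build-task
      workspaces:
        - name: cache       # workspace name declared by the task
          workspace: cache  # pipeline workspace passed to the task
  finally:
    - name: cleanup         # runs after all tasks, whether they pass or fail
      taskRef:
        name: example-cleanup-task
```

With the fixes described above, a pipeline run that does not bind `cache` should still create the `build` task run, and the `finally` section should survive a round trip through the `v1alpha1` version.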

4.1.7. Release notes for Red Hat OpenShift Pipelines Technology Preview 1.3

4.1.7.1. New features

Red Hat OpenShift Pipelines Technology Preview (TP) 1.3 is now available on OpenShift Container Platform 4.7. Red Hat OpenShift Pipelines TP 1.3 is updated to support:

- Tekton Pipelines 0.19.0

- Tekton `tkn` CLI 0.15.0

- Tekton Triggers 0.10.2

- Cluster tasks based on Tekton Catalog 0.19.0

- IBM Power Systems on OpenShift Container Platform 4.7

- IBM Z and LinuxONE on OpenShift Container Platform 4.7

In addition to the fixes and stability improvements, the following sections highlight what is new in Red Hat OpenShift Pipelines 1.3.

4.1.7.1.1. Pipelines

- Tasks that build images, such as S2I and Buildah tasks, now emit a URL of the image built that includes the image SHA.

- Conditions in pipeline tasks that reference custom tasks are disallowed because the `Condition` custom resource definition (CRD) has been deprecated.

- Variable expansion is now added in the `Task` CRD for the following fields: `spec.steps[].imagePullPolicy` and `spec.sidecars[].imagePullPolicy`.

- You can disable the built-in credential mechanism in Tekton by setting the `disable-creds-init` feature flag to `true`.

- Resolved when expressions are now listed in the `Skipped Tasks` and the `Task Runs` sections in the `Status` field of the `PipelineRun` configuration.

- The `git init` command can now clone recursive submodules.

- A `Task` CR author can now specify a timeout for a step in the `Task` spec.

- You can now base the entry point image on the `distroless/static:nonroot` image and give it a mode to copy itself to the destination, without relying on the `cp` command being present in the base image.

- You can now use the configuration flag `require-git-ssh-secret-known-hosts` to disallow omitting known hosts in the Git SSH secret. When the flag value is set to `true`, you must include the `known_hosts` field in the Git SSH secret. The default value for the flag is `false`.

- The concept of optional workspaces is now introduced. A task or pipeline might declare a workspace optional and conditionally change its behavior based on the presence of that workspace. A task run or pipeline run might also omit that workspace, thereby modifying the task or pipeline behavior. The default task run workspaces are not added in place of an omitted optional workspace.

- Credentials initialization in Tekton now detects an SSH credential that is used with a non-SSH URL, and vice versa in Git pipeline resources, and logs a warning in the step containers.

- The task run controller emits a warning event if the affinity specified by the pod template is overwritten by the affinity assistant.

- The task run reconciler now records metrics for cloud events that are emitted once a task run is completed. This includes retries.
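To make a few of the features above more concrete, the following sketch combines an optional workspace, a step timeout, and variable expansion in `imagePullPolicy` in one task, followed by the two feature flags mentioned in the list. The task name, parameter name, and image are hypothetical. The `feature-flags` config map name and the `tekton-pipelines` namespace follow upstream Tekton defaults and are assumptions here, because an Operator-managed installation might use a different namespace or manage these values for you.

```yaml
# Minimal sketch, assuming the v1beta1 Task API. Names are hypothetical.
apiVersion: tekton.dev/v1beta1
kind: Task
metadata:
  name: example-optional-workspace
spec:
  params:
    - name: pull-policy
      type: string
      default: IfNotPresent
  workspaces:
    - name: cache
      optional: true                          # task runs may omit this workspace
  steps:
    - name: report
      image: registry.access.redhat.com/ubi8/ubi-minimal
      imagePullPolicy: $(params.pull-policy)  # variable expansion in imagePullPolicy
      timeout: 30s                            # per-step timeout
      script: |
        #!/bin/sh
        # Tekton substitutes the workspace variables before the script runs.
        if [ "$(workspaces.cache.bound)" = "true" ]; then
          echo "cache workspace is available at $(workspaces.cache.path)"
        else
          echo "no cache workspace was provided"
        fi
---
# Assumption: upstream default config map name and namespace.
apiVersion: v1
kind: ConfigMap
metadata:
  name: feature-flags
  namespace: tekton-pipelines
data:
  disable-creds-init: "true"                  # turn off built-in credential initialization
  require-git-ssh-secret-known-hosts: "true"  # Git SSH secrets must include known hosts
```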

4.1.7.1.2. Pipelines CLI

- Support for the `--no-headers` flag is now added to the following commands: `tkn condition list`, `tkn triggerbinding list`, `tkn eventlistener list`, `tkn clustertask list`, `tkn clustertriggerbinding list`.

- When used together, the `--last` or `--use` options override the `--prefix-name` and `--timeout` options.

- The `tkn eventlistener logs` command is now added to view the `EventListener` logs.

- The `tekton hub` commands are now integrated into the `tkn` CLI.

- The `--nocolour` option is now changed to `--no-color`.

- The `--all-namespaces` flag is added to the following commands: `tkn triggertemplate list`, `tkn condition list`, `tkn triggerbinding list`, `tkn eventlistener list`.

4.1.7.1.3. Triggers

- You can now specify your resource information in the `EventListener` template.

- It is now mandatory for `EventListener` service accounts to have the `list` and `watch` verbs, in addition to the `get` verb for all the triggers resources. This enables you to use `Listers` to fetch data from `EventListener`, `Trigger`, `TriggerBinding`, `TriggerTemplate`, and `ClusterTriggerBinding` resources. You can use this feature to create a `Sink` object rather than specifying multiple informers, and directly make calls to the API server.

- A new `Interceptor` interface is added to support immutable input event bodies. Interceptors can now add data or fields to a new `extensions` field, and cannot modify the input bodies, which makes them immutable. The CEL interceptor uses this new `Interceptor` interface.

- A `namespaceSelector` field is added to the `EventListener` resource. Use it to specify the namespaces from where the `EventListener` resource can fetch the `Trigger` object for processing events. To use the `namespaceSelector` field, the service account for the `EventListener` resource must have a cluster role.

- The triggers `EventListener` resource now supports end-to-end secure connection to the `eventlistener` pod.

- The escaping parameters behavior in the `TriggerTemplates` resource by replacing `"` with `\"` is now removed.

- A new `resources` field, supporting Kubernetes resources, is introduced as part of the `EventListener` spec.

- A new functionality for the CEL interceptor, with support for upper and lower-casing of ASCII strings, is added.

- You can embed `TriggerBinding` resources by using the `name` and `value` fields in a trigger, or an event listener.

- The `PodSecurityPolicy` configuration is updated to run in restricted environments. It ensures that containers must run as non-root. In addition, the role-based access control for using the pod security policy is moved from cluster-scoped to namespace-scoped. This ensures that the triggers cannot use other pod security policies that are unrelated to a namespace.

- Support for embedded trigger templates is now added. You can either use the `name` field to refer to an embedded template or embed the template inside the `spec` field.
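The service account and `namespaceSelector` requirements above can be sketched as follows. The cluster role grants the `get`, `list`, and `watch` verbs on the triggers resources, and the event listener selects triggers from two namespaces. All names (`example-eventlistener-clusterrole`, `example-listener`, `example-sa`, `team-a`, `team-b`) are hypothetical, and the cluster role still needs to be bound to the service account with a cluster role binding, which is not shown.

```yaml
# Minimal sketch, assuming the triggers.tekton.dev/v1alpha1 API.
# All resource and namespace names are hypothetical.
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: example-eventlistener-clusterrole
rules:
  - apiGroups: ["triggers.tekton.dev"]
    resources:
      - eventlisteners
      - triggers
      - triggerbindings
      - triggertemplates
      - clustertriggerbindings
    verbs: ["get", "list", "watch"]
---
apiVersion: triggers.tekton.dev/v1alpha1
kind: EventListener
metadata:
  name: example-listener
spec:
  serviceAccountName: example-sa  # must be bound to the cluster role above
  namespaceSelector:
    matchNames:                   # namespaces to fetch Trigger objects from
      - team-a
      - team-b
```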

4.1.7.2. Deprecated features

- Pipeline templates that use `PipelineResources` CRDs are now deprecated and will be removed in a future release.

- The `template.name` field is deprecated in favor of the `template.ref` field and will be removed in a future release.

- The `-c` shorthand for the `--check` command has been removed. In addition, global `tkn` flags are added to the `version` command.

4.1.7.3. Known issues

- CEL overlays add fields to a new top-level `extensions` function, instead of modifying the incoming event body. `TriggerBinding` resources can access values within this new `extensions` function using the `$(extensions.<key>)` syntax. Update your binding to use the `$(extensions.<key>)` syntax instead of the `$(body.<overlay-key>)` syntax.

- The escaping parameters behavior by replacing `"` with `\"` is now removed. If you need to retain the old escaping parameters behavior, add the `triggers.tekton.dev/old-escape-quotes: "true"` annotation to your `TriggerTemplate` specification.

- You can embed `TriggerBinding` resources by using the `name` and `value` fields inside a trigger or an event listener. However, you cannot specify both `name` and `ref` fields for a single binding. Use the `ref` field to refer to a `TriggerBinding` resource and the `name` field for embedded bindings.

- An interceptor cannot attempt to reference a `secret` outside the namespace of an `EventListener` resource. You must include secrets in the namespace of the `EventListener` resource.

- In Triggers 0.9.0 and later, if a body or header based `TriggerBinding` parameter is missing or malformed in an event payload, the default values are used instead of displaying an error.

- Tasks and pipelines created with `WhenExpression` objects using Tekton Pipelines 0.16.x must be reapplied to fix their JSON annotations.

- When a pipeline accepts an optional workspace and gives it to a task, the pipeline run stalls if the workspace is not provided.
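The binding changes described in the known issues above can be illustrated with a single trigger. In this sketch, a CEL overlay writes a value under the key `short_sha`, an embedded binding reads it back with the `$(extensions.<key>)` syntax, and the template is referenced through `template.ref`. The listener, service account, trigger, and template names are hypothetical, as are the overlay key and the `body.head_commit.id` payload field. Check the exact overlay syntax against the Triggers version you run.

```yaml
# Minimal sketch, assuming the triggers.tekton.dev/v1alpha1 API and a
# push-style payload that contains head_commit.id. Names are hypothetical.
apiVersion: triggers.tekton.dev/v1alpha1
kind: EventListener
metadata:
  name: example-overlay-listener
spec:
  serviceAccountName: example-sa
  triggers:
    - name: example-trigger
      interceptors:
        - cel:
            overlays:
              - key: short_sha                 # added under extensions, not body
                expression: "body.head_commit.id.truncate(7)"
      bindings:
        - name: short-sha                      # embedded binding: name and value
          value: $(extensions.short_sha)       # replaces $(body.<overlay-key>)
      template:
        ref: example-template                  # template.ref instead of template.name
```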