30.3. Getting Started with VDO

30.3.1. Introduction

Virtual Data Optimizer (VDO) provides inline data reduction for Linux in the form of deduplication, compression, and thin provisioning. When you set up a VDO volume, you specify a block device on which to construct your VDO volume and the amount of logical storage you plan to present.

- When hosting active VMs or containers, Red Hat recommends provisioning storage at a 10:1 logical-to-physical ratio: that is, if you are using 1 TB of physical storage, you would present it as 10 TB of logical storage.

- For object storage, such as the type provided by Ceph, Red Hat recommends using a 3:1 logical-to-physical ratio: that is, 1 TB of physical storage would be presented as 3 TB of logical storage.
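The ratio is plain multiplication, so it can be scripted. The following is a minimal sketch, assuming POSIX sh and a physical size already known in whole GiB. It prints a value in the same suffix style as the 10T used in Example 30.1. The function name vdo_logical_size and the vm/object labels are hypothetical and are not part of the vdo package.

```shell
#!/bin/sh
# Hypothetical helper (not part of the vdo package): print a value for
# --vdoLogicalSize from the physical size of the backing device.
#   $1 = physical size in whole GiB
#   $2 = workload: "vm" (active VMs or containers, 10:1)
#                  or "object" (object storage, 3:1)
vdo_logical_size() {
    physical_gib=$1
    workload=$2

    # Reject empty or non-numeric sizes.
    case "$physical_gib" in
        '' | *[!0-9]*)
            echo "physical size must be a whole number of GiB" >&2
            return 1
            ;;
    esac

    # Pick the recommended logical-to-physical ratio for the workload.
    case "$workload" in
        vm)     ratio=10 ;;
        object) ratio=3 ;;
        *)
            echo "unknown workload: $workload" >&2
            return 1
            ;;
    esac

    # Logical size = physical size x ratio, printed with a G (GiB) suffix.
    echo "$(( physical_gib * ratio ))G"
}

vdo_logical_size 1024 vm       # prints 10240G
vdo_logical_size 1024 object   # prints 3072G
```

Keeping the ratio in a single case statement makes it easy to adjust if your workload calls for a different ratio than the two recommendations above.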

In either case, you can simply put a file system on top of the logical device presented by VDO and then use it directly or as part of a distributed cloud storage architecture.

This chapter describes the following use cases of VDO deployment:

- the direct-attached use case for virtualization servers, such as those built using Red Hat Virtualization, and

- the cloud storage use case for object-based distributed storage clusters, such as those built using Ceph Storage.

Note

VDO deployment with Ceph is currently not supported.

This chapter provides examples of configuring VDO with a standard Linux file system; these configurations can be deployed for either use case. See the diagrams in Section 30.3.5, “Deployment Examples”.

30.3.2. Installing VDO

VDO is deployed using the following RPM packages:

- vdo

- kmod-kvdo

To install VDO, use the yum package manager to install the RPM packages:

# yum install vdo kmod-kvdo

30.3.3. Creating a VDO Volume

Create a VDO volume for your block device. Note that multiple VDO volumes can be created for separate devices on the same machine. If you choose this approach, you must supply a different name and device for each instance of VDO on the system.

Important

Use expandable storage as the backing block device. For more information, see Section 30.2, “System Requirements”.

In all the following steps, replace vdo_name with the identifier you want to use for your VDO volume; for example, vdo1.

- Create the VDO volume using the VDO Manager:

  # vdo create \
        --name=vdo_name \
        --device=block_device \
        --vdoLogicalSize=logical_size \
        [--vdoSlabSize=slab_size]

  - Replace block_device with the persistent name of the block device where you want to create the VDO volume. For example, /dev/disk/by-id/scsi-3600508b1001c264ad2af21e903ad031f.

    Important

    Use a persistent device name. If you use a non-persistent device name, then VDO might fail to start properly in the future if the device name changes.

    For more information on persistent names, see Section 25.8, “Persistent Naming”.

  - Replace logical_size with the amount of logical storage that the VDO volume should present:

    - For active VMs or container storage, use a logical size that is ten times the physical size of your block device. For example, if your block device is 1 TB in size, use 10T here.

    - For object storage, use a logical size that is three times the physical size of your block device. For example, if your block device is 1 TB in size, use 3T here.

  - If the block device is larger than 16 TiB, add --vdoSlabSize=32G to increase the slab size on the volume to 32 GiB.

    Using the default slab size of 2 GiB on block devices larger than 16 TiB results in the vdo create command failing with the following error:

    vdo: ERROR - vdoformat: formatVDO failed on '/dev/device': VDO Status: Exceeds maximum number of slabs supported

    For more information, see Section 30.1.3, “VDO Volume”.

  Example 30.1. Creating VDO for Container Storage

  For example, to create a VDO volume for container storage on a 1 TB block device, you might use:

  # vdo create \
        --name=vdo1 \
        --device=/dev/disk/by-id/scsi-3600508b1001c264ad2af21e903ad031f \
        --vdoLogicalSize=10T

  When a VDO volume is created, VDO adds an entry to the /etc/vdoconf.yml configuration file. The vdo.service systemd unit then uses the entry to start the volume by default.

  Important

  If a failure occurs when creating the VDO volume, remove the volume to clean up. See Section 30.4.3.1, “Removing an Unsuccessfully Created Volume” for details.

- Create a file system:

  - For the XFS file system:

    # mkfs.xfs -K /dev/mapper/vdo_name

  - For the ext4 file system:

    # mkfs.ext4 -E nodiscard /dev/mapper/vdo_name

- Mount the file system:

  # mkdir -m 1777 /mnt/vdo_name
  # mount /dev/mapper/vdo_name /mnt/vdo_name

- To configure the file system to mount automatically, use either the /etc/fstab file or a systemd mount unit:

  - If you decide to use the /etc/fstab configuration file, add one of the following lines to the file:

    - For the XFS file system:

      /dev/mapper/vdo_name /mnt/vdo_name xfs defaults,_netdev,x-systemd.device-timeout=0,x-systemd.requires=vdo.service 0 0

    - For the ext4 file system:

      /dev/mapper/vdo_name /mnt/vdo_name ext4 defaults,_netdev,x-systemd.device-timeout=0,x-systemd.requires=vdo.service 0 0

  - Alternatively, if you decide to use a systemd unit, create a systemd mount unit file whose name matches the path of the mount point. For the /mnt/vdo_name mount point of your VDO volume, create the /etc/systemd/system/mnt-vdo_name.mount file with the following content:

      [Unit]
      Description = VDO unit file to mount file system
      name = vdo_name.mount
      Requires = vdo.service
      After = multi-user.target
      Conflicts = umount.target

      [Mount]
      What = /dev/mapper/vdo_name
      Where = /mnt/vdo_name
      Type = xfs

      [Install]
      WantedBy = multi-user.target

    An example systemd unit file is also installed at /usr/share/doc/vdo/examples/systemd/VDO.mount.example.

- Enable the discard feature for the file system on your VDO device. Both batch and online discard operations work with VDO.

  For information on how to set up the discard feature, see Section 2.4, “Discard Unused Blocks”.
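Several arguments in the procedure above depend on one another: the slab size depends on the device size, and the mkfs options depend on the file system type. It can therefore help to print the commands and review them before running anything. The following is a minimal dry-run sketch, assuming POSIX sh. The function name vdo_plan and its argument order are hypothetical. Passing the device size in bytes (for example from blockdev --getsize64) is also an assumption. The function only prints commands; it does not run them.

```shell
#!/bin/sh
# Hypothetical dry-run helper (not part of the vdo package): print, but do
# not run, the commands from the procedure above for one VDO volume.
#   $1 = VDO volume name               (for example vdo1)
#   $2 = persistent device path        (for example /dev/disk/by-id/...)
#   $3 = physical device size in bytes (for example from blockdev --getsize64)
#   $4 = logical size                  (for example 10T)
#   $5 = file system type              (xfs or ext4)

SIXTEEN_TIB=$(( 16 * 1024 * 1024 * 1024 * 1024 ))

vdo_plan() {
    name=$1 device=$2 size_bytes=$3 logical=$4 fstype=$5

    # Above 16 TiB the default 2 GiB slab size exceeds the maximum number
    # of slabs, so request 32 GiB slabs instead.
    slab=""
    if [ "$size_bytes" -gt "$SIXTEEN_TIB" ]; then
        slab=" --vdoSlabSize=32G"
    fi

    # Disable discards at mkfs time, as in the file system step above.
    case "$fstype" in
        xfs)  mkfs_cmd="mkfs.xfs -K" ;;
        ext4) mkfs_cmd="mkfs.ext4 -E nodiscard" ;;
        *)
            echo "unsupported file system: $fstype" >&2
            return 1
            ;;
    esac

    echo "vdo create --name=$name --device=$device --vdoLogicalSize=$logical$slab"
    echo "$mkfs_cmd /dev/mapper/$name"
    echo "mkdir -m 1777 /mnt/$name"
    echo "mount /dev/mapper/$name /mnt/$name"
    # /etc/fstab line that makes the mount wait for vdo.service.
    echo "/dev/mapper/$name /mnt/$name $fstype defaults,_netdev,x-systemd.device-timeout=0,x-systemd.requires=vdo.service 0 0"
}
```

For Example 30.1, a call might look like vdo_plan vdo1 /dev/disk/by-id/scsi-3600508b1001c264ad2af21e903ad031f "$(blockdev --getsize64 /dev/disk/by-id/scsi-3600508b1001c264ad2af21e903ad031f)" 10T xfs. Review the printed lines before running them as root.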

30.3.4. Monitoring VDO

Because VDO is thin provisioned, the file system and applications will only see the logical space in use and will not be aware of the actual physical space available.

VDO space usage and efficiency can be monitored using the vdostats utility:

# vdostats --human-readable

Device                   1K-blocks   Used    Available   Use%   Space saving%
/dev/mapper/node1osd1    926.5G      21.0G   905.5G      2%     73%
/dev/mapper/node1osd2    926.5G      28.2G   898.3G      3%     64%
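Because the file system cannot see physical usage, a periodic check of the Use% column is one way to catch a filling volume early. The following is a minimal sketch, assuming the column layout shown above (one header line, Use% in the fifth column). The function name vdo_check_usage is hypothetical. The 80 percent default is an assumption chosen to match the level at which the sample log below starts warning.

```shell
#!/bin/sh
# Hypothetical helper (not part of the vdo package): read vdostats output
# on standard input and report each device whose Use% column (the fifth
# column) is at or above a threshold percentage (default 80).
# Returns 1 if any device is at or above the threshold, 0 otherwise.
vdo_check_usage() {
    threshold=${1:-80}
    awk -v limit="$threshold" '
        NR == 1 { next }                    # skip the header line
        NF >= 5 {
            use = $5
            sub(/%$/, "", use)              # "73%" -> "73"
            if (use + 0 >= limit + 0) {
                printf "%s is %s%% full\n", $1, use
                found = 1
            }
        }
        END { exit (found ? 1 : 0) }
    '
}
```

For example, vdostats --human-readable | vdo_check_usage 80 || logger -t vdo-check "VDO physical space is running low" would write a log entry when any volume crosses the threshold. Running it from a scheduler such as cron is one option.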

When the physical storage capacity of a VDO volume is almost full, VDO reports a warning in the system log, similar to the following:

Oct 2 17:13:39 system lvm[13863]: Monitoring VDO pool vdo_name.
Oct 2 17:27:39 system lvm[13863]: WARNING: VDO pool vdo_name is now 80.69% full.
Oct 2 17:28:19 system lvm[13863]: WARNING: VDO pool vdo_name is now 85.25% full.
Oct 2 17:29:39 system lvm[13863]: WARNING: VDO pool vdo_name is now 90.64% full.
Oct 2 17:30:29 system lvm[13863]: WARNING: VDO pool vdo_name is now 96.07% full.
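These warnings are plain text, so standard tools can extract the pool name and fill level. The following is a minimal sketch using sed, assuming the message wording stays exactly as in the sample above. The function name vdo_fill_warnings is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper (not part of the vdo or lvm2 packages): read log
# lines on standard input and print "pool percent" for every warning of
# the form shown above ("WARNING: VDO pool NAME is now N% full.").
# Lines that do not match, such as "Monitoring VDO pool ...", are ignored.
vdo_fill_warnings() {
    sed -n 's/.*WARNING: VDO pool \([^ ]*\) is now \([0-9.]*\)% full\..*/\1 \2/p'
}
```

For example, journalctl | vdo_fill_warnings | tail -n 1 would show the most recent warning, assuming the messages reach the systemd journal as in the sample.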

Important

Monitor physical space on your VDO volumes to prevent out-of-space situations. Running out of physical blocks might result in losing recently written, unacknowledged data on the VDO volume.

30.3.5. Deployment Examples

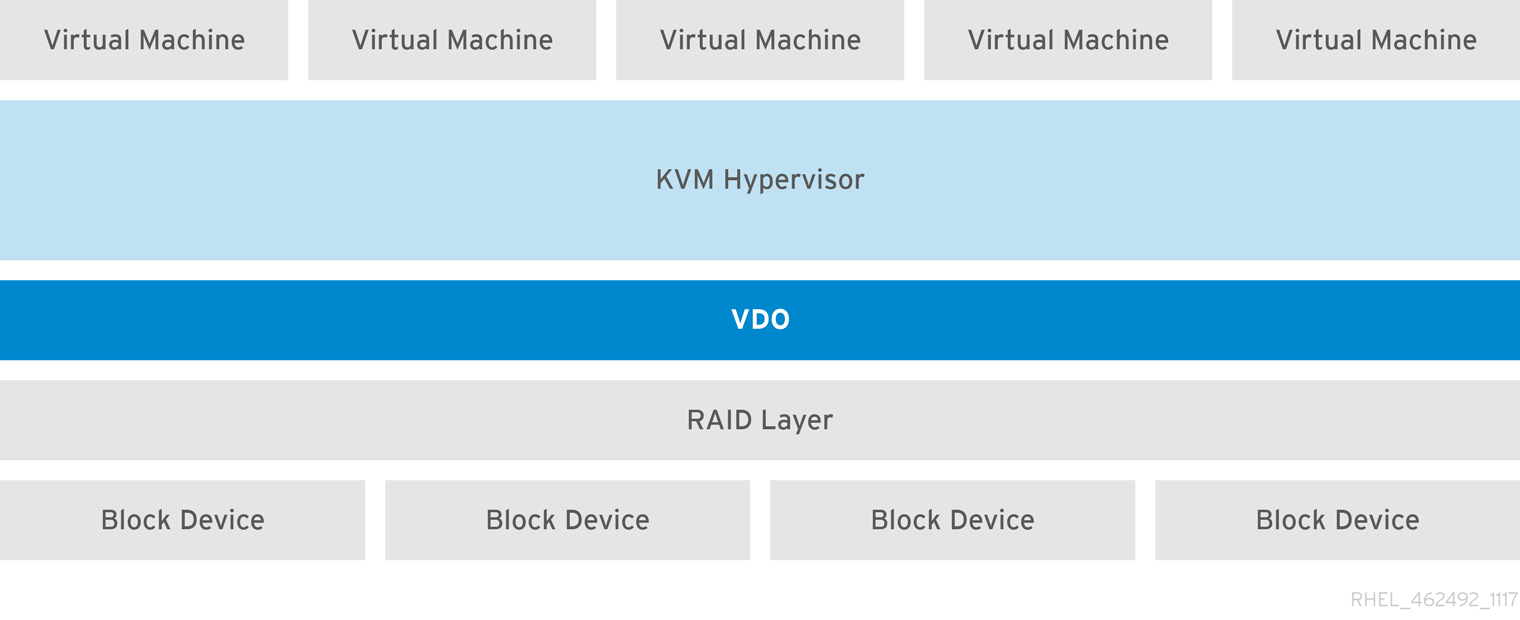

The following examples illustrate how VDO might be used in KVM and other deployments.

VDO Deployment with KVM

To see how VDO can be deployed successfully on a KVM server configured with Direct Attached Storage, see Figure 30.2, “VDO Deployment with KVM”.

Figure 30.2. VDO Deployment with KVM

More Deployment Scenarios

For more information on VDO deployment, see Section 30.5, “Deployment Scenarios”.