5.2. Cluster Tasks

5.2.1. Creating a New Cluster

Procedure 5.1. Creating a New Cluster

- Select the Clusters resource tab.

- Click New.

- Select the Data Center the cluster will belong to from the drop-down list.

- Enter the Name and Description of the cluster.

- Select a network from the Management Network drop-down list to assign the management network role.

- Select the CPU Architecture and CPU Type from the drop-down lists. The CPU processor family must match the minimum CPU processor type of the hosts you intend to attach to the cluster; otherwise, those hosts will be non-operational.

Note

For both Intel and AMD CPU types, the listed CPU models are in logical order from the oldest to the newest. If your cluster includes hosts with different CPU models, select the oldest CPU model. For more information on each CPU model, see https://access.redhat.com/solutions/634853.

- Select the Compatibility Version of the cluster from the drop-down list.

- Select either the Enable Virt Service or Enable Gluster Service radio button to define whether the cluster will be populated with virtual machine hosts or with Gluster-enabled nodes. You cannot add Red Hat Virtualization Hosts (RHVH) to a Gluster-enabled cluster.

- Optionally select the Enable to set VM maintenance reason check box to enable an optional reason field when a virtual machine is shut down from the Manager, allowing the administrator to provide an explanation for the maintenance.

- Optionally select the Enable to set Host maintenance reason check box to enable an optional reason field when a host is placed into maintenance mode from the Manager, allowing the administrator to provide an explanation for the maintenance.

- Select either the /dev/random source (Linux-provided device) or /dev/hwrng source (external hardware device) check box to specify the random number generator device that all hosts in the cluster will use.

- Click the Optimization tab to select the memory page sharing threshold for the cluster, and optionally enable CPU thread handling and memory ballooning on the hosts in the cluster.

- Click the Migration Policy tab to define the virtual machine migration policy for the cluster.

- Click the Scheduling Policy tab to optionally configure a scheduling policy, configure scheduler optimization settings, enable trusted service for hosts in the cluster, enable HA Reservation, and add a custom serial number policy.

- Click the Console tab to optionally override the global SPICE proxy, if any, and specify the address of a SPICE proxy for hosts in the cluster.

- Click the Fencing policy tab to enable or disable fencing in the cluster, and select fencing options.

- Click OK to create the cluster and open the New Cluster - Guide Me window.

- The Guide Me window lists the entities that need to be configured for the cluster. Configure these entities or postpone configuration by clicking the Configure Later button; you can resume configuration by selecting the cluster and clicking the Guide Me button.
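The note on mixed CPU models reduces to picking the oldest model among the hosts you plan to attach. The following is a minimal sketch of that rule. The ordered list of model names is hypothetical and abbreviated, not the Manager's actual drop-down contents, and `cluster_cpu_type` is an illustrative helper, not part of any Red Hat Virtualization API.

```python
from __future__ import annotations

# Hypothetical, abbreviated list of CPU models ordered oldest to newest,
# in the same spirit as the CPU Type drop-down (illustrative names only).
CPU_MODELS_OLDEST_FIRST = [
    "Intel Conroe Family",
    "Intel Penryn Family",
    "Intel Nehalem Family",
    "Intel Westmere Family",
    "Intel SandyBridge Family",
    "Intel Haswell Family",
]


def cluster_cpu_type(host_models: list[str]) -> str:
    """Return the oldest CPU model among the hosts planned for the cluster."""
    if not host_models:
        raise ValueError("at least one host CPU model is required")
    rank = {model: index for index, model in enumerate(CPU_MODELS_OLDEST_FIRST)}
    unknown = [model for model in host_models if model not in rank]
    if unknown:
        raise ValueError(f"unknown CPU model(s): {unknown}")
    # The oldest model has the lowest position in the ordered list.
    return min(host_models, key=lambda model: rank[model])


print(cluster_cpu_type(["Intel Haswell Family", "Intel Nehalem Family"]))  # prints "Intel Nehalem Family"
```

Choosing the minimum by list position works only because the drop-down is ordered from oldest to newest, as the note states.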

5.2.2. Explanation of Settings and Controls in the New Cluster and Edit Cluster Windows

5.2.2.1. General Cluster Settings Explained

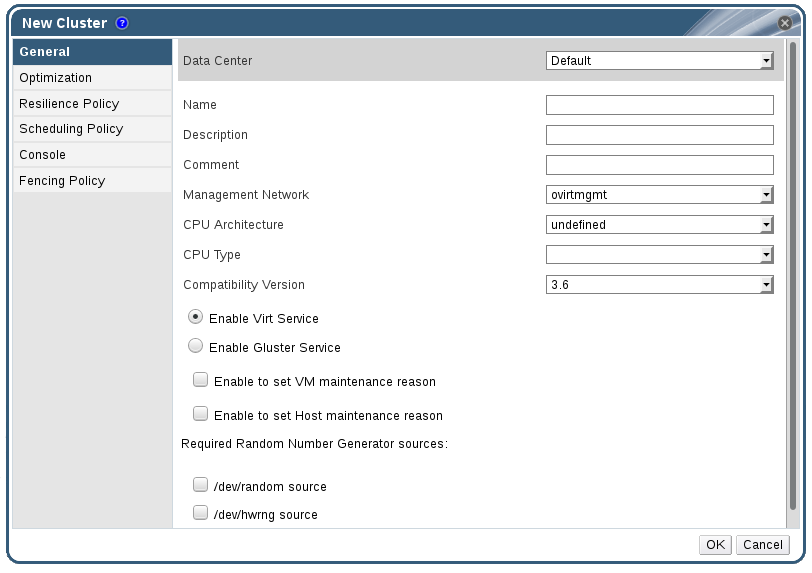

Figure 5.2. New Cluster window

| Field | Description/Action |
|---|---|
| Data Center | The data center that will contain the cluster. The data center must be created before adding a cluster. |
| Name | The name of the cluster. This text field has a 40-character limit and must be a unique name with any combination of uppercase and lowercase letters, numbers, hyphens, and underscores. |
| Description / Comment | The description of the cluster or additional notes. These fields are recommended but not mandatory. |
| Management Network | The logical network that will be assigned the management network role. The default is ovirtmgmt. On existing clusters, the management network can only be changed via the Manage Networks button in the Logical Networks tab in the details pane. |
| CPU Architecture | The CPU architecture of the cluster. Different CPU types are available depending on which CPU architecture is selected. |
| CPU Type | The CPU type of the cluster. Choose one of the CPU types available in the drop-down list for the selected CPU architecture. |
| Compatibility Version | The version of Red Hat Virtualization. Choose one of the versions available in the drop-down list. |
| Enable Virt Service | If this radio button is selected, hosts in this cluster will be used to run virtual machines. |
| Enable Gluster Service | If this radio button is selected, hosts in this cluster will be used as Red Hat Gluster Storage Server nodes, and not for running virtual machines. You cannot add a Red Hat Virtualization Host to a cluster with this option enabled. |
| Import existing gluster configuration | This check box is only available if the Enable Gluster Service radio button is selected. This option allows you to import an existing Gluster-enabled cluster and all its attached hosts to Red Hat Virtualization Manager. The following options are required for each host in the cluster that is being imported: Address (the hostname or IP address of the Gluster host server), Fingerprint (displayed to ensure you are connecting with the correct host), and Root Password (the root password of the host). |
| Enable to set VM maintenance reason | If this check box is selected, an optional reason field will appear when a virtual machine in the cluster is shut down from the Manager. This allows you to provide an explanation for the maintenance, which will appear in the logs and when the virtual machine is powered on again. |
| Enable to set Host maintenance reason | If this check box is selected, an optional reason field will appear when a host in the cluster is moved into maintenance mode from the Manager. This allows you to provide an explanation for the maintenance, which will appear in the logs and when the host is activated again. |
| Required Random Number Generator sources | If one of the following check boxes is selected, all hosts in the cluster must have that device available. This enables passthrough of entropy from the random number generator device to virtual machines. /dev/random source: the Linux-provided random number generator. /dev/hwrng source: an external hardware random number generator. |
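The constraints on the Name field can be expressed as a small validation check. This is a sketch: `is_valid_cluster_name` is a hypothetical helper (the Manager performs its own validation), and the sketch assumes uniqueness is compared case-sensitively, which this section does not state.

```python
from __future__ import annotations

import re

# 1 to 40 characters drawn from upper- and lowercase letters, digits,
# hyphens, and underscores, as described for the Name field.
CLUSTER_NAME_PATTERN = re.compile(r"[A-Za-z0-9_-]{1,40}")


def is_valid_cluster_name(name: str, existing_names: set[str]) -> bool:
    """Check a proposed cluster name (hypothetical helper, for illustration)."""
    if CLUSTER_NAME_PATTERN.fullmatch(name) is None:
        return False
    # Assumption: uniqueness is checked case-sensitively.
    return name not in existing_names
```

For example, `is_valid_cluster_name("prod-cluster_01", {"Default"})` returns `True`, while a name containing a space or longer than 40 characters returns `False`.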

5.2.2.2. Optimization Settings Explained

| Field | Description/Action |
|---|---|
| Memory Optimization | Select the memory page sharing threshold for the cluster. |
| CPU Threads | Selecting the Count Threads As Cores check box allows hosts to run virtual machines with a total number of processor cores greater than the number of cores in the host. The exposed host threads are treated as cores that can be used by virtual machines. For example, a 24-core system with 2 threads per core (48 threads total) can run virtual machines with up to 48 cores each, and the algorithms that calculate host CPU load compare load against twice as many potential utilized cores. |
| Memory Balloon | Selecting the Enable Memory Balloon Optimization check box enables memory overcommitment on virtual machines running on the hosts in this cluster. When this option is set, the Memory Overcommit Manager (MoM) starts ballooning where and when possible, limited by the guaranteed memory size of every virtual machine. To have a balloon running, the virtual machine needs a balloon device with relevant drivers. Each virtual machine includes a balloon device unless specifically removed. Each host in this cluster receives a balloon policy update when its status changes to Up. If necessary, you can manually update the balloon policy on a host without having to change the status. See Section 5.2.5, “Updating the MoM Policy on Hosts in a Cluster”. In some scenarios, ballooning may collide with KSM; in such cases, MoM tries to adjust the balloon size to minimize collisions. Additionally, in some scenarios ballooning may cause sub-optimal performance for a virtual machine. Administrators are advised to use ballooning optimization with caution. |
| KSM control | Selecting the Enable KSM check box enables MoM to run Kernel Same-page Merging (KSM) when necessary and when it can yield a memory saving benefit that outweighs its CPU cost. |
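The CPU Threads arithmetic can be made concrete with a simplified model. This sketch only reproduces the 24-core example; the function names and the `busy_cores` input are hypothetical, and the Manager's actual load calculation is not described in this section.

```python
def schedulable_cores(cores: int, threads_per_core: int, count_threads_as_cores: bool) -> int:
    """Number of cores the host can offer to virtual machines."""
    # With Count Threads As Cores, each hardware thread is exposed as a core.
    return cores * threads_per_core if count_threads_as_cores else cores


def host_cpu_load_percent(busy_cores: float, cores: int, threads_per_core: int,
                          count_threads_as_cores: bool) -> float:
    """Load expressed against the number of potentially utilized cores."""
    capacity = schedulable_cores(cores, threads_per_core, count_threads_as_cores)
    return 100.0 * busy_cores / capacity


# The 24-core, 2-threads-per-core example from the table above.
print(schedulable_cores(24, 2, True))            # 48
print(host_cpu_load_percent(12, 24, 2, False))   # 50.0
print(host_cpu_load_percent(12, 24, 2, True))    # 25.0
```

The same amount of work registers as half the load when threads count as cores, because the denominator doubles.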

5.2.2.3. Migration Policy Settings Explained

| Policy | Description |
|---|---|
| Legacy | Legacy behavior of version 3.6. Overrides in vdsm.conf are still applied. The guest agent hook mechanism is disabled. |
| Minimal downtime | A policy that lets virtual machines migrate in typical situations. Virtual machines should not experience any significant downtime. The migration will be aborted if the virtual machine migration does not converge after a long time (dependent on QEMU iterations, with a maximum of 500 milliseconds). The guest agent hook mechanism is enabled. |
| Suspend workload if needed | A policy that lets virtual machines migrate in most situations, including virtual machines running heavy workloads. Virtual machines may experience a more significant downtime. The migration may still be aborted for extreme workloads. The guest agent hook mechanism is enabled. |

| Bandwidth | Description |
|---|---|
| Auto | Bandwidth is copied from the Rate Limit [Mbps] setting in the data center Host Network QoS. If the rate limit has not been defined, it is computed as the minimum of the link speeds of the sending and receiving network interfaces. If the rate limit has not been set and link speeds are not available, it is determined by the local VDSM setting on the sending host. |
| Hypervisor default | Bandwidth is controlled by the local VDSM setting on the sending host. |
| Custom | Defined by the user (in Mbps). |
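The fallback order of the Auto bandwidth setting can be written as a short function. The parameter names are hypothetical, and the sketch assumes that both link speeds must be known for the link-speed rule to apply; it is not VDSM or Manager code.

```python
from typing import Optional


def auto_migration_bandwidth_mbps(
    qos_rate_limit_mbps: Optional[int],
    source_link_mbps: Optional[int],
    destination_link_mbps: Optional[int],
    vdsm_default_mbps: int,
) -> int:
    """Resolve the Auto migration bandwidth (simplified, hypothetical model)."""
    # 1. The data center Host Network QoS rate limit wins if it is defined.
    if qos_rate_limit_mbps is not None:
        return qos_rate_limit_mbps
    # 2. Otherwise use the slower of the two link speeds, if both are known.
    if source_link_mbps is not None and destination_link_mbps is not None:
        return min(source_link_mbps, destination_link_mbps)
    # 3. Otherwise fall back to the local VDSM setting on the sending host.
    return vdsm_default_mbps
```

Taking the minimum of the two link speeds reflects that a migration cannot move data faster than the slower end of the connection.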

| Resilience Policy | Description/Action |
|---|---|
| Migrate Virtual Machines | Migrates all virtual machines in order of their defined priority. |
| Migrate only Highly Available Virtual Machines | Migrates only highly available virtual machines to prevent overloading other hosts. |
| Do Not Migrate Virtual Machines | Prevents virtual machines from being migrated. |
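The three options above differ only in which virtual machines are selected and in what order. The sketch below models that selection; the `Vm` data model and `vms_to_migrate` helper are hypothetical, and it assumes a larger priority number means a higher priority.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class ResiliencePolicy(Enum):
    MIGRATE = "Migrate Virtual Machines"
    MIGRATE_HA_ONLY = "Migrate only Highly Available Virtual Machines"
    DO_NOT_MIGRATE = "Do Not Migrate Virtual Machines"


@dataclass
class Vm:
    name: str
    priority: int            # assumption: a higher value means a higher priority
    highly_available: bool


def vms_to_migrate(vms: list[Vm], policy: ResiliencePolicy) -> list[str]:
    """Names of the VMs to migrate, highest priority first (simplified model)."""
    if policy is ResiliencePolicy.DO_NOT_MIGRATE:
        return []
    candidates = vms
    if policy is ResiliencePolicy.MIGRATE_HA_ONLY:
        candidates = [vm for vm in vms if vm.highly_available]
    # sorted() is stable, so VMs with equal priority keep their input order.
    ordered = sorted(candidates, key=lambda vm: vm.priority, reverse=True)
    return [vm.name for vm in ordered]
```

Restricting migration to highly available virtual machines shrinks the candidate list, which is how that option avoids overloading the remaining hosts.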

| Property | Description |
|---|---|
| Auto Converge migrations | Allows you to set whether auto-convergence is used during live migration of virtual machines. Large virtual machines with high workloads can dirty memory more quickly than the transfer rate achieved during live migration, and prevent the migration from converging. Auto-convergence capabilities in QEMU allow you to force convergence of virtual machine migrations. QEMU automatically detects a lack of convergence and triggers a throttle-down of the vCPUs on the virtual machine. Auto-convergence is disabled globally by default. |
| Enable migration compression | Allows you to set whether migration compression is used during live migration of the virtual machine. This feature uses XOR Binary Zero Run-Length Encoding (XBZRLE) to reduce virtual machine downtime and total live migration time for virtual machines running memory write-intensive workloads or for any application with a sparse memory update pattern. Migration compression is disabled globally by default. |
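The idea behind the XOR Binary Zero Run-Length Encoding used for migration compression can be illustrated with a simplified encoder and decoder. This is not QEMU's implementation or wire format: QEMU also keeps a cache of previously sent pages and stores run lengths in a compact variable-length form. The function names here are hypothetical.

```python
from __future__ import annotations


def xbzrle_like_encode(old: bytes, new: bytes) -> list[tuple[int, bytes]]:
    """Encode `new` against `old` as (unchanged_run_length, changed_bytes) pairs."""
    if len(old) != len(new):
        raise ValueError("pages must be the same size")
    # XOR is zero wherever the page did not change since it was last sent.
    delta = bytes(a ^ b for a, b in zip(old, new))
    encoded = []
    i = 0
    while i < len(delta):
        run_start = i
        while i < len(delta) and delta[i] == 0:
            i += 1
        literal_start = i
        while i < len(delta) and delta[i] != 0:
            i += 1
        # Record how many bytes to skip, then the new content of changed bytes.
        encoded.append((literal_start - run_start, new[literal_start:i]))
    return encoded


def xbzrle_like_decode(old: bytes, encoded: list[tuple[int, bytes]]) -> bytes:
    """Rebuild the new page from the previously sent page and the encoding."""
    page = bytearray(old)
    pos = 0
    for unchanged, literal in encoded:
        pos += unchanged                           # keep the old bytes
        page[pos:pos + len(literal)] = literal     # overwrite changed bytes
        pos += len(literal)
    return bytes(page)
```

For a page with only a few modified bytes, the encoding holds those bytes plus two run lengths instead of the whole page, which is why sparse memory update patterns benefit most.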

5.2.2.4. Scheduling Policy Settings Explained

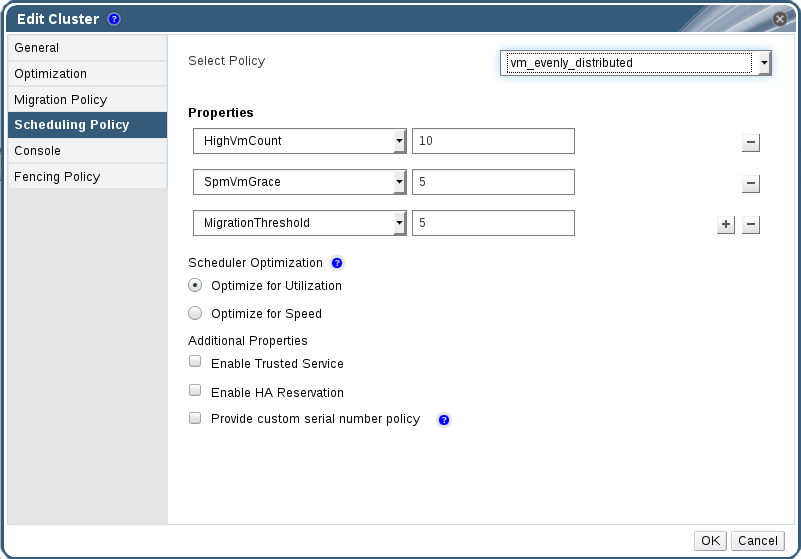

Figure 5.3. Scheduling Policy Settings: vm_evenly_distributed

| Field | Description/Action |
|---|---|
| Select Policy | Select a policy from the drop-down list. |
| Properties | The properties that appear depend on the selected policy, and can be edited if necessary. |
| Scheduler Optimization | Optimize scheduling for host weighting/ordering. Optimize for Utilization includes weight modules in scheduling to allow best selection; Optimize for Speed skips host weighting in cases where there are more than ten pending requests. |
| Enable Trusted Service | Enable integration with an OpenAttestation server. Before this can be enabled, use the engine-config tool to enter the OpenAttestation server's details. For more information, see Section 10.4, “Trusted Compute Pools”. |
| Enable HA Reservation | Enable the Manager to monitor cluster capacity for highly available virtual machines. The Manager ensures that appropriate capacity exists within a cluster for virtual machines designated as highly available to migrate in the event that their existing host fails unexpectedly. |
| Provide custom serial number policy | This check box allows you to specify a serial number policy for the virtual machines in the cluster. Select one of the following options: Host ID sets the host's UUID as the virtual machine's serial number; Vm ID sets the virtual machine's UUID as its serial number; Custom serial number uses the custom serial number you specify in the text field. |

Note

Ballooning commands such as mom.Controllers.Balloon - INFO Ballooning guest:half1 from 1096400 to 1991580 are logged to /var/log/vdsm/mom.log, the Memory Overcommit Manager log file.
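To review ballooning activity, you can filter mom.log for the ballooning message shown above. This sketch assumes every such message follows that exact format, and it assumes the two numbers are the previous and new balloon sizes for the named guest; this section does not state their meaning or units. `parse_balloon_events` is a hypothetical helper.

```python
import re

# Matches the ballooning message format shown above; the meaning of the two
# numbers (previous and new balloon size) is an assumption.
BALLOON_PATTERN = re.compile(
    r"mom\.Controllers\.Balloon - INFO Ballooning guest:(?P<guest>\S+) "
    r"from (?P<old>\d+) to (?P<new>\d+)"
)


def parse_balloon_events(lines):
    """Return (guest, old_size, new_size) tuples for each ballooning message."""
    events = []
    for line in lines:
        match = BALLOON_PATTERN.search(line)
        if match:
            events.append((match["guest"], int(match["old"]), int(match["new"])))
    return events
```

On a host, pass an open file handle, for example `parse_balloon_events(open("/var/log/vdsm/mom.log"))`; lines that are not ballooning messages are skipped.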

5.2.2.5. Cluster Console Settings Explained

| Field | Description/Action |
|---|---|
| Define SPICE Proxy for Cluster | Select this check box to enable overriding the SPICE proxy defined in global configuration. This feature is useful when the user (for example, a user connecting via the User Portal) is outside the network where the hypervisors reside. |
| Overridden SPICE proxy address | The proxy by which the SPICE client will connect to virtual machines. The address must be in the following format: |

5.2.2.6. Fencing Policy Settings Explained

| Field | Description/Action |

|---|---|

| Enable fencing | Enables fencing on the cluster. Fencing is enabled by default, but can be disabled if required; for example, if temporary network issues are occurring or expected, administrators can disable fencing until diagnostics or maintenance activities are completed. Note that if fencing is disabled, highly available virtual machines running on non-responsive hosts will not be restarted elsewhere. |

| Skip fencing if host has live lease on storage | If this check box is selected, any hosts in the cluster that are Non Responsive and still connected to storage will not be fenced. |

| Skip fencing on cluster connectivity issues | If this check box is selected, fencing will be temporarily disabled if the percentage of hosts in the cluster that are experiencing connectivity issues is greater than or equal to the defined Threshold. The Threshold value is selected from the drop-down list; available values are 25, 50, 75, and 100. |
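The three fencing settings combine into a single decision for each Non Responsive host. The sketch below is a simplified model of the table, not the Manager's fencing logic; the `FencingPolicy` type, `should_fence` helper, and host counts are hypothetical inputs.

```python
from dataclasses import dataclass

VALID_THRESHOLDS = (25, 50, 75, 100)


@dataclass
class FencingPolicy:
    enabled: bool                      # Enable fencing
    skip_if_live_storage_lease: bool   # Skip fencing if host has live lease on storage
    skip_on_connectivity_issues: bool  # Skip fencing on cluster connectivity issues
    threshold_percent: int             # Threshold drop-down value


def should_fence(policy: FencingPolicy, host_has_live_lease: bool,
                 total_hosts: int, hosts_with_connectivity_issues: int) -> bool:
    """Decide whether a Non Responsive host may be fenced (simplified model)."""
    if policy.threshold_percent not in VALID_THRESHOLDS:
        raise ValueError(f"threshold must be one of {VALID_THRESHOLDS}")
    if not policy.enabled:
        return False
    if policy.skip_if_live_storage_lease and host_has_live_lease:
        return False
    if policy.skip_on_connectivity_issues and total_hosts > 0:
        affected_percent = 100 * hosts_with_connectivity_issues / total_hosts
        # Fencing is suspended when the affected share reaches the threshold.
        if affected_percent >= policy.threshold_percent:
            return False
    return True
```

With a threshold of 50 in a four-host cluster, fencing is suspended once two hosts report connectivity issues, because 50% is greater than or equal to the threshold.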

5.2.3. Editing a Resource

Edit the properties of a resource.

Procedure 5.2. Editing a Resource

- Use the resource tabs, tree mode, or the search function to find and select the resource in the results list.

- Click Edit to open the Edit window.

- Change the necessary properties and click OK.

The new properties are saved to the resource. The Edit window will not close if a property field is invalid.

5.2.4. Setting Load and Power Management Policies for Hosts in a Cluster

Procedure 5.3. Setting Load and Power Management Policies for Hosts

- Use the resource tabs, tree mode, or the search function to find and select the cluster in the results list.

- Click Edit to open the Edit Cluster window.

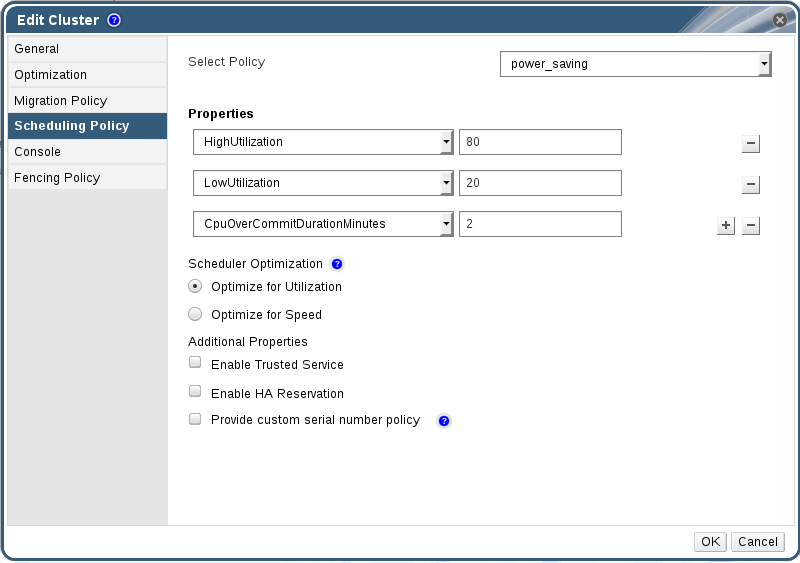

Figure 5.4. Edit Scheduling Policy

- Select one of the following policies:

    - none

    - vm_evenly_distributed

        - Set the minimum number of virtual machines that must be running on at least one host to enable load balancing in the HighVmCount field.

        - Define the maximum acceptable difference between the number of virtual machines on the most highly utilized host and the number of virtual machines on the least utilized host in the MigrationThreshold field.

        - Define the number of slots for virtual machines to be reserved on SPM hosts in the SpmVmGrace field.

    - evenly_distributed

        - Set the time (in minutes) that a host can run a CPU load outside of the defined utilization values before the scheduling policy takes action in the CpuOverCommitDurationMinutes field.

        - Enter the CPU utilization percentage at which virtual machines start migrating to other hosts in the HighUtilization field.

        - Enter the minimum required free memory in MB above which virtual machines start migrating to other hosts in the MinFreeMemoryForUnderUtilized field.

        - Enter the maximum required free memory in MB below which virtual machines start migrating to other hosts in the MaxFreeMemoryForOverUtilized field.

    - power_saving

        - Set the time (in minutes) that a host can run a CPU load outside of the defined utilization values before the scheduling policy takes action in the CpuOverCommitDurationMinutes field.

        - Enter the CPU utilization percentage below which the host will be considered under-utilized in the LowUtilization field.

        - Enter the CPU utilization percentage at which virtual machines start migrating to other hosts in the HighUtilization field.

        - Enter the minimum required free memory in MB above which virtual machines start migrating to other hosts in the MinFreeMemoryForUnderUtilized field.

        - Enter the maximum required free memory in MB below which virtual machines start migrating to other hosts in the MaxFreeMemoryForOverUtilized field.

- Choose one of the following as the Scheduler Optimization for the cluster:

    - Select Optimize for Utilization to include weight modules in scheduling to allow best selection.

    - Select Optimize for Speed to skip host weighting in cases where there are more than ten pending requests.

- If you are using an OpenAttestation server to verify your hosts, and have set up the server's details using the engine-config tool, select the Enable Trusted Service check box.

- Optionally select the Enable HA Reservation check box to enable the Manager to monitor cluster capacity for highly available virtual machines.

- Optionally select the Provide custom serial number policy check box to specify a serial number policy for the virtual machines in the cluster, and then select one of the following options:

    - Select Host ID to set the host's UUID as the virtual machine's serial number.

    - Select Vm ID to set the virtual machine's UUID as its serial number.

    - Select Custom serial number, and then specify a custom serial number in the text field.

- Click OK.
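The policy properties in the procedure above can be modeled roughly as threshold checks. This is a simplified interpretation of the field descriptions, not the Manager's scheduler code: the function names are hypothetical, the SpmVmGrace reservation is modeled as extra occupied slots on the SPM host, and CPU utilization is assumed to be sampled once per minute.

```python
from __future__ import annotations

from typing import Optional


def needs_vm_count_balancing(vm_counts: dict[str, int], spm_host: Optional[str],
                             high_vm_count: int, migration_threshold: int,
                             spm_vm_grace: int) -> bool:
    """vm_evenly_distributed: decide whether VM counts are unbalanced enough."""
    if not vm_counts:
        return False
    # Model the SpmVmGrace reservation as slots already occupied on the SPM host.
    effective = {host: count + (spm_vm_grace if host == spm_host else 0)
                 for host, count in vm_counts.items()}
    busiest, idlest = max(effective.values()), min(effective.values())
    # HighVmCount: at least one host must run this many VMs before balancing.
    # MigrationThreshold: the largest acceptable busiest-to-idlest difference.
    return busiest >= high_vm_count and busiest - idlest > migration_threshold


def classify_host_cpu(samples_per_minute: list[float], low_utilization: int,
                      high_utilization: int, duration_minutes: int) -> str:
    """power_saving: classify a host from per-minute CPU utilization samples."""
    if duration_minutes < 1:
        raise ValueError("CpuOverCommitDurationMinutes must be at least 1")
    recent = samples_per_minute[-duration_minutes:]
    if len(recent) < duration_minutes:
        return "normal"  # not enough history to act on yet
    if all(sample >= high_utilization for sample in recent):
        return "over-utilized"   # at or above HighUtilization for the whole window
    if all(sample < low_utilization for sample in recent):
        return "under-utilized"  # below LowUtilization for the whole window
    return "normal"
```

For evenly_distributed, which has no LowUtilization property, the same classifier can be called with `low_utilization=0` so that only the over-utilized case can occur.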

5.2.5. Updating the MoM Policy on Hosts in a Cluster

Procedure 5.4. Synchronizing MoM Policy on a Host

- Click the Clusters tab and select the cluster to which the host belongs.

- Click the Hosts tab in the details pane and select the host that requires an updated MoM policy.

- Click Sync MoM Policy.

5.2.6. CPU Profiles

5.2.6.1. Creating a CPU Profile

Procedure 5.5. Creating a CPU Profile

- Click the Clusters resource tab and select a cluster.

- Click the CPU Profiles sub tab in the details pane.

- Click New.

- Enter a name for the CPU profile in the Name field.

- Enter a description for the CPU profile in the Description field.

- Select the quality of service to apply to the CPU profile from the QoS list.

- Click OK.

5.2.6.2. Removing a CPU Profile

Procedure 5.6. Removing a CPU Profile

- Click the Clusters resource tab and select a cluster.

- Click the CPU Profiles sub tab in the details pane.

- Select the CPU profile to remove.

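- Click Remove.

- Click OK.

If the CPU profile is assigned to any virtual machines, those virtual machines are automatically assigned the default CPU profile.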

5.2.7. Importing an Existing Red Hat Gluster Storage Cluster

When you import a cluster, the gluster peer status command is executed through SSH on the host whose address you provide, and the Manager then displays a list of hosts that are part of the cluster. You must manually verify the fingerprint of each host and provide passwords for them. You cannot import the cluster if any of its hosts is down or unreachable. Because the newly imported hosts do not have VDSM installed, the bootstrap script installs all the necessary VDSM packages on the hosts after they have been imported, and reboots them.

Procedure 5.7. Importing an Existing Red Hat Gluster Storage Cluster to Red Hat Virtualization Manager

- Select the Clusters resource tab to list all clusters in the results list.

- Click New to open the New Cluster window.

- Select the Data Center the cluster will belong to from the drop-down menu.

- Enter the Name and Description of the cluster.

- Select the Enable Gluster Service radio button and the Import existing gluster configuration check box. The Import existing gluster configuration field is displayed only if you select the Enable Gluster Service radio button.

- In the Address field, enter the hostname or IP address of any server in the cluster. The host Fingerprint displays to ensure you are connecting with the correct host. If a host is unreachable or if there is a network error, the error Error in fetching fingerprint displays in the Fingerprint field.

- Enter the Root Password for the server, and click OK.

- The Add Hosts window opens, and a list of hosts that are a part of the cluster displays.

- For each host, enter the Name and the Root Password.

- If you wish to use the same password for all hosts, select the Use a Common Password check box and enter the password in the provided text field. Click Apply to set the entered password on all hosts. Make sure the fingerprints are valid and submit your changes by clicking OK.

5.2.8. Explanation of Settings in the Add Hosts Window

| Field | Description |

|---|---|

| Use a common password | Tick this check box to use the same password for all hosts belonging to the cluster. Enter the password in the Password field, then click the Apply button to set the password on all hosts. |

| Name | Enter the name of the host. |

| Hostname/IP | This field is automatically populated with the fully qualified domain name or IP of the host you provided in the New Cluster window. |

| Root Password | Enter a password in this field to use a different root password for each host. This field overrides the common password provided for all hosts in the cluster. |

| Fingerprint | The host fingerprint is displayed to ensure you are connecting with the correct host. This field is automatically populated with the fingerprint of the host you provided in the New Cluster window. |

5.2.9. Removing a Cluster

Move all hosts out of a cluster before removing it.


Procedure 5.8. Removing a Cluster

- Use the resource tabs, tree mode, or the search function to find and select the cluster in the results list.

- Ensure there are no hosts in the cluster.

- Click Remove to open the Remove Cluster(s) confirmation window.

- Click OK.

The cluster is removed.

5.2.10. Changing the Cluster Compatibility Version


Procedure 5.9. Changing the Cluster Compatibility Version

- From the Administration Portal, click the Clusters tab.

- Select the cluster to change from the list displayed.

- Click Edit.

- Change the Compatibility Version to the desired value.

- Click OK to open the Change Cluster Compatibility Version confirmation window.

- Click OK to confirm.

Important