Chapter 5. Develop

5.1. Serverless applications

Serverless applications are created and deployed as Kubernetes services, defined by a route and a configuration, and contained in a YAML file. To deploy a serverless application using OpenShift Serverless, you must create a Knative Service object.

Example Knative Service object YAML file

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: hello
  namespace: default
spec:
  template:
    spec:
      containers:
      - image: docker.io/openshift/hello-openshift
        env:
        - name: RESPONSE
          value: "Hello Serverless!"

You can create a serverless application by using one of the following methods:

- Create a Knative service from the OpenShift Container Platform web console. See the documentation about Creating applications using the Developer perspective.
- Create a Knative service by using the Knative (kn) CLI.
- Create and apply a Knative Service object as a YAML file, by using the oc CLI.
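If you generate manifests from a script, the Service object above can also be built as a plain data structure. The following Python sketch is illustrative only and not part of OpenShift Serverless: the helper name `knative_service` is hypothetical, and it emits JSON rather than YAML so that only the standard library is needed.

```python
import json


def knative_service(name, namespace, image, env=None):
    """Build a minimal Knative Service manifest as a plain dict (hypothetical helper)."""
    container = {"image": image}
    if env:
        # Each environment variable becomes a name/value pair, as in the YAML example.
        container["env"] = [{"name": k, "value": v} for k, v in env.items()]
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"template": {"spec": {"containers": [container]}}},
    }


manifest = knative_service(
    "hello",
    "default",
    "docker.io/openshift/hello-openshift",
    env={"RESPONSE": "Hello Serverless!"},
)
# Print the manifest as JSON so that it can be saved to a file.
print(json.dumps(manifest, indent=2))
```

Because `oc apply -f` accepts JSON as well as YAML, you could save this output to a file such as `hello.json` and apply it in the same way as a YAML file.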

5.1.1. Creating serverless applications by using the Knative CLI

Using the Knative (kn) CLI to create serverless applications provides a more streamlined and intuitive user interface over modifying YAML files directly. You can use the kn service create command to create a basic serverless application.

Prerequisites

- OpenShift Serverless Operator and Knative Serving are installed on your cluster.

- You have installed the Knative (kn) CLI.
- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

Procedure

Create a Knative service:

$ kn service create <service-name> --image <image> --tag <tag-value>

Where:

- --image is the URI of the image for the application.
- --tag is an optional flag that can be used to add a tag to the initial revision that is created with the service.

Example command

$ kn service create event-display \
    --image quay.io/openshift-knative/knative-eventing-sources-event-display:latest

Example output

Creating service 'event-display' in namespace 'default':

  0.271s The Route is still working to reflect the latest desired specification.
  0.580s Configuration "event-display" is waiting for a Revision to become ready.
  3.857s ...
  3.861s Ingress has not yet been reconciled.
  4.270s Ready to serve.

Service 'event-display' created with latest revision 'event-display-bxshg-1' and URL:
http://event-display-default.apps-crc.testing
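When you call the Knative CLI from a script, building the argument list explicitly makes it easy to pass the optional --tag flag only when it is needed. The following Python sketch assumes that kn is installed and uses only the flags shown in this procedure; the helper name `kn_create_args` is hypothetical.

```python
import shlex


def kn_create_args(name, image, tag=None):
    """Return the argument list for `kn service create` (hypothetical helper)."""
    args = ["kn", "service", "create", name, "--image", image]
    if tag is not None:
        # --tag is optional; it tags the initial revision created with the service.
        args += ["--tag", tag]
    return args


args = kn_create_args(
    "event-display",
    "quay.io/openshift-knative/knative-eventing-sources-event-display:latest",
)
# Show the equivalent shell command without running it.
print(shlex.join(args))
```

To run the command, you could pass the list to `subprocess.run(args, check=True)`. Passing a list instead of a single string avoids shell quoting problems.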

5.1.2. Creating a service using offline mode

You can execute kn service commands in offline mode, so that no changes happen on the cluster, and instead the service descriptor file is created on your local machine. After the descriptor file is created, you can modify the file before propagating changes to the cluster.

The offline mode of the Knative CLI is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see https://access.redhat.com/support/offerings/techpreview/.

Prerequisites

- OpenShift Serverless Operator and Knative Serving are installed on your cluster.

- You have installed the Knative (kn) CLI.

Procedure

In offline mode, create a local Knative service descriptor file:

$ kn service create event-display \
    --image quay.io/openshift-knative/knative-eventing-sources-event-display:latest \
    --target ./ \
    --namespace test

Example output

Service 'event-display' created in namespace 'test'.

The --target ./ flag enables offline mode and specifies ./ as the directory for storing the new directory tree.

If you do not specify an existing directory, but use a filename, such as --target my-service.yaml, then no directory tree is created. Instead, only the service descriptor file my-service.yaml is created in the current directory.

The filename can have the .yaml, .yml, or .json extension. Choosing .json creates the service descriptor file in the JSON format.

The --namespace test option places the new service in the test namespace.

If you do not use --namespace, and you are logged in to an OpenShift Container Platform cluster, the descriptor file is created in the current namespace. Otherwise, the descriptor file is created in the default namespace.

Examine the created directory structure:

$ tree ./

Example output

./
└── test
    └── ksvc
        └── event-display.yaml

2 directories, 1 file

- The current ./ directory specified with --target contains the new test/ directory that is named after the specified namespace.
- The test/ directory contains the ksvc directory, named after the resource type.
- The ksvc directory contains the descriptor file event-display.yaml, named according to the specified service name.
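The directory layout and the namespace rules above can be summarized as a small path calculation. The following Python sketch restates the documented behavior and is not the implementation used by kn. It covers only the case where --target names a directory, and the function `descriptor_path` and its parameters are hypothetical.

```python
from pathlib import PurePosixPath


def descriptor_path(target, service, namespace=None, current_namespace=None):
    """Predict where offline `kn service create --target <dir>` writes a YAML descriptor.

    The namespace is resolved in the documented order: the --namespace value,
    then the current namespace if you are logged in (current_namespace), then "default".
    """
    ns = namespace or current_namespace or "default"
    # Layout: <target>/<namespace>/ksvc/<service>.yaml
    return PurePosixPath(target) / ns / "ksvc" / f"{service}.yaml"


print(descriptor_path("./", "event-display", namespace="test"))
# test/ksvc/event-display.yaml
```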

Examine the generated service descriptor file:

$ cat test/ksvc/event-display.yaml

Example output

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  creationTimestamp: null
  name: event-display
  namespace: test
spec:
  template:
    metadata:
      annotations:
        client.knative.dev/user-image: quay.io/openshift-knative/knative-eventing-sources-event-display:latest
      creationTimestamp: null
    spec:
      containers:
      - image: quay.io/openshift-knative/knative-eventing-sources-event-display:latest
        name: ""
        resources: {}
status: {}

List information about the new service:

$ kn service describe event-display --target ./ --namespace test

Example output

Name:       event-display
Namespace:  test
Age:
URL:

Revisions:

Conditions:
  OK TYPE    AGE REASON

The --target ./ option specifies the root directory for the directory structure containing namespace subdirectories.

Alternatively, you can directly specify a YAML or JSON filename with the --target option. The accepted file extensions are .yaml, .yml, and .json.

The --namespace option specifies the namespace, which tells kn which subdirectory contains the necessary service descriptor file.

If you do not use --namespace, and you are logged in to an OpenShift Container Platform cluster, kn searches for the service in the subdirectory that is named after the current namespace. Otherwise, kn searches in the default/ subdirectory.

Use the service descriptor file to create the service on the cluster:

$ kn service create -f test/ksvc/event-display.yaml

Example output

Creating service 'event-display' in namespace 'test':

  0.058s The Route is still working to reflect the latest desired specification.
  0.098s ...
  0.168s Configuration "event-display" is waiting for a Revision to become ready.
 23.377s ...
 23.419s Ingress has not yet been reconciled.
 23.534s Waiting for load balancer to be ready
 23.723s Ready to serve.

Service 'event-display' created to latest revision 'event-display-00001' is available at URL:
http://event-display-test.apps.example.com

5.1.3. Creating serverless applications using YAML

Creating Knative resources by using YAML files uses a declarative API, which enables you to describe applications declaratively and in a reproducible manner. To create a serverless application by using YAML, you must create a YAML file that defines a Knative Service object, then apply it by using oc apply.

After the service is created and the application is deployed, Knative creates an immutable revision for this version of the application. Knative also performs network programming to create a route, ingress, service, and load balancer for your application and automatically scales your pods up and down based on traffic.

Prerequisites

- OpenShift Serverless Operator and Knative Serving are installed on your cluster.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

- Install the OpenShift CLI (oc).

Procedure

Create a YAML file containing the following sample code:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: event-delivery
  namespace: default
spec:
  template:
    spec:
      containers:
      - image: quay.io/openshift-knative/knative-eventing-sources-event-display:latest
        env:
        - name: RESPONSE
          value: "Hello Serverless!"

Navigate to the directory where the YAML file is contained, and deploy the application by applying the YAML file:

$ oc apply -f <filename>

5.1.4. Verifying your serverless application deployment

To verify that your serverless application has been deployed successfully, you must get the application URL created by Knative, and then send a request to that URL and observe the output. OpenShift Serverless supports the use of both HTTP and HTTPS URLs; however, the output from oc get ksvc always prints URLs using the http:// format.

Prerequisites

- OpenShift Serverless Operator and Knative Serving are installed on your cluster.
- You have installed the OpenShift CLI (oc).
- You have created a Knative service.

Procedure

Find the application URL:

$ oc get ksvc <service_name>

Example output

NAME             URL                                         LATESTCREATED          LATESTREADY            READY   REASON
event-delivery   http://event-delivery-default.example.com   event-delivery-4wsd2   event-delivery-4wsd2   True

Make a request to your cluster and observe the output.

Example HTTP request

$ curl http://event-delivery-default.example.com

Example HTTPS request

$ curl https://event-delivery-default.example.com

Example output

Hello Serverless!

Optional. If you receive an error relating to a self-signed certificate in the certificate chain, you can add the --insecure flag to the curl command to ignore the error:

$ curl https://event-delivery-default.example.com --insecure

Example output

Hello Serverless!

Important: Self-signed certificates must not be used in a production deployment. This method is only for testing purposes.

Optional. If your OpenShift Container Platform cluster is configured with a certificate that is signed by a certificate authority (CA) but not yet globally configured for your system, you can specify this with the curl command. The path to the certificate can be passed to the curl command by using the --cacert flag:

$ curl https://event-delivery-default.example.com --cacert <file>

Example output

Hello Serverless!
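The same check can be scripted. The following Python sketch uses only the standard library and mirrors the curl options above: `insecure=True` corresponds to curl --insecure, and `cafile` corresponds to curl --cacert. The function names are hypothetical, and the URL in the commented-out call is the example URL from this procedure.

```python
import ssl
import urllib.request


def tls_context(insecure=False, cafile=None):
    """Build an SSL context that mirrors curl's --insecure or --cacert behavior."""
    if insecure:
        # Like curl --insecure: skip hostname and certificate checks (testing only).
        ctx = ssl.create_default_context()
        ctx.check_hostname = False
        ctx.verify_mode = ssl.CERT_NONE
        return ctx
    # Like curl --cacert <file>: trust only the given CA file.
    # With cafile=None, the system's default CA certificates are used.
    return ssl.create_default_context(cafile=cafile)


def fetch(url, insecure=False, cafile=None, timeout=10):
    """Send a GET request to the application URL and return the body as text."""
    ctx = tls_context(insecure, cafile) if url.startswith("https://") else None
    with urllib.request.urlopen(url, context=ctx, timeout=timeout) as resp:
        return resp.read().decode()


# Requires network access to your cluster:
# print(fetch("https://event-delivery-default.example.com", insecure=True))
```

As with curl, disabling verification is only appropriate for testing with self-signed certificates.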

5.1.5. Interacting with a serverless application using HTTP2 and gRPC

OpenShift Serverless supports only insecure or edge-terminated routes. Insecure or edge-terminated routes do not support HTTP2 on OpenShift Container Platform. These routes also do not support gRPC because gRPC is transported by HTTP2. If you use these protocols in your application, you must call the application using the ingress gateway directly. To do this, you must find the ingress gateway’s public address and the application’s specific host.

This method requires exposing the Kourier gateway by using the LoadBalancer service type. You can configure this by adding the following YAML to your KnativeServing custom resource (CR):

...
spec:
  ingress:
    kourier:
      service-type: LoadBalancer
...

Prerequisites

- OpenShift Serverless Operator and Knative Serving are installed on your cluster.

- Install the OpenShift CLI (oc).
- You have created a Knative service.

Procedure

- Find the application host. See the instructions in Verifying your serverless application deployment.

Find the ingress gateway’s public address:

$ oc -n knative-serving-ingress get svc kourier

Example output

NAME      TYPE           CLUSTER-IP      EXTERNAL-IP                                                             PORT(S)                      AGE
kourier   LoadBalancer   172.30.51.103   a83e86291bcdd11e993af02b7a65e514-33544245.us-east-1.elb.amazonaws.com   80:31380/TCP,443:31390/TCP   67m

The public address is surfaced in the EXTERNAL-IP field, and in this case is a83e86291bcdd11e993af02b7a65e514-33544245.us-east-1.elb.amazonaws.com.

Manually set the host header of your HTTP request to the application’s host, but direct the request itself against the public address of the ingress gateway.

$ curl -H "Host: hello-default.example.com" a83e86291bcdd11e993af02b7a65e514-33544245.us-east-1.elb.amazonaws.com

Example output

Hello Serverless!

You can also make a gRPC request by setting the authority to the application’s host, while directing the request against the ingress gateway directly:

grpc.Dial(
    "a83e86291bcdd11e993af02b7a65e514-33544245.us-east-1.elb.amazonaws.com:80",
    grpc.WithAuthority("hello-default.example.com:80"),
    grpc.WithInsecure(),
)

Note: Ensure that you append the respective port, 80 by default, to both hosts as shown in the previous example.
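The Host-header technique used with curl above can also be expressed with Python's standard http.client module: the TCP connection goes to the ingress gateway's public address, while the Host header names the application. This is a sketch for plain HTTP/1.1 requests only, not HTTP2 or gRPC, and the function name `get_via_gateway` is hypothetical.

```python
import http.client


def get_via_gateway(gateway, app_host, port=80, path="/", timeout=10):
    """GET `path` through the ingress gateway, routed to the app named by `app_host`."""
    conn = http.client.HTTPConnection(gateway, port, timeout=timeout)
    try:
        # Supplying Host explicitly stops http.client from sending the gateway
        # address as the Host header, so the ingress routes on the app's host.
        conn.request("GET", path, headers={"Host": app_host})
        resp = conn.getresponse()
        return resp.status, resp.read().decode()
    finally:
        conn.close()


# Using the addresses from this procedure (requires network access):
# print(get_via_gateway(
#     "a83e86291bcdd11e993af02b7a65e514-33544245.us-east-1.elb.amazonaws.com",
#     "hello-default.example.com",
# ))
```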

5.1.6. Enabling communication with Knative applications on a cluster with restrictive network policies

If you are using a cluster that multiple users have access to, your cluster might use network policies to control which pods, services, and namespaces can communicate with each other over the network. If your cluster uses restrictive network policies, it is possible that Knative system pods are not able to access your Knative application. For example, if your namespace has the following network policy, which denies all requests, Knative system pods cannot access your Knative application:

Example NetworkPolicy object that denies all requests to the namespace

kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: deny-by-default
  namespace: example-namespace
spec:
  podSelector:
  ingress: []

To allow access to your applications from Knative system pods, you must add a label to each of the Knative system namespaces, and then create a NetworkPolicy object in your application namespace that allows access to the namespace for other namespaces that have this label.

A network policy that denies requests to non-Knative services on your cluster still prevents access to these services. However, by allowing access from Knative system namespaces to your Knative application, you are allowing access to your Knative application from all namespaces in the cluster.

If you do not want to allow access to your Knative application from all namespaces on the cluster, you might want to use JSON Web Token authentication for Knative services instead. JSON Web Token authentication for Knative services requires Service Mesh.

Prerequisites

- Install the OpenShift CLI (oc).
- OpenShift Serverless Operator and Knative Serving are installed on your cluster.

Procedure

Add the knative.openshift.io/system-namespace=true label to each Knative system namespace that requires access to your application:

Label the knative-serving namespace:

$ oc label namespace knative-serving knative.openshift.io/system-namespace=true

Label the knative-serving-ingress namespace:

$ oc label namespace knative-serving-ingress knative.openshift.io/system-namespace=true

Label the knative-eventing namespace:

$ oc label namespace knative-eventing knative.openshift.io/system-namespace=true

Label the knative-kafka namespace:

$ oc label namespace knative-kafka knative.openshift.io/system-namespace=true

Create a NetworkPolicy object in your application namespace to allow access from namespaces with the knative.openshift.io/system-namespace label:

Example NetworkPolicy object

apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: <network_policy_name>
  namespace: <namespace>
spec:
  ingress:
  - from:
    - namespaceSelector:
        matchLabels:
          knative.openshift.io/system-namespace: "true"
  podSelector: {}
  policyTypes:
  - Ingress
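A namespaceSelector with matchLabels selects a namespace only when every listed key/value pair is present in that namespace's labels. The following Python sketch illustrates that matching rule for the policy above. It is a simplification that ignores matchExpressions, and the function name `selected_by` and the `other-team` namespace are hypothetical.

```python
SYSTEM_LABEL = {"knative.openshift.io/system-namespace": "true"}


def selected_by(match_labels, namespace_labels):
    """Return True if namespace_labels contains every key/value pair in match_labels."""
    return all(namespace_labels.get(k) == v for k, v in match_labels.items())


namespaces = {
    # Labeled by the oc label commands above.
    "knative-serving": {"knative.openshift.io/system-namespace": "true"},
    "knative-serving-ingress": {"knative.openshift.io/system-namespace": "true"},
    # A hypothetical namespace that was not labeled.
    "other-team": {"team": "payments"},
}
allowed = sorted(ns for ns, labels in namespaces.items() if selected_by(SYSTEM_LABEL, labels))
print(allowed)
# ['knative-serving', 'knative-serving-ingress']
```

Label values are strings, which is why the policy quotes "true". Note also that an empty selector matches every namespace, because there are no pairs to check.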

5.1.7. Configuring init containers

Init containers are specialized containers that are run before application containers in a pod. They are generally used to implement initialization logic for an application, which may include running setup scripts or downloading required configurations.

Init containers may cause longer application start-up times and should be used with caution for serverless applications, which are expected to scale up and down frequently.

Multiple init containers are supported in a single Knative service spec. Knative provides a default, configurable naming template if a template name is not provided. The init containers template can be set by adding an appropriate value in a Knative Service object spec.

Prerequisites

- OpenShift Serverless Operator and Knative Serving are installed on your cluster.

- Before you can use init containers for Knative services, an administrator must add the kubernetes.podspec-init-containers flag to the KnativeServing custom resource (CR). See the OpenShift Serverless "Global configuration" documentation for more information.

Procedure

Add the initContainers spec to a Knative Service object:

Example service spec

apiVersion: serving.knative.dev/v1
kind: Service
...
spec:
  template:
    spec:
      initContainers:
      - imagePullPolicy: IfNotPresent # 1
        image: <image_uri> # 2
        volumeMounts: # 3
        - name: data
          mountPath: /data
...

1. The image pull policy when the image is downloaded.
2. The URI for the init container image.
3. The location where volumes are mounted within the container file system.

5.1.8. HTTPS redirection per service

You can enable or disable HTTPS redirection for a service by configuring the networking.knative.dev/http-option annotation. The following example shows how you can use this annotation in a Knative Service YAML object:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example
  namespace: default
  annotations:
    networking.knative.dev/http-option: "redirected"
spec:
  ...

5.2. Autoscaling

Knative Serving provides automatic scaling, or autoscaling, for applications to match incoming demand. For example, if an application is receiving no traffic, and scale-to-zero is enabled, Knative Serving scales the application down to zero replicas. If scale-to-zero is disabled, the application is scaled down to the minimum number of replicas configured for applications on the cluster. Replicas can also be scaled up to meet demand if traffic to the application increases.

Autoscaling settings for Knative services can be global settings that are configured by cluster administrators, or per-revision settings that are configured for individual services. You can modify per-revision settings for your services by using the OpenShift Container Platform web console, by modifying the YAML file for your service, or by using the Knative (kn) CLI.

Any limits or targets that you set for a service are measured against a single instance of your application. For example, setting the target annotation to 50 configures the autoscaler to scale the application so that each replica handles 50 requests at a time.

5.2.1. Scale bounds

Scale bounds determine the minimum and maximum numbers of replicas that can serve an application at any given time. You can set scale bounds for an application to help prevent cold starts or control computing costs.

5.2.1.1. Minimum scale bounds

The minimum number of replicas that can serve an application is determined by the min-scale annotation. If scale to zero is not enabled, the min-scale value defaults to 1.

The min-scale value defaults to 0 replicas if the following conditions are met:

- The min-scale annotation is not set
- Scaling to zero is enabled
- The class KPA is used
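The defaulting rules above can be restated as a small decision function. This Python sketch encodes only the rules in this section and reads no cluster configuration. The function and parameter names are hypothetical, and returning 1 when scale to zero is enabled but the KPA class is not used is an assumption, because this section only guarantees a default of 0 for KPA.

```python
def effective_min_scale(scale_to_zero_enabled, uses_kpa, annotation=None):
    """Return the min-scale value that applies to a revision under the rules above.

    annotation is the autoscaling.knative.dev/min-scale value as a string, or None.
    """
    if annotation is not None:
        # An explicit min-scale annotation determines the value.
        return int(annotation)
    if scale_to_zero_enabled and uses_kpa:
        # Annotation unset, scale to zero enabled, and the KPA class in use.
        return 0
    # Scale to zero disabled: the documented default is 1.
    # Assumption: the non-KPA case also defaults to 1.
    return 1
```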

Example service spec with min-scale annotation

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-service
  namespace: default
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/min-scale: "0"
...

5.2.1.1.1. Setting the min-scale annotation by using the Knative CLI

Using the Knative (kn) CLI to set the min-scale annotation provides a more streamlined and intuitive user interface over modifying YAML files directly. You can use the kn service command with the --scale-min flag to create or modify the min-scale value for a service.

Prerequisites

- Knative Serving is installed on the cluster.

- You have installed the Knative (kn) CLI.

Procedure

Set the minimum number of replicas for the service by using the --scale-min flag:

$ kn service create <service_name> --image <image_uri> --scale-min <integer>

Example command

$ kn service create example-service --image quay.io/openshift-knative/knative-eventing-sources-event-display:latest --scale-min 2

5.2.1.2. Maximum scale bounds

The maximum number of replicas that can serve an application is determined by the max-scale annotation. If the max-scale annotation is not set, there is no upper limit for the number of replicas created.
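Taken together, min-scale and max-scale bound whatever replica count the autoscaler would otherwise choose. The following Python sketch shows that clamping as a simplification for illustration. The function name is hypothetical, and an unset max-scale annotation is modeled as None, meaning no upper limit.

```python
def bounded_replicas(desired, min_scale=0, max_scale=None):
    """Clamp an autoscaler's desired replica count to the configured scale bounds."""
    replicas = max(desired, min_scale)
    if max_scale is not None:
        # No max-scale annotation means there is no upper limit.
        replicas = min(replicas, max_scale)
    return replicas


print(bounded_replicas(25, min_scale=2, max_scale=10))  # 10
print(bounded_replicas(0, min_scale=2, max_scale=10))   # 2
```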

Example service spec with max-scale annotation

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-service
  namespace: default
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/max-scale: "10"
...

5.2.1.2.1. Setting the max-scale annotation by using the Knative CLI

Using the Knative (kn) CLI to set the max-scale annotation provides a more streamlined and intuitive user interface over modifying YAML files directly. You can use the kn service command with the --scale-max flag to create or modify the max-scale value for a service.

Prerequisites

- Knative Serving is installed on the cluster.

- You have installed the Knative (kn) CLI.

Procedure

Set the maximum number of replicas for the service by using the --scale-max flag:

$ kn service create <service_name> --image <image_uri> --scale-max <integer>

Example command

$ kn service create example-service --image quay.io/openshift-knative/knative-eventing-sources-event-display:latest --scale-max 10

5.2.2. Concurrency

Concurrency determines the number of simultaneous requests that can be processed by each replica of an application at any given time. Concurrency can be configured as a soft limit or a hard limit:

- A soft limit is a targeted requests limit, rather than a strictly enforced bound. For example, if there is a sudden burst of traffic, the soft limit target can be exceeded.

- A hard limit is a strictly enforced upper bound requests limit. If concurrency reaches the hard limit, surplus requests are buffered and must wait until there is enough free capacity to execute the requests.

Important: Using a hard limit configuration is only recommended if there is a clear use case for it with your application. Having a low, hard limit specified may have a negative impact on the throughput and latency of an application, and might cause cold starts.

If you set both a soft target and a hard limit, the autoscaler targets the soft target number of concurrent requests, but enforces the hard limit value as the maximum number of requests.

If the hard limit value is less than the soft limit value, the soft limit value is tuned down, because there is no need to target more requests than the number that can actually be handled.
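The interaction between the two limits can be sketched as follows. This Python function illustrates the rule in the previous paragraph and is not Knative's autoscaler code. The name is hypothetical, and a hard limit of 0 is treated as no limit, matching the containerConcurrency default described in the hard limit procedure.

```python
def effective_concurrency_target(soft_target, hard_limit=0):
    """Return the per-replica concurrency the autoscaler aims for.

    hard_limit == 0 means there is no hard limit.
    """
    if 0 < hard_limit < soft_target:
        # There is no point targeting more requests than a replica may accept.
        return hard_limit
    return soft_target


print(effective_concurrency_target(200, hard_limit=50))  # 50
```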

5.2.2.1. Configuring a soft concurrency target

A soft limit is a targeted requests limit, rather than a strictly enforced bound. For example, if there is a sudden burst of traffic, the soft limit target can be exceeded. You can specify a soft concurrency target for your Knative service by setting the autoscaling.knative.dev/target annotation in the spec, or by using the kn service command with the correct flags.

Procedure

Optional: Set the autoscaling.knative.dev/target annotation for your Knative service in the spec of the Service custom resource:

Example service spec

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-service
  namespace: default
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/target: "200"

Optional: Use the kn service command to specify the --concurrency-target flag:

$ kn service create <service_name> --image <image_uri> --concurrency-target <integer>

Example command to create a service with a concurrency target of 50 requests

$ kn service create example-service --image quay.io/openshift-knative/knative-eventing-sources-event-display:latest --concurrency-target 50

5.2.2.2. Configuring a hard concurrency limit

A hard concurrency limit is a strictly enforced upper bound requests limit. If concurrency reaches the hard limit, surplus requests are buffered and must wait until there is enough free capacity to execute the requests. You can specify a hard concurrency limit for your Knative service by modifying the containerConcurrency spec, or by using the kn service command with the correct flags.

Procedure

Optional: Set the containerConcurrency spec for your Knative service in the spec of the Service custom resource:

Example service spec

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-service
  namespace: default
spec:
  template:
    spec:
      containerConcurrency: 50

The default value is 0, which means that there is no limit on the number of simultaneous requests that are permitted to flow into one replica of the service at a time.

A value greater than 0 specifies the exact number of requests that are permitted to flow into one replica of the service at a time. This example would enable a hard concurrency limit of 50 requests.

Optional: Use the kn service command to specify the --concurrency-limit flag:

$ kn service create <service_name> --image <image_uri> --concurrency-limit <integer>

Example command to create a service with a concurrency limit of 50 requests

$ kn service create example-service --image quay.io/openshift-knative/knative-eventing-sources-event-display:latest --concurrency-limit 50

5.2.2.3. Concurrency target utilization

The target utilization value specifies the percentage of the concurrency limit that is actually targeted by the autoscaler. This is also known as specifying the hotness at which a replica runs, which enables the autoscaler to scale up before the defined hard limit is reached.

For example, if the containerConcurrency value is set to 10, and the target-utilization-percentage value is set to 70 percent, the autoscaler creates a new replica when the average number of concurrent requests across all existing replicas reaches 7. Requests numbered 7 to 10 are still sent to the existing replicas, but additional replicas are started in anticipation of being required after the containerConcurrency value is reached.
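The arithmetic in this example, and a rough estimate of how many replicas it implies, can be sketched in Python. The replica estimate assumes that the autoscaler divides the total concurrent requests by the per-replica threshold and rounds up. Treat it as an approximation rather than the exact Knative algorithm, which also averages metrics over time windows. The function names are hypothetical.

```python
import math


def scale_up_threshold(container_concurrency, utilization_pct):
    """Concurrent requests per replica at which the autoscaler starts adding replicas."""
    return container_concurrency * utilization_pct / 100


def estimated_replicas(total_concurrency, container_concurrency, utilization_pct):
    """Rough estimate: total load divided by the per-replica threshold, rounded up."""
    threshold = scale_up_threshold(container_concurrency, utilization_pct)
    return math.ceil(total_concurrency / threshold)


print(scale_up_threshold(10, 70))      # 7.0
print(estimated_replicas(35, 10, 70))  # 5
```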

Example service configured using the target-utilization-percentage annotation

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-service
  namespace: default
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/target-utilization-percentage: "70"
...

5.3. Traffic management

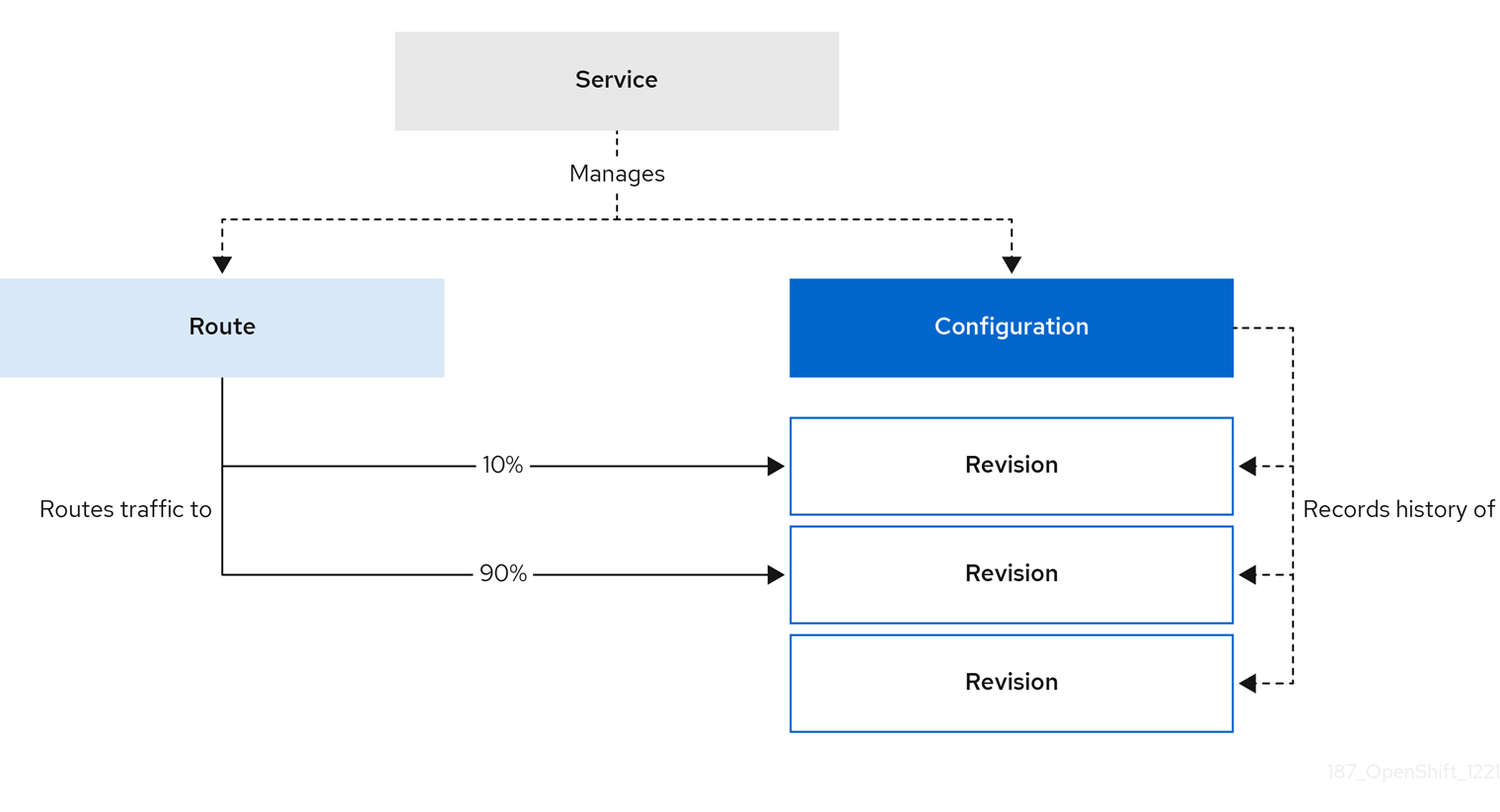

In a Knative application, traffic can be managed by creating a traffic split. A traffic split is configured as part of a route, which is managed by a Knative service.

Configuring a route allows requests to be sent to different revisions of a service. This routing is determined by the traffic spec of the Service object.

A traffic spec declaration consists of one or more revisions, each responsible for handling a portion of the overall traffic. The percentages of traffic routed to each revision must add up to 100%, which is ensured by a Knative validation.

The revisions specified in a traffic spec can either be a fixed, named revision, or can point to the “latest” revision, which tracks the head of the list of all revisions for the service. The "latest" revision is a type of floating reference that updates if a new revision is created. Each revision can have a tag attached that creates an additional access URL for that revision.
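Knative performs this validation on the cluster. As an illustration only, the following Python sketch checks two constraints stated in this chapter on a traffic block written as a list of dictionaries: the percentages must total 100, and each tag must be unique within the block. It does not reproduce every rule that Knative enforces, the function name is hypothetical, and counting an entry without `percent` as 0 is an assumption of the sketch.

```python
def validate_traffic(traffic):
    """Return a list of problems found in a traffic block (empty if none)."""
    problems = []
    # Assumption for this sketch: an entry without "percent" counts as 0.
    total = sum(entry.get("percent", 0) for entry in traffic)
    if total != 100:
        problems.append(f"percentages sum to {total}, not 100")
    tags = [entry["tag"] for entry in traffic if "tag" in entry]
    duplicates = sorted({t for t in tags if tags.count(t) > 1})
    if duplicates:
        problems.append(f"duplicate tags: {', '.join(duplicates)}")
    return problems


split = [
    {"tag": "current", "revisionName": "example-service-1", "percent": 50},
    {"tag": "candidate", "revisionName": "example-service-2", "percent": 50},
    {"tag": "latest", "latestRevision": True, "percent": 0},
]
print(validate_traffic(split))  # []
```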

The traffic spec can be modified by:

- Editing the YAML of a Service object directly.
- Using the Knative (kn) CLI --traffic flag.
- Using the OpenShift Container Platform web console.

When you create a Knative service, it does not have any default traffic spec settings.

5.3.1. Traffic spec examples

The following example shows a traffic spec where 100% of traffic is routed to the latest revision of the service. Under status, you can see the name of the latest revision that latestRevision resolves to:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-service
  namespace: default
spec:
  ...
  traffic:
  - latestRevision: true
    percent: 100
status:
  ...
  traffic:
  - percent: 100
    revisionName: example-service

The following example shows a traffic spec where 100% of traffic is routed to the revision tagged as current, and the name of that revision is specified as example-service. The revision tagged as latest is kept available, even though no traffic is routed to it:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-service
  namespace: default
spec:
  ...
  traffic:
  - tag: current
    revisionName: example-service
    percent: 100
  - tag: latest
    latestRevision: true
    percent: 0

The following example shows how the list of revisions in the traffic spec can be extended so that traffic is split between multiple revisions. This example sends 50% of traffic to the revision tagged as current, and 50% of traffic to the revision tagged as candidate. The revision tagged as latest is kept available, even though no traffic is routed to it:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-service
  namespace: default
spec:
  ...
  traffic:
  - tag: current
    revisionName: example-service-1
    percent: 50
  - tag: candidate
    revisionName: example-service-2
    percent: 50
  - tag: latest
    latestRevision: true
    percent: 0

5.3.2. Knative CLI traffic management flags

The Knative (kn) CLI supports traffic operations on the traffic block of a service as part of the kn service update command.

The following table displays a summary of traffic splitting flags, value formats, and the operation each flag performs. The Repetition column indicates whether the flag can be specified more than once with that value format in a single kn service update command.

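| Flag | Value(s) | Operation | Repetition |
|---|---|---|---|
| --traffic | RevisionName=Percent | Gives Percent traffic to RevisionName | Yes |
| --traffic | Tag=Percent | Gives Percent traffic to the revision having Tag | Yes |
| --traffic | @latest=Percent | Gives Percent traffic to the latest ready revision | No |
| --tag | RevisionName=Tag | Gives Tag to RevisionName | Yes |
| --tag | @latest=Tag | Gives Tag to the latest ready revision | No |
| --untag | Tag | Removes Tag from revision | Yes |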

5.3.2.1. Multiple flags and order precedence

All traffic-related flags can be specified using a single kn service update command. kn defines the precedence of these flags. The order of the flags specified when using the command is not taken into account.

The precedence of the flags as they are evaluated by kn is:

- --untag: All the referenced revisions with this flag are removed from the traffic block.
- --tag: Revisions are tagged as specified in the traffic block.
- --traffic: The referenced revisions are assigned a portion of the traffic split.

You can add tags to revisions and then split traffic according to the tags you have set.
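Because kn applies these flags in a fixed order, their position on the command line does not matter. The following Python sketch illustrates only that ordering rule by sorting (flag, value) pairs by the precedence listed above. It is not how kn parses arguments, and the names and flag values are hypothetical.

```python
PRECEDENCE = {"--untag": 0, "--tag": 1, "--traffic": 2}


def evaluation_order(flags):
    """Order (flag, value) pairs the way kn evaluates them: untag, tag, traffic."""
    # sorted() is stable, so repeated flags keep their relative order.
    return sorted(flags, key=lambda pair: PRECEDENCE[pair[0]])


cli_flags = [
    ("--traffic", "candidate=20,@latest=80"),
    ("--tag", "example-service-2=candidate"),
    ("--untag", "old"),
]
for flag, value in evaluation_order(cli_flags):
    print(flag, value)
```

Since tagging happens before traffic assignment, a --traffic value can refer to a tag that is created by the same command.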

5.3.2.2. Custom URLs for revisions

Assigning a --tag flag to a service by using the kn service update command creates a custom URL for the revision that is created when you update the service. The custom URL follows the pattern https://<tag>-<service_name>-<namespace>.<domain> or http://<tag>-<service_name>-<namespace>.<domain>.
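The URL pattern can be expressed as a simple string template. This Python sketch only fills in the pattern shown above; the domain depends on your cluster, `example.com` is a placeholder, and the function name is hypothetical.

```python
def tag_url(tag, service, namespace, domain, https=True):
    """Build the custom URL that a revision tag exposes, following the documented pattern."""
    scheme = "https" if https else "http"
    return f"{scheme}://{tag}-{service}-{namespace}.{domain}"


print(tag_url("candidate", "example-service", "default", "example.com"))
# https://candidate-example-service-default.example.com
```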

The --tag and --untag flags use the following syntax:

- Require one value.

- Denote a unique tag in the traffic block of the service.

- Can be specified multiple times in one command.

5.3.2.2.1. Example: Assign a tag to a revision

The following example assigns the tag example-tag to the latest ready revision:

$ kn service update <service_name> --tag @latest=example-tag

5.3.2.2.2. Example: Remove a tag from a revision

You can remove a tag, and with it the custom URL, by using the --untag flag.

If a revision has its tags removed, and it is assigned 0% of the traffic, the revision is removed from the traffic block entirely.

The following command removes the example-tag tag from the revision that it is assigned to:

$ kn service update <service_name> --untag example-tag

5.3.3. Creating a traffic split by using the Knative CLI

Using the Knative (kn) CLI to create traffic splits provides a more streamlined and intuitive user interface over modifying YAML files directly. You can use the kn service update command to split traffic between revisions of a service.

Prerequisites

- The OpenShift Serverless Operator and Knative Serving are installed on your cluster.

- You have installed the Knative (kn) CLI.
- You have created a Knative service.

Procedure

Specify the revision of your service and the percentage of traffic that you want to route to it by using the --traffic flag with a standard kn service update command:

Example command

$ kn service update <service_name> --traffic <revision>=<percentage>

Where:

- <service_name> is the name of the Knative service that you are configuring traffic routing for.

- <revision> is the revision that you want to configure to receive a percentage of traffic. You can either specify the name of the revision, or a tag that you assigned to the revision by using the --tag flag.

- <percentage> is the percentage of traffic that you want to send to the specified revision.

- Optional: The --traffic flag can be specified multiple times in one command. For example, if you have the latest ready revision, referenced as @latest, and a revision named stable, you can specify the percentage of traffic that you want to split to each revision as follows:

Example command

$ kn service update example-service --traffic @latest=20,stable=80

If you have multiple revisions and do not specify the percentage of traffic that should be split to the last revision, the --traffic flag can calculate this automatically. For example, if you have a third revision named example, and you use the following command:

Example command

$ kn service update example-service --traffic @latest=10,stable=60

The remaining 30% of traffic is split to the example revision, even though it was not specified.
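The automatic calculation in the previous example is simple arithmetic: the one unspecified revision receives whatever is left of 100%. The following Python sketch reproduces that arithmetic. It is an illustrative model of the documented behavior, not kn's source code, and the helper name remaining_percent is hypothetical.

```python
def remaining_percent(split: dict[str, int]) -> int:
    """Return the share of traffic left over for a single unspecified revision."""
    if any(p < 0 or p > 100 for p in split.values()):
        raise ValueError("each percentage must be between 0 and 100")
    total = sum(split.values())
    if total > 100:
        raise ValueError(f"percentages add up to {total}, which exceeds 100")
    return 100 - total


# Mirrors: kn service update example-service --traffic @latest=10,stable=60
print(remaining_percent({"@latest": 10, "stable": 60}))  # 30
```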

5.3.4. Managing traffic between revisions by using the OpenShift Container Platform web console

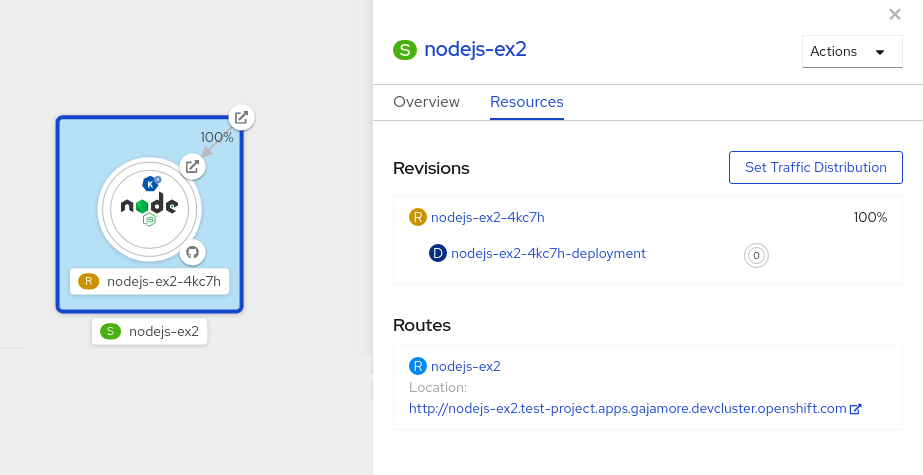

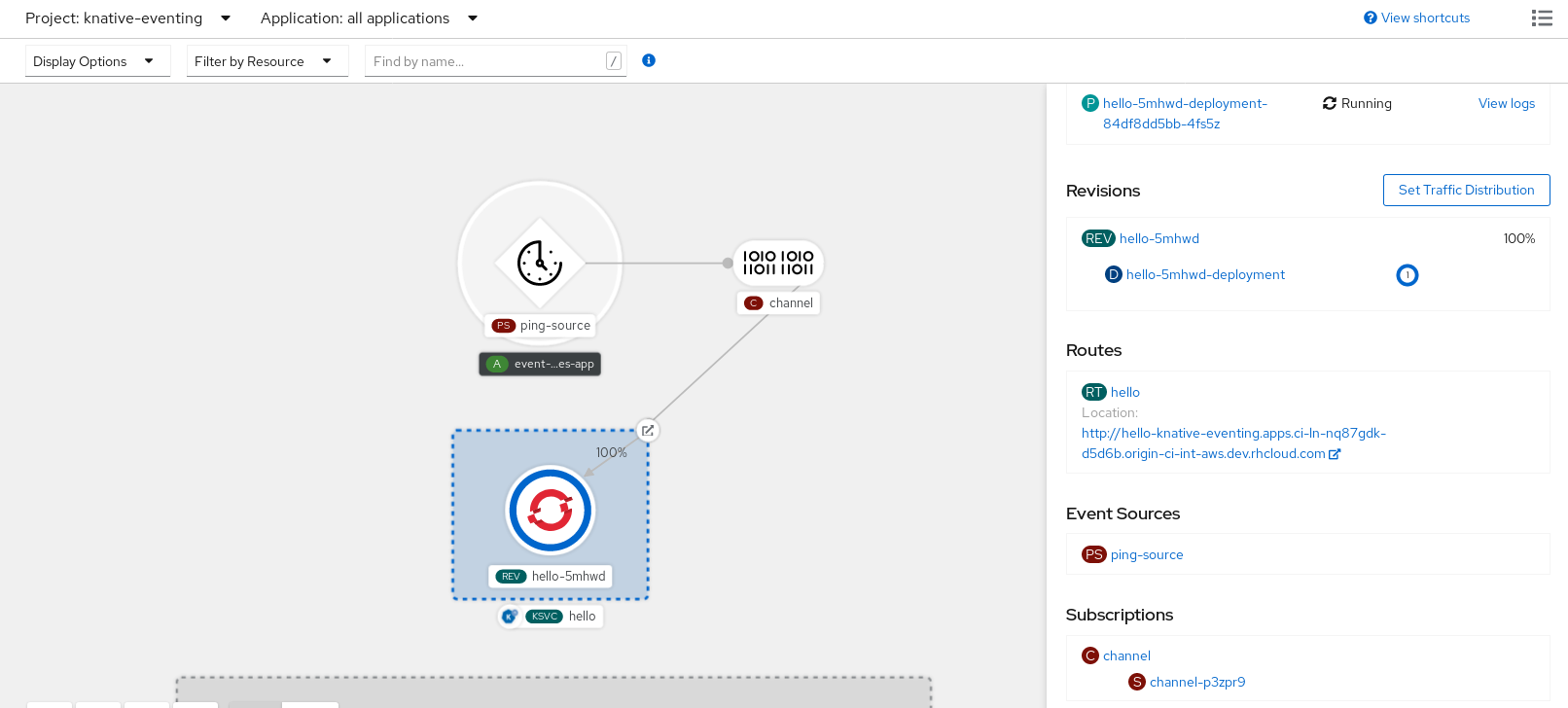

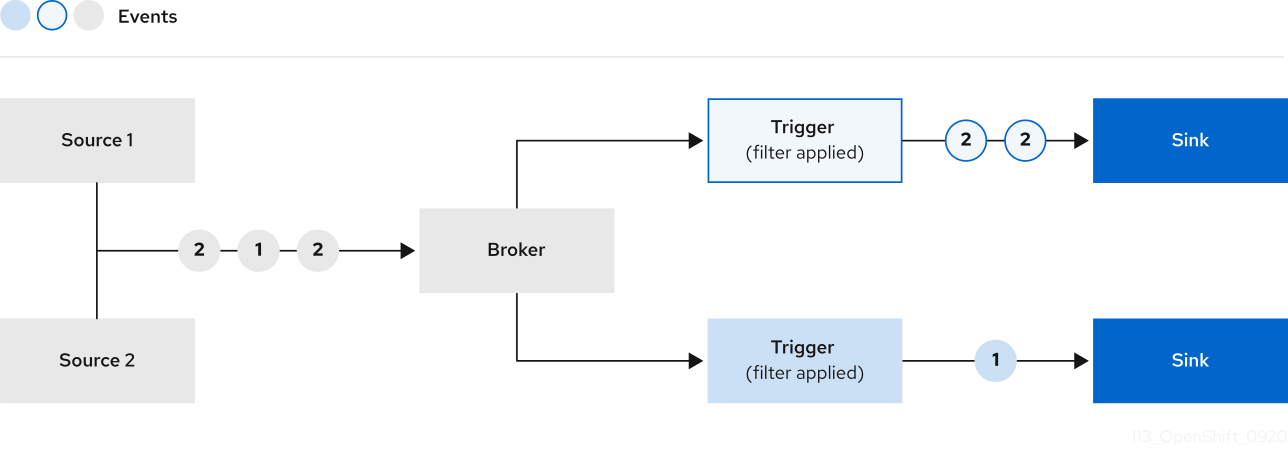

After you create a serverless application, the application is displayed in the Topology view of the Developer perspective in the OpenShift Container Platform web console. The application revision is represented by the node, and the Knative service is indicated by a quadrilateral around the node.

Any new change in the code or the service configuration creates a new revision, which is a snapshot of the code at a given time. For a service, you can manage the traffic between the revisions of the service by splitting and routing it to the different revisions as required.

Prerequisites

- The OpenShift Serverless Operator and Knative Serving are installed on your cluster.

- You have logged in to the OpenShift Container Platform web console.

Procedure

To split traffic between multiple revisions of an application in the Topology view:

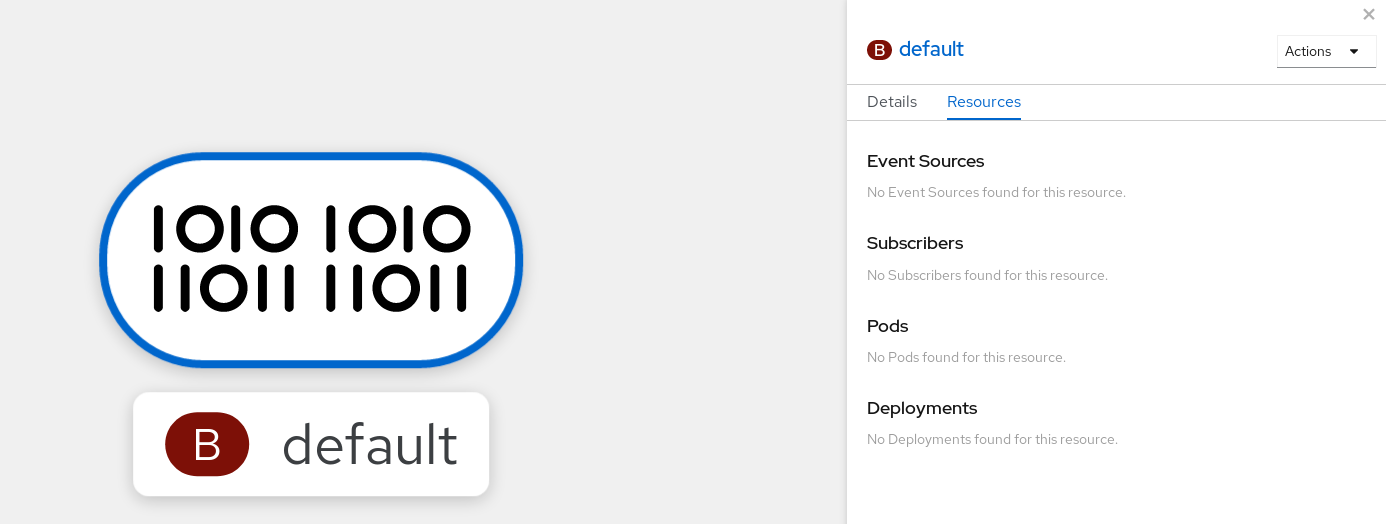

- Click the Knative service to see its overview in the side panel.

Click the Resources tab to see a list of Revisions and Routes for the service.

Figure 5.1. Serverless application

- Click the service, indicated by the S icon at the top of the side panel, to see an overview of the service details.

-

Click the YAML tab and modify the service configuration in the YAML editor, and click Save. For example, change the

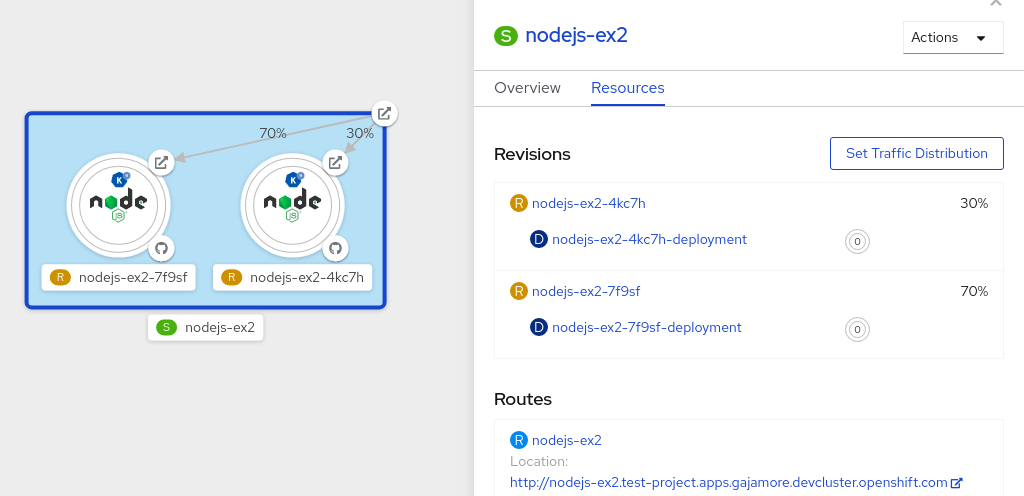

timeoutsecondsfrom 300 to 301 . This change in the configuration triggers a new revision. In the Topology view, the latest revision is displayed and the Resources tab for the service now displays the two revisions. In the Resources tab, click to see the traffic distribution dialog box:

- Add the split traffic percentage portion for the two revisions in the Splits field.

- Add tags to create custom URLs for the two revisions.

Click Save to see two nodes representing the two revisions in the Topology view.

Figure 5.2. Serverless application revisions

5.3.5. Routing and managing traffic by using a blue-green deployment strategy

You can safely reroute traffic from a production version of an app to a new version by using a blue-green deployment strategy.

Prerequisites

- The OpenShift Serverless Operator and Knative Serving are installed on the cluster.

- Install the OpenShift CLI (oc).

Procedure

- Create and deploy an app as a Knative service.

Find the name of the first revision that was created when you deployed the service, by viewing the output from the following command:

$ oc get ksvc <service_name> -o=jsonpath='{.status.latestCreatedRevisionName}'

Example command

$ oc get ksvc example-service -o=jsonpath='{.status.latestCreatedRevisionName}'

Example output

example-service-00001

Add the following YAML to the service spec to send inbound traffic to the revision:

...
spec:
  traffic:
    - revisionName: <first_revision_name>
      percent: 100 # All traffic goes to this revision
...

Verify that you can view your app at the URL output you get from running the following command:

$ oc get ksvc <service_name>

- Deploy a second revision of your app by modifying at least one field in the template spec of the service and redeploying it. For example, you can modify the image of the service, or an env environment variable. You can redeploy the service by applying the service YAML file, or by using the kn service update command if you have installed the Knative (kn) CLI.

Find the name of the second, latest revision that was created when you redeployed the service, by running the command:

$ oc get ksvc <service_name> -o=jsonpath='{.status.latestCreatedRevisionName}'

At this point, both the first and second revisions of the service are deployed and running.

Update your existing service to create a new test endpoint for the second revision, while still sending all other traffic to the first revision:

Example of updated service spec with test endpoint

...
spec:
  traffic:
    - revisionName: <first_revision_name>
      percent: 100 # All traffic is still being routed to the first revision
    - revisionName: <second_revision_name>
      percent: 0 # No traffic is routed to the second revision
      tag: v2 # A named route
...

After you redeploy this service by reapplying the YAML resource, the second revision of the app is staged. No traffic is routed to the second revision at the main URL, and Knative creates a dedicated route, tagged v2, for testing the newly deployed revision.

Get the URL of the v2 route for the second revision by running the following command:

$ oc get ksvc <service_name> --output jsonpath="{.status.traffic[*].url}"

You can use this URL to validate that the new version of the app is behaving as expected before you route any traffic to it.

Update your existing service again, so that 50% of traffic is sent to the first revision, and 50% is sent to the second revision:

Example of updated service spec splitting traffic 50/50 between revisions

...
spec:
  traffic:
    - revisionName: <first_revision_name>
      percent: 50
    - revisionName: <second_revision_name>
      percent: 50
      tag: v2
...

When you are ready to route all traffic to the new version of the app, update the service again to send 100% of traffic to the second revision:

Example of updated service spec sending all traffic to the second revision

...
spec:
  traffic:
    - revisionName: <first_revision_name>
      percent: 0
    - revisionName: <second_revision_name>
      percent: 100
      tag: v2
...

Tip

You can remove the first revision instead of setting it to 0% of traffic if you do not plan to roll back the revision. Non-routable revision objects are then garbage-collected.

- Visit the URL of the first revision to verify that no more traffic is being sent to the old version of the app.
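The traffic blocks used in the blue-green procedure can also be generated programmatically, which makes it easy to check that every stage routes exactly 100% of traffic. The following Python sketch builds the spec.traffic fragment for each stage and prints it as JSON; you would merge each fragment into your full Service manifest before applying it. The helper blue_green_traffic and the revision names example-service-00001 and example-service-00002 are hypothetical.

```python
import json


def blue_green_traffic(first: str, second: str, second_percent: int,
                       tag: str = "v2") -> list[dict]:
    """Build a spec.traffic list that sends second_percent to the new revision.

    The second revision always carries a tag, so it keeps a dedicated test URL
    even while it receives 0% of the traffic.
    """
    if not 0 <= second_percent <= 100:
        raise ValueError("second_percent must be between 0 and 100")
    return [
        {"revisionName": first, "percent": 100 - second_percent},
        {"revisionName": second, "percent": second_percent, "tag": tag},
    ]


# Stages from the procedure: staged (0%), 50/50 split, full cutover (100%).
for pct in (0, 50, 100):
    stage = blue_green_traffic("example-service-00001", "example-service-00002", pct)
    print(json.dumps({"spec": {"traffic": stage}}))
```

Keeping the tag on the second revision in every stage mirrors the procedure, where the v2 route remains available for testing throughout the rollout.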

5.4. Routing

Knative leverages OpenShift Container Platform TLS termination to provide routing for Knative services. When a Knative service is created, an OpenShift Container Platform route is automatically created for the service. This route is managed by the OpenShift Serverless Operator. The OpenShift Container Platform route exposes the Knative service through the same domain as the OpenShift Container Platform cluster.

You can disable Operator control of OpenShift Container Platform routing so that you can configure a Knative route to directly use your TLS certificates instead.

Knative routes can also be used alongside the OpenShift Container Platform route to provide additional fine-grained routing capabilities, such as traffic splitting.

5.4.1. Customizing labels and annotations for OpenShift Container Platform routes

OpenShift Container Platform routes support the use of custom labels and annotations, which you can configure by modifying the metadata spec of a Knative service. Custom labels and annotations are propagated from the service to the Knative route, then to the Knative ingress, and finally to the OpenShift Container Platform route.

Prerequisites

- You must have the OpenShift Serverless Operator and Knative Serving installed on your OpenShift Container Platform cluster.

- Install the OpenShift CLI (oc).

Procedure

Create a Knative service that contains the label or annotation that you want to propagate to the OpenShift Container Platform route:

To create a service by using YAML:

Example service created by using YAML

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: <service_name>
  labels:
    <label_name>: <label_value>
  annotations:
    <annotation_name>: <annotation_value>
...

To create a service by using the Knative (kn) CLI, enter:

Example service created by using a kn command

$ kn service create <service_name> \
  --image=<image> \
  --annotation <annotation_name>=<annotation_value> \
  --label <label_name>=<label_value>

Verify that the OpenShift Container Platform route has been created with the annotation or label that you added by inspecting the output from the following command:

Example command for verification

$ oc get routes.route.openshift.io \
  -l serving.knative.openshift.io/ingressName=<service_name>,serving.knative.openshift.io/ingressNamespace=<service_namespace> \
  -n knative-serving-ingress -o yaml \
  | grep -e "<label_name>: \"<label_value>\"" -e "<annotation_name>: <annotation_value>"
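If you generate service manifests from code, the placement of labels and annotations shown in the YAML example can be reproduced as follows. This Python sketch is illustrative only: the helper name service_manifest and the example.com label and annotation keys are hypothetical. It prints JSON, which oc apply -f also accepts.

```python
import json


def service_manifest(name: str, image: str, labels: dict[str, str],
                     annotations: dict[str, str]) -> dict:
    """Build a Knative Service whose metadata carries labels and annotations.

    Labels and annotations set under the service metadata are the ones that
    are propagated to the Knative route, the Knative ingress, and the
    OpenShift Container Platform route.
    """
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {
            "name": name,
            # Kubernetes requires label and annotation values to be strings.
            "labels": {k: str(v) for k, v in labels.items()},
            "annotations": {k: str(v) for k, v in annotations.items()},
        },
        "spec": {"template": {"spec": {"containers": [{"image": image}]}}},
    }


manifest = service_manifest(
    "example-service",
    "docker.io/openshift/hello-openshift",
    labels={"example.com/team": "payments"},   # hypothetical label
    annotations={"example.com/owner": "ops"},  # hypothetical annotation
)
print(json.dumps(manifest, indent=2))
```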

5.4.2. Configuring OpenShift Container Platform routes for Knative services

If you want to configure a Knative service to use your TLS certificate on OpenShift Container Platform, you must disable the automatic creation of a route for the service by the OpenShift Serverless Operator and instead manually create a route for the service.

When you complete the following procedure, the default OpenShift Container Platform route in the knative-serving-ingress namespace is not created. However, the Knative route for the application is still created in this namespace.

Prerequisites

- The OpenShift Serverless Operator and Knative Serving component must be installed on your OpenShift Container Platform cluster.

- Install the OpenShift CLI (oc).

Procedure

Create a Knative service that includes the serving.knative.openshift.io/disableRoute=true annotation:

Important

The serving.knative.openshift.io/disableRoute=true annotation instructs OpenShift Serverless to not automatically create a route for you. However, the service still shows a URL and reaches a status of Ready. This URL does not work externally until you create your own route with the same hostname as the hostname in the URL.

Create a Knative Service resource:

Example resource

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: <service_name>
  annotations:
    serving.knative.openshift.io/disableRoute: "true"
spec:
  template:
    spec:
      containers:
        - image: <image>
...

Apply the Service resource:

$ oc apply -f <filename>

Optional: Create a Knative service by using the kn service create command:

Example kn command

$ kn service create <service_name> \
  --image=gcr.io/knative-samples/helloworld-go \
  --annotation serving.knative.openshift.io/disableRoute=true

Verify that no OpenShift Container Platform route has been created for the service:

Example command

$ oc get routes.route.openshift.io \
  -l serving.knative.openshift.io/ingressName=<service_name>,serving.knative.openshift.io/ingressNamespace=<service_namespace> \
  -n knative-serving-ingress

Example output

No resources found in knative-serving-ingress namespace.

Create a Route resource in the knative-serving-ingress namespace:

apiVersion: route.openshift.io/v1
kind: Route
metadata:
  annotations:
    haproxy.router.openshift.io/timeout: 600s # 1
  name: <route_name> # 2
  namespace: knative-serving-ingress # 3
spec:
  host: <service_host> # 4
  port:
    targetPort: http2
  to:
    kind: Service
    name: kourier
    weight: 100
  tls:
    insecureEdgeTerminationPolicy: Allow
    termination: edge # 5
    key: |-
      -----BEGIN PRIVATE KEY-----
      [...]
      -----END PRIVATE KEY-----
    certificate: |-
      -----BEGIN CERTIFICATE-----
      [...]
      -----END CERTIFICATE-----
    caCertificate: |-
      -----BEGIN CERTIFICATE-----
      [...]
      -----END CERTIFICATE-----
  wildcardPolicy: None

- 1: The timeout value for the OpenShift Container Platform route. You must set the same value as the max-revision-timeout-seconds setting (600s by default).

- 2: The name of the OpenShift Container Platform route.

- 3: The namespace for the OpenShift Container Platform route. This must be knative-serving-ingress.

- 4: The hostname for external access. You can set this to <service_name>-<service_namespace>.<domain>.

- 5: The certificates you want to use. Currently, only edge termination is supported.

Apply the Route resource:

$ oc apply -f <filename>
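Before you apply a manually created route, you can check it against the requirements listed in the callouts above. The following Python sketch does this for a Route represented as a dictionary, for example after loading the YAML file with a YAML parser. The function names expected_host and check_route and the sample values are hypothetical, and the checks cover only the callout requirements, not full Route validation.

```python
def expected_host(service_name: str, namespace: str, domain: str) -> str:
    """Hostname in the <service_name>-<service_namespace>.<domain> form."""
    return f"{service_name}-{namespace}.{domain}"


def check_route(route: dict, max_revision_timeout: str = "600s") -> list[str]:
    """Return a list of problems found in a Route for a Knative service."""
    problems = []
    meta = route.get("metadata", {})
    spec = route.get("spec", {})
    if meta.get("namespace") != "knative-serving-ingress":
        problems.append("metadata.namespace must be knative-serving-ingress")
    timeout = meta.get("annotations", {}).get("haproxy.router.openshift.io/timeout")
    if timeout != max_revision_timeout:
        problems.append(f"route timeout {timeout!r} should equal {max_revision_timeout!r}")
    if spec.get("tls", {}).get("termination") != "edge":
        problems.append("only edge TLS termination is supported")
    return problems


route = {
    "metadata": {
        "name": "example-route",
        "namespace": "knative-serving-ingress",
        "annotations": {"haproxy.router.openshift.io/timeout": "600s"},
    },
    "spec": {
        "host": expected_host("example-service", "default", "apps.example.com"),
        "tls": {"termination": "edge"},
    },
}
print(check_route(route))  # []
```

If you changed max-revision-timeout-seconds from its default, pass the new value as max_revision_timeout.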

5.4.3. Setting cluster availability to cluster local

By default, Knative services are published to a public IP address. Being published to a public IP address means that Knative services are public applications, and have a publicly accessible URL.

Publicly accessible URLs are accessible from outside of the cluster. However, developers might need to build back-end services that are only accessible from inside the cluster, known as private services. Developers can label individual services in the cluster with the networking.knative.dev/visibility=cluster-local label to make them private.

For OpenShift Serverless 1.15.0 and newer versions, the serving.knative.dev/visibility label is no longer available. You must update existing services to use the networking.knative.dev/visibility label instead.

Prerequisites

- The OpenShift Serverless Operator and Knative Serving are installed on the cluster.

- You have created a Knative service.

Procedure

Set the visibility for your service by adding the networking.knative.dev/visibility=cluster-local label:

$ oc label ksvc <service_name> networking.knative.dev/visibility=cluster-local

Verification

Check that the URL for your service is now in the format http://<service_name>.<namespace>.svc.cluster.local, by entering the following command and reviewing the output:

$ oc get ksvc

Example output

NAME    URL                                      LATESTCREATED   LATESTREADY   READY   REASON
hello   http://hello.default.svc.cluster.local   hello-tx2g7     hello-tx2g7   True
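The verification step amounts to checking that the service URL has the in-cluster form. The following Python sketch builds and checks that form. It assumes the default Kubernetes cluster domain cluster.local; if your cluster uses a different cluster domain, pass it explicitly. The function names are hypothetical.

```python
from urllib.parse import urlparse


def cluster_local_url(service_name: str, namespace: str,
                      cluster_domain: str = "cluster.local") -> str:
    """Return the in-cluster URL of a cluster-local Knative service."""
    return f"http://{service_name}.{namespace}.svc.{cluster_domain}"


def is_cluster_local(url: str, cluster_domain: str = "cluster.local") -> bool:
    """Return True if the URL's host is in the cluster's svc domain."""
    host = urlparse(url).hostname or ""
    return host.endswith(f".svc.{cluster_domain}")


print(cluster_local_url("hello", "default"))
# prints http://hello.default.svc.cluster.local
print(is_cluster_local("http://hello.default.svc.cluster.local"))  # True
```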

5.5. Event sinks

When you create an event source, you can specify a sink to which the source sends events. A sink is an addressable or a callable resource that can receive incoming events from other resources. Knative services, channels, and brokers are all examples of sinks.

Addressable objects receive and acknowledge an event delivered over HTTP to an address defined in their status.address.url field. As a special case, the core Kubernetes Service object also fulfills the addressable interface.

Callable objects are able to receive an event delivered over HTTP and transform the event, returning 0 or 1 new events in the HTTP response. These returned events may be further processed in the same way that events from an external event source are processed.

5.5.1. Knative CLI sink flag

When you create an event source by using the Knative (kn) CLI, you can use the --sink flag to specify a sink to which that resource sends events. The sink can be any addressable or callable resource that can receive incoming events from other resources.

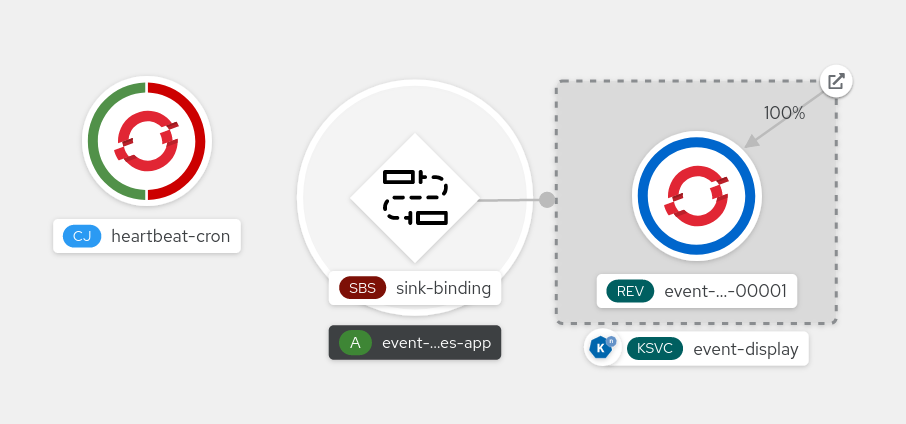

The following example creates a sink binding that uses a service, http://event-display.svc.cluster.local, as the sink:

Example command using the sink flag

$ kn source binding create bind-heartbeat \
  --namespace sinkbinding-example \
  --subject "Job:batch/v1:app=heartbeat-cron" \
  --sink http://event-display.svc.cluster.local \
  --ce-override "sink=bound"

The svc in http://event-display.svc.cluster.local determines that the sink is a Knative service. Other default sink prefixes include channel and broker.

You can configure which CRs can be used with the --sink flag for Knative (kn) CLI commands. See the documentation about Customizing kn.
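The prefix:name form of --sink values used in this chapter, such as broker:<broker_name> and ksvc:<service_name>, can be illustrated with a small parser. This Python sketch is not the kn implementation: it only separates a prefix from a resource name and treats http:// and https:// values as URIs. The set of accepted prefixes is an assumption based on the channel and broker prefixes named in this section and the ksvc prefix used in this chapter's examples; the function name parse_sink is hypothetical.

```python
from urllib.parse import urlparse

# Assumed prefixes, taken from the examples in this chapter.
KNOWN_PREFIXES = {"ksvc", "broker", "channel"}


def parse_sink(value: str) -> tuple[str, str]:
    """Split a --sink value into (kind, target)."""
    if urlparse(value).scheme in ("http", "https"):
        return ("uri", value)
    prefix, sep, name = value.partition(":")
    if not sep or not name:
        raise ValueError(f"expected <prefix>:<name> or a URL, got {value!r}")
    if prefix not in KNOWN_PREFIXES:
        raise ValueError(f"unknown sink prefix {prefix!r}")
    return (prefix, name)


print(parse_sink("broker:default"))                          # ('broker', 'default')
print(parse_sink("http://event-display.svc.cluster.local"))  # ('uri', 'http://event-display.svc.cluster.local')
```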

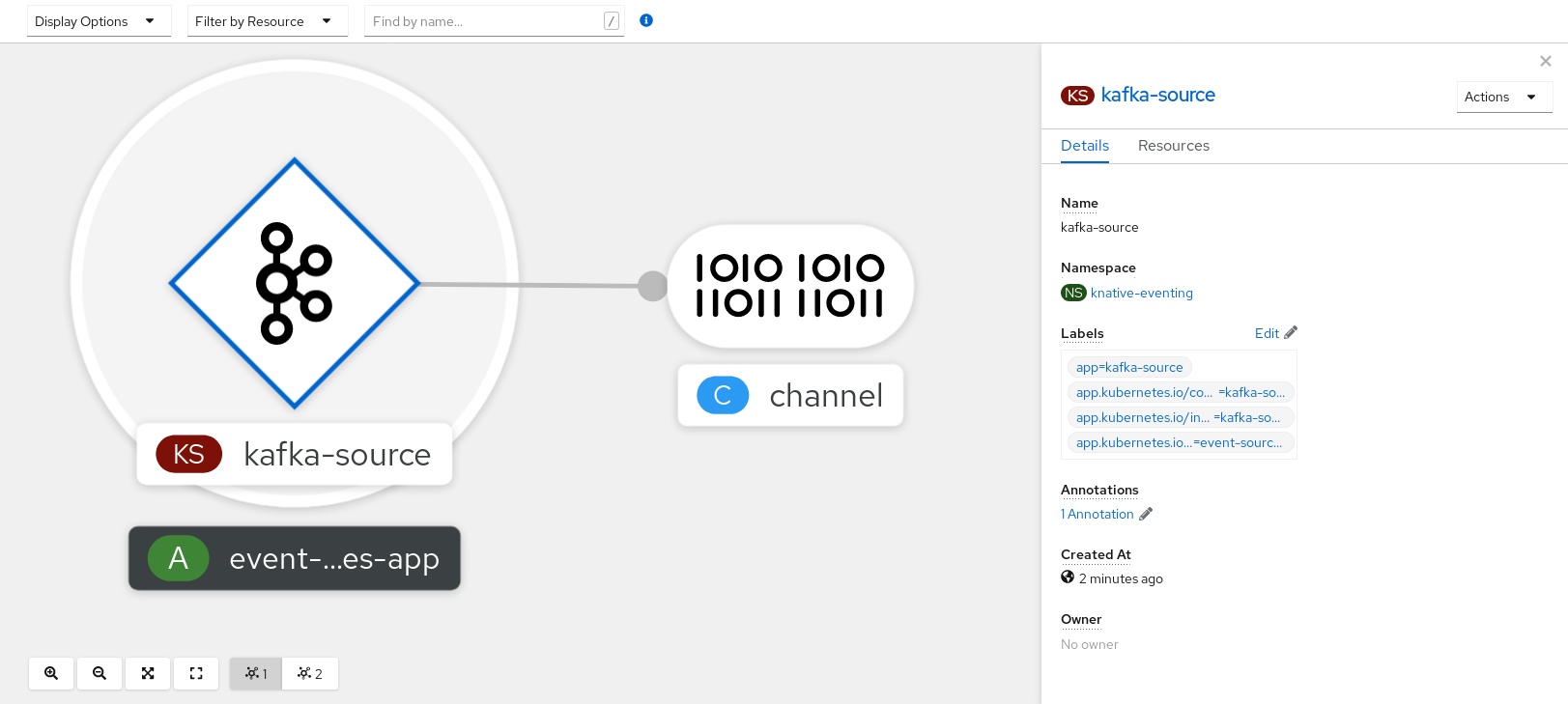

5.5.2. Connect an event source to a sink using the Developer perspective

When you create an event source by using the OpenShift Container Platform web console, you can specify a sink that events are sent to from that source. The sink can be any addressable or callable resource that can receive incoming events from other resources.

Prerequisites

- The OpenShift Serverless Operator, Knative Serving, and Knative Eventing are installed on your OpenShift Container Platform cluster.

- You have logged in to the web console and are in the Developer perspective.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

- You have created a sink, such as a Knative service, channel or broker.

Procedure

- Create an event source of any type by navigating to +Add → Event Source and selecting the event source type that you want to create.

- In the Sink section of the Create Event Source form view, select your sink in the Resource list.

- Click Create.

Verification

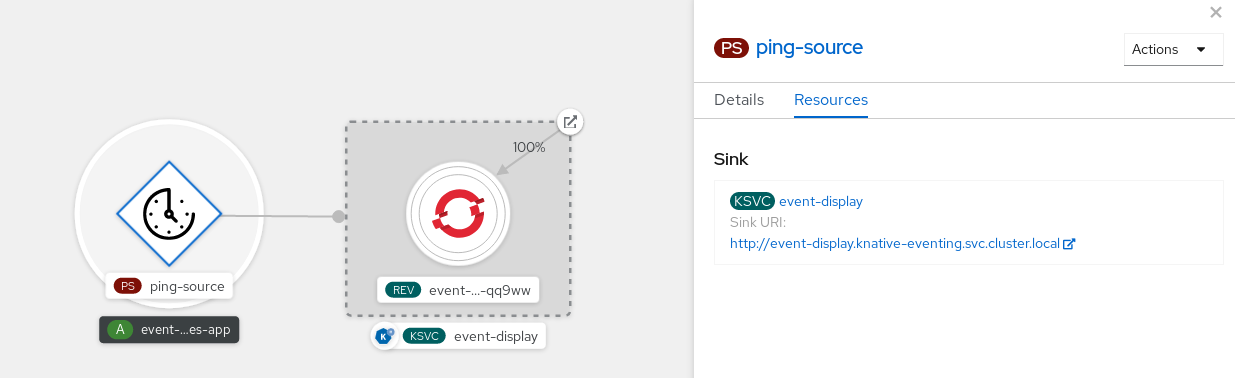

You can verify that the event source was created and is connected to the sink by viewing the Topology page.

- In the Developer perspective, navigate to Topology.

- View the event source and click the connected sink to see the sink details in the right panel.

5.5.3. Connecting a trigger to a sink

You can connect a trigger to a sink, so that events from a broker are filtered before they are sent to the sink. A sink that is connected to a trigger is configured as a subscriber in the Trigger object’s resource spec.

Example of a Trigger object connected to a Kafka sink

apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  name: <trigger_name>
spec:
  ...
  subscriber:
    ref:
      apiVersion: eventing.knative.dev/v1alpha1
      kind: KafkaSink
      name: <kafka_sink_name>

5.6. Event delivery

You can configure event delivery parameters that are applied in cases where an event fails to be delivered to an event sink. Configuring event delivery parameters, including a dead letter sink, ensures that any events that fail to be delivered to an event sink are retried. Otherwise, undelivered events are dropped.

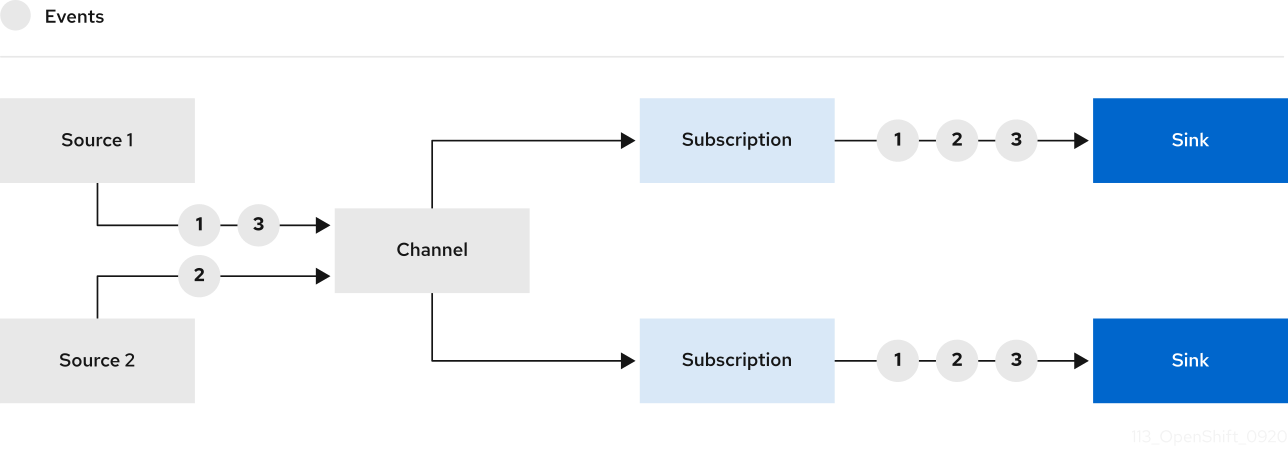

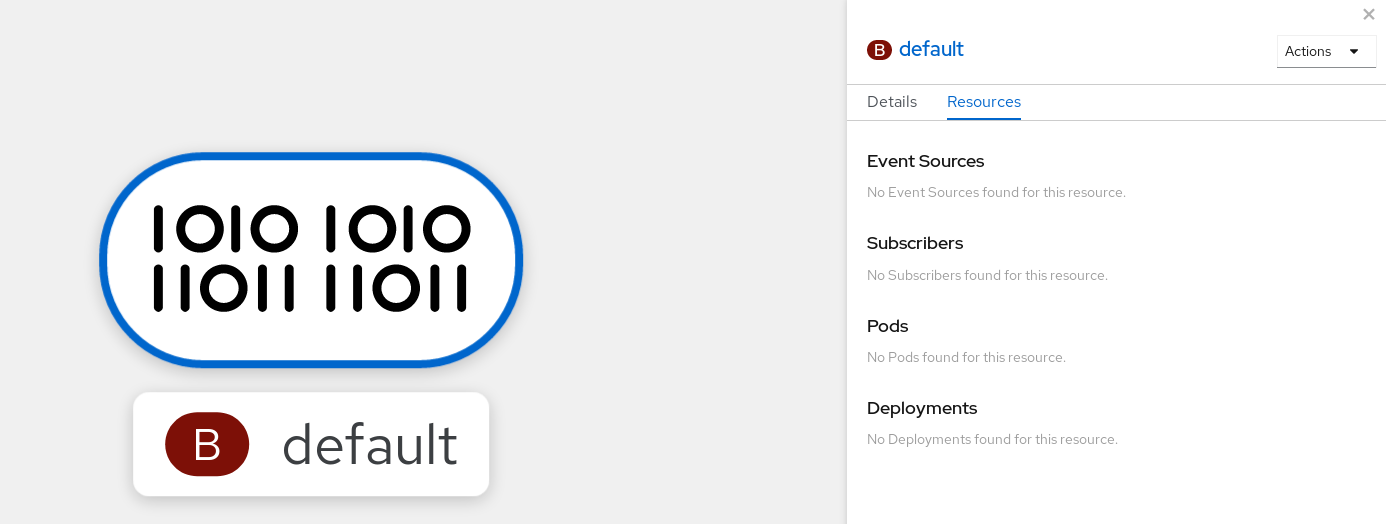

5.6.1. Event delivery behavior patterns for channels and brokers

Different channel and broker types have their own behavior patterns that are followed for event delivery.

5.6.1.1. Knative Kafka channels and brokers

If an event is successfully delivered to a Kafka channel or broker receiver, the receiver responds with a 202 status code, which means that the event has been safely stored inside a Kafka topic and is not lost.

If the receiver responds with any other status code, the event is not safely stored, and you must take steps to resolve the issue.

5.6.2. Configurable event delivery parameters

The following parameters can be configured for event delivery:

- Dead letter sink

You can configure the deadLetterSink delivery parameter so that if an event fails to be delivered, it is stored in the specified event sink. Undelivered events that are not stored in a dead letter sink are dropped. The dead letter sink can be any addressable object that conforms to the Knative Eventing sink contract, such as a Knative service, a Kubernetes service, or a URI.

- Retries

You can set a minimum number of times that the delivery must be retried before the event is sent to the dead letter sink, by configuring the retry delivery parameter with an integer value.

- Back off delay

You can set the backoffDelay delivery parameter to specify the time delay before an event delivery retry is attempted after a failure. The duration of the backoffDelay parameter is specified using the ISO 8601 format. For example, PT1S specifies a 1 second delay.

- Back off policy

The backoffPolicy delivery parameter can be used to specify the retry back off policy. The policy can be specified as either linear or exponential. When using the linear back off policy, the back off delay is equal to backoffDelay * <numberOfRetries>. When using the exponential back off policy, the back off delay is equal to backoffDelay * 2^<numberOfRetries>.
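To see how the two back off policies diverge, the documented formulas can be evaluated for a few retries. The following Python sketch does that. It only parses time-only ISO 8601 durations such as PT1S or PT1M30S, and it takes the retry number exactly as the formulas state; whether Knative counts the first retry as 0 or 1 internally is not specified here, so treat the numbering as an assumption. The function names are hypothetical.

```python
import re
from datetime import timedelta

# Time-only ISO 8601 durations: PT[nH][nM][n[.n]S]
_DURATION = re.compile(r"^PT(?:(\d+)H)?(?:(\d+)M)?(?:(\d+(?:\.\d+)?)S)?$")


def parse_iso8601_duration(value: str) -> timedelta:
    """Parse a time-only ISO 8601 duration such as PT1S or PT1M30S."""
    match = _DURATION.match(value)
    if not match or value == "PT":
        raise ValueError(f"unsupported duration: {value!r}")
    hours, minutes, seconds = match.groups()
    return timedelta(hours=int(hours or 0), minutes=int(minutes or 0),
                     seconds=float(seconds or 0))


def backoff_delay(base: str, policy: str, retry_number: int) -> timedelta:
    """Delay before a retry, using the linear and exponential formulas above."""
    delay = parse_iso8601_duration(base)
    if policy == "linear":
        return delay * retry_number
    if policy == "exponential":
        return delay * 2 ** retry_number
    raise ValueError("policy must be 'linear' or 'exponential'")


for n in range(1, 4):
    print(n, backoff_delay("PT1S", "linear", n), backoff_delay("PT1S", "exponential", n))
```

With backoffDelay set to PT1S, the linear policy grows by one second per retry, while the exponential policy doubles the delay each time.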

5.6.3. Examples of configuring event delivery parameters

You can configure event delivery parameters for Broker, Trigger, Channel, and Subscription objects. If you configure event delivery parameters for a broker or channel, these parameters are propagated to triggers or subscriptions created for those objects. You can also set event delivery parameters for triggers or subscriptions to override the settings for the broker or channel.

Example Broker object

apiVersion: eventing.knative.dev/v1
kind: Broker
metadata:
  ...
spec:
  delivery:
    deadLetterSink:
      ref:
        apiVersion: eventing.knative.dev/v1alpha1
        kind: KafkaSink
        name: <sink_name>
    backoffDelay: <duration>
    backoffPolicy: <policy_type>
    retry: <integer>
...

Example Trigger object

apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  ...
spec:
  broker: <broker_name>
  delivery:
    deadLetterSink:
      ref:
        apiVersion: serving.knative.dev/v1
        kind: Service
        name: <sink_name>
    backoffDelay: <duration>
    backoffPolicy: <policy_type>
    retry: <integer>
...

Example Channel object

apiVersion: messaging.knative.dev/v1
kind: Channel
metadata:
  ...
spec:
  delivery:
    deadLetterSink:
      ref:
        apiVersion: serving.knative.dev/v1
        kind: Service
        name: <sink_name>
    backoffDelay: <duration>
    backoffPolicy: <policy_type>
    retry: <integer>
...

Example Subscription object

apiVersion: messaging.knative.dev/v1
kind: Subscription
metadata:
  ...
spec:
  channel:
    apiVersion: messaging.knative.dev/v1
    kind: Channel
    name: <channel_name>
  delivery:
    deadLetterSink:
      ref:
        apiVersion: serving.knative.dev/v1
        kind: Service
        name: <sink_name>
    backoffDelay: <duration>
    backoffPolicy: <policy_type>
    retry: <integer>
...

5.6.4. Configuring event delivery ordering for triggers

If you are using a Kafka broker, you can configure the delivery order of events from triggers to event sinks.

Prerequisites

- The OpenShift Serverless Operator, Knative Eventing, and Knative Kafka are installed on your OpenShift Container Platform cluster.

- Kafka broker is enabled for use on your cluster, and you have created a Kafka broker.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

- You have installed the OpenShift CLI (oc).

Procedure

Create or modify a Trigger object and set the kafka.eventing.knative.dev/delivery.order annotation:

apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  name: <trigger_name>
  annotations:
    kafka.eventing.knative.dev/delivery.order: ordered
...

The supported consumer delivery guarantees are:

- unordered: An unordered consumer is a non-blocking consumer that delivers messages unordered, while preserving proper offset management.

- ordered: An ordered consumer is a per-partition blocking consumer that waits for a successful response from the CloudEvent subscriber before it delivers the next message of the partition.

The default ordering guarantee is unordered.

Apply the Trigger object:

$ oc apply -f <filename>
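If you manage Trigger manifests in code, the annotation and its default can be handled as in the following Python sketch. It reads the effective delivery order, falling back to unordered when the annotation is absent, and returns an annotated copy of a Trigger without modifying the original. The function names and the trigger name example-trigger are hypothetical.

```python
ORDERING_ANNOTATION = "kafka.eventing.knative.dev/delivery.order"
SUPPORTED_ORDERINGS = {"ordered", "unordered"}


def delivery_order(trigger: dict) -> str:
    """Return the effective delivery order of a Trigger; unordered is the default."""
    annotations = trigger.get("metadata", {}).get("annotations") or {}
    value = annotations.get(ORDERING_ANNOTATION, "unordered")
    if value not in SUPPORTED_ORDERINGS:
        raise ValueError(f"unsupported delivery order {value!r}")
    return value


def with_delivery_order(trigger: dict, order: str) -> dict:
    """Return a copy of the Trigger with the delivery order annotation set."""
    if order not in SUPPORTED_ORDERINGS:
        raise ValueError(f"unsupported delivery order {order!r}")
    metadata = dict(trigger.get("metadata", {}))
    metadata["annotations"] = {**(metadata.get("annotations") or {}),
                               ORDERING_ANNOTATION: order}
    return {**trigger, "metadata": metadata}


trigger = {"apiVersion": "eventing.knative.dev/v1", "kind": "Trigger",
           "metadata": {"name": "example-trigger"}}
print(delivery_order(trigger))                                  # unordered
print(delivery_order(with_delivery_order(trigger, "ordered")))  # ordered
```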

5.7. Listing event sources and event source types

You can view a list of all event sources or event source types that exist or are available for use on your OpenShift Container Platform cluster. You can use the Knative (kn) CLI or the Developer perspective in the OpenShift Container Platform web console to list available event sources or event source types.

5.7.1. Listing available event source types by using the Knative CLI

Using the Knative (kn) CLI provides a streamlined and intuitive user interface to view available event source types on your cluster. You can list event source types that can be created and used on your cluster by using the kn source list-types CLI command.

Prerequisites

- The OpenShift Serverless Operator and Knative Eventing are installed on the cluster.

- You have installed the Knative (kn) CLI.

Procedure

List the available event source types in the terminal:

$ kn source list-types

Example output

TYPE              NAME                                   DESCRIPTION
ApiServerSource   apiserversources.sources.knative.dev   Watch and send Kubernetes API events to a sink
PingSource        pingsources.sources.knative.dev        Periodically send ping events to a sink
SinkBinding       sinkbindings.sources.knative.dev       Binding for connecting a PodSpecable to a sink

Optional: You can also list the available event source types in YAML format:

$ kn source list-types -o yaml

5.7.2. Viewing available event source types within the Developer perspective

You can view a list of all available event source types on your cluster. The OpenShift Container Platform web console provides a streamlined and intuitive user interface for viewing available event source types.

Prerequisites

- You have logged in to the OpenShift Container Platform web console.

- The OpenShift Serverless Operator and Knative Eventing are installed on your OpenShift Container Platform cluster.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

Procedure

- Access the Developer perspective.

- Click +Add.

- Click Event Source.

- View the available event source types.

5.7.3. Listing available event sources by using the Knative CLI

Using the Knative (kn) CLI provides a streamlined and intuitive user interface to view existing event sources on your cluster. You can list existing event sources by using the kn source list command.

Prerequisites

- The OpenShift Serverless Operator and Knative Eventing are installed on the cluster.

- You have installed the Knative (kn) CLI.

Procedure

List the existing event sources in the terminal:

$ kn source list

Example output

NAME   TYPE              RESOURCE                               SINK          READY
a1     ApiServerSource   apiserversources.sources.knative.dev   ksvc:eshow2   True
b1     SinkBinding       sinkbindings.sources.knative.dev       ksvc:eshow3   False
p1     PingSource        pingsources.sources.knative.dev        ksvc:eshow1   True

Optional: You can list event sources of a specific type only, by using the --type flag:

$ kn source list --type <event_source_type>

Example command

$ kn source list --type PingSource

Example output

NAME   TYPE         RESOURCE                          SINK          READY
p1     PingSource   pingsources.sources.knative.dev   ksvc:eshow1   True
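When you have many event sources, it can be useful to pick out the ones that are not ready, such as b1 in the example output. The following Python sketch parses the table shown above. It assumes whitespace-separated columns with no spaces inside values, which holds for the example output but may not hold for every kn version; the table is meant for people, so treat this as a convenience rather than a stable interface. The function name not_ready_sources is hypothetical.

```python
def not_ready_sources(table: str) -> list[str]:
    """Return the names of sources whose READY column is not True.

    Assumes whitespace-separated columns with no spaces inside values.
    """
    lines = [line.split() for line in table.strip().splitlines()]
    header, rows = lines[0], lines[1:]
    name_col, ready_col = header.index("NAME"), header.index("READY")
    return [row[name_col] for row in rows if row[ready_col] != "True"]


sample = """\
NAME   TYPE              RESOURCE                               SINK          READY
a1     ApiServerSource   apiserversources.sources.knative.dev   ksvc:eshow2   True
b1     SinkBinding       sinkbindings.sources.knative.dev       ksvc:eshow3   False
p1     PingSource        pingsources.sources.knative.dev        ksvc:eshow1   True
"""
print(not_ready_sources(sample))  # ['b1']
```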

5.8. Creating an API server source

The API server source is an event source that can be used to connect an event sink, such as a Knative service, to the Kubernetes API server. The API server source watches for Kubernetes events and forwards them to the configured sink, for example a Knative Eventing broker.

5.8.1. Creating an API server source by using the web console

After Knative Eventing is installed on your cluster, you can create an API server source by using the web console. Using the OpenShift Container Platform web console provides a streamlined and intuitive user interface to create an event source.

Prerequisites

- You have logged in to the OpenShift Container Platform web console.

- The OpenShift Serverless Operator and Knative Eventing are installed on the cluster.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

- You have installed the OpenShift CLI (oc).

If you want to re-use an existing service account, you can modify your existing ServiceAccount resource to include the required permissions instead of creating a new resource.

Create a service account, role, and role binding for the event source as a YAML file:

apiVersion: v1
kind: ServiceAccount
metadata:
  name: events-sa
  namespace: default
---
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: event-watcher
  namespace: default
rules:
  - apiGroups:
      - ""
    resources:
      - events
    verbs:
      - get
      - list
      - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: k8s-ra-event-watcher
  namespace: default
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: event-watcher
subjects:
  - kind: ServiceAccount
    name: events-sa
    namespace: default

Apply the YAML file:

$ oc apply -f <filename>

- In the Developer perspective, navigate to +Add → Event Source. The Event Sources page is displayed.

- Optional: If you have multiple providers for your event sources, select the required provider from the Providers list to filter the available event sources from the provider.

- Select ApiServerSource and then click Create Event Source. The Create Event Source page is displayed.

Configure the ApiServerSource settings by using the Form view or YAML view:

Note

You can switch between the Form view and YAML view. The data is persisted when switching between the views.

- Enter v1 as the APIVERSION and Event as the KIND.

- Select the Service Account Name for the service account that you created.

- Select the Sink for the event source. A Sink can be either a Resource, such as a channel, broker, or service, or a URI.

-

Enter

- Click Create.

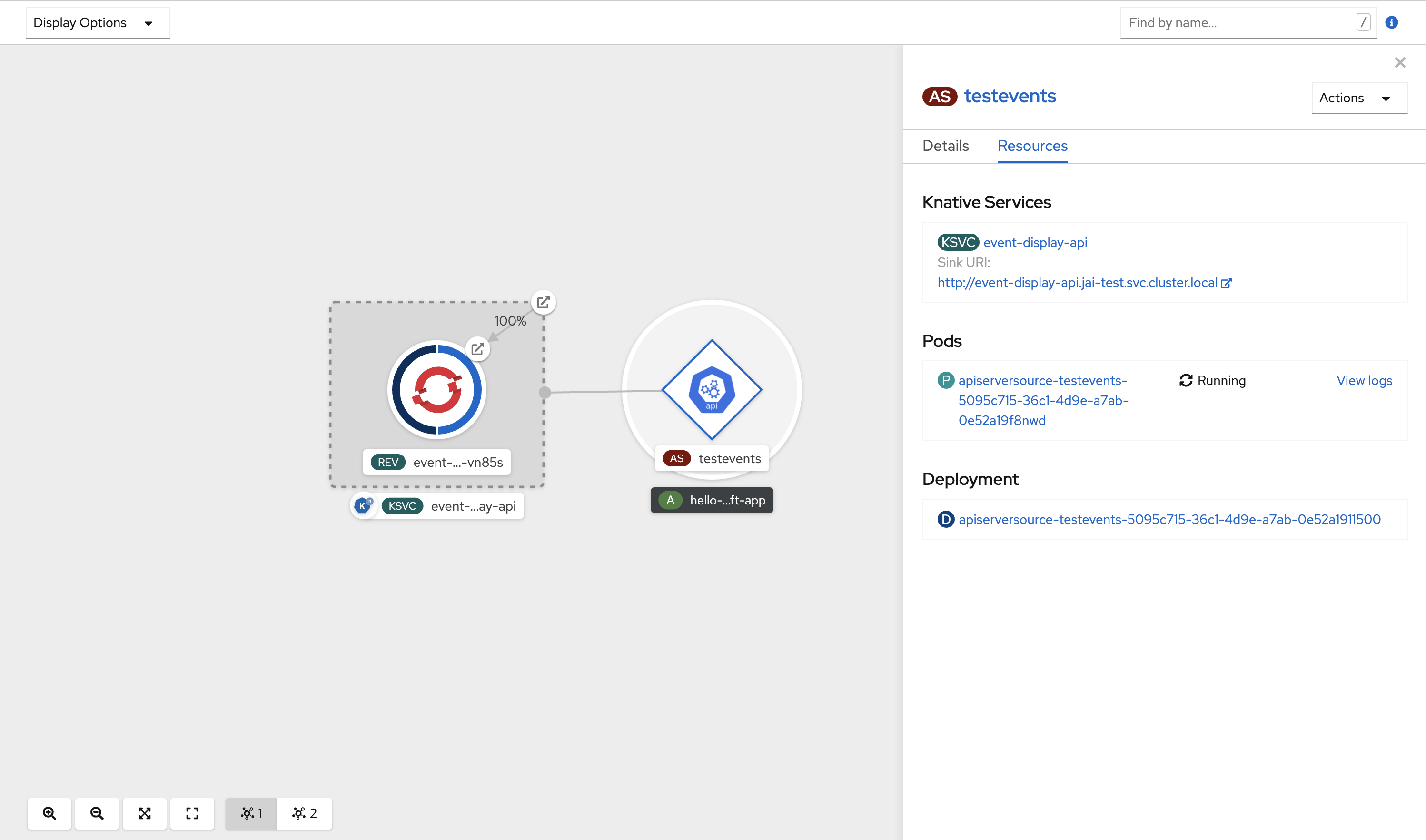

Verification

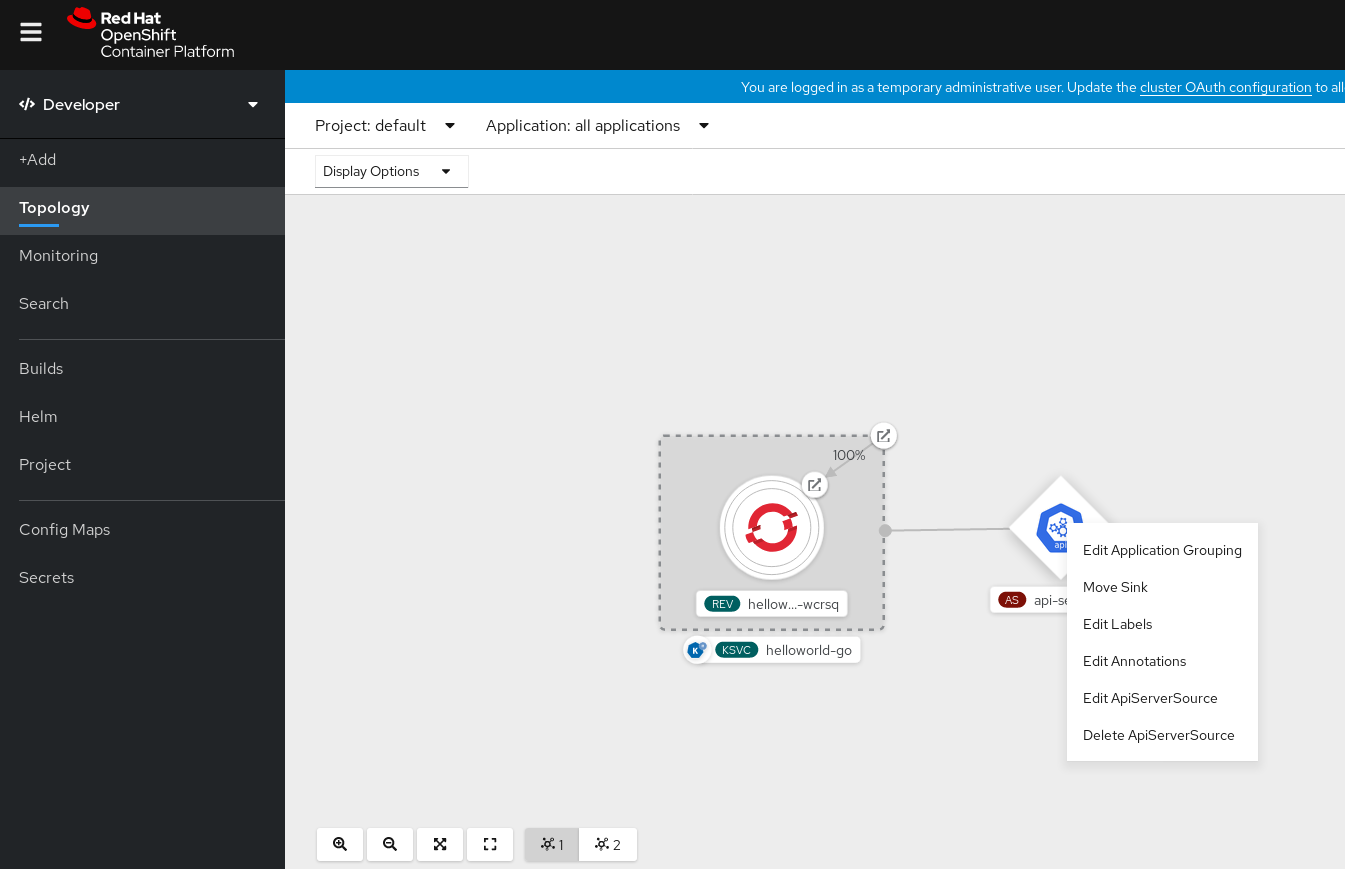

After you have created the API server source, it is displayed in the Topology view, connected to the sink that it sends events to.

If a URI sink is used, you can modify the URI by right-clicking the URI sink.

Deleting the API server source

- Navigate to the Topology view.

Right-click the API server source and select Delete ApiServerSource.

5.8.2. Creating an API server source by using the Knative CLI

You can use the kn source apiserver create command to create an API server source by using the kn CLI. Using the kn CLI to create an API server source provides a more streamlined and intuitive user interface than modifying YAML files directly.

Prerequisites

- The OpenShift Serverless Operator and Knative Eventing are installed on the cluster.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

- You have installed the OpenShift CLI (oc).

- You have installed the Knative (kn) CLI.

If you want to re-use an existing service account, you can modify your existing ServiceAccount resource to include the required permissions instead of creating a new resource.

Create a service account, role, and role binding for the event source as a YAML file:

apiVersion: v1 kind: ServiceAccount metadata: name: events-sa namespace: default1 --- apiVersion: rbac.authorization.k8s.io/v1 kind: Role metadata: name: event-watcher namespace: default2 rules: - apiGroups: - "" resources: - events verbs: - get - list - watch --- apiVersion: rbac.authorization.k8s.io/v1 kind: RoleBinding metadata: name: k8s-ra-event-watcher namespace: default3 roleRef: apiGroup: rbac.authorization.k8s.io kind: Role name: event-watcher subjects: - kind: ServiceAccount name: events-sa namespace: default4 Apply the YAML file:

$ oc apply -f <filename>Create an API server source that has an event sink. In the following example, the sink is a broker:

$ kn source apiserver create <event_source_name> --sink broker:<broker_name> --resource "event:v1" --service-account <service_account_name> --mode ResourceTo check that the API server source is set up correctly, create a Knative service that dumps incoming messages to its log:

$ kn service create <service_name> --image quay.io/openshift-knative/knative-eventing-sources-event-display:latestIf you used a broker as an event sink, create a trigger to filter events from the

defaultbroker to the service:$ kn trigger create <trigger_name> --sink ksvc:<service_name>Create events by launching a pod in the default namespace:

$ oc create deployment hello-node --image quay.io/openshift-knative/knative-eventing-sources-event-display:latest

Check that the controller is mapped correctly by inspecting the output generated by the following command:

$ kn source apiserver describe <source_name>

Example output

Name:                mysource
Namespace:           default
Annotations:         sources.knative.dev/creator=developer, sources.knative.dev/lastModifier=developer
Age:                 3m
ServiceAccountName:  events-sa
Mode:                Resource
Sink:
  Name:       default
  Namespace:  default
  Kind:       Broker (eventing.knative.dev/v1)
Resources:
  Kind:        event (v1)
  Controller:  false
Conditions:
  OK TYPE                     AGE REASON
  ++ Ready                     3m
  ++ Deployed                  3m
  ++ SinkProvided              3m
  ++ SufficientPermissions     3m
  ++ EventTypesProvided        3m

Verification

You can verify that the Kubernetes events were sent to Knative by looking at the message dumper function logs.

Get the pods:

$ oc get pods

View the message dumper function logs for the pods:

$ oc logs $(oc get pod -o name | grep event-display) -c user-container

Example output

☁️  cloudevents.Event
Validation: valid
Context Attributes,
  specversion: 1.0
  type: dev.knative.apiserver.resource.update
  datacontenttype: application/json
  ...
Data,
  {
    "apiVersion": "v1",
    "involvedObject": {
      "apiVersion": "v1",
      "fieldPath": "spec.containers{hello-node}",
      "kind": "Pod",
      "name": "hello-node",
      "namespace": "default",
      .....
    },
    "kind": "Event",
    "message": "Started container",
    "metadata": {
      "name": "hello-node.159d7608e3a3572c",
      "namespace": "default",
      ....
    },
    "reason": "Started",
    ...
  }

Deleting the API server source

Delete the trigger:

$ kn trigger delete <trigger_name>

Delete the event source:

$ kn source apiserver delete <source_name>

Delete the service account, role, and role binding:

$ oc delete -f authentication.yaml

5.8.2.1. Knative CLI sink flag

When you create an event source by using the Knative (kn) CLI, you can use the --sink flag to specify a sink to which events are sent from that resource. The sink can be any addressable or callable resource that can receive incoming events from other resources.

The following example creates a sink binding that uses a service, http://event-display.svc.cluster.local, as the sink:

Example command using the sink flag

$ kn source binding create bind-heartbeat \
  --namespace sinkbinding-example \
  --subject "Job:batch/v1:app=heartbeat-cron" \
  --sink http://event-display.svc.cluster.local \
  --ce-override "sink=bound"

In this example, the svc in http://event-display.svc.cluster.local determines that the sink is a Knative service. Other default sink prefixes include channel and broker.
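For comparison, the kn source binding create command above corresponds roughly to the following SinkBinding manifest, which you could apply with oc apply -f <filename>. This is a minimal sketch that assumes your cluster serves SinkBinding at sources.knative.dev/v1; the field names follow the upstream Knative API and can differ in older releases.

```yaml
# Sketch: declarative counterpart of the kn source binding create example.
# Assumes SinkBinding is served at sources.knative.dev/v1 on your cluster.
apiVersion: sources.knative.dev/v1
kind: SinkBinding
metadata:
  name: bind-heartbeat
  namespace: sinkbinding-example
spec:
  # Corresponds to --subject "Job:batch/v1:app=heartbeat-cron"
  subject:
    apiVersion: batch/v1
    kind: Job
    selector:
      matchLabels:
        app: heartbeat-cron
  # Corresponds to --sink http://event-display.svc.cluster.local
  sink:
    uri: http://event-display.svc.cluster.local
  # Corresponds to --ce-override "sink=bound"
  ceOverrides:
    extensions:
      sink: bound
```

The --subject value packs the kind, API version, and label selector into one string; in the manifest each part gets its own field.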

5.8.3. Creating an API server source by using YAML files

Creating Knative resources by using YAML files uses a declarative API, which enables you to describe event sources declaratively and in a reproducible manner. To create an API server source by using YAML, you must create a YAML file that defines an ApiServerSource object, then apply it by using the oc apply command.

Prerequisites

- The OpenShift Serverless Operator and Knative Eventing are installed on the cluster.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

- You have created the default broker in the same namespace as the one defined in the API server source YAML file.

- You have installed the OpenShift CLI (oc).

Procedure

If you want to reuse an existing service account, you can modify your existing ServiceAccount resource to include the required permissions instead of creating a new resource.
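For example, to reuse a hypothetical existing service account named my-existing-sa in the default namespace, you could bind the event-watcher role (created in the following step) to that account with a RoleBinding like the one below, and then set serviceAccountName: my-existing-sa in the ApiServerSource YAML file. This is a sketch; the RoleBinding name existing-sa-event-watcher is also hypothetical.

```yaml
# Sketch: grant the event-watcher Role to an existing service account.
# my-existing-sa and existing-sa-event-watcher are hypothetical names.
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: existing-sa-event-watcher
  namespace: default
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: event-watcher      # Role that allows get, list, and watch on events
subjects:
  - kind: ServiceAccount
    name: my-existing-sa   # replace with your existing service account
    namespace: default     # must match the namespace of the event source
```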

Create a service account, role, and role binding for the event source as a YAML file:

apiVersion: v1
kind: ServiceAccount
metadata:
  name: events-sa
  namespace: default
---
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: event-watcher
  namespace: default
rules:
  - apiGroups:
      - ""
    resources:
      - events
    verbs:
      - get
      - list
      - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: k8s-ra-event-watcher
  namespace: default
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: event-watcher
subjects:
  - kind: ServiceAccount
    name: events-sa
    namespace: default

Replace default in each namespace field with the namespace that you have selected for installing the event source.

Apply the YAML file:

$ oc apply -f <filename>

Create an API server source as a YAML file:

apiVersion: sources.knative.dev/v1alpha1
kind: ApiServerSource
metadata:
  name: testevents
spec:
  serviceAccountName: events-sa
  mode: Resource
  resources:
    - apiVersion: v1
      kind: Event
  sink:
    ref:
      apiVersion: eventing.knative.dev/v1
      kind: Broker
      name: default

Apply the ApiServerSource YAML file:

$ oc apply -f <filename>

To check that the API server source is set up correctly, create a Knative service as a YAML file that dumps incoming messages to its log:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: event-display
  namespace: default
spec:
  template:
    spec:
      containers:
        - image: quay.io/openshift-knative/knative-eventing-sources-event-display:latest

Apply the Service YAML file:

$ oc apply -f <filename>

Create a Trigger object as a YAML file that filters events from the default broker to the service created in the previous step:

apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  name: event-display-trigger
  namespace: default
spec:
  broker: default
  subscriber:
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display

Apply the Trigger YAML file:

$ oc apply -f <filename>

Create events by launching a pod in the default namespace:

$ oc create deployment hello-node --image=quay.io/openshift-knative/knative-eventing-sources-event-display

Check that the controller is mapped correctly by entering the following command and inspecting the output:

$ oc get apiserversource.sources.knative.dev testevents -o yaml

Example output

apiVersion: sources.knative.dev/v1alpha1
kind: ApiServerSource
metadata:
  annotations:
  creationTimestamp: "2020-04-07T17:24:54Z"
  generation: 1
  name: testevents
  namespace: default
  resourceVersion: "62868"
  selfLink: /apis/sources.knative.dev/v1alpha1/namespaces/default/apiserversources/testevents
  uid: 1603d863-bb06-4d1c-b371-f580b4db99fa
spec:
  mode: Resource
  resources:
  - apiVersion: v1
    controller: false
    controllerSelector:
      apiVersion: ""
      kind: ""
      name: ""
      uid: ""
    kind: Event
    labelSelector: {}
  serviceAccountName: events-sa
  sink:
    ref:
      apiVersion: eventing.knative.dev/v1
      kind: Broker
      name: default

Verification

To verify that the Kubernetes events were sent to Knative, you can look at the message dumper function logs.

Get the pods by entering the following command:

$ oc get pods

View the message dumper function logs for the pods by entering the following command:

$ oc logs $(oc get pod -o name | grep event-display) -c user-container

Example output

☁️  cloudevents.Event
Validation: valid
Context Attributes,
  specversion: 1.0
  type: dev.knative.apiserver.resource.update
  datacontenttype: application/json
  ...
Data,
  {
    "apiVersion": "v1",
    "involvedObject": {
      "apiVersion": "v1",
      "fieldPath": "spec.containers{hello-node}",
      "kind": "Pod",
      "name": "hello-node",
      "namespace": "default",
      .....
    },
    "kind": "Event",
    "message": "Started container",
    "metadata": {
      "name": "hello-node.159d7608e3a3572c",
      "namespace": "default",
      ....
    },
    "reason": "Started",
    ...
  }
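The example output shows the CloudEvents type dev.knative.apiserver.resource.update. The event-display-trigger created earlier forwards every event from the default broker; if you only want update events, a sketch of the same trigger with an exact-match attribute filter might look like the following. It assumes the filter.attributes field of the eventing.knative.dev/v1 Trigger API, and you would apply it with oc apply -f <filename> in place of the earlier trigger. An API server source can also emit other types, such as dev.knative.apiserver.resource.add and dev.knative.apiserver.resource.delete, which this filter would drop.

```yaml
# Sketch: same trigger as before, narrowed to API server update events.
apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  name: event-display-trigger
  namespace: default
spec:
  broker: default
  filter:
    attributes:
      # Only events whose CloudEvents "type" attribute matches exactly are delivered
      type: dev.knative.apiserver.resource.update
  subscriber:
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display
```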

Deleting the API server source

Delete the trigger:

$ oc delete -f trigger.yaml

Delete the event source:

$ oc delete -f k8s-events.yaml

Delete the service account, role, and role binding:

$ oc delete -f authentication.yaml

5.9. Creating a ping source

A ping source is an event source that can be used to periodically send ping events with a constant payload to an event consumer. A ping source can be used to schedule sending events, similar to a timer.

5.9.1. Creating a ping source by using the web console

After Knative Eventing is installed on your cluster, you can create a ping source by using the web console. Using the OpenShift Container Platform web console provides a streamlined and intuitive user interface to create an event source.

Prerequisites

- You have logged in to the OpenShift Container Platform web console.

- The OpenShift Serverless Operator, Knative Serving and Knative Eventing are installed on the cluster.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

Procedure

To verify that the ping source is working, create a simple Knative service that dumps incoming messages to the logs of the service.

- In the Developer perspective, navigate to +Add → YAML.

- Copy the example YAML:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: event-display
spec:
  template:
    spec:
      containers:
        - image: quay.io/openshift-knative/knative-eventing-sources-event-display:latest

- Click Create.


Create a ping source in the same namespace as the service created in the previous step, or any other sink that you want to send events to.

- In the Developer perspective, navigate to +Add → Event Source. The Event Sources page is displayed.

- Optional: If you have multiple providers for your event sources, select the required provider from the Providers list to filter the available event sources from the provider.

- Select Ping Source and then click Create Event Source. The Create Event Source page is displayed.

Note: You can configure the PingSource settings by using the Form view or YAML view and can switch between the views. The data is persisted when switching between the views.

- Enter a value for Schedule. In this example, the value is */2 * * * *, which creates a PingSource that sends a message every two minutes.

- Optional: You can enter a value for Data, which is the message payload.

- Select a Sink. This can be either a Resource or a URI. In this example, the event-display service created in the previous step is used as the Resource sink.

- Click Create.

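Because the settings can also be edited in the YAML view, it can help to see roughly how the values in this example map to a PingSource manifest. This is a sketch, not the exact YAML that the console generates: it assumes PingSource is served at sources.knative.dev/v1beta2, uses a hypothetical name (ping-source), and borrows the optional Data payload from the Knative CLI procedure in this section. Other API versions may use different field names for the payload.

```yaml
# Sketch: PingSource matching the form values in this example.
# Assumes sources.knative.dev/v1beta2; ping-source is a hypothetical name.
apiVersion: sources.knative.dev/v1beta2
kind: PingSource
metadata:
  name: ping-source
spec:
  schedule: "*/2 * * * *"              # standard cron syntax: every two minutes
  contentType: "application/json"      # media type of the payload below
  data: '{"message": "Hello world!"}'  # optional payload (Data field in the form)
  sink:
    ref:                               # Resource sink: the event-display service
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display
```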

Verification

You can verify that the ping source was created and is connected to the sink by viewing the Topology page.

- In the Developer perspective, navigate to Topology.

View the ping source and sink.

Deleting the ping source

- Navigate to the Topology view.

- Right-click the ping source and select Delete Ping Source.

5.9.2. Creating a ping source by using the Knative CLI

You can use the kn source ping create command to create a ping source by using the Knative (kn) CLI. Using the Knative CLI to create event sources provides a more streamlined and intuitive user interface than modifying YAML files directly.

Prerequisites

- The OpenShift Serverless Operator, Knative Serving and Knative Eventing are installed on the cluster.

- You have installed the Knative (kn) CLI.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

- Optional: If you want to use the verification steps for this procedure, install the OpenShift CLI (oc).

Procedure

To verify that the ping source is working, create a simple Knative service that dumps incoming messages to the service logs:

$ kn service create event-display \
    --image quay.io/openshift-knative/knative-eventing-sources-event-display:latest

For each set of ping events that you want to request, create a ping source in the same namespace as the event consumer:

$ kn source ping create test-ping-source \
    --schedule "*/2 * * * *" \
    --data '{"message": "Hello world!"}' \
    --sink ksvc:event-display

Check that the controller is mapped correctly by entering the following command and inspecting the output:

$ kn source ping describe test-ping-source

Example output