Operators

OpenShift Dedicated Operators

Abstract

Chapter 1. Operators overview

Operators are among the most important components of OpenShift Dedicated. They are the preferred method of packaging, deploying, and managing services on the control plane. They can also provide advantages to applications that users run.

Operators integrate with Kubernetes APIs and CLI tools such as kubectl and the OpenShift CLI (oc). They provide the means of monitoring applications, performing health checks, managing over-the-air (OTA) updates, and ensuring that applications remain in your specified state.

Operators are designed specifically for Kubernetes-native applications to implement and automate common Day 1 operations, such as installation and configuration. Operators can also automate Day 2 operations, such as autoscaling up or down and creating backups. All of these activities are directed by a piece of software running on your cluster.

While both follow similar Operator concepts and goals, Operators in OpenShift Dedicated are managed by two different systems, depending on their purpose:

- Cluster Operators

- Managed by the Cluster Version Operator (CVO) and installed by default to perform cluster functions.

- Optional add-on Operators

- Managed by Operator Lifecycle Manager (OLM) and can be made accessible for users to run in their applications. Also known as OLM-based Operators.

1.1. For developers

As an Operator author, you can perform the following development tasks for OLM-based Operators:

1.2. For administrators

As an administrator with the dedicated-admin role, you can perform the following Operator tasks:

1.3. Next steps

Chapter 2. Understanding Operators

2.1. What are Operators?

Conceptually, Operators take human operational knowledge and encode it into software that is more easily shared with consumers.

Operators are pieces of software that ease the operational complexity of running another piece of software. They act like an extension of the software vendor’s engineering team, monitoring a Kubernetes environment (such as OpenShift Dedicated) and using its current state to make decisions in real time. Advanced Operators are designed to handle upgrades seamlessly, react to failures automatically, and not take shortcuts, like skipping a software backup process to save time.

More technically, Operators are a method of packaging, deploying, and managing a Kubernetes application.

A Kubernetes application is an app that is both deployed on Kubernetes and managed using the Kubernetes APIs and kubectl or oc tooling. To be able to make the most of Kubernetes, you require a set of cohesive APIs to extend in order to service and manage your apps that run on Kubernetes. Think of Operators as the runtime that manages this type of app on Kubernetes.

2.1.1. Why use Operators?

Operators provide:

- Repeatability of installation and upgrade.

- Constant health checks of every system component.

- Over-the-air (OTA) updates for OpenShift components and ISV content.

- A place to encapsulate knowledge from field engineers and spread it to all users, not just one or two.

- Why deploy on Kubernetes?

- Kubernetes (and by extension, OpenShift Dedicated) contains all of the primitives needed to build complex distributed systems – secret handling, load balancing, service discovery, autoscaling – that work across on-premise and cloud providers.

- Why manage your app with Kubernetes APIs and kubectl tooling?

- These APIs are feature rich, have clients for all platforms and plug into the cluster’s access control/auditing. An Operator uses the Kubernetes extension mechanism, custom resource definitions (CRDs), so your custom object, for example MongoDB, looks and acts just like the built-in, native Kubernetes objects.

- How do Operators compare with service brokers?

- A service broker is a step towards programmatic discovery and deployment of an app. However, because it is not a long running process, it cannot execute Day 2 operations like upgrade, failover, or scaling. Customizations and parameterization of tunables are provided at install time, versus an Operator that is constantly watching the current state of your cluster. Off-cluster services are a good match for a service broker, although Operators exist for these as well.

2.1.2. Operator Framework

The Operator Framework is a family of tools and capabilities to deliver on the customer experience described above. It is not just about writing code; testing, delivering, and updating Operators is just as important. The Operator Framework components consist of open source tools to tackle these problems:

- Operator Lifecycle Manager

- Operator Lifecycle Manager (OLM) controls the installation, upgrade, and role-based access control (RBAC) of Operators in a cluster. It is deployed by default in OpenShift Dedicated.

- Operator Registry

- The Operator Registry stores cluster service versions (CSVs) and custom resource definitions (CRDs) for creation in a cluster and stores Operator metadata about packages and channels. It runs in a Kubernetes or OpenShift cluster to provide this Operator catalog data to OLM.

- Software Catalog

- The software catalog is a web console for cluster administrators to discover and select Operators to install on their cluster. It is deployed by default in OpenShift Dedicated.

These tools are designed to be composable, so you can use any that are useful to you.

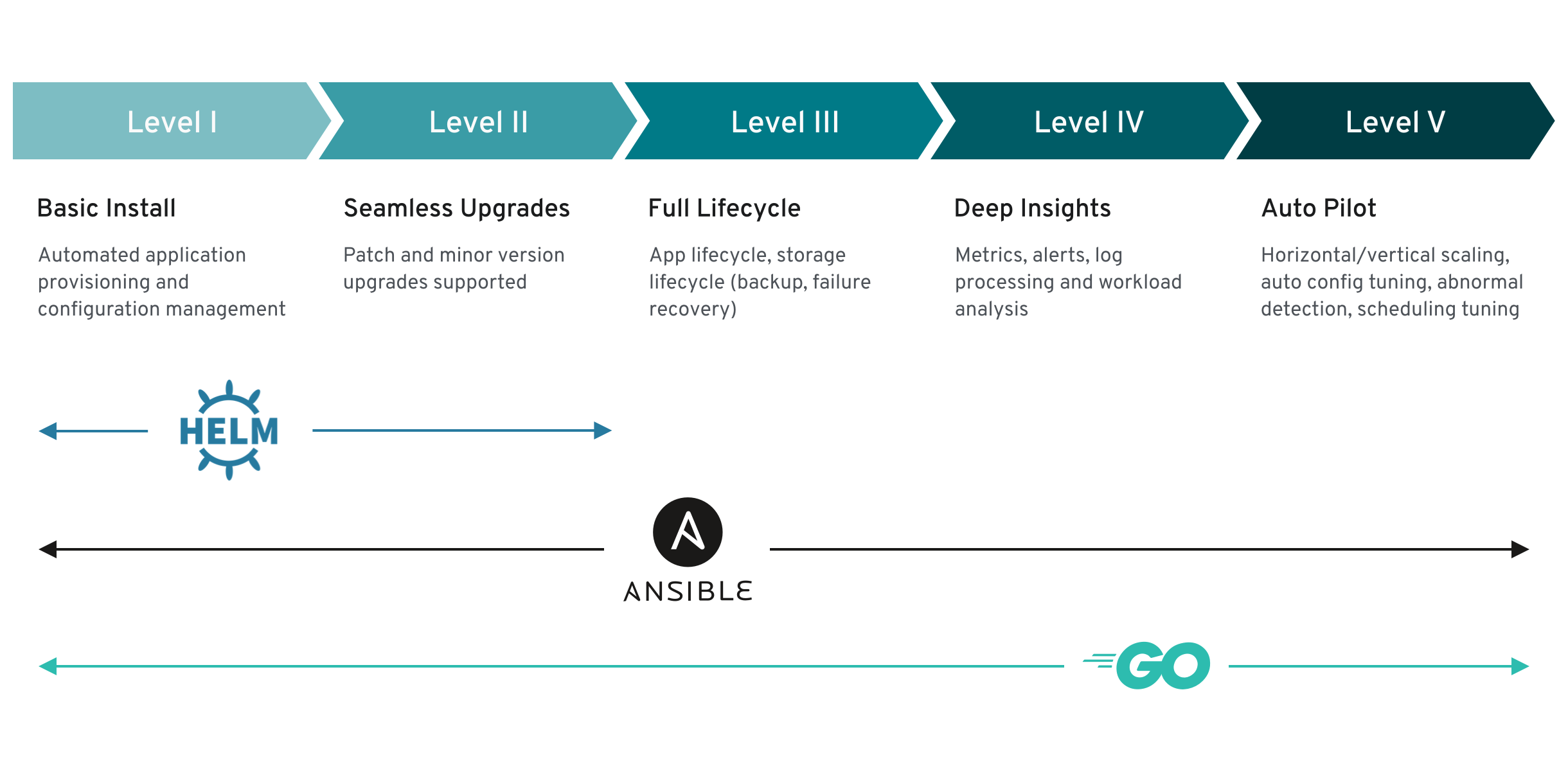

2.1.3. Operator maturity model

The level of sophistication of the management logic encapsulated within an Operator can vary. This logic also generally depends heavily on the type of service that the Operator represents.

However, the maturity of an Operator’s encapsulated operations can be generalized for a certain set of capabilities that most Operators can include. To this end, the following Operator maturity model defines five phases of maturity for generic Day 2 operations of an Operator:

Figure 2.1. Operator maturity model

2.2. Operator Framework packaging format

This guide outlines the packaging format for Operators supported by Operator Lifecycle Manager (OLM) in OpenShift Dedicated.

2.2.1. Bundle format

The bundle format for Operators is a packaging format introduced by the Operator Framework. To improve scalability and to better enable upstream users hosting their own catalogs, the bundle format specification simplifies the distribution of Operator metadata.

An Operator bundle represents a single version of an Operator. On-disk bundle manifests are containerized and shipped as a bundle image, which is a non-runnable container image that stores the Kubernetes manifests and Operator metadata. Storage and distribution of the bundle image is then managed using existing container tools like podman and docker and container registries such as Quay.

Operator metadata can include:

- Information that identifies the Operator, for example its name and version.

- Additional information that drives the UI, for example its icon and some example custom resources (CRs).

- Required and provided APIs.

- Related images.

When loading manifests into the Operator Registry database, the following requirements are validated:

- The bundle must have at least one channel defined in the annotations.

- Every bundle has exactly one cluster service version (CSV).

- If a CSV owns a custom resource definition (CRD), that CRD must exist in the bundle.
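The three requirements above can be sketched as a small check. This is an illustrative approximation only, not the validation that the Operator Registry performs: the function name validate_bundle and the in-memory representation (a dictionary of annotations plus a list of parsed manifests) are assumptions made for the example.

```python
from typing import Dict, List

CHANNELS_KEY = "operators.operatorframework.io.bundle.channels.v1"


def validate_bundle(annotations: Dict[str, str], manifests: List[dict]) -> List[str]:
    """Return a list of problems; an empty list means all three checks passed."""
    problems = []

    # Requirement 1: at least one channel is defined in the annotations.
    channels = [c.strip() for c in annotations.get(CHANNELS_KEY, "").split(",") if c.strip()]
    if not channels:
        problems.append("no channel defined in annotations")

    # Requirement 2: the bundle has exactly one CSV.
    csvs = [m for m in manifests if m.get("kind") == "ClusterServiceVersion"]
    if len(csvs) != 1:
        problems.append(f"expected exactly one CSV, found {len(csvs)}")
        return problems

    # Requirement 3: every CRD owned by the CSV exists in the bundle.
    crd_names = {
        m["metadata"]["name"]
        for m in manifests
        if m.get("kind") == "CustomResourceDefinition"
    }
    owned = csvs[0].get("spec", {}).get("customresourcedefinitions", {}).get("owned", [])
    for crd in owned:
        if crd["name"] not in crd_names:
            problems.append(f"owned CRD {crd['name']} is missing from the bundle")
    return problems
```

A bundle that passes returns an empty list, so a caller can treat any non-empty result as a reason to reject the bundle before it is added to a catalog.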

2.2.1.1. Manifests

Bundle manifests refer to a set of Kubernetes manifests that define the deployment and RBAC model of the Operator.

A bundle includes one CSV per directory and typically the CRDs that define the owned APIs of the CSV in its /manifests directory.

Example bundle format layout

etcd
├── manifests
│   ├── etcdcluster.crd.yaml
│   ├── etcdoperator.clusterserviceversion.yaml
│   ├── secret.yaml
│   └── configmap.yaml
└── metadata
    ├── annotations.yaml
    └── dependencies.yaml

2.2.1.1.1. Additionally supported objects

The following object types can also be optionally included in the /manifests directory of a bundle:

Supported optional object types

- ClusterRole

- ClusterRoleBinding

- ConfigMap

- ConsoleCLIDownload

- ConsoleLink

- ConsoleQuickStart

- ConsoleYamlSample

- PodDisruptionBudget

- PriorityClass

- PrometheusRule

- Role

- RoleBinding

- Secret

- Service

- ServiceAccount

- ServiceMonitor

- VerticalPodAutoscaler

When these optional objects are included in a bundle, Operator Lifecycle Manager (OLM) can create them from the bundle and manage their lifecycle along with the CSV:

Lifecycle for optional objects

- When the CSV is deleted, OLM deletes the optional object.

- When the CSV is upgraded:

  - If the name of the optional object is the same, OLM updates it in place.

  - If the name of the optional object has changed between versions, OLM deletes and recreates it.
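One way to read the upgrade rules above is as a comparison of object names between the old and new bundle versions. The following sketch is an assumption-laden illustration, not OLM's implementation; the function name plan_upgrade and the object names in the usage example are hypothetical.

```python
from typing import Dict, Iterable, List


def plan_upgrade(old_names: Iterable[str], new_names: Iterable[str]) -> Dict[str, List[str]]:
    """Classify optional objects by what a CSV upgrade would do to them."""
    old, new = set(old_names), set(new_names)
    return {
        # Same name in both versions: updated in place.
        "update": sorted(old & new),
        # Name only in the old version: deleted.
        "delete": sorted(old - new),
        # Name only in the new version: created.
        "create": sorted(new - old),
    }
```

For example, plan_upgrade(["etcd-config", "etcd-secret"], ["etcd-config", "etcd-secret-v2"]) reports etcd-config as updated in place, while the renamed secret appears once under delete and once under create.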

2.2.1.2. Annotations

A bundle also includes an annotations.yaml file in its /metadata directory. This file defines higher level aggregate data that helps describe the format and package information about how the bundle should be added into an index of bundles:

Example annotations.yaml

annotations:
  operators.operatorframework.io.bundle.mediatype.v1: "registry+v1"
  operators.operatorframework.io.bundle.manifests.v1: "manifests/"
  operators.operatorframework.io.bundle.metadata.v1: "metadata/"
  operators.operatorframework.io.bundle.package.v1: "test-operator"
  operators.operatorframework.io.bundle.channels.v1: "beta,stable"
  operators.operatorframework.io.bundle.channel.default.v1: "stable"

- operators.operatorframework.io.bundle.mediatype.v1: The media type or format of the Operator bundle. The registry+v1 format means it contains a CSV and its associated Kubernetes objects.

- operators.operatorframework.io.bundle.manifests.v1: The path in the image to the directory that contains the Operator manifests. This label is reserved for future use and currently defaults to manifests/. The value manifests.v1 implies that the bundle contains Operator manifests.

- operators.operatorframework.io.bundle.metadata.v1: The path in the image to the directory that contains metadata files about the bundle. This label is reserved for future use and currently defaults to metadata/. The value metadata.v1 implies that this bundle has Operator metadata.

- operators.operatorframework.io.bundle.package.v1: The package name of the bundle.

- operators.operatorframework.io.bundle.channels.v1: The list of channels the bundle is subscribing to when added into an Operator Registry.

- operators.operatorframework.io.bundle.channel.default.v1: The default channel an Operator should be subscribed to when installed from a registry.

In case of a mismatch, the annotations.yaml file is authoritative because the on-cluster Operator Registry that relies on these annotations only has access to this file.

2.2.1.3. Dependencies

The dependencies of an Operator are listed in a dependencies.yaml file in the metadata/ folder of a bundle. This file is optional and currently only used to specify explicit Operator-version dependencies.

The dependency list contains a type field for each item to specify what kind of dependency this is. The following types of Operator dependencies are supported:

- olm.package

- This type indicates a dependency for a specific Operator version. The dependency information must include the package name and the version of the package in semver format. For example, you can specify an exact version such as 0.5.2 or a range of versions such as >0.5.1.

- olm.gvk

- With this type, the author can specify a dependency with group/version/kind (GVK) information, similar to existing CRD and API-based usage in a CSV. This gives Operator authors a path to consolidate all dependencies, whether API or explicit versions, in the same place.

- olm.constraint

- This type declares generic constraints on arbitrary Operator properties.
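To make the olm.package version constraint concrete, the following sketch checks whether a candidate version satisfies an exact version or a single-comparator range such as >0.5.1. It is a deliberately simplified illustration: it handles only plain MAJOR.MINOR.PATCH versions with one comparator, and the function names are assumptions. Full semver ranges (pre-release tags, compound ranges) are out of scope here.

```python
import operator
import re

_COMPARATORS = {
    ">": operator.gt,
    ">=": operator.ge,
    "<": operator.lt,
    "<=": operator.le,
    "=": operator.eq,
}
_VERSION = re.compile(r"(\d+)\.(\d+)\.(\d+)")
_CONSTRAINT = re.compile(r"\s*(>=|<=|>|<|=)?\s*(\d+)\.(\d+)\.(\d+)\s*")


def parse_version(text):
    """Parse 'MAJOR.MINOR.PATCH' into a tuple of integers."""
    match = _VERSION.fullmatch(text.strip())
    if match is None:
        raise ValueError(f"unsupported version: {text!r}")
    return tuple(int(part) for part in match.groups())


def satisfies(version, constraint):
    """Return True if version meets a constraint such as '0.5.2' or '>0.5.1'."""
    match = _CONSTRAINT.fullmatch(constraint)
    if match is None:
        raise ValueError(f"unsupported constraint: {constraint!r}")
    # A constraint without a comparator is treated as an exact match.
    compare = _COMPARATORS[match.group(1) or "="]
    wanted = tuple(int(part) for part in match.group(2, 3, 4))
    return compare(parse_version(version), wanted)
```

Comparing integer tuples rather than strings matters: as strings, "0.10.0" sorts before "0.5.1", but as versions it is newer.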

In the following example, dependencies are specified for a Prometheus Operator and etcd CRDs:

Example dependencies.yaml file

dependencies:
  - type: olm.package
    value:
      packageName: prometheus
      version: ">0.27.0"
  - type: olm.gvk
    value:
      group: etcd.database.coreos.com
      kind: EtcdCluster
      version: v1beta2

2.2.1.4. About the opm CLI

The opm CLI tool is provided by the Operator Framework for use with the Operator bundle format. This tool allows you to create and maintain catalogs of Operators, which are similar to software repositories, from a list of Operator bundles. The result is a container image which can be stored in a container registry and then installed on a cluster.

A catalog contains a database of pointers to Operator manifest content that can be queried through an included API that is served when the container image is run. On OpenShift Dedicated, Operator Lifecycle Manager (OLM) can reference the image in a catalog source, defined by a CatalogSource object, which polls the image at regular intervals to enable frequent updates to installed Operators on the cluster.

- See CLI tools for steps on installing the opm CLI.

2.2.2. Highlights

File-based catalogs are the latest iteration of the catalog format in Operator Lifecycle Manager (OLM). The format is a plain text-based (JSON or YAML), declarative config evolution of the earlier SQLite database format, and it is fully backwards compatible. The goal of this format is to enable Operator catalog editing, composability, and extensibility.

- Editing

  With file-based catalogs, users interacting with the contents of a catalog are able to make direct changes to the format and verify that their changes are valid. Because this format is plain text JSON or YAML, catalog maintainers can easily manipulate catalog metadata by hand or with widely known and supported JSON or YAML tooling, such as the jq CLI.

  This editability enables the following features and user-defined extensions:

  - Promoting an existing bundle to a new channel

  - Changing the default channel of a package

  - Custom algorithms for adding, updating, and removing upgrade paths

- Composability

  File-based catalogs are stored in an arbitrary directory hierarchy, which enables catalog composition. For example, consider two separate file-based catalog directories: catalogA and catalogB. A catalog maintainer can create a new combined catalog by making a new directory catalogC and copying catalogA and catalogB into it.

  This composability enables decentralized catalogs. The format permits Operator authors to maintain Operator-specific catalogs, and it permits maintainers to trivially build a catalog composed of individual Operator catalogs. File-based catalogs can be composed by combining multiple other catalogs, by extracting subsets of one catalog, or a combination of both of these.

  Note: Duplicate packages and duplicate bundles within a package are not permitted. The opm validate command returns an error if any duplicates are found.

  Because Operator authors are most familiar with their Operator, its dependencies, and its upgrade compatibility, they are able to maintain their own Operator-specific catalog and have direct control over its contents. With file-based catalogs, Operator authors own the task of building and maintaining their packages in a catalog. Composite catalog maintainers, however, only own the task of curating the packages in their catalog and publishing the catalog to users.

- Extensibility

  The file-based catalog specification is a low-level representation of a catalog. While it can be maintained directly in its low-level form, catalog maintainers can build interesting extensions on top that can be used by their own custom tooling to make any number of mutations.

  For example, a tool could translate a high-level API, such as (mode=semver), down to the low-level, file-based catalog format for upgrade paths. Or a catalog maintainer might need to customize all of the bundle metadata by adding a new property to bundles that meet a certain criteria.

  While this extensibility allows for additional official tooling to be developed on top of the low-level APIs for future OpenShift Dedicated releases, the major benefit is that catalog maintainers have this capability as well.

As of OpenShift Dedicated 4.11, the default Red Hat-provided Operator catalog releases in the file-based catalog format. The default Red Hat-provided Operator catalogs for OpenShift Dedicated 4.6 through 4.10 released in the deprecated SQLite database format.

The opm subcommands, flags, and functionality related to the SQLite database format are also deprecated and will be removed in a future release. The features are still supported and must be used for catalogs that use the deprecated SQLite database format.

Many of the opm subcommands and flags for working with the SQLite database format, such as opm index prune, do not work with the file-based catalog format. For more information about working with file-based catalogs, see Managing custom catalogs.

2.2.2.1. Directory structure

File-based catalogs can be stored and loaded from directory-based file systems. The opm CLI loads the catalog by walking the root directory and recursing into subdirectories. The CLI attempts to load every file it finds and fails if any errors occur.

Non-catalog files can be ignored using .indexignore files, which have the same rules for patterns and precedence as .gitignore files.

Example .indexignore file

# Ignore everything except non-object .json and .yaml files
**/*
!*.json
!*.yaml
**/objects/*.json
**/objects/*.yaml

Catalog maintainers have the flexibility to choose their desired layout, but it is recommended to store each package’s file-based catalog blobs in separate subdirectories. Each individual file can be either JSON or YAML; it is not necessary for every file in a catalog to use the same format.

Basic recommended structure

catalog
├── packageA
│   └── index.yaml
├── packageB
│   ├── .indexignore
│   ├── index.yaml
│   └── objects
│       └── packageB.v0.1.0.clusterserviceversion.yaml
└── packageC
    ├── index.json
    └── deprecations.yaml

This recommended structure has the property that each subdirectory in the directory hierarchy is a self-contained catalog, which makes catalog composition, discovery, and navigation trivial file system operations. The catalog can also be included in a parent catalog by copying it into the parent catalog’s root directory.
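The directory walk described in this section can be approximated in a few lines. The sketch below is not how the opm CLI is implemented: it reads only .json files (YAML would need a third-party parser), ignores .indexignore rules, and assumes a JSON file may hold several objects one after another. The function names are assumptions made for the example.

```python
import json
import os
from collections import defaultdict


def iter_json_blobs(text):
    """Yield each JSON object from text that holds one or more objects in sequence."""
    decoder = json.JSONDecoder()
    pos = 0
    while True:
        # raw_decode does not skip leading whitespace, so do it here.
        while pos < len(text) and text[pos].isspace():
            pos += 1
        if pos >= len(text):
            return
        blob, pos = decoder.raw_decode(text, pos)
        yield blob


def load_catalog(root):
    """Walk root recursively and group every blob found by its schema field."""
    by_schema = defaultdict(list)
    for dirpath, _dirnames, filenames in os.walk(root):
        for filename in sorted(filenames):
            if not filename.endswith(".json"):
                continue
            path = os.path.join(dirpath, filename)
            with open(path, encoding="utf-8") as handle:
                for blob in iter_json_blobs(handle.read()):
                    by_schema[blob["schema"]].append(blob)
    return dict(by_schema)
```

Because every subdirectory is itself a catalog, calling load_catalog on a package subdirectory or on a parent directory that contains several copied catalogs works the same way, which is the composability property in practice.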

2.2.2.2. Schemas

File-based catalogs use a format, based on the CUE language specification, that can be extended with arbitrary schemas. The following _Meta CUE schema defines the format that all file-based catalog blobs must adhere to:

_Meta schema

_Meta: {
  // schema is required and must be a non-empty string
  schema: string & !=""

  // package is optional, but if it's defined, it must be a non-empty string
  package?: string & !=""

  // properties is optional, but if it's defined, it must be a list of 0 or more properties
  properties?: [... #Property]
}

#Property: {
  // type is required
  type: string & !=""

  // value is required, and it must not be null
  value: !=null
}

No CUE schemas listed in this specification should be considered exhaustive. The opm validate command has additional validations that are difficult or impossible to express concisely in CUE.

An Operator Lifecycle Manager (OLM) catalog currently uses three schemas (olm.package, olm.channel, and olm.bundle), which correspond to OLM’s existing package and bundle concepts.

Each Operator package in a catalog requires exactly one olm.package blob, at least one olm.channel blob, and one or more olm.bundle blobs.

All olm.* schemas are reserved for OLM-defined schemas. Custom schemas must use a unique prefix, such as a domain that you own.
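The _Meta constraints and the per-package blob counts described above can be expressed as two small checks. This is a rough sketch for illustration, operating on blobs already parsed into dictionaries; it does not replace the opm validate command, and the function names are assumptions.

```python
from collections import Counter


def check_meta(blob):
    """Check a blob against the constraints stated in the _Meta schema."""
    problems = []
    schema = blob.get("schema")
    if not isinstance(schema, str) or schema == "":
        problems.append("schema must be a non-empty string")
    if "package" in blob and (not isinstance(blob["package"], str) or blob["package"] == ""):
        problems.append("package must be a non-empty string when defined")
    for prop in blob.get("properties", []):
        if not isinstance(prop.get("type"), str) or prop.get("type") == "":
            problems.append("property type must be a non-empty string")
        if prop.get("value") is None:
            problems.append("property value must not be null")
    return problems


def check_package_counts(blobs, package):
    """Check that a package has one olm.package, and at least one channel and bundle."""
    counts = Counter()
    for blob in blobs:
        # olm.package blobs carry the package name in "name"; other blobs use "package".
        owner = blob.get("name") if blob.get("schema") == "olm.package" else blob.get("package")
        if owner == package:
            counts[blob.get("schema")] += 1
    problems = []
    if counts["olm.package"] != 1:
        problems.append(f"expected exactly one olm.package blob, found {counts['olm.package']}")
    if counts["olm.channel"] < 1:
        problems.append("expected at least one olm.channel blob")
    if counts["olm.bundle"] < 1:
        problems.append("expected at least one olm.bundle blob")
    return problems
```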

2.2.2.2.1. olm.package schema

The olm.package schema defines package-level metadata for an Operator. This includes its name, description, default channel, and icon.

Example 2.1. olm.package schema

#Package: {
  schema: "olm.package"

  // Package name
  name: string & !=""

  // A description of the package
  description?: string

  // The package's default channel
  defaultChannel: string & !=""

  // An optional icon
  icon?: {
    base64data: string
    mediatype: string
  }
}

2.2.2.2.2. olm.channel schema

The olm.channel schema defines a channel within a package, the bundle entries that are members of the channel, and the upgrade paths for those bundles.

If a bundle entry represents an edge in multiple olm.channel blobs, it can only appear once per channel.

It is valid for an entry’s replaces value to reference another bundle name that cannot be found in this catalog or another catalog. However, all other channel invariants must hold true, such as a channel not having multiple heads.

Example 2.2. olm.channel schema

#Channel: {
  schema: "olm.channel"
  package: string & !=""
  name: string & !=""
  entries: [...#ChannelEntry]
}

#ChannelEntry: {
  // name is required. It is the name of an `olm.bundle` that
  // is present in the channel.
  name: string & !=""

  // replaces is optional. It is the name of bundle that is replaced
  // by this entry. It does not have to be present in the entry list.
  replaces?: string & !=""

  // skips is optional. It is a list of bundle names that are skipped by
  // this entry. The skipped bundles do not have to be present in the
  // entry list.
  skips?: [...string & !=""]

  // skipRange is optional. It is the semver range of bundle versions
  // that are skipped by this entry.
  skipRange?: string & !=""
}

When using the skipRange field, the skipped Operator versions are pruned from the update graph and are no longer installable by users with the spec.startingCSV property of Subscription objects.

You can update an Operator incrementally while keeping previously installed versions available to users for future installation by using both the skipRange and replaces fields. Ensure that the replaces field points to the immediate previous version of the Operator version in question.
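The replaces and skips fields form a graph of upgrade edges within a channel. The following sketch shows one way to find the channel head (the entry that nothing else supersedes) and the updates available from an installed bundle. It is a simplified illustration: it ignores skipRange, which needs bundle versions to evaluate, and the function names and bundle names are hypothetical rather than taken from OLM.

```python
from typing import Dict, List


def channel_heads(entries: List[Dict]) -> List[str]:
    """Return entry names that no other entry in the channel replaces or skips."""
    names = {entry["name"] for entry in entries}
    superseded = set()
    for entry in entries:
        if "replaces" in entry:
            superseded.add(entry["replaces"])
        superseded.update(entry.get("skips", []))
    return sorted(names - superseded)


def updates_from(entries: List[Dict], installed: str) -> List[str]:
    """Return entries that declare an upgrade edge from the installed bundle."""
    return sorted(
        entry["name"]
        for entry in entries
        if entry.get("replaces") == installed or installed in entry.get("skips", [])
    )
```

A channel that satisfies the single-head invariant yields exactly one name from channel_heads; more than one name indicates the kind of problem that the text above says a valid channel must not have.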

2.2.2.2.3. olm.bundle schema

Example 2.3. olm.bundle schema

#Bundle: {
  schema: "olm.bundle"
  package: string & !=""
  name: string & !=""
  image: string & !=""
  properties: [...#Property]
  relatedImages?: [...#RelatedImage]
}

#Property: {
  // type is required
  type: string & !=""

  // value is required, and it must not be null
  value: !=null
}

#RelatedImage: {
  // image is the image reference
  image: string & !=""

  // name is an optional descriptive name for an image that
  // helps identify its purpose in the context of the bundle
  name?: string & !=""
}

2.2.2.2.4. olm.deprecations schema

The optional olm.deprecations schema defines deprecation information for packages, bundles, and channels in a catalog. Operator authors can use this schema to provide relevant messages about their Operators, such as support status and recommended upgrade paths, to users running those Operators from a catalog.

When this schema is defined, the OpenShift Dedicated web console displays warning badges for the affected elements of the Operator, including any custom deprecation messages, on both the pre- and post-installation pages of the software catalog.

An olm.deprecations schema entry contains one or more of the following reference types, which indicates the deprecation scope. After the Operator is installed, any specified messages can be viewed as status conditions on the related Subscription object.

| Type | Scope | Status condition |
|---|---|---|
| olm.package | Represents the entire package | PackageDeprecated |
| olm.channel | Represents one channel | ChannelDeprecated |
| olm.bundle | Represents one bundle version | BundleDeprecated |

Each reference type has its own requirements, as detailed in the following example.

Example 2.4. Example olm.deprecations schema with each reference type

schema: olm.deprecations
package: my-operator
entries:
  - reference:
      schema: olm.package
    message: |
      The 'my-operator' package is end of life. Please use the
      'my-operator-new' package for support.
  - reference:
      schema: olm.channel
      name: alpha
    message: |
      The 'alpha' channel is no longer supported. Please switch to the
      'stable' channel.
  - reference:
      schema: olm.bundle
      name: my-operator.v1.68.0
    message: |
      my-operator.v1.68.0 is deprecated. Uninstall my-operator.v1.68.0 and
      install my-operator.v1.72.0 for support.

- package: Each deprecation schema must have a package value, and that package reference must be unique across the catalog. There must not be an associated name field.

- reference with the olm.package schema: The olm.package schema must not include a name field, because it is determined by the package field defined earlier in the schema.

- message: All message fields, for any reference type, must be a non-zero length and represented as an opaque text blob.

- reference with the olm.channel schema: The name field for the olm.channel schema is required.

- reference with the olm.bundle schema: The name field for the olm.bundle schema is required.

The deprecation feature does not consider overlapping deprecation, for example package versus channel versus bundle.
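The field requirements for each reference type can be summarized as a small check over a parsed olm.deprecations blob. This is an illustrative sketch only: the function name and error wording are assumptions, and it does not check uniqueness of the package reference across a whole catalog.

```python
def check_deprecations(blob):
    """Return a list of problems found in one olm.deprecations blob."""
    problems = []
    if not blob.get("package"):
        problems.append("package is required")
    if "name" in blob:
        problems.append("a deprecation schema must not have a name field")

    for index, entry in enumerate(blob.get("entries", [])):
        reference = entry.get("reference", {})
        schema = reference.get("schema")
        if schema == "olm.package":
            # The package is already identified by the top-level package field.
            if "name" in reference:
                problems.append(f"entry {index}: olm.package reference must not include a name")
        elif schema in ("olm.channel", "olm.bundle"):
            if not reference.get("name"):
                problems.append(f"entry {index}: {schema} reference requires a name")
        else:
            problems.append(f"entry {index}: unknown reference schema {schema!r}")
        if not entry.get("message"):
            problems.append(f"entry {index}: message must be non-empty")
    return problems
```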

Operator authors can save olm.deprecations schema entries as a deprecations.yaml file in the same directory as the package’s index.yaml file:

Example directory structure for a catalog with deprecations

my-catalog
└── my-operator
    ├── index.yaml
    └── deprecations.yaml

2.2.2.3. Properties

Properties are arbitrary pieces of metadata that can be attached to file-based catalog schemas. The type field is a string that effectively specifies the semantic and syntactic meaning of the value field. The value can be any arbitrary JSON or YAML.

OLM defines a handful of property types, again using the reserved olm.* prefix.

2.2.2.3.1. olm.package property

The olm.package property defines the package name and version. This is a required property on bundles, and there must be exactly one of these properties. The packageName field must match the bundle’s first-class package field, and the version field must be a valid semantic version.
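The three rules in the preceding paragraph can be checked against a parsed olm.bundle blob roughly as follows. The sketch is hedged in two ways: the function name is an assumption, and the version pattern is a simplified approximation of semantic versioning rather than a complete semver parser.

```python
import re

# Simplified approximation of a semantic version: MAJOR.MINOR.PATCH with
# optional pre-release and build metadata suffixes.
_SEMVER = re.compile(
    r"(0|[1-9]\d*)\.(0|[1-9]\d*)\.(0|[1-9]\d*)"
    r"(?:-[0-9A-Za-z.-]+)?(?:\+[0-9A-Za-z.-]+)?"
)


def check_package_property(bundle):
    """Check the olm.package property rules on one olm.bundle blob."""
    props = [p for p in bundle.get("properties", []) if p.get("type") == "olm.package"]
    if len(props) != 1:
        return [f"expected exactly one olm.package property, found {len(props)}"]

    value = props[0].get("value") or {}
    problems = []
    if value.get("packageName") != bundle.get("package"):
        problems.append("packageName does not match the bundle package field")
    if _SEMVER.fullmatch(str(value.get("version", ""))) is None:
        problems.append("version is not a valid semantic version")
    return problems
```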

Example 2.5. olm.package property

#PropertyPackage: {
  type: "olm.package"
  value: {
    packageName: string & !=""
    version: string & !=""
  }
}

2.2.2.3.2. olm.gvk property

The olm.gvk property defines the group/version/kind (GVK) of a Kubernetes API that is provided by this bundle. This property is used by OLM to resolve a bundle with this property as a dependency for other bundles that list the same GVK as a required API. The GVK must adhere to Kubernetes GVK validations.

Example 2.6. olm.gvk property

#PropertyGVK: {
  type: "olm.gvk"
  value: {
    group: string & !=""
    version: string & !=""
    kind: string & !=""
  }
}

2.2.2.3.3. olm.package.required

The olm.package.required property defines the package name and version range of another package that this bundle requires. For every required package property a bundle lists, OLM ensures there is an Operator installed on the cluster for the listed package and in the required version range. The versionRange field must be a valid semantic version (semver) range.

Example 2.7. olm.package.required property

#PropertyPackageRequired: {
  type: "olm.package.required"
  value: {
    packageName: string & !=""
    versionRange: string & !=""
  }
}

2.2.2.3.4. olm.gvk.required

The olm.gvk.required property defines the group/version/kind (GVK) of a Kubernetes API that this bundle requires. For every required GVK property a bundle lists, OLM ensures there is an Operator installed on the cluster that provides it. The GVK must adhere to Kubernetes GVK validations.
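The relationship between olm.gvk and olm.gvk.required can be illustrated by matching the GVKs that one bundle requires against the GVKs that installed bundles provide. This sketch only reports unmet requirements; it is not OLM's dependency resolver, which also selects and installs a providing Operator from a catalog. The function names are assumptions.

```python
def gvk_key(value):
    """Reduce a GVK property value to a comparable (group, version, kind) tuple."""
    return (value["group"], value["version"], value["kind"])


def unmet_gvks(bundle, installed_bundles):
    """Return required GVKs of bundle that no installed bundle provides."""
    provided = {
        gvk_key(prop["value"])
        for other in installed_bundles
        for prop in other.get("properties", [])
        if prop.get("type") == "olm.gvk"
    }
    required = [
        gvk_key(prop["value"])
        for prop in bundle.get("properties", [])
        if prop.get("type") == "olm.gvk.required"
    ]
    return [gvk for gvk in required if gvk not in provided]
```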

Example 2.8. olm.gvk.required property

#PropertyGVKRequired: {
  type: "olm.gvk.required"
  value: {
    group: string & !=""
    version: string & !=""
    kind: string & !=""
  }
}

2.2.2.4. Example catalog

With file-based catalogs, catalog maintainers can focus on Operator curation and compatibility. Because Operator authors have already produced Operator-specific catalogs for their Operators, catalog maintainers can build their catalog by rendering each Operator catalog into a subdirectory of the catalog’s root directory.

There are many possible ways to build a file-based catalog; the following steps outline a simple approach:

Maintain a single configuration file for the catalog, containing image references for each Operator in the catalog:

Example catalog configuration file

name: community-operators
repo: quay.io/community-operators/catalog
tag: latest
references:
  - name: etcd-operator
    image: quay.io/etcd-operator/index@sha256:5891b5b522d5df086d0ff0b110fbd9d21bb4fc7163af34d08286a2e846f6be03
  - name: prometheus-operator
    image: quay.io/prometheus-operator/index@sha256:e258d248fda94c63753607f7c4494ee0fcbe92f1a76bfdac795c9d84101eb317

Run a script that parses the configuration file and creates a new catalog from its references:

Example script

# Create the catalog root directory and one subdirectory per Operator
name=$(yq eval '.name' catalog.yaml)
mkdir "$name"
yq eval '.name + "/" + .references[].name' catalog.yaml | xargs mkdir

# Render each referenced image into <catalog>/<operator>/index.yaml
for l in $(yq e '.name as $catalog | .references[] | .image + "|" + $catalog + "/" + .name + "/index.yaml"' catalog.yaml); do
  image=$(echo "$l" | cut -d'|' -f1)
  file=$(echo "$l" | cut -d'|' -f2)
  opm render "$image" > "$file"
done

# Generate a Dockerfile for the catalog, then build and push the catalog image
opm generate dockerfile "$name"
indexImage=$(yq eval '.repo + ":" + .tag' catalog.yaml)
docker build -t "$indexImage" -f "$name.Dockerfile" .
docker push "$indexImage"

2.2.2.5. Guidelines

Consider the following guidelines when maintaining file-based catalogs.

2.2.2.5.1. Immutable bundles

The general advice with Operator Lifecycle Manager (OLM) is that bundle images and their metadata should be treated as immutable.

If a broken bundle has been pushed to a catalog, you must assume that at least one of your users has upgraded to that bundle. Based on that assumption, you must release another bundle with an upgrade path from the broken bundle to ensure users with the broken bundle installed receive an upgrade. OLM will not reinstall an installed bundle if the contents of that bundle are updated in the catalog.

However, there are some cases where a change in the catalog metadata is preferred:

- Channel promotion: If you already released a bundle and later decide that you would like to add it to another channel, you can add an entry for your bundle in another olm.channel blob.

- New upgrade paths: If you release a new 1.2.z bundle version, for example 1.2.4, but 1.3.0 is already released, you can update the catalog metadata for 1.3.0 to skip 1.2.4.

2.2.2.5.2. Source control

Catalog metadata should be stored in source control and treated as the source of truth. Updates to catalog images should include the following steps:

- Update the source-controlled catalog directory with a new commit.

- Build and push the catalog image. Use a consistent tagging taxonomy, such as :latest or :<target_cluster_version>, so that users can receive updates to a catalog as they become available.

2.2.2.6. CLI usage

For instructions about creating file-based catalogs by using the opm CLI, see Managing custom catalogs.

For reference documentation about the opm CLI commands related to managing file-based catalogs, see CLI tools.

2.2.2.7. Automation

Operator authors and catalog maintainers are encouraged to automate their catalog maintenance with CI/CD workflows. Catalog maintainers can further improve on this by building GitOps automation to accomplish the following tasks:

- Check that pull request (PR) authors are permitted to make the requested changes, for example by updating their package’s image reference.

- Check that the catalog updates pass the opm validate command.

- Check that the updated bundle or catalog image references exist, the catalog images run successfully in a cluster, and Operators from that package can be successfully installed.

- Automatically merge PRs that pass the previous checks.

- Automatically rebuild and republish the catalog image.

2.3. Operator Framework glossary of common terms

This topic provides a glossary of common terms related to the Operator Framework, including Operator Lifecycle Manager (OLM).

2.3.1. Bundle

In the bundle format, a bundle is a collection of an Operator CSV, manifests, and metadata. Together, they form a unique version of an Operator that can be installed onto the cluster.

2.3.2. Bundle image

In the bundle format, a bundle image is a container image that is built from Operator manifests and that contains one bundle. Bundle images are stored and distributed by Open Container Initiative (OCI) spec container registries, such as Quay.io or DockerHub.

2.3.3. Catalog source

A catalog source represents a store of metadata that OLM can query to discover and install Operators and their dependencies.

2.3.4. Channel

A channel defines a stream of updates for an Operator and is used to roll out updates for subscribers. The head points to the latest version of that channel. For example, a stable channel would have all stable versions of an Operator arranged from the earliest to the latest.

An Operator can have several channels, and a subscription binding to a certain channel would only look for updates in that channel.

2.3.5. Channel head

A channel head refers to the latest known update in a particular channel.

2.3.6. Cluster service version

A cluster service version (CSV) is a YAML manifest created from Operator metadata that assists OLM in running the Operator in a cluster. It is the metadata that accompanies an Operator container image, used to populate user interfaces with information such as its logo, description, and version.

It is also a source of technical information that is required to run the Operator, like the RBAC rules it requires and which custom resources (CRs) it manages or depends on.

2.3.7. Dependency

An Operator may have a dependency on another Operator being present in the cluster. For example, the Vault Operator has a dependency on the etcd Operator for its data persistence layer.

OLM resolves dependencies by ensuring that all specified versions of Operators and CRDs are installed on the cluster during the installation phase. This dependency is resolved by finding and installing an Operator in a catalog that satisfies the required CRD API, and is not related to packages or bundles.

2.3.8. Extension

Extensions enable cluster administrators to extend capabilities for users on their OpenShift Dedicated cluster. Extensions are managed by Operator Lifecycle Manager (OLM) v1.

The ClusterExtension API streamlines management of installed extensions, which includes Operators via the registry+v1 bundle format, by consolidating user-facing APIs into a single object. Administrators and SREs can use the API to automate processes and define desired states by using GitOps principles.

2.3.9. Index image

In the bundle format, an index image refers to an image of a database (a database snapshot) that contains information about Operator bundles including CSVs and CRDs of all versions. This index can host a history of Operators on a cluster and be maintained by adding or removing Operators using the opm CLI tool.

2.3.10. Install plan

An install plan is a calculated list of resources to be created to automatically install or upgrade a CSV.

2.3.11. Multitenancy

A tenant in OpenShift Dedicated is a user or group of users that share common access and privileges for a set of deployed workloads, typically represented by a namespace or project. You can use tenants to provide a level of isolation between different groups or teams.

When a cluster is shared by multiple users or groups, it is considered a multitenant cluster.

2.3.12. Operator

Operators are a method of packaging, deploying, and managing a Kubernetes application. A Kubernetes application is an app that is both deployed on Kubernetes and managed using the Kubernetes APIs and kubectl or oc tooling.

In Operator Lifecycle Manager (OLM) v1, the ClusterExtension API streamlines management of installed extensions, which includes Operators via the registry+v1 bundle format.

2.3.13. Operator group

An Operator group configures all Operators deployed in the same namespace as the OperatorGroup object to watch for their CR in a list of namespaces or cluster-wide.

2.3.14. Package

In the bundle format, a package is a directory that encloses all released history of an Operator with each version. A released version of an Operator is described in a CSV manifest alongside the CRDs.

2.3.15. Registry

A registry is a database that stores bundle images of Operators, each with all of its latest and historical versions in all channels.

2.3.16. Subscription

A subscription keeps CSVs up to date by tracking a channel in a package.

2.3.17. Update graph

An update graph links versions of CSVs together, similar to the update graph of any other packaged software. Operators can be installed sequentially, or certain versions can be skipped. The update graph is expected to grow only at the head with newer versions being added.

Also known as update edges or update paths.

2.4. Operator Lifecycle Manager (OLM)

2.4.1. Operator Lifecycle Manager concepts and resources

This guide provides an overview of the concepts that drive Operator Lifecycle Manager (OLM) in OpenShift Dedicated.

2.4.1.1. What is Operator Lifecycle Manager (OLM) Classic?

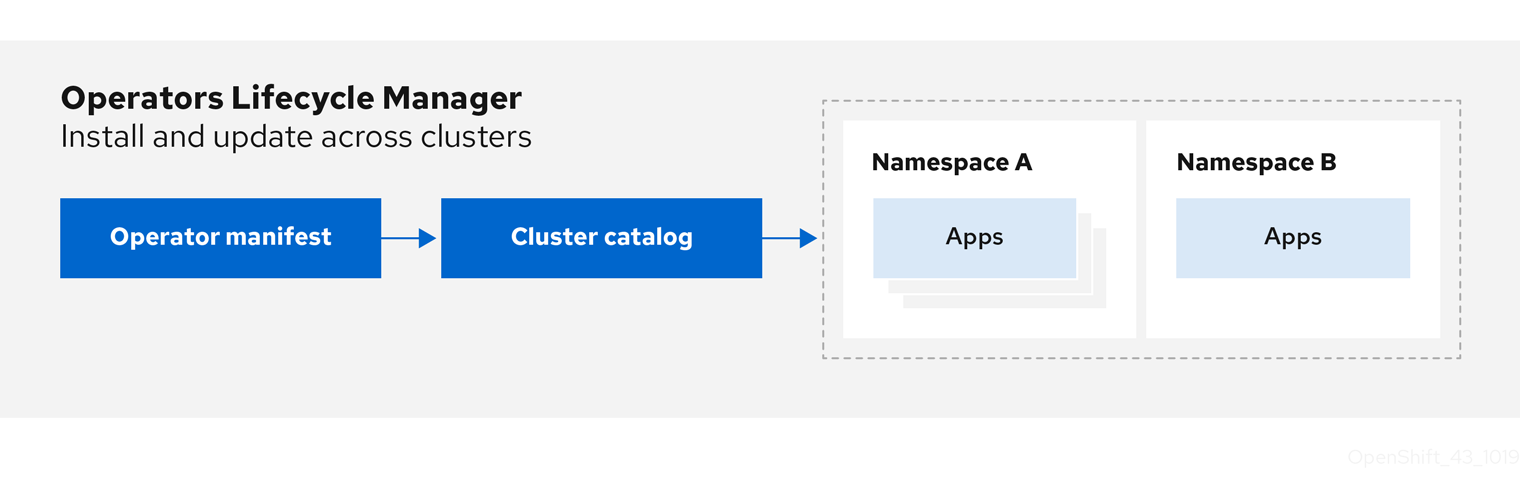

Operator Lifecycle Manager (OLM) Classic helps users install, update, and manage the lifecycle of Kubernetes native applications (Operators) and their associated services running across their OpenShift Dedicated clusters. It is part of the Operator Framework, an open source toolkit designed to manage Operators in an effective, automated, and scalable way.

Figure 2.2. OLM (Classic) workflow

OLM runs by default in OpenShift Dedicated, which aids administrators with the dedicated-admin role in installing, upgrading, and granting access to Operators running on their cluster. The OpenShift Dedicated web console provides management screens for dedicated-admin administrators to install Operators, as well as grant specific projects access to use the catalog of Operators available on the cluster.

For developers, a self-service experience allows provisioning and configuring instances of databases, monitoring, and big data services without having to be subject matter experts, because the Operator has that knowledge baked into it.

2.4.1.2. OLM resources

The following custom resource definitions (CRDs) are defined and managed by Operator Lifecycle Manager (OLM):

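| Resource | Short name | Description |
|---|---|---|
| ClusterServiceVersion (CSV) | csv | Application metadata. For example: name, version, icon, required resources. |
| CatalogSource | catsrc | A repository of CSVs, CRDs, and packages that define an application. |
| Subscription | sub | Keeps CSVs up to date by tracking a channel in a package. |
| InstallPlan | ip | Calculated list of resources to be created to automatically install or upgrade a CSV. |
| OperatorGroup | og | Configures all Operators deployed in the same namespace as the OperatorGroup object to watch for their custom resource (CR) in a list of namespaces or cluster-wide. |
| OperatorConditions | - | Creates a communication channel between OLM and an Operator it manages. Operators can write to the Status.Conditions array to communicate complex states to OLM. |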

2.4.1.2.1. Cluster service version

A cluster service version (CSV) represents a specific version of a running Operator on your OpenShift Dedicated cluster. It is a YAML manifest created from Operator metadata that assists Operator Lifecycle Manager (OLM) in running the Operator in the cluster.

OLM requires this metadata about an Operator to ensure that it can be kept running safely on a cluster, and to provide information about how updates should be applied as new versions of the Operator are published. This is similar to packaging software for a traditional operating system; think of the packaging step for OLM as the stage at which you make your rpm, deb, or apk bundle.

A CSV includes the metadata that accompanies an Operator container image, used to populate user interfaces with information such as its name, version, description, labels, repository link, and logo.

A CSV is also a source of technical information required to run the Operator, such as which custom resources (CRs) it manages or depends on, RBAC rules, cluster requirements, and install strategies. This information tells OLM how to create required resources and set up the Operator as a deployment.

2.4.1.2.2. Catalog source

A catalog source represents a store of metadata, typically by referencing an index image stored in a container registry. Operator Lifecycle Manager (OLM) queries catalog sources to discover and install Operators and their dependencies. The software catalog in the OpenShift Dedicated web console also displays the Operators provided by catalog sources.

Cluster administrators can view the full list of Operators provided by an enabled catalog source on a cluster by using the Administration → Cluster Settings → Configuration → OperatorHub page in the web console.

The spec of a CatalogSource object indicates how to construct a pod or how to communicate with a service that serves the Operator Registry gRPC API.

Example CatalogSource object

apiVersion: operators.coreos.com/v1alpha1
kind: CatalogSource
metadata:
  generation: 1
  name: example-catalog # 1
  namespace: openshift-marketplace # 2
  annotations:
    olm.catalogImageTemplate: # 3
      "quay.io/example-org/example-catalog:v{kube_major_version}.{kube_minor_version}.{kube_patch_version}"
spec:
  displayName: Example Catalog # 4
  image: quay.io/example-org/example-catalog:v1 # 5
  priority: -400 # 6
  publisher: Example Org
  sourceType: grpc # 7
  grpcPodConfig:
    securityContextConfig: <security_mode> # 8
    nodeSelector: # 9
      custom_label: <label>
    priorityClassName: system-cluster-critical # 10
    tolerations: # 11
      - key: "key1"
        operator: "Equal"
        value: "value1"
        effect: "NoSchedule"
  updateStrategy:
    registryPoll: # 12
      interval: 30m0s
status:
  connectionState:
    address: example-catalog.openshift-marketplace.svc:50051
    lastConnect: 2021-08-26T18:14:31Z
    lastObservedState: READY # 13
  latestImageRegistryPoll: 2021-08-26T18:46:25Z # 14
  registryService: # 15
    createdAt: 2021-08-26T16:16:37Z
    port: 50051
    protocol: grpc
    serviceName: example-catalog
    serviceNamespace: openshift-marketplace

1. Name for the CatalogSource object. This value is also used as part of the name for the related pod that is created in the requested namespace.

2. Namespace to create the catalog in. To make the catalog available cluster-wide in all namespaces, set this value to openshift-marketplace. The default Red Hat-provided catalog sources also use the openshift-marketplace namespace. Otherwise, set the value to a specific namespace to make the Operator only available in that namespace.

3. Optional: To avoid cluster upgrades potentially leaving Operator installations in an unsupported state or without a continued update path, you can enable automatically changing your Operator catalog’s index image version as part of cluster upgrades.

   Set the olm.catalogImageTemplate annotation to your index image name and use one or more of the Kubernetes cluster version variables as shown when constructing the template for the image tag. The annotation overwrites the spec.image field at run time. See the "Image template for custom catalog sources" section for more details.

4. Display name for the catalog in the web console and CLI.

5. Index image for the catalog. Optionally, can be omitted when using the olm.catalogImageTemplate annotation, which sets the pull spec at run time.

6. Weight for the catalog source. OLM uses the weight for prioritization during dependency resolution. A higher weight indicates the catalog is preferred over lower-weighted catalogs.

7. Source types include the following:

   - grpc with an image reference: OLM pulls the image and runs the pod, which is expected to serve a compliant API.

   - grpc with an address field: OLM attempts to contact the gRPC API at the given address. This should not be used in most cases.

   - configmap: OLM parses config map data and runs a pod that can serve the gRPC API over it.

8. Specify the value of legacy or restricted. If the field is not set, the default value is legacy. In a future OpenShift Dedicated release, it is planned that the default value will be restricted.

   Note: If your catalog cannot run with restricted permissions, it is recommended that you manually set this field to legacy.

9. Optional: For grpc type catalog sources, overrides the default node selector for the pod serving the content in spec.image, if defined.

10. Optional: For grpc type catalog sources, overrides the default priority class name for the pod serving the content in spec.image, if defined. Kubernetes provides system-cluster-critical and system-node-critical priority classes by default. Setting the field to empty ("") assigns the pod the default priority. Other priority classes can be defined manually.

11. Optional: For grpc type catalog sources, overrides the default tolerations for the pod serving the content in spec.image, if defined.

12. Automatically check for new versions at a given interval to stay up-to-date.

13. Last observed state of the catalog connection. For example:

   - READY: A connection is successfully established.

   - CONNECTING: A connection is attempting to establish.

   - TRANSIENT_FAILURE: A temporary problem has occurred while attempting to establish a connection, such as a timeout. The state will eventually switch back to CONNECTING and try again.

   See States of Connectivity in the gRPC documentation for more details.

14. Latest time the container registry storing the catalog image was polled to ensure the image is up-to-date.

15. Status information for the catalog’s Operator Registry service.

Referencing the name of a CatalogSource object in a subscription instructs OLM where to search to find a requested Operator:

Example Subscription object referencing a catalog source

apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: example-operator
  namespace: example-namespace
spec:
  channel: stable
  name: example-operator
  source: example-catalog
  sourceNamespace: openshift-marketplace

2.4.1.2.2.1. Image template for custom catalog sources

Operator compatibility with the underlying cluster can be expressed by a catalog source in various ways. One way, which is used for the default Red Hat-provided catalog sources, is to identify image tags for index images that are specifically created for a particular platform release, for example OpenShift Dedicated.

During a cluster upgrade, the index image tag for the default Red Hat-provided catalog sources is updated automatically by the Cluster Version Operator (CVO) so that Operator Lifecycle Manager (OLM) pulls the updated version of the catalog. For example, during an upgrade from OpenShift Dedicated 4.20 to 4.21, the spec.image field in the CatalogSource object for the redhat-operators catalog is updated from:

registry.redhat.io/redhat/redhat-operator-index:v4.20

to:

registry.redhat.io/redhat/redhat-operator-index:v4.21

However, the CVO does not automatically update image tags for custom catalogs. To ensure users are left with a compatible and supported Operator installation after a cluster upgrade, custom catalogs should also be kept updated to reference an updated index image.

Starting in OpenShift Dedicated 4.9, cluster administrators can set the olm.catalogImageTemplate annotation in the CatalogSource object for custom catalogs to an image reference that includes a template. The following Kubernetes version variables are supported for use in the template:

- kube_major_version

- kube_minor_version

- kube_patch_version

You must specify the Kubernetes cluster version and not the OpenShift Dedicated cluster version, as the latter is not currently available for templating.

Provided that you have created and pushed an index image with a tag specifying the updated Kubernetes version, setting this annotation enables the index image versions in custom catalogs to be automatically changed after a cluster upgrade. The annotation value is used to set or update the image reference in the spec.image field of the CatalogSource object. This helps avoid cluster upgrades leaving Operator installations in unsupported states or without a continued update path.

You must ensure that the index image with the updated tag, in whichever registry it is stored, is accessible by the cluster at the time of the cluster upgrade.

Example 2.9. Example catalog source with an image template

apiVersion: operators.coreos.com/v1alpha1
kind: CatalogSource
metadata:
  generation: 1
  name: example-catalog
  namespace: openshift-marketplace
  annotations:
    olm.catalogImageTemplate:
      "quay.io/example-org/example-catalog:v{kube_major_version}.{kube_minor_version}"
spec:
  displayName: Example Catalog
  image: quay.io/example-org/example-catalog:v1.34
  priority: -400
  publisher: Example Org

If the spec.image field and the olm.catalogImageTemplate annotation are both set, the spec.image field is overwritten by the resolved value from the annotation. If the annotation does not resolve to a usable pull spec, the catalog source falls back to the set spec.image value.

If the spec.image field is not set and the annotation does not resolve to a usable pull spec, OLM stops reconciliation of the catalog source and sets it into a human-readable error condition.

For an OpenShift Dedicated cluster, which uses Kubernetes 1.34, the olm.catalogImageTemplate annotation in the preceding example resolves to the following image reference:

quay.io/example-org/example-catalog:v1.34

For future releases of OpenShift Dedicated, you can create updated index images for your custom catalogs that target the later Kubernetes version that is used by the later OpenShift Dedicated version. With the olm.catalogImageTemplate annotation set before the upgrade, upgrading the cluster to the later OpenShift Dedicated version would then automatically update the catalog’s index image as well.
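The resolution and fallback rules described in this section can be sketched in a few lines of Python. This is an illustration only, not OLM's implementation: the function names are hypothetical, and treating any leftover {placeholder} as "not a usable pull spec" is an assumption made for the sketch.

```python
import re

# Variables that this section lists as supported in olm.catalogImageTemplate.
SUPPORTED_VARS = ("kube_major_version", "kube_minor_version", "kube_patch_version")


def resolve_template(template, kube_version):
    """Substitute Kubernetes version variables into an image template.

    kube_version is a "major.minor.patch" string such as "1.34.0".
    Returns None if an unknown {variable} is left over after substitution.
    """
    major, minor, patch = kube_version.split(".")[:3]
    resolved = template
    for name, value in zip(SUPPORTED_VARS, (major, minor, patch)):
        resolved = resolved.replace("{" + name + "}", value)
    # Assumption: a leftover {placeholder} means the pull spec is unusable.
    if re.search(r"\{[^}]*\}", resolved):
        return None
    return resolved


def effective_image(spec_image, template, kube_version):
    """Pick the image reference according to the rules described above."""
    resolved = resolve_template(template, kube_version) if template else None
    if resolved:
        return resolved      # the resolved annotation overwrites spec.image
    if spec_image:
        return spec_image    # fall back to the value already set in spec.image
    # Neither source is usable: the catalog source enters an error condition.
    raise ValueError("no usable pull spec: annotation unresolved and spec.image unset")


template = "quay.io/example-org/example-catalog:v{kube_major_version}.{kube_minor_version}"
print(effective_image("quay.io/example-org/example-catalog:v1.33", template, "1.34.0"))
```

With the template from the preceding example and a Kubernetes 1.34 cluster, the sketch prints quay.io/example-org/example-catalog:v1.34.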

2.4.1.2.2.2. Catalog health requirements

Operator catalogs on a cluster are interchangeable from the perspective of installation resolution; a Subscription object might reference a specific catalog, but dependencies are resolved using all catalogs on the cluster.

For example, if Catalog A is unhealthy, a subscription referencing Catalog A could resolve a dependency in Catalog B, which the cluster administrator might not have been expecting, because B normally had a lower catalog priority than A.

As a result, OLM requires that all catalogs with a given global namespace (for example, the default openshift-marketplace namespace or a custom global namespace) are healthy. When a catalog is unhealthy, all Operator installation or update operations within its shared global namespace will fail with a CatalogSourcesUnhealthy condition. If these operations were permitted in an unhealthy state, OLM might make resolution and installation decisions that were unexpected to the cluster administrator.
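As a rough illustration of this rule, the following Python sketch blocks resolution whenever any catalog in the global namespace is unhealthy. It assumes that "healthy" means a lastObservedState of READY in the CatalogSource status, which is a simplification for this example; the function names and the dictionary layout are hypothetical.

```python
def unhealthy_catalogs(catalog_sources, global_namespace):
    """Return the names of catalogs in the namespace that are not READY."""
    bad = []
    for cs in catalog_sources:
        if cs["metadata"]["namespace"] != global_namespace:
            continue
        state = cs.get("status", {}).get("connectionState", {}).get("lastObservedState")
        # Assumption for this sketch: only READY counts as healthy.
        if state != "READY":
            bad.append(cs["metadata"]["name"])
    return bad


def can_resolve(catalog_sources, global_namespace="openshift-marketplace"):
    """Return (allowed, reason); one unhealthy catalog blocks all resolution."""
    bad = unhealthy_catalogs(catalog_sources, global_namespace)
    if bad:
        return False, "CatalogSourcesUnhealthy: " + ", ".join(bad)
    return True, ""


catalogs = [
    {"metadata": {"name": "catalog-a", "namespace": "openshift-marketplace"},
     "status": {"connectionState": {"lastObservedState": "TRANSIENT_FAILURE"}}},
    {"metadata": {"name": "catalog-b", "namespace": "openshift-marketplace"},
     "status": {"connectionState": {"lastObservedState": "READY"}}},
]
print(can_resolve(catalogs))
```

Refusing to resolve, rather than silently skipping catalog-a, is what prevents a dependency from being satisfied unexpectedly out of the lower-priority catalog-b.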

As a cluster administrator, if you observe an unhealthy catalog and want to consider the catalog as invalid and resume Operator installations, see the "Removing custom catalogs" or "Disabling the default software catalog sources" sections for information about removing the unhealthy catalog.

2.4.1.2.3. Subscription

A subscription, defined by a Subscription object, represents an intention to install an Operator. It is the custom resource that relates an Operator to a catalog source.

Subscriptions describe which channel of an Operator package to subscribe to, and whether to perform updates automatically or manually. If set to automatic, the subscription directs Operator Lifecycle Manager (OLM) to manage and upgrade the Operator so that the latest version is always running in the cluster.

Example Subscription object

apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: example-operator
  namespace: example-namespace
spec:
  channel: stable
  name: example-operator
  source: example-catalog
  sourceNamespace: openshift-marketplace

This Subscription object defines the name and namespace of the Operator, as well as the catalog in which the Operator data can be found. The channel, such as alpha, beta, or stable, helps determine which Operator stream should be installed from the catalog source.

The names of channels in a subscription can differ between Operators, but the naming scheme should follow a common convention within a given Operator. For example, channel names might follow a minor release update stream for the application provided by the Operator (1.2, 1.3) or a release frequency (stable, fast).

In addition to being easily visible from the OpenShift Dedicated web console, it is possible to identify when there is a newer version of an Operator available by inspecting the status of the related subscription. The value associated with the currentCSV field is the newest version that is known to OLM, and installedCSV is the version that is installed on the cluster.
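The comparison described above is small enough to sketch directly. The following Python snippet assumes the Subscription object has already been loaded into a dictionary (for example, from its YAML or JSON representation); the function name and the CSV names are hypothetical.

```python
def update_available(subscription):
    """Return True when OLM knows of a CSV newer than the installed one.

    Compares status.currentCSV (newest version known to OLM) with
    status.installedCSV (version installed on the cluster).
    """
    status = subscription.get("status", {})
    current = status.get("currentCSV")
    installed = status.get("installedCSV")
    return current is not None and current != installed


sub = {
    "status": {
        "currentCSV": "example-operator.v1.3.0",    # hypothetical CSV names
        "installedCSV": "example-operator.v1.2.0",
    }
}
print(update_available(sub))
```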

2.4.1.2.4. Install plan

An install plan, defined by an InstallPlan object, describes a set of resources that Operator Lifecycle Manager (OLM) creates to install or upgrade to a specific version of an Operator. The version is defined by a cluster service version (CSV).

To install an Operator, a cluster administrator, or a user who has been granted Operator installation permissions, must first create a Subscription object. A subscription represents the intent to subscribe to a stream of available versions of an Operator from a catalog source. The subscription then creates an InstallPlan object to facilitate the installation of the resources for the Operator.

The install plan must then be approved according to one of the following approval strategies:

- If the subscription’s spec.installPlanApproval field is set to Automatic, the install plan is approved automatically.

- If the subscription’s spec.installPlanApproval field is set to Manual, the install plan must be manually approved by a cluster administrator or user with proper permissions.

After the install plan is approved, OLM creates the specified resources and installs the Operator in the namespace that is specified by the subscription.

Example 2.10. Example InstallPlan object

apiVersion: operators.coreos.com/v1alpha1
kind: InstallPlan
metadata:
  name: install-abcde
  namespace: operators
spec:
  approval: Automatic
  approved: true
  clusterServiceVersionNames:
    - my-operator.v1.0.1
  generation: 1
status:
  ...
  catalogSources: []
  conditions:
    - lastTransitionTime: '2021-01-01T20:17:27Z'
      lastUpdateTime: '2021-01-01T20:17:27Z'
      status: 'True'
      type: Installed
  phase: Complete
  plan:
    - resolving: my-operator.v1.0.1
      resource:
        group: operators.coreos.com
        kind: ClusterServiceVersion
        manifest: >-
          ...
        name: my-operator.v1.0.1
        sourceName: redhat-operators
        sourceNamespace: openshift-marketplace
        version: v1alpha1
      status: Created
    - resolving: my-operator.v1.0.1
      resource:
        group: apiextensions.k8s.io
        kind: CustomResourceDefinition
        manifest: >-
          ...
        name: webservers.web.servers.org
        sourceName: redhat-operators
        sourceNamespace: openshift-marketplace
        version: v1beta1
      status: Created
    - resolving: my-operator.v1.0.1
      resource:
        group: ''
        kind: ServiceAccount
        manifest: >-
          ...
        name: my-operator
        sourceName: redhat-operators
        sourceNamespace: openshift-marketplace
        version: v1
      status: Created
    - resolving: my-operator.v1.0.1
      resource:
        group: rbac.authorization.k8s.io
        kind: Role
        manifest: >-
          ...
        name: my-operator.v1.0.1-my-operator-6d7cbc6f57
        sourceName: redhat-operators
        sourceNamespace: openshift-marketplace
        version: v1
      status: Created
    - resolving: my-operator.v1.0.1
      resource:
        group: rbac.authorization.k8s.io
        kind: RoleBinding
        manifest: >-
          ...
        name: my-operator.v1.0.1-my-operator-6d7cbc6f57
        sourceName: redhat-operators
        sourceNamespace: openshift-marketplace
        version: v1
      status: Created
    ...

2.4.1.2.5. Operator groups

An Operator group, defined by the OperatorGroup resource, provides multitenant configuration to OLM-installed Operators. An Operator group selects target namespaces in which to generate required RBAC access for its member Operators.

The set of target namespaces is provided by a comma-delimited string stored in the olm.targetNamespaces annotation of a cluster service version (CSV). This annotation is applied to the CSV instances of member Operators and is projected into their deployments.
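For illustration, the comma-delimited annotation can be read back into a list with a few lines of Python. This sketch assumes the CSV has been loaded into a dictionary; the function name and the namespace names are hypothetical, and the sketch does not interpret what an empty annotation means to OLM.

```python
def target_namespaces(csv):
    """Split the olm.targetNamespaces annotation of a CSV into a list."""
    annotations = csv.get("metadata", {}).get("annotations") or {}
    raw = annotations.get("olm.targetNamespaces", "")
    # Drop empty entries so that a trailing comma does not yield "".
    return [ns.strip() for ns in raw.split(",") if ns.strip()]


csv = {"metadata": {"annotations": {"olm.targetNamespaces": "team-a,team-b"}}}
print(target_namespaces(csv))
```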


2.4.1.2.6. Operator conditions

As part of its role in managing the lifecycle of an Operator, Operator Lifecycle Manager (OLM) infers the state of an Operator from the state of Kubernetes resources that define the Operator. While this approach provides some level of assurance that an Operator is in a given state, there are many instances where an Operator might need to communicate information to OLM that could not be inferred otherwise. This information can then be used by OLM to better manage the lifecycle of the Operator.

OLM provides a custom resource definition (CRD) called OperatorCondition that allows Operators to communicate conditions to OLM. There is a set of supported conditions that influence management of the Operator by OLM when present in the Spec.Conditions array of an OperatorCondition resource.

By default, the Spec.Conditions array is not present in an OperatorCondition object until it is added, either by a user or by custom Operator logic.

2.4.2. Operator Lifecycle Manager architecture

This guide outlines the component architecture of Operator Lifecycle Manager (OLM) in OpenShift Dedicated.

2.4.2.1. Component responsibilities

Operator Lifecycle Manager (OLM) is composed of two Operators: the OLM Operator and the Catalog Operator.

The OLM and Catalog Operators are responsible for managing the custom resource definitions (CRDs) that are the basis for the OLM framework:

| Resource | Short name | Owner | Description |
|---|---|---|---|
| ClusterServiceVersion (CSV) | csv | OLM | Application metadata: name, version, icon, required resources, installation, and so on. |
| InstallPlan | ip | Catalog | Calculated list of resources to be created to automatically install or upgrade a CSV. |
| CatalogSource | catsrc | Catalog | A repository of CSVs, CRDs, and packages that define an application. |
| Subscription | sub | Catalog | Used to keep CSVs up to date by tracking a channel in a package. |
| OperatorGroup | og | OLM | Configures all Operators deployed in the same namespace as the OperatorGroup object to watch for their custom resource (CR) in a list of namespaces or cluster-wide. |

Each of these Operators is also responsible for creating the following resources:

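| Resource | Owner |
|---|---|
| Deployments | OLM |
| ServiceAccounts | OLM |
| (Cluster)Roles | OLM |
| (Cluster)RoleBindings | OLM |
| CustomResourceDefinitions (CRDs) | Catalog |
| ClusterServiceVersions | Catalog |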

2.4.2.2. OLM Operator

The OLM Operator is responsible for deploying applications defined by CSV resources after the required resources specified in the CSV are present in the cluster.

The OLM Operator is not concerned with the creation of the required resources; you can choose to manually create these resources using the CLI or using the Catalog Operator. This separation of concerns allows users incremental buy-in in terms of how much of the OLM framework they choose to leverage for their application.

The OLM Operator uses the following workflow:

- Watch for cluster service versions (CSVs) in a namespace and check that requirements are met.

- If requirements are met, run the install strategy for the CSV.

Note: A CSV must be an active member of an Operator group for the install strategy to run.

2.4.2.3. Catalog Operator

The Catalog Operator is responsible for resolving and installing cluster service versions (CSVs) and the required resources they specify. It is also responsible for watching catalog sources for updates to packages in channels and upgrading them, automatically if desired, to the latest available versions.

To track a package in a channel, you can create a Subscription object configuring the desired package, channel, and the CatalogSource object you want to use for pulling updates. When updates are found, an appropriate InstallPlan object is written into the namespace on behalf of the user.

The Catalog Operator uses the following workflow:

- Connect to each catalog source in the cluster.

- Watch for unresolved install plans created by a user, and if found:

  - Find the CSV matching the name requested and add the CSV as a resolved resource.

  - For each managed or required CRD, add the CRD as a resolved resource.

  - For each required CRD, find the CSV that manages it.

- Watch for resolved install plans and create all of the discovered resources for it, if approved by a user or automatically.

- Watch for catalog sources and subscriptions and create install plans based on them.
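The install plan resolution step in the workflow above can be sketched as a small Python function. This is a simplified model, not the Catalog Operator's code: the catalog is a hypothetical dictionary mapping each CSV name to the CRDs it owns and requires, the CSV and CRD names are invented, and the sketch assumes that the CSV found for a required CRD is itself added as a resolved resource.

```python
def resolve_install_plan(csv_name, catalog):
    """Return (resolved_csvs, resolved_crds) for the requested CSV.

    catalog maps a CSV name to {"owned": [...], "required": [...]},
    where the lists hold CRD names (hypothetical data model).
    """
    resolved_csvs, resolved_crds = [], []
    pending = [csv_name]
    while pending:
        name = pending.pop(0)
        if name in resolved_csvs:
            continue
        if name not in catalog:
            raise LookupError(f"no CSV named {name!r} in the catalog")
        csv = catalog[name]
        resolved_csvs.append(name)               # add the CSV as a resolved resource
        for crd in csv["owned"] + csv["required"]:
            if crd not in resolved_crds:         # add each managed or required CRD
                resolved_crds.append(crd)
        for crd in csv["required"]:              # find the CSV that manages each required CRD
            owners = [n for n, c in catalog.items() if crd in c["owned"]]
            if not owners:
                raise LookupError(f"no CSV manages required CRD {crd!r}")
            # Assumption: take the first owner; real resolution also weighs catalog priority.
            if owners[0] not in resolved_csvs and owners[0] not in pending:
                pending.append(owners[0])
    return resolved_csvs, resolved_crds


catalog = {
    "vault.v1": {"owned": ["vaults.example.com"], "required": ["etcdclusters.example.com"]},
    "etcd.v1": {"owned": ["etcdclusters.example.com"], "required": []},
}
print(resolve_install_plan("vault.v1", catalog))
```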

2.4.2.4. Catalog Registry

The Catalog Registry stores CSVs and CRDs for creation in a cluster and stores metadata about packages and channels.

A package manifest is an entry in the Catalog Registry that associates a package identity with sets of CSVs. Within a package, channels point to a particular CSV. Because CSVs explicitly reference the CSV that they replace, a package manifest provides the Catalog Operator with all of the information that is required to update a CSV to the latest version in a channel, stepping through each intermediate version.

2.4.3. Operator Lifecycle Manager workflow

This guide outlines the workflow of Operator Lifecycle Manager (OLM) in OpenShift Dedicated.

2.4.3.1. Operator installation and upgrade workflow in OLM

In the Operator Lifecycle Manager (OLM) ecosystem, the following resources are used to resolve Operator installations and upgrades:

- ClusterServiceVersion (CSV)

- CatalogSource

- Subscription

Operator metadata, defined in CSVs, can be stored in a collection called a catalog source. OLM uses catalog sources, which use the Operator Registry API, to query for available Operators as well as upgrades for installed Operators.

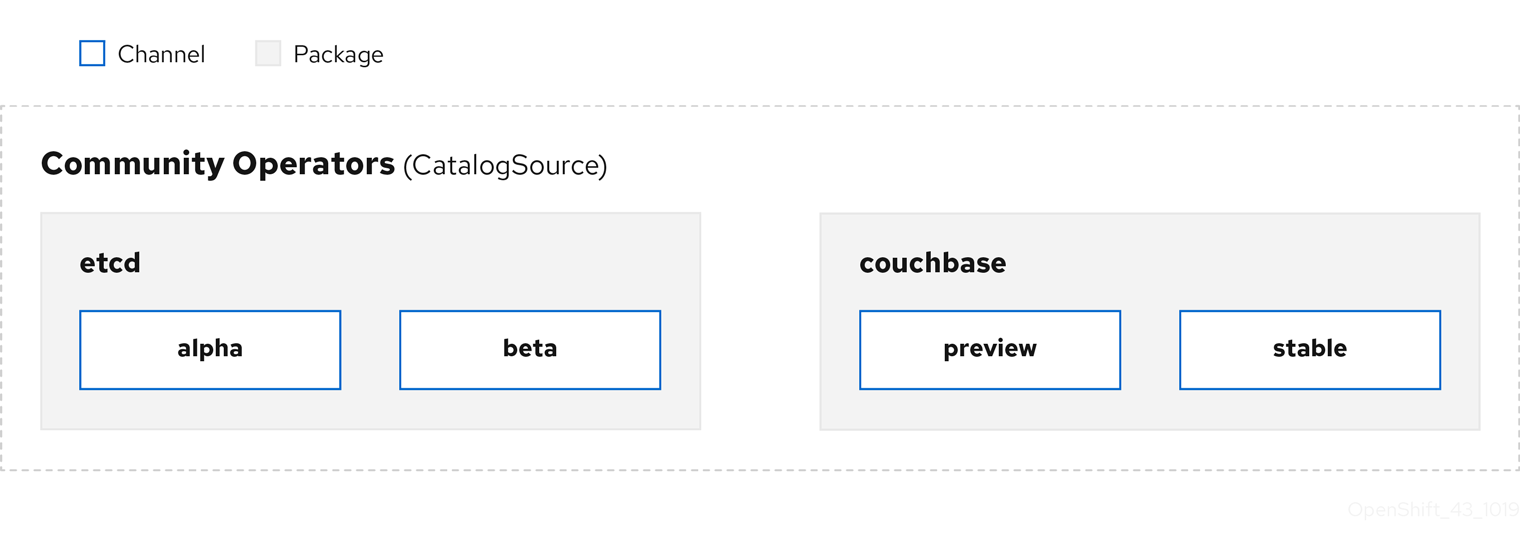

Figure 2.3. Catalog source overview

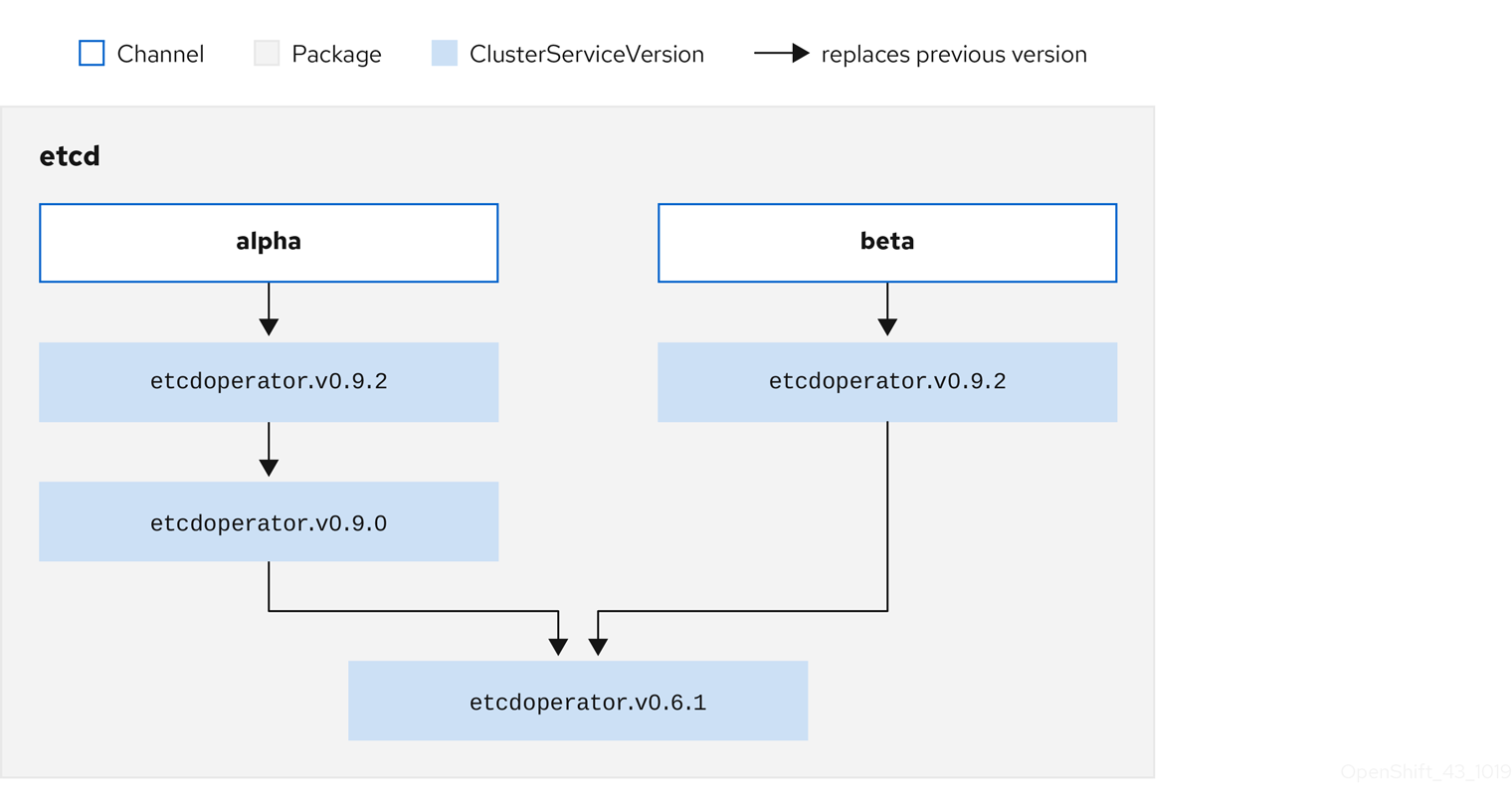

Within a catalog source, Operators are organized into packages and streams of updates called channels, which should be a familiar update pattern from OpenShift Dedicated or other software on a continuous release cycle like web browsers.

Figure 2.4. Packages and channels in a Catalog source

A user indicates a particular package and channel in a particular catalog source in a subscription, for example an etcd package and its alpha channel. If a subscription is made to a package that has not yet been installed in the namespace, the latest Operator for that package is installed.

OLM deliberately avoids version comparisons, so the "latest" or "newest" Operator available from a given catalog → channel → package path does not necessarily need to be the highest version number. It should be thought of more as the head reference of a channel, similar to a Git repository.

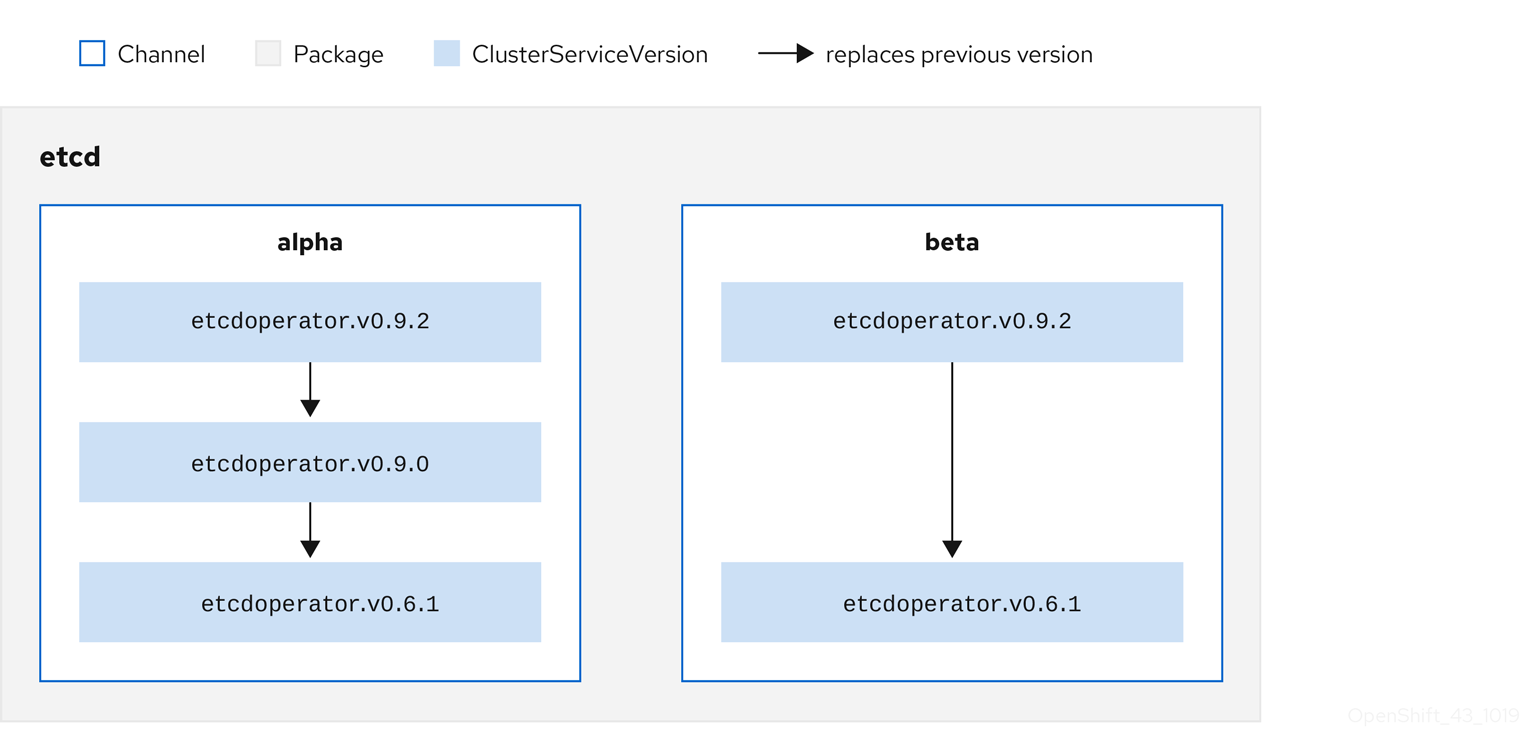

Each CSV has a replaces parameter that indicates which Operator it replaces. This builds a graph of CSVs that can be queried by OLM, and updates can be shared between channels. Channels can be thought of as entry points into the graph of updates:

Figure 2.5. OLM graph of available channel updates

Example channels in a package

packageName: example
channels:
  - name: alpha
    currentCSV: example.v0.1.2
  - name: beta
    currentCSV: example.v0.1.3
defaultChannel: alpha

For OLM to successfully query for updates, given a catalog source, package, channel, and CSV, a catalog must be able to return, unambiguously and deterministically, a single CSV that replaces the input CSV.

2.4.3.1.1. Example upgrade path

For an example upgrade scenario, consider an installed Operator corresponding to CSV version 0.1.1. OLM queries the catalog source and detects an upgrade in the subscribed channel with new CSV version 0.1.3 that replaces an older but not-installed CSV version 0.1.2, which in turn replaces the older and installed CSV version 0.1.1.

OLM walks back from the channel head to previous versions via the replaces field specified in the CSVs to determine the upgrade path 0.1.3 → 0.1.2 → 0.1.1; the direction of the arrow indicates that the former replaces the latter. OLM upgrades the Operator one version at a time until it reaches the channel head.

For this given scenario, OLM installs Operator version 0.1.2 to replace the existing Operator version 0.1.1. Then, it installs Operator version 0.1.3 to replace the previously installed Operator version 0.1.2. At this point, the installed Operator version 0.1.3 matches the channel head and the upgrade is completed.
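The walk-back described above can be sketched in Python. The sketch assumes the replaces relationships have been collected into a plain dictionary; the function name and the CSV names are hypothetical, and the code illustrates the traversal only, not OLM's resolver.

```python
def upgrade_path(channel_head, installed, replaces):
    """Return the CSVs to install, in order, to move from installed to the head.

    replaces maps a CSV name to the name of the CSV it replaces (or None).
    """
    path = []
    seen = set()
    current = channel_head
    # Walk back from the channel head until the installed CSV is reached.
    while current is not None and current != installed:
        if current in seen:
            raise ValueError("cycle detected in the replaces chain")
        seen.add(current)
        path.append(current)
        current = replaces.get(current)
    if current != installed:
        raise LookupError("installed CSV is not reachable from the channel head")
    path.reverse()  # install the oldest pending version first
    return path


replaces = {
    "example.v0.1.3": "example.v0.1.2",
    "example.v0.1.2": "example.v0.1.1",
    "example.v0.1.1": None,
}
print(upgrade_path("example.v0.1.3", "example.v0.1.1", replaces))
```

For the scenario above, the sketch returns example.v0.1.2 followed by example.v0.1.3, matching the one-version-at-a-time upgrade order.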

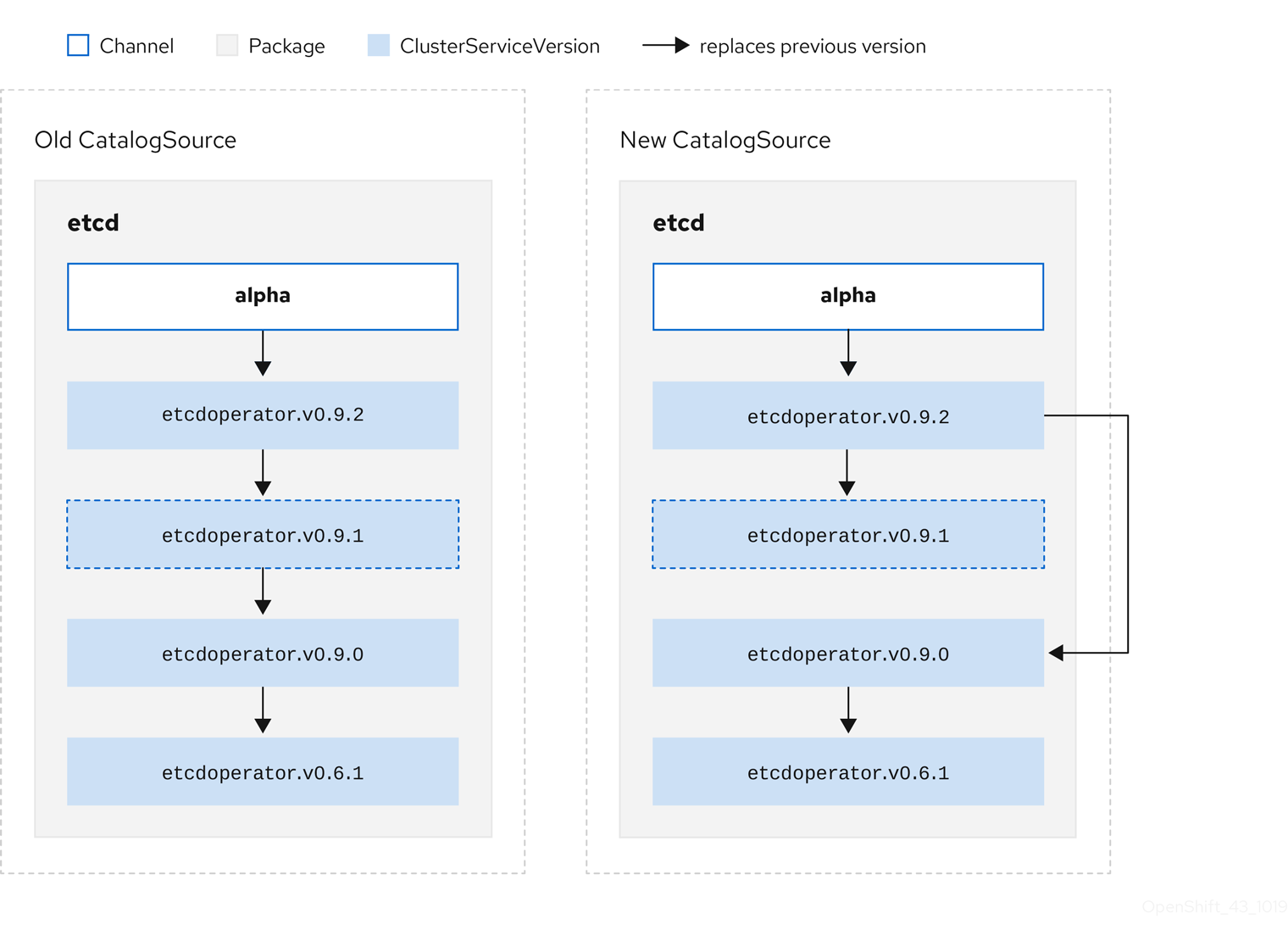

2.4.3.1.2. Skipping upgrades

The basic path for upgrades in OLM is:

- A catalog source is updated with one or more updates to an Operator.

- OLM traverses every version of the Operator until reaching the latest version the catalog source contains.

However, sometimes this is not a safe operation to perform. In some cases, a published version of an Operator should never be installed on a cluster that has not already installed it, for example because the version introduces a serious vulnerability.

In those cases, OLM must consider two cluster states and provide an update graph that supports both:

- The "bad" intermediate Operator has been seen by the cluster and installed.

- The "bad" intermediate Operator has not yet been installed onto the cluster.

By shipping a new catalog that adds a skipped release, you ensure that OLM can always get a single unique update, regardless of the cluster state and whether the cluster has seen the bad update yet.

Example CSV with skipped release

    apiVersion: operators.coreos.com/v1alpha1
    kind: ClusterServiceVersion
    metadata:
      name: etcdoperator.v0.9.2
      namespace: placeholder
      annotations:
    spec:
      displayName: etcd
      description: Etcd Operator
      replaces: etcdoperator.v0.9.0
      skips:
      - etcdoperator.v0.9.1

Consider the following example of Old CatalogSource and New CatalogSource.

Figure 2.6. Skipping updates

This graph maintains that:

- Any Operator found in Old CatalogSource has a single replacement in New CatalogSource.

- Any Operator found in New CatalogSource has a single replacement in New CatalogSource.

- If the bad update has not yet been installed, it will never be.
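
The properties listed above can be illustrated with a small Python sketch. It is a simplified model under stated assumptions: `ENTRIES` and `next_update` are hypothetical names, and the new catalog is reduced to a list of entries with `replaces` and `skips` fields mirroring the CSV example.

```python
from typing import Dict, List, Optional

# Hypothetical, simplified view of New CatalogSource for one channel.
# The bad release v0.9.1 is no longer published; v0.9.2 skips it.
ENTRIES: List[Dict] = [
    {"name": "etcdoperator.v0.9.0", "replaces": None, "skips": []},
    {"name": "etcdoperator.v0.9.2",
     "replaces": "etcdoperator.v0.9.0",
     "skips": ["etcdoperator.v0.9.1"]},
]


def next_update(installed: str, entries: List[Dict]) -> Optional[str]:
    """Return the single entry that updates `installed`, or None."""
    candidates = [
        entry["name"]
        for entry in entries
        if entry["replaces"] == installed or installed in entry["skips"]
    ]
    if len(candidates) > 1:
        # The catalog must answer unambiguously; more than one is an error.
        raise ValueError(f"ambiguous update for {installed}: {candidates}")
    return candidates[0] if candidates else None


# A cluster that never saw v0.9.1 goes straight to v0.9.2.
print(next_update("etcdoperator.v0.9.0", ENTRIES))
# A cluster that already installed v0.9.1 also moves to v0.9.2.
print(next_update("etcdoperator.v0.9.1", ENTRIES))
```

In both cluster states the lookup yields the same single replacement, and v0.9.1 is never offered as an update target.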

2.4.3.1.3. Replacing multiple Operators

Creating New CatalogSource as described requires publishing CSVs that replace one Operator, but can skip several. This can be accomplished using the skipRange annotation:

olm.skipRange: <semver_range>

where <semver_range> has the version range format supported by the semver library.

When searching catalogs for updates, if the head of a channel has a skipRange annotation and the currently installed Operator has a version field that falls in the range, OLM updates to the latest entry in the channel.
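
As a rough illustration of the range check, the following Python sketch tests whether an installed version falls inside a skipRange value. It is deliberately narrow: it assumes plain `major.minor.patch` versions and a range made only of space-separated comparators that must all hold. The range syntax accepted by the actual semver library is richer, and `in_range` is a hypothetical helper.

```python
import re
from typing import Tuple

Version = Tuple[int, int, int]

# One comparator such as ">=4.1.0"; a missing operator means equality.
_COMPARATOR = re.compile(r"^(>=|<=|>|<|=)?(\d+\.\d+\.\d+)$")


def parse_version(text: str) -> Version:
    """Parse 'major.minor.patch' into a tuple that compares numerically."""
    major, minor, patch = (int(part) for part in text.split("."))
    return (major, minor, patch)


def in_range(version: str, semver_range: str) -> bool:
    """True if `version` satisfies every comparator in `semver_range`."""
    v = parse_version(version)
    for token in semver_range.split():
        match = _COMPARATOR.match(token)
        if match is None:
            raise ValueError(f"unsupported comparator: {token!r}")
        op = match.group(1) or "="
        bound = parse_version(match.group(2))
        checks = {
            ">=": v >= bound,
            "<=": v <= bound,
            ">": v > bound,
            "<": v < bound,
            "=": v == bound,
        }
        if not checks[op]:
            return False
    return True


print(in_range("4.1.1", ">=4.1.0 <4.1.2"))
print(in_range("4.1.2", ">=4.1.0 <4.1.2"))
```

With the range `>=4.1.0 <4.1.2`, installed versions 4.1.0 and 4.1.1 fall inside the range, while 4.1.2 and anything older than 4.1.0 do not.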

The order of precedence is:

- Channel head in the source specified by sourceName on the subscription, if the other criteria for skipping are met.

- The next Operator that replaces the current one, in the source specified by sourceName.

- Channel head in another source that is visible to the subscription, if the other criteria for skipping are met.

- The next Operator that replaces the current one in any source visible to the subscription.

Example CSV with skipRange

apiVersion: operators.coreos.com/v1alpha1

kind: ClusterServiceVersion

metadata:

name: elasticsearch-operator.v4.1.2

namespace: <namespace>

annotations:

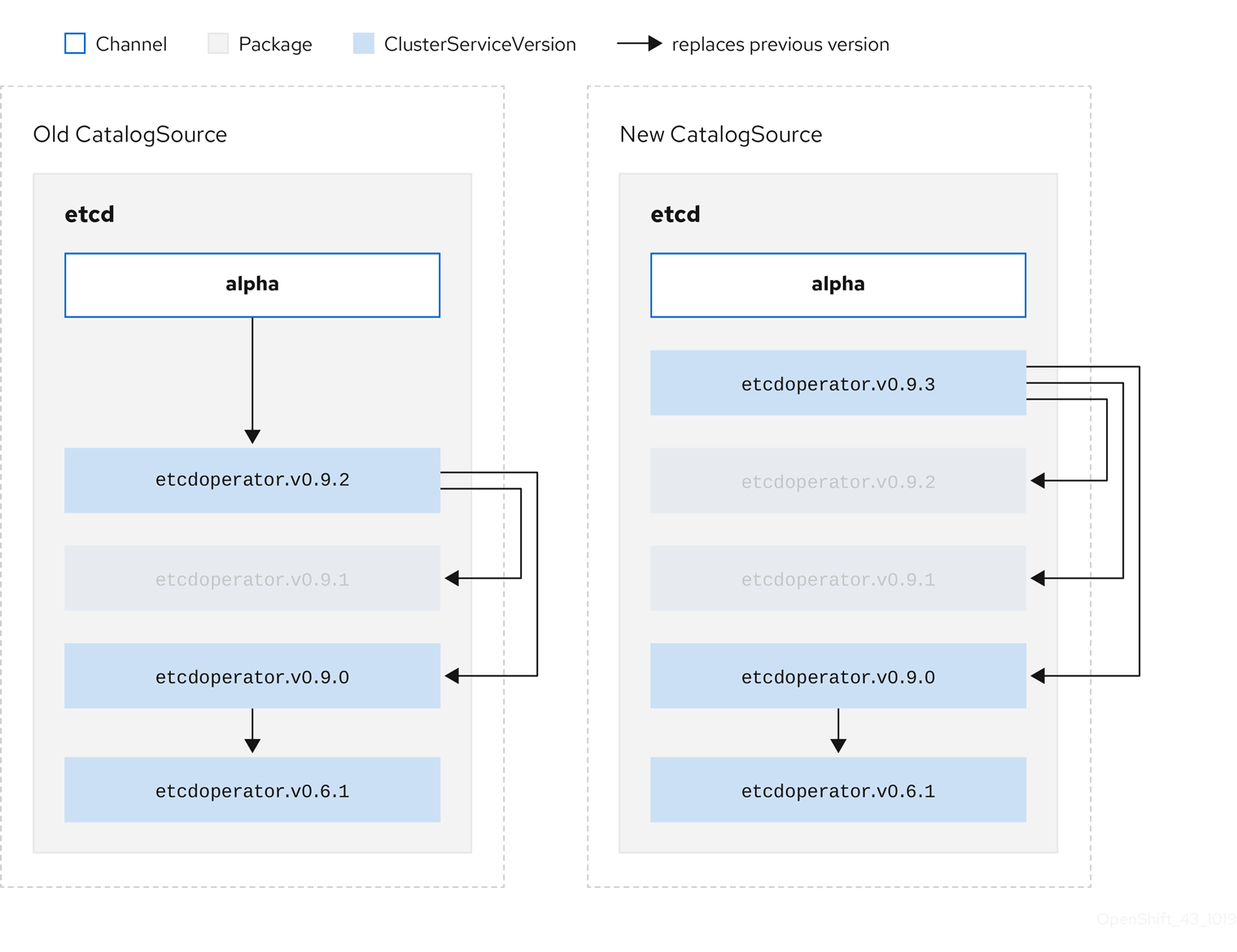

olm.skipRange: '>=4.1.0 <4.1.2'2.4.3.1.4. Z-stream support

A z-stream, or patch release, must replace all previous z-stream releases for the same minor version. OLM does not consider major, minor, or patch versions; it just needs to build the correct graph in a catalog.

In other words, OLM must be able to take a graph as in Old CatalogSource and, similar to before, generate a graph as in New CatalogSource:

Figure 2.7. Replacing several Operators

This graph maintains that:

- Any Operator found in Old CatalogSource has a single replacement in New CatalogSource.

- Any Operator found in New CatalogSource has a single replacement in New CatalogSource.

- Any z-stream release in Old CatalogSource will update to the latest z-stream release in New CatalogSource.

- Unavailable releases can be considered "virtual" graph nodes; their content does not need to exist, the registry just needs to respond as if the graph looks like this.

2.4.4. Operator Lifecycle Manager dependency resolution

This guide outlines dependency resolution and custom resource definition (CRD) upgrade lifecycles with Operator Lifecycle Manager (OLM) in OpenShift Dedicated.

2.4.4.1. About dependency resolution

Operator Lifecycle Manager (OLM) manages the dependency resolution and upgrade lifecycle of running Operators. In many ways, the problems OLM faces are similar to other system or language package managers, such as yum and rpm.

However, there is one constraint that similar systems do not generally have that OLM does: because Operators are always running, OLM attempts to ensure that you are never left with a set of Operators that do not work with each other.

As a result, OLM must never create the following scenarios:

- Install a set of Operators that require APIs that cannot be provided

- Update an Operator in a way that breaks another that depends upon it

This is made possible with two types of data:

| Type | Description |
| --- | --- |
| Properties | Typed metadata about the Operator that constitutes the public interface for it in the dependency resolver. Examples include the group/version/kind (GVK) of the APIs provided by the Operator and the semantic version (semver) of the Operator. |
| Constraints or dependencies | An Operator’s requirements that should be satisfied by other Operators that might or might not have already been installed on the target cluster. These act as queries or filters over all available Operators and constrain the selection during dependency resolution and installation. Examples include requiring a specific API to be available on the cluster or expecting a particular Operator with a particular version to be installed. |

OLM converts these properties and constraints into a system of Boolean formulas and passes them to a SAT solver, a program that establishes Boolean satisfiability, which does the work of determining what Operators should be installed.
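
To make the idea of Boolean satisfiability concrete, the following Python sketch encodes a tiny resolution problem and solves it by brute force. It is only an illustration: the bundle names are hypothetical, the constraints are written by hand rather than derived from properties, and a real SAT solver does not enumerate every assignment as this code does.

```python
from itertools import product
from typing import Callable, Dict, List, Optional

Assignment = Dict[str, bool]

# Hypothetical bundles; True means "install this bundle".
VARIABLES = ["app.v1", "db.v1", "db.v2"]

CONSTRAINTS: List[Callable[[Assignment], bool]] = [
    # The user asked for the app Operator.
    lambda s: s["app.v1"],
    # The app requires an API that either db version provides.
    lambda s: (not s["app.v1"]) or s["db.v1"] or s["db.v2"],
    # At most one version of the db package can be installed.
    lambda s: not (s["db.v1"] and s["db.v2"]),
]


def solve(variables: List[str],
          constraints: List[Callable[[Assignment], bool]]) -> Optional[Assignment]:
    """Return a satisfying assignment with the fewest installs, or None."""
    best: Optional[Assignment] = None
    for values in product([False, True], repeat=len(variables)):
        candidate = dict(zip(variables, values))
        if all(check(candidate) for check in constraints):
            if best is None or sum(candidate.values()) < sum(best.values()):
                best = candidate
    return best


solution = solve(VARIABLES, CONSTRAINTS)
print(solution)
```

Any satisfying assignment installs the app together with exactly one db version. If the constraints contradict each other, `solve` returns `None`, which corresponds to a resolution failure.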

2.4.4.2. Operator properties

All Operators in a catalog have the following properties:

- olm.package: Includes the name of the package and the version of the Operator

- olm.gvk: A single property for each provided API from the cluster service version (CSV)

Additional properties can also be directly declared by an Operator author by including a properties.yaml file in the metadata/ directory of the Operator bundle.

Example arbitrary property

    properties:
    - type: olm.kubeversion
      value:
        version: "1.16.0"

2.4.4.2.1. Arbitrary properties

Operator authors can declare arbitrary properties in a properties.yaml file in the metadata/ directory of the Operator bundle. These properties are translated into a map data structure that is used as an input to the Operator Lifecycle Manager (OLM) resolver at runtime.

These properties are opaque to the resolver: it does not understand their meaning, but it can evaluate generic constraints against them to determine whether the constraints can be satisfied given the properties list.

Example arbitrary properties

    properties:
    - property:
        type: color
        value: red
    - property:
        type: shape
        value: square
    - property:
        type: olm.gvk
        value:
          group: olm.coreos.io
          version: v1alpha1
          kind: myresource

This structure can be used to construct a Common Expression Language (CEL) expression for generic constraints.


2.4.4.3. Operator dependencies

The dependencies of an Operator are listed in a dependencies.yaml file in the metadata/ folder of a bundle. This file is optional and currently only used to specify explicit Operator-version dependencies.

The dependency list contains a type field for each item to specify what kind of dependency this is. The following types of Operator dependencies are supported:

- olm.package: This type indicates a dependency for a specific Operator version. The dependency information must include the package name and the version of the package in semver format. For example, you can specify an exact version such as 0.5.2 or a range of versions such as >0.5.1.

- olm.gvk: With this type, the author can specify a dependency with group/version/kind (GVK) information, similar to existing CRD and API-based usage in a CSV. This is a path to enable Operator authors to consolidate all dependencies, API or explicit versions, to be in the same place.

- olm.constraint: This type declares generic constraints on arbitrary Operator properties.

In the following example, dependencies are specified for a Prometheus Operator and etcd CRDs:

Example dependencies.yaml file

    dependencies:
    - type: olm.package
      value:
        packageName: prometheus
        version: ">0.27.0"
    - type: olm.gvk
      value:
        group: etcd.database.coreos.com
        kind: EtcdCluster
        version: v1beta2

2.4.4.4. Generic constraints

An olm.constraint property declares a dependency constraint of a particular type, differentiating non-constraint and constraint properties. Its value field is an object containing a failureMessage field holding a string-representation of the constraint message. This message is surfaced as an informative comment to users if the constraint is not satisfiable at runtime.

The following keys denote the available constraint types:

- gvk: Type whose value and interpretation is identical to the olm.gvk type

- package: Type whose value and interpretation is identical to the olm.package type

- cel: A Common Expression Language (CEL) expression evaluated at runtime by the Operator Lifecycle Manager (OLM) resolver over arbitrary bundle properties and cluster information

- all, any, not: Conjunction, disjunction, and negation constraints, respectively, containing one or more concrete constraints, such as gvk or a nested compound constraint

2.4.4.4.1. Common Expression Language (CEL) constraints

The cel constraint type supports Common Expression Language (CEL) as the expression language. The cel struct has a rule field which contains the CEL expression string that is evaluated against Operator properties at runtime to determine if the Operator satisfies the constraint.

Example cel constraint

    type: olm.constraint
    value:
      failureMessage: 'require to have "certified"'
      cel:
        rule: 'properties.exists(p, p.type == "certified")'

The CEL syntax supports a wide range of logical operators, such as AND and OR. As a result, a single CEL expression can have multiple rules for multiple conditions that are linked together by these logical operators. These rules are evaluated against a dataset of multiple different properties from a bundle or any given source, and the output is solved into a single bundle or Operator that satisfies all of those rules within a single constraint.
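
For readers unfamiliar with CEL, the following Python sketch mirrors what such a rule checks. It is an analogy only: OLM evaluates the CEL expression itself, and the `has_type` and `certified_and_stable` helpers, as well as the sample property lists, are hypothetical. The CEL `exists` macro is true when at least one list element satisfies the predicate, which corresponds to Python's `any`.

```python
from typing import Any, Dict, List

Property = Dict[str, Any]


def has_type(properties: List[Property], wanted: str) -> bool:
    # Rough analogue of: properties.exists(p, p.type == wanted)
    return any(p.get("type") == wanted for p in properties)


def certified_and_stable(properties: List[Property]) -> bool:
    # Rough analogue of two exists() rules joined by the && operator.
    return has_type(properties, "certified") and has_type(properties, "stable")


# Hypothetical property lists for two bundles.
bundle_a = [{"type": "certified", "value": True},
            {"type": "stable", "value": True}]
bundle_b = [{"type": "certified", "value": True}]

print(certified_and_stable(bundle_a))
print(certified_and_stable(bundle_b))
```

Only the first bundle carries both property types, so only it satisfies a constraint that requires both.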

Example cel constraint with multiple rules

    type: olm.constraint
    value:
      failureMessage: 'require to have "certified" and "stable" properties'
      cel:
        rule: 'properties.exists(p, p.type == "certified") && properties.exists(p, p.type == "stable")'

2.4.4.4.2. Compound constraints (all, any, not)

Compound constraint types are evaluated following their logical definitions.

The following is an example of a conjunctive constraint (all) of one package and one GVK. That is, both must be satisfied by installed bundles:

Example all constraint

    schema: olm.bundle
    name: red.v1.0.0
    properties:
    - type: olm.constraint
      value:
        failureMessage: All are required for Red because...
        all:
          constraints:
          - failureMessage: Package blue is needed for...
            package:
              name: blue
              versionRange: '>=1.0.0'
          - failureMessage: GVK Green/v1 is needed for...
            gvk:
              group: greens.example.com
              version: v1
              kind: Green
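
The logical evaluation of compound constraints can be sketched as a small recursive function in Python. This is a simplified model, not the OLM resolver: `satisfied` and `INSTALLED` are hypothetical names, the package check assumes a `versionRange` of the form `>=x.y.z` only, and treating `not` as "none of the listed constraints is satisfied" is an assumption made for this sketch.

```python
from typing import Any, Dict, Tuple

Constraint = Dict[str, Any]


def parse_version(text: str) -> Tuple[int, ...]:
    return tuple(int(part) for part in text.split("."))


def satisfied(constraint: Constraint, installed: Dict[str, Any]) -> bool:
    """Evaluate a constraint against a hypothetical set of installed bundles."""
    if "all" in constraint:
        return all(satisfied(c, installed) for c in constraint["all"]["constraints"])
    if "any" in constraint:
        return any(satisfied(c, installed) for c in constraint["any"]["constraints"])
    if "not" in constraint:
        # Assumption: none of the listed constraints may be satisfied.
        return not any(satisfied(c, installed) for c in constraint["not"]["constraints"])
    if "gvk" in constraint:
        gvk = constraint["gvk"]
        return (gvk["group"], gvk["version"], gvk["kind"]) in installed["gvks"]
    if "package" in constraint:
        pkg = constraint["package"]
        version_range = pkg["versionRange"]
        if not version_range.startswith(">="):
            raise ValueError("this sketch only supports '>=x.y.z' ranges")
        have = installed["packages"].get(pkg["name"])
        return have is not None and have >= parse_version(version_range[2:])
    raise ValueError(f"unknown constraint: {constraint}")


# Hypothetical cluster state: package blue 1.2.0 and the Green/v1 API.
INSTALLED = {
    "packages": {"blue": (1, 2, 0)},
    "gvks": {("greens.example.com", "v1", "Green")},
}

RED_CONSTRAINT = {
    "all": {"constraints": [
        {"package": {"name": "blue", "versionRange": ">=1.0.0"}},
        {"gvk": {"group": "greens.example.com", "version": "v1", "kind": "Green"}},
    ]},
}

print(satisfied(RED_CONSTRAINT, INSTALLED))
```

With the cluster state shown, both nested constraints hold, so the all constraint is satisfied. Removing the Green API from `INSTALLED` would make it fail.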

The following is an example of a disjunctive constraint (any) of three versions of the same GVK. That is, at least one must be satisfied by installed bundles: