Chapter 5. Using Container Storage Interface (CSI)

5.1. Configuring CSI volumes

The Container Storage Interface (CSI) allows Red Hat OpenShift Service on AWS to consume storage from storage back ends that implement the CSI interface as persistent storage.

Red Hat OpenShift Service on AWS 4 supports version 1.6.0 of the CSI specification.

5.1.1. CSI architecture

CSI drivers are typically shipped as container images. These containers are not aware of the Red Hat OpenShift Service on AWS cluster where they run. To use a CSI-compatible storage back end in Red Hat OpenShift Service on AWS, the cluster administrator must deploy several components that serve as a bridge between Red Hat OpenShift Service on AWS and the storage driver.

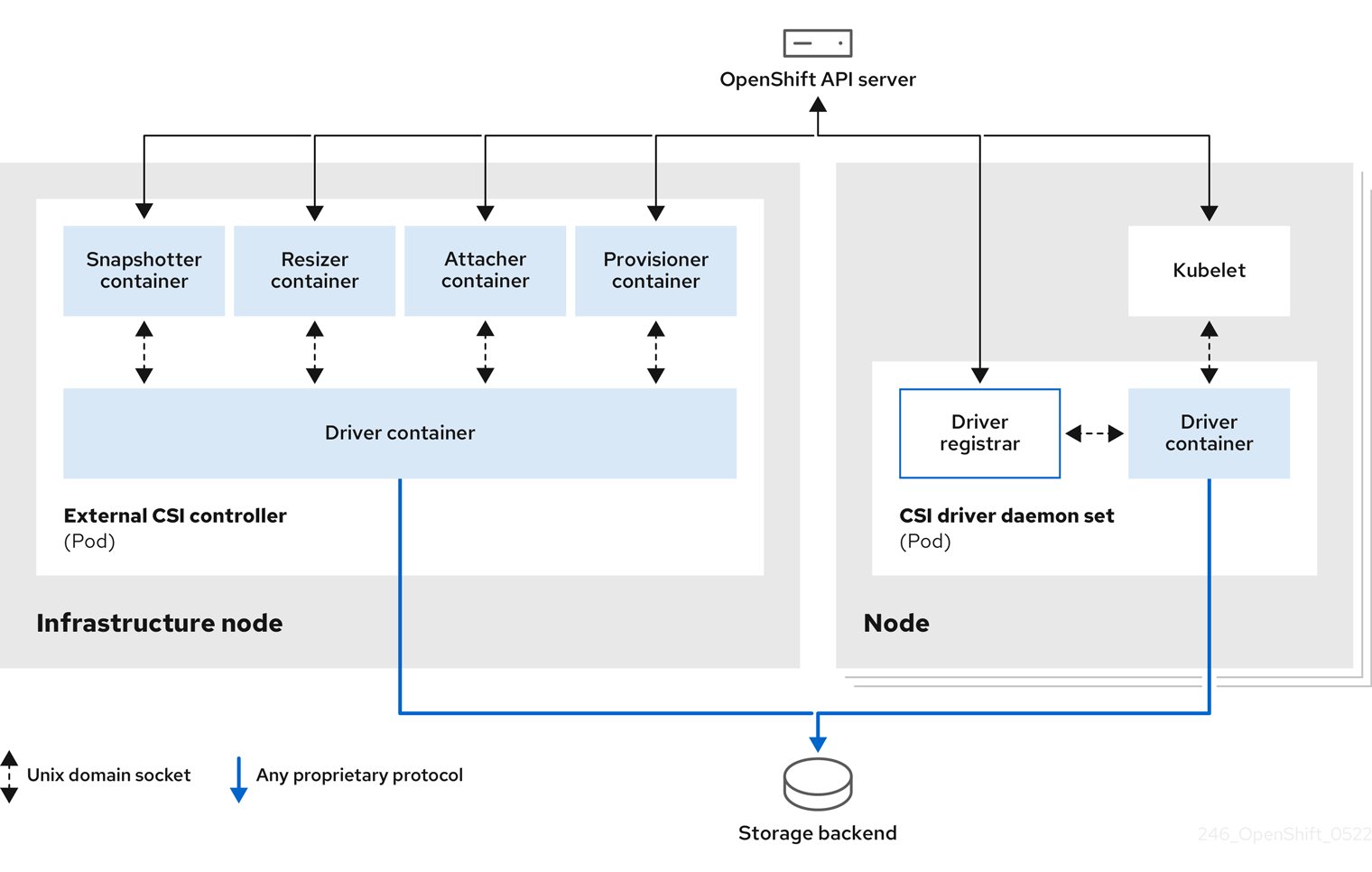

The following diagram provides a high-level overview about the components running in pods in the Red Hat OpenShift Service on AWS cluster.

It is possible to run multiple CSI drivers for different storage back ends. Each driver needs its own external controllers deployment and daemon set with the driver and CSI registrar.

5.1.1.1. External CSI controllers

External CSI controllers is a deployment that deploys one or more pods with five containers:

- The snapshotter container watches VolumeSnapshot and VolumeSnapshotContent objects and is responsible for the creation and deletion of VolumeSnapshotContent objects.

- The resizer container is a sidecar container that watches for PersistentVolumeClaim updates and triggers ControllerExpandVolume operations against a CSI endpoint if you request more storage on a PersistentVolumeClaim object.

- An external CSI attacher container translates attach and detach calls from Red Hat OpenShift Service on AWS to the respective ControllerPublish and ControllerUnpublish calls to the CSI driver.

- An external CSI provisioner container translates provision and delete calls from Red Hat OpenShift Service on AWS to the respective CreateVolume and DeleteVolume calls to the CSI driver.

- A CSI driver container.

The CSI attacher and CSI provisioner containers communicate with the CSI driver container using UNIX Domain Sockets, ensuring that no CSI communication leaves the pod. The CSI driver is not accessible from outside of the pod.

The attach, detach, provision, and delete operations typically require the CSI driver to use credentials to the storage backend. Run the CSI controller pods on infrastructure nodes so the credentials are never leaked to user processes, even in the event of a catastrophic security breach on a compute node.

The external attacher must also run for CSI drivers that do not support third-party attach or detach operations. The external attacher will not issue any ControllerPublish or ControllerUnpublish operations to the CSI driver. However, it still must run to implement the necessary Red Hat OpenShift Service on AWS attachment API.

5.1.1.2. CSI driver daemon set

The CSI driver daemon set runs a pod on every node that allows Red Hat OpenShift Service on AWS to mount storage provided by the CSI driver to the node and use it in user workloads (pods) as persistent volumes (PVs). The pod with the CSI driver installed contains the following containers:

- A CSI driver registrar, which registers the CSI driver into the openshift-node service running on the node. The openshift-node process running on the node then directly connects with the CSI driver using the UNIX Domain Socket available on the node.

- A CSI driver.

The CSI driver deployed on the node should have as few credentials to the storage back end as possible. Red Hat OpenShift Service on AWS will only use the node plugin set of CSI calls such as NodePublish/NodeUnpublish and NodeStage/NodeUnstage, if these calls are implemented.

5.1.2. CSI drivers supported by Red Hat OpenShift Service on AWS

Red Hat OpenShift Service on AWS installs certain CSI drivers by default, giving users storage options that are not possible with in-tree volume plugins.

To create CSI-provisioned persistent volumes that mount to these supported storage assets, Red Hat OpenShift Service on AWS installs the necessary CSI driver Operator, the CSI driver, and the required storage class by default. For more details about the default namespace of the Operator and driver, see the documentation for the specific CSI Driver Operator.

The AWS EFS driver is not installed by default, and must be installed manually. For instructions about installing the AWS EFS CSI driver, see "AWS Elastic File Service CSI Driver Operator" in the Additional resources section.

The following table describes the CSI drivers that are installed with Red Hat OpenShift Service on AWS, supported by Red Hat OpenShift Service on AWS, and which CSI features they support, such as volume snapshots and resize.

In addition to the drivers listed in the following table, Red Hat OpenShift Service on AWS functions with CSI drivers from third-party storage vendors. Red Hat does not oversee third-party provisioners or the connected CSI drivers and the vendors fully control source code, deployment, operation, and Kubernetes compatibility. These volume provisioners are considered customer-managed and the respective vendors are responsible for providing support. For more information, see the Shared responsibilities for Red Hat OpenShift Service on AWS in the Additional resources section.

| CSI driver | CSI volume snapshots | CSI volume group snapshots [1] | CSI cloning | CSI resize | Inline ephemeral volumes | User namespaces |
|---|---|---|---|---|---|---|
| AWS EBS | ✅ | | | ✅ | | ✅ |
| AWS EFS | | | | | | |
| LVM Storage | ✅ | | ✅ | ✅ | | ✅ |

5.1.3. Dynamic provisioning

Dynamic provisioning of persistent storage depends on the capabilities of the CSI driver and underlying storage back end. The provider of the CSI driver should document how to create a storage class in Red Hat OpenShift Service on AWS and the parameters available for configuration.

The created storage class can be configured to enable dynamic provisioning.

Procedure

Create a default storage class that ensures all PVCs that do not require any special storage class are provisioned by the installed CSI driver.

    # oc create -f - << EOF
    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: <storage-class>
      annotations:
        storageclass.kubernetes.io/is-default-class: "true"
    provisioner: <provisioner-name>
    parameters:
      csi.storage.k8s.io/fstype: xfs
    EOF
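Once a default storage class exists, a PVC that omits storageClassName is expected to be provisioned through it. The following is a minimal sketch of such a claim, not a definitive manifest: the helper name render_default_pvc, the claim name example-claim, and the 1Gi size are assumptions chosen for illustration.

```shell
# Hypothetical helper: print a PVC manifest that omits storageClassName,
# so that the cluster's default storage class is used.
render_default_pvc() (
  name="$1"   # claim name (assumption: any valid object name)
  size="$2"   # requested capacity, for example 1Gi
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ${name}
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: ${size}
EOF
)

# Print the manifest for review; pipe it to `oc create -f -` once it looks right.
render_default_pvc example-claim 1Gi
```

Printing the manifest first, rather than applying it directly, makes it easy to confirm that no storage class is named before the claim reaches the cluster.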

5.1.4. Example using the CSI driver

The following example installs a default MySQL template without any changes to the template.

Prerequisites

- The CSI driver has been deployed.

- A storage class has been created for dynamic provisioning.

Procedure

Create the MySQL template:

    # oc new-app mysql-persistent

Example output

    --> Deploying template "openshift/mysql-persistent" to project default
    ...

    # oc get pvc

Example output

    NAME    STATUS   VOLUME                                   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    mysql   Bound    kubernetes-dynamic-pv-3271ffcb4e1811e8   1Gi        RWO            gp3-csi        3s

5.2. Managing the default storage class

5.2.1. Overview

Managing the default storage class allows you to accomplish several different objectives:

- Enforcing static provisioning by disabling dynamic provisioning.

- When you have other preferred storage classes, preventing the storage operator from re-creating the initial default storage class.

- Renaming, or otherwise changing, the default storage class.

To accomplish these objectives, you change the setting for the spec.storageClassState field in the ClusterCSIDriver object. The possible settings for this field are:

- Managed: (Default) The Container Storage Interface (CSI) operator is actively managing its default storage class, so that most manual changes made by a cluster administrator to the default storage class are removed, and the default storage class is continuously re-created if you attempt to manually delete it.

- Unmanaged: You can modify the default storage class. The CSI operator is not actively managing storage classes, so that it is not reconciling the default storage class it creates automatically.

- Removed: The CSI operator deletes the default storage class.

5.2.2. Managing the default storage class using the web console

Prerequisites

- Access to the Red Hat OpenShift Service on AWS web console.

- Access to the cluster with cluster-admin privileges.

Procedure

To manage the default storage class using the web console:

- Log in to the web console.

- Click Administration > CustomResourceDefinitions.

- On the CustomResourceDefinitions page, type clustercsidriver to find the ClusterCSIDriver object.

- Click ClusterCSIDriver, and then click the Instances tab.

- Click the name of the desired instance, and then click the YAML tab.

- Add the spec.storageClassState field with a value of Managed, Unmanaged, or Removed.

Example

    ...
    spec:
      driverConfig:
        driverType: ''
      logLevel: Normal
      managementState: Managed
      observedConfig: null
      operatorLogLevel: Normal
      storageClassState: Unmanaged
    ...

In this example, the spec.storageClassState field is set to "Unmanaged".

- Click Save.

5.2.3. Managing the default storage class using the CLI

Prerequisites

- Access to the cluster with cluster-admin privileges.

Procedure

To manage the storage class using the CLI, run the following command:

    $ oc patch clustercsidriver $DRIVERNAME --type=merge -p "{\"spec\":{\"storageClassState\":\"${STATE}\"}}"

where:

- ${STATE} is "Removed", "Managed", or "Unmanaged".

- $DRIVERNAME is the provisioner name. You can find the provisioner name by running the command oc get sc.
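Because the field accepts only three values, it can help to validate the state before patching. The following sketch builds the merge patch shown above. The helper name storage_class_state_patch is hypothetical, and DRIVERNAME remains a placeholder for your provisioner name.

```shell
# Hypothetical helper: validate the requested state and print the merge patch
# for the spec.storageClassState field of a ClusterCSIDriver object.
storage_class_state_patch() (
  state="$1"
  case "$state" in
    Managed|Unmanaged|Removed)
      printf '{"spec":{"storageClassState":"%s"}}\n' "$state"
      ;;
    *)
      echo "invalid state: $state (expected Managed, Unmanaged, or Removed)" >&2
      exit 1
      ;;
  esac
)

# Example usage (DRIVERNAME is a placeholder for the provisioner name):
#   oc patch clustercsidriver "$DRIVERNAME" --type=merge \
#     -p "$(storage_class_state_patch Unmanaged)"
storage_class_state_patch Unmanaged
```

The helper returns a non-zero status for any other value, so a typo such as "unmanaged" is caught before the patch is sent to the cluster.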

5.2.4. Absent or multiple default storage classes

5.2.4.1. Multiple default storage classes

Multiple default storage classes can occur if you mark a non-default storage class as default and do not unset the existing default storage class, or you create a default storage class when a default storage class is already present. With multiple default storage classes present, any persistent volume claim (PVC) requesting the default storage class (pvc.spec.storageClassName=nil) gets the most recently created default storage class, regardless of the default status of that storage class, and the administrator receives an alert in the alerts dashboard that there are multiple default storage classes, MultipleDefaultStorageClasses.
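One way to spot this condition before the alert fires is to count the storage classes marked "(default)" in the oc get storageclass listing. The following is a minimal sketch that reads the listing from standard input. The helper name count_default_storage_classes and the sample listing are assumptions for illustration.

```shell
# Hypothetical helper: read `oc get storageclass` output on stdin and print
# how many storage classes are marked "(default)".
count_default_storage_classes() {
  # grep -c exits non-zero when the count is 0, so mask that status.
  grep -c '(default)' || true
}

# Sample listing (made up for this sketch); on a cluster you would run:
#   oc get storageclass | count_default_storage_classes
sample_output='NAME                 TYPE
gp3 (default)        ebs.csi.aws.com
standard (default)   ebs.csi.aws.com'

count=$(printf '%s\n' "$sample_output" | count_default_storage_classes)
if [ "$count" -gt 1 ]; then
  echo "warning: $count default storage classes found"
fi
```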

5.2.4.2. Absent default storage class

There are two possible scenarios where PVCs can attempt to use a non-existent default storage class:

- An administrator removes the default storage class or marks it as non-default, and then a user creates a PVC requesting the default storage class.

- During installation, the installer creates a PVC requesting the default storage class, which has not yet been created.

In the preceding scenarios, PVCs remain in the pending state indefinitely. To resolve this situation, create a default storage class or declare one of the existing storage classes as the default. As soon as the default storage class is created or declared, the PVCs get the new default storage class. If possible, the PVCs eventually bind to statically or dynamically provisioned PVs as usual, and move out of the pending state.

5.2.5. Changing the default storage class

Use the following procedure to change the default storage class.

For example, suppose you have two defined storage classes, gp3 and standard, and you want to change the default storage class from gp3 to standard.

Prerequisites

- Access to the cluster with cluster-admin privileges.

Procedure

To change the default storage class:

List the storage classes:

    $ oc get storageclass

Example output

    NAME                 TYPE
    gp3 (default)        ebs.csi.aws.com
    standard             ebs.csi.aws.com

(default) indicates the default storage class.

Make the desired storage class the default.

For the desired storage class, set the storageclass.kubernetes.io/is-default-class annotation to true by running the following command:

    $ oc patch storageclass standard -p '{"metadata": {"annotations": {"storageclass.kubernetes.io/is-default-class": "true"}}}'

Note

You can have multiple default storage classes for a short time. However, you should ensure that only one default storage class exists eventually.

With multiple default storage classes present, any persistent volume claim (PVC) requesting the default storage class (pvc.spec.storageClassName=nil) gets the most recently created default storage class, regardless of the default status of that storage class, and the administrator receives an alert in the alerts dashboard that there are multiple default storage classes, MultipleDefaultStorageClasses.

Remove the default storage class setting from the old default storage class.

For the old default storage class, change the value of the storageclass.kubernetes.io/is-default-class annotation to false by running the following command:

    $ oc patch storageclass gp3 -p '{"metadata": {"annotations": {"storageclass.kubernetes.io/is-default-class": "false"}}}'

Verify the changes:

    $ oc get storageclass

Example output

    NAME                 TYPE
    gp3                  ebs.csi.aws.com
    standard (default)   ebs.csi.aws.com

5.3. AWS Elastic Block Store CSI Driver Operator

5.3.1. Overview

Red Hat OpenShift Service on AWS is capable of provisioning persistent volumes (PVs) using the AWS EBS CSI driver.

Familiarity with persistent storage and configuring CSI volumes is recommended when working with a Container Storage Interface (CSI) Operator and driver.

To create CSI-provisioned PVs that mount to AWS EBS storage assets, Red Hat OpenShift Service on AWS installs the AWS EBS CSI Driver Operator (a Red Hat operator) and the AWS EBS CSI driver by default in the openshift-cluster-csi-drivers namespace.

- The AWS EBS CSI Driver Operator provides a StorageClass by default that you can use to create PVCs. You can disable this default storage class if desired (see Managing the default storage class). You also have the option to create the AWS EBS StorageClass as described in Persistent storage using Amazon Elastic Block Store.

- The AWS EBS CSI driver enables you to create and mount AWS EBS PVs.

5.3.2. About CSI

Storage vendors have traditionally provided storage drivers as part of Kubernetes. With the implementation of the Container Storage Interface (CSI), third-party providers can instead deliver storage plugins using a standard interface without ever having to change the core Kubernetes code.

CSI Operators give Red Hat OpenShift Service on AWS users storage options, such as volume snapshots, that are not possible with in-tree volume plugins.

Red Hat OpenShift Service on AWS defaults to using the CSI plugin to provision Amazon Elastic Block Store (Amazon EBS) storage.

For information about dynamically provisioning AWS EBS persistent volumes in Red Hat OpenShift Service on AWS, see Persistent storage using Amazon Elastic Block Store.

5.4. AWS Elastic File Service CSI Driver Operator

This procedure is specific to the AWS EFS CSI Driver Operator (a Red Hat Operator), which is only applicable for Red Hat OpenShift Service on AWS 4.10 and later versions.

5.4.1. Overview

Red Hat OpenShift Service on AWS is capable of provisioning persistent volumes (PVs) using the Container Storage Interface (CSI) driver for AWS Elastic File Service (EFS).

Familiarity with persistent storage and configuring CSI volumes is recommended when working with a CSI Operator and driver.

After installing the AWS EFS CSI Driver Operator, Red Hat OpenShift Service on AWS installs the AWS EFS CSI Operator and the AWS EFS CSI driver by default in the openshift-cluster-csi-drivers namespace. This allows the AWS EFS CSI Driver Operator to create CSI-provisioned PVs that mount to AWS EFS assets.

- The AWS EFS CSI Driver Operator, after being installed, does not create a storage class by default to use to create persistent volume claims (PVCs). However, you can manually create the AWS EFS StorageClass. The AWS EFS CSI Driver Operator supports dynamic volume provisioning by allowing storage volumes to be created on-demand. This eliminates the need for cluster administrators to pre-provision storage.

- The AWS EFS CSI driver enables you to create and mount AWS EFS PVs.

5.4.2. About CSI

Storage vendors have traditionally provided storage drivers as part of Kubernetes. With the implementation of the Container Storage Interface (CSI), third-party providers can instead deliver storage plugins using a standard interface without ever having to change the core Kubernetes code.

CSI Operators give Red Hat OpenShift Service on AWS users storage options, such as volume snapshots, that are not possible with in-tree volume plugins.

5.4.3. Setting up the AWS EFS CSI Driver Operator

- If you are using AWS EFS with AWS Security Token Service (STS), obtain a role Amazon Resource Name (ARN) for STS. This is required for installing the AWS EFS CSI Driver Operator.

- Install the AWS EFS CSI Driver Operator.

- Install the AWS EFS CSI Driver.

5.4.3.1. Obtaining a role Amazon Resource Name for Security Token Service

This procedure explains how to obtain a role Amazon Resource Name (ARN) to configure the AWS EFS CSI Driver Operator with Red Hat OpenShift Service on AWS on AWS Security Token Service (STS).

Perform this procedure before you install the AWS EFS CSI Driver Operator (see Installing the AWS EFS CSI Driver Operator procedure).

Prerequisites

- Access to the cluster as a user with the cluster-admin role.

- AWS account credentials

Procedure

Create an IAM policy JSON file with the following content:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": [
            "elasticfilesystem:DescribeAccessPoints",
            "elasticfilesystem:DescribeFileSystems",
            "elasticfilesystem:DescribeMountTargets",
            "ec2:DescribeAvailabilityZones",
            "elasticfilesystem:TagResource"
          ],
          "Resource": "*"
        },
        {
          "Effect": "Allow",
          "Action": [
            "elasticfilesystem:CreateAccessPoint"
          ],
          "Resource": "*",
          "Condition": {
            "StringLike": {
              "aws:RequestTag/efs.csi.aws.com/cluster": "true"
            }
          }
        },
        {
          "Effect": "Allow",
          "Action": "elasticfilesystem:DeleteAccessPoint",
          "Resource": "*",
          "Condition": {
            "StringEquals": {
              "aws:ResourceTag/efs.csi.aws.com/cluster": "true"
            }
          }
        }
      ]
    }

Create an IAM trust JSON file with the following content:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Principal": {
            "Federated": "arn:aws:iam::<your_aws_account_ID>:oidc-provider/<openshift_oidc_provider>"
          },
          "Action": "sts:AssumeRoleWithWebIdentity",
          "Condition": {
            "StringEquals": {
              "<openshift_oidc_provider>:sub": [
                "system:serviceaccount:openshift-cluster-csi-drivers:aws-efs-csi-driver-operator",
                "system:serviceaccount:openshift-cluster-csi-drivers:aws-efs-csi-driver-controller-sa"
              ]
            }
          }
        }
      ]
    }

where:

- Statement.Principal.Federated: Specifies your AWS account ID and the OpenShift OIDC provider endpoint. Obtain your AWS account ID by running the following command:

    $ aws sts get-caller-identity --query Account --output text

- Statement.Condition.StringEquals[0]: Specify the OpenShift OIDC endpoint again.

Create the IAM role:

    ROLE_ARN=$(aws iam create-role \
      --role-name "<your_cluster_name>-aws-efs-csi-operator" \
      --assume-role-policy-document file://<your_trust_file_name>.json \
      --query "Role.Arn" --output text); echo $ROLE_ARN

Copy the role ARN. You will need it when you install the AWS EFS CSI Driver Operator.

Create the IAM policy:

    POLICY_ARN=$(aws iam create-policy \
      --policy-name "<your_cluster_name>-aws-efs-csi" \
      --policy-document file://<your_policy_file_name>.json \
      --query 'Policy.Arn' --output text); echo $POLICY_ARN

Attach the IAM policy to the IAM role:

    $ aws iam attach-role-policy \
      --role-name "<your_cluster_name>-aws-efs-csi-operator" \
      --policy-arn $POLICY_ARN
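The trust file in this procedure uses the same OIDC provider value in two places, which is easy to get wrong when editing by hand. As a sketch only, the following helper fills in both placeholders from arguments. The helper name render_efs_trust_policy, the account ID 123456789012, and the provider oidc.example.com/abc123 are made-up values for illustration.

```shell
# Hypothetical helper: print the IAM trust policy for a given AWS account ID
# and OIDC provider (both arguments are placeholders supplied by the caller).
render_efs_trust_policy() (
  account_id="$1"
  oidc_provider="$2"
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Federated": "arn:aws:iam::${account_id}:oidc-provider/${oidc_provider}"
      },
      "Action": "sts:AssumeRoleWithWebIdentity",
      "Condition": {
        "StringEquals": {
          "${oidc_provider}:sub": [
            "system:serviceaccount:openshift-cluster-csi-drivers:aws-efs-csi-driver-operator",
            "system:serviceaccount:openshift-cluster-csi-drivers:aws-efs-csi-driver-controller-sa"
          ]
        }
      }
    }
  ]
}
EOF
)

# Example with made-up values; redirect the output to your trust file.
render_efs_trust_policy 123456789012 oidc.example.com/abc123
```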


5.4.3.2. Installing the AWS EFS CSI Driver Operator

The AWS EFS CSI Driver Operator (a Red Hat Operator) is not installed in Red Hat OpenShift Service on AWS by default. Use the following procedure to install and configure the AWS EFS CSI Driver Operator in your cluster.

Prerequisites

- Access to the Red Hat OpenShift Service on AWS web console.

Procedure

To install the AWS EFS CSI Driver Operator from the web console:

- Log in to the web console.

Install the AWS EFS CSI Operator:

- Click Ecosystem > Software Catalog.

- Locate the AWS EFS CSI Operator by typing AWS EFS CSI in the filter box.

- Click the AWS EFS CSI Driver Operator button.

Important

Be sure to select the AWS EFS CSI Driver Operator and not the AWS EFS Operator. The AWS EFS Operator is a community Operator and is not supported by Red Hat.

- On the AWS EFS CSI Driver Operator page, click Install.

On the Install Operator page, ensure that:

- All namespaces on the cluster (default) is selected.

- Installed Namespace is set to openshift-cluster-csi-drivers.

Click Install.

After the installation finishes, the AWS EFS CSI Operator is listed in the Installed Operators section of the web console.


5.4.3.3. Installing the AWS EFS CSI Driver

After installing the AWS EFS CSI Driver Operator (a Red Hat operator), you install the AWS EFS CSI driver.

Prerequisites

- Access to the Red Hat OpenShift Service on AWS web console.

Procedure

- Click Administration > CustomResourceDefinitions > ClusterCSIDriver.

- On the Instances tab, click Create ClusterCSIDriver.

Use the following YAML file:

    apiVersion: operator.openshift.io/v1
    kind: ClusterCSIDriver
    metadata:
      name: efs.csi.aws.com
    spec:
      managementState: Managed

- Click Create.

Wait for the following Conditions to change to a "True" status:

- AWSEFSDriverNodeServiceControllerAvailable

- AWSEFSDriverControllerServiceControllerAvailable

5.4.4. Creating the AWS EFS storage class

Storage classes are used to differentiate and delineate storage levels and usages. By defining a storage class, users can obtain dynamically provisioned persistent volumes.

The AWS EFS CSI Driver Operator (a Red Hat operator), after being installed, does not create a storage class by default. However, you can manually create the AWS EFS storage class.

5.4.4.1. Creating the AWS EFS storage class using the console

Procedure

- In the Red Hat OpenShift Service on AWS web console, click Storage > StorageClasses.

- On the StorageClasses page, click Create StorageClass.

On the StorageClass page, perform the following steps:

- Enter a name to reference the storage class.

- Optional: Enter the description.

- Select the reclaim policy.

- Select efs.csi.aws.com from the Provisioner drop-down list.

- Optional: Set the configuration parameters for the selected provisioner.

- Click Create.

5.4.4.2. Creating the AWS EFS storage class using the CLI

Procedure

Create a StorageClass object:

    kind: StorageClass
    apiVersion: storage.k8s.io/v1
    metadata:
      name: efs-sc
    provisioner: efs.csi.aws.com
    parameters:
      provisioningMode: efs-ap
      fileSystemId: fs-a5324911
      directoryPerms: "700"
      gidRangeStart: "1000"
      gidRangeEnd: "2000"
      basePath: "/dynamic_provisioning"

where:

- provisioningMode must be efs-ap to enable dynamic provisioning.

- fileSystemId must be the ID of the EFS volume created manually.

- directoryPerms is the default permission of the root directory of the volume. In this example, the volume is accessible only by the owner.

- gidRangeStart and gidRangeEnd set the range of POSIX Group IDs (GIDs) that are used to set the GID of the AWS access point. If not specified, the default range is 50000-7000000. Each provisioned volume, and thus AWS access point, is assigned a unique GID from this range.

- basePath is the directory on the EFS volume that is used to create dynamically provisioned volumes. In this case, a PV is provisioned as "/dynamic_provisioning/<random uuid>" on the EFS volume. Only the subdirectory is mounted to pods that use the PV.

Note

A cluster admin can create several StorageClass objects, each using a different EFS volume.

5.4.5. AWS EFS CSI cross account support

Cross account support allows you to have a Red Hat OpenShift Service on AWS cluster in one AWS account and mount your file system in another AWS account by using the AWS Elastic File System (EFS) Container Storage Interface (CSI) driver.

Prerequisites

- Access to a Red Hat OpenShift Service on AWS cluster with administrator rights

- Two valid AWS accounts

- The EFS CSI Operator has been installed. For information about installing the EFS CSI Operator, see the Installing the AWS EFS CSI Driver Operator section.

- Both the Red Hat OpenShift Service on AWS cluster and EFS file system must be located in the same AWS region.

- Ensure that the two virtual private clouds (VPCs) used in the following procedure use different network Classless Inter-Domain Routing (CIDR) ranges.

- Access to Red Hat OpenShift Service on AWS CLI (oc).

- Access to AWS CLI.

- Access to jq command-line JSON processor.
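The prerequisite that the two VPCs use different CIDR ranges matters because VPC peering does not work between overlapping ranges. The following sketch shows one way to check two IPv4 CIDR blocks for overlap in plain shell arithmetic. The helper names ip_to_int and cidrs_overlap, and the sample ranges, are assumptions for illustration.

```shell
# Hypothetical helper: convert a dotted-quad IPv4 address to an integer.
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Hypothetical helper: succeed (exit 0) if two IPv4 CIDR blocks overlap.
cidrs_overlap() (
  a_ip=${1%/*}; a_len=${1#*/}
  b_ip=${2%/*}; b_len=${2#*/}
  # Two blocks overlap exactly when their addresses match under the
  # shorter of the two prefix lengths.
  len=$a_len
  if [ "$b_len" -lt "$len" ]; then len=$b_len; fi
  mask=$(( (4294967295 << (32 - len)) & 4294967295 ))
  a_net=$(( $(ip_to_int "$a_ip") & mask ))
  b_net=$(( $(ip_to_int "$b_ip") & mask ))
  [ "$a_net" -eq "$b_net" ]
)

# Example with made-up ranges for the VPCs in Account A and Account B.
if cidrs_overlap "10.0.0.0/16" "10.1.0.0/16"; then
  echo "CIDR ranges overlap: choose different ranges before peering"
else
  echo "CIDR ranges do not overlap"
fi
```

After you set AWS_ACCOUNT_A_VPC_CIDR and AWS_ACCOUNT_B_VPC_CIDR in the procedure that follows, the same check can be run against those two variables.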

Procedure

The following procedure explains how to set up:

- AWS Account A: Contains a Red Hat OpenShift Service on AWS cluster v4.16, or later, deployed within a VPC.

- AWS Account B: Contains a VPC (including subnets, route tables, and network connectivity). The EFS filesystem will be created in this VPC.

To use AWS EFS across accounts:

Set up the environment:

Configure environment variables by running the following commands:

    export CLUSTER_NAME="<CLUSTER_NAME>"
    export AWS_REGION="<AWS_REGION>"
    export AWS_ACCOUNT_A_ID="<ACCOUNT_A_ID>"
    export AWS_ACCOUNT_B_ID="<ACCOUNT_B_ID>"
    export AWS_ACCOUNT_A_VPC_CIDR="<VPC_A_CIDR>"
    export AWS_ACCOUNT_B_VPC_CIDR="<VPC_B_CIDR>"
    export AWS_ACCOUNT_A_VPC_ID="<VPC_A_ID>"
    export AWS_ACCOUNT_B_VPC_ID="<VPC_B_ID>"
    export SCRATCH_DIR="<WORKING_DIRECTORY>"
    export CSI_DRIVER_NAMESPACE="openshift-cluster-csi-drivers"
    export AWS_PAGER=""

where:

- CLUSTER_NAME: Cluster name of choice.

- AWS_REGION: AWS region of choice.

- AWS_ACCOUNT_A_ID: AWS Account A ID.

- AWS_ACCOUNT_B_ID: AWS Account B ID.

- AWS_ACCOUNT_A_VPC_CIDR: CIDR range of VPC in Account A.

- AWS_ACCOUNT_B_VPC_CIDR: CIDR range of VPC in Account B.

- AWS_ACCOUNT_A_VPC_ID: VPC ID in Account A (cluster).

- AWS_ACCOUNT_B_VPC_ID: VPC ID in Account B (EFS cross account).

- SCRATCH_DIR: Any writeable directory of choice to use to store temporary files.

- CSI_DRIVER_NAMESPACE: If your driver is installed in a non-default namespace, change this value.

- AWS_PAGER: Makes AWS CLI output everything directly to stdout.

Create the working directory by running the following command:

    mkdir -p $SCRATCH_DIR

Verify cluster connectivity by running the following command in the Red Hat OpenShift Service on AWS CLI:

    $ oc whoami

Determine the Red Hat OpenShift Service on AWS cluster type and set node selector:

The EFS cross account feature requires assigning AWS IAM policies to nodes running EFS CSI controller pods. However, this is not consistent for every Red Hat OpenShift Service on AWS type.

If your cluster is deployed as a Hosted Control Plane (HyperShift), set the NODE_SELECTOR environment variable to hold the worker node label by running the following command:

    export NODE_SELECTOR=node-role.kubernetes.io/worker

For all other Red Hat OpenShift Service on AWS types, set the NODE_SELECTOR environment variable to hold the master node label by running the following command:

    export NODE_SELECTOR=node-role.kubernetes.io/master

Configure AWS CLI profiles as environment variables for account switching by running the following commands:

    export AWS_ACCOUNT_A="<ACCOUNT_A_NAME>"
    export AWS_ACCOUNT_B="<ACCOUNT_B_NAME>"

Ensure that your AWS CLI is configured with JSON output format as the default for both accounts by running the following commands:

    export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_A}
    aws configure get output
    export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_B}
    aws configure get output

If the preceding commands return:

- No value: The default output format is already set to JSON and no changes are required.

- Any value: Reconfigure your AWS CLI to use JSON format. For information about changing output formats, see Setting the output format in the AWS CLI in the AWS documentation.

Unset AWS_PROFILE in your shell to prevent conflicts with AWS_DEFAULT_PROFILE by running the following command:

    unset AWS_PROFILE

Configure the AWS Account B IAM roles and policies:

Switch to your Account B profile by running the following command:

    export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_B}

Define the IAM role name for the EFS CSI Driver Operator by running the following command:

    export ACCOUNT_B_ROLE_NAME=${CLUSTER_NAME}-cross-account-aws-efs-csi-operator

Create the IAM trust policy file by running the following command:

    cat <<EOF > $SCRATCH_DIR/AssumeRolePolicyInAccountB.json
    {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {
                    "AWS": "arn:aws:iam::${AWS_ACCOUNT_A_ID}:root"
                },
                "Action": "sts:AssumeRole",
                "Condition": {}
            }
        ]
    }
    EOF

Create the IAM role for the EFS CSI Driver Operator by running the following command:

    ACCOUNT_B_ROLE_ARN=$(aws iam create-role \
      --role-name "${ACCOUNT_B_ROLE_NAME}" \
      --assume-role-policy-document file://$SCRATCH_DIR/AssumeRolePolicyInAccountB.json \
      --query "Role.Arn" --output text) \
    && echo $ACCOUNT_B_ROLE_ARN

Create the IAM policy file by running the following command:

    cat << EOF > $SCRATCH_DIR/EfsPolicyInAccountB.json
    {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "VisualEditor0",
                "Effect": "Allow",
                "Action": [
                    "ec2:DescribeNetworkInterfaces",
                    "ec2:DescribeSubnets"
                ],
                "Resource": "*"
            },
            {
                "Sid": "VisualEditor1",
                "Effect": "Allow",
                "Action": [
                    "elasticfilesystem:DescribeMountTargets",
                    "elasticfilesystem:DeleteAccessPoint",
                    "elasticfilesystem:ClientMount",
                    "elasticfilesystem:DescribeAccessPoints",
                    "elasticfilesystem:ClientWrite",
                    "elasticfilesystem:ClientRootAccess",
                    "elasticfilesystem:DescribeFileSystems",
                    "elasticfilesystem:CreateAccessPoint",
                    "elasticfilesystem:TagResource"
                ],
                "Resource": "*"
            }
        ]
    }
    EOF

Create the IAM policy by running the following command:

    ACCOUNT_B_POLICY_ARN=$(aws iam create-policy --policy-name "${CLUSTER_NAME}-efs-csi-policy" \
      --policy-document file://$SCRATCH_DIR/EfsPolicyInAccountB.json \
      --query 'Policy.Arn' --output text) \
    && echo ${ACCOUNT_B_POLICY_ARN}

Attach the policy to the role by running the following command:

    aws iam attach-role-policy \
      --role-name "${ACCOUNT_B_ROLE_NAME}" \
      --policy-arn "${ACCOUNT_B_POLICY_ARN}"

Configure the AWS Account A IAM roles and policies:

Switch to your Account A profile by running the following command:

    export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_A}

Create the IAM policy document by running the following command:

    cat << EOF > $SCRATCH_DIR/AssumeRoleInlinePolicyPolicyInAccountA.json
    {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "sts:AssumeRole",
                "Resource": "${ACCOUNT_B_ROLE_ARN}"
            }
        ]
    }
    EOF

In AWS Account A, attach the AWS-managed policy "AmazonElasticFileSystemClientFullAccess" to the Red Hat OpenShift Service on AWS cluster master role by running the following command:

    EFS_CLIENT_FULL_ACCESS_BUILTIN_POLICY_ARN=arn:aws:iam::aws:policy/AmazonElasticFileSystemClientFullAccess
    declare -A ROLE_SEEN
    for NODE in $(oc get nodes --selector="${NODE_SELECTOR}" -o jsonpath='{.items[*].metadata.name}'); do
        INSTANCE_PROFILE=$(aws ec2 describe-instances \
            --filters "Name=private-dns-name,Values=${NODE}" \
            --query 'Reservations[].Instances[].IamInstanceProfile.Arn' \
            --output text | awk -F'/' '{print $NF}' | xargs)
        MASTER_ROLE_ARN=$(aws iam get-instance-profile \
            --instance-profile-name "${INSTANCE_PROFILE}" \
            --query 'InstanceProfile.Roles[0].Arn' \
            --output text | xargs)
        MASTER_ROLE_NAME=$(echo "${MASTER_ROLE_ARN}" | awk -F'/' '{print $NF}' | xargs)
        echo "Checking role: '${MASTER_ROLE_NAME}'"
        if [[ -n "${ROLE_SEEN[$MASTER_ROLE_NAME]:-}" ]]; then
            echo "Already processed role: '${MASTER_ROLE_NAME}', skipping."
            continue
        fi
        ROLE_SEEN["$MASTER_ROLE_NAME"]=1
        echo "Assigning policy ${EFS_CLIENT_FULL_ACCESS_BUILTIN_POLICY_ARN} to role ${MASTER_ROLE_NAME}"
        aws iam attach-role-policy --role-name "${MASTER_ROLE_NAME}" --policy-arn "${EFS_CLIENT_FULL_ACCESS_BUILTIN_POLICY_ARN}"
    done

Attach the policy to the IAM entity to allow role assumption:

This step depends on your cluster configuration. In both of the following scenarios, the EFS CSI Driver Operator uses an entity to authenticate to AWS, and this entity must be granted permission to assume roles in Account B.

If your cluster:

- Does not have STS enabled: The EFS CSI Driver Operator uses an IAM User entity for AWS authentication. Continue with the step "Attach policy to IAM User to allow role assumption".

- Has STS enabled: The EFS CSI Driver Operator uses an IAM role entity for AWS authentication. Continue with the step "Attach policy to IAM Role to allow role assumption".
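If you are unsure which case applies, one option is to inspect the credentials that the Operator already uses. The following is a minimal sketch, assuming that on STS clusters the decoded credentials contain a role_arn entry (the role lookup command in the IAM role step of this procedure relies on the same entry). The function name efs_auth_entity and the sample ARN are hypothetical.

```shell
# Hypothetical helper: reads decoded AWS credentials text on stdin and
# prints "role" when a role_arn entry is present (assumed to indicate STS),
# otherwise prints "user".
efs_auth_entity() {
  if grep -q '^[[:space:]]*role_arn[[:space:]]*='; then
    echo role
  else
    echo user
  fi
}

# Example with inline sample data (placeholder ARN, not a real role).
printf '[default]\nrole_arn = arn:aws:iam::111122223333:role/example-efs-role\n' | efs_auth_entity
```

On a live cluster, you could pipe the decoded credentials field of the aws-efs-cloud-credentials secret into the function instead of the sample text. Treat the result as a hint only and confirm it against your cluster configuration.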

Attach policy to IAM User to allow role assumption

Identify the IAM User used by the EFS CSI Driver Operator by running the following command:

EFS_CSI_DRIVER_OPERATOR_USER=$(oc -n openshift-cloud-credential-operator get credentialsrequest/openshift-aws-efs-csi-driver -o json | jq -r '.status.providerStatus.user')

Attach the policy to the IAM user by running the following command:

aws iam put-user-policy \
  --user-name "${EFS_CSI_DRIVER_OPERATOR_USER}" \
  --policy-name efs-cross-account-inline-policy \
  --policy-document file://$SCRATCH_DIR/AssumeRoleInlinePolicyPolicyInAccountA.json

Attach the policy to the IAM role to allow role assumption:

Identify the IAM role name currently used by the EFS CSI Driver Operator by running the following command:

EFS_CSI_DRIVER_OPERATOR_ROLE=$(oc -n ${CSI_DRIVER_NAMESPACE} get secret/aws-efs-cloud-credentials -o jsonpath='{.data.credentials}' | base64 -d | grep role_arn | cut -d'/' -f2) && echo ${EFS_CSI_DRIVER_OPERATOR_ROLE}

Attach the policy to the IAM role used by the EFS CSI Driver Operator by running the following command:

aws iam put-role-policy \
  --role-name "${EFS_CSI_DRIVER_OPERATOR_ROLE}" \
  --policy-name efs-cross-account-inline-policy \
  --policy-document file://$SCRATCH_DIR/AssumeRoleInlinePolicyPolicyInAccountA.json
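The role lookup in this step takes the second "/"-separated field of the role ARN, which is the role name as long as the role has no IAM path. If your role ARN contains a path, taking the last segment is a safer way to get the name that put-role-policy expects. A minimal sketch, in which the function name role_name_from_arn and the sample ARNs are hypothetical:

```shell
# Hypothetical helper: print the last path segment of an IAM role ARN,
# which is the role name regardless of any IAM path in the ARN.
role_name_from_arn() {
  arn=$1
  echo "${arn##*/}"
}

# Placeholder ARNs for illustration only.
role_name_from_arn "arn:aws:iam::111122223333:role/example-efs-role"
role_name_from_arn "arn:aws:iam::111122223333:role/team/storage/example-efs-role"
```

Both calls print example-efs-role, because the parameter expansion strips everything up to and including the final slash.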

Configure VPC peering:

Initiate a peering request from Account A to Account B by running the following command:

export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_A}
PEER_REQUEST_ID=$(aws ec2 create-vpc-peering-connection --vpc-id "${AWS_ACCOUNT_A_VPC_ID}" --peer-vpc-id "${AWS_ACCOUNT_B_VPC_ID}" --peer-owner-id "${AWS_ACCOUNT_B_ID}" --query VpcPeeringConnection.VpcPeeringConnectionId --output text)

Accept the peering request from Account B by running the following command:

export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_B}
aws ec2 accept-vpc-peering-connection --vpc-peering-connection-id "${PEER_REQUEST_ID}"

Retrieve the route table IDs for Account A and add routes to the Account B VPC by running the following command:

export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_A}
for NODE in $(oc get nodes --selector=node-role.kubernetes.io/worker | tail -n +2 | awk '{print $1}')
do
  SUBNET=$(aws ec2 describe-instances --filters "Name=private-dns-name,Values=$NODE" --query 'Reservations[*].Instances[*].NetworkInterfaces[*].SubnetId' | jq -r '.[0][0][0]')
  echo SUBNET is ${SUBNET}
  ROUTE_TABLE_ID=$(aws ec2 describe-route-tables --filters "Name=association.subnet-id,Values=${SUBNET}" --query 'RouteTables[*].RouteTableId' | jq -r '.[0]')
  echo Route table ID is $ROUTE_TABLE_ID
  aws ec2 create-route --route-table-id ${ROUTE_TABLE_ID} --destination-cidr-block ${AWS_ACCOUNT_B_VPC_CIDR} --vpc-peering-connection-id ${PEER_REQUEST_ID}
done

Retrieve the route table IDs for Account B and add routes to the Account A VPC by running the following command:

export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_B}
for ROUTE_TABLE_ID in $(aws ec2 describe-route-tables --filters "Name=vpc-id,Values=${AWS_ACCOUNT_B_VPC_ID}" --query "RouteTables[].RouteTableId" | jq -r '.[]')
do
  echo Route table ID is $ROUTE_TABLE_ID
  aws ec2 create-route --route-table-id ${ROUTE_TABLE_ID} --destination-cidr-block ${AWS_ACCOUNT_A_VPC_CIDR} --vpc-peering-connection-id ${PEER_REQUEST_ID}
done

Configure security groups in Account B to allow NFS traffic from Account A to EFS:

Switch to your Account B profile by running the following command:

export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_B}

Configure the VPC security groups for EFS access by running the following command:

SECURITY_GROUP_ID=$(aws ec2 describe-security-groups --filters Name=vpc-id,Values="${AWS_ACCOUNT_B_VPC_ID}" | jq -r '.SecurityGroups[].GroupId')
aws ec2 authorize-security-group-ingress \
  --group-id "${SECURITY_GROUP_ID}" \
  --protocol tcp \
  --port 2049 \
  --cidr "${AWS_ACCOUNT_A_VPC_CIDR}" | jq .

Create a region-wide EFS filesystem in Account B:

Switch to your Account B profile by running the following command:

export AWS_DEFAULT_PROFILE=${AWS_ACCOUNT_B}

Create a region-wide EFS file system by running the following command:

CROSS_ACCOUNT_FS_ID=$(aws efs create-file-system --creation-token efs-token-1 \
  --region ${AWS_REGION} \
  --encrypted | jq -r '.FileSystemId') \
  && echo $CROSS_ACCOUNT_FS_ID

Configure region-wide mount targets for EFS by running the following command:

for SUBNET in $(aws ec2 describe-subnets \
  --filters "Name=vpc-id,Values=${AWS_ACCOUNT_B_VPC_ID}" \
  --region ${AWS_REGION} \
  | jq -r '.Subnets[].SubnetId'); do \
    MOUNT_TARGET=$(aws efs create-mount-target --file-system-id ${CROSS_ACCOUNT_FS_ID} \
      --subnet-id ${SUBNET} \
      --region ${AWS_REGION} \
      | jq -r '.MountTargetId'); \
    echo ${MOUNT_TARGET}; \
done

This creates a mount target in each subnet of your VPC.

Configure the EFS Operator for cross-account access:

Define custom names for the secret and storage class that you will create in subsequent steps by running the following command:

export SECRET_NAME=my-efs-cross-account
export STORAGE_CLASS_NAME=efs-sc-cross

Create a secret that references the role ARN in Account B by running the following command in the Red Hat OpenShift Service on AWS CLI:

oc create secret generic ${SECRET_NAME} -n ${CSI_DRIVER_NAMESPACE} --from-literal=awsRoleArn="${ACCOUNT_B_ROLE_ARN}"

Grant the CSI driver controller access to the newly created secret by running the following commands in the Red Hat OpenShift Service on AWS CLI:

oc -n ${CSI_DRIVER_NAMESPACE} create role access-secrets --verb=get,list,watch --resource=secrets
oc -n ${CSI_DRIVER_NAMESPACE} create rolebinding --role=access-secrets default-to-secrets --serviceaccount=${CSI_DRIVER_NAMESPACE}:aws-efs-csi-driver-controller-sa

Create a new storage class that references the EFS ID from Account B and the secret created previously by running the following command in the Red Hat OpenShift Service on AWS CLI:

cat << EOF | oc apply -f -
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: ${STORAGE_CLASS_NAME}
provisioner: efs.csi.aws.com
parameters:
  provisioningMode: efs-ap
  fileSystemId: ${CROSS_ACCOUNT_FS_ID}
  directoryPerms: "700"
  gidRangeStart: "1000"
  gidRangeEnd: "2000"
  basePath: "/dynamic_provisioning"
  csi.storage.k8s.io/provisioner-secret-name: ${SECRET_NAME}
  csi.storage.k8s.io/provisioner-secret-namespace: ${CSI_DRIVER_NAMESPACE}
EOF

5.4.6. One Zone file systems

5.4.6.1. One Zone file systems overview

Red Hat OpenShift Service on AWS supports AWS Elastic File System (EFS) One Zone file system, which is an EFS storage option that stores data redundantly within a single Availability Zone (AZ). This contrasts with the default EFS storage option, which stores data redundantly across multiple AZs within a region.

Clusters upgraded from Red Hat OpenShift Service on AWS 4.19 are compatible with the regional EFS volumes.

Dynamic provisioning of One Zone volumes is supported only in single-zone clusters. All nodes in the cluster must be in the same AZ as the EFS volume that is used for the dynamic provisioning.

Manually provisioned One Zone volumes in regional clusters are supported, provided that the persistent volumes (PVs) have a correct spec.nodeAffinity that indicates the zone that the volume is in.

For Cloud Credential Operator (CCO) Mint mode or Passthrough, no extra configuration is required. However, for Security Token Service (STS), use the procedure in Section Setting up One Zone file systems with STS.
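For a manually provisioned One Zone volume in a regional cluster, the PV needs a spec.nodeAffinity that pins it to the zone of the file system. The following is a minimal sketch, not a definitive manifest: it assumes that nodes carry the standard topology.kubernetes.io/zone label, and the function name one_zone_pv_manifest, the PV name, the file system ID, and the zone are all hypothetical placeholders.

```shell
# Hypothetical helper: print a PV manifest for a One Zone EFS file system.
# $1 = EFS file system ID, $2 = Availability Zone of the file system.
# Assumption: nodes are labeled with topology.kubernetes.io/zone.
one_zone_pv_manifest() {
  cat << EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: efs-one-zone-pv
spec:
  capacity:
    storage: 5Gi
  volumeMode: Filesystem
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: efs.csi.aws.com
    volumeHandle: $1
  nodeAffinity:
    required:
      nodeSelectorTerms:
        - matchExpressions:
            - key: topology.kubernetes.io/zone
              operator: In
              values:
                - $2
EOF
}

# Placeholder values for illustration only.
one_zone_pv_manifest fs-0123456789abcdef0 us-east-1a
```

After reviewing the generated manifest, you could pipe the output to oc apply -f - to create the PV.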

5.4.6.2. Setting up One Zone file systems with STS

The following procedure explains how to set up AWS One Zone file systems with Security Token Service (STS):

Prerequisites

- Access to the cluster as a user with the cluster-admin role.

- AWS account credentials

Procedure

To configure One Zone file systems with STS:

Create two CredentialsRequests in the credrequests directory following the procedure under Section Obtaining a role Amazon Resource Name for Security Token Service:

- For the controller CredentialsRequest, follow the procedure without any changes.

- For the driver node CredentialsRequest, use the following example file:

Example CredentialsRequest YAML file for driver node

apiVersion: cloudcredential.openshift.io/v1
kind: CredentialsRequest
metadata:
  annotations:
    credentials.openshift.io/role-arns-vars: NODE_ROLEARN # (1)
  name: openshift-aws-efs-csi-driver-node
  namespace: openshift-cloud-credential-operator
spec:
  providerSpec:
    apiVersion: cloudcredential.openshift.io/v1
    kind: AWSProviderSpec
    statementEntries:
    - action:
      - elasticfilesystem:DescribeMountTargets
      - ec2:DescribeAvailabilityZones
      effect: Allow
      resource: '*'
  secretRef:
    name: node-aws-efs-cloud-credentials
    namespace: openshift-cluster-csi-drivers
  serviceAccountNames:
  - aws-efs-csi-driver-node-sa

(1) Set metadata.annotations.credentials.openshift.io/role-arns-vars to NODE_ROLEARN.

Example ccoctl output

2025/08/26 14:05:24 Role arn:aws:iam::269733383066:role/my-arn-1-blll6-openshift-cluster-csi-drivers-aws-efs-cloud-cre created (1)
2025/08/26 14:05:24 Saved credentials configuration to: /home/my-arn/project/go/src/github.com/openshift/myinst/aws-sts-compact-1/manifests/openshift-cluster-csi-drivers-aws-efs-cloud-credentials-credentials.yaml
2025/08/26 14:05:24 Updated Role policy for Role my-arn-1-blll6-openshift-cluster-csi-drivers-aws-efs-cloud-cre
2025/08/26 14:05:24 Role arn:aws:iam::269733383066:role/my-arn-1-blll6-openshift-cluster-csi-drivers-node-aws-efs-clou created (2)
2025/08/26 14:05:24 Saved credentials configuration to: manifests/openshift-cluster-csi-drivers-node-aws-efs-cloud-credentials-credentials.yaml
2025/08/26 14:05:24 Updated Role policy for Role my-arn-1-blll6-openshift-cluster-csi-drivers-node-aws-efs-clou

(1) Role ARN for the controller.
(2) Role ARN for the driver node.


- Install the AWS EFS CSI driver using the controller ARN created earlier in this procedure.

- Edit the operator’s subscription and add NODE_ROLEARN with the driver node’s ARN by running a command similar to the following:

$ oc -n openshift-cluster-csi-drivers edit subscription aws-efs-csi-driver-operator
...
  config:
    env:
    - name: ROLEARN
      value: arn:aws:iam::269733383066:role/my-arn-1-blll6-openshift-cluster-csi-drivers-aws-efs-cloud-cre # (1)
    - name: NODE_ROLEARN
      value: arn:aws:iam::269733383066:role/my-arn-1-blll6-openshift-cluster-csi-drivers-node-aws-efs-clou # (2)
...

(1) Role ARN for the controller.
(2) Role ARN for the driver node.

5.4.7. Dynamic provisioning for Amazon Elastic File Storage

The AWS EFS CSI driver supports a different form of dynamic provisioning than other CSI drivers. It provisions new PVs as subdirectories of a pre-existing EFS volume. The PVs are independent of each other. However, they all share the same EFS volume. When the volume is deleted, all PVs provisioned out of it are deleted too. The EFS CSI driver creates an AWS Access Point for each such subdirectory. Due to AWS AccessPoint limits, you can only dynamically provision 1000 PVs from a single StorageClass/EFS volume.

Note that PVC.spec.resources is not enforced by EFS.

In the example below, you request 5 GiB of space. However, the created PV is limitless and can store any amount of data (like petabytes). A broken application, or even a rogue application, can cause significant expenses when it stores too much data on the volume.

Using monitoring of EFS volume sizes in AWS is strongly recommended.
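As a rough illustration of such monitoring, the following sketch compares the number of bytes stored on a file system with a size expressed in GiB. The function name efs_exceeds_request and the sample values are hypothetical. Because dynamically provisioned PVs share one EFS volume, the figure that AWS reports covers the whole file system rather than a single PV.

```shell
# Hypothetical helper: succeed (exit 0) when the bytes stored on the file
# system exceed a size given in GiB, for example the size requested in a PVC.
# $1 = used bytes, $2 = requested size in GiB.
efs_exceeds_request() {
  used_bytes=$1
  requested_gib=$2
  limit_bytes=$((requested_gib * 1024 * 1024 * 1024))
  [ "$used_bytes" -gt "$limit_bytes" ]
}

# Sample values only: 6 GiB stored against a 5 GiB request.
if efs_exceeds_request 6442450944 5; then
  echo "EFS usage is above the requested size"
fi
```

In practice, the used-bytes value could come from the AWS CLI, for example from aws efs describe-file-systems with a query on FileSystems[0].SizeInBytes.Value, or from an alarm on the corresponding AWS monitoring metric.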

Prerequisites

- You have created Amazon Elastic File Storage (Amazon EFS) volumes.

- You have created the AWS EFS storage class.

Procedure

To enable dynamic provisioning:

Create a PVC (or StatefulSet or Template) as usual, referring to the StorageClass created previously.

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: test
spec:
  storageClassName: efs-sc
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 5Gi

If you have problems setting up dynamic provisioning, see AWS EFS troubleshooting.

5.4.8. Creating static PVs with Amazon Elastic File Storage

It is possible to use an Amazon Elastic File Storage (Amazon EFS) volume as a single PV without any dynamic provisioning. The whole volume is mounted to pods.

Prerequisites

- You have created Amazon EFS volumes.

Procedure

Create the PV using the following YAML file:

apiVersion: v1
kind: PersistentVolume
metadata:
  name: efs-pv
spec:
  capacity: # (1)
    storage: 5Gi
  volumeMode: Filesystem
  accessModes:
    - ReadWriteMany
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: efs.csi.aws.com
    volumeHandle: fs-ae66151a # (2)
    volumeAttributes:
      encryptInTransit: "false" # (3)

(1) spec.capacity does not have any meaning and is ignored by the CSI driver. It is used only when binding to a PVC. Applications can store any amount of data to the volume.

(2) volumeHandle must be the same ID as the EFS volume you created in AWS. If you are providing your own access point, volumeHandle should be <EFS volume ID>::<access point ID>. For example: fs-6e633ada::fsap-081a1d293f0004630.

(3) If desired, you can disable encryption in transit. Encryption is enabled by default.

If you have problems setting up static PVs, see AWS EFS troubleshooting.
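The two forms of volumeHandle described in this section can be sketched as a small helper. The function name efs_volume_handle is hypothetical; the IDs are the sample values used in this section.

```shell
# Hypothetical helper: build a volumeHandle value for a static EFS PV.
# $1 = EFS volume ID, $2 = optional access point ID.
efs_volume_handle() {
  if [ -n "${2:-}" ]; then
    # With your own access point: <EFS volume ID>::<access point ID>
    echo "$1::$2"
  else
    # Without an access point: the EFS volume ID alone
    echo "$1"
  fi
}

efs_volume_handle fs-6e633ada
efs_volume_handle fs-6e633ada fsap-081a1d293f0004630
```

The first call prints fs-6e633ada and the second prints fs-6e633ada::fsap-081a1d293f0004630.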

5.4.9. Amazon Elastic File Storage security

The following information is important for Amazon Elastic File Storage (Amazon EFS) security.

When using access points, for example, by using dynamic provisioning as described earlier, Amazon automatically replaces GIDs on files with the GID of the access point. In addition, EFS considers the user ID, group ID, and secondary group IDs of the access point when evaluating file system permissions. EFS ignores the NFS client’s IDs. For more information about access points, see https://docs.aws.amazon.com/efs/latest/ug/efs-access-points.html.

As a consequence, EFS volumes silently ignore FSGroup; Red Hat OpenShift Service on AWS is not able to replace the GIDs of files on the volume with FSGroup. Any pod that can access a mounted EFS access point can access any file on it.

Unrelated to this, encryption in transit is enabled by default. For more information, see https://docs.aws.amazon.com/efs/latest/ug/encryption-in-transit.html.

5.4.10. AWS EFS storage CSI usage metrics

5.4.10.1. Usage metrics overview

Amazon Web Services (AWS) Elastic File Service (EFS) storage Container Storage Interface (CSI) usage metrics allow you to monitor how much space is used by either dynamically or statically provisioned EFS volumes.

This feature is disabled by default, because turning on metrics can lead to performance degradation.

The AWS EFS usage metrics feature collects volume metrics in the AWS EFS CSI Driver by recursively walking through the files in the volume. Because this effort can degrade performance, administrators must explicitly enable this feature.

5.4.10.2. Enabling usage metrics using the web console

To enable Amazon Web Services (AWS) Elastic File Service (EFS) Storage Container Storage Interface (CSI) usage metrics using the web console:

- Click Administration > CustomResourceDefinitions.

- On the CustomResourceDefinitions page next to the Name dropdown box, type clustercsidriver.

- Click CRD ClusterCSIDriver.

- Click the YAML tab.

- Under spec.driverConfig.aws.efsVolumeMetrics.state, set the value to RecursiveWalk.

RecursiveWalk indicates that volume metrics collection in the AWS EFS CSI Driver is performed by recursively walking through the files in the volume.

Example ClusterCSIDriver efs.csi.aws.com YAML file

spec:
  driverConfig:
    driverType: AWS
    aws:
      efsVolumeMetrics:
        state: RecursiveWalk
        recursiveWalk:
          refreshPeriodMinutes: 100
          fsRateLimit: 10

- Optional: To define how the recursive walk operates, you can also set the following fields:

  - refreshPeriodMinutes: Specifies the refresh frequency for volume metrics in minutes. If this field is left blank, a reasonable default is chosen, which is subject to change over time. The current default is 240 minutes. The valid range is 1 to 43,200 minutes.

  - fsRateLimit: Defines the rate limit for processing volume metrics in goroutines per file system. If this field is left blank, a reasonable default is chosen, which is subject to change over time. The current default is 5 goroutines. The valid range is 1 to 100 goroutines.

- Click Save.

To disable AWS EFS CSI usage metrics, use the preceding procedure, but for spec.driverConfig.aws.efsVolumeMetrics.state, change the value from RecursiveWalk to Disabled.

5.4.10.3. Enabling usage metrics using the CLI

To enable Amazon Web Services (AWS) Elastic File Service (EFS) storage Container Storage Interface (CSI) usage metrics using the CLI:

Edit ClusterCSIDriver by running the following command:

$ oc edit clustercsidriver efs.csi.aws.com

Under spec.driverConfig.aws.efsVolumeMetrics.state, set the value to RecursiveWalk.

RecursiveWalk indicates that volume metrics collection in the AWS EFS CSI Driver is performed by recursively walking through the files in the volume.

Example ClusterCSIDriver efs.csi.aws.com YAML file

spec:
  driverConfig:
    driverType: AWS
    aws:
      efsVolumeMetrics:
        state: RecursiveWalk
        recursiveWalk:
          refreshPeriodMinutes: 100
          fsRateLimit: 10

Optional: To define how the recursive walk operates, you can also set the following fields:

- refreshPeriodMinutes: Specifies the refresh frequency for volume metrics in minutes. If this field is left blank, a reasonable default is chosen, which is subject to change over time. The current default is 240 minutes. The valid range is 1 to 43,200 minutes.

- fsRateLimit: Defines the rate limit for processing volume metrics in goroutines per file system. If this field is left blank, a reasonable default is chosen, which is subject to change over time. The current default is 5 goroutines. The valid range is 1 to 100 goroutines.

Save the changes to the efs.csi.aws.com object.

To disable AWS EFS CSI usage metrics, use the preceding procedure, but for spec.driverConfig.aws.efsVolumeMetrics.state, change the value from RecursiveWalk to Disabled.
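If you prefer a non-interactive change over oc edit, the same fields can be expressed as a JSON merge patch. The following is a minimal sketch under stated assumptions: the function name efs_metrics_patch is hypothetical, the field layout mirrors the example ClusterCSIDriver YAML in this section, and the range checks use the valid ranges listed for refreshPeriodMinutes and fsRateLimit.

```shell
# Hypothetical helper: print a JSON merge patch that enables RecursiveWalk.
# $1 = refreshPeriodMinutes (1 to 43200), $2 = fsRateLimit (1 to 100).
efs_metrics_patch() {
  refresh=$1
  rate=$2
  if [ "$refresh" -lt 1 ] || [ "$refresh" -gt 43200 ]; then
    echo "refreshPeriodMinutes must be between 1 and 43200" >&2
    return 1
  fi
  if [ "$rate" -lt 1 ] || [ "$rate" -gt 100 ]; then
    echo "fsRateLimit must be between 1 and 100" >&2
    return 1
  fi
  printf '{"spec":{"driverConfig":{"driverType":"AWS","aws":{"efsVolumeMetrics":{"state":"RecursiveWalk","recursiveWalk":{"refreshPeriodMinutes":%d,"fsRateLimit":%d}}}}}}\n' "$refresh" "$rate"
}

# Print the patch for the values used in the example YAML file.
efs_metrics_patch 100 10
```

The printed patch could then be passed to oc patch clustercsidriver efs.csi.aws.com --type=merge with the -p option. Review the patch before applying it, because it has not been validated against your cluster version.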

5.4.11. Amazon Elastic File Storage troubleshooting

The following information provides guidance on how to troubleshoot issues with Amazon Elastic File Storage (Amazon EFS):

- The AWS EFS Operator and CSI driver run in namespace openshift-cluster-csi-drivers.

- To initiate gathering of logs of the AWS EFS Operator and CSI driver, run the following command:

$ oc adm must-gather
[must-gather ] OUT Using must-gather plugin-in image: quay.io/openshift-release-dev/ocp-v4.0-art-dev@sha256:125f183d13601537ff15b3239df95d47f0a604da2847b561151fedd699f5e3a5
[must-gather ] OUT namespace/openshift-must-gather-xm4wq created
[must-gather ] OUT clusterrolebinding.rbac.authorization.k8s.io/must-gather-2bd8x created
[must-gather ] OUT pod for plug-in image quay.io/openshift-release-dev/ocp-v4.0-art-dev@sha256:125f183d13601537ff15b3239df95d47f0a604da2847b561151fedd699f5e3a5 created

- To show AWS EFS Operator errors, view the ClusterCSIDriver status:

$ oc get clustercsidriver efs.csi.aws.com -o yaml

- If a volume cannot be mounted to a pod (as shown in the output of the following command):

$ oc describe pod
...
  Type     Reason       Age    From               Message
  ----     ------       ----   ----               -------
  Normal   Scheduled    2m13s  default-scheduler  Successfully assigned default/efs-app to ip-10-0-135-94.ec2.internal
  Warning  FailedMount  13s    kubelet            MountVolume.SetUp failed for volume "pvc-d7c097e6-67ec-4fae-b968-7e7056796449" : rpc error: code = DeadlineExceeded desc = context deadline exceeded (1)
  Warning  FailedMount  10s    kubelet            Unable to attach or mount volumes: unmounted volumes=[persistent-storage], unattached volumes=[persistent-storage kube-api-access-9j477]: timed out waiting for the condition

(1) Warning message indicating volume not mounted.

This error is frequently caused by AWS dropping packets between a Red Hat OpenShift Service on AWS node and Amazon EFS.

Check that the following are correct:

- AWS firewall and Security Groups

- Networking: port number and IP addresses
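To spot this failure pattern quickly across several pods, you can scan the oc describe pod output for the event shown above. A minimal sketch, in which the function name has_efs_mount_timeout is hypothetical and the sample event line is taken from the example output in this section:

```shell
# Hypothetical helper: reads `oc describe pod` output on stdin and succeeds
# when a FailedMount event reports DeadlineExceeded.
has_efs_mount_timeout() {
  grep 'FailedMount' | grep -q 'DeadlineExceeded'
}

# Sample event line copied from the example output in this section.
sample='Warning FailedMount 13s kubelet MountVolume.SetUp failed for volume "pvc-d7c097e6-67ec-4fae-b968-7e7056796449" : rpc error: code = DeadlineExceeded desc = context deadline exceeded'

if printf '%s\n' "$sample" | has_efs_mount_timeout; then
  echo "Mount timed out: check security groups and NFS port 2049"
fi
```

A match only narrows the problem down to a mount timeout. The security group and networking checks listed above are still needed to find the cause.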

5.4.12. Uninstalling the AWS EFS CSI Driver Operator

All EFS PVs are inaccessible after uninstalling the AWS EFS CSI Driver Operator (a Red Hat operator).

Prerequisites

- Access to the Red Hat OpenShift Service on AWS web console.

Procedure

To uninstall the AWS EFS CSI Driver Operator from the web console:

- Log in to the web console.

- Stop all applications that use AWS EFS PVs.

Delete all AWS EFS PVs:

- Click Storage > PersistentVolumeClaims.

- Select each PVC that is in use by the AWS EFS CSI Driver Operator, click the drop-down menu on the far right of the PVC, and then click Delete PersistentVolumeClaims.

Uninstall the AWS EFS CSI driver:

Note: Before you can uninstall the Operator, you must remove the CSI driver first.

- Click Administration > CustomResourceDefinitions > ClusterCSIDriver.

- On the Instances tab, for efs.csi.aws.com, on the far left side, click the drop-down menu, and then click Delete ClusterCSIDriver.

- When prompted, click Delete.

Uninstall the AWS EFS CSI Operator:

- Click Ecosystem > Installed Operators.

- On the Installed Operators page, scroll or type AWS EFS CSI into the Search by name box to find the Operator, and then click it.

- In the upper right of the Installed Operators > Operator details page, click Actions > Uninstall Operator.

- When prompted on the Uninstall Operator window, click the Uninstall button to remove the Operator from the namespace. Any applications deployed by the Operator on the cluster need to be cleaned up manually.

After uninstalling, the AWS EFS CSI Driver Operator is no longer listed in the Installed Operators section of the web console.

Before you can destroy a cluster (openshift-install destroy cluster), you must delete the EFS volume in AWS. A Red Hat OpenShift Service on AWS cluster cannot be destroyed when there is an EFS volume that uses the cluster’s VPC. Amazon does not allow deletion of such a VPC.
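Deleting an EFS volume with the AWS CLI generally means removing its mount targets first and then the file system itself. The following sketch only prints the commands so that you can review them before running anything. The function name efs_cleanup_plan and all IDs are hypothetical placeholders.

```shell
# Hypothetical helper: print the AWS CLI commands that remove an EFS file
# system. $1 = file system ID; remaining arguments = its mount target IDs.
# Nothing is executed; the output is a plan to review.
efs_cleanup_plan() {
  fs_id=$1
  shift
  for mt_id in "$@"; do
    echo "aws efs delete-mount-target --mount-target-id $mt_id"
  done
  echo "aws efs delete-file-system --file-system-id $fs_id"
}

# Placeholder IDs for illustration only.
efs_cleanup_plan fs-0123456789abcdef0 fsmt-0aaaaaaaaaaaaaaa1 fsmt-0bbbbbbbbbbbbbbb2
```

The mount target IDs for a file system can be listed with aws efs describe-mount-targets. Make sure that no application still needs the data before you run the printed commands, because deleting the file system is permanent.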