Chapter 29. Load balancing on RHOSP

29.1. Using the Octavia OVN load balancer provider driver with Kuryr SDN

If your OpenShift Container Platform cluster uses Kuryr and was installed on a Red Hat OpenStack Platform (RHOSP) 13 cloud that was later upgraded to RHOSP 16, you can configure it to use the Octavia OVN provider driver.

Kuryr replaces existing load balancers after you change provider drivers. This process results in some downtime.

Prerequisites

- Install the RHOSP CLI, openstack.

- Install the OpenShift Container Platform CLI, oc.

- Verify that the Octavia OVN driver on RHOSP is enabled.

Tip: To view a list of available Octavia drivers, on a command line, enter openstack loadbalancer provider list. The ovn driver is displayed in the command's output.
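The check in the tip can be scripted. The following is a minimal sketch that assumes openstack loadbalancer provider list accepts the standard -f value -c name output options and therefore prints one provider name per line. The function name has_provider is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: succeed if a given Octavia provider appears in the provider list.
# Assumption: stdin is the output of
#   openstack loadbalancer provider list -f value -c name
# which prints one provider name per line.

has_provider() {
  # -x: match whole lines only; -F: treat the name literally; -q: print nothing.
  grep -qxF -- "$1"
}

# Usage (requires the openstack CLI and cloud credentials):
#   if openstack loadbalancer provider list -f value -c name | has_provider ovn; then
#     echo "ovn provider is enabled"
#   else
#     echo "ovn provider not found" >&2
#   fi
```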

Procedure

To change from the Octavia Amphora provider driver to Octavia OVN:

Open the kuryr-config ConfigMap. On a command line, enter:

$ oc -n openshift-kuryr edit cm kuryr-config

In the ConfigMap, delete the line that contains kuryr-octavia-provider: default. For example:

...
kind: ConfigMap
metadata:
  annotations:
    networkoperator.openshift.io/kuryr-octavia-provider: default
...

Delete the networkoperator.openshift.io/kuryr-octavia-provider: default line. The cluster regenerates it with ovn as the value.
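Instead of re-checking the ConfigMap by hand while the Cluster Network Operator works, you can poll the annotation. This is a sketch under stated assumptions: the annotation key is the one shown in the ConfigMap above, oc jsonpath accepts backslash-escaped dots in keys, and the attempt count and delay are illustrative. The names get_kuryr_provider and wait_for_output are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: poll until a command prints an expected value, or give up.

# Prints the provider annotation of the kuryr-config ConfigMap
# (requires oc and access to the cluster).
get_kuryr_provider() {
  oc -n openshift-kuryr get cm kuryr-config \
    -o jsonpath='{.metadata.annotations.networkoperator\.openshift\.io/kuryr-octavia-provider}'
}

# wait_for_output EXPECTED ATTEMPTS DELAY CMD [ARGS...]
# Runs CMD up to ATTEMPTS times, DELAY seconds apart, and returns 0 as soon
# as CMD prints exactly EXPECTED. Returns 1 if it never does.
wait_for_output() {
  local expected=$1 attempts=$2 delay=$3 i out
  shift 3
  for ((i = 1; i <= attempts; i++)); do
    out=$("$@" 2>/dev/null) || out=""
    if [[ "$out" == "$expected" ]]; then
      return 0
    fi
    if ((i < attempts)); then
      sleep "$delay"
    fi
  done
  return 1
}

# Usage (hypothetical values: 20 attempts, 30 seconds apart):
#   wait_for_output ovn 20 30 get_kuryr_provider && echo "kuryr-config now uses ovn"
```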

Wait for the Cluster Network Operator to detect the modification and to redeploy the kuryr-controller and kuryr-cni pods. This process might take several minutes.

Verify that the kuryr-config ConfigMap annotation is present with ovn as its value. On a command line, enter:

$ oc -n openshift-kuryr get cm kuryr-config -o yaml

The ovn provider value is displayed in the output:

...
kind: ConfigMap
metadata:
  annotations:
    networkoperator.openshift.io/kuryr-octavia-provider: ovn
...

Verify that RHOSP recreated its load balancers.

On a command line, enter:

$ openstack loadbalancer list | grep amphora

A single Amphora load balancer is displayed. For example:

a4db683b-2b7b-4988-a582-c39daaad7981 | ostest-7mbj6-kuryr-api-loadbalancer | 84c99c906edd475ba19478a9a6690efd | 172.30.0.1 | ACTIVE | amphora

Search for ovn load balancers by entering:

$ openstack loadbalancer list | grep ovn

The remaining load balancers of the ovn type are displayed. For example:

2dffe783-98ae-4048-98d0-32aa684664cc | openshift-apiserver-operator/metrics | 84c99c906edd475ba19478a9a6690efd | 172.30.167.119 | ACTIVE | ovn
0b1b2193-251f-4243-af39-2f99b29d18c5 | openshift-etcd/etcd | 84c99c906edd475ba19478a9a6690efd | 172.30.143.226 | ACTIVE | ovn
f05b07fc-01b7-4673-bd4d-adaa4391458e | openshift-dns-operator/metrics | 84c99c906edd475ba19478a9a6690efd | 172.30.152.27 | ACTIVE | ovn
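The two grep checks can be combined into one summary. The following sketch assumes that openstack loadbalancer list accepts -f value -c provider and prints one provider name per line. The expectation of exactly one remaining amphora load balancer comes from the step above. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: summarize load balancers by provider after the driver switch.
# Assumption: stdin is the output of
#   openstack loadbalancer list -f value -c provider

# Prints "<provider> <count>" lines, sorted by provider name.
summarize_providers() {
  sort | uniq -c | awk '{ print $2, $1 }'
}

# Prints the summary and succeeds only if exactly one amphora load balancer remains.
check_after_switch() {
  local summary amphora
  summary=$(summarize_providers)
  printf '%s\n' "$summary"
  amphora=$(awk '$1 == "amphora" { print $2 }' <<<"$summary")
  [[ "${amphora:-0}" -eq 1 ]]
}

# Usage:
#   openstack loadbalancer list -f value -c provider | check_after_switch
```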

29.2. Scaling clusters for application traffic by using Octavia

OpenShift Container Platform clusters that run on Red Hat OpenStack Platform (RHOSP) can use the Octavia load balancing service to distribute traffic across multiple virtual machines (VMs) or floating IP addresses. This feature mitigates the bottleneck that single machines or addresses create.

If your cluster uses Kuryr, the Cluster Network Operator created an internal Octavia load balancer at deployment. You can use this load balancer for application network scaling.

If your cluster does not use Kuryr, you must create your own Octavia load balancer to use it for application network scaling.

29.2.1. Scaling clusters by using Octavia

If you want to use multiple API load balancers, or if your cluster does not use Kuryr, create an Octavia load balancer and then configure your cluster to use it.

Prerequisites

- Octavia is available on your Red Hat OpenStack Platform (RHOSP) deployment.

Procedure

From a command line, create an Octavia load balancer that uses the Amphora driver:

$ openstack loadbalancer create --name API_OCP_CLUSTER --vip-subnet-id <id_of_worker_vms_subnet>

You can use a name of your choice instead of API_OCP_CLUSTER.

After the load balancer becomes active, create listeners:

$ openstack loadbalancer listener create --name API_OCP_CLUSTER_6443 --protocol HTTPS --protocol-port 6443 API_OCP_CLUSTER

Note: To view the status of the load balancer, enter openstack loadbalancer list.

Create a pool that uses the round robin algorithm and has source IP session persistence enabled:

$ openstack loadbalancer pool create --name API_OCP_CLUSTER_pool_6443 --lb-algorithm ROUND_ROBIN --session-persistence type=SOURCE_IP --listener API_OCP_CLUSTER_6443 --protocol HTTPS

To ensure that control plane machines are available, create a health monitor:

$ openstack loadbalancer healthmonitor create --delay 5 --max-retries 4 --timeout 10 --type TCP API_OCP_CLUSTER_pool_6443

Add the control plane machines as members of the load balancer pool, where MASTER-0-IP, MASTER-1-IP, and MASTER-2-IP are the IP addresses of the control plane machines:

$ for SERVER in MASTER-0-IP MASTER-1-IP MASTER-2-IP
do
  openstack loadbalancer member create --address $SERVER --protocol-port 6443 API_OCP_CLUSTER_pool_6443
done

Optional: To reuse the cluster API floating IP address, unset it:

$ openstack floating ip unset --port $API_FIP

Add either the unset API_FIP or a new address to the created load balancer VIP:

$ openstack floating ip set --port $(openstack loadbalancer show -c vip_port_id -f value API_OCP_CLUSTER) $API_FIP
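The load balancer steps above can be collected into one script. This is a sketch, not a supported tool: the DRY_RUN switch, the function names, and the polling interval are hypothetical additions. It waits for the load balancer to return to ACTIVE after every create call, because Octavia rejects changes while a load balancer is in a PENDING state, and it uses SOURCE_IP, which is the Octavia session persistence type for source IP affinity.

```shell
#!/usr/bin/env bash
# Sketch of the Octavia API load balancer steps.
# With DRY_RUN=1 (the default) commands are printed instead of executed.

run() {
  if [[ "${DRY_RUN:-1}" == 1 ]]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Polls every 10 seconds, for up to 10 minutes, until the load balancer is ACTIVE.
wait_for_active() {
  [[ "${DRY_RUN:-1}" == 1 ]] && return 0
  local lb=$1 status
  for _ in $(seq 60); do
    status=$(openstack loadbalancer show -c provisioning_status -f value "$lb")
    [[ "$status" == ACTIVE ]] && return 0
    sleep 10
  done
  echo "load balancer $lb did not become ACTIVE" >&2
  return 1
}

# create_api_lb LB_NAME SUBNET_ID CONTROL_PLANE_IP...
create_api_lb() {
  local lb=$1 subnet=$2 ip
  shift 2
  local listener="${lb}_6443" pool="${lb}_pool_6443"

  run openstack loadbalancer create --name "$lb" --vip-subnet-id "$subnet"
  wait_for_active "$lb" || return 1
  run openstack loadbalancer listener create --name "$listener" \
    --protocol HTTPS --protocol-port 6443 "$lb"
  wait_for_active "$lb" || return 1
  run openstack loadbalancer pool create --name "$pool" --lb-algorithm ROUND_ROBIN \
    --session-persistence type=SOURCE_IP --listener "$listener" --protocol HTTPS
  wait_for_active "$lb" || return 1
  run openstack loadbalancer healthmonitor create --delay 5 --max-retries 4 \
    --timeout 10 --type TCP "$pool"
  wait_for_active "$lb" || return 1
  for ip in "$@"; do
    run openstack loadbalancer member create --address "$ip" --protocol-port 6443 "$pool"
    wait_for_active "$lb" || return 1
  done
}

# Usage: review the printed commands first, then run them for real.
#   create_api_lb API_OCP_CLUSTER <id_of_worker_vms_subnet> MASTER-0-IP MASTER-1-IP MASTER-2-IP
#   DRY_RUN=0 create_api_lb API_OCP_CLUSTER <id_of_worker_vms_subnet> MASTER-0-IP MASTER-1-IP MASTER-2-IP
```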

Your cluster now uses Octavia for load balancing.

If Kuryr uses the Octavia Amphora driver, all traffic is routed through a single Amphora virtual machine (VM).

You can repeat this procedure to create additional load balancers, which can alleviate the bottleneck.

29.2.2. Scaling clusters that use Kuryr by using Octavia

If your cluster uses Kuryr, associate the API floating IP address of your cluster with the pre-existing Octavia load balancer.

Prerequisites

- Your OpenShift Container Platform cluster uses Kuryr.

- Octavia is available on your Red Hat OpenStack Platform (RHOSP) deployment.

Procedure

Optional: From a command line, to reuse the cluster API floating IP address, unset it:

$ openstack floating ip unset --port $API_FIP

Add either the unset API_FIP or a new address to the VIP of the Kuryr API load balancer:

$ openstack floating ip set --port $(openstack loadbalancer show -c vip_port_id -f value ${OCP_CLUSTER}-kuryr-api-loadbalancer) $API_FIP
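The floating IP move can be wrapped in a small function. This sketch assumes that openstack floating ip unset --port detaches the address from its current port and that vip_port_id is the column name reported by openstack loadbalancer show. The DRY_RUN switch and the function names are hypothetical; in dry-run mode the VIP port is shown as a placeholder because no cloud is queried.

```shell
#!/usr/bin/env bash
# Sketch: move a floating IP onto an Octavia load balancer's VIP port.
# With DRY_RUN=1 (the default) commands are printed instead of executed.

run() {
  if [[ "${DRY_RUN:-1}" == 1 ]]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Prints the VIP port ID of a load balancer, or a placeholder in dry-run mode.
vip_port_of() {
  if [[ "${DRY_RUN:-1}" == 1 ]]; then
    printf '<vip_port_id of %s>\n' "$1"
  else
    openstack loadbalancer show -c vip_port_id -f value "$1"
  fi
}

# attach_fip_to_lb FLOATING_IP LB_NAME
attach_fip_to_lb() {
  local fip=$1 lb=$2 port
  port=$(vip_port_of "$lb") || return 1
  [[ -n "$port" ]] || { echo "no VIP port found for $lb" >&2; return 1; }
  # Detach the address from its current port (needed when reusing API_FIP).
  run openstack floating ip unset --port "$fip"
  run openstack floating ip set --port "$port" "$fip"
}

# Usage (Kuryr cluster):
#   DRY_RUN=0 attach_fip_to_lb "$API_FIP" "${OCP_CLUSTER}-kuryr-api-loadbalancer"
```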

Your cluster now uses Octavia for load balancing.

If Kuryr uses the Octavia Amphora driver, all traffic is routed through a single Amphora virtual machine (VM).

You can repeat this procedure to create additional load balancers, which can alleviate the bottleneck.

29.3. Scaling for ingress traffic by using RHOSP Octavia

You can use Octavia load balancers to scale Ingress controllers on clusters that use Kuryr.

Prerequisites

- Your OpenShift Container Platform cluster uses Kuryr.

- Octavia is available on your RHOSP deployment.

Procedure

To copy the current internal router service, on a command line, enter:

$ oc -n openshift-ingress get svc router-internal-default -o yaml > external_router.yaml

In the file external_router.yaml, change the value of metadata.name to a descriptive name, such as router-external-default, and change the value of spec.type to LoadBalancer.

Example router file

apiVersion: v1
kind: Service
metadata:
  labels:
    ingresscontroller.operator.openshift.io/owning-ingresscontroller: default
  name: router-external-default
  namespace: openshift-ingress
spec:
  ports:
  - name: http
    port: 80
    protocol: TCP
    targetPort: http
  - name: https
    port: 443
    protocol: TCP
    targetPort: https
  - name: metrics
    port: 1936
    protocol: TCP
    targetPort: 1936
  selector:
    ingresscontroller.operator.openshift.io/deployment-ingresscontroller: default
  sessionAffinity: None
  type: LoadBalancer

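You can delete timestamps and other information that is irrelevant to load balancing. Also remove the spec.clusterIP value copied from router-internal-default, as in the example file, because a new service cannot reuse a cluster IP address that is already allocated.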
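As an alternative to copying and trimming the internal service, you can write the manifest directly. This sketch prints the example router file shown above, with the service name as an optional parameter; the function name render_external_router is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: print the external router Service manifest from the example above.
# The only parameter is the service name (default: router-external-default).

render_external_router() {
  local name=${1:-router-external-default}
  cat <<EOF
apiVersion: v1
kind: Service
metadata:
  labels:
    ingresscontroller.operator.openshift.io/owning-ingresscontroller: default
  name: ${name}
  namespace: openshift-ingress
spec:
  ports:
  - name: http
    port: 80
    protocol: TCP
    targetPort: http
  - name: https
    port: 443
    protocol: TCP
    targetPort: https
  - name: metrics
    port: 1936
    protocol: TCP
    targetPort: 1936
  selector:
    ingresscontroller.operator.openshift.io/deployment-ingresscontroller: default
  sessionAffinity: None
  type: LoadBalancer
EOF
}

# Usage:
#   render_external_router > external_router.yaml
#   oc apply -f external_router.yaml
```

Because the manifest never contains a clusterIP field, there is nothing to strip before applying it.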

From a command line, create a service from the external_router.yaml file:

$ oc apply -f external_router.yaml

Verify that the external IP address of the service is the same as the one that is associated with the load balancer:

On a command line, retrieve the external IP address of the service:

$ oc -n openshift-ingress get svc

Example output

NAME                      TYPE           CLUSTER-IP       EXTERNAL-IP    PORT(S)                                     AGE
router-external-default   LoadBalancer   172.30.235.33    10.46.22.161   80:30112/TCP,443:32359/TCP,1936:30317/TCP   3m38s
router-internal-default   ClusterIP      172.30.115.123   <none>         80/TCP,443/TCP,1936/TCP                     22h

Retrieve the IP address of the load balancer:

$ openstack loadbalancer list | grep router-external

Example output

| 21bf6afe-b498-4a16-a958-3229e83c002c | openshift-ingress/router-external-default | 66f3816acf1b431691b8d132cc9d793c | 172.30.235.33 | ACTIVE | octavia |

Verify that the addresses you retrieved in the previous steps are associated with each other in the floating IP list:

$ openstack floating ip list | grep 172.30.235.33

Example output

| e2f80e97-8266-4b69-8636-e58bacf1879e | 10.46.22.161 | 172.30.235.33 | 655e7122-806a-4e0a-a104-220c6e17bda6 | a565e55a-99e7-4d15-b4df-f9d7ee8c9deb | 66f3816acf1b431691b8d132cc9d793c |
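The manual comparison can be scripted. This sketch assumes, as in the example output, that the load balancer VIP equals the service's CLUSTER-IP and that openstack floating ip list prints a pipe-delimited table whose second and third data columns are the floating and fixed IP addresses. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: confirm that router-external-default's EXTERNAL-IP is the floating IP
# attached to its load balancer VIP.

# fip_matches FLOATING_IP FIXED_IP: reads the "openstack floating ip list" table
# on stdin and succeeds if one row maps FLOATING_IP to FIXED_IP.
fip_matches() {
  awk -F'|' -v fip="$1" -v fixed="$2" '
    {
      f = $3; x = $4
      gsub(/[ \t]+/, "", f)
      gsub(/[ \t]+/, "", x)
      if (f == fip && x == fixed) found = 1
    }
    END { exit (found ? 0 : 1) }'
}

# Live check (requires oc and openstack with access to the cluster and the cloud).
check_router_fip() {
  local ns=openshift-ingress svc=router-external-default ext cip
  ext=$(oc -n "$ns" get svc "$svc" -o jsonpath='{.status.loadBalancer.ingress[0].ip}')
  cip=$(oc -n "$ns" get svc "$svc" -o jsonpath='{.spec.clusterIP}')
  openstack floating ip list | fip_matches "$ext" "$cip"
}

# Usage:
#   check_router_fip && echo "EXTERNAL-IP is attached to the load balancer VIP"
```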

You can now use the value of EXTERNAL-IP as the new Ingress address.

If Kuryr uses the Octavia Amphora driver, all traffic is routed through a single Amphora virtual machine (VM).

You can repeat this procedure to create additional load balancers, which can alleviate the bottleneck.

29.4. Configuring an external load balancer

You can configure an OpenShift Container Platform cluster on Red Hat OpenStack Platform (RHOSP) to use an external load balancer in place of the default load balancer.

Configuring an external load balancer depends on your vendor’s load balancer.

The information and examples in this section are for guideline purposes only. Consult the vendor documentation for more specific information about the vendor’s load balancer.

Red Hat supports the following services for an external load balancer:

- Ingress Controller

- OpenShift API

- OpenShift MachineConfig API

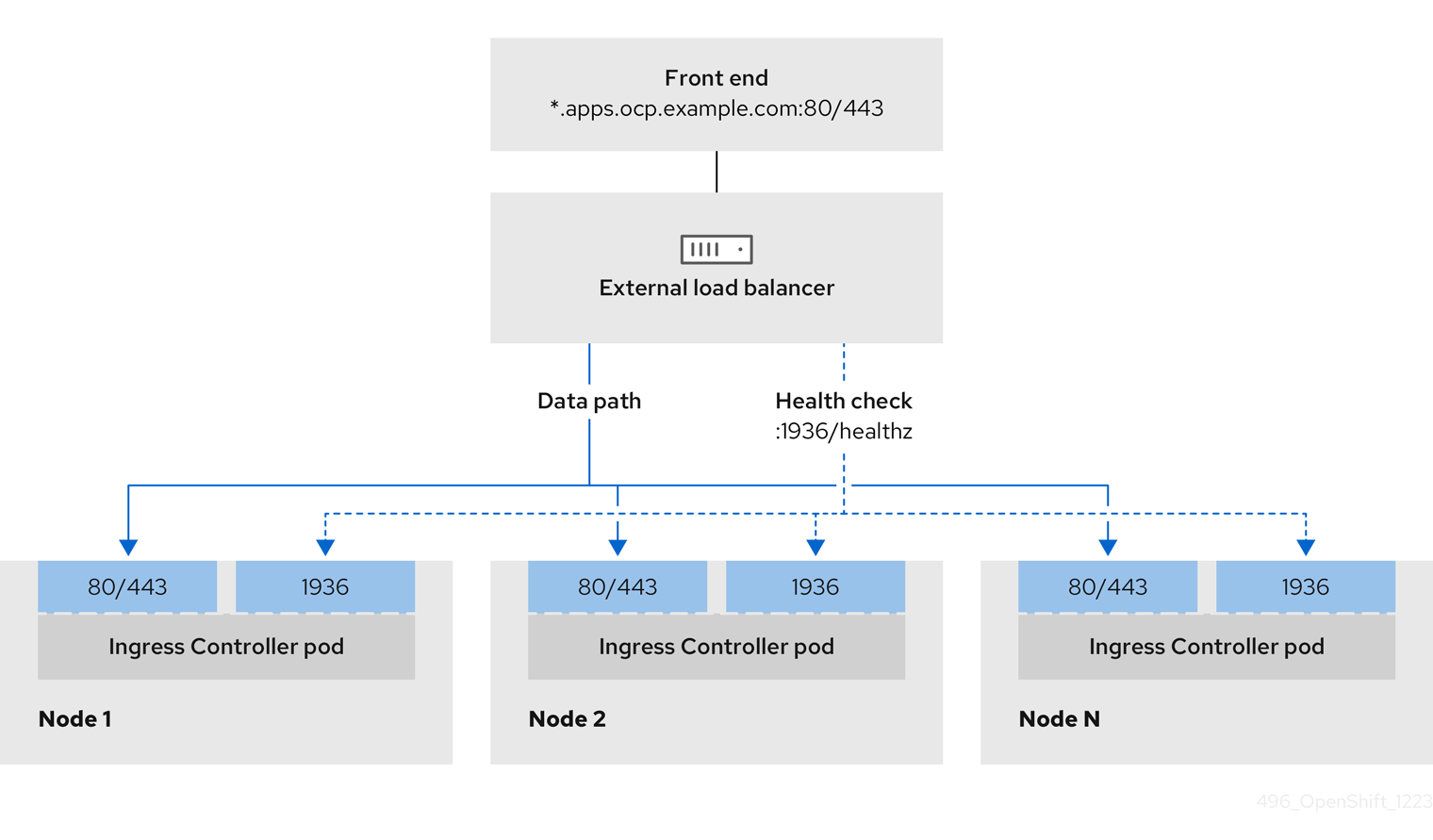

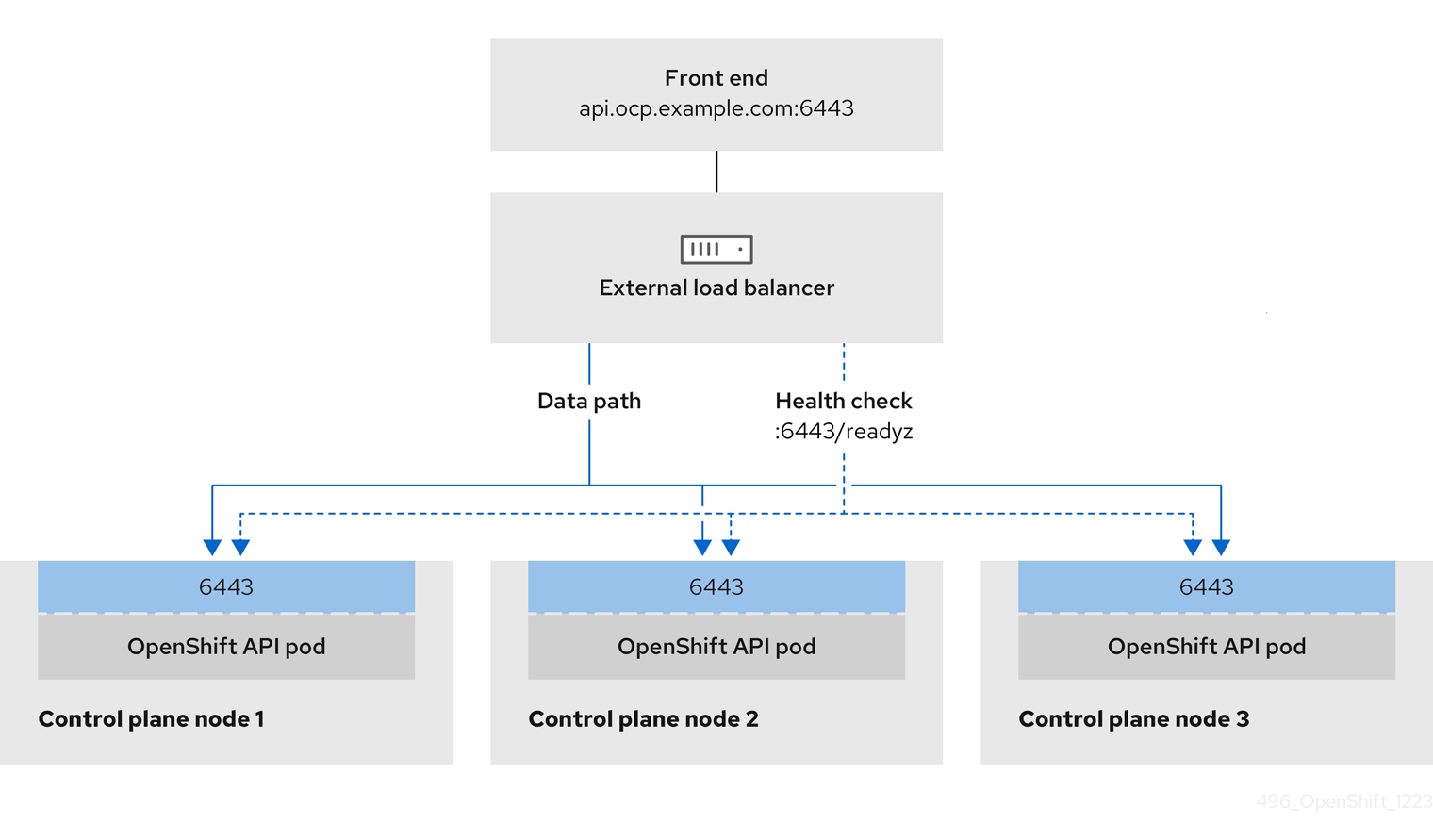

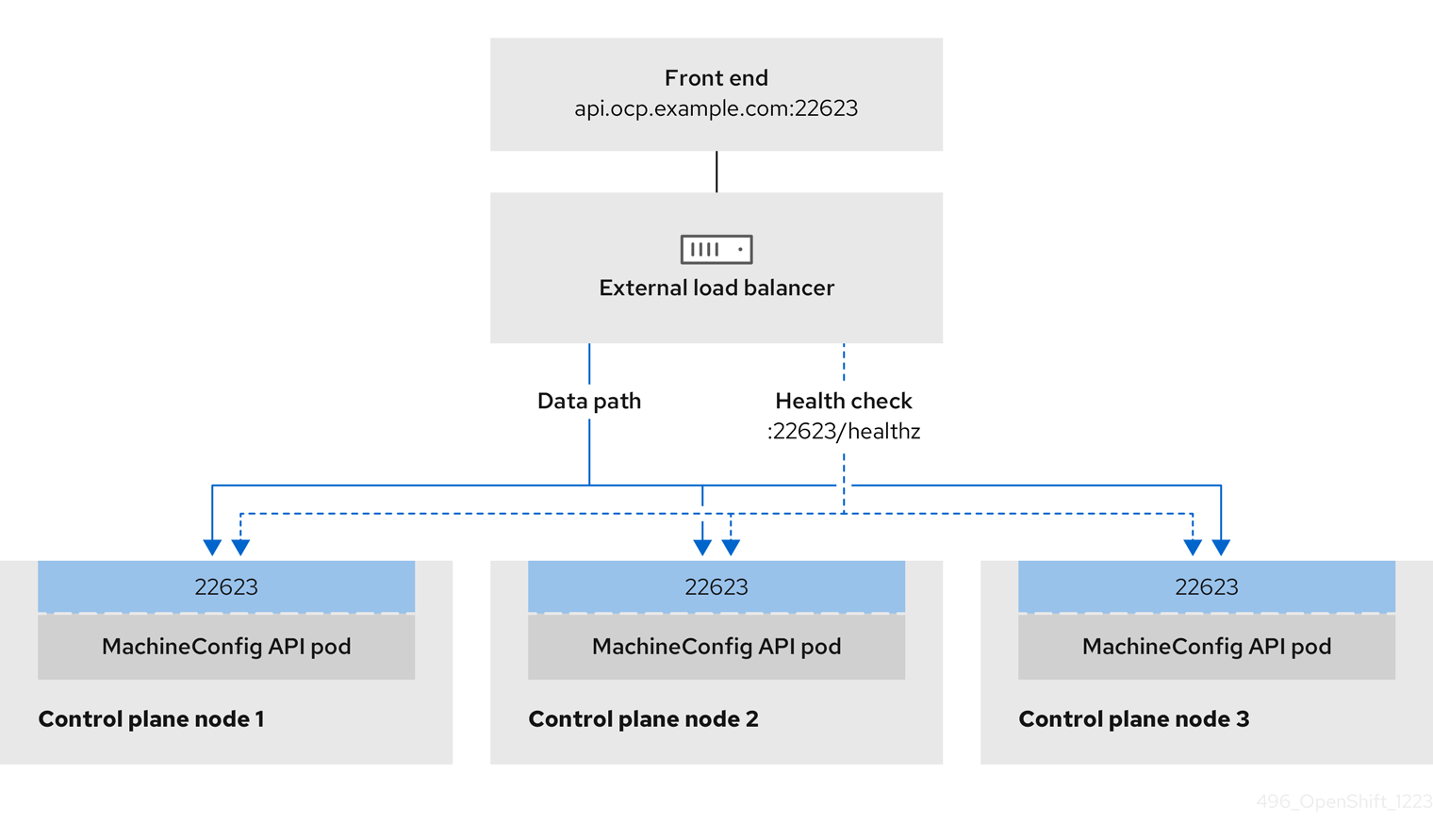

You can choose whether you want to configure one or all of these services for an external load balancer. Configuring only the Ingress Controller service is a common configuration option. To better understand each service, view the following diagrams:

Figure 29.1. Example network workflow that shows an Ingress Controller operating in an OpenShift Container Platform environment

Figure 29.2. Example network workflow that shows an OpenShift API operating in an OpenShift Container Platform environment

Figure 29.3. Example network workflow that shows an OpenShift MachineConfig API operating in an OpenShift Container Platform environment

Considerations

- Front-end IP address: you can use the same front-end IP address for both the Ingress Controller's load balancer and the API load balancer. Check the vendor's documentation for this capability.

- Back-end IP address: ensure that the IP address of each OpenShift Container Platform control plane node does not change during the lifetime of the external load balancer. You can achieve this by completing one of the following actions:

  - Assign a static IP address to each control plane node.

  - Configure each node to receive the same IP address from the DHCP server every time the node requests a DHCP lease. Depending on the vendor, the DHCP lease might be in the form of an IP reservation or a static DHCP assignment.

- Manually define each node that runs the Ingress Controller in the external load balancer for the Ingress Controller back-end service. If the Ingress Controller moves to a node that is not defined there, a connection outage can occur.

OpenShift API prerequisites

- You defined a front-end IP address.

- TCP ports 6443 and 22623 are exposed on the front-end IP address of your load balancer. Check the following items:

  - Port 6443 provides access to the OpenShift API service.

  - Port 22623 provides Ignition startup configurations to nodes.

- The front-end IP address and port 6443 are reachable by all users of your system who are located outside your OpenShift Container Platform cluster.

- The front-end IP address and port 22623 are reachable only by OpenShift Container Platform nodes.

- The load balancer back end can communicate with OpenShift Container Platform control plane nodes on ports 6443 and 22623.

Ingress Controller prerequisites

- You defined a front-end IP address.

- TCP ports 443 and 80 are exposed on the front-end IP address of your load balancer.

- The front-end IP address, port 80, and port 443 are reachable by all users of your system who are located outside your OpenShift Container Platform cluster.

- The front-end IP address, port 80, and port 443 are reachable by all nodes that operate in your OpenShift Container Platform cluster.

- The load balancer backend can communicate with OpenShift Container Platform nodes that run the Ingress Controller on ports 80, 443, and 1936.
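To spot-check the port prerequisites from a given machine, you can probe the front-end IP address. This sketch uses bash's /dev/tcp redirection and the coreutils timeout command; the 3-second timeout and the function name check_ports are hypothetical choices. An "unreachable" result can mean closed, filtered, or timed out.

```shell
#!/usr/bin/env bash
# Sketch: report whether TCP ports answer on a host.

# check_ports HOST PORT...: prints "HOST:PORT open" or "HOST:PORT unreachable".
check_ports() {
  local host=$1 port
  shift
  for port in "$@"; do
    # The inner bash opens a TCP connection through /dev/tcp; timeout caps the wait.
    if timeout 3 bash -c ': </dev/tcp/"$1"/"$2"' _ "$host" "$port" 2>/dev/null; then
      printf '%s:%s open\n' "$host" "$port"
    else
      printf '%s:%s unreachable\n' "$host" "$port"
    fi
  done
}

# From a machine outside the cluster, 6443, 80, and 443 should be open, while
# 22623 should be reachable only from cluster nodes:
#   check_ports <load_balancer_front_end_IP_address> 6443 22623 80 443
```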

Prerequisite for health check URL specifications

You can configure most load balancers by setting health check URLs that determine if a service is available or unavailable. OpenShift Container Platform provides these health checks for the OpenShift API, Machine Configuration API, and Ingress Controller backend services.

The following examples demonstrate health check specifications for the previously listed backend services:

Example of a Kubernetes API health check specification

Path: HTTPS:6443/readyz
Healthy threshold: 2
Unhealthy threshold: 2
Timeout: 10
Interval: 10

Example of a Machine Config API health check specification

Path: HTTPS:22623/healthz
Healthy threshold: 2
Unhealthy threshold: 2
Timeout: 10
Interval: 10

Example of an Ingress Controller health check specification

Path: HTTP:1936/healthz/ready
Healthy threshold: 2
Unhealthy threshold: 2
Timeout: 5
Interval: 10

Procedure

Configure your external load balancer, such as HAProxy, to enable access to the cluster on ports 6443, 22623, 443, and 80:

Example HAProxy configuration

#...
listen my-cluster-api-6443
  bind 192.168.1.100:6443
  mode tcp
  balance roundrobin
  option httpchk
  http-check connect
  http-check send meth GET uri /readyz
  http-check expect status 200
  server my-cluster-master-2 192.168.1.101:6443 check inter 10s rise 2 fall 2
  server my-cluster-master-0 192.168.1.102:6443 check inter 10s rise 2 fall 2
  server my-cluster-master-1 192.168.1.103:6443 check inter 10s rise 2 fall 2

listen my-cluster-machine-config-api-22623
  bind 192.168.1.100:22623
  mode tcp
  balance roundrobin
  option httpchk
  http-check connect
  http-check send meth GET uri /healthz
  http-check expect status 200
  server my-cluster-master-2 192.168.1.101:22623 check inter 10s rise 2 fall 2
  server my-cluster-master-0 192.168.1.102:22623 check inter 10s rise 2 fall 2
  server my-cluster-master-1 192.168.1.103:22623 check inter 10s rise 2 fall 2

listen my-cluster-apps-443
  bind 192.168.1.100:443
  mode tcp
  balance roundrobin
  option httpchk
  http-check connect
  http-check send meth GET uri /healthz/ready
  http-check expect status 200
  server my-cluster-worker-0 192.168.1.111:443 check port 1936 inter 10s rise 2 fall 2
  server my-cluster-worker-1 192.168.1.112:443 check port 1936 inter 10s rise 2 fall 2
  server my-cluster-worker-2 192.168.1.113:443 check port 1936 inter 10s rise 2 fall 2

listen my-cluster-apps-80
  bind 192.168.1.100:80
  mode tcp
  balance roundrobin
  option httpchk
  http-check connect
  http-check send meth GET uri /healthz/ready
  http-check expect status 200
  server my-cluster-worker-0 192.168.1.111:80 check port 1936 inter 10s rise 2 fall 2
  server my-cluster-worker-1 192.168.1.112:80 check port 1936 inter 10s rise 2 fall 2
  server my-cluster-worker-2 192.168.1.113:80 check port 1936 inter 10s rise 2 fall 2
# ...

Use the curl CLI command to verify that the external load balancer and its resources are operational:

Verify that the Kubernetes API server is accessible through the load balancer by running the following command and observing the response:

$ curl https://<loadbalancer_ip_address>:6443/version --insecure

If the configuration is correct, you receive a JSON object in response:

{
  "major": "1",
  "minor": "11+",
  "gitVersion": "v1.11.0+ad103ed",
  "gitCommit": "ad103ed",
  "gitTreeState": "clean",
  "buildDate": "2019-01-09T06:44:10Z",
  "goVersion": "go1.10.3",
  "compiler": "gc",
  "platform": "linux/amd64"
}

Verify that the Machine Config Server is accessible through the load balancer by running the following command and observing the output:

$ curl -v https://<loadbalancer_ip_address>:22623/healthz --insecure

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 200 OK
Content-Length: 0

Verify that the Ingress Controller is accessible through the load balancer on port 80 by running the following command and observing the output:

$ curl -I -L -H "Host: console-openshift-console.apps.<cluster_name>.<base_domain>" http://<load_balancer_front_end_IP_address>

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 302 Found
content-length: 0
location: https://console-openshift-console.apps.<cluster_name>.<base_domain>/
cache-control: no-cache

Verify that the Ingress Controller is accessible through the load balancer on port 443 by running the following command and observing the output:

$ curl -I -L --insecure --resolve console-openshift-console.apps.<cluster_name>.<base_domain>:443:<load_balancer_front_end_IP_address> https://console-openshift-console.apps.<cluster_name>.<base_domain>

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 200 OK
referrer-policy: strict-origin-when-cross-origin
set-cookie: csrf-token=UlYWOyQ62LWjw2h003xtYSKlh1a0Py2hhctw0WmV2YEdhJjFyQwWcGBsja261dGLgaYO0nxzVErhiXt6QepA7g==; Path=/; Secure; SameSite=Lax
x-content-type-options: nosniff
x-dns-prefetch-control: off
x-frame-options: DENY
x-xss-protection: 1; mode=block
date: Wed, 04 Oct 2023 16:29:38 GMT
content-type: text/html; charset=utf-8
set-cookie: 1e2670d92730b515ce3a1bb65da45062=1bf5e9573c9a2760c964ed1659cc1673; path=/; HttpOnly; Secure; SameSite=None
cache-control: private
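The listen sections in the example HAProxy configuration differ only in name, port, health check path, optional check port, and server list. The following sketch prints one section in the same style so that you can generate all four from your own node addresses. The function name haproxy_listen and its argument order are hypothetical, and the check settings (inter 10s rise 2 fall 2) are copied from the example rather than tuned.

```shell
#!/usr/bin/env bash
# Sketch: print one HAProxy "listen" section in the style of the example configuration.

# haproxy_listen NAME BIND_ADDR PORT CHECK_URI CHECK_PORT SERVER_NAME=IP...
# Pass an empty CHECK_PORT to health-check on the traffic port itself.
haproxy_listen() {
  local name=$1 bind=$2 port=$3 uri=$4 check_port=$5
  shift 5
  local entry extra=""
  printf 'listen %s\n' "$name"
  printf '  bind %s:%s\n' "$bind" "$port"
  printf '  mode tcp\n'
  printf '  balance roundrobin\n'
  printf '  option httpchk\n'
  printf '  http-check connect\n'
  printf '  http-check send meth GET uri %s\n' "$uri"
  printf '  http-check expect status 200\n'
  [[ -n "$check_port" ]] && extra=" port $check_port"
  for entry in "$@"; do
    # Split "server-name=ip" into its two parts.
    printf '  server %s %s:%s check%s inter 10s rise 2 fall 2\n' \
      "${entry%%=*}" "${entry#*=}" "$port" "$extra"
  done
}

# Usage, reproducing the 6443 and 443 sections of the example:
#   masters=(my-cluster-master-0=192.168.1.102 my-cluster-master-1=192.168.1.103)
#   workers=(my-cluster-worker-0=192.168.1.111 my-cluster-worker-1=192.168.1.112)
#   haproxy_listen my-cluster-api-6443 192.168.1.100 6443 /readyz "" "${masters[@]}"
#   haproxy_listen my-cluster-apps-443 192.168.1.100 443 /healthz/ready 1936 "${workers[@]}"
```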

Configure the DNS records for your cluster to target the front-end IP addresses of the external load balancer. Update the records on your DNS server for the cluster API and for cluster applications so that they resolve to the load balancer.

Examples of modified DNS records

<load_balancer_ip_address>  A  api.<cluster_name>.<base_domain>
A record pointing to Load Balancer Front End

<load_balancer_ip_address>  A  *.apps.<cluster_name>.<base_domain>
A wildcard record pointing to Load Balancer Front End

Important: DNS propagation might take some time for each DNS record to become available. Ensure that each DNS record propagates before you validate it.
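If your DNS server uses zone files, the two records can be written in BIND zone syntax. This sketch assumes a BIND-style zone and writes the application record as a wildcard, because application routes such as console-openshift-console.apps.<cluster_name>.<base_domain> must resolve to the load balancer. The optional propagation check assumes that dig is installed. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: print API and wildcard application A records in BIND zone syntax.

# render_dns_records LB_IP CLUSTER_NAME BASE_DOMAIN
render_dns_records() {
  local lb_ip=$1 cluster=$2 base=$3
  printf 'api.%s.%s.\tIN\tA\t%s\n' "$cluster" "$base" "$lb_ip"
  printf '*.apps.%s.%s.\tIN\tA\t%s\n' "$cluster" "$base" "$lb_ip"
}

# Prints the last address returned for NAME (requires dig); useful for
# confirming propagation before running the curl checks.
resolve_a() {
  dig +short "$1" A | tail -n 1
}

# Usage:
#   render_dns_records <load_balancer_ip_address> <cluster_name> <base_domain>
#   resolve_a console-openshift-console.apps.<cluster_name>.<base_domain>
```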

Use the curl CLI command to verify that the external load balancer and DNS record configuration are operational:

Verify that you can access the cluster API by running the following command and observing the output:

$ curl https://api.<cluster_name>.<base_domain>:6443/version --insecure

If the configuration is correct, you receive a JSON object in response:

{
  "major": "1",
  "minor": "11+",
  "gitVersion": "v1.11.0+ad103ed",
  "gitCommit": "ad103ed",
  "gitTreeState": "clean",
  "buildDate": "2019-01-09T06:44:10Z",
  "goVersion": "go1.10.3",
  "compiler": "gc",
  "platform": "linux/amd64"
}

Verify that you can access the Machine Config Server by running the following command and observing the output:

$ curl -v https://api.<cluster_name>.<base_domain>:22623/healthz --insecure

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 200 OK
Content-Length: 0

Verify that you can access cluster applications on port 80, such as the web console, by running the following command and observing the output:

$ curl http://console-openshift-console.apps.<cluster_name>.<base_domain> -I -L --insecure

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 302 Found
content-length: 0
location: https://console-openshift-console.apps.<cluster_name>.<base_domain>/
cache-control: no-cache

HTTP/1.1 200 OK
referrer-policy: strict-origin-when-cross-origin
set-cookie: csrf-token=39HoZgztDnzjJkq/JuLJMeoKNXlfiVv2YgZc09c3TBOBU4NI6kDXaJH1LdicNhN1UsQWzon4Dor9GWGfopaTEQ==; Path=/; Secure
x-content-type-options: nosniff
x-dns-prefetch-control: off
x-frame-options: DENY
x-xss-protection: 1; mode=block
date: Tue, 17 Nov 2020 08:42:10 GMT
content-type: text/html; charset=utf-8
set-cookie: 1e2670d92730b515ce3a1bb65da45062=9b714eb87e93cf34853e87a92d6894be; path=/; HttpOnly; Secure; SameSite=None
cache-control: private

Verify that you can access cluster applications on port 443 by running the following command and observing the output:

$ curl https://console-openshift-console.apps.<cluster_name>.<base_domain> -I -L --insecure

If the configuration is correct, the output from the command shows the following response:

HTTP/1.1 200 OK
referrer-policy: strict-origin-when-cross-origin
set-cookie: csrf-token=UlYWOyQ62LWjw2h003xtYSKlh1a0Py2hhctw0WmV2YEdhJjFyQwWcGBsja261dGLgaYO0nxzVErhiXt6QepA7g==; Path=/; Secure; SameSite=Lax
x-content-type-options: nosniff
x-dns-prefetch-control: off
x-frame-options: DENY
x-xss-protection: 1; mode=block
date: Wed, 04 Oct 2023 16:29:38 GMT
content-type: text/html; charset=utf-8
set-cookie: 1e2670d92730b515ce3a1bb65da45062=1bf5e9573c9a2760c964ed1659cc1673; path=/; HttpOnly; Secure; SameSite=None
cache-control: private
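The four DNS-based curl checks can be run as one script that reports PASS or FAIL for each endpoint. This is a sketch: the expected status codes are taken from the example responses in this section (200 for both API endpoints and the HTTPS console, 302 for the first HTTP console response), and the CURL override that lets you swap in a stub command for offline testing is a hypothetical addition, as are the function names.

```shell
#!/usr/bin/env bash
# Sketch: verify the API, Machine Config Server, and console endpoints by DNS name.

# Prints only the HTTP status code of the response (000 if the connection fails).
http_status() {
  ${CURL:-curl} --silent --insecure --output /dev/null --write-out '%{http_code}' "$1"
}

# verify_cluster_endpoints CLUSTER_NAME BASE_DOMAIN
# Prints one PASS/FAIL line per endpoint and returns the number of failures.
verify_cluster_endpoints() {
  local cluster=$1 base=$2 failures=0 check url expected got
  local -a checks=(
    "https://api.${cluster}.${base}:6443/version 200"
    "https://api.${cluster}.${base}:22623/healthz 200"
    "http://console-openshift-console.apps.${cluster}.${base} 302"
    "https://console-openshift-console.apps.${cluster}.${base} 200"
  )
  for check in "${checks[@]}"; do
    url=${check% *}
    expected=${check##* }
    got=$(http_status "$url")
    if [[ "$got" == "$expected" ]]; then
      printf 'PASS %s (%s)\n' "$url" "$got"
    else
      printf 'FAIL %s (expected %s, got %s)\n' "$url" "$expected" "$got"
      failures=$((failures + 1))
    fi
  done
  return "$failures"
}

# Usage:
#   verify_cluster_endpoints <cluster_name> <base_domain>
```

The HTTP console check deliberately omits -L, so the first 302 redirect is what gets compared.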