Chapter 3. Install

3.1. Installing the OpenShift Serverless Operator

Installing the OpenShift Serverless Operator enables you to install and use Knative Serving, Knative Eventing, and Knative Kafka on an OpenShift Container Platform cluster. The OpenShift Serverless Operator manages Knative custom resource definitions (CRDs) for your cluster and enables you to configure them without directly modifying individual config maps for each component.

3.1.1. Before you begin

Read the following information about supported configurations and prerequisites before you install OpenShift Serverless.

- OpenShift Serverless is supported for installation in a restricted network environment.

- OpenShift Serverless currently cannot be used in a multi-tenant configuration on a single cluster.

3.1.1.1. Defining cluster size requirements

To install and use OpenShift Serverless, the OpenShift Container Platform cluster must be sized correctly. The total size requirements to run OpenShift Serverless are dependent on the components that are installed and the applications that are deployed, and might vary depending on your deployment.

The following requirements relate only to the pool of worker machines of the OpenShift Container Platform cluster. Control plane nodes are not used for general scheduling and are omitted from the requirements.

By default, each pod requests approximately 400m of CPU, so the minimum requirements are based on this value. Lowering the actual CPU request of applications can increase the number of possible replicas.

If you have high availability (HA) enabled on your cluster, each replica of the Knative Serving control plane requires between 0.5 and 1.5 cores and between 200MB and 2GB of memory.
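As a rough illustration of the CPU arithmetic described above, the following sketch estimates how many application pods fit into a given amount of allocatable worker CPU. The function name and the 8000m figure are hypothetical; only the default request of approximately 400m comes from the text. The estimate considers CPU only and ignores memory, system reservations, and the Knative Serving control plane replicas.

```shell
# max_replicas ALLOCATABLE_MILLICORES [REQUEST_MILLICORES]
# Prints how many pods fit when each pod requests REQUEST_MILLICORES of CPU.
# Hypothetical helper: CPU only, no memory or system overhead.
max_replicas() {
  allocatable_m=$1
  request_m=${2:-400}   # default of roughly 400m per pod, as stated above
  echo $(( allocatable_m / request_m ))
}

# Example: a hypothetical worker pool with 8000m of allocatable CPU in total.
max_replicas 8000        # prints 20
max_replicas 8000 200    # prints 40: halving the request doubles the replicas
```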

3.1.1.2. Scaling your cluster using machine sets

You can use the OpenShift Container Platform MachineSet API to manually scale your cluster up to the desired size. The minimum requirements usually mean that you must scale up one of the default machine sets by two additional machines. See Manually scaling a machine set.

3.1.2. Installing the OpenShift Serverless Operator

You can install the OpenShift Serverless Operator from the OperatorHub by using the OpenShift Container Platform web console. Installing this Operator enables you to install and use Knative components.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have logged in to the OpenShift Container Platform web console.

Procedure

- In the OpenShift Container Platform web console, navigate to the Operators → OperatorHub page.

- Scroll, or type the keyword Serverless into the Filter by keyword box to find the OpenShift Serverless Operator.

- Review the information about the Operator and click Install.

- On the Install Operator page:

  - The Installation Mode is All namespaces on the cluster (default). This mode installs the Operator in the default openshift-serverless namespace to watch and be made available to all namespaces in the cluster.

  - The Installed Namespace is openshift-serverless.

  - Select the stable channel as the Update Channel. The stable channel will enable installation of the latest stable release of the OpenShift Serverless Operator.

  - Select Automatic or Manual approval strategy.

- Click Install to make the Operator available to the selected namespaces on this OpenShift Container Platform cluster.

- From the Catalog → Operator Management page, you can monitor the OpenShift Serverless Operator subscription’s installation and upgrade progress.

  - If you selected a Manual approval strategy, the subscription’s upgrade status will remain Upgrading until you review and approve its install plan. After approving on the Install Plan page, the subscription upgrade status moves to Up to date.

  - If you selected an Automatic approval strategy, the upgrade status should resolve to Up to date without intervention.

Verification

After the Subscription’s upgrade status is Up to date, select Catalog

If it does not:

- Switch to the Catalog → Operator Management page and inspect the Operator Subscriptions and Install Plans tabs for any failure or errors under Status.

- Check the logs in any pods in the openshift-serverless project on the Workloads → Pods page that are reporting issues to troubleshoot further.
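The subscription status shown in the web console can also be read from the command line. The following is a minimal sketch, assuming that the Subscription is named serverless-operator and lives in the openshift-serverless namespace (both names are assumptions, so confirm them on your cluster), and that Operator Lifecycle Manager reports an up-to-date subscription with the state AtLatestKnown. The fully qualified resource name is used so that the command does not resolve to a different resource kind that is also called subscription.

```shell
# subscription_state NAME NAMESPACE
# Prints the OLM state of a Subscription, for example AtLatestKnown
# or UpgradePending.
subscription_state() {
  oc get subscription.operators.coreos.com "$1" -n "$2" \
    -o jsonpath='{.status.state}'
}

# subscription_up_to_date NAME NAMESPACE
# Succeeds only when OLM reports the Subscription as AtLatestKnown.
subscription_up_to_date() {
  [ "$(subscription_state "$1" "$2")" = "AtLatestKnown" ]
}

# Usage (assumed names):
#   subscription_up_to_date serverless-operator openshift-serverless \
#     && echo "Subscription is up to date"
```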

If you want to use Red Hat OpenShift distributed tracing with OpenShift Serverless, you must install and configure Red Hat OpenShift distributed tracing before you install Knative Serving or Knative Eventing.

3.1.4. Next steps

- After the OpenShift Serverless Operator is installed, you can install Knative Serving or install Knative Eventing.

3.2. Installing Knative Serving

Installing Knative Serving allows you to create Knative services and functions on your cluster. It also allows you to use additional functionality such as autoscaling and networking options for your applications.

After you install the OpenShift Serverless Operator, you can install Knative Serving by using the default settings, or configure more advanced settings in the KnativeServing custom resource (CR). For more information about configuration options for the KnativeServing CR, see Global configuration.

If you want to use Red Hat OpenShift distributed tracing with OpenShift Serverless, you must install and configure Red Hat OpenShift distributed tracing before you install Knative Serving.

3.2.1. Installing Knative Serving by using the web console

After you install the OpenShift Serverless Operator, install Knative Serving by using the OpenShift Container Platform web console. You can install Knative Serving by using the default settings or configure more advanced settings in the KnativeServing custom resource (CR).

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have logged in to the OpenShift Container Platform web console.

- You have installed the OpenShift Serverless Operator.

Procedure

- In the Administrator perspective of the OpenShift Container Platform web console, navigate to Operators → Installed Operators.

- Check that the Project dropdown at the top of the page is set to Project: knative-serving.

- Click Knative Serving in the list of Provided APIs for the OpenShift Serverless Operator to go to the Knative Serving tab.

- Click Create Knative Serving.

- In the Create Knative Serving page, you can install Knative Serving using the default settings by clicking Create.

  You can also modify settings for the Knative Serving installation by editing the KnativeServing object using either the form provided, or by editing the YAML.

  - Using the form is recommended for simpler configurations that do not require full control of KnativeServing object creation.

  - Editing the YAML is recommended for more complex configurations that require full control of KnativeServing object creation. You can access the YAML by clicking the edit YAML link in the top right of the Create Knative Serving page.

  After you complete the form, or have finished modifying the YAML, click Create.

  Note: For more information about configuration options for the KnativeServing custom resource definition, see the documentation on Advanced installation configuration options.

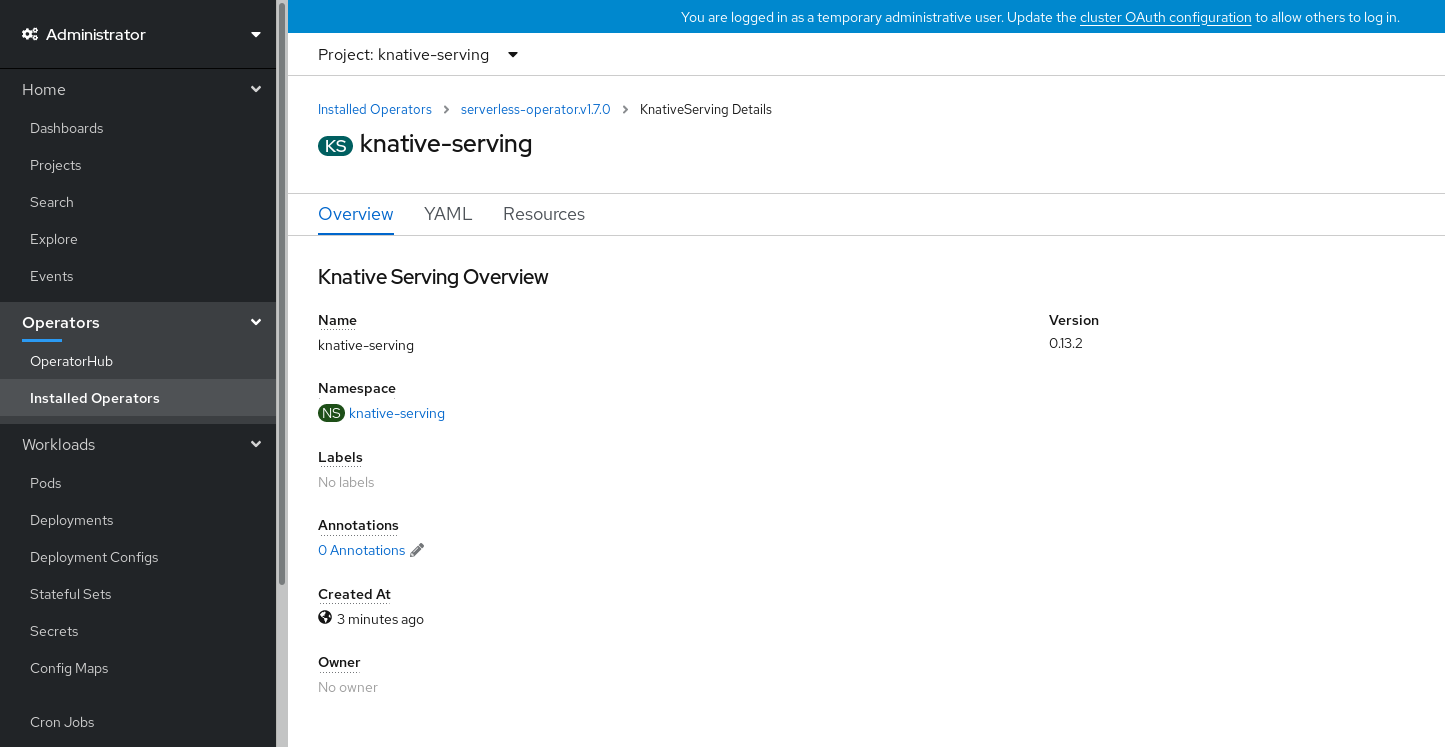

- After you have installed Knative Serving, the KnativeServing object is created, and you are automatically directed to the Knative Serving tab. You will see the knative-serving custom resource in the list of resources.

Verification

- Click on the knative-serving custom resource in the Knative Serving tab. You will be automatically directed to the Knative Serving Overview page.

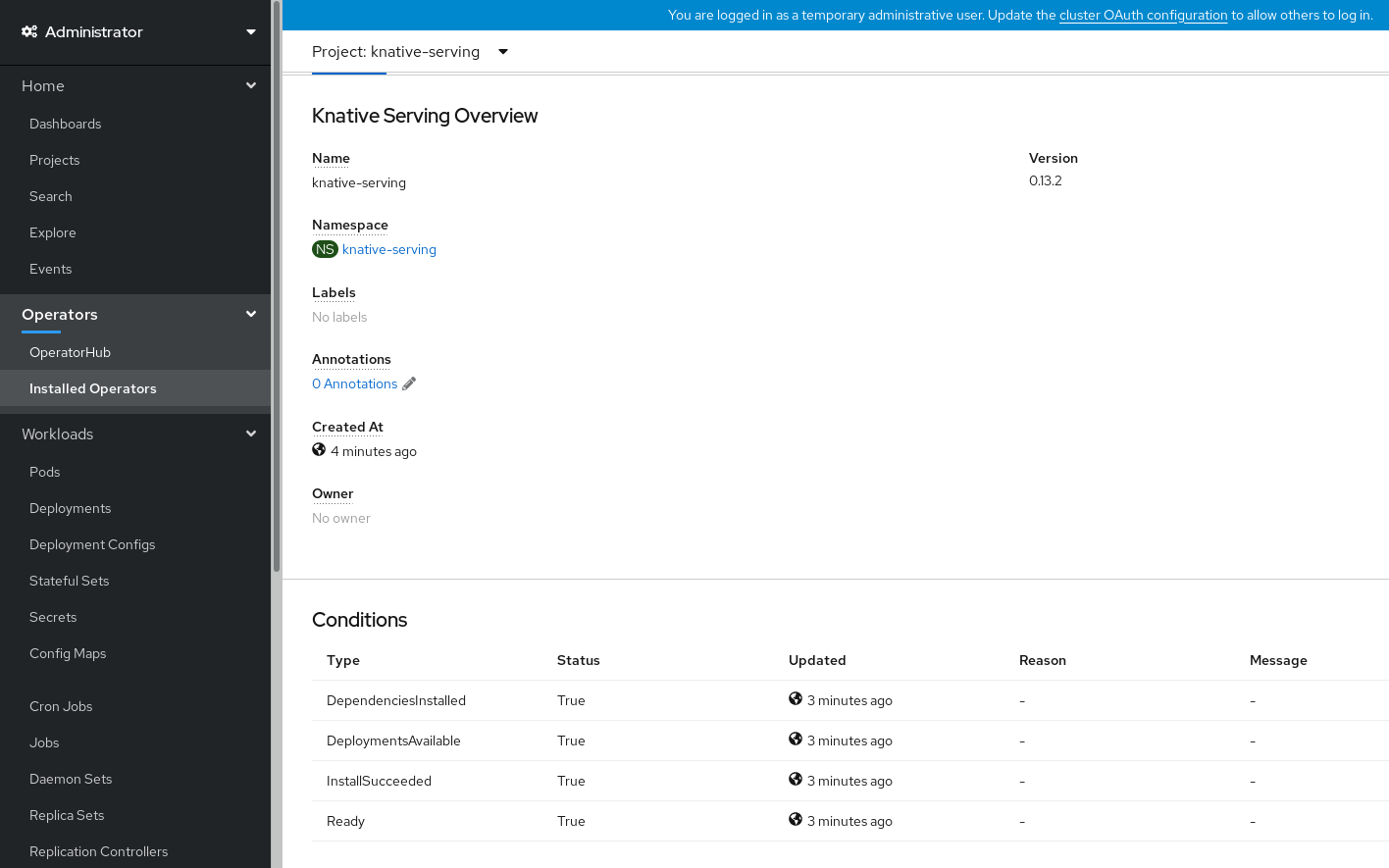

- Scroll down to look at the list of Conditions.

- You should see a list of conditions with a status of True, as shown in the example image.

  Note: It may take a few seconds for the Knative Serving resources to be created. You can check their status in the Resources tab.

- If the conditions have a status of Unknown or False, wait a few moments and then check again after you have confirmed that the resources have been created.

3.2.2. Installing Knative Serving by using YAML

After you install the OpenShift Serverless Operator, you can install Knative Serving by using the default settings, or configure more advanced settings in the KnativeServing custom resource (CR). You can use the following procedure to install Knative Serving by using YAML files and the oc CLI.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have installed the OpenShift Serverless Operator.

- Install the OpenShift CLI (oc).

Procedure

- Create a file named serving.yaml and copy the following example YAML into it:

      apiVersion: operator.knative.dev/v1alpha1
      kind: KnativeServing
      metadata:
        name: knative-serving
        namespace: knative-serving

- Apply the serving.yaml file:

      $ oc apply -f serving.yaml

Verification

- To verify the installation is complete, enter the following command:

      $ oc get knativeserving.operator.knative.dev/knative-serving -n knative-serving --template='{{range .status.conditions}}{{printf "%s=%s\n" .type .status}}{{end}}'

  Example output

      DependenciesInstalled=True
      DeploymentsAvailable=True
      InstallSucceeded=True
      Ready=True

  Note: It may take a few seconds for the Knative Serving resources to be created.

  If the conditions have a status of Unknown or False, wait a few moments and then check again after you have confirmed that the resources have been created.

- Check that the Knative Serving resources have been created:

      $ oc get pods -n knative-serving

  Example output

      NAME                                                        READY   STATUS      RESTARTS   AGE
      activator-67ddf8c9d7-p7rm5                                  2/2     Running     0          4m
      activator-67ddf8c9d7-q84fz                                  2/2     Running     0          4m
      autoscaler-5d87bc6dbf-6nqc6                                 2/2     Running     0          3m59s
      autoscaler-5d87bc6dbf-h64rl                                 2/2     Running     0          3m59s
      autoscaler-hpa-77f85f5cc4-lrts7                             2/2     Running     0          3m57s
      autoscaler-hpa-77f85f5cc4-zx7hl                             2/2     Running     0          3m56s
      controller-5cfc7cb8db-nlccl                                 2/2     Running     0          3m50s
      controller-5cfc7cb8db-rmv7r                                 2/2     Running     0          3m18s
      domain-mapping-86d84bb6b4-r746m                             2/2     Running     0          3m58s
      domain-mapping-86d84bb6b4-v7nh8                             2/2     Running     0          3m58s
      domainmapping-webhook-769d679d45-bkcnj                      2/2     Running     0          3m58s
      domainmapping-webhook-769d679d45-fff68                      2/2     Running     0          3m58s
      storage-version-migration-serving-serving-0.26.0--1-6qlkb   0/1     Completed   0          3m56s
      webhook-5fb774f8d8-6bqrt                                    2/2     Running     0          3m57s
      webhook-5fb774f8d8-b8lt5                                    2/2     Running     0          3m57s

- Check that the necessary networking components have been installed to the automatically created knative-serving-ingress namespace:

      $ oc get pods -n knative-serving-ingress

  Example output

      NAME                                      READY   STATUS    RESTARTS   AGE
      net-kourier-controller-7d4b6c5d95-62mkf   1/1     Running   0          76s
      net-kourier-controller-7d4b6c5d95-qmgm2   1/1     Running   0          76s
      3scale-kourier-gateway-6688b49568-987qz   1/1     Running   0          75s
      3scale-kourier-gateway-6688b49568-b5tnp   1/1     Running   0          75s
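The condition check in the verification step can be scripted. The following is a minimal sketch that wraps the verification command from this section; the helper names all_conditions_true and serving_ready are hypothetical. It treats empty output as not ready, on the assumption that no conditions are reported until the Operator has started processing the KnativeServing CR.

```shell
# all_conditions_true reads "Type=Status" lines on standard input and
# succeeds only if there is at least one line and every status is True.
all_conditions_true() {
  awk -F= '
    NF == 0      { next }      # skip blank lines
                 { seen = 1 }  # at least one condition was reported
    $2 != "True" { bad = 1 }   # any Unknown or False status fails the check
    END          { exit ((seen && !bad) ? 0 : 1) }
  '
}

# serving_ready runs the verification command from this section and
# checks its output with all_conditions_true.
serving_ready() {
  oc get knativeserving.operator.knative.dev/knative-serving -n knative-serving \
    --template='{{range .status.conditions}}{{printf "%s=%s\n" .type .status}}{{end}}' \
    | all_conditions_true
}

# Usage:
#   serving_ready && echo "Knative Serving is ready"
```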

3.2.3. Next steps

- If you want to use Knative event-driven architecture, you can install Knative Eventing.

3.3. Installing Knative Eventing

To use event-driven architecture on your cluster, install Knative Eventing. You can create Knative components such as event sources, brokers, and channels and then use them to send events to applications or external systems.

After you install the OpenShift Serverless Operator, you can install Knative Eventing by using the default settings, or configure more advanced settings in the KnativeEventing custom resource (CR). For more information about configuration options for the KnativeEventing CR, see Global configuration.

If you want to use Red Hat OpenShift distributed tracing with OpenShift Serverless, you must install and configure Red Hat OpenShift distributed tracing before you install Knative Eventing.

3.3.1. Installing Knative Eventing by using the web console

After you install the OpenShift Serverless Operator, install Knative Eventing by using the OpenShift Container Platform web console. You can install Knative Eventing by using the default settings or configure more advanced settings in the KnativeEventing custom resource (CR).

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have logged in to the OpenShift Container Platform web console.

- You have installed the OpenShift Serverless Operator.

Procedure

- In the Administrator perspective of the OpenShift Container Platform web console, navigate to Operators → Installed Operators.

- Check that the Project dropdown at the top of the page is set to Project: knative-eventing.

- Click Knative Eventing in the list of Provided APIs for the OpenShift Serverless Operator to go to the Knative Eventing tab.

- Click Create Knative Eventing.

- In the Create Knative Eventing page, you can choose to configure the KnativeEventing object by using either the default form provided, or by editing the YAML.

  - Using the form is recommended for simpler configurations that do not require full control of KnativeEventing object creation.

    Optional. If you are configuring the KnativeEventing object using the form, make any changes that you want to implement for your Knative Eventing deployment.

  - Editing the YAML is recommended for more complex configurations that require full control of KnativeEventing object creation. You can access the YAML by clicking the edit YAML link in the top right of the Create Knative Eventing page.

    Optional. If you are configuring the KnativeEventing object by editing the YAML, make any changes to the YAML that you want to implement for your Knative Eventing deployment.

- Click Create.

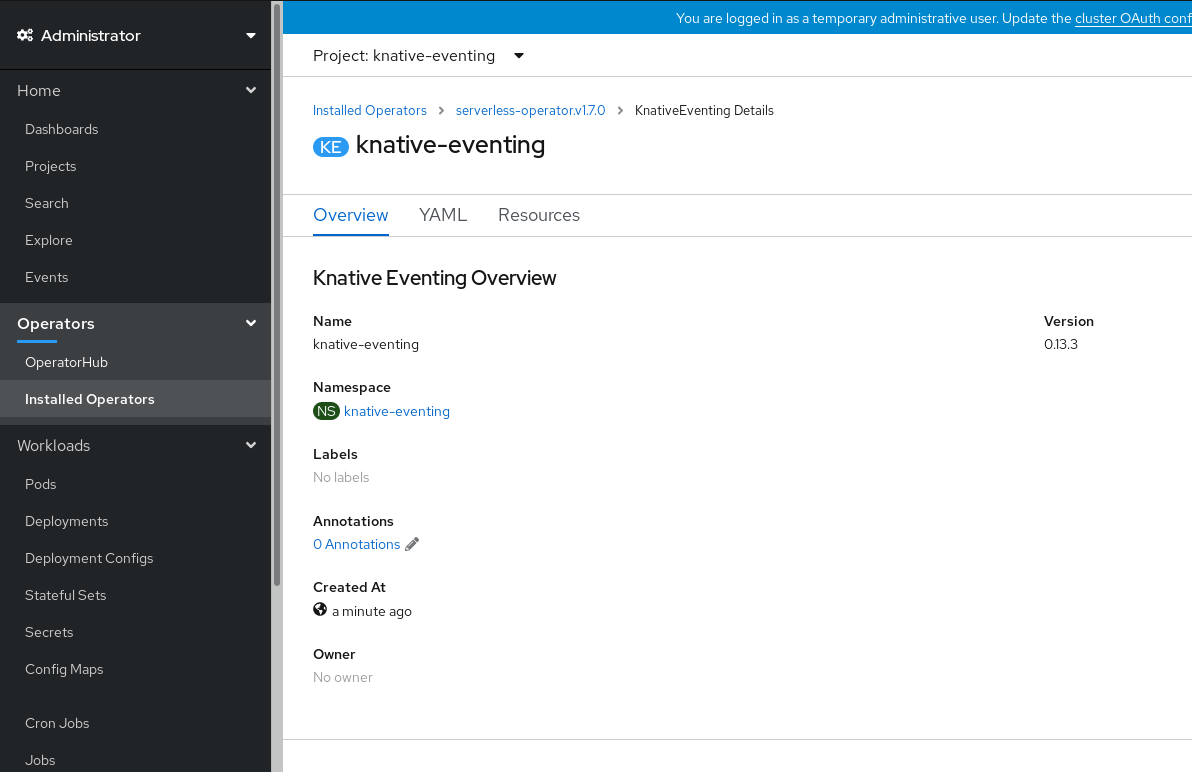

- After you have installed Knative Eventing, the KnativeEventing object is created, and you are automatically directed to the Knative Eventing tab. You will see the knative-eventing custom resource in the list of resources.

Verification

- Click on the knative-eventing custom resource in the Knative Eventing tab. You are automatically directed to the Knative Eventing Overview page.

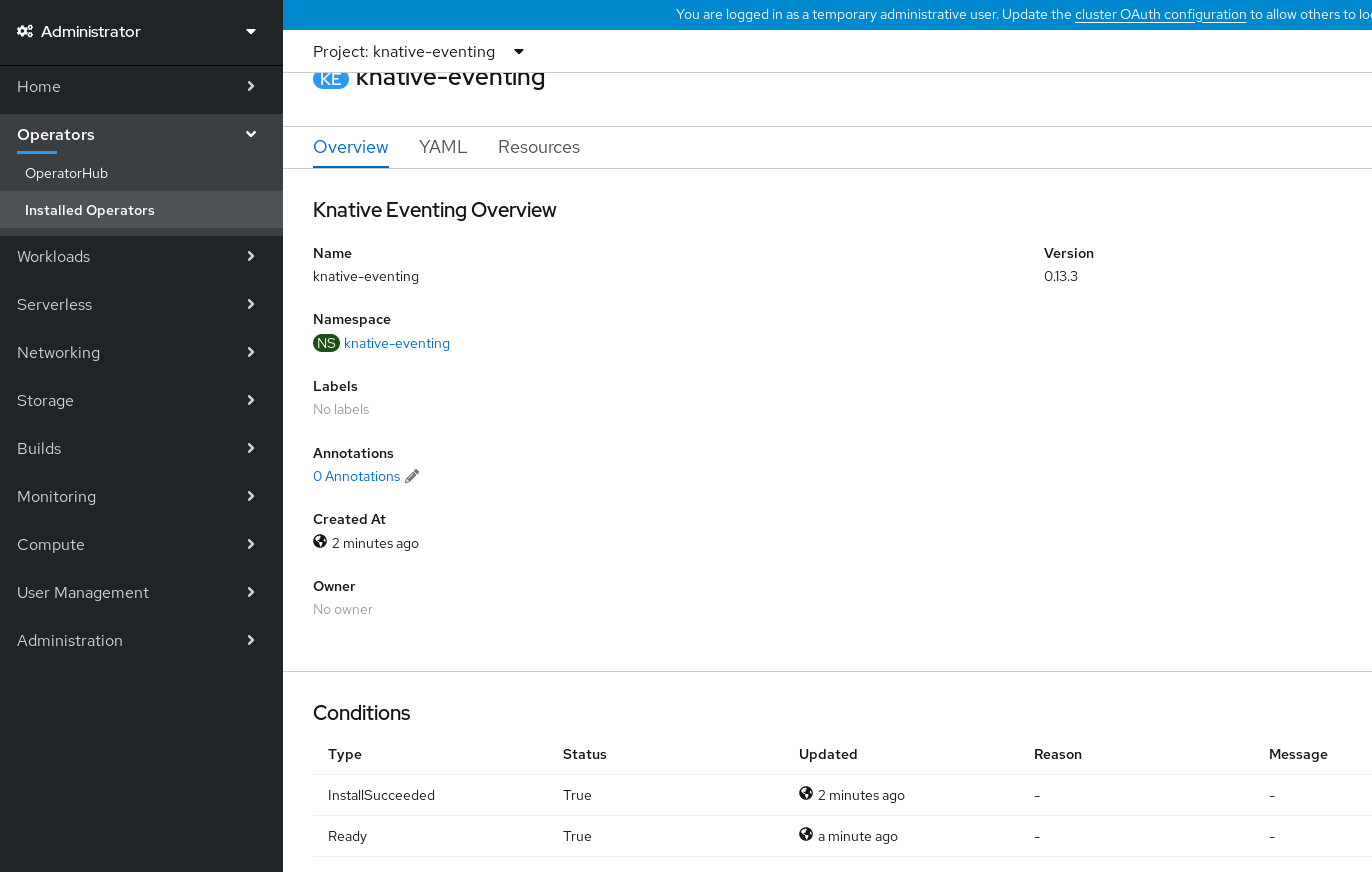

- Scroll down to look at the list of Conditions.

- You should see a list of conditions with a status of True, as shown in the example image.

  Note: It may take a few seconds for the Knative Eventing resources to be created. You can check their status in the Resources tab.

- If the conditions have a status of Unknown or False, wait a few moments and then check again after you have confirmed that the resources have been created.

3.3.2. Installing Knative Eventing by using YAML

After you install the OpenShift Serverless Operator, you can install Knative Eventing by using the default settings, or configure more advanced settings in the KnativeEventing custom resource (CR). You can use the following procedure to install Knative Eventing by using YAML files and the oc CLI.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have installed the OpenShift Serverless Operator.

- Install the OpenShift CLI (oc).

Procedure

- Create a file named eventing.yaml.

- Copy the following sample YAML into eventing.yaml:

      apiVersion: operator.knative.dev/v1alpha1
      kind: KnativeEventing
      metadata:
        name: knative-eventing
        namespace: knative-eventing

- Optional. Make any changes to the YAML that you want to implement for your Knative Eventing deployment.

- Apply the eventing.yaml file by entering:

      $ oc apply -f eventing.yaml

Verification

- Verify the installation is complete by entering the following command and observing the output:

      $ oc get knativeeventing.operator.knative.dev/knative-eventing \
        -n knative-eventing \
        --template='{{range .status.conditions}}{{printf "%s=%s\n" .type .status}}{{end}}'

  Example output

      InstallSucceeded=True
      Ready=True

  Note: It may take a few seconds for the Knative Eventing resources to be created.

- If the conditions have a status of Unknown or False, wait a few moments and then check again after you have confirmed that the resources have been created.

- Check that the Knative Eventing resources have been created by entering:

      $ oc get pods -n knative-eventing

  Example output

      NAME                                   READY   STATUS    RESTARTS   AGE
      broker-controller-58765d9d49-g9zp6     1/1     Running   0          7m21s
      eventing-controller-65fdd66b54-jw7bh   1/1     Running   0          7m31s
      eventing-webhook-57fd74b5bd-kvhlz      1/1     Running   0          7m31s
      imc-controller-5b75d458fc-ptvm2        1/1     Running   0          7m19s
      imc-dispatcher-64f6d5fccb-kkc4c        1/1     Running   0          7m18s
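When the pod list is long, it can help to filter it down to the pods that need attention. The following sketch reads the default oc get pods table and prints only pods whose STATUS column is neither Running nor Completed. The helper name unhealthy_pods is hypothetical, and the check is deliberately coarse: it does not look at the READY column, so a Running pod with unready containers is not reported.

```shell
# unhealthy_pods reads `oc get pods` output on standard input and prints the
# name and status of every pod that is neither Running nor Completed.
unhealthy_pods() {
  # NR > 1 skips the header row; column 3 is STATUS in the default output.
  awk 'NR > 1 && $3 != "Running" && $3 != "Completed" { print $1, $3 }'
}

# Usage:
#   oc get pods -n knative-eventing | unhealthy_pods
```

Empty output from the filter means that every listed pod is Running or Completed.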

3.3.3. Next steps

- If you want to use Knative services, you can install Knative Serving.

3.4. Removing OpenShift Serverless

If you need to remove OpenShift Serverless from your cluster, you can do so by manually removing the OpenShift Serverless Operator and other OpenShift Serverless components. Before you can remove the OpenShift Serverless Operator, you must remove Knative Serving and Knative Eventing.

3.4.1. Uninstalling Knative Serving

Before you can remove the OpenShift Serverless Operator, you must remove Knative Serving. To uninstall Knative Serving, you must remove the KnativeServing custom resource (CR) and delete the knative-serving namespace.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- Install the OpenShift CLI (oc).

Procedure

- Delete the KnativeServing CR:

      $ oc delete knativeservings.operator.knative.dev knative-serving -n knative-serving

- After the command has completed and all pods have been removed from the knative-serving namespace, delete the namespace:

      $ oc delete namespace knative-serving
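The wait between deleting the CR and deleting the namespace can be scripted. The following is a minimal sketch, assuming a simple polling loop is acceptable; the helper name wait_for_no_pods, the 5-second interval, and the 120-second default timeout are all hypothetical choices. The same helper applies to the knative-eventing namespace.

```shell
# wait_for_no_pods NAMESPACE [TIMEOUT_SECONDS]
# Polls until `oc get pods` lists nothing in NAMESPACE.
# Returns 1 if pods are still present when the timeout expires.
wait_for_no_pods() {
  ns=$1
  remaining=${2:-120}
  while [ -n "$(oc get pods -n "$ns" -o name 2>/dev/null)" ]; do
    [ "$remaining" -le 0 ] && return 1
    sleep 5
    remaining=$(( remaining - 5 ))
  done
  return 0
}

# Usage, after deleting the KnativeServing CR:
#   wait_for_no_pods knative-serving && oc delete namespace knative-serving
```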

3.4.2. Uninstalling Knative Eventing

Before you can remove the OpenShift Serverless Operator, you must remove Knative Eventing. To uninstall Knative Eventing, you must remove the KnativeEventing custom resource (CR) and delete the knative-eventing namespace.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- Install the OpenShift CLI (oc).

Procedure

- Delete the KnativeEventing CR:

      $ oc delete knativeeventings.operator.knative.dev knative-eventing -n knative-eventing

- After the command has completed and all pods have been removed from the knative-eventing namespace, delete the namespace:

      $ oc delete namespace knative-eventing

3.4.3. Removing the OpenShift Serverless Operator

After you have removed Knative Serving and Knative Eventing, you can remove the OpenShift Serverless Operator. You can do this by using the OpenShift Container Platform web console or the oc CLI.

3.4.3.1. Deleting Operators from a cluster using the web console

Cluster administrators can delete installed Operators from a selected namespace by using the web console.

Prerequisites

- Access to an OpenShift Container Platform cluster web console using an account with cluster-admin permissions.

Procedure

- From the Operators → Installed Operators page, scroll or type a keyword into the Filter by name to find the Operator you want. Then, click on it.

- On the right side of the Operator Details page, select Uninstall Operator from the Actions list.

An Uninstall Operator? dialog box is displayed, reminding you that:

Removing the Operator will not remove any of its custom resource definitions or managed resources. If your Operator has deployed applications on the cluster or configured off-cluster resources, these will continue to run and need to be cleaned up manually.

This action removes the Operator as well as the Operator deployments and pods, if any. Any Operands, and resources managed by the Operator, including CRDs and CRs, are not removed. The web console enables dashboards and navigation items for some Operators. To remove these after uninstalling the Operator, you might need to manually delete the Operator CRDs.

- Select Uninstall. This Operator stops running and no longer receives updates.

3.4.3.2. Deleting Operators from a cluster using the CLI

Cluster administrators can delete installed Operators from a selected namespace by using the CLI.

Prerequisites

- Access to an OpenShift Container Platform cluster using an account with cluster-admin permissions.

- oc command installed on workstation.

Procedure

- Check the current version of the subscribed Operator (for example, jaeger) in the currentCSV field:

      $ oc get subscription jaeger -n openshift-operators -o yaml | grep currentCSV

  Example output

      currentCSV: jaeger-operator.v1.8.2

- Delete the subscription (for example, jaeger):

      $ oc delete subscription jaeger -n openshift-operators

  Example output

      subscription.operators.coreos.com "jaeger" deleted

- Delete the CSV for the Operator in the target namespace using the currentCSV value from the previous step:

      $ oc delete clusterserviceversion jaeger-operator.v1.8.2 -n openshift-operators

  Example output

      clusterserviceversion.operators.coreos.com "jaeger-operator.v1.8.2" deleted
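The procedure above uses jaeger as its example. The following sketch chains the same three steps for an arbitrary subscription, so the CSV name is looked up rather than copied by hand. The helper names are hypothetical. For the OpenShift Serverless Operator, the subscription name serverless-operator and the namespace openshift-serverless are assumptions to confirm on your cluster before running anything destructive.

```shell
# current_csv SUBSCRIPTION NAMESPACE
# Prints the currentCSV value recorded in the Subscription status.
current_csv() {
  oc get subscription.operators.coreos.com "$1" -n "$2" \
    -o jsonpath='{.status.currentCSV}'
}

# remove_operator SUBSCRIPTION NAMESPACE
# Looks up the CSV first, then deletes the Subscription and that CSV.
# Stops without deleting anything if the CSV name cannot be determined.
remove_operator() {
  csv=$(current_csv "$1" "$2") || return 1
  [ -n "$csv" ] || return 1
  oc delete subscription.operators.coreos.com "$1" -n "$2" &&
    oc delete clusterserviceversion "$csv" -n "$2"
}

# Usage (assumed names):
#   remove_operator serverless-operator openshift-serverless
```

The lookup runs before the first deletion because the currentCSV value is no longer available once the Subscription is gone.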

3.4.3.3. Refreshing failing subscriptions

In Operator Lifecycle Manager (OLM), if you subscribe to an Operator that references images that are not accessible on your network, you can find jobs in the openshift-marketplace namespace that are failing with the following errors:

Example output

    ImagePullBackOff for
    Back-off pulling image "example.com/openshift4/ose-elasticsearch-operator-bundle@sha256:6d2587129c846ec28d384540322b40b05833e7e00b25cca584e004af9a1d292e"

Example output

    rpc error: code = Unknown desc = error pinging docker registry example.com: Get "https://example.com/v2/": dial tcp: lookup example.com on 10.0.0.1:53: no such host

As a result, the subscription is stuck in this failing state and the Operator is unable to install or upgrade.

You can refresh a failing subscription by deleting the subscription, cluster service version (CSV), and other related objects. After recreating the subscription, OLM then reinstalls the correct version of the Operator.

Prerequisites

- You have a failing subscription that is unable to pull an inaccessible bundle image.

- You have confirmed that the correct bundle image is accessible.

Procedure

- Get the names of the Subscription and ClusterServiceVersion objects from the namespace where the Operator is installed:

      $ oc get sub,csv -n <namespace>

  Example output

      NAME                                                       PACKAGE                  SOURCE             CHANNEL
      subscription.operators.coreos.com/elasticsearch-operator   elasticsearch-operator   redhat-operators   5.0

      NAME                                                                         DISPLAY                            VERSION    REPLACES   PHASE
      clusterserviceversion.operators.coreos.com/elasticsearch-operator.5.0.0-65   OpenShift Elasticsearch Operator   5.0.0-65              Succeeded

- Delete the subscription:

      $ oc delete subscription <subscription_name> -n <namespace>

- Delete the cluster service version:

      $ oc delete csv <csv_name> -n <namespace>

- Get the names of any failing jobs and related config maps in the openshift-marketplace namespace:

      $ oc get job,configmap -n openshift-marketplace

  Example output

      NAME                                                                        COMPLETIONS   DURATION   AGE
      job.batch/1de9443b6324e629ddf31fed0a853a121275806170e34c926d69e53a7fcbccb   1/1           26s        9m30s

      NAME                                                                        DATA   AGE
      configmap/1de9443b6324e629ddf31fed0a853a121275806170e34c926d69e53a7fcbccb   3      9m30s

- Delete the job:

      $ oc delete job <job_name> -n openshift-marketplace

  This ensures pods that try to pull the inaccessible image are not recreated.

- Delete the config map:

      $ oc delete configmap <configmap_name> -n openshift-marketplace

- Reinstall the Operator using OperatorHub in the web console.

Verification

Check that the Operator has been reinstalled successfully:

$ oc get sub,csv,installplan -n <namespace>

3.4.4. Deleting OpenShift Serverless custom resource definitions

After you uninstall OpenShift Serverless, the Operator and API custom resource definitions (CRDs) remain on the cluster. You can use the following procedure to remove the remaining CRDs.

Removing the Operator and API CRDs also removes all resources that were defined by using them, including Knative services.

Prerequisites

- You have access to an OpenShift Container Platform account with cluster administrator access.

- You have uninstalled Knative Serving and removed the OpenShift Serverless Operator.

- Install the OpenShift CLI (oc).

Procedure

To delete the remaining OpenShift Serverless CRDs, enter the following command:

$ oc get crd -oname | grep 'knative.dev' | xargs oc delete
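Because deleting CRDs also deletes every resource defined with them, it can be worth previewing the selection before running the delete command. The following sketch prints the CRDs that contain the literal string knative.dev; the helper name list_knative_crds is hypothetical. It uses grep -F, which matches the string literally, whereas the unescaped dot in the pattern above matches any character.

```shell
# list_knative_crds prints the names of all CRDs whose name contains the
# literal string "knative.dev". It only lists; it does not delete anything.
list_knative_crds() {
  oc get crd -o name | grep -F 'knative.dev'
}

# Usage: review the list first, then run the delete command from this section.
#   list_knative_crds
```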