
Chapter 4. Installing a cluster on OpenStack with Kuryr

Kuryr is a deprecated feature. Deprecated functionality is still included in OpenShift Container Platform and continues to be supported; however, it will be removed in a future release of this product and is not recommended for new deployments.

For the most recent list of major functionality that has been deprecated or removed within OpenShift Container Platform, refer to the Deprecated and removed features section of the OpenShift Container Platform release notes.

In OpenShift Container Platform version 4.12, you can install a customized cluster on Red Hat OpenStack Platform (RHOSP) that uses Kuryr SDN. To customize the installation, modify parameters in the install-config.yaml file before you install the cluster.

4.1. Prerequisites

- You reviewed details about the OpenShift Container Platform installation and update processes.

- You read the documentation on selecting a cluster installation method and preparing it for users.

- You verified that OpenShift Container Platform 4.12 is compatible with your RHOSP version by using the Supported platforms for OpenShift clusters section. You can also compare platform support across different versions by viewing the OpenShift Container Platform on RHOSP support matrix.

- You have a storage service installed in RHOSP, such as block storage (Cinder) or object storage (Swift). Object storage is the recommended storage technology for OpenShift Container Platform registry cluster deployment. For more information, see Optimizing storage.

- You understand performance and scalability practices for cluster scaling, control plane sizing, and etcd. For more information, see Recommended practices for scaling the cluster.

4.2. About Kuryr SDN


Kuryr is a container network interface (CNI) plugin solution that uses the Neutron and Octavia Red Hat OpenStack Platform (RHOSP) services to provide networking for pods and Services.

Kuryr and OpenShift Container Platform integration is primarily designed for OpenShift Container Platform clusters running on RHOSP VMs. Kuryr improves the network performance by plugging OpenShift Container Platform pods into RHOSP SDN. In addition, it provides interconnectivity between pods and RHOSP virtual instances.

Kuryr components are installed as pods in OpenShift Container Platform using the openshift-kuryr namespace:

- kuryr-controller: a single service instance installed on a master node. This is modeled in OpenShift Container Platform as a Deployment object.

- kuryr-cni: a container installing and configuring Kuryr as a CNI driver on each OpenShift Container Platform node. This is modeled in OpenShift Container Platform as a DaemonSet object.

The Kuryr controller watches the OpenShift Container Platform API server for pod, service, and namespace create, update, and delete events. It maps the OpenShift Container Platform API calls to corresponding objects in Neutron and Octavia. This means that every network solution that implements the Neutron trunk port functionality can be used to back OpenShift Container Platform via Kuryr. This includes open source solutions such as Open vSwitch (OVS) and Open Virtual Network (OVN) as well as Neutron-compatible commercial SDNs.

Kuryr is recommended for OpenShift Container Platform deployments on encapsulated RHOSP tenant networks to avoid double encapsulation, which occurs, for example, when an encapsulated OpenShift Container Platform SDN runs over an RHOSP network.

If you use provider networks or tenant VLANs, you do not need to use Kuryr to avoid double encapsulation. The performance benefit is negligible. Depending on your configuration, though, using Kuryr to avoid having two overlays might still be beneficial.

Kuryr is not recommended in deployments where all of the following criteria are true:

- The RHOSP version is less than 16.

- The deployment uses UDP services, or a large number of TCP services on few hypervisors.

or

- The ovn-octavia Octavia driver is disabled.

- The deployment uses a large number of TCP services on few hypervisors.

4.3. Resource guidelines for installing OpenShift Container Platform on RHOSP with Kuryr

When using Kuryr SDN, the pods, services, namespaces, and network policies use resources from the RHOSP quota, which increases the minimum requirements. Kuryr also has some additional requirements on top of what a default installation requires.

Use the following quota to satisfy a default cluster’s minimum requirements:

| Resource | Value |
|---|---|
| Floating IP addresses | 3, plus the expected number of Services of LoadBalancer type |
| Ports | 1500, 1 needed per Pod |
| Routers | 1 |
| Subnets | 250, 1 needed per Namespace/Project |
| Networks | 250, 1 needed per Namespace/Project |
| RAM | 112 GB |
| vCPUs | 28 |
| Volume storage | 275 GB |
| Instances | 7 |
| Security groups | 250, 1 needed per Service and per NetworkPolicy |
| Security group rules | 1000 |
| Server groups | 2, plus 1 for each additional availability zone in each machine pool |
| Load balancers | 100, 1 needed per Service |
| Load balancer listeners | 500, 1 needed per Service-exposed port |
| Load balancer pools | 500, 1 needed per Service-exposed port |

A cluster might function with fewer than recommended resources, but its performance is not guaranteed.
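To size a project for an expected workload, you can combine the minimums in the table with its per-object rules. The following Python sketch assumes that each quota value is the larger of the table minimum and the per-object count (floating IPs are 3 plus one per LoadBalancer-type Service). It ignores the extra ports that Kuryr pre-creates in port pools, so treat the result as a lower bound. The `Workload` and `estimate_quota` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of the expected cluster workload."""
    pods: int
    namespaces: int
    services: int
    network_policies: int
    service_ports: int
    load_balancer_services: int


def estimate_quota(w: Workload) -> dict[str, int]:
    """Assumption: quota = max(table minimum, per-object count) for each resource."""
    return {
        # 1 port per pod; Kuryr port pools need extra ports on top of this.
        "ports": max(1500, w.pods),
        # 1 subnet and 1 network per namespace/project.
        "subnets": max(250, w.namespaces),
        "networks": max(250, w.namespaces),
        # 1 security group per Service and per NetworkPolicy.
        "secgroups": max(250, w.services + w.network_policies),
        # 1 load balancer per Service.
        "load_balancers": max(100, w.services),
        # 1 listener and 1 pool per Service-exposed port.
        "lb_listeners": max(500, w.service_ports),
        "lb_pools": max(500, w.service_ports),
        # 3 base floating IPs plus 1 per LoadBalancer-type Service.
        "floating_ips": 3 + w.load_balancer_services,
    }


quota = estimate_quota(Workload(pods=2000, namespaces=100, services=300,
                                network_policies=50, service_ports=600,
                                load_balancer_services=2))
print(quota)
```

Taking the maximum keeps the table values as a floor, so a small cluster still gets the recommended minimum quota.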

If RHOSP object storage (Swift) is available and operated by a user account with the swiftoperator role, it is used as the default backend for the OpenShift Container Platform image registry. In this case, the volume storage requirement is 175 GB. Swift space requirements vary depending on the size of the image registry.

If you are using Red Hat OpenStack Platform (RHOSP) version 16 with the Amphora driver rather than the OVN Octavia driver, security groups are associated with service accounts instead of user projects.

Take the following notes into consideration when setting resources:

- The number of ports that are required is larger than the number of pods. Kuryr uses port pools to keep pre-created ports ready for pods, which speeds up pod boot time.

- Each network policy is mapped into an RHOSP security group, and depending on the NetworkPolicy spec, one or more rules are added to the security group. Each service is mapped to an RHOSP load balancer. Consider this requirement when estimating the number of security groups required for the quota.

  If you are using RHOSP version 15 or earlier, or the ovn-octavia driver, each load balancer has a security group with the user project.

- The quota does not account for load balancer resources (such as VM resources), but you must consider these resources when you decide the RHOSP deployment's size. The default installation will have more than 50 load balancers; the clusters must be able to accommodate them.

  If you are using RHOSP version 16 with the OVN Octavia driver enabled, only one load balancer VM is generated; services are load balanced through OVN flows.

An OpenShift Container Platform deployment comprises control plane machines, compute machines, and a bootstrap machine.

To enable Kuryr SDN, your environment must meet the following requirements:

- Run RHOSP 13+.

- Have Overcloud with Octavia.

- Use Neutron Trunk ports extension.

- Use the openvswitch firewall driver instead of ovs-hybrid if the ML2/OVS Neutron driver is used.

4.3.1. Increasing quota

When using Kuryr SDN, you must increase quotas to satisfy the Red Hat OpenStack Platform (RHOSP) resources used by pods, services, namespaces, and network policies.

Procedure

Increase the quotas for a project by running the following command:

$ sudo openstack quota set --secgroups 250 --secgroup-rules 1000 --ports 1500 --subnets 250 --networks 250 <project>
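If you script this step, building the argument list from a mapping keeps the values in one place. The following Python sketch only assembles the command above with the same five flags; it does not run it, and it leaves out the `sudo` prefix. The `quota_set_argv` helper and the `my-project` name are hypothetical.

```python
import shlex

# Quota names mapped to the `openstack quota set` flags used in the command above.
QUOTA_FLAGS = {
    "secgroups": "--secgroups",
    "secgroup_rules": "--secgroup-rules",
    "ports": "--ports",
    "subnets": "--subnets",
    "networks": "--networks",
}


def quota_set_argv(project: str, quotas: dict[str, int]) -> list[str]:
    """Hypothetical helper: build the argv only; nothing is executed."""
    argv = ["openstack", "quota", "set"]
    for name, flag in QUOTA_FLAGS.items():
        if name in quotas:  # names without a known flag are ignored
            argv += [flag, str(quotas[name])]
    argv.append(project)
    return argv


argv = quota_set_argv(
    "my-project",
    {"secgroups": 250, "secgroup_rules": 1000, "ports": 1500, "subnets": 250, "networks": 250},
)
print(shlex.join(argv))
```

You could pass the resulting list to `subprocess.run` under whatever account and privileges you normally use for quota changes.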

4.3.2. Configuring Neutron

Kuryr CNI leverages the Neutron Trunks extension to plug containers into the Red Hat OpenStack Platform (RHOSP) SDN, so you must use the trunks extension for Kuryr to properly work.

In addition, if you leverage the default ML2/OVS Neutron driver, the firewall must be set to openvswitch instead of ovs_hybrid so that security groups are enforced on trunk subports and Kuryr can properly handle network policies.
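To confirm the firewall setting on an ML2/OVS node, you can read the `firewall_driver` option from the `[securitygroup]` section of the Open vSwitch agent configuration. This Python sketch assumes that INI layout (for example, an `openvswitch_agent.ini` file); the file location depends on your deployment, and director generates it for you, so treat this as a read-only check. The function name is hypothetical.

```python
import configparser


def uses_openvswitch_firewall(ini_text: str) -> bool:
    """Return True if [securitygroup] firewall_driver is 'openvswitch'.

    Assumes the Neutron ML2/OVS agent INI layout. A missing section or
    option counts as False, as does any other driver (such as a hybrid one).
    """
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(ini_text)
    driver = parser.get("securitygroup", "firewall_driver", fallback="")
    return driver.strip().lower() == "openvswitch"


sample = """
[securitygroup]
firewall_driver = openvswitch
"""
print(uses_openvswitch_firewall(sample))
```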

4.3.3. Configuring Octavia

Kuryr SDN uses Red Hat OpenStack Platform (RHOSP)'s Octavia LBaaS to implement OpenShift Container Platform services. Thus, you must install and configure Octavia components in RHOSP to use Kuryr SDN.

To enable Octavia, you must include the Octavia service during the installation of the RHOSP Overcloud, or upgrade the Octavia service if the Overcloud already exists. The following steps for enabling Octavia apply to both a clean installation of the Overcloud and an Overcloud update.

The following steps only capture the key pieces required during the deployment of RHOSP when dealing with Octavia. It is also important to note that registry methods vary.

This example uses the local registry method.

Procedure

If you are using the local registry, create a template to upload the images to the registry. For example:

(undercloud) $ openstack overcloud container image prepare \
  -e /usr/share/openstack-tripleo-heat-templates/environments/services-docker/octavia.yaml \
  --namespace=registry.access.redhat.com/rhosp13 \
  --push-destination=<local-ip-from-undercloud.conf>:8787 \
  --prefix=openstack- \
  --tag-from-label {version}-{product-version} \
  --output-env-file=/home/stack/templates/overcloud_images.yaml \
  --output-images-file /home/stack/local_registry_images.yaml

Verify that the local_registry_images.yaml file contains the Octavia images. For example:

...
- imagename: registry.access.redhat.com/rhosp13/openstack-octavia-api:13.0-43
  push_destination: <local-ip-from-undercloud.conf>:8787
- imagename: registry.access.redhat.com/rhosp13/openstack-octavia-health-manager:13.0-45
  push_destination: <local-ip-from-undercloud.conf>:8787
- imagename: registry.access.redhat.com/rhosp13/openstack-octavia-housekeeping:13.0-45
  push_destination: <local-ip-from-undercloud.conf>:8787
- imagename: registry.access.redhat.com/rhosp13/openstack-octavia-worker:13.0-44
  push_destination: <local-ip-from-undercloud.conf>:8787

Note: The Octavia container versions vary depending upon the specific RHOSP release installed.

Pull the container images from registry.redhat.io to the Undercloud node:

(undercloud) $ sudo openstack overcloud container image upload \
  --config-file /home/stack/local_registry_images.yaml \
  --verbose

This may take some time depending on the speed of your network and Undercloud disk.

Install or update your Overcloud environment with Octavia:

$ openstack overcloud deploy --templates \
  -e /usr/share/openstack-tripleo-heat-templates/environments/services-docker/octavia.yaml \
  -e octavia_timeouts.yaml

Note: This command only includes the files associated with Octavia; it varies based on your specific installation of RHOSP. See the RHOSP documentation for further information. For more information on customizing your Octavia installation, see installation of Octavia using Director.

Note: When leveraging Kuryr SDN, the Overcloud installation requires the Neutron trunk extension. This is available by default on director deployments. Use the openvswitch firewall instead of the default ovs-hybrid when the Neutron backend is ML2/OVS. There is no need for modifications if the backend is ML2/OVN.

4.3.3.1. The Octavia OVN Driver

Octavia supports multiple provider drivers through the Octavia API.

To see all available Octavia provider drivers, on a command line, enter:

$ openstack loadbalancer provider list

Example output

+---------+-------------------------------------------------+
| name    | description                                     |
+---------+-------------------------------------------------+
| amphora | The Octavia Amphora driver.                     |
| octavia | Deprecated alias of the Octavia Amphora driver. |
| ovn     | Octavia OVN driver.                             |
+---------+-------------------------------------------------+

Beginning with RHOSP version 16, the Octavia OVN provider driver (ovn) is supported on OpenShift Container Platform on RHOSP deployments.

ovn is an integration driver for the load balancing that Octavia and OVN provide. It supports basic load balancing capabilities, and is based on OpenFlow rules. The driver is automatically enabled in Octavia by Director on deployments that use OVN Neutron ML2.

The Amphora provider driver is the default driver. If ovn is enabled, however, Kuryr uses it.
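The rule that Kuryr follows (use `ovn` if it is enabled, otherwise Amphora) can be applied to the provider list in a script. This Python sketch assumes you capture the list with the client's JSON output format (`openstack loadbalancer provider list -f json`), which returns objects with `name` and `description` keys, and that a provider appearing in the list is enabled; verify both assumptions against your client version. The function name is hypothetical.

```python
import json


def provider_for_kuryr(provider_list_json: str) -> str:
    """Pick the Octavia provider that Kuryr would use, per the rule above.

    Assumes the JSON shape of `openstack loadbalancer provider list -f json`.
    """
    names = {p["name"] for p in json.loads(provider_list_json)}
    if "ovn" in names:
        return "ovn"
    # Amphora is the default provider driver ("octavia" is a deprecated alias of it).
    return "amphora"


output = json.dumps([
    {"name": "amphora", "description": "The Octavia Amphora driver."},
    {"name": "octavia", "description": "Deprecated alias of the Octavia Amphora driver."},
    {"name": "ovn", "description": "Octavia OVN driver."},
])
print(provider_for_kuryr(output))
```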

If Kuryr uses ovn instead of Amphora, it offers the following benefits:

- Decreased resource requirements. Kuryr does not require a load balancer VM for each service.

- Reduced network latency.

- Increased service creation speed by using OpenFlow rules instead of a VM for each service.

- Distributed load balancing actions across all nodes instead of centralized on Amphora VMs.

You can configure your cluster to use the Octavia OVN driver after your RHOSP cloud is upgraded from version 13 to version 16.

4.3.4. Known limitations of installing with Kuryr

Using OpenShift Container Platform with Kuryr SDN has several known limitations.

RHOSP general limitations

Using OpenShift Container Platform with Kuryr SDN has several limitations that apply to all versions and environments:

- Service objects with the NodePort type are not supported.

- Clusters that use the OVN Octavia provider driver support Service objects for which the .spec.selector property is unspecified only if the .subsets.addresses property of the Endpoints object includes the subnet of the nodes or pods.

- If the subnet on which machines are created is not connected to a router, or if the subnet is connected, but the router has no external gateway set, Kuryr cannot create floating IPs for Service objects with type LoadBalancer.

- Configuring the sessionAffinity=ClientIP property on Service objects does not have an effect. Kuryr does not support this setting.
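Before moving workloads to a Kuryr cluster, you can scan Service definitions for two of the settings listed above. This Python sketch checks parsed Service objects (plain dictionaries, for example items from `oc get services -o json`) for the `NodePort` type and for `sessionAffinity: ClientIP`. It covers only these two limitations, and the function name and messages are hypothetical.

```python
def kuryr_service_warnings(service: dict) -> list[str]:
    """Return warnings for Service settings that Kuryr does not support.

    Hypothetical helper; checks only the NodePort and ClientIP limitations.
    """
    spec = service.get("spec", {})
    name = service.get("metadata", {}).get("name", "<unnamed>")
    warnings = []
    if spec.get("type") == "NodePort":
        warnings.append(f"{name}: NodePort services are not supported")
    if spec.get("sessionAffinity") == "ClientIP":
        warnings.append(f"{name}: sessionAffinity=ClientIP has no effect")
    return warnings


svc = {"metadata": {"name": "web"}, "spec": {"type": "NodePort", "sessionAffinity": "ClientIP"}}
for warning in kuryr_service_warnings(svc):
    print(warning)
```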

RHOSP version limitations

Using OpenShift Container Platform with Kuryr SDN has several limitations that depend on the RHOSP version.

RHOSP versions before 16 use the default Octavia load balancer driver (Amphora). This driver requires that one Amphora load balancer VM is deployed per OpenShift Container Platform service. Creating too many services can cause you to run out of resources.

Deployments of later versions of RHOSP that have the OVN Octavia driver disabled also use the Amphora driver. They are subject to the same resource concerns as earlier versions of RHOSP.

- Kuryr SDN does not support automatic unidling by a service.

RHOSP upgrade limitations

As a result of the RHOSP upgrade process, the Octavia API might be changed, and upgrades to the Amphora images that are used for load balancers might be required.

You can address API changes on an individual basis.

If the Amphora image is upgraded, the RHOSP operator can handle existing load balancer VMs in two ways:

- Upgrade each VM by triggering a load balancer failover.

- Leave responsibility for upgrading the VMs to users.

If the operator takes the first option, there might be short downtimes during failovers.

If the operator takes the second option, the existing load balancers will not support upgraded Octavia API features, like UDP listeners. In this case, users must recreate their Services to use these features.

4.3.5. Control plane machines

By default, the OpenShift Container Platform installation process creates three control plane machines.

Each machine requires:

- An instance from the RHOSP quota

- A port from the RHOSP quota

- A flavor with at least 16 GB memory and 4 vCPUs

- At least 100 GB storage space from the RHOSP quota

4.3.6. Compute machines

By default, the OpenShift Container Platform installation process creates three compute machines.

Each machine requires:

- An instance from the RHOSP quota

- A port from the RHOSP quota

- A flavor with at least 8 GB memory and 2 vCPUs

- At least 100 GB storage space from the RHOSP quota

Compute machines host the applications that you run on OpenShift Container Platform; aim to run as many as you can.

4.3.7. Bootstrap machine

During installation, a bootstrap machine is temporarily provisioned to stand up the control plane. After the production control plane is ready, the bootstrap machine is deprovisioned.

The bootstrap machine requires:

- An instance from the RHOSP quota

- A port from the RHOSP quota

- A flavor with at least 16 GB memory and 4 vCPUs

- At least 100 GB storage space from the RHOSP quota
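For a default installation, the per-machine minimums in the control plane, compute, and bootstrap subsections add up as shown by this Python sketch. It only sums the values listed above (three control plane machines, three compute machines, one bootstrap machine, one port each); it does not include Kuryr's additional ports or load balancers, and the bootstrap machine is deprovisioned after installation, so compare the result with the quota table rather than using it in its place. The names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MachineMinimum:
    count: int
    ram_gb: int
    vcpus: int
    storage_gb: int


# Minimums copied from the control plane, compute, and bootstrap subsections.
MACHINES = {
    "control_plane": MachineMinimum(count=3, ram_gb=16, vcpus=4, storage_gb=100),
    "compute": MachineMinimum(count=3, ram_gb=8, vcpus=2, storage_gb=100),
    "bootstrap": MachineMinimum(count=1, ram_gb=16, vcpus=4, storage_gb=100),
}


def totals(machines: dict[str, MachineMinimum]) -> dict[str, int]:
    """Sum instances, ports (one per machine), RAM, vCPUs, and storage."""
    result = {"instances": 0, "ports": 0, "ram_gb": 0, "vcpus": 0, "storage_gb": 0}
    for m in machines.values():
        result["instances"] += m.count
        result["ports"] += m.count
        result["ram_gb"] += m.count * m.ram_gb
        result["vcpus"] += m.count * m.vcpus
        result["storage_gb"] += m.count * m.storage_gb
    return result


print(totals(MACHINES))
# {'instances': 7, 'ports': 7, 'ram_gb': 88, 'vcpus': 22, 'storage_gb': 700}
```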

4.4. Internet access for OpenShift Container Platform

In OpenShift Container Platform 4.12, you require access to the internet to install your cluster.

You must have internet access to:

- Access OpenShift Cluster Manager Hybrid Cloud Console to download the installation program and perform subscription management. If the cluster has internet access and you do not disable Telemetry, that service automatically entitles your cluster.

- Access Quay.io to obtain the packages that are required to install your cluster.

- Obtain the packages that are required to perform cluster updates.

If your cluster cannot have direct internet access, you can perform a restricted network installation on some types of infrastructure that you provision. During that process, you download the required content and use it to populate a mirror registry with the installation packages. With some installation types, the environment that you install your cluster in will not require internet access. Before you update the cluster, you update the content of the mirror registry.

4.5. Enabling Swift on RHOSP

Swift is operated by a user account with the swiftoperator role. Add the role to an account before you run the installation program.

If the Red Hat OpenStack Platform (RHOSP) object storage service, commonly known as Swift, is available, OpenShift Container Platform uses it as the image registry storage. If it is unavailable, the installation program relies on the RHOSP block storage service, commonly known as Cinder.

If Swift is present and you want to use it, you must enable access to it. If it is not present, or if you do not want to use it, skip this section.

RHOSP 17 sets the rgw_max_attr_size parameter of Ceph RGW to 256 characters. This setting causes issues with uploading container images to the OpenShift Container Platform registry. You must set the value of rgw_max_attr_size to at least 1024 characters.

Before installation, check if your RHOSP deployment is affected by this problem. If it is, reconfigure Ceph RGW.

Prerequisites

- You have a RHOSP administrator account on the target environment.

- The Swift service is installed.

- On Ceph RGW, the account in url option is enabled.

Procedure

To enable Swift on RHOSP:

As an administrator in the RHOSP CLI, add the swiftoperator role to the account that will access Swift:

$ openstack role add --user <user> --project <project> swiftoperator

Your RHOSP deployment can now use Swift for the image registry.

4.6. Verifying external network access

The OpenShift Container Platform installation process requires external network access. You must provide an external network value to it, or deployment fails. Before you begin the process, verify that a network with the external router type exists in Red Hat OpenStack Platform (RHOSP).

Prerequisites

Procedure

Using the RHOSP CLI, verify the name and ID of the 'External' network:

$ openstack network list --long -c ID -c Name -c "Router Type"

Example output

+--------------------------------------+----------------+-------------+
| ID                                   | Name           | Router Type |
+--------------------------------------+----------------+-------------+
| 148a8023-62a7-4672-b018-003462f8d7dc | public_network | External    |
+--------------------------------------+----------------+-------------+

A network with an external router type appears in the network list. If none does, see Creating a default floating IP network and Creating a default provider network.
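If you script this verification, the client's JSON output format is easier to parse than the table. This Python sketch assumes that `openstack network list --long -c ID -c Name -c "Router Type" -f json` returns a list of objects keyed by those column names; verify that shape with your client version. Besides listing external networks, it flags duplicate names, because the installation program picks one of several same-named networks at random. The function names and the second sample ID are hypothetical.

```python
import json
from collections import Counter


def external_networks(network_list_json: str) -> list[dict]:
    """Return networks whose Router Type is External (assumed JSON shape)."""
    return [n for n in json.loads(network_list_json) if n.get("Router Type") == "External"]


def duplicate_names(network_list_json: str) -> set[str]:
    """Return network names that appear more than once."""
    counts = Counter(n["Name"] for n in json.loads(network_list_json))
    return {name for name, count in counts.items() if count > 1}


sample = json.dumps([
    {"ID": "148a8023-62a7-4672-b018-003462f8d7dc", "Name": "public_network", "Router Type": "External"},
    {"ID": "hypothetical-id-2", "Name": "private", "Router Type": "Internal"},
])
print([n["Name"] for n in external_networks(sample)])
print(duplicate_names(sample))
```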

If the external network’s CIDR range overlaps one of the default network ranges, you must change the matching network ranges in the install-config.yaml file before you start the installation process.

The default network ranges are:

| Network | Range |
|---|---|
| machineNetwork | 10.0.0.0/16 |
| serviceNetwork | 172.30.0.0/16 |
| clusterNetwork | 10.128.0.0/14 |
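Python's standard `ipaddress` module offers a quick way to test for overlap before you edit install-config.yaml. This sketch compares an external network CIDR with the default ranges listed above, labeled with the install-config.yaml networking fields (machineNetwork, serviceNetwork, clusterNetwork); the example CIDRs are hypothetical.

```python
import ipaddress

# Default ranges, keyed by the install-config.yaml networking field they belong to.
DEFAULT_RANGES = {
    "machineNetwork": "10.0.0.0/16",
    "serviceNetwork": "172.30.0.0/16",
    "clusterNetwork": "10.128.0.0/14",
}


def overlapping_defaults(external_cidr: str) -> list[str]:
    """Return the names of default ranges that overlap the given IPv4 CIDR."""
    external = ipaddress.ip_network(external_cidr, strict=False)
    return [
        name
        for name, cidr in DEFAULT_RANGES.items()
        if external.overlaps(ipaddress.ip_network(cidr))
    ]


print(overlapping_defaults("10.0.128.0/24"))   # ['machineNetwork']
print(overlapping_defaults("192.168.0.0/24"))  # []
```

Any name in the result is a range you must change in install-config.yaml before installation.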

If the installation program finds multiple networks with the same name, it sets one of them at random. To avoid this behavior, create unique names for resources in RHOSP.

If the Neutron trunk service plugin is enabled, a trunk port is created by default. For more information, see Neutron trunk port.

4.7. Defining parameters for the installation program

The OpenShift Container Platform installation program relies on a file that is called clouds.yaml. The file describes Red Hat OpenStack Platform (RHOSP) configuration parameters, including the project name, login information, and authorization service URLs.

Procedure

Create the clouds.yaml file:

- If your RHOSP distribution includes the Horizon web UI, generate a clouds.yaml file in it.

  Important: Remember to add a password to the auth field. You can also keep secrets in a separate file from clouds.yaml.

- If your RHOSP distribution does not include the Horizon web UI, or you do not want to use Horizon, create the file yourself. For detailed information about clouds.yaml, see Config files in the RHOSP documentation.

clouds:
  shiftstack:
    auth:
      auth_url: http://10.10.14.42:5000/v3
      project_name: shiftstack
      username: <username>
      password: <password>
      user_domain_name: Default
      project_domain_name: Default
  dev-env:
    region_name: RegionOne
    auth:
      username: <username>
      password: <password>
      project_name: 'devonly'
      auth_url: 'https://10.10.14.22:5001/v2.0'

If your RHOSP installation uses self-signed certificate authority (CA) certificates for endpoint authentication:

- Copy the certificate authority file to your machine.

- Add the cacert key to the clouds.yaml file. The value must be an absolute, non-root-accessible path to the CA certificate:

clouds:
  shiftstack:
    ...
    cacert: "/etc/pki/ca-trust/source/anchors/ca.crt.pem"

Tip: After you run the installer with a custom CA certificate, you can update the certificate by editing the value of the ca-cert.pem key in the cloud-provider-config config map. On a command line, run:

$ oc edit configmap -n openshift-config cloud-provider-config

Place the clouds.yaml file in one of the following locations:

- The value of the OS_CLIENT_CONFIG_FILE environment variable

- The current directory

- A Unix-specific user configuration directory, for example ~/.config/openstack/clouds.yaml

- A Unix-specific site configuration directory, for example /etc/openstack/clouds.yaml

The installation program searches for clouds.yaml in that order.
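The search order above can be expressed as a small lookup, which is handy when you are unsure which clouds.yaml the installation program will pick up. This Python sketch mirrors the four locations in the documented order; it is an illustration, not the installation program's own code, and it does not model any other locations that OpenStack client libraries might consult. The function names are hypothetical.

```python
import os
from pathlib import Path
from typing import Optional


def candidate_paths(cwd: Path, home: Path) -> list[Path]:
    """Return clouds.yaml candidates in the documented search order (illustration only)."""
    candidates = []
    env_file = os.environ.get("OS_CLIENT_CONFIG_FILE")
    if env_file:
        candidates.append(Path(env_file))
    candidates.append(cwd / "clouds.yaml")                             # current directory
    candidates.append(home / ".config" / "openstack" / "clouds.yaml")  # user configuration
    candidates.append(Path("/etc/openstack/clouds.yaml"))              # site configuration
    return candidates


def find_clouds_yaml(cwd: Optional[Path] = None, home: Optional[Path] = None) -> Optional[Path]:
    """Return the first existing clouds.yaml, or None if none is found."""
    for path in candidate_paths(cwd or Path.cwd(), home or Path.home()):
        if path.is_file():
            return path
    return None


print(find_clouds_yaml())
```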

4.8. Setting OpenStack Cloud Controller Manager options

Optionally, you can edit the OpenStack Cloud Controller Manager (CCM) configuration for your cluster. This configuration controls how OpenShift Container Platform interacts with Red Hat OpenStack Platform (RHOSP).

For a complete list of configuration parameters, see the "OpenStack Cloud Controller Manager reference guide" page in the "Installing on OpenStack" documentation.

Procedure

If you have not already generated manifest files for your cluster, generate them by running the following command:

$ openshift-install --dir <destination_directory> create manifests

In a text editor, open the cloud-provider configuration manifest file. For example:

$ vi openshift/manifests/cloud-provider-config.yaml

Modify the options according to the CCM reference guide.

Configuring Octavia for load balancing is a common case for clusters that do not use Kuryr. For example:

#...
[LoadBalancer]
use-octavia=true <1>
lb-provider = "amphora" <2>
floating-network-id="d3deb660-4190-40a3-91f1-37326fe6ec4a" <3>
create-monitor = True <4>
monitor-delay = 10s <5>
monitor-timeout = 10s <6>
monitor-max-retries = 1 <7>
#...

- <1> This property enables Octavia integration.

- <2> This property sets the Octavia provider that your load balancer uses. It accepts "ovn" or "amphora" as values. If you choose to use OVN, you must also set lb-method to SOURCE_IP_PORT.

- <3> This property is required if you want to use multiple external networks with your cluster. The cloud provider creates floating IP addresses on the network that is specified here.

- <4> This property controls whether the cloud provider creates health monitors for Octavia load balancers. Set the value to True to create health monitors. As of RHOSP 16.2, this feature is only available for the Amphora provider.

- <5> This property sets the frequency with which endpoints are monitored. The value must be in the time.ParseDuration() format. This property is required if the value of the create-monitor property is True.

- <6> This property sets the time that monitoring requests are open before timing out. The value must be in the time.ParseDuration() format. This property is required if the value of the create-monitor property is True.

- <7> This property defines how many successful monitoring requests are required before a load balancer is marked as online. The value must be an integer. This property is required if the value of the create-monitor property is True.

Important: Prior to saving your changes, verify that the file is structured correctly. Clusters might fail if properties are not placed in the appropriate section.

Important: You must set the value of the create-monitor property to True if you use services that have the value of the .spec.externalTrafficPolicy property set to Local. The OVN Octavia provider in RHOSP 16.2 does not support health monitors. Therefore, services that have ETP parameter values set to Local might not respond when the lb-provider value is set to "ovn".

Important: For installations that use Kuryr, Kuryr handles relevant services. There is no need to configure Octavia load balancing in the cloud provider.

Save the changes to the file and proceed with installation.

Tip: You can update your cloud provider configuration after you run the installer. On a command line, run:

$ oc edit configmap -n openshift-config cloud-provider-config

After you save your changes, your cluster will take some time to reconfigure itself. The process is complete if none of your nodes have a SchedulingDisabled status.
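Because clusters might fail when properties are misplaced, it can help to check the [LoadBalancer] section before saving, and to confirm afterwards that no node is still cordoned. This Python sketch, which is relevant only to clusters that do not use Kuryr, encodes two rules from the callouts above using the standard `configparser` module: the monitor options are required when create-monitor is True, and lb-method must be SOURCE_IP_PORT for the "ovn" provider. It takes the INI text held in the cloud provider configuration, not the surrounding YAML. The node check assumes JSON from `oc get nodes -o json`, where a node shown as SchedulingDisabled has `spec.unschedulable` set to true. Both function names are hypothetical.

```python
import configparser
import json

MONITOR_OPTIONS = ("monitor-delay", "monitor-timeout", "monitor-max-retries")


def load_balancer_problems(ini_text: str) -> list[str]:
    """Check the [LoadBalancer] rules from the callouts (hypothetical helper)."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(ini_text)
    if not parser.has_section("LoadBalancer"):
        return []
    lb = parser["LoadBalancer"]
    problems = []
    if lb.getboolean("create-monitor", fallback=False):
        for option in MONITOR_OPTIONS:
            if option not in lb:
                problems.append(f"{option} is required when create-monitor is True")
    # Values keep their quotes in configparser, so strip them before comparing.
    provider = lb.get("lb-provider", "").strip('"')
    if provider == "ovn" and lb.get("lb-method", "").strip('"') != "SOURCE_IP_PORT":
        problems.append('lb-provider "ovn" requires lb-method = SOURCE_IP_PORT')
    return problems


def cordoned_nodes(nodes_json: str) -> list[str]:
    """Return names of nodes with spec.unschedulable set (assumed `oc get nodes -o json` shape)."""
    items = json.loads(nodes_json).get("items", [])
    return [n["metadata"]["name"] for n in items if n.get("spec", {}).get("unschedulable")]


sample = """
[LoadBalancer]
use-octavia=true
lb-provider = "ovn"
create-monitor = True
monitor-delay = 10s
"""
for problem in load_balancer_problems(sample):
    print(problem)
```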

4.9. Obtaining the installation program

Before you install OpenShift Container Platform, download the installation file on the host you are using for installation.

Prerequisites

- You have a computer that runs Linux or macOS, with 500 MB of local disk space.

Procedure

- Access the Infrastructure Provider page on the OpenShift Cluster Manager site. If you have a Red Hat account, log in with your credentials. If you do not, create an account.

- Select your infrastructure provider.

Navigate to the page for your installation type, download the installation program that corresponds with your host operating system and architecture, and place the file in the directory where you will store the installation configuration files.

Important: The installation program creates several files on the computer that you use to install your cluster. You must keep the installation program and the files that the installation program creates after you finish installing the cluster. You need both the installation program and these files to delete the cluster.

Important: Deleting the files created by the installation program does not remove your cluster, even if the cluster failed during installation. To remove your cluster, complete the OpenShift Container Platform uninstallation procedures for your specific cloud provider.

Extract the installation program. For example, on a computer that uses a Linux operating system, run the following command:

$ tar -xvf openshift-install-linux.tar.gz

- Download your installation pull secret from the Red Hat OpenShift Cluster Manager. This pull secret allows you to authenticate with the services that are provided by the included authorities, including Quay.io, which serves the container images for OpenShift Container Platform components.

4.10. Creating the installation configuration file

You can customize the OpenShift Container Platform cluster you install on Red Hat OpenStack Platform (RHOSP).

Prerequisites

- Obtain the OpenShift Container Platform installation program and the pull secret for your cluster.

- Obtain service principal permissions at the subscription level.

Procedure

Create the install-config.yaml file.

Change to the directory that contains the installation program and run the following command:

$ ./openshift-install create install-config --dir <installation_directory> <1>

- <1> For <installation_directory>, specify the directory name to store the files that the installation program creates.

When specifying the directory:

- Verify that the directory has the execute permission. This permission is required to run Terraform binaries under the installation directory.

- Use an empty directory. Some installation assets, such as bootstrap X.509 certificates, have short expiration intervals; therefore, you must not reuse an installation directory. If you want to reuse individual files from another cluster installation, you can copy them into your directory. However, the file names for the installation assets might change between releases. Use caution when copying installation files from an earlier OpenShift Container Platform version.

At the prompts, provide the configuration details for your cloud:

Optional: Select an SSH key to use to access your cluster machines.

Note: For production OpenShift Container Platform clusters on which you want to perform installation debugging or disaster recovery, specify an SSH key that your ssh-agent process uses.

- Select openstack as the platform to target.

- Specify the Red Hat OpenStack Platform (RHOSP) external network name to use for installing the cluster.

- Specify the floating IP address to use for external access to the OpenShift API.

- Specify a RHOSP flavor with at least 16 GB RAM to use for control plane nodes and 8 GB RAM for compute nodes.

- Select the base domain to deploy the cluster to. All DNS records will be sub-domains of this base and will also include the cluster name.

- Enter a name for your cluster. The name must be 14 or fewer characters long.

- Paste the pull secret from the Red Hat OpenShift Cluster Manager.

Modify the install-config.yaml file. You can find more information about the available parameters in the "Installation configuration parameters" section.

Back up the install-config.yaml file so that you can use it to install multiple clusters.

Important: The install-config.yaml file is consumed during the installation process. If you want to reuse the file, you must back it up now.
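The directory rules and the 14-character cluster name limit in this procedure are easy to check before you run `create install-config`. This Python sketch tests that the installation directory is empty and has the execute permission for the current user, and that the cluster name is 14 or fewer characters. The extra rule that the name is a lowercase DNS label (letters, digits, and inner hyphens) is an assumption, not a requirement stated in this section. The function names are hypothetical.

```python
import os
import re
from pathlib import Path

# Assumption: cluster names follow DNS label syntax in addition to the 14-character limit.
_LABEL = re.compile(r"^[a-z0-9]([a-z0-9-]*[a-z0-9])?$")


def install_dir_problems(directory: Path) -> list[str]:
    """Check that the installation directory exists, is empty, and is executable."""
    if not directory.is_dir():
        return [f"{directory} is not a directory"]
    problems = []
    if any(directory.iterdir()):
        problems.append(f"{directory} is not empty; use a new directory")
    if not os.access(directory, os.X_OK):
        problems.append(f"{directory} lacks the execute permission")
    return problems


def cluster_name_problems(name: str) -> list[str]:
    """Check the 14-character limit and, as an assumption, DNS label syntax."""
    problems = []
    if len(name) > 14:
        problems.append(f"cluster name {name!r} is longer than 14 characters")
    if not _LABEL.match(name):
        problems.append(f"cluster name {name!r} is not a lowercase DNS label")
    return problems


print(cluster_name_problems("ostest"))
```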

4.10.1. Configuring the cluster-wide proxy during installation

Production environments can deny direct access to the internet and instead have an HTTP or HTTPS proxy available. You can configure a new OpenShift Container Platform cluster to use a proxy by configuring the proxy settings in the install-config.yaml file.

Kuryr installations default to HTTP proxies.

Prerequisites

- For Kuryr installations on restricted networks that use the Proxy object, the proxy must be able to reply to the router that the cluster uses. To add a static route for the proxy configuration, from a command line as the root user, enter:

  ```console
  $ ip route add <cluster_network_cidr> via <installer_subnet_gateway>
  ```

- The restricted subnet must have a gateway that is defined and available to be linked to the Router resource that Kuryr creates.

- You have an existing install-config.yaml file.

- You reviewed the sites that your cluster requires access to and determined whether any of them need to bypass the proxy. By default, all cluster egress traffic is proxied, including calls to hosting cloud provider APIs. You added sites to the Proxy object’s spec.noProxy field to bypass the proxy if necessary.

  Note: The Proxy object status.noProxy field is populated with the values of the networking.machineNetwork[].cidr, networking.clusterNetwork[].cidr, and networking.serviceNetwork[] fields from your installation configuration.

  For installations on Amazon Web Services (AWS), Google Cloud, Microsoft Azure, and Red Hat OpenStack Platform (RHOSP), the Proxy object status.noProxy field is also populated with the instance metadata endpoint (169.254.169.254).
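To see which entries the note above attributes to your installation configuration, the following Python sketch collects them from an install-config.yaml that has already been parsed into a dict. It covers only the sources that the note names; the real status.noProxy list can contain additional entries, and the order here is not meaningful. The function name is hypothetical, and the platform keys (aws, gcp, azure, openstack) are the install-config platform names for the platforms that the note lists.

```python
from __future__ import annotations

# Instance metadata endpoint named in the note above.
METADATA_ENDPOINT = "169.254.169.254"
# install-config platform keys for the platforms that the note lists.
METADATA_PLATFORMS = {"aws", "gcp", "azure", "openstack"}


def noproxy_from_networking(config: dict) -> list[str]:
    """Collect the status.noProxy entries that the note derives from install-config.yaml."""
    networking = config.get("networking", {})
    entries: list[str] = [n["cidr"] for n in networking.get("machineNetwork", [])]
    entries += [n["cidr"] for n in networking.get("clusterNetwork", [])]
    entries += list(networking.get("serviceNetwork", []))
    # The platform section has a single key, for example {"openstack": {...}}.
    if METADATA_PLATFORMS.intersection(config.get("platform", {})):
        entries.append(METADATA_ENDPOINT)
    return entries
```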

Procedure

Edit your install-config.yaml file and add the proxy settings. For example:

```yaml
apiVersion: v1
baseDomain: my.domain.com
proxy:
  httpProxy: http://<username>:<pswd>@<ip>:<port> # (1)
  httpsProxy: https://<username>:<pswd>@<ip>:<port> # (2)
  noProxy: example.com # (3)
additionalTrustBundle: | # (4)
    -----BEGIN CERTIFICATE-----
    <MY_TRUSTED_CA_CERT>
    -----END CERTIFICATE-----
additionalTrustBundlePolicy: <policy_to_add_additionalTrustBundle> # (5)
```

(1) A proxy URL to use for creating HTTP connections outside the cluster. The URL scheme must be http.

(2) A proxy URL to use for creating HTTPS connections outside the cluster.

(3) A comma-separated list of destination domain names, IP addresses, or other network CIDRs to exclude from proxying. Preface a domain with . to match subdomains only. For example, .y.com matches x.y.com, but not y.com. Use * to bypass the proxy for all destinations.

(4) If provided, the installation program generates a config map that is named user-ca-bundle in the openshift-config namespace that contains one or more additional CA certificates that are required for proxying HTTPS connections. The Cluster Network Operator then creates a trusted-ca-bundle config map that merges these contents with the Red Hat Enterprise Linux CoreOS (RHCOS) trust bundle, and this config map is referenced in the trustedCA field of the Proxy object. The additionalTrustBundle field is required unless the proxy’s identity certificate is signed by an authority from the RHCOS trust bundle.

(5) Optional: The policy to determine the configuration of the Proxy object to reference the user-ca-bundle config map in the trustedCA field. The allowed values are Proxyonly and Always. Use Proxyonly to reference the user-ca-bundle config map only when http/https proxy is configured. Use Always to always reference the user-ca-bundle config map. The default value is Proxyonly.

Note: The installation program does not support the proxy readinessEndpoints field.

Note: If the installer times out, restart and then complete the deployment by using the wait-for command of the installer. For example:

```console
$ ./openshift-install wait-for install-complete --log-level debug
```

- Save the file and reference it when installing OpenShift Container Platform.
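The noProxy matching rules in the third callout of the proxy example can be made concrete with a small Python sketch: a leading . matches subdomains only, and * bypasses the proxy for every destination. How a bare domain such as example.com is treated is not stated in this section; the sketch assumes it matches the domain itself and all of its subdomains, which is the behavior documented for Go's golang.org/x/net/http/httpproxy package. This is an illustration, not the cluster's implementation; bypasses_proxy is a hypothetical name, and hosts are expected without a port.

```python
import ipaddress


def bypasses_proxy(host: str, no_proxy: str) -> bool:
    """Return True if host matches any entry in a comma-separated noProxy value."""
    entries = [e.strip().lower() for e in no_proxy.split(",") if e.strip()]
    host = host.strip().lower()
    try:
        host_ip = ipaddress.ip_address(host)
    except ValueError:
        host_ip = None  # host is a DNS name, not an IP address
    for entry in entries:
        if entry == "*":
            return True  # bypass the proxy for all destinations
        if host_ip is not None:
            # IP hosts match IP or CIDR entries; DNS entries raise ValueError here.
            try:
                if host_ip in ipaddress.ip_network(entry, strict=False):
                    return True
            except ValueError:
                pass
            continue
        if entry.startswith("."):
            if host.endswith(entry):  # ".y.com" matches "x.y.com" but not "y.com"
                return True
        elif host == entry or host.endswith("." + entry):  # assumed bare-domain rule
            return True
    return False
```

For example, bypasses_proxy("x.y.com", ".y.com") is True, while bypasses_proxy("y.com", ".y.com") is False, matching the example in the callout.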

The installation program creates a cluster-wide proxy that is named cluster that uses the proxy settings in the provided install-config.yaml file. If no proxy settings are provided, a cluster Proxy object is still created, but it will have a nil spec.

Only the Proxy object named cluster is supported, and no additional proxies can be created.

4.11. Installation configuration parameters

Before you deploy an OpenShift Container Platform cluster, you provide parameter values to describe your account on the cloud platform that hosts your cluster and optionally customize your cluster’s platform. When you create the install-config.yaml installation configuration file, you provide values for the required parameters through the command line. If you customize your cluster, you can modify the install-config.yaml file to provide more details about the platform.

After installation, you cannot modify these parameters in the install-config.yaml file.

4.11.1. Required configuration parameters

Required installation configuration parameters are described in the following table:

| Parameter | Description | Values |
|---|---|---|
| | The API version for the | String |
| | The base domain of your cloud provider. The base domain is used to create routes to your OpenShift Container Platform cluster components. The full DNS name for your cluster is a combination of the | A fully-qualified domain or subdomain name, such as |
| | Kubernetes resource | Object |
| | The name of the cluster. DNS records for the cluster are all subdomains of | String of lowercase letters, hyphens ( |
| | The configuration for the specific platform upon which to perform the installation: | Object |
| | Get a pull secret from the Red Hat OpenShift Cluster Manager to authenticate downloading container images for OpenShift Container Platform components from services such as Quay.io. | |
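As a pre-flight check, the following Python sketch looks for the required parameters in an install-config.yaml that has already been parsed into a dict (for example, with a YAML library of your choice). The key paths are taken from the sample install-config.yaml for RHOSP with Kuryr later in this chapter, and the 14-character limit comes from the installer prompt described earlier. The <metadata.name>.<baseDomain> domain form is an assumption based on the descriptions of the base domain and cluster name. Both function names are hypothetical.

```python
from __future__ import annotations

# Key paths as they appear in the sample install-config.yaml in this chapter.
REQUIRED_PATHS = [
    ("apiVersion",),
    ("baseDomain",),
    ("metadata", "name"),
    ("platform",),
    ("pullSecret",),
]
MAX_CLUSTER_NAME_LEN = 14  # limit stated by the installer prompt for RHOSP


def missing_required(config: dict) -> list[str]:
    """Return the dotted paths of required parameters that are absent from config."""
    missing = []
    for path in REQUIRED_PATHS:
        node = config
        for key in path:
            if not isinstance(node, dict) or key not in node:
                missing.append(".".join(path))
                break
            node = node[key]
    return missing


def cluster_domain(config: dict) -> str:
    """Build the cluster DNS domain, assuming the <metadata.name>.<baseDomain> form."""
    name = config["metadata"]["name"]
    if len(name) > MAX_CLUSTER_NAME_LEN:
        raise ValueError(f"cluster name {name!r} is longer than {MAX_CLUSTER_NAME_LEN} characters")
    return f"{name}.{config['baseDomain']}"
```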

4.11.2. Network configuration parameters

You can customize your installation configuration based on the requirements of your existing network infrastructure. For example, you can expand the IP address block for the cluster network or provide different IP address blocks than the defaults.

Only IPv4 addresses are supported.

Globalnet is not supported with Red Hat OpenShift Data Foundation disaster recovery solutions. For regional disaster recovery scenarios, ensure that you use a nonoverlapping range of private IP addresses for the cluster and service networks in each cluster.

| Parameter | Description | Values |
|---|---|---|
| | The configuration for the cluster network. | Object. Note: You cannot modify parameters specified by the |
| | The Red Hat OpenShift Networking network plugin to install. | Either |
| | The IP address blocks for pods. The default value is If you specify multiple IP address blocks, the blocks must not overlap. | An array of objects. For example: |
| | Required if you use An IPv4 network. | An IP address block in Classless Inter-Domain Routing (CIDR) notation. The prefix length for an IPv4 block is between |
| | The subnet prefix length to assign to each individual node. For example, if | A subnet prefix. The default value is |
| | The IP address block for services. The default value is The OpenShift SDN and OVN-Kubernetes network plugins support only a single IP address block for the service network. | An array with an IP address block in CIDR format. For example: |
| | The IP address blocks for machines. If you specify multiple IP address blocks, the blocks must not overlap. | An array of objects. For example: |
| | Required if you use | An IP network block in CIDR notation. For example, Note: Set the |
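The network parameters above interact in two ways that are easy to check before installation: each clusterNetwork block is split into per-node subnets of size hostPrefix, and the IP address blocks must not overlap. The following Python sketch uses the standard ipaddress module to compute how many nodes and pod addresses a block supports, and to find overlapping blocks. It assumes IPv4 only, as stated above, and subtracts the network and broadcast addresses from each node subnet; the function names are hypothetical.

```python
from __future__ import annotations

import ipaddress
from itertools import combinations


def node_capacity(cluster_cidr: str, host_prefix: int) -> tuple[int, int]:
    """Return (max nodes, usable pod IPs per node) for one IPv4 clusterNetwork entry."""
    net = ipaddress.ip_network(cluster_cidr)
    if net.version != 4:
        raise ValueError("only IPv4 addresses are supported")
    if not net.prefixlen <= host_prefix <= 32:
        raise ValueError("hostPrefix must be between the CIDR prefix length and 32")
    max_nodes = 2 ** (host_prefix - net.prefixlen)
    # Each node subnet loses its network and broadcast addresses.
    pods_per_node = max(2 ** (32 - host_prefix) - 2, 0)
    return max_nodes, pods_per_node


def overlapping_blocks(cidrs: list[str]) -> list[tuple[str, str]]:
    """Return every pair of CIDR blocks that overlap."""
    nets = [ipaddress.ip_network(c) for c in cidrs]
    return [(str(a), str(b)) for a, b in combinations(nets, 2) if a.overlaps(b)]
```

With the values from the sample install-config.yaml in this chapter, node_capacity("10.128.0.0/14", 23) gives (512, 510), and the clusterNetwork, serviceNetwork, and machineNetwork blocks 10.128.0.0/14, 172.30.0.0/16, and 10.0.0.0/16 do not overlap.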

4.11.3. Optional configuration parameters

Optional installation configuration parameters are described in the following table:

| Parameter | Description | Values |
|---|---|---|
| | A PEM-encoded X.509 certificate bundle that is added to the nodes' trusted certificate store. This trust bundle may also be used when a proxy has been configured. | String |
| | Controls the installation of optional core cluster components. You can reduce the footprint of your OpenShift Container Platform cluster by disabling optional components. For more information, see the "Cluster capabilities" page in Installing. | String array |
| | Selects an initial set of optional capabilities to enable. Valid values are | String |
| | Extends the set of optional capabilities beyond what you specify in | String array |
| | The configuration for the machines that comprise the compute nodes. | Array of |
| | Determines the instruction set architecture of the machines in the pool. Currently, clusters with varied architectures are not supported. All pools must specify the same architecture. Valid values are | String |
| | Whether to enable or disable simultaneous multithreading, or Important: If you disable simultaneous multithreading, ensure that your capacity planning accounts for the dramatically decreased machine performance. | |
| | Required if you use | |
| | Required if you use | |
| | The number of compute machines, which are also known as worker machines, to provision. | A positive integer greater than or equal to |
| | Enables the cluster for a feature set. A feature set is a collection of OpenShift Container Platform features that are not enabled by default. For more information about enabling a feature set during installation, see "Enabling features using feature gates". | String. The name of the feature set to enable, such as |
| | The configuration for the machines that comprise the control plane. | Array of |
| | Determines the instruction set architecture of the machines in the pool. Currently, clusters with varied architectures are not supported. All pools must specify the same architecture. Valid values are | String |
| | Whether to enable or disable simultaneous multithreading, or Important: If you disable simultaneous multithreading, ensure that your capacity planning accounts for the dramatically decreased machine performance. | |
| | Required if you use | |
| | Required if you use | |
| | The number of control plane machines to provision. | The only supported value is |
| | The Cloud Credential Operator (CCO) mode. If no mode is specified, the CCO dynamically tries to determine the capabilities of the provided credentials, with a preference for mint mode on the platforms where multiple modes are supported. Note: Not all CCO modes are supported for all cloud providers. For more information about CCO modes, see the Cloud Credential Operator entry in the Cluster Operators reference content. Note: If your AWS account has service control policies (SCP) enabled, you must configure the | |
| | Enable or disable FIPS mode. The default is Important: To enable FIPS mode for your cluster, you must run the installation program from a Red Hat Enterprise Linux (RHEL) computer configured to operate in FIPS mode. For more information about configuring FIPS mode on RHEL, see Switching RHEL to FIPS mode. The use of FIPS validated or Modules In Process cryptographic libraries is only supported on OpenShift Container Platform deployments on the Note: If you are using Azure File storage, you cannot enable FIPS mode. | |
| | Sources and repositories for the release-image content. | Array of objects. Includes a |
| | Required if you use | String |
| | Specify one or more repositories that may also contain the same images. | Array of strings |
| | How to publish or expose the user-facing endpoints of your cluster, such as the Kubernetes API, OpenShift routes. | Setting this field to Important: If the value of the field is set to |
| | The SSH key to authenticate access to your cluster machines. Note: For production OpenShift Container Platform clusters on which you want to perform installation debugging or disaster recovery, specify an SSH key that your | For example, |

4.11.4. Additional Red Hat OpenStack Platform (RHOSP) configuration parameters

Additional RHOSP configuration parameters are described in the following table:

| Parameter | Description | Values |
|---|---|---|
| | For compute machines, the size in gigabytes of the root volume. If you do not set this value, machines use ephemeral storage. | Integer, for example |
| | For compute machines, the root volume’s type. | String, for example |
| | For control plane machines, the size in gigabytes of the root volume. If you do not set this value, machines use ephemeral storage. | Integer, for example |
| | For control plane machines, the root volume’s type. | String, for example |
| | The name of the RHOSP cloud to use from the list of clouds in the | String, for example |
| | The RHOSP external network name to be used for installation. | String, for example |
| | The RHOSP flavor to use for control plane and compute machines. This property is deprecated. To use a flavor as the default for all machine pools, add it as the value of the | String, for example |

4.11.5. Optional RHOSP configuration parameters

Optional RHOSP configuration parameters are described in the following table:

| Parameter | Description | Values |
|---|---|---|
| | Additional networks that are associated with compute machines. Allowed address pairs are not created for additional networks. | A list of one or more UUIDs as strings. For example, |
| | Additional security groups that are associated with compute machines. | A list of one or more UUIDs as strings. For example, |
| | RHOSP Compute (Nova) availability zones (AZs) to install machines on. If this parameter is not set, the installation program relies on the default settings for Nova that the RHOSP administrator configured. On clusters that use Kuryr, RHOSP Octavia does not support availability zones. Load balancers and, if you are using the Amphora provider driver, OpenShift Container Platform services that rely on Amphora VMs, are not created according to the value of this property. | A list of strings. For example, |
| | For compute machines, the availability zone to install root volumes on. If you do not set a value for this parameter, the installation program selects the default availability zone. | A list of strings, for example |
| | Server group policy to apply to the group that will contain the compute machines in the pool. You cannot change server group policies or affiliations after creation. Supported options include An If you use a strict | A server group policy to apply to the machine pool. For example, |
| | Additional networks that are associated with control plane machines. Allowed address pairs are not created for additional networks. Additional networks that are attached to a control plane machine are also attached to the bootstrap node. | A list of one or more UUIDs as strings. For example, |
| | Additional security groups that are associated with control plane machines. | A list of one or more UUIDs as strings. For example, |
| | RHOSP Compute (Nova) availability zones (AZs) to install machines on. If this parameter is not set, the installation program relies on the default settings for Nova that the RHOSP administrator configured. On clusters that use Kuryr, RHOSP Octavia does not support availability zones. Load balancers and, if you are using the Amphora provider driver, OpenShift Container Platform services that rely on Amphora VMs, are not created according to the value of this property. | A list of strings. For example, |
| | For control plane machines, the availability zone to install root volumes on. If you do not set this value, the installation program selects the default availability zone. | A list of strings, for example |
| | Server group policy to apply to the group that will contain the control plane machines in the pool. You cannot change server group policies or affiliations after creation. Supported options include An If you use a strict | A server group policy to apply to the machine pool. For example, |
| | The location from which the installation program downloads the RHCOS image. You must set this parameter to perform an installation in a restricted network. | An HTTP or HTTPS URL, optionally with an SHA-256 checksum. For example, |
| | Properties to add to the installer-uploaded ClusterOSImage in Glance. This property is ignored if You can use this property to exceed the default persistent volume (PV) limit for RHOSP of 26 PVs per node. To exceed the limit, set the You can also use this property to enable the QEMU guest agent by including the | A set of string properties. For example: |
| | The default machine pool platform configuration. | |
| | An existing floating IP address to associate with the Ingress port. To use this property, you must also define the | An IP address, for example |
| | An existing floating IP address to associate with the API load balancer. To use this property, you must also define the | An IP address, for example |
| | IP addresses for external DNS servers that cluster instances use for DNS resolution. | A list of IP addresses as strings. For example, |
| | The UUID of a RHOSP subnet that the cluster’s nodes use. Nodes and virtual IP (VIP) ports are created on this subnet. The first item in If you deploy to a custom subnet, you cannot specify an external DNS server to the OpenShift Container Platform installer. Instead, add DNS to the subnet in RHOSP. | A UUID as a string. For example, |
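The RHCOS image location parameter in the table above accepts an HTTP or HTTPS URL with an optional SHA-256 checksum. When you stage an image on a mirror for a restricted network, you can verify the downloaded file before publishing it. The following Python sketch checks the URL scheme and streams a local file through SHA-256. How the checksum is attached to the URL is not shown in this section, so the sketch takes the expected digest as a separate argument; both function names are hypothetical.

```python
import hashlib
from urllib.parse import urlparse


def check_image_url(url: str) -> None:
    """Reject URLs that are not HTTP or HTTPS, as the table requires."""
    if urlparse(url).scheme not in {"http", "https"}:
        raise ValueError(f"unsupported URL scheme in {url!r}")


def sha256_matches(path: str, expected_hex: str, chunk_size: int = 1 << 20) -> bool:
    """Stream a downloaded image file and compare its SHA-256 digest to expected_hex."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        # Read in 1 MiB chunks so that multi-gigabyte images are not loaded into memory.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest() == expected_hex.strip().lower()
```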

4.11.6. Custom subnets in RHOSP deployments

Optionally, you can deploy a cluster on a Red Hat OpenStack Platform (RHOSP) subnet of your choice. The subnet’s UUID is passed as the value of platform.openstack.machinesSubnet in the install-config.yaml file.

This subnet is used as the cluster’s primary subnet. By default, nodes and ports are created on it. You can create nodes and ports on a different RHOSP subnet by setting the value of the platform.openstack.machinesSubnet property to the subnet’s UUID.

Before you run the OpenShift Container Platform installer with a custom subnet, verify that your configuration meets the following requirements:

- The subnet that is used by platform.openstack.machinesSubnet has DHCP enabled.

- The CIDR of platform.openstack.machinesSubnet matches the CIDR of networking.machineNetwork.

- The installation program user has permission to create ports on this network, including ports with fixed IP addresses.

Clusters that use custom subnets have the following limitations:

- If you plan to install a cluster that uses floating IP addresses, the platform.openstack.machinesSubnet subnet must be attached to a router that is connected to the externalNetwork network.

- If the platform.openstack.machinesSubnet value is set in the install-config.yaml file, the installation program does not create a private network or subnet for your RHOSP machines.

- You cannot use the platform.openstack.externalDNS property at the same time as a custom subnet. To add DNS to a cluster that uses a custom subnet, configure DNS on the RHOSP network.

By default, the API VIP takes x.x.x.5 and the Ingress VIP takes x.x.x.7 from your network’s CIDR block. To override these default values, set values for platform.openstack.apiVIPs and platform.openstack.ingressVIPs that are outside of the DHCP allocation pool.
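The VIP defaults and the DHCP allocation pool rule above can be checked with Python's ipaddress module. This sketch interprets x.x.x.5 and x.x.x.7 as offsets 5 and 7 from the subnet's network address, which is an assumption. The DHCP allocation pool bounds are inputs that you read from your RHOSP subnet, and the function names are hypothetical.

```python
import ipaddress


def default_vips(machines_cidr: str) -> tuple:
    """Return the assumed default (API VIP, Ingress VIP): network address + 5 and + 7."""
    net = ipaddress.ip_network(machines_cidr)
    return str(net.network_address + 5), str(net.network_address + 7)


def vip_is_usable(vip: str, machines_cidr: str, pool_start: str, pool_end: str) -> bool:
    """True if vip is inside the subnet CIDR but outside the DHCP allocation pool."""
    addr = ipaddress.ip_address(vip)
    in_subnet = addr in ipaddress.ip_network(machines_cidr)
    in_pool = ipaddress.ip_address(pool_start) <= addr <= ipaddress.ip_address(pool_end)
    return in_subnet and not in_pool
```

For example, with a 10.0.0.0/16 subnet whose DHCP pool runs from 10.0.0.10 to 10.0.255.254, default_vips returns ("10.0.0.5", "10.0.0.7"), and an override such as 10.0.0.20 is rejected because it falls inside the pool.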

The CIDR ranges for networks are not adjustable after cluster installation. Red Hat does not provide direct guidance on determining the range during cluster installation because it requires careful consideration of the number of created pods per namespace.

4.11.7. Sample customized install-config.yaml file for RHOSP with Kuryr

To deploy with Kuryr SDN instead of the default OVN-Kubernetes network plugin, you must modify the install-config.yaml file to include Kuryr as the desired networking.networkType. This sample install-config.yaml demonstrates all of the possible Red Hat OpenStack Platform (RHOSP) customization options.

This sample file is provided for reference only. You must obtain your install-config.yaml file by using the installation program.

```yaml
apiVersion: v1
baseDomain: example.com
controlPlane:
  name: master
  platform: {}
  replicas: 3
compute:
- name: worker
  platform:
    openstack:
      type: m1.large
  replicas: 3
metadata:
  name: example
networking:
  clusterNetwork:
  - cidr: 10.128.0.0/14
    hostPrefix: 23
  machineNetwork:
  - cidr: 10.0.0.0/16
  serviceNetwork:
  - 172.30.0.0/16 # (1)
  networkType: Kuryr # (2)
platform:
  openstack:
    cloud: mycloud
    externalNetwork: external
    computeFlavor: m1.xlarge
    apiFloatingIP: 128.0.0.1
    trunkSupport: true # (3)
    octaviaSupport: true # (4)
pullSecret: '{"auths": ...}'
sshKey: ssh-ed25519 AAAA...
```

(1) The Amphora Octavia driver creates two ports per load balancer. As a result, the service subnet that the installer creates is twice the size of the CIDR that is specified as the value of the serviceNetwork property. The larger range is required to prevent IP address conflicts.

(2) The cluster network plugin to install. The supported values are Kuryr, OVNKubernetes, and OpenShiftSDN. The default value is OVNKubernetes.

(3), (4) Both trunkSupport and octaviaSupport are automatically discovered by the installer, so you do not need to set them. However, if your environment does not meet both requirements, Kuryr SDN does not work properly. Trunks are needed to connect the pods to the RHOSP network, and Octavia is required to create the OpenShift Container Platform services.
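The note about the Amphora driver above says that the installer-created service subnet is twice the size of the serviceNetwork CIDR. Doubling an IPv4 block means decreasing its prefix length by one, which Python's ipaddress module does with supernet(). This sketch shows only the arithmetic; it does not query what the installer actually created, and the function name is hypothetical.

```python
import ipaddress


def expanded_service_subnet(service_cidr: str) -> str:
    """Return the CIDR that is twice the size of service_cidr (prefix length minus 1)."""
    net = ipaddress.ip_network(service_cidr)
    # The doubled block starts at service_cidr only if service_cidr is the lower
    # half of its parent: 172.30.0.0/16 -> 172.30.0.0/15, but 172.31.0.0/16 also
    # maps to 172.30.0.0/15.
    return str(net.supernet(prefixlen_diff=1))
```

For the sample value 172.30.0.0/16, the result is 172.30.0.0/15, which holds 131072 addresses instead of 65536.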

4.11.8. Cluster deployment on RHOSP provider networks

You can deploy your OpenShift Container Platform clusters on Red Hat OpenStack Platform (RHOSP) with a primary network interface on a provider network. Provider networks are commonly used to give projects direct access to a public network that can be used to reach the internet. You can also share provider networks among projects as part of the network creation process.

RHOSP provider networks map directly to an existing physical network in the data center. A RHOSP administrator must create them.

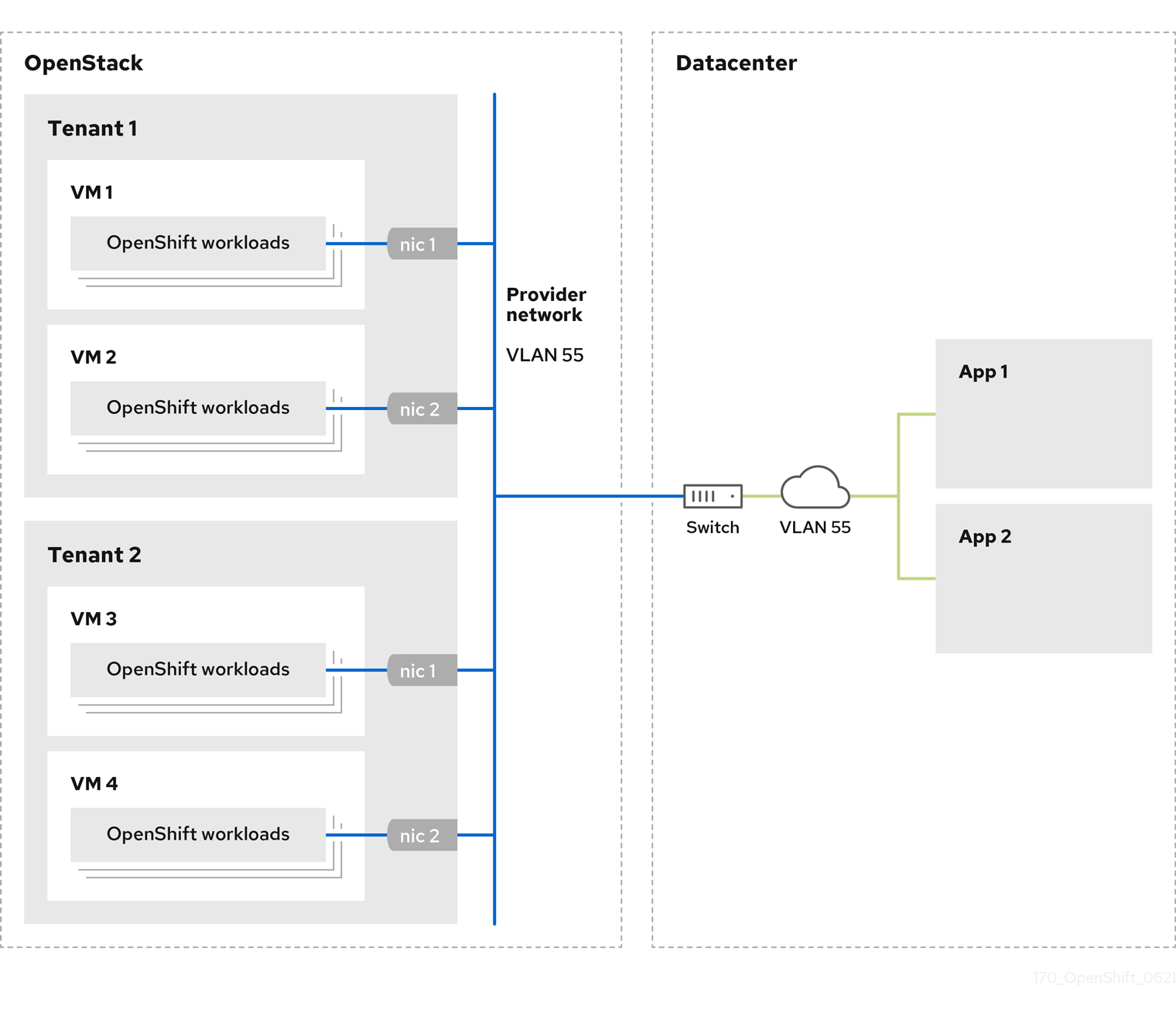

In the following example, OpenShift Container Platform workloads are connected to a data center by using a provider network:

OpenShift Container Platform clusters that are installed on provider networks do not require tenant networks or floating IP addresses. The installer does not create these resources during installation.

Example provider network types include flat (untagged) and VLAN (802.1Q tagged).

A cluster can support as many provider network connections as the network type allows. For example, VLAN networks typically support up to 4096 connections.

You can learn more about provider and tenant networks in the RHOSP documentation.

4.11.8.1. RHOSP provider network requirements for cluster installation

Before you install an OpenShift Container Platform cluster, your Red Hat OpenStack Platform (RHOSP) deployment and provider network must meet a number of conditions:

- The RHOSP networking service (Neutron) is enabled and accessible through the RHOSP networking API.

- The RHOSP networking service has the port security and allowed address pairs extensions enabled.

- The provider network can be shared with other tenants.

  Tip: Use the openstack network create command with the --share flag to create a network that can be shared.

- The RHOSP project that you use to install the cluster must own the provider network, as well as an appropriate subnet.

  Tip: To create a network for a project that is named "openshift," enter the following command:

  ```console
  $ openstack network create --project openshift
  ```

  To create a subnet for a project that is named "openshift," enter the following command:

  ```console
  $ openstack subnet create --project openshift
  ```

  To learn more about creating networks on RHOSP, read the provider networks documentation.

  If the cluster is owned by the admin user, you must run the installer as that user to create ports on the network.

  Important: Provider networks must be owned by the RHOSP project that is used to create the cluster. If they are not, the RHOSP Compute service (Nova) cannot request a port from that network.

- Verify that the provider network can reach the RHOSP metadata service IP address, which is 169.254.169.254 by default.

  Depending on your RHOSP SDN and networking service configuration, you might need to provide the route when you create the subnet. For example:

  ```console
  $ openstack subnet create --dhcp --host-route destination=169.254.169.254/32,gateway=192.0.2.2 ...
  ```

- Optional: To secure the network, create role-based access control (RBAC) rules that limit network access to a single project.

4.11.8.2. Deploying a cluster that has a primary interface on a provider network

You can deploy an OpenShift Container Platform cluster that has its primary network interface on a Red Hat OpenStack Platform (RHOSP) provider network.

Prerequisites

- Your Red Hat OpenStack Platform (RHOSP) deployment is configured as described by "RHOSP provider network requirements for cluster installation".

Procedure

- In a text editor, open the install-config.yaml file.

- Set the value of the platform.openstack.apiVIPs property to the IP address for the API VIP.

- Set the value of the platform.openstack.ingressVIPs property to the IP address for the Ingress VIP.

- Set the value of the platform.openstack.machinesSubnet property to the UUID of the provider network subnet.

- Set the value of the networking.machineNetwork.cidr property to the CIDR block of the provider network subnet.

The platform.openstack.apiVIPs and platform.openstack.ingressVIPs properties must both be unassigned IP addresses from the networking.machineNetwork.cidr block.

Section of an installation configuration file for a cluster that relies on a RHOSP provider network

```yaml
...
platform:
  openstack:
    apiVIPs:
    - 192.0.2.13
    ingressVIPs:
    - 192.0.2.23
    machinesSubnet: fa806b2f-ac49-4bce-b9db-124bc64209bf
    # ...
networking:
  machineNetwork:
  - cidr: 192.0.2.0/24
```

You cannot set the platform.openstack.externalNetwork or platform.openstack.externalDNS parameters while using a provider network for the primary network interface.
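The provider-network rules in this procedure can be checked locally against a parsed install-config.yaml, represented here as a plain dict. The following Python sketch verifies that the VIPs fall inside networking.machineNetwork, that machinesSubnet is set, and that externalNetwork and externalDNS are absent. Whether an address is actually unassigned can only be confirmed by querying Neutron, so the sketch does not check that; it also assumes, as an additional rule, that the API and Ingress VIPs must differ. The function name is hypothetical.

```python
from __future__ import annotations

import ipaddress


def provider_network_problems(config: dict) -> list[str]:
    """List violations of the provider-network rules in this section (empty list means OK)."""
    problems: list[str] = []
    osp = config.get("platform", {}).get("openstack", {})
    machine_nets = [
        ipaddress.ip_network(entry["cidr"])
        for entry in config.get("networking", {}).get("machineNetwork", [])
    ]
    vips = list(osp.get("apiVIPs", [])) + list(osp.get("ingressVIPs", []))
    # Assumption: the API and Ingress VIPs must not reuse the same address.
    if len(set(vips)) != len(vips):
        problems.append("apiVIPs and ingressVIPs must use different addresses")
    for vip in vips:
        if not any(ipaddress.ip_address(vip) in net for net in machine_nets):
            problems.append(f"VIP {vip} is outside networking.machineNetwork")
    if "machinesSubnet" not in osp:
        problems.append("platform.openstack.machinesSubnet is not set")
    for key in ("externalNetwork", "externalDNS"):
        if key in osp:
            problems.append(f"platform.openstack.{key} cannot be set with a provider network")
    return problems
```

Run against the example section above, the function returns an empty list.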

When you deploy the cluster, the installer uses the install-config.yaml file to deploy the cluster on the provider network.

You can add additional networks, including provider networks, to the platform.openstack.additionalNetworkIDs list.

After you deploy your cluster, you can attach pods to additional networks. For more information, see Understanding multiple networks.

4.11.9. Kuryr ports pools

A Kuryr ports pool maintains a number of ports on standby for pod creation.

Keeping ports on standby minimizes pod creation time. Without ports pools, Kuryr must explicitly request port creation or deletion whenever a pod is created or deleted.

The Neutron ports that Kuryr uses are created in subnets that are tied to namespaces. These pod ports are also added as subports to the primary port of OpenShift Container Platform cluster nodes.

Because Kuryr keeps each namespace in a separate subnet, a separate ports pool is maintained for each namespace-worker pair.

Prior to installing a cluster, you can set the following parameters in the cluster-network-03-config.yml manifest file to configure ports pool behavior:

- The enablePortPoolsPrepopulation parameter controls pool prepopulation, which forces Kuryr to add Neutron ports to the pools when the first pod that is configured to use the dedicated network for pods is created in a namespace. The default value is false.

- The poolMinPorts parameter is the minimum number of free ports that are kept in the pool. The default value is 1.

- The poolMaxPorts parameter is the maximum number of free ports that are kept in the pool. A value of 0 disables that upper bound. This is the default setting.

  If your OpenStack port quota is low, or you have a limited number of IP addresses on the pod network, consider setting this option to ensure that unneeded ports are deleted.

- The poolBatchPorts parameter defines the maximum number of Neutron ports that can be created at once. The default value is 3.
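The way these parameters interact can be modeled roughly as one reconcile step per pool: below poolMinPorts, create a batch; above a nonzero poolMaxPorts, delete the excess. The following Python function is a simplified, hypothetical model built only from the parameter descriptions in this section. It is not Kuryr's implementation and omits timing, quota handling, and prepopulation.

```python
def reconcile_pool(free: int, pool_min: int = 1, pool_batch: int = 3, pool_max: int = 0) -> int:
    """Return the net port change for one pool: positive creates, negative deletes.

    Defaults mirror the documented defaults: poolMinPorts=1, poolBatchPorts=3,
    poolMaxPorts=0 (no upper bound).
    """
    if free < pool_min:
        # Too few free ports: create one batch of at most poolBatchPorts ports.
        return pool_batch
    if pool_max > 0 and free > pool_max:
        # Too many free ports: delete the excess down to poolMaxPorts.
        return -(free - pool_max)
    return 0
```

For example, an empty pool with the default settings gets 3 new ports, and a pool holding 8 free ports with poolMaxPorts set to 5 loses 3 ports. With poolMaxPorts left at 0, a large pool never shrinks, which is why the section suggests setting it when your port quota is low.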

4.11.10. Adjusting Kuryr ports pools during installation

During installation, you can configure how Kuryr manages Red Hat OpenStack Platform (RHOSP) Neutron ports to control the speed and efficiency of pod creation.

Prerequisites

- Create and modify the install-config.yaml file.

Procedure

From a command line, create the manifest files:

```console
$ ./openshift-install create manifests --dir <installation_directory> # (1)
```

(1) For <installation_directory>, specify the name of the directory that contains the install-config.yaml file for your cluster.

Create a file that is named cluster-network-03-config.yml in the <installation_directory>/manifests/ directory:

```console
$ touch <installation_directory>/manifests/cluster-network-03-config.yml # (1)
```

(1) For <installation_directory>, specify the directory name that contains the manifests/ directory for your cluster.

After creating the file, several network configuration files are in the manifests/ directory, as shown:

```console
$ ls <installation_directory>/manifests/cluster-network-*
```

Example output

```
cluster-network-01-crd.yml
cluster-network-02-config.yml
cluster-network-03-config.yml
```

Open the cluster-network-03-config.yml file in an editor, and enter a custom resource (CR) that describes the Cluster Network Operator configuration that you want:

```console
$ oc edit networks.operator.openshift.io cluster
```

Edit the settings to meet your requirements. The following file is provided as an example:

```yaml
apiVersion: operator.openshift.io/v1
kind: Network
metadata:
  name: cluster
spec:
  clusterNetwork:
  - cidr: 10.128.0.0/14
    hostPrefix: 23
  serviceNetwork:
  - 172.30.0.0/16
  defaultNetwork:
    type: Kuryr
    kuryrConfig:
      enablePortPoolsPrepopulation: false # (1)
      poolMinPorts: 1 # (2)
      poolBatchPorts: 3 # (3)
      poolMaxPorts: 5 # (4)
      openStackServiceNetwork: 172.30.0.0/15 # (5)
```

(1) Set enablePortPoolsPrepopulation to true to make Kuryr create new Neutron ports when the first pod on the network for pods is created in a namespace. This setting increases the number of Neutron ports that count against your quota, but it can reduce the time that is required to spawn pods. The default value is false.

(2) Kuryr creates new ports for a pool if the number of free ports in that pool is lower than the value of poolMinPorts. The default value is 1.

(3) poolBatchPorts controls the number of new ports that are created if the number of free ports is lower than the value of poolMinPorts. The default value is 3.

(4) If the number of free ports in a pool is higher than the value of poolMaxPorts, Kuryr deletes free ports until the number matches that value. Setting this value to 0 disables this upper bound, preventing pools from shrinking. The default value is 0.

(5) The openStackServiceNetwork parameter defines the CIDR range of the network from which IP addresses are allocated to RHOSP Octavia load balancers.

If this parameter is used with the Amphora driver, Octavia takes two IP addresses from this network for each load balancer: one for OpenShift and the other for VRRP connections. Because these IP addresses are managed by OpenShift Container Platform and Neutron respectively, they must come from different pools. Therefore, the value of openStackServiceNetwork must be at least twice the size of the value of serviceNetwork, and the value of serviceNetwork must overlap entirely with the range that is defined by openStackServiceNetwork.

The CNO verifies that VRRP IP addresses that are taken from the range that is defined by this parameter do not overlap with the range that is defined by the serviceNetwork parameter.

If this parameter is not set, the CNO uses an expanded value of serviceNetwork that is determined by decrementing the prefix size by 1.

- Save the cluster-network-03-config.yml file, and exit the text editor.

- Optional: Back up the manifests/cluster-network-03-config.yml file. The installation program deletes the manifests/ directory while creating the cluster.
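The openStackServiceNetwork rules described above (it must contain serviceNetwork entirely, be at least twice its size, and default to serviceNetwork with the prefix length decremented by 1) can be checked before you save the manifest. The following Python sketch validates a value and returns the part of the range that lies outside serviceNetwork, which is where non-overlapping VRRP addresses would have to come from. It is a local approximation of the checks described here, not the Cluster Network Operator's code, and the function name is hypothetical.

```python
from __future__ import annotations

import ipaddress


def vrrp_ranges(service_cidr: str, openstack_service_cidr: str | None = None) -> list[str]:
    """Validate openStackServiceNetwork and return the ranges left over for VRRP ports."""
    service = ipaddress.ip_network(service_cidr)
    if openstack_service_cidr is None:
        # Documented default: serviceNetwork with its prefix length decremented by 1.
        osn = service.supernet(prefixlen_diff=1)
    else:
        osn = ipaddress.ip_network(openstack_service_cidr)
    if not service.subnet_of(osn):
        raise ValueError("serviceNetwork must lie entirely inside openStackServiceNetwork")
    if osn.num_addresses < 2 * service.num_addresses:
        raise ValueError("openStackServiceNetwork must be at least twice the size of serviceNetwork")
    # Everything in openStackServiceNetwork outside serviceNetwork cannot overlap it.
    return [str(n) for n in sorted(osn.address_exclude(service))]
```

With the example values, vrrp_ranges("172.30.0.0/16", "172.30.0.0/15") leaves 172.31.0.0/16 for VRRP addresses, and omitting the second argument produces the same result through the default expansion.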

4.12. Generating a key pair for cluster node SSH access

During an OpenShift Container Platform installation, you can provide an SSH public key to the installation program. The key is passed to the Red Hat Enterprise Linux CoreOS (RHCOS) nodes through their Ignition config files and is used to authenticate SSH access to the nodes. The key is added to the ~/.ssh/authorized_keys list for the core user on each node, which enables password-less authentication.

After the key is passed to the nodes, you can use the key pair to SSH in to the RHCOS nodes as the user core. To access the nodes through SSH, the private key identity must be managed by SSH for your local user.

If you want to SSH in to your cluster nodes to perform installation debugging or disaster recovery, you must provide the SSH public key during the installation process. The ./openshift-install gather command also requires the SSH public key to be in place on the cluster nodes.

Do not skip this procedure in production environments, where disaster recovery and debugging are required.

Procedure

If you do not have an existing SSH key pair on your local machine to use for authentication onto your cluster nodes, create one. For example, on a computer that uses a Linux operating system, run the following command:

$ ssh-keygen -t ed25519 -N '' -f <path>/<file_name>

Specify the path and file name, such as ~/.ssh/id_ed25519, of the new SSH key. If you have an existing key pair, ensure your public key is in your ~/.ssh directory.

Note: If you plan to install an OpenShift Container Platform cluster that uses FIPS validated or Modules In Process cryptographic libraries on the x86_64, ppc64le, and s390x architectures, do not create a key that uses the ed25519 algorithm. Instead, create a key that uses the rsa or ecdsa algorithm.

View the public SSH key:

$ cat <path>/<file_name>.pub

For example, run the following to view the ~/.ssh/id_ed25519.pub public key:

$ cat ~/.ssh/id_ed25519.pub

Add the SSH private key identity to the SSH agent for your local user, if it has not already been added. SSH agent management of the key is required for password-less SSH authentication onto your cluster nodes, or if you want to use the ./openshift-install gather command.

Note: On some distributions, default SSH private key identities such as ~/.ssh/id_rsa and ~/.ssh/id_dsa are managed automatically.

If the ssh-agent process is not already running for your local user, start it as a background task:

$ eval "$(ssh-agent -s)"

Example output

Agent pid 31874

Note: If your cluster is in FIPS mode, only use FIPS-compliant algorithms to generate the SSH key. The key must be either RSA or ECDSA.

Add your SSH private key to the ssh-agent:

$ ssh-add <path>/<file_name>

Specify the path and file name for your SSH private key, such as ~/.ssh/id_ed25519.

Example output

Identity added: /home/<you>/<path>/<file_name> (<computer_name>)
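For a cluster that runs in FIPS mode, the notes in this procedure rule out the ed25519 algorithm. The following bash sketch generates an RSA key pair instead and prints the public key that you provide to the installation program. The function name, the default file name id_rsa, and the 4096-bit key size are illustrative choices, not requirements stated in this procedure.

```bash
#!/usr/bin/env bash
# Hypothetical helper: create an RSA SSH key pair for a FIPS-mode cluster
# (ed25519 is not used) and print the public key.
make_fips_ssh_key() {
  local key_dir=$1
  local key_name=${2:-id_rsa}
  mkdir -p "$key_dir"
  chmod 700 "$key_dir"
  # Do not silently replace an existing private key.
  if [ -e "$key_dir/$key_name" ]; then
    echo "refusing to overwrite $key_dir/$key_name" >&2
    return 1
  fi
  # -N '' creates the key without a passphrase, as in the procedure above.
  ssh-keygen -q -t rsa -b 4096 -N '' -f "$key_dir/$key_name" || return 1
  cat "$key_dir/$key_name.pub"
}
```

For example, make_fips_ssh_key ~/.ssh id_rsa_ocp creates ~/.ssh/id_rsa_ocp and ~/.ssh/id_rsa_ocp.pub; you then add the private key with ssh-add ~/.ssh/id_rsa_ocp as described above.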

Next steps

- When you install OpenShift Container Platform, provide the SSH public key to the installation program.

4.13. Enabling access to the environment

At deployment, all OpenShift Container Platform machines are created in a Red Hat OpenStack Platform (RHOSP) tenant network. Therefore, they are not accessible directly in most RHOSP deployments.

You can configure OpenShift Container Platform API and application access by using floating IP addresses (FIPs) during installation. You can also complete an installation without configuring FIPs, but the installation program does not configure a way to reach the API or applications externally.

4.13.1. Enabling access with floating IP addresses

Create floating IP (FIP) addresses for external access to the OpenShift Container Platform API and cluster applications.

Procedure

Using the Red Hat OpenStack Platform (RHOSP) CLI, create the API FIP:

$ openstack floating ip create --description "API <cluster_name>.<base_domain>" <external_network>

Using the Red Hat OpenStack Platform (RHOSP) CLI, create the apps, or Ingress, FIP:

$ openstack floating ip create --description "Ingress <cluster_name>.<base_domain>" <external_network>

Add records that follow these patterns to your DNS server for the API and Ingress FIPs:

api.<cluster_name>.<base_domain>.  IN  A  <API_FIP>
*.apps.<cluster_name>.<base_domain>.  IN  A  <apps_FIP>

Note: If you do not control the DNS server, you can access the cluster by adding the cluster domain names such as the following to your /etc/hosts file:

<api_floating_ip> api.<cluster_name>.<base_domain>
<application_floating_ip> grafana-openshift-monitoring.apps.<cluster_name>.<base_domain>
<application_floating_ip> prometheus-k8s-openshift-monitoring.apps.<cluster_name>.<base_domain>
<application_floating_ip> oauth-openshift.apps.<cluster_name>.<base_domain>
<application_floating_ip> console-openshift-console.apps.<cluster_name>.<base_domain>
<application_floating_ip> integrated-oauth-server-openshift-authentication.apps.<cluster_name>.<base_domain>

The cluster domain names in the /etc/hosts file grant access to the web console and the monitoring interface of your cluster locally. With these entries, you can also use the kubectl or oc CLI. You can access the user applications by adding entries that point to the <application_floating_ip>. This action makes the API and applications accessible to only you, which is not suitable for production deployment but does allow installation for development and testing.

Add the FIPs to the install-config.yaml file as the values of the following parameters:

- platform.openstack.ingressFloatingIP

- platform.openstack.apiFloatingIP

If you use these values, you must also enter an external network as the value of the platform.openstack.externalNetwork parameter in the install-config.yaml file.
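The dotted parameter names above correspond to nested keys in install-config.yaml. The following bash sketch prints that fragment from three values so that you can merge it into the platform.openstack section of your file. The function name and argument order are hypothetical, and the output is only a fragment, not a complete install-config.yaml.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the platform.openstack keys that carry the FIPs.
# Arguments: external network name, API FIP, Ingress FIP.
print_fip_config() {
  local external_network=$1 api_fip=$2 ingress_fip=$3
  # The here-document keeps its indentation, which YAML uses for nesting.
  cat <<EOF
platform:
  openstack:
    externalNetwork: ${external_network}
    apiFloatingIP: ${api_fip}
    ingressFloatingIP: ${ingress_fip}
EOF
}
```

For example, print_fip_config <external_network> <API_FIP> <apps_FIP> prints the three keys nested under platform.openstack.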

You can make OpenShift Container Platform resources available outside of the cluster by assigning a floating IP address and updating your firewall configuration.
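If you use the /etc/hosts approach from the note in this procedure, the entries follow a fixed pattern. The following bash sketch prints them from the cluster name, the base domain, and the two FIPs. The function name is hypothetical, and appending the output to /etc/hosts is left to you.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the /etc/hosts entries listed in the note above.
# Arguments: cluster name, base domain, API FIP, application (Ingress) FIP.
print_hosts_entries() {
  local cluster=$1 domain=$2 api_fip=$3 apps_fip=$4
  local apps="apps.${cluster}.${domain}"
  printf '%s %s\n' "$api_fip" "api.${cluster}.${domain}"
  local host
  # Each application route resolves to the Ingress FIP.
  for host in \
    grafana-openshift-monitoring \
    prometheus-k8s-openshift-monitoring \
    oauth-openshift \
    console-openshift-console \
    integrated-oauth-server-openshift-authentication; do
    printf '%s %s\n' "$apps_fip" "${host}.${apps}"
  done
}
```

For example, print_hosts_entries <cluster_name> <base_domain> <api_floating_ip> <application_floating_ip> | sudo tee -a /etc/hosts appends all six entries.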

4.13.2. Completing installation without floating IP addresses

You can install OpenShift Container Platform on Red Hat OpenStack Platform (RHOSP) without providing floating IP addresses.

In the install-config.yaml file, do not define the following parameters:

- platform.openstack.ingressFloatingIP

- platform.openstack.apiFloatingIP

If you cannot provide an external network, you can also leave platform.openstack.externalNetwork blank. If you do not provide a value for platform.openstack.externalNetwork, a router is not created for you, and, without additional action, the installer will fail to retrieve an image from Glance. You must configure external connectivity on your own.

If you run the installer from a system that cannot reach the cluster API due to a lack of floating IP addresses or name resolution, installation fails. To prevent installation failure in these cases, you can use a proxy network or run the installer from a system that is on the same network as your machines.

You can enable name resolution by creating DNS records for the API and Ingress ports. For example:

api.<cluster_name>.<base_domain>. IN A <api_port_IP>

*.apps.<cluster_name>.<base_domain>. IN A <ingress_port_IP>

If you do not control the DNS server, you can add the records to your /etc/hosts file. This action makes the API accessible to only you, which is not suitable for production deployment but does allow installation for development and testing.
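Before you run the installation program in this scenario, you can check that install-config.yaml does not define the floating IP parameters. The following bash sketch is a hypothetical pre-flight check; it matches the keys with grep rather than parsing YAML, so it assumes they appear as ordinary apiFloatingIP: and ingressFloatingIP: lines.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight check: fail if install-config.yaml still sets the
# FIP parameters that must be omitted when installing without floating IPs.
# Exit status: 0 = OK, 1 = FIP parameters found, 2 = file missing.
check_no_fips() {
  local config=$1
  if [ ! -f "$config" ]; then
    echo "not found: $config" >&2
    return 2
  fi
  local found
  found=$(grep -nE '^[[:space:]]*(apiFloatingIP|ingressFloatingIP):' "$config" || true)
  if [ -n "$found" ]; then
    echo "remove these parameters for an installation without FIPs:" >&2
    echo "$found" >&2
    return 1
  fi
  return 0
}
```

For example, check_no_fips <installation_directory>/install-config.yaml reports the line numbers of any apiFloatingIP or ingressFloatingIP entries that remain.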

4.14. Deploying the cluster

You can install OpenShift Container Platform on a compatible cloud platform.

You can run the create cluster command of the installation program only once, during initial installation.

Prerequisites

- Obtain the OpenShift Container Platform installation program and the pull secret for your cluster.

- Verify the cloud provider account on your host has the correct permissions to deploy the cluster. An account with incorrect permissions causes the installation process to fail with an error message that displays the missing permissions.

Procedure

Change to the directory that contains the installation program and initialize the cluster deployment:

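$ ./openshift-install create cluster --dir <installation_directory> \
    --log-level=info

For <installation_directory>, specify the location of your customized ./install-config.yaml file. To view different installation details, specify warn, debug, or error instead of info.

Note: If the cloud provider account that you configured on your host does not have sufficient permissions to deploy the cluster, the installation process stops, and the missing permissions are displayed.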

Verification

When the cluster deployment completes successfully:

- The terminal displays directions for accessing your cluster, including a link to the web console and credentials for the kubeadmin user.

- Credential information also outputs to <installation_directory>/.openshift_install.log.

Do not delete the installation program or the files that the installation program creates. Both are required to delete the cluster.

Example output

...

INFO Install complete!

INFO To access the cluster as the system:admin user when using 'oc', run 'export KUBECONFIG=/home/myuser/install_dir/auth/kubeconfig'

INFO Access the OpenShift web-console here: https://console-openshift-console.apps.mycluster.example.com

INFO Login to the console with user: "kubeadmin", and password: "password"

INFO Time elapsed: 36m22s
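Because the same information is written to <installation_directory>/.openshift_install.log, you can recover the web console URL from that file later. The following bash sketch does this; the function name is hypothetical, and the pattern assumes the console route uses the console-openshift-console.apps. prefix shown in the example output.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the web console URL recorded in the install log.
console_url_from_log() {
  local log_file=$1
  # Log lines may wrap the message in quotes, so stop at a quote or space.
  # If the URL appears more than once, keep the last occurrence.
  grep -oE 'https://console-openshift-console\.apps\.[^" ]+' "$log_file" | tail -n 1
}
```

For example, console_url_from_log <installation_directory>/.openshift_install.log prints a URL such as https://console-openshift-console.apps.mycluster.example.com.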

- The Ignition config files that the installation program generates contain certificates that expire after 24 hours, which are then renewed at that time. If the cluster is shut down before renewing the certificates and the cluster is later restarted after the 24 hours have elapsed, the cluster automatically recovers the expired certificates. The exception is that you must manually approve the pending node-bootstrapper certificate signing requests (CSRs) to recover kubelet certificates. See the documentation for Recovering from expired control plane certificates for more information.

- It is recommended that you use Ignition config files within 12 hours after they are generated because the 24-hour certificate rotates from 16 to 22 hours after the cluster is installed. By using the Ignition config files within 12 hours, you can avoid installation failure if the certificate update runs during installation.
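For the manual CSR approval mentioned in the first note, the following bash sketch lists certificate signing requests that have no status yet (neither approved nor denied) and approves each one with oc adm certificate approve. It approves every pending CSR, not only node-bootstrapper requests, so review the output of oc get csr before you run it. The function name is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: approve every pending CSR in the cluster.
# Requires KUBECONFIG to point at the cluster's admin kubeconfig.
approve_pending_csrs() {
  local csr
  # The go-template prints the name of each CSR whose .status is still empty.
  oc get csr -o go-template='{{range .items}}{{if not .status}}{{.metadata.name}}{{"\n"}}{{end}}{{end}}' |
    while read -r csr; do
      if [ -n "$csr" ]; then
        oc adm certificate approve "$csr"
      fi
    done
}
```

New CSRs can appear after the first batch is approved, so you might need to run the function more than once.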

4.15. Verifying cluster status

You can verify your OpenShift Container Platform cluster’s status during or after installation.

Procedure

In the cluster environment, export the administrator’s kubeconfig file:

$ export KUBECONFIG=<installation_directory>/auth/kubeconfig

For <installation_directory>, specify the path to the directory that you stored the installation files in.

The kubeconfig file contains information about the cluster that is used by the CLI to connect a client to the correct cluster and API server.

View the control plane and compute machines created after a deployment:

$ oc get nodes

View your cluster’s version:

$ oc get clusterversion

View your Operators' status:

$ oc get clusteroperator

View all running pods in the cluster:

$ oc get pods -A
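To turn the Operator status check into a pass/fail result, the following bash sketch uses a JSONPath query to print each cluster Operator's Available and Degraded condition status and reports any Operator that is not Available=True and Degraded=False. The function name is hypothetical; run it after you export KUBECONFIG as in the first step of this procedure.

```bash
#!/usr/bin/env bash
# Hypothetical helper: report cluster Operators that are unavailable or degraded.
# Returns 0 when every Operator is Available=True and Degraded=False.
check_cluster_operators() {
  local query='{range .items[*]}{.metadata.name}{" "}{.status.conditions[?(@.type=="Available")].status}{" "}{.status.conditions[?(@.type=="Degraded")].status}{"\n"}{end}'
  oc get clusteroperators -o jsonpath="$query" |
    awk '$2 != "True" || $3 != "False" { print "not ready: " $1; bad = 1 }
         END { exit bad }'
}
```

During installation, some Operators are expected to report not ready for a while, so a failing result is most meaningful after the installation program reports that the installation is complete.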

4.16. Logging in to the cluster by using the CLI

You can log in to your cluster as a default system user by exporting the cluster kubeconfig file. The kubeconfig file contains information about the cluster that is used by the CLI to connect a client to the correct cluster and API server. The file is specific to a cluster and is created during OpenShift Container Platform installation.

Prerequisites

- You deployed an OpenShift Container Platform cluster.

- You installed the oc CLI.

Procedure

Export the kubeadmin credentials:

$ export KUBECONFIG=<installation_directory>/auth/kubeconfig

For <installation_directory>, specify the path to the directory that you stored the installation files in.

Verify you can run oc commands successfully using the exported configuration:

$ oc whoami

Example output

system:admin
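The export and oc whoami steps in this procedure can be combined into one check. In the following bash sketch, the function name is hypothetical; it exports KUBECONFIG only if the installer-generated file exists and then confirms that oc whoami reports system:admin, as in the example output. Call it directly in your shell, not in a subshell, so that the export persists.

```bash
#!/usr/bin/env bash
# Hypothetical helper: point oc at the installer-generated kubeconfig and
# confirm that the session is the system:admin user.
use_install_kubeconfig() {
  local install_dir=$1
  local kubeconfig="$install_dir/auth/kubeconfig"
  if [ ! -f "$kubeconfig" ]; then
    echo "no kubeconfig at $kubeconfig" >&2
    return 1
  fi
  export KUBECONFIG="$kubeconfig"
  local user
  user=$(oc whoami) || return 1
  if [ "$user" != "system:admin" ]; then
    echo "unexpected user: $user" >&2
    return 1
  fi
  echo "$user"
}
```

For example, use_install_kubeconfig <installation_directory> prints system:admin when the login works.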

4.17. Telemetry access for OpenShift Container Platform

In OpenShift Container Platform 4.12, the Telemetry service, which runs by default to provide metrics about cluster health and the success of updates, requires internet access. If your cluster is connected to the internet, Telemetry runs automatically, and your cluster is registered to OpenShift Cluster Manager Hybrid Cloud Console.

After you confirm that your OpenShift Cluster Manager Hybrid Cloud Console inventory is correct, either maintained automatically by Telemetry or manually by using OpenShift Cluster Manager, use subscription watch to track your OpenShift Container Platform subscriptions at the account or multi-cluster level.

4.18. Next steps

- Customize your cluster.

- Remote health reporting

- If you need to enable external access to node ports, configure ingress cluster traffic by using a node port.

- If you did not configure RHOSP to accept application traffic over floating IP addresses, configure RHOSP access with floating IP addresses.