Dieser Inhalt ist in der von Ihnen ausgewählten Sprache nicht verfügbar.

Chapter 19. Clusters at the network far edge

19.1. Challenges of the network far edge

Edge computing presents complex challenges when managing many sites in geographically displaced locations. Use zero touch provisioning (ZTP) and GitOps to provision and manage sites at the far edge of the network.

19.1.1. Overcoming the challenges of the network far edge

Today, service providers want to deploy their infrastructure at the edge of the network. This presents significant challenges:

- How do you handle deployments of many edge sites in parallel?

- What happens when you need to deploy sites in disconnected environments?

- How do you manage the lifecycle of large fleets of clusters?

Zero touch provisioning (ZTP) and GitOps meets these challenges by allowing you to provision remote edge sites at scale with declarative site definitions and configurations for bare-metal equipment. Template or overlay configurations install OpenShift Container Platform features that are required for CNF workloads. The full lifecycle of installation and upgrades is handled through the ZTP pipeline.

ZTP uses GitOps for infrastructure deployments. With GitOps, you use declarative YAML files and other defined patterns stored in Git repositories. Red Hat Advanced Cluster Management (RHACM) uses your Git repositories to drive the deployment of your infrastructure.

GitOps provides traceability, role-based access control (RBAC), and a single source of truth for the desired state of each site. Scalability issues are addressed by Git methodologies and event driven operations through webhooks.

You start the ZTP workflow by creating declarative site definition and configuration custom resources (CRs) that the ZTP pipeline delivers to the edge nodes.

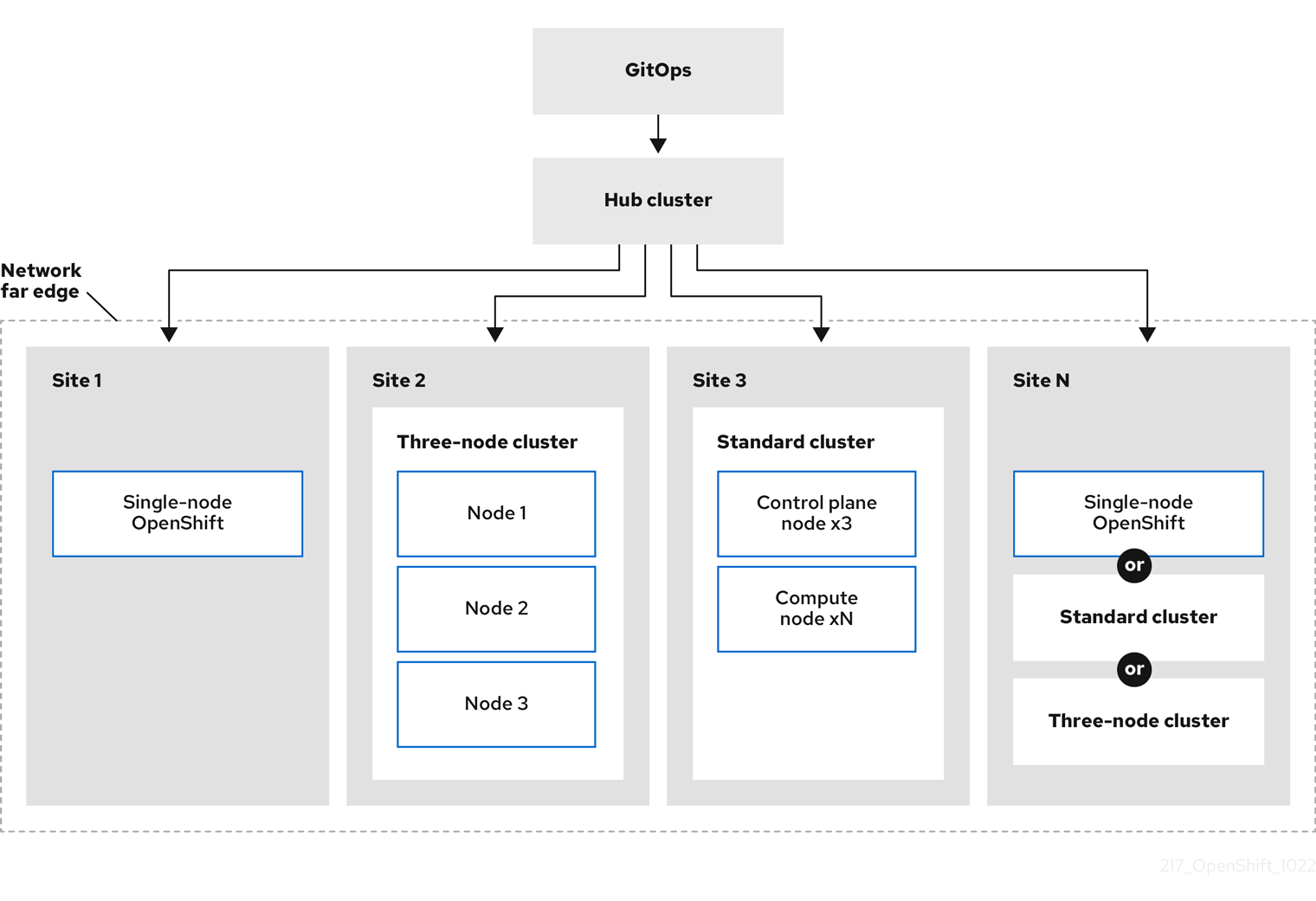

The following diagram shows how ZTP works within the far edge framework.

19.1.2. Using ZTP to provision clusters at the network far edge

Red Hat Advanced Cluster Management (RHACM) manages clusters in a hub-and-spoke architecture, where a single hub cluster manages many spoke clusters. Hub clusters running RHACM provision and deploy the managed clusters by using zero touch provisioning (ZTP) and the assisted service that is deployed when you install RHACM.

The assisted service handles provisioning of OpenShift Container Platform on single node clusters, three-node clusters, or standard clusters running on bare metal.

A high-level overview of using ZTP to provision and maintain bare-metal hosts with OpenShift Container Platform is as follows:

- A hub cluster running RHACM manages an OpenShift image registry that mirrors the OpenShift Container Platform release images. RHACM uses the OpenShift image registry to provision the managed clusters.

- You manage the bare-metal hosts in a YAML format inventory file, versioned in a Git repository.

- You make the hosts ready for provisioning as managed clusters, and use RHACM and the assisted service to install the bare-metal hosts on site.

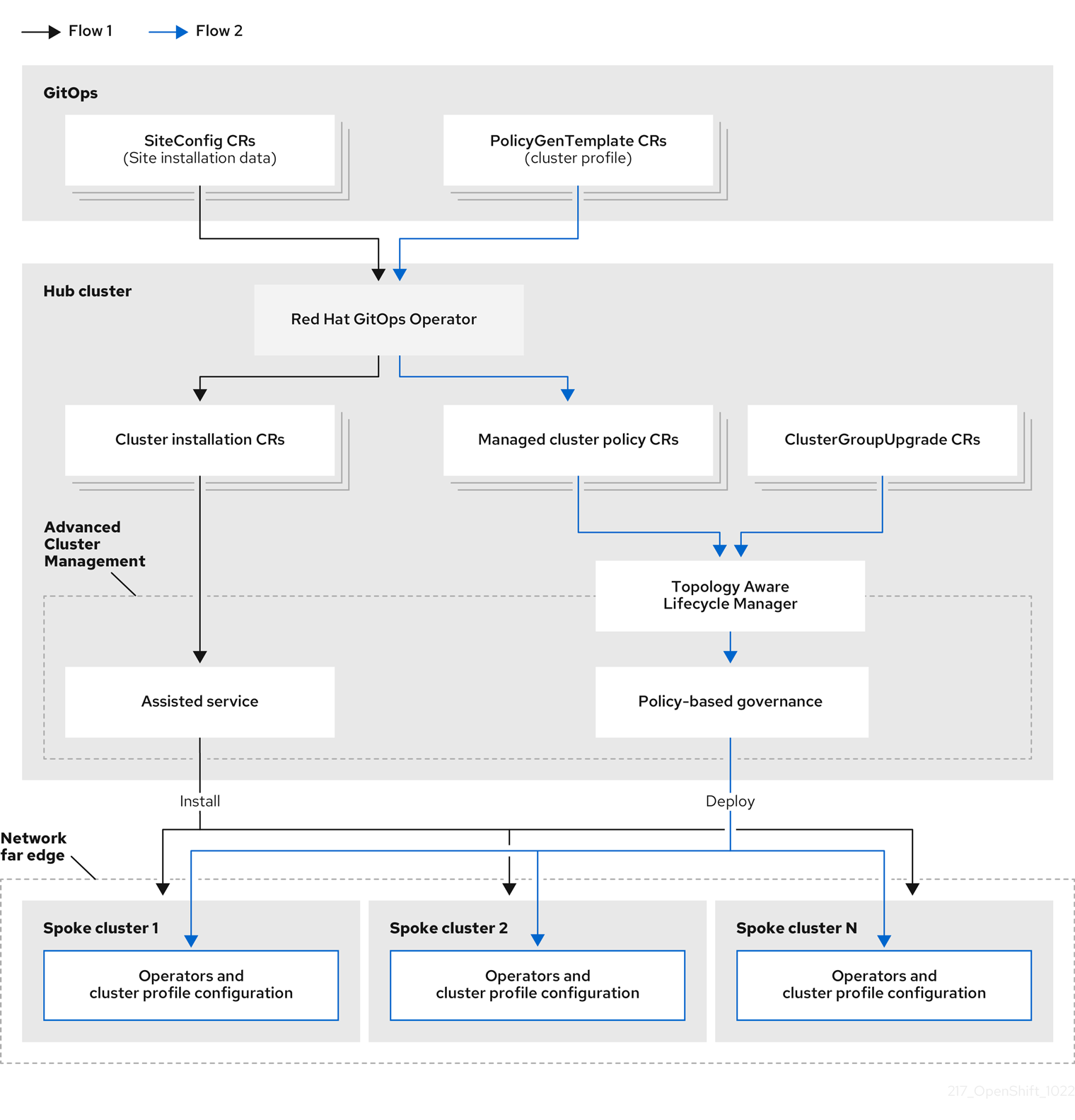

Installing and deploying the clusters is a two-stage process, involving an initial installation phase, and a subsequent configuration phase. The following diagram illustrates this workflow:

19.1.3. Installing managed clusters with SiteConfig resources and RHACM

GitOps ZTP uses SiteConfig custom resources (CRs) in a Git repository to manage the processes that install OpenShift Container Platform clusters. The SiteConfig CR contains cluster-specific parameters required for installation. It has options for applying select configuration CRs during installation including user defined extra manifests.

The ZTP GitOps plugin processes SiteConfig CRs to generate a collection of CRs on the hub cluster. This triggers the assisted service in Red Hat Advanced Cluster Management (RHACM) to install OpenShift Container Platform on the bare-metal host. You can find installation status and error messages in these CRs on the hub cluster.

You can provision single clusters manually or in batches with ZTP:

- Provisioning a single cluster

-

Create a single

SiteConfigCR and related installation and configuration CRs for the cluster, and apply them in the hub cluster to begin cluster provisioning. This is a good way to test your CRs before deploying on a larger scale. - Provisioning many clusters

-

Install managed clusters in batches of up to 400 by defining

SiteConfigand related CRs in a Git repository. ArgoCD uses theSiteConfigCRs to deploy the sites. The RHACM policy generator creates the manifests and applies them to the hub cluster. This starts the cluster provisioning process.

19.1.4. Configuring managed clusters with policies and PolicyGenTemplate resources

Zero touch provisioning (ZTP) uses Red Hat Advanced Cluster Management (RHACM) to configure clusters by using a policy-based governance approach to applying the configuration.

The policy generator or PolicyGen is a plugin for the GitOps Operator that enables the creation of RHACM policies from a concise template. The tool can combine multiple CRs into a single policy, and you can generate multiple policies that apply to various subsets of clusters in your fleet.

For scalability and to reduce the complexity of managing configurations across the fleet of clusters, use configuration CRs with as much commonality as possible.

- Where possible, apply configuration CRs using a fleet-wide common policy.

- The next preference is to create logical groupings of clusters to manage as much of the remaining configurations as possible under a group policy.

- When a configuration is unique to an individual site, use RHACM templating on the hub cluster to inject the site-specific data into a common or group policy. Alternatively, apply an individual site policy for the site.

The following diagram shows how the policy generator interacts with GitOps and RHACM in the configuration phase of cluster deployment.

For large fleets of clusters, it is typical for there to be a high-level of consistency in the configuration of those clusters.

The following recommended structuring of policies combines configuration CRs to meet several goals:

- Describe common configurations once and apply to the fleet.

- Minimize the number of maintained and managed policies.

- Support flexibility in common configurations for cluster variants.

| Policy category | Description |

|---|---|

| Common |

A policy that exists in the common category is applied to all clusters in the fleet. Use common |

| Groups |

A policy that exists in the groups category is applied to a group of clusters in the fleet. Use group |

| Sites | A policy that exists in the sites category is applied to a specific cluster site. Any cluster can have its own specific policies maintained. |

19.2. Preparing the hub cluster for ZTP

To use RHACM in a disconnected environment, create a mirror registry that mirrors the OpenShift Container Platform release images and Operator Lifecycle Manager (OLM) catalog that contains the required Operator images. OLM manages, installs, and upgrades Operators and their dependencies in the cluster. You can also use a disconnected mirror host to serve the RHCOS ISO and RootFS disk images that are used to provision the bare-metal hosts.

19.2.1. Telco RAN 4.12 validated solution software versions

The Red Hat Telco Radio Access Network (RAN) version 4.12 solution has been validated using the following Red Hat software products.

| Product | Software version |

|---|---|

| Hub cluster OpenShift Container Platform version | 4.12 |

| GitOps ZTP plugin | 4.10, 4.11, or 4.12 |

| Red Hat Advanced Cluster Management (RHACM) | 2.6, 2.7 |

| Red Hat OpenShift GitOps | 1.9, 1.10 |

| Topology Aware Lifecycle Manager (TALM) | 4.10, 4.11, or 4.12 |

19.2.2. Installing GitOps ZTP in a disconnected environment

Use Red Hat Advanced Cluster Management (RHACM), Red Hat OpenShift GitOps, and Topology Aware Lifecycle Manager (TALM) on the hub cluster in the disconnected environment to manage the deployment of multiple managed clusters.

Prerequisites

-

You have installed the OpenShift Container Platform CLI (

oc). -

You have logged in as a user with

cluster-adminprivileges. You have configured a disconnected mirror registry for use in the cluster.

NoteThe disconnected mirror registry that you create must contain a version of TALM backup and pre-cache images that matches the version of TALM running in the hub cluster. The spoke cluster must be able to resolve these images in the disconnected mirror registry.

Procedure

- Install RHACM in the hub cluster. See Installing RHACM in a disconnected environment.

- Install GitOps and TALM in the hub cluster.

19.2.3. Adding RHCOS ISO and RootFS images to the disconnected mirror host

Before you begin installing clusters in the disconnected environment with Red Hat Advanced Cluster Management (RHACM), you must first host Red Hat Enterprise Linux CoreOS (RHCOS) images for it to use. Use a disconnected mirror to host the RHCOS images.

Prerequisites

- Deploy and configure an HTTP server to host the RHCOS image resources on the network. You must be able to access the HTTP server from your computer, and from the machines that you create.

The RHCOS images might not change with every release of OpenShift Container Platform. You must download images with the highest version that is less than or equal to the version that you install. Use the image versions that match your OpenShift Container Platform version if they are available. You require ISO and RootFS images to install RHCOS on the hosts. RHCOS QCOW2 images are not supported for this installation type.

Procedure

- Log in to the mirror host.

Obtain the RHCOS ISO and RootFS images from mirror.openshift.com, for example:

Export the required image names and OpenShift Container Platform version as environment variables:

$ export ISO_IMAGE_NAME=<iso_image_name>1 $ export ROOTFS_IMAGE_NAME=<rootfs_image_name>1 $ export OCP_VERSION=<ocp_version>1 Download the required images:

$ sudo wget https://mirror.openshift.com/pub/openshift-v4/dependencies/rhcos/4.12/${OCP_VERSION}/${ISO_IMAGE_NAME} -O /var/www/html/${ISO_IMAGE_NAME}$ sudo wget https://mirror.openshift.com/pub/openshift-v4/dependencies/rhcos/4.12/${OCP_VERSION}/${ROOTFS_IMAGE_NAME} -O /var/www/html/${ROOTFS_IMAGE_NAME}

Verification steps

Verify that the images downloaded successfully and are being served on the disconnected mirror host, for example:

$ wget http://$(hostname)/${ISO_IMAGE_NAME}Example output

Saving to: rhcos-4.12.1-x86_64-live.x86_64.iso rhcos-4.12.1-x86_64-live.x86_64.iso- 11%[====> ] 10.01M 4.71MB/s

19.2.4. Enabling the assisted service

Red Hat Advanced Cluster Management (RHACM) uses the assisted service to deploy OpenShift Container Platform clusters. The assisted service is deployed automatically when you enable the MultiClusterHub Operator on Red Hat Advanced Cluster Management (RHACM). After that, you need to configure the Provisioning resource to watch all namespaces and to update the AgentServiceConfig custom resource (CR) with references to the ISO and RootFS images that are hosted on the mirror registry HTTP server.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges. - You have RHACM with MultiClusterHub enabled.

Procedure

-

Enable the

Provisioningresource to watch all namespaces and configure mirrors for disconnected environments. For more information, see Enabling the Central Infrastructure Management service. Update the

AgentServiceConfigCR by running the following command:$ oc edit AgentServiceConfigAdd the following entry to the

items.spec.osImagesfield in the CR:- cpuArchitecture: x86_64 openshiftVersion: "4.12" rootFSUrl: https://<host>/<path>/rhcos-live-rootfs.x86_64.img url: https://<host>/<path>/rhcos-live.x86_64.isowhere:

- <host>

- Is the fully qualified domain name (FQDN) for the target mirror registry HTTP server.

- <path>

- Is the path to the image on the target mirror registry.

Save and quit the editor to apply the changes.

19.2.5. Configuring the hub cluster to use a disconnected mirror registry

You can configure the hub cluster to use a disconnected mirror registry for a disconnected environment.

Prerequisites

- You have a disconnected hub cluster installation with Red Hat Advanced Cluster Management (RHACM) 2.7 installed.

-

You have hosted the

rootfsandisoimages on an HTTP server. See the Additional resources section for guidance about Mirroring the OpenShift Container Platform image repository.

If you enable TLS for the HTTP server, you must confirm the root certificate is signed by an authority trusted by the client and verify the trusted certificate chain between your OpenShift Container Platform hub and managed clusters and the HTTP server. Using a server configured with an untrusted certificate prevents the images from being downloaded to the image creation service. Using untrusted HTTPS servers is not supported.

Procedure

Create a

ConfigMapcontaining the mirror registry config:apiVersion: v1 kind: ConfigMap metadata: name: assisted-installer-mirror-config namespace: multicluster-engine1 labels: app: assisted-service data: ca-bundle.crt: <certificate>2 registries.conf: |3 unqualified-search-registries = ["registry.access.redhat.com", "docker.io"] [[registry]] location = <mirror_registry_url>4 insecure = false mirror-by-digest-only = true- 1

- The

ConfigMapnamespace must be set tomulticluster-engine. - 2

- The mirror registry’s certificate used when creating the mirror registry.

- 3

- The configuration file for the mirror registry. The mirror registry configuration adds mirror information to

/etc/containers/registries.confin the Discovery image. The mirror information is stored in theimageContentSourcessection of theinstall-config.yamlfile when passed to the installation program. The Assisted Service pod running on the HUB cluster fetches the container images from the configured mirror registry. - 4

- The URL of the mirror registry. You must use the URL from the

imageContentSourcessection by running theoc adm release mirrorcommand when you configure the mirror registry. For more information, see the Mirroring the OpenShift Container Platform image repository section.

This updates

mirrorRegistryRefin theAgentServiceConfigcustom resource, as shown below:Example output

apiVersion: agent-install.openshift.io/v1beta1 kind: AgentServiceConfig metadata: name: agent namespace: multicluster-engine1 spec: databaseStorage: volumeName: <db_pv_name> accessModes: - ReadWriteOnce resources: requests: storage: <db_storage_size> filesystemStorage: volumeName: <fs_pv_name> accessModes: - ReadWriteOnce resources: requests: storage: <fs_storage_size> mirrorRegistryRef: name: assisted-installer-mirror-config2 osImages: - openshiftVersion: <ocp_version> url: <iso_url>3

A valid NTP server is required during cluster installation. Ensure that a suitable NTP server is available and can be reached from the installed clusters through the disconnected network.

19.2.6. Configuring the hub cluster to use unauthenticated registries

You can configure the hub cluster to use unauthenticated registries. Unauthenticated registries does not require authentication to access and download images.

Prerequisites

- You have installed and configured a hub cluster and installed Red Hat Advanced Cluster Management (RHACM) on the hub cluster.

- You have installed the OpenShift Container Platform CLI (oc).

-

You have logged in as a user with

cluster-adminprivileges. - You have configured an unauthenticated registry for use with the hub cluster.

Procedure

Update the

AgentServiceConfigcustom resource (CR) by running the following command:$ oc edit AgentServiceConfig agentAdd the

unauthenticatedRegistriesfield in the CR:apiVersion: agent-install.openshift.io/v1beta1 kind: AgentServiceConfig metadata: name: agent spec: unauthenticatedRegistries: - example.registry.com - example.registry2.com ...Unauthenticated registries are listed under

spec.unauthenticatedRegistriesin theAgentServiceConfigresource. Any registry on this list is not required to have an entry in the pull secret used for the spoke cluster installation.assisted-servicevalidates the pull secret by making sure it contains the authentication information for every image registry used for installation.

Mirror registries are automatically added to the ignore list and do not need to be added under spec.unauthenticatedRegistries. Specifying the PUBLIC_CONTAINER_REGISTRIES environment variable in the ConfigMap overrides the default values with the specified value. The PUBLIC_CONTAINER_REGISTRIES defaults are quay.io and registry.svc.ci.openshift.org.

Verification

Verify that you can access the newly added registry from the hub cluster by running the following commands:

Open a debug shell prompt to the hub cluster:

$ oc debug node/<node_name>Test access to the unauthenticated registry by running the following command:

sh-4.4# podman login -u kubeadmin -p $(oc whoami -t) <unauthenticated_registry>where:

- <unauthenticated_registry>

-

Is the new registry, for example,

unauthenticated-image-registry.openshift-image-registry.svc:5000.

Example output

Login Succeeded!

19.2.7. Configuring the hub cluster with ArgoCD

You can configure the hub cluster with a set of ArgoCD applications that generate the required installation and policy custom resources (CRs) for each site with GitOps zero touch provisioning (ZTP).

Red Hat Advanced Cluster Management (RHACM) uses SiteConfig CRs to generate the Day 1 managed cluster installation CRs for ArgoCD. Each ArgoCD application can manage a maximum of 300 SiteConfig CRs.

Prerequisites

- You have a OpenShift Container Platform hub cluster with Red Hat Advanced Cluster Management (RHACM) and Red Hat OpenShift GitOps installed.

-

You have extracted the reference deployment from the ZTP GitOps plugin container as described in the "Preparing the GitOps ZTP site configuration repository" section. Extracting the reference deployment creates the

out/argocd/deploymentdirectory referenced in the following procedure.

Procedure

Prepare the ArgoCD pipeline configuration:

- Create a Git repository with the directory structure similar to the example directory. For more information, see "Preparing the GitOps ZTP site configuration repository".

Configure access to the repository using the ArgoCD UI. Under Settings configure the following:

-

Repositories - Add the connection information. The URL must end in

.git, for example,https://repo.example.com/repo.gitand credentials. - Certificates - Add the public certificate for the repository, if needed.

-

Repositories - Add the connection information. The URL must end in

Modify the two ArgoCD applications,

out/argocd/deployment/clusters-app.yamlandout/argocd/deployment/policies-app.yaml, based on your Git repository:-

Update the URL to point to the Git repository. The URL ends with

.git, for example,https://repo.example.com/repo.git. -

The

targetRevisionindicates which Git repository branch to monitor. -

pathspecifies the path to theSiteConfigandPolicyGenTemplateCRs, respectively.

-

Update the URL to point to the Git repository. The URL ends with

To install the ZTP GitOps plugin you must patch the ArgoCD instance in the hub cluster by using the patch file previously extracted into the

out/argocd/deployment/directory. Run the following command:$ oc patch argocd openshift-gitops \ -n openshift-gitops --type=merge \ --patch-file out/argocd/deployment/argocd-openshift-gitops-patch.jsonNoteFor a disconnected environment, amend the

out/argocd/deployment/argocd-openshift-gitops-patch.jsonfile with theztp-site-generateimage mirrored in your local registry. Run the following command:$ oc patch argocd openshift-gitops -n openshift-gitops --type='json' \ -p='[{"op": "replace", "path": "/spec/repo/initContainers/0/image", \ "value": "<local_registry>/<ztp_site_generate_image_ref>"}]'where:

- <local_registry>

-

Is the URL of the disconnected registry, for example,

my.local.registry:5000 - <ztp-site-generate-image-ref>

-

Is the path to the mirrored

ztp-site-generateimage in the local registry, for exampleopenshift4-ztp-site-generate:custom.

In RHACM 2.7 and later, the multicluster engine enables the

cluster-proxy-addonfeature by default. To disable this feature, apply the following patch to disable and remove the relevant hub cluster and managed cluster pods that are responsible for this add-on.$ oc patch multiclusterengines.multicluster.openshift.io multiclusterengine --type=merge --patch-file out/argocd/deployment/disable-cluster-proxy-addon.jsonApply the pipeline configuration to your hub cluster by using the following command:

$ oc apply -k out/argocd/deployment

19.2.8. Preparing the GitOps ZTP site configuration repository

Before you can use the ZTP GitOps pipeline, you need to prepare the Git repository to host the site configuration data.

Prerequisites

- You have configured the hub cluster GitOps applications for generating the required installation and policy custom resources (CRs).

- You have deployed the managed clusters using zero touch provisioning (ZTP).

Procedure

-

Create a directory structure with separate paths for the

SiteConfigandPolicyGenTemplateCRs. Export the

argocddirectory from theztp-site-generatecontainer image using the following commands:$ podman pull registry.redhat.io/openshift4/ztp-site-generate-rhel8:v4.12$ mkdir -p ./out$ podman run --log-driver=none --rm registry.redhat.io/openshift4/ztp-site-generate-rhel8:v4.12 extract /home/ztp --tar | tar x -C ./outCheck that the

outdirectory contains the following subdirectories:-

out/extra-manifestcontains the source CR files thatSiteConfiguses to generate extra manifestconfigMap. -

out/source-crscontains the source CR files thatPolicyGenTemplateuses to generate the Red Hat Advanced Cluster Management (RHACM) policies. -

out/argocd/deploymentcontains patches and YAML files to apply on the hub cluster for use in the next step of this procedure. -

out/argocd/examplecontains the examples forSiteConfigandPolicyGenTemplatefiles that represent the recommended configuration.

-

The directory structure under out/argocd/example serves as a reference for the structure and content of your Git repository. The example includes SiteConfig and PolicyGenTemplate reference CRs for single-node, three-node, and standard clusters. Remove references to cluster types that you are not using. The following example describes a set of CRs for a network of single-node clusters:

example

├── policygentemplates

│ ├── common-ranGen.yaml

│ ├── example-sno-site.yaml

│ ├── group-du-sno-ranGen.yaml

│ ├── group-du-sno-validator-ranGen.yaml

│ ├── kustomization.yaml

│ └── ns.yaml

└── siteconfig

├── example-sno.yaml

├── KlusterletAddonConfigOverride.yaml

└── kustomization.yaml

Keep SiteConfig and PolicyGenTemplate CRs in separate directories. Both the SiteConfig and PolicyGenTemplate directories must contain a kustomization.yaml file that explicitly includes the files in that directory.

This directory structure and the kustomization.yaml files must be committed and pushed to your Git repository. The initial push to Git should include the kustomization.yaml files. The SiteConfig (example-sno.yaml) and PolicyGenTemplate (common-ranGen.yaml, group-du-sno*.yaml, and example-sno-site.yaml) files can be omitted and pushed at a later time as required when deploying a site.

The KlusterletAddonConfigOverride.yaml file is only required if one or more SiteConfig CRs which make reference to it are committed and pushed to Git. See example-sno.yaml for an example of how this is used.

19.3. Installing managed clusters with RHACM and SiteConfig resources

You can provision OpenShift Container Platform clusters at scale with Red Hat Advanced Cluster Management (RHACM) using the assisted service and the GitOps plugin policy generator with core-reduction technology enabled. The zero touch priovisioning (ZTP) pipeline performs the cluster installations. ZTP can be used in a disconnected environment.

19.3.1. GitOps ZTP and Topology Aware Lifecycle Manager

GitOps zero touch provisioning (ZTP) generates installation and configuration CRs from manifests stored in Git. These artifacts are applied to a centralized hub cluster where Red Hat Advanced Cluster Management (RHACM), the assisted service, and the Topology Aware Lifecycle Manager (TALM) use the CRs to install and configure the managed cluster. The configuration phase of the ZTP pipeline uses the TALM to orchestrate the application of the configuration CRs to the cluster. There are several key integration points between GitOps ZTP and the TALM.

- Inform policies

-

By default, GitOps ZTP creates all policies with a remediation action of

inform. These policies cause RHACM to report on compliance status of clusters relevant to the policies but does not apply the desired configuration. During the ZTP process, after OpenShift installation, the TALM steps through the createdinformpolicies and enforces them on the target managed cluster(s). This applies the configuration to the managed cluster. Outside of the ZTP phase of the cluster lifecycle, this allows you to change policies without the risk of immediately rolling those changes out to affected managed clusters. You can control the timing and the set of remediated clusters by using TALM. - Automatic creation of ClusterGroupUpgrade CRs

To automate the initial configuration of newly deployed clusters, TALM monitors the state of all

ManagedClusterCRs on the hub cluster. AnyManagedClusterCR that does not have aztp-donelabel applied, including newly createdManagedClusterCRs, causes the TALM to automatically create aClusterGroupUpgradeCR with the following characteristics:-

The

ClusterGroupUpgradeCR is created and enabled in theztp-installnamespace. -

ClusterGroupUpgradeCR has the same name as theManagedClusterCR. -

The cluster selector includes only the cluster associated with that

ManagedClusterCR. -

The set of managed policies includes all policies that RHACM has bound to the cluster at the time the

ClusterGroupUpgradeis created. - Pre-caching is disabled.

- Timeout set to 4 hours (240 minutes).

The automatic creation of an enabled

ClusterGroupUpgradeensures that initial zero-touch deployment of clusters proceeds without the need for user intervention. Additionally, the automatic creation of aClusterGroupUpgradeCR for anyManagedClusterwithout theztp-donelabel allows a failed ZTP installation to be restarted by simply deleting theClusterGroupUpgradeCR for the cluster.-

The

- Waves

Each policy generated from a

PolicyGenTemplateCR includes aztp-deploy-waveannotation. This annotation is based on the same annotation from each CR which is included in that policy. The wave annotation is used to order the policies in the auto-generatedClusterGroupUpgradeCR. The wave annotation is not used other than for the auto-generatedClusterGroupUpgradeCR.NoteAll CRs in the same policy must have the same setting for the

ztp-deploy-waveannotation. The default value of this annotation for each CR can be overridden in thePolicyGenTemplate. The wave annotation in the source CR is used for determining and setting the policy wave annotation. This annotation is removed from each built CR which is included in the generated policy at runtime.The TALM applies the configuration policies in the order specified by the wave annotations. The TALM waits for each policy to be compliant before moving to the next policy. It is important to ensure that the wave annotation for each CR takes into account any prerequisites for those CRs to be applied to the cluster. For example, an Operator must be installed before or concurrently with the configuration for the Operator. Similarly, the

CatalogSourcefor an Operator must be installed in a wave before or concurrently with the Operator Subscription. The default wave value for each CR takes these prerequisites into account.NoteMultiple CRs and policies can share the same wave number. Having fewer policies can result in faster deployments and lower CPU usage. It is a best practice to group many CRs into relatively few waves.

To check the default wave value in each source CR, run the following command against the

out/source-crsdirectory that is extracted from theztp-site-generatecontainer image:$ grep -r "ztp-deploy-wave" out/source-crs- Phase labels

The

ClusterGroupUpgradeCR is automatically created and includes directives to annotate theManagedClusterCR with labels at the start and end of the ZTP process.When ZTP configuration postinstallation commences, the

ManagedClusterhas theztp-runninglabel applied. When all policies are remediated to the cluster and are fully compliant, these directives cause the TALM to remove theztp-runninglabel and apply theztp-donelabel.For deployments that make use of the

informDuValidatorpolicy, theztp-donelabel is applied when the cluster is fully ready for deployment of applications. This includes all reconciliation and resulting effects of the ZTP applied configuration CRs. Theztp-donelabel affects automaticClusterGroupUpgradeCR creation by TALM. Do not manipulate this label after the initial ZTP installation of the cluster.- Linked CRs

-

The automatically created

ClusterGroupUpgradeCR has the owner reference set as theManagedClusterfrom which it was derived. This reference ensures that deleting theManagedClusterCR causes the instance of theClusterGroupUpgradeto be deleted along with any supporting resources.

19.3.2. Overview of deploying managed clusters with ZTP

Red Hat Advanced Cluster Management (RHACM) uses zero touch provisioning (ZTP) to deploy single-node OpenShift Container Platform clusters, three-node clusters, and standard clusters. You manage site configuration data as OpenShift Container Platform custom resources (CRs) in a Git repository. ZTP uses a declarative GitOps approach for a develop once, deploy anywhere model to deploy the managed clusters.

The deployment of the clusters includes:

- Installing the host operating system (RHCOS) on a blank server

- Deploying OpenShift Container Platform

- Creating cluster policies and site subscriptions

- Making the necessary network configurations to the server operating system

- Deploying profile Operators and performing any needed software-related configuration, such as performance profile, PTP, and SR-IOV

Overview of the managed site installation process

After you apply the managed site custom resources (CRs) on the hub cluster, the following actions happen automatically:

- A Discovery image ISO file is generated and booted on the target host.

- When the ISO file successfully boots on the target host it reports the host hardware information to RHACM.

- After all hosts are discovered, OpenShift Container Platform is installed.

-

When OpenShift Container Platform finishes installing, the hub installs the

klusterletservice on the target cluster. - The requested add-on services are installed on the target cluster.

The Discovery image ISO process is complete when the Agent CR for the managed cluster is created on the hub cluster.

The target bare-metal host must meet the networking, firmware, and hardware requirements listed in Recommended single-node OpenShift cluster configuration for vDU application workloads.

19.3.3. Creating the managed bare-metal host secrets

Add the required Secret custom resources (CRs) for the managed bare-metal host to the hub cluster. You need a secret for the ZTP pipeline to access the Baseboard Management Controller (BMC) and a secret for the assisted installer service to pull cluster installation images from the registry.

The secrets are referenced from the SiteConfig CR by name. The namespace must match the SiteConfig namespace.

Procedure

Create a YAML secret file containing credentials for the host Baseboard Management Controller (BMC) and a pull secret required for installing OpenShift and all add-on cluster Operators:

Save the following YAML as the file

example-sno-secret.yaml:apiVersion: v1 kind: Secret metadata: name: example-sno-bmc-secret namespace: example-sno1 data:2 password: <base64_password> username: <base64_username> type: Opaque --- apiVersion: v1 kind: Secret metadata: name: pull-secret namespace: example-sno3 data: .dockerconfigjson: <pull_secret>4 type: kubernetes.io/dockerconfigjson

-

Add the relative path to

example-sno-secret.yamlto thekustomization.yamlfile that you use to install the cluster.

19.3.4. Configuring Discovery ISO kernel arguments for installations using GitOps ZTP

The GitOps ZTP workflow uses the Discovery ISO as part of the OpenShift Container Platform installation process on managed bare-metal hosts. You can edit the InfraEnv resource to specify kernel arguments for the Discovery ISO. This is useful for cluster installations with specific environmental requirements. For example, configure the rd.net.timeout.carrier kernel argument for the Discovery ISO to facilitate static networking for the cluster or to receive a DHCP address before downloading the root file system during installation.

In OpenShift Container Platform 4.12, you can only add kernel arguments. You can not replace or delete kernel arguments.

Prerequisites

- You have installed the OpenShift CLI (oc).

- You have logged in to the hub cluster as a user with cluster-admin privileges.

Procedure

Create the

InfraEnvCR and edit thespec.kernelArgumentsspecification to configure kernel arguments.Save the following YAML in an

InfraEnv-example.yamlfile:NoteThe

InfraEnvCR in this example uses template syntax such as{{ .Cluster.ClusterName }}that is populated based on values in theSiteConfigCR. TheSiteConfigCR automatically populates values for these templates during deployment. Do not edit the templates manually.apiVersion: agent-install.openshift.io/v1beta1 kind: InfraEnv metadata: annotations: argocd.argoproj.io/sync-wave: "1" name: "{{ .Cluster.ClusterName }}" namespace: "{{ .Cluster.ClusterName }}" spec: clusterRef: name: "{{ .Cluster.ClusterName }}" namespace: "{{ .Cluster.ClusterName }}" kernelArguments: - operation: append1 value: audit=02 - operation: append value: trace=1 sshAuthorizedKey: "{{ .Site.SshPublicKey }}" proxy: "{{ .Cluster.ProxySettings }}" pullSecretRef: name: "{{ .Site.PullSecretRef.Name }}" ignitionConfigOverride: "{{ .Cluster.IgnitionConfigOverride }}" nmStateConfigLabelSelector: matchLabels: nmstate-label: "{{ .Cluster.ClusterName }}" additionalNTPSources: "{{ .Cluster.AdditionalNTPSources }}"

Commit the

InfraEnv-example.yamlCR to the same location in your Git repository that has theSiteConfigCR and push your changes. The following example shows a sample Git repository structure:~/example-ztp/install └── site-install ├── siteconfig-example.yaml ├── InfraEnv-example.yaml ...Edit the

spec.clusters.crTemplatesspecification in theSiteConfigCR to reference theInfraEnv-example.yamlCR in your Git repository:clusters: crTemplates: InfraEnv: "InfraEnv-example.yaml"When you are ready to deploy your cluster by committing and pushing the

SiteConfigCR, the build pipeline uses the customInfraEnv-exampleCR in your Git repository to configure the infrastructure environment, including the custom kernel arguments.

Verification

To verify that the kernel arguments are applied, after the Discovery image verifies that OpenShift Container Platform is ready for installation, you can SSH to the target host before the installation process begins. At that point, you can view the kernel arguments for the Discovery ISO in the /proc/cmdline file.

Begin an SSH session with the target host:

$ ssh -i /path/to/privatekey core@<host_name>View the system’s kernel arguments by using the following command:

$ cat /proc/cmdline

19.3.5. Deploying a managed cluster with SiteConfig and ZTP

Use the following procedure to create a SiteConfig custom resource (CR) and related files and initiate the zero touch provisioning (ZTP) cluster deployment.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges. - You configured the hub cluster for generating the required installation and policy CRs.

You created a Git repository where you manage your custom site configuration data. The repository must be accessible from the hub cluster and you must configure it as a source repository for the ArgoCD application. See "Preparing the GitOps ZTP site configuration repository" for more information.

NoteWhen you create the source repository, ensure that you patch the ArgoCD application with the

argocd/deployment/argocd-openshift-gitops-patch.jsonpatch-file that you extract from theztp-site-generatecontainer. See "Configuring the hub cluster with ArgoCD".To be ready for provisioning managed clusters, you require the following for each bare-metal host:

- Network connectivity

- Your network requires DNS. Managed cluster hosts should be reachable from the hub cluster. Ensure that Layer 3 connectivity exists between the hub cluster and the managed cluster host.

- Baseboard Management Controller (BMC) details

-

ZTP uses BMC username and password details to connect to the BMC during cluster installation. The GitOps ZTP plugin manages the

ManagedClusterCRs on the hub cluster based on theSiteConfigCR in your site Git repo. You create individualBMCSecretCRs for each host manually.

Procedure

Create the required managed cluster secrets on the hub cluster. These resources must be in a namespace with a name matching the cluster name. For example, in

out/argocd/example/siteconfig/example-sno.yaml, the cluster name and namespace isexample-sno.Export the cluster namespace by running the following command:

$ export CLUSTERNS=example-snoCreate the namespace:

$ oc create namespace $CLUSTERNS

Create pull secret and BMC

SecretCRs for the managed cluster. The pull secret must contain all the credentials necessary for installing OpenShift Container Platform and all required Operators. See "Creating the managed bare-metal host secrets" for more information.NoteThe secrets are referenced from the

SiteConfigcustom resource (CR) by name. The namespace must match theSiteConfignamespace.Create a

SiteConfigCR for your cluster in your local clone of the Git repository:Choose the appropriate example for your CR from the

out/argocd/example/siteconfig/folder. The folder includes example files for single node, three-node, and standard clusters:-

example-sno.yaml -

example-3node.yaml -

example-standard.yaml

-

Change the cluster and host details in the example file to match the type of cluster you want. For example:

# example-node1-bmh-secret & assisted-deployment-pull-secret need to be created under same namespace example-sno --- apiVersion: ran.openshift.io/v1 kind: SiteConfig metadata: name: "example-sno" namespace: "example-sno" spec: baseDomain: "example.com" pullSecretRef: name: "assisted-deployment-pull-secret" clusterImageSetNameRef: "openshift-4.10" sshPublicKey: "ssh-rsa AAAA…" clusters: - clusterName: "example-sno" networkType: "OVNKubernetes" # installConfigOverrides is a generic way of passing install-config # parameters through the siteConfig. The 'capabilities' field configures # the composable openshift feature. In this 'capabilities' setting, we # remove all but the marketplace component from the optional set of # components. # Notes: # - OperatorLifecycleManager is needed for 4.15 and later # - NodeTuning is needed for 4.13 and later, not for 4.12 and earlier installConfigOverrides: | { "capabilities": { "baselineCapabilitySet": "None", "additionalEnabledCapabilities": [ "NodeTuning", "OperatorLifecycleManager" ] } } # It is strongly recommended to include crun manifests as part of the additional install-time manifests for 4.13+. # The crun manifests can be obtained from source-crs/optional-extra-manifest/ and added to the git repo ie.sno-extra-manifest. # extraManifestPath: sno-extra-manifest clusterLabels: # These example cluster labels correspond to the bindingRules in the PolicyGenTemplate examples du-profile: "latest" # These example cluster labels correspond to the bindingRules in the PolicyGenTemplate examples in ../policygentemplates: # ../policygentemplates/common-ranGen.yaml will apply to all clusters with 'common: true' common: true # ../policygentemplates/group-du-sno-ranGen.yaml will apply to all clusters with 'group-du-sno: ""' group-du-sno: "" # ../policygentemplates/example-sno-site.yaml will apply to all clusters with 'sites: "example-sno"' # Normally this should match or contain the cluster name so it only applies to a single cluster sites : "example-sno" clusterNetwork: - cidr: 1001:1::/48 hostPrefix: 64 machineNetwork: - cidr: 1111:2222:3333:4444::/64 serviceNetwork: - 1001:2::/112 additionalNTPSources: - 1111:2222:3333:4444::2 # Initiates the cluster for workload partitioning. Setting specific reserved/isolated CPUSets is done via PolicyTemplate # please see Workload Partitioning Feature for a complete guide. cpuPartitioningMode: AllNodes # Optionally; This can be used to override the KlusterletAddonConfig that is created for this cluster: crTemplates: # KlusterletAddonConfig: "KlusterletAddonConfigOverride.yaml" nodes: - hostName: "example-node1.example.com" role: "master" # Optionally; This can be used to configure desired BIOS setting on a host: #biosConfigRef: # filePath: "example-hw.profile" bmcAddress: "idrac-virtualmedia+https://[1111:2222:3333:4444::bbbb:1]/redfish/v1/Systems/System.Embedded.1" bmcCredentialsName: name: "example-node1-bmh-secret" bootMACAddress: "AA:BB:CC:DD:EE:11" # Use UEFISecureBoot to enable secure boot bootMode: "UEFI" rootDeviceHints: deviceName: "/dev/disk/by-path/pci-0000:01:00.0-scsi-0:2:0:0" # disk partition at

/var/lib/containerswith ignitionConfigOverride. Some values must be updated. See DiskPartitionContainer.md for more details ignitionConfigOverride: | { "ignition": { "version": "3.2.0" }, "storage": { "disks": [ { "device": "/dev/disk/by-path/pci-0000:01:00.0-scsi-0:2:0:0", "partitions": [ { "label": "var-lib-containers", "sizeMiB": 0, "startMiB": 250000 } ], "wipeTable": false } ], "filesystems": [ { "device": "/dev/disk/by-partlabel/var-lib-containers", "format": "xfs", "mountOptions": [ "defaults", "prjquota" ], "path": "/var/lib/containers", "wipeFilesystem": true } ] }, "systemd": { "units": [ { "contents": " Generated by Butane\n[Unit]\nRequires=systemd-fsck@dev-disk-by\\x2dpartlabel-var\\x2dlib\\x2dcontainers.service\nAfter=systemd-fsck@dev-disk-by\\x2dpartlabel-var\\x2dlib\\x2dcontainers.service\n\n[Mount]\nWhere=/var/lib/containers\nWhat=/dev/disk/by-partlabel/var-lib-containers\nType=xfs\nOptions=defaults,prjquota\n\n[Install]\nRequiredBy=local-fs.target", "enabled": true, "name": "var-lib-containers.mount" } ] } } nodeNetwork: interfaces: - name: eno1 macAddress: "AA:BB:CC:DD:EE:11" config: interfaces: - name: eno1 type: ethernet state: up ipv4: enabled: false ipv6: enabled: true address: # For SNO sites with static IP addresses, the node-specific, # API and Ingress IPs should all be the same and configured on # the interface - ip: 1111:2222:3333:4444::aaaa:1 prefix-length: 64 dns-resolver: config: search: - example.com server: - 1111:2222:3333:4444::2 routes: config: - destination: ::/0 next-hop-interface: eno1 next-hop-address: 1111:2222:3333:4444::1 table-id: 254+

NoteFor more information about BMC addressing, see the "Additional resources" section. The

installConfigOverridesandignitionConfigOverridefields are expanded in the example for ease of readability.-

You can inspect the default set of extra-manifest

MachineConfigCRs inout/argocd/extra-manifest. It is automatically applied to the cluster when it is installed. -

Optional: To provision additional install-time manifests on the provisioned cluster, create a directory in your Git repository, for example,

sno-extra-manifest/, and add your custom manifest CRs to this directory. If yourSiteConfig.yamlrefers to this directory in theextraManifestPathfield, any CRs in this referenced directory are appended to the default set of extra manifests.

-

Add the

SiteConfigCR to thekustomization.yamlfile in thegeneratorssection, similar to the example shown inout/argocd/example/siteconfig/kustomization.yaml. Commit the

SiteConfigCR and associatedkustomization.yamlchanges in your Git repository and push the changes.The ArgoCD pipeline detects the changes and begins the managed cluster deployment.

19.3.5.1. Single-node OpenShift SiteConfig CR installation reference

| SiteConfig CR field | Description |

|---|---|

|

|

Set |

|

|

Configure the image set available on the hub cluster for all the clusters in the site. To see the list of supported versions on your hub cluster, run |

|

|

Set the Important

Use the reference configuration as specified in the example |

|

|

Configure cluster labels to correspond to the |

|

|

Optional. Set |

|

|

For single-node deployments, define a single host. For three-node deployments, define three hosts. For standard deployments, define three hosts with |

|

| BMC address that you use to access the host. Applies to all cluster types. {ztp} supports iPXE and virtual media booting by using Redfish or IPMI protocols. To use iPXE booting, you must use RHACM 2.8 or later. For more information about BMC addressing, see the "Additional resources" section. |

|

| BMC address that you use to access the host. Applies to all cluster types. {ztp} supports iPXE and virtual media booting by using Redfish or IPMI protocols. To use iPXE booting, you must use RHACM 2.8 or later. For more information about BMC addressing, see the "Additional resources" section. Note In far edge Telco use cases, only virtual media is supported for use with {ztp}. |

|

|

Configure the |

|

|

Set the boot mode for the host to |

|

|

Specifies the device for deployment. Identifiers that are stable across reboots are recommended. For example, |

|

|

Configure |

|

| Configure the network settings for the node. |

|

| Configure the IPv6 address for the host. For single-node OpenShift clusters with static IP addresses, the node-specific API and Ingress IPs should be the same. |

19.3.6. Monitoring managed cluster installation progress

The ArgoCD pipeline uses the SiteConfig CR to generate the cluster configuration CRs and syncs it with the hub cluster. You can monitor the progress of the synchronization in the ArgoCD dashboard.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges.

Procedure

When the synchronization is complete, the installation generally proceeds as follows:

The Assisted Service Operator installs OpenShift Container Platform on the cluster. You can monitor the progress of cluster installation from the RHACM dashboard or from the command line by running the following commands:

Export the cluster name:

$ export CLUSTER=<clusterName>Query the

AgentClusterInstallCR for the managed cluster:$ oc get agentclusterinstall -n $CLUSTER $CLUSTER -o jsonpath='{.status.conditions[?(@.type=="Completed")]}' | jqGet the installation events for the cluster:

$ curl -sk $(oc get agentclusterinstall -n $CLUSTER $CLUSTER -o jsonpath='{.status.debugInfo.eventsURL}') | jq '.[-2,-1]'

19.3.7. Troubleshooting GitOps ZTP by validating the installation CRs

The ArgoCD pipeline uses the SiteConfig and PolicyGenTemplate custom resources (CRs) to generate the cluster configuration CRs and Red Hat Advanced Cluster Management (RHACM) policies. Use the following steps to troubleshoot issues that might occur during this process.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges.

Procedure

Check that the installation CRs were created by using the following command:

$ oc get AgentClusterInstall -n <cluster_name>If no object is returned, use the following steps to troubleshoot the ArgoCD pipeline flow from

SiteConfigfiles to the installation CRs.Verify that the

ManagedClusterCR was generated using theSiteConfigCR on the hub cluster:$ oc get managedclusterIf the

ManagedClusteris missing, check if theclustersapplication failed to synchronize the files from the Git repository to the hub cluster:$ oc describe -n openshift-gitops application clustersCheck for the

Status.Conditionsfield to view the error logs for the managed cluster. For example, setting an invalid value forextraManifestPath:in theSiteConfigCR raises the following error:Status: Conditions: Last Transition Time: 2021-11-26T17:21:39Z Message: rpc error: code = Unknown desc = `kustomize build /tmp/https___git.com/ran-sites/siteconfigs/ --enable-alpha-plugins` failed exit status 1: 2021/11/26 17:21:40 Error could not create extra-manifest ranSite1.extra-manifest3 stat extra-manifest3: no such file or directory 2021/11/26 17:21:40 Error: could not build the entire SiteConfig defined by /tmp/kust-plugin-config-913473579: stat extra-manifest3: no such file or directory Error: failure in plugin configured via /tmp/kust-plugin-config-913473579; exit status 1: exit status 1 Type: ComparisonErrorCheck the

Status.Syncfield. If there are log errors, theStatus.Syncfield could indicate anUnknownerror:Status: Sync: Compared To: Destination: Namespace: clusters-sub Server: https://kubernetes.default.svc Source: Path: sites-config Repo URL: https://git.com/ran-sites/siteconfigs/.git Target Revision: master Status: Unknown

19.3.8. Troubleshooting {ztp} virtual media booting on Supermicro servers

SuperMicro X11 servers do not support virtual media installations when the image is served using the https protocol. As a result, single-node OpenShift deployments for this environment fail to boot on the target node. To avoid this issue, log in to the hub cluster and disable Transport Layer Security (TLS) in the Provisioning resource. This ensures the image is not served with TLS even though the image address uses the https scheme.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges.

Procedure

Disable TLS in the

Provisioningresource by running the following command:$ oc patch provisioning provisioning-configuration --type merge -p '{"spec":{"disableVirtualMediaTLS": true}}'- Continue the steps to deploy your single-node OpenShift cluster.

19.3.9. Removing a managed cluster site from the ZTP pipeline

You can remove a managed site and the associated installation and configuration policy CRs from the ZTP pipeline.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges.

Procedure

Remove a site and the associated CRs by removing the associated

SiteConfigandPolicyGenTemplatefiles from thekustomization.yamlfile.When you run the ZTP pipeline again, the generated CRs are removed.

-

Optional: If you want to permanently remove a site, you should also remove the

SiteConfigand site-specificPolicyGenTemplatefiles from the Git repository. -

Optional: If you want to remove a site temporarily, for example when redeploying a site, you can leave the

SiteConfigand site-specificPolicyGenTemplateCRs in the Git repository.

19.3.10. Removing obsolete content from the ZTP pipeline

If a change to the PolicyGenTemplate configuration results in obsolete policies, for example, if you rename policies, use the following procedure to remove the obsolete policies.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges.

Procedure

-

Remove the affected

PolicyGenTemplatefiles from the Git repository, commit and push to the remote repository. - Wait for the changes to synchronize through the application and the affected policies to be removed from the hub cluster.

Add the updated

PolicyGenTemplatefiles back to the Git repository, and then commit and push to the remote repository.NoteRemoving zero touch provisioning (ZTP) policies from the Git repository, and as a result also removing them from the hub cluster, does not affect the configuration of the managed cluster. The policy and CRs managed by that policy remains in place on the managed cluster.

Optional: As an alternative, after making changes to

PolicyGenTemplateCRs that result in obsolete policies, you can remove these policies from the hub cluster manually. You can delete policies from the RHACM console using the Governance tab or by running the following command:$ oc delete policy -n <namespace> <policy_name>

19.3.11. Tearing down the ZTP pipeline

You can remove the ArgoCD pipeline and all generated ZTP artifacts.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges.

Procedure

- Detach all clusters from Red Hat Advanced Cluster Management (RHACM) on the hub cluster.

Delete the

kustomization.yamlfile in thedeploymentdirectory using the following command:$ oc delete -k out/argocd/deployment- Commit and push your changes to the site repository.

19.4. Configuring managed clusters with policies and PolicyGenTemplate resources

Applied policy custom resources (CRs) configure the managed clusters that you provision. You can customize how Red Hat Advanced Cluster Management (RHACM) uses PolicyGenTemplate CRs to generate the applied policy CRs.

19.4.1. About the PolicyGenTemplate CRD

The PolicyGenTemplate custom resource definition (CRD) tells the PolicyGen policy generator what custom resources (CRs) to include in the cluster configuration, how to combine the CRs into the generated policies, and what items in those CRs need to be updated with overlay content.

The following example shows a PolicyGenTemplate CR (common-du-ranGen.yaml) extracted from the ztp-site-generate reference container. The common-du-ranGen.yaml file defines two Red Hat Advanced Cluster Management (RHACM) policies. The polices manage a collection of configuration CRs, one for each unique value of policyName in the CR. common-du-ranGen.yaml creates a single placement binding and a placement rule to bind the policies to clusters based on the labels listed in the bindingRules section.

Example PolicyGenTemplate CR - common-du-ranGen.yaml

---

apiVersion: ran.openshift.io/v1

kind: PolicyGenTemplate

metadata:

name: "common"

namespace: "ztp-common"

spec:

bindingRules:

common: "true"

sourceFiles:

- fileName: SriovSubscription.yaml

policyName: "subscriptions-policy"

- fileName: SriovSubscriptionNS.yaml

policyName: "subscriptions-policy"

- fileName: SriovSubscriptionOperGroup.yaml

policyName: "subscriptions-policy"

- fileName: SriovOperatorStatus.yaml

policyName: "subscriptions-policy"

- fileName: PtpSubscription.yaml

policyName: "subscriptions-policy"

- fileName: PtpSubscriptionNS.yaml

policyName: "subscriptions-policy"

- fileName: PtpSubscriptionOperGroup.yaml

policyName: "subscriptions-policy"

- fileName: PtpOperatorStatus.yaml

policyName: "subscriptions-policy"

- fileName: ClusterLogNS.yaml

policyName: "subscriptions-policy"

- fileName: ClusterLogOperGroup.yaml

policyName: "subscriptions-policy"

- fileName: ClusterLogSubscription.yaml

policyName: "subscriptions-policy"

- fileName: ClusterLogOperatorStatus.yaml

policyName: "subscriptions-policy"

- fileName: StorageNS.yaml

policyName: "subscriptions-policy"

- fileName: StorageOperGroup.yaml

policyName: "subscriptions-policy"

- fileName: StorageSubscription.yaml

policyName: "subscriptions-policy"

- fileName: StorageOperatorStatus.yaml

policyName: "subscriptions-policy"

- fileName: ReduceMonitoringFootprint.yaml

policyName: "config-policy"

- fileName: OperatorHub.yaml

policyName: "config-policy"

- fileName: DefaultCatsrc.yaml

policyName: "config-policy"

metadata:

name: redhat-operators

spec:

displayName: disconnected-redhat-operators

image: registry.example.com:5000/disconnected-redhat-operators/disconnected-redhat-operator-index:v4.9

- fileName: DisconnectedICSP.yaml

policyName: "config-policy"

spec:

repositoryDigestMirrors:

- mirrors:

- registry.example.com:5000

source: registry.redhat.io- 1

common: "true"applies the policies to all clusters with this label.- 2

- Files listed under

sourceFilescreate the Operator policies for installed clusters. - 3

OperatorHub.yamlconfigures the OperatorHub for the disconnected registry.- 4

DefaultCatsrc.yamlconfigures the catalog source for the disconnected registry.- 5

policyName: "config-policy"configures Operator subscriptions. TheOperatorHubCR disables the default and this CR replacesredhat-operatorswith aCatalogSourceCR that points to the disconnected registry.

A PolicyGenTemplate CR can be constructed with any number of included CRs. Apply the following example CR in the hub cluster to generate a policy containing a single CR:

apiVersion: ran.openshift.io/v1

kind: PolicyGenTemplate

metadata:

name: "group-du-sno"

namespace: "ztp-group"

spec:

bindingRules:

group-du-sno: ""

mcp: "master"

sourceFiles:

- fileName: PtpConfigSlave.yaml

policyName: "config-policy"

metadata:

name: "du-ptp-slave"

spec:

profile:

- name: "slave"

interface: "ens5f0"

ptp4lOpts: "-2 -s --summary_interval -4"

phc2sysOpts: "-a -r -n 24"

Using the source file PtpConfigSlave.yaml as an example, the file defines a PtpConfig CR. The generated policy for the PtpConfigSlave example is named group-du-sno-config-policy. The PtpConfig CR defined in the generated group-du-sno-config-policy is named du-ptp-slave. The spec defined in PtpConfigSlave.yaml is placed under du-ptp-slave along with the other spec items defined under the source file.

The following example shows the group-du-sno-config-policy CR:

apiVersion: policy.open-cluster-management.io/v1

kind: Policy

metadata:

name: group-du-ptp-config-policy

namespace: groups-sub

annotations:

policy.open-cluster-management.io/categories: CM Configuration Management

policy.open-cluster-management.io/controls: CM-2 Baseline Configuration

policy.open-cluster-management.io/standards: NIST SP 800-53

spec:

remediationAction: inform

disabled: false

policy-templates:

- objectDefinition:

apiVersion: policy.open-cluster-management.io/v1

kind: ConfigurationPolicy

metadata:

name: group-du-ptp-config-policy-config

spec:

remediationAction: inform

severity: low

namespaceselector:

exclude:

- kube-*

include:

- '*'

object-templates:

- complianceType: musthave

objectDefinition:

apiVersion: ptp.openshift.io/v1

kind: PtpConfig

metadata:

name: du-ptp-slave

namespace: openshift-ptp

spec:

recommend:

- match:

- nodeLabel: node-role.kubernetes.io/worker-du

priority: 4

profile: slave

profile:

- interface: ens5f0

name: slave

phc2sysOpts: -a -r -n 24

ptp4lConf: |

[global]

#

# Default Data Set

#

twoStepFlag 1

slaveOnly 0

priority1 128

priority2 128

domainNumber 24

.....19.4.2. Recommendations when customizing PolicyGenTemplate CRs

Consider the following best practices when customizing site configuration PolicyGenTemplate custom resources (CRs):

-

Use as few policies as are necessary. Using fewer policies requires less resources. Each additional policy creates overhead for the hub cluster and the deployed managed cluster. CRs are combined into policies based on the

policyNamefield in thePolicyGenTemplateCR. CRs in the samePolicyGenTemplatewhich have the same value forpolicyNameare managed under a single policy. -

In disconnected environments, use a single catalog source for all Operators by configuring the registry as a single index containing all Operators. Each additional

CatalogSourceCR on the managed clusters increases CPU usage. -

MachineConfigCRs should be included asextraManifestsin theSiteConfigCR so that they are applied during installation. This can reduce the overall time taken until the cluster is ready to deploy applications. -

PolicyGenTemplatesshould override the channel field to explicitly identify the desired version. This ensures that changes in the source CR during upgrades does not update the generated subscription.

When managing large numbers of spoke clusters on the hub cluster, minimize the number of policies to reduce resource consumption.

Grouping multiple configuration CRs into a single or limited number of policies is one way to reduce the overall number of policies on the hub cluster. When using the common, group, and site hierarchy of policies for managing site configuration, it is especially important to combine site-specific configuration into a single policy.

19.4.3. PolicyGenTemplate CRs for RAN deployments

Use PolicyGenTemplate (PGT) custom resources (CRs) to customize the configuration applied to the cluster by using the GitOps zero touch provisioning (ZTP) pipeline. The PGT CR allows you to generate one or more policies to manage the set of configuration CRs on your fleet of clusters. The PGT identifies the set of managed CRs, bundles them into policies, builds the policy wrapping around those CRs, and associates the policies with clusters by using label binding rules.

The reference configuration, obtained from the GitOps ZTP container, is designed to provide a set of critical features and node tuning settings that ensure the cluster can support the stringent performance and resource utilization constraints typical of RAN (Radio Access Network) Distributed Unit (DU) applications. Changes or omissions from the baseline configuration can affect feature availability, performance, and resource utilization. Use the reference PolicyGenTemplate CRs as the basis to create a hierarchy of configuration files tailored to your specific site requirements.

The baseline PolicyGenTemplate CRs that are defined for RAN DU cluster configuration can be extracted from the GitOps ZTP ztp-site-generate container. See "Preparing the GitOps ZTP site configuration repository" for further details.

The PolicyGenTemplate CRs can be found in the ./out/argocd/example/policygentemplates folder. The reference architecture has common, group, and site-specific configuration CRs. Each PolicyGenTemplate CR refers to other CRs that can be found in the ./out/source-crs folder.

The PolicyGenTemplate CRs relevant to RAN cluster configuration are described below. Variants are provided for the group PolicyGenTemplate CRs to account for differences in single-node, three-node compact, and standard cluster configurations. Similarly, site-specific configuration variants are provided for single-node clusters and multi-node (compact or standard) clusters. Use the group and site-specific configuration variants that are relevant for your deployment.

| PolicyGenTemplate CR | Description |

|---|---|

|

| Contains a set of CRs that get applied to multi-node clusters. These CRs configure SR-IOV features typical for RAN installations. |

|

| Contains a set of CRs that get applied to single-node OpenShift clusters. These CRs configure SR-IOV features typical for RAN installations. |

|

| Contains a set of common RAN CRs that get applied to all clusters. These CRs subscribe to a set of operators providing cluster features typical for RAN as well as baseline cluster tuning. |

|

| Contains the RAN policies for three-node clusters only. |

|

| Contains the RAN policies for single-node clusters only. |

|

| Contains the RAN policies for standard three control-plane clusters. |

|

|

|

|

|

|

|

|

|

19.4.4. Customizing a managed cluster with PolicyGenTemplate CRs

Use the following procedure to customize the policies that get applied to the managed cluster that you provision using the zero touch provisioning (ZTP) pipeline.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges. - You configured the hub cluster for generating the required installation and policy CRs.

- You created a Git repository where you manage your custom site configuration data. The repository must be accessible from the hub cluster and be defined as a source repository for the Argo CD application.

Procedure

Create a

PolicyGenTemplateCR for site-specific configuration CRs.-

Choose the appropriate example for your CR from the

out/argocd/example/policygentemplatesfolder, for example,example-sno-site.yamlorexample-multinode-site.yaml. Change the

bindingRulesfield in the example file to match the site-specific label included in theSiteConfigCR. In the exampleSiteConfigfile, the site-specific label issites: example-sno.NoteEnsure that the labels defined in your

PolicyGenTemplatebindingRulesfield correspond to the labels that are defined in the related managed clustersSiteConfigCR.- Change the content in the example file to match the desired configuration.

-

Choose the appropriate example for your CR from the

Optional: Create a

PolicyGenTemplateCR for any common configuration CRs that apply to the entire fleet of clusters.-

Select the appropriate example for your CR from the

out/argocd/example/policygentemplatesfolder, for example,common-ranGen.yaml. - Change the content in the example file to match the desired configuration.

-

Select the appropriate example for your CR from the

Optional: Create a

PolicyGenTemplateCR for any group configuration CRs that apply to the certain groups of clusters in the fleet.Ensure that the content of the overlaid spec files matches your desired end state. As a reference, the out/source-crs directory contains the full list of source-crs available to be included and overlaid by your PolicyGenTemplate templates.

NoteDepending on the specific requirements of your clusters, you might need more than a single group policy per cluster type, especially considering that the example group policies each have a single PerformancePolicy.yaml file that can only be shared across a set of clusters if those clusters consist of identical hardware configurations.

-

Select the appropriate example for your CR from the

out/argocd/example/policygentemplatesfolder, for example,group-du-sno-ranGen.yaml. - Change the content in the example file to match the desired configuration.

-

Select the appropriate example for your CR from the

-

Optional. Create a validator inform policy

PolicyGenTemplateCR to signal when the ZTP installation and configuration of the deployed cluster is complete. For more information, see "Creating a validator inform policy". Define all the policy namespaces in a YAML file similar to the example

out/argocd/example/policygentemplates/ns.yamlfile.ImportantDo not include the

NamespaceCR in the same file with thePolicyGenTemplateCR.-

Add the

PolicyGenTemplateCRs andNamespaceCR to thekustomization.yamlfile in the generators section, similar to the example shown inout/argocd/example/policygentemplates/kustomization.yaml. Commit the

PolicyGenTemplateCRs,NamespaceCR, and associatedkustomization.yamlfile in your Git repository and push the changes.The ArgoCD pipeline detects the changes and begins the managed cluster deployment. You can push the changes to the

SiteConfigCR and thePolicyGenTemplateCR simultaneously.

19.4.5. Monitoring managed cluster policy deployment progress

The ArgoCD pipeline uses PolicyGenTemplate CRs in Git to generate the RHACM policies and then sync them to the hub cluster. You can monitor the progress of the managed cluster policy synchronization after the assisted service installs OpenShift Container Platform on the managed cluster.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -

You have logged in to the hub cluster as a user with

cluster-adminprivileges.

Procedure

The Topology Aware Lifecycle Manager (TALM) applies the configuration policies that are bound to the cluster.

After the cluster installation is complete and the cluster becomes

Ready, aClusterGroupUpgradeCR corresponding to this cluster, with a list of ordered policies defined by theran.openshift.io/ztp-deploy-wave annotations, is automatically created by the TALM. The cluster’s policies are applied in the order listed inClusterGroupUpgradeCR.You can monitor the high-level progress of configuration policy reconciliation by using the following commands:

$ export CLUSTER=<clusterName>$ oc get clustergroupupgrades -n ztp-install $CLUSTER -o jsonpath='{.status.conditions[-1:]}' | jqExample output

{ "lastTransitionTime": "2022-11-09T07:28:09Z", "message": "Remediating non-compliant policies", "reason": "InProgress", "status": "True", "type": "Progressing" }You can monitor the detailed cluster policy compliance status by using the RHACM dashboard or the command line.

To check policy compliance by using

oc, run the following command:$ oc get policies -n $CLUSTERExample output

NAME REMEDIATION ACTION COMPLIANCE STATE AGE ztp-common.common-config-policy inform Compliant 3h42m ztp-common.common-subscriptions-policy inform NonCompliant 3h42m ztp-group.group-du-sno-config-policy inform NonCompliant 3h42m ztp-group.group-du-sno-validator-du-policy inform NonCompliant 3h42m ztp-install.example1-common-config-policy-pjz9s enforce Compliant 167m ztp-install.example1-common-subscriptions-policy-zzd9k enforce NonCompliant 164m ztp-site.example1-config-policy inform NonCompliant 3h42m ztp-site.example1-perf-policy inform NonCompliant 3h42mTo check policy status from the RHACM web console, perform the following actions:

-

Click Governance

Find policies. - Click on a cluster policy to check it’s status.

-

Click Governance

When all of the cluster policies become compliant, ZTP installation and configuration for the cluster is complete. The ztp-done label is added to the cluster.

In the reference configuration, the final policy that becomes compliant is the one defined in the *-du-validator-policy policy. This policy, when compliant on a cluster, ensures that all cluster configuration, Operator installation, and Operator configuration is complete.

19.4.6. Validating the generation of configuration policy CRs

Policy custom resources (CRs) are generated in the same namespace as the PolicyGenTemplate from which they are created. The same troubleshooting flow applies to all policy CRs generated from a PolicyGenTemplate regardless of whether they are ztp-common, ztp-group, or ztp-site based, as shown using the following commands:

$ export NS=<namespace>$ oc get policy -n $NSThe expected set of policy-wrapped CRs should be displayed.

If the policies failed synchronization, use the following troubleshooting steps.

Procedure

To display detailed information about the policies, run the following command:

$ oc describe -n openshift-gitops application policiesCheck for

Status: Conditions:to show the error logs. For example, setting an invalidsourceFile→fileName:generates the error shown below:Status: Conditions: Last Transition Time: 2021-11-26T17:21:39Z Message: rpc error: code = Unknown desc = `kustomize build /tmp/https___git.com/ran-sites/policies/ --enable-alpha-plugins` failed exit status 1: 2021/11/26 17:21:40 Error could not find test.yaml under source-crs/: no such file or directory Error: failure in plugin configured via /tmp/kust-plugin-config-52463179; exit status 1: exit status 1 Type: ComparisonErrorCheck for

Status: Sync:. If there are log errors atStatus: Conditions:, theStatus: Sync:showsUnknownorError:Status: Sync: Compared To: Destination: Namespace: policies-sub Server: https://kubernetes.default.svc Source: Path: policies Repo URL: https://git.com/ran-sites/policies/.git Target Revision: master Status: ErrorWhen Red Hat Advanced Cluster Management (RHACM) recognizes that policies apply to a

ManagedClusterobject, the policy CR objects are applied to the cluster namespace. Check to see if the policies were copied to the cluster namespace:$ oc get policy -n $CLUSTERExample output:

NAME REMEDIATION ACTION COMPLIANCE STATE AGE ztp-common.common-config-policy inform Compliant 13d ztp-common.common-subscriptions-policy inform Compliant 13d ztp-group.group-du-sno-config-policy inform Compliant 13d Ztp-group.group-du-sno-validator-du-policy inform Compliant 13d ztp-site.example-sno-config-policy inform Compliant 13dRHACM copies all applicable policies into the cluster namespace. The copied policy names have the format:

<policyGenTemplate.Namespace>.<policyGenTemplate.Name>-<policyName>.Check the placement rule for any policies not copied to the cluster namespace. The

matchSelectorin thePlacementRulefor those policies should match labels on theManagedClusterobject:$ oc get placementrule -n $NSNote the

PlacementRulename appropriate for the missing policy, common, group, or site, using the following command:$ oc get placementrule -n $NS <placementRuleName> -o yaml- The status-decisions should include your cluster name.

-

The key-value pair of the

matchSelectorin the spec must match the labels on your managed cluster.

Check the labels on the

ManagedClusterobject using the following command:$ oc get ManagedCluster $CLUSTER -o jsonpath='{.metadata.labels}' | jqCheck to see which policies are compliant using the following command:

$ oc get policy -n $CLUSTERIf the

Namespace,OperatorGroup, andSubscriptionpolicies are compliant but the Operator configuration policies are not, it is likely that the Operators did not install on the managed cluster. This causes the Operator configuration policies to fail to apply because the CRD is not yet applied to the spoke.

19.4.7. Restarting policy reconciliation

You can restart policy reconciliation when unexpected compliance issues occur, for example, when the ClusterGroupUpgrade custom resource (CR) has timed out.

Procedure

A

ClusterGroupUpgradeCR is generated in the namespaceztp-installby the Topology Aware Lifecycle Manager after the managed cluster becomesReady:$ export CLUSTER=<clusterName>$ oc get clustergroupupgrades -n ztp-install $CLUSTERIf there are unexpected issues and the policies fail to become complaint within the configured timeout (the default is 4 hours), the status of the

ClusterGroupUpgradeCR showsUpgradeTimedOut:$ oc get clustergroupupgrades -n ztp-install $CLUSTER -o jsonpath='{.status.conditions[?(@.type=="Ready")]}'A

ClusterGroupUpgradeCR in theUpgradeTimedOutstate automatically restarts its policy reconciliation every hour. If you have changed your policies, you can start a retry immediately by deleting the existingClusterGroupUpgradeCR. This triggers the automatic creation of a newClusterGroupUpgradeCR that begins reconciling the policies immediately:$ oc delete clustergroupupgrades -n ztp-install $CLUSTER

Note that when the ClusterGroupUpgrade CR completes with status UpgradeCompleted and the managed cluster has the label ztp-done applied, you can make additional configuration changes using PolicyGenTemplate. Deleting the existing ClusterGroupUpgrade CR will not make the TALM generate a new CR.

At this point, ZTP has completed its interaction with the cluster and any further interactions should be treated as an update and a new ClusterGroupUpgrade CR created for remediation of the policies.

19.4.8. Changing applied managed cluster CRs using policies

You can remove content from a custom resource (CR) that is deployed in a managed cluster through a policy.

By default, all Policy CRs created from a PolicyGenTemplate CR have the complianceType field set to musthave. A musthave policy without the removed content is still compliant because the CR on the managed cluster has all the specified content. With this configuration, when you remove content from a CR, TALM removes the content from the policy but the content is not removed from the CR on the managed cluster.

With the complianceType field to mustonlyhave, the policy ensures that the CR on the cluster is an exact match of what is specified in the policy.

Prerequisites

-

You have installed the OpenShift CLI (

oc). -