Chapter 14. Deploying installer-provisioned clusters on bare metal

14.1. Overview

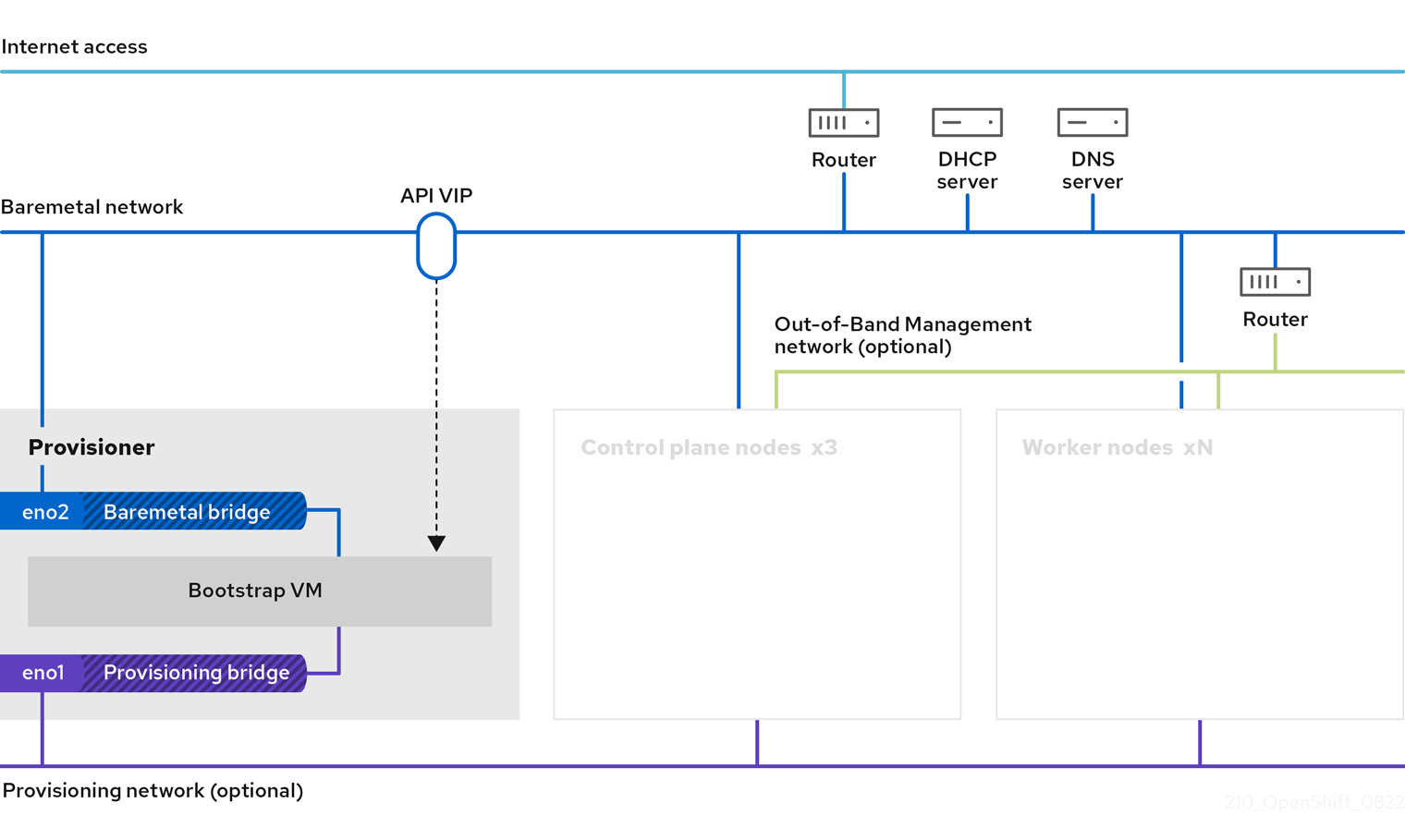

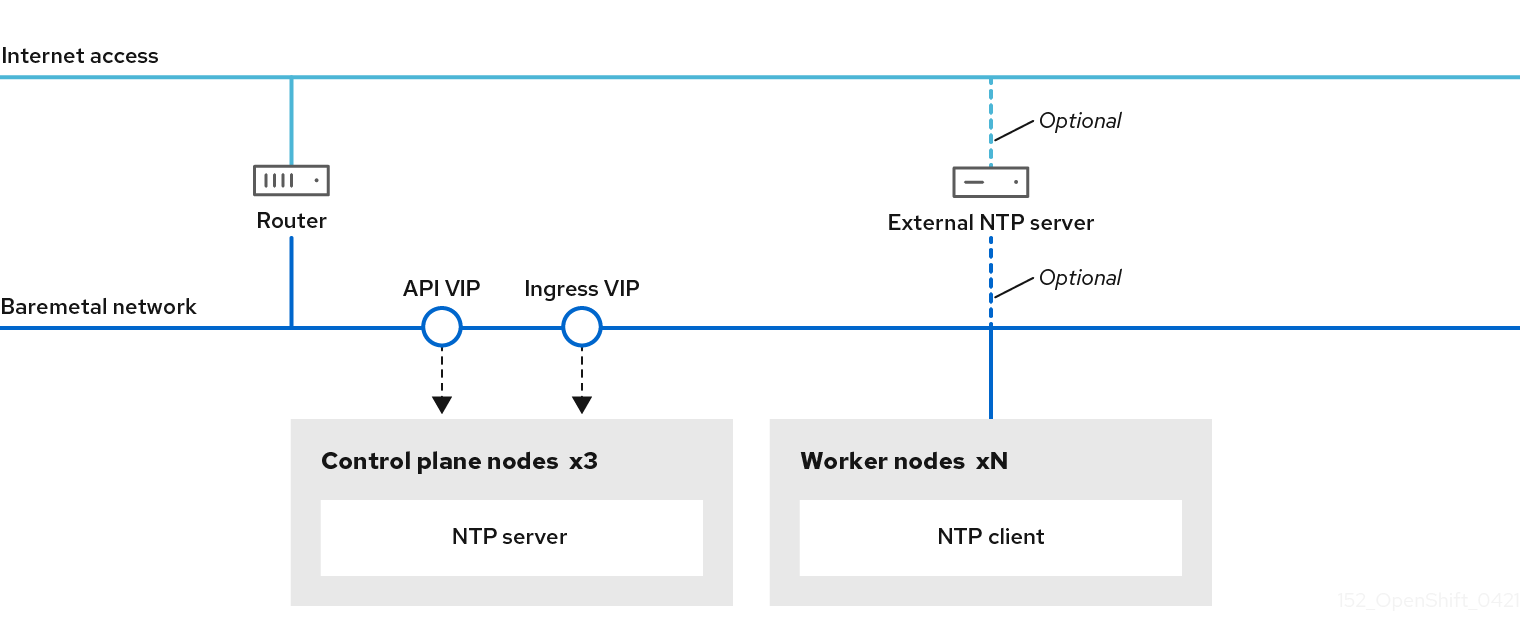

Installer-provisioned installation on bare metal nodes deploys and configures the infrastructure that an OpenShift Container Platform cluster runs on. This guide provides a methodology for achieving a successful installer-provisioned bare-metal installation. The following diagram illustrates the installation environment in phase 1 of deployment:

For the installation, the key elements in the previous diagram are:

- Provisioner: A physical machine that runs the installation program and hosts the bootstrap VM that deploys the control plane of a new OpenShift Container Platform cluster.

- Bootstrap VM: A virtual machine used in the process of deploying an OpenShift Container Platform cluster.

- Network bridges: The bootstrap VM connects to the bare metal network and to the provisioning network, if present, via network bridges, `eno1` and `eno2`.

- API VIP: An API virtual IP address (VIP) is used to provide failover of the API server across the control plane nodes. The API VIP first resides on the bootstrap VM. A script generates the `keepalived.conf` configuration file before launching the service. The VIP moves to one of the control plane nodes after the bootstrap process has completed and the bootstrap VM stops.

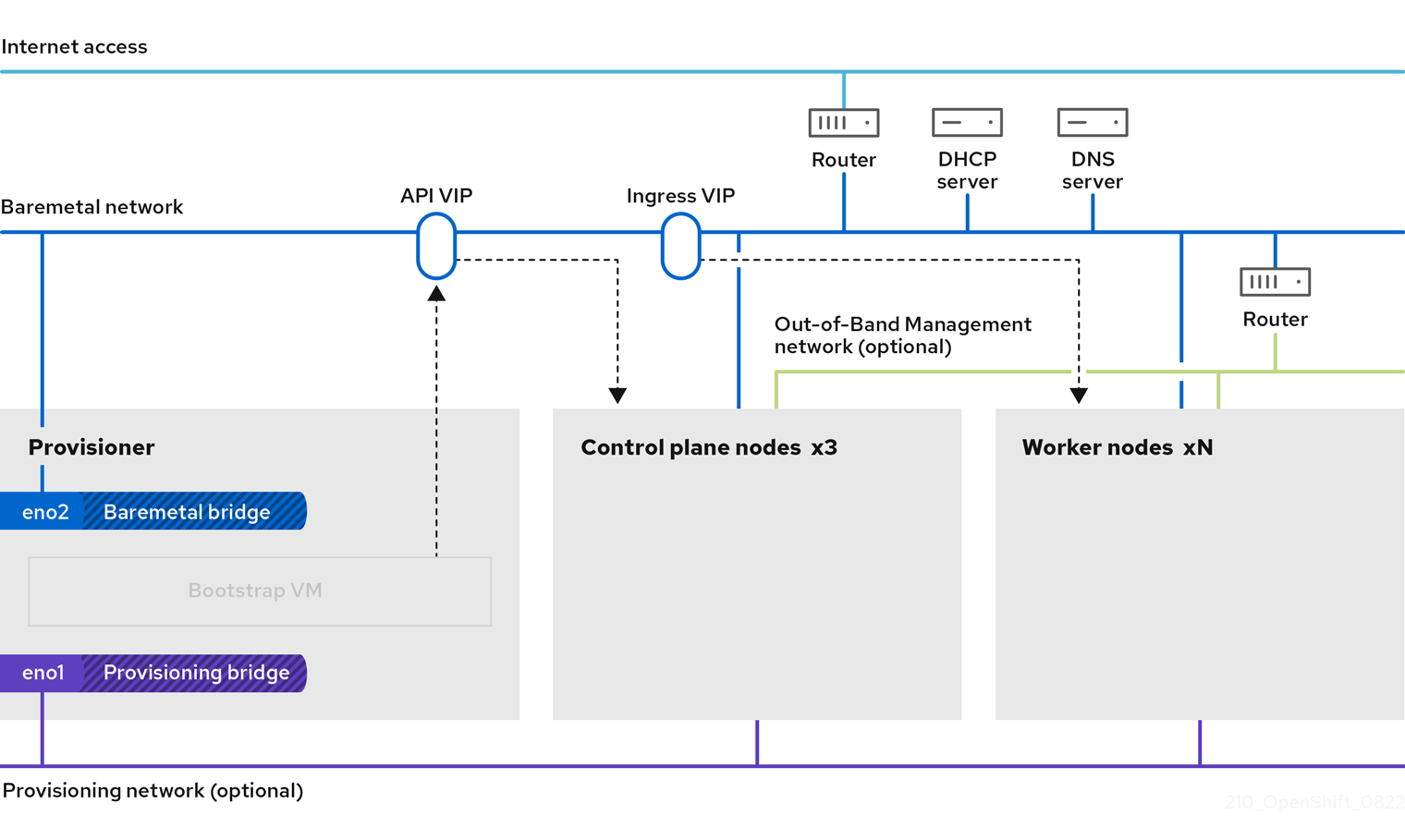

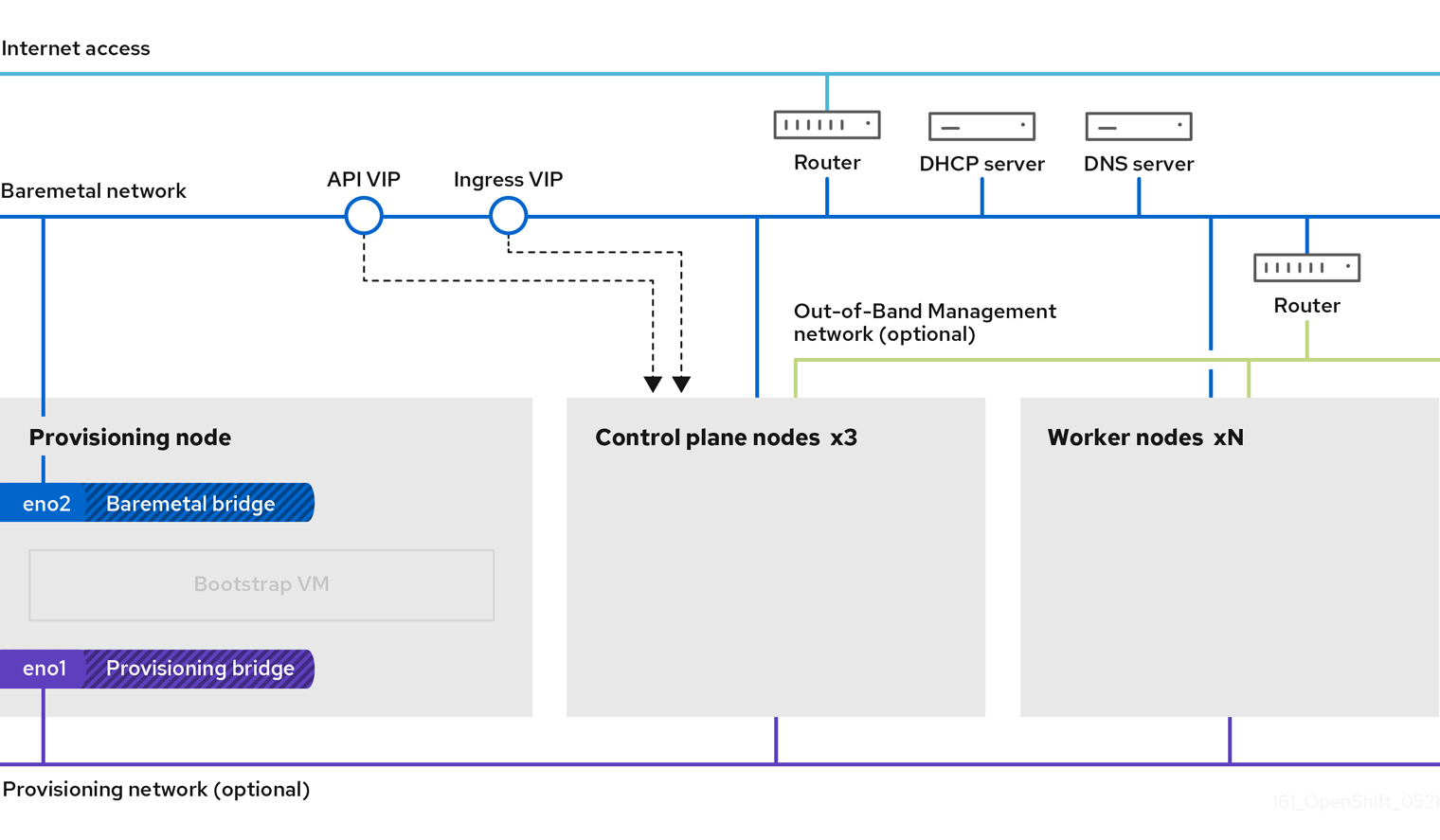

In phase 2 of the deployment, the provisioner destroys the bootstrap VM automatically and moves the virtual IP addresses (VIPs) to the appropriate nodes.

The keepalived.conf file sets the control plane machines with a lower Virtual Router Redundancy Protocol (VRRP) priority than the bootstrap VM, which ensures that the API on the control plane machines is fully functional before the API VIP moves from the bootstrap VM to the control plane. Once the API VIP moves to one of the control plane nodes, traffic sent from external clients to the API VIP routes to an haproxy load balancer running on that control plane node. This instance of haproxy load balances the API VIP traffic across the control plane nodes.

The Ingress VIP moves to the worker nodes. The keepalived instance also manages the Ingress VIP.

The following diagram illustrates phase 2 of deployment:

After this point, the node used by the provisioner can be removed or repurposed. From here, all additional provisioning tasks are carried out by the control plane.

The provisioning network is optional, but it is required for PXE booting. If you deploy without a provisioning network, you must use a virtual media baseboard management controller (BMC) addressing option such as redfish-virtualmedia or idrac-virtualmedia.

14.2. Prerequisites

Installer-provisioned installation of OpenShift Container Platform requires:

- One provisioner node with Red Hat Enterprise Linux (RHEL) 8.x installed. The provisioner can be removed after installation.

- Three control plane nodes

- Baseboard management controller (BMC) access to each node

At least one network:

- One required routable network

- One optional provisioning network

- One optional management network

Before starting an installer-provisioned installation of OpenShift Container Platform, ensure the hardware environment meets the following requirements.

14.2.1. Node requirements

Installer-provisioned installation involves a number of hardware node requirements:

- CPU architecture: All nodes must use x86_64 or AArch64 CPU architecture.

- Similar nodes: Red Hat recommends nodes have an identical configuration per role. That is, Red Hat recommends nodes be the same brand and model with the same CPU, memory, and storage configuration.

- Baseboard Management Controller: The `provisioner` node must be able to access the baseboard management controller (BMC) of each OpenShift Container Platform cluster node. You may use IPMI, Redfish, or a proprietary protocol.

- Latest generation: Nodes must be of the most recent generation. Installer-provisioned installation relies on BMC protocols, which must be compatible across nodes. Additionally, RHEL 8 ships with the most recent drivers for RAID controllers. Ensure that the nodes are recent enough to support RHEL 8 for the `provisioner` node and RHCOS 8 for the control plane and worker nodes.

- Registry node: (Optional) If setting up a disconnected mirrored registry, it is recommended the registry reside in its own node.

- Provisioner node: Installer-provisioned installation requires one `provisioner` node.

- Control plane: Installer-provisioned installation requires three control plane nodes for high availability. You can deploy an OpenShift Container Platform cluster with only three control plane nodes, making the control plane nodes schedulable as worker nodes. Smaller clusters are more resource efficient for administrators and developers during development, production, and testing.

- Worker nodes: While not required, a typical production cluster has two or more worker nodes.

  Important: Do not deploy a cluster with only one worker node, because the cluster will deploy with routers and ingress traffic in a degraded state.

- Network interfaces: Each node must have at least one network interface for the routable `baremetal` network. Each node must have one network interface for a `provisioning` network when using the `provisioning` network for deployment. Using the `provisioning` network is the default configuration.

- Unified Extensible Firmware Interface (UEFI): Installer-provisioned installation requires UEFI boot on all OpenShift Container Platform nodes when using IPv6 addressing on the `provisioning` network. In addition, UEFI Device PXE Settings must be set to use the IPv6 protocol on the `provisioning` network NIC, but omitting the `provisioning` network removes this requirement.

  Important: When starting the installation from virtual media such as an ISO image, delete all old UEFI boot table entries. If the boot table includes entries that are not generic entries provided by the firmware, the installation might fail.

- Secure Boot: Many production scenarios require nodes with Secure Boot enabled to verify the node only boots with trusted software, such as UEFI firmware drivers, EFI applications, and the operating system. You may deploy with Secure Boot manually or managed.

  - Manually: To deploy an OpenShift Container Platform cluster with Secure Boot manually, you must enable UEFI boot mode and Secure Boot on each control plane node and each worker node. Red Hat supports Secure Boot with manually enabled UEFI and Secure Boot only when installer-provisioned installations use Redfish virtual media. See "Configuring nodes for Secure Boot manually" in the "Configuring nodes" section for additional details.

  - Managed: To deploy an OpenShift Container Platform cluster with managed Secure Boot, you must set the `bootMode` value to `UEFISecureBoot` in the `install-config.yaml` file. Red Hat only supports installer-provisioned installation with managed Secure Boot on 10th generation HPE hardware and 13th generation Dell hardware running firmware version `2.75.75.75` or greater. Deploying with managed Secure Boot does not require Redfish virtual media. See "Configuring managed Secure Boot" in the "Setting up the environment for an OpenShift installation" section for details.

  Note: Red Hat does not support Secure Boot with self-generated keys.

14.2.2. Planning a bare metal cluster for OpenShift Virtualization

If you plan to use OpenShift Virtualization, it is important to be aware of several requirements before you install your bare metal cluster.

- If you want to use live migration features, you must have multiple worker nodes at the time of cluster installation. This is because live migration requires the cluster-level high availability (HA) flag to be set to true. The HA flag is set when a cluster is installed and cannot be changed afterwards. If there are fewer than two worker nodes defined when you install your cluster, the HA flag is set to false for the life of the cluster.

  Note: You can install OpenShift Virtualization on a single-node cluster, but single-node OpenShift does not support high availability.

- Live migration requires shared storage. Storage for OpenShift Virtualization must support and use the ReadWriteMany (RWX) access mode.

- If you plan to use Single Root I/O Virtualization (SR-IOV), ensure that your network interface controllers (NICs) are supported by OpenShift Container Platform.

14.2.3. Firmware requirements for installing with virtual media

The installation program for installer-provisioned OpenShift Container Platform clusters validates the hardware and firmware compatibility with Redfish virtual media. The installation program does not begin installation on a node if the node firmware is not compatible. The following tables list the minimum firmware versions tested and verified to work for installer-provisioned OpenShift Container Platform clusters deployed by using Redfish virtual media.

Red Hat does not test every combination of firmware, hardware, or other third-party components. For further information about third-party support, see Red Hat third-party support policy. For information about updating the firmware, see the hardware documentation for the nodes or contact the hardware vendor.

HPE hardware:

| Model | Management | Firmware versions |
|---|---|---|
| 10th Generation | iLO5 | 2.63 or later |

Dell hardware:

| Model | Management | Firmware versions |
|---|---|---|
| 15th Generation | iDRAC 9 | v5.10.00.00 - v5.10.50.00 only |
| 14th Generation | iDRAC 9 | v5.10.00.00 - v5.10.50.00 only |
| 13th Generation | iDRAC 8 | v2.75.75.75 or later |

For Dell servers, ensure the OpenShift Container Platform cluster nodes have AutoAttach enabled through the iDRAC console. The menu path for this setting starts at Configuration.

With firmware version 04.40.00.00 and all releases up to and including the 5.xx series, the virtual console plugin defaults to eHTML5, an enhanced version of HTML5, which causes problems with the InsertVirtualMedia workflow. Set the plugin to use HTML5 to avoid this issue. The menu path for this setting also starts at Configuration.

14.2.4. Network requirements

Installer-provisioned installation of OpenShift Container Platform involves several network requirements. First, installer-provisioned installation involves an optional non-routable provisioning network for provisioning the operating system on each bare metal node. Second, installer-provisioned installation involves a routable baremetal network.

14.2.4.1. Increase the network MTU

Before deploying OpenShift Container Platform, increase the network maximum transmission unit (MTU) to 1500 or more. If the MTU is lower than 1500, the Ironic image that is used to boot the node might fail to communicate with the Ironic inspector pod, and inspection will fail. If this occurs, installation stops because the nodes are not available for installation.
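As a rough pre-flight check for the MTU requirement above, the following shell sketch reads an interface's MTU and compares it with 1500. The function names `mtu_ok` and `check_iface_mtu` are hypothetical helpers, not part of any OpenShift tooling, and the sketch assumes a Linux host that exposes `/sys/class/net/<interface>/mtu`.

```shell
# Minimum MTU named in the requirement above.
MIN_MTU=1500

# mtu_ok VALUE: succeed when VALUE is at least MIN_MTU.
mtu_ok() {
  [ "$1" -ge "$MIN_MTU" ]
}

# check_iface_mtu IFACE: read the MTU of IFACE from sysfs and report the result.
# Returns 0 when the MTU is sufficient, 1 when it is too low, 2 when it cannot be read.
check_iface_mtu() {
  iface_mtu="$(cat "/sys/class/net/$1/mtu")" || return 2
  if mtu_ok "$iface_mtu"; then
    echo "$1: MTU $iface_mtu meets the minimum of $MIN_MTU"
  else
    echo "$1: MTU $iface_mtu is below the minimum of $MIN_MTU"
    return 1
  fi
}
```

For example, `check_iface_mtu eno1` would report on an interface named `eno1`; substitute the interface names used on your nodes.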

14.2.4.2. Configuring NICs

OpenShift Container Platform deploys with two networks:

- `provisioning`: The `provisioning` network is an optional non-routable network used for provisioning the underlying operating system on each node that is a part of the OpenShift Container Platform cluster. The network interface for the `provisioning` network on each cluster node must have the BIOS or UEFI configured to PXE boot.

  The `provisioningNetworkInterface` configuration setting specifies the `provisioning` network NIC name on the control plane nodes, which must be identical on the control plane nodes. The `bootMACAddress` configuration setting provides a means to specify a particular NIC on each node for the `provisioning` network.

  The `provisioning` network is optional, but it is required for PXE booting. If you deploy without a `provisioning` network, you must use a virtual media BMC addressing option such as `redfish-virtualmedia` or `idrac-virtualmedia`.

- `baremetal`: The `baremetal` network is a routable network. You can use any NIC to interface with the `baremetal` network provided the NIC is not configured to use the `provisioning` network.

When using a VLAN, each NIC must be on a separate VLAN corresponding to the appropriate network.

14.2.4.3. DNS requirements

Clients access the OpenShift Container Platform cluster nodes over the baremetal network. A network administrator must configure a subdomain or subzone where the canonical name extension is the cluster name.

`<cluster_name>.<base_domain>`

For example:

`test-cluster.example.com`

OpenShift Container Platform includes functionality that uses cluster membership information to generate A/AAAA records. This resolves the node names to their IP addresses. After the nodes are registered with the API, the cluster can disperse node information without using CoreDNS-mDNS. This eliminates the network traffic associated with multicast DNS.

In OpenShift Container Platform deployments, DNS name resolution is required for the following components:

- The Kubernetes API

- The OpenShift Container Platform application wildcard ingress API

A/AAAA records are used for name resolution and PTR records are used for reverse name resolution. Red Hat Enterprise Linux CoreOS (RHCOS) uses the reverse records or DHCP to set the hostnames for all the nodes.

In each record, `<cluster_name>` is the cluster name and `<base_domain>` is the base domain that you specify in the `install-config.yaml` file. A complete DNS record takes the form: `<component>.<cluster_name>.<base_domain>.`

| Component | Record | Description |

|---|---|---|

| Kubernetes API |

| An A/AAAA record and a PTR record identify the API load balancer. These records must be resolvable by both clients external to the cluster and from all the nodes within the cluster. |

| Routes |

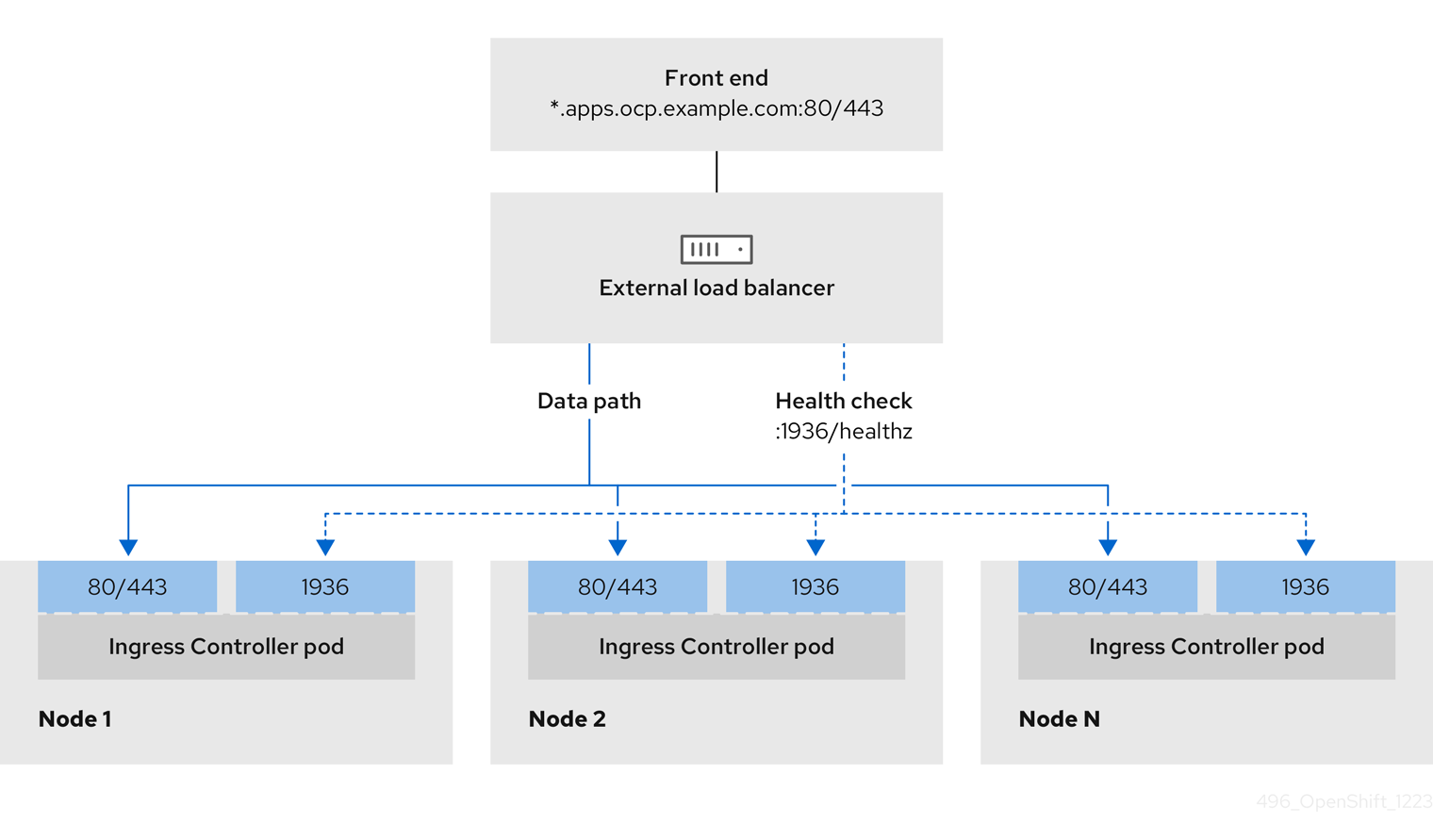

| The wildcard A/AAAA record refers to the application ingress load balancer. The application ingress load balancer targets the nodes that run the Ingress Controller pods. The Ingress Controller pods run on the worker nodes by default. These records must be resolvable by both clients external to the cluster and from all the nodes within the cluster.

For example, |

You can use the dig command to verify DNS resolution.
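The following shell sketch shows one way such a `dig` check might look. It builds the API name and a name under the `apps` wildcard from the cluster name and base domain, then queries each one. The helper names `api_record`, `apps_record`, and `verify_dns` are hypothetical, and the `test` label used to exercise the wildcard record is an arbitrary choice.

```shell
# api_record CLUSTER BASE_DOMAIN: print the API name for the cluster.
api_record() {
  printf 'api.%s.%s\n' "$1" "$2"
}

# apps_record CLUSTER BASE_DOMAIN: print a name that the *.apps wildcard
# record should answer for. The "test" label is arbitrary.
apps_record() {
  printf 'test.apps.%s.%s\n' "$1" "$2"
}

# verify_dns CLUSTER BASE_DOMAIN: run a forward lookup for both names.
# An empty answer under a name means the record did not resolve.
verify_dns() {
  for name in "$(api_record "$1" "$2")" "$(apps_record "$1" "$2")"; do
    echo "== $name"
    dig +short "$name"
  done
}

# A reverse (PTR) lookup for an address returned above can be run with:
#   dig +short -x <ip_address>
```

For example, `verify_dns test-cluster example.com` queries `api.test-cluster.example.com` and `test.apps.test-cluster.example.com` against the resolver configured on the host where you run it.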

14.2.4.4. Dynamic Host Configuration Protocol (DHCP) requirements

By default, installer-provisioned installation deploys ironic-dnsmasq with DHCP enabled for the provisioning network. No other DHCP servers should be running on the provisioning network when the provisioningNetwork configuration setting is set to managed, which is the default value. If you have a DHCP server running on the provisioning network, you must set the provisioningNetwork configuration setting to unmanaged in the install-config.yaml file.

Network administrators must reserve IP addresses for each node in the OpenShift Container Platform cluster for the baremetal network on an external DHCP server.

14.2.4.5. Reserving IP addresses for nodes with the DHCP server

For the baremetal network, a network administrator must reserve a number of IP addresses, including:

- Two unique virtual IP addresses:

  - One virtual IP address for the API endpoint.

  - One virtual IP address for the wildcard ingress endpoint.

- One IP address for the provisioner node.

- One IP address for each control plane node.

- One IP address for each worker node, if applicable.
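The list above reduces to simple arithmetic, which can help when sizing a DHCP reservation range. The sketch below is only an illustration; `reserved_ip_count` is a hypothetical helper name.

```shell
# reserved_ip_count CONTROL_PLANE_NODES WORKER_NODES
# Prints the number of baremetal network addresses to reserve:
# 2 VIPs (API and Ingress) + 1 provisioner node + one address per cluster node.
reserved_ip_count() {
  echo $(( 2 + 1 + $1 + $2 ))
}
```

For a cluster with three control plane nodes and two worker nodes, `reserved_ip_count 3 2` prints `8`.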

Some administrators prefer to use static IP addresses so that each node’s IP address remains constant in the absence of a DHCP server. To configure static IP addresses with NMState, see "(Optional) Configuring host network interfaces" in the "Setting up the environment for an OpenShift installation" section.

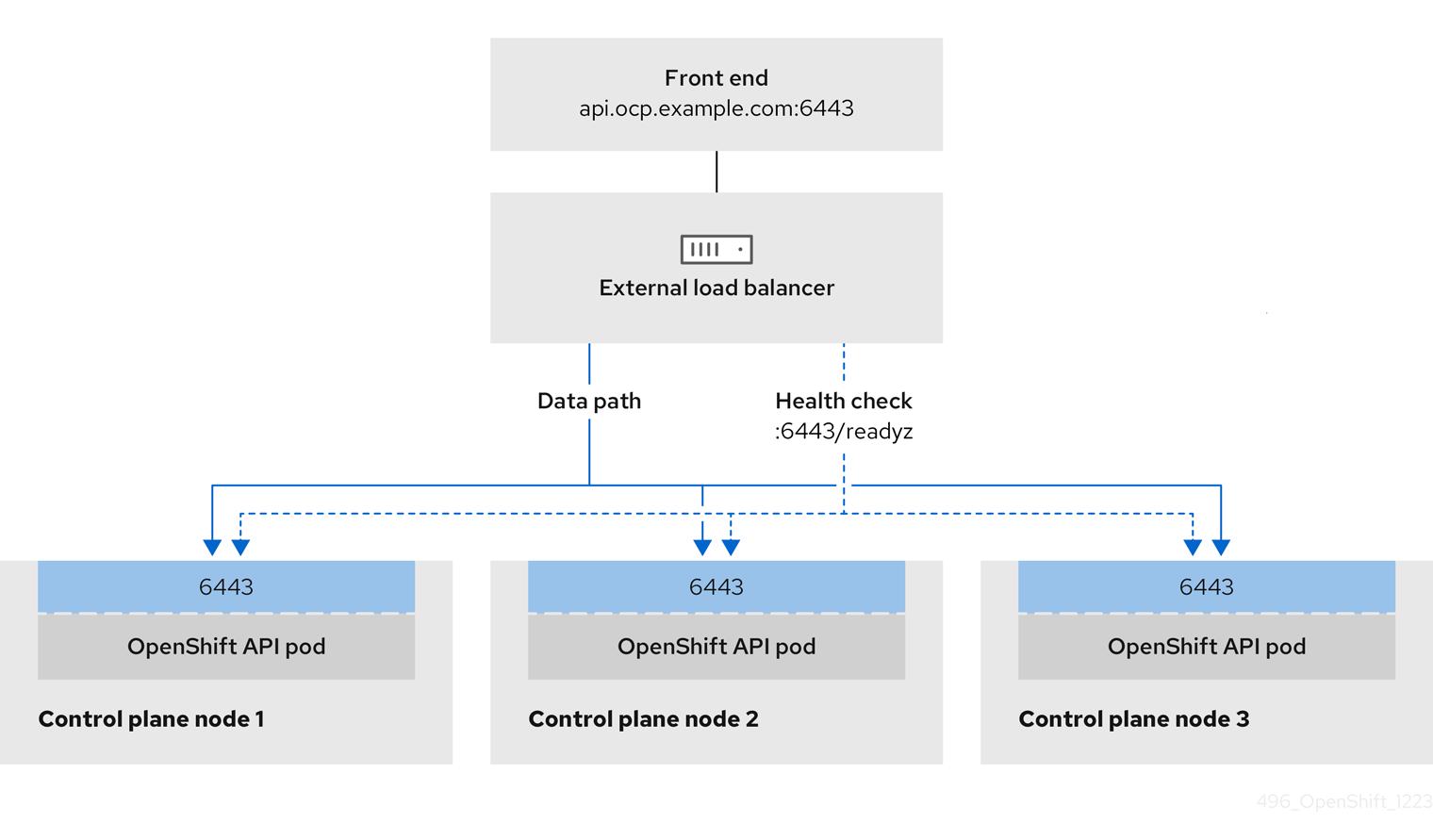

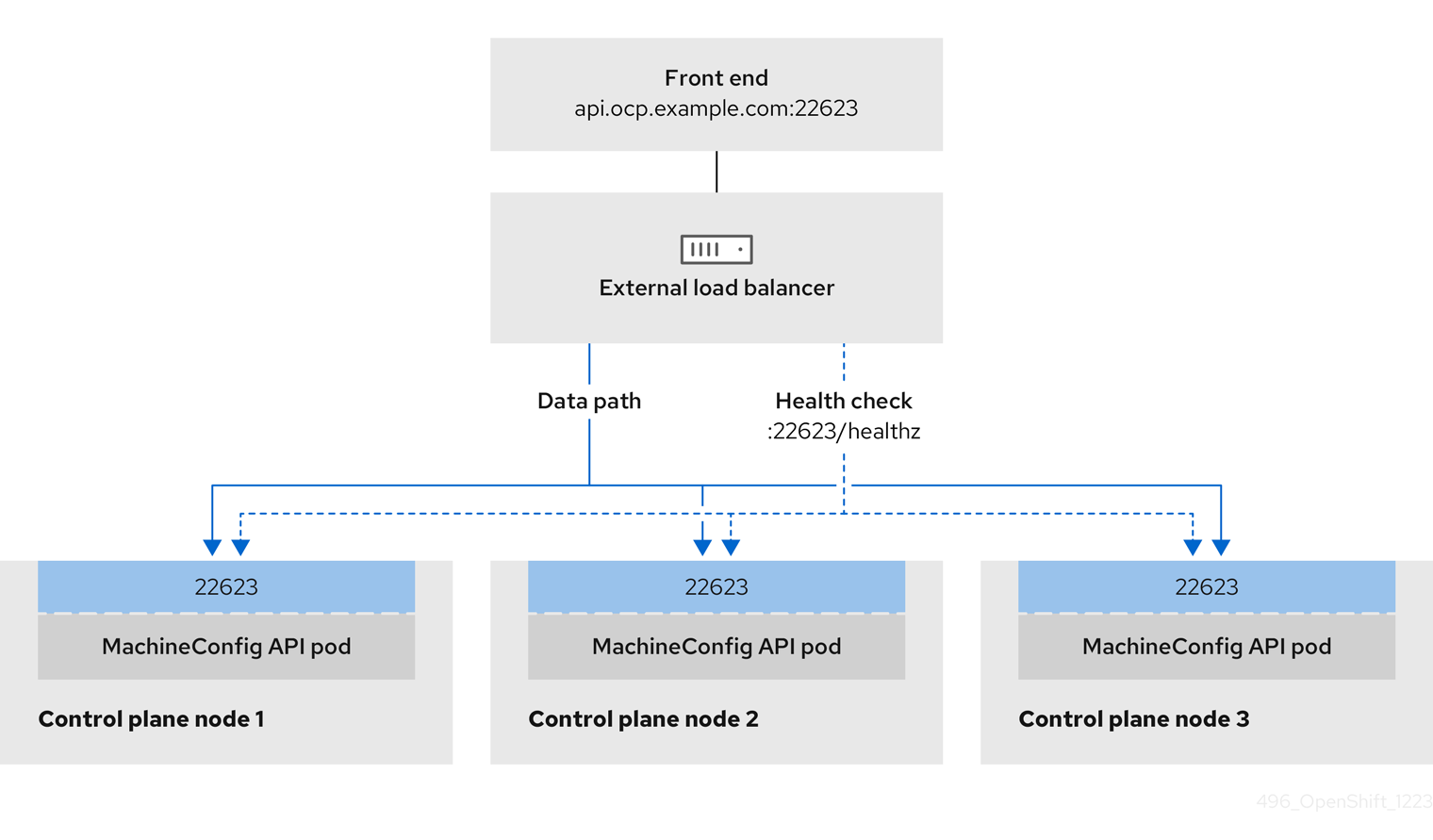

External load balancing services and the control plane nodes must run on the same L2 network, and on the same VLAN when using VLANs to route traffic between the load balancing services and the control plane nodes.

The storage interface requires a DHCP reservation or a static IP.

The following table provides example fully qualified domain names. The API and Nameserver addresses begin with canonical name extensions. The hostnames of the control plane and worker nodes are examples, so you can use any host naming convention you prefer.

| Usage | Host Name | IP |
|---|---|---|
| API | | |
| Ingress LB (apps) | | |
| Provisioner node | | |
| Control-plane-0 | | |
| Control-plane-1 | | |
| Control-plane-2 | | |
| Worker-0 | | |
| Worker-1 | | |
| Worker-n | | |

If you do not create DHCP reservations, the installer requires reverse DNS resolution to set the hostnames for the Kubernetes API node, the provisioner node, the control plane nodes, and the worker nodes.

14.2.4.6. Provisioner node requirements

You must specify the MAC address for the provisioner node in your installation configuration. The `bootMACAddress` specification is typically associated with PXE network booting. However, the Ironic provisioning service also requires the `bootMACAddress` specification to identify nodes during the inspection of the cluster, or during node redeployment in the cluster.

The provisioner node requires layer 2 connectivity for network booting, DHCP and DNS resolution, and local network communication. The provisioner node requires layer 3 connectivity for virtual media booting.

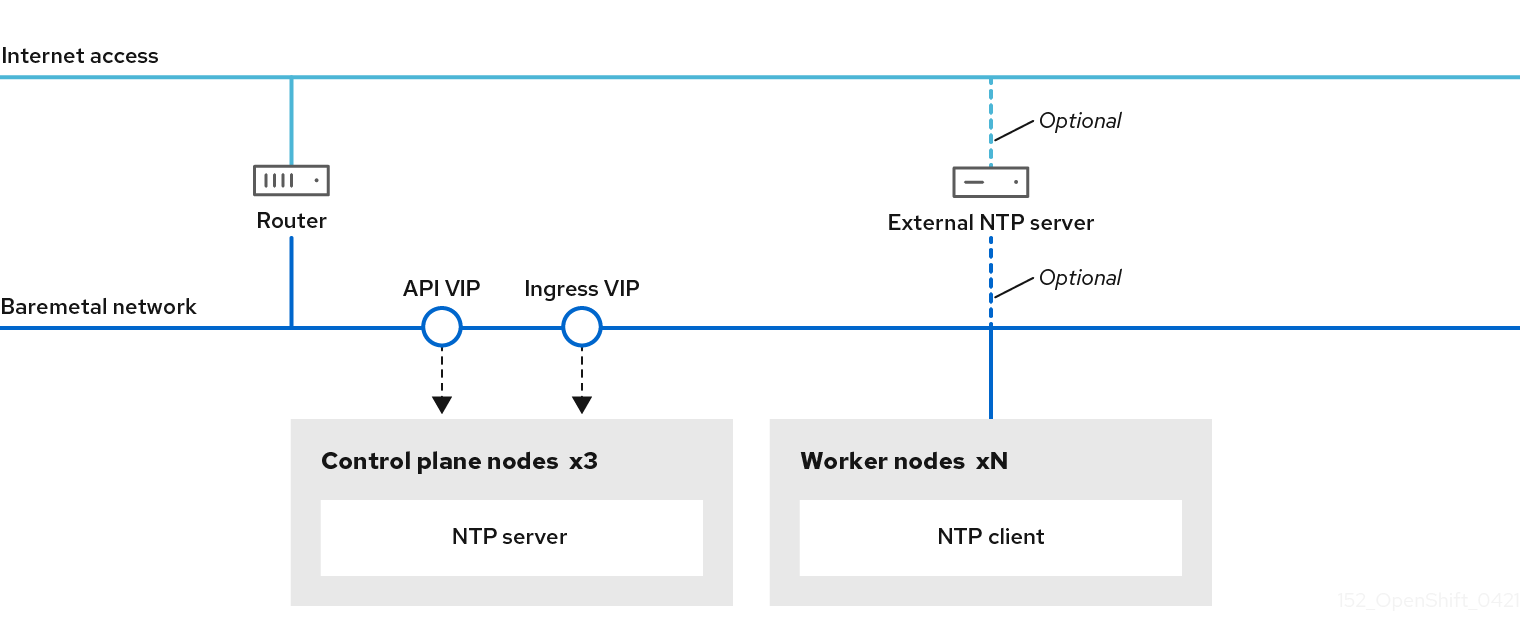

14.2.4.7. Network Time Protocol (NTP)

Each OpenShift Container Platform node in the cluster must have access to an NTP server. OpenShift Container Platform nodes use NTP to synchronize their clocks. For example, cluster nodes use SSL certificates that require validation, which might fail if the date and time between the nodes are not in sync.

Define a consistent clock date and time format in each cluster node’s BIOS settings, or installation might fail.

You can reconfigure the control plane nodes to act as NTP servers on disconnected clusters, and reconfigure worker nodes to retrieve time from the control plane nodes.

14.2.4.8. Port access for the out-of-band management IP address

The out-of-band management IP address is on a separate network from the node. To ensure that the out-of-band management can communicate with the provisioner during installation, the out-of-band management IP address must be granted access to port 80 on the bootstrap host and port 6180 on the OpenShift Container Platform control plane hosts. TLS port 6183 is required for virtual media installation, for example, via Redfish.

14.2.5. Configuring nodes

Configuring nodes when using the provisioning network

Each node in the cluster requires the following configuration for proper installation.

A mismatch between nodes will cause an installation failure.

While the cluster nodes can contain more than two NICs, the installation process only focuses on the first two NICs. In the following table, NIC1 is a non-routable network (provisioning) that is only used for the installation of the OpenShift Container Platform cluster.

| NIC | Network | VLAN |
|---|---|---|
| NIC1 | `provisioning` | |
| NIC2 | `baremetal` | |

The Red Hat Enterprise Linux (RHEL) 8.x installation process on the provisioner node might vary. To install Red Hat Enterprise Linux (RHEL) 8.x using a local Satellite server or a PXE server, PXE-enable NIC2.

| PXE | Boot order |
|---|---|
| NIC1 PXE-enabled | 1 |
| NIC2 | 2 |

Ensure PXE is disabled on all other NICs.

Configure the control plane and worker nodes as follows:

| PXE | Boot order |
|---|---|
| NIC1 PXE-enabled (provisioning network) | 1 |

Configuring nodes without the provisioning network

The installation process requires one NIC:

| NIC | Network | VLAN |
|---|---|---|
| NICx | `baremetal` | |

NICx is a routable network (baremetal) that is used for the installation of the OpenShift Container Platform cluster, and routable to the internet.

The provisioning network is optional, but it is required for PXE booting. If you deploy without a provisioning network, you must use a virtual media BMC addressing option such as redfish-virtualmedia or idrac-virtualmedia.

Configuring nodes for Secure Boot manually

Secure Boot prevents a node from booting unless it verifies the node is using only trusted software, such as UEFI firmware drivers, EFI applications, and the operating system.

Red Hat only supports manually configured Secure Boot when deploying with Redfish virtual media.

To enable Secure Boot manually, refer to the hardware guide for the node and execute the following:

Procedure

- Boot the node and enter the BIOS menu.

- Set the node's boot mode to `UEFI Enabled`.

- Enable Secure Boot.

Red Hat does not support Secure Boot with self-generated keys.

Configuring the Compatibility Support Module for Fujitsu iRMC

The Compatibility Support Module (CSM) configuration provides support for legacy BIOS backward compatibility with UEFI systems. You must configure the CSM when you deploy a cluster with Fujitsu iRMC, otherwise the installation might fail.

For information about configuring the CSM for your specific node type, refer to the hardware guide for the node.

Prerequisites

- Ensure that you have disabled Secure Boot Control. You can disable the feature under Security > Secure Boot Configuration > Secure Boot Control.

Procedure

- Boot the node and select the BIOS menu.

- Under the Advanced tab, select CSM Configuration from the list.

- Enable the Launch CSM option and set the following values:

| Item | Value |
|---|---|
| Boot option filter | UEFI and Legacy |
| Launch PXE OpROM Policy | UEFI only |
| Launch Storage OpROM policy | UEFI only |
| Other PCI device ROM priority | UEFI only |

14.2.6. Out-of-band management

Nodes typically have an additional NIC used by the baseboard management controllers (BMCs). These BMCs must be accessible from the provisioner node.

Each node must be accessible via out-of-band management. When using an out-of-band management network, the provisioner node requires access to the out-of-band management network for a successful OpenShift Container Platform installation.

The out-of-band management setup is out of scope for this document. Using a separate management network for out-of-band management can enhance performance and improve security. However, using the provisioning network or the bare metal network is also a valid option.

The bootstrap VM features a maximum of two network interfaces. If you configure a separate management network for out-of-band management, and you are using a provisioning network, the bootstrap VM requires routing access to the management network through one of the network interfaces. In this scenario, the bootstrap VM can then access three networks:

- the bare metal network

- the provisioning network

- the management network routed through one of the network interfaces

14.2.7. Required data for installation

Prior to the installation of the OpenShift Container Platform cluster, gather the following information from all cluster nodes:

- Out-of-band management IP. Examples:

  - Dell (iDRAC) IP

  - HP (iLO) IP

  - Fujitsu (iRMC) IP

When using the provisioning network

- NIC (`provisioning`) MAC address

- NIC (`baremetal`) MAC address

When omitting the provisioning network

- NIC (`baremetal`) MAC address

14.2.8. Validation checklist for nodes

When using the provisioning network

- ❏ NIC1 VLAN is configured for the `provisioning` network.

- ❏ NIC1 for the `provisioning` network is PXE-enabled on the provisioner, control plane, and worker nodes.

- ❏ NIC2 VLAN is configured for the `baremetal` network.

- ❏ PXE has been disabled on all other NICs.

- ❏ DNS is configured with API and Ingress endpoints.

- ❏ Control plane and worker nodes are configured.

- ❏ All nodes accessible via out-of-band management.

- ❏ (Optional) A separate management network has been created.

- ❏ Required data for installation.

When omitting the provisioning network

- ❏ NIC1 VLAN is configured for the `baremetal` network.

- ❏ DNS is configured with API and Ingress endpoints.

- ❏ Control plane and worker nodes are configured.

- ❏ All nodes accessible via out-of-band management.

- ❏ (Optional) A separate management network has been created.

- ❏ Required data for installation.

14.3. Setting up the environment for an OpenShift installation

14.3.1. Installing RHEL on the provisioner node

With the configuration of the prerequisites complete, the next step is to install RHEL 8.x on the provisioner node. The installer uses the provisioner node as the orchestrator while installing the OpenShift Container Platform cluster. For the purposes of this document, installing RHEL on the provisioner node is out of scope. However, options include but are not limited to using a RHEL Satellite server, PXE, or installation media.

14.3.2. Preparing the provisioner node for OpenShift Container Platform installation

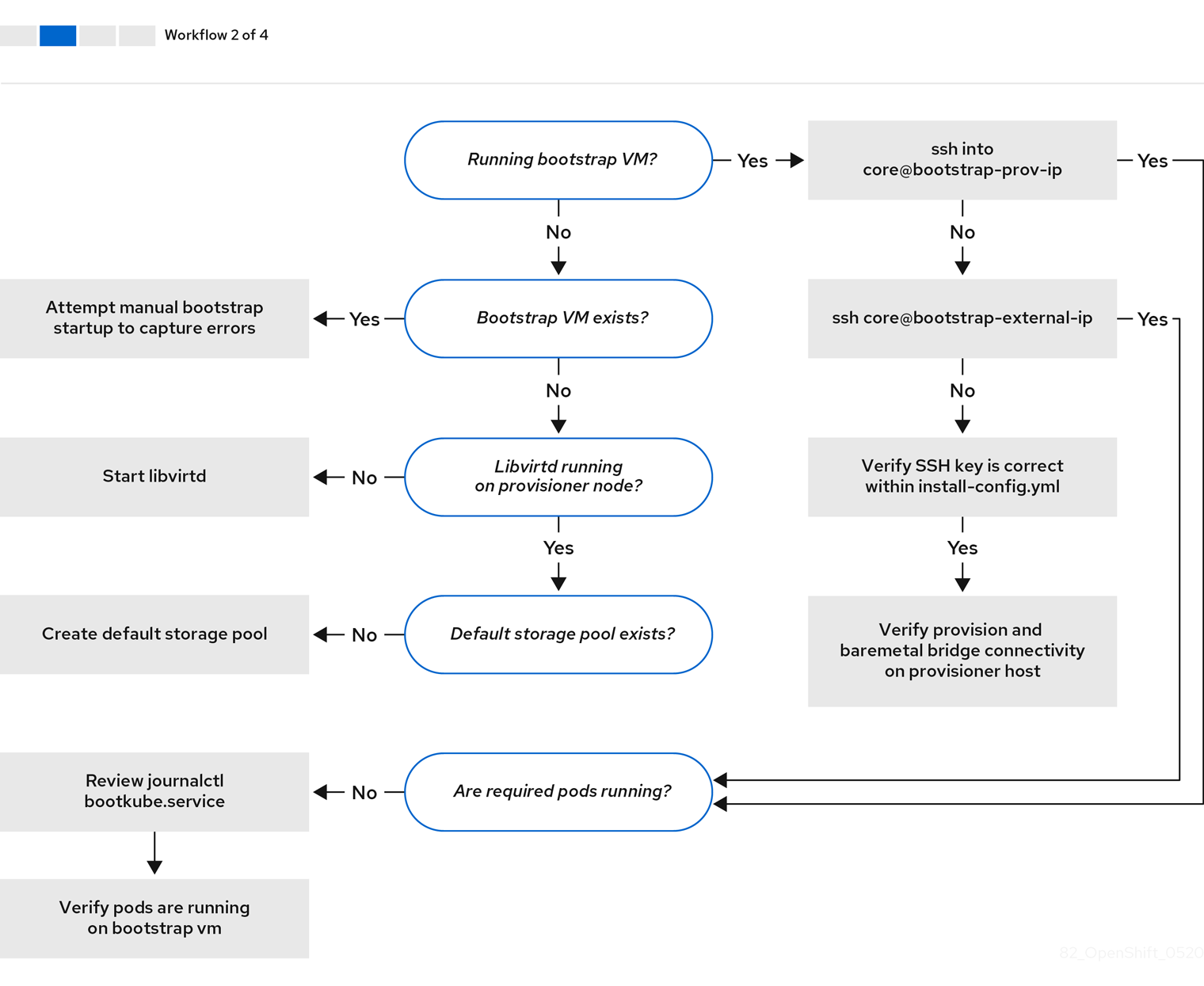

Perform the following steps to prepare the environment.

Procedure

- Log in to the provisioner node via `ssh`.

- Create a non-root user (`kni`) and provide that user with `sudo` privileges:

      # useradd kni
      # passwd kni
      # echo "kni ALL=(root) NOPASSWD:ALL" | tee -a /etc/sudoers.d/kni
      # chmod 0440 /etc/sudoers.d/kni

- Create an `ssh` key for the new user:

      # su - kni -c "ssh-keygen -t ed25519 -f /home/kni/.ssh/id_rsa -N ''"

- Log in as the new user on the provisioner node:

      # su - kni

- Use Red Hat Subscription Manager to register the provisioner node:

      $ sudo subscription-manager register --username=<user> --password=<pass> --auto-attach
      $ sudo subscription-manager repos --enable=rhel-8-for-<architecture>-appstream-rpms --enable=rhel-8-for-<architecture>-baseos-rpms

  Note: For more information about Red Hat Subscription Manager, see Using and Configuring Red Hat Subscription Manager.

- Install the following packages:

      $ sudo dnf install -y libvirt qemu-kvm mkisofs python3-devel jq ipmitool

- Modify the user to add the `libvirt` group to the newly created user:

      $ sudo usermod --append --groups libvirt <user>

- Start `firewalld` and enable the `http` service:

      $ sudo systemctl start firewalld
      $ sudo firewall-cmd --zone=public --add-service=http --permanent
      $ sudo firewall-cmd --reload

- Start and enable the `libvirtd` service:

      $ sudo systemctl enable libvirtd --now

- Create the `default` storage pool and start it:

      $ sudo virsh pool-define-as --name default --type dir --target /var/lib/libvirt/images
      $ sudo virsh pool-start default
      $ sudo virsh pool-autostart default

- Create a `pull-secret.txt` file:

      $ vim pull-secret.txt

  In a web browser, navigate to Install OpenShift on Bare Metal with installer-provisioned infrastructure. Click Copy pull secret. Paste the contents into the `pull-secret.txt` file and save the contents in the `kni` user's home directory.

14.3.3. Checking NTP server synchronization

The OpenShift Container Platform installation program installs the chrony Network Time Protocol (NTP) service on the cluster nodes. To complete installation, each node must have access to an NTP time server. You can verify NTP server synchronization by using the chrony service.

For disconnected clusters, you must configure the NTP servers on the control plane nodes. For more information see the Additional resources section.

Prerequisites

- You installed the `chrony` package on the target node.

Procedure

- Log in to the node by using the `ssh` command.

- View the NTP servers available to the node by running the following command:

      $ chronyc sources

  Example output

      MS Name/IP address         Stratum Poll Reach LastRx Last sample
      ===============================================================================
      ^+ time.cloudflare.com           3  10   377   187   -209us[ -209us] +/-   32ms
      ^+ t1.time.ir2.yahoo.com         2  10   377   185  -4382us[-4382us] +/-   23ms
      ^+ time.cloudflare.com           3  10   377   198   -996us[-1220us] +/-   33ms
      ^* brenbox.westnet.ie            1  10   377   193  -9538us[-9761us] +/-   24ms

- Use the `ping` command to ensure that the node can access an NTP server, for example:

      $ ping time.cloudflare.com

  Example output

      PING time.cloudflare.com (162.159.200.123) 56(84) bytes of data.
      64 bytes from time.cloudflare.com (162.159.200.123): icmp_seq=1 ttl=54 time=32.3 ms
      64 bytes from time.cloudflare.com (162.159.200.123): icmp_seq=2 ttl=54 time=30.9 ms
      64 bytes from time.cloudflare.com (162.159.200.123): icmp_seq=3 ttl=54 time=36.7 ms
      ...
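When checking many nodes, reading the `chronyc sources` table by eye gets tedious. The sketch below filters that output for the source whose state column is `*`, which is how `chronyc` marks the source the node is currently synchronized to. The function name `selected_ntp_source` is a hypothetical helper, and the sketch assumes the default column layout shown in the example output above.

```shell
# selected_ntp_source: read `chronyc sources` output on stdin and print the
# name of the source whose second marker character is "*", meaning chronyd is
# currently synchronized to it. Prints nothing when no source is selected.
selected_ntp_source() {
  awk 'substr($1, 2, 1) == "*" { print $2 }'
}
```

A typical invocation would be `chronyc sources | selected_ntp_source`. An empty result suggests the node has not yet synchronized to any NTP server.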

14.3.4. Configuring networking

Before installation, you must configure the networking on the provisioner node. Installer-provisioned clusters deploy with a bare-metal bridge and network, and an optional provisioning bridge and network.

You can also configure networking from the web console.

Procedure

- Export the bare-metal network NIC name:

      $ export PUB_CONN=<baremetal_nic_name>

- Configure the bare-metal network:

  Note: The SSH connection might disconnect after executing these steps.

      $ sudo nohup bash -c "
          nmcli con down \"$PUB_CONN\"
          nmcli con delete \"$PUB_CONN\"
          # RHEL 8.1 appends the word \"System\" in front of the connection, delete in case it exists
          nmcli con down \"System $PUB_CONN\"
          nmcli con delete \"System $PUB_CONN\"
          nmcli connection add ifname baremetal type bridge con-name baremetal bridge.stp no
          nmcli con add type bridge-slave ifname \"$PUB_CONN\" master baremetal
          pkill dhclient;dhclient baremetal
      "

- Optional: If you are deploying with a provisioning network, export the provisioning network NIC name:

      $ export PROV_CONN=<prov_nic_name>

- Optional: If you are deploying with a provisioning network, configure the provisioning network:

      $ sudo nohup bash -c "
          nmcli con down \"$PROV_CONN\"
          nmcli con delete \"$PROV_CONN\"
          nmcli connection add ifname provisioning type bridge con-name provisioning
          nmcli con add type bridge-slave ifname \"$PROV_CONN\" master provisioning
          nmcli connection modify provisioning ipv6.addresses fd00:1101::1/64 ipv6.method manual
          nmcli con down provisioning
          nmcli con up provisioning
      "

  Note: The SSH connection might disconnect after executing these steps.

  The IPv6 address can be any address as long as it is not routable via the bare-metal network.

  Ensure that UEFI is enabled and UEFI PXE settings are set to the IPv6 protocol when using IPv6 addressing.

- Optional: If you are deploying with a provisioning network, configure the IPv4 address on the provisioning network connection:

      $ nmcli connection modify provisioning ipv4.addresses 172.22.0.254/24 ipv4.method manual

- `ssh` back into the `provisioner` node (if required):

      # ssh kni@provisioner.<cluster-name>.<domain>

- Verify the connection bridges have been properly created:

      $ sudo nmcli con show

      NAME               UUID                                  TYPE      DEVICE
      baremetal          4d5133a5-8351-4bb9-bfd4-3af264801530  bridge    baremetal
      provisioning       43942805-017f-4d7d-a2c2-7cb3324482ed  bridge    provisioning
      virbr0             d9bca40f-eee1-410b-8879-a2d4bb0465e7  bridge    virbr0
      bridge-slave-eno1  76a8ed50-c7e5-4999-b4f6-6d9014dd0812  ethernet  eno1
      bridge-slave-eno2  f31c3353-54b7-48de-893a-02d2b34c4736  ethernet  eno2
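The bridge verification can also be scripted. The sketch below reads the terse, colon-separated listing produced by `nmcli -t -f NAME,TYPE connection show` and prints any expected bridge that is missing. The function name `missing_bridges` is a hypothetical helper, and the sketch assumes the connection names `baremetal` and `provisioning` used in this procedure.

```shell
# missing_bridges NAME...: read `nmcli -t -f NAME,TYPE connection show` output
# on stdin and print each NAME that is not listed as a connection of type "bridge".
missing_bridges() {
  listing="$(cat)"
  for bridge in "$@"; do
    # Terse nmcli output has one NAME:TYPE pair per line.
    printf '%s\n' "$listing" | grep -qx "${bridge}:bridge" || echo "$bridge"
  done
}
```

A typical invocation would be `nmcli -t -f NAME,TYPE connection show | missing_bridges baremetal provisioning`. No output means both bridges exist; when you deploy without a provisioning network, pass only `baremetal`.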

14.3.5. Establishing communication between subnets

In a typical OpenShift Container Platform cluster setup, all nodes, including the control plane and worker nodes, reside in the same network. However, for edge computing scenarios, it can be beneficial to locate worker nodes closer to the edge. This often involves using different network segments or subnets for the remote worker nodes than the subnet used by the control plane and local worker nodes. Such a setup can reduce latency for the edge and allow for enhanced scalability. However, the network must be configured properly before installing OpenShift Container Platform to ensure that the edge subnets containing the remote worker nodes can reach the subnet containing the control plane nodes and receive traffic from the control plane too.

All control plane nodes must run in the same subnet. When using more than one subnet, you can also configure the Ingress VIP to run on the control plane nodes by using a manifest. See "Configuring network components to run on the control plane" for details.

Deploying a cluster with multiple subnets requires using virtual media.

This procedure details the network configuration required to allow the remote worker nodes in the second subnet to communicate effectively with the control plane nodes in the first subnet and to allow the control plane nodes in the first subnet to communicate effectively with the remote worker nodes in the second subnet.

In this procedure, the cluster spans two subnets:

- The first subnet (10.0.0.0) contains the control plane and local worker nodes.

- The second subnet (192.168.0.0) contains the edge worker nodes.

Procedure

- Configure the first subnet to communicate with the second subnet:

Log in as root to a control plane node by running the following command:

$ sudo su -

Get the name of the network interface:

# nmcli dev status

Add a route to the second subnet (192.168.0.0) via the gateway:

# nmcli connection modify <interface_name> +ipv4.routes "192.168.0.0/24 via <gateway>"

Replace <interface_name> with the interface name. Replace <gateway> with the IP address of the actual gateway.

Example

# nmcli connection modify eth0 +ipv4.routes "192.168.0.0/24 via 192.168.0.1"

Apply the changes:

# nmcli connection up <interface_name>

Replace <interface_name> with the interface name.

Verify the routing table to ensure the route has been added successfully:

# ip route

Repeat the previous steps for each control plane node in the first subnet.

Note: Adjust the commands to match your actual interface names and gateway.

- Configure the second subnet to communicate with the first subnet:

Log in as root to a remote worker node:

$ sudo su -

Get the name of the network interface:

# nmcli dev status

Add a route to the first subnet (10.0.0.0) via the gateway:

# nmcli connection modify <interface_name> +ipv4.routes "10.0.0.0/24 via <gateway>"

Replace <interface_name> with the interface name. Replace <gateway> with the IP address of the actual gateway.

Example

# nmcli connection modify eth0 +ipv4.routes "10.0.0.0/24 via 10.0.0.1"

Apply the changes:

# nmcli connection up <interface_name>

Replace <interface_name> with the interface name.

Verify the routing table to ensure the route has been added successfully:

# ip route

Repeat the previous steps for each worker node in the second subnet.

Note: Adjust the commands to match your actual interface names and gateway.

- Once you have configured the networks, test the connectivity to ensure the remote worker nodes can reach the control plane nodes and the control plane nodes can reach the remote worker nodes.

From the control plane nodes in the first subnet, ping a remote worker node in the second subnet:

$ ping <remote_worker_node_ip_address>

If the ping is successful, it means the control plane nodes in the first subnet can reach the remote worker nodes in the second subnet. If you don’t receive a response, review the network configurations and repeat the procedure for the node.

From the remote worker nodes in the second subnet, ping a control plane node in the first subnet:

$ ping <control_plane_node_ip_address>

If the ping is successful, it means the remote worker nodes in the second subnet can reach the control plane in the first subnet. If you don’t receive a response, review the network configurations and repeat the procedure for the node.
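The connectivity test above can be repeated for several nodes at once. The following is a minimal sketch, not part of the documented procedure: the check_hosts function and the PING_CMD variable are hypothetical names introduced here, and the ping options assume the Linux iputils version of ping.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report which of the given addresses answer a ping.
# PING_CMD can be overridden (for example, for a dry run); by default it
# sends one ICMP echo request and waits up to 2 seconds for a reply.
PING_CMD="${PING_CMD:-ping -c 1 -W 2}"

check_hosts() {
  local failed=0 host
  for host in "$@"; do
    # PING_CMD is intentionally unquoted so that its options are split.
    if $PING_CMD "$host" >/dev/null 2>&1; then
      echo "reachable: $host"
    else
      echo "UNREACHABLE: $host"
      failed=1
    fi
  done
  # Non-zero exit status if at least one host did not answer.
  return "$failed"
}
```

Run it from a control plane node with the remote worker addresses as arguments, then from a remote worker node with the control plane addresses. A non-zero exit status means at least one node needs its routes reviewed.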

14.3.6. Retrieving the OpenShift Container Platform installer

Use the stable-4.x version of the installation program and your selected architecture to deploy the generally available stable version of OpenShift Container Platform:

$ export VERSION=stable-4.11

$ export RELEASE_ARCH=<architecture>

$ export RELEASE_IMAGE=$(curl -s https://mirror.openshift.com/pub/openshift-v4/$RELEASE_ARCH/clients/ocp/$VERSION/release.txt | grep 'Pull From: quay.io' | awk -F ' ' '{print $3}')

14.3.7. Extracting the OpenShift Container Platform installer

After retrieving the installer, the next step is to extract it.

Procedure

Set the environment variables:

$ export cmd=openshift-baremetal-install

$ export pullsecret_file=~/pull-secret.txt

$ export extract_dir=$(pwd)

Get the oc binary:

$ curl -s https://mirror.openshift.com/pub/openshift-v4/clients/ocp/$VERSION/openshift-client-linux.tar.gz | tar zxvf - oc

Extract the installer:

$ sudo cp oc /usr/local/bin

$ oc adm release extract --registry-config "${pullsecret_file}" --command=$cmd --to "${extract_dir}" ${RELEASE_IMAGE}

$ sudo cp openshift-baremetal-install /usr/local/bin

14.3.8. Optional: Creating an RHCOS images cache

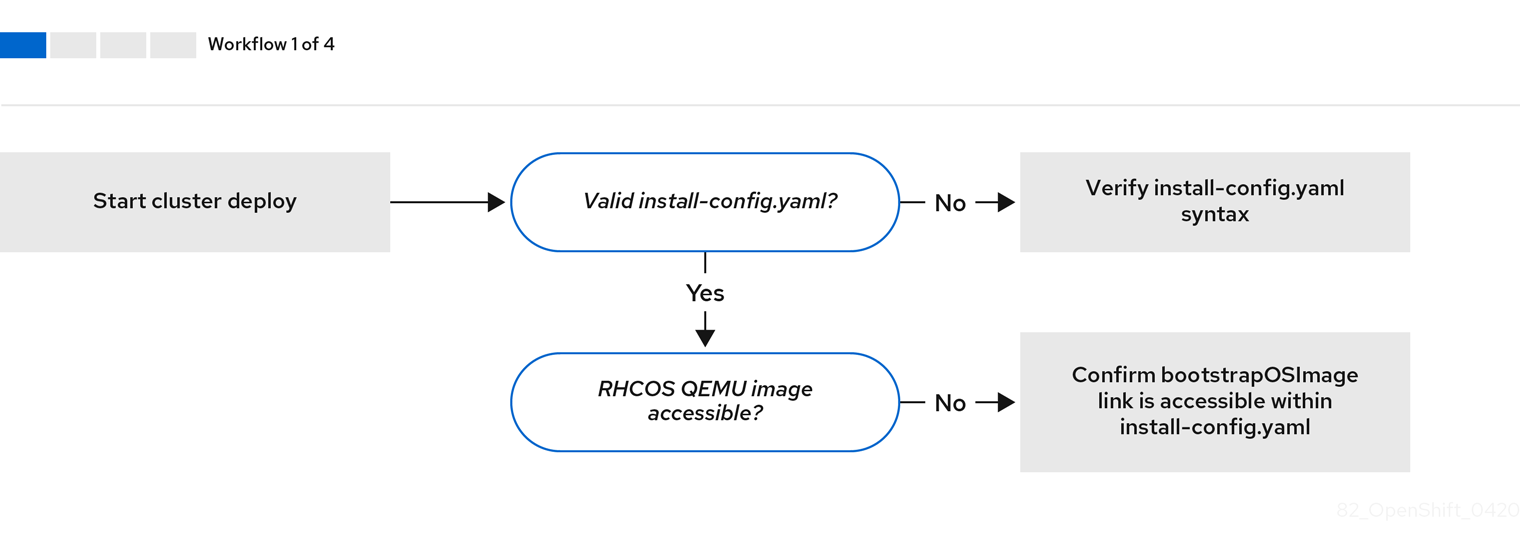

To employ image caching, you must download the Red Hat Enterprise Linux CoreOS (RHCOS) image used by the bootstrap VM to provision the cluster nodes. Image caching is optional, but it is especially useful when running the installation program on a network with limited bandwidth.

The installation program no longer needs the clusterOSImage RHCOS image because the correct image is in the release payload.

If you are running the installation program on a network with limited bandwidth and the RHCOS image download takes more than 15 to 20 minutes, the installation program times out. Caching images on a web server helps in such scenarios.

If you enable TLS for the HTTPD server, you must confirm the root certificate is signed by an authority trusted by the client and verify the trusted certificate chain between your OpenShift Container Platform hub and spoke clusters and the HTTPD server. Using a server configured with an untrusted certificate prevents the images from being downloaded to the image creation service. Using untrusted HTTPS servers is not supported.

Install a container that contains the images.

Procedure

Install podman:

$ sudo dnf install -y podman

Open firewall port 8080 to be used for RHCOS image caching:

$ sudo firewall-cmd --add-port=8080/tcp --zone=public --permanent

$ sudo firewall-cmd --reload

Create a directory to store the bootstraposimage:

$ mkdir /home/kni/rhcos_image_cache

Set the appropriate SELinux context for the newly created directory:

$ sudo semanage fcontext -a -t httpd_sys_content_t "/home/kni/rhcos_image_cache(/.*)?"

$ sudo restorecon -Rv /home/kni/rhcos_image_cache/

Get the URI for the RHCOS image that the installation program will deploy on the bootstrap VM:

$ export RHCOS_QEMU_URI=$(/usr/local/bin/openshift-baremetal-install coreos print-stream-json | jq -r --arg ARCH "$(arch)" '.architectures[$ARCH].artifacts.qemu.formats["qcow2.gz"].disk.location')

Get the name of the image that the installation program will deploy on the bootstrap VM:

$ export RHCOS_QEMU_NAME=${RHCOS_QEMU_URI##*/}

Get the SHA hash for the RHCOS image that will be deployed on the bootstrap VM:

$ export RHCOS_QEMU_UNCOMPRESSED_SHA256=$(/usr/local/bin/openshift-baremetal-install coreos print-stream-json | jq -r --arg ARCH "$(arch)" '.architectures[$ARCH].artifacts.qemu.formats["qcow2.gz"].disk["uncompressed-sha256"]')

Download the image and place it in the /home/kni/rhcos_image_cache directory:

$ curl -L ${RHCOS_QEMU_URI} -o /home/kni/rhcos_image_cache/${RHCOS_QEMU_NAME}

Confirm SELinux type is of httpd_sys_content_t for the new file:

$ ls -Z /home/kni/rhcos_image_cache

Create the pod:

$ podman run -d --name rhcos_image_cache -v /home/kni/rhcos_image_cache:/var/www/html -p 8080:8080/tcp quay.io/centos7/httpd-24-centos7:latest

This command creates a caching webserver with the name rhcos_image_cache. This pod serves the bootstrapOSImage image in the install-config.yaml file for deployment.

Generate the bootstrapOSImage configuration:

$ export BAREMETAL_IP=$(ip addr show dev baremetal | awk '/inet /{print $2}' | cut -d"/" -f1)

$ export BOOTSTRAP_OS_IMAGE="http://${BAREMETAL_IP}:8080/${RHCOS_QEMU_NAME}?sha256=${RHCOS_QEMU_UNCOMPRESSED_SHA256}"

$ echo " bootstrapOSImage=${BOOTSTRAP_OS_IMAGE}"

Add the required configuration to the install-config.yaml file under platform.baremetal:

    platform:
      baremetal:
        bootstrapOSImage: <bootstrap_os_image>

Replace <bootstrap_os_image> with the value of $BOOTSTRAP_OS_IMAGE.

See the "Configuring the install-config.yaml file" section for additional details.
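To make the shape of the bootstrapOSImage value explicit, the following sketch assembles it from its three parts: the address of the caching host, the image file name taken from the download URI, and the uncompressed SHA-256 hash. The function name bootstrap_os_image_url is hypothetical, and port 8080 is assumed to match the firewall and podman settings used in this procedure.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the bootstrapOSImage URL.
#   $1  IP address of the host that serves the cached image
#   $2  full download URI of the RHCOS image (only the file name is used)
#   $3  uncompressed SHA-256 hash of the image
bootstrap_os_image_url() {
  local ip="$1" uri="$2" sha256="$3"
  # Strip everything up to the last "/" to get the file name,
  # the same expansion used for RHCOS_QEMU_NAME above.
  local name="${uri##*/}"
  printf 'http://%s:8080/%s?sha256=%s\n' "$ip" "$name" "$sha256"
}
```

Calling the function with the values of BAREMETAL_IP, RHCOS_QEMU_URI, and RHCOS_QEMU_UNCOMPRESSED_SHA256 should print the same string that the procedure stores in BOOTSTRAP_OS_IMAGE.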

14.3.9. Configuring the install-config.yaml file

14.3.9.1. Configuring the install-config.yaml file

The install-config.yaml file requires some additional details. Most of the information teaches the installation program and the resulting cluster enough about the available hardware that it is able to fully manage it.

The installation program no longer needs the clusterOSImage RHCOS image because the correct image is in the release payload.

Configure install-config.yaml. Change the appropriate variables to match the environment, including pullSecret and sshKey:

    apiVersion: v1
    baseDomain: <domain>
    metadata:
      name: <cluster_name>
    networking:
      machineNetwork:
      - cidr: <public_cidr>
      networkType: OVNKubernetes
    compute:
    - name: worker
      replicas: 2 # (1)
    controlPlane:
      name: master
      replicas: 3
      platform:
        baremetal: {}
    platform:
      baremetal:
        apiVIP: <api_ip>
        ingressVIP: <wildcard_ip>
        provisioningNetworkCIDR: <CIDR>
        bootstrapExternalStaticIP: <bootstrap_static_ip_address> # (2)
        bootstrapExternalStaticGateway: <bootstrap_static_gateway> # (3)
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: ipmi://<out_of_band_ip> # (4)
              username: <user>
              password: <password>
            bootMACAddress: <NIC1_mac_address>
            rootDeviceHints:
              deviceName: "<installation_disk_drive_path>" # (5)
          - name: <openshift_master_1>
            role: master
            bmc:
              address: ipmi://<out_of_band_ip>
              username: <user>
              password: <password>
            bootMACAddress: <NIC1_mac_address>
            rootDeviceHints:
              deviceName: "<installation_disk_drive_path>"
          - name: <openshift_master_2>
            role: master
            bmc:
              address: ipmi://<out_of_band_ip>
              username: <user>
              password: <password>
            bootMACAddress: <NIC1_mac_address>
            rootDeviceHints:
              deviceName: "<installation_disk_drive_path>"
          - name: <openshift_worker_0>
            role: worker
            bmc:
              address: ipmi://<out_of_band_ip>
              username: <user>
              password: <password>
            bootMACAddress: <NIC1_mac_address>
          - name: <openshift_worker_1>
            role: worker
            bmc:
              address: ipmi://<out_of_band_ip>
              username: <user>
              password: <password>
            bootMACAddress: <NIC1_mac_address>
            rootDeviceHints:
              deviceName: "<installation_disk_drive_path>"
    pullSecret: '<pull_secret>'
    sshKey: '<ssh_pub_key>'

(1) Scale the worker machines based on the number of worker nodes that are part of the OpenShift Container Platform cluster. Valid options for the replicas value are 0 and integers greater than or equal to 2. Set the number of replicas to 0 to deploy a three-node cluster, which contains only three control plane machines. A three-node cluster is a smaller, more resource-efficient cluster that can be used for testing, development, and production. You cannot install the cluster with only one worker.

(2) When deploying a cluster with static IP addresses, you must set the bootstrapExternalStaticIP configuration setting to specify the static IP address of the bootstrap VM when there is no DHCP server on the bare-metal network.

(3) When deploying a cluster with static IP addresses, you must set the bootstrapExternalStaticGateway configuration setting to specify the gateway IP address for the bootstrap VM when there is no DHCP server on the bare-metal network.

(4) See the BMC addressing sections for more options.

(5) To set the path to the installation disk drive, enter the kernel name of the disk. For example, /dev/sda.

Important: Because the disk discovery order is not guaranteed, the kernel name of the disk can change across booting options for machines with multiple disks. For instance, /dev/sda becomes /dev/sdb and vice versa. To avoid this issue, you must use persistent disk attributes, such as the disk World Wide Name (WWN). To use the disk WWN, replace the deviceName parameter with the wwnWithExtension parameter. Depending on the parameter that you use, enter the disk name, for example, /dev/sda or the disk WWN, for example, "0x64cd98f04fde100024684cf3034da5c2". Ensure that you enter the disk WWN value within quotes so that it is used as a string value and not a hexadecimal value.

Failure to meet these requirements for the rootDeviceHints parameter might result in the following error:

ironic-inspector inspection failed: No disks satisfied root device hints

Create a directory to store the cluster configuration:

$ mkdir ~/clusterconfigs

Copy the install-config.yaml file to the new directory:

$ cp install-config.yaml ~/clusterconfigs

Ensure all bare metal nodes are powered off prior to installing the OpenShift Container Platform cluster:

$ ipmitool -I lanplus -U <user> -P <password> -H <management-server-ip> power off

Remove old bootstrap resources if any are left over from a previous deployment attempt:

for i in $(sudo virsh list | tail -n +3 | grep bootstrap | awk '{print $2}'); do sudo virsh destroy $i; sudo virsh undefine $i; sudo virsh vol-delete $i --pool $i; sudo virsh vol-delete $i.ign --pool $i; sudo virsh pool-destroy $i; sudo virsh pool-undefine $i; done
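The rule for the worker replicas value in this section (0, or any integer greater than or equal to 2, but never 1) is easy to get wrong when editing install-config.yaml by hand. The following is a small sketch of that check. The function name valid_worker_replicas is hypothetical and is not part of the installation program.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the argument is a valid value for
# compute.replicas as described above (0, or an integer >= 2).
valid_worker_replicas() {
  case "$1" in
    ''|*[!0-9]*) return 1 ;;   # reject empty, negative, or non-numeric input
  esac
  [ "$1" -eq 0 ] || [ "$1" -ge 2 ]
}
```

A value of 0 corresponds to the three-node cluster described above, and a value of 1 is rejected because the cluster cannot be installed with only one worker.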

14.3.9.2. Additional install-config parameters

See the following tables for the required parameters, the hosts parameter, and the bmc parameter for the install-config.yaml file.

| Parameters | Default | Description |

|---|---|---|

|

|

The domain name for the cluster. For example, | |

|

|

|

The boot mode for a node. Options are |

|

| The static IP address for the bootstrap VM. You must set this value when deploying a cluster with static IP addresses when there is no DHCP server on the bare-metal network. | |

|

| The static IP address of the gateway for the bootstrap VM. You must set this value when deploying a cluster with static IP addresses when there is no DHCP server on the bare-metal network. | |

|

|

The | |

|

|

The | |

|

The name to be given to the OpenShift Container Platform cluster. For example, | |

|

The public CIDR (Classless Inter-Domain Routing) of the external network. For example, | |

| The OpenShift Container Platform cluster requires a name be provided for worker (or compute) nodes even if there are zero nodes. | |

| Replicas sets the number of worker (or compute) nodes in the OpenShift Container Platform cluster. | |

| The OpenShift Container Platform cluster requires a name for control plane (master) nodes. | |

| Replicas sets the number of control plane (master) nodes included as part of the OpenShift Container Platform cluster. | |

|

|

The name of the network interface on nodes connected to the provisioning network. For OpenShift Container Platform 4.9 and later releases, use the | |

|

| The default configuration used for machine pools without a platform configuration. | |

|

| (Optional) The virtual IP address for Kubernetes API communication.

This setting must either be provided in the | |

|

|

|

|

|

| (Optional) The virtual IP address for ingress traffic.

This setting must either be provided in the |

| Parameters | Default | Description |

|---|---|---|

|

|

| Defines the IP range for nodes on the provisioning network. |

|

|

| The CIDR for the network to use for provisioning. This option is required when not using the default address range on the provisioning network. |

|

|

The third IP address of the |

The IP address within the cluster where the provisioning services run. Defaults to the third IP address of the provisioning subnet. For example, |

|

|

The second IP address of the |

The IP address on the bootstrap VM where the provisioning services run while the installer is deploying the control plane (master) nodes. Defaults to the second IP address of the provisioning subnet. For example, |

|

|

| The name of the bare-metal bridge of the hypervisor attached to the bare-metal network. |

|

|

|

The name of the provisioning bridge on the |

|

|

Defines the host architecture for your cluster. Valid values are | |

|

| The default configuration used for machine pools without a platform configuration. | |

|

|

A URL to override the default operating system image for the bootstrap node. The URL must contain a SHA-256 hash of the image. For example: | |

|

|

The

| |

|

| Set this parameter to the appropriate HTTP proxy used within your environment. | |

|

| Set this parameter to the appropriate HTTPS proxy used within your environment. | |

|

| Set this parameter to the appropriate list of exclusions for proxy usage within your environment. |

Hosts

The hosts parameter is a list of separate bare metal assets used to build the cluster.

| Name | Default | Description |

|---|---|---|

|

|

The name of the | |

|

|

The role of the bare metal node. Either | |

|

| Connection details for the baseboard management controller. See the BMC addressing section for additional details. | |

|

|

The MAC address of the NIC that the host uses for the provisioning network. Ironic retrieves the IP address using the Note You must provide a valid MAC address from the host if you disabled the provisioning network. | |

|

| Set this optional parameter to configure the network interface of a host. See "(Optional) Configuring host network interfaces" for additional details. |

14.3.9.3. BMC addressing

Most vendors support Baseboard Management Controller (BMC) addressing with the Intelligent Platform Management Interface (IPMI). IPMI does not encrypt communications. It is suitable for use within a data center over a secured or dedicated management network. Check with your vendor to see if they support Redfish network boot. Redfish delivers simple and secure management for converged, hybrid IT and the Software Defined Data Center (SDDC). Redfish is human readable and machine capable, and leverages common internet and web services standards to expose information directly to the modern tool chain. If your hardware does not support Redfish network boot, use IPMI.

IPMI

Hosts using IPMI use the ipmi://<out-of-band-ip>:<port> address format, which defaults to port 623 if not specified. The following example demonstrates an IPMI configuration within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: ipmi://<out-of-band-ip>
              username: <user>
              password: <password>

The provisioning network is required when PXE booting using IPMI for BMC addressing. It is not possible to PXE boot hosts without a provisioning network. If you deploy without a provisioning network, you must use a virtual media BMC addressing option such as redfish-virtualmedia or idrac-virtualmedia. See "Redfish virtual media for HPE iLO" in the "BMC addressing for HPE iLO" section or "Redfish virtual media for Dell iDRAC" in the "BMC addressing for Dell iDRAC" section for additional details.
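As an illustration of the ipmi://<out-of-band-ip>:<port> format and its default port, the following sketch extracts the port from an address and falls back to 623 when none is given. The function name ipmi_port is hypothetical, and the sketch assumes an IPv4 address or hostname. It does not handle bracketed IPv6 addresses.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the port of an ipmi:// BMC address,
# or the default port 623 if the address does not name one.
# Assumes an IPv4 address or hostname (no bracketed IPv6).
ipmi_port() {
  local hostport="${1#ipmi://}"       # drop the URL scheme
  case "$hostport" in
    *:*) echo "${hostport##*:}" ;;    # explicit port after the last ":"
    *)   echo 623 ;;                  # default IPMI port
  esac
}
```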

Redfish network boot

To enable Redfish, use redfish:// or redfish+http:// to disable TLS. The installer requires both the hostname or the IP address and the path to the system ID. The following example demonstrates a Redfish configuration within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: redfish://<out-of-band-ip>/redfish/v1/Systems/1
              username: <user>
              password: <password>

While it is recommended to have a certificate of authority for the out-of-band management addresses, you must include disableCertificateVerification: True in the bmc configuration if using self-signed certificates. The following example demonstrates a Redfish configuration using the disableCertificateVerification: True configuration parameter within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: redfish://<out-of-band-ip>/redfish/v1/Systems/1
              username: <user>
              password: <password>
              disableCertificateVerification: True

Redfish APIs

Several Redfish API endpoints are called on your BMC when using the bare-metal installer-provisioned infrastructure.

You need to ensure that your BMC supports all of the Redfish APIs before installation.

- List of redfish APIs

Power on

curl -u $USER:$PASS -X POST -H'Content-Type: application/json' -H'Accept: application/json' -d '{"Action": "Reset", "ResetType": "On"}' https://$SERVER/redfish/v1/Systems/$SystemID/Actions/ComputerSystem.Reset

Power off

curl -u $USER:$PASS -X POST -H'Content-Type: application/json' -H'Accept: application/json' -d '{"Action": "Reset", "ResetType": "ForceOff"}' https://$SERVER/redfish/v1/Systems/$SystemID/Actions/ComputerSystem.Reset

Temporary boot using pxe

curl -u $USER:$PASS -X PATCH -H "Content-Type: application/json" https://$SERVER/redfish/v1/Systems/$SystemID/ -d '{"Boot": {"BootSourceOverrideTarget": "pxe", "BootSourceOverrideEnabled": "Once"}}'

Set BIOS boot mode using Legacy or UEFI

curl -u $USER:$PASS -X PATCH -H "Content-Type: application/json" https://$SERVER/redfish/v1/Systems/$SystemID/ -d '{"Boot": {"BootSourceOverrideMode":"UEFI"}}'

- List of redfish-virtualmedia APIs

Set temporary boot device using cd or dvd

curl -u $USER:$PASS -X PATCH -H "Content-Type: application/json" https://$SERVER/redfish/v1/Systems/$SystemID/ -d '{"Boot": {"BootSourceOverrideTarget": "cd", "BootSourceOverrideEnabled": "Once"}}'

Mount virtual media

curl -u $USER:$PASS -X PATCH -H "Content-Type: application/json" -H "If-Match: *" https://$SERVER/redfish/v1/Managers/$ManagerID/VirtualMedia/$VmediaId -d '{"Image": "https://example.com/test.iso", "TransferProtocolType": "HTTPS", "UserName": "", "Password":""}'

The PowerOn and PowerOff commands for redfish APIs are the same for the redfish-virtualmedia APIs.

HTTPS and HTTP are the only supported parameter types for TransferProtocolTypes.

14.3.9.4. BMC addressing for Dell iDRAC

The address field for each bmc entry is a URL for connecting to the OpenShift Container Platform cluster nodes, including the type of controller in the URL scheme and its location on the network.

    platform:
      baremetal:
        hosts:
          - name: <hostname>
            role: <master | worker>
            bmc:
              address: <address>
              username: <user>
              password: <password>

The address configuration setting specifies the protocol.

For Dell hardware, Red Hat supports integrated Dell Remote Access Controller (iDRAC) virtual media, Redfish network boot, and IPMI.

BMC address formats for Dell iDRAC

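| Protocol | Address Format |
|---|---|
| iDRAC virtual media | idrac-virtualmedia://<out-of-band-ip>/redfish/v1/Systems/System.Embedded.1 |
| Redfish network boot | redfish://<out-of-band-ip>/redfish/v1/Systems/System.Embedded.1 |
| IPMI | ipmi://<out-of-band-ip> |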

Use idrac-virtualmedia as the protocol for Redfish virtual media. redfish-virtualmedia will not work on Dell hardware. Dell’s idrac-virtualmedia uses the Redfish standard with Dell’s OEM extensions.

See the following sections for additional details.

Redfish virtual media for Dell iDRAC

For Redfish virtual media on Dell servers, use idrac-virtualmedia:// in the address setting. Using redfish-virtualmedia:// will not work.

The following example demonstrates using iDRAC virtual media within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: idrac-virtualmedia://<out-of-band-ip>/redfish/v1/Systems/System.Embedded.1
              username: <user>
              password: <password>

While it is recommended to have a certificate of authority for the out-of-band management addresses, you must include disableCertificateVerification: True in the bmc configuration if using self-signed certificates. The following example demonstrates a Redfish configuration using the disableCertificateVerification: True configuration parameter within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: idrac-virtualmedia://<out-of-band-ip>/redfish/v1/Systems/System.Embedded.1
              username: <user>
              password: <password>
              disableCertificateVerification: True

There is a known issue on Dell iDRAC 9 with firmware version 04.40.00.00 or later for installer-provisioned installations on bare metal deployments. The Virtual Console plugin defaults to eHTML5, an enhanced version of HTML5, which causes problems with the InsertVirtualMedia workflow. Set the plugin to use HTML5 to avoid this issue. The menu path is Configuration

Ensure the OpenShift Container Platform cluster nodes have AutoAttach enabled through the iDRAC console. The menu path is: Configuration

Use idrac-virtualmedia:// as the protocol for Redfish virtual media. Using redfish-virtualmedia:// will not work on Dell hardware, because the idrac-virtualmedia:// protocol corresponds to the idrac hardware type and the Redfish protocol in Ironic. Dell’s idrac-virtualmedia:// protocol uses the Redfish standard with Dell’s OEM extensions. Ironic also supports the idrac type with the WSMAN protocol. Therefore, you must specify idrac-virtualmedia:// to avoid unexpected behavior when electing to use Redfish with virtual media on Dell hardware.

Redfish network boot for iDRAC

To enable Redfish, use redfish:// or redfish+http:// to disable transport layer security (TLS). The installer requires both the hostname or the IP address and the path to the system ID. The following example demonstrates a Redfish configuration within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: redfish://<out-of-band-ip>/redfish/v1/Systems/System.Embedded.1
              username: <user>
              password: <password>

While it is recommended to have a certificate of authority for the out-of-band management addresses, you must include disableCertificateVerification: True in the bmc configuration if using self-signed certificates. The following example demonstrates a Redfish configuration using the disableCertificateVerification: True configuration parameter within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: redfish://<out-of-band-ip>/redfish/v1/Systems/System.Embedded.1
              username: <user>
              password: <password>
              disableCertificateVerification: True

There is a known issue on Dell iDRAC 9 with firmware version 04.40.00.00 and all releases up to and including the 5.xx series for installer-provisioned installations on bare metal deployments. The virtual console plugin defaults to eHTML5, an enhanced version of HTML5, which causes problems with the InsertVirtualMedia workflow. Set the plugin to use HTML5 to avoid this issue. The menu path is Configuration

Ensure the OpenShift Container Platform cluster nodes have AutoAttach enabled through the iDRAC console. The menu path is: Configuration

14.3.9.5. BMC addressing for HPE iLO

The address field for each bmc entry is a URL for connecting to the OpenShift Container Platform cluster nodes, including the type of controller in the URL scheme and its location on the network.

    platform:
      baremetal:
        hosts:
          - name: <hostname>
            role: <master | worker>
            bmc:
              address: <address>
              username: <user>
              password: <password>

The address configuration setting specifies the protocol.

For HPE integrated Lights Out (iLO), Red Hat supports Redfish virtual media, Redfish network boot, and IPMI.

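| Protocol | Address Format |
|---|---|
| Redfish virtual media | redfish-virtualmedia://<out-of-band-ip>/redfish/v1/Systems/1 |
| Redfish network boot | redfish://<out-of-band-ip>/redfish/v1/Systems/1 |
| IPMI | ipmi://<out-of-band-ip> |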

See the following sections for additional details.

Redfish virtual media for HPE iLO

To enable Redfish virtual media for HPE servers, use redfish-virtualmedia:// in the address setting. The following example demonstrates using Redfish virtual media within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: redfish-virtualmedia://<out-of-band-ip>/redfish/v1/Systems/1
              username: <user>
              password: <password>

While it is recommended to have a certificate of authority for the out-of-band management addresses, you must include disableCertificateVerification: True in the bmc configuration if using self-signed certificates. The following example demonstrates a Redfish configuration using the disableCertificateVerification: True configuration parameter within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: redfish-virtualmedia://<out-of-band-ip>/redfish/v1/Systems/1
              username: <user>
              password: <password>
              disableCertificateVerification: True

Redfish virtual media is not supported on 9th generation systems running iLO4, because Ironic does not support iLO4 with virtual media.

Redfish network boot for HPE iLO

To enable Redfish, use redfish:// or redfish+http:// to disable TLS. The installer requires both the hostname or the IP address and the path to the system ID. The following example demonstrates a Redfish configuration within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: redfish://<out-of-band-ip>/redfish/v1/Systems/1
              username: <user>
              password: <password>

While it is recommended to have a certificate of authority for the out-of-band management addresses, you must include disableCertificateVerification: True in the bmc configuration if using self-signed certificates. The following example demonstrates a Redfish configuration using the disableCertificateVerification: True configuration parameter within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: redfish://<out-of-band-ip>/redfish/v1/Systems/1
              username: <user>
              password: <password>
              disableCertificateVerification: True

14.3.9.6. BMC addressing for Fujitsu iRMC

The address field for each bmc entry is a URL for connecting to the OpenShift Container Platform cluster nodes, including the type of controller in the URL scheme and its location on the network.

    platform:
      baremetal:
        hosts:
          - name: <hostname>
            role: <master | worker>
            bmc:
              address: <address>
              username: <user>
              password: <password>

The address configuration setting specifies the protocol.

For Fujitsu hardware, Red Hat supports integrated Remote Management Controller (iRMC) and IPMI.

| Protocol | Address Format |
|---|---|
| iRMC | irmc://<out-of-band-ip> |
| IPMI | ipmi://<out-of-band-ip> |

iRMC

Fujitsu nodes can use irmc://<out-of-band-ip>, which defaults to port 443. The following example demonstrates an iRMC configuration within the install-config.yaml file.

    platform:
      baremetal:
        hosts:
          - name: openshift-master-0
            role: master
            bmc:
              address: irmc://<out-of-band-ip>
              username: <user>
              password: <password>

Currently Fujitsu supports iRMC S5 firmware version 3.05P and above for installer-provisioned installation on bare metal.

14.3.9.7. Root device hints

The rootDeviceHints parameter enables the installer to provision the Red Hat Enterprise Linux CoreOS (RHCOS) image to a particular device. The installer examines the devices in the order it discovers them, and compares the discovered values with the hint values. The installer uses the first discovered device that matches the hint value. The configuration can combine multiple hints, but a device must match all hints for the installer to select it.

| Subfield | Description |
|---|---|
| deviceName | A string containing a Linux device name, for example /dev/sda. |
| hctl | A string containing a SCSI bus address. |
| model | A string containing a vendor-specific device identifier. The hint can be a substring of the actual value. |
| vendor | A string containing the name of the vendor or manufacturer of the device. The hint can be a substring of the actual value. |
| serialNumber | A string containing the device serial number. The hint must match the actual value exactly. |
| minSizeGigabytes | An integer representing the minimum size of the device in gigabytes. |
| wwn | A string containing the unique storage identifier. The hint must match the actual value exactly. |
| wwnWithExtension | A string containing the unique storage identifier with the vendor extension appended. The hint must match the actual value exactly. |
| wwnVendorExtension | A string containing the unique vendor storage identifier. The hint must match the actual value exactly. |
| rotational | A boolean indicating whether the device should be a rotating disk (true) or not (false). |

Example usage

    - name: master-0
      role: master
      bmc:
        address: ipmi://10.10.0.3:6203
        username: admin
        password: redhat
      bootMACAddress: de:ad:be:ef:00:40
      rootDeviceHints:
        deviceName: "/dev/sda"

14.3.9.8. Optional: Setting proxy settings

To deploy an OpenShift Container Platform cluster using a proxy, make the following changes to the install-config.yaml file.

    apiVersion: v1
    baseDomain: <domain>
    proxy:
      httpProxy: http://USERNAME:PASSWORD@proxy.example.com:PORT
      httpsProxy: https://USERNAME:PASSWORD@proxy.example.com:PORT
      noProxy: <WILDCARD_OF_DOMAIN>,<PROVISIONING_NETWORK/CIDR>,<BMC_ADDRESS_RANGE/CIDR>

The following is an example of noProxy with values.

    noProxy: .example.com,172.22.0.0/24,10.10.0.0/24

With a proxy enabled, set the appropriate values of the proxy in the corresponding key/value pair.

Key considerations:

- If the proxy does not have an HTTPS proxy, change the value of httpsProxy from https:// to http://.

- If using a provisioning network, include it in the noProxy setting, otherwise the installer will fail.

- Set all of the proxy settings as environment variables within the provisioner node. For example, HTTP_PROXY, HTTPS_PROXY, and NO_PROXY.

When provisioning with IPv6, you cannot define a CIDR address block in the noProxy settings. You must define each address separately.
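
As a rough illustration of the noProxy rules above, the following Python sketch joins domains and networks into a single noProxy value. It keeps IPv4 networks in CIDR form and expands IPv6 networks into individual addresses. The build_no_proxy helper, the max_ipv6_addresses limit, and the IPv6 prefix in the usage example are assumptions made for this example and are not part of the installer.

```python
import ipaddress


def build_no_proxy(domains, networks, max_ipv6_addresses=256):
    """Join domains and networks into a comma-separated noProxy value.

    IPv4 networks stay in CIDR form. IPv6 networks are listed address by
    address, because CIDR blocks cannot be used in noProxy with IPv6.
    """
    entries = list(domains)
    for cidr in networks:
        net = ipaddress.ip_network(cidr)
        if net.version == 4:
            entries.append(str(net))
            continue
        if net.num_addresses > max_ipv6_addresses:
            # Hypothetical safety limit so a large prefix is not expanded.
            raise ValueError(f"{cidr} is too large to list address by address")
        entries.extend(str(addr) for addr in net)
    return ",".join(entries)


ipv4_value = build_no_proxy([".example.com"], ["172.22.0.0/24", "10.10.0.0/24"])
ipv6_value = build_no_proxy([".example.com"], ["fd00:1101::/126"])
print(ipv4_value)
print(ipv6_value)
```

With the IPv4 inputs, the sketch reproduces the example noProxy value shown earlier. With the small IPv6 prefix, each address is listed separately.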

14.3.9.9. Optional: Deploying with no provisioning network

To deploy an OpenShift Container Platform cluster without a provisioning network, make the following changes to the install-config.yaml file.

    platform:
      baremetal:
        apiVIP: <api_VIP>
        ingressVIP: <ingress_VIP>
        provisioningNetwork: "Disabled"

Add the provisioningNetwork configuration setting, if needed, and set it to Disabled.

The provisioning network is required for PXE booting. If you deploy without a provisioning network, you must use a virtual media BMC addressing option such as redfish-virtualmedia or idrac-virtualmedia. See "Redfish virtual media for HPE iLO" in the "BMC addressing for HPE iLO" section or "Redfish virtual media for Dell iDRAC" in the "BMC addressing for Dell iDRAC" section for additional details.
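
The requirement above can be checked before installation with a short script. The following Python sketch assumes the platform.baremetal section has already been loaded into a dictionary. The host names and BMC addresses are hypothetical, and the set of accepted schemes contains only the two options named in the text, so it might need to be extended for other virtual media drivers.

```python
# Only the two schemes named in the text; extend as needed.
VIRTUAL_MEDIA_SCHEMES = {"redfish-virtualmedia", "idrac-virtualmedia"}


def bmc_scheme(address):
    """Return the driver part of a BMC address, ignoring a +http or +https suffix."""
    return address.split("://", 1)[0].split("+", 1)[0]


def hosts_without_virtual_media(baremetal):
    """List hosts that do not use virtual media although provisioning is disabled."""
    if baremetal.get("provisioningNetwork") != "Disabled":
        return []
    return [
        host["name"]
        for host in baremetal.get("hosts", [])
        if bmc_scheme(host["bmc"]["address"]) not in VIRTUAL_MEDIA_SCHEMES
    ]


# Hypothetical configuration fragment.
baremetal = {
    "provisioningNetwork": "Disabled",
    "hosts": [
        {"name": "master-0", "bmc": {"address": "redfish-virtualmedia://192.0.2.10/redfish/v1/Systems/1"}},
        {"name": "worker-0", "bmc": {"address": "ipmi://192.0.2.20"}},
    ],
}
print(hosts_without_virtual_media(baremetal))
```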

14.3.9.10. Optional: Deploying with dual-stack networking

To deploy an OpenShift Container Platform cluster with dual-stack networking, edit the machineNetwork, clusterNetwork, and serviceNetwork configuration settings in the install-config.yaml file. Each setting must have two CIDR entries. Ensure the first CIDR entry is the IPv4 setting and the second CIDR entry is the IPv6 setting.

    machineNetwork:
    - cidr: {{ extcidrnet }}
    - cidr: {{ extcidrnet6 }}
    clusterNetwork:
    - cidr: 10.128.0.0/14
      hostPrefix: 23
    - cidr: fd02::/48
      hostPrefix: 64
    serviceNetwork:
    - 172.30.0.0/16
    - fd03::/112

The API VIP address and the Ingress VIP address must be of the primary IP address family when using dual-stack networking. Currently, Red Hat does not support dual-stack VIPs or dual-stack networking with IPv6 as the primary IP address family. However, Red Hat does support dual-stack networking with IPv4 as the primary IP address family. Therefore, the IPv4 entries must go before the IPv6 entries.
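
The ordering rule for dual-stack settings can be verified with a few lines of Python. This is a minimal sketch that assumes the CIDR values of each setting have already been extracted into a list of strings. The check_dual_stack helper is hypothetical and only checks the number and order of the entries.

```python
import ipaddress


def check_dual_stack(name, cidrs):
    """Return a list of problems for one network setting (empty if valid)."""
    if len(cidrs) != 2:
        return [f"{name}: expected 2 CIDR entries, found {len(cidrs)}"]
    versions = [ipaddress.ip_network(cidr).version for cidr in cidrs]
    if versions != [4, 6]:
        return [f"{name}: the IPv4 entry must come first, then the IPv6 entry"]
    return []


settings = {
    "clusterNetwork": ["10.128.0.0/14", "fd02::/48"],
    "serviceNetwork": ["fd03::/112", "172.30.0.0/16"],  # wrong order on purpose
}
problems = []
for name, cidrs in settings.items():
    problems.extend(check_dual_stack(name, cidrs))
print(problems)
```

In this example only serviceNetwork is reported, because its IPv6 entry precedes the IPv4 entry.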

14.3.9.11. Optional: Configuring host network interfaces

Before installation, you can set the networkConfig configuration setting in the install-config.yaml file to configure host network interfaces using NMState.

The most common use case for this functionality is to specify a static IP address on the bare-metal network, but you can also configure other networks such as a storage network. This functionality supports other NMState features such as VLAN, VXLAN, bridges, bonds, routes, MTU, and DNS resolver settings.

Prerequisites

- Configure a PTR DNS record with a valid hostname for each node with a static IP address.

- Install the NMState CLI (nmstate).

Procedure

Optional: Consider testing the NMState syntax with nmstatectl gc before including it in the install-config.yaml file, because the installer will not check the NMState YAML syntax.

Note: Errors in the YAML syntax might result in a failure to apply the network configuration. Additionally, maintaining the validated YAML syntax is useful when applying changes using Kubernetes NMState after deployment or when expanding the cluster.

Create an NMState YAML file:

    interfaces:
    - name: <nic1_name>
      type: ethernet
      state: up
      ipv4:
        address:
        - ip: <ip_address>
          prefix-length: 24
        enabled: true
    dns-resolver:
      config:
        server:
        - <dns_ip_address>
    routes:
      config:
      - destination: 0.0.0.0/0
        next-hop-address: <next_hop_ip_address>
        next-hop-interface: <next_hop_nic1_name>

Test the configuration file by running the following command:

    $ nmstatectl gc <nmstate_yaml_file>

Replace <nmstate_yaml_file> with the configuration file name.

Use the networkConfig configuration setting by adding the NMState configuration to hosts within the install-config.yaml file:

    hosts:
    - name: openshift-master-0
      role: master
      bmc:
        address: redfish+http://<out_of_band_ip>/redfish/v1/Systems/
        username: <user>
        password: <password>
        disableCertificateVerification: null
      bootMACAddress: <NIC1_mac_address>
      bootMode: UEFI
      rootDeviceHints:
        deviceName: "/dev/sda"
      networkConfig:
        interfaces:
        - name: <nic1_name>
          type: ethernet
          state: up
          ipv4:
            address:
            - ip: <ip_address>
              prefix-length: 24
            enabled: true
        dns-resolver:
          config:
            server:
            - <dns_ip_address>
        routes:
          config:
          - destination: 0.0.0.0/0
            next-hop-address: <next_hop_ip_address>
            next-hop-interface: <next_hop_nic1_name>

Important: After deploying the cluster, you cannot modify the networkConfig configuration setting of the install-config.yaml file to make changes to the host network interface. Use the Kubernetes NMState Operator to make changes to the host network interface after deployment.
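
When many hosts need a static IP address, it can help to generate the per-host NMState structure instead of writing it by hand. The following Python sketch builds the same structure as the example above. The static_ip_state helper and all addresses are hypothetical. The sketch also assumes that the default route uses the same interface and that the next hop is directly reachable in the interface subnet, which is a sanity check added for this example and not something the installer enforces.

```python
import ipaddress


def static_ip_state(nic, ip, prefix_length, dns_server, next_hop):
    """Build an NMState structure with one static IPv4 address and a default route."""
    subnet = ipaddress.ip_interface(f"{ip}/{prefix_length}").network
    if ipaddress.ip_address(next_hop) not in subnet:
        # Assumption: the gateway is directly connected to this interface.
        raise ValueError(f"next hop {next_hop} is outside {subnet}")
    return {
        "interfaces": [{
            "name": nic,
            "type": "ethernet",
            "state": "up",
            "ipv4": {
                "address": [{"ip": ip, "prefix-length": prefix_length}],
                "enabled": True,
            },
        }],
        "dns-resolver": {"config": {"server": [dns_server]}},
        "routes": {"config": [{
            "destination": "0.0.0.0/0",
            "next-hop-address": next_hop,
            "next-hop-interface": nic,
        }]},
    }


# Hypothetical values for one host.
state = static_ip_state("eno1", "192.0.2.10", 24, "192.0.2.53", "192.0.2.1")
print(state["routes"]["config"][0])
```

The generated structure still needs to be validated with nmstatectl gc, as described in the procedure.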

14.3.9.12. Configuring host network interfaces for subnets

For edge computing scenarios, it can be beneficial to locate worker nodes closer to the edge. To locate remote worker nodes in subnets, you might use different network segments or subnets for the remote worker nodes than you used for the control plane subnet and local worker nodes. You can reduce latency for the edge and allow for enhanced scalability by setting up subnets for edge computing scenarios.

If you have established different network segments or subnets for remote worker nodes as described in the section on "Establishing communication between subnets", you must specify the subnets in the machineNetwork configuration setting if the workers are using static IP addresses, bonds or other advanced networking. When setting the node IP address in the networkConfig parameter for each remote worker node, you must also specify the gateway and the DNS server for the subnet containing the control plane nodes when using static IP addresses. This ensures the remote worker nodes can reach the subnet containing the control plane nodes and that they can receive network traffic from the control plane.

All control plane nodes must run in the same subnet. When using more than one subnet, you can also configure the Ingress VIP to run on the control plane nodes by using a manifest. See "Configuring network components to run on the control plane" for details.

Deploying a cluster with multiple subnets requires using virtual media, such as redfish-virtualmedia and idrac-virtualmedia.

Procedure

Add the subnets to the machineNetwork in the install-config.yaml file when using static IP addresses:

    networking:
      machineNetwork:
      - cidr: 10.0.0.0/24
      - cidr: 192.168.0.0/24
      networkType: OVNKubernetes

Add the gateway and DNS configuration to the networkConfig parameter of each edge worker node using NMState syntax when using a static IP address or advanced networking such as bonds:

    networkConfig:
      nmstate:
        interfaces:
        - name: <interface_name>
          type: ethernet
          state: up
          ipv4:
            enabled: true
            dhcp: false
            address:
            - ip: <node_ip>
              prefix-length: 24
            gateway: <gateway_ip>
        dns-resolver:
          config:
            server:
            - <dns_ip>
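
Because every subnet that holds statically addressed nodes must appear in machineNetwork, a quick check of node addresses against the listed CIDRs can catch omissions early. The following Python sketch is an illustration under stated assumptions: the node names and IP addresses are hypothetical, and the ips_outside_machine_networks helper is not part of the installer.

```python
import ipaddress


def ips_outside_machine_networks(machine_cidrs, node_ips):
    """Return the nodes whose static IP is not covered by any machineNetwork CIDR."""
    networks = [ipaddress.ip_network(cidr) for cidr in machine_cidrs]
    return {
        name: ip
        for name, ip in node_ips.items()
        if not any(ipaddress.ip_address(ip) in net for net in networks)
    }


machine_cidrs = ["10.0.0.0/24", "192.168.0.0/24"]
# Hypothetical static addresses of control plane and remote worker nodes.
node_ips = {
    "master-0": "10.0.0.10",
    "worker-edge-0": "192.168.0.20",
    "worker-edge-1": "192.168.1.20",
}
missing = ips_outside_machine_networks(machine_cidrs, node_ips)
print(missing)
```

Here worker-edge-1 is reported, which indicates that either its address is wrong or the 192.168.1.0/24 subnet still needs to be added to machineNetwork.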

14.3.9.13. Optional: Configuring address generation modes for SLAAC in dual-stack networks

For dual-stack clusters that use Stateless Address AutoConfiguration (SLAAC), you must specify a global value for the ipv6.addr-gen-mode network setting. You can set this value using NMState to configure the ramdisk and the cluster configuration files. If you do not configure a consistent ipv6.addr-gen-mode in these locations, IPv6 address mismatches can occur between CSR resources and BareMetalHost resources in the cluster.

Prerequisites

- Install the NMState CLI (nmstate).

Procedure

Optional: Consider testing the NMState YAML syntax with the nmstatectl gc command before including it in the install-config.yaml file because the installation program will not check the NMState YAML syntax.

Create an NMState YAML file:

    interfaces:
    - name: eth0
      ipv6:
        addr-gen-mode: <address_mode>

Replace <address_mode> with the type of address generation mode required for IPv6 addresses in the cluster. Valid values are eui64, stable-privacy, or random.

Test the configuration file by running the following command:

    $ nmstatectl gc <nmstate_yaml_file>

Replace <nmstate_yaml_file> with the name of the test configuration file.

Add the NMState configuration to the hosts.networkConfig section within the install-config.yaml file:

    hosts:
    - name: openshift-master-0
      role: master
      bmc:
        address: redfish+http://<out_of_band_ip>/redfish/v1/Systems/
        username: <user>
        password: <password>
        disableCertificateVerification: null
      bootMACAddress: <NIC1_mac_address>
      bootMode: UEFI
      rootDeviceHints:
        deviceName: "/dev/sda"
      networkConfig:
        interfaces:
        - name: eth0
          ipv6:
            addr-gen-mode: <address_mode>
    ...

Replace <address_mode> with the type of address generation mode required for IPv6 addresses in the cluster. Valid values are eui64, stable-privacy, or random.
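
Since the address generation mode must be a single, consistent value, a small check across all host entries can catch mismatches before deployment. The following Python sketch assumes the hosts list has already been loaded into dictionaries. The host entries and helper names are hypothetical.

```python
VALID_MODES = {"eui64", "stable-privacy", "random"}


def addr_gen_modes(hosts):
    """Collect every ipv6.addr-gen-mode value set in the hosts' networkConfig."""
    modes = set()
    for host in hosts:
        for iface in host.get("networkConfig", {}).get("interfaces", []):
            mode = iface.get("ipv6", {}).get("addr-gen-mode")
            if mode is not None:
                modes.add(mode)
    return modes


def check_addr_gen_mode(hosts):
    """Return 'ok' or a short description of the first problem found."""
    modes = addr_gen_modes(hosts)
    invalid = modes - VALID_MODES
    if invalid:
        return f"invalid addr-gen-mode: {sorted(invalid)}"
    if len(modes) > 1:
        return f"inconsistent addr-gen-mode values: {sorted(modes)}"
    return "ok"


def host(name, mode):
    """Build a hypothetical host entry with one interface."""
    return {
        "name": name,
        "networkConfig": {"interfaces": [{"name": "eth0", "ipv6": {"addr-gen-mode": mode}}]},
    }


hosts = [host("openshift-master-0", "eui64"), host("openshift-master-1", "stable-privacy")]
print(check_addr_gen_mode(hosts))
```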

14.3.9.14. Configuring multiple cluster nodes

You can simultaneously configure OpenShift Container Platform cluster nodes with identical settings. Configuring multiple cluster nodes avoids adding redundant information for each node to the install-config.yaml file. This file contains specific parameters to apply an identical configuration to multiple nodes in the cluster.

Compute nodes are configured separately from the control plane nodes. However, configurations for both node types use the same YAML anchor and alias syntax in the install-config.yaml file to enable multi-node configuration. Define the shared networkConfig settings once with an anchor (&BOND) and reference them from the other hosts with an alias (*BOND), as shown in the following example:

    hosts:
    - name: ostest-master-0
      [...]
      networkConfig: &BOND
        interfaces:
        - name: bond0
          type: bond
          state: up
          ipv4:
            dhcp: true
            enabled: true
          link-aggregation:
            mode: active-backup
            port:
            - enp2s0
            - enp3s0
    - name: ostest-master-1
      [...]
      networkConfig: *BOND
    - name: ostest-master-2
      [...]
      networkConfig: *BOND

Configuration of multiple cluster nodes is only available for initial deployments on installer-provisioned infrastructure.

14.3.9.15. Optional: Configuring managed Secure Boot

You can enable managed Secure Boot when deploying an installer-provisioned cluster using Redfish BMC addressing, such as redfish, redfish-virtualmedia, or idrac-virtualmedia. To enable managed Secure Boot, add the bootMode configuration setting to each node:

Example

    hosts:
    - name: openshift-master-0
      role: master
      bmc:
        address: redfish://<out_of_band_ip>  # 1
        username: <username>
        password: <password>
      bootMACAddress: <NIC1_mac_address>
      rootDeviceHints:
        deviceName: "/dev/sda"
      bootMode: UEFISecureBoot  # 2

1. Ensure the bmc.address setting uses redfish, redfish-virtualmedia, or idrac-virtualmedia as the protocol. See "BMC addressing for HPE iLO" or "BMC addressing for Dell iDRAC" for additional details.

2. The bootMode setting is UEFI by default. Change it to UEFISecureBoot to enable managed Secure Boot.

See "Configuring nodes" in the "Prerequisites" section to ensure the nodes can support managed Secure Boot. If the nodes do not support managed Secure Boot, see "Configuring nodes for Secure Boot manually" in the "Configuring nodes" section. Configuring Secure Boot manually requires Redfish virtual media.

Red Hat does not support Secure Boot with IPMI, because IPMI does not provide Secure Boot management facilities.
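
The pairing of bootMode and BMC protocol described in this section can be checked with a short script. The following Python sketch assumes the hosts list has already been loaded into dictionaries. The host entries, addresses, and the secure_boot_problems helper are hypothetical, and the accepted schemes are only the three named in the text.

```python
# The three protocols named in this section.
SECURE_BOOT_SCHEMES = {"redfish", "redfish-virtualmedia", "idrac-virtualmedia"}


def secure_boot_problems(hosts):
    """Report hosts that request managed Secure Boot over an unsupported BMC protocol."""
    problems = []
    for host in hosts:
        # bootMode is UEFI by default, so a missing value never requests Secure Boot.
        if host.get("bootMode", "UEFI") != "UEFISecureBoot":
            continue
        scheme = host["bmc"]["address"].split("://", 1)[0].split("+", 1)[0]
        if scheme not in SECURE_BOOT_SCHEMES:
            problems.append(f"{host['name']}: {scheme} is not a listed Redfish-based protocol")
    return problems


# Hypothetical host entries.
hosts = [
    {"name": "openshift-master-0", "bootMode": "UEFISecureBoot",
     "bmc": {"address": "redfish://192.0.2.10/redfish/v1/Systems/1"}},
    {"name": "openshift-master-1", "bootMode": "UEFISecureBoot",
     "bmc": {"address": "ipmi://192.0.2.11"}},
    {"name": "openshift-master-2", "bmc": {"address": "ipmi://192.0.2.12"}},
]
print(secure_boot_problems(hosts))
```

Only openshift-master-1 is reported: it requests UEFISecureBoot over IPMI, while openshift-master-2 keeps the default UEFI boot mode and is therefore not affected.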