Chapter 6. Ingress Operator in OpenShift Container Platform

6.1. OpenShift Container Platform Ingress Operator

When you create your OpenShift Container Platform cluster, pods and services running on the cluster are each allocated their own IP addresses. The IP addresses are accessible to other pods and services running nearby but are not accessible to outside clients. The Ingress Operator implements the IngressController API and is the component responsible for enabling external access to OpenShift Container Platform cluster services.

The Ingress Operator makes it possible for external clients to access your service by deploying and managing one or more HAProxy-based Ingress Controllers to handle routing. You can use the Ingress Operator to route traffic by specifying OpenShift Container Platform Route and Kubernetes Ingress resources. Configurations within the Ingress Controller, such as the ability to define endpointPublishingStrategy type and internal load balancing, provide ways to publish Ingress Controller endpoints.

6.2. The Ingress configuration asset

The installation program generates an asset with an Ingress resource in the config.openshift.io API group, cluster-ingress-02-config.yml.

YAML Definition of the Ingress resource

apiVersion: config.openshift.io/v1
kind: Ingress
metadata:
  name: cluster
spec:
  domain: apps.openshiftdemos.com

The installation program stores this asset in the cluster-ingress-02-config.yml file in the manifests/ directory. This Ingress resource defines the cluster-wide configuration for Ingress. This Ingress configuration is used as follows:

- The Ingress Operator uses the domain from the cluster Ingress configuration as the domain for the default Ingress Controller.

- The OpenShift API Server Operator uses the domain from the cluster Ingress configuration. This domain is also used when generating a default host for a Route resource that does not specify an explicit host.
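As a minimal sketch of how the cluster ingress domain feeds into default route hosts, the following snippet composes a host name for a route that does not specify one. The pattern <route-name>-<namespace>.<domain> is an assumption for illustration, as are the route name and namespace; on a live cluster the domain could be read with the oc get command shown in the comment.

```shell
# Domain from the Ingress resource shown above. On a live cluster it could be read with:
#   oc get ingresses.config.openshift.io cluster -o jsonpath='{.spec.domain}'
domain="apps.openshiftdemos.com"

# Route name and namespace chosen only for illustration.
route_name="console"
namespace="openshift-console"

# Assumed default host pattern: <route-name>-<namespace>.<domain>
default_host="${route_name}-${namespace}.${domain}"
echo "$default_host"
```

A route that sets its own host is not affected by this default.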

6.3. Ingress Controller configuration parameters

The ingresscontrollers.operator.openshift.io resource offers the following configuration parameters.

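| Parameter | Description |
|---|---|
| domain | The domain value is a DNS name serviced by the Ingress Controller. If empty, the default value is the domain from the cluster Ingress configuration, ingress.config.openshift.io/cluster .spec.domain. |
| endpointPublishingStrategy | The endpointPublishingStrategy value is used to publish the Ingress Controller endpoints. If not set, the default value is based on infrastructure.config.openshift.io/cluster .status.platform. For most platforms, the endpointPublishingStrategy value can be updated. |
| defaultCertificate | The defaultCertificate value is a reference to a secret that contains the default certificate served by the Ingress Controller. The secret must contain the following keys and data: tls.crt (the certificate file contents) and tls.key (the key file contents). If not set, a wildcard certificate is automatically generated and used. The certificate is valid for the Ingress Controller domain and its subdomains. The in-use certificate, whether generated or user-specified, is automatically integrated with the OpenShift Container Platform built-in OAuth server. |
| nodePlacement | If not set, the default values are used. |
| tlsSecurityProfile | If not set, the default value is based on the apiservers.config.openshift.io/cluster resource. When using the Old, Intermediate, and Modern profile types, the effective profile configuration is subject to change between releases. The minimum TLS version for Ingress Controllers is 1.1. Note: Ciphers and the minimum TLS version of the configured security profile are reflected in the TLSProfile status. Important: The Ingress Operator converts the TLS 1.0 of an Old or Custom profile to 1.1. |
| httpHeaders | The forwardedHeaderPolicy setting specifies when and how the Ingress Controller sets forwarded HTTP headers such as Forwarded and X-Forwarded-For. By default, the policy is set to Append. The headerNameCaseAdjustments setting specifies case adjustments that can be applied to HTTP header names. These adjustments are only applied to cleartext, edge-terminated, and re-encrypt routes, and only when using HTTP/1. For request headers, these adjustments are applied only for routes that have the haproxy.router.openshift.io/h1-adjust-case=true annotation. |
| httpCaptureCookies | For any cookie that you want to capture, the following parameters must be in your IngressController configuration: name, maxLength, and matchType. |
| httpEmptyRequestsPolicy | Describes how HTTP connections are handled when the connection times out before a request is received. These connections come from load balancer health probes or web browser speculative connections (preconnect) and can be safely ignored. However, these requests can be caused by network errors, so setting this field to Ignore can impede detection of problems. |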

All parameters are optional.

6.3.1. Ingress Controller TLS security profiles

TLS security profiles provide a way for servers to regulate which ciphers a connecting client can use when connecting to the server.

6.3.1.1. Understanding TLS security profiles

You can use a TLS (Transport Layer Security) security profile to define which TLS ciphers are required by various OpenShift Container Platform components. The OpenShift Container Platform TLS security profiles are based on Mozilla recommended configurations.

You can specify one of the following TLS security profiles for each component:

| Profile | Description |
|---|---|
| Old | This profile is intended for use with legacy clients or libraries. The profile is based on the Old backward compatibility recommended configuration. The Old profile requires a minimum TLS version of 1.0. Note: For the Ingress Controller, the minimum TLS version is converted from 1.0 to 1.1. |
| Intermediate | This profile is the recommended configuration for the majority of clients. It is the default TLS security profile for the Ingress Controller, kubelet, and control plane. The profile is based on the Intermediate compatibility recommended configuration. The Intermediate profile requires a minimum TLS version of 1.2. |
| Modern | This profile is intended for use with modern clients that have no need for backwards compatibility. This profile is based on the Modern compatibility recommended configuration. The Modern profile requires a minimum TLS version of 1.3. |
| Custom | This profile allows you to define the TLS version and ciphers to use. Warning: Use caution when using a Custom profile, because invalid configurations can cause problems. |

When using one of the predefined profile types, the effective profile configuration is subject to change between releases. For example, given a specification to use the Intermediate profile deployed on release X.Y.Z, an upgrade to release X.Y.Z+1 might cause a new profile configuration to be applied, resulting in a rollout.
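To make the profile descriptions concrete, the following sketch maps each profile type to the minimum TLS version that an Ingress Controller would end up using, including the conversion from 1.0 to 1.1. The function name effective_min_tls is hypothetical, and the minimum versions for Intermediate (1.2) and Modern (1.3) are assumed from the Mozilla recommendations that the profiles are based on; because profile contents can change between releases, treat the values as illustrative.

```shell
# effective_min_tls PROFILE [CUSTOM_MIN]
# Prints the minimum TLS version an Ingress Controller would use for a profile.
# Hypothetical helper for illustration; version values are assumptions.
effective_min_tls() {
  profile=$1
  custom_min=${2:-}
  case "$profile" in
    Old)          min="1.0" ;;
    Intermediate) min="1.2" ;;
    Modern)       min="1.3" ;;
    Custom)       min="$custom_min" ;;
    *) echo "unknown profile: $profile" >&2; return 1 ;;
  esac
  # For the Ingress Controller, a minimum of TLS 1.0 is converted to 1.1.
  if [ "$min" = "1.0" ]; then
    min="1.1"
  fi
  echo "$min"
}

effective_min_tls Old
effective_min_tls Intermediate
effective_min_tls Custom 1.0
```

The lookup only models the minimum version; the cipher list of each profile is not represented.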

6.3.1.2. Configuring the TLS security profile for the Ingress Controller

To configure a TLS security profile for an Ingress Controller, edit the IngressController custom resource (CR) to specify a predefined or custom TLS security profile. If a TLS security profile is not configured, the default value is based on the TLS security profile set for the API server.

Sample IngressController CR that configures the Old TLS security profile

apiVersion: operator.openshift.io/v1
kind: IngressController
 ...
spec:
  tlsSecurityProfile:
    old: {}
    type: Old
 ...

The TLS security profile defines the minimum TLS version and the TLS ciphers for TLS connections for Ingress Controllers.

You can see the ciphers and the minimum TLS version of the configured TLS security profile in the IngressController custom resource (CR) under Status.Tls Profile and the configured TLS security profile under Spec.Tls Security Profile. For the Custom TLS security profile, the specific ciphers and minimum TLS version are listed under both parameters.

The HAProxy Ingress Controller image supports TLS 1.3 and the Modern profile.

The Ingress Operator also converts the TLS 1.0 of an Old or Custom profile to 1.1.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

Procedure

Edit the IngressController CR in the openshift-ingress-operator project to configure the TLS security profile:

$ oc edit IngressController default -n openshift-ingress-operator

Add the spec.tlsSecurityProfile field:

Sample IngressController CR for a Custom profile

apiVersion: operator.openshift.io/v1
kind: IngressController
 ...
spec:
  tlsSecurityProfile:
    type: Custom
    custom:
      ciphers:
      - ECDHE-ECDSA-CHACHA20-POLY1305
      - ECDHE-RSA-CHACHA20-POLY1305
      - ECDHE-RSA-AES128-GCM-SHA256
      - ECDHE-ECDSA-AES128-GCM-SHA256
      minTLSVersion: VersionTLS11
 ...

Save the file to apply the changes.

Verification

Verify that the profile is set in the IngressController CR:

$ oc describe IngressController default -n openshift-ingress-operator

Example output

Name:         default
Namespace:    openshift-ingress-operator
Labels:       <none>
Annotations:  <none>
API Version:  operator.openshift.io/v1
Kind:         IngressController
 ...
Spec:
 ...
  Tls Security Profile:
    Custom:
      Ciphers:
        ECDHE-ECDSA-CHACHA20-POLY1305
        ECDHE-RSA-CHACHA20-POLY1305
        ECDHE-RSA-AES128-GCM-SHA256
        ECDHE-ECDSA-AES128-GCM-SHA256
      Min TLS Version:  VersionTLS11
    Type:               Custom
 ...

6.3.1.3. Configuring mutual TLS authentication

You can configure the Ingress Controller to enable mutual TLS (mTLS) authentication by setting a spec.clientTLS value. The clientTLS value configures the Ingress Controller to verify client certificates. This configuration includes setting a clientCA value, which is a reference to a config map. The config map contains the PEM-encoded CA certificate bundle that is used to verify a client’s certificate. Optionally, you can also configure a list of certificate subject filters.

If the clientCA value specifies an X509v3 certificate revocation list (CRL) distribution point, the Ingress Operator downloads and manages a CRL config map based on the HTTP URI X509v3 CRL Distribution Point specified in each provided certificate. The Ingress Controller uses this config map during mTLS/TLS negotiation. Requests that do not provide valid certificates are rejected.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

- You have a PEM-encoded CA certificate bundle.

- If your CA bundle references a CRL distribution point, you must also have included the end-entity or leaf certificate in the client CA bundle. This certificate must include an HTTP URI under CRL Distribution Points, as described in RFC 5280. For example:

Issuer: C=US, O=Example Inc, CN=Example Global G2 TLS RSA SHA256 2020 CA1
        Subject: SOME SIGNED CERT
        X509v3 CRL Distribution Points:
            Full Name:
              URI:http://crl.example.com/example.crl

Procedure

In the openshift-config namespace, create a config map from your CA bundle:

$ oc create configmap \
   router-ca-certs-default \
   --from-file=ca-bundle.pem=client-ca.crt \
   -n openshift-config

The config map data key must be ca-bundle.pem, and the data value must be a CA certificate in PEM format.

Edit the IngressController resource in the openshift-ingress-operator project:

$ oc edit IngressController default -n openshift-ingress-operator

Add the spec.clientTLS field and subfields to configure mutual TLS:

Sample IngressController CR for a clientTLS profile that specifies filtering patterns

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  clientTLS:
    clientCertificatePolicy: Required
    clientCA:
      name: router-ca-certs-default
    allowedSubjectPatterns:
    - "^/CN=example.com/ST=NC/C=US/O=Security/OU=OpenShift$"
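Each allowedSubjectPatterns entry is a regular expression that is matched against the subject of the client certificate. As a rough local check before you apply the CR, the following sketch tests candidate subject strings against the sample pattern with grep -E. This only approximates the router: the regular expression dialect that the Ingress Controller uses might differ from POSIX extended syntax, and the helper name subject_allowed and the second subject string are hypothetical.

```shell
# Pattern copied from the sample IngressController CR.
pattern='^/CN=example.com/ST=NC/C=US/O=Security/OU=OpenShift$'

# subject_allowed SUBJECT
# Succeeds when the subject string matches the pattern (hypothetical helper).
subject_allowed() {
  printf '%s\n' "$1" | grep -Eq -- "$pattern"
}

if subject_allowed '/CN=example.com/ST=NC/C=US/O=Security/OU=OpenShift'; then
  echo "first subject: allowed"
fi

if ! subject_allowed '/CN=example.com/ST=NC/C=US/O=Security/OU=Other'; then
  echo "second subject: rejected"
fi
```

Because the pattern is anchored with ^ and $, a subject with any additional or different component does not match.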

6.4. View the default Ingress Controller

The Ingress Operator is a core feature of OpenShift Container Platform and is enabled out of the box.

Every new OpenShift Container Platform installation has an ingresscontroller named default. It can be supplemented with additional Ingress Controllers. If the default ingresscontroller is deleted, the Ingress Operator will automatically recreate it within a minute.

Procedure

View the default Ingress Controller:

$ oc describe --namespace=openshift-ingress-operator ingresscontroller/default

6.5. View Ingress Operator status

You can view and inspect the status of your Ingress Operator.

Procedure

View your Ingress Operator status:

$ oc describe clusteroperators/ingress

6.6. View Ingress Controller logs

You can view your Ingress Controller logs.

Procedure

View your Ingress Controller logs:

$ oc logs --namespace=openshift-ingress-operator deployments/ingress-operator -c <container_name>

6.7. View Ingress Controller status

You can view the status of a particular Ingress Controller.

Procedure

View the status of an Ingress Controller:

$ oc describe --namespace=openshift-ingress-operator ingresscontroller/<name>

6.8. Configuring the Ingress Controller

6.8.1. Setting a custom default certificate

As an administrator, you can configure an Ingress Controller to use a custom certificate by creating a Secret resource and editing the IngressController custom resource (CR).

Prerequisites

- You must have a certificate/key pair in PEM-encoded files, where the certificate is signed by a trusted certificate authority or by a private trusted certificate authority that you configured in a custom PKI.

Your certificate meets the following requirements:

- The certificate is valid for the ingress domain.

- The certificate uses the subjectAltName extension to specify a wildcard domain, such as *.apps.ocp4.example.com.

You must have an IngressController CR. You may use the default one:

$ oc --namespace openshift-ingress-operator get ingresscontrollers

Example output

NAME      AGE
default   10m

If you have intermediate certificates, they must be included in the tls.crt file of the secret containing a custom default certificate. Order matters when specifying a certificate; list your intermediate certificate(s) after any server certificate(s).

Procedure

The following assumes that the custom certificate and key pair are in the tls.crt and tls.key files in the current working directory. Substitute the actual path names for tls.crt and tls.key. You also may substitute another name for custom-certs-default when creating the Secret resource and referencing it in the IngressController CR.

This action will cause the Ingress Controller to be redeployed, using a rolling deployment strategy.

Create a Secret resource containing the custom certificate in the openshift-ingress namespace using the tls.crt and tls.key files.

$ oc --namespace openshift-ingress create secret tls custom-certs-default --cert=tls.crt --key=tls.key

Update the IngressController CR to reference the new certificate secret:

$ oc patch --type=merge --namespace openshift-ingress-operator ingresscontrollers/default \
  --patch '{"spec":{"defaultCertificate":{"name":"custom-certs-default"}}}'

Verify the update was effective:

$ echo Q |\
  openssl s_client -connect console-openshift-console.apps.<domain>:443 -showcerts 2>/dev/null |\
  openssl x509 -noout -subject -issuer -enddate

where:

<domain>: Specifies the base domain name for your cluster.

Example output

subject=C = US, ST = NC, L = Raleigh, O = RH, OU = OCP4, CN = *.apps.example.com
issuer=C = US, ST = NC, L = Raleigh, O = RH, OU = OCP4, CN = example.com
notAfter=May 10 08:32:45 2022 GMT

Tip: You can alternatively apply the following YAML to set a custom default certificate:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  defaultCertificate:
    name: custom-certs-default

The certificate secret name should match the value used to update the CR.

Once the IngressController CR has been modified, the Ingress Operator updates the Ingress Controller’s deployment to use the custom certificate.

6.8.2. Removing a custom default certificate

As an administrator, you can remove a custom certificate that you configured an Ingress Controller to use.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

- You have installed the OpenShift CLI (oc).

- You previously configured a custom default certificate for the Ingress Controller.

Procedure

To remove the custom certificate and restore the certificate that ships with OpenShift Container Platform, enter the following command:

$ oc patch -n openshift-ingress-operator ingresscontrollers/default \
  --type json -p $'- op: remove\n  path: /spec/defaultCertificate'

There can be a delay while the cluster reconciles the new certificate configuration.

Verification

To confirm that the original cluster certificate is restored, enter the following command:

$ echo Q | \
  openssl s_client -connect console-openshift-console.apps.<domain>:443 -showcerts 2>/dev/null | \
  openssl x509 -noout -subject -issuer -enddate

where:

<domain>: Specifies the base domain name for your cluster.

Example output

subject=CN = *.apps.<domain>
issuer=CN = ingress-operator@1620633373
notAfter=May 10 10:44:36 2023 GMT

6.8.3. Scaling an Ingress Controller

Manually scale an Ingress Controller to meet routing performance or availability requirements, such as the requirement to increase throughput. You use oc commands to scale the IngressController resource. The following procedure provides an example for scaling up the default IngressController.

Scaling is not an immediate action, as it takes time to create the desired number of replicas.

Procedure

View the current number of available replicas for the default IngressController:

$ oc get -n openshift-ingress-operator ingresscontrollers/default -o jsonpath='{$.status.availableReplicas}'

Example output

2

Scale the default IngressController to the desired number of replicas using the oc patch command. The following example scales the default IngressController to 3 replicas:

$ oc patch -n openshift-ingress-operator ingresscontroller/default --patch '{"spec":{"replicas": 3}}' --type=merge

Example output

ingresscontroller.operator.openshift.io/default patched

Verify that the default IngressController scaled to the number of replicas that you specified:

$ oc get -n openshift-ingress-operator ingresscontrollers/default -o jsonpath='{$.status.availableReplicas}'

Example output

3

Tip: You can alternatively apply the following YAML to scale an Ingress Controller to three replicas:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  replicas: 3

If you need a different number of replicas, change the replicas value.
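When the replica count comes from a variable, for example in a maintenance script, the merge patch can be built and checked before it is passed to oc patch. The following is a minimal sketch, assuming a hypothetical desired_replicas variable. It only prints the resulting command so that you can review it first.

```shell
# Hypothetical input; in a real script this might come from an argument.
desired_replicas=3

# Reject anything that is not a positive integer without leading zeros.
case "$desired_replicas" in
  ''|*[!0-9]*|0*) echo "replicas must be a positive integer" >&2; exit 1 ;;
esac

# Build the same merge patch body that the procedure passes to oc patch.
patch=$(printf '{"spec":{"replicas": %d}}' "$desired_replicas")

# Print the command instead of running it.
echo "oc patch -n openshift-ingress-operator ingresscontroller/default --type=merge --patch '$patch'"
```

Because scaling is not immediate, a script that continues after the patch would still need to poll status.availableReplicas as shown in the verification step.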

6.8.4. Configuring Ingress access logging

You can configure the Ingress Controller to enable access logs. If you have clusters that do not receive much traffic, then you can log to a sidecar. If you have high traffic clusters, to avoid exceeding the capacity of the logging stack or to integrate with a logging infrastructure outside of OpenShift Container Platform, you can forward logs to a custom syslog endpoint. You can also specify the format for access logs.

Container logging is useful to enable access logs on low-traffic clusters when there is no existing Syslog logging infrastructure, or for short-term use while diagnosing problems with the Ingress Controller.

Syslog is needed for high-traffic clusters where access logs could exceed the OpenShift Logging stack’s capacity, or for environments where any logging solution needs to integrate with an existing Syslog logging infrastructure. The Syslog use-cases can overlap.

Prerequisites

- Log in as a user with cluster-admin privileges.

Procedure

Configure Ingress access logging to a sidecar.

To configure Ingress access logging, you must specify a destination using spec.logging.access.destination. To specify logging to a sidecar container, you must specify Container for spec.logging.access.destination.type. The following example is an Ingress Controller definition that logs to a Container destination:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  replicas: 2
  logging:
    access:
      destination:
        type: Container

When you configure the Ingress Controller to log to a sidecar, the operator creates a container named logs inside the Ingress Controller Pod:

$ oc -n openshift-ingress logs deployment.apps/router-default -c logs

Example output

2020-05-11T19:11:50.135710+00:00 router-default-57dfc6cd95-bpmk6 router-default-57dfc6cd95-bpmk6 haproxy[108]: 174.19.21.82:39654 [11/May/2020:19:11:50.133] public be_http:hello-openshift:hello-openshift/pod:hello-openshift:hello-openshift:10.128.2.12:8080 0/0/1/0/1 200 142 - - --NI 1/1/0/0/0 0/0 "GET / HTTP/1.1"

Configure Ingress access logging to a Syslog endpoint.

To configure Ingress access logging, you must specify a destination using spec.logging.access.destination. To specify logging to a Syslog endpoint destination, you must specify Syslog for spec.logging.access.destination.type. If the destination type is Syslog, you must also specify a destination endpoint using spec.logging.access.destination.syslog.address and spec.logging.access.destination.syslog.port, and you can specify a facility using spec.logging.access.destination.syslog.facility. The following example is an Ingress Controller definition that logs to a Syslog destination:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  replicas: 2
  logging:
    access:
      destination:
        type: Syslog
        syslog:
          address: 1.2.3.4
          port: 10514

Note: The syslog destination port must be UDP.

Configure Ingress access logging with a specific log format.

You can specify spec.logging.access.httpLogFormat to customize the log format. The following example is an Ingress Controller definition that logs to a syslog endpoint with IP address 1.2.3.4 and port 10514:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  replicas: 2
  logging:
    access:
      destination:
        type: Syslog
        syslog:
          address: 1.2.3.4
          port: 10514
      httpLogFormat: '%ci:%cp [%t] %ft %b/%s %B %bq %HM %HU %HV'

Disable Ingress access logging.

To disable Ingress access logging, leave spec.logging or spec.logging.access empty:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  replicas: 2
  logging:
    access: null
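Once access logs are flowing, individual fields can be pulled out of each line with standard text tools. The following sketch extracts the client address, the backend name, and the HTTP status code from the sidecar example output shown earlier in this section. The field positions are an assumption based on that one sample line in the default log format; a custom httpLogFormat would need different positions.

```shell
# Sample line copied from the sidecar example output.
line='2020-05-11T19:11:50.135710+00:00 router-default-57dfc6cd95-bpmk6 router-default-57dfc6cd95-bpmk6 haproxy[108]: 174.19.21.82:39654 [11/May/2020:19:11:50.133] public be_http:hello-openshift:hello-openshift/pod:hello-openshift:hello-openshift:10.128.2.12:8080 0/0/1/0/1 200 142 - - --NI 1/1/0/0/0 0/0 "GET / HTTP/1.1"'

# Field 5 is the client address and port.
client=$(printf '%s\n' "$line" | awk '{print $5}')

# Field 8 is backend/server; keep only the part before the slash.
backend=$(printf '%s\n' "$line" | awk '{split($8, parts, "/"); print parts[1]}')

# Field 10 is the HTTP status code.
status=$(printf '%s\n' "$line" | awk '{print $10}')

echo "client=$client backend=$backend status=$status"
```

The same awk expressions could be applied to the stream from oc logs, for example to count responses by status code.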

6.8.5. Setting Ingress Controller thread count

A cluster administrator can set the thread count to increase the number of incoming connections a cluster can handle. You can patch an existing Ingress Controller to increase the number of threads.

Prerequisites

- The following assumes that you already created an Ingress Controller.

Procedure

Update the Ingress Controller to increase the number of threads:

$ oc -n openshift-ingress-operator patch ingresscontroller/default --type=merge -p '{"spec":{"tuningOptions": {"threadCount": 8}}}'

Note: If you have a node that is capable of running large amounts of resources, you can configure spec.nodePlacement.nodeSelector with labels that match the capacity of the intended node, and configure spec.tuningOptions.threadCount to an appropriately high value.

6.8.6. Configuring an Ingress Controller to use an internal load balancer

When creating an Ingress Controller on cloud platforms, the Ingress Controller is published by a public cloud load balancer by default. As an administrator, you can create an Ingress Controller that uses an internal cloud load balancer.

If your cloud provider is Microsoft Azure, you must have at least one public load balancer that points to your nodes. If you do not, all of your nodes will lose egress connectivity to the internet.

If you want to change the scope for an IngressController, you can change the .spec.endpointPublishingStrategy.loadBalancer.scope parameter after the custom resource (CR) is created.

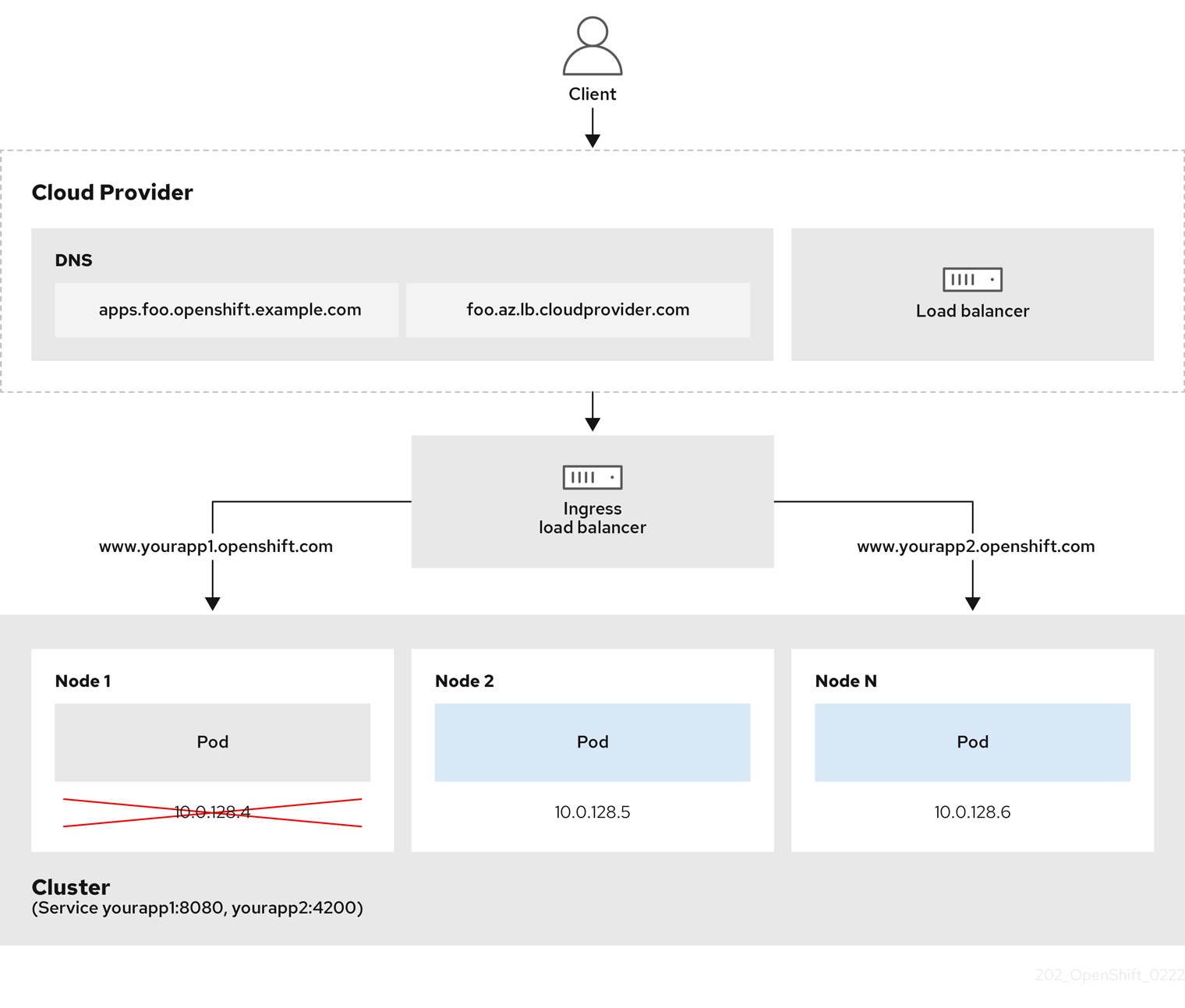

Figure 6.1. Diagram of LoadBalancer

The preceding graphic shows the following concepts pertaining to OpenShift Container Platform Ingress LoadBalancerService endpoint publishing strategy:

- You can load balance externally, using the cloud provider load balancer, or internally, using the OpenShift Ingress Controller Load Balancer.

- You can use the single IP address of the load balancer and more familiar ports, such as 8080 and 4200 as shown on the cluster depicted in the graphic.

- Traffic from the external load balancer is directed at the pods, and managed by the load balancer, as depicted in the instance of a down node. See the Kubernetes Services documentation for implementation details.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create an IngressController custom resource (CR) in a file named <name>-ingress-controller.yaml, such as in the following example:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  namespace: openshift-ingress-operator
  name: <name>
spec:
  domain: <domain>
  endpointPublishingStrategy:
    type: LoadBalancerService
    loadBalancer:
      scope: Internal

Create the Ingress Controller defined in the previous step by running the following command:

$ oc create -f <name>-ingress-controller.yaml

Replace <name> with the name of the IngressController object.

Optional: Confirm that the Ingress Controller was created by running the following command:

$ oc --all-namespaces=true get ingresscontrollers

6.8.7. Configuring global access for an Ingress Controller on GCP

An Ingress Controller created on GCP with an internal load balancer generates an internal IP address for the service. A cluster administrator can specify the global access option, which enables clients in any region within the same VPC network and compute region as the load balancer, to reach the workloads running on your cluster.

For more information, see the GCP documentation for global access.

Prerequisites

- You deployed an OpenShift Container Platform cluster on GCP infrastructure.

- You configured an Ingress Controller to use an internal load balancer.

- You installed the OpenShift CLI (oc).

Procedure

Configure the Ingress Controller resource to allow global access.

Note: You can also create an Ingress Controller and specify the global access option.

Configure the Ingress Controller resource:

$ oc -n openshift-ingress-operator edit ingresscontroller/default

Edit the YAML file:

Sample clientAccess configuration to Global

spec:
  endpointPublishingStrategy:
    loadBalancer:
      providerParameters:
        gcp:
          clientAccess: Global
        type: GCP
      scope: Internal
    type: LoadBalancerService

Set gcp.clientAccess to Global.

Save the file to apply the changes.

Run the following command to verify that the service allows global access:

$ oc -n openshift-ingress edit svc/router-default -o yaml

The output shows that global access is enabled for GCP with the annotation, networking.gke.io/internal-load-balancer-allow-global-access.

6.8.8. Setting the Ingress Controller health check interval

A cluster administrator can set the health check interval to define how long the router waits between two consecutive health checks. This value is applied globally as a default for all routes. The default value is 5 seconds.

Prerequisites

- The following assumes that you already created an Ingress Controller.

Procedure

Update the Ingress Controller to change the interval between back end health checks:

$ oc -n openshift-ingress-operator patch ingresscontroller/default --type=merge -p '{"spec":{"tuningOptions": {"healthCheckInterval": "8s"}}}'

Note: To override the healthCheckInterval for a single route, use the route annotation router.openshift.io/haproxy.health.check.interval.

6.8.9. Configuring the default Ingress Controller for your cluster to be internal

You can configure the default Ingress Controller for your cluster to be internal by deleting and recreating it.

If your cloud provider is Microsoft Azure, you must have at least one public load balancer that points to your nodes. If you do not, all of your nodes will lose egress connectivity to the internet.

If you want to change the scope for an IngressController, you can change the .spec.endpointPublishingStrategy.loadBalancer.scope parameter after the custom resource (CR) is created.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Configure the default Ingress Controller for your cluster to be internal by deleting and recreating it.

$ oc replace --force --wait --filename - <<EOF
apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  namespace: openshift-ingress-operator
  name: default
spec:
  endpointPublishingStrategy:
    type: LoadBalancerService
    loadBalancer:
      scope: Internal
EOF

6.8.10. Configuring the route admission policy

Administrators and application developers can run applications in multiple namespaces with the same domain name. This is for organizations where multiple teams develop microservices that are exposed on the same hostname.

Allowing claims across namespaces should only be enabled for clusters with trust between namespaces, otherwise a malicious user could take over a hostname. For this reason, the default admission policy disallows hostname claims across namespaces.

Prerequisites

- Cluster administrator privileges.

Procedure

Edit the .spec.routeAdmission field of the ingresscontroller resource variable using the following command:

$ oc -n openshift-ingress-operator patch ingresscontroller/default --patch '{"spec":{"routeAdmission":{"namespaceOwnership":"InterNamespaceAllowed"}}}' --type=merge

Sample Ingress Controller configuration

spec:
  routeAdmission:
    namespaceOwnership: InterNamespaceAllowed
...

Tip: You can alternatively apply the following YAML to configure the route admission policy:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  routeAdmission:
    namespaceOwnership: InterNamespaceAllowed

6.8.11. Using wildcard routes

The HAProxy Ingress Controller has support for wildcard routes. The Ingress Operator uses wildcardPolicy to configure the ROUTER_ALLOW_WILDCARD_ROUTES environment variable of the Ingress Controller.

The default behavior of the Ingress Controller is to admit routes with a wildcard policy of None, which is backwards compatible with existing IngressController resources.

Procedure

Configure the wildcard policy.

Use the following command to edit the IngressController resource:

$ oc edit IngressController

Under spec, set the wildcardPolicy field to WildcardsDisallowed or WildcardsAllowed:

spec:
  routeAdmission:
    wildcardPolicy: WildcardsDisallowed # or WildcardsAllowed

6.8.12. Using X-Forwarded headers

You can configure the HAProxy Ingress Controller to specify a policy for how to handle HTTP headers including Forwarded and X-Forwarded-For. The Ingress Operator uses the HTTPHeaders field to configure the ROUTER_SET_FORWARDED_HEADERS environment variable of the Ingress Controller.

Procedure

Configure the HTTPHeaders field for the Ingress Controller.

Use the following command to edit the IngressController resource:

$ oc edit IngressController

Under spec, set the HTTPHeaders policy field to Append, Replace, IfNone, or Never:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  httpHeaders:
    forwardedHeaderPolicy: Append

Example use cases

As a cluster administrator, you can:

- Configure an external proxy that injects the X-Forwarded-For header into each request before forwarding it to an Ingress Controller.

  To configure the Ingress Controller to pass the header through unmodified, you specify the never policy. The Ingress Controller then never sets the headers, and applications receive only the headers that the external proxy provides.

- Configure the Ingress Controller to pass the X-Forwarded-For header that your external proxy sets on external cluster requests through unmodified.

  To configure the Ingress Controller to set the X-Forwarded-For header on internal cluster requests, which do not go through the external proxy, specify the if-none policy. If an HTTP request already has the header set through the external proxy, then the Ingress Controller preserves it. If the header is absent because the request did not come through the proxy, then the Ingress Controller adds the header.

As an application developer, you can:

- Configure an application-specific external proxy that injects the X-Forwarded-For header.

  To configure an Ingress Controller to pass the header through unmodified for an application's Route, without affecting the policy for other Routes, add an annotation haproxy.router.openshift.io/set-forwarded-headers: if-none or haproxy.router.openshift.io/set-forwarded-headers: never on the Route for the application.

  Note: You can set the haproxy.router.openshift.io/set-forwarded-headers annotation on a per route basis, independent from the globally set value for the Ingress Controller.
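The policy values differ only in what happens to a header that is already present on the request. The following sketch models that decision for the X-Forwarded-For header as a small shell function. It is a simplified illustration, not the router's implementation: the function name forwarded_for is hypothetical, the addresses are documentation examples, the Append and Replace behaviour is assumed from the policy names, and Append is modelled as a comma-separated list although the router might instead add a separate header line.

```shell
# forwarded_for POLICY EXISTING_VALUE CLIENT_IP
# Prints the X-Forwarded-For value the application would see (hypothetical model).
forwarded_for() {
  policy=$1
  existing=$2
  client_ip=$3
  case "$policy" in
    Append)
      # Keep any existing value and add the client address after it.
      if [ -n "$existing" ]; then
        printf '%s, %s\n' "$existing" "$client_ip"
      else
        printf '%s\n' "$client_ip"
      fi
      ;;
    Replace)
      # Discard any existing value.
      printf '%s\n' "$client_ip"
      ;;
    IfNone)
      # Set the header only when the request did not already carry it.
      if [ -n "$existing" ]; then
        printf '%s\n' "$existing"
      else
        printf '%s\n' "$client_ip"
      fi
      ;;
    Never)
      # Never set the header; pass through whatever was received.
      printf '%s\n' "$existing"
      ;;
    *)
      echo "unknown policy: $policy" >&2
      return 1
      ;;
  esac
}

# A request that arrived through an external proxy that already set the header.
for policy in Append Replace IfNone Never; do
  printf '%-8s %s\n' "$policy" "$(forwarded_for "$policy" 203.0.113.7 10.0.0.5)"
done
```

In this model, Never and IfNone both preserve the value set by the external proxy, which matches the two administrator use cases described above.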

6.8.13. Enabling HTTP/2 Ingress connectivity

You can enable transparent end-to-end HTTP/2 connectivity in HAProxy. It allows application owners to make use of HTTP/2 protocol capabilities, including single connection, header compression, binary streams, and more.

You can enable HTTP/2 connectivity for an individual Ingress Controller or for the entire cluster.

To enable the use of HTTP/2 for the connection from the client to HAProxy, a route must specify a custom certificate. A route that uses the default certificate cannot use HTTP/2. This restriction is necessary to avoid problems from connection coalescing, where the client re-uses a connection for different routes that use the same certificate.

The connection from HAProxy to the application pod can use HTTP/2 only for re-encrypt routes and not for edge-terminated or insecure routes. This restriction is because HAProxy uses Application-Level Protocol Negotiation (ALPN), which is a TLS extension, to negotiate the use of HTTP/2 with the back-end. The implication is that end-to-end HTTP/2 is possible with passthrough and re-encrypt and not with insecure or edge-terminated routes.

Using WebSockets with a re-encrypt route and with HTTP/2 enabled on an Ingress Controller requires WebSocket support over HTTP/2. WebSockets over HTTP/2 is a feature of HAProxy 2.4, which is unsupported in OpenShift Container Platform at this time.

For non-passthrough routes, the Ingress Controller negotiates its connection to the application independently of the connection from the client. This means a client may connect to the Ingress Controller and negotiate HTTP/1.1, and the Ingress Controller may then connect to the application, negotiate HTTP/2, and forward the request from the client HTTP/1.1 connection using the HTTP/2 connection to the application. This poses a problem if the client subsequently tries to upgrade its connection from HTTP/1.1 to the WebSocket protocol, because the Ingress Controller cannot forward WebSocket to HTTP/2 and cannot upgrade its HTTP/2 connection to WebSocket. Consequently, if you have an application that is intended to accept WebSocket connections, it must not allow negotiating the HTTP/2 protocol or else clients will fail to upgrade to the WebSocket protocol.
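The restrictions above can be condensed into a small decision function. This is a sketch of the rules as stated in this section, not a query against a live cluster. The function name is hypothetical, and treating passthrough routes as independent of the custom certificate requirement is an assumption based on the router not terminating TLS for them.

```python
def http2_end_to_end_possible(termination, has_custom_certificate):
    """Return True if end-to-end HTTP/2 is possible for a route.

    termination: "passthrough", "reencrypt", "edge", or None for an
    insecure route. Hypothetical helper summarizing the rules above.
    """
    if termination == "passthrough":
        # Assumption: the router does not terminate TLS, so the client and
        # the application negotiate the protocol directly.
        return True
    if termination == "reencrypt":
        # Client leg: requires a custom certificate on the route.
        # Back-end leg: TLS is in use, so ALPN can negotiate HTTP/2.
        return has_custom_certificate
    # Edge-terminated and insecure routes: the back-end connection is not
    # TLS, so ALPN is unavailable and HTTP/2 cannot be negotiated.
    return False


for termination in ("passthrough", "reencrypt", "edge", None):
    print(termination, http2_end_to_end_possible(termination, True))
```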

Procedure

Enable HTTP/2 on a single Ingress Controller.

To enable HTTP/2 on an Ingress Controller, enter the oc annotate command:

$ oc -n openshift-ingress-operator annotate ingresscontrollers/<ingresscontroller_name> ingress.operator.openshift.io/default-enable-http2=true

Replace <ingresscontroller_name> with the name of the Ingress Controller to annotate.

Enable HTTP/2 on the entire cluster.

To enable HTTP/2 for the entire cluster, enter the oc annotate command:

$ oc annotate ingresses.config/cluster ingress.operator.openshift.io/default-enable-http2=true

Tip: You can alternatively apply the following YAML to add the annotation:

apiVersion: config.openshift.io/v1
kind: Ingress
metadata:
  name: cluster
  annotations:
    ingress.operator.openshift.io/default-enable-http2: "true"

6.8.14. Configuring the PROXY protocol for an Ingress Controller

A cluster administrator can configure the PROXY protocol when an Ingress Controller uses either the HostNetwork or NodePortService endpoint publishing strategy types. The PROXY protocol enables the load balancer to preserve the original client addresses for connections that the Ingress Controller receives. The original client addresses are useful for logging, filtering, and injecting HTTP headers. In the default configuration, the connections that the Ingress Controller receives only contain the source address that is associated with the load balancer.

This feature is not supported in cloud deployments. This restriction is because when OpenShift Container Platform runs in a cloud platform, and an IngressController specifies that a service load balancer should be used, the Ingress Operator configures the load balancer service and enables the PROXY protocol based on the platform requirement for preserving source addresses.

You must configure both OpenShift Container Platform and the external load balancer to either use the PROXY protocol or to use TCP.

The PROXY protocol is unsupported for the default Ingress Controller with installer-provisioned clusters on non-cloud platforms that use a Keepalived Ingress VIP.

Prerequisites

- You created an Ingress Controller.

Procedure

Edit the Ingress Controller resource:

$ oc -n openshift-ingress-operator edit ingresscontroller/default

Set the PROXY configuration:

If your Ingress Controller uses the hostNetwork endpoint publishing strategy type, set the spec.endpointPublishingStrategy.hostNetwork.protocol subfield to PROXY:

Sample hostNetwork configuration to PROXY

spec:
  endpointPublishingStrategy:
    hostNetwork:
      protocol: PROXY
    type: HostNetwork

If your Ingress Controller uses the NodePortService endpoint publishing strategy type, set the spec.endpointPublishingStrategy.nodePort.protocol subfield to PROXY:

Sample nodePort configuration to PROXY

spec:
  endpointPublishingStrategy:
    nodePort:
      protocol: PROXY
    type: NodePortService
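The two sample configurations differ only in which sub-object carries the protocol field. As a minimal sketch, the following builds the equivalent patch body for either strategy type. The function and constant names are hypothetical, and the mapping covers only the two strategy types named in this section.

```python
import json

# Maps each strategy type to the sub-object that holds the protocol field,
# following the two sample configurations above.
SUBFIELD = {"HostNetwork": "hostNetwork", "NodePortService": "nodePort"}


def proxy_protocol_patch(strategy_type):
    """Build a patch body that sets the protocol subfield to PROXY."""
    if strategy_type not in SUBFIELD:
        raise ValueError(f"PROXY protocol is not described for {strategy_type!r}")
    return {
        "spec": {
            "endpointPublishingStrategy": {
                "type": strategy_type,
                SUBFIELD[strategy_type]: {"protocol": "PROXY"},
            }
        }
    }


print(json.dumps(proxy_protocol_patch("HostNetwork")))
print(json.dumps(proxy_protocol_patch("NodePortService")))
```

The printed JSON has the same shape as the YAML samples. Whether to apply it with an interactive edit, as in the procedure, or with a merge patch is a choice this sketch leaves open.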

6.8.15. Specifying an alternative cluster domain using the appsDomain option

As a cluster administrator, you can specify an alternative to the default cluster domain for user-created routes by configuring the appsDomain field. The appsDomain field is an optional domain for OpenShift Container Platform to use instead of the default, which is specified in the domain field. If you specify an alternative domain, it overrides the default cluster domain for the purpose of determining the default host for a new route.

For example, you can use the DNS domain for your company as the default domain for routes and ingresses for applications running on your cluster.

Prerequisites

- You deployed an OpenShift Container Platform cluster.

- You installed the oc command line interface.

Procedure

Configure the appsDomain field by specifying an alternative default domain for user-created routes.

Edit the ingress cluster resource:

$ oc edit ingresses.config/cluster -o yaml

Edit the YAML file:

Sample appsDomain configuration to test.example.com

apiVersion: config.openshift.io/v1
kind: Ingress
metadata:
  name: cluster
spec:
  domain: apps.example.com          # The default cluster domain.
  appsDomain: <test.example.com>    # Optional: the alternative domain used as the default for user-created routes.

Verify that an existing route contains the domain name specified in the appsDomain field by exposing the route and verifying the route domain change:

Note: Wait for the openshift-apiserver to finish rolling updates before exposing the route.

Expose the route:

$ oc expose service hello-openshift

Example output:

route.route.openshift.io/hello-openshift exposed

List the routes to check the host:

$ oc get routes

Example output:

NAME              HOST/PORT                                       PATH   SERVICES          PORT       TERMINATION   WILDCARD
hello-openshift   hello-openshift-<my_project>.test.example.com          hello-openshift   8080-tcp                 None
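The effect of appsDomain on a route's default host can be sketched as follows. The function name is hypothetical, and the <route-name>-<namespace>.<domain> pattern is an assumption inferred from the example output above rather than a statement of how the API server builds the host.

```python
def default_route_host(route_name, namespace, domain, apps_domain=None):
    """Return the default host for a route that sets no explicit host.

    Assumption: the host follows <route-name>-<namespace>.<domain>, and
    appsDomain, when set, replaces the default cluster domain.
    """
    base = apps_domain if apps_domain else domain
    return f"{route_name}-{namespace}.{base}"


# Without appsDomain the default cluster domain is used.
print(default_route_host("hello-openshift", "my-project", "apps.example.com"))
# With appsDomain set, new routes use the alternative domain instead.
print(default_route_host("hello-openshift", "my-project",
                         "apps.example.com", "test.example.com"))
```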

6.8.16. Converting HTTP header case

HAProxy 2.2 lowercases HTTP header names by default, for example, changing Host: xyz.com to host: xyz.com. If legacy applications are sensitive to the capitalization of HTTP header names, use the Ingress Controller spec.httpHeaders.headerNameCaseAdjustments API field for a solution to accommodate legacy applications until they can be fixed.

Because OpenShift Container Platform includes HAProxy 2.2, make sure to add the necessary configuration by using spec.httpHeaders.headerNameCaseAdjustments before upgrading.

Prerequisites

- You have installed the OpenShift CLI (oc).

- You have access to the cluster as a user with the cluster-admin role.

Procedure

As a cluster administrator, you can convert the HTTP header case by entering the oc patch command or by setting the spec.httpHeaders.headerNameCaseAdjustments field in the Ingress Controller YAML file.

Specify an HTTP header to be capitalized by entering the oc patch command.

Enter the oc patch command to change the HTTP host header to Host:

$ oc -n openshift-ingress-operator patch ingresscontrollers/default --type=merge --patch='{"spec":{"httpHeaders":{"headerNameCaseAdjustments":["Host"]}}}'

Annotate the route of the application:

$ oc annotate routes/my-application haproxy.router.openshift.io/h1-adjust-case=true

The Ingress Controller then adjusts the host request header as specified.

Specify adjustments using the headerNameCaseAdjustments field by configuring the Ingress Controller YAML file.

The following example Ingress Controller YAML adjusts the host header to Host for HTTP/1 requests to appropriately annotated routes:

Example Ingress Controller YAML

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  httpHeaders:
    headerNameCaseAdjustments:
    - Host

The following example route enables HTTP response header name case adjustments using the haproxy.router.openshift.io/h1-adjust-case annotation:

Example route YAML

apiVersion: route.openshift.io/v1
kind: Route
metadata:
  annotations:
    haproxy.router.openshift.io/h1-adjust-case: "true"
  name: my-application
  namespace: my-application
spec:
  to:
    kind: Service
    name: my-application

Set haproxy.router.openshift.io/h1-adjust-case to true. The value is quoted because annotation values must be strings.
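The combined effect of the headerNameCaseAdjustments list and the route annotation can be illustrated with a small model. This is a sketch of the behavior described above, not HAProxy's implementation, and the function name is hypothetical.

```python
def adjust_header_names(headers, adjustments, route_annotated):
    """Return headers with names re-cased according to the adjustments.

    headers: mapping of (lowercased) header names to values.
    adjustments: names in the desired case, for example ["Host"].
    route_annotated: True if the route carries the h1-adjust-case annotation.
    """
    if not route_annotated:
        # Routes without the annotation keep the lowercased names.
        return dict(headers)
    wanted = {name.lower(): name for name in adjustments}
    return {wanted.get(name.lower(), name): value
            for name, value in headers.items()}


lowercased = {"host": "xyz.com", "accept": "*/*"}
print(adjust_header_names(lowercased, ["Host"], route_annotated=True))
print(adjust_header_names(lowercased, ["Host"], route_annotated=False))
```

Only names listed in the adjustments change case. Headers that are not listed, such as accept here, stay lowercase.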

6.8.17. Using router compression

You configure the HAProxy Ingress Controller to specify router compression globally for specific MIME types. You can use the mimeTypes variable to define the formats of MIME types to which compression is applied. The types are: application, image, message, multipart, text, video, or a custom type prefaced by "X-". To see the full notation for MIME types and subtypes, see RFC1341.

Memory allocated for compression can affect the max connections. Additionally, compression of large buffers can cause latency, like heavy regex or long lists of regex.

Not all MIME types benefit from compression, but HAProxy still uses resources to try to compress if instructed to. Generally, text formats, such as html, css, and js, benefit from compression, but formats that are already compressed, such as image, audio, and video, benefit little in exchange for the time and resources spent on compression.

Procedure

Configure the httpCompression field for the Ingress Controller.

Use the following command to edit the IngressController resource:

$ oc edit -n openshift-ingress-operator ingresscontrollers/default

Under spec, set the httpCompression policy field to mimeTypes and specify a list of MIME types that should have compression applied:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  httpCompression:
    mimeTypes:
    - "text/html"
    - "text/css; charset=utf-8"
    - "application/json"
    ...
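When assembling the mimeTypes list, the guidance above about already-compressed formats can be applied as a simple filter. The following is a rough heuristic sketch with hypothetical helper names. It looks only at the top-level type, so it would also exclude text-based formats that happen to sit under image, such as image/svg+xml.

```python
# Top-level types that the text above describes as already compressed.
ALREADY_COMPRESSED = {"image", "audio", "video"}


def top_level_type(mime_type):
    """Return the part before the slash, for example "text" for "text/html"."""
    return mime_type.split("/", 1)[0].strip().lower()


def compression_candidates(mime_types):
    """Keep only the entries whose top-level type is likely to benefit."""
    return [m for m in mime_types
            if top_level_type(m) not in ALREADY_COMPRESSED]


def http_compression_spec(mime_types):
    """Build the spec fragment shown in the YAML example above."""
    return {"spec": {"httpCompression":
                     {"mimeTypes": compression_candidates(mime_types)}}}


requested = ["text/html", "image/png", "text/css; charset=utf-8",
             "video/mp4", "application/json"]
print(http_compression_spec(requested))
```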

6.8.18. Exposing router metrics

You can expose the HAProxy router metrics by default in Prometheus format on the default stats port, 1936. External metrics collection and aggregation systems, such as Prometheus, can access the HAProxy router metrics. You can also view the HAProxy router metrics in a browser in HTML or comma-separated values (CSV) format.

Prerequisites

- You configured your firewall to access the default stats port, 1936.

Procedure

Get the router pod name by running the following command:

$ oc get pods -n openshift-ingress

Example output

NAME                              READY   STATUS    RESTARTS   AGE
router-default-76bfffb66c-46qwp   1/1     Running   0          11h

Get the router's username and password, which the router pod stores in the /var/lib/haproxy/conf/metrics-auth/statsUsername and /var/lib/haproxy/conf/metrics-auth/statsPassword files:

Get the username by running the following command:

$ oc rsh <router_pod_name> cat metrics-auth/statsUsername

Get the password by running the following command:

$ oc rsh <router_pod_name> cat metrics-auth/statsPassword

Get the router IP and metrics certificates by running the following command:

$ oc describe pod <router_pod>

Get the raw statistics in Prometheus format by running the following command:

$ curl -u <user>:<password> http://<router_IP>:<stats_port>/metrics

Access the metrics securely by running the following command:

$ curl -u <user>:<password> https://<router_IP>:<stats_port>/metrics -k

Access the default stats port, 1936, by running the following command:

$ curl -u <user>:<password> http://<router_IP>:<stats_port>/metrics

Example 6.1. Example output

…
# HELP haproxy_backend_connections_total Total number of connections.
# TYPE haproxy_backend_connections_total gauge
haproxy_backend_connections_total{backend="http",namespace="default",route="hello-route"} 0
haproxy_backend_connections_total{backend="http",namespace="default",route="hello-route-alt"} 0
haproxy_backend_connections_total{backend="http",namespace="default",route="hello-route01"} 0
…
# HELP haproxy_exporter_server_threshold Number of servers tracked and the current threshold value.
# TYPE haproxy_exporter_server_threshold gauge
haproxy_exporter_server_threshold{type="current"} 11
haproxy_exporter_server_threshold{type="limit"} 500
…
# HELP haproxy_frontend_bytes_in_total Current total of incoming bytes.
# TYPE haproxy_frontend_bytes_in_total gauge
haproxy_frontend_bytes_in_total{frontend="fe_no_sni"} 0
haproxy_frontend_bytes_in_total{frontend="fe_sni"} 0
haproxy_frontend_bytes_in_total{frontend="public"} 119070
…
# HELP haproxy_server_bytes_in_total Current total of incoming bytes.
# TYPE haproxy_server_bytes_in_total gauge
haproxy_server_bytes_in_total{namespace="",pod="",route="",server="fe_no_sni",service=""} 0
haproxy_server_bytes_in_total{namespace="",pod="",route="",server="fe_sni",service=""} 0
haproxy_server_bytes_in_total{namespace="default",pod="docker-registry-5-nk5fz",route="docker-registry",server="10.130.0.89:5000",service="docker-registry"} 0
haproxy_server_bytes_in_total{namespace="default",pod="hello-rc-vkjqx",route="hello-route",server="10.130.0.90:8080",service="hello-svc-1"} 0
…

Launch the stats window by entering the following URL in a browser:

http://<user>:<password>@<router_IP>:<stats_port>

Optional: Get the stats in CSV format by entering the following URL in a browser:

http://<user>:<password>@<router_ip>:1936/metrics;csv
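The Prometheus-format text returned by the /metrics endpoint is line oriented, so it can be inspected with a few lines of code. The following is a minimal sketch of a parser for sample lines like those in the example output. The function name is hypothetical, and the parser is deliberately simplified: it skips comment lines and does not handle escaped quotes inside label values.

```python
import re

# metric_name{label="value",...} number
SAMPLE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)'
    r'(?:\{(?P<labels>.*)\})?\s+(?P<value>\S+)$'
)
LABEL_RE = re.compile(r'(\w+)="([^"]*)"')


def parse_metrics(text):
    """Return a list of (name, labels, value) tuples from exposition text."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # Skip blank lines and # HELP / # TYPE comments.
        match = SAMPLE_RE.match(line)
        if match is None:
            continue  # Skip anything that is not a sample line.
        labels = dict(LABEL_RE.findall(match.group("labels") or ""))
        samples.append((match.group("name"), labels,
                        float(match.group("value"))))
    return samples


SAMPLE = '''# TYPE haproxy_frontend_bytes_in_total gauge
haproxy_frontend_bytes_in_total{frontend="fe_sni"} 0
haproxy_frontend_bytes_in_total{frontend="public"} 119070
'''

for name, labels, value in parse_metrics(SAMPLE):
    print(name, labels, value)
```

For production use, an existing Prometheus client library is likely a better choice than a hand-written parser.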

6.8.19. Customizing HAProxy error code response pages

As a cluster administrator, you can specify a custom error code response page for either 503, 404, or both error pages. The HAProxy router serves a 503 error page when the application pod is not running or a 404 error page when the requested URL does not exist. For example, if you customize the 503 error code response page, then the page is served when the application pod is not running, and the default 404 error code HTTP response page is served by the HAProxy router for an incorrect route or a non-existing route.

Custom error code response pages are specified in a config map then patched to the Ingress Controller. The config map keys have two available file names as follows: error-page-503.http and error-page-404.http.

Custom HTTP error code response pages must follow the HAProxy HTTP error page configuration guidelines. You can use the default OpenShift Container Platform HAProxy router HTTP 503 error code response page as a template for creating your own custom page.

By default, the HAProxy router serves only a 503 error page when the application is not running or when the route is incorrect or non-existent. This default behavior is the same as the behavior on OpenShift Container Platform 4.8 and earlier. If a config map for the customization of an HTTP error code response is not provided, and you are using a custom HTTP error code response page, the router serves a default 404 or 503 error code response page.

If you use the OpenShift Container Platform default 503 error code page as a template for your customizations, the headers in the file require an editor that can use CRLF line endings.
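Because an HAProxy error file is a raw HTTP response, one way to avoid editor problems with line endings is to generate the file programmatically. The following is a minimal sketch: the function names are hypothetical, and the header set is an assumption modeled on typical HAProxy error files, not a copy of the OpenShift Container Platform default page.

```python
def build_error_page(status_line, body_html):
    """Return the bytes of a raw HTTP response with CRLF header endings."""
    headers = [
        status_line,
        # Assumed header set; adjust to match the default page you start from.
        "Pragma: no-cache",
        "Cache-Control: private, max-age=0, no-cache, no-store",
        "Connection: close",
        "Content-Type: text/html",
    ]
    # Each header line ends with CRLF; a blank CRLF line ends the headers.
    head = "\r\n".join(headers) + "\r\n\r\n"
    return head.encode("ascii") + body_html.encode("utf-8")


def write_error_page(path, page):
    """Write in binary mode so that line endings are not translated."""
    with open(path, "wb") as handle:
        handle.write(page)


page = build_error_page(
    "HTTP/1.0 503 Service Unavailable",
    "<html><body><h1>Application is not available</h1></body></html>\n",
)
print(page.decode("utf-8"))
```

Calling write_error_page("error-page-503.http", page) would produce a file whose name matches one of the two config map keys described above.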

Procedure

Create a config map named my-custom-error-code-pages in the openshift-config namespace:

$ oc -n openshift-config create configmap my-custom-error-code-pages \
  --from-file=error-page-503.http \
  --from-file=error-page-404.http

Important: If you do not specify the correct format for the custom error code response page, a router pod outage occurs. To resolve this outage, you must delete or correct the config map and delete the affected router pods so they can be recreated with the correct information.

Patch the Ingress Controller to reference the my-custom-error-code-pages config map by name:

$ oc patch -n openshift-ingress-operator ingresscontroller/default --patch '{"spec":{"httpErrorCodePages":{"name":"my-custom-error-code-pages"}}}' --type=merge

The Ingress Operator copies the my-custom-error-code-pages config map from the openshift-config namespace to the openshift-ingress namespace. The Operator names the config map according to the pattern, <your_ingresscontroller_name>-errorpages, in the openshift-ingress namespace.

Display the copy:

$ oc get cm default-errorpages -n openshift-ingress

Example output

NAME                 DATA   AGE
default-errorpages   2      25s

The example config map name is default-errorpages because the default Ingress Controller custom resource (CR) was patched.

Confirm that the config map containing the custom error response page mounts on the router volume where the config map key is the filename that has the custom HTTP error code response:

For a custom HTTP 503 error code response:

$ oc -n openshift-ingress rsh <router_pod> cat /var/lib/haproxy/conf/error_code_pages/error-page-503.http

For a custom HTTP 404 error code response:

$ oc -n openshift-ingress rsh <router_pod> cat /var/lib/haproxy/conf/error_code_pages/error-page-404.http

Verification

Verify your custom error code HTTP response:

Create a test project and application:

$ oc new-project test-ingress

$ oc new-app django-psql-example

For a custom HTTP 503 error code response:

- Stop all the pods for the application.

Run the following curl command or visit the route hostname in the browser:

$ curl -vk <route_hostname>

For a custom HTTP 404 error code response:

- Visit a non-existent route or an incorrect route.

Run the following curl command or visit the route hostname in the browser:

$ curl -vk <route_hostname>

Check that the errorfile attribute is properly set in the haproxy.config file:

$ oc -n openshift-ingress rsh <router> cat /var/lib/haproxy/conf/haproxy.config | grep errorfile

6.8.20. Setting the Ingress Controller maximum connections

A cluster administrator can set the maximum number of simultaneous connections for OpenShift router deployments. You can patch an existing Ingress Controller to increase the maximum number of connections.

Prerequisites

- You created an Ingress Controller.

Procedure

Update the Ingress Controller to change the maximum number of connections for HAProxy:

$ oc -n openshift-ingress-operator patch ingresscontroller/default --type=merge -p '{"spec":{"tuningOptions": {"maxConnections": 7500}}}'

Warning: If you set the spec.tuningOptions.maxConnections value greater than the current operating system limit, the HAProxy process will not start. See the table in the "Ingress Controller configuration parameters" section for more information about this parameter.
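The --type=merge option in the command above selects JSON merge patch semantics, in which the patch changes only the fields it names and leaves sibling fields untouched. The following sketch implements those semantics locally to show the effect on a resource. The current dictionary is a hypothetical, heavily trimmed stand-in for an Ingress Controller spec, not output from a cluster.

```python
import json


def merge_patch(target, patch):
    """Apply a JSON merge patch (RFC 7396 semantics) and return the result."""
    if not isinstance(patch, dict):
        # Scalars and lists replace the target value wholesale.
        return patch
    result = dict(target) if isinstance(target, dict) else {}
    for key, value in patch.items():
        if value is None:
            # A null in the patch removes the key from the target.
            result.pop(key, None)
        else:
            result[key] = merge_patch(result.get(key), value)
    return result


# Hypothetical existing settings, for illustration only.
current = {"spec": {"replicas": 2, "tuningOptions": {"threadCount": 4}}}
patch = json.loads('{"spec":{"tuningOptions": {"maxConnections": 7500}}}')

updated = merge_patch(current, patch)
print(json.dumps(updated, indent=2))
```

In this model the existing threadCount and replicas values survive the patch, and only maxConnections is added.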