Questo contenuto non è disponibile nella lingua selezionata.

Chapter 3. Reference design specifications

3.1. Telco core and RAN DU reference design specifications

The telco core reference design specification (RDS) describes OpenShift Container Platform 4.14 clusters running on commodity hardware that can support large scale telco applications including control plane and some centralized data plane functions.

The telco RAN RDS describes the configuration for clusters running on commodity hardware to host 5G workloads in the Radio Access Network (RAN).

3.1.1. Reference design specifications for telco 5G deployments

Red Hat and certified partners offer deep technical expertise and support for networking and operational capabilities required to run telco applications on OpenShift Container Platform 4.14 clusters.

Red Hat’s telco partners require a well-integrated, well-tested, and stable environment that can be replicated at scale for enterprise 5G solutions. The telco core and RAN DU reference design specifications (RDS) outline the recommended solution architecture based on a specific version of OpenShift Container Platform. Each RDS describes a tested and validated platform configuration for telco core and RAN DU use models. The RDS ensures an optimal experience when running your applications by defining the set of critical KPIs for telco 5G core and RAN DU. Following the RDS minimizes high severity escalations and improves application stability.

5G use cases are evolving and your workloads are continually changing. Red Hat is committed to iterating over the telco core and RAN DU RDS to support evolving requirements based on customer and partner feedback.

3.1.2. Reference design scope

The telco core and telco RAN reference design specifications (RDS) capture the recommended, tested, and supported configurations to get reliable and repeatable performance for clusters running the telco core and telco RAN profiles.

Each RDS includes the released features and supported configurations that are engineered and validated for clusters to run the individual profiles. The configurations provide a baseline OpenShift Container Platform installation that meets feature and KPI targets. Each RDS also describes expected variations for each individual configuration. Validation of each RDS includes many long duration and at-scale tests.

The validated reference configurations are updated for each major Y-stream release of OpenShift Container Platform. Z-stream patch releases are periodically re-tested against the reference configurations.

3.1.3. Deviations from the reference design

Deviating from the validated telco core and telco RAN DU reference design specifications (RDS) can have significant impact beyond the specific component or feature that you change. Deviations require analysis and engineering in the context of the complete solution.

All deviations from the RDS should be analyzed and documented with clear action tracking information. Due diligence is expected from partners to understand how to bring deviations into line with the reference design. This might require partners to provide additional resources to engage with Red Hat to work towards enabling their use case to achieve a best in class outcome with the platform. This is critical for the supportability of the solution and ensuring alignment across Red Hat and with partners.

Deviation from the RDS can have some or all of the following consequences:

- It can take longer to resolve issues.

- There is a risk of missing project service-level agreements (SLAs), project deadlines, end provider performance requirements, and so on.

Unapproved deviations may require escalation at executive levels.

NoteRed Hat prioritizes the servicing of requests for deviations based on partner engagement priorities.

3.2. Telco RAN DU reference design specification

3.2.1. Telco RAN DU 4.14 reference design overview

The Telco RAN distributed unit (DU) 4.14 reference design configures an OpenShift Container Platform 4.14 cluster running on commodity hardware to host telco RAN DU workloads. It captures the recommended, tested, and supported configurations to get reliable and repeatable performance for a cluster running the telco RAN DU profile.

3.2.1.1. OpenShift Container Platform 4.14 features for telco RAN DU

The following features that are included in OpenShift Container Platform 4.14 and are leveraged by the telco RAN DU reference design specification (RDS) have been added or updated.

| Feature | Description |

|---|---|

| GitOps ZTP independence from managed cluster version | You can now use GitOps ZTP to manage clusters that are running different versions of OpenShift Container Platform compared to the version that is running on the hub cluster. You can also have a mix of OpenShift Container Platform versions in the deployed fleet of clusters. |

| Using custom CRs alongside the reference CRs in GitOps ZTP |

You can now use custom CRs alongside the reference configuration CRs provided in the |

|

Using custom node labels in the |

You can now use the |

| Intel Westport Channel e810 NIC as PTP Grandmaster clock (Technology Preview) | You can use the Intel Westport Channel E810-XXVDA4T as a GNSS-sourced grandmaster clock. The NIC is automatically configured by the PTP Operator with the E810 hardware plugin. |

| PTP Operator hardware specific functionality plugin (Technology Preview) | A new E810 NIC hardware plugin is now available in the PTP Operator. You can use the E810 plugin to configure the NIC directly. |

| PTP events and metrics |

The |

| Precaching user-specified images | You can now precache application workload images before upgrading your applications on single-node OpenShift clusters with Topology Aware Lifecycle Manager. |

| Using OpenShift capabilities to further reduce the single-node OpenShift DU footprint |

Use cluster capabilities to enable or disable optional components before you install the cluster. In OpenShift Container Platform 4.14, the following optional capabilities are available: |

|

Set | single-node OpenShift clusters that run DU workloads require logging and log forwarding. |

3.2.1.2. Deployment architecture overview

You deploy the telco RAN DU 4.14 reference configuration to managed clusters from a centrally managed RHACM hub cluster. The reference design specification (RDS) includes configuration of the managed clusters and the hub cluster components.

Figure 3.1. Telco RAN DU deployment architecture overview

3.2.2. Telco RAN DU use model overview

Use the following information to plan telco RAN DU workloads, cluster resources, and hardware specifications for the hub cluster and managed single-node OpenShift clusters.

3.2.2.1. Telco RAN DU application workloads

DU worker nodes must have 3rd Generation Xeon (Ice Lake) 2.20 GHz or better CPUs with firmware tuned for maximum performance.

5G RAN DU user applications and workloads should conform to the following best practices and application limits:

- Develop cloud-native network functions (CNFs) that conform to the latest version of the CNF best practices guide.

- Use SR-IOV for high performance networking.

Use exec probes sparingly and only when no other suitable options are available

-

Do not use exec probes if a CNF uses CPU pinning. Use other probe implementations, for example,

httpGetortcpSocket. - When you need to use exec probes, limit the exec probe frequency and quantity. The maximum number of exec probes must be kept below 10, and frequency must not be set to less than 10 seconds.

-

Do not use exec probes if a CNF uses CPU pinning. Use other probe implementations, for example,

Startup probes require minimal resources during steady-state operation. The limitation on exec probes applies primarily to liveness and readiness probes.

3.2.2.2. Telco RAN DU representative reference application workload characteristics

The representative reference application workload has the following characteristics:

- Has a maximum of 15 pods and 30 containers for the vRAN application including its management and control functions

-

Uses a maximum of 2

ConfigMapand 4SecretCRs per pod - Uses a maximum of 10 exec probes with a frequency of not less than 10 seconds

Incremental application load on the

kube-apiserveris less than 10% of the cluster platform usageNoteYou can extract CPU load can from the platform metrics. For example:

query=avg_over_time(pod:container_cpu_usage:sum{namespace="openshift-kube-apiserver"}[30m])- Application logs are not collected by the platform log collector

- Aggregate traffic on the primary CNI is less than 1 MBps

3.2.2.3. Telco RAN DU worker node cluster resource utilization

The maximum number of running pods in the system, inclusive of application workloads and OpenShift Container Platform pods, is 120.

- Resource utilization

OpenShift Container Platform resource utilization varies depending on many factors including application workload characteristics such as:

- Pod count

- Type and frequency of probes

- Messaging rates on primary CNI or secondary CNI with kernel networking

- API access rate

- Logging rates

- Storage IOPS

Cluster resource requirements are applicable under the following conditions:

- The cluster is running the described representative application workload.

- The cluster is managed with the constraints described in "Telco RAN DU worker node cluster resource utilization".

- Components noted as optional in the RAN DU use model configuration are not applied.

You will need to do additional analysis to determine the impact on resource utilization and ability to meet KPI targets for configurations outside the scope of the Telco RAN DU reference design. You might have to allocate additional resources in the cluster depending on your requirements.

3.2.2.4. Hub cluster management characteristics

Red Hat Advanced Cluster Management (RHACM) is the recommended cluster management solution. Configure it to the following limits on the hub cluster:

- Configure a maximum of 5 RHACM policies with a compliant evaluation interval of at least 10 minutes.

- Use a maximum of 10 managed cluster templates in policies. Where possible, use hub-side templating.

Disable all RHACM add-ons except for the

policy-controllerandobservability-controlleradd-ons. SetObservabilityto the default configuration.ImportantConfiguring optional components or enabling additional features will result in additional resource usage and can reduce overall system performance.

For more information, see Reference design deployment components.

| Metric | Limit | Notes |

|---|---|---|

| CPU usage | Less than 4000 mc – 2 cores (4 hyperthreads) | Platform CPU is pinned to reserved cores, including both hyperthreads in each reserved core. The system is engineered to use 3 CPUs (3000mc) at steady-state to allow for periodic system tasks and spikes. |

| Memory used | Less than 16G |

3.2.2.5. Telco RAN DU RDS components

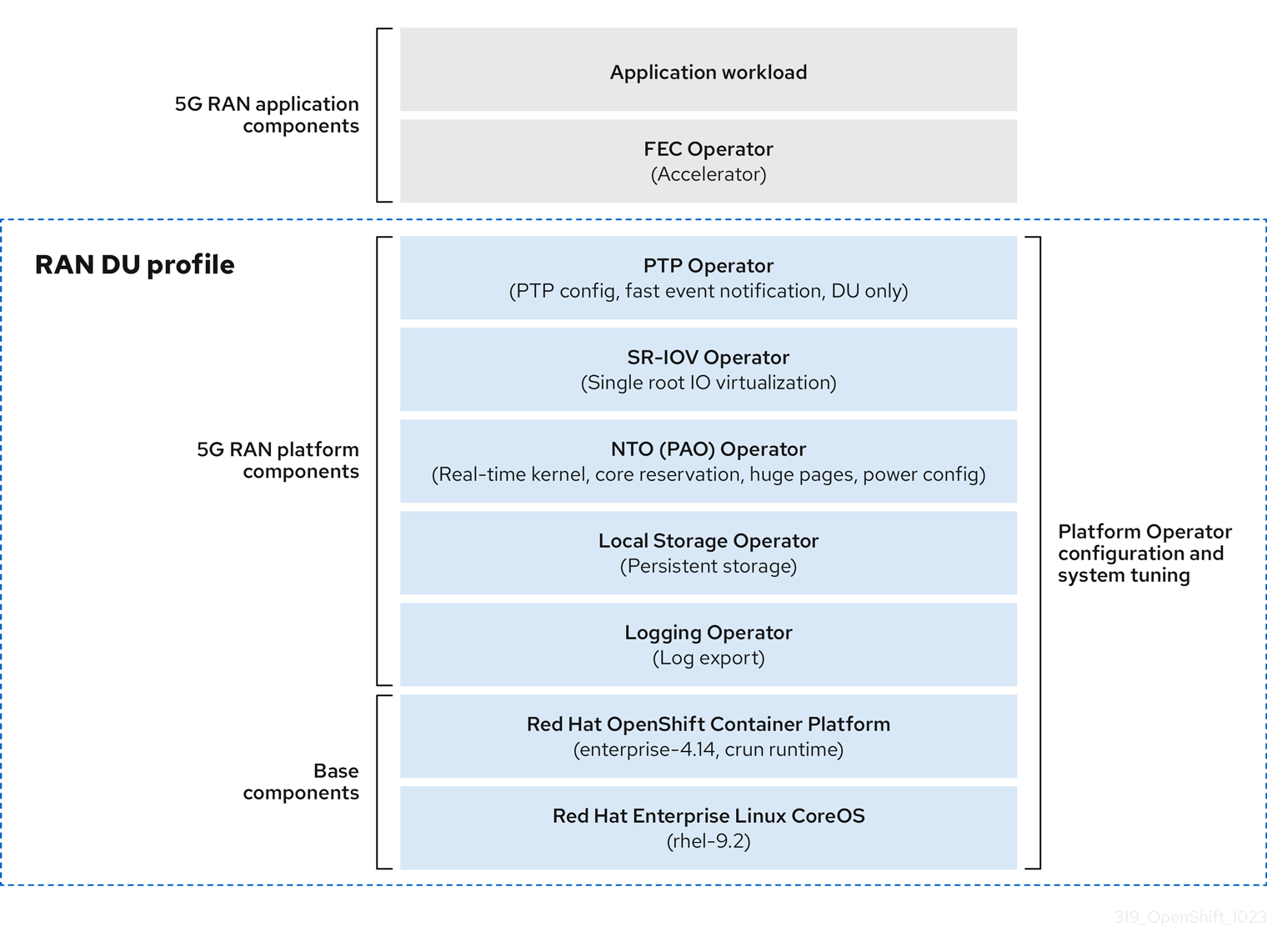

The following sections describe the various OpenShift Container Platform components and configurations that you use to configure and deploy clusters to run telco RAN DU workloads.

Figure 3.2. Telco RAN DU reference design components

Ensure that components that are not included in the telco RAN DU profile do not affect the CPU resources allocated to workload applications.

Out of tree drivers are not supported.

3.2.3. Telco RAN DU 4.14 reference design components

The following sections describe the various OpenShift Container Platform components and configurations that you use to configure and deploy clusters to run RAN DU workloads.

3.2.3.1. Host firmware tuning

- New in this release

- No reference design updates in this release

- Description

Configure system level performance. See Configuring host firmware for low latency and high performance for recommended settings.

If Ironic inspection is enabled, the firmware setting values are available from the per-cluster

BareMetalHostCR on the hub cluster. You enable Ironic inspection with a label in thespec.clusters.nodesfield in theSiteConfigCR that you use to install the cluster. For example:nodes: - hostName: "example-node1.example.com" ironicInspect: "enabled"NoteThe telco RAN DU reference

SiteConfigdoes not enable theironicInspectfield by default.- Limits and requirements

- Hyperthreading must be enabled

- Engineering considerations

Tune all settings for maximum performance

NoteYou can tune firmware selections for power savings at the expense of performance as required.

3.2.3.2. Node Tuning Operator

- New in this release

- No reference design updates in this release

- Description

You tune the cluster performance by creating a performance profile. Settings that you configure with a performance profile include:

- Selecting the realtime or non-realtime kernel.

-

Allocating cores to a reserved or isolated

cpuset. OpenShift Container Platform processes allocated to the management workload partition are pinned to reserved set. - Enabling kubelet features (CPU manager, topology manager, and memory manager).

- Configuring huge pages.

- Setting additional kernel arguments.

- Setting per-core power tuning and max CPU frequency.

- Limits and requirements

The Node Tuning Operator uses the

PerformanceProfileCR to configure the cluster. You need to configure the following settings in the RAN DU profilePerformanceProfileCR:- Select reserved and isolated cores and ensure that you allocate at least 4 hyperthreads (equivalent to 2 cores) on Intel 3rd Generation Xeon (Ice Lake) 2.20 GHz CPUs or better with firmware tuned for maximum performance.

-

Set the reserved

cpusetto include both hyperthread siblings for each included core. Unreserved cores are available as allocatable CPU for scheduling workloads. Ensure that hyperthread siblings are not split across reserved and isolated cores. - Configure reserved and isolated CPUs to include all threads in all cores based on what you have set as reserved and isolated CPUs.

- Set core 0 of each NUMA node to be included in the reserved CPU set.

- Set the huge page size to 1G.

You should not add additional workloads to the management partition. Only those pods which are part of the OpenShift management platform should be annotated into the management partition.

- Engineering considerations

You should use the RT kernel to meet performance requirements.

NoteYou can use the non-RT kernel if required.

- The number of huge pages that you configure depends on the application workload requirements. Variation in this parameter is expected and allowed.

- Variation is expected in the configuration of reserved and isolated CPU sets based on selected hardware and additional components in use on the system. Variation must still meet the specified limits.

- Hardware without IRQ affinity support impacts isolated CPUs. To ensure that pods with guaranteed whole CPU QoS have full use of the allocated CPU, all hardware in the server must support IRQ affinity. For more information, see About support of IRQ affinity setting.

In OpenShift Container Platform 4.14, any PerformanceProfile CR configured on the cluster causes the Node Tuning Operator to automatically set all cluster nodes to use cgroup v1.

For more information about cgroups, see Configuring Linux cgroup.

3.2.3.3. PTP Operator

- New in this release

- PTP grandmaster clock (T-GM) GPS timing with Intel E810-XXV-4T Westport Channel NIC – minimum firmware version 4.30 (Technology Preview)

- PTP events and metrics for grandmaster (T-GM) are new in OpenShift Container Platform 4.14 (Technology Preview)

- Description

Configure of PTP timing support for cluster nodes. The DU node can run in the following modes:

- As an ordinary clock synced to a T-GM or boundary clock (T-BC)

- As dual boundary clocks, one per NIC (high availability is not supported)

- As grandmaster clock with support for E810 Westport Channel NICs (Technology Preview)

- Optionally as a boundary clock for radio units (RUs)

Optional: subscribe applications to PTP events that happen on the node that the application is running. You subscribe the application to events via HTTP.

- Limits and requirements

- High availability is not supported with dual NIC configurations.

- Westport Channel NICs configured as T-GM do not support DPLL with the current ice driver version.

- GPS offsets are not reported. Use a default offset of less than or equal to 5.

- DPLL offsets are not reported. Use a default offset of less than or equal to 5.

- Engineering considerations

- Configurations are provided for ordinary clock, boundary clock, or grandmaster clock

-

PTP fast event notifications uses

ConfigMapCRs to store PTP event subscriptions - Use Intel E810-XXV-4T Westport Channel NICs for PTP grandmaster clocks with GPS timing, minimum firmware version 4.40

3.2.3.4. SR-IOV Operator

- New in this release

- No reference design updates in this release

- Description

-

The SR-IOV Operator provisions and configures the SR-IOV CNI and device plugins. Both

netdevice(kernel VFs) andvfio(DPDK) devices are supported. - Engineering considerations

-

Customer variation on the configuration and number of

SriovNetworkandSriovNetworkNodePolicycustom resources (CRs) is expected. -

IOMMU kernel command-line settings are applied with a

MachineConfigCR at install time. This ensures that theSriovOperatorCR does not cause a reboot of the node when adding them.

-

Customer variation on the configuration and number of

3.2.3.5. Logging

- New in this release

- Vector is now the recommended log collector.

- Description

- Use logging to collect logs from the far edge node for remote analysis.

- Engineering considerations

- Handling logs beyond the infrastructure and audit logs, for example, from the application workload requires additional CPU and network bandwidth based on additional logging rate.

As of OpenShift Container Platform 4.14, vector is the reference log collector.

NoteUse of fluentd in the RAN use model is deprecated.

3.2.3.6. SRIOV-FEC Operator

- New in this release

- No reference design updates in this release

- Description

- SRIOV-FEC Operator is an optional 3rd party Certified Operator supporting FEC accelerator hardware.

- Limits and requirements

Starting with FEC Operator v2.7.0:

-

SecureBootis supported -

The

vfiodriver for thePFrequires the usage ofvfio-tokenthat is injected into Pods. TheVFtoken can be passed to DPDK by using the EAL parameter--vfio-vf-token.

-

- Engineering considerations

-

The SRIOV-FEC Operator uses CPU cores from the

isolatedCPU set. - You can validate FEC readiness as part of the pre-checks for application deployment, for example, by extending the validation policy.

-

The SRIOV-FEC Operator uses CPU cores from the

3.2.3.7. Local Storage Operator

- New in this release

- No reference design updates in this release

- Description

-

You can create persistent volumes that can be used as

PVCresources by applications with the Local Storage Operator. The number and type ofPVresources that you create depends on your requirements. - Engineering considerations

-

Create backing storage for

PVCRs before creating thePV. This can be a partition, a local volume, LVM volume, or full disk. Refer to the device listing in

LocalVolumeCRs by the hardware path used to access each device to ensure correct allocation of disks and partitions. Logical names (for example,/dev/sda) are not guaranteed to be consistent across node reboots.For more information, see the RHEL 9 documentation on device identifiers.

-

Create backing storage for

3.2.3.8. LVMS Operator

- New in this release

- No reference design updates in this release

- New in this release

-

Simplified LVMS

deviceSelectorlogic -

LVM Storage with

ext4andPVresources

-

Simplified LVMS

LVMS Operator is an optional component.

- Description

The LVMS Operator provides dynamic provisioning of block and file storage. The LVMS Operator creates logical volumes from local devices that can be used as

PVCresources by applications. Volume expansion and snapshots are also possible.The following example configuration creates a

vg1volume group that leverages all available disks on the node except the installation disk:StorageLVMCluster.yaml

apiVersion: lvm.topolvm.io/v1alpha1 kind: LVMCluster metadata: name: storage-lvmcluster namespace: openshift-storage annotations: ran.openshift.io/ztp-deploy-wave: "10" spec: {} storage: deviceClasses: - name: vg1 thinPoolConfig: name: thin-pool-1 sizePercent: 90 overprovisionRatio: 10- Limits and requirements

- In single-node OpenShift clusters, persistent storage must be provided by either LVMS or Local Storage, not both.

- Engineering considerations

- The LVMS Operator is not the reference storage solution for the DU use case. If you require LVMS Operator for application workloads, the resource use is accounted for against the application cores.

- Ensure that sufficient disks or partitions are available for storage requirements.

3.2.3.9. Workload partitioning

- New in this release

- No reference design updates in this release

- Description

Workload partitioning pins OpenShift platform and Day 2 Operator pods that are part of the DU profile to the reserved

cpusetand removes the reserved CPU from node accounting. This leaves all unreserved CPU cores available for user workloads.The method of enabling and configuring workload partitioning changed in OpenShift Container Platform 4.14.

- 4.14 and later

Configure partitions by setting installation parameters:

cpuPartitioningMode: AllNodes-

Configure management partition cores with the reserved CPU set in the

PerformanceProfileCR

- 4.13 and earlier

-

Configure partitions with extra

MachineConfigurationCRs applied at install-time

-

Configure partitions with extra

- Limits and requirements

-

NamespaceandPodCRs must be annotated to allow the pod to be applied to the management partition - Pods with CPU limits cannot be allocated to the partition. This is because mutation can change the pod QoS.

- For more information about the minimum number of CPUs that can be allocated to the management partition, see Node Tuning Operator.

-

- Engineering considerations

- Workload Partitioning pins all management pods to reserved cores. A sufficient number of cores must be allocated to the reserved set to account for operating system, management pods, and expected spikes in CPU use that occur when the workload starts, the node reboots, or other system events happen.

3.2.3.10. Cluster tuning

- New in this release

You can remove the Image Registry Operator by using the cluster capabilities feature.

NoteYou configure cluster capabilities by using the

spec.clusters.installConfigOverridesfield in theSiteConfigCR that you use to install the cluster.

- Description

The cluster capabilities feature now includes a

MachineAPIcomponent which, when excluded, disables the following Operators and their resources in the cluster:-

openshift/cluster-autoscaler-operator -

openshift/cluster-control-plane-machine-set-operator -

openshift/machine-api-operator

-

- Limits and requirements

- Cluster capabilities are not available for installer-provisioned installation methods.

You must apply all platform tuning configurations. The following table lists the required platform tuning configurations:

Expand Table 3.3. Cluster capabilities configurations Feature Description Remove optional cluster capabilities

Reduce the OpenShift Container Platform footprint by disabling optional cluster Operators on single-node OpenShift clusters only.

- Remove all optional Operators except the Marketplace and Node Tuning Operators.

Configure cluster monitoring

Configure the monitoring stack for reduced footprint by doing the following:

-

Disable the local

alertmanagerandtelemetercomponents. -

If you use RHACM observability, the CR must be augmented with appropriate

additionalAlertManagerConfigsCRs to forward alerts to the hub cluster. Reduce the

Prometheusretention period to 24h.NoteThe RHACM hub cluster aggregates managed cluster metrics.

Disable networking diagnostics

Disable networking diagnostics for single-node OpenShift because they are not required.

Configure a single Operator Hub catalog source

Configure the cluster to use a single catalog source that contains only the Operators required for a RAN DU deployment. Each catalog source increases the CPU use on the cluster. Using a single

CatalogSourcefits within the platform CPU budget.

3.2.3.11. Machine configuration

- New in this release

-

Set

rcu_normalafter node recovery

-

Set

- Limits and requirements

The CRI-O wipe disable

MachineConfigassumes that images on disk are static other than during scheduled maintenance in defined maintenance windows. To ensure the images are static, do not set the podimagePullPolicyfield toAlways.Expand Table 3.4. Machine configuration options Feature Description Container runtime

Sets the container runtime to

crunfor all node roles.kubelet config and container mount hiding

Reduces the frequency of kubelet housekeeping and eviction monitoring to reduce CPU usage. Create a container mount namespace, visible to kubelet and CRI-O, to reduce system mount scanning resource usage.

SCTP

Optional configuration (enabled by default) Enables SCTP. SCTP is required by RAN applications but disabled by default in RHCOS.

kdump

Optional configuration (enabled by default) Enables kdump to capture debug information when a kernel panic occurs.

CRI-O wipe disable

Disables automatic wiping of the CRI-O image cache after unclean shutdown.

SR-IOV-related kernel arguments

Includes additional SR-IOV related arguments in the kernel command line.

RCU Normal systemd service

Sets

rcu_normalafter the system is fully started.One-shot time sync

Runs a one-time system time synchronization job for control plane or worker nodes.

3.2.3.12. Reference design deployment components

The following sections describe the various OpenShift Container Platform components and configurations that you use to configure the hub cluster with Red Hat Advanced Cluster Management (RHACM).

3.2.3.12.1. Red Hat Advanced Cluster Management (RHACM)

- New in this release

- Additional node labels can be configured during installation.

- Description

RHACM provides Multi Cluster Engine (MCE) installation and ongoing lifecycle management functionality for deployed clusters. You declaratively specify configurations and upgrades with

PolicyCRs and apply the policies to clusters with the RHACM policy controller as managed by Topology Aware Lifecycle Manager.- GitOps Zero Touch Provisioning (ZTP) uses the MCE feature of RHACM

- Configuration, upgrades, and cluster status are managed with the RHACM policy controller

- Limits and requirements

-

A single hub cluster supports up to 3500 deployed single-node OpenShift clusters with 5

PolicyCRs bound to each cluster.

-

A single hub cluster supports up to 3500 deployed single-node OpenShift clusters with 5

- Engineering considerations

-

Cluster specific configuration: managed clusters typically have some number of configuration values that are specific to the individual cluster. These configurations should be managed using RHACM policy hub-side templating with values pulled from

ConfigMapCRs based on the cluster name. - To save CPU resources on managed clusters, policies that apply static configurations should be unbound from managed clusters after GitOps ZTP installation of the cluster. For more information, see Release a persistent volume.

-

Cluster specific configuration: managed clusters typically have some number of configuration values that are specific to the individual cluster. These configurations should be managed using RHACM policy hub-side templating with values pulled from

3.2.3.12.2. Topology Aware Lifecycle Manager (TALM)

- New in this release

- Added support for pre-caching additional user-specified images

- Description

- Managed updates

TALM is an Operator that runs only on the hub cluster for managing how changes (including cluster and Operator upgrades, configuration, and so on) are rolled out to the network. TALM does the following:

-

Progressively applies updates to fleets of clusters in user-configurable batches by using

PolicyCRs. -

Adds

ztp-donelabels or other user configurable labels on a per-cluster basis

-

Progressively applies updates to fleets of clusters in user-configurable batches by using

- Precaching for single-node OpenShift clusters

TALM supports optional precaching of OpenShift Container Platform, OLM Operator, and additional user images to single-node OpenShift clusters before initiating an upgrade.

A new

PreCachingConfigcustom resource is available for specifying optional pre-caching configurations. For example:apiVersion: ran.openshift.io/v1alpha1 kind: PreCachingConfig metadata: name: example-config namespace: example-ns spec: additionalImages: - quay.io/foobar/application1@sha256:3d5800990dee7cd4727d3fe238a97e2d2976d3808fc925ada29c559a47e2e - quay.io/foobar/application2@sha256:3d5800123dee7cd4727d3fe238a97e2d2976d3808fc925ada29c559a47adf - quay.io/foobar/applicationN@sha256:4fe1334adfafadsf987123adfffdaf1243340adfafdedga0991234afdadfs spaceRequired: 45 GiB1 overrides: preCacheImage: quay.io/test_images/pre-cache:latest platformImage: quay.io/openshift-release-dev/ocp-release@sha256:3d5800990dee7cd4727d3fe238a97e2d2976d3808fc925ada29c559a47e2e operatorsIndexes: - registry.example.com:5000/custom-redhat-operators:1.0.0 operatorsPackagesAndChannels: - local-storage-operator: stable - ptp-operator: stable - sriov-network-operator: stable excludePrecachePatterns:2 - aws - vsphere

- Backup and restore for single-node OpenShift

- TALM supports taking a snapshot of the cluster operating system and configuration to a dedicated partition on a local disk. A restore script is provided that returns the cluster to the backed up state.

- Limits and requirements

- TALM supports concurrent cluster deployment in batches of 400

- Precaching and backup features are for single-node OpenShift clusters only.

- Engineering considerations

-

The

PreCachingConfigCR is optional and does not need to be created if you just wants to precache platform related (OpenShift and OLM Operator) images. ThePreCachingConfigCR must be applied before referencing it in theClusterGroupUpgradeCR. - Create a recovery partition during installation if you opt to use the TALM backup and restore feature.

-

The

3.2.3.12.3. GitOps and GitOps ZTP plugins

- New in this release

- GA support for inclusion of user-provided CRs in Git for GitOps ZTP deployments

- GitOps ZTP independence from the deployed cluster version

- Description

GitOps and GitOps ZTP plugins provide a GitOps-based infrastructure for managing cluster deployment and configuration. Cluster definitions and configurations are maintained as a declarative state in Git. ZTP plugins provide support for generating installation CRs from the

SiteConfigCR and automatic wrapping of configuration CRs in policies based onPolicyGenTemplateCRs.You can deploy and manage multiple versions of OpenShift Container Platform on managed clusters with the baseline reference configuration CRs in a

/source-crssubdirectory provided that subdirectory also contains thekustomization.yamlfile. You add user-provided CRs to this subdirectory that you use with the predefined CRs that are specified in thePolicyGenTemplateCRs. This allows you to tailor your configurations to suit your specific requirements and provides GitOps ZTP version independence between managed clusters and the hub cluster.For more information, see the following:

- Limits

-

300

SiteConfigCRs per ArgoCD application. You can use multiple applications to achieve the maximum number of clusters supported by a single hub cluster. -

Content in the

/source-crsfolder in Git overrides content provided in the GitOps ZTP plugin container. Git takes precedence in the search path. Add the

/source-crsfolder in the same directory as thekustomization.yamlfile, which includes thePolicyGenTemplateas a generator.NoteAlternative locations for the

/source-crsdirectory are not supported in this context.

-

300

- Engineering considerations

-

To avoid confusion or unintentional overwriting of files when updating content, use unique and distinguishable names for user-provided CRs in the

/source-crsfolder and extra manifests in Git. -

The

SiteConfigCR allows multiple extra-manifest paths. When files with the same name are found in multiple directory paths, the last file found takes precedence. This allows the full set of version specific Day 0 manifests (extra-manifests) to be placed in Git and referenced from theSiteConfig. With this feature, you can deploy multiple OpenShift Container Platform versions to managed clusters simultaneously. -

The

extraManifestPathfield of theSiteConfigCR is deprecated from OpenShift Container Platform 4.15 and later. Use the newextraManifests.searchPathsfield instead.

-

To avoid confusion or unintentional overwriting of files when updating content, use unique and distinguishable names for user-provided CRs in the

3.2.3.12.4. Agent-based installer

- New in this release

- No reference design updates in this release

- Description

Agent-based installer (ABI) provides installation capabilities without centralized infrastructure. The installation program creates an ISO image that you mount to the server. When the server boots it installs OpenShift Container Platform and supplied extra manifests.

NoteYou can also use ABI to install OpenShift Container Platform clusters without a hub cluster. An image registry is still required when you use ABI in this manner.

Agent-based installer (ABI) is an optional component.

- Limits and requirements

- You can supply a limited set of additional manifests at installation time.

-

You must include

MachineConfigurationCRs that are required by the RAN DU use case.

- Engineering considerations

- ABI provides a baseline OpenShift Container Platform installation.

- You install Day 2 Operators and the remainder of the RAN DU use case configurations after installation.

3.2.3.13. Additional components

3.2.3.13.1. Bare Metal Event Relay

The Bare Metal Event Relay is an optional Operator that runs exclusively on the managed spoke cluster. It relays Redfish hardware events to cluster applications.

The Bare Metal Event Relay is not included in the RAN DU use model reference configuration and is an optional feature. If you want to use the Bare Metal Event Relay, assign additional CPU resources from the application CPU budget.

3.2.4. Telco RAN distributed unit (DU) reference configuration CRs

Use the following custom resources (CRs) to configure and deploy OpenShift Container Platform clusters with the telco RAN DU profile. Some of the CRs are optional depending on your requirements. CR fields you can change are annotated in the CR with YAML comments.

You can extract the complete set of RAN DU CRs from the ztp-site-generate container image. See Preparing the GitOps ZTP site configuration repository for more information.

3.2.4.1. Day 2 Operators reference CRs

| Component | Reference CR | Optional | New in this release |

|---|---|---|---|

| Cluster logging | No | No | |

| Cluster logging | No | No | |

| Cluster logging | No | No | |

| Cluster logging | No | No | |

| Cluster logging | No | No | |

| Local Storage Operator | Yes | No | |

| Local Storage Operator | Yes | No | |

| Local Storage Operator | Yes | No | |

| Local Storage Operator | Yes | No | |

| Local Storage Operator | Yes | No | |

| Node Tuning Operator | No | No | |

| Node Tuning Operator | No | No | |

| PTP fast event notifications | Yes | No | |

| PTP Operator | No | No | |

| PTP Operator | No | Yes | |

| PTP Operator | No | No | |

| PTP Operator | No | No | |

| PTP Operator | No | No | |

| PTP Operator | No | No | |

| PTP Operator | No | No | |

| SR-IOV FEC Operator | Yes | No | |

| SR-IOV FEC Operator | Yes | No | |

| SR-IOV FEC Operator | Yes | No | |

| SR-IOV FEC Operator | Yes | No | |

| SR-IOV Operator | No | No | |

| SR-IOV Operator | No | No | |

| SR-IOV Operator | No | No | |

| SR-IOV Operator | No | No | |

| SR-IOV Operator | No | No | |

| SR-IOV Operator | No | No |

3.2.4.2. Cluster tuning reference CRs

| Component | Reference CR | Optional | New in this release |

|---|---|---|---|

| Cluster capabilities | No | No | |

| Disabling network diagnostics | No | No | |

| Monitoring configuration | No | No | |

| OperatorHub | No | No | |

| OperatorHub | No | No | |

| OperatorHub | No | No | |

| OperatorHub | No | No |

3.2.4.3. Machine configuration reference CRs

| Component | Reference CR | Optional | New in this release |

|---|---|---|---|

| Container runtime (crun) | No | No | |

| Container runtime (crun) | No | No | |

| Disabling CRI-O wipe | No | No | |

| Disabling CRI-O wipe | No | No | |

| Enabling kdump | No | Yes | |

| Enabling kdump | No | Yes | |

| Enabling kdump | No | No | |

| Enabling kdump | No | No | |

| Kubelet configuration and container mount hiding | No | No | |

| Kubelet configuration and container mount hiding | No | No | |

| One-shot time sync | No | Yes | |

| One-shot time sync | No | Yes | |

| SCTP | No | No | |

| SCTP | No | No | |

| Set RCU Normal | No | No | |

| Set RCU Normal | No | No | |

| SR-IOV related kernel arguments | No | Yes | |

| SR-IOV related kernel arguments | No | No |

3.2.4.4. YAML reference

The following is a complete reference for all the custom resources (CRs) that make up the telco RAN DU 4.14 reference configuration.

3.2.4.4.1. Day 2 Operators reference YAML

ClusterLogForwarder.yaml

apiVersion: "logging.openshift.io/v1"

kind: ClusterLogForwarder

metadata:

name: instance

namespace: openshift-logging

annotations: {}

spec:

outputs: $outputs

pipelines: $pipelinesClusterLogging.yaml

apiVersion: logging.openshift.io/v1

kind: ClusterLogging

metadata:

name: instance

namespace: openshift-logging

annotations: {}

spec:

managementState: "Managed"

collection:

logs:

type: "vector"ClusterLogNS.yaml

---

apiVersion: v1

kind: Namespace

metadata:

name: openshift-logging

annotations:

workload.openshift.io/allowed: managementClusterLogOperGroup.yaml

---

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: cluster-logging

namespace: openshift-logging

annotations: {}

spec:

targetNamespaces:

- openshift-loggingClusterLogSubscription.yaml

apiVersion: operators.coreos.com/v1alpha1

kind: Subscription

metadata:

name: cluster-logging

namespace: openshift-logging

annotations: {}

spec:

channel: "stable"

name: cluster-logging

source: redhat-operators-disconnected

sourceNamespace: openshift-marketplace

installPlanApproval: Manual

status:

state: AtLatestKnownStorageClass.yaml

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

annotations: {}

name: example-storage-class

provisioner: kubernetes.io/no-provisioner

reclaimPolicy: DeleteStorageLV.yaml

apiVersion: "local.storage.openshift.io/v1"

kind: "LocalVolume"

metadata:

name: "local-disks"

namespace: "openshift-local-storage"

annotations: {}

spec:

logLevel: Normal

managementState: Managed

storageClassDevices:

# The list of storage classes and associated devicePaths need to be specified like this example:

- storageClassName: "example-storage-class"

volumeMode: Filesystem

fsType: xfs

# The below must be adjusted to the hardware.

# For stability and reliability, it's recommended to use persistent

# naming conventions for devicePaths, such as /dev/disk/by-path.

devicePaths:

- /dev/disk/by-path/pci-0000:05:00.0-nvme-1

#---

## How to verify

## 1. Create a PVC

# apiVersion: v1

# kind: PersistentVolumeClaim

# metadata:

# name: local-pvc-name

# spec:

# accessModes:

# - ReadWriteOnce

# volumeMode: Filesystem

# resources:

# requests:

# storage: 100Gi

# storageClassName: example-storage-class

#---

## 2. Create a pod that mounts it

# apiVersion: v1

# kind: Pod

# metadata:

# labels:

# run: busybox

# name: busybox

# spec:

# containers:

# - image: quay.io/quay/busybox:latest

# name: busybox

# resources: {}

# command: ["/bin/sh", "-c", "sleep infinity"]

# volumeMounts:

# - name: local-pvc

# mountPath: /data

# volumes:

# - name: local-pvc

# persistentVolumeClaim:

# claimName: local-pvc-name

# dnsPolicy: ClusterFirst

# restartPolicy: Always

## 3. Run the pod on the cluster and verify the size and access of the `/data` mountStorageNS.yaml

apiVersion: v1

kind: Namespace

metadata:

name: openshift-local-storage

annotations:

workload.openshift.io/allowed: managementStorageOperGroup.yaml

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: openshift-local-storage

namespace: openshift-local-storage

annotations: {}

spec:

targetNamespaces:

- openshift-local-storageStorageSubscription.yaml

apiVersion: operators.coreos.com/v1alpha1

kind: Subscription

metadata:

name: local-storage-operator

namespace: openshift-local-storage

annotations: {}

spec:

channel: "stable"

name: local-storage-operator

source: redhat-operators-disconnected

sourceNamespace: openshift-marketplace

installPlanApproval: Manual

status:

state: AtLatestKnownPerformanceProfile.yaml

apiVersion: performance.openshift.io/v2

kind: PerformanceProfile

metadata:

# if you change this name make sure the 'include' line in TunedPerformancePatch.yaml

# matches this name: include=openshift-node-performance-${PerformanceProfile.metadata.name}

# Also in file 'validatorCRs/informDuValidator.yaml':

# name: 50-performance-${PerformanceProfile.metadata.name}

name: openshift-node-performance-profile

annotations:

ran.openshift.io/reference-configuration: "ran-du.redhat.com"

spec:

additionalKernelArgs:

- "rcupdate.rcu_normal_after_boot=0"

- "efi=runtime"

- "vfio_pci.enable_sriov=1"

- "vfio_pci.disable_idle_d3=1"

- "module_blacklist=irdma"

cpu:

isolated: $isolated

reserved: $reserved

hugepages:

defaultHugepagesSize: $defaultHugepagesSize

pages:

- size: $size

count: $count

node: $node

machineConfigPoolSelector:

pools.operator.machineconfiguration.openshift.io/$mcp: ""

nodeSelector:

node-role.kubernetes.io/$mcp: ""

numa:

topologyPolicy: "restricted"

# To use the standard (non-realtime) kernel, set enabled to false

realTimeKernel:

enabled: true

workloadHints:

# WorkloadHints defines the set of upper level flags for different type of workloads.

# See https://github.com/openshift/cluster-node-tuning-operator/blob/master/docs/performanceprofile/performance_profile.md#workloadhints

# for detailed descriptions of each item.

# The configuration below is set for a low latency, performance mode.

realTime: true

highPowerConsumption: false

perPodPowerManagement: falseTunedPerformancePatch.yaml

apiVersion: tuned.openshift.io/v1

kind: Tuned

metadata:

name: performance-patch

namespace: openshift-cluster-node-tuning-operator

annotations: {}

spec:

profile:

- name: performance-patch

# Please note:

# - The 'include' line must match the associated PerformanceProfile name, following below pattern

# include=openshift-node-performance-${PerformanceProfile.metadata.name}

# - When using the standard (non-realtime) kernel, remove the kernel.timer_migration override from

# the [sysctl] section and remove the entire section if it is empty.

data: |

[main]

summary=Configuration changes profile inherited from performance created tuned

include=openshift-node-performance-openshift-node-performance-profile

[sysctl]

kernel.timer_migration=1

[scheduler]

group.ice-ptp=0:f:10:*:ice-ptp.*

group.ice-gnss=0:f:10:*:ice-gnss.*

[service]

service.stalld=start,enable

service.chronyd=stop,disable

recommend:

- machineConfigLabels:

machineconfiguration.openshift.io/role: "$mcp"

priority: 19

profile: performance-patchPtpOperatorConfigForEvent.yaml

apiVersion: ptp.openshift.io/v1

kind: PtpOperatorConfig

metadata:

name: default

namespace: openshift-ptp

annotations: {}

spec:

daemonNodeSelector:

node-role.kubernetes.io/$mcp: ""

ptpEventConfig:

enableEventPublisher: true

transportHost: "http://ptp-event-publisher-service-NODE_NAME.openshift-ptp.svc.cluster.local:9043"PtpConfigBoundary.yaml

apiVersion: ptp.openshift.io/v1

kind: PtpConfig

metadata:

name: boundary

namespace: openshift-ptp

annotations: {}

spec:

profile:

- name: "boundary"

ptp4lOpts: "-2"

phc2sysOpts: "-a -r -n 24"

ptpSchedulingPolicy: SCHED_FIFO

ptpSchedulingPriority: 10

ptpSettings:

logReduce: "true"

ptp4lConf: |

# The interface name is hardware-specific

[$iface_slave]

masterOnly 0

[$iface_master_1]

masterOnly 1

[$iface_master_2]

masterOnly 1

[$iface_master_3]

masterOnly 1

[global]

#

# Default Data Set

#

twoStepFlag 1

slaveOnly 0

priority1 128

priority2 128

domainNumber 24

#utc_offset 37

clockClass 248

clockAccuracy 0xFE

offsetScaledLogVariance 0xFFFF

free_running 0

freq_est_interval 1

dscp_event 0

dscp_general 0

dataset_comparison G.8275.x

G.8275.defaultDS.localPriority 128

#

# Port Data Set

#

logAnnounceInterval -3

logSyncInterval -4

logMinDelayReqInterval -4

logMinPdelayReqInterval -4

announceReceiptTimeout 3

syncReceiptTimeout 0

delayAsymmetry 0

fault_reset_interval -4

neighborPropDelayThresh 20000000

masterOnly 0

G.8275.portDS.localPriority 128

#

# Run time options

#

assume_two_step 0

logging_level 6

path_trace_enabled 0

follow_up_info 0

hybrid_e2e 0

inhibit_multicast_service 0

net_sync_monitor 0

tc_spanning_tree 0

tx_timestamp_timeout 50

unicast_listen 0

unicast_master_table 0

unicast_req_duration 3600

use_syslog 1

verbose 0

summary_interval 0

kernel_leap 1

check_fup_sync 0

clock_class_threshold 135

#

# Servo Options

#

pi_proportional_const 0.0

pi_integral_const 0.0

pi_proportional_scale 0.0

pi_proportional_exponent -0.3

pi_proportional_norm_max 0.7

pi_integral_scale 0.0

pi_integral_exponent 0.4

pi_integral_norm_max 0.3

step_threshold 2.0

first_step_threshold 0.00002

max_frequency 900000000

clock_servo pi

sanity_freq_limit 200000000

ntpshm_segment 0

#

# Transport options

#

transportSpecific 0x0

ptp_dst_mac 01:1B:19:00:00:00

p2p_dst_mac 01:80:C2:00:00:0E

udp_ttl 1

udp6_scope 0x0E

uds_address /var/run/ptp4l

#

# Default interface options

#

clock_type BC

network_transport L2

delay_mechanism E2E

time_stamping hardware

tsproc_mode filter

delay_filter moving_median

delay_filter_length 10

egressLatency 0

ingressLatency 0

boundary_clock_jbod 0

#

# Clock description

#

productDescription ;;

revisionData ;;

manufacturerIdentity 00:00:00

userDescription ;

timeSource 0xA0

recommend:

- profile: "boundary"

priority: 4

match:

- nodeLabel: "node-role.kubernetes.io/$mcp"PtpConfigGmWpc.yaml

# The grandmaster profile is provided for testing only

# It is not installed on production clusters

apiVersion: ptp.openshift.io/v1

kind: PtpConfig

metadata:

name: grandmaster

namespace: openshift-ptp

annotations: {}

spec:

profile:

- name: "grandmaster"

ptp4lOpts: "-2 --summary_interval -4"

phc2sysOpts: -r -u 0 -m -O -37 -N 8 -R 16 -s $iface_master -n 24

ptpSchedulingPolicy: SCHED_FIFO

ptpSchedulingPriority: 10

ptpSettings:

logReduce: "true"

plugins:

e810:

enableDefaultConfig: false

settings:

LocalMaxHoldoverOffSet: 1500

LocalHoldoverTimeout: 14400

MaxInSpecOffset: 1500

pins: $e810_pins

# "$iface_master":

# "U.FL2": "0 2"

# "U.FL1": "0 1"

# "SMA2": "0 2"

# "SMA1": "0 1"

ublxCmds:

- args: #ubxtool -P 29.20 -z CFG-HW-ANT_CFG_VOLTCTRL,1

- "-P"

- "29.20"

- "-z"

- "CFG-HW-ANT_CFG_VOLTCTRL,1"

reportOutput: false

- args: #ubxtool -P 29.20 -e GPS

- "-P"

- "29.20"

- "-e"

- "GPS"

reportOutput: false

- args: #ubxtool -P 29.20 -d Galileo

- "-P"

- "29.20"

- "-d"

- "Galileo"

reportOutput: false

- args: #ubxtool -P 29.20 -d GLONASS

- "-P"

- "29.20"

- "-d"

- "GLONASS"

reportOutput: false

- args: #ubxtool -P 29.20 -d BeiDou

- "-P"

- "29.20"

- "-d"

- "BeiDou"

reportOutput: false

- args: #ubxtool -P 29.20 -d SBAS

- "-P"

- "29.20"

- "-d"

- "SBAS"

reportOutput: false

- args: #ubxtool -P 29.20 -t -w 5 -v 1 -e SURVEYIN,600,50000

- "-P"

- "29.20"

- "-t"

- "-w"

- "5"

- "-v"

- "1"

- "-e"

- "SURVEYIN,600,50000"

reportOutput: true

- args: #ubxtool -P 29.20 -p MON-HW

- "-P"

- "29.20"

- "-p"

- "MON-HW"

reportOutput: true

- args: #ubxtool -P 29.20 -p CFG-MSG,1,38,300

- "-P"

- "29.20"

- "-p"

- "CFG-MSG,1,38,300"

reportOutput: true

ts2phcOpts: " "

ts2phcConf: |

[nmea]

ts2phc.master 1

[global]

use_syslog 0

verbose 1

logging_level 7

ts2phc.pulsewidth 100000000

#GNSS module s /dev/ttyGNSS* -al use _0

#cat /dev/ttyGNSS_1700_0 to find available serial port

#example value of gnss_serialport is /dev/ttyGNSS_1700_0

ts2phc.nmea_serialport $gnss_serialport

leapfile /usr/share/zoneinfo/leap-seconds.list

[$iface_master]

ts2phc.extts_polarity rising

ts2phc.extts_correction 0

ptp4lConf: |

[$iface_master]

masterOnly 1

[$iface_master_1]

masterOnly 1

[$iface_master_2]

masterOnly 1

[$iface_master_3]

masterOnly 1

[global]

#

# Default Data Set

#

twoStepFlag 1

priority1 128

priority2 128

domainNumber 24

#utc_offset 37

clockClass 6

clockAccuracy 0x27

offsetScaledLogVariance 0xFFFF

free_running 0

freq_est_interval 1

dscp_event 0

dscp_general 0

dataset_comparison G.8275.x

G.8275.defaultDS.localPriority 128

#

# Port Data Set

#

logAnnounceInterval -3

logSyncInterval -4

logMinDelayReqInterval -4

logMinPdelayReqInterval 0

announceReceiptTimeout 3

syncReceiptTimeout 0

delayAsymmetry 0

fault_reset_interval -4

neighborPropDelayThresh 20000000

masterOnly 0

G.8275.portDS.localPriority 128

#

# Run time options

#

assume_two_step 0

logging_level 6

path_trace_enabled 0

follow_up_info 0

hybrid_e2e 0

inhibit_multicast_service 0

net_sync_monitor 0

tc_spanning_tree 0

tx_timestamp_timeout 50

unicast_listen 0

unicast_master_table 0

unicast_req_duration 3600

use_syslog 1

verbose 0

summary_interval -4

kernel_leap 1

check_fup_sync 0

clock_class_threshold 7

#

# Servo Options

#

pi_proportional_const 0.0

pi_integral_const 0.0

pi_proportional_scale 0.0

pi_proportional_exponent -0.3

pi_proportional_norm_max 0.7

pi_integral_scale 0.0

pi_integral_exponent 0.4

pi_integral_norm_max 0.3

step_threshold 2.0

first_step_threshold 0.00002

clock_servo pi

sanity_freq_limit 200000000

ntpshm_segment 0

#

# Transport options

#

transportSpecific 0x0

ptp_dst_mac 01:1B:19:00:00:00

p2p_dst_mac 01:80:C2:00:00:0E

udp_ttl 1

udp6_scope 0x0E

uds_address /var/run/ptp4l

#

# Default interface options

#

clock_type BC

network_transport L2

delay_mechanism E2E

time_stamping hardware

tsproc_mode filter

delay_filter moving_median

delay_filter_length 10

egressLatency 0

ingressLatency 0

boundary_clock_jbod 0

#

# Clock description

#

productDescription ;;

revisionData ;;

manufacturerIdentity 00:00:00

userDescription ;

timeSource 0x20

recommend:

- profile: "grandmaster"

priority: 4

match:

- nodeLabel: "node-role.kubernetes.io/$mcp"PtpConfigSlave.yaml

apiVersion: ptp.openshift.io/v1

kind: PtpConfig

metadata:

name: ordinary

namespace: openshift-ptp

annotations: {}

spec:

profile:

- name: "ordinary"

# The interface name is hardware-specific

interface: $interface

ptp4lOpts: "-2 -s"

phc2sysOpts: "-a -r -n 24"

ptpSchedulingPolicy: SCHED_FIFO

ptpSchedulingPriority: 10

ptpSettings:

logReduce: "true"

ptp4lConf: |

[global]

#

# Default Data Set

#

twoStepFlag 1

slaveOnly 1

priority1 128

priority2 128

domainNumber 24

#utc_offset 37

clockClass 255

clockAccuracy 0xFE

offsetScaledLogVariance 0xFFFF

free_running 0

freq_est_interval 1

dscp_event 0

dscp_general 0

dataset_comparison G.8275.x

G.8275.defaultDS.localPriority 128

#

# Port Data Set

#

logAnnounceInterval -3

logSyncInterval -4

logMinDelayReqInterval -4

logMinPdelayReqInterval -4

announceReceiptTimeout 3

syncReceiptTimeout 0

delayAsymmetry 0

fault_reset_interval -4

neighborPropDelayThresh 20000000

masterOnly 0

G.8275.portDS.localPriority 128

#

# Run time options

#

assume_two_step 0

logging_level 6

path_trace_enabled 0

follow_up_info 0

hybrid_e2e 0

inhibit_multicast_service 0

net_sync_monitor 0

tc_spanning_tree 0

tx_timestamp_timeout 50

unicast_listen 0

unicast_master_table 0

unicast_req_duration 3600

use_syslog 1

verbose 0

summary_interval 0

kernel_leap 1

check_fup_sync 0

clock_class_threshold 7

#

# Servo Options

#

pi_proportional_const 0.0

pi_integral_const 0.0

pi_proportional_scale 0.0

pi_proportional_exponent -0.3

pi_proportional_norm_max 0.7

pi_integral_scale 0.0

pi_integral_exponent 0.4

pi_integral_norm_max 0.3

step_threshold 2.0

first_step_threshold 0.00002

max_frequency 900000000

clock_servo pi

sanity_freq_limit 200000000

ntpshm_segment 0

#

# Transport options

#

transportSpecific 0x0

ptp_dst_mac 01:1B:19:00:00:00

p2p_dst_mac 01:80:C2:00:00:0E

udp_ttl 1

udp6_scope 0x0E

uds_address /var/run/ptp4l

#

# Default interface options

#

clock_type OC

network_transport L2

delay_mechanism E2E

time_stamping hardware

tsproc_mode filter

delay_filter moving_median

delay_filter_length 10

egressLatency 0

ingressLatency 0

boundary_clock_jbod 0

#

# Clock description

#

productDescription ;;

revisionData ;;

manufacturerIdentity 00:00:00

userDescription ;

timeSource 0xA0

recommend:

- profile: "ordinary"

priority: 4

match:

- nodeLabel: "node-role.kubernetes.io/$mcp"PtpOperatorConfig.yaml

apiVersion: ptp.openshift.io/v1

kind: PtpOperatorConfig

metadata:

name: default

namespace: openshift-ptp

annotations: {}

spec:

daemonNodeSelector:

node-role.kubernetes.io/$mcp: ""PtpSubscription.yaml

---

apiVersion: operators.coreos.com/v1alpha1

kind: Subscription

metadata:

name: ptp-operator-subscription

namespace: openshift-ptp

annotations: {}

spec:

channel: "stable"

name: ptp-operator

source: redhat-operators-disconnected

sourceNamespace: openshift-marketplace

installPlanApproval: Manual

status:

state: AtLatestKnownPtpSubscriptionNS.yaml

---

apiVersion: v1

kind: Namespace

metadata:

name: openshift-ptp

annotations:

workload.openshift.io/allowed: management

labels:

openshift.io/cluster-monitoring: "true"PtpSubscriptionOperGroup.yaml

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: ptp-operators

namespace: openshift-ptp

annotations: {}

spec:

targetNamespaces:

- openshift-ptpAcceleratorsNS.yaml

apiVersion: v1

kind: Namespace

metadata:

name: vran-acceleration-operators

annotations: {}AcceleratorsOperGroup.yaml

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: vran-operators

namespace: vran-acceleration-operators

annotations: {}

spec:

targetNamespaces:

- vran-acceleration-operatorsAcceleratorsSubscription.yaml

apiVersion: operators.coreos.com/v1alpha1

kind: Subscription

metadata:

name: sriov-fec-subscription

namespace: vran-acceleration-operators

annotations: {}

spec:

channel: stable

name: sriov-fec

source: certified-operators

sourceNamespace: openshift-marketplace

installPlanApproval: Manual

status:

state: AtLatestKnownSriovFecClusterConfig.yaml

apiVersion: sriovfec.intel.com/v2

kind: SriovFecClusterConfig

metadata:

name: config

namespace: vran-acceleration-operators

annotations: {}

spec:

drainSkip: $drainSkip # true if SNO, false by default

priority: 1

nodeSelector:

node-role.kubernetes.io/master: ""

acceleratorSelector:

pciAddress: $pciAddress

physicalFunction:

pfDriver: "vfio-pci"

vfDriver: "vfio-pci"

vfAmount: 16

bbDevConfig: $bbDevConfig

#Recommended configuration for Intel ACC100 (Mount Bryce) FPGA here: https://github.com/smart-edge-open/openshift-operator/blob/main/spec/openshift-sriov-fec-operator.md#sample-cr-for-wireless-fec-acc100

#Recommended configuration for Intel N3000 FPGA here: https://github.com/smart-edge-open/openshift-operator/blob/main/spec/openshift-sriov-fec-operator.md#sample-cr-for-wireless-fec-n3000SriovNetwork.yaml

apiVersion: sriovnetwork.openshift.io/v1

kind: SriovNetwork

metadata:

name: ""

namespace: openshift-sriov-network-operator

annotations: {}

spec:

# resourceName: ""

networkNamespace: openshift-sriov-network-operator

# vlan: ""

# spoofChk: ""

# ipam: ""

# linkState: ""

# maxTxRate: ""

# minTxRate: ""

# vlanQoS: ""

# trust: ""

# capabilities: ""SriovNetworkNodePolicy.yaml

apiVersion: sriovnetwork.openshift.io/v1

kind: SriovNetworkNodePolicy

metadata:

name: $name

namespace: openshift-sriov-network-operator

annotations: {}

spec:

# The attributes for Mellanox/Intel based NICs as below.

# deviceType: netdevice/vfio-pci

# isRdma: true/false

deviceType: $deviceType

isRdma: $isRdma

nicSelector:

# The exact physical function name must match the hardware used

pfNames: [$pfNames]

nodeSelector:

node-role.kubernetes.io/$mcp: ""

numVfs: $numVfs

priority: $priority

resourceName: $resourceNameSriovOperatorConfig.yaml

apiVersion: sriovnetwork.openshift.io/v1

kind: SriovOperatorConfig

metadata:

name: default

namespace: openshift-sriov-network-operator

annotations: {}

spec:

configDaemonNodeSelector:

"node-role.kubernetes.io/$mcp": ""

# Injector and OperatorWebhook pods can be disabled (set to "false") below

# to reduce the number of management pods. It is recommended to start with the

# webhook and injector pods enabled, and only disable them after verifying the

# correctness of user manifests.

# If the injector is disabled, containers using sr-iov resources must explicitly assign

# them in the "requests"/"limits" section of the container spec, for example:

# containers:

# - name: my-sriov-workload-container

# resources:

# limits:

# openshift.io/<resource_name>: "1"

# requests:

# openshift.io/<resource_name>: "1"

enableInjector: true

enableOperatorWebhook: true

logLevel: 0SriovSubscription.yaml

apiVersion: operators.coreos.com/v1alpha1

kind: Subscription

metadata:

name: sriov-network-operator-subscription

namespace: openshift-sriov-network-operator

annotations: {}

spec:

channel: "stable"

name: sriov-network-operator

source: redhat-operators-disconnected

sourceNamespace: openshift-marketplace

installPlanApproval: Manual

status:

state: AtLatestKnownSriovSubscriptionNS.yaml

apiVersion: v1

kind: Namespace

metadata:

name: openshift-sriov-network-operator

annotations:

workload.openshift.io/allowed: managementSriovSubscriptionOperGroup.yaml

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: sriov-network-operators

namespace: openshift-sriov-network-operator

annotations: {}

spec:

targetNamespaces:

- openshift-sriov-network-operator3.2.4.4.2. Cluster tuning reference YAML

example-sno.yaml

# example-node1-bmh-secret & assisted-deployment-pull-secret need to be created under same namespace example-sno

---

apiVersion: ran.openshift.io/v1

kind: SiteConfig

metadata:

name: "example-sno"

namespace: "example-sno"

spec:

baseDomain: "example.com"

pullSecretRef:

name: "assisted-deployment-pull-secret"

clusterImageSetNameRef: "openshift-4.10"

sshPublicKey: "ssh-rsa AAAA..."

clusters:

- clusterName: "example-sno"

networkType: "OVNKubernetes"

# installConfigOverrides is a generic way of passing install-config

# parameters through the siteConfig. The 'capabilities' field configures

# the composable openshift feature. In this 'capabilities' setting, we

# remove all but the marketplace component from the optional set of

# components.

# Notes:

# - OperatorLifecycleManager is needed for 4.15 and later

# - NodeTuning is needed for 4.13 and later, not for 4.12 and earlier

installConfigOverrides: |

{

"capabilities": {

"baselineCapabilitySet": "None",

"additionalEnabledCapabilities": [

"NodeTuning",

"OperatorLifecycleManager"

]

}

}

# It is strongly recommended to include crun manifests as part of the additional install-time manifests for 4.13+.

# The crun manifests can be obtained from source-crs/optional-extra-manifest/ and added to the git repo ie.sno-extra-manifest.

# extraManifestPath: sno-extra-manifest

clusterLabels:

# These example cluster labels correspond to the bindingRules in the PolicyGenTemplate examples

du-profile: "latest"

# These example cluster labels correspond to the bindingRules in the PolicyGenTemplate examples in ../policygentemplates:

# ../policygentemplates/common-ranGen.yaml will apply to all clusters with 'common: true'

common: true

# ../policygentemplates/group-du-sno-ranGen.yaml will apply to all clusters with 'group-du-sno: ""'

group-du-sno: ""

# ../policygentemplates/example-sno-site.yaml will apply to all clusters with 'sites: "example-sno"'

# Normally this should match or contain the cluster name so it only applies to a single cluster

sites : "example-sno"

clusterNetwork:

- cidr: 1001:1::/48

hostPrefix: 64

machineNetwork:

- cidr: 1111:2222:3333:4444::/64

serviceNetwork:

- 1001:2::/112

additionalNTPSources:

- 1111:2222:3333:4444::2

# Initiates the cluster for workload partitioning. Setting specific reserved/isolated CPUSets is done via PolicyTemplate

# please see Workload Partitioning Feature for a complete guide.

cpuPartitioningMode: AllNodes

# Optionally; This can be used to override the KlusterletAddonConfig that is created for this cluster:

#crTemplates:

# KlusterletAddonConfig: "KlusterletAddonConfigOverride.yaml"

nodes:

- hostName: "example-node1.example.com"

role: "master"

# Optionally; This can be used to configure desired BIOS setting on a host:

#biosConfigRef:

# filePath: "example-hw.profile"

bmcAddress: "idrac-virtualmedia+https://[1111:2222:3333:4444::bbbb:1]/redfish/v1/Systems/System.Embedded.1"

bmcCredentialsName:

name: "example-node1-bmh-secret"

bootMACAddress: "AA:BB:CC:DD:EE:11"

# Use UEFISecureBoot to enable secure boot

bootMode: "UEFI"

rootDeviceHints:

deviceName: "/dev/disk/by-path/pci-0000:01:00.0-scsi-0:2:0:0"

# disk partition at `/var/lib/containers` with ignitionConfigOverride. Some values must be updated. See DiskPartitionContainer.md for more details

ignitionConfigOverride: |

{

"ignition": {

"version": "3.2.0"

},

"storage": {

"disks": [

{

"device": "/dev/disk/by-path/pci-0000:01:00.0-scsi-0:2:0:0",

"partitions": [

{

"label": "var-lib-containers",

"sizeMiB": 0,

"startMiB": 250000

}

],

"wipeTable": false

}

],

"filesystems": [

{

"device": "/dev/disk/by-partlabel/var-lib-containers",

"format": "xfs",

"mountOptions": [

"defaults",

"prjquota"

],

"path": "/var/lib/containers",

"wipeFilesystem": true

}

]

},

"systemd": {

"units": [

{

"contents": "# Generated by Butane\n[Unit]\nRequires=systemd-fsck@dev-disk-by\\x2dpartlabel-var\\x2dlib\\x2dcontainers.service\nAfter=systemd-fsck@dev-disk-by\\x2dpartlabel-var\\x2dlib\\x2dcontainers.service\n\n[Mount]\nWhere=/var/lib/containers\nWhat=/dev/disk/by-partlabel/var-lib-containers\nType=xfs\nOptions=defaults,prjquota\n\n[Install]\nRequiredBy=local-fs.target",

"enabled": true,

"name": "var-lib-containers.mount"

}

]

}

}

nodeNetwork:

interfaces:

- name: eno1

macAddress: "AA:BB:CC:DD:EE:11"

config:

interfaces:

- name: eno1

type: ethernet

state: up

ipv4:

enabled: false

ipv6:

enabled: true

address:

# For SNO sites with static IP addresses, the node-specific,

# API and Ingress IPs should all be the same and configured on

# the interface

- ip: 1111:2222:3333:4444::aaaa:1

prefix-length: 64

dns-resolver:

config:

search:

- example.com

server:

- 1111:2222:3333:4444::2

routes:

config:

- destination: ::/0

next-hop-interface: eno1

next-hop-address: 1111:2222:3333:4444::1

table-id: 254DisableSnoNetworkDiag.yaml

apiVersion: operator.openshift.io/v1

kind: Network

metadata:

name: cluster

annotations: {}

spec:

disableNetworkDiagnostics: trueReduceMonitoringFootprint.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: cluster-monitoring-config

namespace: openshift-monitoring

annotations: {}

data:

config.yaml: |

grafana:

enabled: false

alertmanagerMain:

enabled: false

telemeterClient:

enabled: false

prometheusK8s:

retention: 24hDisableOLMPprof.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: collect-profiles-config

namespace: openshift-operator-lifecycle-manager

annotations: {}

data:

pprof-config.yaml: |

disabled: TrueDefaultCatsrc.yaml

apiVersion: operators.coreos.com/v1alpha1

kind: CatalogSource

metadata:

name: default-cat-source

namespace: openshift-marketplace

annotations:

target.workload.openshift.io/management: '{"effect": "PreferredDuringScheduling"}'

spec:

displayName: default-cat-source

image: $imageUrl

publisher: Red Hat

sourceType: grpc

updateStrategy:

registryPoll:

interval: 1h

status:

connectionState:

lastObservedState: READYDisconnectedICSP.yaml

apiVersion: operator.openshift.io/v1alpha1

kind: ImageContentSourcePolicy

metadata:

name: disconnected-internal-icsp

annotations: {}

spec:

repositoryDigestMirrors:

- $mirrorsOperatorHub.yaml

apiVersion: config.openshift.io/v1

kind: OperatorHub

metadata:

name: cluster

annotations: {}

spec:

disableAllDefaultSources: true3.2.4.4.3. Machine configuration reference YAML

enable-crun-master.yaml

apiVersion: machineconfiguration.openshift.io/v1

kind: ContainerRuntimeConfig

metadata:

name: enable-crun-master

spec:

machineConfigPoolSelector:

matchLabels:

pools.operator.machineconfiguration.openshift.io/master: ""

containerRuntimeConfig:

defaultRuntime: crunenable-crun-worker.yaml

apiVersion: machineconfiguration.openshift.io/v1

kind: ContainerRuntimeConfig

metadata:

name: enable-crun-worker

spec:

machineConfigPoolSelector:

matchLabels:

pools.operator.machineconfiguration.openshift.io/worker: ""

containerRuntimeConfig:

defaultRuntime: crun99-crio-disable-wipe-master.yaml

apiVersion: machineconfiguration.openshift.io/v1

kind: MachineConfig

metadata:

labels:

machineconfiguration.openshift.io/role: master

name: 99-crio-disable-wipe-master

spec:

config:

ignition:

version: 3.2.0

storage:

files:

- contents:

source: data:text/plain;charset=utf-8;base64,W2NyaW9dCmNsZWFuX3NodXRkb3duX2ZpbGUgPSAiIgo=

mode: 420

path: /etc/crio/crio.conf.d/99-crio-disable-wipe.toml99-crio-disable-wipe-worker.yaml

apiVersion: machineconfiguration.openshift.io/v1

kind: MachineConfig

metadata:

labels:

machineconfiguration.openshift.io/role: worker

name: 99-crio-disable-wipe-worker

spec:

config:

ignition:

version: 3.2.0

storage:

files:

- contents:

source: data:text/plain;charset=utf-8;base64,W2NyaW9dCmNsZWFuX3NodXRkb3duX2ZpbGUgPSAiIgo=

mode: 420

path: /etc/crio/crio.conf.d/99-crio-disable-wipe.toml05-kdump-config-master.yaml

apiVersion: machineconfiguration.openshift.io/v1

kind: MachineConfig

metadata:

labels:

machineconfiguration.openshift.io/role: master

name: 05-kdump-config-master

spec:

config:

ignition:

version: 3.2.0

systemd:

units:

- enabled: true

name: kdump-remove-ice-module.service

contents: |

[Unit]

Description=Remove ice module when doing kdump

Before=kdump.service

[Service]

Type=oneshot

RemainAfterExit=true

ExecStart=/usr/local/bin/kdump-remove-ice-module.sh

[Install]

WantedBy=multi-user.target

storage:

files:

- contents:

source: data:text/plain;charset=utf-8;base64,IyEvdXNyL2Jpbi9lbnYgYmFzaAoKIyBUaGlzIHNjcmlwdCByZW1vdmVzIHRoZSBpY2UgbW9kdWxlIGZyb20ga2R1bXAgdG8gcHJldmVudCBrZHVtcCBmYWlsdXJlcyBvbiBjZXJ0YWluIHNlcnZlcnMuCiMgVGhpcyBpcyBhIHRlbXBvcmFyeSB3b3JrYXJvdW5kIGZvciBSSEVMUExBTi0xMzgyMzYgYW5kIGNhbiBiZSByZW1vdmVkIHdoZW4gdGhhdCBpc3N1ZSBpcwojIGZpeGVkLgoKc2V0IC14CgpTRUQ9Ii91c3IvYmluL3NlZCIKR1JFUD0iL3Vzci9iaW4vZ3JlcCIKCiMgb3ZlcnJpZGUgZm9yIHRlc3RpbmcgcHVycG9zZXMKS0RVTVBfQ09ORj0iJHsxOi0vZXRjL3N5c2NvbmZpZy9rZHVtcH0iClJFTU9WRV9JQ0VfU1RSPSJtb2R1bGVfYmxhY2tsaXN0PWljZSIKCiMgZXhpdCBpZiBmaWxlIGRvZXNuJ3QgZXhpc3QKWyAhIC1mICR7S0RVTVBfQ09ORn0gXSAmJiBleGl0IDAKCiMgZXhpdCBpZiBmaWxlIGFscmVhZHkgdXBkYXRlZAoke0dSRVB9IC1GcSAke1JFTU9WRV9JQ0VfU1RSfSAke0tEVU1QX0NPTkZ9ICYmIGV4aXQgMAoKIyBUYXJnZXQgbGluZSBsb29rcyBzb21ldGhpbmcgbGlrZSB0aGlzOgojIEtEVU1QX0NPTU1BTkRMSU5FX0FQUEVORD0iaXJxcG9sbCBucl9jcHVzPTEgLi4uIGhlc3RfZGlzYWJsZSIKIyBVc2Ugc2VkIHRvIG1hdGNoIGV2ZXJ5dGhpbmcgYmV0d2VlbiB0aGUgcXVvdGVzIGFuZCBhcHBlbmQgdGhlIFJFTU9WRV9JQ0VfU1RSIHRvIGl0CiR7U0VEfSAtaSAncy9eS0RVTVBfQ09NTUFORExJTkVfQVBQRU5EPSJbXiJdKi8mICcke1JFTU9WRV9JQ0VfU1RSfScvJyAke0tEVU1QX0NPTkZ9IHx8IGV4aXQgMAo=

mode: 448

path: /usr/local/bin/kdump-remove-ice-module.sh05-kdump-config-worker.yaml

apiVersion: machineconfiguration.openshift.io/v1

kind: MachineConfig

metadata:

labels:

machineconfiguration.openshift.io/role: worker

name: 05-kdump-config-worker

spec:

config:

ignition:

version: 3.2.0

systemd:

units:

- enabled: true

name: kdump-remove-ice-module.service

contents: |

[Unit]

Description=Remove ice module when doing kdump

Before=kdump.service

[Service]

Type=oneshot

RemainAfterExit=true

ExecStart=/usr/local/bin/kdump-remove-ice-module.sh

[Install]

WantedBy=multi-user.target

storage:

files:

- contents:

source: data:text/plain;charset=utf-8;base64,IyEvdXNyL2Jpbi9lbnYgYmFzaAoKIyBUaGlzIHNjcmlwdCByZW1vdmVzIHRoZSBpY2UgbW9kdWxlIGZyb20ga2R1bXAgdG8gcHJldmVudCBrZHVtcCBmYWlsdXJlcyBvbiBjZXJ0YWluIHNlcnZlcnMuCiMgVGhpcyBpcyBhIHRlbXBvcmFyeSB3b3JrYXJvdW5kIGZvciBSSEVMUExBTi0xMzgyMzYgYW5kIGNhbiBiZSByZW1vdmVkIHdoZW4gdGhhdCBpc3N1ZSBpcwojIGZpeGVkLgoKc2V0IC14CgpTRUQ9Ii91c3IvYmluL3NlZCIKR1JFUD0iL3Vzci9iaW4vZ3JlcCIKCiMgb3ZlcnJpZGUgZm9yIHRlc3RpbmcgcHVycG9zZXMKS0RVTVBfQ09ORj0iJHsxOi0vZXRjL3N5c2NvbmZpZy9rZHVtcH0iClJFTU9WRV9JQ0VfU1RSPSJtb2R1bGVfYmxhY2tsaXN0PWljZSIKCiMgZXhpdCBpZiBmaWxlIGRvZXNuJ3QgZXhpc3QKWyAhIC1mICR7S0RVTVBfQ09ORn0gXSAmJiBleGl0IDAKCiMgZXhpdCBpZiBmaWxlIGFscmVhZHkgdXBkYXRlZAoke0dSRVB9IC1GcSAke1JFTU9WRV9JQ0VfU1RSfSAke0tEVU1QX0NPTkZ9ICYmIGV4aXQgMAoKIyBUYXJnZXQgbGluZSBsb29rcyBzb21ldGhpbmcgbGlrZSB0aGlzOgojIEtEVU1QX0NPTU1BTkRMSU5FX0FQUEVORD0iaXJxcG9sbCBucl9jcHVzPTEgLi4uIGhlc3RfZGlzYWJsZSIKIyBVc2Ugc2VkIHRvIG1hdGNoIGV2ZXJ5dGhpbmcgYmV0d2VlbiB0aGUgcXVvdGVzIGFuZCBhcHBlbmQgdGhlIFJFTU9WRV9JQ0VfU1RSIHRvIGl0CiR7U0VEfSAtaSAncy9eS0RVTVBfQ09NTUFORExJTkVfQVBQRU5EPSJbXiJdKi8mICcke1JFTU9WRV9JQ0VfU1RSfScvJyAke0tEVU1QX0NPTkZ9IHx8IGV4aXQgMAo=

mode: 448

path: /usr/local/bin/kdump-remove-ice-module.sh06-kdump-master.yaml

apiVersion: machineconfiguration.openshift.io/v1

kind: MachineConfig

metadata:

labels:

machineconfiguration.openshift.io/role: master

name: 06-kdump-enable-master

spec:

config:

ignition:

version: 3.2.0

systemd:

units:

- enabled: true

name: kdump.service

kernelArguments:

- crashkernel=512M06-kdump-worker.yaml

apiVersion: machineconfiguration.openshift.io/v1