
Chapter 23. Hardware networks

23.1. About Single Root I/O Virtualization (SR-IOV) hardware networks

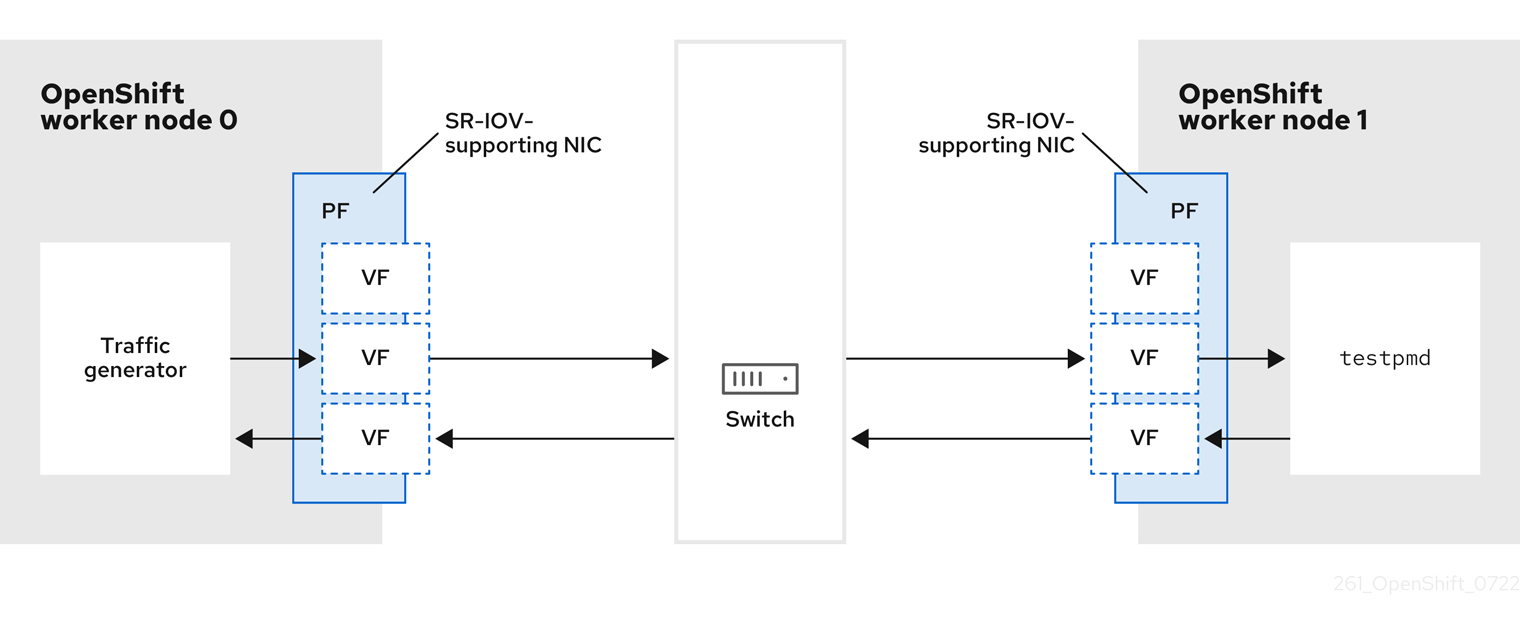

The Single Root I/O Virtualization (SR-IOV) specification is a standard for a type of PCI device assignment that can share a single device with multiple pods.

SR-IOV can segment a compliant network device, recognized on the host node as a physical function (PF), into multiple virtual functions (VFs). The VF is used like any other network device. The SR-IOV network device driver for the device determines how the VF is exposed in the container:

- netdevice driver: A regular kernel network device in the netns of the container

- vfio-pci driver: A character device mounted in the container

You can use SR-IOV network devices with additional networks on your OpenShift Container Platform cluster installed on bare metal or Red Hat OpenStack Platform (RHOSP) infrastructure for applications that require high bandwidth or low latency.

You can configure multi-network policies for SR-IOV networks. This support is a Technology Preview feature, and SR-IOV additional networks are supported only with kernel NICs. They are not supported for Data Plane Development Kit (DPDK) applications.

Creating multi-network policies on SR-IOV networks might not deliver the same performance to applications compared to SR-IOV networks without a multi-network policy configured.

Multi-network policies for SR-IOV networks are a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

You can enable SR-IOV on a node by using the following command:

    $ oc label node <node_name> feature.node.kubernetes.io/network-sriov.capable="true"

23.1.1. Components that manage SR-IOV network devices

The SR-IOV Network Operator creates and manages the components of the SR-IOV stack. It performs the following functions:

- Orchestrates discovery and management of SR-IOV network devices

- Generates NetworkAttachmentDefinition custom resources for the SR-IOV Container Network Interface (CNI)

- Creates and updates the configuration of the SR-IOV network device plugin

- Creates node specific SriovNetworkNodeState custom resources

- Updates the spec.interfaces field in each SriovNetworkNodeState custom resource

The Operator provisions the following components:

- SR-IOV network configuration daemon

- A daemon set that is deployed on worker nodes when the SR-IOV Network Operator starts. The daemon is responsible for discovering and initializing SR-IOV network devices in the cluster.

- SR-IOV Network Operator webhook

- A dynamic admission controller webhook that validates the Operator custom resource and sets appropriate default values for unset fields.

- SR-IOV Network resources injector

- A dynamic admission controller webhook that provides functionality for patching Kubernetes pod specifications with requests and limits for custom network resources such as SR-IOV VFs. The SR-IOV network resources injector automatically adds the resource field to only the first container in a pod.

- SR-IOV network device plugin

- A device plugin that discovers, advertises, and allocates SR-IOV network virtual function (VF) resources. Device plugins are used in Kubernetes to enable the use of limited resources, typically in physical devices. Device plugins give the Kubernetes scheduler awareness of resource availability, so that the scheduler can schedule pods on nodes with sufficient resources.

- SR-IOV CNI plugin

- A CNI plugin that attaches VF interfaces allocated from the SR-IOV network device plugin directly into a pod.

- SR-IOV InfiniBand CNI plugin

- A CNI plugin that attaches InfiniBand (IB) VF interfaces allocated from the SR-IOV network device plugin directly into a pod.

The SR-IOV Network resources injector and SR-IOV Network Operator webhook are enabled by default and can be disabled by editing the default SriovOperatorConfig CR. Use caution when disabling the SR-IOV Network Operator Admission Controller webhook. You can disable the webhook under specific circumstances, such as troubleshooting, or if you want to use unsupported devices.

23.1.1.1. Supported platforms

The SR-IOV Network Operator is supported on the following platforms:

- Bare metal

- Red Hat OpenStack Platform (RHOSP)

23.1.1.2. Supported devices

OpenShift Container Platform supports the following network interface controllers:

| Manufacturer | Model | Vendor ID | Device ID |

|---|---|---|---|

| Broadcom | BCM57414 | 14e4 | 16d7 |

| Broadcom | BCM57508 | 14e4 | 1750 |

| Broadcom | BCM57504 | 14e4 | 1751 |

| Intel | X710 | 8086 | 1572 |

| Intel | X710 Backplane | 8086 | 1581 |

| Intel | X710 Base T | 8086 | 15ff |

| Intel | XL710 | 8086 | 1583 |

| Intel | XXV710 | 8086 | 158b |

| Intel | E810-CQDA2 | 8086 | 1592 |

| Intel | E810-2CQDA2 | 8086 | 1592 |

| Intel | E810-XXVDA2 | 8086 | 159b |

| Intel | E810-XXVDA4 | 8086 | 1593 |

| Intel | E810-XXVDA4T | 8086 | 1593 |

| Mellanox | MT27700 Family [ConnectX‑4] | 15b3 | 1013 |

| Mellanox | MT27710 Family [ConnectX‑4 Lx] | 15b3 | 1015 |

| Mellanox | MT27800 Family [ConnectX‑5] | 15b3 | 1017 |

| Mellanox | MT28880 Family [ConnectX‑5 Ex] | 15b3 | 1019 |

| Mellanox | MT28908 Family [ConnectX‑6] | 15b3 | 101b |

| Mellanox | MT2892 Family [ConnectX‑6 Dx] | 15b3 | 101d |

| Mellanox | MT2894 Family [ConnectX‑6 Lx] | 15b3 | 101f |

| Mellanox | MT42822 BlueField‑2 in ConnectX‑6 NIC mode | 15b3 | a2d6 |

| Pensando [1] | DSC-25 dual-port 25G distributed services card for ionic driver | 0x1dd8 | 0x1002 |

| Pensando [1] | DSC-100 dual-port 100G distributed services card for ionic driver | 0x1dd8 | 0x1003 |

| Silicom | STS Family | 8086 | 1591 |

[1] OpenShift SR-IOV is supported, but you must set a static virtual function (VF) media access control (MAC) address by using the SR-IOV CNI config file.

For the most up-to-date list of supported cards and compatible OpenShift Container Platform versions available, see Openshift Single Root I/O Virtualization (SR-IOV) and PTP hardware networks Support Matrix.
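A node's NICs can be checked against the table by comparing vendor ID and device ID pairs. The following is a minimal sketch of that lookup, not part of any Operator API. The names SUPPORTED_NICS, normalize, and lookup_nic are hypothetical, and the dictionary holds only a few rows copied from the table for illustration.

```python
# Hypothetical helper: look up a NIC in a small, illustrative subset of the
# supported-devices table, keyed by (vendor ID, device ID).
SUPPORTED_NICS = {
    ("8086", "1572"): "Intel X710",
    ("8086", "158b"): "Intel XXV710",
    ("15b3", "1017"): "Mellanox MT27800 Family [ConnectX-5]",
    ("15b3", "101b"): "Mellanox MT28908 Family [ConnectX-6]",
    ("1dd8", "1002"): "Pensando DSC-25",
}


def normalize(hex_id):
    """Lower-case an ID and drop an optional 0x prefix (the table uses both styles)."""
    hex_id = hex_id.strip().lower()
    return hex_id[2:] if hex_id.startswith("0x") else hex_id


def lookup_nic(vendor, device_id):
    """Return the model string for a known (vendor, device) pair, or None."""
    return SUPPORTED_NICS.get((normalize(vendor), normalize(device_id)))


if __name__ == "__main__":
    print(lookup_nic("8086", "158B"))
    print(lookup_nic("0x1dd8", "0x1002"))
    print(lookup_nic("8086", "ffff"))
```

Normalizing the IDs first means the lookup accepts both the bare form (`15b3`) and the prefixed form (`0x1dd8`) that appear in the table.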

23.1.1.3. Automated discovery of SR-IOV network devices

The SR-IOV Network Operator searches your cluster for SR-IOV capable network devices on worker nodes. The Operator creates and updates a SriovNetworkNodeState custom resource (CR) for each worker node that provides a compatible SR-IOV network device.

The CR is assigned the same name as the worker node. The status.interfaces list provides information about the network devices on a node.

Do not modify a SriovNetworkNodeState object. The Operator creates and manages these resources automatically.
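Because the status.interfaces list is plain structured data, it can be summarized read-only once the object has been loaded into a dictionary, for example from JSON output. The following is a minimal sketch under that assumption. The function names and the shortened example_state dictionary are hypothetical and use only the name, driver, and totalvfs fields.

```python
# Hypothetical read-only summary of a SriovNetworkNodeState object that has
# already been loaded into a Python dict. Nothing here modifies the object.
def summarize_interfaces(node_state):
    """Return (name, driver, totalvfs) for each entry in status.interfaces."""
    interfaces = node_state.get("status", {}).get("interfaces", [])
    return [
        (iface["name"], iface.get("driver", ""), int(iface.get("totalvfs", 0)))
        for iface in interfaces
    ]


def total_vf_capacity(node_state):
    """Sum the totalvfs values reported for all interfaces on the node."""
    return sum(vfs for _, _, vfs in summarize_interfaces(node_state))


# Shortened, illustrative data in the shape of status.interfaces.
example_state = {
    "metadata": {"name": "node-25"},
    "status": {
        "interfaces": [
            {"name": "ens785f0", "driver": "mlx5_core", "totalvfs": 8},
            {"name": "ens817f0", "driver": "i40e", "totalvfs": 64},
        ],
        "syncStatus": "Succeeded",
    },
}

if __name__ == "__main__":
    for name, driver, vfs in summarize_interfaces(example_state):
        print(name, driver, vfs)
    print("total VFs:", total_vf_capacity(example_state))
```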

23.1.1.3.1. Example SriovNetworkNodeState object

The following YAML is an example of a SriovNetworkNodeState object created by the SR-IOV Network Operator:

An SriovNetworkNodeState object

    apiVersion: sriovnetwork.openshift.io/v1
    kind: SriovNetworkNodeState
    metadata:
      name: node-25
      namespace: openshift-sriov-network-operator
      ownerReferences:
      - apiVersion: sriovnetwork.openshift.io/v1
        blockOwnerDeletion: true
        controller: true
        kind: SriovNetworkNodePolicy
        name: default
    spec:
      dpConfigVersion: "39824"
    status:
      interfaces:
      - deviceID: "1017"
        driver: mlx5_core
        mtu: 1500
        name: ens785f0
        pciAddress: "0000:18:00.0"
        totalvfs: 8
        vendor: 15b3
      - deviceID: "1017"
        driver: mlx5_core
        mtu: 1500
        name: ens785f1
        pciAddress: "0000:18:00.1"
        totalvfs: 8
        vendor: 15b3
      - deviceID: 158b
        driver: i40e
        mtu: 1500
        name: ens817f0
        pciAddress: 0000:81:00.0
        totalvfs: 64
        vendor: "8086"
      - deviceID: 158b
        driver: i40e
        mtu: 1500
        name: ens817f1
        pciAddress: 0000:81:00.1
        totalvfs: 64
        vendor: "8086"
      - deviceID: 158b
        driver: i40e
        mtu: 1500
        name: ens803f0
        pciAddress: 0000:86:00.0
        totalvfs: 64
        vendor: "8086"
      syncStatus: Succeeded

23.1.1.4. Example use of a virtual function in a pod

You can run a remote direct memory access (RDMA) or a Data Plane Development Kit (DPDK) application in a pod with SR-IOV VF attached.

This example shows a pod using a virtual function (VF) in RDMA mode:

Pod spec that uses RDMA mode

    apiVersion: v1
    kind: Pod
    metadata:
      name: rdma-app
      annotations:
        k8s.v1.cni.cncf.io/networks: sriov-rdma-mlnx
    spec:
      containers:
      - name: testpmd
        image: <RDMA_image>
        imagePullPolicy: IfNotPresent
        securityContext:
          runAsUser: 0
          capabilities:
            add: ["IPC_LOCK","SYS_RESOURCE","NET_RAW"]
        command: ["sleep", "infinity"]

The following example shows a pod with a VF in DPDK mode:

Pod spec that uses DPDK mode

    apiVersion: v1
    kind: Pod
    metadata:
      name: dpdk-app
      annotations:
        k8s.v1.cni.cncf.io/networks: sriov-dpdk-net
    spec:
      containers:
      - name: testpmd
        image: <DPDK_image>
        securityContext:
          runAsUser: 0
          capabilities:
            add: ["IPC_LOCK","SYS_RESOURCE","NET_RAW"]
        volumeMounts:
        - mountPath: /dev/hugepages
          name: hugepage
        resources:
          limits:
            memory: "1Gi"
            cpu: "2"
            hugepages-1Gi: "4Gi"
          requests:
            memory: "1Gi"
            cpu: "2"
            hugepages-1Gi: "4Gi"
        command: ["sleep", "infinity"]
      volumes:
      - name: hugepage
        emptyDir:
          medium: HugePages

23.1.1.5. DPDK library for use with container applications

An optional library, app-netutil, provides several API methods for gathering network information about a pod from within a container running within that pod.

This library can assist with integrating SR-IOV virtual functions (VFs) in Data Plane Development Kit (DPDK) mode into the container. The library provides both a Golang API and a C API.

Currently there are three API methods implemented:

- GetCPUInfo(): This function determines which CPUs are available to the container and returns the list.

- GetHugepages(): This function determines the amount of huge page memory requested in the Pod spec for each container and returns the values.

- GetInterfaces(): This function determines the set of interfaces in the container and returns the list. The return value includes the interface type and type-specific data for each interface.

The repository for the library includes a sample Dockerfile to build a container image, dpdk-app-centos. The container image can run one of the following DPDK sample applications, depending on an environment variable in the pod specification: l2fwd, l3fwd, or testpmd. The container image provides an example of integrating the app-netutil library into the container image itself. The library can also be integrated into an init container. The init container can collect the required data and pass the data to an existing DPDK workload.

23.1.1.6. Huge pages resource injection for Downward API

When a pod specification includes a resource request or limit for huge pages, the Network Resources Injector automatically adds Downward API fields to the pod specification to provide the huge pages information to the container.

The Network Resources Injector adds a volume that is named podnetinfo and is mounted at /etc/podnetinfo for each container in the pod. The volume uses the Downward API and includes a file for huge pages requests and limits. The file naming convention is as follows:

- /etc/podnetinfo/hugepages_1G_request_<container-name>

- /etc/podnetinfo/hugepages_1G_limit_<container-name>

- /etc/podnetinfo/hugepages_2M_request_<container-name>

- /etc/podnetinfo/hugepages_2M_limit_<container-name>

The paths specified in the previous list are compatible with the app-netutil library. By default, the library is configured to search for resource information in the /etc/podnetinfo directory. If you choose to specify the Downward API path items yourself manually, the app-netutil library searches for the following paths in addition to the paths in the previous list.

- /etc/podnetinfo/hugepages_request

- /etc/podnetinfo/hugepages_limit

- /etc/podnetinfo/hugepages_1G_request

- /etc/podnetinfo/hugepages_1G_limit

- /etc/podnetinfo/hugepages_2M_request

- /etc/podnetinfo/hugepages_2M_limit

As with the paths that the Network Resources Injector can create, the paths in the preceding list can optionally end with a _<container-name> suffix.
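An application that does not use app-netutil can apply the same file naming convention directly. The following is a minimal sketch that collects the per-container huge pages files created by the Network Resources Injector. The function name read_hugepages is hypothetical, and the sketch returns each file's text unchanged because the value format is not described in this section.

```python
from pathlib import Path


def read_hugepages(container_name, base_dir="/etc/podnetinfo"):
    """Return {(page_size, kind): file_text} for one container.

    Looks for files named hugepages_<size>_<kind>_<container-name>, where
    size is 1G or 2M and kind is request or limit. Missing files are skipped.
    """
    found = {}
    for size in ("1G", "2M"):
        for kind in ("request", "limit"):
            path = Path(base_dir) / f"hugepages_{size}_{kind}_{container_name}"
            if path.is_file():
                # The raw text is returned as-is; interpreting the unit is
                # left to the caller.
                found[(size, kind)] = path.read_text().strip()
    return found


if __name__ == "__main__":
    # Inside a pod, this reads from the podnetinfo volume mount.
    print(read_hugepages("testpmd"))
```

The base_dir parameter exists so that the function can be exercised outside a pod against a scratch directory.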

23.1.3. Next steps

23.2. Installing the SR-IOV Network Operator

You can install the Single Root I/O Virtualization (SR-IOV) Network Operator on your cluster to manage SR-IOV network devices and network attachments.

23.2.1. Installing the SR-IOV Network Operator

As a cluster administrator, you can install the Single Root I/O Virtualization (SR-IOV) Network Operator by using the OpenShift Container Platform CLI or the web console.

23.2.1.1. CLI: Installing the SR-IOV Network Operator

As a cluster administrator, you can install the Operator using the CLI.

Prerequisites

- A cluster installed on bare-metal hardware with nodes that have hardware that supports SR-IOV.

- Install the OpenShift CLI (oc).

- An account with cluster-admin privileges.

Procedure

To create the openshift-sriov-network-operator namespace, enter the following command:

    $ cat << EOF| oc create -f -
    apiVersion: v1
    kind: Namespace
    metadata:
      name: openshift-sriov-network-operator
      annotations:
        workload.openshift.io/allowed: management
    EOF

To create an OperatorGroup CR, enter the following command:

    $ cat << EOF| oc create -f -
    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: sriov-network-operators
      namespace: openshift-sriov-network-operator
    spec:
      targetNamespaces:
      - openshift-sriov-network-operator
    EOF

To create a Subscription CR for the SR-IOV Network Operator, enter the following command:

    $ cat << EOF| oc create -f -
    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: sriov-network-operator-subscription
      namespace: openshift-sriov-network-operator
    spec:
      channel: stable
      name: sriov-network-operator
      source: redhat-operators
      sourceNamespace: openshift-marketplace
    EOF

To verify that the Operator is installed, enter the following command:

    $ oc get csv -n openshift-sriov-network-operator \
      -o custom-columns=Name:.metadata.name,Phase:.status.phase

Example output

    Name                                         Phase
    sriov-network-operator.4.14.0-202310121402   Succeeded
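If the verification is scripted, the two-column output can be checked for the Succeeded phase. The following is a minimal sketch that parses text in the shape of the example output. The function names are hypothetical, and the sketch assumes the output was captured as a string from the verification command.

```python
def csv_phases(output):
    """Map each CSV name to its phase, skipping the header row."""
    rows = [line.split() for line in output.strip().splitlines()]
    return {row[0]: row[-1] for row in rows[1:] if len(row) >= 2}


def operator_installed(output, prefix="sriov-network-operator"):
    """True if a CSV whose name starts with prefix reports phase Succeeded."""
    return any(
        name.startswith(prefix) and phase == "Succeeded"
        for name, phase in csv_phases(output).items()
    )


sample_output = """\
Name                                         Phase
sriov-network-operator.4.14.0-202310121402   Succeeded
"""

if __name__ == "__main__":
    print(csv_phases(sample_output))
    print(operator_installed(sample_output))
```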

23.2.1.2. Web console: Installing the SR-IOV Network Operator

As a cluster administrator, you can install the Operator using the web console.

Prerequisites

- A cluster installed on bare-metal hardware with nodes that have hardware that supports SR-IOV.

- Install the OpenShift CLI (oc).

- An account with cluster-admin privileges.

Procedure

Install the SR-IOV Network Operator:

- In the OpenShift Container Platform web console, click Operators → OperatorHub.

- Select SR-IOV Network Operator from the list of available Operators, and then click Install.

- On the Install Operator page, under Installed Namespace, select Operator recommended Namespace.

- Click Install.

Verify that the SR-IOV Network Operator is installed successfully:

- Navigate to the Operators → Installed Operators page.

- Ensure that SR-IOV Network Operator is listed in the openshift-sriov-network-operator project with a Status of InstallSucceeded.

Note: During installation an Operator might display a Failed status. If the installation later succeeds with an InstallSucceeded message, you can ignore the Failed message.

If the Operator does not appear as installed, to troubleshoot further:

- Inspect the Operator Subscriptions and Install Plans tabs for any failure or errors under Status.

- Navigate to the Workloads → Pods page and check the logs for pods in the openshift-sriov-network-operator project.

- Check the namespace of the YAML file. If the annotation is missing, you can add the annotation workload.openshift.io/allowed=management to the Operator namespace with the following command:

    $ oc annotate ns/openshift-sriov-network-operator workload.openshift.io/allowed=management

Note: For single-node OpenShift clusters, the annotation workload.openshift.io/allowed=management is required for the namespace.

23.2.2. Next steps

- Optional: Configuring the SR-IOV Network Operator

23.3. Configuring the SR-IOV Network Operator

The Single Root I/O Virtualization (SR-IOV) Network Operator manages the SR-IOV network devices and network attachments in your cluster.

23.3.1. Configuring the SR-IOV Network Operator

Modifying the SR-IOV Network Operator configuration is not normally necessary. The default configuration is recommended for most use cases. Complete the steps to modify the relevant configuration only if the default behavior of the Operator is not compatible with your use case.

The SR-IOV Network Operator adds the SriovOperatorConfig.sriovnetwork.openshift.io CustomResourceDefinition resource. The Operator automatically creates a SriovOperatorConfig custom resource (CR) named default in the openshift-sriov-network-operator namespace.

The default CR contains the SR-IOV Network Operator configuration for your cluster. To change the Operator configuration, you must modify this CR.

23.3.1.1. SR-IOV Network Operator config custom resource

The fields for the SriovOperatorConfig custom resource are described in the following table:

| Field | Type | Description |
|---|---|---|
| metadata.name | string | Specifies the name of the SR-IOV Network Operator instance. The default value is default. |
| metadata.namespace | string | Specifies the namespace of the SR-IOV Network Operator instance. The default value is openshift-sriov-network-operator. |
| spec.configDaemonNodeSelector | | Specifies the node selection to control scheduling the SR-IOV Network Config Daemon on selected nodes. By default, this field is not set and the Operator deploys the SR-IOV Network Config daemon set on worker nodes. |
| spec.disableDrain | boolean | Specifies whether to disable the node draining process or enable the node draining process when you apply a new policy to configure the NIC on a node. Setting this field to true disables the node draining process. For single-node clusters, set this field to true. |
| spec.enableInjector | boolean | Specifies whether to enable or disable the Network Resources Injector daemon set. By default, this field is set to true. |
| spec.enableOperatorWebhook | boolean | Specifies whether to enable or disable the Operator Admission Controller webhook daemon set. |
| spec.logLevel | integer | Specifies the log verbosity level of the Operator. |

23.3.1.2. About the Network Resources Injector

The Network Resources Injector is a Kubernetes Dynamic Admission Controller application. It provides the following capabilities:

- Mutation of resource requests and limits in a pod specification to add an SR-IOV resource name according to an SR-IOV network attachment definition annotation.

- Mutation of a pod specification with a Downward API volume to expose pod annotations, labels, and huge pages requests and limits. Containers that run in the pod can access the exposed information as files under the /etc/podnetinfo path.

By default, the Network Resources Injector is enabled by the SR-IOV Network Operator and runs as a daemon set on all control plane nodes. The following is an example of Network Resources Injector pods running in a cluster with three control plane nodes:

    $ oc get pods -n openshift-sriov-network-operator

Example output

    NAME                               READY   STATUS    RESTARTS   AGE
    network-resources-injector-5cz5p   1/1     Running   0          10m
    network-resources-injector-dwqpx   1/1     Running   0          10m
    network-resources-injector-lktz5   1/1     Running   0          10m

23.3.1.3. About the SR-IOV Network Operator admission controller webhook

The SR-IOV Network Operator Admission Controller webhook is a Kubernetes Dynamic Admission Controller application. It provides the following capabilities:

- Validation of the SriovNetworkNodePolicy CR when it is created or updated.

- Mutation of the SriovNetworkNodePolicy CR by setting the default value for the priority and deviceType fields when the CR is created or updated.

By default the SR-IOV Network Operator Admission Controller webhook is enabled by the Operator and runs as a daemon set on all control plane nodes.

Use caution when disabling the SR-IOV Network Operator Admission Controller webhook. You can disable the webhook under specific circumstances, such as troubleshooting, or if you want to use unsupported devices. For information about configuring unsupported devices, see Configuring the SR-IOV Network Operator to use an unsupported NIC.

The following is an example of the Operator Admission Controller webhook pods running in a cluster with three control plane nodes:

    $ oc get pods -n openshift-sriov-network-operator

Example output

    NAME                     READY   STATUS    RESTARTS   AGE
    operator-webhook-9jkw6   1/1     Running   0          16m
    operator-webhook-kbr5p   1/1     Running   0          16m
    operator-webhook-rpfrl   1/1     Running   0          16m

23.3.1.4. About custom node selectors

The SR-IOV Network Config daemon discovers and configures the SR-IOV network devices on cluster nodes. By default, it is deployed to all the worker nodes in the cluster. You can use node labels to specify on which nodes the SR-IOV Network Config daemon runs.

23.3.1.5. Disabling or enabling the Network Resources Injector

To disable or enable the Network Resources Injector, which is enabled by default, complete the following procedure.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

- You must have installed the SR-IOV Network Operator.

Procedure

Set the enableInjector field. Replace <value> with false to disable the feature or true to enable the feature.

    $ oc patch sriovoperatorconfig default \
      --type=merge -n openshift-sriov-network-operator \
      --patch '{ "spec": { "enableInjector": <value> } }'

Tip: You can alternatively apply the following YAML to update the Operator:

    apiVersion: sriovnetwork.openshift.io/v1
    kind: SriovOperatorConfig
    metadata:
      name: default
      namespace: openshift-sriov-network-operator
    spec:
      enableInjector: <value>
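When the same change is made from automation, building the merge patch with a JSON library avoids quoting mistakes in the patch string. The following is a minimal sketch that only assembles the patch and the argument list for the oc patch invocation shown in this procedure. It does not run the command, and the function names are hypothetical.

```python
import json


def operator_config_patch(field, value):
    """Build the JSON merge patch body for one field under spec."""
    return json.dumps({"spec": {field: value}})


def oc_patch_args(field, value, namespace="openshift-sriov-network-operator"):
    """Return the argument list for patching the default SriovOperatorConfig CR.

    The arguments mirror the oc patch command in this procedure. Passing the
    list to a process runner is left to the caller.
    """
    return [
        "oc", "patch", "sriovoperatorconfig", "default",
        "--type=merge",
        "-n", namespace,
        "--patch", operator_config_patch(field, value),
    ]


if __name__ == "__main__":
    # Example: the arguments that would disable the Network Resources Injector.
    print(oc_patch_args("enableInjector", False))
```

The same helper applies to the other boolean fields that this chapter patches with a merge patch, such as enableOperatorWebhook and disableDrain.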

23.3.1.6. Disabling or enabling the SR-IOV Network Operator admission controller webhook

To disable or enable the admission controller webhook, which is enabled by default, complete the following procedure.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

- You must have installed the SR-IOV Network Operator.

Procedure

Set the enableOperatorWebhook field. Replace <value> with false to disable the feature or true to enable it:

    $ oc patch sriovoperatorconfig default --type=merge \
      -n openshift-sriov-network-operator \
      --patch '{ "spec": { "enableOperatorWebhook": <value> } }'

Tip: You can alternatively apply the following YAML to update the Operator:

    apiVersion: sriovnetwork.openshift.io/v1
    kind: SriovOperatorConfig
    metadata:
      name: default
      namespace: openshift-sriov-network-operator
    spec:
      enableOperatorWebhook: <value>

23.3.1.7. Configuring a custom NodeSelector for the SR-IOV Network Config daemon

The SR-IOV Network Config daemon discovers and configures the SR-IOV network devices on cluster nodes. By default, it is deployed to all the worker nodes in the cluster. You can use node labels to specify on which nodes the SR-IOV Network Config daemon runs.

To specify the nodes where the SR-IOV Network Config daemon is deployed, complete the following procedure.

When you update the configDaemonNodeSelector field, the SR-IOV Network Config daemon is recreated on each selected node. While the daemon is recreated, cluster users are unable to apply any new SR-IOV Network node policy or create new SR-IOV pods.

Procedure

To update the node selector for the operator, enter the following command:

    $ oc patch sriovoperatorconfig default --type=json \
      -n openshift-sriov-network-operator \
      --patch '[{
          "op": "replace",
          "path": "/spec/configDaemonNodeSelector",
          "value": {<node_label>}
        }]'

Replace <node_label> with a label to apply as in the following example: "node-role.kubernetes.io/worker": "".

Tip: You can alternatively apply the following YAML to update the Operator:

    apiVersion: sriovnetwork.openshift.io/v1
    kind: SriovOperatorConfig
    metadata:
      name: default
      namespace: openshift-sriov-network-operator
    spec:
      configDaemonNodeSelector:
        <node_label>

23.3.1.8. Configuring the SR-IOV Network Operator for single node installations

By default, the SR-IOV Network Operator drains workloads from a node before every policy change. The Operator performs this action to ensure that there are no workloads using the virtual functions before the reconfiguration.

For installations on a single node, there are no other nodes to receive the workloads. As a result, the Operator must be configured not to drain the workloads from the single node.

After performing the following procedure to disable draining workloads, you must remove any workload that uses an SR-IOV network interface before you change any SR-IOV network node policy.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

- You must have installed the SR-IOV Network Operator.

Procedure

To set the disableDrain field to true, enter the following command:

    $ oc patch sriovoperatorconfig default --type=merge \
      -n openshift-sriov-network-operator \
      --patch '{ "spec": { "disableDrain": true } }'

Tip: You can alternatively apply the following YAML to update the Operator:

    apiVersion: sriovnetwork.openshift.io/v1
    kind: SriovOperatorConfig
    metadata:
      name: default
      namespace: openshift-sriov-network-operator
    spec:
      disableDrain: true

23.3.1.9. Deploying the SR-IOV Operator for hosted control planes

Hosted control planes on the AWS platform is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

After you configure and deploy your hosting service cluster, you can create a subscription to the SR-IOV Operator on a hosted cluster. The SR-IOV pod runs on worker machines rather than the control plane.

Prerequisites

You must configure and deploy the hosted cluster on AWS. For more information, see Configuring the hosting cluster on AWS (Technology Preview).

Procedure

Create a namespace and an Operator group:

    apiVersion: v1
    kind: Namespace
    metadata:
      name: openshift-sriov-network-operator
    ---
    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: sriov-network-operators
      namespace: openshift-sriov-network-operator
    spec:
      targetNamespaces:
      - openshift-sriov-network-operator

Create a subscription to the SR-IOV Operator:

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: sriov-network-operator-subscription
      namespace: openshift-sriov-network-operator
    spec:
      channel: stable
      name: sriov-network-operator
      config:
        nodeSelector:
          node-role.kubernetes.io/worker: ""
      source: redhat-operators
      sourceNamespace: openshift-marketplace

Verification

To verify that the SR-IOV Operator is ready, run the following command and view the resulting output:

    $ oc get csv -n openshift-sriov-network-operator

Example output

    NAME                                         DISPLAY                   VERSION               REPLACES                                     PHASE
    sriov-network-operator.4.14.0-202211021237   SR-IOV Network Operator   4.14.0-202211021237   sriov-network-operator.4.14.0-202210290517   Succeeded

To verify that the SR-IOV pods are deployed, run the following command:

    $ oc get pods -n openshift-sriov-network-operator

23.3.2. Next steps

23.4. Configuring an SR-IOV network device

You can configure a Single Root I/O Virtualization (SR-IOV) device in your cluster.

23.4.1. SR-IOV network node configuration object

You specify the SR-IOV network device configuration for a node by creating an SR-IOV network node policy. The API object for the policy is part of the sriovnetwork.openshift.io API group.

The following YAML describes an SR-IOV network node policy:

    apiVersion: sriovnetwork.openshift.io/v1
    kind: SriovNetworkNodePolicy
    metadata:
      name: <name> # 1
      namespace: openshift-sriov-network-operator # 2
    spec:
      resourceName: <sriov_resource_name> # 3
      nodeSelector:
        feature.node.kubernetes.io/network-sriov.capable: "true" # 4
      priority: <priority> # 5
      mtu: <mtu> # 6
      needVhostNet: false # 7
      numVfs: <num> # 8
      externallyManaged: false # 9
      nicSelector: # 10
        vendor: "<vendor_code>" # 11
        deviceID: "<device_id>" # 12
        pfNames: ["<pf_name>", ...] # 13
        rootDevices: ["<pci_bus_id>", ...] # 14
        netFilter: "<filter_string>" # 15
      deviceType: <device_type> # 16
      isRdma: false
      linkType: <link_type>
      eSwitchMode: "switchdev"
      excludeTopology: false

- 1

- The name for the custom resource object.

- 2

- The namespace where the SR-IOV Network Operator is installed.

- 3

- The resource name of the SR-IOV network device plugin. You can create multiple SR-IOV network node policies for a resource name.

When specifying a name, be sure to use the accepted syntax expression

^[a-zA-Z0-9_]+$in theresourceName. - 4

- The node selector specifies the nodes to configure. Only SR-IOV network devices on the selected nodes are configured. The SR-IOV Container Network Interface (CNI) plugin and device plugin are deployed on selected nodes only.Important

The SR-IOV Network Operator applies node network configuration policies to nodes in sequence. Before applying node network configuration policies, the SR-IOV Network Operator checks if the machine config pool (MCP) for a node is in an unhealthy state such as

DegradedorUpdating. If a node is in an unhealthy MCP, the process of applying node network configuration policies to all targeted nodes in the cluster pauses until the MCP returns to a healthy state.To avoid a node in an unhealthy MCP from blocking the application of node network configuration policies to other nodes, including nodes in other MCPs, you must create a separate node network configuration policy for each MCP.

- 5

- Optional: The priority is an integer value between

0and99. A smaller value receives higher priority. For example, a priority of10is a higher priority than99. The default value is99. - 6

- Optional: The maximum transmission unit (MTU) of the physical function and all its virtual functions. The maximum MTU value can vary for different network interface controller (NIC) models.Important

If you want to create virtual function on the default network interface, ensure that the MTU is set to a value that matches the cluster MTU.

If you want to modify the MTU of a single virtual function while the function is assigned to a pod, leave the MTU value blank in the SR-IOV network node policy. Otherwise, the SR-IOV Network Operator reverts the MTU of the virtual function to the MTU value defined in the SR-IOV network node policy, which might trigger a node drain.

- 7

- Optional: Set

needVhostNettotrueto mount the/dev/vhost-netdevice in the pod. Use the mounted/dev/vhost-netdevice with Data Plane Development Kit (DPDK) to forward traffic to the kernel network stack. - 8

- The number of the virtual functions (VF) to create for the SR-IOV physical network device. For an Intel network interface controller (NIC), the number of VFs cannot be larger than the total VFs supported by the device. For a Mellanox NIC, the number of VFs cannot be larger than

127. - 9

- The externallyManaged field indicates whether the SR-IOV Network Operator manages all, or only a subset of, the virtual functions (VFs). With the value set to false, the SR-IOV Network Operator manages and configures all VFs on the PF.

Note

When externallyManaged is set to true, you must manually create the virtual functions (VFs) on the physical function (PF) before applying the SriovNetworkNodePolicy resource. If the VFs are not pre-created, the SR-IOV Network Operator’s webhook blocks the policy request.

When externallyManaged is set to false, the SR-IOV Network Operator automatically creates and manages the VFs, including resetting them if necessary.

To use VFs on the host system, you must create them through NMState, and set externallyManaged to true. In this mode, the SR-IOV Network Operator does not modify the PF or the manually managed VFs, except for those explicitly defined in the nicSelector field of your policy. However, the SR-IOV Network Operator continues to manage VFs that are used as pod secondary interfaces.

- 10

- The NIC selector identifies the device to which this resource applies. You do not have to specify values for all the parameters. It is recommended to identify the network device with enough precision to avoid selecting a device unintentionally.

If you specify rootDevices, you must also specify a value for vendor, deviceID, or pfNames. If you specify both pfNames and rootDevices at the same time, ensure that they refer to the same device. If you specify a value for netFilter, then you do not need to specify any other parameter because a network ID is unique.

- 11

- Optional: The hexadecimal vendor identifier of the SR-IOV network device. The only allowed values are 8086 (Intel) and 15b3 (Mellanox).

- 12

- Optional: The hexadecimal device identifier of the SR-IOV network device. For example, 101b is the device ID for a Mellanox ConnectX-6 device.

- 13

- Optional: An array of one or more physical function (PF) names the resource must apply to.

- 14

- Optional: An array of one or more PCI bus addresses the resource must apply to. For example, 0000:02:00.1.

- 15

- Optional: The platform-specific network filter. The only supported platform is Red Hat OpenStack Platform (RHOSP). Acceptable values use the following format: openstack/NetworkID:xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx. Replace xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx with the value from the /var/config/openstack/latest/network_data.json metadata file. This filter ensures that VFs are associated with a specific OpenStack network. The Operator uses this filter to map the VFs to the appropriate network based on metadata provided by the OpenStack platform.

- 16

- Optional: The driver to configure for the VFs created from this resource. The only allowed values are netdevice and vfio-pci. The default value is netdevice.

For a Mellanox NIC to work in DPDK mode on bare metal nodes, use the netdevice driver type and set isRdma to true.

- 17

- Optional: Configures whether to enable remote direct memory access (RDMA) mode. The default value is false.

If the isRdma parameter is set to true, you can continue to use the RDMA-enabled VF as a normal network device. A device can be used in either mode.

Set isRdma to true and additionally set needVhostNet to true to configure a Mellanox NIC for use with Fast Datapath DPDK applications.

Note

You cannot set the isRdma parameter to true for Intel NICs.

- 18

- Optional: The link type for the VFs. The default value is eth for Ethernet. Change this value to ib for InfiniBand.

When linkType is set to ib, isRdma is automatically set to true by the SR-IOV Network Operator webhook. When linkType is set to ib, deviceType should not be set to vfio-pci.

Do not set linkType to eth for SriovNetworkNodePolicy, because this can lead to an incorrect number of available devices reported by the device plugin.

- 19

- Optional: To enable hardware offloading, you must set the eSwitchMode field to "switchdev". For more information about hardware offloading, see "Configuring hardware offloading".

- 20

- Optional: To exclude advertising an SR-IOV network resource’s NUMA node to the Topology Manager, set the value to true. The default value is false.
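Several of the field constraints listed above can be checked before a policy is submitted. The following Python sketch is illustrative only: the function name `lint_node_policy_spec` and the message wording are hypothetical, the spec is assumed to be already loaded into a dictionary, and the checks cover only rules stated in this section. The Operator's admission webhook remains the authority.

```python
from typing import Any

ALLOWED_VENDORS = {"8086": "Intel", "15b3": "Mellanox"}
ALLOWED_DEVICE_TYPES = {"netdevice", "vfio-pci"}


def lint_node_policy_spec(spec: dict[str, Any]) -> list[str]:
    """Return the problems found in a SriovNetworkNodePolicy spec dictionary."""
    problems: list[str] = []
    selector = spec.get("nicSelector", {})

    # priority is documented as a value between 0 and 99, defaulting to 99.
    if not 0 <= spec.get("priority", 99) <= 99:
        problems.append("priority must be between 0 and 99")

    vendor = selector.get("vendor")
    if vendor is not None and vendor not in ALLOWED_VENDORS:
        problems.append("vendor must be 8086 (Intel) or 15b3 (Mellanox)")

    device_type = spec.get("deviceType", "netdevice")
    if device_type not in ALLOWED_DEVICE_TYPES:
        problems.append("deviceType must be netdevice or vfio-pci")

    # rootDevices needs at least one of vendor, deviceID, or pfNames.
    if selector.get("rootDevices") and not any(
        selector.get(key) for key in ("vendor", "deviceID", "pfNames")
    ):
        problems.append("rootDevices requires vendor, deviceID, or pfNames")

    if spec.get("linkType", "eth") == "ib" and device_type == "vfio-pci":
        problems.append("linkType ib should not be combined with vfio-pci")

    if spec.get("isRdma", False) and vendor == "8086":
        problems.append("isRdma cannot be true for Intel NICs")

    if vendor == "15b3" and spec.get("numVfs", 0) > 127:
        problems.append("a Mellanox NIC cannot have more than 127 VFs")

    return problems
```

An empty list means that none of these particular rules were violated; it does not guarantee that the policy is accepted.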

23.4.1.1. SR-IOV network node configuration examples

The following example describes the configuration for an InfiniBand device:

Example configuration for an InfiniBand device

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetworkNodePolicy
metadata:
  name: policy-ib-net-1
  namespace: openshift-sriov-network-operator
spec:
  resourceName: ibnic1
  nodeSelector:
    feature.node.kubernetes.io/network-sriov.capable: "true"
  numVfs: 4
  nicSelector:
    vendor: "15b3"
    deviceID: "101b"
    rootDevices:
      - "0000:19:00.0"
  linkType: ib
  isRdma: true

The following example describes the configuration for an SR-IOV network device in a RHOSP virtual machine:

Example configuration for an SR-IOV device in a virtual machine

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetworkNodePolicy
metadata:
  name: policy-sriov-net-openstack-1
  namespace: openshift-sriov-network-operator
spec:
  resourceName: sriovnic1
  nodeSelector:
    feature.node.kubernetes.io/network-sriov.capable: "true"
  numVfs: 1
  nicSelector:
    vendor: "15b3"
    deviceID: "101b"
    netFilter: "openstack/NetworkID:ea24bd04-8674-4f69-b0ee-fa0b3bd20509"

23.4.1.2. Virtual function (VF) partitioning for SR-IOV devices

In some cases, you might want to split virtual functions (VFs) from the same physical function (PF) into multiple resource pools. For example, you might want some of the VFs to load with the default driver and the remaining VFs load with the vfio-pci driver. In such a deployment, the pfNames selector in your SriovNetworkNodePolicy custom resource (CR) can be used to specify a range of VFs for a pool using the following format: <pfname>#<first_vf>-<last_vf>.

For example, the following YAML shows the selector for an interface named netpf0 with VF 2 through 7:

pfNames: ["netpf0#2-7"]

- netpf0 is the PF interface name.

- 2 is the first VF index (0-based) that is included in the range.

- 7 is the last VF index (0-based) that is included in the range.
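The range selector can be parsed and checked mechanically. The following Python sketch assumes the `<pfname>#<first_vf>-<last_vf>` format described above; the names `VfRange`, `parse_pf_selector`, and `ranges_overlap` are hypothetical helpers, not part of the Operator. It rejects a range whose first index exceeds its last or whose last index exceeds `numVfs - 1`, and it reports whether two ranges on the same PF overlap.

```python
import re
from typing import NamedTuple


class VfRange(NamedTuple):
    pf_name: str
    first_vf: int
    last_vf: int


# <pfname>#<first_vf>-<last_vf>, for example netpf0#2-7
_SELECTOR = re.compile(r"^(?P<pf>[^#]+)#(?P<first>\d+)-(?P<last>\d+)$")


def parse_pf_selector(selector: str, num_vfs: int) -> VfRange:
    """Parse one pfNames entry and check it against the policy's numVfs."""
    match = _SELECTOR.match(selector)
    if match is None:
        raise ValueError(f"{selector!r} is not in <pfname>#<first_vf>-<last_vf> form")
    first, last = int(match["first"]), int(match["last"])
    if first > last:
        raise ValueError("<first_vf> must not be larger than <last_vf>")
    if last > num_vfs - 1:
        raise ValueError(f"VF index must be in the range 0 to {num_vfs - 1}")
    return VfRange(match["pf"], first, last)


def ranges_overlap(a: VfRange, b: VfRange) -> bool:
    """Return True if two ranges select at least one common VF on the same PF."""
    return a.pf_name == b.pf_name and a.first_vf <= b.last_vf and b.first_vf <= a.last_vf
```

For example, `parse_pf_selector("netpf0#2-7", 8)` succeeds, whereas `parse_pf_selector("netpf0#2-8", 8)` raises `ValueError` because index 8 is outside the range 0 to 7.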

You can select VFs from the same PF by using different policy CRs if the following requirements are met:

- The numVfs value must be identical for policies that select the same PF.

- The VF index must be in the range of 0 to <numVfs>-1. For example, if you have a policy with numVfs set to 8, then the <first_vf> value must not be smaller than 0, and the <last_vf> must not be larger than 7.

- The VF ranges in different policies must not overlap.

- The <first_vf> must not be larger than the <last_vf>.

The following example illustrates NIC partitioning for an SR-IOV device.

The policy policy-net-1 defines a resource pool net1 that contains VF 0 of PF netpf0 with the default VF driver. The policy policy-net-1-dpdk defines a resource pool net1dpdk that contains VFs 8 to 15 of PF netpf0 with the vfio-pci VF driver.

Policy policy-net-1:

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetworkNodePolicy
metadata:
  name: policy-net-1
  namespace: openshift-sriov-network-operator
spec:
  resourceName: net1
  nodeSelector:
    feature.node.kubernetes.io/network-sriov.capable: "true"
  numVfs: 16
  nicSelector:
    pfNames: ["netpf0#0-0"]
  deviceType: netdevice

Policy policy-net-1-dpdk:

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetworkNodePolicy
metadata:
  name: policy-net-1-dpdk
  namespace: openshift-sriov-network-operator
spec:
  resourceName: net1dpdk
  nodeSelector:
    feature.node.kubernetes.io/network-sriov.capable: "true"
  numVfs: 16
  nicSelector:
    pfNames: ["netpf0#8-15"]
  deviceType: vfio-pci

Verifying that the interface is successfully partitioned

Confirm that the interface is partitioned into virtual functions (VFs) for the SR-IOV device by running the following command:

$ ip link show <interface>

Replace <interface> with the interface that you specified when partitioning to VFs for the SR-IOV device, for example, ens3f1.

Example output

5: ens3f1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP mode DEFAULT group default qlen 1000
    link/ether 3c:fd:fe:d1:bc:01 brd ff:ff:ff:ff:ff:ff
    vf 0 link/ether 5a:e7:88:25:ea:a0 brd ff:ff:ff:ff:ff:ff, spoof checking on, link-state auto, trust off
    vf 1 link/ether 3e:1d:36:d7:3d:49 brd ff:ff:ff:ff:ff:ff, spoof checking on, link-state auto, trust off
    vf 2 link/ether ce:09:56:97:df:f9 brd ff:ff:ff:ff:ff:ff, spoof checking on, link-state auto, trust off
    vf 3 link/ether 5e:91:cf:88:d1:38 brd ff:ff:ff:ff:ff:ff, spoof checking on, link-state auto, trust off
    vf 4 link/ether e6:06:a1:96:2f:de brd ff:ff:ff:ff:ff:ff, spoof checking on, link-state auto, trust off

23.4.2. Configuring SR-IOV network devices

The SR-IOV Network Operator adds the SriovNetworkNodePolicy.sriovnetwork.openshift.io custom resource definition (CRD) to OpenShift Container Platform. You can configure an SR-IOV network device by creating a SriovNetworkNodePolicy custom resource (CR).

When applying the configuration specified in a SriovNetworkNodePolicy object, the SR-IOV Network Operator might drain the nodes, and in some cases, reboot nodes.

It might take several minutes for a configuration change to apply.
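Because a change can take several minutes to apply, a script can poll the node state instead of checking by hand. The following Python sketch wraps the `oc get sriovnetworknodestates ... -o jsonpath='{.status.syncStatus}'` command that this procedure uses for verification. The helper names `oc_sync_status` and `wait_for_sync`, the timeout, and the polling interval are illustrative assumptions, and the sketch treats only the `Succeeded` value as done.

```python
import subprocess
import time
from typing import Callable


def oc_sync_status(node_name: str) -> str:
    """Read .status.syncStatus for one node by calling the oc CLI."""
    result = subprocess.run(
        [
            "oc", "get", "sriovnetworknodestates",
            "-n", "openshift-sriov-network-operator",
            node_name,
            "-o", "jsonpath={.status.syncStatus}",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


def wait_for_sync(
    get_status: Callable[[], str],
    timeout_s: float = 600.0,
    interval_s: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll until the status is Succeeded; return False if the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while True:
        if get_status() == "Succeeded":
            return True
        if time.monotonic() >= deadline:
            return False
        sleep(interval_s)
```

A call such as `wait_for_sync(lambda: oc_sync_status("worker-0"))` would block until the node reports `Succeeded` or ten minutes pass; `worker-0` is a placeholder node name.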

Prerequisites

- You installed the OpenShift CLI (oc).

- You have access to the cluster as a user with the cluster-admin role.

- You have installed the SR-IOV Network Operator.

- You have enough available nodes in your cluster to handle the evicted workload from drained nodes.

- You have not selected any control plane nodes for SR-IOV network device configuration.

Procedure

- Create an SriovNetworkNodePolicy object, and then save the YAML in the <name>-sriov-node-network.yaml file. Replace <name> with the name for this configuration.

- Optional: Label the SR-IOV capable cluster nodes with SriovNetworkNodePolicy.Spec.NodeSelector if they are not already labeled. For more information about labeling nodes, see "Understanding how to update labels on nodes".

- Create the SriovNetworkNodePolicy object. When running the following command, replace <name> with the name for this configuration:

$ oc create -f <name>-sriov-node-network.yaml

After applying the configuration update, all the pods in the sriov-network-operator namespace transition to the Running status.

- To verify that the SR-IOV network device is configured, enter the following command. Replace <node_name> with the name of a node with the SR-IOV network device that you just configured.

$ oc get sriovnetworknodestates -n openshift-sriov-network-operator <node_name> -o jsonpath='{.status.syncStatus}'

23.4.2.1. Configuring parallel node draining during SR-IOV network policy updates

By default, the SR-IOV Network Operator drains workloads from a node before every policy change. The Operator completes this action, one node at a time, to ensure that no workloads are affected by the reconfiguration.

In large clusters, draining nodes sequentially can be time-consuming, taking hours or even days. In time-sensitive environments, you can enable parallel node draining in an SriovNetworkPoolConfig custom resource (CR) for faster rollouts of SR-IOV network configurations.

To configure parallel draining, use the SriovNetworkPoolConfig CR to create a node pool. You can then add nodes to the pool and define the maximum number of nodes in the pool that the Operator can drain in parallel. With this approach, you can enable parallel draining for faster reconfiguration while ensuring you still have enough nodes remaining in the pool to handle any running workloads.

A node can belong to only one SR-IOV network pool configuration. If a node is not part of a pool, it is added to a virtual, default pool that is configured to drain one node at a time only.

The node might restart during the draining process.
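The effect of the pool's maximum-unavailable setting can be pictured as splitting the pool into groups of nodes. The following Python sketch is a simplified model, not the Operator's scheduling logic: the helper names are hypothetical, the Operator does not necessarily drain in fixed waves, and rounding percentages down is an assumption made for illustration.

```python
from typing import List, Union


def max_parallel_drains(pool_size: int, max_unavailable: Union[int, str]) -> int:
    """Resolve an integer or percentage limit to a node count."""
    if isinstance(max_unavailable, str):
        percent = int(max_unavailable.rstrip("%"))
        # Assumption: a percentage of the pool is rounded down.
        limit = pool_size * percent // 100
    else:
        limit = max_unavailable
    if limit < 1:
        raise ValueError("the limit must allow at least one node to drain")
    return limit


def drain_batches(nodes: List[str], max_unavailable: Union[int, str]) -> List[List[str]]:
    """Group nodes so that no group exceeds the parallel drain limit."""
    size = max_parallel_drains(len(nodes), max_unavailable)
    return [nodes[i:i + size] for i in range(0, len(nodes), size)]
```

With 10 nodes and a limit of 2, the model yields five groups of two nodes, so 8 nodes remain available for workloads at any time.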

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

- Install the SR-IOV Network Operator.

- Ensure that nodes have hardware that supports SR-IOV.

Procedure

- Create a SriovNetworkPoolConfig resource:

  - Create a YAML file that defines the SriovNetworkPoolConfig resource:

Example sriov-nw-pool.yaml file

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetworkPoolConfig
metadata:
  name: pool-1 # 1
  namespace: openshift-sriov-network-operator # 2
spec:
  maxUnavailable: 2 # 3
  nodeSelector: # 4
    matchLabels:
      node-role.kubernetes.io/worker: ""

- 1

- Specify the name of the SriovNetworkPoolConfig object.

- 2

- Specify the namespace where the SR-IOV Network Operator is installed.

- 3

- Specify an integer number or percentage value for nodes that can be unavailable in the pool during an update. For example, if you have 10 nodes and you set the maximum unavailable value to 2, then only 2 nodes can be drained in parallel at any time, leaving 8 nodes for handling workloads.

- 4

- Specify the nodes to add to the pool by using the node selector. This example adds all nodes with the worker role to the pool.

  - Create the SriovNetworkPoolConfig resource by running the following command:

$ oc create -f sriov-nw-pool.yaml

- Create the sriov-test namespace by running the following command:

$ oc create namespace sriov-test

- Create a SriovNetworkNodePolicy resource:

  - Create a YAML file that defines the SriovNetworkNodePolicy resource:

Example sriov-node-policy.yaml file

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetworkNodePolicy
metadata:
  name: sriov-nic-1
  namespace: openshift-sriov-network-operator
spec:
  deviceType: netdevice
  nicSelector:
    pfNames: ["ens1"]
  nodeSelector:
    node-role.kubernetes.io/worker: ""
  numVfs: 5
  priority: 99
  resourceName: sriov_nic_1

  - Create the SriovNetworkNodePolicy resource by running the following command:

$ oc create -f sriov-node-policy.yaml

- Create a SriovNetwork resource:

  - Create a YAML file that defines the SriovNetwork resource:

Example sriov-network.yaml file

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetwork
metadata:
  name: sriov-nic-1
  namespace: openshift-sriov-network-operator
spec:
  linkState: auto
  networkNamespace: sriov-test
  resourceName: sriov_nic_1
  capabilities: '{ "mac": true, "ips": true }'
  ipam: '{ "type": "static" }'

  - Create the SriovNetwork resource by running the following command:

$ oc create -f sriov-network.yaml

Verification

View the node pool you created by running the following command:

$ oc get sriovNetworkpoolConfig -n openshift-sriov-network-operator

Example output

NAME     AGE
pool-1   67s

In this example, pool-1 contains all the nodes with the worker role.

To demonstrate the node draining process by using the example scenario from the previous procedure, complete the following steps:

- Update the number of virtual functions in the SriovNetworkNodePolicy resource to trigger workload draining in the cluster:

$ oc patch SriovNetworkNodePolicy sriov-nic-1 -n openshift-sriov-network-operator --type merge -p '{"spec": {"numVfs": 4}}'

- Monitor the draining status on the target cluster by running the following command:

$ oc get sriovNetworkNodeState -n openshift-sriov-network-operator

Example output

NAMESPACE                          NAME       SYNC STATUS   DESIRED SYNC STATE   CURRENT SYNC STATE   AGE
openshift-sriov-network-operator   worker-0   InProgress    Drain_Required       DrainComplete        3d10h
openshift-sriov-network-operator   worker-1   InProgress    Drain_Required       DrainComplete        3d10h

When the draining process is complete, the SYNC STATUS changes to Succeeded, and the DESIRED SYNC STATE and CURRENT SYNC STATE values return to Idle.

Example output

NAMESPACE                          NAME       SYNC STATUS   DESIRED SYNC STATE   CURRENT SYNC STATE   AGE
openshift-sriov-network-operator   worker-0   Succeeded     Idle                 Idle                 3d10h
openshift-sriov-network-operator   worker-1   Succeeded     Idle                 Idle                 3d10h

23.4.3. Troubleshooting SR-IOV configuration

After following the procedure to configure an SR-IOV network device, the following sections address some error conditions.

To display the state of nodes, run the following command:

$ oc get sriovnetworknodestates -n openshift-sriov-network-operator <node_name>

where: <node_name> specifies the name of a node with an SR-IOV network device.

Error output: Cannot allocate memory

"lastSyncError": "write /sys/bus/pci/devices/0000:3b:00.1/sriov_numvfs: cannot allocate memory"

When a node indicates that it cannot allocate memory, check the following items:

- Confirm that global SR-IOV settings are enabled in the BIOS for the node.

- Confirm that VT-d is enabled in the BIOS for the node.

23.4.4. Assigning an SR-IOV network to a VRF

As a cluster administrator, you can assign an SR-IOV network interface to your VRF domain by using the CNI VRF plugin.

To do this, add the VRF configuration to the optional metaPlugins parameter of the SriovNetwork resource.

Applications that use VRFs need to bind to a specific device. The common usage is to use the SO_BINDTODEVICE option for a socket. SO_BINDTODEVICE binds the socket to a device that is specified in the passed interface name, for example, eth1. To use SO_BINDTODEVICE, the application must have CAP_NET_RAW capabilities.

Using a VRF through the ip vrf exec command is not supported in OpenShift Container Platform pods. To use VRF, bind applications directly to the VRF interface.
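As an illustration of binding directly to a device, the following Python sketch sets `SO_BINDTODEVICE` on a socket. The helper names are hypothetical, and the device name `red` is only a placeholder for your VRF interface. The call succeeds only on Linux and only when the process has the required capability, as noted above.

```python
import socket

# socket.SO_BINDTODEVICE is exposed on Linux; 25 is its value in the Linux headers.
SO_BINDTODEVICE = getattr(socket, "SO_BINDTODEVICE", 25)


def bind_to_device(sock, device_name: str) -> None:
    """Bind a socket to a named interface, such as a VRF device."""
    sock.setsockopt(socket.SOL_SOCKET, SO_BINDTODEVICE, device_name.encode())


def open_bound_tcp_socket(device_name: str) -> socket.socket:
    """Create a TCP socket that is bound to the given device."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        bind_to_device(sock, device_name)
    except OSError:
        # Typically a missing capability or an unknown device name.
        sock.close()
        raise
    return sock
```

An application inside the pod could call `open_bound_tcp_socket("red")` and then connect or listen as usual; traffic on that socket then uses the routing table of the device it is bound to.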

23.4.4.1. Creating an additional SR-IOV network attachment with the CNI VRF plugin

The SR-IOV Network Operator manages additional network definitions. When you specify an additional SR-IOV network to create, the SR-IOV Network Operator creates the NetworkAttachmentDefinition custom resource (CR) automatically.

Do not edit NetworkAttachmentDefinition custom resources that the SR-IOV Network Operator manages. Doing so might disrupt network traffic on your additional network.

To create an additional SR-IOV network attachment with the CNI VRF plugin, perform the following procedure.

Prerequisites

- Install the OpenShift Container Platform CLI (oc).

- Log in to the OpenShift Container Platform cluster as a user with cluster-admin privileges.

Procedure

- Create the SriovNetwork custom resource (CR) for the additional SR-IOV network attachment and insert the metaPlugins configuration, as in the following example CR. Save the YAML as the file sriov-network-attachment.yaml.

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetwork
metadata:
  name: example-network
  namespace: additional-sriov-network-1
spec:
  ipam: |
    {
      "type": "host-local",
      "subnet": "10.56.217.0/24",
      "rangeStart": "10.56.217.171",
      "rangeEnd": "10.56.217.181",
      "routes": [{
        "dst": "0.0.0.0/0"
      }],
      "gateway": "10.56.217.1"
    }
  vlan: 0
  resourceName: intelnics
  metaPlugins: |
    {
      "type": "vrf",
      "vrfname": "example-vrf-name"
    }

- Create the SriovNetwork resource:

$ oc create -f sriov-network-attachment.yaml

Verifying that the NetworkAttachmentDefinition CR is successfully created

Confirm that the SR-IOV Network Operator created the NetworkAttachmentDefinition CR by running the following command:

$ oc get network-attachment-definitions -n <namespace>

Replace <namespace> with the namespace that you specified when configuring the network attachment, for example, additional-sriov-network-1.

Example output

NAME                         AGE
additional-sriov-network-1   14m

Note

There might be a delay before the SR-IOV Network Operator creates the CR.

Verifying that the additional SR-IOV network attachment is successful

To verify that the VRF CNI is correctly configured and the additional SR-IOV network attachment is attached, do the following:

- Create an SR-IOV network that uses the VRF CNI.

- Assign the network to a pod.

- Verify that the pod network attachment is connected to the SR-IOV additional network. Remote shell into the pod and run the following command:

$ ip vrf show

Example output

Name              Table
-----------------------
red                 10

- Confirm the VRF interface is master of the secondary interface:

$ ip link

Example output

...
5: net1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master red state UP mode
...

23.4.5. Exclude the SR-IOV network topology for NUMA-aware scheduling

You can exclude advertising the Non-Uniform Memory Access (NUMA) node for the SR-IOV network to the Topology Manager for more flexible SR-IOV network deployments during NUMA-aware pod scheduling.

In some scenarios, it is a priority to maximize CPU and memory resources for a pod on a single NUMA node. By not providing a hint to the Topology Manager about the NUMA node for the pod’s SR-IOV network resource, the Topology Manager can deploy the SR-IOV network resource and the pod CPU and memory resources to different NUMA nodes. This can add to network latency because of the data transfer between NUMA nodes. However, it is acceptable in scenarios when workloads require optimal CPU and memory performance.

For example, consider a compute node, compute-1, that features two NUMA nodes: numa0 and numa1. The SR-IOV-enabled NIC is present on numa0. The CPUs available for pod scheduling are present on numa1 only. By setting the excludeTopology specification to true, the Topology Manager can assign CPU and memory resources for the pod to numa1 and can assign the SR-IOV network resource for the same pod to numa0. This is only possible when you set the excludeTopology specification to true. Otherwise, the Topology Manager attempts to place all resources on the same NUMA node.

23.4.5.1. Excluding the SR-IOV network topology for NUMA-aware scheduling

To exclude advertising the SR-IOV network resource’s Non-Uniform Memory Access (NUMA) node to the Topology Manager, you can configure the excludeTopology specification in the SriovNetworkNodePolicy custom resource. Use this configuration for more flexible SR-IOV network deployments during NUMA-aware pod scheduling.

Prerequisites

- You have installed the OpenShift CLI (oc).

- You have configured the CPU Manager policy to static. For more information about CPU Manager, see the Additional resources section.

- You have configured the Topology Manager policy to single-numa-node.

- You have installed the SR-IOV Network Operator.

Procedure

- Create the SriovNetworkNodePolicy CR:

  - Save the following YAML in the sriov-network-node-policy.yaml file, replacing values in the YAML to match your environment:

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetworkNodePolicy
metadata:
  name: <policy_name>
  namespace: openshift-sriov-network-operator
spec:
  resourceName: sriovnuma0 # 1
  nodeSelector:
    kubernetes.io/hostname: <node_name>
  numVfs: <number_of_Vfs>
  nicSelector: # 2
    vendor: "<vendor_ID>"
    deviceID: "<device_ID>"
  deviceType: netdevice
  excludeTopology: true # 3

- 1

- The resource name of the SR-IOV network device plugin. This YAML uses a sample resourceName value.

- 2

- Identify the device for the Operator to configure by using the NIC selector.

- 3

- To exclude advertising the NUMA node for the SR-IOV network resource to the Topology Manager, set the value to true. The default value is false.

Note

If multiple SriovNetworkNodePolicy resources target the same SR-IOV network resource, the SriovNetworkNodePolicy resources must have the same value for the excludeTopology specification. Otherwise, the conflicting policy is rejected.

  - Create the SriovNetworkNodePolicy resource by running the following command:

$ oc create -f sriov-network-node-policy.yaml

Example output

sriovnetworknodepolicy.sriovnetwork.openshift.io/policy-for-numa-0 created

- Create the SriovNetwork CR:

  - Save the following YAML in the sriov-network.yaml file, replacing values in the YAML to match your environment:

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetwork
metadata:
  name: sriov-numa-0-network # 1
  namespace: openshift-sriov-network-operator
spec:
  resourceName: sriovnuma0 # 2
  networkNamespace: <namespace> # 3
  ipam: |- # 4
    {
      "type": "<ipam_type>"
    }

- 1

- Replace sriov-numa-0-network with the name for the SR-IOV network resource.

- 2

- Specify the resource name for the SriovNetworkNodePolicy CR from the previous step. This YAML uses a sample resourceName value.

- 3

- Enter the namespace for your SR-IOV network resource.

- 4

- Enter the IP address management configuration for the SR-IOV network.

  - Create the SriovNetwork resource by running the following command:

$ oc create -f sriov-network.yaml

Example output

sriovnetwork.sriovnetwork.openshift.io/sriov-numa-0-network created

- Create a pod and assign the SR-IOV network resource from the previous step:

  - Save the following YAML in the sriov-network-pod.yaml file, replacing values in the YAML to match your environment:

apiVersion: v1
kind: Pod
metadata:
  name: <pod_name>
  annotations:
    k8s.v1.cni.cncf.io/networks: |-
      [
        {
          "name": "sriov-numa-0-network"
        }
      ]
spec:
  containers:
  - name: <container_name>
    image: <image>
    imagePullPolicy: IfNotPresent
    command: ["sleep", "infinity"]

The name value in the annotation is the name of the SriovNetwork resource that uses the SriovNetworkNodePolicy resource.

  - Create the Pod resource by running the following command:

$ oc create -f sriov-network-pod.yaml

Example output

pod/example-pod created

Verification

- Verify the status of the pod by running the following command, replacing <pod_name> with the name of the pod:

$ oc get pod <pod_name>

Example output

NAME                                     READY   STATUS    RESTARTS   AGE
test-deployment-sriov-76cbbf4756-k9v72   1/1     Running   0          45h

- Open a debug session with the target pod to verify that the SR-IOV network resources are deployed to a different NUMA node than the memory and CPU resources.

  - Open a debug session with the pod by running the following command, replacing <pod_name> with the target pod name:

$ oc debug pod/<pod_name>

  - Set /host as the root directory within the debug shell. The debug pod mounts the root file system from the host in /host within the pod. By changing the root directory to /host, you can run binaries from the host file system:

$ chroot /host

  - View information about the CPU allocation by running the following commands:

$ lscpu | grep NUMA

Example output

NUMA node(s):        2
NUMA node0 CPU(s):   0,2,4,6,8,10,12,14,16,18,...
NUMA node1 CPU(s):   1,3,5,7,9,11,13,15,17,19,...

$ cat /proc/self/status | grep Cpus

Example output

Cpus_allowed:       aa
Cpus_allowed_list:  1,3,5,7

$ cat /sys/class/net/net1/device/numa_node

Example output

0

In this example, CPUs 1, 3, 5, and 7 are allocated to NUMA node1 but the SR-IOV network resource can use the NIC in NUMA node0.

If the excludeTopology specification is set to True, it is possible that the required resources exist in the same NUMA node.
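The relationship between the `Cpus_allowed` mask and the `Cpus_allowed_list` value in the example output can be reproduced with a few lines of Python. This is a sketch for reading the verification output; the function names are hypothetical, and the CPU-to-node mapping passed in is assumed to come from the `lscpu` output.

```python
from typing import Dict, Iterable, List, Set


def cpus_from_mask(mask: str) -> List[int]:
    """Decode a hexadecimal Cpus_allowed mask into a list of CPU numbers."""
    # Long masks are printed as comma-separated 32-bit groups.
    value = int(mask.replace(",", ""), 16)
    return [bit for bit in range(value.bit_length()) if (value >> bit) & 1]


def numa_nodes_of(cpus: Iterable[int], node_cpus: Dict[int, List[int]]) -> Set[int]:
    """Return the NUMA nodes that contain at least one of the given CPUs."""
    wanted = set(cpus)
    return {node for node, members in node_cpus.items() if wanted & set(members)}
```

Here `cpus_from_mask("aa")` returns `[1, 3, 5, 7]`, because hexadecimal `aa` is binary `10101010`. Comparing the resulting NUMA node with the content of `/sys/class/net/net1/device/numa_node` shows whether the pod CPUs and the NIC are on different nodes.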

23.4.6. Next steps

23.5. Configuring an SR-IOV Ethernet network attachment

You can configure an Ethernet network attachment for a Single Root I/O Virtualization (SR-IOV) device in the cluster.

23.5.1. Ethernet device configuration object

You can configure an Ethernet network device by defining an SriovNetwork object.

The following YAML describes an SriovNetwork object:

apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetwork
metadata:
  name: <name> # 1
  namespace: openshift-sriov-network-operator # 2
spec:
  resourceName: <sriov_resource_name> # 3
  networkNamespace: <target_namespace> # 4
  vlan: <vlan> # 5
  spoofChk: "<spoof_check>" # 6
  ipam: |- # 7
    {}
  linkState: <link_state> # 8
  maxTxRate: <max_tx_rate> # 9
  minTxRate: <min_tx_rate> # 10
  vlanQoS: <vlan_qos> # 11
  trust: "<trust_vf>" # 12
  capabilities: <capabilities> # 13

- 1

- A name for the object. The SR-IOV Network Operator creates a NetworkAttachmentDefinition object with the same name.

- 2

- The namespace where the SR-IOV Network Operator is installed.

- 3

- The value for the spec.resourceName parameter from the SriovNetworkNodePolicy object that defines the SR-IOV hardware for this additional network.

- 4

- The target namespace for the SriovNetwork object. Only pods in the target namespace can attach to the additional network.

- 5

- Optional: A Virtual LAN (VLAN) ID for the additional network. The integer value must be from 0 to 4095. The default value is 0.

- 6

- Optional: The spoof check mode of the VF. The allowed values are the strings "on" and "off".

Important

You must enclose the value that you specify in quotes, or the SR-IOV Network Operator rejects the object.

- 7

- A configuration object for the IPAM CNI plugin as a YAML block scalar. The plugin manages IP address assignment for the attachment definition.

- 8

- Optional: The link state of the virtual function (VF). Allowed values are enable, disable, and auto.

- 9

- Optional: A maximum transmission rate, in Mbps, for the VF.

- 10

- Optional: A minimum transmission rate, in Mbps, for the VF. This value must be less than or equal to the maximum transmission rate.

Note

Intel NICs do not support the minTxRate parameter. For more information, see BZ#1772847.

- 11

- Optional: An IEEE 802.1p priority level for the VF. The default value is 0.

- 12

- Optional: The trust mode of the VF. The allowed values are the strings "on" and "off".

Important

You must enclose the value that you specify in quotes, or the SR-IOV Network Operator rejects the object.

- 13

- Optional: The capabilities to configure for this additional network. You can specify '{ "ips": true }' to enable IP address support or '{ "mac": true }' to enable MAC address support.
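The value rules in the list above lend themselves to a quick pre-flight check. The following Python sketch is illustrative only: `lint_sriov_network_spec` is a hypothetical helper that operates on an already-parsed spec dictionary and covers only the constraints stated in this section. One practical reason for the quoting rule is that a YAML 1.1 parser reads an unquoted on or off as a boolean, so the check below flags non-string values.

```python
from typing import Any, Dict, List


def lint_sriov_network_spec(spec: Dict[str, Any]) -> List[str]:
    """Return the problems found in the spec section of an SriovNetwork object."""
    problems: List[str] = []

    vlan = spec.get("vlan", 0)  # documented default
    if not (isinstance(vlan, int) and 0 <= vlan <= 4095):
        problems.append("vlan must be an integer from 0 to 4095")

    # An unquoted on/off is parsed as a boolean by YAML 1.1 parsers.
    for field in ("spoofChk", "trust"):
        if field in spec and spec[field] not in ("on", "off"):
            problems.append(f'{field} must be the quoted string "on" or "off"')

    if "linkState" in spec and spec["linkState"] not in ("enable", "disable", "auto"):
        problems.append("linkState must be enable, disable, or auto")

    min_rate = spec.get("minTxRate")
    max_rate = spec.get("maxTxRate")
    if min_rate is not None and max_rate is not None and min_rate > max_rate:
        problems.append("minTxRate must be less than or equal to maxTxRate")

    return problems
```

As with any client-side check, an empty result only means that these specific rules hold; the Operator performs its own validation.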

23.5.1.1. Configuration of IP address assignment for an additional network

The IP address management (IPAM) Container Network Interface (CNI) plugin provides IP addresses for other CNI plugins.

You can use the following IP address assignment types:

- Static assignment.

- Dynamic assignment through a DHCP server. The DHCP server you specify must be reachable from the additional network.

- Dynamic assignment through the Whereabouts IPAM CNI plugin.

23.5.1.1.1. Static IP address assignment configuration

The following table describes the configuration for static IP address assignment:

| Field | Type | Description |
|---|---|---|
| type | | The IPAM address type. The value |
| addresses | | An array of objects specifying IP addresses to assign to the virtual interface. Both IPv4 and IPv6 IP addresses are supported. |
| | | An array of objects specifying routes to configure inside the pod. |
| | | Optional: An array of objects specifying the DNS configuration. |

The addresses array requires objects with the following fields:

| Field | Type | Description |
|---|---|---|
| address | | An IP address and network prefix that you specify. For example, if you specify |
| | | The default gateway to route egress network traffic to. |

| Field | Type | Description |
|---|---|---|
| | | The IP address range in CIDR format, such as |
| | | The gateway where network traffic is routed. |

| Field | Type | Description |
|---|---|---|
| | | An array of one or more IP addresses to send DNS queries to. |
| | | The default domain to append to a hostname. For example, if the domain is set to |
| | | An array of domain names to append to an unqualified hostname, such as |

Static IP address assignment configuration example

{
  "ipam": {
    "type": "static",
    "addresses": [
      {
        "address": "191.168.1.7/24"
      }
    ]
  }
}

23.5.1.1.2. Dynamic IP address (DHCP) assignment configuration

The following JSON describes the configuration for dynamic IP address assignment with DHCP.

A pod obtains its original DHCP lease when it is created. The lease must be periodically renewed by a minimal DHCP server deployment running on the cluster.

The SR-IOV Network Operator does not create a DHCP server deployment. The Cluster Network Operator is responsible for creating the minimal DHCP server deployment.

To trigger the deployment of the DHCP server, you must create a shim network attachment by editing the Cluster Network Operator configuration, as in the following example:

Example shim network attachment definition

apiVersion: operator.openshift.io/v1
kind: Network
metadata:
  name: cluster
spec:
  additionalNetworks:
  - name: dhcp-shim
    namespace: default
    type: Raw
    rawCNIConfig: |-
      {
        "name": "dhcp-shim",
        "cniVersion": "0.3.1",
        "type": "bridge",
        "ipam": {
          "type": "dhcp"
        }
      }
  # ...

| Field | Type | Description |
|---|---|---|
| type | | The IPAM address type. The value |

Dynamic IP address (DHCP) assignment configuration example

{
  "ipam": {
    "type": "dhcp"
  }
}

23.5.1.1.3. Dynamic IP address assignment configuration with Whereabouts

The Whereabouts CNI plugin allows the dynamic assignment of an IP address to an additional network without the use of a DHCP server.

The following table describes the configuration for dynamic IP address assignment with Whereabouts:

| Field | Type | Description |
|---|---|---|
| type | | The IPAM address type. The value |
| range | | An IP address and range in CIDR notation. IP addresses are assigned from within this range of addresses. |
| exclude | | Optional: A list of zero or more IP addresses and ranges in CIDR notation. IP addresses within an excluded address range are not assigned. |
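To see how a range and its exclusions interact, the following Python sketch lists the addresses that remain once the excluded blocks are removed. It uses only the standard `ipaddress` module; the function name `assignable_addresses` is hypothetical, and skipping the network and broadcast addresses is an assumption of this sketch rather than documented plugin behavior. It is meant for small IPv4 ranges, because it enumerates every host.

```python
import ipaddress
from typing import Iterable, List


def assignable_addresses(range_cidr: str, exclude: Iterable[str] = ()) -> List[ipaddress.IPv4Address]:
    """List host addresses in range_cidr that fall outside every excluded block."""
    network = ipaddress.ip_network(range_cidr, strict=False)
    excluded = [ipaddress.ip_network(item, strict=False) for item in exclude]
    return [
        host
        for host in network.hosts()  # skips the network and broadcast addresses
        if not any(host in block for block in excluded)
    ]
```

For the range `192.0.2.192/27` with the exclusions `192.0.2.192/30` and `192.0.2.196/32`, the sketch leaves 26 addresses, from `192.0.2.197` to `192.0.2.222`.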

Dynamic IP address assignment configuration example that uses Whereabouts

{
  "ipam": {
    "type": "whereabouts",
    "range": "192.0.2.192/27",
    "exclude": [
       "192.0.2.192/30",
       "192.0.2.196/32"
    ]
  }
}

23.5.1.2. Creating a configuration for assignment of dual-stack IP addresses dynamically

Dual-stack IP address assignment can be configured with the ipRanges parameter for:

- IPv4 addresses

- IPv6 addresses

- multiple IP address assignment

Procedure

- Set type to whereabouts.

- Use ipRanges to allocate IP addresses as shown in the following example:

      apiVersion: operator.openshift.io/v1
      kind: Network
      metadata:
        name: cluster
      spec:
        additionalNetworks:
        - name: whereabouts-shim
          namespace: default
          type: Raw
          rawCNIConfig: |-
            {
              "name": "whereabouts-dual-stack",
              "cniVersion": "0.3.1",
              "type": "bridge",
              "ipam": {
                "type": "whereabouts",
                "ipRanges": [
                  {"range": "192.168.10.0/24"},
                  {"range": "2001:db8::/64"}
                ]
              }
            }

- Attach the network to a pod. For more information, see "Adding a pod to an additional network".

- Verify that all IP addresses are assigned. Run the following command to confirm that the IP addresses are assigned as metadata:

      $ oc exec -it mypod -- ip a
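Because rawCNIConfig is a JSON document embedded in YAML, a missing quote or comma is easy to overlook. The following is a minimal sketch, using only the Python standard library, that parses such a configuration and reports which IP versions its ipRanges entries cover. The function name ip_versions and the RAW_CNI_CONFIG constant are hypothetical; the check is a local sanity test and says nothing about what the cluster actually assigns.

```python
import ipaddress
import json

# The JSON body of rawCNIConfig from the dual-stack example (assumed input).
RAW_CNI_CONFIG = """
{
  "name": "whereabouts-dual-stack",
  "cniVersion": "0.3.1",
  "type": "bridge",
  "ipam": {
    "type": "whereabouts",
    "ipRanges": [
      {"range": "192.168.10.0/24"},
      {"range": "2001:db8::/64"}
    ]
  }
}
"""


def ip_versions(raw_config):
    """Parse a CNI config string and return the set of IP versions in ipRanges."""
    # json.loads raises json.JSONDecodeError if the embedded JSON is malformed.
    config = json.loads(raw_config)
    ranges = config["ipam"]["ipRanges"]
    return {ipaddress.ip_network(entry["range"]).version for entry in ranges}


if __name__ == "__main__":
    versions = ip_versions(RAW_CNI_CONFIG)
    label = "dual-stack" if versions == {4, 6} else "single-stack"
    print(label, sorted(versions))
```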

23.5.2. Configuring SR-IOV additional network

You can configure an additional network that uses SR-IOV hardware by creating an SriovNetwork object. When you create an SriovNetwork object, the SR-IOV Network Operator automatically creates a NetworkAttachmentDefinition object.

Do not modify or delete an SriovNetwork object if it is attached to any pods in a running state.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

- Create an SriovNetwork object, and then save the YAML in the <name>.yaml file, where <name> is a name for this additional network. The object specification might resemble the following example:

      apiVersion: sriovnetwork.openshift.io/v1
      kind: SriovNetwork
      metadata:
        name: attach1
        namespace: openshift-sriov-network-operator
      spec:
        resourceName: net1
        networkNamespace: project2
        ipam: |-
          {
            "type": "host-local",
            "subnet": "10.56.217.0/24",
            "rangeStart": "10.56.217.171",
            "rangeEnd": "10.56.217.181",
            "gateway": "10.56.217.1"
          }

- To create the object, enter the following command:

      $ oc create -f <name>.yaml

  where <name> specifies the name of the additional network.

- Optional: To confirm that the NetworkAttachmentDefinition object that is associated with the SriovNetwork object that you created in the previous step exists, enter the following command. Replace <namespace> with the networkNamespace value you specified in the SriovNetwork object.

      $ oc get net-attach-def -n <namespace>
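The host-local settings in the example object bound the pool with rangeStart and rangeEnd inside the subnet. As a rough sanity check before creating the object, the following sketch counts the addresses in that span with Python's standard ipaddress module. The function name host_local_pool_size is hypothetical, and the count is purely arithmetic: it does not account for the gateway address or for leases that already exist on a node.

```python
import ipaddress


def host_local_pool_size(subnet, range_start, range_end):
    """Count addresses from range_start to range_end, inclusive.

    Hypothetical helper: checks that both ends lie inside subnet and are
    ordered, then returns the size of the span.
    """
    network = ipaddress.ip_network(subnet)
    start = ipaddress.ip_address(range_start)
    end = ipaddress.ip_address(range_end)
    if start not in network or end not in network:
        raise ValueError("rangeStart and rangeEnd must be inside the subnet")
    if start > end:
        raise ValueError("rangeStart must not be greater than rangeEnd")
    return int(end) - int(start) + 1


if __name__ == "__main__":
    print(host_local_pool_size("10.56.217.0/24", "10.56.217.171", "10.56.217.181"))
```

For the values in the example, the span covers 11 addresses, which is an upper bound on the number of pod attachments per node that this range can serve.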

23.5.3. Next steps

23.6. Configuring an SR-IOV InfiniBand network attachment

You can configure an InfiniBand (IB) network attachment for a Single Root I/O Virtualization (SR-IOV) device in the cluster.

23.6.1. InfiniBand device configuration object

You can configure an InfiniBand (IB) network device by defining an SriovIBNetwork object.

The following YAML describes an SriovIBNetwork object:

    apiVersion: sriovnetwork.openshift.io/v1
    kind: SriovIBNetwork
    metadata:
      name: <name> # (1)
      namespace: openshift-sriov-network-operator # (2)
    spec:
      resourceName: <sriov_resource_name> # (3)
      networkNamespace: <target_namespace> # (4)
      ipam: |- # (5)
        {}
      linkState: <link_state> # (6)
      capabilities: <capabilities> # (7)

- (1) A name for the object. The SR-IOV Network Operator creates a NetworkAttachmentDefinition object with the same name.

- (2) The namespace where the SR-IOV Network Operator is installed.

- (3) The value for the spec.resourceName parameter from the SriovNetworkNodePolicy object that defines the SR-IOV hardware for this additional network.

- (4) The target namespace for the SriovIBNetwork object. Only pods in the target namespace can attach to the network device.

- (5) Optional: A configuration object for the IPAM CNI plugin as a YAML block scalar. The plugin manages IP address assignment for the attachment definition.

- (6) Optional: The link state of the virtual function (VF). Allowed values are enable, disable, and auto.

- (7) Optional: The capabilities to configure for this network. You can specify '{ "ips": true }' to enable IP address support or '{ "infinibandGUID": true }' to enable IB Global Unique Identifier (GUID) support.
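The ipam and capabilities fields both carry JSON as string values inside the YAML object, which is a common source of quoting mistakes. The following is a minimal sketch that builds the object as a Python dictionary and serializes those two fields with json.dumps. The function name build_sriov_ib_network and the sample values (ib-net1, ibnic, project2) are hypothetical and chosen only for illustration.

```python
import json


def build_sriov_ib_network(name, resource_name, target_namespace, ipam, capabilities):
    """Return an SriovIBNetwork manifest as a dict.

    Hypothetical helper: ipam and capabilities are passed as Python dicts
    and stored as JSON strings, matching the string-valued spec fields.
    """
    return {
        "apiVersion": "sriovnetwork.openshift.io/v1",
        "kind": "SriovIBNetwork",
        "metadata": {
            "name": name,
            "namespace": "openshift-sriov-network-operator",
        },
        "spec": {
            "resourceName": resource_name,
            "networkNamespace": target_namespace,
            "ipam": json.dumps(ipam),
            "capabilities": json.dumps(capabilities),
            "linkState": "auto",
        },
    }


if __name__ == "__main__":
    manifest = build_sriov_ib_network(
        name="ib-net1",               # hypothetical object name
        resource_name="ibnic",        # hypothetical resource name
        target_namespace="project2",  # hypothetical target namespace
        ipam={"type": "dhcp"},
        capabilities={"ips": True},
    )
    print(json.dumps(manifest, indent=2))
```

Generating the embedded strings from structured data, rather than typing them by hand, keeps the inner JSON well formed regardless of how the outer document is written.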

23.6.1.1. Configuration of IP address assignment for an additional network

The IP address management (IPAM) Container Network Interface (CNI) plugin provides IP addresses for other CNI plugins.

You can use the following IP address assignment types:

- Static assignment.

- Dynamic assignment through a DHCP server. The DHCP server you specify must be reachable from the additional network.

- Dynamic assignment through the Whereabouts IPAM CNI plugin.

23.6.1.1.1. Static IP address assignment configuration

The following table describes the configuration for static IP address assignment:

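| Field | Type | Description |
|---|---|---|
| type | string | The IPAM address type. The value static is required. |
| addresses | array | An array of objects specifying IP addresses to assign to the virtual interface. Both IPv4 and IPv6 IP addresses are supported. |
| routes | array | An array of objects specifying routes to configure inside the pod. |
| dns | array | Optional: An array of objects specifying the DNS configuration. |

The addresses array requires objects with the following fields:

| Field | Type | Description |
|---|---|---|
| address | string | An IP address and network prefix that you specify. For example, if you specify 10.10.21.10/24, then the additional network is assigned an IP address of 10.10.21.10 and the netmask is 255.255.255.0. |
| gateway | string | The default gateway to route egress network traffic to. |

The routes array requires objects with the following fields:

| Field | Type | Description |
|---|---|---|
| dst | string | The IP address range in CIDR format, such as 192.168.17.0/24 or 0.0.0.0/0 for the default route. |
| gw | string | The gateway where network traffic is routed. |

The DNS configuration uses the following fields:

| Field | Type | Description |
|---|---|---|
| nameservers | array | An array of one or more IP addresses to send DNS queries to. |
| domain | string | The default domain to append to a hostname. For example, if the domain is set to example.com, a DNS lookup query for example-host is rewritten as example-host.example.com. |
| search | array | An array of domain names to append to an unqualified hostname, such as example-host, during a DNS lookup query. |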
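Each entry in the addresses array combines an IP address with a network prefix in a single string. As a quick illustration of what that notation encodes, the following sketch splits such a string into its address, netmask, and containing network by using Python's standard ipaddress module. The function name describe_static_address is hypothetical; the sample value is the address from this section's static configuration example.

```python
import ipaddress


def describe_static_address(cidr):
    """Split an address-with-prefix string into address, netmask, and network.

    Hypothetical helper for illustration only.
    """
    iface = ipaddress.ip_interface(cidr)
    return str(iface.ip), str(iface.netmask), str(iface.network)


if __name__ == "__main__":
    address, netmask, network = describe_static_address("191.168.1.7/24")
    print(address, netmask, network)
```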

Static IP address assignment configuration example

    {
      "ipam": {
        "type": "static",
        "addresses": [
          {
            "address": "191.168.1.7/24"
          }
        ]
      }
    }

23.6.1.1.2. Dynamic IP address (DHCP) assignment configuration

The following JSON describes the configuration for dynamic IP address assignment with DHCP.

A pod obtains its original DHCP lease when it is created. The lease must be periodically renewed by a minimal DHCP server deployment running on the cluster.

To trigger the deployment of the DHCP server, you must create a shim network attachment by editing the Cluster Network Operator configuration, as in the following example:

Example shim network attachment definition

    apiVersion: operator.openshift.io/v1
    kind: Network
    metadata:
      name: cluster
    spec:
      additionalNetworks:
      - name: dhcp-shim
        namespace: default
        type: Raw
        rawCNIConfig: |-
          {
            "name": "dhcp-shim",
            "cniVersion": "0.3.1",
            "type": "bridge",
            "ipam": {
              "type": "dhcp"
            }
          }
      # ...

| Field | Type | Description |
|---|---|---|
| type | string | The IPAM address type. The value dhcp is required. |

Dynamic IP address (DHCP) assignment configuration example

    {
      "ipam": {
        "type": "dhcp"
      }
    }

23.6.1.1.3. Dynamic IP address assignment configuration with Whereabouts

The Whereabouts CNI plugin allows the dynamic assignment of an IP address to an additional network without the use of a DHCP server.

The following table describes the configuration for dynamic IP address assignment with Whereabouts:

| Field | Type | Description |
|---|---|---|
| type | string | The IPAM address type. The value whereabouts is required. |
| range | string | An IP address and range in CIDR notation. IP addresses are assigned from within this range of addresses. |
| exclude | array | Optional: A list of zero or more IP addresses and ranges in CIDR notation. IP addresses within an excluded address range are not assigned. |

Dynamic IP address assignment configuration example that uses Whereabouts

    {
      "ipam": {
        "type": "whereabouts",
        "range": "192.0.2.192/27",
        "exclude": [
          "192.0.2.192/30",
          "192.0.2.196/32"
        ]
      }
    }

23.6.1.2. Creating a configuration for assignment of dual-stack IP addresses dynamically

Dual-stack IP address assignment can be configured with the ipRanges parameter for:

- IPv4 addresses

- IPv6 addresses

- multiple IP address assignment

Procedure

- Set type to whereabouts.

- Use ipRanges to allocate IP addresses as shown in the following example:

      apiVersion: operator.openshift.io/v1
      kind: Network
      metadata:
        name: cluster
      spec:
        additionalNetworks:
        - name: whereabouts-shim
          namespace: default
          type: Raw
          rawCNIConfig: |-
            {
              "name": "whereabouts-dual-stack",
              "cniVersion": "0.3.1",
              "type": "bridge",
              "ipam": {
                "type": "whereabouts",
                "ipRanges": [
                  {"range": "192.168.10.0/24"},
                  {"range": "2001:db8::/64"}
                ]
              }
            }

- Attach the network to a pod. For more information, see "Adding a pod to an additional network".

- Verify that all IP addresses are assigned. Run the following command to confirm that the IP addresses are assigned as metadata:

      $ oc exec -it mypod -- ip a

23.6.2. Configuring SR-IOV additional network

You can configure an additional network that uses SR-IOV hardware by creating an SriovIBNetwork object. When you create an SriovIBNetwork object, the SR-IOV Network Operator automatically creates a NetworkAttachmentDefinition object.

Do not modify or delete an SriovIBNetwork object if it is attached to any pods in a running state.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

- Create an SriovIBNetwork object, and then save the YAML in the <name>.yaml file, where <name> is a name for this additional network. The object specification might resemble the following example:

      apiVersion: sriovnetwork.openshift.io/v1
      kind: SriovIBNetwork
      metadata:
        name: attach1
        namespace: openshift-sriov-network-operator
      spec:
        resourceName: net1
        networkNamespace: project2
        ipam: |-
          {
            "type": "host-local",
            "subnet": "10.56.217.0/24",
            "rangeStart": "10.56.217.171",
            "rangeEnd": "10.56.217.181",
            "gateway": "10.56.217.1"
          }

- To create the object, enter the following command:

      $ oc create -f <name>.yaml

  where <name> specifies the name of the additional network.

- Optional: To confirm that the